Abnormal Water Quality Monitoring Based on Visual Sensing of Three-Dimensional Motion Behavior of Fish

Abstract

1. Introduction

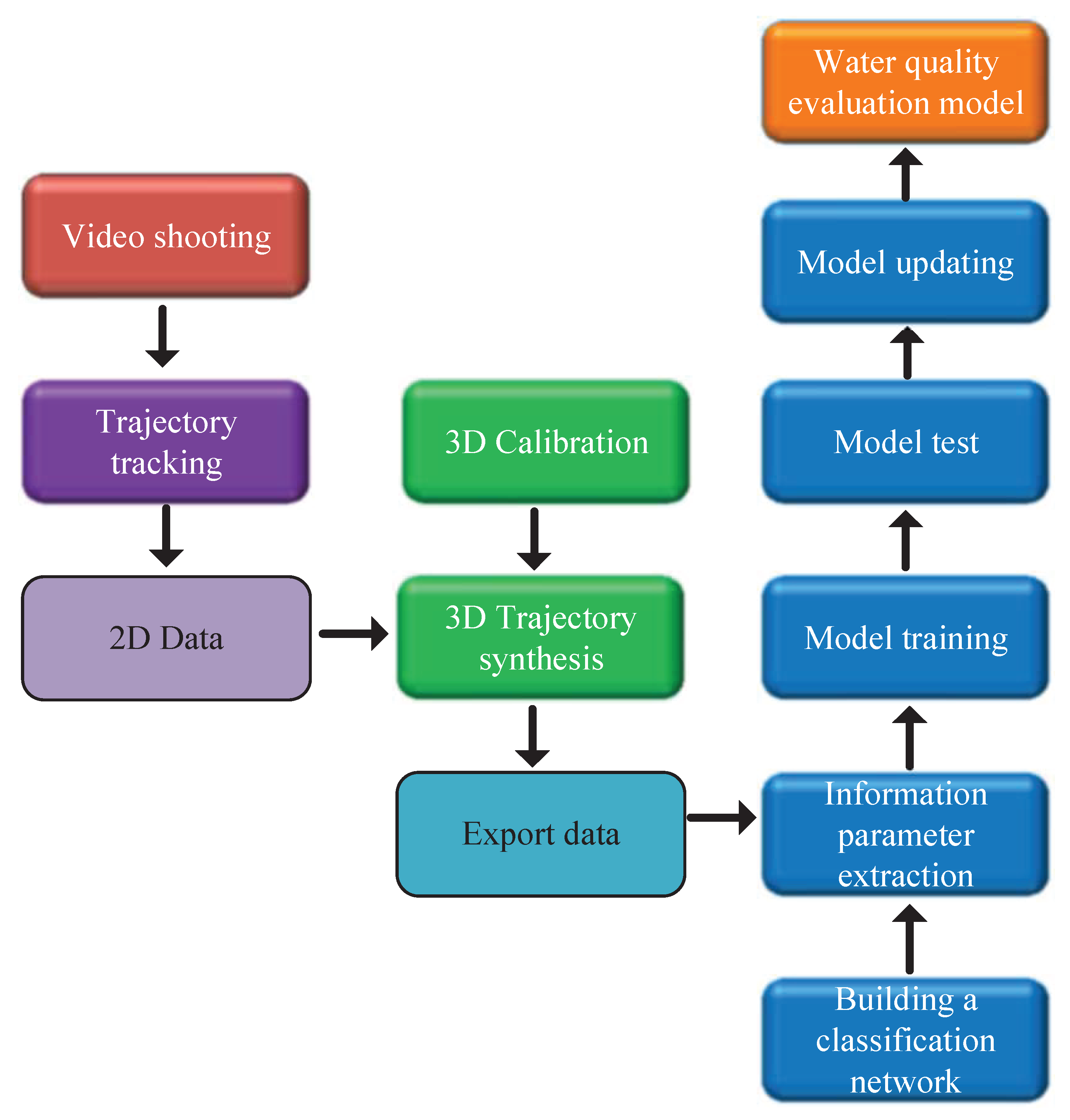

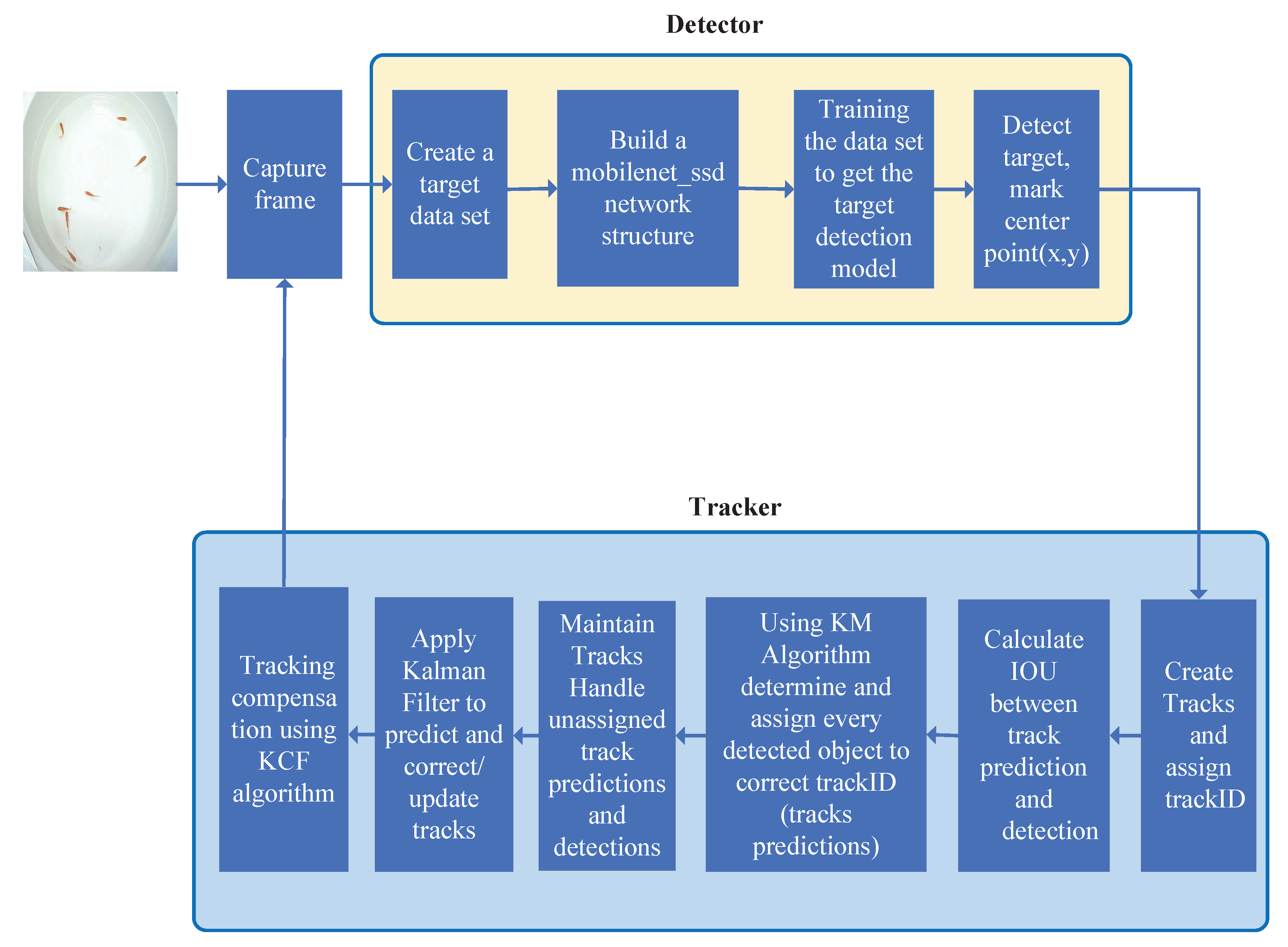

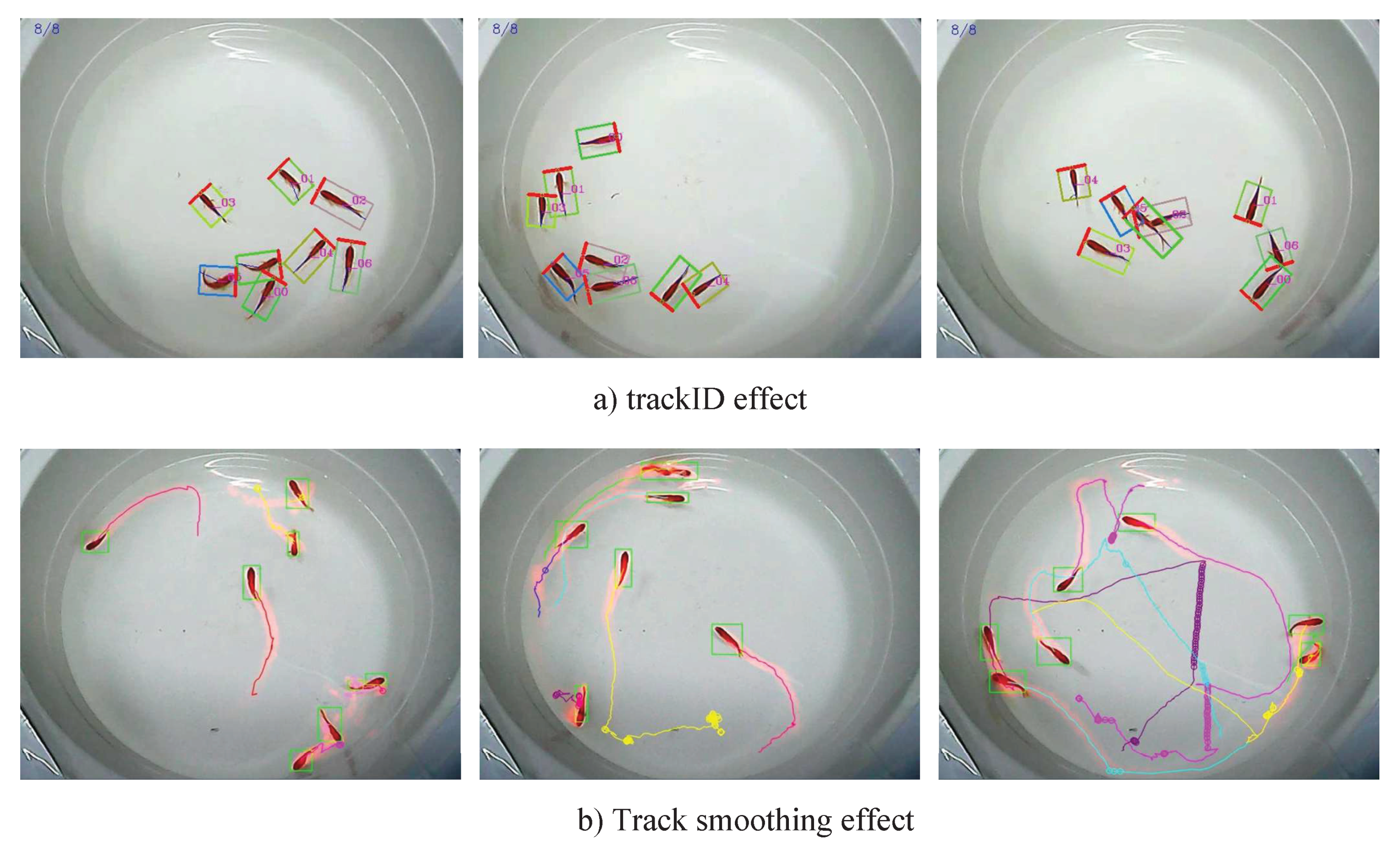

2. Fish Target Tracking

2.1. Fish Body Detection

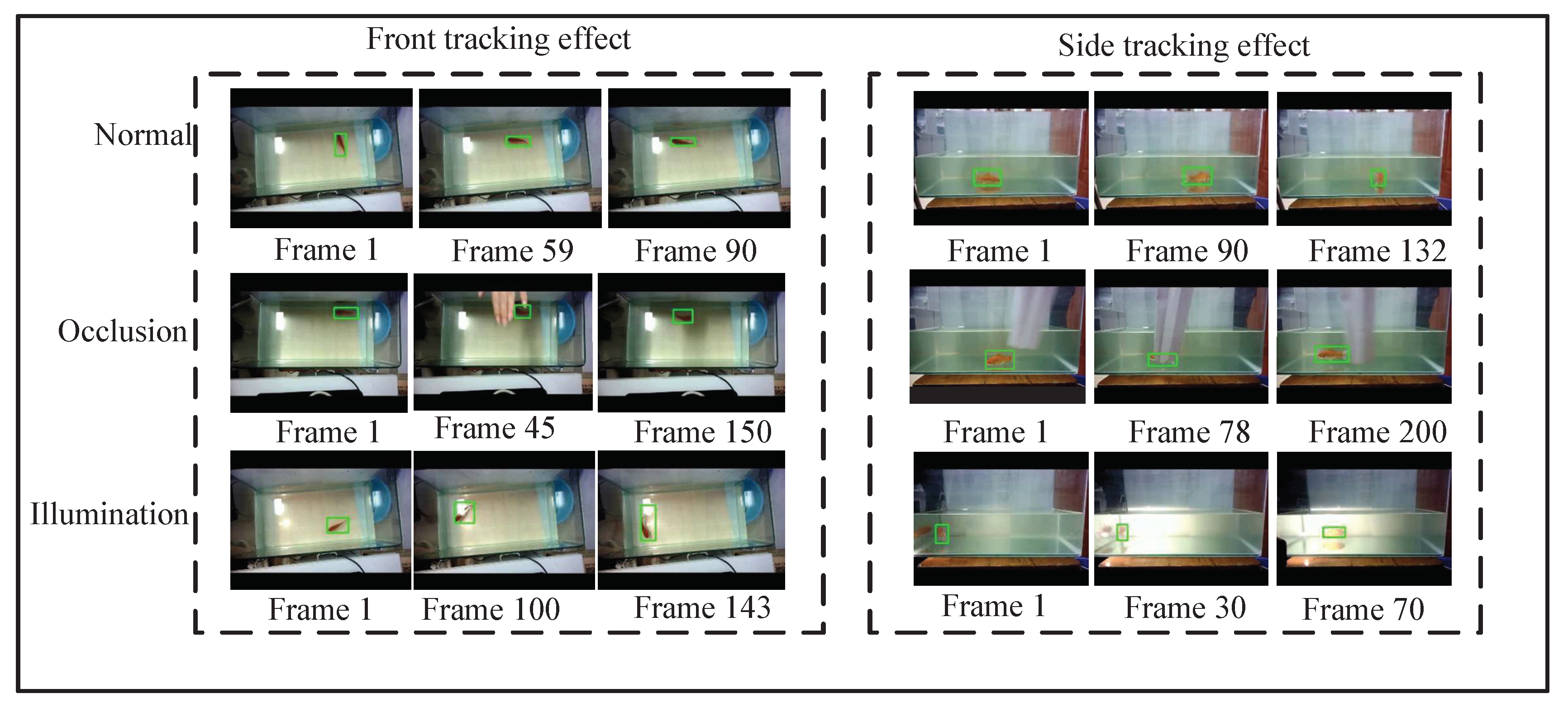

2.2. Fish Tracking

2.2.1. Kalman Filter Algorithm

2.2.2. KM Algorithm

2.2.3. Correlation Filtering

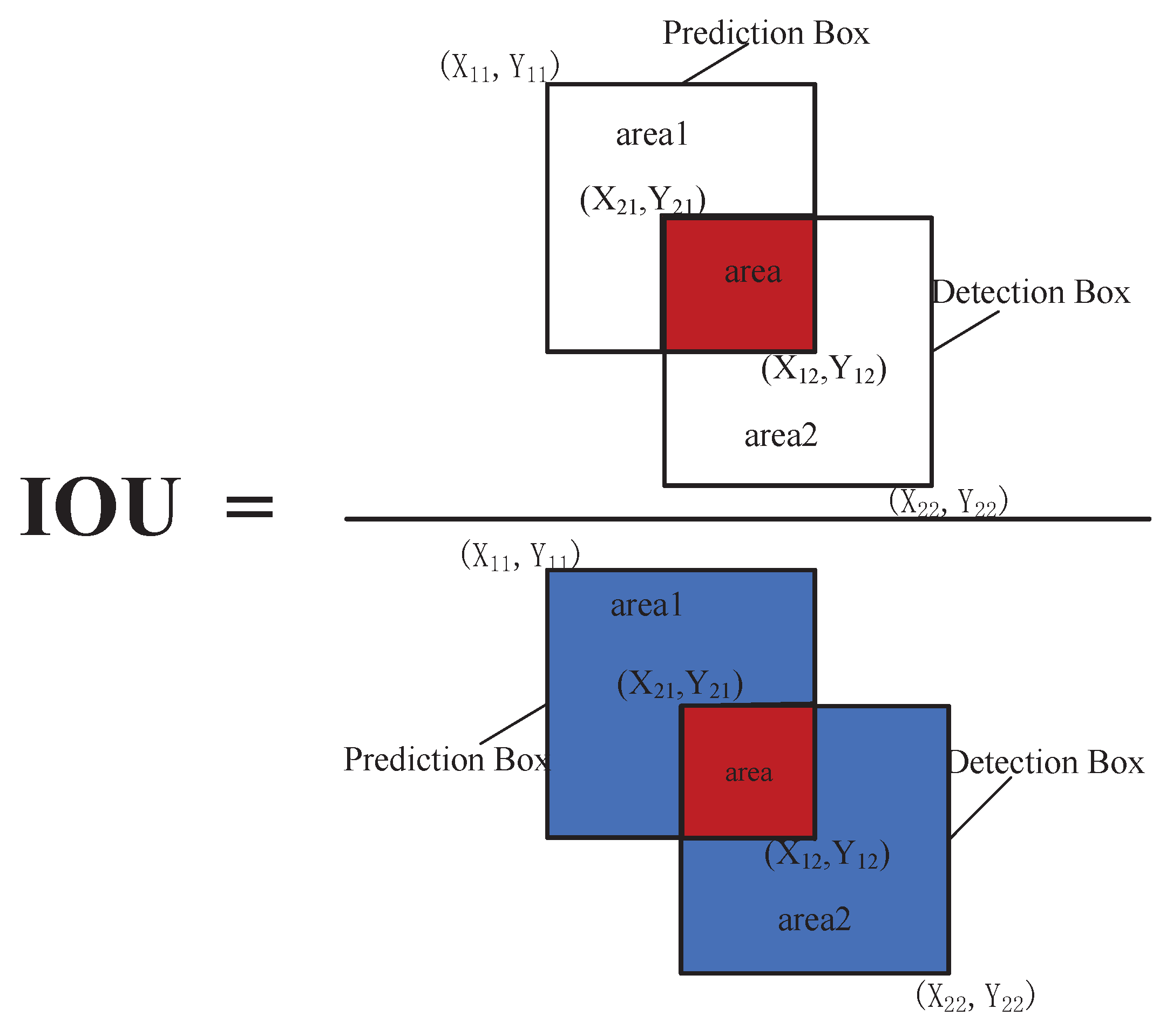

2.2.4. IOU Calculation

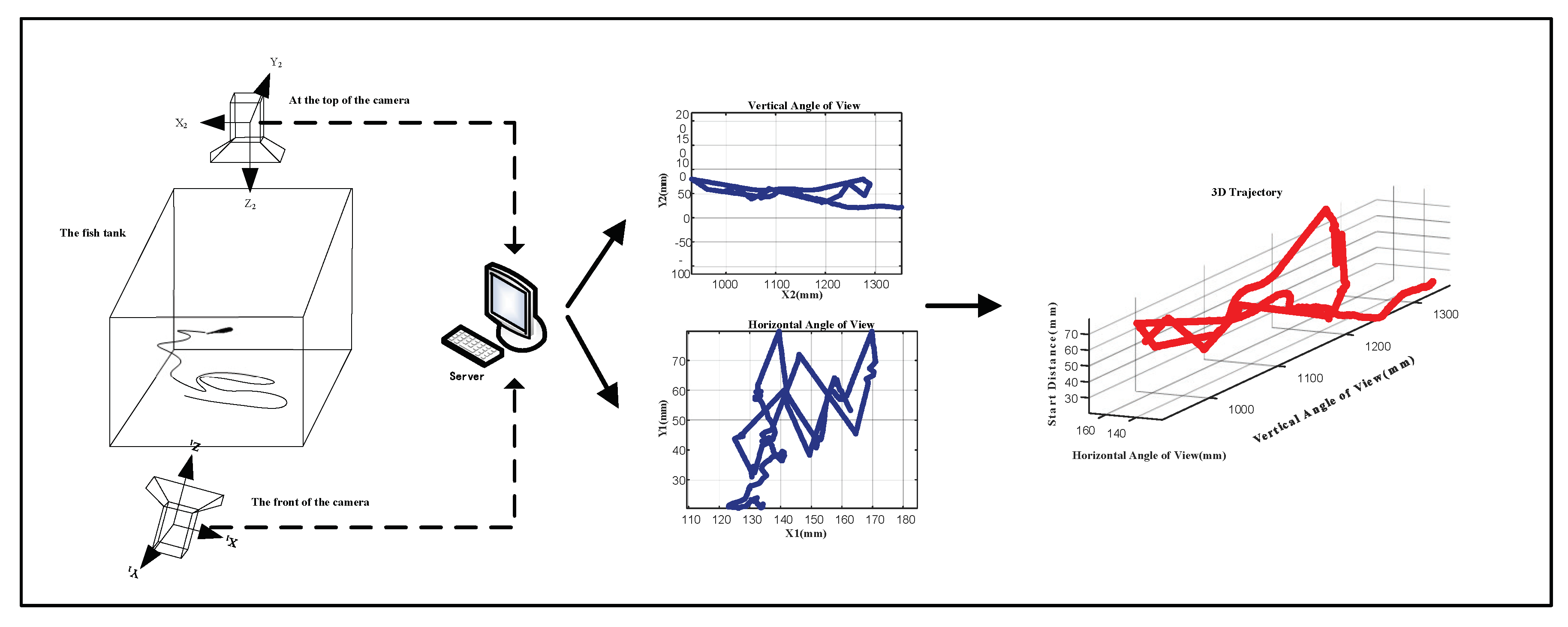
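As a minimal sketch of the IOU (Intersection over Union) measure named in this section, the following computes the overlap ratio of two axis-aligned bounding boxes, for example a detected fish box and a tracked fish box. The `(x1, y1, x2, y2)` corner format and the function name `iou` are assumptions made for illustration; they are not taken from the paper.

```python
def iou(box_a, box_b):
    """Intersection over Union of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap rectangle (zero when the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    # Union = sum of both areas minus the overlap counted twice.
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0
```

A value near 1 suggests the two boxes cover the same fish, while a value of 0 means they do not overlap at all.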

3. 3D Trajectory Pixel Coordinate Reconstruction

| Algorithm 1 Camera calibration and point reconstruction based on direct linear transformation (DLT) |

| 1: Camera calibration by DLT using known object points and their image points. |

| This code performs 2D or 3D DLT camera calibration with any number of views (cameras). |

| Input |

| nd is the number of dimensions of the object space: 3 for 3D DLT and 2 for 2D DLT. |

| xyz are the coordinates in the object 3D or 2D space of the calibration points. |

| uv are the coordinates in the image 2D space of these calibration points. |

| The coordinates (x,y,z and u,v) are given as columns and the different points as rows. |

| For the 2D DLT (object planar space), only the first 2 columns (x and y) are used. |

| There must be at least 6 calibration points for the 3D DLT and 4 for the 2D DLT. |

| Output |

| L: array of the 8 or 11 parameters of the calibration matrix. |

| err: error of the DLT (mean residual of the DLT transformation in units of camera coordinates). |

| 2: Reconstruction of an object point from image point(s) based on the DLT parameters. |

| This code performs 2D or 3D DLT point reconstruction with any number of views (cameras). |

| For 3D DLT, at least two views (cameras) are necessary. |

| Input |

| nd is the number of dimensions of the object space: 3 for 3D DLT and 2 for 2D DLT. |

| nc is the number of cameras (views) used. |

| Ls (array type) are the camera calibration parameters of each camera (the output of the DLT calibration function). The Ls parameters are given as columns and the Ls for different cameras as rows. |

| uvs are the coordinates of the point in the image 2D space of each camera. |

| The coordinates of the point are given as columns and the different views as rows. |

| Output |

| xyz: point coordinates in space. |

| 3: Normalization of coordinates (centroid to the origin and mean distance of sqrt(2) or sqrt(3)). |

| Input |

| nd: number of dimensions (2 for 2D; 3 for 3D). |

| x: the data to be normalized (directions in different columns and points in rows). |

| Output |

| Tr: the transformation matrix (translation plus scaling). |

| x: the transformed data. |
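The three steps of Algorithm 1 can be sketched in Python with NumPy as follows. This is an illustrative sketch restricted to the 3D case, not the authors' code: the function names `normalize`, `dlt_calib` and `dlt_recon`, the use of a singular value decomposition to solve the homogeneous systems, and the choice of the mean Euclidean reprojection distance as `err` are assumptions.

```python
import numpy as np

def normalize(nd, x):
    """Step 3: move the centroid to the origin and scale the mean distance to sqrt(nd)."""
    x = np.asarray(x, dtype=float)
    centroid = x.mean(axis=0)
    scale = np.sqrt(nd) / np.mean(np.linalg.norm(x - centroid, axis=1))
    Tr = np.eye(nd + 1)
    Tr[:nd, :nd] *= scale
    Tr[:nd, nd] = -scale * centroid
    xh = np.hstack([x, np.ones((len(x), 1))])  # homogeneous coordinates
    return Tr, (Tr @ xh.T).T[:, :nd]

def dlt_calib(xyz, uv):
    """Step 1: 3D DLT calibration of one camera from >= 6 object/image point pairs."""
    xyz = np.asarray(xyz, dtype=float)
    uv = np.asarray(uv, dtype=float)
    if len(xyz) < 6 or len(xyz) != len(uv):
        raise ValueError("3D DLT needs at least 6 matching calibration points")
    Txyz, xyzn = normalize(3, xyz)
    Tuv, uvn = normalize(2, uv)
    rows = []
    for (x, y, z), (u, v) in zip(xyzn, uvn):
        rows.append([x, y, z, 1, 0, 0, 0, 0, -u * x, -u * y, -u * z, -u])
        rows.append([0, 0, 0, 0, x, y, z, 1, -v * x, -v * y, -v * z, -v])
    # The right singular vector of the smallest singular value solves A h = 0.
    _, _, Vt = np.linalg.svd(np.asarray(rows))
    H = np.linalg.inv(Tuv) @ Vt[-1].reshape(3, 4) @ Txyz  # undo the normalization
    H = H / H[2, 3]
    # Reproject the calibration points to measure the residual in image units.
    proj = H @ np.hstack([xyz, np.ones((len(xyz), 1))]).T
    uv_hat = (proj[:2] / proj[2]).T
    err = float(np.mean(np.linalg.norm(uv_hat - uv, axis=1)))
    return H.ravel()[:11], err

def dlt_recon(Ls, uvs):
    """Step 2: reconstruct one 3D point from its image coordinates in >= 2 cameras."""
    if len(Ls) < 2 or len(Ls) != len(uvs):
        raise ValueError("3D reconstruction needs at least two views")
    rows = []
    for L, (u, v) in zip(Ls, uvs):
        H = np.append(np.asarray(L, dtype=float), 1.0).reshape(3, 4)
        rows.append(u * H[2] - H[0])
        rows.append(v * H[2] - H[1])
    _, _, Vt = np.linalg.svd(np.asarray(rows))
    X = Vt[-1]
    return X[:3] / X[3]
```

In use, `dlt_calib` would be called once per camera with the known calibration points, and `dlt_recon` would then be called for every frame with the fish's pixel coordinates in each view to obtain one point of the 3D trajectory.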

3D Trajectory Synthesis

4. Establishment of Classification Model

4.1. The Model Structure of Pointnet

4.2. SVM Water Quality Classifier

4.3. XGBoost Water Quality Classifier

4.4. The Model Merging of SVM and XGBoost Classifiers

4.5. Ensemble Learning

5. Experiment

5.1. The Environment of Experiment

5.2. Experimental Results and Analysis

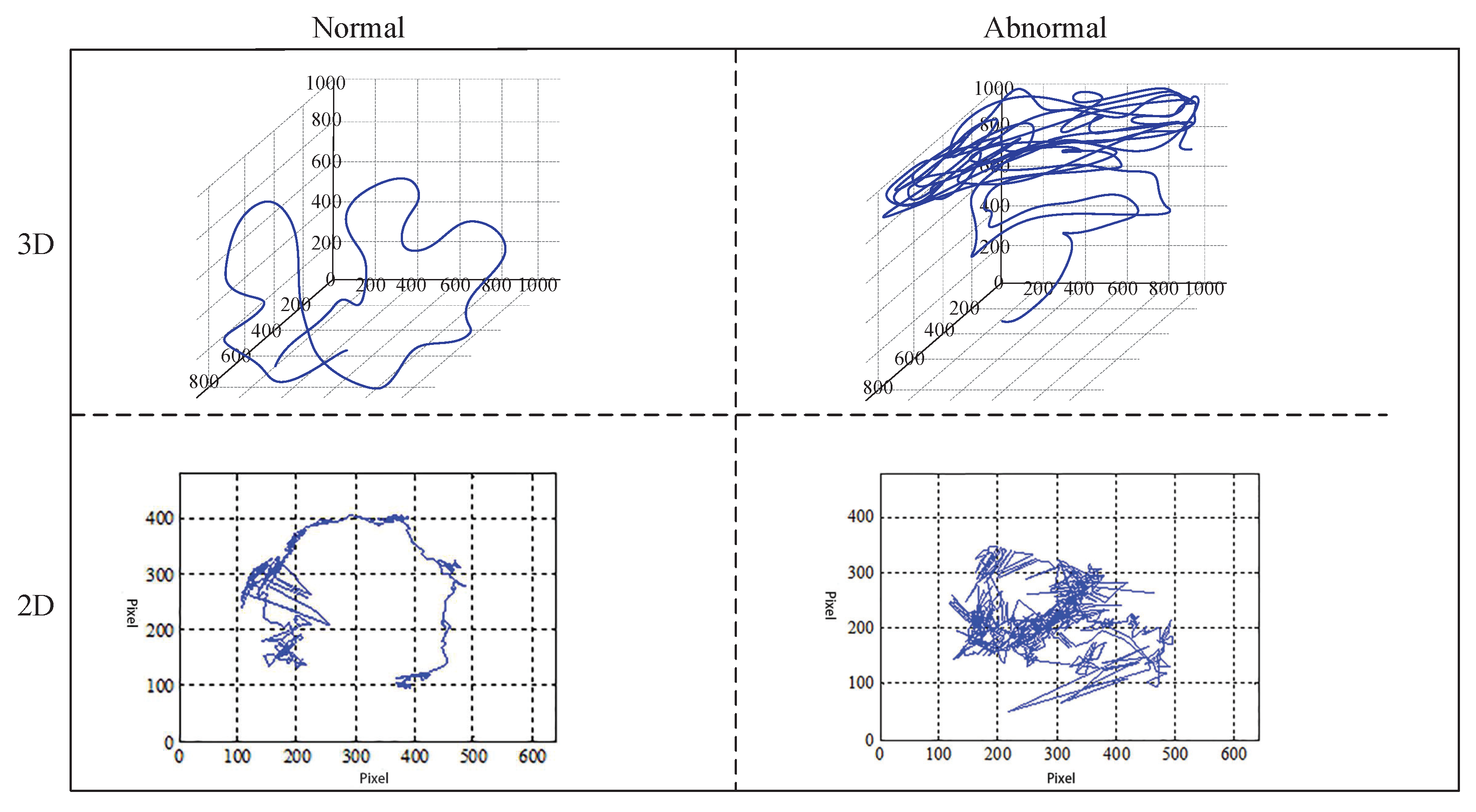

5.2.1. Use Trajectory to Identify Water Quality

5.2.2. The Training and Optimization of Pointnet Model

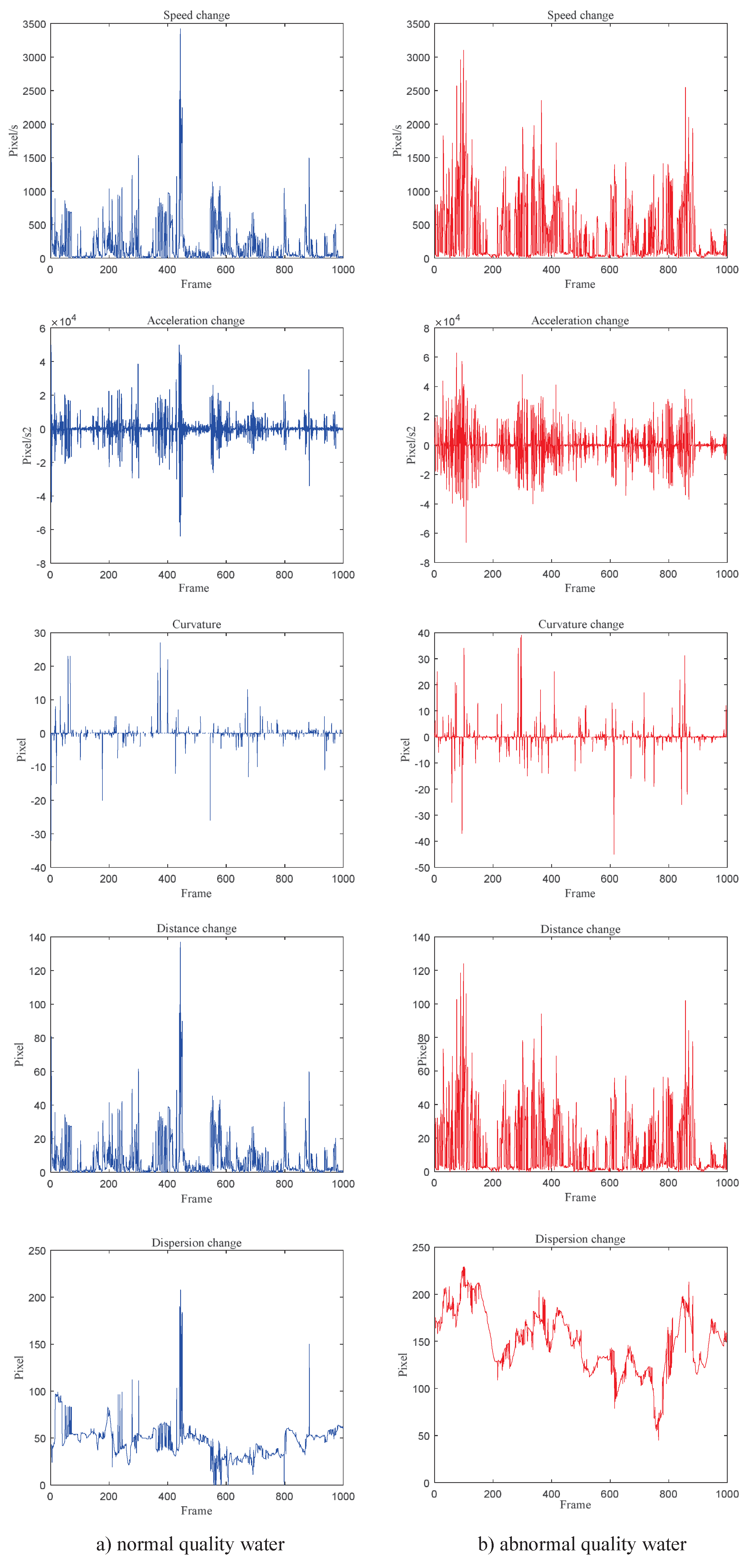

5.2.3. Extraction of Feature Parameters

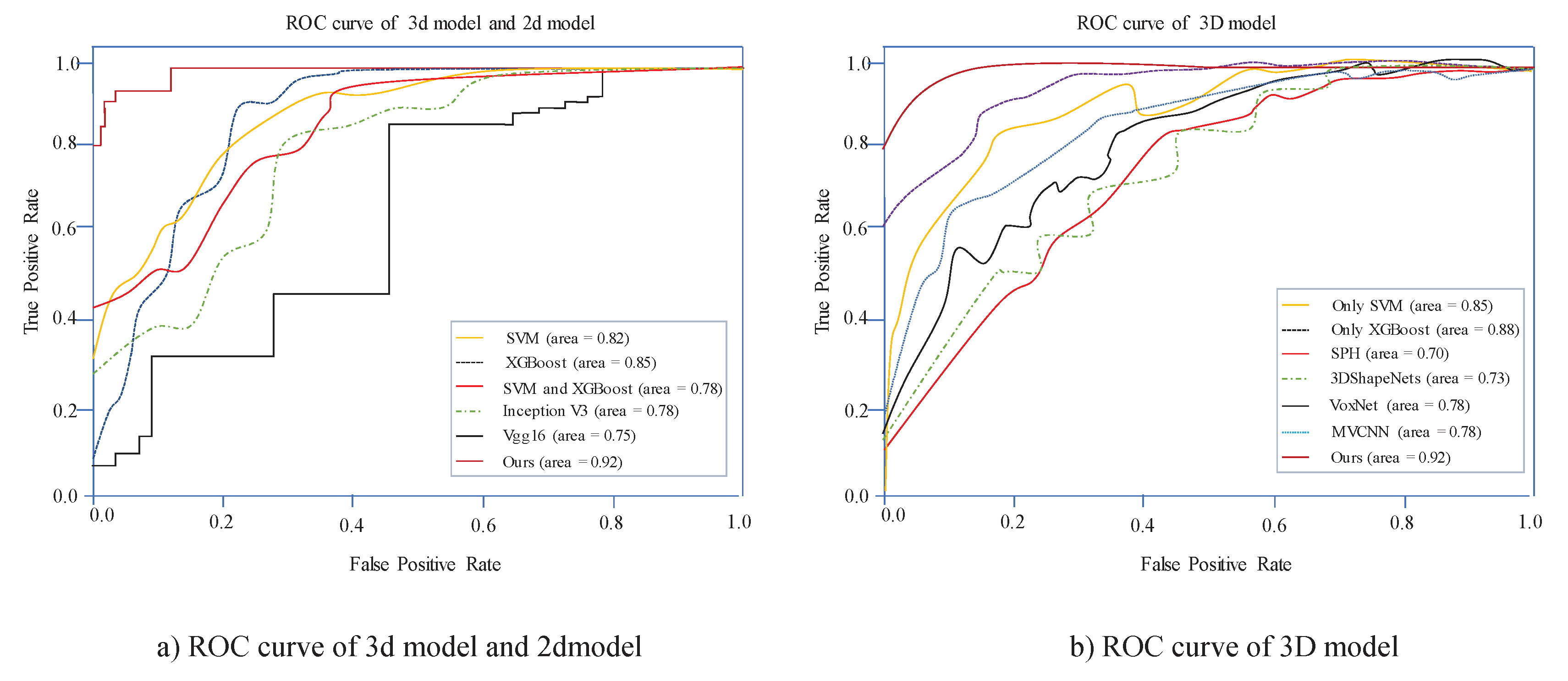

5.2.4. Performance Evaluation

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| KM | Kuhn-Munkres |

| SVM | support vector machine |

| XGBoost | eXtreme Gradient Boosting |

| KCF | Kernelized Correlation Filters |

| MAIS | Multiclass Alpha Integration of Scores |

| FPS | Frames Per Second |

| YOLO | You only look once |

| IOU | Intersection over Union |

| DLT | Direct linear transformation |

| CCD | charge coupled device |

| SGD | stochastic gradient descent |

| ROC | Receiver Operating Characteristic |

| AUC | Area under Curve |

References

- Jang, A.; Zou, Z.; Lee, K.K.; Ahn, C.H.; Bishop, P.L. State-of-the-art lab chip sensors for environmental water monitoring. Meas. Sci. Technol. 2011, 3, 251–259.

- Beyan, C.; Fisher, R.B. A filtering mechanism for normal fish trajectories. In Proceedings of the 21st International Conference on Pattern Recognition (ICPR2012), Tsukuba, Japan, 11–15 November 2012; pp. 2286–2289.

- Nian, R.; Wang, X.; Che, R.; He, B.; Xu, X.; Li, P.; Lendasse, A. Online fish tracking with portable smart device for ocean observatory network. In Proceedings of the OCEANS 2017, Anchorage, AK, USA, 18–21 September 2017; pp. 1–7.

- Kim, C.M.; Shin, M.W.; Jeong, S.M.; Kang, I.C. Real-time motion generating method for artificial fish. Comput. Sci. Netw. Secur. 2007, 7, 52–61.

- Zheng, H.; Liu, R.; Zhang, R.; Hu, Y. A method for real-time measurement of respiratory rhythms in medaka (Oryzias latipes) using computer vision for water quality monitoring. Ecotoxicol. Environ. Saf. 2014, 100, 76–86.

- Maa, H.; Tsaib, T.-F.; Liuc, C.-C. Real-time monitoring of water quality using temporal trajectory of live fish. Expert Syst. Appl. 2010, 7, 5158–5171.

- Chen, J.; Chen, T.; Ma, Z. Application of improved matter-element model in water quality evaluation. Water Resour. Power 2014, 32, 50–52.

- Zhang, C.; Chen, Z.; Li, M. Direct method for 3D motion estimation and depth reconstruction of pyramid optical flow. Chin. J. Sci. Instrum. 2015, 36, 1093–1105.

- Stewart, A.M.; Grieco, F.; Tegelenbosch, R.A.; Kyzar, E.J.; Nguyen, M.; Kaluyeva, A.; Song, C.; Noldus, L.P.J.J.; Kalueff, A.V. A novel 3D method of locomotor analysis in adult zebrafish: Implications for automated detection of CNS drug-evoked phenotypes. J. Neurosci. Methods 2015, 255, 66–74.

- Welch, G.; Bishop, G. An Introduction to the Kalman Filter; University of North Carolina: Chapel Hill, NC, USA, 1995.

- Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Wey, T.; Andreetto, M.; Adam, H. Mobilenets: Efficient convolutional neural networks for mobile vision applications. arXiv 2017, arXiv:1704.04861.

- Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.Y.; Berg, A.C. Ssd: Single shot multibox detector. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016; Springer: Cham, Switzerland, 2016; pp. 21–37.

- Zhu, H.; Zhou, M.C.; Alkins, R. Group role assignment via a Kuhn–Munkres algorithm-based solution. IEEE Trans. Syst. Man Cybern. Part Syst. Hum. 2011, 42, 739–750.

- Henriques, J.F.; Caseiro, R.; Martins, P.; Batista, J. High-speed tracking with kernelized correlation filters. IEEE Trans. Pattern Anal. Mach. Intell. 2015, 37, 583–596.

- Qi, C.R.; Su, H.; Mo, K.; Guibas, L.J. Pointnet: Deep learning on point sets for 3d classification and segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 652–660.

- Joachims, T. Making Large-Scale SVM Learning Practical. In Advances in Kernel Methods—Support Vector Learning; Schölkopf, B., Burges, C., Smola, A., Eds.; MIT Press: Cambridge, MA, USA, 1999; pp. 169–184.

- Chen, T.; Guestrin, C. Xgboost: A scalable tree boosting system. In Proceedings of the 22nd ACM Sigkdd International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 August 2016; pp. 785–794.

- Safont, G.; Salazar, A.; Vergara, L. Multiclass alpha integration of scores from multiple classifiers. Neural Comput. 2019, 31, 806–825.

- Jia, Y.; Shelhamer, E.; Donahue, J.; Karayev, S.; Long, J.; Girshick, R.; Guadarrama, S.; Darrell, T. Caffe: Convolutional architecture for fast feature embedding. In Proceedings of the 22nd ACM International Conference on Multimedia, Orlando, FL, USA, 3–7 November 2014; pp. 675–678.

- Chollet, F. Xception: Deep learning with depthwise separable convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 1251–1258.

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster r-cnn: Towards real-time object detection with region proposal networks. In Advances in Neural Information Processing Systems; Curran Associates, Inc.: Red Hook, NY, USA, 2015; pp. 91–99.

- Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You only look once: Unified, real-time object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016; pp. 779–788.

- Abdel-Aziz, Y.I.; Karara, H.M.; Hauck, M. Direct linear transformation from comparator coordinates into object space coordinates in close-range photogrammetry. Photogramm. Eng. Remote. Sens. 2015, 81, 103–107.

- Longstaff, I.D.; Cross, J.F. A pattern recognition approach to understanding the multi-layer perceptron. Pattern Recognit. Lett. 1987, 5, 315–319.

- Li, X.; Bing, L.; Lam, W.; Shi, B. Transformation networks for target-oriented sentiment classification. arXiv 2018, arXiv:1805.01086.

- Robbins, H.; Monro, S. A stochastic approximation method. Ann. Math. Stat. 1951, 22, 400–407.

- Kazhdan, M.; Funkhouser, T.; Rusinkiewicz, S. Rotation invariant spherical harmonic representation of 3d shape descriptors. In Proceedings of the Symposium on Geometry Processing, Aachen, Germany, 23–25 June 2003; Volume 6, pp. 156–164.

- Wu, Z.; Song, S.; Khosla, A.; Yu, F.; Zhang, L.; Tang, X.; Xiao, J. 3d shapenets: A deep representation for volumetric shapes. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 1912–1920.

- Maturana, D.; Scherer, S. Voxnet: A 3d convolutional neural network for real-time object recognition. In Proceedings of the 2015 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Hamburg, Germany, 28 September–2 October 2015; pp. 922–928.

- Su, H.; Maji, S.; Kalogerakis, E.; Learned-Miller, E. Multi-view convolutional neural networks for 3d shape recognition. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 945–953.

- Szegedy, C.; Vanhoucke, V.; Ioffe, S.; Shlens, J.; Wojna, Z. Rethinking the Inception Architecture for Computer Vision. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 2818–2826.

- Han, S.; Mao, H.; Dally, W.J. Deep compression: Compressing deep neural networks with pruning, trained quantization and huffman coding. arXiv 2015, arXiv:1510.00149.

| Model | Recall (%) | Precision (%) | FPS |
|---|---|---|---|
| Faster R-CNN | 48.3 | 75.4 | 7 |
| YOLO | 30.1 | 66.5 | 40 |
| Mobilenet_ssd | 45.5 | 72.3 | 30 |

| Features | Formula | Description |
|---|---|---|
| Swimming Distance | | Computed from the coordinates of the start and end points on the fish target tracking trajectory per unit time; d is the distance the fish swims in time t |
| Speed | | d is the distance the fish swims in time t |
| Acceleration | | Computed from the speeds of the fish at two time instants |
| Curvature | | |
| Dispersion | | The relationship between the overall center of gravity of the fish school and the position of the center of gravity of each individual |
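The feature parameters listed above can be illustrated with a short NumPy sketch. Since the formulas did not survive in the table, the definitions below are common textbook choices and should be read as assumptions: finite differences for speed and acceleration, the discrete curvature |v × a| / |v|³, and the mean distance of each fish to the school centroid as the dispersion. The function names, the `(N, 3)` array layout and the fixed sampling interval `dt` are likewise hypothetical.

```python
import numpy as np

def trajectory_features(points, dt):
    """Distance, speed, acceleration and curvature along one fish's 3D trajectory.

    points: (N, 3) positions sampled every dt seconds (assumed layout).
    """
    p = np.asarray(points, dtype=float)
    steps = np.diff(p, axis=0)                  # displacement between samples
    distance = np.linalg.norm(steps, axis=1)    # swimming distance per step
    speed = distance / dt
    accel = np.diff(speed) / dt                 # change of speed per unit time

    # Assumed curvature definition: |v x a| / |v|^3 from finite differences.
    v = steps / dt
    a = np.diff(v, axis=0) / dt
    num = np.linalg.norm(np.cross(v[:-1], a), axis=1)
    den = np.linalg.norm(v[:-1], axis=1) ** 3
    curvature = np.divide(num, den, out=np.zeros_like(num), where=den > 0)
    return distance, speed, accel, curvature

def dispersion(positions):
    """Mean distance of each fish to the school centroid at one time instant.

    positions: (n_fish, 3) array; this definition is an assumption.
    """
    q = np.asarray(positions, dtype=float)
    return float(np.mean(np.linalg.norm(q - q.mean(axis=0), axis=1)))
```

The resulting per-step values would typically be summarized (for example by their mean over a time window) before being passed to a classifier such as SVM or XGBoost.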

| Combination Strategy | Test Sample 1 | Test Sample 2 | Test Sample 3 |
|---|---|---|---|
| MAIS | | | |
| Voting method | | | |
| Average method | | | |
| Learning method | | | |
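Two of the combination strategies compared above, the voting method and the average method, can be sketched as follows. This is a generic illustration of how class scores from several classifiers (for example SVM and XGBoost) may be fused, not the paper's implementation; the function names and the `(n_classifiers, n_samples, n_classes)` score layout are assumptions, and MAIS and the learning method are not covered.

```python
import numpy as np

def average_fusion(scores):
    """Average the class scores of all classifiers, then pick the best class.

    scores: array of shape (n_classifiers, n_samples, n_classes) (assumed layout).
    """
    scores = np.asarray(scores, dtype=float)
    return scores.mean(axis=0).argmax(axis=1)

def voting_fusion(scores):
    """Majority vote over each classifier's predicted class.

    Ties are resolved in favor of the lowest class index.
    """
    scores = np.asarray(scores, dtype=float)
    n_classifiers, n_samples, n_classes = scores.shape
    votes = scores.argmax(axis=2)               # (n_classifiers, n_samples)
    counts = np.zeros((n_samples, n_classes), dtype=int)
    for clf_votes in votes:
        counts[np.arange(n_samples), clf_votes] += 1
    return counts.argmax(axis=1)
```

The two rules can disagree: one very confident classifier may dominate the average while being outvoted by two weakly confident ones, which is one reason to compare several combination strategies on the same test samples.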

| Epoch | Learning Rate |
|---|---|
| 0–65 | 1 × 10 |
| 66–85 | 1 × 10 |
| 86–99 | 1 × 10 |

| Model | Input | Accuracy Sample 1 (%) | Accuracy Sample 2 (%) | Accuracy Sample 3 (%) | Accuracy Sample 4 (%) |
|---|---|---|---|---|---|
| Only SVM | Parameters | 87.2 | 86.5 | 89.1 | 90.9 |
| Only XGBoost | Parameters | 88.3 | 91.6 | 92.3 | 90.0 |
| SPH [27] | Mesh | 65.2 | 64.3 | 66.6 | 69.5 |
| 3DShapeNets [28] | Volume | 77.8 | 75.6 | 77.1 | 79.6 |
| VoxNet [29] | Volume | 80.5 | 83.4 | 80.1 | 82.0 |
| MVCNN [30] | Volume | 80.5 | 83.4 | 80.1 | 82.0 |
| Ours | Point | 95.5 | 96.2 | 95.6 | 94.9 |

| Model | Input | Accuracy Sample 5 (%) | Accuracy Sample 6 (%) | Accuracy Sample 7 (%) | Accuracy Sample 8 (%) |
|---|---|---|---|---|---|
| SVM | Parameters | 86.3 | 82.5 | 87.9 | 86.5 |
| XGBoost | Parameters | 89.5 | 90.3 | 91.1 | 90.7 |
| Inception v3 [31] | Image | 92.3 | 91.5 | 90.3 | 93.8 |
| Vgg16 [32] | Image | 90.6 | 88.1 | 89.5 | 90.2 |
| SVM and XGBoost | Parameters | 91.9 | 92.5 | 92.8 | 92.5 |
| Ours | Parameters and Point | 94.5 | 95.2 | 94.6 | 93.9 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Cheng, S.; Zhao, K.; Zhang, D. Abnormal Water Quality Monitoring Based on Visual Sensing of Three-Dimensional Motion Behavior of Fish. Symmetry 2019, 11, 1179. https://doi.org/10.3390/sym11091179
