Corn Classification System based on Computer Vision

Abstract

1. Introduction

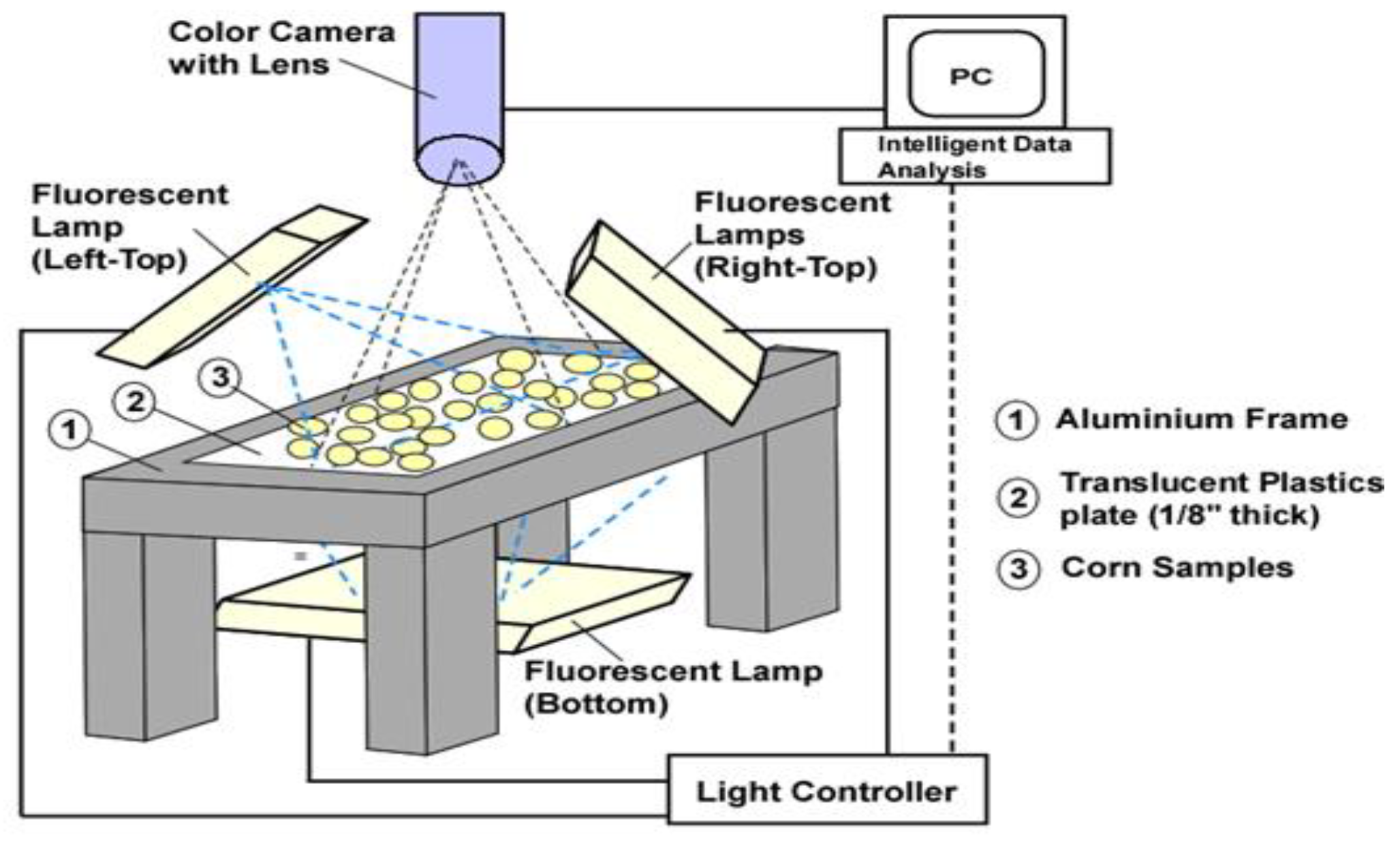

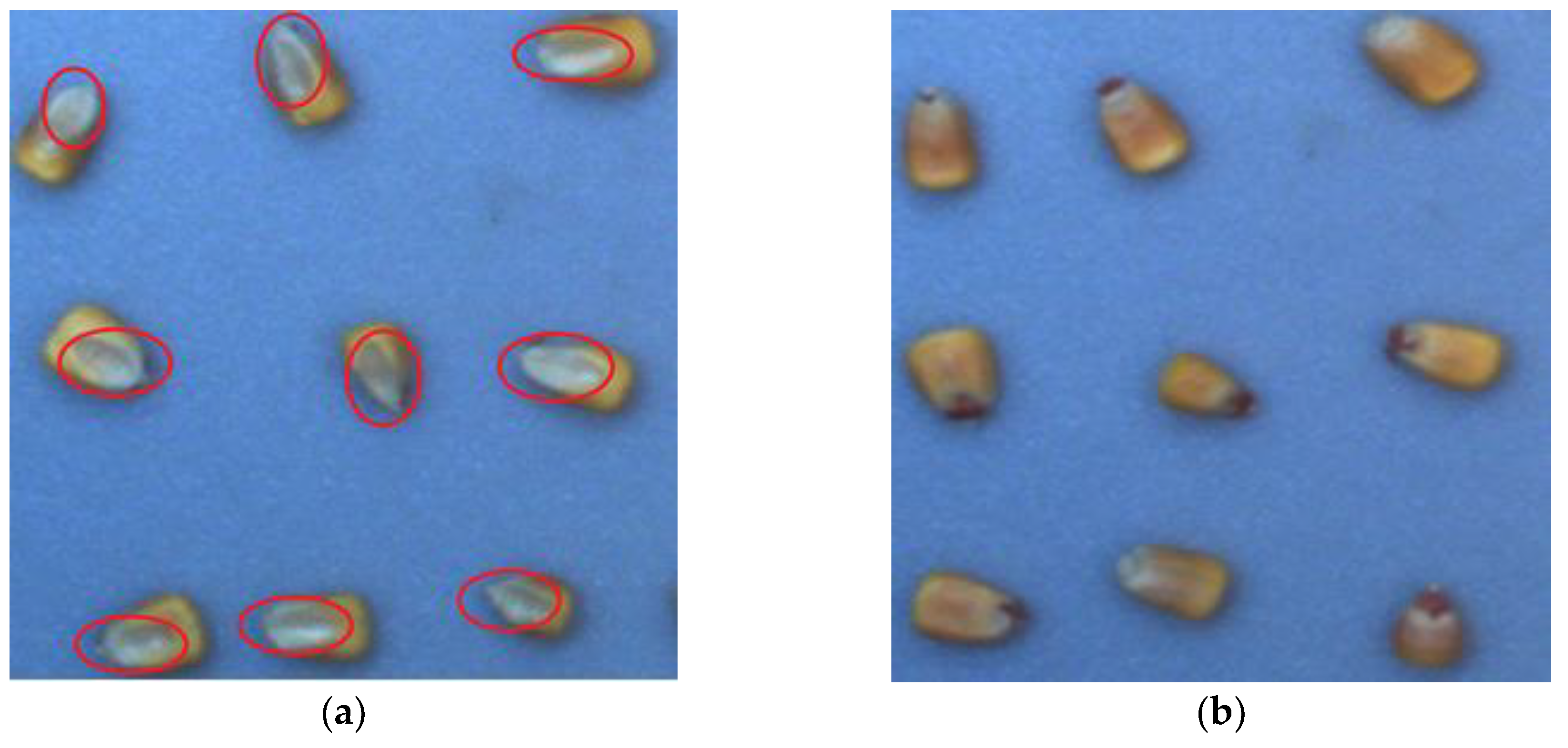

2. Experimental Data

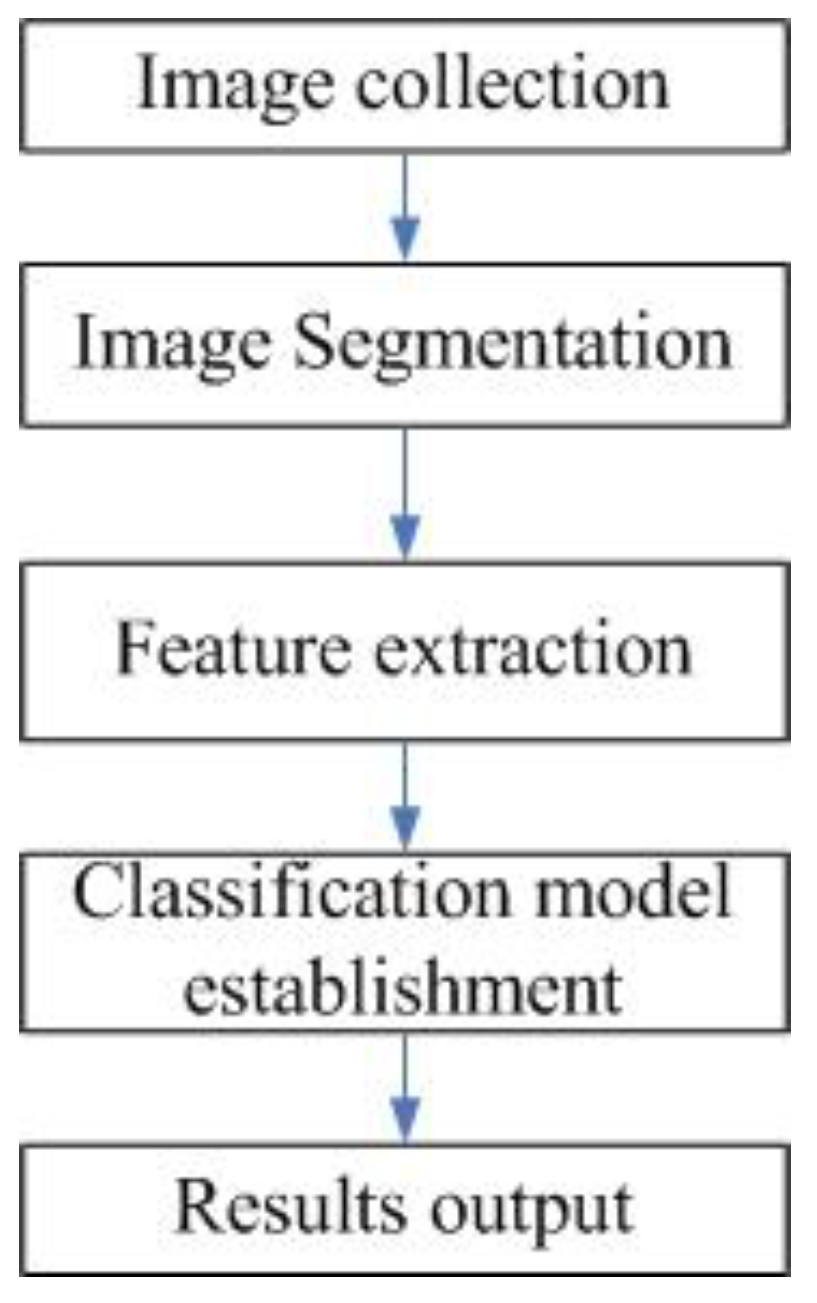

3. Methodology for Corn Classification

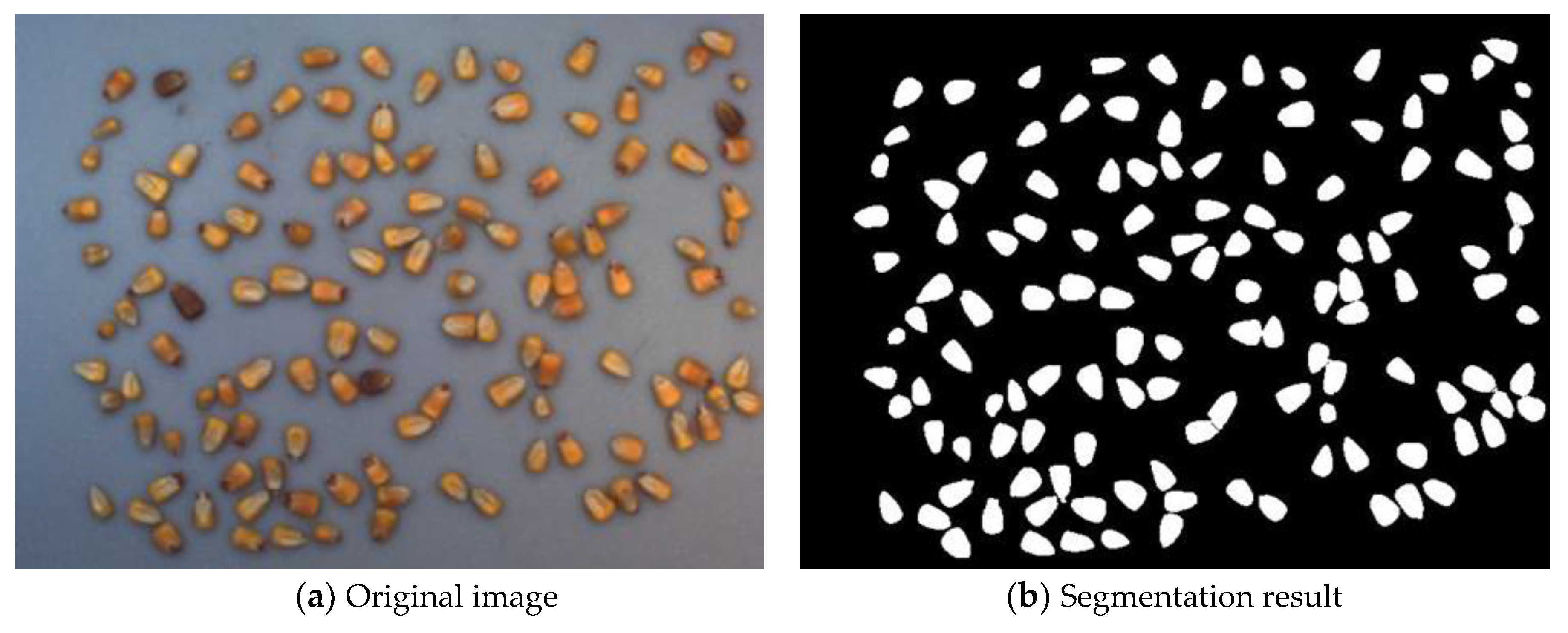

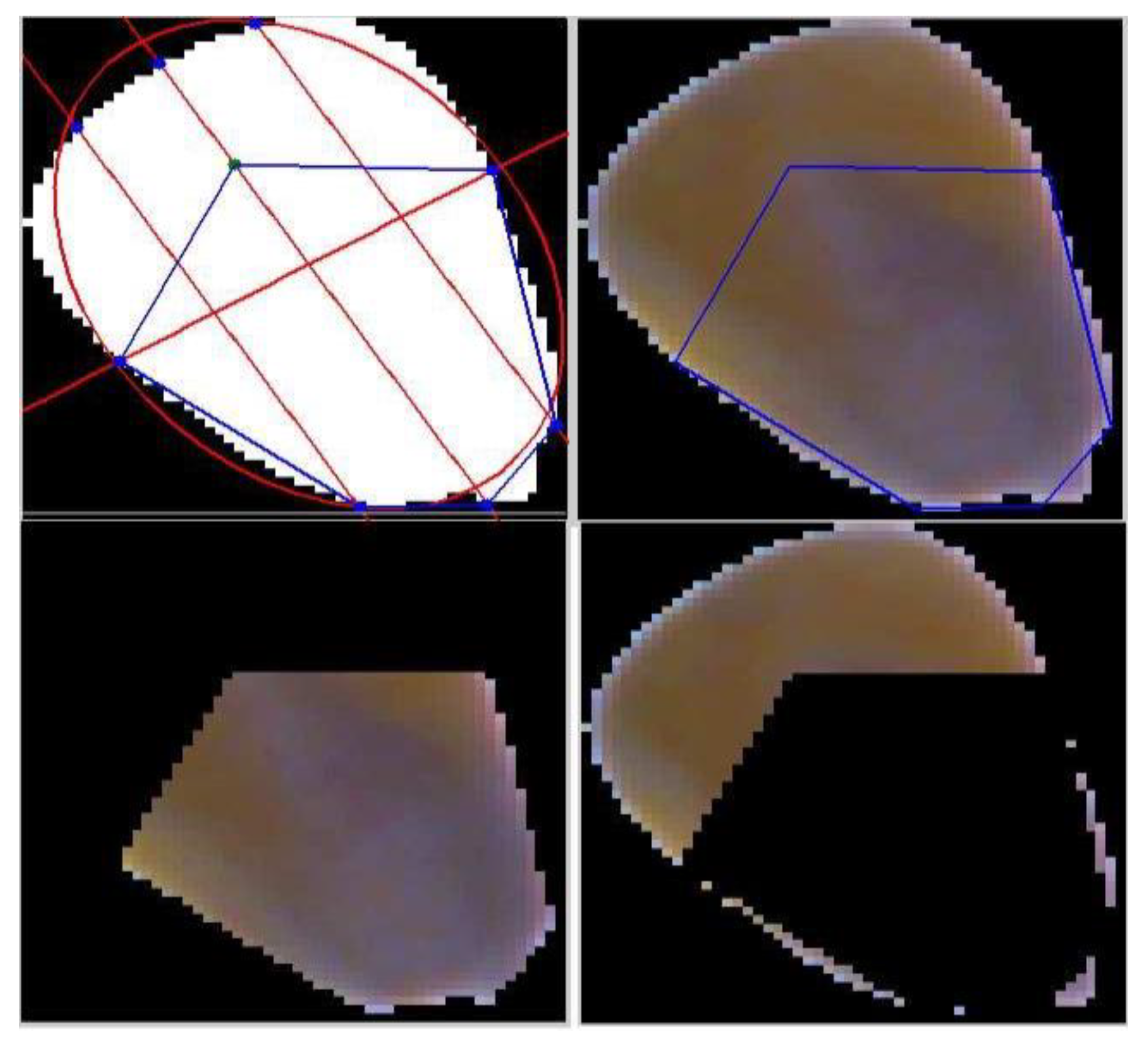

3.1. Image Segmentation

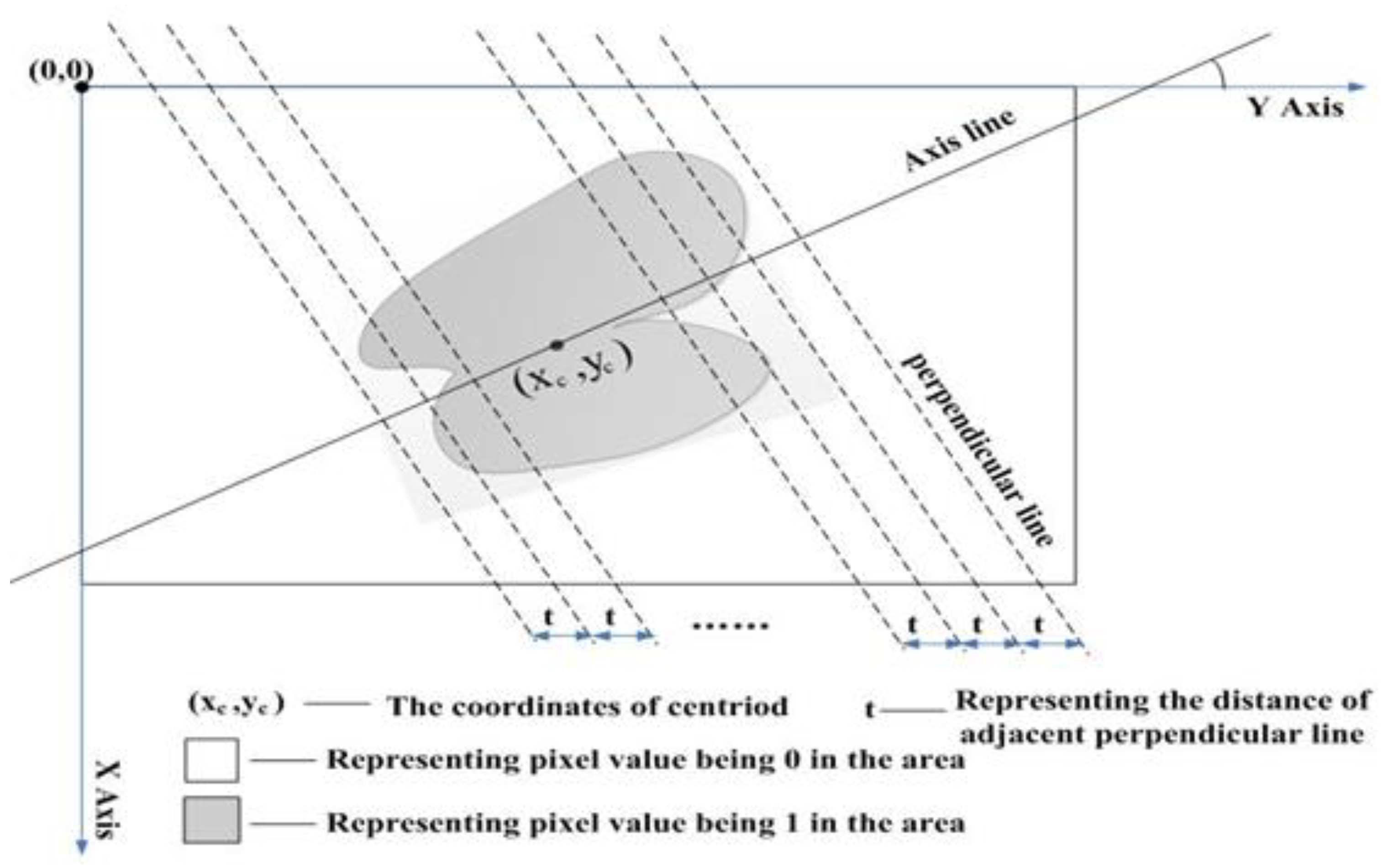

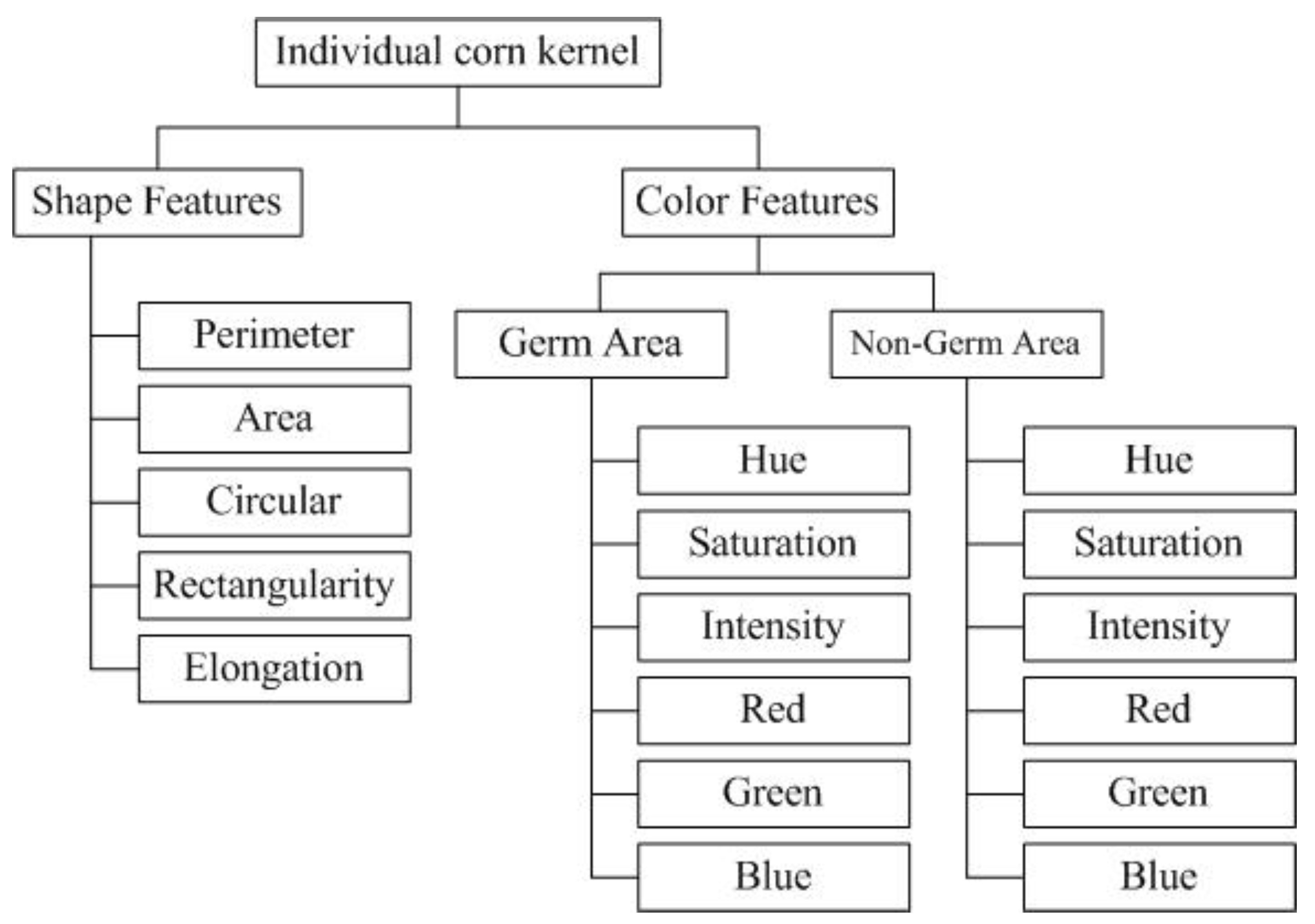

3.2. Feature Extraction

3.3. Classification

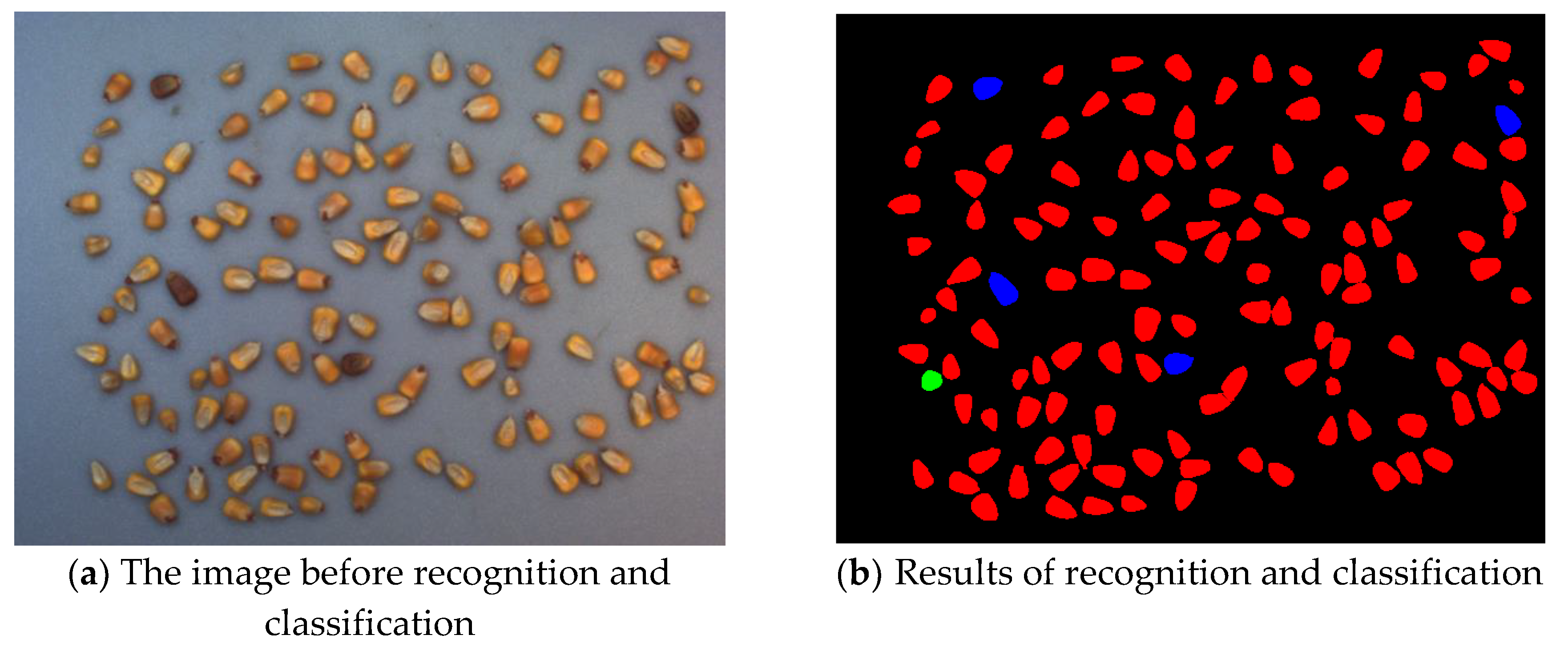

4. Experiments and Results

4.1. Experiment Settings

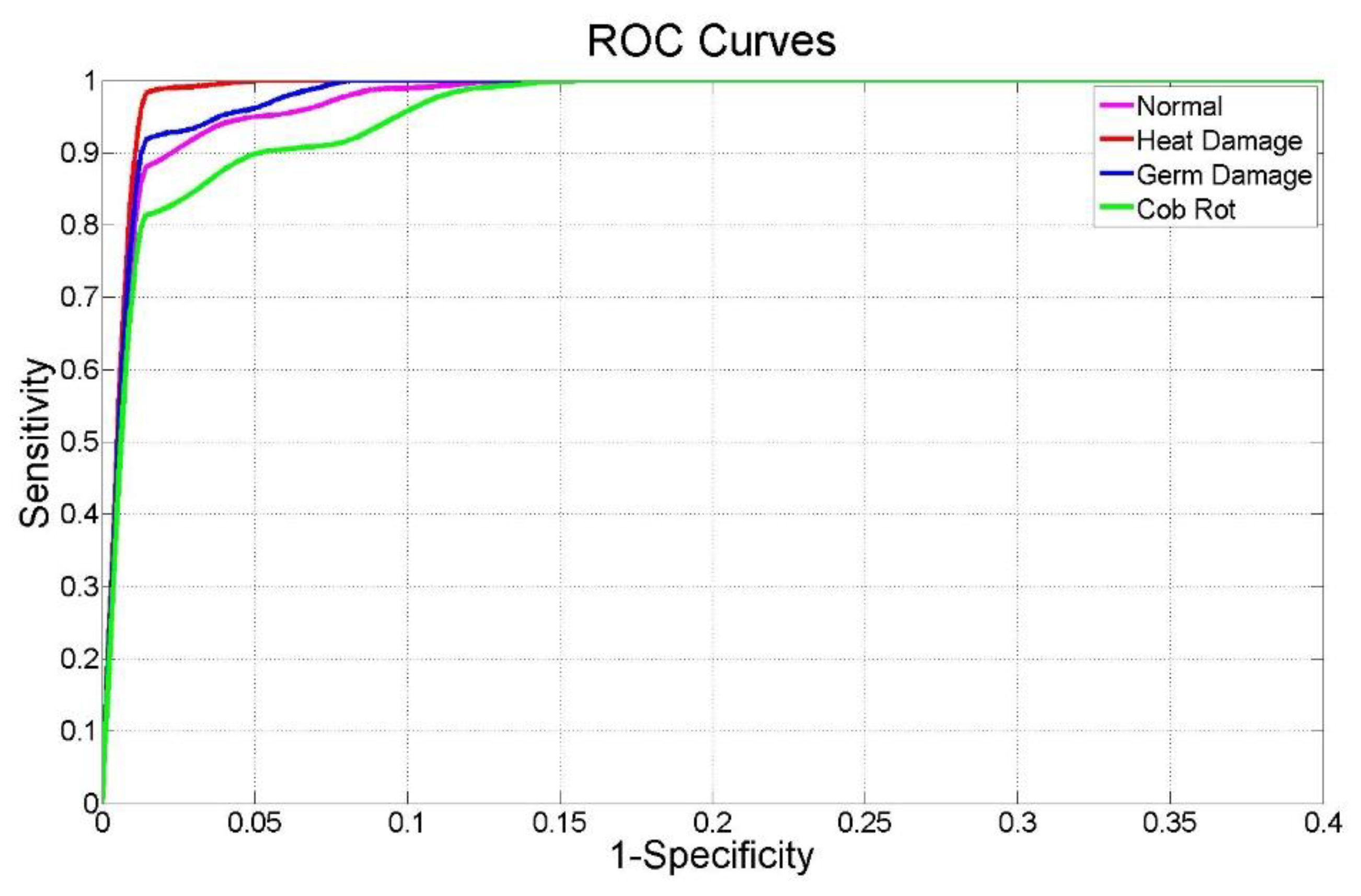

4.2. Results for Three Damaged and Normal Corns

4.3. Results and Discussion for Six Damaged and Normal Corns

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Category | Perimeter (px) | Area (px) | Circularity | Rectangularity | Elongation |
|---|---|---|---|---|---|
| Blue eye mold | 306.1493 | 6239 | 0.8365 | 0.7294 | 0.9681 |
| Blue eye mold | 273.0782 | 4556 | 0.7677 | 0.6537 | 0.9647 |
| Blue eye mold | 338.6346 | 7320 | 0.8022 | 0.7253 | 0.7500 |
| Cob rot | 335.8061 | 7691 | 0.8571 | 0.76987 | 0.8108 |
| Cob rot | 366.9605 | 8193 | 0.7646 | 0.6417 | 0.9825 |
| Cob rot | 318.6346 | 6684 | 0.8273 | 0.7193 | 0.9109 |
| Germ damage | 289.3919 | 5002 | 0.7506 | 0.7061 | 0.8366 |
| Germ damage | 300.6518 | 5918 | 0.82273 | 0.8189 | 0.7374 |
| Germ damage | 284.3503 | 5868 | 0.9120 | 0.8162 | 0.8681 |
| Heat damage | 323.5219 | 7131 | 0.8562 | 0.8178 | 0.7339 |
| Heat damage | 358.9777 | 8389 | 0.8181 | 0.7688 | 0.7097 |
| Heat damage | 335.7645 | 6619 | 0.7378 | 0.7580 | 0.6271 |
| Insect damage | 358.4924 | 8148 | 0.7967 | 0.7760 | 0.6720 |
| Insect damage | 359.4335 | 6171 | 0.6002 | 0.6128 | 0.8962 |
| Insect damage | 337.7645 | 7300 | 0.8041 | 0.7865 | 0.6555 |
| Surface mold | 312.4924 | 6240 | 0.8030 | 0.7212 | 0.8155 |
| Surface mold | 310.7939 | 6244 | 0.8123 | 0.8030 | 0.6667 |
| Surface mold | 322.0071 | 7061 | 0.8557 | 0.7930 | 0.7925 |
| Normal | 349.0193 | 7759 | 0.8004 | 0.7070 | 0.7881 |
| Normal | 350.3330 | 8058 | 0.8250 | 0.704 | 0.8220 |
| Normal | 332.5219 | 7459 | 0.8477 | 0.8222 | 0.7232 |
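
The circularity values listed above appear consistent with the isoperimetric quotient 4πA/P² computed from the listed perimeter P and area A. The sketch below checks this on the three blue eye mold rows. The formula is an assumption inferred from the tabulated numbers, not a definition stated in this text, and the function name `circularity` is purely illustrative.

```python
import math


def circularity(perimeter_px: float, area_px: float) -> float:
    """Isoperimetric quotient 4*pi*A / P**2 (assumed definition).

    The value is 1.0 for a perfect circle and smaller for less compact shapes.
    """
    return 4.0 * math.pi * area_px / perimeter_px ** 2


# (perimeter, area, circularity as listed) for the three blue eye mold samples
samples = [
    (306.1493, 6239, 0.8365),
    (273.0782, 4556, 0.7677),
    (338.6346, 7320, 0.8022),
]

for perimeter, area, listed in samples:
    computed = circularity(perimeter, area)
    print(f"computed={computed:.4f}  listed={listed:.4f}")
```

Under this assumed definition the computed values agree with the listed ones to within rounding. Rectangularity and elongation are not recomputed here because they depend on bounding-box or axis measurements that the table does not include.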

| No. | Category | Number of Training Samples | Number of Testing Samples |
|---|---|---|---|
| 1 | Blue eye mold | 70 | 30 |
| 2 | Cob rot | 70 | 30 |
| 3 | Germ damage | 70 | 30 |
| 4 | Heat damage | 70 | 30 |
| 5 | Insect damage | 70 | 30 |
| 6 | Surface mold | 70 | 30 |
| 7 | Normal | 70 | 30 |

| Real Class (rows) / Predicted Class (columns) | 1 | 2 | 3 | 4 | All Data | Classification Error by Class (%) | Overall Classification Accuracy (%) |
|---|---|---|---|---|---|---|---|
| 1 | 29 | 0 | 1 | 0 | 30 | 3.33 | 96.67 |
| 2 | 0 | 30 | 0 | 0 | 30 | 0.00 | |
| 3 | 0 | 0 | 30 | 0 | 30 | 0.00 | |
| 4 | 3 | 0 | 0 | 27 | 30 | 10.00 | |

| Class | Sensitivity (%) | Accuracy (%) | Specificity (%) | AUC |
|---|---|---|---|---|
| 1 | 96.67 | 96.67 | 98.86 | 0.9884 |
| 2 | 100.00 | 100.00 | 98.85 | 0.9945 |
| 3 | 100.00 | 99.17 | 100.00 | 0.9915 |
| 4 | 90.00 | 97.50 | 96.74 | 0.9833 |
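
The Sensitivity and Accuracy columns can be recomputed from the four-class confusion matrix with a one-vs-rest count of true and false positives and negatives. The sketch below assumes that rows hold the real class and columns the predicted class, which matches the row totals of 30 test samples per class. The helper name `per_class_metrics` is hypothetical, and Specificity and AUC are not recomputed here.

```python
# Four-class confusion matrix: rows = real class, columns = predicted class
# (orientation assumed from the row totals of 30 test samples per class).
confusion = [
    [29, 0, 1, 0],
    [0, 30, 0, 0],
    [0, 0, 30, 0],
    [3, 0, 0, 27],
]


def per_class_metrics(matrix):
    """Return (sensitivity %, one-vs-rest accuracy %) for each class."""
    total = sum(sum(row) for row in matrix)
    results = []
    for k, row in enumerate(matrix):
        tp = row[k]                              # class k predicted as k
        fn = sum(row) - tp                       # class k predicted as another class
        fp = sum(r[k] for r in matrix) - tp      # other classes predicted as k
        tn = total - tp - fn - fp                # everything else
        sensitivity = 100.0 * tp / (tp + fn)
        accuracy = 100.0 * (tp + tn) / total
        results.append((round(sensitivity, 2), round(accuracy, 2)))
    return results


metrics = per_class_metrics(confusion)
for class_id, (sensitivity, accuracy) in enumerate(metrics, start=1):
    print(f"class {class_id}: sensitivity={sensitivity:.2f}%  accuracy={accuracy:.2f}%")
```

With this orientation the recomputed sensitivity and accuracy match the tabulated values for all four classes.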

| Real Class (rows) / Predicted Class (columns) | 1 | 2 | 3 | 4 | 5 | 6 | 7 | All Data | Classification Error by Class (%) | Overall Classification Accuracy (%) |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 27 | 0 | 0 | 0 | 2 | 1 | 0 | 30 | 10.00 | 74.76 |
| 2 | 0 | 27 | 0 | 0 | 0 | 2 | 1 | 30 | 10.00 | |
| 3 | 0 | 2 | 26 | 1 | 0 | 0 | 1 | 30 | 13.33 | |
| 4 | 0 | 1 | 1 | 27 | 0 | 1 | 0 | 30 | 10.00 | |
| 5 | 0 | 2 | 6 | 5 | 16 | 1 | 0 | 30 | 46.67 | |
| 6 | 1 | 6 | 4 | 1 | 0 | 16 | 2 | 30 | 46.67 | |
| 7 | 0 | 4 | 1 | 1 | 1 | 5 | 18 | 30 | 40.00 | |
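
The overall accuracy and the per-class error of the seven-class experiment follow directly from the confusion matrix, and the largest off-diagonal entries show which class pairs are confused most often. The sketch below again assumes rows are real classes and columns are predicted classes; the variable names are illustrative only.

```python
# Seven-class confusion matrix: rows = real class, columns = predicted class
# (orientation assumed from the row totals of 30 test samples per class).
confusion7 = [
    [27, 0, 0, 0, 2, 1, 0],
    [0, 27, 0, 0, 0, 2, 1],
    [0, 2, 26, 1, 0, 0, 1],
    [0, 1, 1, 27, 0, 1, 0],
    [0, 2, 6, 5, 16, 1, 0],
    [1, 6, 4, 1, 0, 16, 2],
    [0, 4, 1, 1, 1, 5, 18],
]

n_classes = len(confusion7)
total = sum(sum(row) for row in confusion7)
correct = sum(confusion7[k][k] for k in range(n_classes))

# Overall accuracy: correctly classified samples over all test samples.
overall_accuracy = round(100.0 * correct / total, 2)

# Per-class error: share of each real class assigned to a different class.
class_errors = [
    round(100.0 * (sum(row) - row[k]) / sum(row), 2)
    for k, row in enumerate(confusion7)
]

# Off-diagonal cells as (real class, predicted class, count), largest first.
confusions = sorted(
    (
        (i + 1, j + 1, confusion7[i][j])
        for i in range(n_classes)
        for j in range(n_classes)
        if i != j and confusion7[i][j] > 0
    ),
    key=lambda cell: -cell[2],
)

print("overall accuracy (%):", overall_accuracy)
print("per-class error (%):", class_errors)
print("two largest confusions:", confusions[:2])
```

The two largest off-diagonal cells are real class 5 predicted as class 3 and real class 6 predicted as class 2, with six samples each.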

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Li, X.; Dai, B.; Sun, H.; Li, W. Corn Classification System based on Computer Vision. Symmetry 2019, 11, 591. https://doi.org/10.3390/sym11040591
