Image Denoising via Improved Dictionary Learning with Global Structure and Local Similarity Preservations

Abstract

1. Introduction

- We developed an image denoising approach that possesses the advantages of both reconstruction-based and learning-based methods. A practical two-stage optimization solution is proposed for its implementation.

- We introduced a sparse term that approximately reduces multiplicative noise to additive noise. Consequently, our method is capable of removing both additive and multiplicative noise from a noisy image.

- We used the Laplacian Schatten norm to capture edge information and preserve small details that may be ignored by learning-based methods. Hence, both global and local information can be preserved in our model for image denoising.

- We established a new dictionary learning method that combines the Method of Optimal Directions (MOD) with Approximate K-SVD (AK-SVD).

2. Proposed Method

2.1. Formulation

- Global Structure Reconstruction: A high-pass filter emphasizes the fine details of an image by enhancing content with a high intensity gradient. After high-pass filtering, a clean image retains the high-frequency content that represents global structures, while low-frequency content is eliminated, making the filtered image low-rank. However, since noise usually has high-frequency components too, it may remain together with the structural information after high-pass filtering. For each pixel, noise usually does not depend on neighboring pixels, whereas pixels on global structures such as edges and textures are correlated with their neighboring pixels. To differentiate noise from structural pixels, we minimize the rank of the high-pass filtered image. As the Schatten norm can effectively approximate the rank [24], we use the Schatten norm of the high-pass filtered image to capture the underlying structures.

  Let $X \in \mathbb{R}^{n_1 \times n_2}$ be a matrix with singular value decomposition (SVD) $X = U \Sigma V^{T}$, where $U$ and $V$ are unitary matrices consisting of the singular vectors of $X$, and $\Sigma$ is a rectangular diagonal matrix consisting of the singular values of $X$. Then the Schatten p-norm ($S_p$ norm) of $X$ is defined as

  $$\|X\|_{S_p} = \Big( \sum_{k=1}^{\min(n_1, n_2)} \sigma_k^{p} \Big)^{1/p},$$

  where $p$ is the order of the Schatten norm and $\sigma_k$ is the kth singular value of $X$. The family of Schatten norms includes three common matrix norms: the nuclear norm ($p = 1$), the Frobenius norm ($p = 2$), and the spectral norm ($p = \infty$).

  In this paper, to high-pass filter the image, we adopt an 8-neighborhood Laplacian operator defined as

  $$L = \begin{bmatrix} 1 & 1 & 1 \\ 1 & -8 & 1 \\ 1 & 1 & 1 \end{bmatrix}.$$

  This Laplacian filter captures the 8-directional connectedness of each pixel and thus the structures of the image as well. By filtering the image with this Laplacian filter, we obtain a low-rank filtered image $L(X)$ containing the global structures of the image. Hence, it is desirable to minimize the rank of $L(X)$ to ensure the low-rankness of the global structures. To achieve this goal, we adopt the Schatten p-norm defined above, applied to the Laplacian-filtered image (the Laplacian Schatten p-norm), as a rank approximation. By minimizing $\|L(X)\|_{S_p}$, the global structures of the image can be well preserved.

  Because multiplicative noise depends on the image content, it may remain mixed with the clean image after minimizing the Laplacian Schatten norm of the noisy image. To alleviate the effect of multiplicative noise, we introduce a sparse matrix $S$, which can also capture outliers in the case of additive noise. In the now-standard way, we minimize the $\ell_1$ norm of $S$ to obtain sparsity. In summary, our model is as follows:

  $$Y = X + S + E,$$

  where $Y$ is the noisy image, $X$ is the clean image, $S$ denotes a matrix containing globally sparse noise, and $E$ is the remaining noise matrix. For convenience of optimization, we use the Frobenius norm as a loss function to measure the strength of $E$. Combining these terms, we formulate an objective function to preserve the global structure as follows:

  $$\min_{X, S} \; \|L(X)\|_{S_p} + \lambda \|S\|_1 + \gamma \|Y - X - S\|_F^2,$$

  where $\|\cdot\|_1$ represents the $\ell_1$ matrix norm, and $\lambda$ and $\gamma$ are balancing parameters.

- Local Similarity Preservation: We define the local similarity of an image using its patches of size $\sqrt{n} \times \sqrt{n}$ pixels. We define an operator $R_i$ that extracts the ith patch from $X$ and orders it as a column vector, i.e., $x_i = R_i X \in \mathbb{R}^{n}$. To preserve the local similarity of image patches, we exploit dictionary learning. Define a dictionary $D \in \mathbb{R}^{n \times K}$, where $K$ is the number of dictionary bases. Each column of $D$ is a basis, i.e., $d_k \in \mathbb{R}^{n}$, and the dictionary is redundant ($K > n$). The local similarity suggests that every patch in the clean image may be sparsely represented over this dictionary. The sparse representation vector is obtained by solving the following constrained minimization problem:

  $$\hat{\alpha}_i = \arg\min_{\alpha_i} \|\alpha_i\|_0 \quad \text{s.t.} \quad \|D \alpha_i - x_i\|_2^2 \leq T,$$

  or alternatively by the MAP estimator

  $$\hat{\alpha}_i = \arg\min_{\alpha_i} \|D \alpha_i - x_i\|_2^2 + \mu \|\alpha_i\|_0,$$

  where $\hat{\alpha}_i$ is the sparse representation vector of patch $x_i$, $\|\cdot\|_0$ represents the $\ell_0$ norm, and $\mu$ and $T$ are parameters that control the sparsity of the representation and the error of the sparse coding, respectively.
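The patch extraction and error-constrained sparse coding described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the authors' implementation: the function names `extract_patch` and `omp`, the greedy orthogonal matching pursuit solver, and the argument `error_bound` (playing the role of the error parameter T) are all choices made here, and the dictionary columns are assumed to have unit norm.

```python
import numpy as np

def extract_patch(image, top, left, size):
    """Cut a size-by-size patch and flatten it into a vector (hypothetical helper)."""
    return image[top:top + size, left:left + size].astype(float).reshape(-1)

def omp(dictionary, signal, error_bound):
    """Greedy orthogonal matching pursuit (assumed solver, not from the source).

    Adds atoms one at a time until the squared residual norm is at most
    `error_bound` or no further atom can be added.
    """
    signal = np.asarray(signal, dtype=float)
    n_rows, n_atoms = dictionary.shape
    coeffs = np.zeros(n_atoms)
    residual = signal.copy()
    support = []
    while residual @ residual > error_bound and len(support) < min(n_rows, n_atoms):
        correlations = np.abs(dictionary.T @ residual)
        if support:
            correlations[support] = 0.0  # never select the same atom twice
        best = int(np.argmax(correlations))
        if correlations[best] <= 1e-12:
            break  # residual is orthogonal to every remaining atom
        support.append(best)
        sub = dictionary[:, support]
        # Re-fit all selected coefficients by least squares.
        sol, *_ = np.linalg.lstsq(sub, signal, rcond=None)
        residual = signal - sub @ sol
        coeffs[:] = 0.0
        coeffs[support] = sol
    return coeffs

# Toy redundant dictionary: four unit vectors plus one normalized constant atom.
toy_dictionary = np.hstack([np.eye(4), np.full((4, 1), 0.5)])
toy_signal = np.array([3.0, 0.0, -2.0, 0.0])
tight_code = omp(toy_dictionary, toy_signal, error_bound=1e-10)
loose_code = omp(toy_dictionary, toy_signal, error_bound=5.0)
```

A looser error bound yields a sparser code: with `error_bound=5.0` the toy signal is coded with a single atom, while the tight bound needs two.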

2.2. Practical Solution for Optimization

2.2.1. Global Structure Reconstruction Stage
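As a rough illustration of the quantity this stage works with, the Laplacian Schatten p-norm from Section 2.1 can be evaluated directly with NumPy. The sketch below is an assumption-laden example rather than the authors' solver: the kernel sign convention, the edge-replicating border handling, and the function names are all chosen here for illustration (the sign of the kernel does not affect the singular values, and therefore does not affect the norm).

```python
import numpy as np

# 8-neighborhood Laplacian kernel. The sign convention is an assumption.
LAPLACIAN_8 = np.array([[1.0, 1.0, 1.0],
                        [1.0, -8.0, 1.0],
                        [1.0, 1.0, 1.0]])

def laplacian_filter(image):
    """Filter a 2-D image with the 8-neighborhood Laplacian.

    Borders are handled by replicating edge pixels (an assumption).
    """
    padded = np.pad(image.astype(float), 1, mode="edge")
    rows, cols = image.shape
    out = np.zeros((rows, cols))
    for di in range(3):
        for dj in range(3):
            out += LAPLACIAN_8[di, dj] * padded[di:di + rows, dj:dj + cols]
    return out

def schatten_norm(matrix, p):
    """Schatten p-norm: the l_p norm of the vector of singular values."""
    singular_values = np.linalg.svd(matrix, compute_uv=False)
    if np.isinf(p):
        return float(singular_values.max())
    return float((singular_values ** p).sum() ** (1.0 / p))

def laplacian_schatten_norm(image, p=1):
    """Schatten p-norm of the Laplacian-filtered image."""
    return schatten_norm(laplacian_filter(image), p)
```

A constant image has no edges, so its filtered version is all zeros and the norm vanishes; adding independent noise to every pixel raises the value, which is the behavior the regularizer penalizes.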

2.2.2. Dictionary Learning Stage
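For orientation, the dictionary update of the plain Method of Optimal Directions (MOD) can be written as a single least-squares step. The sketch below shows only that generic MOD step, not the combined MOD and AK-SVD scheme proposed in this paper; the function name `mod_update`, the column renormalization, and the matching rescaling of the codes are assumptions made for illustration.

```python
import numpy as np

def mod_update(patches, codes, eps=1e-12):
    """One generic MOD step (hypothetical helper, not the paper's combined method).

    patches: (n, N) matrix whose columns are vectorized patches.
    codes:   (K, N) matrix of sparse codes, held fixed during the update.
    Returns the least-squares dictionary with unit-norm atoms and codes
    rescaled so that the product dictionary @ codes is unchanged.
    """
    # Least-squares minimizer of ||patches - D @ codes||_F over D.
    dictionary = patches @ np.linalg.pinv(codes)
    norms = np.linalg.norm(dictionary, axis=0)
    norms[norms < eps] = 1.0  # leave unused (all-zero) atoms untouched
    return dictionary / norms, codes * norms[:, None]
```

In a full learning loop this step would alternate with a sparse coding step over all patches; AK-SVD instead refreshes atoms one at a time, which is the part this sketch omits.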

| Algorithm 1 The Laplacian Schatten p-norm and Learning Algorithm (LSLA-p). |
|---|
| Require: Noisy image Y; penalty parameter; smoothing parameter; stopping tolerance. |
| Ensure: Clean image X. |

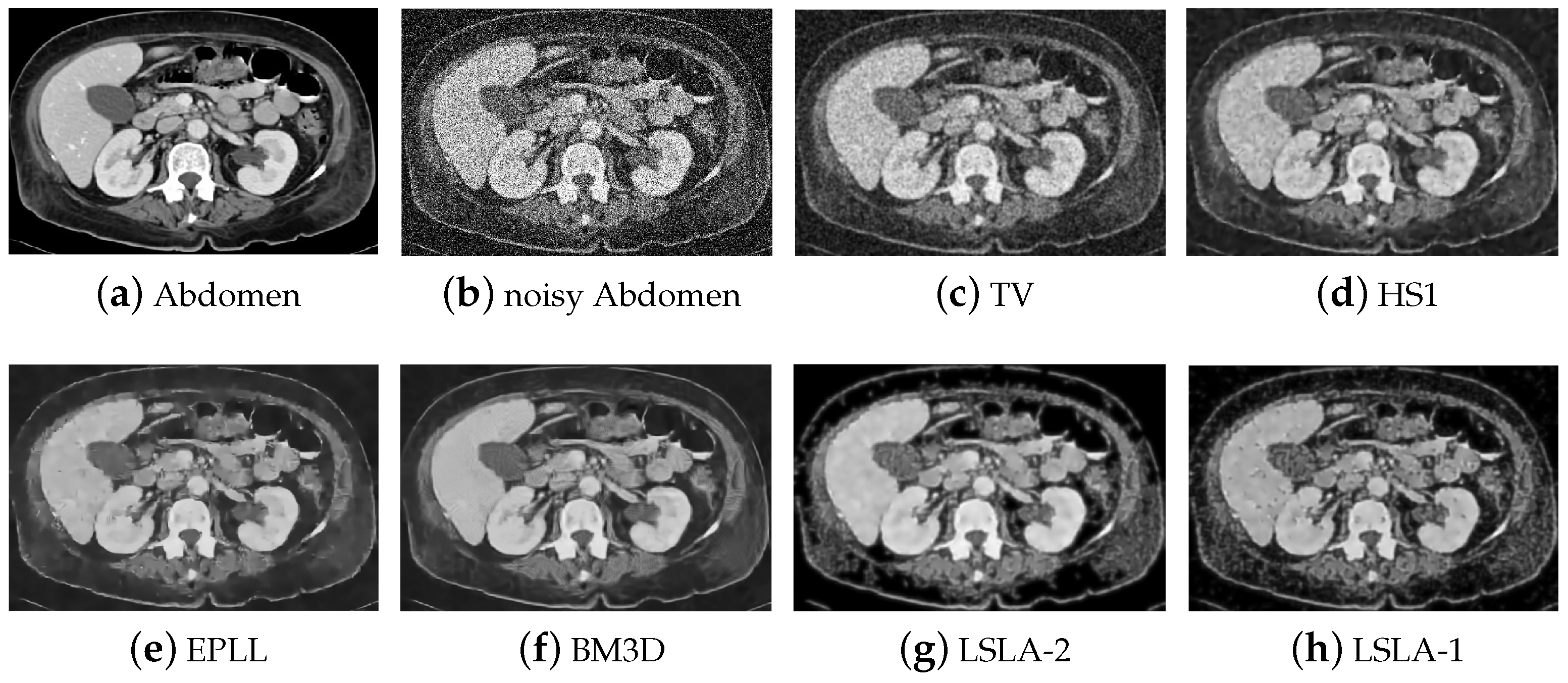

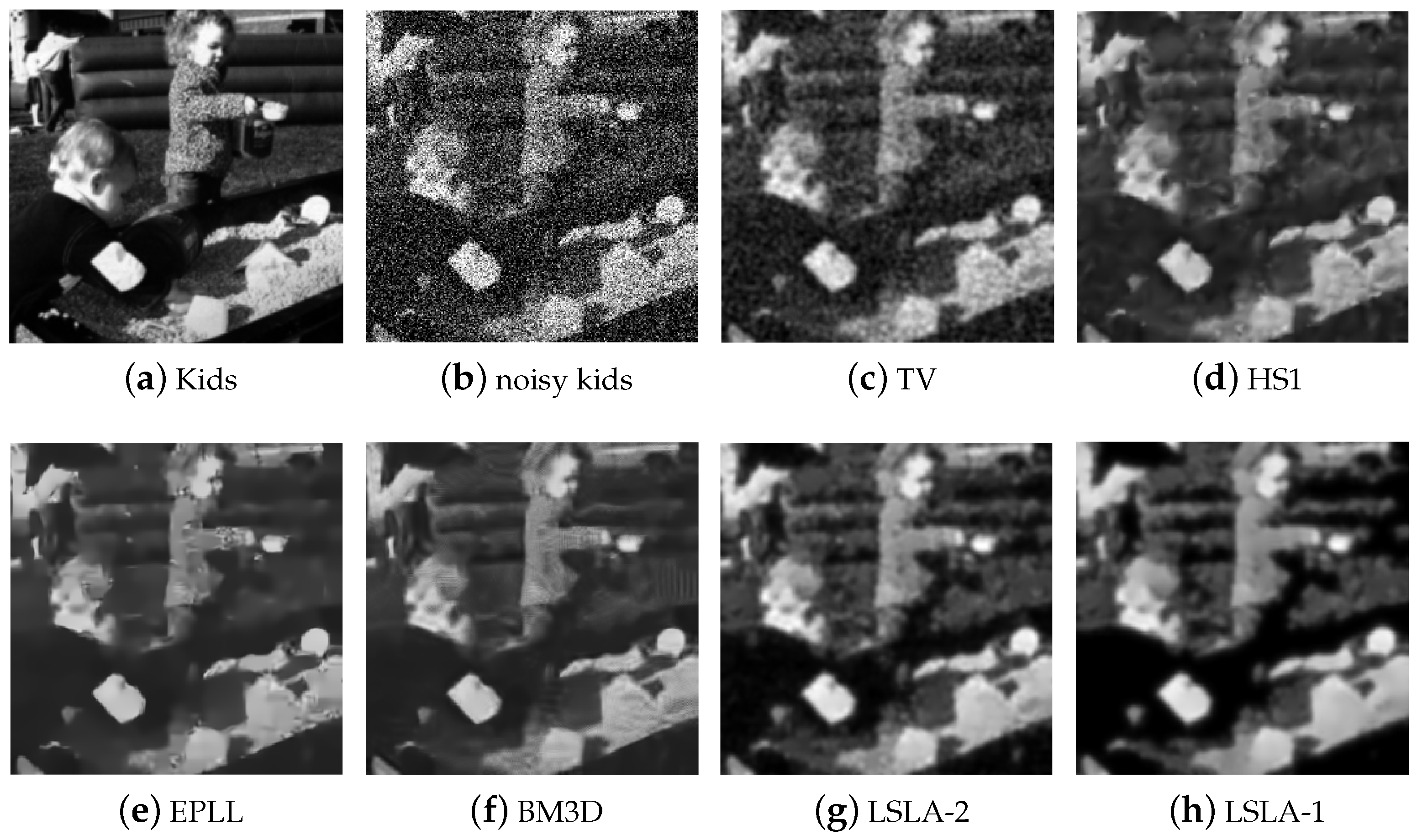

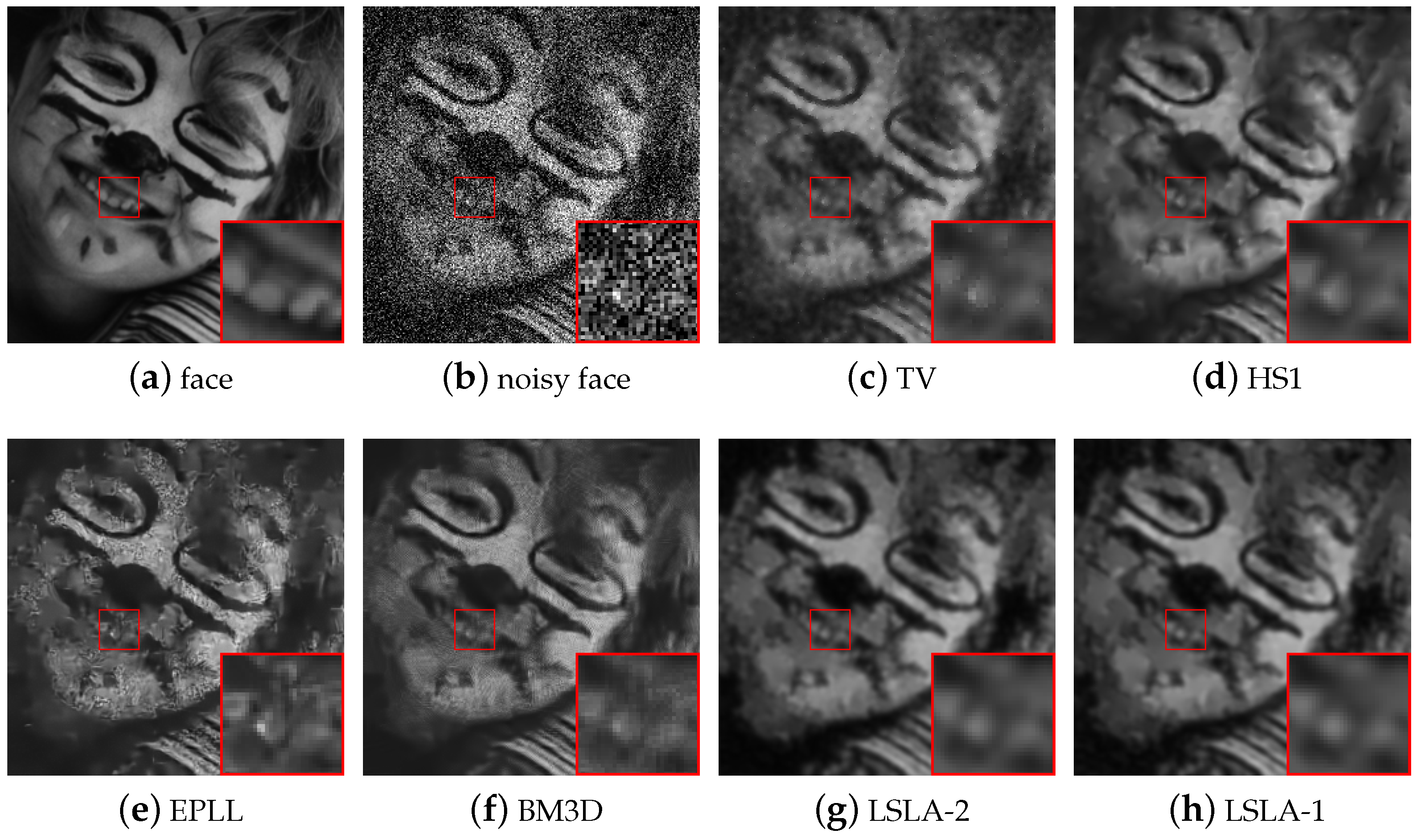

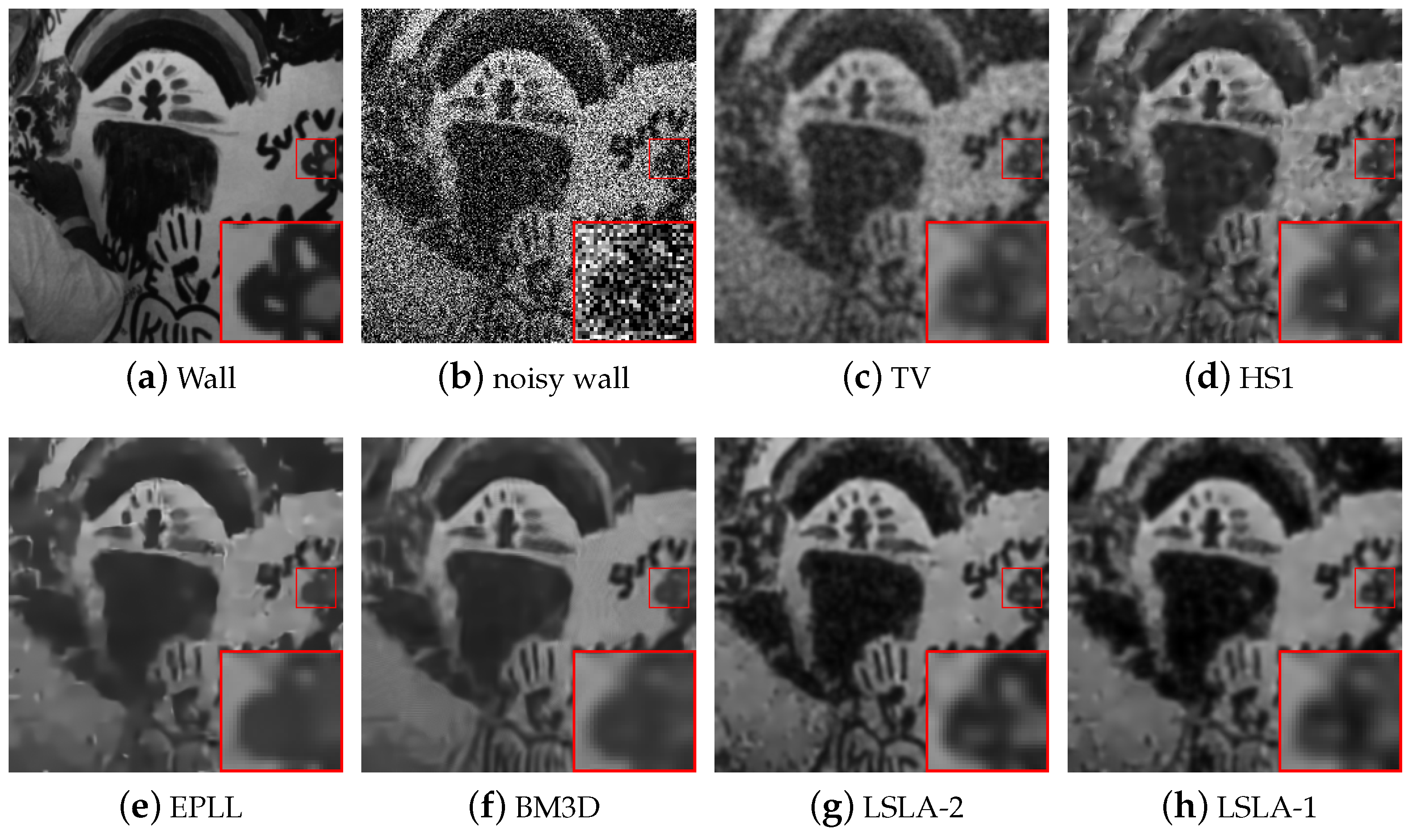

3. Experiments

3.1. Parameter Setting

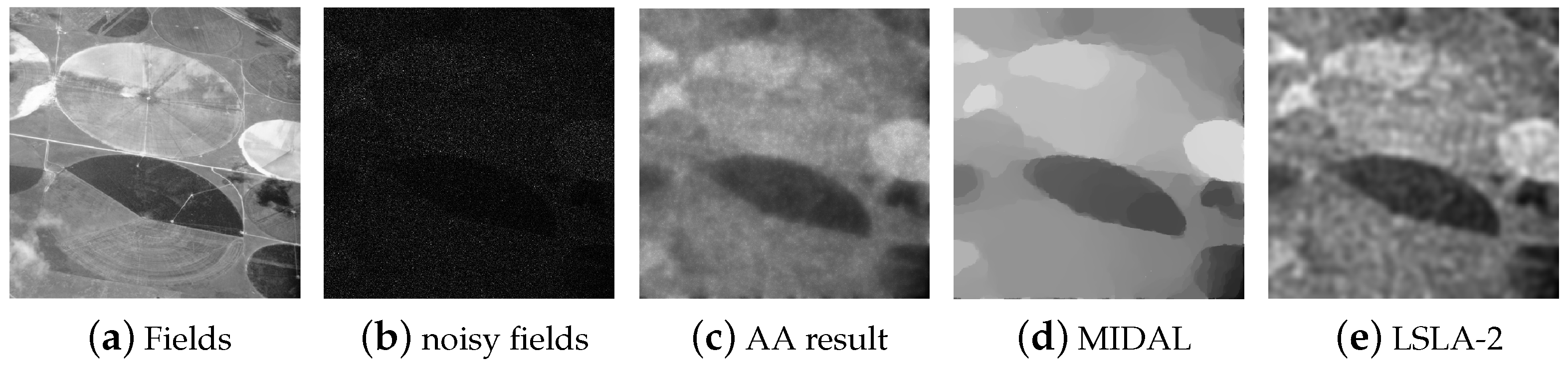

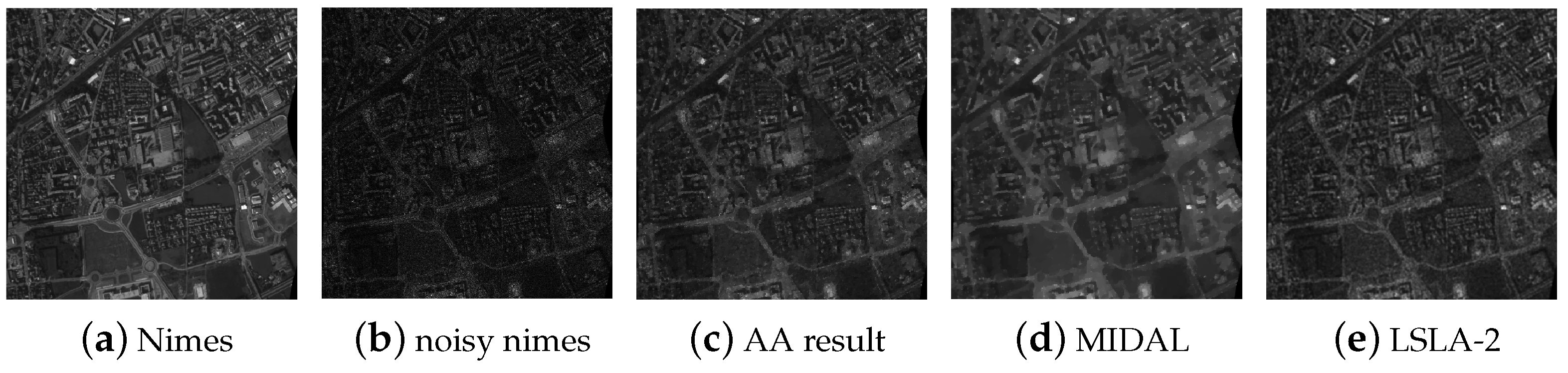

3.2. Performance and Analysis

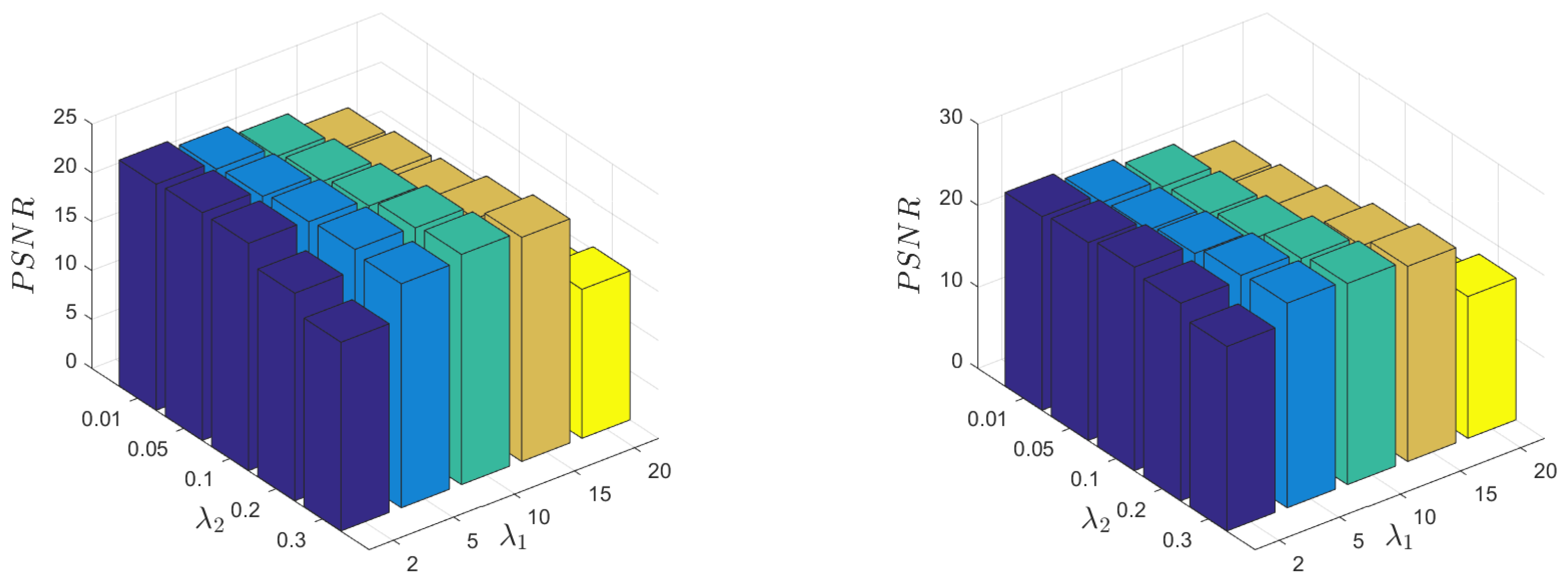

3.3. Parameter Sensitivity

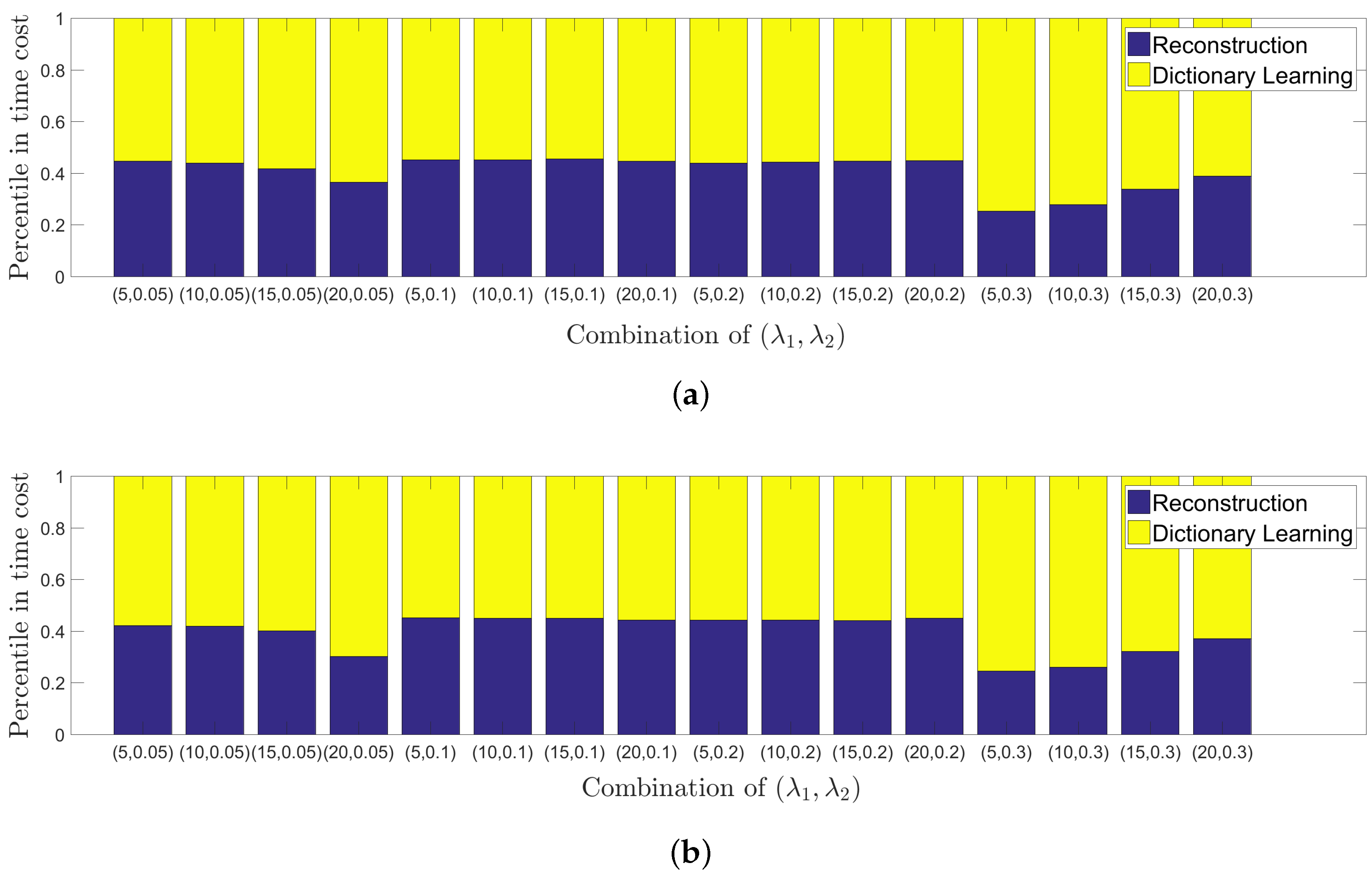

3.4. Time Comparison and Analysis

3.5. Discussion

4. Conclusions

Author Contributions

Acknowledgments

Conflicts of Interest


Appendix A

References

- Jabarullah, B.M.; Saxena, S.; Babu, D.K. Survey on Noise Removal in Digital Images. IOSR J. Comput. Eng. 2012, 6, 45–51.

- Chouzenoux, E.; Jezierska, A.; Pesquet, J.C.; Talbot, H. A Convex Approach for Image Restoration with Exact Poisson-Gaussian Likelihood. SIAM J. Imaging Sci. 2015, 8, 2662–2682.

- Peng, C.; Cheng, J.; Cheng, Q. A Supervised Learning Model for High-Dimensional and Large-Scale Data. ACM Trans. Intell. Syst. Technol. 2017, 8, 30.

- Peng, C.; Kang, Z.; Cheng, Q. Subspace Clustering via Variance Regularized Ridge Regression. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 682–691.

- Kang, Z.; Peng, C.; Cheng, Q. Kernel-driven similarity learning. Neurocomputing 2017, 267, 210–219.

- Pham, D.L.; Xu, C.; Prince, J.L. Current methods in medical image segmentation. Annu. Rev. Biomed. Eng. 2000, 2, 315–337.

- Buades, A.; Coll, B.; Morel, J.M. A Non-Local Algorithm for Image Denoising. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, San Diego, CA, USA, 20–25 June 2005; pp. 60–65.

- Chatterjee, P.; Milanfar, P. Is denoising dead? IEEE Trans. Image Process. 2010, 19, 895–911.

- Rudin, L.I.; Osher, S.; Fatemi, E. Nonlinear total variation based noise removal algorithms. Phys. D Nonlinear Phenom. 1992, 60, 259–268.

- Dabov, K.; Foi, A.; Katkovnik, V.; Egiazarian, K. Image Denoising by Sparse 3-D Transform-Domain Collaborative Filtering. IEEE Trans. Image Process. 2007, 16, 2080–2095.

- Dong, W.; Shi, G.; Li, X. Nonlocal Image Restoration With Bilateral Variance Estimation: A Low-Rank Approach. IEEE Trans. Image Process. 2013, 22, 700–711.

- Zuo, W.; Zhang, L.; Song, C.; Zhang, D. Texture Enhanced Image Denoising via Gradient Histogram Preservation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Portland, OR, USA, 23–28 June 2013; pp. 1203–1210.

- Fergus, R.; Singh, B.; Hertzmann, A.; Roweis, S.T.; Freeman, W.T. Removing camera shake from a single photograph. ACM Trans. Graph. 2006, 25, 787–794.

- Han, Y.; Xu, C.; Baciu, G.; Li, M.; Islam, M.R. Cartoon and texture decomposition-based color transfer for fabric images. IEEE Trans. Multimed. 2017, 19, 80–92.

- Lefkimmiatis, S.; Ward, J.P.; Unser, M. Hessian Schatten-Norm Regularization for Linear Inverse Problems. IEEE Trans. Image Process. 2013, 22, 1873–1888.

- Harmeling, S. Image Denoising: Can Plain Neural Networks Compete with BM3D? In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Providence, RI, USA, 16–21 June 2012; pp. 2392–2399.

- Zoran, D.; Weiss, Y. From Learning Models of Natural Image Patches to Whole Image Restoration. In Proceedings of the IEEE International Conference on Computer Vision, Barcelona, Spain, 6–13 November 2011; pp. 479–486.

- Roth, S.; Black, M.J. Fields of Experts: A Framework for Learning Image Priors. Int. J. Comput. Vis. 2009, 82, 205.

- Elad, M. Sparse and Redundant Representations: From Theory to Applications in Signal and Image Processing; Springer: Berlin/Heidelberg, Germany, 2010.

- Elad, M.; Aharon, M. Image denoising via sparse and redundant representations over learned dictionaries. IEEE Trans. Image Process. 2006, 15, 3736–3745.

- Mairal, J.; Bach, F.; Ponce, J. Sparse modeling for image and vision processing. Found. Trends Comput. Graph. Vis. 2014, 8, 85–283.

- Cai, S.; Weng, S.; Luo, B.; Hu, D.; Yu, S.; Xu, S. A Dictionary-Learning Algorithm based on Method of Optimal Directions and Approximate K-SVD. In Proceedings of the 35th Chinese Control Conference (CCC), Chengdu, China, 27–29 July 2016; pp. 6957–6961.

- Lin, Z.; Liu, R.; Su, Z. Linearized alternating direction method with adaptive penalty for low-rank representation. In Proceedings of the Advances in Neural Information Processing Systems, Granada, Spain, 12–14 December 2011; pp. 612–620.

- Yu, J.; Gao, X.; Tao, D.; Li, X.; Zhang, K. A unified learning framework for single image super-resolution. IEEE Trans. Neural Netw. Learn. Syst. 2014, 25, 780–792.

- Chen, S.S.; Donoho, D.L.; Saunders, M.A. Atomic Decomposition by Basis Pursuit; Society for Industrial and Applied Mathematics: Philadelphia, PA, USA, 1998.

- Cotter, S.F.; Rao, B.D. Sparse channel estimation via matching pursuit with application to equalization. IEEE Trans. Wirel. Commun. 2002, 50, 374–377.

- Tropp, J.A.; Gilbert, A.C. Signal Recovery from Random Measurements via Orthogonal Matching Pursuit. IEEE Trans. Inf. Theory 2007, 53, 4655–4666.

- Gorodnitsky, I.F.; Rao, B.D. Sparse signal reconstruction from limited data using FOCUSS: A re-weighted minimum norm algorithm. IEEE Trans. Signal Process. 2002, 45, 600–616.

- Aharon, M.; Elad, M.; Bruckstein, A. K-SVD: An algorithm for designing overcomplete dictionaries for sparse representation. IEEE Trans. Signal Process. 2006, 54, 4311–4322.

- Golub, G.H.; Van Loan, C.F. Matrix Computations; JHU Press: Baltimore, MD, USA, 2012; Volume 3.

- Rubinstein, R.; Zibulevsky, M.; Elad, M. Efficient Implementation of the K-SVD Algorithm using Batch Orthogonal Matching Pursuit. Cs Tech. 2008, 40, 1–15.

- Smith, L.N.; Elad, M. Improving dictionary learning: Multiple dictionary updates and coefficient reuse. IEEE Signal Process. Lett. 2013, 20, 79–82.

- Lefkimmiatis, S.; Unser, M. Poisson image reconstruction with Hessian Schatten-norm regularization. IEEE Trans. Image Process. 2013, 22, 4314–4327.

- Combettes, P.L.; Pesquet, J.C. Image restoration subject to a total variation constraint. IEEE Trans. Image Process. 2004, 13, 1213–1222.

- Bioucas-Dias, J.M.; Figueiredo, M.A. Multiplicative noise removal using variable splitting and constrained optimization. IEEE Trans. Image Process. 2010, 19, 1720–1730.

- Aubert, G.; Aujol, J.F. A variational approach to removing multiplicative noise. SIAM J. Appl. Math. 2008, 68, 925–946.

| Parameter | Symbol | Empirical Value for | Empirical Value for |
|---|---|---|---|
| Penalty parameter | | 10 | 10 |
| Penalty parameter | | 0.1 | 0.1 |
| Penalty parameter | | | |
| Smoothing parameter | | 0.12 | 0 |
| Stopping tolerance | | 0.001 | 0.001 |
| Stopping tolerance | | 0.001 | 0.001 |

| Method | Metric | Face | Face | Face | Kids | Kids | Kids |
|---|---|---|---|---|---|---|---|
| TV | PSNR | 21.47 | 20.44 | 19.02 | 23.06 | 21.03 | 19.49 |
| | SSIM | 0.6915 | 0.6435 | 0.6131 | 0.6790 | 0.5932 | 0.5667 |
| HS | PSNR | 22.13 | 20.92 | 19.42 | 24.03 | 21.60 | 19.96 |
| | SSIM | 0.7417 | 0.6992 | 0.6616 | 0.7409 | 0.6706 | 0.6333 |
| EPLL | PSNR | 22.02 | 20.85 | 19.30 | 24.02 | 21.62 | 19.39 |
| | SSIM | 0.7320 | 0.7030 | 0.6636 | 0.7531 | 0.6817 | 0.6366 |
| BM3D | PSNR | 22.80 | 20.76 | 20.05 | 24.52 | 22.08 | 20.40 |
| | SSIM | 0.7536 | 0.6679 | 0.6765 | 0.7603 | 0.6882 | 0.6458 |
| LSLA-2 | PSNR | 23.25 | 22.50 | 20.95 | 24.69 | 23.03 | 23.68 |
| | SSIM | 0.7679 | 0.7396 | 0.6851 | 0.7555 | 0.7052 | 0.6578 |
| LSLA-1 | PSNR | 23.48 | 22.05 | 21.19 | 24.59 | 23.29 | 22.45 |
| | SSIM | 0.7694 | 0.7217 | 0.6912 | 0.7423 | 0.7063 | 0.6825 |

| Method | Metric | Wall | Wall | Wall | Abdomen | Abdomen | Abdomen |
|---|---|---|---|---|---|---|---|
| TV | PSNR | 20.70 | 18.19 | 16.80 | 22.57 | 20.06 | 18.50 |
| | SSIM | 0.6521 | 0.5601 | 0.4978 | 0.5579 | 0.4940 | 0.4697 |
| HS | PSNR | 21.33 | 18.54 | 17.03 | 23.29 | 20.52 | 18.77 |
| | SSIM | 0.7043 | 0.5975 | 0.5460 | 0.6384 | 0.5592 | 0.5300 |
| EPLL | PSNR | 21.36 | 18.38 | 16.76 | 23.51 | 20.64 | 18.84 |
| | SSIM | 0.7254 | 0.6254 | 0.5698 | 0.6517 | 0.5915 | 0.5440 |
| BM3D | PSNR | 21.97 | 19.04 | 17.42 | 24.14 | 21.26 | 19.50 |
| | SSIM | 0.7421 | 0.6410 | 0.5838 | 0.6700 | 0.6026 | 0.5603 |
| LSLA-2 | PSNR | 22.28 | 20.11 | 19.22 | 25.06 | 22.68 | 21.47 |
| | SSIM | 0.7598 | 0.6730 | 0.6477 | 0.7530 | 0.6680 | 0.6237 |
| LSLA-1 | PSNR | 22.51 | 20.31 | 19.12 | 24.97 | 22.72 | 21.37 |
| | SSIM | 0.7675 | 0.6736 | 0.6311 | 0.7462 | 0.6663 | 0.6096 |
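For readers who want to reproduce the PSNR figures reported in these tables on their own images, the standard definition is short enough to sketch. The peak value of 255 (8-bit images) is an assumption, since the text here does not state the intensity range used, and the function name `psnr` is chosen for illustration.

```python
import numpy as np

def psnr(reference, estimate, peak=255.0):
    """Peak signal-to-noise ratio in dB; `peak` = 255 assumes 8-bit images."""
    diff = np.asarray(reference, dtype=float) - np.asarray(estimate, dtype=float)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(peak ** 2 / mse))
```

Higher values indicate a denoised image closer to the clean reference. SSIM, the second reported metric, compares local structure rather than pixel-wise error and is not covered by this sketch.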

| Image | Noise Level | AA PSNR | AA SSIM | MIDAL PSNR | MIDAL SSIM | LSLA-2 PSNR | LSLA-2 SSIM |
|---|---|---|---|---|---|---|---|
| Nimes | | 22.40 | 0.5378 | 22.68 | 0.5041 | 23.64 | 0.5942 |
| | | 25.59 | 0.7572 | 25.36 | 0.7537 | 26.50 | 0.7757 |
| | | 27.53 | 0.8511 | 27.88 | 0.8910 | 28.51 | 0.8625 |
| Fields | | 24.38 | 0.3369 | 25.13 | 0.3380 | 25.27 | 0.3505 |
| | | 26.43 | 0.4230 | 27.40 | 0.4024 | 27.46 | 0.4622 |
| | | 26.77 | 0.4464 | 28.27 | 0.5371 | 28.64 | 0.5421 |

| Algorithm | Face, Time (s) | Kids, Time (s) | Wall, Time (s) | Abdomen, Time (s) |
|---|---|---|---|---|
| BM3D | 1.0284 | 1.0336 | 1.1008 | 3.7333 |
| HS1 | 16.9454 | 18.0842 | 17.5324 | 37.0296 |
| EPLL | 146.2443 | 78.3126 | 146.561 | 502.4728 |
| TV | 0.6696 | 0.6841 | 0.6538 | 1.3947 |
| LSLA-2 | 124.036 | 185.6624 | 122.9288 | 404.6401 |
| LSLA-1 | 169.4185 | 139.1726 | 178.3549 | 438.5715 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Cai, S.; Kang, Z.; Yang, M.; Xiong, X.; Peng, C.; Xiao, M. Image Denoising via Improved Dictionary Learning with Global Structure and Local Similarity Preservations. Symmetry 2018, 10, 167. https://doi.org/10.3390/sym10050167
