Monthly Temperature Prediction in the Han River Basin, South Korea, Using Long Short-Term Memory (LSTM) and Multiple Linear Regression (MLR) Models

Abstract

1. Introduction

2. Materials and Methods

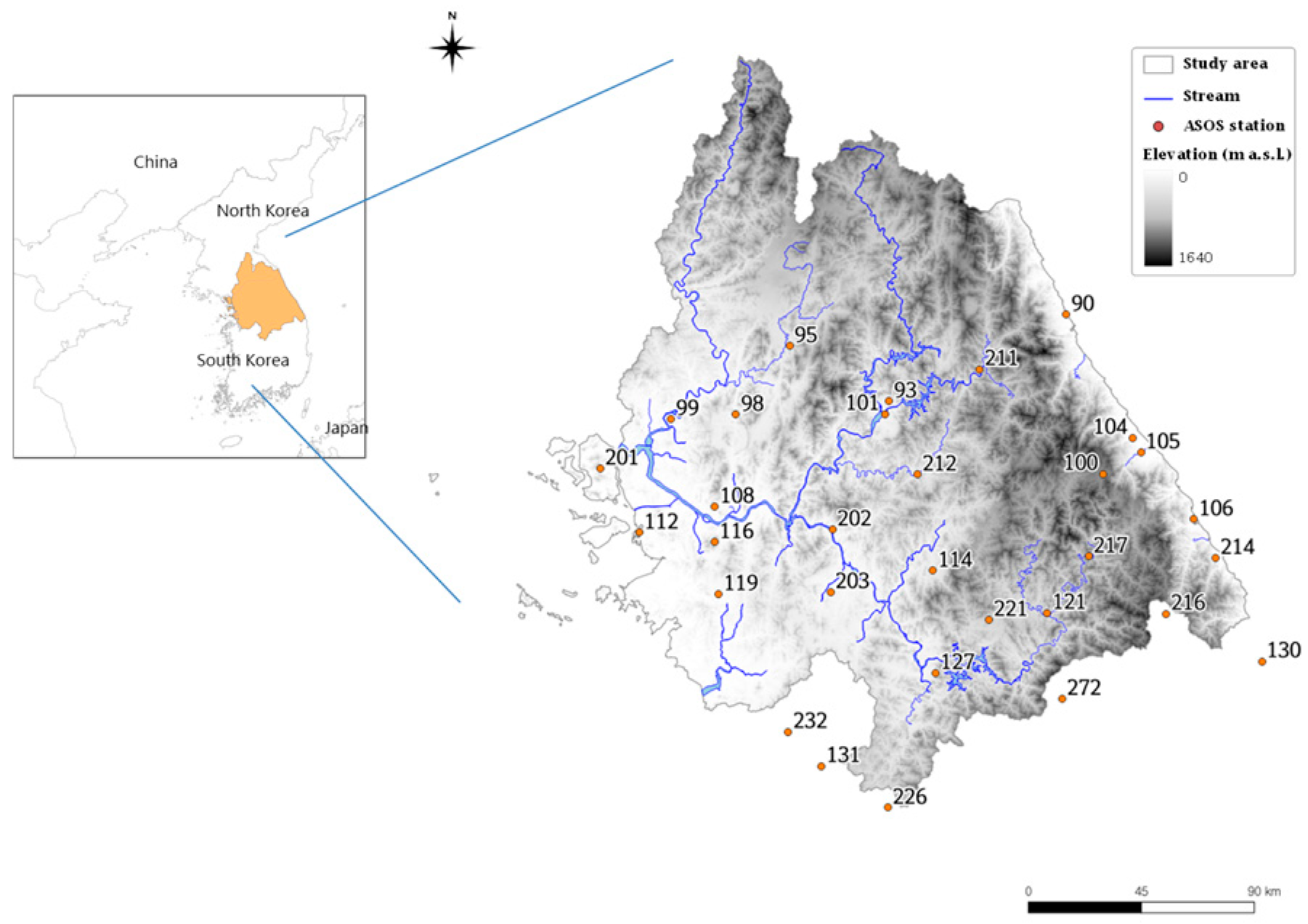

2.1. Study Area and Data

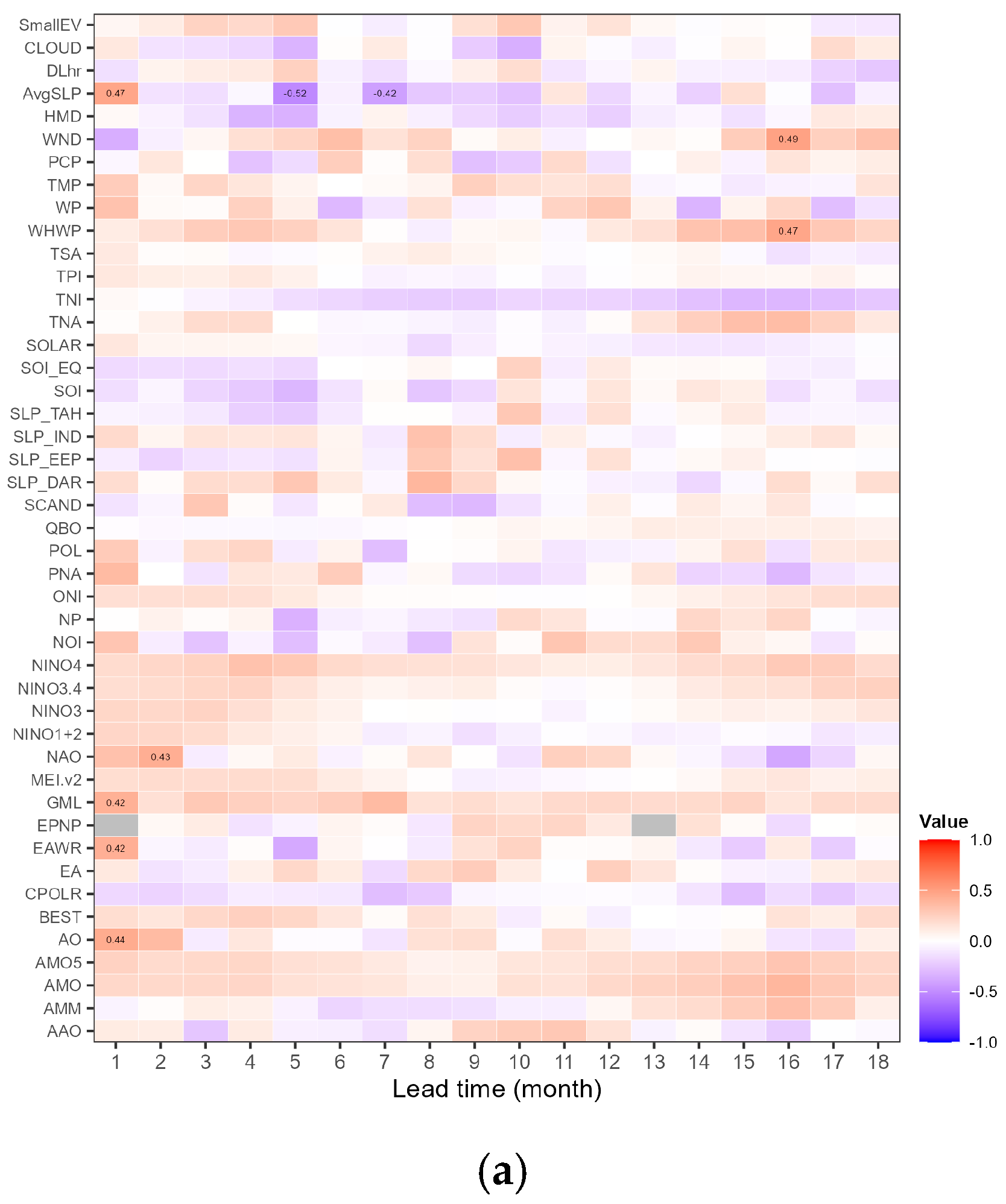

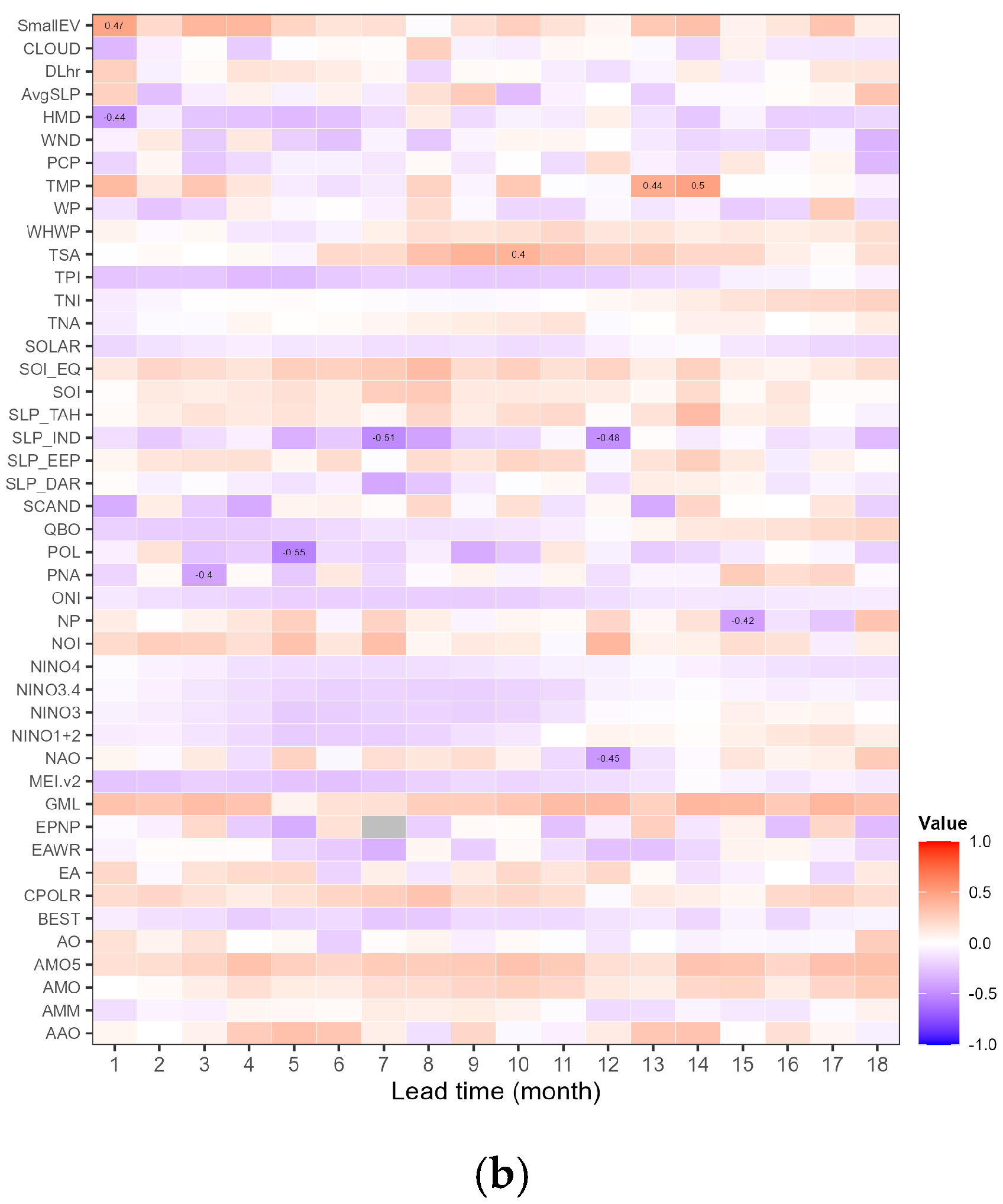

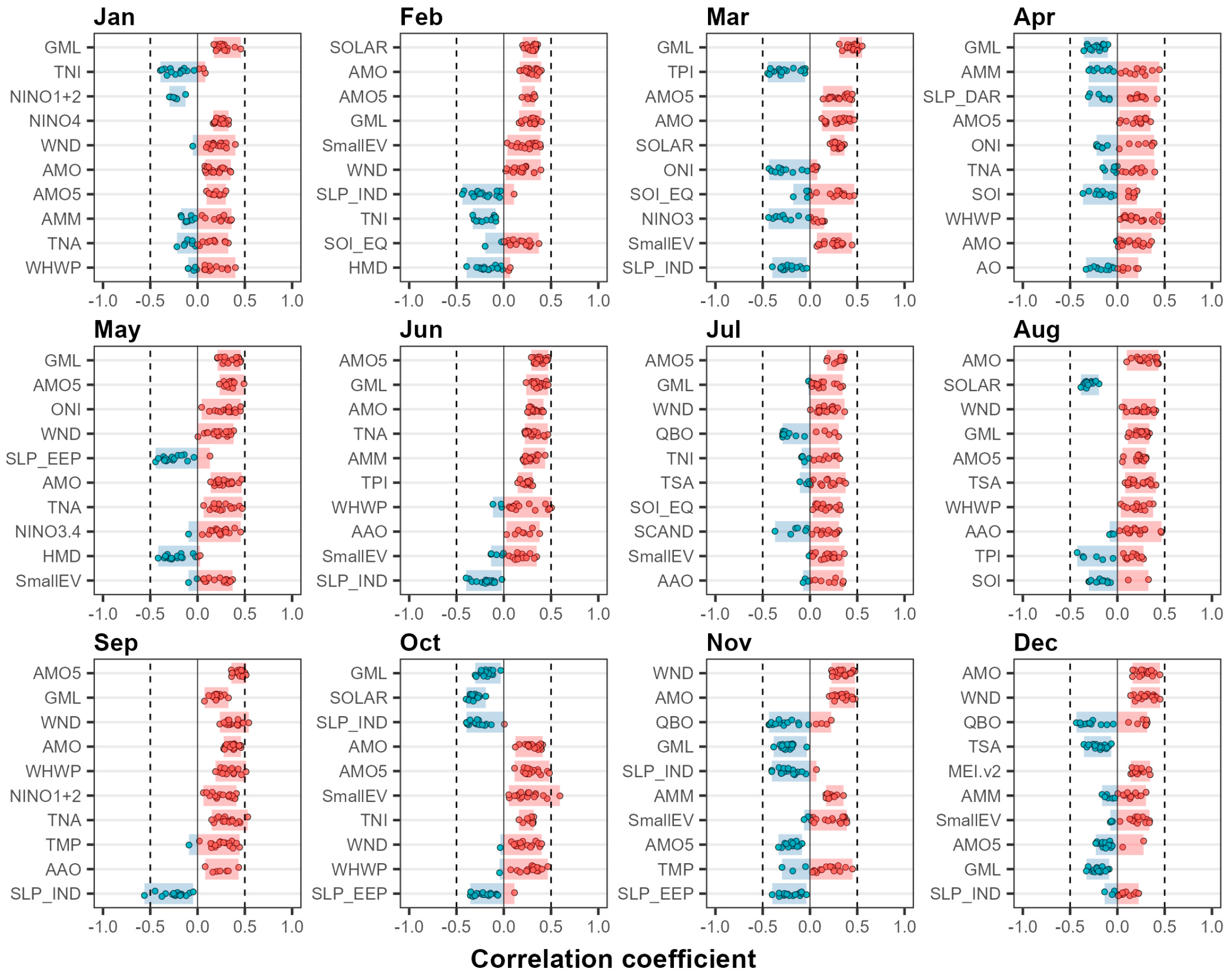

2.2. Preprocessing of Climate Indices

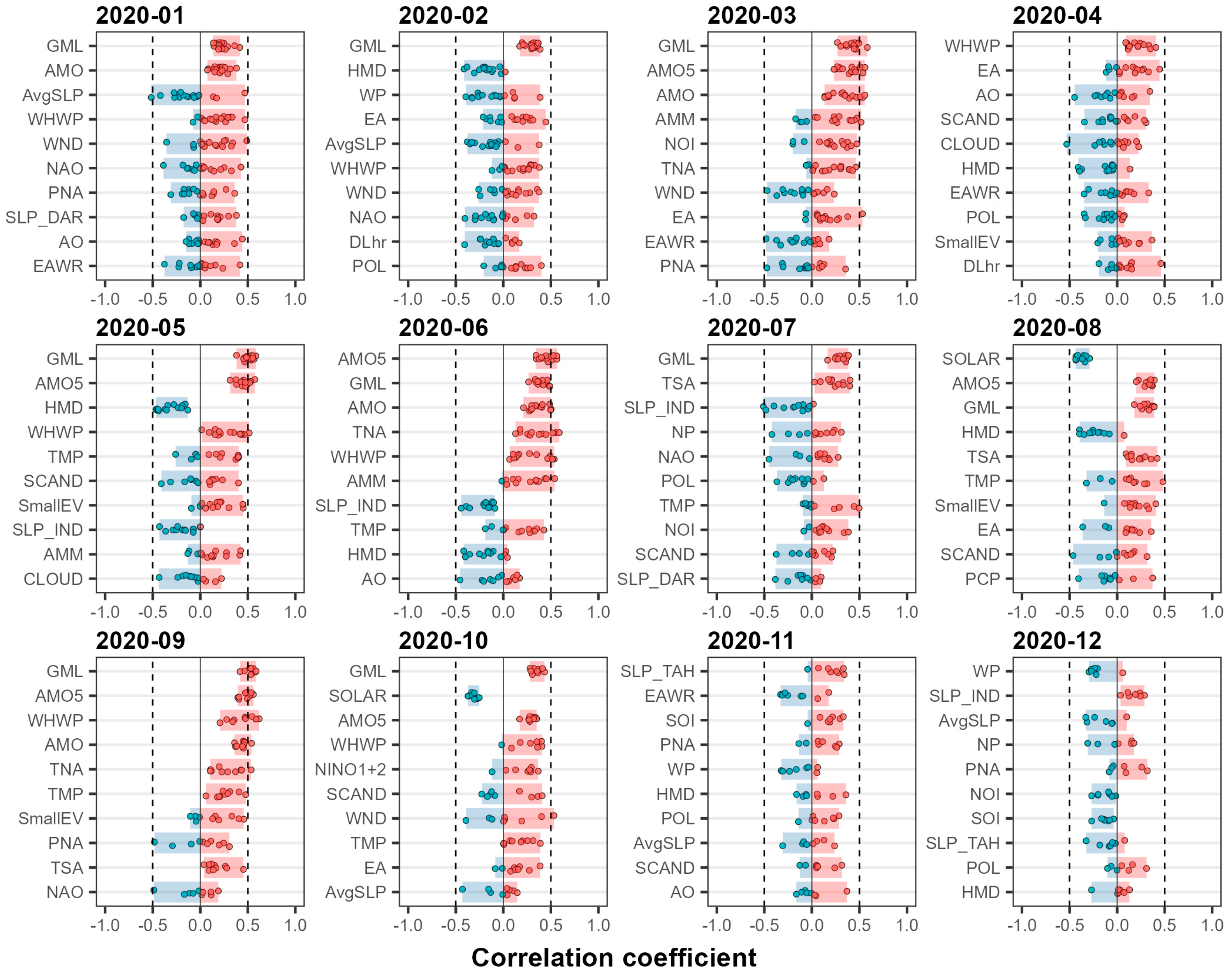
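Climate indices come in different units and with different seasonal behavior (sea surface temperatures in °C, sea level pressures in hPa, dimensionless pattern indices). As an illustration only, and an assumption rather than the documented procedure of this study, one common preprocessing step is to standardize each index separately for each calendar month. The function name `standardize_by_month` and the `(year, month, value)` record layout below are hypothetical.

```python
from statistics import mean, pstdev


def standardize_by_month(records):
    """Convert (year, month, value) records to calendar-month z-scores.

    Each value is expressed relative to the mean and population standard
    deviation of all values for the same calendar month. This removes the
    seasonal cycle and gives every index a unit-free scale.
    """
    by_month = {}
    for _, month, value in records:
        by_month.setdefault(month, []).append(value)
    stats = {m: (mean(v), pstdev(v)) for m, v in by_month.items()}

    out = []
    for year, month, value in records:
        mu, sd = stats[month]
        # A month with no spread (sd == 0) carries no anomaly information.
        z = (value - mu) / sd if sd > 0 else 0.0
        out.append((year, month, z))
    return out


# Hypothetical example: three Januaries and three Julys of one index.
example = [(2000, 1, -1.0), (2001, 1, 0.0), (2002, 1, 1.0),
           (2000, 7, 10.0), (2001, 7, 12.0), (2002, 7, 14.0)]
z_scores = standardize_by_month(example)
```

Standardizing by calendar month removes both the unit differences and the seasonal cycle; a single z-score over the whole record would remove only the former.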

2.3. Selection of Predictors

2.4. Development of the LSTM-Based Model
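For reference, the widely used form of the LSTM cell (the variant with a forget gate) updates a cell state c and a hidden state h at each time step through four gated transforms. The pure-Python sketch below implements one forward step of that cell and runs it over a sequence. It is illustrative only: the names are hypothetical, no training is shown, and it does not reflect the architecture, hyperparameters, or software used in this study, which would normally rely on a deep learning library.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def matvec(m, v):
    """Multiply matrix m (list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def lstm_step(x, h_prev, c_prev, params):
    """One forward step of an LSTM cell with a forget gate.

    params maps each gate name ('f', 'i', 'o', 'g') to a tuple (W, U, b):
    W is [hidden][input], U is [hidden][hidden], b has length hidden.
    """
    def pre(gate):
        W, U, b = params[gate]
        return [a + r + bias
                for a, r, bias in zip(matvec(W, x), matvec(U, h_prev), b)]

    f = [sigmoid(z) for z in pre("f")]    # forget gate: share of c_prev kept
    i = [sigmoid(z) for z in pre("i")]    # input gate: share of new content written
    o = [sigmoid(z) for z in pre("o")]    # output gate: share of state exposed
    g = [math.tanh(z) for z in pre("g")]  # candidate cell content
    c = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c_prev, i, g)]
    h = [oj * math.tanh(cj) for oj, cj in zip(o, c)]
    return h, c


def run_lstm(sequence, params, hidden_size):
    """Feed input vectors (e.g., monthly predictor values) through the cell."""
    h = [0.0] * hidden_size
    c = [0.0] * hidden_size
    for x in sequence:
        h, c = lstm_step(x, h, c, params)
    return h, c
```

The cell state c is updated additively, which is what lets an LSTM carry information across many monthly steps more reliably than a plain recurrent network.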

2.5. Development of the MLR-Based Model
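An MLR model expresses the predicted monthly temperature as a linear combination of the selected predictors, $\hat{T}_t = \beta_0 + \sum_{k=1}^{K} \beta_k x_{k,t}$, where each $x_{k,t}$ is a (possibly lagged) predictor value available when the forecast is issued. The sketch below estimates the coefficients by ordinary least squares through the normal equations. The function names are hypothetical, and the data in the usage check are synthetic rather than values from this study.

```python
def fit_mlr(X, y):
    """Ordinary least squares fit of y = b0 + b1*x1 + ... + bk*xk.

    X is a list of rows (k predictor values per month). Returns
    [b0, b1, ..., bk] by solving (A^T A) b = A^T y with Gaussian
    elimination and partial pivoting, where A is X with an intercept column.
    """
    A = [[1.0] + list(row) for row in X]  # prepend intercept column
    n, p = len(A), len(A[0])
    # Augmented normal-equation matrix [A^T A | A^T y].
    M = [[sum(A[r][i] * A[r][j] for r in range(n)) for j in range(p)]
         + [sum(A[r][i] * y[r] for r in range(n))] for i in range(p)]

    # Forward elimination with partial pivoting.
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        if abs(M[col][col]) < 1e-12:
            raise ValueError("collinear predictors: normal equations are singular")
        for r in range(col + 1, p):
            factor = M[r][col] / M[col][col]
            for k in range(col, p + 1):
                M[r][k] -= factor * M[col][k]

    # Back substitution.
    b = [0.0] * p
    for i in range(p - 1, -1, -1):
        b[i] = (M[i][p] - sum(M[i][j] * b[j] for j in range(i + 1, p))) / M[i][i]
    return b


def predict_mlr(b, row):
    """Apply fitted coefficients to one row of predictor values."""
    return b[0] + sum(bj * xj for bj, xj in zip(b[1:], row))
```

Solving the normal equations is adequate for a handful of well-scaled predictors; with many mutually correlated indices, a QR-based solver is numerically safer.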

3. Results

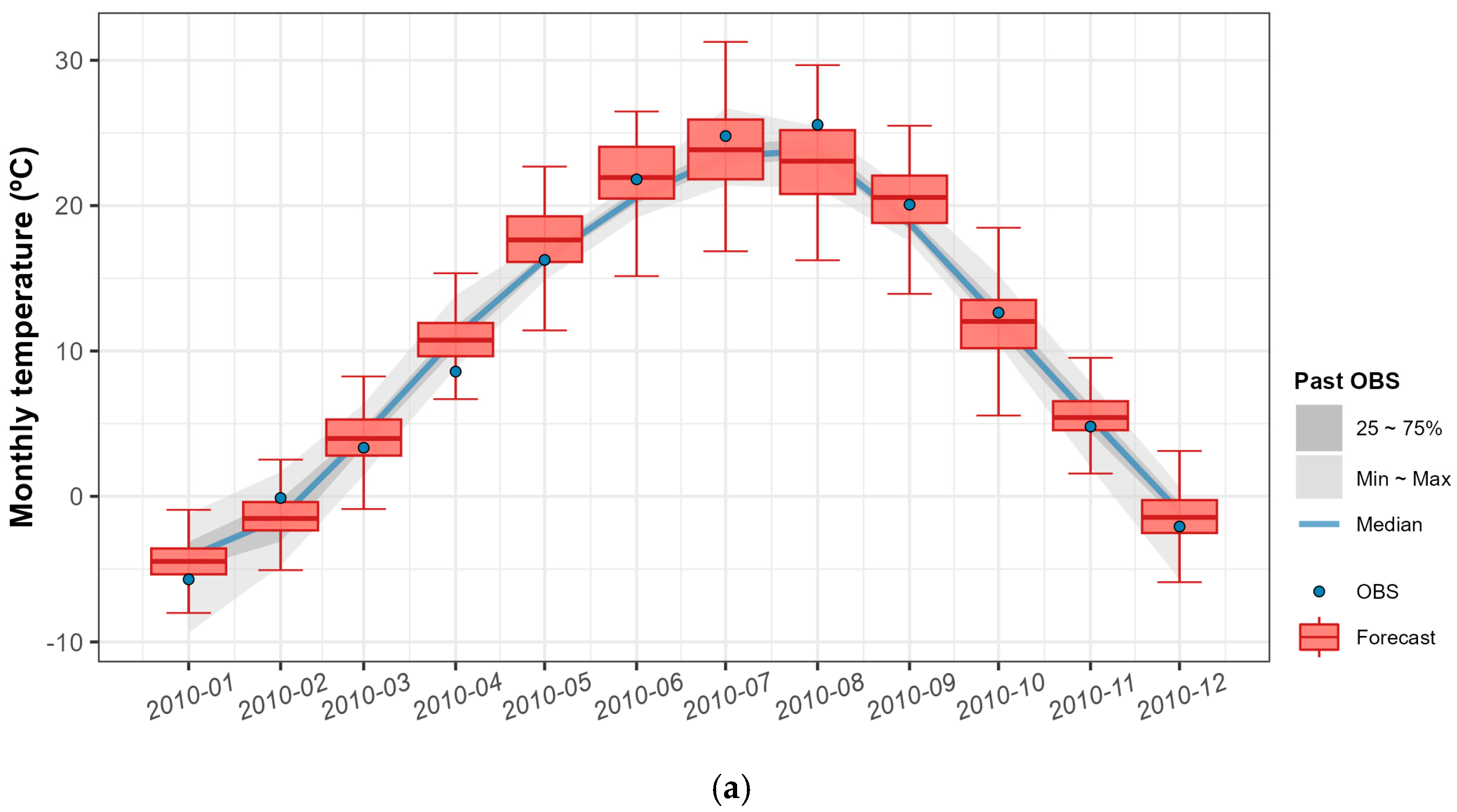

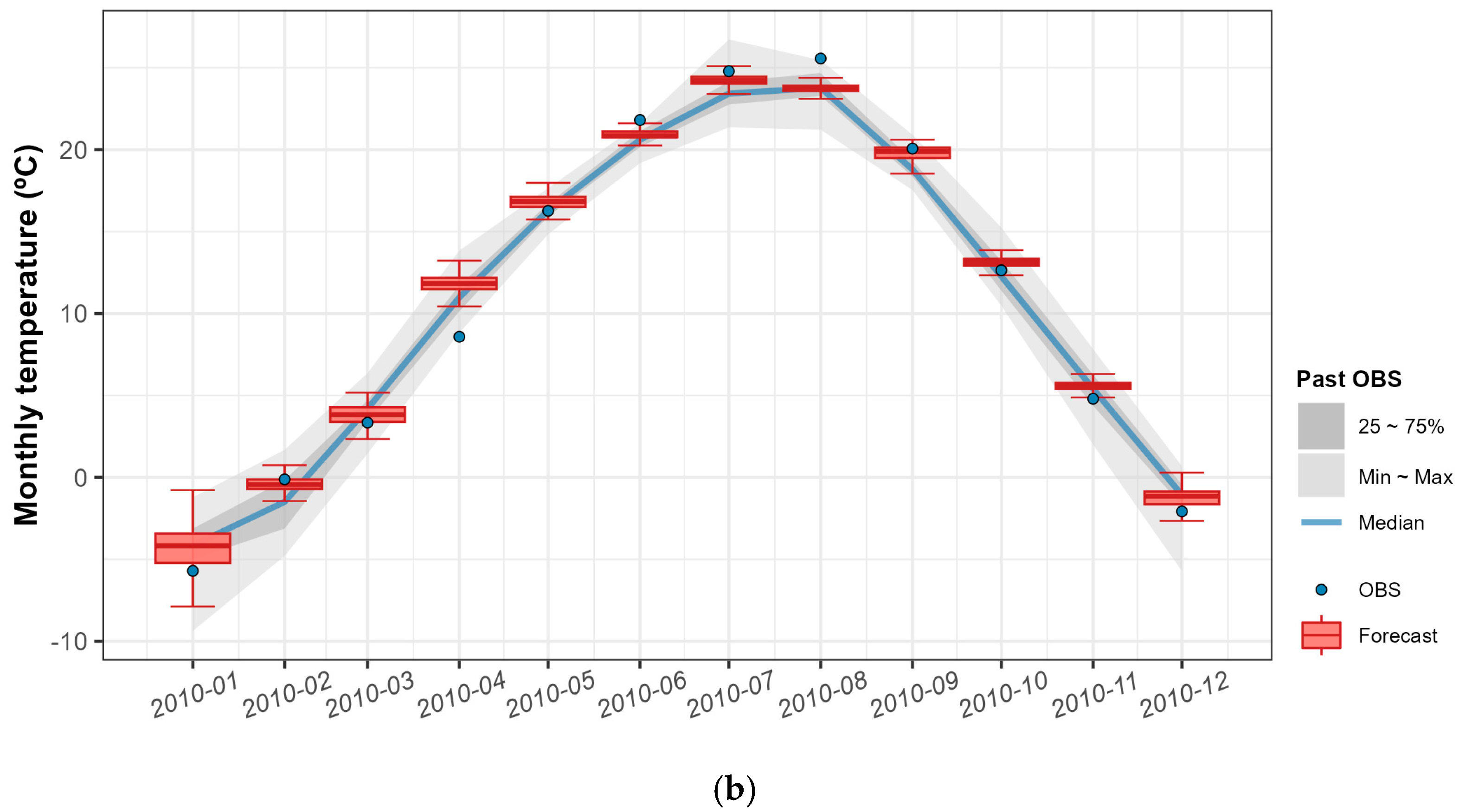

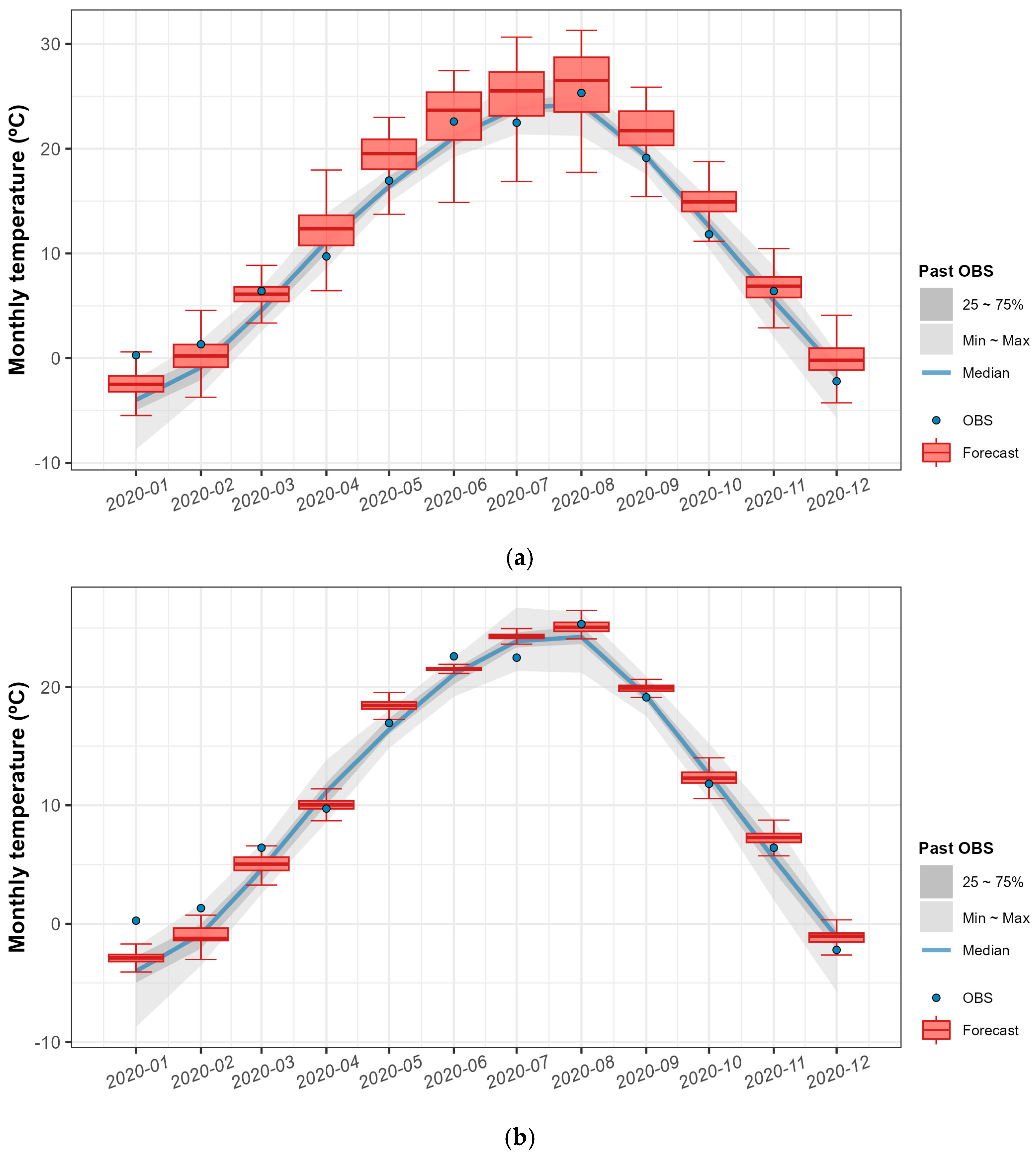

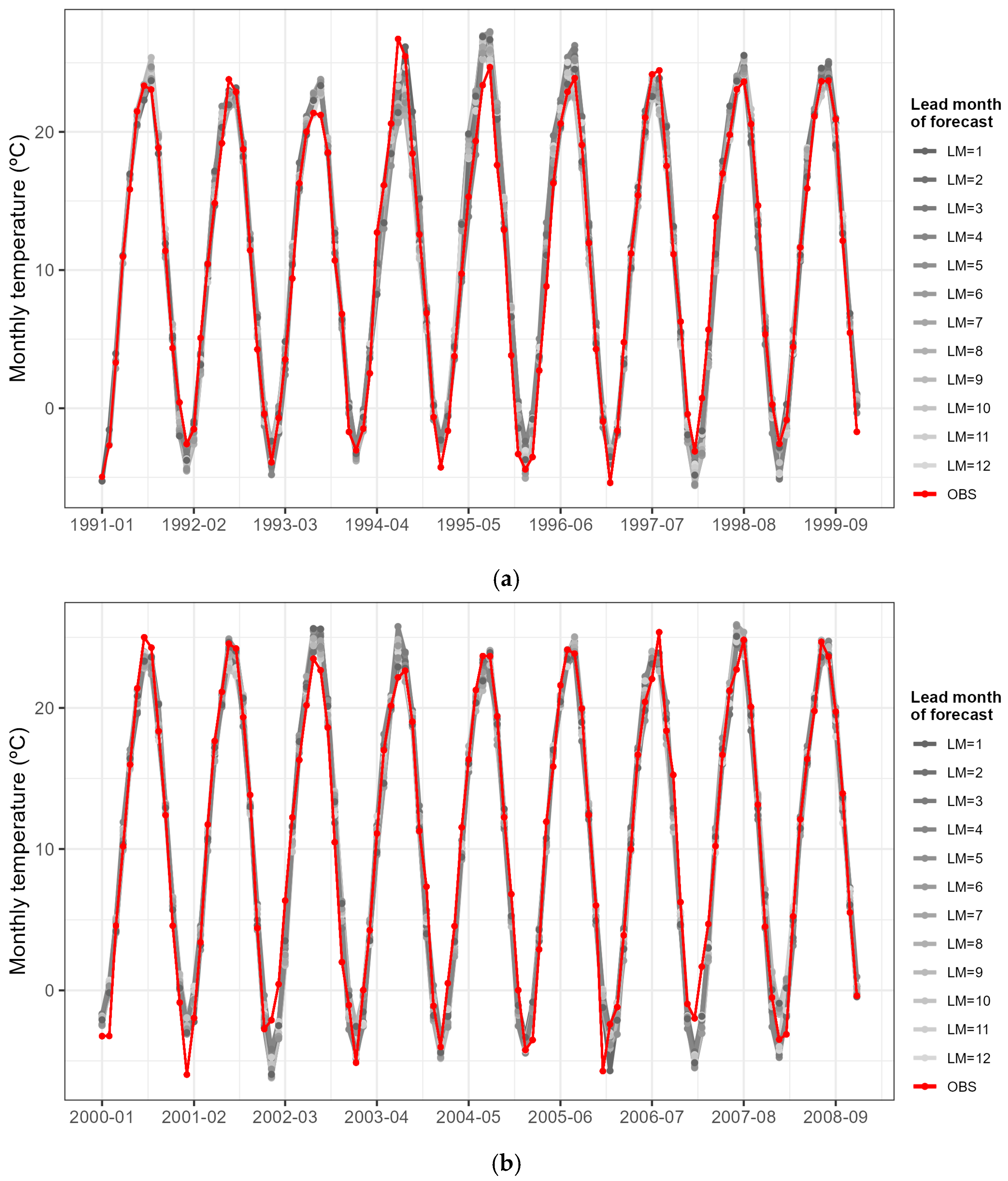

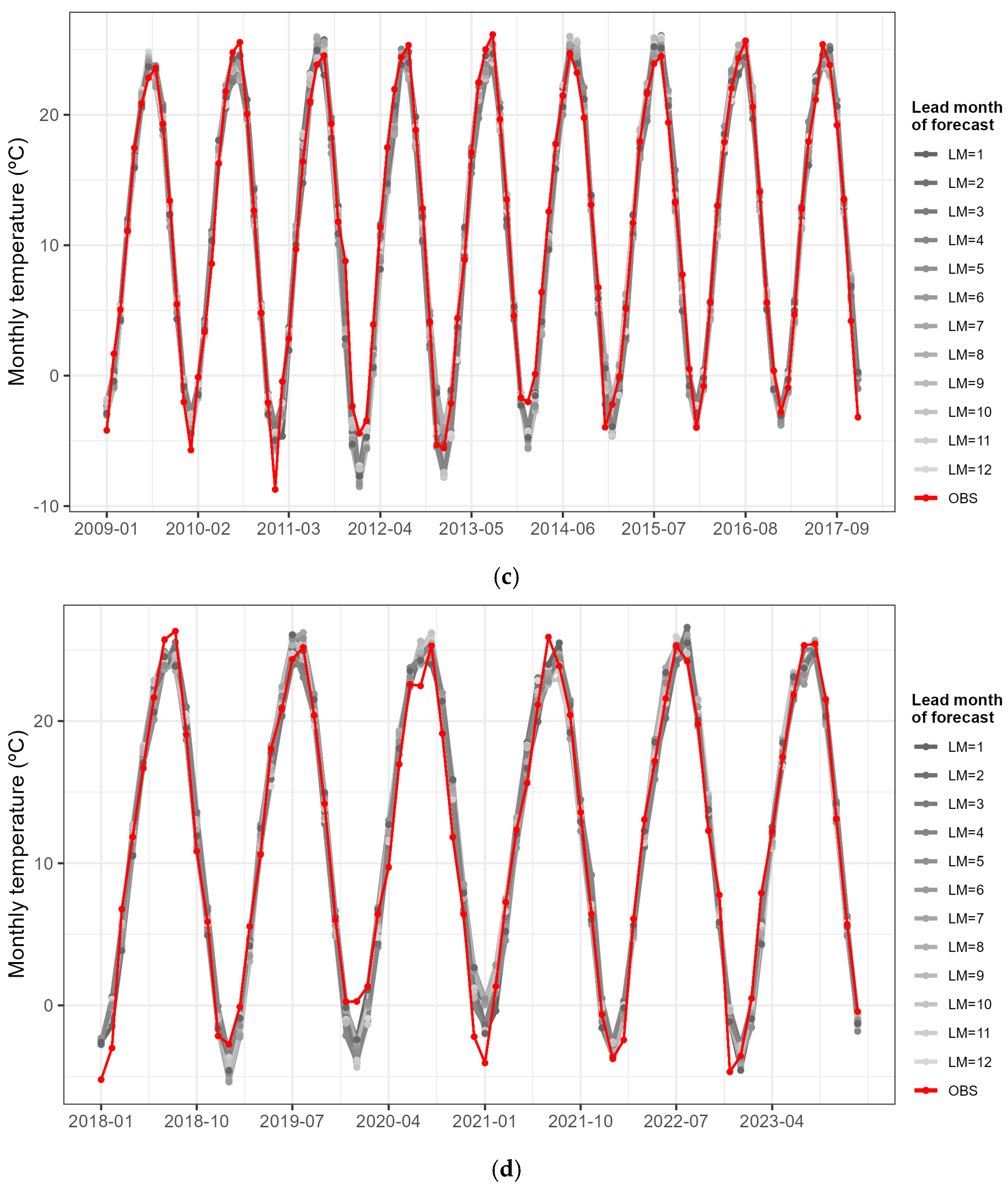

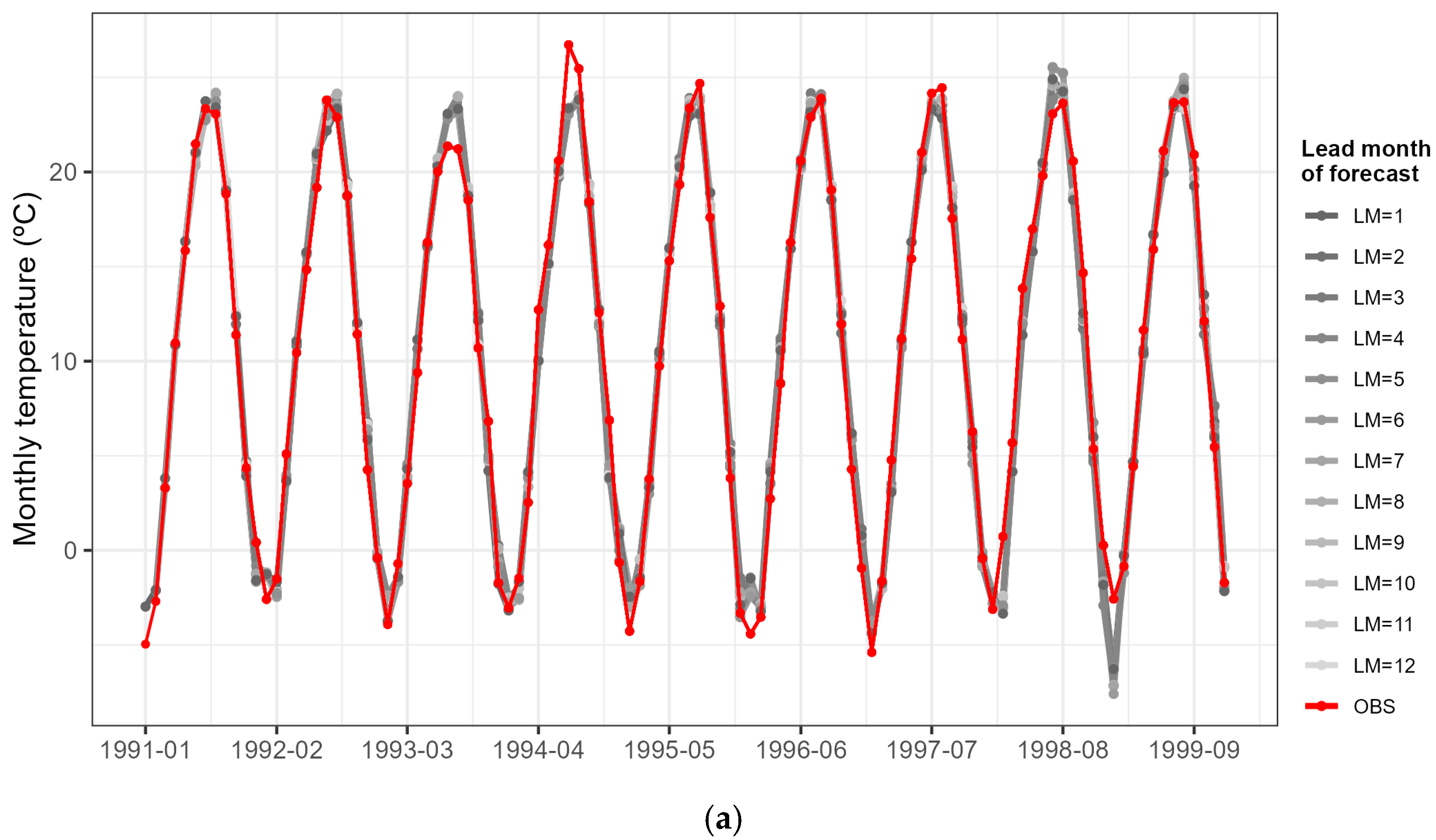

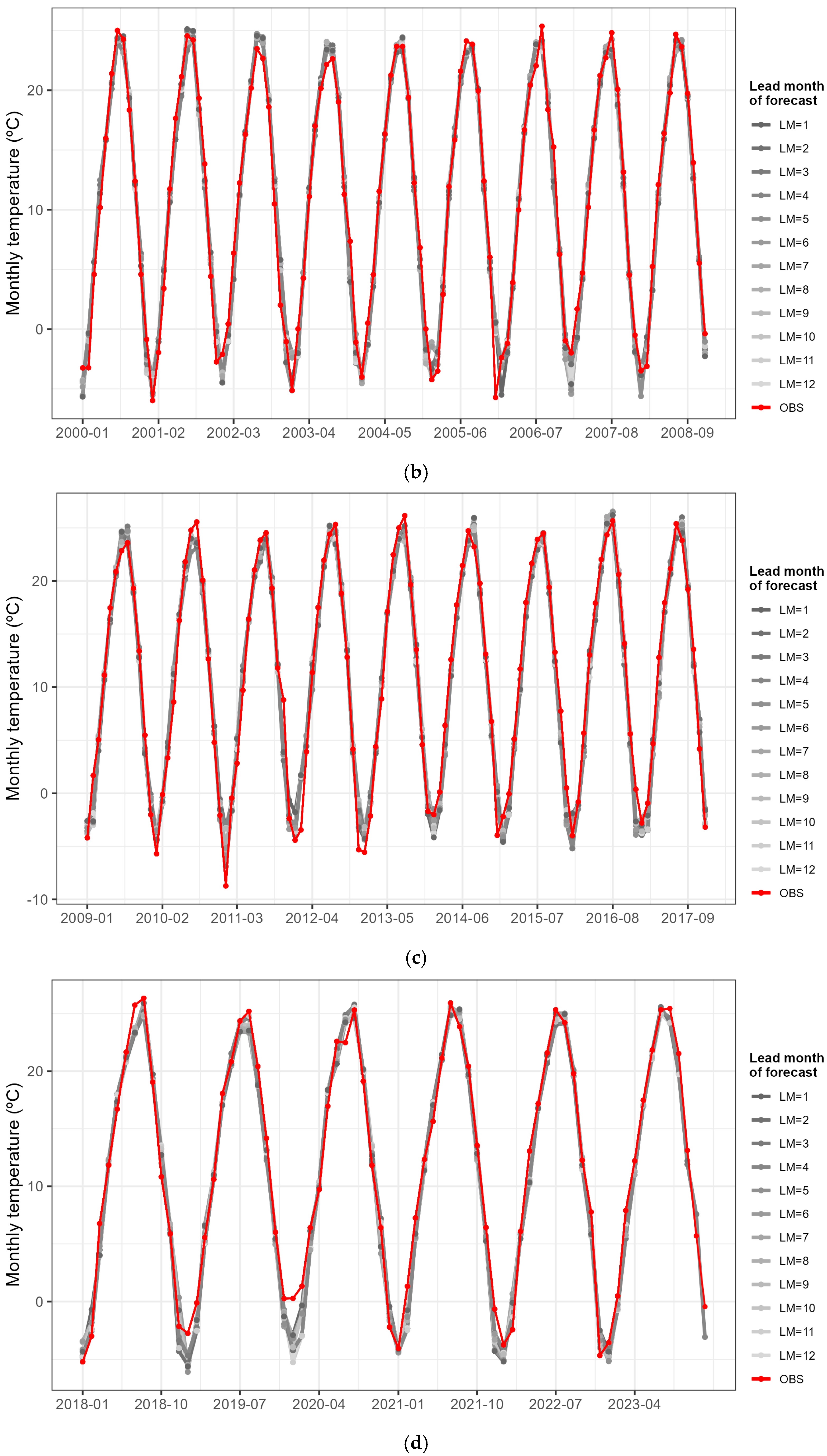

3.1. Monthly Temperature Prediction

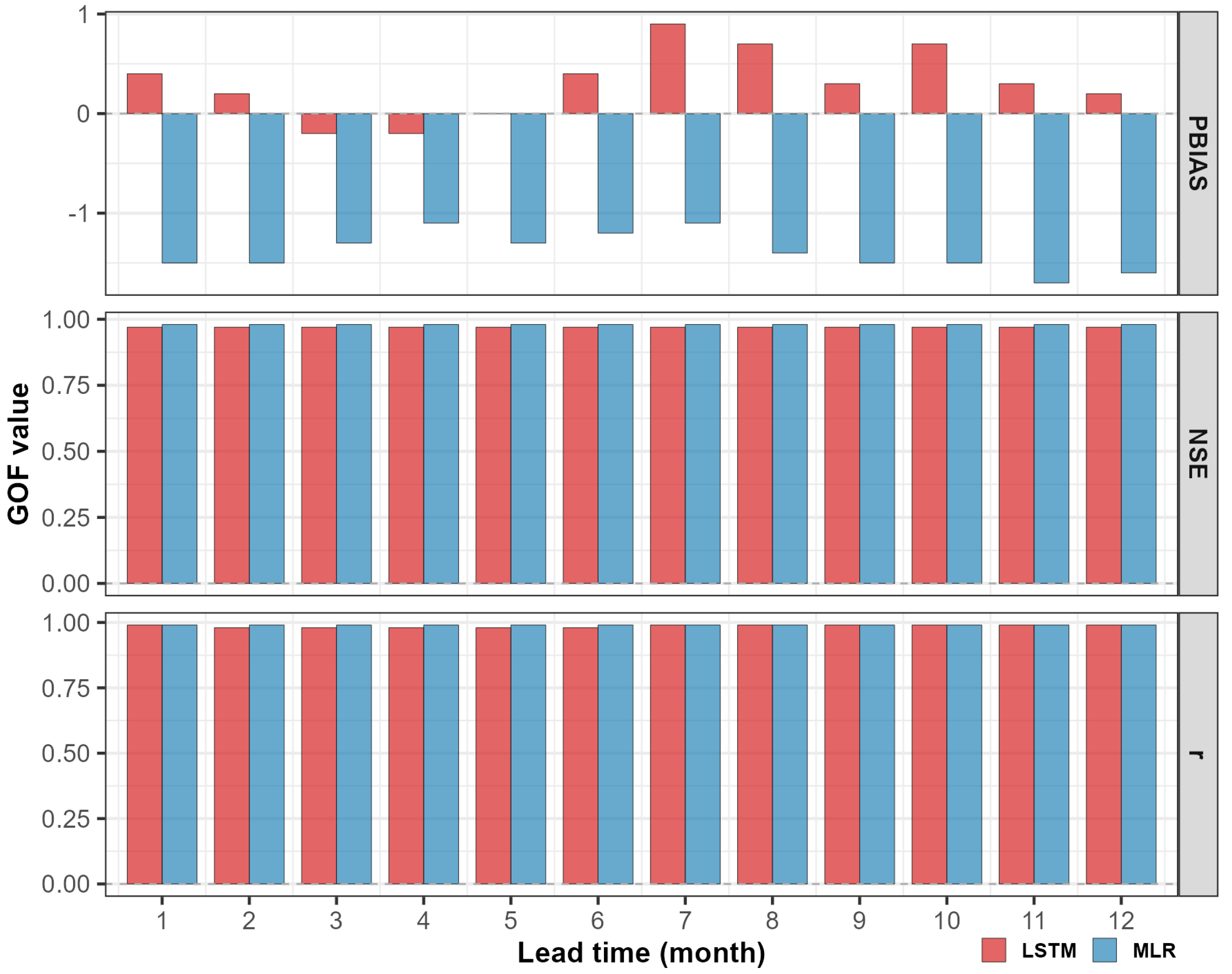

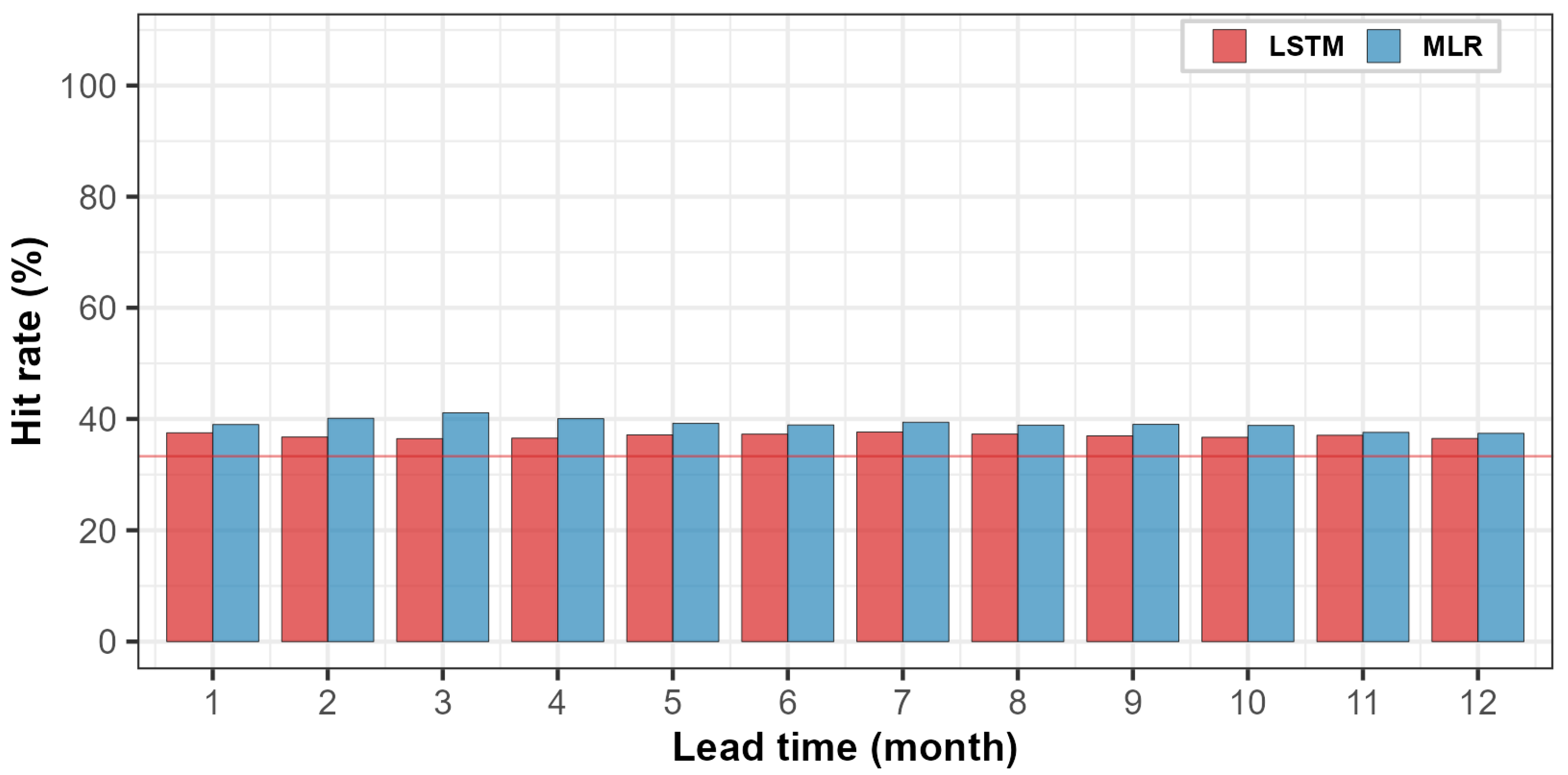

3.2. Comparison of Predictive Performance by Lead Time
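Comparing models by lead time amounts to scoring each set of forecasts, one set per lead, against the same observations. The metrics below, root mean square error (RMSE) and Nash–Sutcliffe efficiency (NSE), are assumed for illustration and may not match the full set of criteria reported in this study; the function names are hypothetical.

```python
import math
from statistics import mean


def rmse(obs, sim):
    """Root mean square error, in the units of the observations (e.g., °C)."""
    return math.sqrt(mean((s - o) ** 2 for o, s in zip(obs, sim)))


def nse(obs, sim):
    """Nash–Sutcliffe efficiency: 1 is perfect, 0 matches the observed mean.

    Undefined (division by zero) if the observations are constant.
    """
    mu = mean(obs)
    num = sum((o - s) ** 2 for o, s in zip(obs, sim))
    den = sum((o - mu) ** 2 for o in obs)
    return 1.0 - num / den


def scores_by_lead(obs, forecasts_by_lead):
    """Map each lead time (months) to its RMSE and NSE.

    forecasts_by_lead: {lead: forecasts aligned with obs}.
    """
    return {lead: {"RMSE": rmse(obs, f), "NSE": nse(obs, f)}
            for lead, f in sorted(forecasts_by_lead.items())}
```

An NSE of 0 means the forecasts are no more skillful than the observed mean; negative values fall below that benchmark, which makes NSE convenient for spotting the lead time at which skill is lost.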

4. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| ID | Station Name | Latitude (°N) | Longitude (°E) | Elevation (m a.s.l.) |
|---|---|---|---|---|
| 90 | Sokcho | 38.25 | 128.56 | 18.06 |
| 93 | Bukchuncheon | 37.95 | 127.75 | 95.61 |
| 95 | Cheorwon | 38.15 | 127.30 | 155.48 |
| 98 | Dongducheon | 37.90 | 127.06 | 115.62 |
| 99 | Paju | 37.89 | 126.77 | 30.59 |
| 100 | Daegwallyeong | 37.68 | 128.72 | 772.57 |
| 101 | Chuncheon | 37.90 | 127.74 | 76.47 |
| 104 | Bukgangneung | 37.80 | 128.86 | 78.90 |
| 105 | Gangneung | 37.75 | 128.89 | 26.04 |
| 106 | Donghae | 37.51 | 129.12 | 39.91 |
| 108 | Seoul | 37.57 | 126.97 | 85.67 |
| 112 | Incheon | 37.48 | 126.62 | 68.99 |
| 114 | Wonju | 37.34 | 127.95 | 148.60 |
| 116 | Gwanaksan | 37.44 | 126.96 | 626.76 |
| 119 | Suwon | 37.27 | 126.99 | 34.84 |
| 121 | Yeongwol | 37.18 | 128.46 | 240.60 |
| 127 | Chungju | 36.97 | 127.95 | 116.30 |
| 130 | Uljin | 36.99 | 129.41 | 50.00 |
| 131 | Cheongju | 36.64 | 127.44 | 58.70 |
| 201 | Ganghwa | 37.71 | 126.45 | 47.84 |
| 202 | Yangpyeong | 37.49 | 127.49 | 47.26 |
| 203 | Icheon | 37.26 | 127.48 | 80.09 |
| 211 | Inje | 38.06 | 128.17 | 200.16 |
| 212 | Hongcheon | 37.68 | 127.88 | 139.95 |
| 214 | Samcheok | 37.37 | 129.22 | 3.90 |
| 216 | Taebaek | 37.17 | 128.99 | 712.82 |
| 217 | Jeongseongun | 37.38 | 128.65 | 307.40 |
| 221 | Jecheon | 37.16 | 128.19 | 259.80 |
| 226 | Boeun | 36.49 | 127.73 | 174.99 |
| 232 | Cheonan | 36.76 | 127.29 | 81.50 |
| 272 | Yeongju | 36.87 | 128.52 | 210.79 |

| Category | Predictor | Description | Provider |
|---|---|---|---|
| Global climate index | AAO | Antarctic oscillation | NOAA |
| | AMM | Atlantic meridional mode | NOAA |
| | AMO | Atlantic multidecadal oscillation | NOAA |
| | AMO5 | ERSST AMO (North Atlantic 0–60° N SSTA) | NOAA |
| | AO | Arctic oscillation | NOAA |
| | BEST | Bivariate ENSO time series | NOAA |
| | CPOLR | Monthly central Pacific outgoing longwave radiation index (170° E–140° W, 5° S–5° N) | NOAA |
| | EA | East Atlantic pattern | NOAA |
| | EAWR | East Atlantic/Western Russia pattern | NOAA |
| | EPNP | East Pacific/North Pacific oscillation | NOAA |
| | GML | Global mean land–ocean temperature index | NOAA |
| | MEI.v2 | Multivariate ENSO index version 2 | NOAA |
| | NAO | North Atlantic Oscillation | NOAA |
| | NINO1+2 | Extreme eastern tropical Pacific SST (0–10° S, 90° W–80° W) | NOAA |
| | NINO3 | Eastern tropical Pacific SST (5° N–5° S, 150° W–90° W) | NOAA |
| | NINO3.4 | East central tropical Pacific SST (5° N–5° S, 170° W–120° W) | NOAA |
| | NINO4 | Central tropical Pacific SST (5° N–5° S, 160° E–150° W) | NOAA |
| | NOI | Northern Oscillation Index | NOAA |
| | NP | North Pacific pattern | NOAA |
| | ONI | Oceanic Niño Index | NOAA |
| | PNA | Pacific/North American pattern | NOAA |
| | POL | Polar/Eurasia pattern | NOAA |
| | QBO | Quasi-biennial oscillation | NOAA |
| | SCAND | Scandinavia pattern | NOAA |
| | SLP_DAR | Darwin sea level pressure | NOAA |
| | SLP_EEP | Equatorial eastern Pacific sea level pressure | NOAA |
| | SLP_IND | Indonesia sea level pressure | NOAA |
| | SLP_TAH | Tahiti sea level pressure | NOAA |
| | SOI | Southern Oscillation Index | NOAA |
| | SOI_EQ | Equatorial SOI | NOAA |
| | SOLAR | Solar flux (10.7 cm) | NOAA |
| | TNA | Tropical Northern Atlantic Index | NOAA |
| | TNI | Trans-Niño Index | NOAA |
| | TPI | Tripole index for the interdecadal Pacific oscillation | NOAA |
| | TSA | Tropical Southern Atlantic Index | NOAA |
| | WHWP | Western Hemisphere warm pool | NOAA |
| | WP | Western Pacific Index | NOAA |
| Local climate index | PCP | Monthly precipitation | KMA |
| | TMP | Monthly average temperature | KMA |
| | HMD | Monthly average relative humidity | KMA |
| | AvgSLP | Monthly average sea level pressure | KMA |
| | DLhr | Monthly sum of daylight hours | KMA |
| | WND | Monthly average wind speed | KMA |
| | CLOUD | Monthly average cloud cover | KMA |
| | SmallEV | Monthly sum of small pan evaporation | KMA |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Kim, C.-G.; Lee, J.; Lee, J.-E.; Kim, H. Monthly Temperature Prediction in the Han River Basin, South Korea, Using Long Short-Term Memory (LSTM) and Multiple Linear Regression (MLR) Models. Water 2026, 18, 98. https://doi.org/10.3390/w18010098
