A Deep Learning Method Based on Two-Stage CNN Framework for Recognition of Chinese Reservoirs with Sentinel-2 Images

Abstract

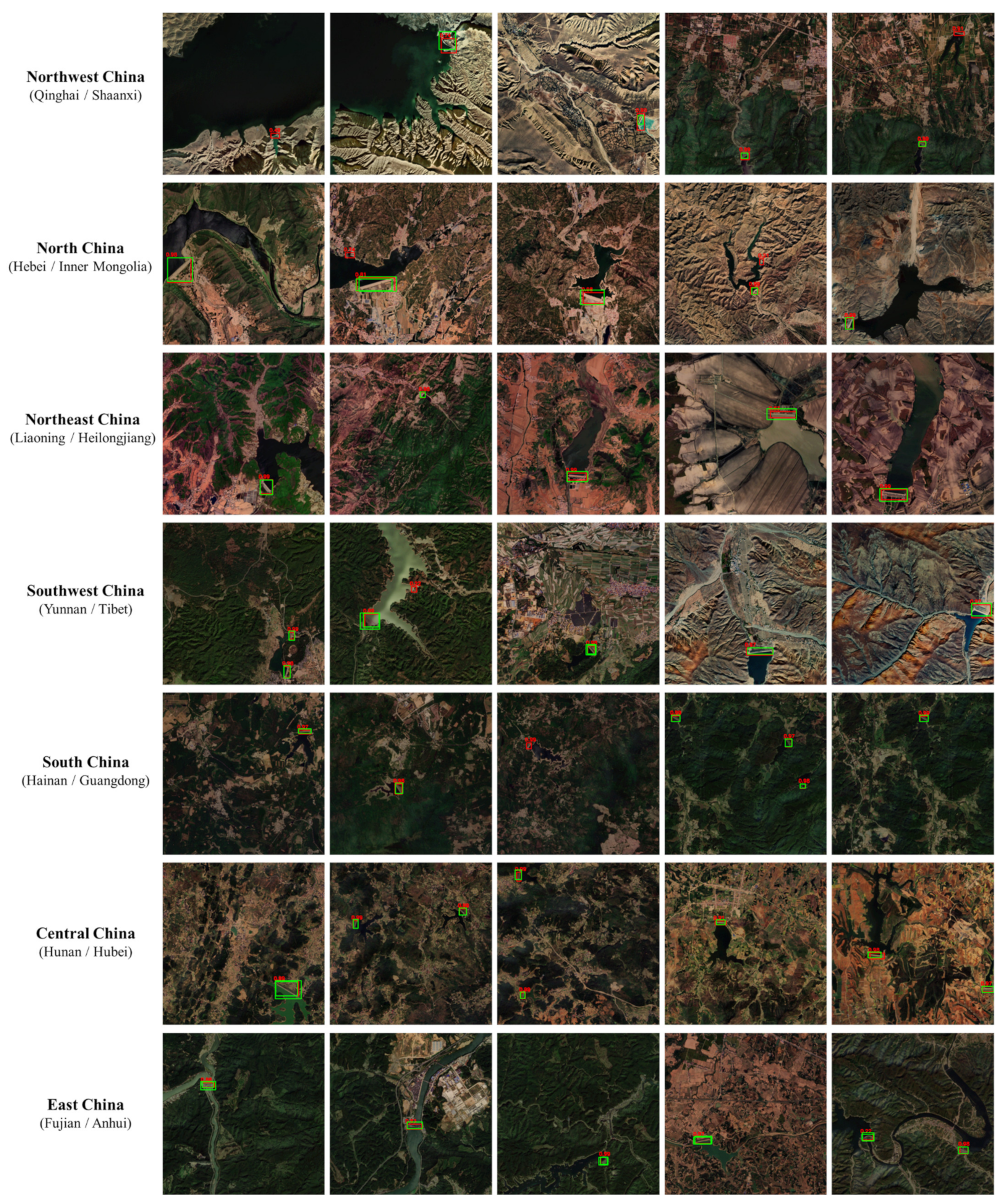

1. Introduction

2. Materials and Methods

2.1. Study Area

2.2. Datasets

2.2.1. Remote Sensing Satellite Imagery

- (1) Level-1C is a Top-of-Atmosphere reflectance product that has undergone orthorectification and geometric correction but not atmospheric correction.

- (2) Level-2A mainly comprises atmospherically corrected Bottom-of-Atmosphere reflectance data.

2.2.2. Acquisition of the Training and Testing Dataset

2.2.3. Geographical Coordinate Data of Large and Medium-Sized Reservoirs in China

2.2.4. Annotations

2.3. Methods

2.3.1. Architecture of the Two-Stage CNN Framework

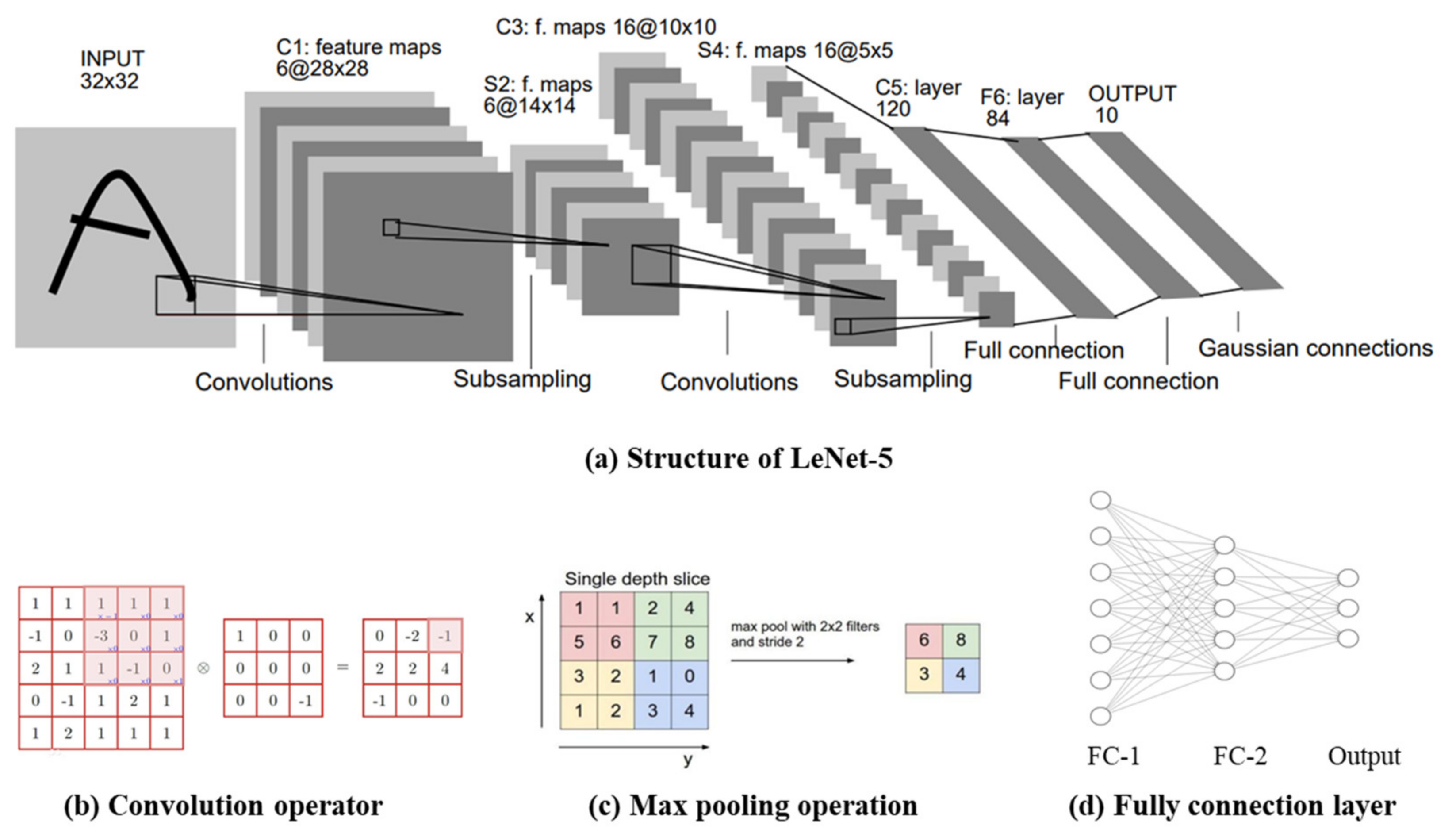

2.3.2. Convolutional Neural Network (CNN)

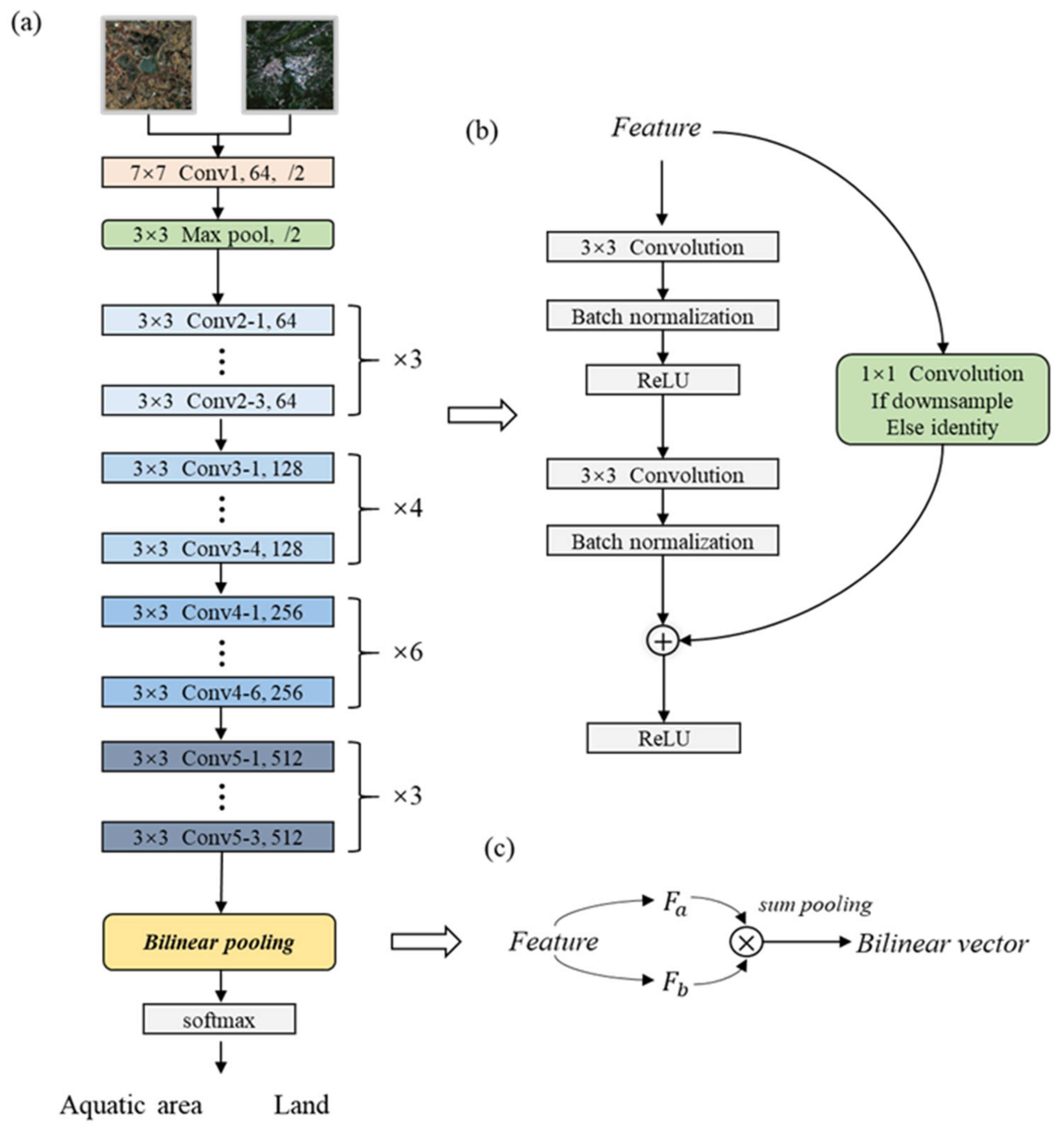

2.3.3. Classification of Aquatic Areas and Land

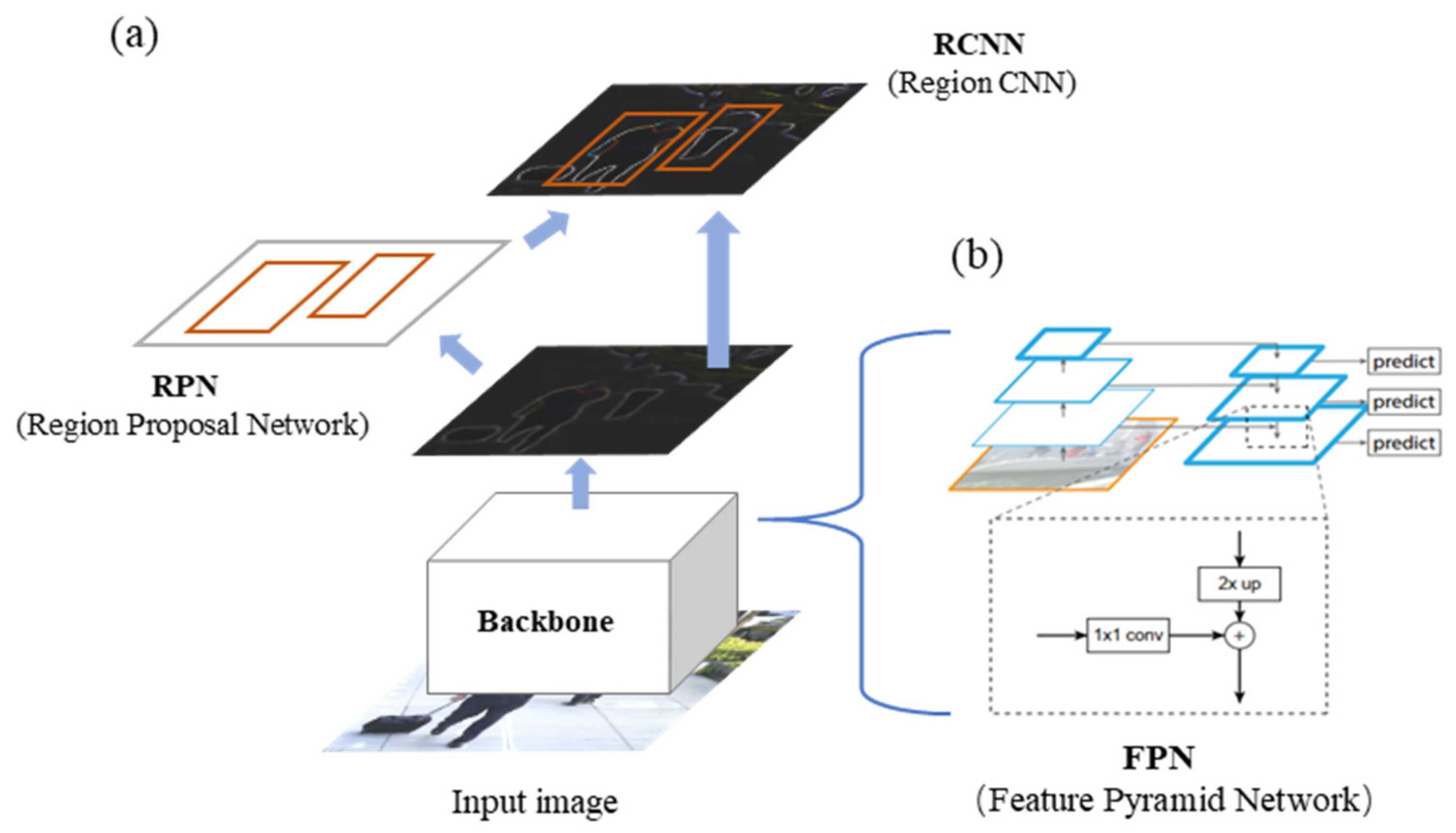

2.3.4. Detection of the Reservoirs and Dams

2.3.5. Implementation Details

3. Results and Discussion

- (1) To evaluate the model on the annotated image dataset constructed in Section 2.2.2.

- (2) To compare the detection results with the geographical coordinates of the large and medium-sized reservoirs and dams released by the China Institute of Water Resources and Hydropower Research (Section 2.2.3).

3.1. Metrics

- TP: True Positive (aquatic area images correctly classified as aquatic area/correctly detected reservoir targets)

- FP: False Positive (land images misclassified as aquatic area/non-reservoir targets misidentified as reservoirs)

- FN: False Negative (aquatic area images misclassified as land/undetected reservoir targets)

- TN: True Negative (land images correctly classified as land)

| Prediction \ Annotation | Positive | Negative |
|---|---|---|
| Positive | TP (True Positive) | FP (False Positive) |
| Negative | FN (False Negative) | TN (True Negative) |
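Assuming the standard definitions, recall, precision, F1 score, and overall accuracy follow from these four counts as sketched below. The class name `ConfusionCounts` and the example counts are hypothetical and chosen only for illustration; they are not results from this study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts from the confusion matrix (hypothetical helper, not from the paper)."""
    tp: int
    fp: int
    fn: int
    tn: int = 0  # TN is only defined for the land/aquatic classification stage


def recall(c: ConfusionCounts) -> float:
    # Share of annotated positives that were found.
    return c.tp / (c.tp + c.fn) if (c.tp + c.fn) else 0.0


def precision(c: ConfusionCounts) -> float:
    # Share of predicted positives that are correct.
    return c.tp / (c.tp + c.fp) if (c.tp + c.fp) else 0.0


def f1_score(c: ConfusionCounts) -> float:
    # Harmonic mean of precision and recall.
    p, r = precision(c), recall(c)
    return 2 * p * r / (p + r) if (p + r) else 0.0


def overall_accuracy(c: ConfusionCounts) -> float:
    # Share of all samples classified correctly (classification stage only).
    total = c.tp + c.fp + c.fn + c.tn
    return (c.tp + c.tn) / total if total else 0.0


# Illustrative counts only (assumed, not measured).
example = ConfusionCounts(tp=80, fp=120, fn=20, tn=780)
print(f"recall={recall(example):.2%}, precision={precision(example):.2%}, "
      f"F1={f1_score(example):.2%}, OA={overall_accuracy(example):.2%}")
```

Overall accuracy is meaningful only where TN is defined, which in the list above is the first-stage classification of land and aquatic area images; for reservoir detection, recall, precision, and F1 score are the applicable measures.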

3.2. Evaluation on the Constructed Dataset with Labels

3.2.1. Accuracy Assessments of the First-Stage Classification Network

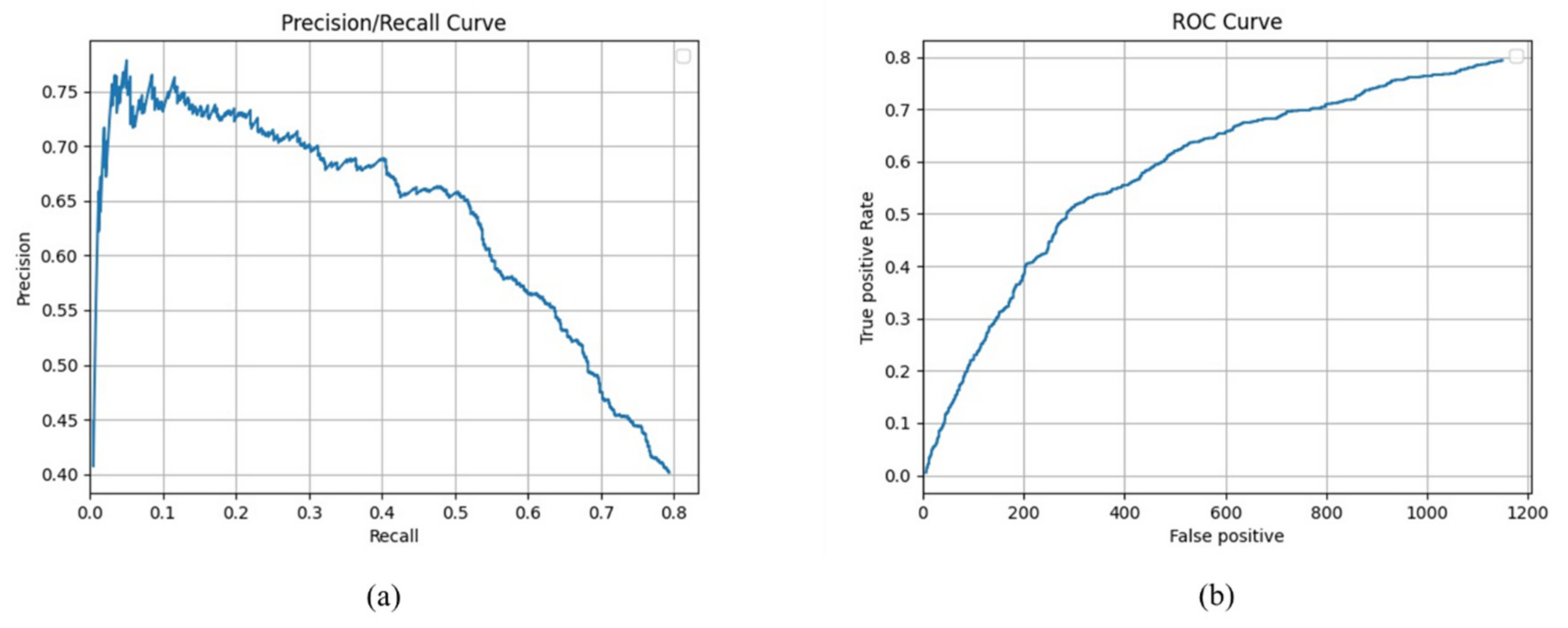

3.2.2. Accuracy Assessments of the Second-Stage Detection Network

3.2.3. Ablation Study

3.3. Comparison with Data Released by the China Institute of Water Resources and Hydropower Research

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Score Threshold | Recall (%) | Precision (%) |
|---|---|---|
| 0.05 | 85.16 | 24.34 |
| 0.03 | 88.70 | 21.49 |
| 0.01 | 95.82 | 15.52 |
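The trade-off in the table above, where lowering the score threshold raises recall and lowers precision, can be reproduced on a small example. The following is a minimal sketch with hypothetical scores and labels (not data from this study), assuming an image is predicted as aquatic area when its score is at least the threshold.

```python
def recall_precision_at(scores, labels, threshold):
    """Return (recall, precision) when score >= threshold counts as positive."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    rec = tp / (tp + fn) if (tp + fn) else 0.0
    prec = tp / (tp + fp) if (tp + fp) else 0.0
    return rec, prec


# Hypothetical toy data: label 1 = aquatic area, label 0 = land.
scores = [0.90, 0.40, 0.06, 0.04, 0.02, 0.30, 0.045, 0.035, 0.015, 0.005]
labels = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]

thresholds = (0.05, 0.03, 0.01)
results = [recall_precision_at(scores, labels, t) for t in thresholds]

for t, (rec, prec) in zip(thresholds, results):
    print(f"threshold={t:.2f}  recall={rec:.2%}  precision={prec:.2%}")
```

A low threshold suits a first stage whose role is to pass as many candidate aquatic areas as possible to a later stage, at the cost of more false positives.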

| Region | Number of TIFs | Number of Reservoirs | Recall (%) | Precision (%) | F1 Score (%) |
|---|---|---|---|---|---|
| Northwest China | 3 | 35 | 78.14 | 34.29 | 47.67 |
| North China | 2 | 32 | 78.75 | 26.36 | 39.50 |
| Northeast China | 2 | 53 | 84.40 | 41.46 | 55.60 |
| Southwest China | 3 | 85 | 69.31 | 29.10 | 40.99 |
| South China | 2 | 157 | 61.64 | 30.68 | 40.97 |
| Central China | 2 | 352 | 87.86 | 50.90 | 64.46 |
| East China | 3 | 192 | 88.61 | 42.85 | 57.77 |
| China | 17 | 906 | 80.83 | 40.56 | 54.01 |
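As a consistency check, the F1 scores in the table above can be recomputed as the harmonic mean of recall and precision, assuming the standard definition $F1 = 2PR/(P + R)$. The sketch below takes the recall and precision values from the table; the variable names and the 0.05 tolerance (to absorb rounding in the reported values) are illustrative choices.

```python
# (recall %, precision %, reported F1 %) per region, copied from the table.
rows = {
    "Northwest China": (78.14, 34.29, 47.67),
    "North China": (78.75, 26.36, 39.50),
    "Northeast China": (84.40, 41.46, 55.60),
    "Southwest China": (69.31, 29.10, 40.99),
    "South China": (61.64, 30.68, 40.97),
    "Central China": (87.86, 50.90, 64.46),
    "East China": (88.61, 42.85, 57.77),
    "China": (80.83, 40.56, 54.01),
}


def harmonic_f1(recall_pct: float, precision_pct: float) -> float:
    """F1 score in percent from recall and precision in percent."""
    return 2 * recall_pct * precision_pct / (recall_pct + precision_pct)


max_diff = 0.0
for region, (rec, prec, reported) in rows.items():
    recomputed = harmonic_f1(rec, prec)
    max_diff = max(max_diff, abs(recomputed - reported))
    print(f"{region:16s} recomputed F1 = {recomputed:.2f} (reported {reported:.2f})")

print(f"largest absolute difference: {max_diff:.3f}")
```

Under this definition, every recomputed value agrees with the reported F1 score to within the rounding of the inputs.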

| Method | Recall (%) | Precision (%) | Overall Accuracy (%) |
|---|---|---|---|
| CNN | 84.39 | 20.44 | 73.10 |
| Bilinear CNN | 85.16 | 24.34 | 78.13 |

| Method | Backbone Feature | ROI Feature | Recall (%) | Precision (%) | F1 Score (%) |
|---|---|---|---|---|---|
| NIR RCNN | ✓ | | 80.21 | 39.54 | 52.97 |
| NIR RCNN | ✓ | ✓ | 80.83 | 40.56 | 54.01 |
| Faster RCNN | | | 78.13 | 37.95 | 51.09 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhao, G.; Yao, P.; Fu, L.; Zhang, Z.; Lu, S.; Long, T. A Deep Learning Method Based on Two-Stage CNN Framework for Recognition of Chinese Reservoirs with Sentinel-2 Images. Water 2022, 14, 3755. https://doi.org/10.3390/w14223755
