1. Introduction

Bulk water suppliers and water utilities in Australia need to provide safe, secure and reliable water supply for consumers. They are regulated to implement the Australian Drinking Water Guidelines Framework for the management of drinking water quality (the ADWG Framework), which sets out a comprehensive, integrated approach for managing water contamination risks across all stages of water supply, from catchment to tap [1,2]. This represents a multi-barrier approach in which the catchment is the first barrier providing the ecosystem services for water quality [3]; hence, bulk water suppliers have a significant role as catchment managers.

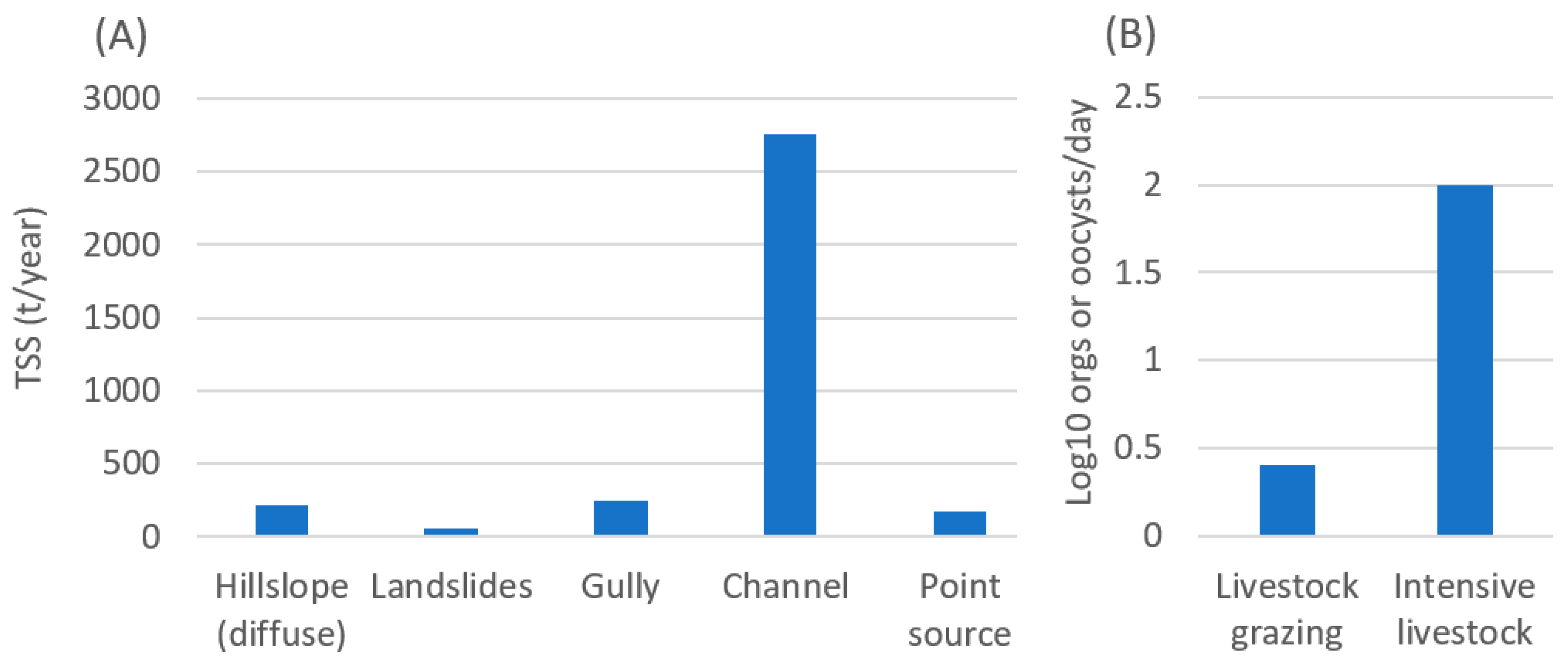

In open catchments, land use changes for agriculture, forestry, industry, recreation and residential dwelling have led to significant point and diffuse sources of water quality risk and to degradation of the ecosystem services the catchment once provided [4,5]. In southeast Queensland, Australia, where more than 90% of source water supply is from open catchments, the priority contaminants that pose risks to water quality and directly affect water treatment plant (WTP) capacity are pathogenic microorganisms [6] and total suspended sediments (TSS) [7]. The ADWG Framework highlights pathogens as the greatest risk to consumers of drinking water; catchment sources can include domestic onsite wastewater systems (OSFs), sewage treatment plants (STPs), stormwater, and animal waste from broadscale grazing and intensive animal industries [2]. Elevated levels of TSS can cause treatability issues at WTPs by reducing the effectiveness of disinfection, increasing the requirement for chemical dosing [8] and reducing drinking water production rates.

Riparian rehabilitation projects [9,10,11] and other interventions to mitigate water quality risks, such as OSF upgrades [12], intensive animal effluent treatment upgrades, and hardening of laneways and stream crossings, are essential to improve the first barrier or the ecosystem service the catchment provides [13]. However, catchment managers have been grappling with decisions regarding the location, type, and scale of these catchment interventions (mitigation measures and rehabilitation), particularly when finite resources have to be allocated across large catchment areas [14,15]. This poses the question: How can catchment managers or bulk water suppliers optimally allocate resources to effectively improve drinking water quality?

Many agencies use ‘hotspot’ or ‘threat’ maps to define the distribution, intensity and frequency of hazardous events to water quality. These maps can be helpful for identifying the location of risks, but they cannot always provide a robust method to allocate intervention resources, particularly when multiple objectives need to be considered [15,16]. Instead, the data and other information provided in the maps must be integrated into a structured, transparent and repeatable framework to develop intervention portfolios that provide the greatest return for a fixed budget [16].

The solution to providing this framework has been the development of the ‘Decision Support System’ (DSS). Typically, a DSS operates in a GIS environment and combines spatial datasets, non-spatial data (quantitative and qualitative) and other information to assess where contaminants arise, their mobilisation and their relative contribution to changes in water quality within a source water catchment. By linking the contaminant to a land use activity or catchment process through data analysis, a DSS can provide direction on where interventions should be targeted to get the best outcomes for water quality improvement [17,18]. The DSS and the underlying data must also be detailed enough to give an acceptable level of (un)certainty in the results [19]. Similarly, the concept of longitudinal connectivity has to be included in the planning process and has been successfully applied with DSSs designed for conservation planning [20,21], and for some catchment-based water quality models such as eWater Source [22].

To successfully manage multiple risks to water quality with catchment interventions, a DSS must be designed to evaluate alternative combinations of interventions and the trade-offs between them [16]. In many instances, agencies develop ‘hotspot’ or ‘threat’ maps from the outputs of catchment-based water quality models. A review of existing catchment-based water quality models and platforms was recently undertaken by Fu et al. [23], noting that the main catchment models used across the peer-reviewed literature were the Soil and Water Assessment Tool [24], Hydrological Simulation Program—FORTRAN [25], Integrated Catchment Model (INCA) [26] and eWater Source [22]. While these models can be used to predict changes in water quality based on surrounding land use or catchment management, they cannot apply catchment interventions and link these to an optimisation algorithm so that a preferred set of catchment interventions (i.e., investment scenario) can be selected. Instead, the investment scenario must be developed a priori so that the input intervention portfolio includes pre-selected interventions. This means that the set of catchment interventions selected, and their location and amount, may not be optimal for the given budget. Additionally, because running each scenario separately is very time consuming, the number of different interventions that can be used, and therefore the number of portfolios generated, is limited.

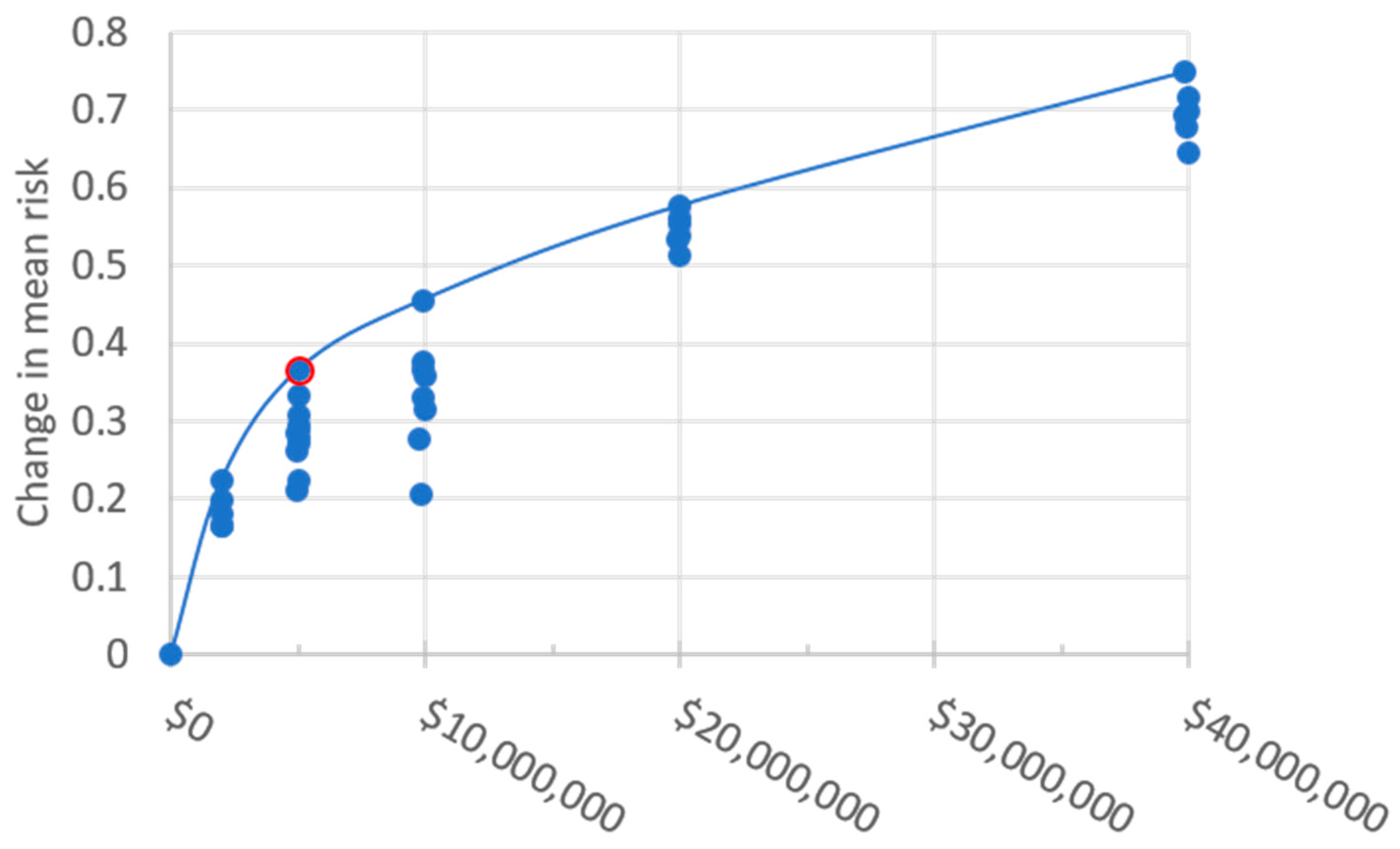

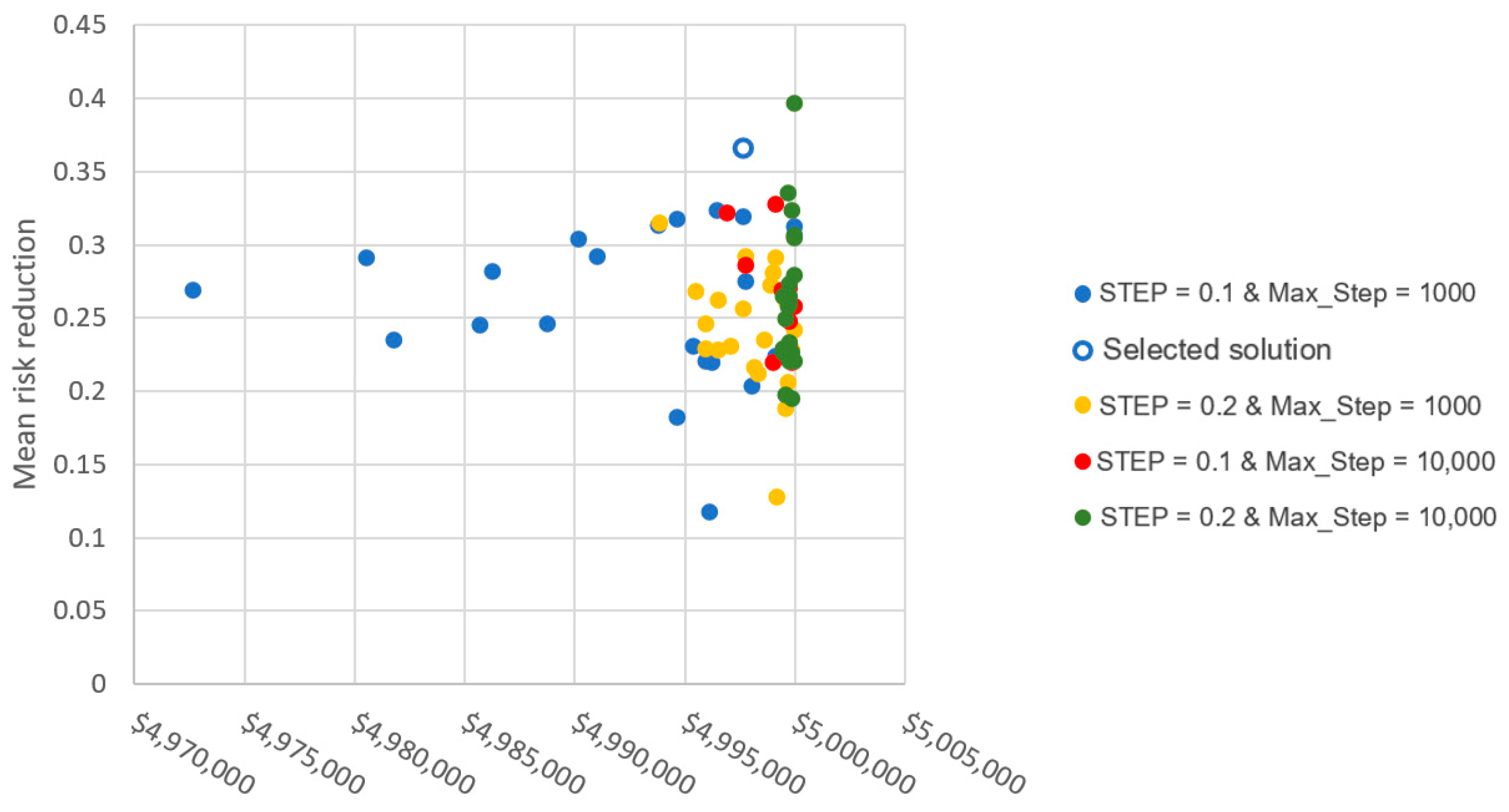

Optimization algorithms can be used to select the optimal intervention portfolio for set targets, for example the largest reduction in risk to water quality for a given investment. A spatial optimization algorithm creates portfolios of interventions and compares the risk reduction between intervention portfolios to arrive at an optimal or near optimal portfolio. This process of generating portfolios and calculating the risk reduction is computationally demanding when the number of spatial units and interventions is large and when there are multiple risks to trade off. There are several approaches to optimization which attempt to deal with a large solution space in order to reduce prolonged run-times.
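The growth of the solution space described above can be illustrated with a toy problem. The following is a minimal sketch, not the method used in the paper: the function names, the cost and risk-reduction tables, and the convention that option 0 means "no intervention" are all assumptions made for illustration. It counts the possible portfolios and, for a problem small enough to enumerate, finds the best one by exhaustive search.

```python
from itertools import product


def portfolio_count(n_units: int, n_options: int) -> int:
    """Number of portfolios when each spatial unit receives one of
    n_options interventions or no intervention at all."""
    return (n_options + 1) ** n_units


def best_portfolio(costs, reductions, budget):
    """Exhaustively search every portfolio (feasible only for tiny problems).

    costs[u][k] and reductions[u][k] are hypothetical values for option k
    in spatial unit u; option 0 is assumed to mean 'no intervention'.
    """
    best, best_reduction = None, -1.0
    for choice in product(*(range(len(c)) for c in costs)):
        cost = sum(costs[u][k] for u, k in enumerate(choice))
        if cost > budget:
            continue  # portfolio exceeds the fixed budget
        reduction = sum(reductions[u][k] for u, k in enumerate(choice))
        if reduction > best_reduction:
            best, best_reduction = choice, reduction
    return best, best_reduction


# Illustrative (made-up) data: 3 spatial units, 2 intervention options each.
costs = [[0, 10, 25], [0, 15, 30], [0, 5, 20]]
reductions = [[0.0, 2.0, 4.5], [0.0, 3.0, 5.0], [0.0, 1.0, 3.5]]

print(portfolio_count(3, 2))                        # 27
print(best_portfolio(costs, reductions, budget=40))  # ((2, 1, 0), 7.5)
print(len(str(portfolio_count(1000, 5))))           # digits in the count
```

With three units the search is trivial, but the count grows exponentially with the number of spatial units, which is why exhaustive enumeration is not practical at catchment scale and heuristic search is used instead.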

Genetic algorithms have been successfully applied to optimize the location of best management practices to control diffuse pollution sources [27,28], as well as in many other parts of the water supply industry [29,30]. However, genetic algorithms do not handle multiple intervention options and spatial complexity well [31,32]. An alternative to complete optimization is multi-criteria analysis, in which pre-developed scenarios that represent ‘likely’ optimal solutions, prepared through general rules, are compared [33]. This approach is simple and supposedly less resource demanding than a full optimization process, but the costs and benefits of the scenarios are largely unknown, as is how far the results would differ from an optimized result, and the approach becomes very inefficient for complex models with multiple interventions [34].

Simulated annealing is an optimization routine that has been applied to resource allocation [35], conservation planning tools [20,21] and planning catchment erosion mitigation [15]. Simulated annealing is a probabilistic technique for finding the global optimum in a search space. The approach is to select a potential solution from the search space, compare it to the previously generated best performing solution and then reject the potential solution or replace the best performing solution with the potential solution. Subsequent solutions are based on minor variations of the current best performing solution. To avoid local minima, the simulated annealing approach initially explores the broader solution space before focusing on minima [32]. The approach is favoured due to its ability to deal with multiple intervention options and spatial complexity, and because it can reduce run-times when selecting an optimal set of interventions [31,34].
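The procedure described above can be sketched in a few lines. This is a generic simulated annealing loop under stated assumptions, not the CIDSS implementation: the function name, the toy cost and risk-reduction tables, the geometric cooling schedule and the Metropolis acceptance rule (accepting a worse candidate with probability exp(Δ/T)) are illustrative choices. Option 0 is assumed to mean "no intervention" at zero cost, so the starting portfolio is always within budget.

```python
import math
import random


def simulated_annealing(costs, reductions, budget, n_iter=20000,
                        t_start=1.0, t_end=1e-3, seed=0):
    """Search for a high risk-reduction portfolio within a fixed budget.

    costs[u][k] / reductions[u][k]: hypothetical cost and risk reduction
    of option k in spatial unit u (option 0 = no intervention, assumed free).
    """
    rng = random.Random(seed)
    n_units = len(costs)

    def evaluate(portfolio):
        cost = sum(costs[u][k] for u, k in enumerate(portfolio))
        reduction = sum(reductions[u][k] for u, k in enumerate(portfolio))
        return cost, reduction

    current = [0] * n_units                 # start with no interventions
    _, current_red = evaluate(current)
    best, best_red = list(current), current_red

    for i in range(n_iter):
        # Geometric cooling: high temperature early (broad exploration),
        # low temperature late (refinement around good solutions).
        t = t_start * (t_end / t_start) ** (i / max(1, n_iter - 1))

        # Minor variation: change the option in one randomly chosen unit.
        candidate = list(current)
        u = rng.randrange(n_units)
        candidate[u] = rng.randrange(len(costs[u]))

        cost, red = evaluate(candidate)
        if cost > budget:
            continue                        # reject over-budget portfolios

        delta = red - current_red
        # Always accept improvements; accept worse candidates with a
        # probability that shrinks as the temperature falls.
        if delta >= 0 or rng.random() < math.exp(delta / t):
            current, current_red = candidate, red
            if red > best_red:
                best, best_red = list(candidate), red

    return tuple(best), best_red


# Illustrative (made-up) data: 3 spatial units, 2 intervention options each.
costs = [[0, 10, 25], [0, 15, 30], [0, 5, 20]]
reductions = [[0.0, 2.0, 4.5], [0.0, 3.0, 5.0], [0.0, 1.0, 3.5]]
portfolio, reduction = simulated_annealing(costs, reductions, budget=40)
print(portfolio, reduction)
```

Tracking the best portfolio seen separately from the current one means occasional acceptance of worse candidates cannot lose a good solution already found. On real problems the result is near-optimal rather than guaranteed optimal, and the cooling schedule and iteration count would need tuning.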

For DSSs to successfully improve catchment water quality, the DSS framework must be made relevant for the bulk water suppliers and provide interpretable and meaningful direction for those responsible for implementing the actions [35,36]. Environmental risk assessments have been used extensively to understand the relative impacts of multiple stressors on a selected environmental value. A relative risk framework was used to provide an estimate of the risk of contaminants from different catchment areas to the iconic Great Barrier Reef (GBR) in Australia based on anthropogenic load score, reef condition score and reef exposure score [37]. Each parameter was given a score between 1 and 5 based on data ranges and assumed relationships between the value and degree of risk. This application allowed for the identification of suspended sediment, dissolved inorganic nitrogen and PS-II herbicides as most likely to pose a threat to the quality of run-off water entering the GBR ecosystem [37]. Given that data and resource limitations can lead to uncertainty of the absolute values of modelled contaminant loads [33,38], the strength of the relative risk assessment is that it can allow for the different sources of contaminants to be compared against particular land use types [37].
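The scoring step in a relative risk framework of this kind can be sketched as follows. This is an illustration only: the breakpoints, parameter names, catchment areas and the choice to combine scores by simple addition are assumptions, not values or rules taken from the cited assessment.

```python
from bisect import bisect_right


def score_1_to_5(value, breakpoints):
    """Map a raw value to a 1-5 score using four ascending breakpoints.

    Values below the first breakpoint score 1; values at or above the
    last breakpoint score 5.
    """
    if len(breakpoints) != 4 or list(breakpoints) != sorted(breakpoints):
        raise ValueError("expected four ascending breakpoints")
    return bisect_right(breakpoints, value) + 1


# Hypothetical data ranges for two parameters (not from the source).
breaks = {
    "load": [50, 100, 200, 400],
    "exposure": [0.1, 0.3, 0.5, 0.7],
}

# Hypothetical catchment areas with raw parameter values.
areas = {
    "upper": {"load": 30, "exposure": 0.05},
    "mid": {"load": 150, "exposure": 0.4},
    "lower": {"load": 500, "exposure": 0.6},
}

# Combine parameter scores by summation (an assumed combination rule).
relative_risk = {
    name: sum(score_1_to_5(values[p], breaks[p]) for p in breaks)
    for name, values in areas.items()
}
ranking = sorted(relative_risk, key=relative_risk.get, reverse=True)

print(relative_risk)  # {'upper': 2, 'mid': 6, 'lower': 9}
print(ranking)        # ['lower', 'mid', 'upper']
```

Because every parameter is reduced to the same 1 to 5 scale, areas and contaminants can be ranked against each other even when the absolute loads are uncertain.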

In southeast Queensland, there are over 30 WTPs supplying a population of over 3.4 million with a median growth rate of 2% [39]. Each of the WTPs has a different capability for treating bacteria, protozoa, viruses and TSS. Given that determining precise loads of TSS and pathogens would be unrealistic for the 1.8 M ha of southeast Queensland water supply catchments, a relative risk framework has been adopted to identify and locate the sources of the priority contaminants that pose risks to the quality of water received at a specific WTP. Furthermore, the risk framework approach enables comparison between the contaminants for each WTP, as well as across the water supply region.

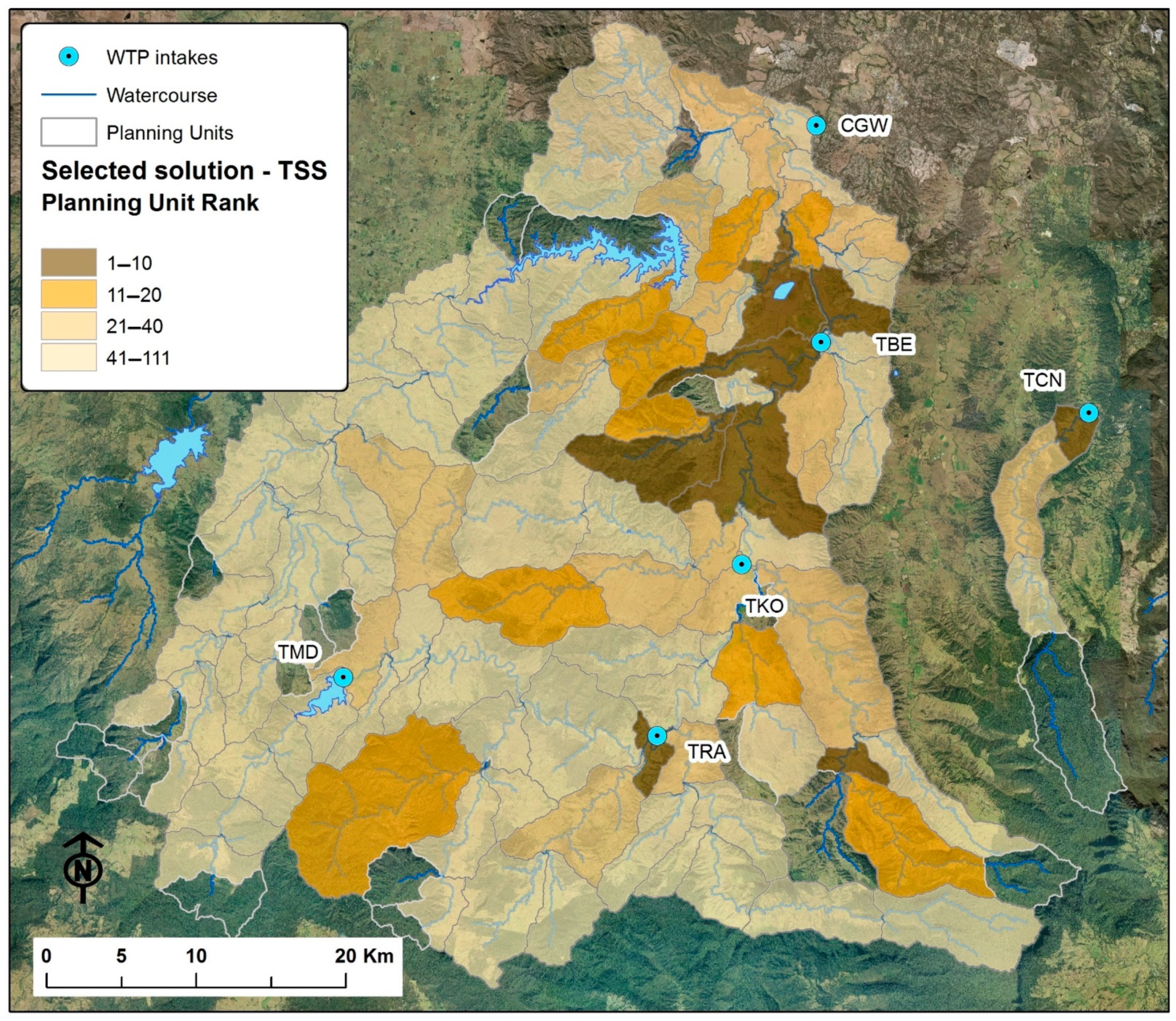

The aim of the paper is to outline a new approach for combining model and survey data on processes hazardous to drinking water quality (TSS, bacteria, viruses and protozoa) in open catchments using a risk framework, and to demonstrate how spatial optimisation of mitigation (intervention) measures is applied to reduce the highest risks to WTP intake based on intervention cost, efficacy and connectivity between the hazard source and the WTP. Herein, the paper describes Seqwater’s Catchment Investment Decision Support System (CIDSS) and provides a case study of the Logan-Albert Catchment. The case study catchment analysis addresses four questions: (1) Can drinking water risk from bacteria, protozoa and TSS be reduced in the catchment and at what cost? (2) If so, where should funds be invested and what type of mitigation measures are required to achieve risk reduction for a given budget? (3) How does one prioritise the on-ground work program based on the optimal solution of mitigation measures? (4) What are the hazard treatment challenges when identifying new drinking water sources in highly developed catchments?

2. Study Area

The CIDSS is being applied to Seqwater’s source catchments in southeast Queensland, Australia (Figure 1). The region has a subtropical climate with average summer and winter temperatures of 24 °C and 14 °C respectively. Annual and seasonal rainfall are variable, with most rainfall occurring in summer and autumn. As a result, river discharge regimes have very high hydrological variability [40]. Drinking water is sourced from seven coastal catchments, each with one or more nested off-takes for water treatment located either along a river reach or within reservoirs. Additionally, there are two groundwater bores and a desalination plant used for drinking water supply.

The combined catchment area for the surface source water is ~1.8 million ha of which Seqwater owns <5%. Approximately 70% of the source catchments is used for agriculture, dominated by livestock industries, and only 22% of the source catchments remains as natural environment. The catchments also include urban, peri-urban and rural residences where wastewater treatment varies from old OSFs (e.g., septic tanks) to high-capacity STPs for urban developments. Stormwater generally has low levels of treatment across the source catchment areas.

The CIDSS has been populated for all 30 WTPs and associated source catchments in SEQ. However, this paper focuses on a small subset (six WTPs) and their source catchments to demonstrate the application of the approach. The source water catchment demonstrated and discussed in this paper is the Logan-Albert catchment, which includes the Logan River and Canungra Creek (

Figure 1C). Mean annual rainfall for mid (Beaudesert) and upper catchment (Lamington National Park) are 916 mm and 1580 mm respectively. Logan River currently has four off-take locations to supply WTPs to service communities along the catchment valley (

Table 1). One-fifth of off-take is proposed in the Lower Logan River to accommodate growing water supply demand and water quality treatment challenges posed by the catchment. The proposed WTP would see the source catchment area increased by 996 km

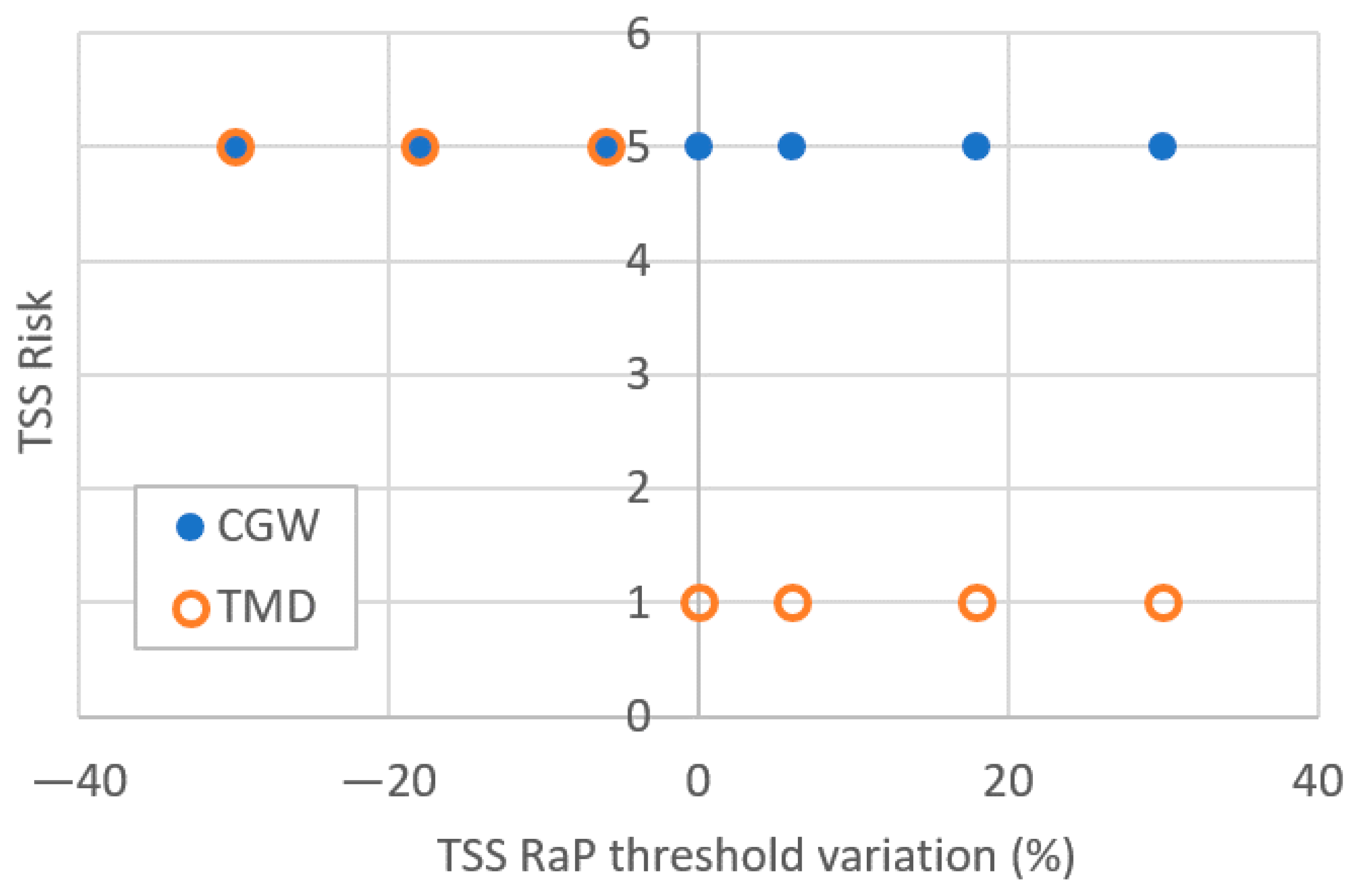

2 and include a 102,884 ML reservoir (Wyaralong Dam). Canungra Creek has a single off-take for WTP to service the township of Canungra. The modelling scenarios detailed below consider water quality hazards impacting all six WTP (including proposed new WTP with an off-take at Cedar Grove Weir (CGW)). The catchment area across which interventions can be applied to reduce water quality risk is 2473 km

2 and comprises predominantly livestock grazing and also public lands for nature conservation in some headwaters, cropping on floodplains and residential areas (

Figure 1C).