Quantile Mixture and Probability Mixture Models in a Multi-Model Approach to Flood Frequency Analysis

Abstract

1. Introduction

- adding a new way of aggregating distributions: the first variant of aggregation, presented by the authors in [31,32,33], is based on averaging the values of same-order quantiles from the candidate distributions and is referred to here as the mean magnitude (MM) variant. In this paper, it is compared with a new variant, named mean frequency (MF), which is based on averaging the non-exceedance probabilities (p) of a given flow value over the candidate distributions;

- introducing an analysis of the accuracy of the aggregated quantile for both ways of aggregation: until now, such an analysis has been missing from the aggregation procedure. Here, the analytical form of the asymptotic standard error of the aggregated quantile is derived for both aggregation variants. Besides the classic standard error, a version that includes the bias of the quantiles from the candidate distributions relative to the aggregated quantile is also presented.
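The accuracy analysis described above rests on first-order (delta method) error propagation. As a rough sketch, not a reproduction of the paper's Equations (17) to (24), and assuming the weights w_i are treated as fixed (their own sampling variability ignored), the variances of the two aggregated quantiles can be written as follows, where x_{p,i} is the p-quantile estimated from candidate distribution F_i with density f_i:

```latex
% Sketch only: weights w_i treated as fixed; k candidate distributions F_i with densities f_i.
% MM: weighted mean of same-order quantiles
\hat{x}_p^{MM} = \sum_{i=1}^{k} w_i\,\hat{x}_{p,i},
\qquad
\operatorname{Var}\bigl(\hat{x}_p^{MM}\bigr) \approx
  \sum_{i=1}^{k}\sum_{j=1}^{k} w_i w_j \operatorname{Cov}\bigl(\hat{x}_{p,i},\hat{x}_{p,j}\bigr)

% Two candidates with correlation \rho_{12} between their quantile estimates
\operatorname{SE}\bigl(\hat{x}_p^{MM}\bigr) \approx
  \sqrt{w_1^2\,\mathrm{SE}_1^2 + w_2^2\,\mathrm{SE}_2^2 + 2\,w_1 w_2\,\rho_{12}\,\mathrm{SE}_1\,\mathrm{SE}_2}

% MF: x_p^{MF} solves G(x) = \sum_i w_i F_i(x) = p, with g(x) = \sum_i w_i f_i(x).
% Linearizing G(\hat{x}; \hat\theta) = p and using F_i(\hat{x}_{p_i,i}) = p_i with p_i = F_i(x_p^{MF}):
\operatorname{Var}\bigl(\hat{x}_p^{MF}\bigr) \approx
  \frac{1}{g\bigl(x_p^{MF}\bigr)^{2}}
  \sum_{i=1}^{k}\sum_{j=1}^{k} w_i w_j\, f_i\bigl(x_p^{MF}\bigr)\, f_j\bigl(x_p^{MF}\bigr)
  \operatorname{Cov}\bigl(\hat{x}_{p_i,i},\hat{x}_{p_j,j}\bigr)
```

In the MF expression, each candidate contributes through its quantile at its own probability level p_i = F_i(x_p^MF), which generally differs from p; when all candidates coincide, p_i = p and f_i = g, and the expression reduces to the MM one.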

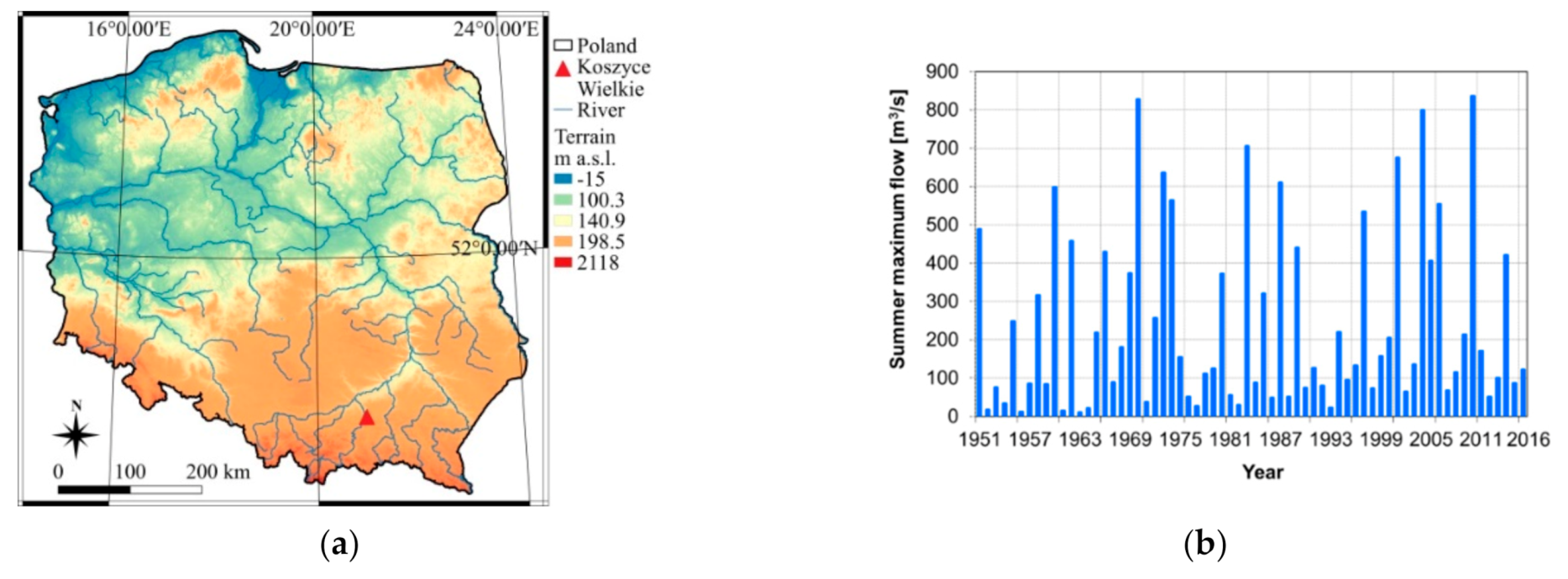

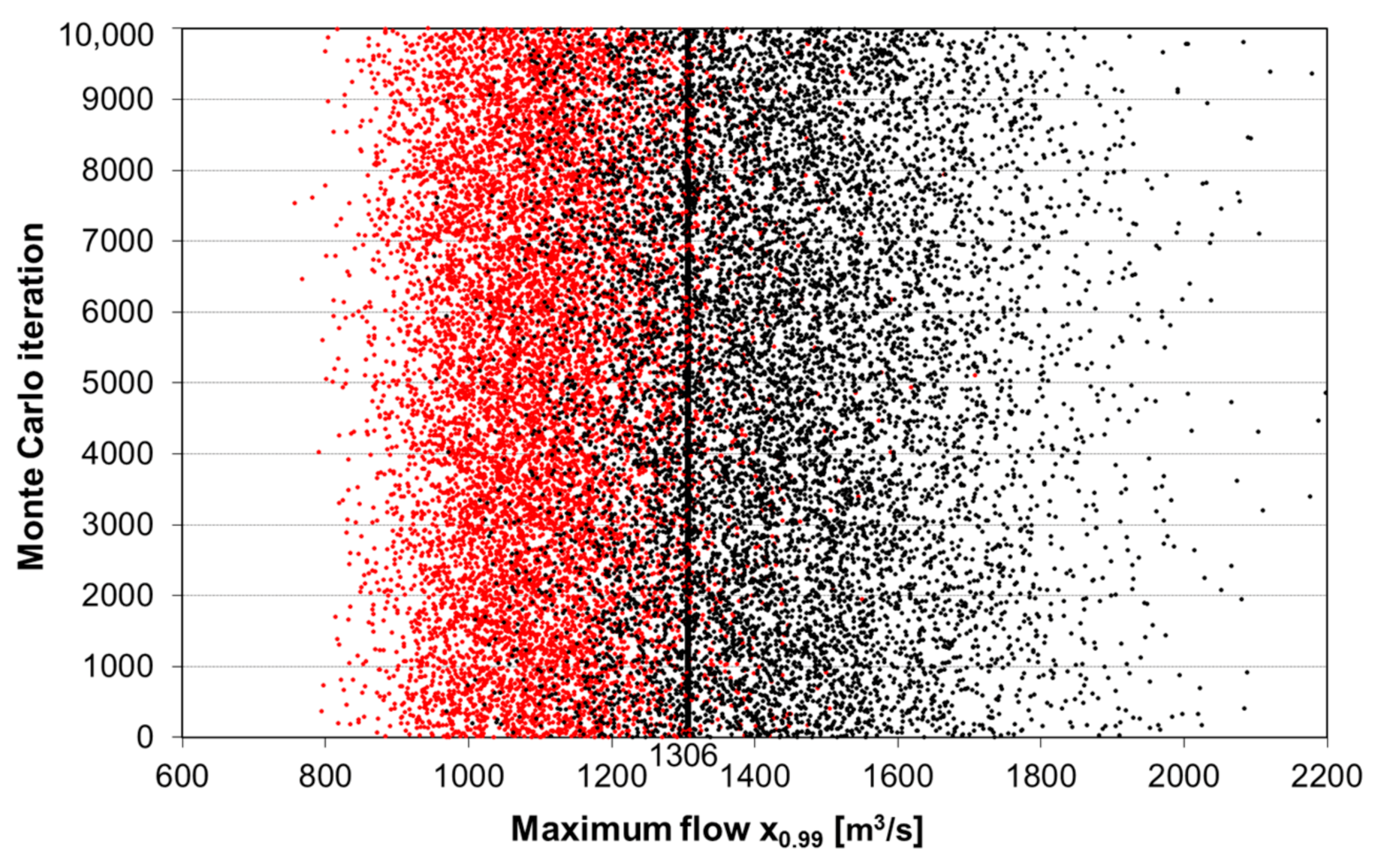

2. Case Study—Consequences of the Classical Approach to FFA

3. Methods

3.1. Probability Distributions for Modeling of Maximum Flows

3.2. Two Aggregation Schemes

- aggregation by a mixture of the non-exceedance probabilities from the candidate distributions, obtained for a fixed quantile value (MF, mean frequency), which is our new and original proposal.
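The two schemes can be compared numerically with a minimal sketch in Python using SciPy. The candidate distributions (a gamma and a log-normal model), their parameters and the weights below are hypothetical placeholders, not values from the paper. MM averages the p-quantiles directly, while MF averages the CDFs and solves the resulting equation for x with Brent's method.

```python
from scipy import stats
from scipy.optimize import brentq

# Hypothetical candidate distributions F_i and weights w_i (illustration only).
candidates = [
    stats.gamma(a=3.0, scale=100.0),    # gamma candidate
    stats.lognorm(s=0.6, scale=250.0),  # log-normal candidate
]
weights = [0.4, 0.6]                    # assumed weights, summing to 1


def mm_quantile(p):
    """MM: weighted mean of the candidates' p-order quantiles."""
    return sum(w * d.ppf(p) for w, d in zip(weights, candidates))


def mf_cdf(x):
    """MF: weighted mean of the candidates' non-exceedance probabilities at x."""
    return sum(w * d.cdf(x) for w, d in zip(weights, candidates))


def mf_quantile(p):
    """MF quantile: root of mf_cdf(x) = p.

    The root is bracketed by the smallest and largest candidate p-quantiles,
    because every F_i is at most p at the former and at least p at the latter.
    """
    qs = [d.ppf(p) for d in candidates]
    return brentq(lambda x: mf_cdf(x) - p, min(qs), max(qs))


for p in (0.5, 0.9, 0.99, 0.999):
    print(f"p = {p}: MM = {mm_quantile(p):8.1f}, MF = {mf_quantile(p):8.1f}")
```

In this example the gap between the two values widens at high p, where the heavier-tailed log-normal candidate dominates the upper tail of the MF mixture.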

3.3. Aggregation by Quantile Mixture—Mean Magnitude (MM)

3.4. Aggregation by Probability Mixture—Mean Frequency (MF)

4. Accuracy of Aggregated Quantile

4.1. Analytical vs. Numerical Form of Standard Error

4.2. Asymptotic Standard Error of Quantiles from the MM Method

4.3. Simulation Experiment on the Accuracy of Standard Error Formulas

- generating a 1000-element data series from a given probability distribution that serves as the population distribution;

- fitting two different distributions to the generated sample and determining their weights, their p-order quantiles and the aggregated quantile according to Equation (1);

- generating 10,000 N-element data series from the population distribution;

- fitting the two distributions selected in the second step to each data series and determining the weights of both distributions, the value of the aggregated quantile (Equation (1)), its asymptotic standard error (Equations (17) and (19)) and the confidence interval at a confidence level of 68.3%, called the one-sigma confidence interval [39,51].
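The last two steps above amount to a Monte Carlo check of how often the one-sigma interval covers the population quantile. The sketch below shows only that coverage calculation. To stay short, it uses a single log-normal model with the textbook asymptotic standard error of its maximum likelihood quantile estimate instead of the aggregated quantile and Equations (17) and (19); the population parameters, N and the number of repetitions are assumptions chosen for illustration.

```python
import numpy as np
from scipy import stats


def one_sigma_coverage(n=100, reps=2000, p=0.99, mu=5.0, sigma=0.5, seed=1):
    """Share of one-sigma intervals x_hat +/- SE that contain the population p-quantile.

    Assumed setup: log-normal population with log-mean mu and log-sd sigma, a single
    log-normal model fitted by maximum likelihood, and the delta-method SE of its
    quantile (not the aggregated-quantile formulas of the paper).
    """
    rng = np.random.default_rng(seed)
    z = stats.norm.ppf(p)
    x_true = np.exp(mu + z * sigma)                  # population p-quantile
    logs = rng.normal(mu, sigma, size=(reps, n))     # logs of `reps` samples of size n
    mu_hat = logs.mean(axis=1)                       # ML estimate of mu per sample
    sigma_hat = logs.std(axis=1)                     # ML estimate of sigma (ddof=0)
    x_hat = np.exp(mu_hat + z * sigma_hat)           # estimated p-quantile per sample
    # Var(ln x_hat) ~ sigma^2 * (1 + z^2 / 2) / n, hence SE(x_hat) ~ x_hat * sqrt(that)
    se = x_hat * sigma_hat * np.sqrt((1.0 + z**2 / 2.0) / n)
    covered = np.abs(x_hat - x_true) <= se
    return float(covered.mean())


print(f"coverage = {one_sigma_coverage():.3f} (nominal 0.683)")
```

A coverage close to 68.3% suggests that the standard error formula is adequate for the given sample size; values well below it indicate that the formula underestimates the uncertainty.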

4.4. Asymptotic Standard Error of Quantile from MF Method

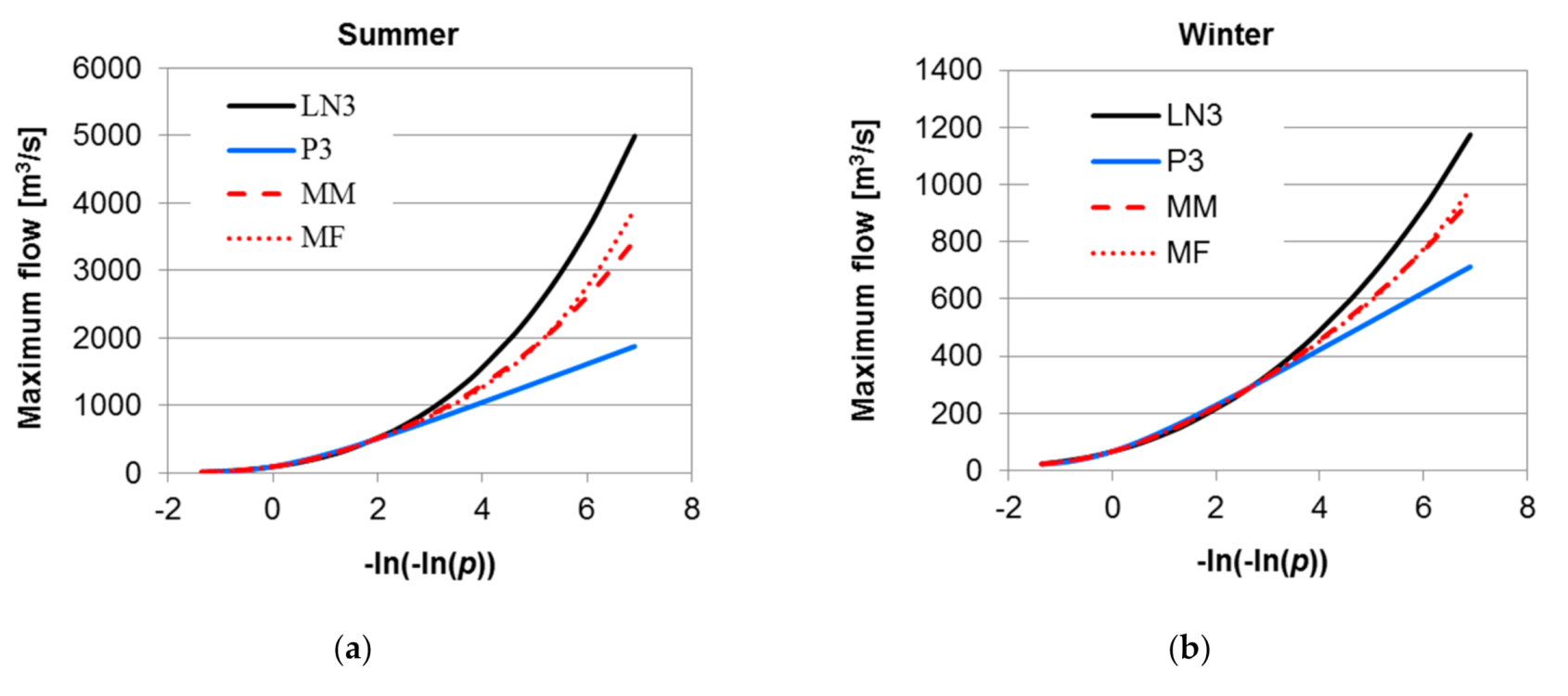

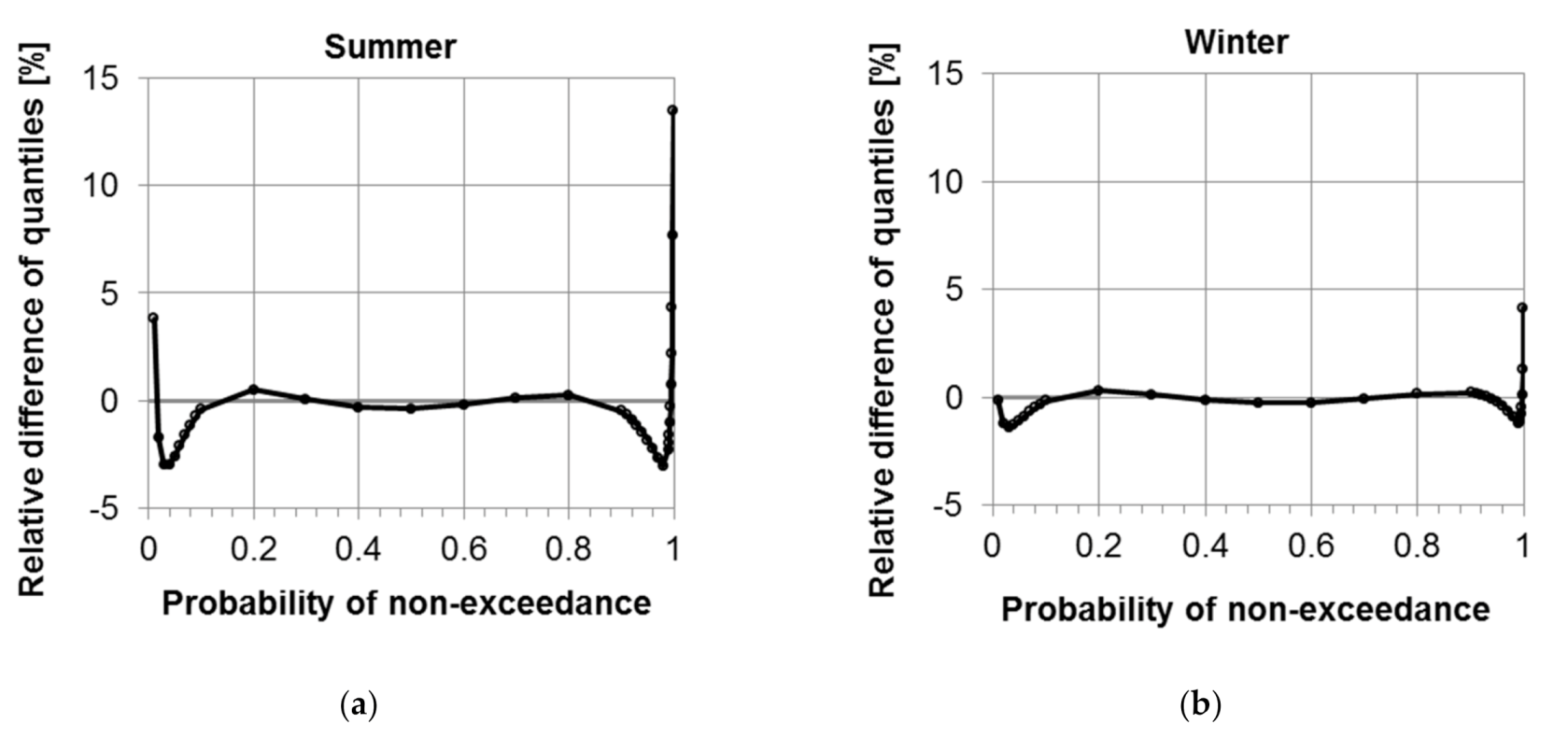

5. Case Study of MM and MF Methods of Distribution Aggregation

6. Conclusions

- The MM method gives the p-order quantile of the aggregated distribution in a simple, explicit form (Equation (1)), while the MF method requires a numerical solution of Equation (6), assuming .

- In the MF method, the cumulative distribution function and the density function of the aggregated distribution have an explicit form; in the MM method, these functions can be determined only numerically.

- Calculating the accuracy of the p-order quantile in the MM method requires the variances of the same-order quantiles of the candidate distributions, whereas the MF method requires the variances of quantiles over a wider range of probability values.

- Aggregation by quantile mixture (MM) leads to slightly different results than aggregation by probability mixture (MF), with respect to both the p-order quantile value and its uncertainty.

- The largest difference between the quantile values from the two approaches occurs at high non-exceedance probabilities, i.e., in the upper tail of the model distributions.

- In the probability range containing the main mass of the distribution, where the density functions are similar, both aggregation methods give approximately consistent results.

- The derived analytical formulas for the asymptotic standard error of MM and MF aggregation can be used for an approximate assessment of the accuracy of the p-order quantile, competing effectively with simulation techniques for this assessment.

- A more accurate estimate of the 0.99 quantile accuracy is obtained when the bias of quantiles of candidate distributions is included.

- Taking into account the bias of the quantile from an individual distribution Fi relative to the aggregated quantile makes it possible to reduce the bias of the quantile from Fi relative to the unknown population quantile.
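The conclusion that MF has an explicit aggregated CDF and density, while MM provides them only numerically, can be illustrated with a short sketch. It uses hypothetical gamma and log-normal candidates with assumed weights. The MM density relies on the standard identity f(x) = 1/Q'(F(x)) for a distribution defined by its quantile function Q, with Q'(p) equal to the sum of w_i / f_i(Q_i(p)).

```python
from scipy import stats
from scipy.optimize import brentq

# Hypothetical candidates and weights (illustration only, not fitted to data).
candidates = [stats.gamma(a=3.0, scale=100.0), stats.lognorm(s=0.6, scale=250.0)]
weights = [0.4, 0.6]


def mf_pdf(x):
    """MF: explicit aggregated density, the weighted sum of candidate densities."""
    return sum(w * d.pdf(x) for w, d in zip(weights, candidates))


def mm_ppf(p):
    """MM: explicit aggregated quantile function."""
    return sum(w * d.ppf(p) for w, d in zip(weights, candidates))


def mm_cdf(x):
    """MM CDF: no closed form, so solve mm_ppf(p) = x for p numerically."""
    return brentq(lambda p: mm_ppf(p) - x, 1e-12, 1.0 - 1e-12)


def mm_pdf(x):
    """MM density via f(x) = 1 / Q'(F(x)), where Q'(p) = sum_i w_i / f_i(Q_i(p))."""
    p = mm_cdf(x)
    dq_dp = sum(w / d.pdf(d.ppf(p)) for w, d in zip(weights, candidates))
    return 1.0 / dq_dp


for x in (150.0, 300.0, 600.0, 1500.0):
    print(f"x = {x:6.0f}: MF pdf = {mf_pdf(x):.3e}, MM pdf = {mm_pdf(x):.3e}")
```

Every MM evaluation requires a root search, whereas the MF density is a direct weighted sum; this asymmetry mirrors the situation for quantiles, where MM is explicit and MF needs a numerical solution.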

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Blöschl, G.; Hall, J.; Parajka, J.; Perdigão, R.A.P.; Merz, B.; Arheimer, B.; Aronica, G.T.; Bilibashi, A.; Bonacci, O.; Borga, M.; et al. Changing climate shifts timing of European floods. Science 2017, 357, 588–590.

2. Blöschl, G.; Hall, J.; Viglione, A.; Perdigão, R.A.P.; Parajka, J.; Merz, B.; Lun, D.; Arheimer, B.; Aronica, G.T.; Bilibashi, A.; et al. Changing climate both increases and decreases European river floods. Nature 2019, 573, 108–111.

3. Didovets, I.; Krysanova, V.; Bürger, G.; Snizhko, S.; Balabukh, V.; Bronstert, A. Climate change impact on regional floods in the Carpathian region. J. Hydrol. Reg. Stud. 2019, 22, 100590.

4. Kundzewicz, Z.W. Changes in Flood Risk in Europe; CRC Press: London, UK, 2019.

5. Jamshed, A.; Birkmann, J.; McMillan, J.M.; Rana, I.A.; Lauer, H. The Impact of Extreme Floods on Rural Communities: Evidence from Pakistan. In Climate Change, Hazards and Adaptation Options; Leal Filho, W., Nagy, G., Borga, M., Chávez Muñoz, P., Magnuszewski, A., Eds.; Springer: Cham, Switzerland, 2020; pp. 585–613.

6. Cunnane, C. Operational Hydrology Report No. 33: Statistical Distributions for Flood Frequency Analysis; World Meteorological Organization: Geneva, Switzerland, 1989; ISBN 9789263107183.

7. Rao, A.R.; Hamed, K.H. Flood Frequency Analysis; CRC Press: Boca Raton, FL, USA, 2000; ISBN 9780849300837.

8. Strupczewski, W.; Kochanek, K.; Bogdanowicz, E.; Markiewicz, I. On seasonal approach to flood frequency modelling. Part I: Two-component distribution revisited. Hydrol. Process. 2011, 26, 705–716.

9. Kochanek, K.; Strupczewski, W.G.; Bogdanowicz, E. On seasonal approach to flood frequency modelling. Part II: Flood frequency analysis of Polish rivers. Hydrol. Process. 2011, 26, 717–730.

10. Strupczewski, W.; Kochanek, K.; Feluch, W.; Bogdanowicz, E.; Singh, V.P. On seasonal approach to nonstationary flood frequency analysis. Phys. Chem. Earth Parts A/B/C 2009, 34, 612–618.

11. Petrow, T.; Merz, B. Trends in flood magnitude, frequency and seasonality in Germany in the period 1951–2002. J. Hydrol. 2009, 371, 129–141.

12. Ozga-Zielinska, M.; Brzezinski, J.; Ozga-Zielinski, B. Guidelines for Flood Frequency Analysis; Long Measurement Series of River Discharge WMO HOMS Component I81.3.01; Institute of Meteorology and Water Management: Warsaw, Poland, 2005.

13. Ozga-Zielińska, M.; Brzeziński, J.; Ozga-Zieliński, B. Zasady Obliczania Największych Przepływów Rocznych o Określonym Prawdopodobieństwie Przewyższenia przy Projektowaniu Obiektów Budownictwa Hydrotechnicznego. Długie Ciągi Pomiarowe Przepływów. [Guidelines for Determining the Annual Maximum Flows with a Certain Probability of Exceedance in the Design of Hydrotechnical Structures. Long Data Series of Flows]; Materiały Badawcze, Seria: Hydrologia i Oceanologia, 27; IMGW: Warszawa, Poland, 1999. (In Polish)

14. Strupczewski, W. Częstość wielkich wód [Frequency of high waters]. Prz. Geof. X (XVIII) 1965, 1, 83–93. (In Polish)

15. Debele, S.E.; Bogdanowicz, E.; Strupczewski, W. The impact of seasonal flood peak dependence on annual maxima design quantiles. Hydrol. Sci. J. 2017, 62, 1603–1617.

16. Salvadori, G.; De Michele, C. Frequency analysis via copulas: Theoretical aspects and applications to hydrological events. Water Resour. Res. 2004, 40, 1–17.

17. Durrans, S.R.; Eiffe, M.A.; Thomas, W.O.; Goranflo, H.M. Joint Seasonal/Annual Flood Frequency Analysis. J. Hydrol. Eng. 2003, 8, 181–189.

18. Ye, W.; Wang, C.; Xu, X. On seasonal and semi-annual approach for flood frequency analysis. Stoch. Environ. Res. Risk Assess. 2017, 32, 51–62.

19. U.S. Water Resources Council. Guidelines for Determining Flood Flow Frequency; Bulletin 17B, Hydrology Committee; U.S. Water Resources Council: Washington, DC, USA, 1982.

20. FEH. Flood Estimation Handbook 3: Statistical Procedures for Flood Frequency Estimation; Institute of Hydrology: Wallingford, UK, 1999; ISBN 9781906698003.

21. Griffis, V.W.; Stedinger, J.R. Evolution of Flood Frequency Analysis with Bulletin 17. J. Hydrol. Eng. 2007, 12, 283–297.

22. Zasady Obliczania Największych Przepływów Rocznych o Określonym Prawdopodobieństwie Pojawiania się Przy Projektowaniu Urządzeń Inżynierskich i Urządzeń Hydrotechnicznych Gospodarki Wodnej w Zakresie Budownictwa Hydrotechnicznego [Principles for Calculating the Largest Annual Flows with a Given Probability of Occurrence in the Design of Engineering Facilities and Hydrotechnical Water Management Facilities in the Field of Hydrotechnical Construction]; Central Office of Water Management: Warsaw, Poland, 1969. (In Polish)

23. Banasik, K.; Wałęga, A.; Węglarczyk, S.; Więzik, B. Aktualizacja Metodyki Obliczania Przepływów i Opadów Maksymalnych o Określonym Prawdopodobieństwie Przewyższenia dla Zlewni Kontrolowanych i Niekontrolowanych Oraz Identyfikacji Modeli Transformacji Opadu w Odpływ [Updating of the Methodology for Determining Maximum Flows and Rainfall of a Set Probability of Exceedance for Controlled and Uncontrolled Catchments and Identification of Models of Transformation of Precipitation into Outflow]; National Water Management Board, Association of Polish Hydrologists: Warsaw, Poland, 2017. (In Polish)

24. Stedinger, J.R.; Vogel, R.M.; Foufoula-Georgiou, E. Frequency analysis of extreme events. In Handbook of Hydrology; Maidment, D.R., Ed.; McGraw Hill: New York, NY, USA, 1993; Chapter 18.

25. Bobée, B.; Rasmussen, P.F. Recent advances in flood frequency analysis. Rev. Geophys. 1995, 33, 1111–1116.

26. Rizwan, M.; Guo, S.; Xiong, F.; Yin, J. Evaluation of Various Probability Distributions for Deriving Design Flood Featuring Right-Tail Events in Pakistan. Water 2018, 10, 1603.

27. Strupczewski, W.; Mitosek, H.T.; Kochanek, K.; Singh, V.P.; Weglarczyk, S. Probability of correct selection from lognormal and convective diffusion models based on the likelihood ratio. Stoch. Environ. Res. Risk Assess. 2006, 20, 152–163.

28. Mitosek, H.; Strupczewski, W.; Singh, V.P. Three procedures for selection of annual flood peak distribution. J. Hydrol. 2006, 323, 57–73.

29. Ouarda, T.B.M.J.; Ashkar, F.; Bensaid, E.; Hourani, I. Statistical Distributions Used in Hydrology. Transformations and Asymptotic Properties; Scientific Report; Department of Mathematics, University of Moncton: Moncton, NB, Canada, 1994; p. 31.

30. El Adlouni, S.; Ouarda, T.B.M.J.; Bobée, B. Orthogonal projection L-moment estimators for three-parameter distributions. Adv. Appl. Stat. 2007, 7, 19–209.

31. Bogdanowicz, E. Podejście wielomodelowe w zagadnieniach estymacji kwantyli rozkładu wartości maksymalnych [Multimodel approach to estimation of extreme value distribution quantiles]. In Monografie Komitetu Inżynierii Środowiska Polskiej Akademii Nauk; Więzik, B., Ed.; Wydawnictwa KGW PAN: Warsaw, Poland, 2010; Volume 68, pp. 57–70. ISBN 9788389293930. (In Polish)

32. Markiewicz, I.; Strupczewski, W.G.; Bogdanowicz, E.; Kochanek, K. Generalized Exponential Distribution in Flood Frequency Analysis for Polish Rivers. PLoS ONE 2015, 10, e0143965.

33. Markiewicz, I.; Bogdanowicz, E.; Kochanek, K. On the Uncertainty and Changeability of the Estimates of Seasonal Maximum Flows. Water 2020, 12, 704.

34. Kendall, M.G.; Stuart, A. The Advanced Theory of Statistics, Vol. 2: Inference and Relationship; Charles Griffin and Company Limited: London, UK, 1973.

35. Kaczmarek, Z. Statistical Methods in Hydrology and Meteorology; Published for the Geological Survey; US Department of the Interior and the National Science Foundation: Washington, DC, USA; Foreign Scientific Publications Department of the National Centre for Scientific, Technical and Economic Information: Warsaw, Poland, 1977.

36. Akaike, H. A new look at the statistical model identification. IEEE Trans. Autom. Control 1974, 19, 716–723.

37. Wolfram, S. The Mathematica Book, 4th ed.; Wolfram Media, Cambridge University Press: Cambridge, UK, 1999; ISBN 0521643147.

38. Strupczewski, W.G.; Kochanek, K.; Markiewicz, I.; Bogdanowicz, E.; Weglarczyk, S.; Singh, V.P. On the tails of distributions of annual peak flow. Hydrol. Res. 2011, 42, 171–192.

39. Debele, S.E.; Strupczewski, W.G.; Bogdanowicz, E. A comparison of three approaches to non-stationary flood frequency analysis. Acta Geophys. 2017, 65, 863–883.

40. Hoeting, J.A.; Madigan, D.; Raftery, A.E.; Volinsky, C.T. Bayesian model averaging: A tutorial (with comments by M. Clyde, David Draper and E. I. George, and a rejoinder by the authors). Stat. Sci. 1999, 14, 382–417.

41. Laio, F.; Di Baldassarre, G.; Montanari, A. Model selection techniques for the frequency analysis of hydrological extremes. Water Resour. Res. 2009, 45.

42. Szulczewski, W.; Jakubowski, W. The Application of Mixture Distribution for the Estimation of Extreme Floods in Controlled Catchment Basins. Water Resour. Manag. 2018, 32, 3519–3534.

43. Strupczewski, W.G.; Singh, V.P.; Weglarczyk, S. Asymptotic bias of estimation methods caused by the assumption of false probability distribution. J. Hydrol. 2002, 258, 122–148.

44. Weglarczyk, S.; Strupczewski, W.G.; Singh, V.P. A note on the applicability of log-Gumbel and log-logistic probability distributions in hydrological analyses: II. Assumed pdf. Hydrol. Sci. J. 2002, 47, 123–137.

45. Markiewicz, I.; Strupczewski, W.G.; Kochanek, K. On accuracy of upper quantiles estimation. Hydrol. Earth Syst. Sci. 2010, 14, 2167–2175.

46. Gatnar, E. Podejście Wielomodelowe w Zagadnieniach Dyskryminacji i Regresji [A Multi-Model Approach to Issues of Discrimination and Regression]; Wydawnictwa Naukowe PWN: Warsaw, Poland, 2008. (In Polish)

47. Dorfman, R. A note on the delta-method for finding variance formulae. Biom. Bull. 1938, 1, 129–137.

48. Oehlert, G.W. A Note on the Delta Method. Am. Stat. 1992, 46, 27–29.

49. Kaczmarek, Z. Przedział ufności jako miara dokładności oszacowania przepływów powodziowych [Confidence interval as a measure of accuracy of estimation of flood flows]. Wiadomości Służby Hydrol. Meteorol. 1960, 7, 133–185. (In Polish)

50. Kite, G.W. Frequency and Risk Analysis in Hydrology; Water Resources Publications: Fort Collins, CO, USA, 1977.

51. Woodall, W.H.; Wheeler, D.J.; Chambers, D.S. Understanding Statistical Process Control. Technometrics 1986, 28, 402.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

| Distribution | Cumulative Distribution Function (CDF) |
|---|---|
| Gamma (Ga) | |
| Weibull (We) | |
| Inverse Gaussian (IG) | |
| Generalized exponential (GE) | |
| Log-normal (LN) | |

| Quantity | Value |
|---|---|
| Quantile in the GE population (m³/s) | 1306.43 |
| Quantile assuming Ga distribution, estimated by the maximum likelihood method (MLM) (m³/s) | 1205.34 |
| Quantile assuming LN distribution, estimated by MLM (m³/s) | 1391.19 |
| Weight of quantile from Ga distribution (−) (Equation (2)) | 0.232 |
| Weight of quantile from LN distribution (−) (Equation (2)) | 0.768 |
| Aggregated (Ga and LN) quantile (m³/s) | 1347.98 |
| Correlation coefficient of quantiles from Ga and LN distributions (−) | 0.96 |
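The aggregated value in the table can be checked by hand, assuming Equation (1) is the weighted mean of same-order quantiles described for the MM variant. The small difference from the reported 1347.98 m³/s is presumably due to the weights being rounded to three decimals.

```python
# Values from the table above (m3/s); weights as reported, rounded to three decimals.
x_pop = 1306.43                  # quantile in the GE population
x_ga, x_ln = 1205.34, 1391.19    # ML quantile estimates assuming Ga and LN
w_ga, w_ln = 0.232, 0.768        # weights of the two quantiles (Equation (2))

# MM aggregation, assumed here to be the weighted mean of same-order quantiles
x_mm = w_ga * x_ga + w_ln * x_ln
print(f"aggregated quantile: {x_mm:.2f} m3/s")  # -> aggregated quantile: 1348.07 m3/s

# Relative deviation of each quantile from the population value
for name, x in (("Ga", x_ga), ("LN", x_ln), ("MM", x_mm)):
    print(f"{name}: {100 * (x - x_pop) / x_pop:+.1f} %")
```

In this case the aggregated quantile lies closer to the population value than either candidate quantile.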

| Author | Probability of Coverage According to Equation (17) (%) | Probability of Coverage According to Equation (19) (%) |
|---|---|---|
| 1. Rao and Hamed [7] | 61.48 | 67.23 |
| 2. Kaczmarek [49] | 63.13 | 68.39 |
| 3. Banasik et al. [23] | 69.90 | 73.79 |

| Season | x0.99 LN3 (m³/s) | x0.99 P3 (m³/s) | x0.99 MM (m³/s) | x0.99 MF (m³/s) | SE(x0.99) LN3 (m³/s) | SE(x0.99) P3 (m³/s) | SE(x0.99) MM, Eq. (17) (m³/s) | SE(x0.99) MF, Eq. (23) (m³/s) | RMSE(x0.99) MM, Eq. (19) (m³/s) | RMSE(x0.99) MF, Eq. (24) (m³/s) |
|---|---|---|---|---|---|---|---|---|---|---|
| Summer | 2050 | 1220 | 1630 | 1600 | 565.9 | 193.7 | 380.9 | 379.5 | 581.4 | 547.0 |
| Winter | 599 | 482 | 541 | 534 | 124.5 | 66.3 | 95.4 | 92.9 | 112.8 | 108.1 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Markiewicz, I.; Bogdanowicz, E.; Kochanek, K. Quantile Mixture and Probability Mixture Models in a Multi-Model Approach to Flood Frequency Analysis. Water 2020, 12, 2851. https://doi.org/10.3390/w12102851

Markiewicz I, Bogdanowicz E, Kochanek K. Quantile Mixture and Probability Mixture Models in a Multi-Model Approach to Flood Frequency Analysis. Water. 2020; 12(10):2851. https://doi.org/10.3390/w12102851

Chicago/Turabian Style: Markiewicz, Iwona, Ewa Bogdanowicz, and Krzysztof Kochanek. 2020. "Quantile Mixture and Probability Mixture Models in a Multi-Model Approach to Flood Frequency Analysis" Water 12, no. 10: 2851. https://doi.org/10.3390/w12102851

APA Style: Markiewicz, I., Bogdanowicz, E., & Kochanek, K. (2020). Quantile Mixture and Probability Mixture Models in a Multi-Model Approach to Flood Frequency Analysis. Water, 12(10), 2851. https://doi.org/10.3390/w12102851