Modelling Reservoir Turbidity Using Landsat 8 Satellite Imagery by Gene Expression Programming

Abstract

1. Introduction

2. Materials and Methods

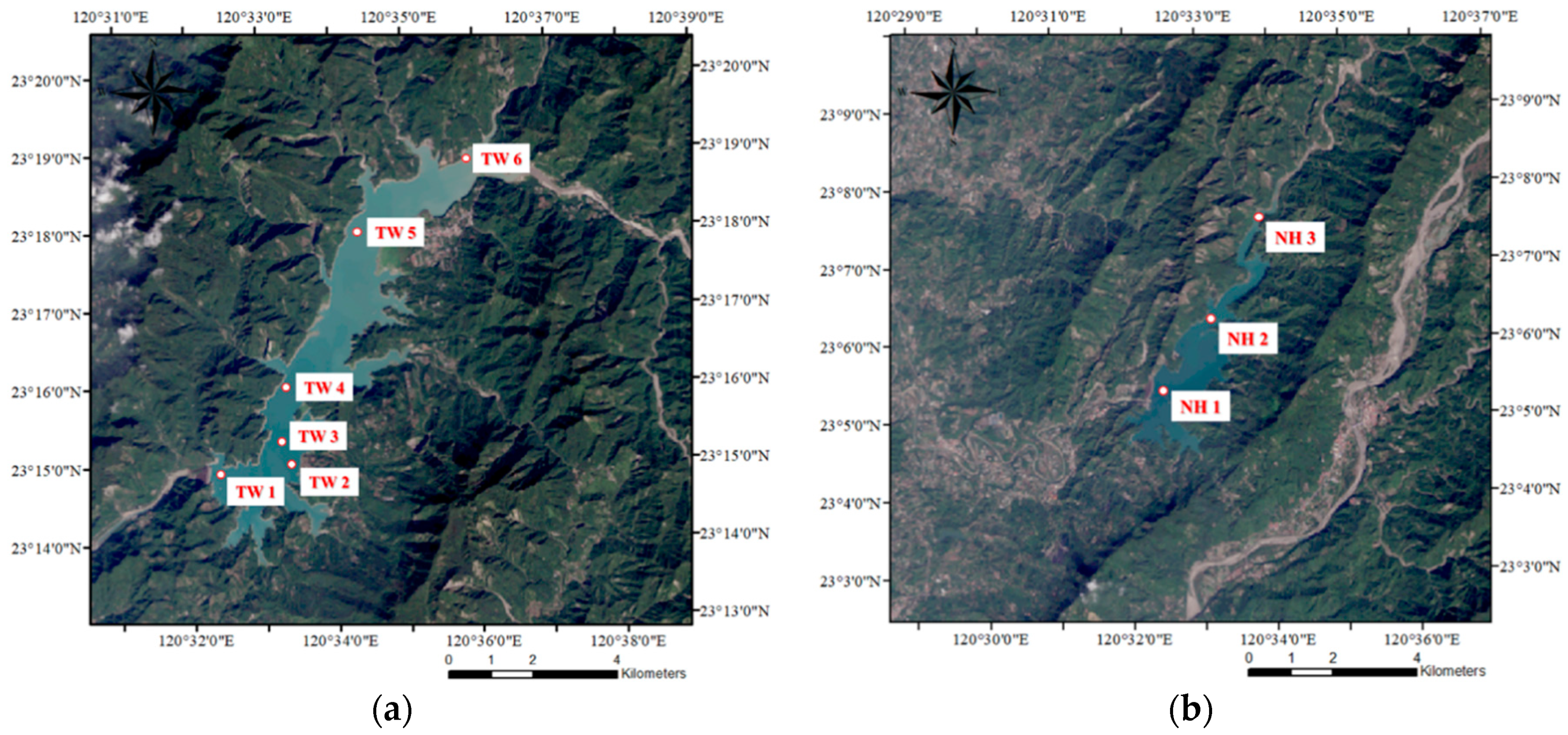

2.1. Study Area

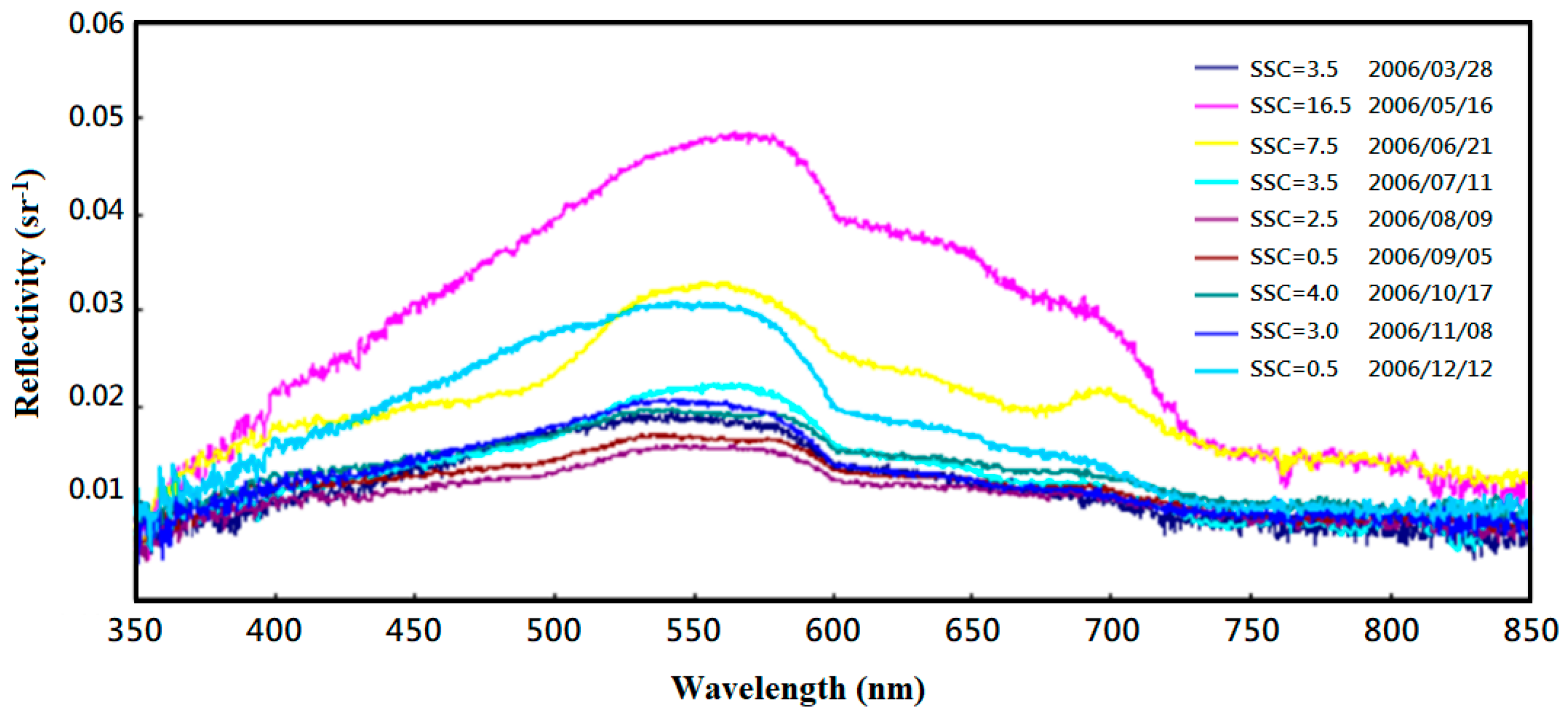

2.2. Data Collection

2.3. Turbidity Simulation Model Development

2.3.1. Multiple Linear Regression (MLR) Model

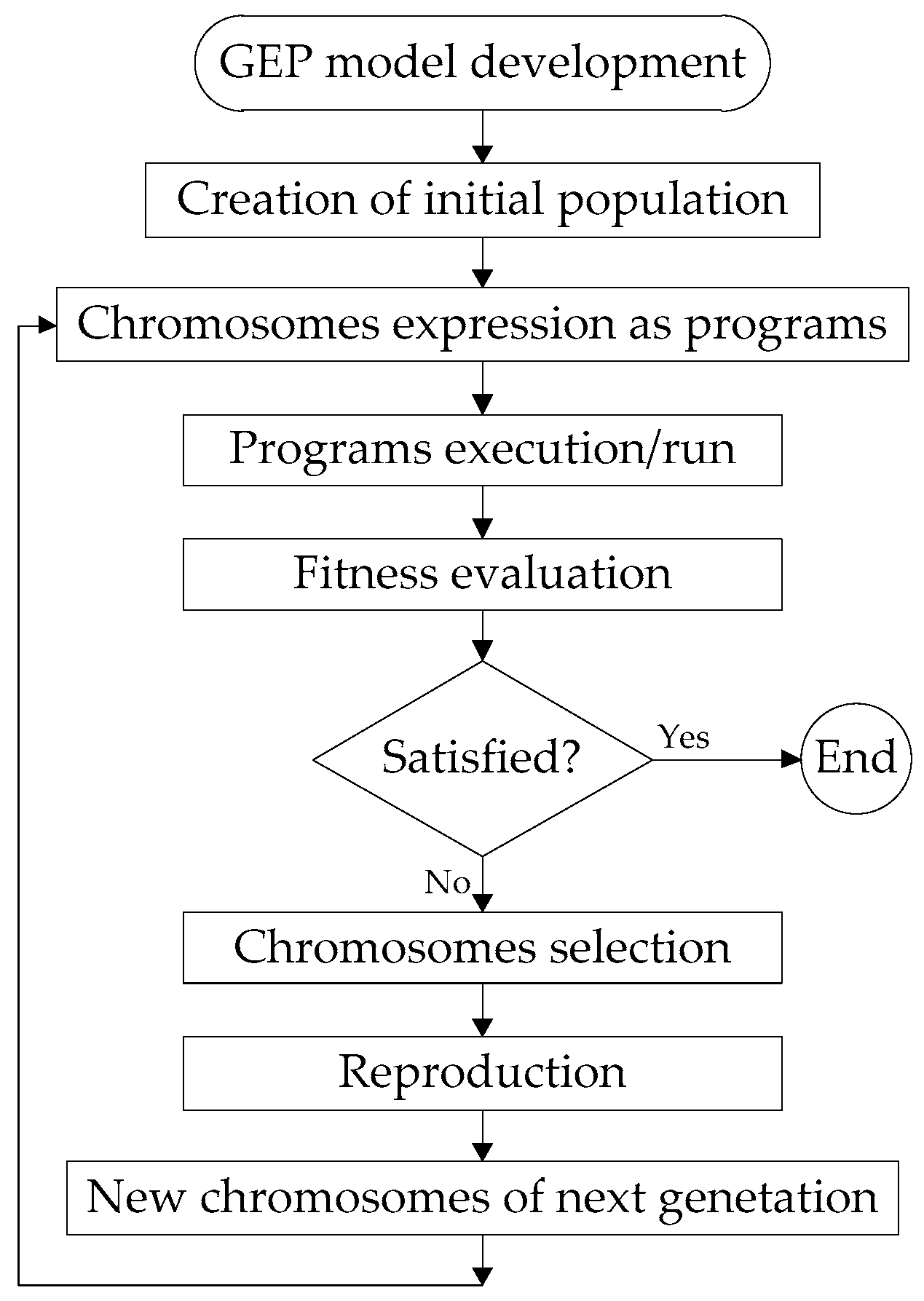

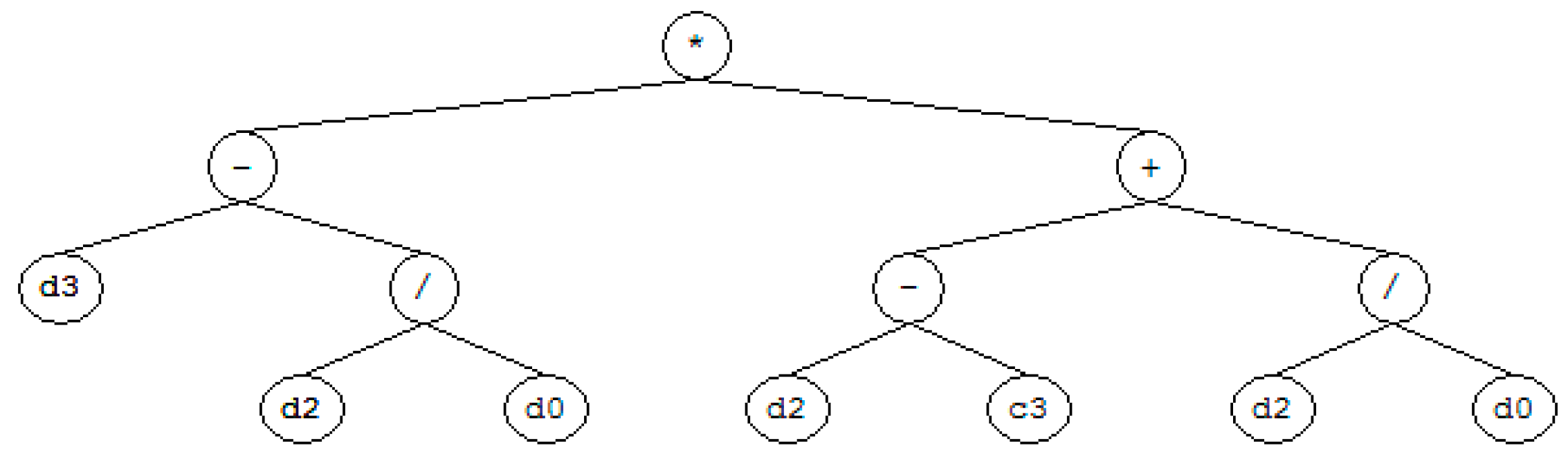

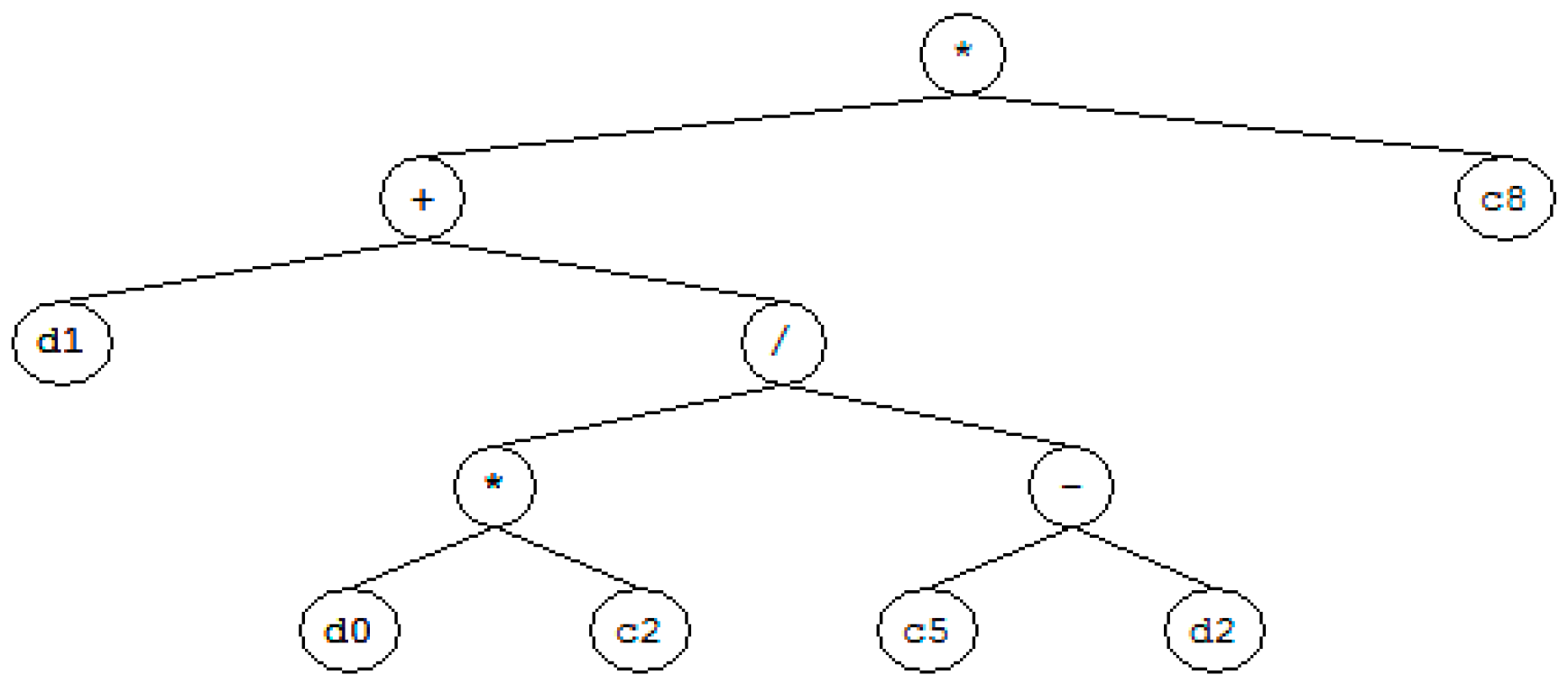
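A multiple linear regression relates measured turbidity to predictors derived from the Landsat 8 bands. This text does not say which bands or band combinations enter the model, so the sketch below uses hypothetical predictors. It shows only the ordinary-least-squares fitting step with NumPy and is not the authors' calibrated equation.

```python
import numpy as np


def fit_mlr(X: np.ndarray, y: np.ndarray) -> np.ndarray:
    """Fit y = b0 + b1*x1 + ... + bk*xk by ordinary least squares.

    X: (n_samples, n_predictors) matrix of predictors, e.g. band reflectances
       (hypothetical choice; the paper's predictor set is not given here).
    y: (n_samples,) observed turbidity in NTU.
    Returns the coefficient vector [b0, b1, ..., bk].
    """
    # Prepend a column of ones so the first coefficient is the intercept b0.
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef


def predict_mlr(coef: np.ndarray, X: np.ndarray) -> np.ndarray:
    """Evaluate the fitted linear model on new predictor rows."""
    return coef[0] + X @ coef[1:]


if __name__ == "__main__":
    # Synthetic, noise-free toy data (NOT the study's measurements):
    # two hypothetical band predictors and known coefficients.
    rng = np.random.default_rng(0)
    X = rng.uniform(0.0, 0.2, size=(20, 2))
    y = 1.5 + 30.0 * X[:, 0] - 10.0 * X[:, 1]
    coef = fit_mlr(X, y)
    print(np.round(coef, 6))  # approximately [1.5, 30.0, -10.0]
```

With noise-free data, the fit recovers the generating coefficients. With real samples, the residuals feed the accuracy measures reported in the results tables.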

2.3.2. Gene-Expression Programming (GEP) Model

2.4. Accuracy Assessment

3. Results and Discussion

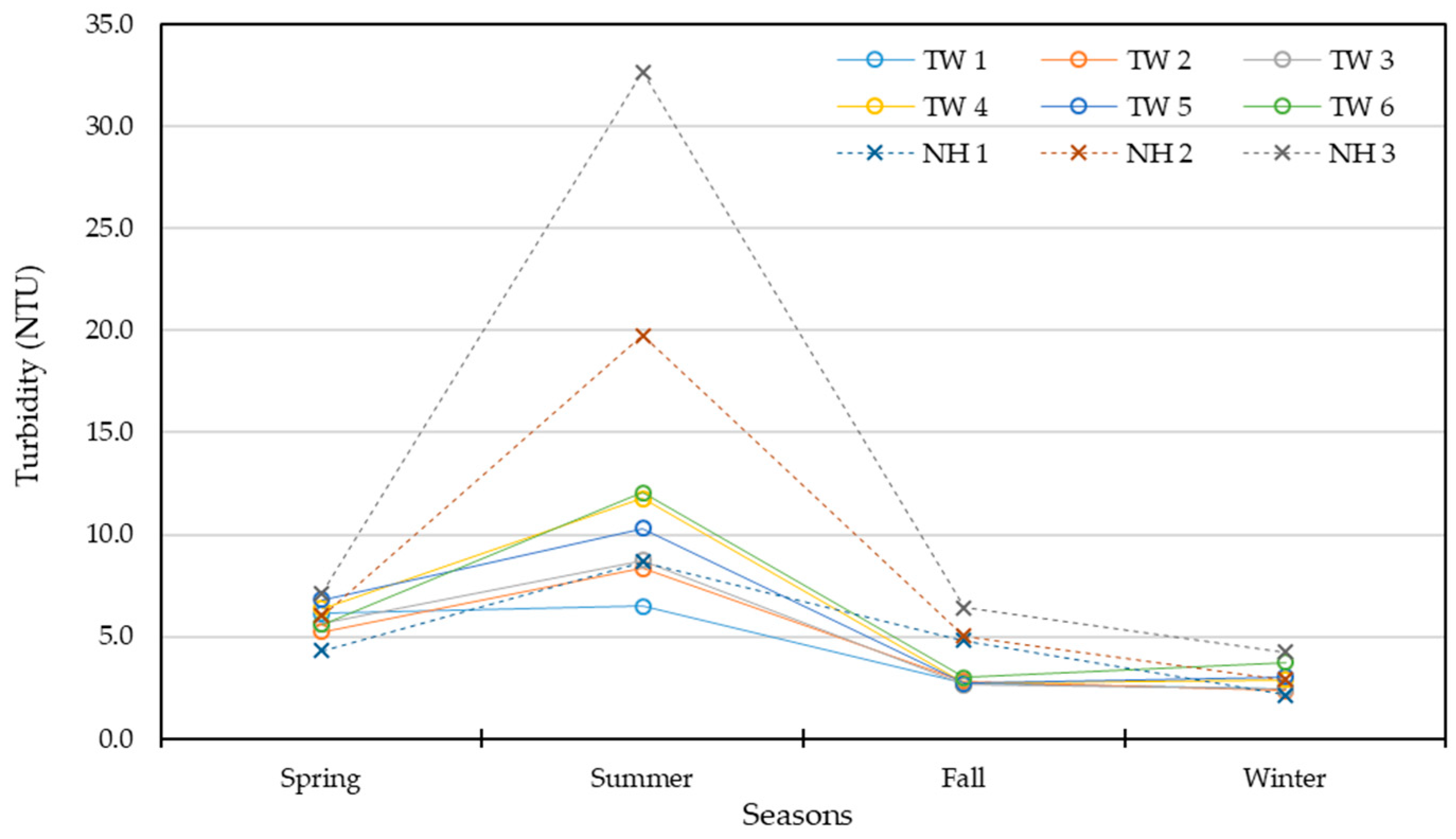

3.1. Data Collection

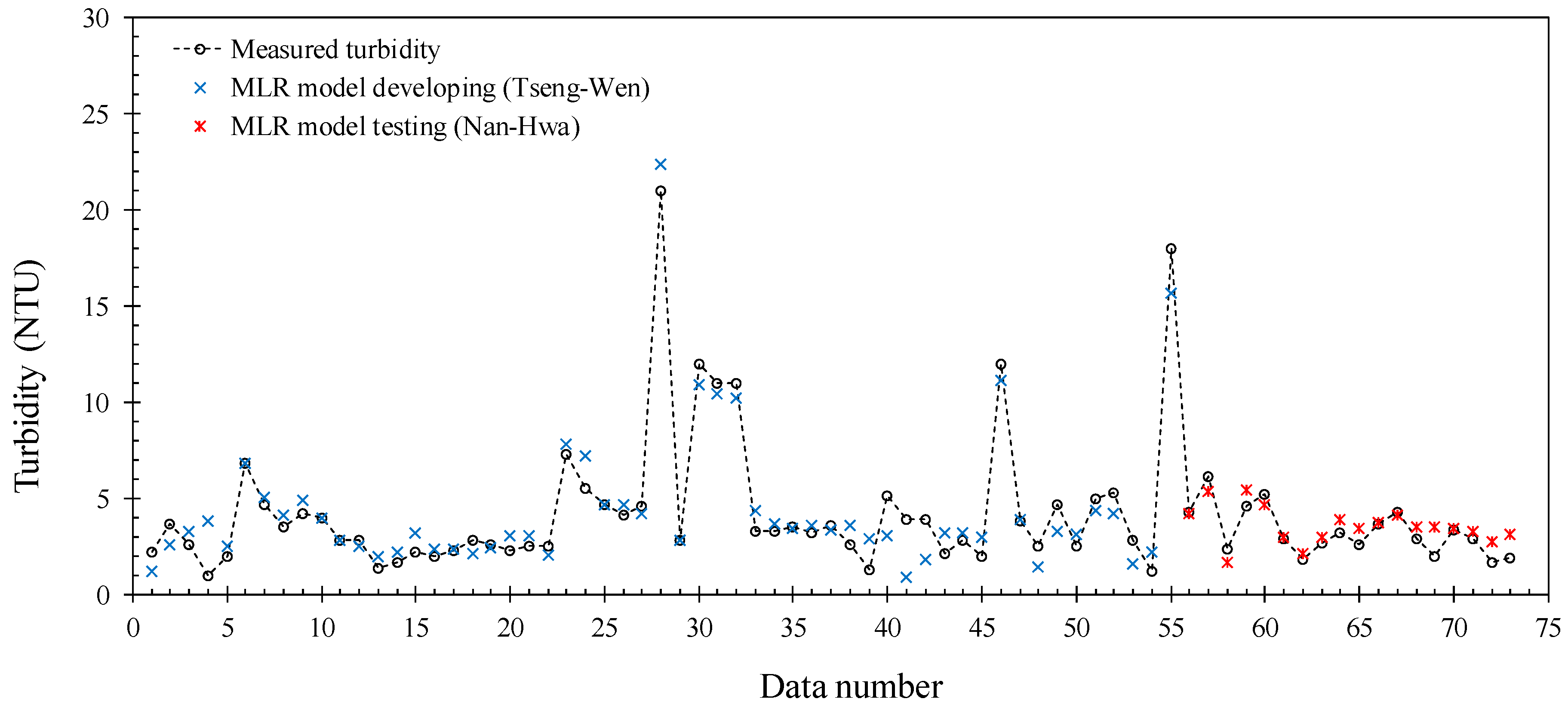

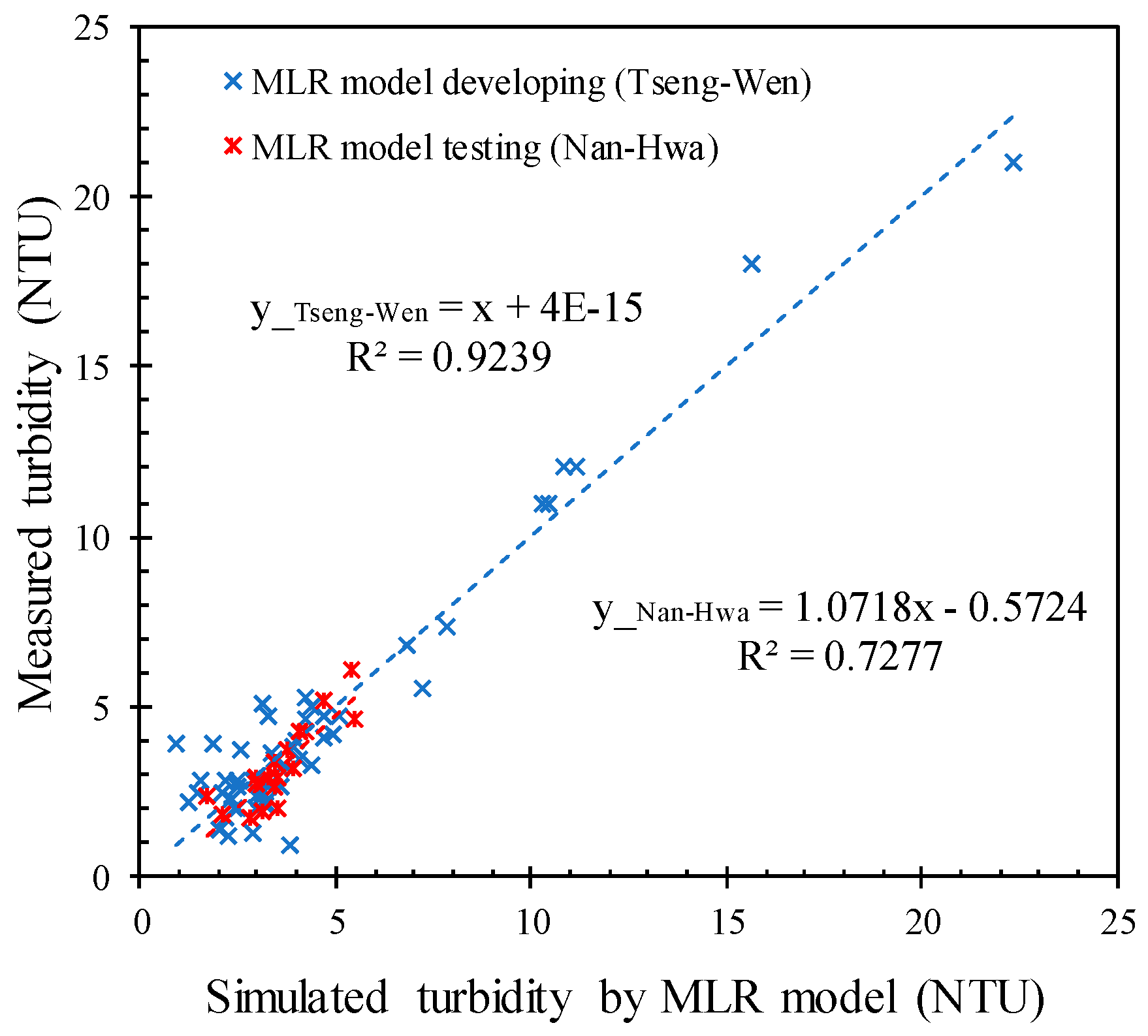

3.2. Simulated Turbidity by Multiple Linear Regression (MLR) Model

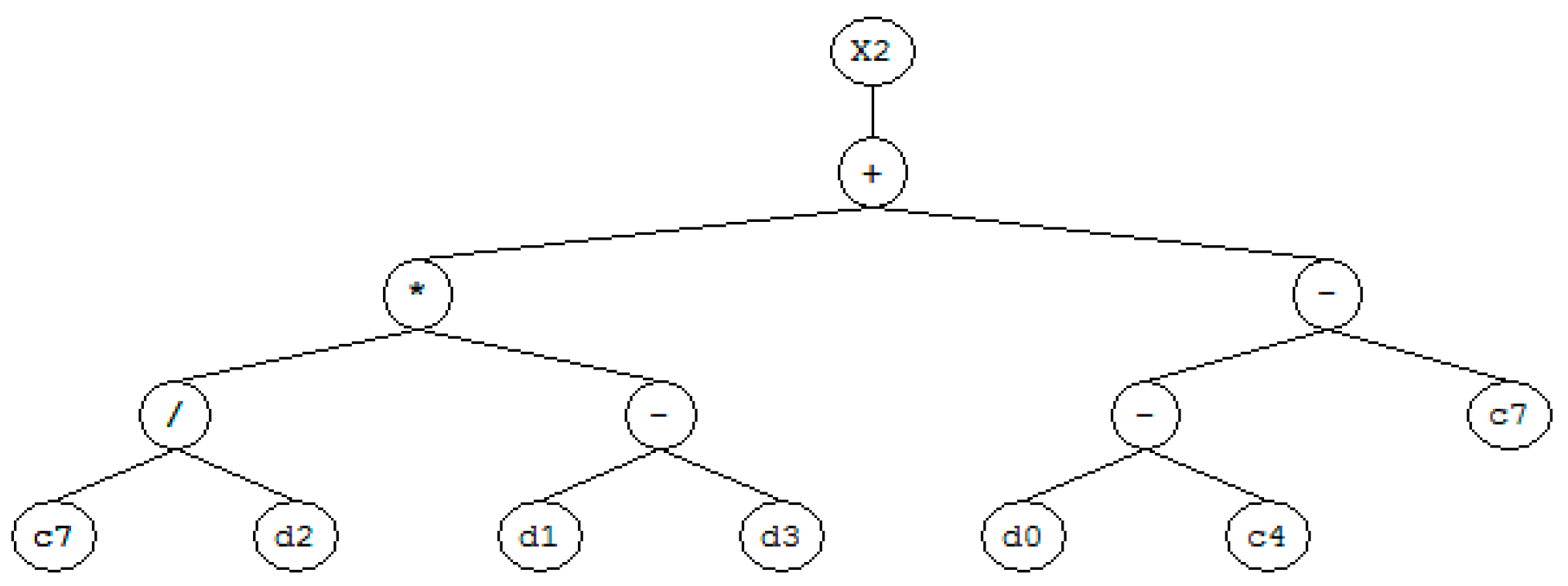

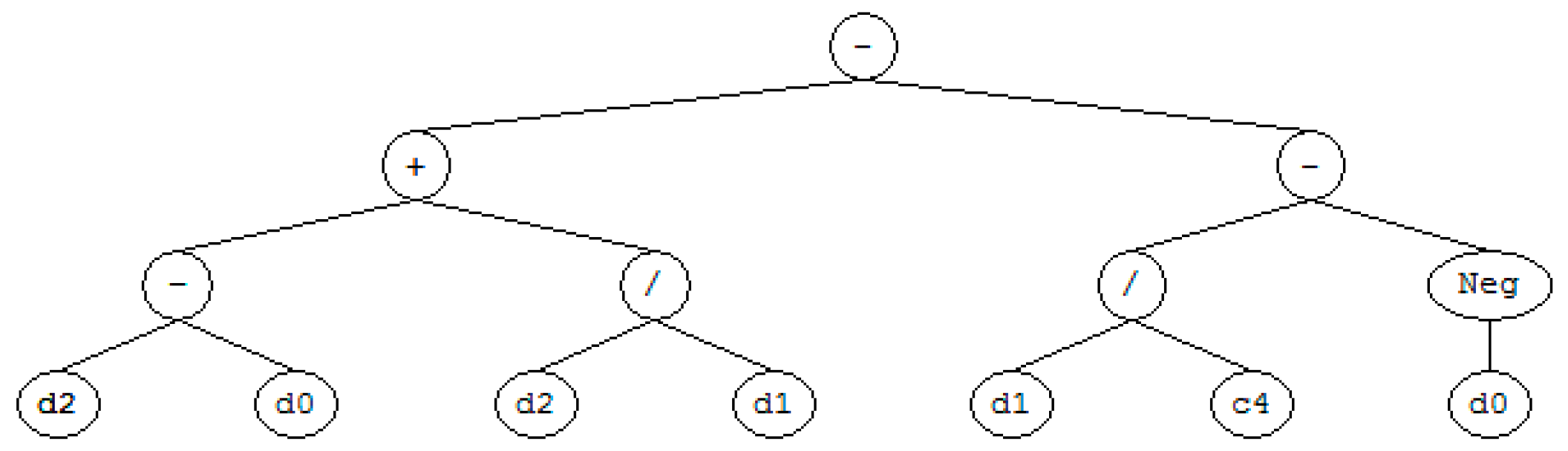

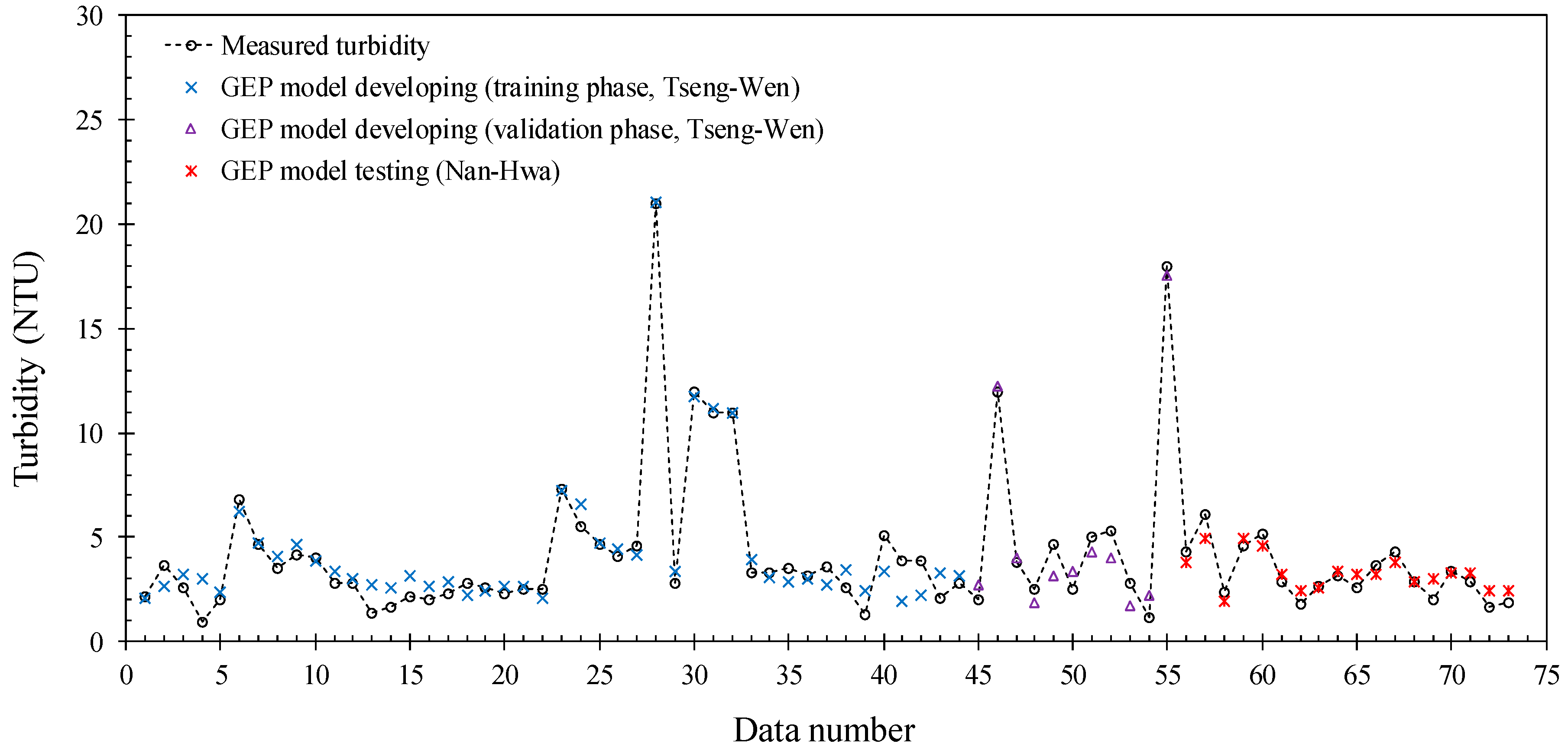

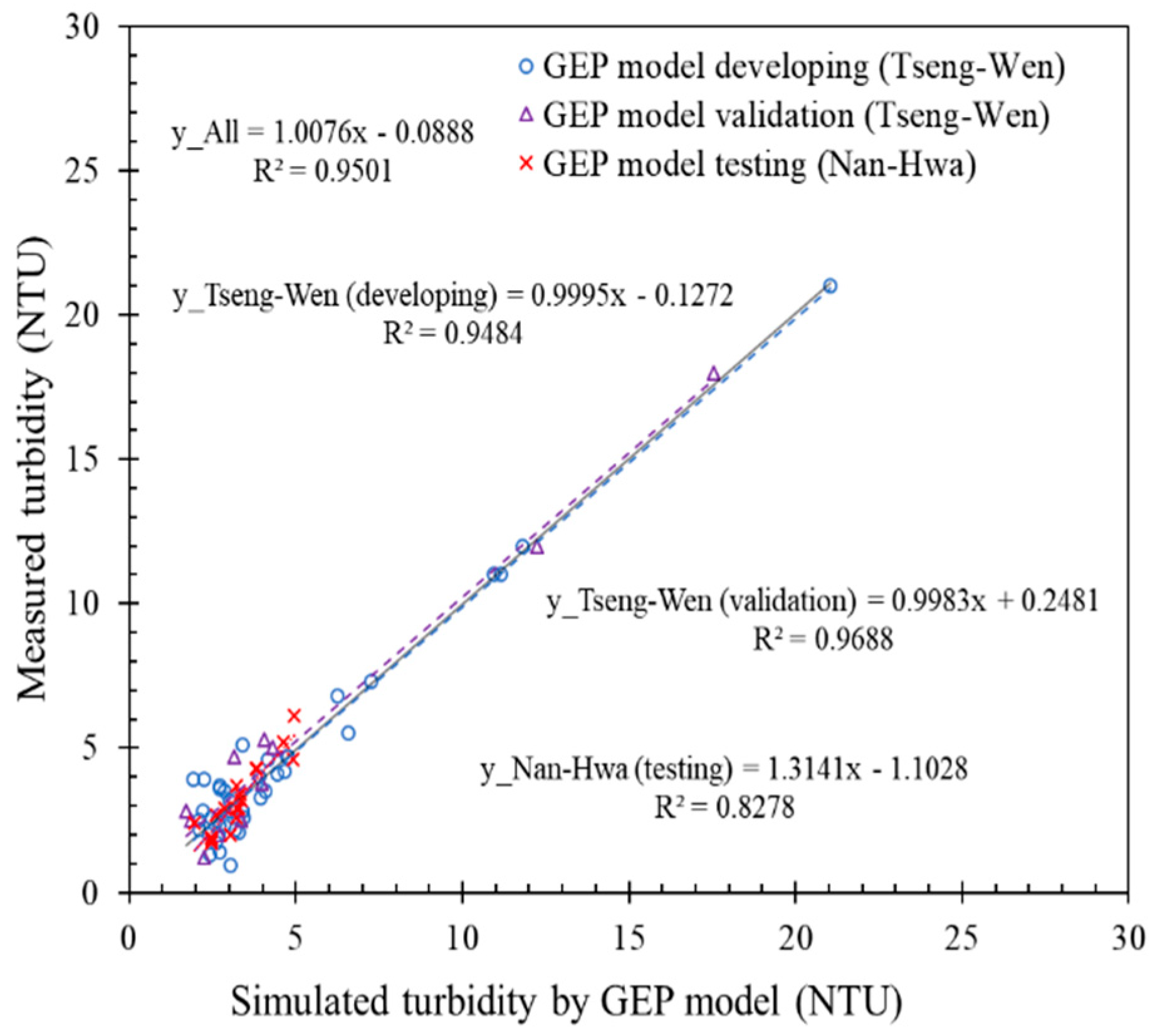

3.3. Simulated Turbidity by Gene-Expression Programming (GEP) Model

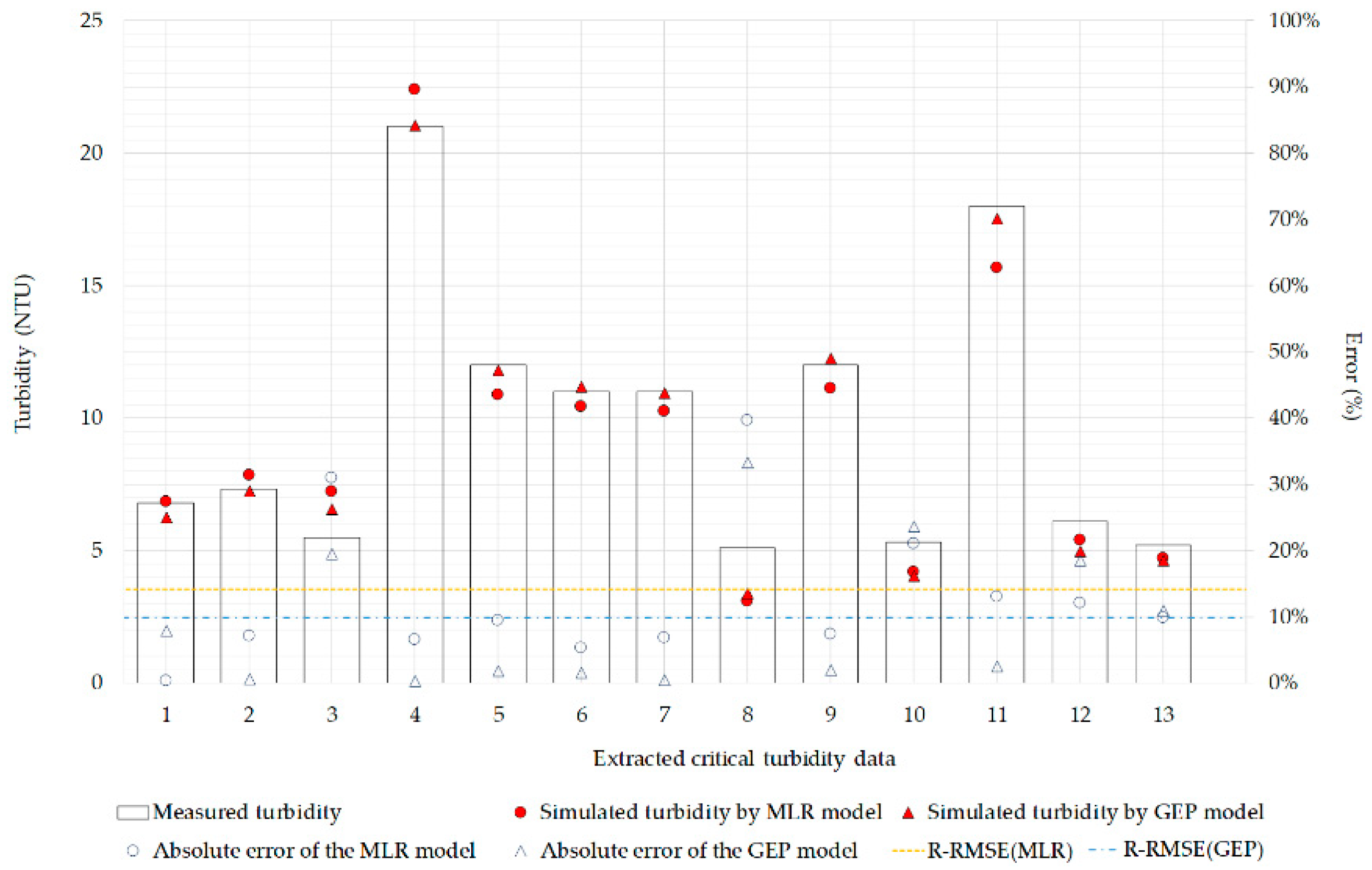

3.4. Simulated Turbidity Accuracy Assessment

4. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Season | Station | 2013 | 2014 | 2015 | 2016 | 2017 | 2018 | Avg. |
|---|---|---|---|---|---|---|---|---|
| Spring (January to March) | TW 1 | 13.0 | 3.4 | 4.0 | 4.7 | 3.4 | 8.4 | 6.1 |
| | TW 2 | 6.7 | 3.3 | 5.3 | 3.8 | 3.7 | 8.6 | 5.2 |
| | TW 3 | 7.5 | 4.0 | 4.2 | 3.9 | 3.5 | 10.7 | 5.6 |
| | TW 4 | 8.7 | 4.6 | 3.5 | 3.9 | 3.7 | 14.0 | 6.4 |
| | TW 5 | 9.2 | 8.6 | 4.7 | 3.8 | 4.7 | 10.0 | 6.8 |
| | TW 6 | - | - | 6.8 | 5.1 | 4.9 | - | 5.6 |
| | NH 1 | 4.1 | 3.7 | 4.3 | 5.7 | 4.0 | 4.0 | 4.3 |
| | NH 2 | 5.8 | 7.8 | 6.1 | 4.8 | 6.6 | 5.1 | 6.0 |
| | NH 3 | 6.2 | 11.0 | 6.3 | 6.5 | - | 5.6 | 7.1 |
| Summer (April to June) | TW 1 | 3.6 | 8.1 | 2.6 | 2.1 | 7.6 | 15.0 | 6.5 |
| | TW 2 | 5.5 | 12.0 | 2.8 | 1.7 | 7.9 | 20.1 | 8.3 |
| | TW 3 | 9.8 | 8.3 | 3.8 | 2.2 | 9.3 | 19.1 | 8.8 |
| | TW 4 | 12.0 | 26.0 | 6.4 | 2.2 | 13.0 | 11.0 | 11.8 |
| | TW 5 | - | - | - | 2.6 | 18.0 | - | 10.3 |
| | TW 6 | - | - | - | 3.1 | 21.0 | - | 12.1 |
| | NH 1 | 4.1 | 16.0 | 13.0 | 2.3 | 7.3 | 9.2 | 8.7 |
| | NH 2 | 3.7 | 60.0 | - | 5.8 | 8.3 | 21.0 | 19.8 |
| | NH 3 | 5.9 | 85.0 | - | 9.7 | 30.0 | - | 32.7 |
| Fall (July to September) | TW 1 | 2.7 | 1.8 | 2.3 | 1.6 | 3.5 | 4.3 | 2.7 |
| | TW 2 | 2.6 | 2.1 | 2.0 | 1.9 | 3.7 | 4.7 | 2.8 |
| | TW 3 | 2.6 | 2.0 | 2.2 | 1.7 | 3.4 | 4.0 | 2.6 |
| | TW 4 | 2.9 | 2.1 | 1.7 | 1.6 | 3.3 | 4.7 | 2.7 |
| | TW 5 | 3.1 | 2.4 | 1.4 | 1.5 | 3.5 | 4.4 | 2.7 |
| | TW 6 | - | 2.7 | 2.8 | 1.9 | 3.1 | 4.5 | 3.0 |
| | NH 1 | 2.6 | 2.2 | 5.6 | 2.4 | 3.6 | 12.5 | 4.8 |
| | NH 2 | 3.1 | 2.8 | 8.1 | 2.7 | 4.8 | 8.6 | 5.0 |
| | NH 3 | 4.9 | 4.7 | 7.7 | 3.4 | 6.3 | 11.5 | 6.4 |
| Winter (October to December) | TW 1 | 2.6 | 2.0 | 1.9 | 2.5 | 3.5 | 2.2 | 2.5 |
| | TW 2 | 2.6 | 1.0 | 2.0 | 2.5 | 3.8 | 2.2 | 2.3 |
| | TW 3 | 2.5 | 1.3 | 2.5 | 2.3 | 3.4 | 2.6 | 2.4 |
| | TW 4 | 3.7 | 1.2 | 3.5 | 2.6 | 4.2 | 2.1 | 2.9 |
| | TW 5 | 2.2 | 2.1 | 2.3 | 2.0 | 5.9 | 3.6 | 3.0 |
| | TW 6 | 2.8 | 2.6 | 3.5 | 2.8 | 7.8 | 3.0 | 3.8 |
| | NH 1 | 1.4 | 1.6 | 1.5 | 3.1 | 2.7 | 2.5 | 2.1 |
| | NH 2 | 2.0 | 2.6 | 2.2 | 3.6 | 3.7 | 3.3 | 2.9 |
| | NH 3 | 3.1 | 4.1 | 2.7 | 5.4 | 4.6 | 5.6 | 4.3 |
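The Avg. column is consistent with the arithmetic mean of the years that have a record, with "-" entries skipped. For example, TW 6 in spring gives (6.8 + 5.1 + 4.9) / 3 = 5.6. The sketch below reproduces the column under that reading and rounds half up to one decimal. Rows whose mean falls exactly on a 0.05 boundary can differ by 0.1 from the published value (TW 1 in spring: 36.9 / 6 = 6.15, listed as 6.1), probably because the yearly values are themselves rounded.

```python
from __future__ import annotations

from decimal import Decimal, ROUND_HALF_UP


def seasonal_average(values: list[str]) -> float | None:
    """Mean of the yearly values that are present, rounded to 0.1.

    values: yearly entries as they appear in the table, "-" meaning no record.
    Returns None if no year has a record.
    """
    # Decimal keeps the sums exact, so rounding is not disturbed by binary floats.
    present = [Decimal(v) for v in values if v.strip() != "-"]
    if not present:
        return None
    mean = sum(present) / len(present)
    # Round half up to one decimal, matching the table's precision.
    return float(mean.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP))


if __name__ == "__main__":
    # Rows copied from the table above (2013-2018).
    rows = {
        ("Spring", "TW 6"): ["-", "-", "6.8", "5.1", "4.9", "-"],
        ("Summer", "NH 2"): ["3.7", "60.0", "-", "5.8", "8.3", "21.0"],
        ("Summer", "NH 3"): ["5.9", "85.0", "-", "9.7", "30.0", "-"],
    }
    for key, vals in rows.items():
        print(key, seasonal_average(vals))
```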

| Position | 0 | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|---|
| Region | Head | Head | Head | Tail | Tail | Tail | Tail |
| Symbol | AND | > | < | E | 2 | F | 3 |
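In gene expression programming, a gene such as the one above is read as a K-expression. Symbols are placed into an expression tree level by level (breadth first), and each function takes as many children as its arity. Reading stops once every open branch is filled. The tail length follows t = h(n - 1) + 1. A head of 3 with binary functions (n = 2) therefore needs a tail of 4, which matches the 3 + 4 split shown. The sketch below decodes the gene into (E > 2) AND (F < 3). It rests on two assumptions: E and F are input variables, and 2 and 3 are constants. The arity and operator tables are hypothetical.

```python
from __future__ import annotations

from collections import deque

# Hypothetical arity table: the three functions in the example gene are binary;
# every other symbol (E, F, 2, 3) is treated as a terminal with arity 0.
ARITY = {"AND": 2, ">": 2, "<": 2}
# Hypothetical semantics for those functions.
OPS = {
    "AND": lambda a, b: a and b,
    ">": lambda a, b: a > b,
    "<": lambda a, b: a < b,
}


def tail_length(head: int, max_arity: int) -> int:
    """Tail length t = h * (n - 1) + 1 for head length h and maximum arity n."""
    return head * (max_arity - 1) + 1


def decode_karva(gene: list[str]):
    """Decode a K-expression breadth first into a nested-tuple tree.

    Functions become (symbol, child1, child2); terminals stay plain strings.
    Symbols left over after every branch is closed are ignored (non-coding).
    """
    root = [gene[0], []]        # mutable node: [symbol, children]
    pending = deque([root])     # nodes whose children are still to be read
    pos = 1
    while pending:
        node = pending.popleft()
        for _ in range(ARITY.get(node[0], 0)):
            child = [gene[pos], []]
            pos += 1
            node[1].append(child)
            pending.append(child)

    def freeze(node):
        symbol, children = node
        return (symbol, *(freeze(c) for c in children)) if children else symbol

    return freeze(root)


def to_infix(tree) -> str:
    """Render a tree of binary functions as a fully parenthesised string."""
    if isinstance(tree, str):
        return tree
    op, left, right = tree
    return f"({to_infix(left)} {op} {to_infix(right)})"


def evaluate(tree, env: dict):
    """Evaluate the tree; names found in env are variables, others are numbers."""
    if isinstance(tree, str):
        return env[tree] if tree in env else float(tree)
    op, left, right = tree
    return OPS[op](evaluate(left, env), evaluate(right, env))


if __name__ == "__main__":
    gene = ["AND", ">", "<", "E", "2", "F", "3"]  # the gene in the table above
    tree = decode_karva(gene)
    print(to_infix(tree))                    # ((E > 2) AND (F < 3))
    print(evaluate(tree, {"E": 5, "F": 1}))  # True
    print(tail_length(3, 2))                 # 4
```

Breadth-first decoding is what lets GEP keep fixed-length genes. Any head/tail content that is valid by the tail rule always yields a complete tree, and unused tail symbols simply do not code.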

| Reservoirs | Date | Path/Row | Landsat Scene ID | Stations | Number of Samples |
|---|---|---|---|---|---|
| Tseng-Wen | 2013/10/25 | 117/44 | LC81170442013298LGN01 | TW 1, 3, 4, 5, 6 | 5 |
| | 2014/11/04 | 118/44 | LC81180442014308LGN01 | TW 1, 2, 3, 4, 6 | 5 |
| | 2015/01/23 | 118/44 | LC81180442015023LGN01 | TW 1, 2, 3, 4, 5, 6 | 6 |
| | 2015/05/08 | 117/44 | LC81170442015128LGN01 | TW 2, 3, 4 | 3 |
| | 2015/07/18 | 118/44 | LC81170442015128LGN01 | TW 1, 2, 3, 4, 5, 6 | 6 |
| | 2016/01/19 | 117/44 | LC81170442016019LGN02 | TW 1, 3, 4, 6 | 4 |
| | 2016/11/09 | 118/44 | LC81180442016314LGN01 | TW 1, 2, 3, 4, 5, 6 | 6 |
| | 2017/01/12 | 118/44 | LC81180442017012LGN01 | TW 1, 2, 3 | 3 |
| | 2017/02/06 | 117/44 | LC81170442017037LGN00 | TW 1, 2, 3, 4, 5, 6 | 6 |
| | 2017/06/21 | 118/44 | LC81180442017172LGN00 | TW 1, 2, 3, 4, 5, 6 | 6 |
| | 2017/08/17 | 117/44 | LC81170442017229LGN00 | TW 2, 3, 4, 5, 6 | 5 |
| Nan-Hwa | 2015/01/23 | 118/44 | LC81180442015023LGN01 | NH 1, 2 | 2 |
| | 2016/03/30 | 118/44 | LC81180442016090LGN01 | NH 1 | 1 |
| | 2016/04/08 | 117/44 | LC81170442016099LGN01 | NH 1 | 1 |
| | 2016/08/05 | 118/44 | LC81180442016218LGN01 | NH 1 | 1 |
| | 2016/12/04 | 117/44 | LC81170442016339LGN01 | NH 1 | 1 |
| | 2016/12/11 | 118/44 | LC81180442016346LGN01 | NH 1 | 1 |
| | 2017/01/12 | 118/44 | LC81180442017012LGN01 | NH 1 | 1 |
| | 2017/01/28 | 118/44 | LC81180442017028LGN00 | NH 1 | 1 |
| | 2017/02/06 | 117/44 | LC81170442017037LGN00 | NH 1, 2 | 2 |
| | 2017/02/13 | 118/44 | LC81180442017044LGN00 | NH 1 | 1 |
| | 2017/06/30 | 117/44 | LC81170442017181LGN00 | NH 1 | 1 |
| | 2017/10/11 | 118/44 | LC81180442017284LGN00 | NH 1 | 1 |
| | 2017/10/20 | 117/44 | LC81170442017293LGN00 | NH 1 | 1 |
| | 2017/10/27 | 118/44 | LC81180442017300LGN00 | NH 1 | 1 |
| | 2017/11/21 | 117/44 | LC81170442017325LGN00 | NH 1 | 1 |
| | 2017/11/28 | 118/44 | LC81180442017332LGN00 | NH 1 | 1 |
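Pre-Collection Landsat 8 scene IDs such as LC81170442015128LGN01 encode several fields:

- the sensor and satellite (LC8);
- the WRS-2 path and row (117, 044);
- the acquisition year and day of year (2015, 128);
- a ground station code (LGN);
- an archive version (01).

Decoding the IDs therefore gives a quick consistency check against the Date and Path/Row columns. The sketch below assumes that layout. Applied to every row of the table, it flags only the 2015/07/18 entry. That row's ID repeats the 2015/05/08 scene (path 117, day 128), although the row lists path 118 and 18 July 2015 is day 199.

```python
from __future__ import annotations

import re
from datetime import date, timedelta

# Assumed pre-Collection Landsat scene ID layout: L X S PPP RRR YYYY DDD GSI VV
# (sensor, satellite, WRS path, WRS row, year, day of year, ground station, version)
SCENE_ID = re.compile(
    r"^L(?P<sensor>[A-Z])(?P<sat>\d)(?P<path>\d{3})(?P<row>\d{3})"
    r"(?P<year>\d{4})(?P<doy>\d{3})(?P<station>[A-Z]{3})(?P<version>\d{2})$"
)


def parse_scene_id(scene_id: str) -> dict:
    """Split a pre-Collection scene ID into its fields; raise on other formats."""
    m = SCENE_ID.match(scene_id)
    if m is None:
        raise ValueError(f"unrecognised scene ID: {scene_id}")
    f = m.groupdict()
    return {
        "sensor": f["sensor"],
        "satellite": int(f["sat"]),
        "path": int(f["path"]),
        "row": int(f["row"]),
        # Day of year 1 is 1 January.
        "date": date(int(f["year"]), 1, 1) + timedelta(days=int(f["doy"]) - 1),
        "station": f["station"],
        "version": f["version"],
    }


def check_row(date_str: str, path_row: str, scene_id: str) -> list[str]:
    """Compare the Date and Path/Row columns with what the scene ID encodes."""
    info = parse_scene_id(scene_id)
    problems = []
    listed_date = date(*map(int, date_str.split("/")))  # "YYYY/MM/DD"
    if info["date"] != listed_date:
        problems.append(f"date {listed_date} but ID says {info['date']}")
    path, row = map(int, path_row.split("/"))  # e.g. "117/44"
    if (info["path"], info["row"]) != (path, row):
        problems.append(f"path/row {path}/{row} but ID says {info['path']}/{info['row']}")
    return problems


if __name__ == "__main__":
    rows = [  # three rows copied from the table above
        ("2015/05/08", "117/44", "LC81170442015128LGN01"),
        ("2015/07/18", "118/44", "LC81170442015128LGN01"),
        ("2017/11/28", "118/44", "LC81180442017332LGN00"),
    ]
    for date_str, path_row, sid in rows:
        print(date_str, check_row(date_str, path_row, sid) or "OK")
```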

| Number of Chromosomes | Head Size | Number of Genes | Linking Function | Calculation Functions |
|---|---|---|---|---|
| 50 | 7 | 4 | Multiplication | +, -, *, /, 1/x, -x, x, x² |
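These settings fix the size of every chromosome through the tail rule t = h(n - 1) + 1. Assuming the largest arity in the function set is 2 (the binary operators +, -, *, /), a head of 7 gives a tail of 8. Each gene then has 15 symbols, and each four-gene chromosome has 60. Because the linking function is multiplication, the model output is the product of the four sub-expression values. The sketch shows this arithmetic and the linking step. The four sub-expressions and the input names b1, b2 are hypothetical placeholders, not the evolved turbidity model.

```python
import math
from typing import Callable, Sequence


def gene_length(head: int, max_arity: int) -> int:
    """Head plus tail, with tail t = h * (n - 1) + 1."""
    tail = head * (max_arity - 1) + 1
    return head + tail


def chromosome_length(head: int, max_arity: int, n_genes: int) -> int:
    """Total symbols in a multigenic chromosome of equal-length genes."""
    return n_genes * gene_length(head, max_arity)


def link_by_multiplication(genes: Sequence[Callable[[dict], float]], inputs: dict) -> float:
    """Model output = product of the values of the gene sub-expressions."""
    return math.prod(g(inputs) for g in genes)


if __name__ == "__main__":
    print(gene_length(7, 2), chromosome_length(7, 2, 4))  # 15 60
    # Four hypothetical sub-expressions over placeholder inputs b1, b2.
    genes = [
        lambda v: v["b1"] + v["b2"],
        lambda v: 1 / v["b2"],
        lambda v: v["b1"] ** 2,
        lambda v: -v["b1"],
    ]
    print(link_by_multiplication(genes, {"b1": 2.0, "b2": 4.0}))  # -12.0
```

Multiplicative linking means a single sub-expression that evaluates to zero drives the whole prediction to zero. Additive linking is the other common choice in GEP.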

| | MLR R² | MLR RMSE (NTU) | MLR R-RMSE (%) | GEP R² | GEP RMSE (NTU) | GEP R-RMSE (%) |
|---|---|---|---|---|---|---|
| Model developing (Tseng-Wen) | 0.9239 | 1.0726 | 24.08% | 0.9484¹ | 0.8190¹ | 19.46%¹ |
| | | | | 0.9688² | 0.9315² | 17.13%² |
| Model testing (Nan-Hwa) | 0.7277 | 0.7248 | 22.26% | 0.8278 | 0.5815 | 17.86% |
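The text defining the accuracy measures is not preserved here, so the sketch below uses common definitions as assumptions:

- RMSE = sqrt(mean((sim - obs)²)), in NTU;
- R-RMSE = RMSE divided by the mean observed turbidity, in percent;
- R² = the squared Pearson correlation between observed and simulated values.

Some studies define R² instead as the coefficient of determination, 1 - SS_res/SS_tot. That version can differ for a model that was not fitted by least squares.

```python
import numpy as np


def accuracy(obs, sim) -> dict:
    """R2, RMSE (NTU) and R-RMSE (%) under the assumed definitions above."""
    obs = np.asarray(obs, dtype=float)
    sim = np.asarray(sim, dtype=float)
    rmse = float(np.sqrt(np.mean((sim - obs) ** 2)))
    r = float(np.corrcoef(obs, sim)[0, 1])  # Pearson correlation coefficient
    return {
        "R2": r ** 2,
        "RMSE_NTU": rmse,
        "R_RMSE_pct": 100.0 * rmse / float(np.mean(obs)),
    }


if __name__ == "__main__":
    # Toy observed and simulated turbidity values (NTU), not the study's data.
    print(accuracy([2.0, 4.0, 6.0, 8.0], [3.0, 4.0, 5.0, 8.0]))
```

Dividing RMSE by the mean observation makes errors comparable across the two reservoirs, whose turbidity levels differ.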

| | MLR R² | MLR RMSE (NTU) | MLR R-RMSE (%) | GEP R² | GEP RMSE (NTU) | GEP R-RMSE (%) |
|---|---|---|---|---|---|---|
| Critical turbidity (> 5 NTU) | 0.9507 | 1.2284 | 13.32% | 0.9837 | 0.7766 | 8.28% |
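The critical-turbidity row scores only the samples whose turbidity exceeds 5 NTU. Below is a minimal sketch of that filtering step, written under several assumptions. The threshold is applied to the observed (not simulated) value and is strict, as in the "> 5 NTU" label. RMSE and R-RMSE use the common definitions (root mean squared error, and RMSE over the mean observation in percent). The sample values are toy numbers.

```python
import numpy as np


def critical_subset(obs, sim, threshold: float = 5.0):
    """Keep only pairs whose observed turbidity exceeds the threshold (NTU)."""
    obs = np.asarray(obs, dtype=float)
    sim = np.asarray(sim, dtype=float)
    mask = obs > threshold  # strict inequality, matching "> 5 NTU"
    return obs[mask], sim[mask]


def rmse_and_relative(obs, sim):
    """Return (RMSE in NTU, RMSE as a percentage of the mean observation)."""
    rmse = float(np.sqrt(np.mean((sim - obs) ** 2)))
    return rmse, 100.0 * rmse / float(np.mean(obs))


if __name__ == "__main__":
    # Toy observed/simulated pairs (NTU), not the study's data.
    obs = [2.1, 4.8, 5.5, 8.0, 12.5]
    sim = [2.6, 4.1, 6.0, 7.5, 12.0]
    o, s = critical_subset(obs, sim)
    print(o, s)                     # only the three pairs with obs > 5 remain
    print(rmse_and_relative(o, s))
```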

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Liu, L.-W.; Wang, Y.-M. Modelling Reservoir Turbidity Using Landsat 8 Satellite Imagery by Gene Expression Programming. Water 2019, 11, 1479. https://doi.org/10.3390/w11071479