Abstract

The inherent complexity of planning at sea, called maritime spatial planning (MSP), requires a planning approach where science (data and evidence) and stakeholders (their engagement and involvement) are integrated throughout the planning process. An increasing number of innovative planning support systems (PSS) in terrestrial planning incorporate scientific models and data into multi-player digital game platforms with an element of role-play. However, maritime PSS are still early in their innovation curve, and the use and usefulness of existing tools still needs to be demonstrated. Therefore, the authors investigate the serious game, MSP Challenge 2050, for its potential use as an innovative maritime PSS and present the results of three case studies on participant learning in sessions of game events held in Newfoundland, Venice, and Copenhagen. This paper focusses on the added values of MSP Challenge 2050, specifically at the individual, group, and outcome levels, through the promotion of the knowledge co-creation cycle. During the three game events, data was collected through participant surveys. Additionally, participants of the Newfoundland event were audiovisually recorded to perform an interaction analysis. Results from survey answers and the interaction analysis provide evidence that MSP Challenge 2050 succeeds at the promotion of group and individual learning by translating complex information to players and creating a forum wherein participants can share their thoughts and perspectives all the while (co-) creating new types of knowledge. Overall, MSP Challenge and serious games in general represent promising tools that can be used to facilitate the MSP process.

1. Introduction

Human activities are increasingly affecting ocean spaces across the globe [1,2]. Traditionally used for fishing and transportation, today’s oceans are a hub of human activities that are constantly diversifying [1,2,3,4,5]. Although many ocean activities occur in the same space, these activities are traditionally managed separately, leading to a fragmented system of ocean management. Fragmented management fails to address or resolve user conflicts and trade-offs, leaving oceans susceptible to the cumulative effects of these multiple uses [3]. Given the increased demand for maritime goods and resources, the main obstacle for human activities at sea is competition for maritime space [6]. Maritime spaces are prime examples of socio-technical complexity, i.e., complexity stemming from the overlap between the natural-technical-physical realm and the socio-political world [2]. Addressing this complexity requires new methods of ocean management that coordinate the different sectors to maintain ocean health. Maritime spatial planning (MSP) must contend with the inherent complexity of planning at sea and requires balancing social and economic objectives with ecological conservation by allocating human ocean activities appropriately in space and time [3]. Tools are required to manage the complexity and to pre-empt conflicts that may arise throughout the planning and decision-making stages. Not all conflicts can be fully resolved, but tools that allow planners to identify and predict areas of conflict are useful to the planning process [7].

The term MSP first appeared on the European Union legal stage in July 2014 with the MSP Directive [6] and, although MSP borrows many of the same methods and applications as terrestrial spatial planning, it lags in some respects [8]. Terrestrial planning has a long history of planning support systems (PSS) that MSP lacks. PSS are tools and technologies that support the planning process [9]. For the purposes of this research, serious games (SG) will be isolated as a potential PSS for use in MSP. The term PSS was coined in 1989 by Harris [10]; PSS are informational frameworks that integrate information technologies for planning and (ideally) provide interactive, integrated, and participatory procedures. According to Kuller et al. [11], these systems are meant to (1) provide users with a deeper understanding of the task, and (2) support the formulation and communication of the ideas, values, and preferences of stakeholders. Modern PSS help synthesize information and models for users. Information is transferred to users via a user-friendly interface that hides the technological complexities operating behind the scenes, allowing for a simplified representation of complex spatial data [12,13]. PSS focus on the planning process as a whole and, although they may facilitate decision-making, this is not their primary purpose [12].

PSS have been developing rapidly due to advances in technology that allow for portability and rapid computation [14]. It is important that the focus of a PSS is not the technology it uses, but rather the problems it was meant to solve. This ensures the system remains user-friendly and not overly complex [15]. An effective PSS must emphasize communication and collaboration in order to support collective design, a form of interaction and communication that seeks to achieve collective goals while dealing with common concerns [14]. An effective PSS allows for what Pelzer, Geertman, van der Heijden, and Rouwette [14] refer to as added value. This added value can be divided into three levels: (1) The individual level, (2) the group level, and (3) the outcome level. At the individual level, PSS have the added value of teaching the users about specific issues [14,16,17]. Pelzer, Geertman, van der Heijden, and Rouwette [14] classify this individual learning as two-fold: (1) Learning about the object of planning, and (2) learning about other stakeholders’ perspectives and views [14]. At the group level, added value takes the form of collaboration, communication, consensus building, and efficiency [14,18]. These group level outcomes seek to bring users and stakeholders together by fostering environments that facilitate communication. This allows for users to hone their collaboration skills, facilitate consensus, and therefore increase the efficiency of the planning process [14,18]. The outcome level, Pelzer, Geertman, van der Heijden, and Rouwette [14] explain, is much more difficult to gauge, though it generally refers to whether or not a PSS improves the validity of the plans created.

Looking at these individual and group learning goals, a link to SG can be made. SG have long been used for planning in the healthcare, defense, and education sectors [19]. They are a subset of game-based learning, a field of research that uses gamification principles to help players achieve a specific goal [20]. These games can take the form of either board games or computer games; the format depends, among other factors, on the goal of the game, the complexity of the issue being modeled, and the resources available to the target audience [21,22]. Given the multiple uses of SG, their potential to act as PSS must be investigated.

1.1. Knowledge Co-creation and Stakeholder Engagement

PSS place a focus on communication and collaboration between users. Although certain SG can stimulate competition between players, the trend in SG for natural resource management is to promote collaboration and communication, a goal similar to that of a PSS [23]. This focus on collaboration is based on a constructivist view of learning. Constructivist learning theories view learning as both a process and a by-product [24]. The process of learning involves acquiring information and assimilating it while potentially resulting in changes to one’s values [24]. The by-product of learning constitutes a change in the subject’s knowledge, skills, values, or worldview [24,25]. The constructivist view emphasizes the importance of one’s past experiences and how those experiences can be used to inform others [15]. As such, the constructivist view places an emphasis on both tacit and explicit knowledge, concepts developed by Polanyi [26]. Explicit knowledge is readily transferred in formal and systematic ways (e.g., knowledge of certain legislation) [27,28], while tacit knowledge is more experiential and personal, taking the form of intuitions and hunches [29] (e.g., ease of use with computers/technology). Tacit knowledge is less transferable than explicit knowledge, yet a significant portion of knowledge comes in the tacit form [29,30]. Tacit and explicit knowledge, however, are not mutually exclusive, rather they are mutually complementary and intermingle with each other through interactions between diverse individuals and groups [30,31,32,33,34].

These concepts link to the knowledge co-creation cycle developed by Nonaka et al. [35]. Knowledge co-creation refers to an institutional mechanism that enables learning and collaboration within a governance setting. The four stages of the knowledge co-creation process are described as follows:

- Socialization: From tacit to tacit knowledge, which involves the sharing and transferring of tacit knowledge between individuals and groups through physical proximity and direct interactions;

- externalization: From tacit to explicit knowledge, which requires tacit knowledge to be articulated and translated into comprehensible forms that can be understood by others;

- combination: From explicit to more complex sets of explicit knowledge, which requires communication and diffusion processes and the systematization of knowledge; and

- internalization: From explicit to tacit knowledge.
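
The four stages above can be read as a lookup from a (source, target) pair of knowledge types to a stage name. The following minimal Python sketch illustrates that reading only; the names `Knowledge`, `STAGES`, and `stage` are hypothetical and do not come from Nonaka et al. [35] or from the game.

```python
from enum import Enum


class Knowledge(Enum):
    """The two knowledge types distinguished in the text."""
    TACIT = "tacit"
    EXPLICIT = "explicit"


# Each stage of the cycle is a conversion from one knowledge type to another.
STAGES = {
    (Knowledge.TACIT, Knowledge.TACIT): "socialization",
    (Knowledge.TACIT, Knowledge.EXPLICIT): "externalization",
    (Knowledge.EXPLICIT, Knowledge.EXPLICIT): "combination",
    (Knowledge.EXPLICIT, Knowledge.TACIT): "internalization",
}


def stage(source: Knowledge, target: Knowledge) -> str:
    """Return the co-creation stage for a conversion from source to target."""
    return STAGES[(source, target)]


# Example: articulating a hunch so that teammates can understand it.
print(stage(Knowledge.TACIT, Knowledge.EXPLICIT))
```

Because the two knowledge types yield exactly four ordered pairs, the table covers every possible conversion.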

Preliminary research [36] involving the SG AquaRepublica has shown that SG have the potential to stimulate the four stages of the knowledge co-creation process, and Barreteau, Le Page, and Perez [23] add that SG and gaming simulations offer a unique opportunity to facilitate decision-making processes. One type of SG used in environmental management combines computer simulations powered by environmental models with role-play as a method to address the complexity inherent to environmental problems, while promoting collaboration and learning among stakeholders [37,38,39]. By gamifying the experience, stakeholders are given the space to exchange perspectives, knowledge, and ideas in a low-stakes environment, allowing them to better understand the boundaries that separate them. This allows for the opportunity to co-create new forms of knowledge that can help mitigate these boundaries. Many different types of boundaries can exist between stakeholder groups; for example, boundaries can be administrative, jurisdictional, socio-cultural, or cognitive. Boundaries often carry a negative connotation denoting a divide or a separation that can lead to discontinuity or inaction [36,37,40]. However, the discontinuities created by boundaries can trigger learning by a process of collaborative reframing between stakeholders, resulting in the creation of new and different types of knowledge [16,22]. In this way, boundaries can be viewed as connecting rather than separating. By crossing boundaries, a variety of learning mechanisms may occur [41]: (1) Identification, referring to the understanding of how different practices on the boundary relate to each other; (2) coordination, referring to learning about how to work on the boundary with others; (3) reflection, referring to the expansion of perspectives gleaned from working along the boundary; and (4) transformation, referring to the co-development of new knowledge and practices. This last transformative mechanism refers back to knowledge co-creation and the constructivist view of learning, which states that learning can simply be a by-product of experience [24].

An important application of SG is their use as a tool to facilitate the socialization process among players. This process is important to the phenomenon of boundary crossing because it fosters relationships between participants and allows for the sharing and creation of new types of tacit and explicit knowledge [41]. Although the game artefacts themselves generally provide some information on the planning and management processes, players mostly learn from each other through the exchange of information that occurs throughout gameplay [42]. The game environment lowers the pressure associated with planning and decision-making and allows the players to discuss different management strategies, which promotes externalization of knowledge [42]. Additionally, Van Bilsen, Bekebrede, and Mayer [42] found that as gameplay progresses, trust builds between participants, which helps them articulate a common understanding of issues. One of the assumptions of game-based learning is that the learning that occurs via gameplay can sometimes be transferred to similar situations outside of the game [39]. To ensure that participants understand how they can use what they have learned during gameplay in a real-world setting, a debriefing session following a game event is particularly important [43,44]. During the debriefing, the facilitator enables the combination of tacit and explicit knowledge by having certain players communicate the lessons they learnt to the rest of the group [36,43].

1.2. Research Objective and Questions

For this study, the SG MSP Challenge 2050 was used. MSP Challenge 2050 uses ecosystem models to simulate the spatial dimension of MSP and adds a game layer atop the embedded models. This game layer allows for a greater focus on communication and collaboration, which has been identified as lacking in current PSS that focus more on the technology behind them than on the outcomes [14]. Ultimately, this paper seeks to investigate whether MSP Challenge 2050 fosters the added values of PSS as defined by Pelzer, Geertman, van der Heijden, and Rouwette [14]. This objective will be achieved by addressing the following research questions, each of which focuses on one of the three levels of added value:

- Individual level: Does MSP Challenge 2050 offer a platform for participants to learn about MSP by helping them understand information derived from data, analyses, and models?

- Group level: Does playing MSP Challenge 2050 promote quality interactions and cooperation between participants facilitating the knowledge co-creation cycle? and

- Outcome level: What are the characteristics of the plans developed while playing the game and how do the plans differ from team-to-team?

Research question 1 will determine whether MSP Challenge 2050 fulfills one of the main criteria of a PSS, namely whether the user interface makes the complex information conveyed by the game easier for participants to understand. This first question also helps to determine whether MSP Challenge 2050 promotes individual added value, that is, the transfer of explicit knowledge to an individual either via the game artefact itself or through team discussions. Research question 2 will explore the group added value by taking a critical look at the interactions of players throughout the planning stage of MSP Challenge 2050. Research question 3 will explore the outcome-level added value by analyzing the plans produced by the teams playing the game and how those relate to the interactions investigated in question 2. Together, the three questions will help to determine if MSP Challenge 2050 can provide an effective PSS.

2. Materials and Methods

2.1. MSP Challenge 2050

This research used MSP Challenge 2050, which was developed in 2013 in the Netherlands by the Ministry of Infrastructure and Water Management and its executive agency called Rijkswaterstaat [45]. It follows the previous version of the game, MSP Challenge 2011, and introduces more complex data sets from the North Sea using the Ecopath food chain model to calculate the cumulative impacts of players’ decisions [45]. It was developed to mimic four concepts inherent to MSP: (1) Vague system boundaries, (2) ambiguity, (3) competing interests, and (4) uncertainty caused by missing information [2]. The following section will provide a brief overview of the game and how it is played. For more information on the development and research behind the game, please refer to Mayer et al. [45], and visit www.mspchallenge.info.

MSP Challenge 2050 transports players to the Sea of Colours, a fictional sea based on the North Sea, bordered by six countries: Green, Indigo, Orange, Purple, Red, and Yellow [2]. Anywhere from 18 to 40 people can play the game at once and a game can last from four to 40 hours depending on the number of people per team and the availability of the players. The game requires at least two facilitators: A technical facilitator who helps with the computer set-up and a lead facilitator who serves as a game master. The lead facilitator divides players into groups (countries) of three to eight players; teams are all in the same room so as to facilitate inter-team discussions [2]. Each team member selects a specific role within their country (such as an environmental planner, military, oil and gas, etc.) based on cue-cards given to each team before the game starts [2]. Each team is given a minimum of one computer and is provided with written instructions, as well as goals specific to their country and roles (such as developing the oil and gas industry, focusing on fisheries’ protection, etc.). Examples of the game materials can be found in Figure 1. Given this information, teams start the game by planning objectives according to their country’s vision for the year 2050 [45]. The goal of the game is for each team to develop a national maritime spatial plan and for the teams to work together on an international level to coordinate their objectives and plans. The planning phase ends once the teams present their objectives and preliminary national plan to the game overall director (G.O.D.), who provides them with feedback and adds country-specific challenges. Players from different teams with similar roles must then interact with each other and work together to coordinate their plans and objectives on an international level for the Sea of Colours [45]. The game ideally ends when the clock reaches the year 2050, although, due to time constraints, the lead facilitator may choose to end the game early. There are no clear winners or losers of the game; rather, the focus is on the overall MSP process.

Figure 1.

Game materials (adapted from [46]).

The geospatial data used in the game is based on real data from the North Sea [2,46]. The information is displayed on 55 different layers with information on commercial fishing areas, marine protected areas, pipelines, etc. Teams can choose to display the information on layers or hide these layers when the information is superfluous. When a team is building a plan, for example, to expand a wind farm, they draw the new items on a proposition layer. The teams’ computers are connected by a local area network (LAN), and a graphical user interface (GUI) in the game allows the teams to publish their proposition layers for other teams to comment on and either approve or reject their plans and related projects [45]. Once the plans are implemented, the game computes the cumulative effects of the plans and the GUI shows their evolution on screen. This helps players understand the consequences of their decisions, not only on their own country, but on other countries as well [2,45]. The game does not keep a record of decisions made by the teams at each turn. An added challenge during the game is that in-game time passes slowly at first, but accelerates as the game progresses [45]. Given the time constraints, the teams cannot always develop a comprehensive national plan, but they can still start the process of international coordination. By the end of the game, each team can visualize the cumulative effects of their decisions on the GUI.

2.2. Game Events

A workshop using MSP Challenge 2050 was held in Newfoundland in late spring 2017 at Memorial University in St. John’s. The event was organized as a collaboration between McGill University, Memorial University, and the MSP Challenge 2050 creators. The event served as a conclusion to the Memorial University MSP master’s program, with invitations being extended to a group of McGill students with expertise in integrated water resource management and SG. A group of local stakeholders working in MSP in Newfoundland was also invited to participate. A total of 18 participants played at the event: Nine students from Memorial University, five local stakeholders, two postdoctoral researchers, and two graduate students from McGill University.

The event took place over two days and consisted of playing an MSP board game that served as an icebreaker during the morning of the first day followed by a day and a half of playing the MSP Challenge 2050 simulation. The moderators for the Newfoundland event consisted of the two facilitators (lead and programmer) and an expert in MSP playing the G.O.D. character. At the start of the simulation game, the lead facilitator gave players a brief explanation on how to navigate the game. The teams were then given an hour to get to know each other and to familiarize themselves with the software by clicking through the various layers and menus in the game with their teams while facilitators circulated the room to answer any questions that would arise. On day two, the game began. The simulation ended with a short lecture and a debriefing session. Teams with three players were provided with one computer, while teams with four players were given two computers. The breakdown of the teams can be seen in Table 1 below:

Table 1.

Team composition and coding.

Events run by a different research team took place in Copenhagen and Venice. Supplementary survey data from these events were used to corroborate any trends discovered in the data from the Newfoundland event. The Venice event was organized in 2017 for students from the EU Erasmus Mundus Master’s program in MSP at the Università Iuav di Venezia. The event was attended by 15 students with a variety of professional backgrounds from across Europe, most having less than two years of professional experience working in MSP. The Copenhagen event was organized as a kick-off event for the NorthSEE and BalticLines partnership meeting. A total of 34 professionals were in attendance from across the North Sea and Baltic Sea countries. Twenty-six of the participants reported working in the field of MSP for at least one year, nine of whom reported practicing MSP for over five years. Of the three events, the Copenhagen event involved the most experienced MSP professionals. Both these additional events were one day long and had a facilitator team consisting of one lead facilitator, one programmer for technical support, and one facilitator playing the role of G.O.D. The Copenhagen cohort was the largest and was therefore divided into six teams, unlike the Newfoundland and Venice events, where participants were divided into five teams. For the Venice event, teams ranged from three to four players, while the Copenhagen event had teams of three to five players.

2.3. Data Collection and Analysis Methods

Participants were asked to complete three short surveys by ranking their experiences on a Likert scale and by answering short answer questions. The Likert scale involves an incremental scale that was customized to the specific questions in the survey. A pre-game survey was given to participants from all three game events to gather personal and professional information. This survey gauged their knowledge of MSP and measured their willingness to try a novel tool, such as SG. During the Newfoundland event, a mid-game survey was completed by each team at the end of the planning phase. This survey gathered information on the players’ experience using the game and their relationship with other teams. Finally, the post-game survey was given to all participants from the three game events and focused on their impression of the game, the power dynamics within their team, and the usefulness of SG as a learning and policy tool. All surveys were adapted from an earlier study by Zhou [47]. A Mann-Whitney U test was performed to determine the statistical difference between scores from different events and between participants with different levels of expertise in MSP. The Mann-Whitney U test was chosen because the responses from the surveys were not normally distributed [48].
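
To illustrate the test described above, the following minimal Python sketch computes a two-sided Mann-Whitney U test using average ranks for tied values and a tie-corrected normal approximation. It is not the analysis script used in this study: the function names and the Likert responses are hypothetical placeholders, and the normal approximation without continuity correction is an assumption of the sketch (statistical packages may instead use exact methods or apply a continuity correction).

```python
import math


def midranks(values):
    """Return 1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = average
        i = j + 1
    return ranks


def mann_whitney_u(a, b):
    """Return (U, two-sided p) using a tie-corrected normal approximation."""
    n1, n2 = len(a), len(b)
    pooled = list(a) + list(b)
    ranks = midranks(pooled)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    u = min(u1, n1 * n2 - u1)
    n = n1 + n2
    # Tie correction: sum of t^3 - t over groups of t tied observations.
    tie_counts = {}
    for v in pooled:
        tie_counts[v] = tie_counts.get(v, 0) + 1
    tie_term = sum(t ** 3 - t for t in tie_counts.values())
    variance = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if variance == 0:  # every observation identical: no evidence of a difference
        return u, 1.0
    z = (u - n1 * n2 / 2) / math.sqrt(variance)
    return u, math.erfc(abs(z) / math.sqrt(2))


# Hypothetical Likert responses (1 to 5) for two cohorts; NOT data from this study.
less_experienced = [5, 4, 4, 5, 3, 4, 5, 4]
more_experienced = [3, 3, 4, 2, 3, 4, 3]
u_stat, p_value = mann_whitney_u(less_experienced, more_experienced)
print(f"U = {u_stat}, p = {p_value:.3f}")
```

Likert responses produce many ties, which is why the sketch assigns average ranks and corrects the variance rather than assuming distinct values.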

During the Newfoundland event, exclusively, teams were audiovisually recorded to later perform an interaction analysis. The interaction analysis was performed based on methodology developed by Jordan and Henderson [49]. Both verbal and non-verbal interactions between players were studied and analyzed [49]. Also, the participants’ interactions with the game artefacts were analyzed. For example, Jordan and Henderson [49] found that in a group setting, passing the mouse between participants is a collaborative problem-solving technique. The interaction analysis was performed on the planning phase of the game, which corresponded to the time before teams met with G.O.D. Once all teams had met with G.O.D., the implementation phase began. It should be noted that interaction analysis was not performed after the planning stage due to the increased movement of players often out of bounds of the audiovisual equipment. Following the planning phase, the teams started to disperse and talk amongst themselves. Although a previous study by Mayer, Zhou, Lo, Abspoel, Keijser, Olsen, Nixon, and Kannen [2] used survey data to research the quality of interactions and cooperation between players of MSP Challenge 2050, this study is the first to use audiovisual recordings to conduct an interaction analysis to count and qualitatively analyze the interactions between players throughout the game. Interactions and cooperation are important because they relate to knowledge co-creation through socialization and externalization. By putting the players in close proximity in a low-stakes environment, the likelihood of positive interactions and cooperation increases [50]. These interactions also simulate the socio-part of the socio-technical complexity of MSP [49].

During the planning phase, teams reflected on their goals and developed a strategy to achieve them. The interaction analysis of this phase therefore provides insight into the potential of MSP Challenge 2050 as a PSS by providing information on how the teams analyzed the data and used the models in the game to create their plans. Subsequently, an interaction analysis was conducted on the debriefing session at the end of the game, providing insight into lessons learnt by participants. Interaction analysis was conducted by two researchers from the team to reduce bias and gain a deeper understanding of interactions. This approach provides deeper insight into player interactions facilitated through the SG event than the surveys and helps corroborate the answers given by the participants. The answers from the surveys and the interaction analysis can be found in the Supplementary Material provided with this article.

The first research question will be answered using data from the interaction analysis of the Newfoundland event, the post-game survey from all three events, and the mid-game survey given to the participants at the Newfoundland event. To answer the second research question and to assess whether the game indeed promotes cooperation and interactions between players, the number of interactions between team members at the Newfoundland event was counted. The interaction analysis of the debriefing session from the Newfoundland event contained important information and insights that helped answer this question. To quantify some of the findings of the interaction analysis of the planning phase, researchers performed an analysis of the quality of participant interactions. Survey answers from the mid-game survey at the Newfoundland event and the post-game survey from all three events were also used to answer this question. To answer the third question, the quality of the plans presented to G.O.D. by each team will be discussed. Their quality will be correlated to the number and quality of interactions presented in question 2. Together, all three questions help determine if MSP Challenge 2050 provides added value as a PSS.

3. Results

3.1. Research Question 1: Does MSP Challenge 2050 Offer a Platform for Participants to Learn about MSP by Helping Them Understand Information Derived from Data, Analyses, and Models? (i.e., Individual Added Value)

A small sample of the different interactions between participants during the game can be found in Table 2 below. It identifies how these interactions show that the game helped participants’ understanding of data, analysis, and models, and how they relate to the knowledge co-creation cycle. Three main types of interaction were recorded, totaling 102 interactions among the teams. The first involved players not understanding a certain word or concept presented in the game and asking their teammates for additional information (see, for example, 0:03:32, 0:18:00, and 0:21:40). This type represented close to a quarter of interactions recorded (24.5%). This is externalization of knowledge that helps the participants understand the data and the model in the game. Another type of interaction observed involved players helping each other understand features of the game (see 0:09:30, 0:11:09, 1:24:40, and 1:27:40). These types of interactions represented the bulk (51%) of the interactions between players. These interactions helped the players understand the model in the game and correspond to socialization, because the players would not have had access to this knowledge had they not been experiencing the game together. The last major type of interaction recorded happened when teams extrapolated beyond the scope of the game to reflect on how their plans should be implemented in a real-world setting (see 0:07:30, 0:39:40, 0:45:00, and 1:00:10). These interactions are examples of analysis within the teams and of the combination of knowledge provided by the game in order to apply it to more complex and realistic situations. This last type of interaction accounted for close to a quarter of interactions (24.5%) and mostly occurred when the teams discussed how to present their plan to the G.O.D.
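
The reported shares can be checked against the total of 102 interactions. The short Python sketch below back-calculates a count for each interaction type from its percentage and confirms that the counts sum to the total. The per-type counts are inferred here by rounding and are not reported in the text, and the category labels are shorthand introduced for this illustration.

```python
TOTAL_INTERACTIONS = 102  # total reported in the text

# Reported share of each interaction type and its knowledge co-creation stage.
reported = {
    "asking teammates about a word or concept": (0.245, "externalization"),
    "helping each other with game features": (0.51, "socialization"),
    "extrapolating to real-world settings": (0.245, "combination"),
}

# Inferred counts (an assumption of this sketch, obtained by rounding).
counts = {
    label: round(TOTAL_INTERACTIONS * share)
    for label, (share, _stage) in reported.items()
}

# Recompute each share from its inferred count to confirm the round trip.
recomputed = {
    label: round(100 * count / TOTAL_INTERACTIONS, 1)
    for label, count in counts.items()
}

for label, count in counts.items():
    stage = reported[label][1]
    print(f"{label}: {count} interactions ({recomputed[label]}%), {stage}")
print("sum of inferred counts:", sum(counts.values()))
```

Under this rounding, the three shares are mutually consistent with the stated total.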

Table 2.

Sample of interactions between participants and how these interactions help them understand data, analysis, and models and the corresponding knowledge co-creation stage.

The post-game survey questions and answers relating to learning outcomes from the three game events are presented in Table 3 below. The possible answers that participants chose from range from 1 (strongly disagree) to 5 (strongly agree), where a 3 is neutral, meaning the player neither agreed nor disagreed with the statement. The answers have been grouped based on the players’ experiences working in MSP. The data is divided by experience because statistical analysis (i.e., Mann-Whitney U test) revealed that prior experience in MSP most influenced the players’ answers. The participants had for choice: Less than a year, from one to two years, two to three years, three to five years, five to 10 years, and 10 or more years of experience working in MSP. When unsure, the participants were told to round down their level of experience. One column presents the average answer for players with less than two years of experience (less than two years), and the other for players with two years or more (two years and more). The first three questions ask the players how much they have learned about MSP from the game artefact, which corresponds to externalization of knowledge. A Mann-Whitney U test on the first three questions indicated that the players with less experience of MSP learnt significantly more about MSP from playing the game than the players with more experience (U = 116, p = 0.05). That being said, both cohorts chose, on average, an answer above “neutral”, meaning that they report some learning about MSP from playing the game. Questions 3 to 7 pertain to the social aspect of playing the game, or the socialization part of the knowledge co-creation cycle. The questions relate to the interactions between the players, which acted as practitioners of MSP. There was no statistical difference between the answers of the two cohorts for these questions (U = 116, p = 0.05), with averages above 3 (“neutral”). 
Furthermore, the players agreed that the game helped them understand the different barriers to the development of a good MSP process (question 5), which can be linked to the combination of knowledge about different systems involved in MSP and how they can conflict with each other. Finally, survey question 8 asks the players about internal reflection throughout the game, which corresponds to an internalization of knowledge and information provided by the game. Although there is no statistical difference between the two cohorts for this answer, it is important to note that participants from the Copenhagen event scored significantly lower (mean = 3.05, SD = 0.94) on this question than players from Newfoundland (mean = 3.67, SD = 0.50, U = 48, p = 0.05) and Venice (mean = 4.20, SD = 0.77, U = 90, p = 0.05). The post-game survey written responses of the Copenhagen event indicate that the players wanted the game to be based on realistic events and conflicts. Two players suggested introducing different planning scenarios that would be specific to a region. Another player suggested using a real case study in the game. Finally, three players thought that the game was too focused on national planning and that there was not enough time for transnational planning. Overall, the interaction analysis and the post-game survey answers showed that playing MSP Challenge 2050 helped players with little work experience in MSP better understand the challenges of MSP and the game helped facilitate the MSP process within each team.
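To make the cohort comparison described above more concrete, the following minimal sketch shows how a Mann-Whitney U statistic can be computed for two groups of Likert-scale answers. It is an illustration only and not the analysis script used in this study: the function names (`average_ranks`, `mann_whitney_u`) and the two answer lists are hypothetical, and the sketch returns the U statistic alone without a p-value.

```python
def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value is tied with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # positions are 0-based, ranks 1-based
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def mann_whitney_u(group_a, group_b):
    """Return the smaller of the two U statistics for two independent samples."""
    pooled = list(group_a) + list(group_b)
    ranks = average_ranks(pooled)
    n_a, n_b = len(group_a), len(group_b)
    rank_sum_a = sum(ranks[:n_a])
    u_a = rank_sum_a - n_a * (n_a + 1) / 2
    u_b = n_a * n_b - u_a
    return min(u_a, u_b)


# Hypothetical answers on the 1-5 scale (NOT the survey data from this study).
less_than_two_years = [5, 4, 4, 5, 3]
two_years_and_more = [3, 4, 2, 3, 3]
print(mann_whitney_u(less_than_two_years, two_years_and_more))
```

A rank-based test of this kind suits ordinal survey answers because it compares the ordering of responses between cohorts and does not assume that the scores are normally distributed.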

Table 3.

Average question score with standard deviation in parenthesis for post-game survey questions that look at learning outcomes.

The answers from the mid-game survey of the Newfoundland game event indicate that the teams were struggling to define their goals because of the overwhelming amount of data the game provided them. Team Indigo admitted that they did not know “How to geographically spread out some of the new facilities”. To help manage this cognitive load, the teams identified different strategies, such as “Us[ing] a more integrated approach” and “leaving on [the] most important layers (things that can’t be moved) to plan our work” (team Indigo), “Taking on specific roles” (team Orange), and “Dividing tasks” (team Purple). These findings demonstrate that although the teams were overwhelmed by the amount of data at the end of the planning phase, they developed strategies to redress the situation, such as: Integrated planning, limiting the amount of information on the screen to only show what they deemed most important, and dividing the specific roles and tasks between the players within a team to limit information overload. The game was therefore a good learning tool that helped the players develop skills to adjust and adapt to working with the large data sets required for MSP.

3.2. Research Question 2: Does playing MSP Challenge 2050 Promote Quality Interactions and Cooperation Between Participants Facilitating the Knowledge Co-creation Cycle? (i.e., Group Added Value)

First, from the videos of the Newfoundland event, a quantitative analysis of the interactions in each team was performed for the planning phase of the game (defined as the first 90 min of gameplay where the time in the game did not advance). To graphically represent the data, this 90-min timespan was divided into nine sub-phases that show how interactions changed over the course of the planning phase. Due to increased movement of players between teams after the planning phase, interactions past this point could not be counted. Table 4 below shows the quantitative evolution of interactions for each team during the initial planning phase of the MSP Challenge 2050 simulation. There are three points to keep in mind when looking at Table 4. The first is that the numbers represent the total number of interactions, although it should be noted that some teams had fewer players than others. Having one fewer player in a team surprisingly did not affect the number of interactions. Interaction analysis reveals that this could be because the teams with three players only used one computer instead of two and therefore had to communicate with each other more than a team that used two computers. The player on the computer tended to speak less than the others and instead took instructions from other teammates. Second, the bolded numbers represent moments where the team experienced technical difficulty and a facilitator had to come and reboot their computer. A technical difficulty is always accompanied by a dip in the number of interactions (see teams OR, IND, and YEL). Third, each team met with G.O.D. individually towards the end of the first 90 min of gameplay to go over their country plans. These meetings took between 10 and 15 min and are represented in the table as G.O.D.
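The division of the 90-min planning phase into nine sub-phases can be sketched as a simple binning step. The example below is a hedged illustration rather than the procedure used by the research team: it assumes that each coded interaction carries a timestamp in minutes from the start of gameplay and that the sub-phases are of equal length, and the function name `count_by_subphase` and the sample timestamps are hypothetical.

```python
def count_by_subphase(timestamps_min, phase_length=90, n_subphases=9):
    """Count interactions per equal-width sub-phase of the planning phase.

    timestamps_min: times (in minutes from the start of gameplay) at which
    an interaction was coded. Interactions outside the planning phase are
    ignored, mirroring the fact that later interactions were not counted.
    """
    width = phase_length / n_subphases  # 10 min per sub-phase by default
    counts = [0] * n_subphases
    for t in timestamps_min:
        if 0 <= t < phase_length:
            counts[int(t // width)] += 1
    return counts


# Hypothetical timestamps for one team (NOT data from the Newfoundland event).
team_timestamps = [0.5, 3, 9.9, 10, 25, 89.9, 90, 95]
print(count_by_subphase(team_timestamps))
```

Counting per sub-phase in this way makes dips, such as those that accompany a technical difficulty, visible as a low value in a single bin.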

Table 4.

Quantitative interaction analysis.

From the interaction analysis, it was noted that after meeting with G.O.D., team members were more stressed and retreated into their chosen roles, which appeared to isolate them from other players within their team. Therefore, the number of interactions dropped as players focused their attention on changing their national plans to match G.O.D.’s demands before discussing with players with similar roles in other teams how to coordinate on an international scale. With that in mind, the table shows that interactions within teams are constantly changing, but that interactions stay relatively high throughout the simulation gaming event.

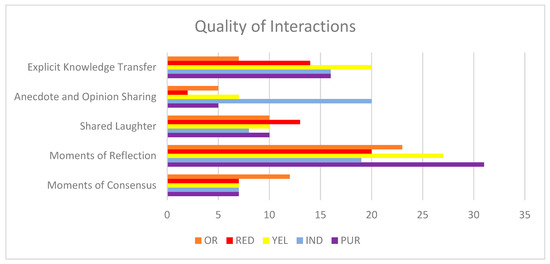

The most important information coming from the quantitative interaction analysis is that MSP Challenge 2050 fosters numerous interactions between teammates during the planning phases. These interactions are necessary for the socialization stage of the knowledge co-creation cycle. Furthermore, it shows that the main hindrance to interactions throughout the game is technical difficulty. Therefore, care must be exercised when planning these events to reduce instances of technical difficulties and thereby promote the greatest number of interactions. However, looking at quantity is not enough to come to definitive conclusions; instead, the nature and quality of these interactions must be evaluated. To do this, five qualitative indicators were developed for this research to describe the quality of the interactions. These indicators are described in Table 5 and the results are tallied in Figure 2 below.

Table 5.

Quality of interaction indicators and descriptions.

Figure 2.

Summary of quality of interactions by team.

Once again, it is important to note that team Indigo and team Red had three players while the other teams had four players. Furthermore, interactions between teams during the planning phase were recorded though minimal in number (less than 10% of total interactions). The results for each indicator are similar for each team, with one outlier that outperformed the other teams in each category. Moments of explicit knowledge transfer and of reflection were the most common type of interaction between teams making up 22% and 37% of the quality interactions, respectively. This supports the hypothesis that playing MSP Challenge 2050 can lead to knowledge co-creation through explicit knowledge transfer and combination. Going back to the first research question, it also supports the idea that this SG could be used as a PSS providing added value while it helps participants learn to interpret the data, models, and analysis through interactions that lead to explicit knowledge transfer and reflection. These findings show that MSP Challenge 2050 can serve as a learning tool and as an innovative tool to support planning.
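The percentages reported above are shares of the total number of quality interactions. As a hedged illustration of that arithmetic, the sketch below converts a tally per indicator into percentage shares. The function name `indicator_shares`, the indicator labels, and the counts are placeholders and do not reproduce the indicators of Table 5 or the tallies of Figure 2.

```python
def indicator_shares(counts):
    """Convert a tally of quality interactions per indicator into percentages."""
    total = sum(counts.values())
    if total == 0:
        # No quality interactions were coded, so every share is zero.
        return {name: 0.0 for name in counts}
    return {name: 100 * n / total for name, n in counts.items()}


# Placeholder tallies (NOT the values behind Figure 2).
tallies = {"indicator_a": 10, "indicator_b": 30, "indicator_c": 60}
for name, share in indicator_shares(tallies).items():
    print(f"{name}: {share:.0f}%")
```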

As can be noted by looking at Table 4 above, a great number of interactions occurred between players and teammates; however, Figure 2 above shows far fewer quality interactions. This is not to say that the game is ineffective at fostering quality interactions; rather, a large number of interactions are required to support these quality interactions. Previous research has shown similar results using a different SG (see [36]). Certain quality interactions are made of multiple smaller interactions, which can also explain the discrepancy in occurrence. Survey results from the mid- and post-game questionnaires were also analyzed to determine how the players felt the game helped them collaborate. The answers from the mid-game survey of the Newfoundland game event show that most teams identified team cohesion and collaboration as being helpful to develop and achieve MSP. Teams reported that many of their strategies relied on “lots of communication” (team Red), and “delegation, cooperation” (team Purple). When asked which strategies they were using to develop their MSP, team Yellow responded by saying: “Focus on communication between teams and between different ministers”, while team Orange reported that: “[They] are still having fun working together easily and have chosen some key roles”.

The answers to the mid-game survey indicate that teams valued cooperation during the game. The answers from the post-game survey in Table 6 below reinforce the findings of the mid-game survey and the interaction analysis, i.e., that cooperation took place between participants during the game event. Table 6 summarizes answers from the post-game survey from all three events to questions about the level of collaboration within teams. As before, the possible answers range from 1 (strongly disagree) to 5 (strongly agree), where 3 is neutral. The Newfoundland and Venice groups ranked the questions similarly (U = 34, p = 0.05). However, we see a statistically significant dip in the values for the Copenhagen group for the second question relating to how well players worked together (U = 90 against Venice, U = 48 against Newfoundland, p = 0.05). This may be attributed to the fact that for both the Newfoundland and the Venice events, the players were mostly students who knew each other well prior to the game, while the Copenhagen event was attended mostly by MSP professionals with more distant or non-existent relationships. The standard deviation for these questions remains under 1, meaning that most participants shared a similar experience. The high number of interactions and the teams’ focus on collaboration indicate a potential for MSP Challenge 2050 to promote knowledge co-creation through socialization, externalization, and combination. In fact, as noted above, players identified communication as an important strategy for the next steps of the game. Overall, the game promoted ongoing collaboration within teams, despite each player choosing a distinct role for themselves.

Table 6.

Average question score with standard deviation in parenthesis for post-game survey questions that look at collaboration.

The key insights from the debriefing session revolved around economic considerations, collaboration between different teams, and the feasibility of the task given to them. The players reflected on the complexity of MSP, which was made easier by removing economic components during the game, something the players were thankful for, but that detracted from the realism of the task given to them. Even without economic considerations, the teams acknowledged that they had to make trade-offs between different tasks and prioritize certain goals. In response to the complexity of MSP and learning to make decisions in a complex system, player RED2 noted that “It’s not that people are useless—interactions between good and moral people make weird outcomes”. The facilitator reflected on this statement by explaining that political and research cycles have different lengths; therefore, planners often find themselves trying to make decisions that fit within the short span of a political cycle without necessarily having as much scientific research to help them as they would like. This is a reality that most planners deal with, and the game illustrated that reality. From the interaction analysis of the debriefing session, it seems that the complexity of the subject mixed with the fast-paced game allowed some participants to reflect critically on what was happening, but that the game process could be improved to allow more space for critical thinking. An interesting development occurred during the Newfoundland event, which can be explained by looking at the interaction analysis of the game event and the debrief. A feature of the game requires teams to make their plans visible to other teams to receive feedback and approval. 
As the game currently stands, it is suggested that teams seek approval from other teams before implementing plans in shared waters (based on the Kyiv (SEA) protocol that states that Member States must notify and consult each other on all major projects under construction that may have adverse environmental impacts across borders). However, the teams realized, one by one, that they did not need to wait for approval to go ahead with implementation of their plans (see Table 7 below).

Table 7.

Interactions regarding consultation for project approval.

The role and importance of this feature of the game will be further explored in the Discussion Section. Overall, the interaction analysis showed that players helped each other understand the terms used by the game and the game itself, and that they reflected on how their plans could be implemented in the real world.

3.3. Research Question 3: What are the Characteristics of the Plans Developed While Playing the Game and How Do the Plans Differ From Team-to-team? (i.e., Outcome Added Value)

To assess the outcome added value of MSP Challenge 2050, the national plans developed by each team during the planning phase provide valuable information. Table 8 below provides an overview of the national plans developed by each team in the first 90 min of gameplay and presented to G.O.D. The plans below are divided into five sectors (ecology, fishing and recreation, oil and gas, renewable energy, and shipping) and an additional miscellaneous category.

Table 8.

Results of the planning phase.

When looking at the above plans, some teams’ plans are more comprehensive than others. For example, the Orange team planned for all five sectors as well as having miscellaneous goals. Similarly, the Purple team planned for all sectors, save shipping, although they managed to include timelines for most of their plans. Team Indigo also created a rather comprehensive plan. On the other end of the spectrum, we see that the Yellow and Red teams’ plans are rather lacking, with the Yellow team failing to plan for two of the sectors while the Red team planned for all sectors, though vaguely.

Comparing the quantitative and quality interactions obtained in question 2 shows how these interactions may have supported the development of their plans. Table 9 below shows the total quality and quantitative interactions for all teams.

Table 9.

Total quantitative and quality interactions.

Table 9 above shows that the teams with more comprehensive plans interacted more throughout the game event. These teams generally had more quality interactions than other teams. The exception here is the Orange team, which had fewer interactions (both quantitative and quality). However, it should be noted that for two phases, team Orange was out of view of the recording devices, and the team interacted more than the numbers show. In fact, in their mid-game survey, they reported “we looked at everything as a team, looked at what is missing, what needs more information, to make sure to talk with key partners to avoid conflict.” The Yellow team is also an exception, with the second highest number of quality interactions but an underdeveloped plan. The Yellow team noted in their mid-game survey that one of the challenges they faced was “confusion over how to prioritize things”, which may have led to confusion over how to develop their national plan. The Yellow team did, however, have the most instances of knowledge sharing, meaning that although their MSP plans may not have been as comprehensive as most, they exchanged the most knowledge.

The outcome added value does not end at the planning phase; it extends into the implementation phase. Although the implementation phase itself was not recorded, teams discussed it during the debriefing period, which was recorded. The teams discussed an example of international cooperation that occurred during the implementation phase, namely the international Sea of Colours Energy Grid that was a joint creation by all teams. Proposed by the Orange team, a so-called “international summit” was held where representatives from each country were brought together. As a result of this summit, all five countries successfully linked their energy grids in a hub in the center of the Sea of Colours. The Orange team was composed of one student, two local stakeholders, and one postdoctoral fellow; it was the team with the most combined experience. The other teams benefitted from their expertise and were able to learn from their experience and create new knowledge between themselves, resulting in a successfully implemented Energy Grid for the Sea of Colours.

3.4. Summary of Research Results

Though the results and findings for each individual research question are essential to achieve the research objective, it is also important to look at the inter-connections between these questions. An important aspect of this study was to investigate the added values of MSP Challenge 2050 as defined by Pelzer, Geertman, van der Heijden, and Rouwette [14]. Namely, our research questions sought to investigate the individual, group, and outcome added values. By isolating these added values, we add credence to the idea that SG (specifically MSP Challenge 2050) can be used as PSS.

The findings from the first research question allowed for a deeper investigation into the individual added value that PSS can bring out in users. Results and findings relating to this first question indicated that by playing MSP Challenge 2050, participants are given the opportunity to acquire knowledge and information from the game artefact and game experience itself. The results have shown that this opportunity is greater for participants with less experience and formal training in MSP, though, generally, the game experience proved advantageous to all participants. The second research question allowed the researchers to investigate the added group benefits as defined by Pelzer, Geertman, van der Heijden, and Rouwette [14]. A quantitative analysis shows that players are offered a venue in which interactions are common and stable (unless teams experienced technical difficulty). Furthermore, an analysis of quality interactions has shown that knowledge between participants is exchanged either through explicit knowledge transfer or anecdotal exchange. The game experience also fosters moments of reflection, allowing for players to come together and reflect on the information being transmitted to them via the game artefact that was determined in research question 1. This knowledge transfer and reflection therefore allows for the different stages of knowledge co-creation to take place. Table 10 shows the stages of the knowledge conversion cycle and summarizes if and how these stages occurred during the MSP Challenge 2050 game event.

Table 10.

Evidence of knowledge co-creation occurring during MSP Challenge 2050 gameplay.

Finally, research question 3 investigated the added benefits of the outcome level by analyzing the plans that were developed by teams throughout the planning process. These plans were informed by both the individual and group levels as they are a synthesis of all the information and knowledge developed by players throughout the planning stage of the game. Findings from this question indicate that teams that interacted more both in quantity and quality generally created more thorough MSP plans for their respective countries. Overall, it can be concluded that the MSP Challenge 2050 SG displays the added values required for a PSS on an individual, group, and outcome level. It offers opportunities for interaction and discussion within and between teams. This interaction and discussion increase the chances of creating new tacit or explicit knowledge on an individual and group level. The results of this study are preliminary, although this research provides insight into further research opportunities on how SG can effectively be used as a PSS or how PSS can be gamified to allow for learning outcomes that may not be present in traditional PSS.

4. Discussion

4.1. Improving MSP Challenge 2050 to Become a PSS

Although preliminary research results show that MSP Challenge 2050 offers many of the same added benefits as a PSS, there are still opportunities and areas for further improvements to increase the added value. Firstly, several players reported some difficulties in understanding the game early on, noting a learning curve. As mentioned in Section 3.1, one of the most frequent types of interaction during the game involved players helping each other navigate the game interface. Teams therefore spent a large amount of time learning how to use the game artefact instead of learning more about the MSP process. Since the individual added value of a PSS focuses on helping participants use and understand data, it is important to reduce this steep learning curve as much as possible [51]. MSP Challenge 2050, therefore, suffers from the same criticism as other PSS, which can often be characterized as user-unfriendly [52]. This could be remedied by adding an instruction manual or by having more facilitators to help teams with the technical aspects of the game, which would allow participants to focus more on the MSP planning process. However, part of the game intends to overstimulate participants to help them learn to compartmentalize information and deal with uncertainty in decision-making [45]. In fact, Van Bilsen, Bekebrede, and Mayer [42] explain that SG are an important tool to teach decision-makers to make choices that affect complex systems. Therefore, the game should not be too simple or the participants will not learn these hard-to-teach lessons. However, the level of difficulty should always correspond to the types of users that participate in game events.

Although the technical complexity of the game distracted the participants from focusing on the planning process, several participants commented on the absence of financial considerations in the game, which made decisions easier to reach. These participants emphasized that the inclusion of economic analysis parameters in the game would increase the added value of the MSP Challenge 2050 SG as a PSS on the group and outcome levels. On the group level, had the cost of decisions been included in the game, the teams would have had to discuss trade-offs in more depth, which could have led to more conflict or consensus building about setting national and international priorities. On the outcome level, the preliminary plans developed by the teams would have better reflected the realities of the MSP planning process. As it stood, the priorities of each nation were provided to teams at the beginning of the game event through cue cards and subsequently changed by G.O.D. during their one-on-one meeting with him. Without economic considerations, the MSP Challenge 2050 SG is unable to respond to the real needs of MSP stakeholders or decision-makers that play the game, because in reality, they require economic considerations to make decisions.

Biermann [52] explains that a common pitfall of PSS is their complexity, which makes it difficult to tailor their use for a specific purpose; therefore, they are often too general and do not respond to the very specific needs of their users. MSP Challenge 2050 shares this pitfall, and addressing it would increase added value on the outcome level. The game’s focus is on teaching the MSP process, which makes it less effective at addressing more specific planning issues. This feedback was received from three players following the Copenhagen event who wished they could have used the game to test scenarios or learn about a specific case study that was more relevant to their work. Given that the Copenhagen players had the most MSP experience, their comments very likely reflect those of potential users of MSP Challenge 2050 as a PSS. Therefore, future versions of MSP Challenge 2050 should be tailored to meet more specific user needs of participants, whether it be by customizing the region in which they are playing or by setting specific initial boundary conditions that mimic a scenario the players are interested in exploring. This brings up an important concern when working with SG, PSS, or hybrids of the two: Knowing your specific audience is essential. More experienced users may wish to work on a tangible issue, while those with less of an understanding of the MSP process benefit more from a game with a broader and more educational scope.

MSP Challenge 2050 relies on a simplified model that abstracts certain information [53]. Being aware of the strengths and weaknesses of a model can help its users make more informed decisions based on the information the model returns [54]. A recent review by Steenbeek (2015) [46] concluded, however, that the model used in MSP Challenge 2050, the Ecopath food chain model, was insufficiently realistic to be used for decision-making purposes. This detracts from the validity of the plans created using MSP Challenge 2050, and therefore reduces the added value on an outcome level. The next version of the game, called the MSP Challenge Platform, will use more complex models with the aim of more closely reflecting reality by giving players the option to choose between three real-world locations: The North Sea, The Baltic Sea, and the Clyde Marine region in Scotland. The new version of the game will also enhance roleplay options to allow players to immerse themselves more fully in the game environment. Updated shipping and energy simulators will also add to the realism. In addition, allowing participants to change certain parameters and see the projected outcome will allow them to develop and test scenarios, something that was identified as important by players. Overall, there are several changes that could be made to MSP Challenge 2050, though many of these changes will likely be made in the upcoming version of the game, MSP Challenge Platform.

4.2. Limitations of the Study and Avenues for Future Research

The current study has certain limitations and offers opportunities for further research involving the MSP Challenge 2050 SG and its newer platform version. Firstly, the information gathered in the post-game surveys provided insight into how the participants felt immediately after playing the game. It should be noted that the research team was unable to determine whether there was a clear increase in knowledge in players after playing the game, which relates to added value on the individual level. To remedy this, future studies should include a survey gauging players’ knowledge before and after the game to definitively show whether or not players increased their understanding of the MSP process through the game and game experience. This will also help researchers isolate which type of stakeholder (i.e., level of understanding of MSP processes) would benefit the most from the game. For example, if players achieve a perfect score on the pre-game questionnaire, then it follows that the game will be of little use to them, at least in terms of increasing explicit knowledge related to MSP or individual level added value.

A second set of limitations involves improvements needed to assess the SG’s group added value. First, the interaction analysis was performed only on the national planning phase of the game. An interaction analysis of the whole game would have provided more insight into how the game can promote collaboration between teams, and how international conflicts were dealt with during gameplay. For future game events, cameras and microphones should be set up to record movement and discussions of participants throughout the room and further analyze interactions between participants within and between teams. Due to this limitation, only the intra-team interactions were analyzed. Had further stages of the game been recorded, researchers could have looked at inter-team interactions as well. Inter-team interactions more closely resemble the reality of decision-making and the MSP process and would allow researchers to look at other indicators, such as moments of inter- and intra-team conflicts, capacity building, knowledge exchange, etc. Researchers could then look at the frequency of these indicators in both inter-team and intra-team contexts, allowing a greater understanding of the types of interactions that occur both within and between teams that may have conflicting objectives and goals.

Within the context of a simulation gaming event, moments of conflict, for example, can provide insight into the differing perspectives and viewpoints of players on other teams. Facilitating communication of diverging viewpoints directly refers to the group added value. Therefore, during the debrief, facilitators could explore the idea of conflict to give players a greater perspective on why and how these conflicts occur. Another important aspect that must be managed for future events is the fact that teams can publish their plans without the approval of all other countries. Once players became aware of this fact, a distinct decrease in inter-team collaboration was noted. In future versions of the game, it would be essential to evaluate what happens when teams are obligated to receive approval from all other affected teams prior to implementing their country plans. This would add realism to the game given that this feature is based on the Kyiv (SEA) protocol. By-passing approval removes the international dimension from the game; MSP, however, involves international, inter-disciplinary, and cross-actor collaborations that are better reflected by interactions that resemble a network of teams rather than the interactions occurring within each team separately [6]. This will also provide a game experience for participants that more closely resembles the reality and complexity of MSP decision-making processes.

Although we have shown that the added values of a PSS are present in MSP Challenge 2050, more research must be conducted to corroborate these results. Most notably, the added value at the outcome level would greatly benefit from being further studied. We have shown that group and individual added values lead to better quality plans, but for the outcome level to be fully realized, these improvements must be transferable to real-world settings. Therefore, follow-ups with players of the game will have to be completed in the months following gameplay to determine whether the game experience facilitated planning processes in the real world. It will be interesting to look at a platform that allows for more in-depth planning where players can interact with data and each other both in person and virtually over the course of a longer time period. This platform may also be integrated into real-world planning environments, thus allowing for a more in-depth assessment of the outcome level added value of a game like MSP Challenge 2050.

After having investigated the benefits of MSP Challenge 2050 as a SG-PSS hybrid, it will also be beneficial to compare it to other non-gamified PSS tools. By organizing two parallel events, one using a gamified PSS and one using a more traditional PSS, it may be possible to further study the benefits that arise solely from the gamification and those that are common in both gamified and standard PSS.

5. Conclusions

Overall, this research outlines the benefits, opportunities, and further research required to study the added value of adding gamified elements to traditional PSS. On an individual level, the most important added value of gamification, such as that provided in MSP Challenge 2050, appears to be learning [14]. The learning that occurs through the gamification of PSS takes several forms. Players of MSP Challenge 2050 report having developed a greater understanding of the myriad challenges and complexities that exist when developing a marine spatial plan. Game-based learning is increasingly recognized as an essential element of sustainable water and environmental management that seeks to include not only more stakeholders, but also a broader variety of stakeholders, in decision-making processes [55]. By using SG, managers can facilitate stakeholder meetings that enhance the socialization of new stakeholders while ensuring that all stakeholders have access to the same baseline information. This process also allows stakeholders to gain a deeper understanding of the views and perspectives of their peers, as well as of the context they are working within.

On a group level, it is important that a gamification tool fosters communication, collaboration, and consensus building. It has been shown in this study and others [36] that SG have considerable potential to increase both the quantity and the quality of interactions between participants. Specifically, SG can create a space where participants can laugh together, share thoughts, ideas, and stories, and reflect on their experiences. This allows participants to develop and enhance their collaboration skills while activating the knowledge co-creation cycle and developing new tacit and explicit knowledge through these interactions. Gamifying the collaborative decision-making process removes the risk of real-world consequences, allowing players to explore, test, and discuss scenarios they might not otherwise have been able to. The outcome level refers to the resultant plans stemming from a PSS. It has been shown that teams that collaborated more and shared more high-quality interactions developed more comprehensive plans. The outcome in these cases can be said to be based on a deeper consideration of the information provided [14]. The human resource is an important one, and giving players the time to interact and discuss with their teammates allows for an externalization and combination of knowledge that leads teams to create more comprehensive marine spatial plans. Although further research is required to fully understand the extent of the outcome-level added value of gamification, a preliminary argument can be made that SG can improve planning outcomes by fostering spaces wherein the individual and group added values are able to manifest.

Overall, MSP Challenge 2050 appears to foster all three levels of added value; as such, an argument can be made that SG should be further studied for their utility as PSS. SG should be used in the early phases of the MSP process to ensure that all involved are given the same baseline information (individual level), to allow all involved a chance to socialize and work together on a specific problem (group level), and to aid in the creation of more comprehensive and widely agreed-upon plans (outcome level). The results of this study indicate that SG have the potential to be used as tools for stakeholder development and planning. Specifically, MSP Challenge 2050 is a promising tool for planners, and future versions of the game may provide more effective tools to enhance MSP processes.

Supplementary Materials

The following are available online at http://www.mdpi.com/2073-4441/10/12/1786/s1.

Author Contributions

I.M. and X.K. have moderated the MSP Challenge game sessions in which data collection for this paper has taken place. S.J., L.G., A.I. and X.K. conducted the research activities; W.M., J.A. and I.M. designed and supervised this research project. S.J. and L.G. wrote the paper; W.M. and X.K. provided ongoing support and reviews to the paper drafts.

Funding

This study was funded by a Social Sciences and Humanities Research Council of Canada (SSHRC) Partnership Development Grant held by Adamowski and contributed to by Nguyen from the McGill University Brace Centre for Water Resources Management. The research is also part of the PhD thesis by author X.K. on the use of Serious Gaming in (transboundary) Maritime Spatial Planning at Wageningen University, The Netherlands, with support of Rijkswaterstaat.

Acknowledgments

The original idea of the MSP Challenge board game is from Lodewijk Abspoel, of the Netherlands Ministry of Infrastructure and Water Management. The concept of the board game and digital game has been further developed by a team, including the authors I.M. and X.K. The authors acknowledge the contributions by Linda van Veen and Bas van Nuland in the design and development of the game materials and software. The authors furthermore wish to thank Francesco Musco, Federica Appiotti and the Erasmus Mundus MSP students at IUAV (Italy), Geoff Coughlan and students at Memorial University (Canada), and the EU Interreg NorthSEE and BalticLINes project partners.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Kannen, A. Marine spatial planning in the context of multiple sea uses, policy arenas and actors. 2014. Available online: https://www.climateservicecentre.de/imperia/md/images/gkss/institut_fuer_kuestenforschung/kso2/kso_kannen.pdf (accessed on 3 December 2018).

- Mayer, I.; Zhou, Q.; Lo, J.; Abspoel, L.; Keijser, X.; Olsen, E.; Nixon, E.; Kannen, A. Integrated, ecosystem-based marine spatial planning: Design and results of a game-based, quasi-experiment. Ocean Coast. Manag. 2013, 82, 7–26.

- Arbo, P.; Thủy, P.T.T. Use conflicts in marine ecosystem-based management—The case of oil versus fisheries. Ocean Coast. Manag. 2016, 122, 77–86.

- Douvere, F.; Ehler, C.N. New perspectives on sea use management: Initial findings from European experience with marine spatial planning. J. Environ. Manag. 2009, 90, 77–88.

- Ehler, C.N. Chapter 1: Marine spatial planning: An idea whose time has come. In Offshore Energy and Marine Spatial Planning; Yates, K.L., Bradshaw, C.J.A., Eds.; CRC Press: New York, NY, USA, 2018.

- Abramic, A.; Bigagli, E.; Barale, V.; Assouline, M.; Lorenzo-Alonso, A.; Norton, C. Maritime spatial planning supported by infrastructure for spatial information in Europe (INSPIRE). Ocean Coast. Manag. 2018, 152, 23–36.

- Pomeroy, R.; Douvere, F. The engagement of stakeholders in the marine spatial planning process. Mar. Policy 2008, 32, 816–822.

- Gazzola, P.; Onyango, V. Shared values for the marine environment–developing a culture of practice for marine spatial planning. J. Environ. Plan. Policy Manag. 2018, 20, 1–14.

- Deal, B.; Gu, Y. Resilience thinking meets social-ecological systems (SESs): A general framework for resilient planning support systems (PSSs). J. Dig. Landsc. Archit. 2018, 200–207.

- Harris, B. Beyond geographic information systems. J. Am. Plan. Assoc. 1989, 55, 85–90.

- Kuller, M.; Bach, P.M.; Ramirez-Lovering, D.; Deletic, A. Framing water sensitive urban design as part of the urban form: A critical review of tools for best planning practice. Environ. Model. Softw. 2017, 96, 265–282.

- Geertman, S.; Stillwell, J. Planning support systems: An inventory of current practice. Comput. Environ. Urban Syst. 2004, 28, 291–310.

- Zulkafli, Z.; Perez, K.; Vitolo, C.; Buytaert, W.; Karpouzoglou, T.; Dewulf, A.; De Bievre, B.; Clark, J.; Hannah, D.M.; Shaheed, S. User-driven design of decision support systems for polycentric environmental resources management. Environ. Model. Softw. 2017, 88, 58–73.

- Pelzer, P.; Geertman, S.; van der Heijden, R.; Rouwette, E. The added value of planning support systems: A practitioner’s perspective. Comput. Environ. Urban Syst. 2014, 48, 16–27.

- Brömmelstroet, M.T.; Schrijnen, P.M. From planning support systems to mediated planning support: A structured dialogue to overcome the implementation gap. Environ. Plan. B Plan. Des. 2010, 37, 3–20.

- Amara, N.; Ouimet, M.; Landry, R. New evidence on instrumental, conceptual, and symbolic utilization of university research in government agencies. Sci. Commun. 2004, 26, 75–106.

- Innes, J.E. Planning theory’s emerging paradigm: Communicative action and interactive practice. J. Plan. Educ. Res. 1995, 14, 183–189.

- Pelzer, P.; Geertman, S.; van der Heijden, R. Knowledge in communicative planning practice: A different perspective for planning support systems. Environ. Plan. B Plan. Des. 2015, 42, 638–651.

- Chew, C.; Zabel, A.; Lloyd, G.J.; Gunawardana, I.; Monninkhoff, B. A Serious Gaming Approach for Serious Stakeholder Participation; The City University of New York: New York, NY, USA, 2014.

- Mayer, I.; Bekebrede, G.; Warmelink, H.; Zhou, Q. A brief methodology for researching and evaluating serious games and game-based learning. In Psychology, Pedagogy, and Assessment in Serious Games; IGI Global: Hershey, PA, USA, 2014; pp. 357–393.

- Ratan, R.A.; Ritterfeld, U. Classifying serious games. In Serious Games; Routledge: London, UK, 2009; pp. 32–46.

- Mayer, I.S. The gaming of policy and the politics of gaming: A review. Simul. Gaming 2009, 40, 825–862.

- Barreteau, O.; Le Page, C.; Perez, P. Contribution of Simulation and Gaming to Natural Resource Management Issues: An Introduction; Sage Publications: Thousand Oaks, CA, USA, 2007.

- Aubert, A.H.; Lienert, J. Gamified online survey to elicit citizens’ preferences and enhance learning for environmental decisions. Environ. Model. Softw. 2019, 111, 1–12.

- Merriam, S.B.; Caffarella, R.S.; Baumgartner, L.M. Learning in Adulthood: A Comprehensive Guide; John Wiley & Sons: Hoboken, NJ, USA, 2012.

- Polanyi, M. Tacit knowing: Its bearing on some problems of philosophy. Rev. Mod. Phys. 1962, 34, 601.

- Roux, D.J.; Rogers, K.H.; Biggs, H.C.; Ashton, P.J.; Sergeant, A. Bridging the science–management divide: Moving from unidirectional knowledge transfer to knowledge interfacing and sharing. Ecol. Soc. 2006, 11, 1–20.

- Gröhn, P.; Kasu, D.; Swiac, M.; Zafar, A. Organizing the organization: Recommendation of development for explicit and tacit knowledge sharing at a university library in North America. 2017. Available online: http://www.diva-portal.org/smash/record.jsf?pid=diva2%3A1085189&dswid=-7231 (accessed on 17 August 2017).

- Polanyi, M. The logic of tacit inference. Philosophy 1966, 41, 1–18.

- Sudhindra, S.; Ganesh, L.; Arshinder, K. Knowledge transfer: An information theory perspective. Knowl. Manag. Res. Pract. 2017, 15, 400–412.

- Nonaka, I.; Von Krogh, G. Perspective—tacit knowledge and knowledge conversion: Controversy and advancement in organizational knowledge creation theory. Organiz. Sci. 2009, 20, 635–652.

- Nonaka, I.; Takeuchi, H.; Umemoto, K. A theory of organizational knowledge creation. Int. J. Technol. Manag. 1996, 11, 833–845.

- Nonaka, I.; Byosiere, P.; Borucki, C.C.; Konno, N. Organizational knowledge creation theory: A first comprehensive test. Int. Bus. Rev. 1994, 3, 337–351.

- Alavi, M.; Leidner, D.E. Knowledge management and knowledge management systems: Conceptual foundations and research issues. MIS Q. 2001, 25, 107–136.

- Nonaka, I.; Takeuchi, H. The Knowledge-Creating Company: How Japanese Companies Create the Dynamics of Innovation; Oxford University Press: Oxford, UK, 1995.

- Jean, S.; Medema, W.; Adamowski, J.; Chew, C.; Delaney, P.; Wals, A. Serious games as a catalyst for boundary crossing, collaboration and knowledge co-creation in a watershed governance context. J. Environ. Manag. 2018, 223, 1010–1022.

- Medema, W.; Wals, A.; Adamowski, J. Multi-loop social learning for sustainable land and water governance: Towards a research agenda on the potential of virtual learning platforms. NJAS-Wageningen J. Life Sci. 2014, 69, 23–38.

- Medema, W.; Adamowski, J.; Orr, C.J.; Wals, A.; Milot, N. Towards sustainable water governance: Examining water governance issues in Québec through the lens of multi-loop social learning. Can. Water Resour. J. 2015, 40, 373–391.

- Keijser, X.; Ripken, M.; Mayer, I.; Warmelink, H.; Abspoel, L.; Fairgrieve, R.; Paris, C. Stakeholder engagement in maritime spatial planning: The efficacy of a serious game approach. Water 2018, 10, 724.

- Kaufman, S.; Smith, J. Framing and reframing in land use change conflicts. J. Architect. Plan. Res. 1999, 16, 164–180.

- Akkerman, S.; Bruining, T. Multilevel boundary crossing in a professional development school partnership. J. Learn. Sci. 2016, 25, 240–284.

- Van Bilsen, A.; Bekebrede, G.; Mayer, I. Understanding complex adaptive systems by playing games. Inf. Educ. 2010, 9, 1–8.

- Crookall, D. Serious games, debriefing, and simulation/gaming as a discipline. Simul. Gaming 2010, 41, 898–920.

- Guillén-Nieto, V.; Aleson-Carbonell, M. Serious games and learning effectiveness: The case of It’s a Deal! Comput. Educ. 2012, 58, 435–448.

- Mayer, I.; Zhou, Q.; Keijser, X.; Abspoel, L. Gaming the Future of the Ocean: The Marine Spatial Planning Challenge 2050. 2014. Available online: http://www.mspchallenge.info/uploads/3/1/4/5/31454677/msp_game_2014_sgda.pdf (accessed on 16 June 2018).

- Steenbeek, J. Integrating Ecopath with Ecosim into the MSP Software—Conceptual Design; Figshare: London, UK, 2015.