Abstract

This research aims to develop a farm management emulation tool that enables agrifood producers to effectively introduce advanced digital technologies, such as intelligent and autonomous unmanned ground vehicles (UGVs), in real-world field operations. To that end, we first provide a critical taxonomy of studies investigating agricultural robotic systems with regard to: (i) the analysis approach, i.e., simulation, emulation, or real-world implementation; (ii) farming operations; and (iii) the farming type. Our analysis demonstrates that simulation and emulation modelling have been extensively applied to study advanced agricultural machinery, that the majority of extant research efforts focus on harvesting/picking/mowing and fertilizing/spraying activities, and that most studies consider a generic agricultural layout. Thereafter, we develop AgROS, an emulation tool based on the Robot Operating System, which can be used to assess the efficiency of real-world robot systems in customized fields. AgROS allows farmers to select their actual field from a map layout, import the landscape of the field, add characteristics of the actual agricultural layout (e.g., trees, static objects), select an agricultural robot from a predefined list of commercial systems, import the selected UGV into the emulation environment, and test the robot’s performance in a quasi-real-world environment. AgROS supports farmers in the ex-ante analysis and performance evaluation of robotized precision farming operations, while laying the foundations for realizing “digital twins” in agriculture.

1. Introduction

The range of human- and environment-related risks associated with agricultural operations, along with the growing demand for food supplies, highlights the need for intensification and industrialization in agriculture [1]. Agricultural intensification and industrialization are prerequisites for harnessing the complexity of agro-ecological and socio-technological processes in order to increase agri-food production [2], while simultaneously reducing the associated ecological footprint [3] (p. 130). In this regard, technology-enabled agricultural solutions could support farmers in improving productivity while ensuring safety and sustainability in agricultural operations [4,5]. In particular, Industry 4.0 in agriculture is considered a financially, environmentally and technically viable option to tackle challenges in the agrifood value chain by introducing [6,7]:

- connected equipment and farmers’ interaction with legacy technology;

- automation in agricultural operations; and

- scientific assessment methods for real-time monitoring of input requirements and farming outputs.

Intelligent autonomous systems and robotics offer a promising means of responding to the constantly growing food demand and the increased resource stewardship requirements in the agricultural sector [8]. The digitalization of agricultural processes and services spans multiple engineering domains and disciplines [9], thus dictating the adoption of technology-based intelligent solutions that integrate both software and hardware components. In terms of software, decision support tools can assist farmers in making evidence-based decisions regarding agricultural operations to improve productivity and prevent environmental degradation [10]. In particular, information and communication technology can support the bottom-up transformation of agricultural activities, from monitoring and surveying of plants to tilling, seeding, watering, fertilizing, weeding and harvesting tasks [11]. Moreover, modern information technology-based systems should create cyber-physical interfaces that demonstrate and communicate the potential benefits of intelligent machinery, such as unmanned ground vehicles (UGVs), to farmers, so as to create conditions that facilitate the adoption of advanced technology applications in real-world operations [12]. Notwithstanding the auxiliary role of decision support systems, the extant literature clearly demonstrates that the majority of such systems are poorly adapted to farmers’ actual needs; hence, their uptake and use in agriculture is limited [13]. In addition, most information technology-based agricultural platforms are limited to software applications and thus fail to couple cyber applications efficiently to physical machinery. Consequently, existing agricultural toolkits prevent farmers from grasping the connections and linkages within and across the physical and cyber worlds [14].

However, to transition effectively towards Agriculture 4.0, i.e., agricultural activities enabled by intelligent applications akin to Industry 4.0 in manufacturing, it is essential to realize “digital twins” and to understand the implications of advanced agri-technologies at both the software and hardware levels. In particular, applications at the cyber level have to capture multi-physics and multi-scale operational aspects that mirror the corresponding physical reality [15].

At the software (cyberspace) level, capturing the plethora of parameters pertinent to agricultural operations, along with their associated sustainability impact, is a complex task requiring knowledge of local climate, crop varieties, and field geomorphological and hydrological specifications [16]. Existing tools for evaluating the benefits of adopting intelligent autonomous systems have limited capabilities. Simulation, a method extensively used to assess a system’s impact on an operational setting, relies on simplified approximations and assumptions to give a realistic idea of an intelligent system’s operational performance and outputs [17]. In this regard, emulation techniques are devised to imitate in real time, through cyber-physical interfaces, the behaviour of equivalent real-world systems under alternative pragmatic conditions [18]. However, applying emulation modelling is challenging for a regular farmer, particularly across the entire life-cycle of a UGV system, due to time, cost and capability constraints. In addition, integrating field characteristics and machinery functionality depends on the complexity of the technology-enabled farming system.

At the hardware (physical space) level, in today’s digitalization era, intelligent technological applications are pertinent to field operations for tackling uncertainty and variability in rural activities (e.g., soil dynamics and crops’ water requirements) and, ultimately, for enabling self-optimized agricultural supply networks [19]. At the field level, automation in agriculture provides an appealing alternative to the repetitive heavy-duty tasks currently performed by human labour. In this sense, automation enters agriculture by means of highly specialized machinery. Despite the progress in intelligent automated technologies for agricultural environments, further research is needed to engage farmers in the intelligent systems’ control loop [8]. Digital technologies in the agrifood sector have so far been studied only in isolation, without a cross-functional analysis. Related research efforts focus merely on detailed technical aspects of the developed robot systems and do not enable farmers to perform a predictive analysis and ex-ante assessment of the efficiency of agricultural operations. Therefore, farmers cannot become aware of the sustainability benefits that could be derived from adopting and implementing automated systems (e.g., UGVs) and other digital technologies (e.g., sensors) in farming operations.

Software- or hardware-based digital solutions that promote sustainable intensification in agriculture should be tested and assessed prior to their actual deployment [2]. Beyond the intelligent characteristics and technically advanced capabilities of alternative “high-tech” solutions in agriculture, research should also focus on attributes that enable or hinder the uptake of digital interventions, such as farmers’ personality and objectives, education level, skills and learning style, and the scale of the business activities [13].

The aim of this research is to develop an emulation tool that integrates cyber-physical interfaces to enable farmers to make evidence-based decisions about the implementation of alternative intelligent farming applications, by addressing the following research questions:

- Research Question #1: What are the challenges encountered by current decision support tools in the predictive performance analysis and ex-ante evaluation of digital applications in farming operations?

- Research Question #2: Which characteristics should be included in a farm management emulation-based tool to enable farmers to effectively introduce advanced robotic technologies in real-world field operations?

In order to address the abovementioned research questions, we applied a multi-method approach with the aim of providing coherent research output [20] with both academic and practical implications in agriculture. In particular, to address Research Question #1, we performed an inclusive literature review and a critical taxonomy of studies providing evidence about technology-enabled agricultural systems analysed from either a cyber or a physical application perspective. Thereafter, to tackle Research Question #2, we developed AgROS, an integrated farm management tool for emulating the life-cycle of agricultural activities in a field’s physical space. The AgROS acronym stems from “Agriculture” (“Ag”) and “Robot Operating System” (“ROS”). AgROS is based on the Robot Operating System and can be used to emulate operations of real-world intelligent machinery in customized settings, enabling an ex-ante assessment of system performance ahead of real-world transformations.

The remainder of the paper is structured as follows: Section 2 sets out the materials and methods that underpin this research. Section 3 provides a critical taxonomy of robotic technology-enabled agricultural systems based on the analysis approach (i.e., simulation, emulation, real-world implementation), farming operations, and farming type, and discusses the benefits and shortcomings associated with each analysis approach. Thereafter, the developed emulation tool is presented in Section 4. AgROS can be used to emulate farming operations of real-world robot systems, along with their operational performance, in customized fields. Finally, conclusions, scientific and practical implications, limitations and recommendations for future research are discussed in Section 5.

2. Materials and Methods

The scope of this research is to review scientific research on software simulation tools and real-world robotic applications in agricultural environments, and to develop a tool that combines the merits of simulation- or emulation-only approaches with those of field-only tests. To this end, two methodological steps were undertaken: (i) a critical taxonomy of the extant relevant literature; and (ii) the programming and development of the AgROS emulation tool.

2.1. Critical Taxonomy

In the first methodological stage, the object of scrutiny is secondary research. Considering the aim of this research, the critical taxonomy of the reviewed studies focuses on: (i) the analysis approach used to assess technology-enabled agricultural systems, i.e., real-world application, simulation, emulation; (ii) the farming operations for which the technology-enabled agricultural system is evaluated, i.e., monitoring/analysing, harvesting/picking/mowing, fertilizing/spraying, planting/seeding, watering, weeding, tilling; and (iii) the farming type in which the technology-enabled agricultural system is applied, i.e., generic agricultural layout, orchard, arable field and greenhouse.
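The three classification axes can be captured as a small data structure. The sketch below is a hypothetical illustration: the class, field and method names are ours and are not part of the published taxonomy. Sets are used because a single article may combine several analysis approaches and address several farming operations.

```python
from dataclasses import dataclass
from enum import Enum


class Approach(Enum):
    REAL_WORLD = "real-world implementation"
    SIMULATION = "simulation"
    EMULATION = "emulation"


class Operation(Enum):
    MONITORING = "monitoring/analysing"
    HARVESTING = "harvesting/picking/mowing"
    FERTILIZING = "fertilizing/spraying"
    PLANTING = "planting/seeding"
    WATERING = "watering"
    WEEDING = "weeding"
    TILLING = "tilling"


class FarmingType(Enum):
    GENERIC = "generic agricultural layout"
    ORCHARD = "orchard"
    ARABLE = "arable field"
    GREENHOUSE = "greenhouse"


@dataclass(frozen=True)
class ReviewedStudy:
    """One taxonomy entry (hypothetical schema for illustration only)."""
    ref: int                            # reference number in the bibliography
    approaches: frozenset[Approach]     # one or more analysis approaches
    operations: frozenset[Operation]    # one or more farming operations
    farming_type: FarmingType

    def is_cyber_physical(self) -> bool:
        """True if the study pairs a cyber-space method with field tests."""
        cyber = {Approach.SIMULATION, Approach.EMULATION}
        return Approach.REAL_WORLD in self.approaches and bool(cyber & self.approaches)
```

For instance, a study that emulates a vehicle and then tests it on the field would be flagged by `is_cyber_physical()`, matching the "integrated analysis approach" category discussed in the text.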

In order to ensure scientific integrity, the critical taxonomy comprises scientific articles identified in the Scopus® (Elsevier) and Web of Science® (Clarivate Analytics, formerly Thomson Reuters) databases. These databases were chosen because they index a broad range of peer-reviewed scientific journals, particularly in the Natural Sciences and Engineering [21].

The following Boolean query was formulated: (“simulation” OR “emulation”) AND (“decision support systems”) AND (“autonomous vehicles”) AND (“agriculture”). The search was restricted to the “Article Title, Abstract, Keywords” fields, and the timespan was set from “All years” to “Present”. Only studies written in English were included in the taxonomy, and further pertinent studies were also examined. By 10 February 2019, we had identified 41 studies to be retained for review. An extensive literature review of autonomous vehicles in agriculture is beyond the scope of this research.
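To make the screening criterion explicit, the Boolean query can be read as a predicate over a record's title, abstract and keywords: every AND-group must match, and within a group any OR-term suffices. The sketch below is only an approximation under stated assumptions: it uses case-insensitive substring matching, whereas Scopus and Web of Science apply their own tokenization and phrase-matching rules, and the record format is hypothetical.

```python
# Each inner tuple is an OR-group; all groups must match (logical AND).
QUERY = (
    ("simulation", "emulation"),
    ("decision support systems",),
    ("autonomous vehicles",),
    ("agriculture",),
)


def matches(record: dict[str, str], query=QUERY) -> bool:
    """Return True if the record satisfies every OR-group of the query.

    `record` is a hypothetical dict with optional "title", "abstract"
    and "keywords" entries, mirroring the searched fields.
    """
    text = " ".join(
        record.get(field, "") for field in ("title", "abstract", "keywords")
    ).lower()
    return all(any(term in text for term in group) for group in query)
```

A record mentioning simulation and autonomous vehicles in agriculture but never "decision support systems" would thus be excluded, which illustrates how restrictive the conjunctive query is.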

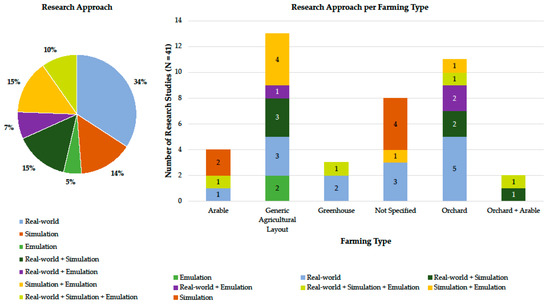

The earliest relevant work was published in 2009, and about 56% of the relevant publications appeared from 2017 onwards, highlighting a growing interest in autonomous intelligent vehicles in agriculture. The distribution of the reviewed publications per analysis approach, along with the allocation of studies by analysis approach per farming type, is depicted in Figure 1. First, 34% of the reviewed publications report the real-world implementation of autonomous vehicles in field operations, which shows that the current body of literature focuses on physical testing of machinery. Furthermore, around 10%–15% of the studied works use either simulation or emulation modelling, and approximately 14% jointly apply simulation and emulation modelling techniques. Notably, only four studies apply an integrated analysis approach exploring the cyber-physical interface. Second, regarding the farming type, most of the studies included in our critical taxonomy consider either a generic agricultural layout (13 articles) or an orchard (11 articles). The generic agricultural layout offers a wide range of analysis opportunities, and simulation and emulation modelling are widely applied to it, possibly because generic fields are uncontrolled environments whose dynamically changing conditions pose interesting technical and operational challenges for any tested autonomous vehicle. On the other hand, the more controlled environment of orchards allows real-world vehicles to be tested directly, owing to the lower likelihood of unexpected incidents that could damage equipment and incur significant associated costs.

Figure 1.

Publications per applied research analysis approach (pie chart on the left); and distribution of the reviewed publications based on the elaborated research analysis approach per farming type (bar chart on the right).
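As a quick consistency check on the figures reported above, the article counts can be converted into shares of the 41 retained studies, and the reported percentages back into approximate study counts. The arithmetic uses only numbers stated in the text; the back-calculated counts are rounded estimates, not values reported by the review.

```python
TOTAL_STUDIES = 41  # studies retained by 10 February 2019


def share(count: int, total: int = TOTAL_STUDIES) -> float:
    """Percentage of the retained studies represented by `count`."""
    return 100.0 * count / total


def approx_count(percent: float, total: int = TOTAL_STUDIES) -> int:
    """Nearest whole number of studies corresponding to `percent`."""
    return round(percent / 100.0 * total)


print(f"generic layout: {share(13):.1f}%")   # 13 articles
print(f"orchard:        {share(11):.1f}%")   # 11 articles
print(f"integrated:     {share(4):.1f}%")    # 4 articles
print("real-world:     ", approx_count(34))  # about 34% of studies
print("2017 onwards:   ", approx_count(56))  # about 56% of studies
```

This yields roughly 31.7%, 26.8% and 9.8% for the three counts, and about 14 real-world studies and 23 studies published from 2017 onwards.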

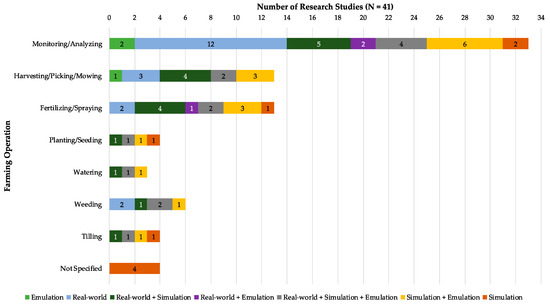

Likewise, the distribution of the reviewed articles per farming operation is illustrated in Figure 2, which depicts the research approach applied to analyse each farming operation; a reviewed article may have applied a particular research analysis approach to multiple farming operations. Figure 2 clearly demonstrates that monitoring/analysing operations have been extensively studied (N = 33) from simulation, emulation, and real-world application perspectives, as intelligent vehicles can be easily modified and equipped with multiple sensor devices to monitor different farming operations. Additionally, simulation and emulation modelling and real-world application of intelligent vehicles in harvesting/picking/mowing and fertilizing/spraying activities have attracted research interest (N = 13 in both cases), as these farming operations are quite common in most plantations. However, the extant research on planting/seeding (N = 4), watering (N = 3), weeding (N = 4) and tilling (N = 4) is rather limited, possibly due to the advanced technical requirements these operations impose on UGVs.

Figure 2.

Distribution of the reviewed publications based on the elaborated research analysis approach per farming operation.
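Because one article can be counted under several farming operations, the per-operation counts need not add up to the number of reviewed studies. The sketch below tallies multi-label records with `collections.Counter`; the three sample records are hypothetical, while the `reported` dictionary reproduces the N values given in the text.

```python
from collections import Counter

# Hypothetical records: each article lists every operation it addresses.
records = [
    {"ref": "A", "operations": {"monitoring/analysing", "fertilizing/spraying"}},
    {"ref": "B", "operations": {"monitoring/analysing"}},
    {"ref": "C", "operations": {"harvesting/picking/mowing", "weeding"}},
]

# One count per (article, operation) pair.
tally = Counter(op for rec in records for op in rec["operations"])

# N values reported in the text for Figure 2.
reported = {
    "monitoring/analysing": 33,
    "harvesting/picking/mowing": 13,
    "fertilizing/spraying": 13,
    "planting/seeding": 4,
    "watering": 3,
    "weeding": 4,
    "tilling": 4,
}
total_labels = sum(reported.values())
print(total_labels)  # operation labels across the 41 reviewed studies
```

The reported counts sum to 74 labels, well above the 41 reviewed studies, which is consistent with many articles addressing more than one farming operation.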

2.2. AgROS Development

This research proposes an emulation tool, named AgROS, for the ex-ante assessment of precision farming activities in near-real-world virtual environments. The tool is developed on the ROS platform. ROS, an open source framework created by Willow Garage and the Stanford Artificial Intelligence Laboratory, is considered a de facto standard and an appropriate tool for analysing “digital twins” of autonomous robotic systems in the manufacturing industry. The advantages of ROS include multiple compatible libraries combined with third-party hardware support, which contribute to a thorough exploration of a robot system’s functional potential and provide opportunities for applying and improving vehicle routing and optimization algorithms [22].

AgROS is a wrapper that integrates: (i) the ROS platform; (ii) the Gazebo 3D scenery environment; (iii) the Open Street Maps tool; and (iv) several 3D models for object representation. Furthermore, by introducing a business logic layer, AgROS enables the autonomous movement of an intelligent vehicle in a customizable cyber environment. The AgROS emulation tool allows a range of functionalities and capabilities to be modelled, including: the static agricultural environment (field layout/landscape); the dynamic agricultural environment (e.g., cultivation, other on-field activities); the intelligent vehicle’s type (both commercially available and bespoke); the vehicle’s equipment (i.e., sensors, actuators); autonomous environment exploration (i.e., simultaneous localization and mapping (SLAM), planning and scheduling, and navigation); and data processing activities (i.e., image processing, deep learning).

3. Robotic Technology-Enabled Agricultural Systems: Background

In order to assess the benefits of implementing robotic technologies in the agricultural sector, extant research uses software simulation modelling, emulation modelling and/or real-world hardware implementation. Notwithstanding the significant advancements in analysis approaches that could benefit farmers and provide academic and practical context, extant studies mainly focus on examining the technology solutions per se. In this regard, Table 1 provides a critical taxonomy of the reviewed studies across three axes, namely: (i) the analysis approach; (ii) the investigated farming operation; and (iii) the considered farming type. In the following sub-sections, we focus only on the analysis approach, as the level of analysis feasible at the cyber-physical interface is essential for demonstrating expected benefits and thus motivating farmers to use agricultural robotic systems in customized fields.

Table 1.

Critical taxonomy of research studies assessing agricultural robotic systems.

3.1. Cyber Space: Simulation and Emulation Modelling

Simulation and emulation modelling methods can provide valuable feedback for the study of real-world agricultural environments. The critical taxonomy revealed that the majority of scientific studies do not consider real-world system implementation, mainly due to the capital cost associated with UGVs, both for prototypes and for commercial outdoor vehicles. Furthermore, simulation and emulation modelling approaches avoid incidents that could damage robotic agricultural equipment, while allowing multiple experiments and scenarios to be assessed.

Emmi et al. [35] presented a simulation environment with 2D and 3D support for examining the behaviour of a fleet of agricultural robots. The authors implemented their simulation model on the Webots platform, while the graphical user interface was built in Matlab. Habibie et al. [38] used the ROS simulation tool to navigate a UGV in a 3D orchard field implemented in Gazebo for mapping fruits in an apple farm. The authors concluded that the experimental environment provides good accuracy for precision farming activities. Hameed et al. [41] used 2D and 3D simulation to implement path planning algorithms for side-to-side field coverage in agriculture. Their work revealed that the uncovered area could be minimized if an appropriate driving angle is chosen during simulation tests. Wang et al. [62] used the Microsoft Robotics Developer Studio and Matlab to optimize path planning activities in 3D agricultural fields.

Besides using dedicated robotic platforms, several studies developed path planning software tools, integrated into simulation and emulation modelling efforts, that could provide guidance to real-world vehicles [39,40,48]. Finally, several studies used 2D simulation methods for path planning of autonomous vehicles in field operations [24,25,29,50,56,63].
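For readers unfamiliar with side-to-side (boustrophedon) field coverage, the sketch below generates back-and-forth waypoints over a rectangular field for a given working (swath) width. It is a simplified illustration under stated assumptions (axis-aligned rectangle, no headland turns, no obstacles, fixed driving direction); it does not reproduce the planners of the cited studies, which, among other things, optimise the driving angle [41].

```python
import math


def boustrophedon(width: float, length: float, swath: float) -> list[tuple[float, float]]:
    """Back-and-forth waypoints covering a width x length rectangle.

    Passes run parallel to the y-axis, spaced `swath` apart and centred
    on each strip; consecutive passes alternate direction.
    """
    if swath <= 0:
        raise ValueError("swath must be positive")
    n_passes = math.ceil(width / swath)
    # Clamp the final pass so it stays inside the field (it may overlap).
    last_x = max(width - swath / 2, width / 2)
    waypoints: list[tuple[float, float]] = []
    for i in range(n_passes):
        x = min((i + 0.5) * swath, last_x)
        ys = (0.0, length) if i % 2 == 0 else (length, 0.0)
        waypoints.extend((x, y) for y in ys)
    return waypoints


# A 9 m x 20 m strip covered with a 3 m implement needs three passes.
print(boustrophedon(9.0, 20.0, 3.0))
```

When the field width is not a multiple of the swath, the last pass overlaps its neighbour; choosing a different driving angle, as explored by Hameed et al. [41], is one way to reduce such overlap or uncovered area.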

3.2. Physical Space: Real-World Implementation

Outdoor agricultural fields provide a challenging environment for autonomous vehicles due to the demanding and dynamically changing field conditions (e.g., soil moisture) and agricultural landscape (e.g., random obstacles). Greenhouses are characterized as structured or semi-structured environments that provide more stable operating conditions for agricultural robotics. Agricultural vehicles use a plethora of sensors (e.g., GPS—Global Positioning System; RTK-GNSS—Real-Time Kinematic-Global Navigation Satellite Systems; LiDAR—Light Detection And Ranging; camera and depth camera; ultrasonic sensors) and actuators (e.g., robotic arm, agricultural equipment) for safely navigating in the field, gathering data and information, and performing farming activities. According to their size, two main categories of vehicles for real-world experimentation are identified: (i) large scale vehicles (e.g., custom tractors, four-wheel-driving four-wheel-steering electric vehicles); and (ii) small- and medium-scale vehicles (e.g., differential steering four-wheel-driving small robots).

Bayar et al. [26] used a UGV navigating in fruit orchards based on data retrieved from a laser scanner and wheel and steering encoders. The optimal navigation minimized the total costs, demonstrating the potential of UGVs in agriculture. De Preter et al. [31] described the development of a strawberry harvesting robot tested in a greenhouse. The investigated harvesting robot prototype consisted of an electric vehicle and a robotic arm. Blok et al. [27] tested the Husky robot using the ROS platform and concluded that the Particle Filter algorithm was more suitable for ensuring navigation accuracy in most trial applications. De Sousa et al. [32] proposed fuzzy behaviours for navigating the Khepera mini-robot in an agricultural environment. Demim et al. [33] implemented a navigation algorithm using odometry and laser data from a Pioneer3-AT UGV. Hansen et al. [43] used real-world vehicles (i.e., the RobuROC4 platform from Robosoft and a UAS Maxi Joker 3 RC-helicopter) for weed mapping and targeted weed control. Radcliffe et al. [54] implemented machine vision algorithms for orchard navigation using a custom UGV equipped with multiple sensors. Field tests were conducted in a peach orchard, and the results demonstrated the potential to guide utility vehicles in orchards. Reina et al. [55] examined terrain assessment for precision agriculture using the Husky A200 UGV. The authors conducted experiments on a farm to validate the proposed terrain classifier and identify terrain classes. Wang et al. [61] tested the robustness of deep learning algorithms on mango orchard trees using real-world ground vehicles equipped with 2D and depth cameras. Durmus et al. [34] implemented a custom real-world vehicle and tested it in a greenhouse environment using the ROS platform. The mobile robot used a depth camera to map the greenhouse environment and navigated the greenhouse using the generated map. Christiansen et al. [30] created a point cloud model using a LiDAR sensor (Velodyne) mounted on an unmanned aerial vehicle (DJI Matrice 100) to map an agricultural field in the ROS platform. The aerial vehicle used an RTK-GNSS technique to navigate in the field. Pierzchała et al. [53] built a map of a forest environment using ROS and a custom real-world ground vehicle. The authors tested the use of a LiDAR sensor for creating a point cloud of the ground surface and accurately identified the positions of the trees in the forest.

Large-scale agricultural vehicles, like tractors, have been extensively used in field tests. For example, Hansen et al. [44] investigated the use of LiDAR in localization techniques for tractor navigation in a semi-structured orchard environment. Pérez-Ruiz et al. [52] implemented an intelligent sprayer boom on a tractor for pest control applications. These workshop and field experiments provide evidence that UGVs can be reliably used to perform a range of precision farming activities.

3.3. Cyber–Physical Interface: Joint Simulation, Emulation and Real-World Implementations

On the one hand, simulation and emulation modelling approaches provide an inexpensive option for exploring the proof-of-concept of an algorithm or a robotic vehicle. On the other hand, real-world applications and experimentation with testbeds are necessary for validating a technology-based solution and demonstrating pragmatic results. Typical emulation tools allow the software code to be transferred to real-world prototypes for validating an algorithm’s efficiency during field tests. Shamshiri et al. [57] proposed the use of open source emulation platforms for performing tedious agricultural tasks in order to foster the adoption of agricultural robots. The authors compared the V-REP, Gazebo and ARGoS emulation tools while referring to a plethora of other similar platforms (e.g., Webots, CARMEN RNT, CLARAty, Microsoft Robotics Developer Studio, Orca, Orocos, Player, AMOR, Mobotware). All of the compared emulation platforms support ROS and C++ programming, and the developed code could be used in real-world vehicles. Mancini et al. [51] used data retrieved from aerial and ground sensors to improve variable rate treatments. The authors used the ROS platform to estimate the volume and state of plants in order to identify field management zones. Jensen et al. [46] focused on tractor guidance systems and used ROS to develop the FroboMind software platform and evaluate the tractor’s performance in precision agriculture tasks. FroboMind was tested on a real-world tractor, and the methodology demonstrated significant reductions in the development time and resources required to perform field experiments. In addition, Harik and Korsaeth [45] used ROS and a mobile ground vehicle equipped with sensors in a greenhouse environment. The authors used emulation modelling and presented experimental results regarding the robot’s autonomous navigation. Han et al. [42] explored the use of a tractor in a paddy field for auto-guidance. Their emulation environment was programmed in C++, while the test location was obtained through Google Maps. The emulation environment was validated using a real-world tractor platform. Furthermore, in the GRAPE project, a fully equipped (i.e., GPS, IMU, LiDAR) mobile robot was used in vineyards [23]. Emulation experiments were conducted using the ROS platform prior to deploying a Husky robot in the field. Vasudevan et al. [60] used data gathered by real-world robots (both unmanned aerial and ground vehicles) to create a simulation environment and capture specific indices for precision farming activities. Gan and Lee [37] applied a Husky UGV in a real-world environment after evaluating its performance and obstacle avoidance algorithms in the Gazebo emulation environment.

Furthermore, a number of studies jointly explored the use of simulation methods in 2D environments along with real-world implementations in the field [28,36,47,49,58,59]. Typically, in such studies, the farming plans and the vehicles’ trajectories are first implemented in the 2D environment; the real-world vehicle then uses the algorithms to navigate in the actual field while simultaneously providing real-time feedback to the algorithm based on the data gathered through its sensors.
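The plan-then-execute pattern described above can be illustrated with a minimal closed-loop waypoint follower: the waypoints stand for the output of the 2D planning stage, and at every step the vehicle recomputes its heading from a position measurement rather than relying on the plan alone. Everything here is a hypothetical assumption for illustration (step size, tolerance, perfect position sensing, and a constant drift that mimics wheel slip); it is not taken from the cited studies.

```python
import math


def follow(waypoints, start, step=0.5, tol=0.3, drift=(0.05, 0.0), max_steps=1000):
    """Drive through `waypoints` using position feedback at every step.

    `drift` is a constant disturbance added to each motion (e.g. slip).
    Because the heading is recomputed from the measured position (here
    assumed perfect), the vehicle still converges on each waypoint.
    Returns the visited path and whether all waypoints were reached.
    """
    x, y = start
    path = [(x, y)]
    target = 0
    for _ in range(max_steps):
        if target == len(waypoints):
            break
        tx, ty = waypoints[target]
        dx, dy = tx - x, ty - y
        dist = math.hypot(dx, dy)
        if dist <= tol:  # waypoint reached: switch to the next one
            target += 1
            continue
        move = min(step, dist)
        x += move * dx / dist + drift[0]
        y += move * dy / dist + drift[1]
        path.append((x, y))
    return path, target == len(waypoints)


path, done = follow([(0.0, 5.0), (3.0, 5.0), (3.0, 0.0)], start=(0.0, 0.0))
print(done, path[-1])
```

An open-loop vehicle that simply replayed the planned headings would accumulate the drift over the whole route; feeding the measured position back into the controller is what keeps the executed path close to the plan.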

4. AgROS: An Emulation Tool for Agriculture

The literature review in this research revealed that current analysis tools for robotic systems in agriculture have not yet reached a mature development phase. A plethora of simulation and emulation tools exist that use: (i) virtual 2D environments for testing path planning algorithms; (ii) 2D images of the actual field (captured via Google Earth, Open Street Maps, and satellites); and (iii) 3D virtual agricultural sceneries. Existing approaches can be useful for testing purposes but fail to capture the dynamic nature of intelligent systems’ operations in real-world fields. On the other hand, the use of real-world vehicles can bridge this gap by recreating real-world 3D sceneries captured through the vehicle’s sensors (LiDARs and depth cameras); however, this approach is capital- and time-intensive, since it requires actual field tests. In this regard, we propose the combined use of simulation and emulation modelling along with actual field tests.

The proposed AgROS emulation tool goes beyond a simple combination of the above-mentioned approaches by using the actual landscape of a field from a digital elevation model (DEM) file retrieved from Open Street Maps. This enables a near-real-world emulation, as the DEM file includes the altitude of the field, which allows a vehicle’s navigation in an actual setting to be simulated more faithfully. Furthermore, farmers can insert static objects and the utilized UGV into the 3D scenery from a library of models via an automated procedure. The emulation starts once the appropriate algorithms have been selected. Finally, the programming code can be loaded onto the real-world UGV equivalent for field tests.
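To illustrate how the altitude information in a DEM can inform a vehicle's navigation, the sketch below bilinearly interpolates terrain height at an arbitrary position on a regular elevation grid and derives the grade of a straight driving segment. The grid layout (row-major heights at a fixed cell size, origin at cell (0, 0), no georeferencing) is an assumption for illustration; how AgROS actually imports the DEM into Gazebo is not shown here.

```python
import math


def elevation(dem: list[list[float]], cell: float, x: float, y: float) -> float:
    """Bilinearly interpolate terrain height at (x, y), in metres.

    Assumes dem[r][c] is the height at x = c * cell, y = r * cell and
    that the grid has at least 2 rows and 2 columns.
    """
    rows, cols = len(dem), len(dem[0])
    fx, fy = x / cell, y / cell
    if not (0 <= fx <= cols - 1 and 0 <= fy <= rows - 1):
        raise ValueError("position outside the DEM")
    c0, r0 = min(int(fx), cols - 2), min(int(fy), rows - 2)
    tx, ty = fx - c0, fy - r0
    row0 = dem[r0][c0] * (1 - tx) + dem[r0][c0 + 1] * tx
    row1 = dem[r0 + 1][c0] * (1 - tx) + dem[r0 + 1][c0 + 1] * tx
    return row0 * (1 - ty) + row1 * ty


def grade_percent(dem, cell, p, q) -> float:
    """Percent grade when driving straight from point p to point q."""
    run = math.dist(p, q)
    if run == 0:
        raise ValueError("p and q coincide")
    return 100.0 * (elevation(dem, cell, *q) - elevation(dem, cell, *p)) / run


dem = [[0.0, 1.0], [2.0, 3.0]]  # toy 2 x 2 grid with 10 m cells
print(elevation(dem, 10.0, 5.0, 5.0), grade_percent(dem, 10.0, (0.0, 0.0), (10.0, 0.0)))
```

A routing layer could, for example, penalise or reject segments whose grade exceeds what a given UGV can climb, something a flat 2D map cannot express.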

At this perpetual beta stage of AgROS development, capturing soil science parameters (e.g., wet/dry soil conditions, sand/clay composition) and developing the respective modules are beyond the current research focus and the tool’s portfolio of functionalities. In addition, AgROS is intended to support the operational analysis of UGVs in customized fields; therefore, providing information on other issues, such as treatment options for pests, the market potential of specific crops or the financial viability of advanced digital machinery in agriculture, is beyond the scope of this research.

4.1. System Architecture

The ROS architecture is based on both the publisher-subscriber and the server-client models. The publisher-subscriber model is implemented using nodes and topics: a node is an independent process that gathers, computes and transmits data through named channels called topics. The server-client model is also based on nodes: one node offers a service and another node calls it, and the two communicate through a request-response exchange analogous to a web service. ROS uses the publisher-subscriber model for asynchronous communication and the server-client model for synchronous communication between nodes. In order to create an emulated environment in a user-friendly manner, the AgROS tool is a wrapper that uses:

- the ROS platform;

- the Gazebo 3D scenery environment;

- the Open Street Maps tool;

- several 3D models to represent objects on the agricultural scenery; and

- the business logic layer that enables a vehicle’s autonomous movement in the virtual world.
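The difference between the two ROS communication models can be illustrated with a toy, pure-Python sketch. This is not the ROS API: in ROS 1 the corresponding facilities are publishers/subscribers and service servers/clients provided by the roscpp and rospy client libraries. The `Bus` class and the topic and service names below are hypothetical. Publishing only enqueues a message that subscribers process later, whereas a service call blocks until the server returns a response.

```python
from collections import defaultdict, deque
from typing import Any, Callable


class Bus:
    """Toy stand-in for ROS messaging: topics (async) and services (sync)."""

    def __init__(self) -> None:
        self.subscribers: dict[str, list[Callable[[Any], None]]] = defaultdict(list)
        self.services: dict[str, Callable[[Any], Any]] = {}
        self.queue: deque = deque()

    # --- publisher-subscriber (asynchronous) ---
    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        self.subscribers[topic].append(callback)

    def publish(self, topic: str, msg: Any) -> None:
        # Returns immediately; delivery happens on the next spin.
        self.queue.append((topic, msg))

    def spin_once(self) -> None:
        """Deliver all queued messages to their subscribers."""
        while self.queue:
            topic, msg = self.queue.popleft()
            for callback in self.subscribers[topic]:
                callback(msg)

    # --- server-client (synchronous) ---
    def advertise_service(self, name: str, handler: Callable[[Any], Any]) -> None:
        self.services[name] = handler

    def call(self, name: str, request: Any) -> Any:
        # The caller waits until the handler returns its response.
        return self.services[name](request)


bus = Bus()
scans = []
bus.subscribe("/scan", scans.append)           # e.g. a mapping node
bus.publish("/scan", [1.2, 0.8])               # e.g. a LiDAR driver node
bus.spin_once()
bus.advertise_service("/plan_path", lambda goal: [(0, 0), goal])
print(scans, bus.call("/plan_path", (5, 5)))
```

Streaming sensor data suits the asynchronous topic model, since producers should not wait on consumers, whereas one-off queries such as requesting a path suit the synchronous service model.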

4.2. Workflow

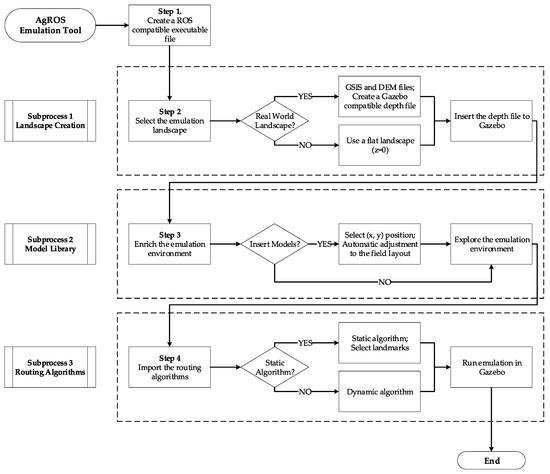

AgROS is a decision support tool that enables the ex-ante evaluation of robotic technologies in a 3D emulation environment, in which the digital equivalent of a real-world agricultural environment is captured through a geographic information system. In particular, AgROS can emulate the actual field landscape enriched with real-world objects such as: (i) trees; (ii) bushes; (iii) grass; (iv) static obstacles (e.g., rocks, sheds); and (v) dynamic objects, including other robot models that simultaneously perform agricultural activities. Furthermore, AgROS offers extensive customization by allowing the user to capture a virtually unlimited range of field operational conditions, such as geomorphological terrain characteristics, physical conditions and lighting levels, to further assess the performance of sensor devices. Overall, AgROS enables the emulation of a realistic agricultural environment in a defined geographical location for modelling and assessing day-to-day farming operations with high accuracy. Agricultural robots can then be simulated to navigate autonomously within the emulated environment based on programmable deterministic or stochastic algorithms to perform precision farming activities. The stepwise workflow enabled by AgROS, divided into three subprocesses (landscape creation, model library, and routing algorithms) that emulate the environment and operations in a user-friendly manner, is depicted in Figure 3.

Figure 3.

AgROS workflow.

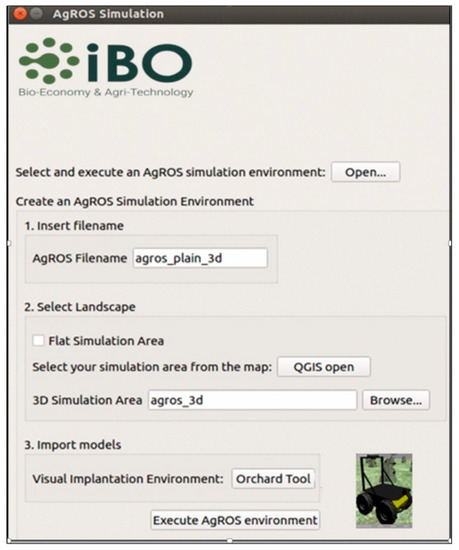

4.3. Graphical User Interface

The graphical user interface (GUI) of AgROS is developed in Qt, an open-source widget toolkit for building graphical user interfaces on various software platforms. Qt’s native language, C++, allows the GUI both to invoke shell scripts and to interface directly with C++-based ROS code. AgROS executes ROS commands directly from the GUI to build up, step by step, all the components of a customized agricultural environment. To develop a robust emulation model of agricultural activities, the user follows a stepwise procedure:

- Step 1 – create a ROS compatible executable file (or edit an existing one);

- Step 2 – create the field landscape as a 2D or 3D simulation environment using QGIS, an open-source geographic information system (GIS) tool;

- Step 3 – enrich the field by virtually planting trees and placing UGVs;

- Step 4 – explore the field in Gazebo’s simulation environment using routing and object recognition algorithms; and

- Step 5 – execute the final simulation based on the ROS backend.
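Step 5 ultimately amounts to issuing a ROS launch command from the GUI. AgROS does this from its Qt/C++ interface; the Python sketch below, assuming a ROS 1 installation with the `gazebo_ros` package, only illustrates how such a command might be composed. The helper `build_launch_command` and the file name `orchard.world` are hypothetical; `empty_world.launch` with the `world_name`, `gui` and `paused` arguments is the usual way to open a custom world file in Gazebo under ROS 1.

```python
import shlex
from pathlib import Path
from typing import List


def build_launch_command(world_file: str, gui: bool = True, paused: bool = False) -> List[str]:
    """Compose (but do not run) a ROS 1 command that opens a world file in Gazebo.

    Hypothetical helper mirroring the usual
    `roslaunch gazebo_ros empty_world.launch world_name:=<file>` invocation.
    """
    path = Path(world_file)
    if path.suffix != ".world":
        raise ValueError(f"expected a .world file, got {world_file!r}")

    def flag(value: bool) -> str:
        # Launch-file booleans are conventionally written as true/false.
        return "true" if value else "false"

    return [
        "roslaunch", "gazebo_ros", "empty_world.launch",
        f"world_name:={path.resolve()}",  # absolute path so the launch file finds it
        f"gui:={flag(gui)}",
        f"paused:={flag(paused)}",
    ]


cmd = build_launch_command("orchard.world", paused=True)
print(shlex.join(cmd))
# Launching for real requires a ROS 1 + Gazebo installation:
# import subprocess; subprocess.Popen(cmd)
```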

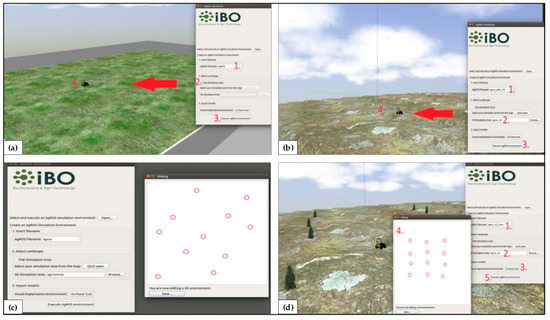

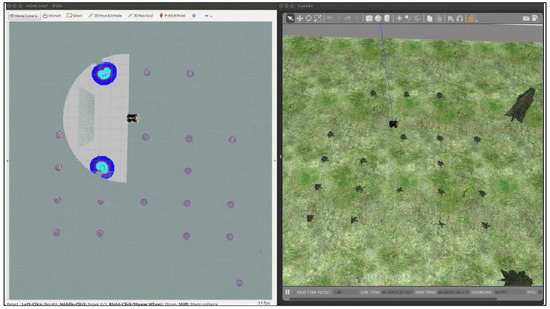

The graphical user interface of AgROS, illustrated in Figure 4, allows: (i) creation of a new model by typing a preferred AgROS filename; (ii) selection between a 2D or a 3D simulation map; (iii) import of 3D models of agricultural entities (e.g., trees), obstacles and UGVs for enriching the emulated environment; and (iv) execution of the emulation model.

Figure 4.

AgROS graphical user interface.
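Importing 3D models into the environment ultimately means writing them into a Gazebo world file. The Python sketch below, which is not the AgROS implementation, emits an SDF 1.6 world in which each placed entity is an `<include>` element referencing a model URI with a `<pose>` (x y z roll pitch yaw). The `PlacedModel` type and `build_world_sdf` helper are hypothetical, and URIs such as `model://oak_tree` are assumed to be available on the local Gazebo model path. For a 3D landscape, the `ground_plane` include would be replaced by a heightmap model.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from typing import Iterable


@dataclass
class PlacedModel:
    name: str      # unique instance name, e.g. "tree_0"
    uri: str       # e.g. "model://oak_tree" (assumed to be on GAZEBO_MODEL_PATH)
    x: float
    y: float
    z: float = 0.0
    yaw: float = 0.0


def build_world_sdf(models: Iterable[PlacedModel], world_name: str = "agros_field") -> str:
    """Return an SDF 1.6 world string that includes each placed model."""
    sdf = ET.Element("sdf", version="1.6")
    world = ET.SubElement(sdf, "world", name=world_name)
    # Lighting and a flat ground from the standard Gazebo model database.
    for uri in ("model://sun", "model://ground_plane"):
        include = ET.SubElement(world, "include")
        ET.SubElement(include, "uri").text = uri
    for m in models:
        include = ET.SubElement(world, "include")
        ET.SubElement(include, "name").text = m.name
        ET.SubElement(include, "uri").text = m.uri
        # SDF pose order: x y z roll pitch yaw
        ET.SubElement(include, "pose").text = f"{m.x} {m.y} {m.z} 0 0 {m.yaw}"
        ET.SubElement(include, "static").text = "true"  # trees do not move
    return ET.tostring(sdf, encoding="unicode")


trees = [PlacedModel(f"tree_{i}", "model://oak_tree", 2.5 * i, 0.0) for i in range(3)]
world_xml = build_world_sdf(trees)  # write this string to e.g. orchard.world
```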

AgROS enables the emulation of agricultural activities either on a simple flat landscape or on a 3D (real-world) landscape with geomorphological characteristics. Optionally, the user can insert models (e.g., trees and dynamic objects such as vehicles) and finally import the resulting environment into Gazebo to run emulations. The AgROS graphical user interface enables the user to: (i) insert a flat landscape (Figure 5a); (ii) insert a 3D landscape (Figure 5b); (iii) import agricultural models (e.g., trees) (Figure 5c); and (iv) emulate the integrated field environment (Figure 5d). In particular, the GUI provides an “Orchard Tool” option that allows the user to build a customized field by importing emulation models of different orchard trees. With it, users can: (i) select the “Orchard Tool”; (ii) define the position of each tree; (iii) place trees manually with computer input devices (e.g., a mouse) or use the built-in automatic plantation mode, which takes the orchard row width, number of rows, tree spacing and initial point on the map; and (iv) save the final world file. As a last step, the user selects “Execute AgROS environment” to run the emulation.

Figure 5.

Functionalities of the AgROS graphical user interface: (a) insert a flat landscape; (b) insert a 3D landscape; (c) import agricultural models (e.g., trees); and (d) emulate the integrated field environment.
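The automatic plantation mode of the Orchard Tool can be sketched as a grid generator. The description above lists the row width, number of rows, tree spacing and initial point; this Python sketch additionally assumes a number of trees per row and a row heading angle, both hypothetical parameters, and returns planar (x, y) positions whose z-values would still need the terrain correction described in Section 4.3.1.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def plant_orchard(origin: Point, n_rows: int, trees_per_row: int,
                  row_width: float, tree_spacing: float,
                  heading_rad: float = 0.0) -> List[Point]:
    """Return (x, y) tree positions for a regular orchard grid.

    Rows start at `origin` and run along `heading_rad`; each subsequent row is
    offset by `row_width` to the left of that heading. `trees_per_row` and
    `heading_rad` are assumed parameters, not taken from the AgROS GUI.
    """
    if n_rows < 1 or trees_per_row < 1:
        raise ValueError("need at least one row and one tree per row")
    ux, uy = math.cos(heading_rad), math.sin(heading_rad)  # along-row unit vector
    vx, vy = -uy, ux                                        # across-row (left) unit vector
    x0, y0 = origin
    positions: List[Point] = []
    for r in range(n_rows):
        across = r * row_width
        for t in range(trees_per_row):
            along = t * tree_spacing
            positions.append((x0 + along * ux + across * vx,
                              y0 + along * uy + across * vy))
    return positions


# Two rows of three trees, 4 m between rows and 2.5 m between trees.
grid = plant_orchard((0.0, 0.0), n_rows=2, trees_per_row=3, row_width=4.0, tree_spacing=2.5)
```

Generating positions row by row keeps the output ordered along each row, which is convenient if the same list later seeds a row-following route.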

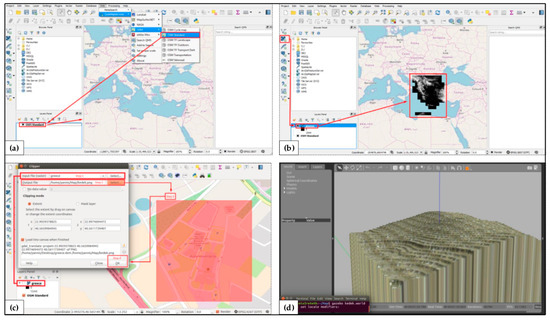

4.3.1. Field Layout Environment

The realistic 3D landscape is selected directly in QGIS. QGIS can create, edit and visualize geospatial information on several operating systems, and it can read and edit OpenStreetMap data, which are compiled from GPS traces, aerial photography and local knowledge. Another useful format is the digital elevation model (DEM), a raster file that represents the actual terrain and from which hillshade, slope and aspect information can be derived in QGIS. Figure 6 illustrates how a 3D landscape is created in AgROS using the tool’s graphical user interface and QGIS, up to the point of importing the grayscale .png depth file into Gazebo.

Figure 6.

Field layout environment in AgROS: (a) standard OpenStreetMap view; (b) digital elevation model for the region; (c) exact geographical region; and (d) 3D landscape to be used in Gazebo.
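The DEM-to-heightmap conversion shown in Figure 6 essentially rescales elevations into grey levels. The Python sketch below assumes the DEM has already been clipped to the field and resampled to a regular grid of elevations in metres; it maps the lowest elevation to 0 (black) and the highest to 255 (white) and keeps both extremes so that heights can be recovered later. The size check reflects Gazebo classic’s requirement that heightmap images be square with a side of 2^n + 1 pixels; the function names are hypothetical.

```python
from typing import List, Tuple

Grid = List[List[float]]


def dem_to_grayscale(dem: Grid) -> Tuple[List[List[int]], float, float]:
    """Linearly rescale DEM elevations (metres) to 8-bit grey levels 0..255.

    Returns the grey grid together with the minimum and maximum elevation,
    which are needed later to convert grey values back into heights.
    """
    values = [v for row in dem for v in row]
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:
        # Perfectly flat field: every pixel maps to the same grey level.
        return [[0] * len(row) for row in dem], lo, hi
    grey = [[round(255 * (v - lo) / span) for v in row] for row in dem]
    return grey, lo, hi


def is_gazebo_heightmap_size(rows: int, cols: int) -> bool:
    """True if the image is square with a side of 2**n + 1 pixels."""
    side = rows - 1
    return rows == cols and side > 0 and (side & (side - 1)) == 0


grey, z_min, z_max = dem_to_grayscale([[100.0, 110.0], [105.0, 100.0]])
```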

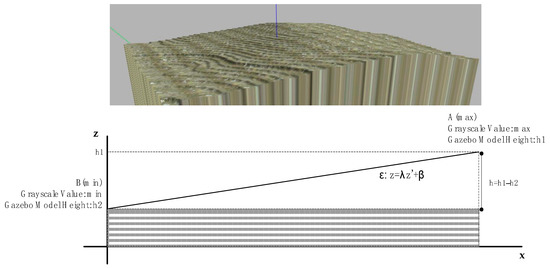

Once the 3D landscape of a targeted field has been created, the user can import emulation models of agricultural objects (e.g., trees) at selected positions. The technical challenge is to place each tree model at the correct elevation (z-coordinate) on the 3D landscape. AgROS automatically corrects the planting position along the z-axis using the geometry of the 3D landscape (Figure 7). The correction starts by reading the grayscale .png depth file, in which the grayscale value of each pixel encodes the z-value at that location. After identifying the highest (h1) and lowest (h2) z-values, the total height of the landscape (the model height, h = h1 - h2) can be calculated, along with the field’s typical slope (λ). The field slope is estimated empirically from the field’s dimensions in the Gazebo model. This process allows the z-value of any point to be calculated from the grayscale value of the corresponding pixel in the depth file, which yields the final planting position (x, y, z) of each tree model.

Figure 7.

The technique used by AgROS to automatically adjust the planting position at the z-axis.
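The z-correction described above can be written as z = h2 + (g / 255)·(h1 - h2), where g is the grey value of the pixel under the tree and h1 - h2 is the model height h. The Python sketch below is an illustration rather than the AgROS code: it applies this mapping with bilinear interpolation between neighbouring pixels and does not use the slope λ. It assumes an 8-bit depth image centred on the world origin and spanning `size_x` by `size_y` metres, with image row 0 on the +y edge; these conventions are assumptions to check against the actual Gazebo import.

```python
from typing import List


def grey_to_z(g: float, h_min: float, h_max: float) -> float:
    """z = h2 + (g / 255) * (h1 - h2), with h2 = h_min and h1 = h_max."""
    return h_min + (g / 255.0) * (h_max - h_min)


def planting_z(grey: List[List[int]], x: float, y: float,
               size_x: float, size_y: float, h_min: float, h_max: float) -> float:
    """Terrain elevation under world point (x, y), for placing a tree model.

    Assumed conventions (verify against the actual Gazebo import): the
    heightmap is centred on the world origin, spans size_x by size_y metres,
    and image row 0 lies along the +y edge.
    """
    rows, cols = len(grey), len(grey[0])
    # Fractional pixel coordinates, clamped to the image bounds.
    c = min(max((x + size_x / 2) / size_x * (cols - 1), 0.0), cols - 1)
    r = min(max((size_y / 2 - y) / size_y * (rows - 1), 0.0), rows - 1)
    c0, r0 = int(c), int(r)
    c1, r1 = min(c0 + 1, cols - 1), min(r0 + 1, rows - 1)
    fc, fr = c - c0, r - r0
    # Bilinear interpolation of the grey value between the four nearest pixels.
    top = grey[r0][c0] * (1 - fc) + grey[r0][c1] * fc
    bottom = grey[r1][c0] * (1 - fc) + grey[r1][c1] * fc
    g = top * (1 - fr) + bottom * fr
    return grey_to_z(g, h_min, h_max)


# Tree planted at (x, y) = (12.0, -3.5) on a 100 m x 100 m field.
depth = [[0, 64, 128], [32, 96, 160], [64, 128, 255]]
tree_pose = (12.0, -3.5, planting_z(depth, 12.0, -3.5, 100.0, 100.0, 210.0, 226.0))
```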

4.3.2. Static and Dynamic Algorithms

A main objective of AgROS is to implement a package of path planning and navigation algorithms for the autonomous navigation of agricultural vehicles in fields. The path planning package was developed in C++ as an independent module, so that alternative static and dynamic path planning algorithms can be implemented and tested using AgROS.
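Keeping path planning in an independent module suggests a common interface that static and dynamic planners both implement. The AgROS package is written in C++; this Python sketch only illustrates the idea with an abstract base class and a registry. `PathPlanner`, `GoalPlanner`, `register`, `plan_route` and the `field` dictionary layout are hypothetical placeholders.

```python
from abc import ABC, abstractmethod
from typing import Dict, List, Tuple

Point = Tuple[float, float]


class PathPlanner(ABC):
    """Interface shared by static and dynamic planners (hypothetical)."""

    @abstractmethod
    def plan(self, start: Point, field: Dict[str, object]) -> List[Point]:
        """Return an ordered list of waypoints beginning at `start`."""


class GoalPlanner(PathPlanner):
    """Placeholder planner: drive straight from `start` to field["goal"]."""

    def plan(self, start: Point, field: Dict[str, object]) -> List[Point]:
        goal = field["goal"]
        return [start, goal]  # type: ignore[list-item]


# Registry so the emulator can swap algorithms by name without code changes.
PLANNERS: Dict[str, PathPlanner] = {"goal": GoalPlanner()}


def register(name: str, planner: PathPlanner) -> None:
    PLANNERS[name] = planner


def plan_route(name: str, start: Point, field: Dict[str, object]) -> List[Point]:
    if name not in PLANNERS:
        raise KeyError(f"unknown planner {name!r}; available: {sorted(PLANNERS)}")
    return PLANNERS[name].plan(start, field)
```

A static coverage planner and a dynamic, map-driven planner would each subclass `PathPlanner`, so the emulation loop can call `plan_route` without knowing which algorithm is active.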

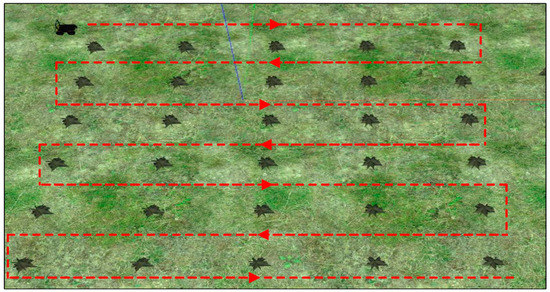

As a first step, a static routing algorithm was implemented that takes user input on the number of tree rows, the start and end positions of the first row, and the distance between rows. This static solution can be used in both orchards and arable fields. The algorithm outputs a route that takes the autonomous vehicle along all the rows of the orchard, as demonstrated in Figure 8.

Figure 8.

Deterministic area coverage implemented in AgROS.
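The inputs of the static routing algorithm (number of rows, start and end of the first row, distance between rows) are enough to generate a serpentine, or boustrophedon, coverage route. The Python sketch below is one possible construction, not necessarily the authors’ algorithm: it assumes subsequent rows lie to the left of the first row’s start-to-end direction and alternates the travel direction so that the vehicle turns at the headland instead of driving back empty.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def boustrophedon_route(first_start: Point, first_end: Point,
                        n_rows: int, row_distance: float) -> List[Point]:
    """Serpentine waypoint list covering n_rows parallel rows.

    Row k is the first row shifted by k * row_distance to the left of its
    start-to-end direction (an assumed convention); odd rows are driven in
    reverse so consecutive rows are joined by a headland turn.
    """
    (xs, ys), (xe, ye) = first_start, first_end
    length = math.hypot(xe - xs, ye - ys)
    if length == 0 or n_rows < 1:
        raise ValueError("first row must have non-zero length and n_rows >= 1")
    # Left-hand unit normal of the first row.
    nx, ny = -(ye - ys) / length, (xe - xs) / length
    route: List[Point] = []
    for k in range(n_rows):
        off_x, off_y = k * row_distance * nx, k * row_distance * ny
        a = (xs + off_x, ys + off_y)
        b = (xe + off_x, ye + off_y)
        route.extend([a, b] if k % 2 == 0 else [b, a])
    return route


# Three 10 m rows, 2 m apart, starting along the x-axis.
route = boustrophedon_route((0.0, 0.0), (10.0, 0.0), n_rows=3, row_distance=2.0)
```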

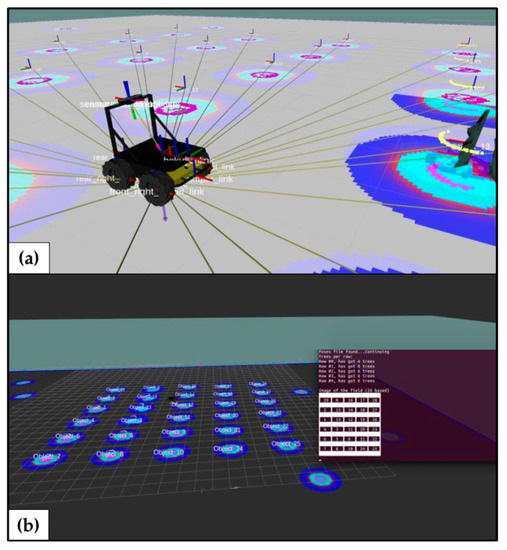

AgROS also incorporates a dynamic routing algorithm that takes the 3D landscape from the AgROS emulation tool as input, navigates the UGV across the orchard and perceives the surrounding environment in real time with a LiDAR. A distinctive feature of AgROS is that the vehicle’s entry position is random and the emulated UGV has no prior information about the field’s layout. A depth camera is used to identify trees and map the field. Tree identification and mapping run in parallel, so that the location of each tree in the orchard is identified while the field is being mapped. The geospatial coordinates of the orchard trees and their localization on the map are recorded for use by third-party services (Figure 9).

Figure 9.

AgROS’s geospatial mapping process: (a) real-time localization of trees; and (b) recording of every tree on the field.
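Recording tree positions while mapping requires converting each detection from the robot frame to the map frame and merging repeated sightings of the same tree. The Python sketch below assumes that the depth camera or LiDAR pipeline already returns a range and bearing for each detected trunk and that the robot pose in the map frame is known (e.g., from SLAM). It uses simple nearest-neighbour association with a fixed merge radius, an illustrative choice rather than the method used in AgROS; the `TreeMap` class is hypothetical, and converting map coordinates to latitude and longitude would additionally require the map’s georeference.

```python
import math
from typing import List, Tuple

Pose = Tuple[float, float, float]  # robot x, y and heading (rad) in the map frame


class TreeMap:
    """Accumulates tree positions in the map frame, merging repeated sightings."""

    def __init__(self, merge_radius: float = 0.5) -> None:
        self.merge_radius = merge_radius
        self.trees: List[List[float]] = []  # each entry: [x, y, n_observations]

    def add_detection(self, pose: Pose, rng: float, bearing: float) -> int:
        """Register a tree seen at (range, bearing) relative to the robot.

        Returns the index of the tree that was created or updated.
        """
        x, y, th = pose
        # Robot frame -> map frame (2D rigid transform).
        tx = x + rng * math.cos(th + bearing)
        ty = y + rng * math.sin(th + bearing)
        for i, (mx, my, n) in enumerate(self.trees):
            if math.hypot(tx - mx, ty - my) <= self.merge_radius:
                # Incremental mean of all sightings associated with this tree.
                self.trees[i] = [mx + (tx - mx) / (n + 1), my + (ty - my) / (n + 1), n + 1]
                return i
        self.trees.append([tx, ty, 1])
        return len(self.trees) - 1
```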

Finally, a dynamic area coverage plan is calculated from the generated map and the recorded tree coordinates. The routing algorithm covers the entire free area of the orchard by traversing the lanes between tree rows (Figure 10).

Figure 10.

Dynamic area coverage by the UGV in AgROS.
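Given the recorded tree coordinates, a dynamic coverage route can be derived by grouping trees into rows and driving along the lanes between them. The Python sketch below is a simplified illustration, not the AgROS routing algorithm: it assumes rows run roughly parallel to the map’s x-axis (rotating the coordinates first would handle other orientations) and that trees in the same row differ in y by less than a hypothetical `row_gap` threshold.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def group_rows(trees: List[Point], row_gap: float) -> List[List[Point]]:
    """Cluster trees into rows by their y-coordinate (rows assumed parallel to x)."""
    rows: List[List[Point]] = []
    for tree in sorted(trees, key=lambda p: p[1]):
        # Sorted by y, so the last tree of the current row has its largest y.
        if rows and tree[1] - rows[-1][-1][1] < row_gap:
            rows[-1].append(tree)
        else:
            rows.append([tree])
    return rows


def inter_row_route(trees: List[Point], row_gap: float) -> List[Point]:
    """Serpentine route along the lanes midway between adjacent tree rows."""
    rows = group_rows(trees, row_gap)
    route: List[Point] = []
    for k in range(len(rows) - 1):
        lower, upper = rows[k], rows[k + 1]
        mean_lower = sum(p[1] for p in lower) / len(lower)
        mean_upper = sum(p[1] for p in upper) / len(upper)
        lane_y = (mean_lower + mean_upper) / 2
        x_min = min(p[0] for p in lower + upper)
        x_max = max(p[0] for p in lower + upper)
        ends = [(x_min, lane_y), (x_max, lane_y)]
        # Alternate direction so consecutive lanes are joined at the headland.
        route.extend(ends if k % 2 == 0 else ends[::-1])
    return route
```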

4.4. Real-World Implementation

A model of an experimental electric-powered field robot with four-wheel drive and four-wheel steering, together with the developed source code, was tested in the AgROS emulation environment on a specified orchard. The emulation model allows the developed modules to be transferred directly to the robot and tested in real-world agricultural fields. Figure 11 presents the robot that was used for testing the developed modules in the field.

Figure 11.

Robot used for testing the AgROS emulation model in an actual orchard.

5. Conclusions

The future of agriculture relies heavily on technology, a trend reinforced by labour shortages, especially in physically demanding agricultural operations. Advanced and intelligent technologies have to become part of a farmer’s toolbox and support everyday farming operations, potentially on a 24/7 basis. Agricultural environments are usually complex and unstructured, and they are characterized by challenging landscapes under varying weather conditions. Introducing autonomous vehicles directly into physical fields could be challenging if such systems have not first been adequately tested at a cyber level for heavy-duty farming activities. AgROS aims to address these challenges by providing a platform for emulating the functional capabilities and performance of real-world intelligent machinery in physical fields. Such ex-ante assessment of system performance could foster the large-scale deployment of robotized operations.

To support farmers in the transition towards the digital era in agriculture, this research posed two research questions and applied alternative methodologies to address them. In particular, to tackle Research Question #1, we discussed the farmer characteristics that hinder the adoption of intelligent systems in farming operations. We also highlighted the narrow focus of existing software tools on the technical and mechanical aspects of agricultural machinery. Thereafter, we provided a critical taxonomy of extant studies along three axes, namely: (i) analysis approach; (ii) farming operation; and (iii) farming type. The taxonomy illustrates the limited scope of existing research efforts: studies apply software tools to generic field layouts and tend to neglect farmers’ customized needs. Moreover, in response to Research Question #2, we devised and described in detail the subprocesses that underpin AgROS (landscape creation, model library and routing algorithms), along with the functionalities of a recommended graphical user interface. These processes are necessary to emulate an agricultural environment and to enable farmers to effectively introduce advanced robotic technologies in real-world field operations.

5.1. Scientific Implications

AgROS enables scene analysis through place and object recognition techniques and algorithms. More broadly, the capability of AgROS to generate topological maps of customized fields and to organize the emulated agricultural environment in an abstract manner, so as to support a UGV’s autonomous navigation and sensor-driven task-planning capabilities, contributes methodologically to the field of robotics [64]. In the digitalization discourse, AgROS allows sensor-enabled, context-aware robot navigation, thus paving the way for “digital twins” in agriculture. The backbone of AgROS integrates into a user-friendly emulation tool: (i) a real-world elevation model, obtained via an automated process; (ii) an agri-environment (e.g., plants, orchard trees) placed on that elevation model; (iii) a range of UGVs in the emulated agricultural environment; and (iv) a collection of routing algorithms for the autonomous navigation of UGVs in the emulated operational setting.

5.2. Practical Implications

AgROS is a farm management emulation tool, based on ROS, that aims to enable farmers to effectively introduce advanced technologies in real-world field operations. Farmers could use AgROS to explore and assess the use of agricultural robotic systems on their customized fields. More specifically, the tool allows farmers to: (i) select their actual field from a map layout; (ii) import the landscape of the field to create an emulated equivalent; (iii) add characteristics of the actual agricultural layout (e.g., trees, static objects); (iv) select an agricultural robot (e.g., from a predefined list of commercially available systems) to import into the emulated environment; and (v) test the robot’s performance in a quasi-real-world environment.

All the implemented algorithms and modules of the emulated robot systems are compatible with commercial real-world hardware equivalents and can even be deployed to custom real-world robots. Any modification to the field layout or to the selected routing algorithms directly affects the output of the system and provides valuable feedback to the farmer before any cost-intensive field tests and applications are undertaken.

5.3. Limitations

This research has some limitations, which provide interesting grounds for further research and for expanding the practical contributions of AgROS. Firstly, the emulation modules of AgROS have been transferred to an experimental electric-powered field robot with four-wheel drive and four-wheel steering for initial testing, as described in Section 4.4. However, a detailed experimental protocol to compare the efficiency and performance of robot navigation and field mapping obtained with the emulation tool against an equivalent real-world implementation is still being designed. Secondly, AgROS is in a perpetual beta stage of development and incorporates only limited features from a soil science perspective. For example, besides the field slope, field soil conditions and composition could also significantly affect the traversability of a real-world ground vehicle.

5.4. Future Research

Regarding future research directions, we plan to conduct extensive experimentation with AgROS and to engage farmers, both to familiarize them with digital technologies and to receive feedback and constructive remarks on the effectiveness and efficiency of the tool. Farmers’ feedback could guide the technical development of AgROS and enrich the tool with further modules. We envision positioning the tool as a “digital twin” paradigm in agriculture, enabling predictive emulation of UGVs in field operations to assist in: (i) deriving operational scheduling and planning programs for agricultural activities performed by robots [65]; (ii) informing evidence-based implementation scenarios for agricultural operations with economic, environmental and social sustainability benefits for agrifood supply chain stakeholders [66]; and (iii) empowering farmers in the forthcoming landscape of digitalization in the agri-food sector [67].

Author Contributions

Conceptualization: N.T., D.B. (Dimitrios Bechtsis) and D.B. (Dionysis Bochtis); methodology: N.T., D.B. (Dimitrios Bechtsis) and D.B. (Dionysis Bochtis); resources: D.B. (Dimitrios Bechtsis) and N.T.; writing—original draft preparation: N.T. and D.B. (Dimitrios Bechtsis); writing—review and editing: D.B. (Dionysis Bochtis); project administration: N.T. and D.B. (Dionysis Bochtis); funding acquisition: N.T. and D.B. (Dionysis Bochtis).

Funding

This research has received funding from the General Secretariat for Research and Technology (GSRT) under reference no. 2386, Project Title: “Human-Robot Synergetic Logistics for High Value Crops” (project acronym: SYNERGIE).

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Tzounis, A.; Katsoulas, N.; Bartzanas, T.; Kittas, C. Internet of Things in agriculture, recent advances and future challenges. Biosyst. Eng. 2017, 164, 31–48.

2. Garnett, T.; Appleby, M.C.; Balmford, A.; Bateman, I.J.; Benton, T.G.; Bloomer, P.; Burlingame, B.; Dawkins, M.; Dolan, L.; Fraser, D.; et al. Sustainable intensification in agriculture: Premises and policies. Science 2013, 341, 33–34.

3. Sundmaeker, H.; Verdouw, C.; Wolfert, S.; Pérez Freire, L. Internet of food and farm 2020. In Digitising the Industry—Internet of Things Connecting Physical, Digital and Virtual Worlds, 1st ed.; Vermesan, O., Friess, P., Eds.; River Publishers: Gistrup, Denmark; Delft, The Netherlands, 2016; pp. 129–151.

4. Vasconez, J.P.; Kantor, G.A.; Auat Cheein, F.A. Human–robot interaction in agriculture: A survey and current challenges. Biosyst. Eng. 2019, 179, 35–48.

5. Kunz, C.; Weber, J.F.; Gerhards, R. Benefits of precision farming technologies for mechanical weed control in soybean and sugar beet—Comparison of precision hoeing with conventional mechanical weed control. Agronomy 2015, 5, 130–142.

6. Bonneau, V.; Copigneaux, B. Industry 4.0 in Agriculture: Focus on IoT Aspects; Directorate-General Internal Market, Industry, Entrepreneurship and SMEs; Directorate F: Innovation and Advanced Manufacturing; Unit F/3 KETs, Digital Manufacturing and Interoperability by the consortium; European Commission: Brussels, Belgium, 2017.

7. Lampridi, M.G.; Kateris, D.; Vasileiadis, G.; Marinoudi, V.; Pearson, S.; Sørensen, C.G.; Balafoutis, A.; Bochtis, D. A case-based economic assessment of robotics employment in precision arable farming. Agronomy 2019, 9, 175.

8. Bechar, A.; Vigneault, C. Agricultural robots for field operations: Concepts and components. Biosyst. Eng. 2016, 149, 94–111.

9. Miranda, J.; Ponce, P.; Molina, A.; Wright, P. Sensing, smart and sustainable technologies for Agri-Food 4.0. Comput. Ind. 2019, 108, 21–36.

10. Rose, D.C.; Morris, C.; Lobley, M.; Winter, M.; Sutherland, W.J.; Dicks, L.V. Exploring the spatialities of technological and user re-scripting: The case of decision support tools in UK agriculture. Geoforum 2018, 89, 11–18.

11. El Bilali, H.; Allahyari, M.S. Transition towards sustainability in agriculture and food systems: Role of information and communication technologies. Inf. Process. Agric. 2018, 5, 456–464.

12. Tsolakis, N.; Bechtsis, D.; Srai, J.S. Intelligent autonomous vehicles in digital supply chains: From conceptualisation, to simulation modelling, to real-world operations. Bus. Process Manag. J. 2019, 25, 414–437.

13. Rose, D.C.; Sutherland, W.J.; Parker, C.; Lobley, M.; Winter, M.; Morris, C.; Twining, S.; Ffoulkes, C.; Amano, T.; Dicks, L.V. Decision support tools for agriculture: Towards effective design and delivery. Agric. Syst. 2016, 149, 165–174.

14. Tao, F.; Zhang, M.; Nee, A.Y.C. Chapter 12—Digital Twin, Cyber–Physical System, and Internet of Things. In Digital Twin Driven Smart Manufacturing, 1st ed.; Tao, F., Zhang, M., Nee, A.Y.C., Eds.; Academic Press: New York, NY, USA, 2019; pp. 243–256.

15. Rosen, R.; von Wichert, G.; Lo, G.; Bettenhausen, K.D. About the importance of autonomy and digital twins for the future of manufacturing. IFAC-PapersOnLine 2015, 48, 567–572.

16. Leeuwis, C. Communication for Rural Innovation. Rethinking Agricultural Extension; Blackwell Science: Oxford, UK, 2004.

17. Ayani, M.; Ganebäck, M.; Ng, A.H.C. Digital Twin: Applying emulation for machine reconditioning. Procedia CIRP 2018, 72, 243–248.

18. McGregor, I. The relationship between simulation and emulation. In Proceedings of the 2002 Winter Simulation Conference, San Diego, CA, USA, 8–11 December 2002; Volume 2, pp. 1683–1688.

19. Zambon, I.; Cecchini, M.; Egidi, G.; Saporito, M.G.; Colantoni, A. Revolution 4.0: Industry vs. agriculture in a future development for SMEs. Processes 2019, 7, 36.

20. Espinasse, B.; Ferrarini, A.; Lapeyre, R. A multi-agent system for modelisation and simulation-emulation of supply-chains. IFAC Proc. Vol. 2000, 33, 413–418.

21. Mongeon, P.; Paul-Hus, A. The journal coverage of Web of Science and Scopus: A comparative analysis. Scientometrics 2016, 106, 213–228.

22. Bechtsis, D.; Moisiadis, V.; Tsolakis, N.; Vlachos, D.; Bochtis, D. Unmanned Ground Vehicles in precision farming services: An integrated emulation modelling approach. In Information and Communication Technologies in Modern Agricultural Development; HAICTA 2017, Communications in Computer and Information Science; Salampasis, M., Bournaris, T., Eds.; Springer: Cham, Switzerland, 2019; Volume 953, pp. 177–190.

23. Astolfi, P.; Gabrielli, A.; Bascetta, L.; Matteucci, M. Vineyard autonomous navigation in the Echord++ GRAPE experiment. IFAC-PapersOnLine 2018, 51, 704–709.

24. Ayadi, N.; Maalej, B.; Derbel, N. Optimal path planning of mobile robots: A comparison study. In Proceedings of the 15th International Multi-Conference on Systems, Signals & Devices (SSD), Hammamet, Tunisia, 19–22 March 2018; pp. 988–994.

25. Backman, J.; Kaivosoja, J.; Oksanen, T.; Visala, A. Simulation environment for testing guidance algorithms with realistic GPS noise model. IFAC-PapersOnLine 2010, 3 Pt 1, 139–144.

26. Bayar, G.; Bergerman, M.; Koku, A.B.; Konukseven, E.I. Localization and control of an autonomous orchard vehicle. Comput. Electron. Agric. 2015, 115, 118–128.

27. Blok, P.M.; van Boheemen, K.; van Evert, F.K.; Kim, G.-H.; IJsselmuiden, J. Robot navigation in orchards with localization based on Particle filter and Kalman filter. Comput. Electron. Agric. 2019, 157, 261–269.

28. Bochtis, D.; Griepentrog, H.W.; Vougioukas, S.; Busato, P.; Berruto, R.; Zhou, K. Route planning for orchard operations. Comput. Electron. Agric. 2015, 113, 51–60.

29. Cariou, C.; Gobor, Z. Trajectory planning for robotic maintenance of pasture based on approximation algorithms. Biosyst. Eng. 2018, 174, 219–230.

30. Christiansen, M.P.; Laursen, M.S.; Jørgensen, R.N.; Skovsen, S.; Gislum, R. Designing and testing a UAV mapping system for agricultural field surveying. Sensors 2017, 17, 2703.

31. De Preter, A.; Anthonis, J.; De Baerdemaeker, J. Development of a robot for harvesting strawberries. IFAC-PapersOnLine 2018, 51, 14–19.

32. De Sousa, R.V.; Tabile, R.A.; Inamasu, R.Y.; Porto, A.J.V. A row crop following behavior based on primitive fuzzy behaviors for navigation system of agricultural robots. IFAC Proc. Vol. 2013, 46, 91–96.

33. Demim, F.; Nemra, A.; Louadj, K. Robust SVSF-SLAM for unmanned vehicle in unknown environment. IFAC-PapersOnLine 2016, 49, 386–394.

34. Durmus, H.; Gunes, E.O.; Kirci, M. Data acquisition from greenhouses by using autonomous mobile robot. In Proceedings of the 5th International Conference on Agro-Geoinformatics, Tianjin, China, 18–20 July 2016; pp. 5–9.

35. Emmi, L.; Paredes-Madrid, L.; Ribeiro, A.; Pajares, G.; Gonzalez-De-Santos, P. Fleets of robots for precision agriculture: A simulation environment. Ind. Robot 2013, 40, 41–58.

36. Ericson, S.K.; Åstrand, B.S. Analysis of two visual odometry systems for use in an agricultural field environment. Biosyst. Eng. 2018, 166, 116–125.

37. Gan, H.; Lee, W.S. Development of a navigation system for a smart farm. IFAC-PapersOnLine 2018, 51, 1–4.

38. Habibie, N.; Nugraha, A.M.; Anshori, A.Z.; Masum, M.A.; Jatmiko, W. Fruit mapping mobile robot on simulated agricultural area in Gazebo simulator using simultaneous localization and mapping (SLAM). In Proceedings of the 2017 International Symposium on Micro-NanoMechatronics and Human Science (MHS), Nagoya, Japan, 3–6 December 2018; pp. 1–7.

39. Hameed, I.A. Coverage path planning software for autonomous robotic lawn mower using Dubins’ curve. In Proceedings of the 2017 IEEE International Conference on Real-time Computing and Robotics (RCAR), Okinawa, Japan, 14–18 July 2017; pp. 517–522.

40. Hameed, I.A.; Bochtis, D.D.; Sørensen, C.G.; Jensen, A.L.; Larsen, R. Optimized driving direction based on a three-dimensional field representation. Comput. Electron. Agric. 2013, 91, 145–153.

41. Hameed, I.A.; La Cour-Harbo, A.; Osen, O.L. Side-to-side 3D coverage path planning approach for agricultural robots to minimize skip/overlap areas between swaths. Robot. Auton. Syst. 2016, 76, 36–45.

42. Han, X.Z.; Kim, H.J.; Kim, J.Y.; Yi, S.Y.; Moon, H.C.; Kim, J.H.; Kim, Y.J. Path-tracking simulation and field tests for an auto-guidance tillage tractor for a paddy field. Comput. Electron. Agric. 2015, 112, 161–171.

43. Hansen, K.D.; Garcia-Ruiz, F.; Kazmi, W.; Bisgaard, M.; la Cour-Harbo, A.; Rasmussen, J.; Andersen, H.J. An autonomous robotic system for mapping weeds in fields. IFAC Proc. Vol. 2013, 46, 217–224.

44. Hansen, S.; Blanke, M.; Andersen, J.C. Autonomous tractor navigation in orchard—Diagnosis and supervision for enhanced availability. IFAC Proc. Vol. 2009, 42, 360–365.

45. Harik, E.H.; Korsaeth, A. Combining hector SLAM and artificial potential field for autonomous navigation inside a greenhouse. Robotics 2018, 7, 22.

46. Jensen, K.; Larsen, M.; Jørgensen, R.; Olsen, K.; Larsen, L.; Nielsen, S. Towards an open software platform for field robots in precision agriculture. Robotics 2014, 3, 207–234.

47. Khan, N.; Medlock, G.; Graves, S.; Anwar, S. GPS guided autonomous navigation of a small agricultural robot with automated fertilizing system. SAE Tech. Pap. Ser. 2018, 1, 1–7.

48. Kim, D.H.; Choi, C.H.; Kim, Y.J. Analysis of driving performance evaluation for an unmanned tractor. IFAC-PapersOnLine 2018, 51, 227–231.

49. Kurashiki, K.; Fukao, T.; Nagata, J.; Ishiyama, K.; Kamiya, T.; Murakami, N. Laser-based vehicle control in orchard. IFAC Proc. Vol. 2010, 43, 127–132.

50. Liu, C.; Zhao, X.; Du, Y.; Cao, C.; Zhu, Z.; Mao, E. Research on static path planning method of small obstacles for automatic navigation of agricultural machinery. IFAC-PapersOnLine 2018, 51, 673–677.

51. Mancini, A.; Frontoni, E.; Zingaretti, P. Improving variable rate treatments by integrating aerial and ground remotely sensed data. In Proceedings of the 2018 International Conference on Unmanned Aircraft Systems (ICUAS), Dallas, TX, USA, 12–15 June 2018; pp. 856–863.

52. Pérez-Ruiz, M.; Gonzalez-de-Santos, P.; Ribeiro, A.; Fernandez-Quintanilla, C.; Peruzzi, A.; Vieri, M.; Tomic, S.; Agüera, J. Highlights and preliminary results for autonomous crop protection. Comput. Electron. Agric. 2015, 110, 150–161.

53. Pierzchała, M.; Giguère, P.; Astrup, R. Mapping forests using an unmanned ground vehicle with 3D LiDAR and graph-SLAM. Comput. Electron. Agric. 2018, 145, 217–225.

54. Radcliffe, J.; Cox, J.; Bulanon, D.M. Machine vision for orchard navigation. Comput. Ind. 2018, 98, 165–171.

55. Reina, G.; Milella, A.; Galati, R. Terrain assessment for precision agriculture using vehicle dynamic modelling. Biosyst. Eng. 2017, 162, 124–139.

56. Santoro, E.; Soler, E.M.; Cherri, A.C. Route optimization in mechanized sugarcane harvesting. Comput. Electron. Agric. 2017, 141, 140–146.

57. Shamshiri, R.R.; Hameed, I.A.; Pitonakova, L.; Weltzien, C.; Balasundram, S.K.; Yule, I.J.; Grift, T.E.; Chowdhary, G. Simulation software and virtual environments for acceleration of agricultural robotics: Features highlights and performance comparison. Int. J. Agric. Biol. Eng. 2018, 11, 15–31.

58. Thanpattranon, P.; Ahamed, T.; Takigawa, T. Navigation of autonomous tractor for orchards and plantations using a laser range finder: Automatic control of trailer position with tractor. Biosyst. Eng. 2016, 147, 90–103.

59. Vaeljaots, E.; Lehiste, H.; Kiik, M.; Leemet, T. Soil sampling automation case-study using unmanned ground vehicle. Eng. Rural Dev. 2018, 17, 982–987.

60. Vasudevan, A.; Kumar, D.A.; Bhuvaneswari, N.S. Precision farming using unmanned aerial and ground vehicles. In Proceedings of the International Conference on Technological Innovations in ICT for Agriculture and Rural Development (TIAR), Chennai, India, 15–16 July 2016; pp. 146–150.

61. Wang, Z.; Underwood, J.; Walsh, K.B. Machine vision assessment of mango orchard flowering. Comput. Electron. Agric. 2018, 151, 501–511.

62. Wang, B.; Li, S.; Guo, J.; Chen, Q. Car-like mobile robot path planning in rough terrain using multi-objective particle swarm optimization algorithm. Neurocomputing 2018, 282, 42–51.

63. Yakoubi, M.A.; Laskri, M.T. The path planning of cleaner robot for coverage region using genetic algorithms. J. Innov. Digit. Ecosyst. 2016, 3, 37–43.

64. Kostavelis, I.; Gasteratos, A. Semantic mapping for mobile robotics tasks: A survey. Robot. Auton. Syst. 2015, 66, 86–103.

65. D’Urso, G.; Smith, S.L.; Mettu, R.; Oksanen, T.; Fitch, R. Multi-vehicle refill scheduling with queueing. Comput. Electron. Agric. 2018, 144, 44–57.

66. Bechtsis, D.; Tsolakis, N.; Vlachos, D.; Iakovou, E. Sustainable supply chain management in the digitalisation era: The impact of Automated Guided Vehicles. J. Clean. Prod. 2017, 142 Pt 4, 3970–3984.

67. Rotz, S.; Gravely, E.; Mosby, I.; Duncan, E.; Finnis, E.; Horgan, M.; LeBlanc, J.; Martin, R.; Neufeld, H.T.; Nixon, A.; et al. Automated pastures and the digital divide: How agricultural technologies are shaping labour and rural communities. J. Rural Stud. 2019, 112–122.

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).