LSTM Neural Network Based Forecasting Model for Wheat Production in Pakistan

Abstract

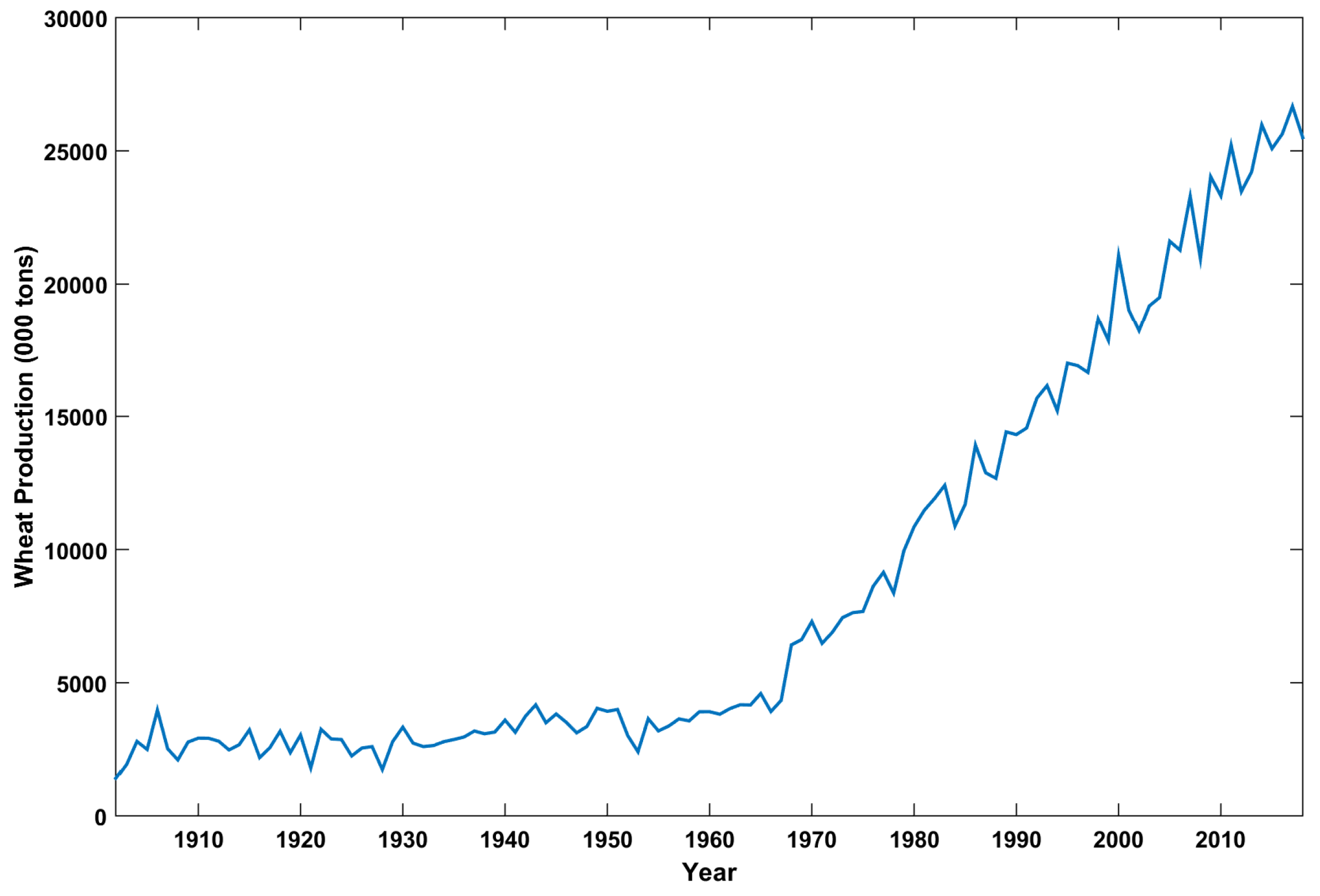

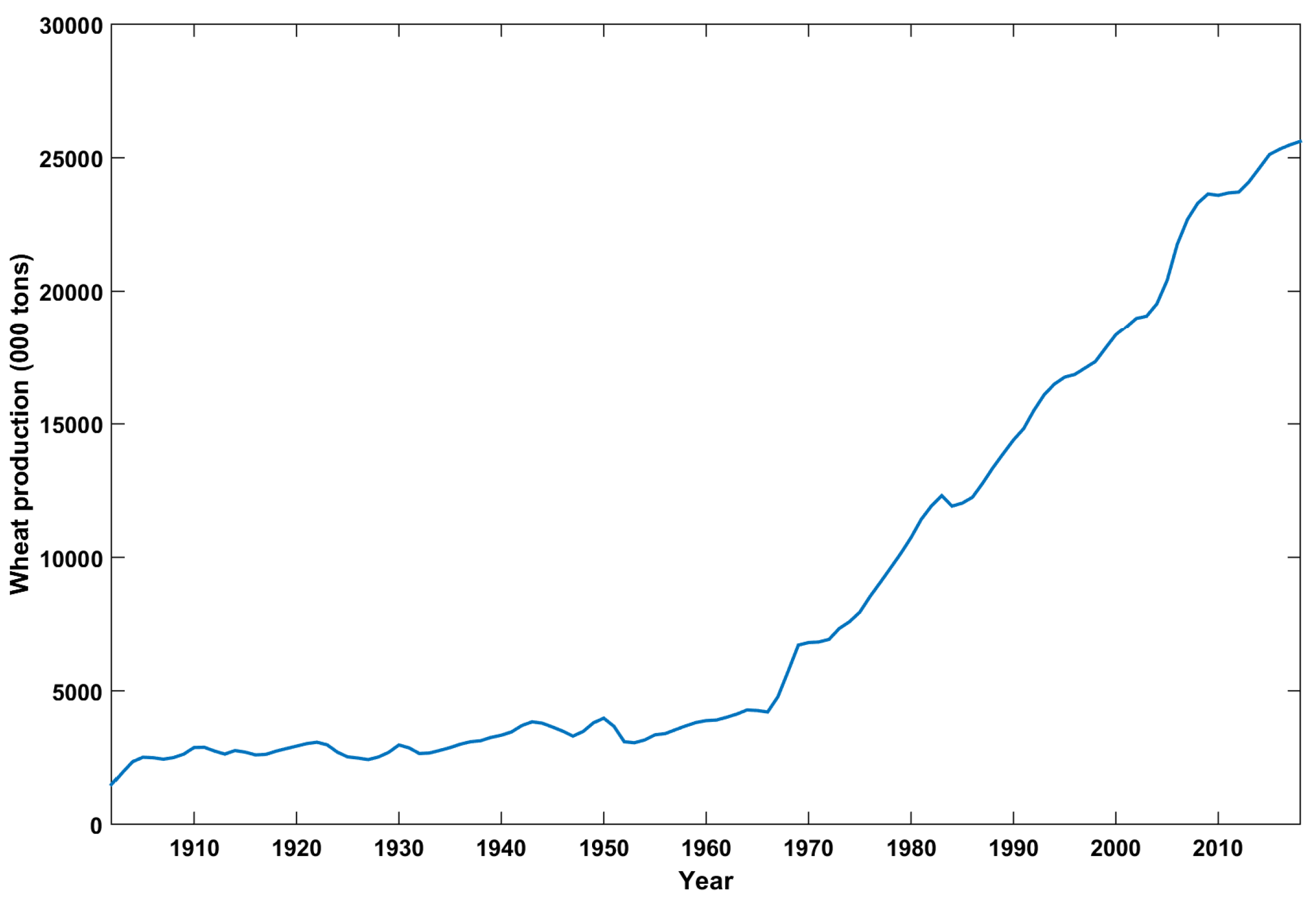

1. Introduction

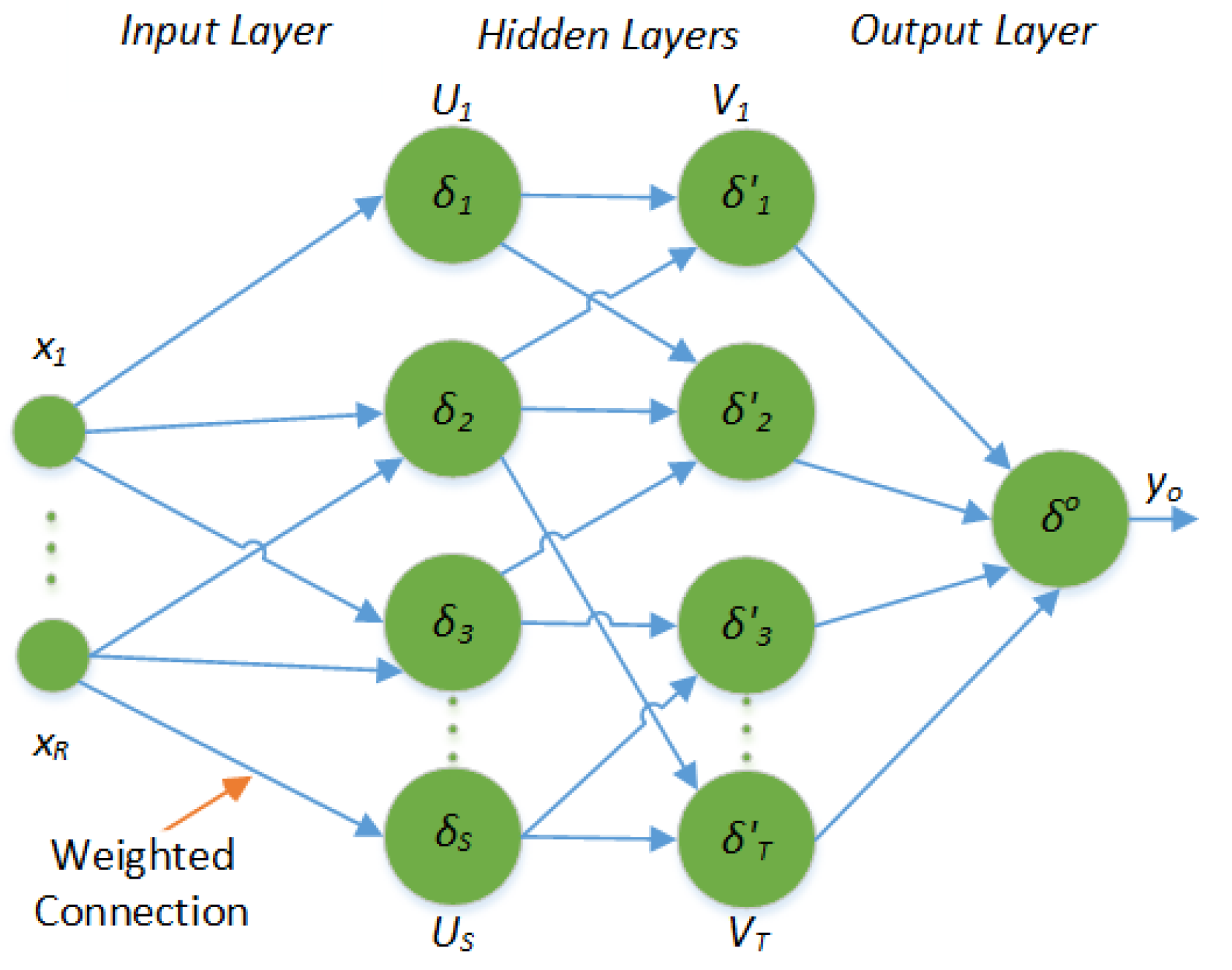

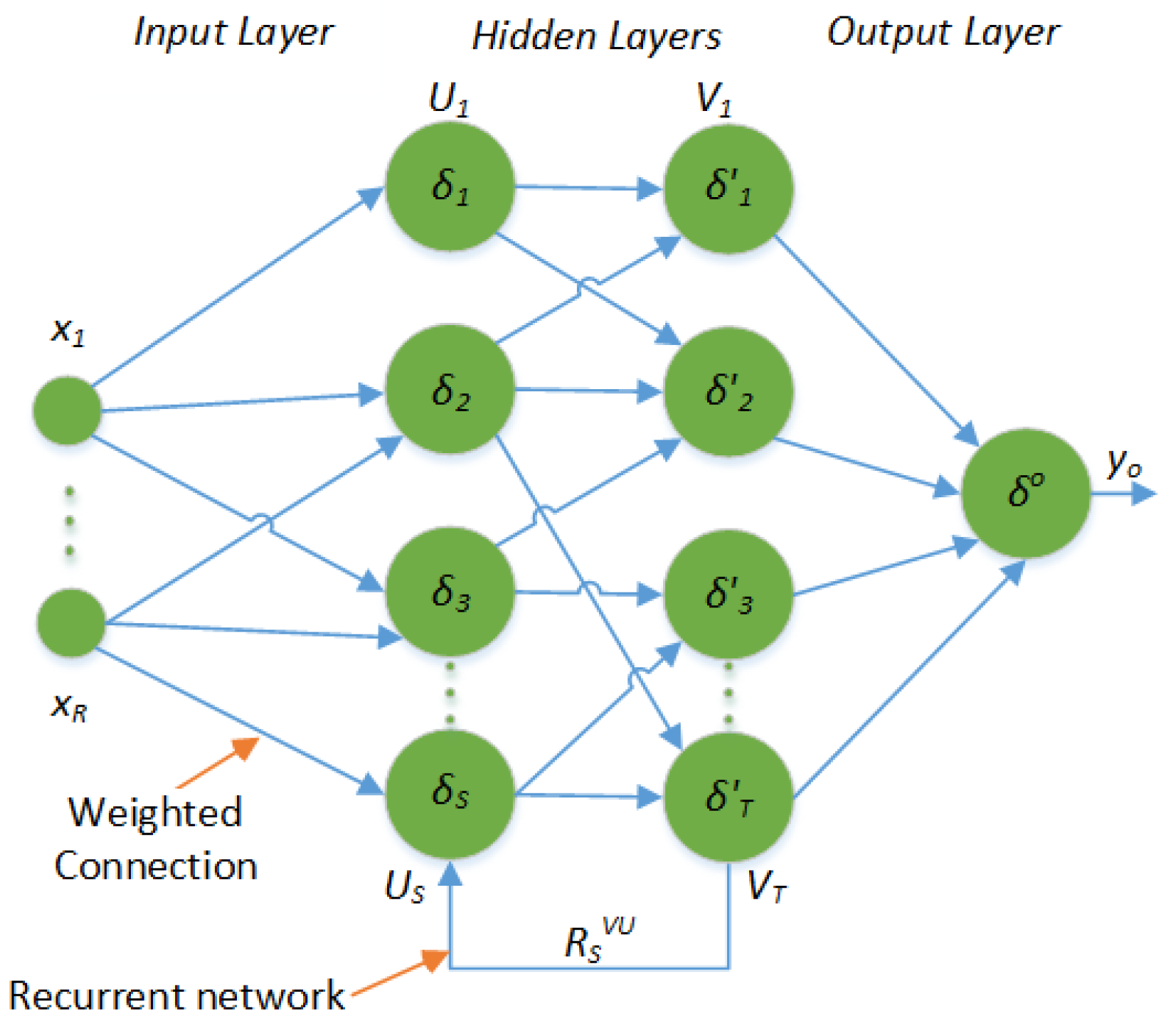

2. Artificial Neural Networks and Long Short Term Memory Neural Networks

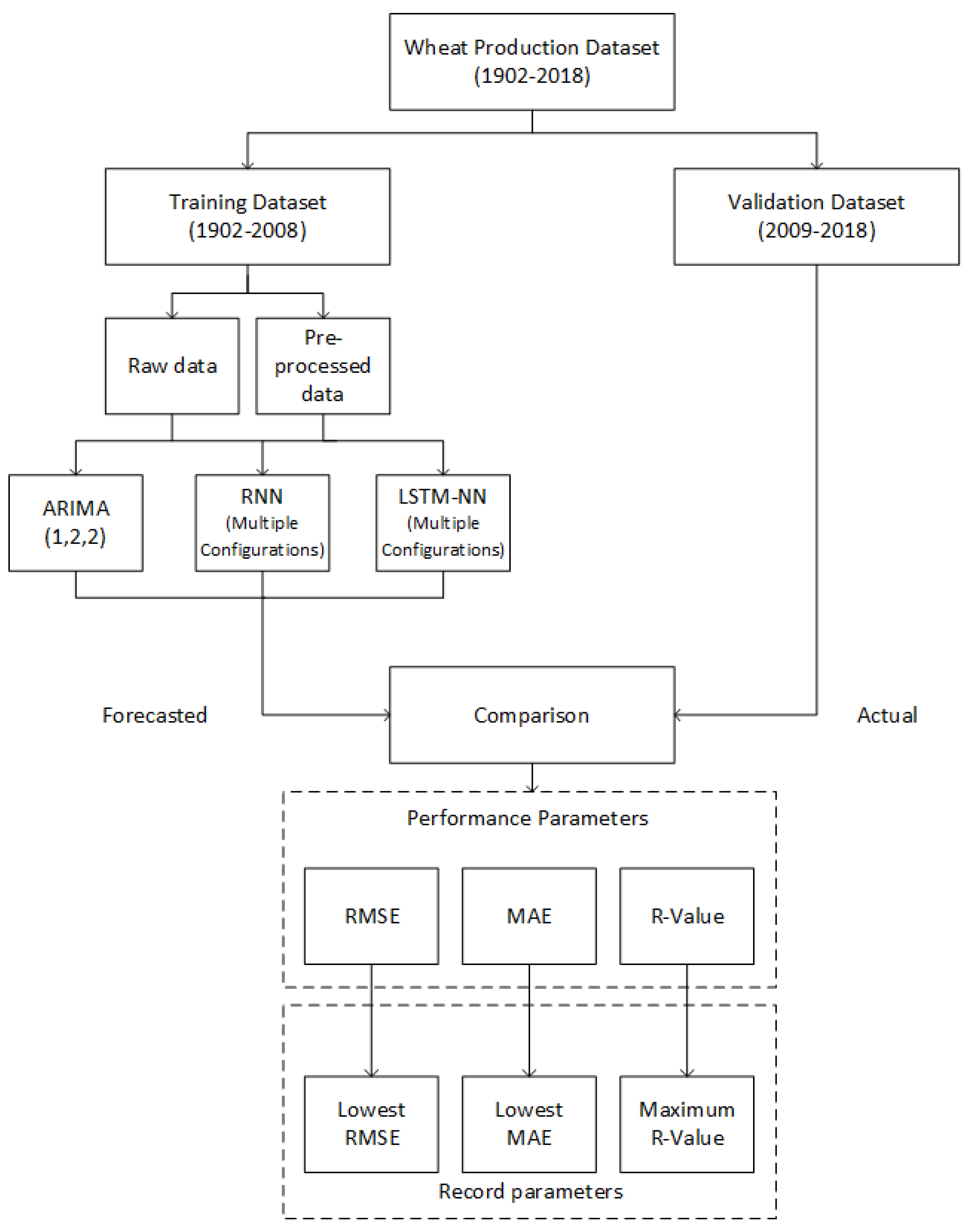

3. Materials and Methods

Algorithm 1: Proposed Methodology

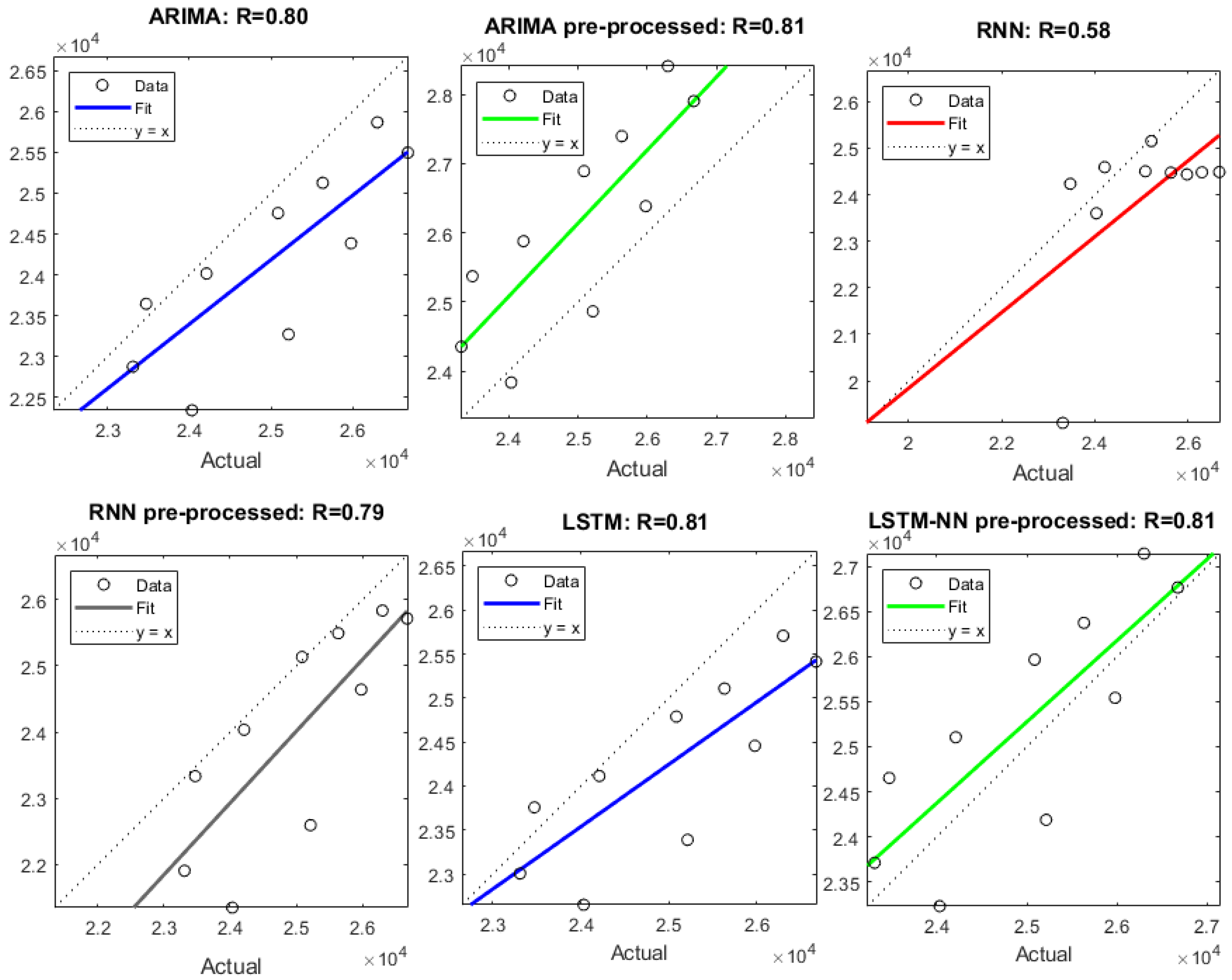

4. Results and Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

1. Ministry of Food, Agriculture and Livestock, Islamabad, G.o.P. Economic Survey of Pakistan. 2011. Available online: http://www.finance.gov.pk/survey/chapter_12/02-Agriculture.pdf (accessed on 31 January 2019).

2. Akram, T.; Naqvi, S.R.; Haider, S.A.; Kamran, M. Towards real-time crops surveillance for disease classification: exploiting parallelism in computer vision. Comput. Electr. Eng. 2017, 59, 15–26.

3. Sher, F.; Ahmad, E. Forecasting Wheat Production in Pakistan. Lahore J. Econ. 2008, 13, 57–85.

4. Amin, M.; Amanullah, M.; Akbar, A. Time Series Modeling for forecasting wheat production of Pakistan. Plant Sci. 2014, 24, 1444–1451.

5. Iqbal, N.; Bakhsh, K.; Maqbool, A.; Ahmad, A.S. Use of the ARIMA model for forecasting wheat area and production in Pakistan. J. Agric. Soc. Sci. 2005, 1, 120–122.

6. Naqvi, S.; Akram, T.; Haider, S.; Kamran, M.; Shahzad, A.; Khan, W.; Iqbal, T.; Umer, H. Precision Modeling: Application of Metaheuristics on Current–Voltage Curves of Superconducting Films. Electronics 2018, 7, 138.

7. Haider, S.A.; Naqvi, S.R.; Akram, T.; Kamran, M. Prediction of critical currents for a diluted square lattice using Artificial Neural Networks. Appl. Sci. 2017, 7, 238.

8. Naqvi, S.R.; Akram, T.; Haider, S.A.; Kamran, M. Artificial neural networks based dynamic priority arbitration for asynchronous flow control. Neural Comput. Appl. 2018, 29, 627–637.

9. Naqvi, S.R.; Akram, T.; Iqbal, S.; Haider, S.A.; Kamran, M.; Muhammad, N. A dynamically reconfigurable logic cell: From artificial neural networks to quantum-dot cellular automata. Appl. Nanosci. 2018, 8, 89–103.

10. Rather, A.M.; Agarwal, A.; Sastry, V. Recurrent neural network and a hybrid model for prediction of stock returns. Expert Syst. Appl. 2015, 42, 3234–3241.

11. Bengio, S.; Vinyals, O.; Jaitly, N.; Shazeer, N. Scheduled sampling for sequence prediction with recurrent neural networks. In Proceedings of the Advances in Neural Information Processing Systems, Montreal, QC, Canada, 7–12 December 2015; pp. 1171–1179.

12. Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Comput. 1997, 9, 1735–1780.

13. Tan, M.; Santos, C.D.; Xiang, B.; Zhou, B. LSTM-based deep learning models for non-factoid answer selection. arXiv, 2015; arXiv:1511.04108.

14. Chen, K.; Zhou, Y.; Dai, F. A LSTM-based method for stock returns prediction: A case study of China stock market. In Proceedings of the 2015 IEEE International Conference on Big Data (Big Data), Santa Clara, CA, USA, 29 October–1 November 2015; pp. 2823–2824.

15. Ma, X.; Tao, Z.; Wang, Y.; Yu, H.; Wang, Y. Long short-term memory neural network for traffic speed prediction using remote microwave sensor data. Transp. Res. Part C Emerg. Technol. 2015, 54, 187–197.

16. Garn, W.; Hu, Y.; Nicholson, P.; Jones, B.; Tang, H. LSTM network time series predicts high-risk tenants. In Proceedings of the Euro 2018–29th European Conference on Operational Research, EURO 2018, Valencia, Spain, 8–11 July 2018.

17. Guo, W.W.; Xue, H. Crop yield forecasting using artificial neural networks: A comparison between spatial and temporal models. Math. Probl. Eng. 2014, 2014, 857865.

18. Meena, M.; Singh, P.K. Crop Yield Forecasting Using Neural Networks. In International Conference on Swarm, Evolutionary, and Memetic Computing; Springer: Cham, Switzerland, 2013; pp. 319–331.

19. Schalkoff, R.J. Artificial Neural Networks; McGraw-Hill: New York, NY, USA, 1997; Volume 1.

20. Hagan, M.T.; Demuth, H.B.; Beale, M.H.; De Jesús, O. Neural Network Design; Pws Pub.: Boston, MA, USA, 1996; Volume 20.

21. Kamran, M.; Haider, S.; Akram, T.; Naqvi, S.; He, S. Prediction of IV curves for a superconducting thin film using artificial neural networks. Superlattices Microstruct. 2016, 95, 88–94.

22. Haider, S.A.; Naqvi, S.R.; Akram, T.; Kamran, M.; Qadri, N.N. Modeling electrical properties for various geometries of antidots on a superconducting film. Appl. Nanosci. 2017, 7, 933–945.

23. Cleveland, W.S. LOWESS: A program for smoothing scatterplots by robust locally weighted regression. Am. Stat. 1981, 35, 54.

24. Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv, 2014; arXiv:1412.6980.

25. Mandt, S.; Hoffman, M.D.; Blei, D.M. Stochastic gradient descent as approximate Bayesian inference. J. Mach. Learn. Res. 2017, 18, 4873–4907.

26. Wilson, A.C.; Roelofs, R.; Stern, M.; Srebro, N.; Recht, B. The marginal value of adaptive gradient methods in machine learning. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 4148–4158.

| Parameter | Range | Parameter | Range |
|---|---|---|---|
| Feedback delays | 1 to 5 | No. of neurons in each hidden layer | 1 to 40 |
| No. of hidden layers | 1 to 3 | Training functions | Bayesian regularization [19], Levenberg–Marquardt [19] |
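
To illustrate what the "feedback delays" parameter controls, the following minimal sketch builds lagged input/target pairs from a series: with a delay of d, each target value is paired with the d values that precede it. The function name `make_lagged_pairs` is hypothetical, the series is the "Actual" column of the results table (2009 to 2018), and the windowing shown is a common way of feeding past outputs back into an autoregressive network, not necessarily the authors' exact procedure.

```python
from typing import List, Tuple


def make_lagged_pairs(series: List[float], delays: int) -> Tuple[List[List[float]], List[float]]:
    """Pair each value y(t) with the `delays` values that precede it."""
    if delays < 1 or delays >= len(series):
        raise ValueError("delays must be between 1 and len(series) - 1")
    inputs, targets = [], []
    for t in range(delays, len(series)):
        inputs.append(series[t - delays:t])  # y(t - delays), ..., y(t - 1)
        targets.append(series[t])            # y(t)
    return inputs, targets


# "Actual" column of the results table, 2009-2018 (copied from the source).
actual = [24033, 23311, 25214, 23473, 24211, 25979, 25086, 25633, 26674, 26300]

# A delay of 2 is the value reported for the RNN without pre-processing.
X, y = make_lagged_pairs(actual, delays=2)
print(X[0], "->", y[0])
print(len(X), "input/target pairs")
```

Larger delays give the network more history per sample but leave fewer training pairs, which matters for a series this short.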

| Parameter | Range | Parameter | Range |
|---|---|---|---|
| Hidden units | 1 to 2000 | Learn rate drop period | 1 to 100 |
| Initial learning rate | 0.001 to 0.009 | Solver | Adam optimizer [24], stochastic gradient descent with momentum (SGDM) [25] |

| RNN | | | | RNN pre-processed | | | |
|---|---|---|---|---|---|---|---|
| Parameter | Value | Parameter | Value | Parameter | Value | Parameter | Value |
| Feedback delays | 2 | No. of neurons in each hidden layer | 28, 6 | Feedback delays | 4 | No. of neurons in each hidden layer | 28, 10 |
| No. of hidden layers | 2 | Training function | Bayesian regularization | No. of hidden layers | 2 | Training function | Bayesian regularization |
| LSTM-NN | | | | LSTM-NN pre-processed | | | |
| Parameter | Value | Parameter | Value | Parameter | Value | Parameter | Value |
| Hidden units | 2000 | Learn rate drop period | 120 | Hidden units | 200 | Learn rate drop period | 20 |
| Initial learning rate | 0.009 | Solver | SGDM | Initial learning rate | 0.0032 | Solver | SGDM |
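
If the "learn rate drop period" is read as the number of epochs between step reductions of the learning rate, the schedule can be sketched as below. The source gives only the initial rate and the period; the drop factor of 0.1, the 0-indexed epoch convention, and the function name `piecewise_learning_rate` are assumptions made for illustration.

```python
def piecewise_learning_rate(initial_rate: float, drop_period: int, epoch: int,
                            drop_factor: float = 0.1) -> float:
    """Learning rate at a 0-indexed epoch under a step-decay schedule.

    drop_factor is an assumed value; the source does not report it.
    """
    if drop_period < 1:
        raise ValueError("drop_period must be at least 1")
    # The rate is multiplied by drop_factor once per completed period.
    return initial_rate * drop_factor ** (epoch // drop_period)


# Settings reported for the LSTM-NN on pre-processed data.
for epoch in (0, 19, 20, 40):
    print(epoch, piecewise_learning_rate(0.0032, 20, epoch))
```

Under these assumptions the rate stays at 0.0032 for the first 20 epochs and shrinks by the assumed factor at every subsequent multiple of 20.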

| Year | Actual | ARIMA(1,2,2) | ARIMA(1,2,2) Pre-Processed | RNN | RNN Pre-Processed | LSTM-NN | LSTM-NN Pre-Processed |
|---|---|---|---|---|---|---|---|
| 2009 | 24,033 | 1688.6 | 197.7 | 430.06 | 2683.32 | 1382.40 | 802.89 |
| 2010 | 23,311 | 434 | −1042.1 | 4213.38 | 1405.02 | 304.46 | −400.79 |
| 2011 | 25,214 | 1941.2 | 349.8 | 60.18 | 2620.00 | 1824.97 | 1025.45 |
| 2012 | 23,473 | −173.7 | −1899.5 | −761.99 | 138.64 | −284.71 | −1180.16 |
| 2013 | 24,211 | 194 | −1668.6 | −382.72 | 176.09 | 96.99 | −893.75 |
| 2014 | 25,979 | 1592.2 | −407.3 | 1540.87 | 1337.55 | 1521.19 | 436.38 |
| 2015 | 25,086 | 329.5 | −1806.8 | 581.68 | −47.94 | 296.87 | −880.03 |
| 2016 | 25,633 | 506.8 | −1766.2 | 1156.40 | 141.09 | 525.06 | −741.32 |
| 2017 | 26,674 | 1178.1 | −1231.6 | 2185.48 | 959.31 | 1259.67 | −92.83 |
| 2018 | 26,300 | 434.4 | −2112 | 1816.46 | 465.38 | 591.66 | −843.05 |

| Model | RMSE | MAE | R-Value |
|---|---|---|---|
| ARIMA (1,2,2) [4] | 1065 | 847 | 0.80 |
| ARIMA (1,2,2) pre-processed | 1420 | 1248 | 0.81 |
| RNN | 1754 | 1313 | 0.58 |
| RNN pre-processed | 1379 | 997 | 0.79 |
| LSTM-NN | 1002 | 808 | 0.81 |
| LSTM-NN pre-processed | 792 | 729 | 0.81 |
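
The RMSE and MAE figures can be checked against the per-year table if its model columns are read as forecast errors for 2009 to 2018. The sketch below does this for two of the models; the function names `rmse` and `mae` are illustrative, and treating the columns as errors is an inference from their magnitudes, although the results agree with the reported values to within one unit.

```python
import math
from typing import Sequence


def rmse(errors: Sequence[float]) -> float:
    """Root mean squared error of a sequence of forecast errors."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def mae(errors: Sequence[float]) -> float:
    """Mean absolute error of a sequence of forecast errors."""
    return sum(abs(e) for e in errors) / len(errors)


# Per-year values for 2009-2018, copied from the per-year table.
arima_errors = [1688.6, 434.0, 1941.2, -173.7, 194.0,
                1592.2, 329.5, 506.8, 1178.1, 434.4]
lstm_pre_errors = [802.89, -400.79, 1025.45, -1180.16, -893.75,
                   436.38, -880.03, -741.32, -92.83, -843.05]

for name, errors in [("ARIMA(1,2,2)", arima_errors),
                     ("LSTM-NN pre-processed", lstm_pre_errors)]:
    print(f"{name}: RMSE = {rmse(errors):.1f}, MAE = {mae(errors):.1f}")
```

Because RMSE squares each error before averaging, a few large misses (such as the 2009 and 2011 values for ARIMA) raise it well above the MAE.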

| Year | Wheat Production Forecast |
|---|---|
| 2019 | 27,103 |
| 2020 | 27,388 |
| 2021 | 27,657 |
| 2022 | 27,908 |
| 2023 | 28,143 |
| 2024 | 28,362 |
| 2025 | 28,566 |
| 2026 | 28,756 |
| 2027 | 28,933 |
| 2028 | 29,096 |
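
As a reading aid for the forecast table, the short sketch below derives the year-over-year increments and the implied compound annual growth rate from the tabulated values. The variable names are illustrative and the figures are arithmetic on the table only, not additional model output.

```python
# Forecast values for 2019-2028, copied from the forecast table.
forecast = {
    2019: 27103, 2020: 27388, 2021: 27657, 2022: 27908, 2023: 28143,
    2024: 28362, 2025: 28566, 2026: 28756, 2027: 28933, 2028: 29096,
}

years = sorted(forecast)
first, last = forecast[years[0]], forecast[years[-1]]

# Difference between each consecutive pair of forecast years.
increments = [forecast[b] - forecast[a] for a, b in zip(years, years[1:])]

# Total growth over the horizon and the equivalent constant annual rate.
total_growth = last / first - 1
cagr = (last / first) ** (1 / (len(years) - 1)) - 1

print("Yearly increments:", increments)
print(f"Total growth 2019-2028: {total_growth:.2%}")
print(f"Compound annual growth rate: {cagr:.2%}")
```

The increments shrink every year, so the forecast describes production that keeps rising but at a steadily slowing pace.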

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Haider, S.A.; Naqvi, S.R.; Akram, T.; Umar, G.A.; Shahzad, A.; Sial, M.R.; Khaliq, S.; Kamran, M. LSTM Neural Network Based Forecasting Model for Wheat Production in Pakistan. Agronomy 2019, 9, 72. https://doi.org/10.3390/agronomy9020072
