SESQ: Spatially Aware Encoding and Semantically Guided Querying for 3D Grounding

Abstract

1. Introduction

- We develop RSAE, a novel Rotary Spatially Aware Encoder that explicitly encodes and leverages relative spatial relationships, overcoming the limitations of existing implicit methods in understanding complex 3D scenes.

- We introduce SQI, a semantic-aware initialization module that generates context-informed queries by measuring similarity between text and point cloud features, effectively narrowing the search space and resolving initial ambiguity.

- We conduct extensive empirical evaluations on the ScanRefer and ReferIt3D benchmarks, demonstrating the efficacy and robustness of SESQ, particularly in improving localization accuracy in complex multi-object scenarios.
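As a rough illustration of the idea behind SQI, the sketch below ranks candidate points by the cosine similarity between their features and a text feature, then keeps the top-k as query seeds. It is a toy example under stated assumptions, not the SESQ implementation: the helper names `cosine` and `select_query_seeds`, the 3-dimensional features, and the use of plain cosine similarity are all hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm > 0 else 0.0


def select_query_seeds(point_feats, text_feat, k):
    """Return (indices, scores) of the k points most similar to the text.

    Hypothetical helper: the actual SQI module may project, pool, or weight
    the features differently before comparing them.
    """
    scores = [cosine(p, text_feat) for p in point_feats]
    # sorted() is stable, so points with equal scores keep their input order.
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    return order[:k], scores


# Toy data (assumed): five candidate points in a 3-dimensional feature space.
point_feats = [
    [1.0, 0.0, 0.0],
    [0.9, 0.1, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [-1.0, 0.0, 0.0],
]
text_feat = [1.0, 0.2, 0.0]

top_idx, scores = select_query_seeds(point_feats, text_feat, k=2)
print("selected point indices:", top_idx)
```

Only the selected points would seed the decoder queries in this sketch, which is one way to read "narrowing the search space" in the contribution above.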

2. Related Work

2.1. 2D Visual Grounding

2.2. 3D Visual Grounding

3. Method

3.1. Overview

3.2. Rotary Spatially Aware Encoder

3.3. Semantic Query Initialization

4. Experiments

4.1. Dataset and Experimental Setting

- Datasets. We evaluate our proposed SESQ on two widely recognized benchmarks: ScanRefer and ReferIt3D.

- ScanRefer [7] is a large-scale dataset built on the ScanNet [31] dataset. It pairs natural language descriptions with 3D objects, featuring 51,583 descriptions for 11,046 objects across 800 scenes. We report results on the “Unique” (only one object of its class in the scene) and “Multiple” (multiple objects of the same class) subsets.

- ReferIt3D [8] contains two subsets: Nr3D and Sr3D. Nr3D consists of natural human-written descriptions, while Sr3D includes template-based descriptions. Samples are further categorized into “Easy” and “Hard” cases based on the number of distractors of the same category, and into view-dependent and view-independent cases. These datasets provide a rigorous test of the model’s ability to handle fine-grained spatial disambiguation.

- Evaluation Metrics. To quantitatively evaluate the performance of SESQ, we employ the Acc@0.25 and Acc@0.5 metrics, which measure the percentage of predictions where the Intersection over Union (IoU) between the predicted and ground-truth 3D bounding boxes is greater than 0.25 and 0.5, respectively. For ReferIt3D, following standard protocol [8], we report the classification-based localization accuracy.

- Experimental Setting. The proposed framework was implemented on a workstation equipped with three NVIDIA GeForce RTX 4090 GPUs (24 GB VRAM each) and an Intel Core i9-level CPU, operating on Ubuntu 22.04 LTS. The software environment consisted of Python 3.9.10, PyTorch 1.13, and CUDA 11.7. During the training phase, we utilized a total batch size of 12. For the ScanRefer dataset, the model was optimized using an initial learning rate of and a weight decay of , where the best performance was typically achieved around the 60th epoch. For ReferIt3D subsets, the initial learning rate was set to with a weight decay of . The optimal models for Nr3D and Sr3D were obtained around the 160th and 40th epochs, respectively.
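The Acc@0.25 and Acc@0.5 metrics described above can be sketched as follows. This is a minimal illustration that assumes axis-aligned boxes stored as `(xmin, ymin, zmin, xmax, ymax, zmax)`; the function names `box_iou_3d` and `acc_at` and the toy boxes are hypothetical and are not taken from the benchmark toolkits.

```python
def box_volume(box):
    """Volume of an axis-aligned box (xmin, ymin, zmin, xmax, ymax, zmax)."""
    return (box[3] - box[0]) * (box[4] - box[1]) * (box[5] - box[2])


def box_iou_3d(a, b):
    """Intersection over Union of two axis-aligned 3D boxes."""
    inter = 1.0
    for axis in range(3):
        lo = max(a[axis], b[axis])
        hi = min(a[axis + 3], b[axis + 3])
        if hi <= lo:
            return 0.0  # no overlap along this axis, so no overlap at all
        inter *= hi - lo
    union = box_volume(a) + box_volume(b) - inter
    return inter / union


def acc_at(preds, gts, threshold):
    """Percentage of predictions whose IoU with the ground truth exceeds threshold."""
    # Strict ">" follows the wording "greater than" used in the text.
    hits = sum(box_iou_3d(p, g) > threshold for p, g in zip(preds, gts))
    return 100.0 * hits / len(gts)


# Toy example (assumed data): one ground-truth box, three predictions.
gt = (0.0, 0.0, 0.0, 2.0, 2.0, 2.0)
preds = [
    (0.0, 0.0, 0.0, 2.0, 2.0, 1.5),  # IoU 0.75
    (0.0, 0.0, 0.0, 2.0, 2.0, 0.8),  # IoU 0.40
    (1.0, 1.0, 1.0, 3.0, 3.0, 3.0),  # IoU 1/15
]
gts = [gt, gt, gt]

acc25 = acc_at(preds, gts, 0.25)
acc50 = acc_at(preds, gts, 0.5)
print(f"Acc@0.25 = {acc25:.2f}%, Acc@0.5 = {acc50:.2f}%")
```

In this toy case two of the three predictions pass the 0.25 threshold and one passes the 0.5 threshold, which shows why Acc@0.5 is the stricter of the two metrics.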

4.2. Quantitative Comparison

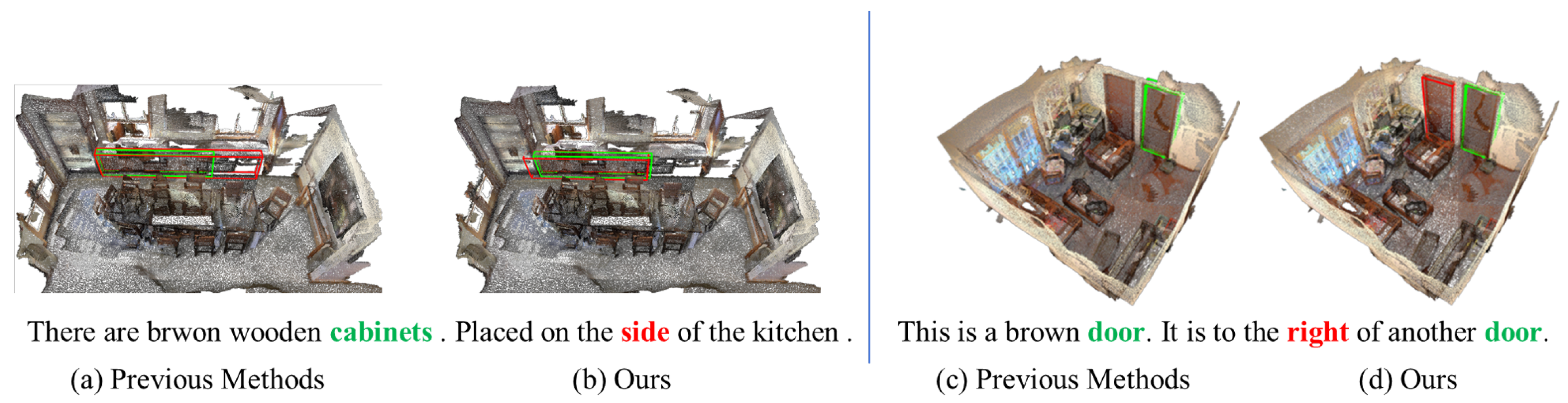

4.3. Qualitative Comparison

4.4. Ablation Study

- Ablation Study. Table 3 presents the ablation results for RSAE and SQI on the ScanRefer dataset. We evaluate the contribution of each module using ACC@0.25 and ACC@0.5 across Unique, Multiple, and Overall categories.

- Baseline. The first row shows the performance with neither RSAE nor SQI. This baseline relies on absolute position embeddings and heuristic query selection, yielding an Overall ACC@0.25 of 53.83% and an Overall ACC@0.5 of 41.70%. These values serve as the reference for all subsequent experiments.

- Effect of RSAE. Incorporating RSAE alone leads to a consistent performance gain across all six accuracy metrics. The Overall ACC@0.5 increases to 43.90%, a gain of 2.20 percentage points over the baseline. Notably, the RSAE-only variant achieves the highest ACC@0.5 in the Multiple category (39.65%). This suggests that the rotary-based relative spatial information is effective for regressing precise bounding boxes, especially in scenes with many similar objects.

- Effect of SQI. Using SQI independently also improves the results, with the Overall ACC@0.25 reaching 55.13%. The impact is most pronounced in the Unique setting, where ACC@0.5 rises from 69.42% to 72.16%. These results indicate that semantic-aware query initialization helps the model focus on relevant object candidates and reduces the interference from background clutter.

- Synergy of RSAE and SQI. The full SESQ framework combining both modules achieves the best results on four of the six accuracy metrics, including 55.41% Overall ACC@0.25 and 44.38% Overall ACC@0.5, which are gains of 1.58 and 2.68 percentage points over the baseline. The exceptions are Unique ACC@0.25 and Multiple ACC@0.5, where a single-module variant is slightly higher. The results suggest that SQI and RSAE address different challenges: SQI improves the initial query quality through cross-modal alignment, while RSAE refines the spatial localization through geometric awareness. The improvement at the stricter 0.5 IoU threshold supports the robustness of the integrated approach.

- Efficiency Analysis. We also report the inference speed in the last column of Table 3. As emphasized in [37], a comprehensive evaluation of AI infrastructure should integrate performance, efficiency, and cost dimensions. Following this perspective, we observe that the full SESQ framework runs at 5.01 FPS, compared with 5.43 FPS for the baseline, a reduction of 0.42 FPS (about 7.7%). This suggests that the computational overhead introduced by the RSAE and SQI modules is modest. Furthermore, we observed that the GPU memory consumption remains almost constant across all configurations. These results indicate that our method achieves its accuracy gains without materially reducing the inference speed of the baseline, in line with the combined performance and efficiency requirements discussed in [37].
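The gains and the speed cost quoted in this ablation can be recomputed directly from the Overall and FPS columns of Table 3. The short script below does only that arithmetic; the dictionary layout and the name `deltas_vs_baseline` are illustrative choices, while the numbers are copied from the table.

```python
# Overall accuracy (%) and inference speed (FPS) copied from Table 3.
TABLE3 = {
    "baseline":   {"acc25": 53.83, "acc50": 41.70, "fps": 5.43},
    "RSAE only":  {"acc25": 54.78, "acc50": 43.90, "fps": 5.18},
    "SQI only":   {"acc25": 55.13, "acc50": 43.58, "fps": 5.22},
    "RSAE + SQI": {"acc25": 55.41, "acc50": 44.38, "fps": 5.01},
}


def deltas_vs_baseline(table, baseline="baseline"):
    """Percentage-point accuracy gains and relative FPS drop for each row."""
    base = table[baseline]
    result = {}
    for name, row in table.items():
        result[name] = {
            "d_acc25": row["acc25"] - base["acc25"],
            "d_acc50": row["acc50"] - base["acc50"],
            "fps_drop_pct": 100.0 * (base["fps"] - row["fps"]) / base["fps"],
        }
    return result


deltas = deltas_vs_baseline(TABLE3)
for name, d in deltas.items():
    print(
        f"{name:<11} "
        f"Acc@0.25 {d['d_acc25']:+.2f} pt  "
        f"Acc@0.5 {d['d_acc50']:+.2f} pt  "
        f"FPS drop {d['fps_drop_pct']:.1f}%"
    )
```

Reporting accuracy differences in percentage points and the speed difference as a relative percentage keeps the two kinds of change from being confused.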

5. Conclusions and Discussion

- Academic and Practical Implications. From an academic perspective, this work suggests that incorporating relative spatial priors through rotary mechanisms can be more effective for 3D coordinate modeling than relying solely on absolute position embeddings. Practically, the observed performance in multi-object environments indicates that the framework could be adapted for autonomous systems requiring object disambiguation, such as industrial automated guided vehicles (AGVs) or service robots in indoor settings.

- Limitations and Failure Analysis. Despite the observed gains, certain constraints remain. A characteristic failure case occurs when textual descriptions lack sufficient discriminative cues or contain high degrees of semantic ambiguity. In such instances, the SQI module may prioritize incorrect candidates due to the inherent uncertainty in the initial semantic alignment. Furthermore, the current spatial encoding remains focused on global point-wise relations, which may not fully represent the fine-grained hierarchical structures within complex objects.

- Future Directions. Future research could investigate more advanced contrastive learning objectives to enhance the discriminative power of the initial semantic queries. Additionally, evaluating the framework on larger-scale datasets with more diverse object categories and exploring the synergy between grounding and 3D semantic segmentation represent promising directions for further investigation.
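To make the notion of a relative spatial prior from a rotary mechanism concrete, the sketch below applies a generic rotary encoding to 3D coordinates and checks that the query-key dot product is unchanged when both points are translated by the same offset, so the score depends only on their relative position. It is a minimal illustration under assumptions, not the RSAE module itself: the function `rotary_encode_3d`, the per-axis split of feature pairs, and the frequency base of 100 are hypothetical choices.

```python
import math


def rotary_encode_3d(vec, pos, base=100.0):
    """Rotate consecutive feature pairs by angles proportional to x, y and z.

    vec: list of floats whose length is a multiple of 6 (pairs split over 3 axes).
    pos: (x, y, z) coordinate of the point the feature belongs to.
    """
    if len(vec) % 6 != 0:
        raise ValueError("feature length must be a multiple of 6")
    pairs_per_axis = len(vec) // 6
    out = []
    for axis in range(3):
        for j in range(pairs_per_axis):
            freq = base ** (-j / pairs_per_axis)  # lower frequency for later pairs
            angle = pos[axis] * freq
            c, s = math.cos(angle), math.sin(angle)
            i = 2 * (axis * pairs_per_axis + j)
            a, b = vec[i], vec[i + 1]
            out.extend([a * c - b * s, a * s + b * c])  # 2D rotation of the pair
    return out


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def shifted(pos, offset):
    return tuple(p + o for p, o in zip(pos, offset))


# Toy query/key features and positions (assumed values).
q = [0.3, -1.2, 0.7, 0.5, -0.4, 0.9, 1.1, 0.2, -0.6, 0.8, 0.1, -0.3]
k = [-0.5, 0.4, 1.3, -0.7, 0.2, 0.6, -0.9, 1.0, 0.3, -0.2, 0.8, 0.5]
pos_q, pos_k = (1.0, 2.0, 0.5), (1.5, 0.0, 2.0)
offset = (3.0, -4.0, 10.0)

score = dot(rotary_encode_3d(q, pos_q), rotary_encode_3d(k, pos_k))
score_shifted = dot(
    rotary_encode_3d(q, shifted(pos_q, offset)),
    rotary_encode_3d(k, shifted(pos_k, offset)),
)
print(abs(score - score_shifted) < 1e-9)  # True: only the relative offset matters
```

An absolute position embedding added to the features would not have this translation invariance in general, which is the contrast drawn in the implications above.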

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Zhan, Y.; Yuan, Y.; Xiong, Z. Mono3dvg: 3d visual grounding in monocular images. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, BC, Canada, 26–27 February 2024; Volume 38, pp. 6988–6996.

2. Yang, Z.; Chen, L.; Sun, Y.; Li, H. Visual point cloud forecasting enables scalable autonomous driving. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 16–22 June 2024; pp. 14673–14684.

3. Shao, H.; Hu, Y.; Wang, L.; Song, G.; Waslander, S.L.; Liu, Y.; Li, H. Lmdrive: Closed-loop end-to-end driving with large language models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 16–22 June 2024; pp. 15120–15130.

4. Duan, J.; Ou, Y.; Xu, S.; Liu, M. Sequential learning unification controller from human demonstrations for robotic compliant manipulation. Neurocomputing 2019, 366, 35–45.

5. Wu, C.; Ji, J.; Wang, H.; Ma, Y.; Huang, Y.; Luo, G.; Fei, H.; Sun, X.; Ji, R. Rg-san: Rule-guided spatial awareness network for end-to-end 3d referring expression segmentation. Adv. Neural Inf. Process. Syst. 2024, 37, 110972–110999.

6. Mane, A.M.; Weerakoon, D.; Subbaraju, V.; Sen, S.; Sarma, S.E.; Misra, A. Ges3ViG: Incorporating Pointing Gestures into Language-Based 3D Visual Grounding for Embodied Reference Understanding. In Proceedings of the Computer Vision and Pattern Recognition Conference, Nashville, TN, USA, 11–15 June 2025; pp. 9017–9026.

7. Chen, D.Z.; Chang, A.X.; Nießner, M. Scanrefer: 3d object localization in rgb-d scans using natural language. In Proceedings of the European Conference on Computer Vision (ECCV); Springer: Cham, Switzerland, 2020; pp. 202–221.

8. Achlioptas, P.; Abdelreheem, A.; Xia, F.; Elhoseiny, M.; Guibas, L. Referit3d: Neural listeners for fine-grained 3d object identification in real-world scenes. In Proceedings of the European Conference on Computer Vision (ECCV); Springer: Cham, Switzerland, 2020; pp. 422–440.

9. Huang, P.H.; Lee, H.H.; Chen, H.T.; Liu, T.L. Text-guided graph neural networks for referring 3d instance segmentation. In Proceedings of the AAAI Conference on Artificial Intelligence (AAAI), Vancouver, BC, Canada, 2–9 February 2021; Volume 35, pp. 1610–1618.

10. Yuan, Z.; Yan, X.; Liao, Y.; Zhang, R.; Wang, S.; Li, Z.; Cui, S. Instancerefer: Cooperative holistic understanding for visual grounding on point clouds through instance multi-level contextual referring. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 1791–1800.

11. Yang, Z.; Zhang, S.; Wang, L.; Luo, J. Sat: 2d semantics assisted training for 3d visual grounding. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 1856–1866.

12. Luo, J.; Fu, J.; Kong, X.; Gao, C.; Ren, H.; Shen, H.; Xia, H.; Liu, S. 3d-sps: Single-stage 3d visual grounding via referred point progressive selection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 16454–16463.

13. Jain, A.; Gkanatsios, N.; Mediratta, I.; Fragkiadaki, K. Bottom up top down detection transformers for language grounding in images and point clouds. In Proceedings of the European Conference on Computer Vision (ECCV); Springer: Cham, Switzerland, 2022; pp. 417–433.

14. Wu, Y.; Cheng, X.; Zhang, R.; Cheng, Z.; Zhang, J. Eda: Explicit text-decoupling and dense alignment for 3d visual grounding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 17–24 June 2023; pp. 19231–19242.

15. Carion, N.; Massa, F.; Synnaeve, G.; Usunier, N.; Kirillov, A.; Zagoruyko, S. End-to-end object detection with transformers. In Proceedings of the European Conference on Computer Vision (ECCV); Springer: Cham, Switzerland, 2020; pp. 213–229.

16. Guo, P.; Zhu, H.; Ye, H.; Li, T.; Chen, T. Revisiting 3D visual grounding with Context-aware Feature Aggregation. Neurocomputing 2024, 601, 128195.

17. Gao, C.; Chen, J.; Liu, S.; Wang, L.; Zhang, Q.; Wu, Q. Room-and-object aware knowledge reasoning for remote embodied referring expression. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; pp. 3064–3073.

18. Hu, R.; Xu, H.; Rohrbach, M.; Feng, J.; Saenko, K.; Darrell, T. Natural language object retrieval. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 4555–4564.

19. Yu, L.; Lin, Z.; Shen, X.; Yang, J.; Lu, X.; Bansal, M.; Berg, T.L. Mattnet: Modular attention network for referring expression comprehension. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 1307–1315.

20. Hong, R.; Liu, D.; Mo, X.; He, X.; Zhang, H. Learning to compose and reason with language tree structures for visual grounding. IEEE Trans. Pattern Anal. Mach. Intell. 2019, 44, 684–696.

21. Liu, D.; Zhang, H.; Wu, F.; Zha, Z.J. Learning to assemble neural module tree networks for visual grounding. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 4673–4682.

22. Zhang, H.; Niu, Y.; Chang, S.F. Grounding referring expressions in images by variational context. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–23 June 2018; pp. 4158–4166.

23. Deng, J.; Yang, Z.; Chen, T.; Zhou, W.; Li, H. Transvg: End-to-end visual grounding with transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 1769–1779.

24. Liao, Y.; Liu, S.; Li, G.; Wang, F.; Chen, Y.; Qian, C.; Li, B. A real-time cross-modality correlation filtering method for referring expression comprehension. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 10880–10889.

25. Qi, C.R.; Su, H.; Mo, K.; Guibas, L.J. Pointnet: Deep learning on point sets for 3d classification and segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 652–660.

26. Qi, C.R.; Yi, L.; Su, H.; Guibas, L.J. Pointnet++: Deep hierarchical feature learning on point sets in a metric space. In Advances in Neural Information Processing Systems (NeurIPS); Curran Associates, Inc.: Red Hook, NY, USA, 2017; Volume 30.

27. Zhao, H.; Jiang, L.; Jia, J.; Torr, P.H.; Koltun, V. Point transformer. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 16259–16268.

28. Wu, X.; Jiang, L.; Wang, P.S.; Liu, Z.; Liu, X.; Qiao, Y.; Ouyang, W.; He, T.; Zhao, H. Point Transformer V3: Simpler Faster Stronger. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 16–22 June 2024; pp. 4840–4851.

29. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Advances in Neural Information Processing Systems; Curran Associates, Inc.: Red Hook, NY, USA, 2017; Volume 30.

30. Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. Bert: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Minneapolis, MN, USA, 2–7 June 2019; Volume 1 (Long and Short Papers), pp. 4171–4186.

31. Dai, A.; Chang, A.X.; Savva, M.; Halber, M.; Funkhouser, T.; Nießner, M. Scannet: Richly-annotated 3d reconstructions of indoor scenes. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 22–25 July 2017; pp. 5828–5839.

32. Kamath, A.; Singh, M.; LeCun, Y.; Synnaeve, G.; Misra, I.; Carion, N. Mdetr-modulated detection for end-to-end multi-modal understanding. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 1780–1790.

33. Qian, Z.; Ma, Y.; Lin, Z.; Ji, J.; Zheng, X.; Sun, X.; Ji, R. Multi-branch Collaborative Learning Network for 3D Visual Grounding. In Proceedings of the European Conference on Computer Vision (ECCV); Springer: Cham, Switzerland, 2024; pp. 381–398.

34. Wang, X.; Zhao, N.; Han, Z.; Guo, D.; Yang, X. Augrefer: Advancing 3d visual grounding via cross-modal augmentation and spatial relation-based referring. In Proceedings of the AAAI Conference on Artificial Intelligence, Philadelphia, PA, USA, 25 February–4 March 2025; Volume 39, pp. 8006–8014.

35. Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. Roberta: A robustly optimized bert pretraining approach. arXiv 2019, arXiv:1907.11692.

36. Lv, C.; Lv, X.; Wang, Z.; Zhao, T.; Tian, W.; Zhou, Q.; Zeng, L.; Wan, M.; Liu, C. A focal quotient gradient system method for deep neural network training. Appl. Soft Comput. 2025, 184, 113704.

37. He, Q. A Unified Metric Architecture for AI Infrastructure: A Cross-Layer Taxonomy Integrating Performance, Efficiency, and Cost. arXiv 2026, arXiv:2511.21772.

| Method | Pub. | Year | Unique (∼19%) Acc@0.25 | Unique (∼19%) Acc@0.5 | Multiple (∼81%) Acc@0.25 | Multiple (∼81%) Acc@0.5 | Overall Acc@0.25 | Overall Acc@0.5 |
|---|---|---|---|---|---|---|---|---|
| ScanRefer [7] | ECCV | 2020 | 67.46 | 46.19 | 32.06 | 21.26 | 38.97 | 26.10 |
| ReferIt3D [8] | ECCV | 2020 | 53.8 | 37.5 | 21.0 | 12.8 | 26.4 | 16.9 |
| TGNN [9] | AAAI | 2021 | 68.61 | 56.80 | 29.84 | 23.18 | 37.37 | 29.70 |
| InstanceRefer [10] | ICCV | 2021 | 77.45 | 66.83 | 31.27 | 24.77 | 40.23 | 32.93 |
| 3D-SPS [12] | CVPR | 2022 | 84.12 | 66.72 | 40.32 | 29.82 | 48.82 | 36.98 |
| BUTD-DETR [13] | ECCV | 2022 | 82.88 | 64.98 | 44.73 | 33.97 | 50.42 | 38.60 |
| EDA [14] | CVPR | 2023 | 86.40 | 69.42 | 48.11 | 36.82 | 53.83 | 41.70 |
| MCLN [33] | ECCV | 2024 | 84.43 | 68.36 | 49.72 | 38.41 | 54.30 | 42.64 |
| AugRefer [34] | AAAI | 2025 | 86.21 | 70.75 | 49.96 | 39.06 | 55.68 | 44.03 |
| Ours | – | – | 86.75 | 72.94 | 49.90 | 39.37 | 55.41 | 44.38 |

| Dataset | Method | Pub. | Year | Easy | Hard | View-dep. | View-indep. | Overall |
|---|---|---|---|---|---|---|---|---|
| Nr3D | ReferIt3DNet [8] | ECCV | 2020 | 43.6 | 27.9 | 32.5 | 37.1 | 35.6 |
| Nr3D | TGNN [9] | AAAI | 2021 | 44.2 | 30.6 | 35.8 | 38.0 | 37.3 |
| Nr3D | InstanceRefer [10] | ICCV | 2021 | 46.0 | 31.8 | 34.5 | 41.9 | 38.8 |
| Nr3D | 3D-SPS [12] | CVPR | 2022 | 58.1 | 45.1 | 48.0 | 53.2 | 51.5 |
| Nr3D | BUTD-DETR [13] | ECCV | 2022 | – | – | – | – | 49.1 |
| Nr3D | EDA [14] | CVPR | 2023 | 58.2 | 46.1 | 50.2 | 53.1 | 52.1 |
| Nr3D | MCLN [33] | ECCV | 2024 | – | – | – | – | 59.8 |
| Nr3D | Ours | – | – | 62.4 | 51.0 | 53.1 | 58.4 | 56.6 |
| Sr3D | ReferIt3DNet [8] | ECCV | 2020 | 44.7 | 31.5 | 39.2 | 40.8 | 40.8 |
| Sr3D | TGNN [9] | AAAI | 2021 | 48.5 | 36.9 | 45.8 | 45.0 | 45.0 |
| Sr3D | InstanceRefer [10] | ICCV | 2021 | 51.1 | 40.5 | 45.4 | 48.1 | 48.0 |
| Sr3D | 3D-SPS [12] | CVPR | 2022 | 56.2 | 65.4 | 49.2 | 63.2 | 62.6 |
| Sr3D | BUTD-DETR [13] | ECCV | 2022 | – | – | – | – | 65.6 |
| Sr3D | EDA [14] | CVPR | 2023 | 70.3 | 62.9 | 54.1 | 68.8 | 68.1 |
| Sr3D | MCLN [33] | ECCV | 2024 | – | – | – | – | 68.4 |
| Sr3D | Ours | – | – | 71.8 | 63.2 | 53.7 | 69.9 | 69.2 |

| RSAE | SQI | Unique (∼19%) Acc@0.25 | Unique (∼19%) Acc@0.5 | Multiple (∼81%) Acc@0.25 | Multiple (∼81%) Acc@0.5 | Overall Acc@0.25 | Overall Acc@0.5 | Inference Speed (FPS) |
|---|---|---|---|---|---|---|---|---|
| | | 86.40 | 69.42 | 48.11 | 36.82 | 53.83 | 41.70 | 5.43 |
| ✓ | | 87.67 | 70.26 | 49.28 | 39.65 | 54.78 | 43.90 | 5.18 |
| | ✓ | 87.60 | 72.16 | 49.44 | 38.57 | 55.13 | 43.58 | 5.22 |
| ✓ | ✓ | 86.75 | 72.94 | 49.90 | 39.37 | 55.41 | 44.38 | 5.01 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Li, J.; Wu, Y.; Huang, T.; Cao, M. SESQ: Spatially Aware Encoding and Semantically Guided Querying for 3D Grounding. Computers 2026, 15, 145. https://doi.org/10.3390/computers15030145
