1. Introduction

Semantic segmentation serves as a cornerstone of computer vision, focusing on assigning a categorical label to each individual pixel. While conventional approaches [1,2,3,4] have achieved significant progress, they are typically constrained to a predefined and static label set, limiting their generalization to novel classes. To overcome this limitation, open-vocabulary semantic segmentation (OVSS) has gained increasing attention. Specifically, OVSS aims to transcend the constraints of predefined training categories, allowing models to generalize to arbitrary concepts defined by textual descriptions. The core objective lies in reconciling the inherent modality gap between dense, pixel-level visual features and discrete, high-level textual semantics by establishing an effective cross-modal connection, which enables the model to leverage linguistic knowledge to assign accurate semantic labels to each individual pixel.

Early research [5,6] in OVSS primarily focused on bridging the gap between visual features and textual semantics through vision–semantic space mapping. Nearly all of these approaches followed one of two strategies. The first, characterized by structural substitution [5], replaces the fixed classifiers of traditional segmentation models with pre-trained word embeddings while incorporating class-agnostic localization branches to enhance generalization to novel categories. The second strategy focuses on designing specialized mapping modules and optimization objectives [6], such as ranking or cross-modal alignment losses, to facilitate efficient visual-to-semantic transformation. Although these pioneering methods struggled to fully bridge the modality gap between pixel-level features and high-level semantics, they established the methodological foundation for subsequent advancements in the field.

The emergence of powerful vision–language models (VLMs) such as CLIP [7] and ALIGN [8] has brought OVSS into a new stage centered on pre-trained foundation models. Leveraging the cross-modal alignment capabilities of CLIP, numerous methodologies have emerged, among which the two-stage framework proposed by MaskCLIP [9] has become a representative baseline. In this classic two-stage method, a class-agnostic generator first produces mask proposals, which are then classified by a frozen CLIP encoder. To further improve segmentation accuracy, researchers have introduced several optimization strategies. OVSeg [10] introduces mask-prompt tuning to adapt the CLIP model to the specific task of mask proposal classification. FreeSeg [11] expands the text space by employing unified text representations to handle diverse concepts. Recent works such as ODISE [12] and DiffSegmenter [13] incorporate diffusion models to extract high-quality proposals and localization information. Despite their strong performance, these two-stage methods face notable bottlenecks: (1) the separation of mask generation and classification hinders end-to-end optimization; (2) cropping the masked regions for independent classification inevitably disrupts the global contextual information of the image, which limits the model’s discriminative performance; and (3) classifying multiple mask proposals with the VLM incurs substantial computational overhead.

To overcome the limitations of two-stage methods, one-stage methods strive to enable end-to-end training by fine-tuning the VLM. LSeg [14] represents one of the early explorations, achieving cross-category segmentation by aligning pixel-level visual features with pre-trained text embeddings. To preserve CLIP’s open-vocabulary capability while improving its adaptability to the segmentation task, SAN [15] introduced a side-adapter network to efficiently fine-tune CLIP, while ZegCLIP [16] employed a deep prompt tuning strategy to enhance the alignment between text embeddings and pixel-level features. In recent years, cost volume-based methods have gained significant attention due to their superior open-vocabulary generalization. CAT-Seg [17] is the first to introduce this approach, where cost volumes are aggregated separately along the spatial and categorical dimensions to enhance segmentation results. Building upon this, SED [18] introduces a simple encoder–decoder structure that fuses the encoder’s features to better recover image details. Furthermore, several studies have begun integrating additional foundation models to compensate for CLIP’s deficiency in capturing spatial information. For instance, EBSeg [19] and Trident [20] both leverage the Segment Anything Model (SAM) [21], using either its features or its high-resolution encoder, to provide the spatial information required for precise segmentation. In addition, some studies focus on cross-modal interaction. BBN [22] proposes a bidirectional bridging network that employs optimal transport to purify text embeddings before guiding visual–semantic aggregation. Similarly, ITA [23] emphasizes the role of text features, utilizing class mining and detail enhancement modules to introduce image–textual correlations.

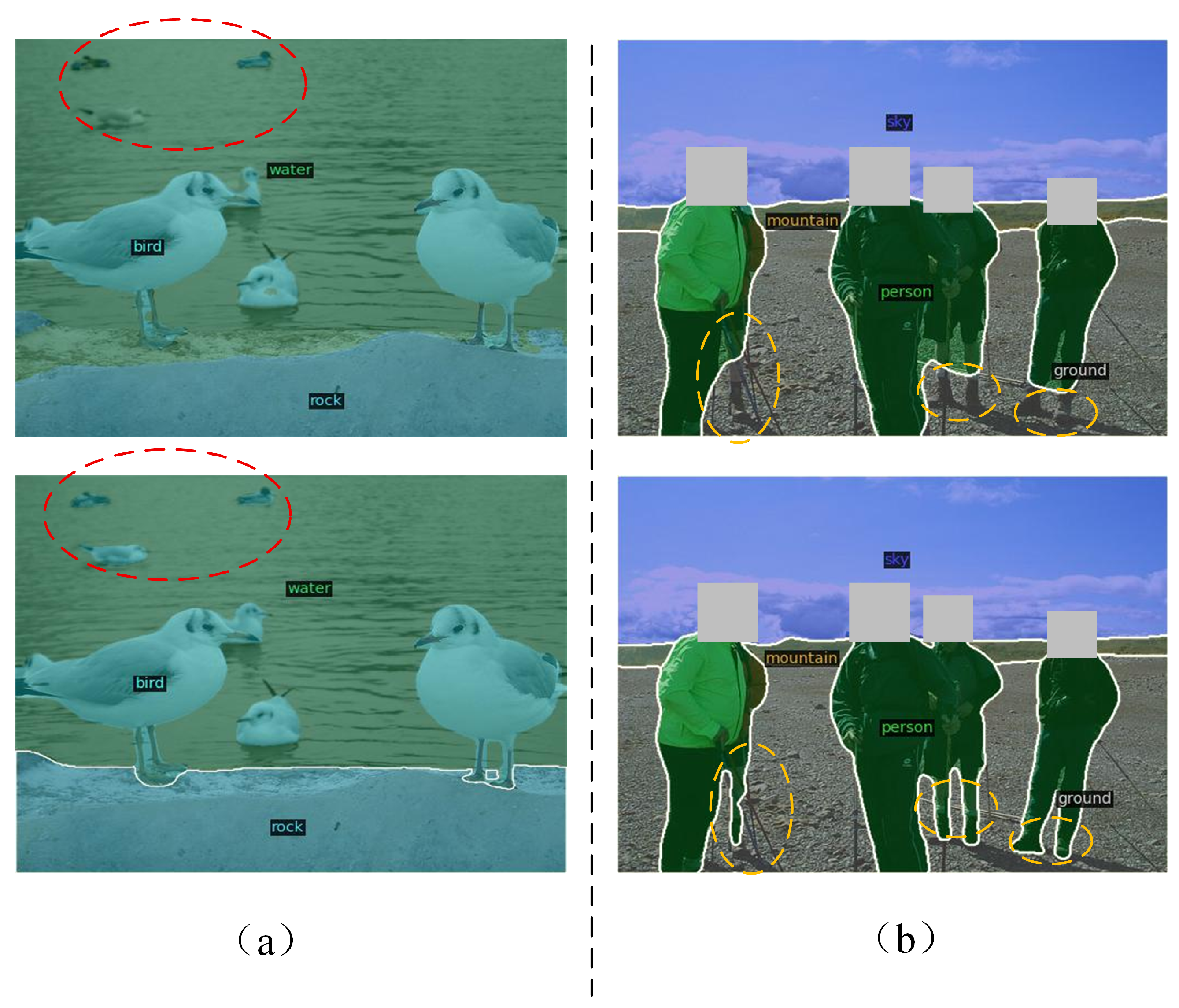

Despite these advances, most one-stage OVSS methods devote the majority of their design effort to harnessing the cross-modal alignment capabilities of ViT-based VLMs [24], while offering comparatively little architectural support for the decoding process itself. This oversight manifests in two interrelated limitations. First, the decoding stage typically operates on the single-scale output of the ViT-based VLM, which lacks the multi-scale spatial granularity essential for recovering fine details. Second, the decoding stage lacks explicit boundary constraints to regulate feature aggregation, which blurs object contours. Together, these deficiencies lead to missed small objects and inaccurate boundary localization, as illustrated by the concrete example in Figure 1.

To address these issues, we propose CLIP-HBD, a hierarchical boundary-constrained decoding network. Our approach reconstructs multi-scale features by leveraging the rich semantic priors of VLM and introduces a novel boundary-constrained decoding strategy to recover intricate edge details. Specifically, CLIP-HBD employs a ConvNeXt-based backbone together with a hierarchical adaptation mechanism that fuses multi-layer VLM features, producing a comprehensive multi-scale representation. To overcome the challenge of inaccurate boundaries, we perform explicit boundary prediction from the multi-scale features and transform the resulting boundary maps into structural constraints that guide the decoder to focus on boundary regions. By integrating these structural constraints with hierarchical features, the decoding process preserves semantic consistency while restoring precise object boundaries. Extensive experiments on multiple benchmarks demonstrate that CLIP-HBD achieves superior performance in both segmentation accuracy and boundary quality. The main contributions of this paper are summarized as follows:

1. CLIP-HBD overcomes the single-scale limitation of ViT and develops an effective approach to construct multi-scale features enriched with spatial priors, compensating for the weak spatial detail perception of vision–language models and providing a solid feature foundation for the subsequent decoding process.

2. CLIP-HBD introduces a boundary-constrained decoding strategy that converts predicted boundary maps into spatial attention priors, steering semantic feature propagation and boundary reconstruction to enforce geometric constraints and significantly improve mask boundary quality.

3. Extensive experiments on multiple benchmarks demonstrate that CLIP-HBD achieves superior performance in both segmentation accuracy and boundary quality, validating the effectiveness of our method.

The remainder of this paper is organized as follows: Section 2 reviews the related work in vision–language models, boundary-aware segmentation, and the evolution of open-vocabulary semantic segmentation. Section 3 details the proposed CLIP-HBD framework, covering hierarchical feature construction, boundary and cost volume generation, boundary-constrained decoding, and the multi-task hybrid loss. Section 4 presents the experimental results, comparative analysis with state-of-the-art methods, and extensive ablation studies across multiple benchmarks, including ADE20K [25], Pascal Context [26], and Pascal VOC [27]. Finally, Section 5 concludes the paper and discusses the implications of our findings for future research in open-vocabulary semantic segmentation.

3. Methods

Figure 2 illustrates the overall architecture of our open-vocabulary semantic segmentation model CLIP-HBD. In this framework, we first extract fundamental image features using a ConvNeXt [41] backbone, while integrating semantic priors via the deep reuse of CLIP’s features. This design combines CLIP’s rich semantics with the structured spatial representations of ConvNeXt, fully leveraging the latent capabilities of pre-trained CLIP models. Building on this, CLIP-HBD introduces an explicit boundary prediction branch paired with a boundary-constrained decoding strategy. By utilizing predicted boundary maps as spatial attention priors, the network dynamically steers the decoding process to enhance both semantic consistency and boundary sharpness, ultimately yielding the final high-resolution semantic segmentation result. During training, a multi-task hybrid loss function jointly optimizes semantic mask accuracy and geometric boundary precision.

3.1. Multi-Scale Hierarchical Feature Construction

A persistent challenge in semantic segmentation is that single-scale feature representations often fail to capture global structures and local details at the same time. Deep, low-resolution features excel at capturing overall contours via rich semantics, while shallow, high-resolution features are indispensable for recovering fine-grained boundary details. Exploiting the complementarity between these features through multi-scale modeling allows the network to capture both global context and local details, leading to better object boundary reconstruction and higher overall segmentation accuracy. Driven by this motivation, we select ConvNeXt as the backbone network for multi-scale feature extraction. Through its hierarchical convolutional architecture, ConvNeXt generates features with progressively decreasing resolutions, providing a solid foundation for capturing rich geometric information from holistic contours to local boundaries. To further strengthen the model’s semantic understanding in open-vocabulary scenarios, we integrate the corresponding deep semantic features from the CLIP encoder at each feature extraction level via a custom-designed adaptation mechanism.

Given an input image $I \in \mathbb{R}^{H \times W \times 3}$ with height $H$, width $W$, and three color channels (where $H$ and $W$ take fixed values in our default setting), the ConvNeXt encoder generates hierarchical features $F_l \in \mathbb{R}^{H_l \times W_l \times C_l}$ with progressively decreasing spatial resolutions, a process that naturally preserves rich multi-scale spatial details and local geometric information essential for dense prediction. Here, $l \in \{1, 2, 3, 4\}$ denotes the hierarchical stage index, with the spatial dimensions scaled as $H_l \times W_l = \frac{H}{2^{l+1}} \times \frac{W}{2^{l+1}}$ relative to the original image dimensions $H \times W$, while $C_l$ represents the channel dimension at stage $l$, which doubles at each successive level. Simultaneously, given the CLIP image Transformer encoder, we extract intermediate semantic representations from three distinct layers, denoted as $V_k \in \mathbb{R}^{h \times w \times D}$. Here, the spatial resolution is fixed at $h \times w$, corresponding to the number of image patches defined by the ViT-B architecture, while $D$ represents the CLIP latent dimension, and $k$ refers to the index of the Transformer blocks from shallow to deep levels. These representations serve as robust semantic priors, empowering the multi-scale feature reconstruction process to better handle the complexities of open-vocabulary scenarios. To illustrate these dimensional relationships more clearly, we detail the feature dimensions of the encoder architectures in Table 1.
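The dimensional relationships described above can be checked with a few lines of arithmetic. The sketch below is only illustrative: the 384 × 384 input size, the base width of 96 channels (as in ConvNeXt-T), and the 16 × 16 patch size are assumptions chosen for the example and are not taken from this paper; the function names are likewise hypothetical.

```python
def convnext_stage_shapes(height, width, base_channels=96):
    """Return (H_l, W_l, C_l) for ConvNeXt stages l = 1..4."""
    shapes = []
    for l in range(1, 5):
        stride = 2 ** (l + 1)                    # strides 4, 8, 16, 32
        channels = base_channels * 2 ** (l - 1)  # width doubles per stage
        shapes.append((height // stride, width // stride, channels))
    return shapes


def vit_patch_grid(height, width, patch=16):
    """Return the fixed (h, w) token grid of a ViT with square patches."""
    return height // patch, width // patch


def upsampling_factors(height, width, patch=16):
    """Scale that maps the ViT grid height onto each ConvNeXt stage height."""
    h, _ = vit_patch_grid(height, width, patch)
    return [h_l / h for h_l, _, _ in convnext_stage_shapes(height, width)]


if __name__ == "__main__":
    print(convnext_stage_shapes(384, 384))
    print(vit_patch_grid(384, 384))
    print(upsampling_factors(384, 384))
```

Under these assumed settings, a factor of 1.0 marks the stage whose resolution already matches the ViT grid, which is the case where no spatial resampling would be needed.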

To fuse the complementary feature representations from the CLIP and ConvNeXt encoders, we introduce a Feature Fusion Module at the ConvNeXt stages. This module first performs a two-step alignment on the CLIP features to ensure architectural compatibility: bilinear upsampling expands the spatial resolution, while a $1 \times 1$ convolution projects the CLIP latent channels into the corresponding ConvNeXt stage’s dimension. These aligned features are then integrated using a learnable spatial-adaptive weighting mechanism to generate the enhanced multi-scale features. In this fusion, $\hat{F}_l$ denotes the enhanced multi-scale feature for the $l$-th stage of the ConvNeXt encoder, and $\alpha_l$ is a learnable weight for the $l$-th stage’s fusion that dynamically adjusts the relative contribution of the structural features from ConvNeXt and the semantic priors from CLIP. $\mathrm{Up}(\cdot)$ denotes the upsampling operation, whose factor differs across the ConvNeXt stages: at the $l$-th stage, it upsamples a feature map of spatial size $h \times w$ (the CLIP patch grid) to the target resolution $H_l \times W_l$ (the resolution of stage $l$). $V_k$ denotes the original feature map extracted from the $k$-th selected layer of the CLIP Transformer encoder. The layer index $k$ is tied to the stage index $l$, establishing a direct layer-to-stage correspondence between the $k$-th selected layer of CLIP and the $l$-th stage of the ConvNeXt backbone. This symmetrical mapping ensures that both the localized structural details and the global semantic information are aligned and fused at homologous levels of their respective hierarchies. Notably, in stages where the spatial resolution or channel dimension already matches between the two encoders, the corresponding adjustment operation is bypassed, allowing direct weighted fusion that preserves the original feature integrity. Additionally, $\mathrm{Conv}_{1 \times 1}(\cdot)$ denotes a $1 \times 1$ convolution layer employed for channel dimension projection.

Finally, the resulting enhanced multi-scale features are propagated as inputs to the subsequent ConvNeXt stage. Through this iterative refinement of representations, the network continuously enriches the feature hierarchy, culminating in high-quality multi-scale feature maps that are well-optimized for dense prediction.
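To make the alignment-and-fusion step concrete, the following NumPy sketch upsamples a CLIP feature map bilinearly, projects its channels with a $1 \times 1$ convolution (written as a per-pixel matrix product), and blends it with a ConvNeXt feature. The convex combination `alpha * conv + (1 - alpha) * clip` is an assumption made for illustration, since the exact weighting form is not recoverable here; `bilinear_resize`, `fuse_stage`, and all shapes are hypothetical names and values.

```python
import numpy as np


def bilinear_resize(x, out_h, out_w):
    """Resize x of shape (h, w, c) to (out_h, out_w, c), half-pixel centres."""
    h, w, _ = x.shape
    ys = np.clip((np.arange(out_h) + 0.5) * h / out_h - 0.5, 0, h - 1)
    xs = np.clip((np.arange(out_w) + 0.5) * w / out_w - 0.5, 0, w - 1)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)
    x1 = np.minimum(x0 + 1, w - 1)
    wy = (ys - y0)[:, None, None]   # vertical interpolation weights
    wx = (xs - x0)[None, :, None]   # horizontal interpolation weights
    top = x[y0][:, x0] * (1 - wx) + x[y0][:, x1] * wx
    bottom = x[y1][:, x0] * (1 - wx) + x[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy


def fuse_stage(conv_feat, clip_feat, proj, alpha):
    """Blend a ConvNeXt feature (H_l, W_l, C_l) with a CLIP feature (h, w, D).

    proj has shape (D, C_l) and plays the role of the 1x1 convolution.
    alpha may be a scalar or an (H_l, W_l, 1) map; the convex blend is an
    assumed form of the learnable weighting, not the paper's formula.
    """
    out_h, out_w, _ = conv_feat.shape
    aligned = bilinear_resize(clip_feat, out_h, out_w) @ proj
    return alpha * conv_feat + (1.0 - alpha) * aligned


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    conv_feat = rng.normal(size=(48, 48, 192))
    clip_feat = rng.normal(size=(24, 24, 512))
    proj = rng.normal(size=(512, 192)) * 0.01
    print(fuse_stage(conv_feat, clip_feat, proj, alpha=0.7).shape)
```

When the two resolutions already agree, `bilinear_resize` reduces to the identity, which mirrors the bypass described above.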

3.2. Boundary and Cost Volume Generation

In this section, we detail the generation of explicit boundary maps from multi-scale features, as well as the construction of cost volumes from CLIP features, both of which serve as foundational primitives to guide the subsequent constrained decoding process.

To begin with, we employ a lightweight boundary prediction head to predict the corresponding boundary map from the extracted multi-scale features. Specifically, to maintain a lightweight design, $1 \times 1$ convolutions are utilized to project all hierarchical features to a uniform channel dimension $C_b$, significantly cutting down the floating-point operations. The projected features $P_l$ at the $l$-th stage are formulated as follows:

$$P_l = \mathrm{Conv}_{1 \times 1}^{C_l \rightarrow C_b}(\hat{F}_l)$$

where $\hat{F}_l$ is the multi-scale feature of the $l$-th stage and $\mathrm{Conv}_{1 \times 1}^{C_l \rightarrow C_b}$ denotes the $1 \times 1$ convolution operation with input channels $C_l$ (the original channel depth of the $l$-th stage) and output channels $C_b$ (the unified dimension for the boundary head).

Subsequently, we perform a hierarchical top-down fusion starting from the deepest stage (Stage 4) towards the highest resolution level. To provide a clearer visualization of the architectural flow, the details of this fusion process are illustrated in Figure 3. Let $P_4$ be the initial fusion feature, denoted as $G_4$. For each subsequent layer $l \in \{3, 2, 1\}$, the fused feature from the previous level $G_{l+1}$ is bilinearly upsampled by a factor of 2 to match the spatial resolution of the current stage feature $P_l$. These features are then concatenated along the channel dimension, followed by a convolution layer for information aggregation to generate the current level’s fused feature $G_l$. The process is formulated as:

$$G_l = \mathrm{Conv}\big(\mathrm{Concat}(\mathrm{Up}_{2\times}(G_{l+1}),\, P_l)\big), \quad l = 3, 2, 1$$

Finally, based on the final fused feature $G_1$, we employ two cascaded transposed convolution layers to gradually restore the spatial resolution to match the original input image. Notably, this operation has an inherent smoothing effect that suppresses potential checkerboard artifacts arising from the upsampling process [42]. A convolution is then used to compress the channel dimension to 1, followed by a Sigmoid function to normalize the output into the range $[0, 1]$. This yields the final boundary probability map $B \in \mathbb{R}^{H \times W}$, which is of the same resolution as the input image:

$$B = \sigma\big(\mathrm{Conv}(\mathrm{TConv}(\mathrm{TConv}(G_1)))\big)$$

where $\sigma$ denotes the Sigmoid activation function, and $\mathrm{TConv}$ denotes a transposed convolution operation; the two cascaded layers together achieve a $4\times$ upsampling. The value of $B$ at each pixel represents the probability of that pixel belonging to an object boundary. This boundary probability map serves as an explicit geometric constraint to guide the semantic flow during the subsequent decoding process, forcing the network to focus on the reconstruction of boundary regions.
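The data flow of the boundary head can be traced with a short NumPy sketch. It is a simplification under stated assumptions rather than the authors' implementation: every convolution is replaced by a $1 \times 1$ projection (a per-pixel matrix product), and nearest-neighbour repetition stands in for both the bilinear upsampling and the two stride-2 transposed convolutions. The names `boundary_head`, `proj_w`, `fuse_w`, and `out_w` are hypothetical.

```python
import numpy as np


def up2(x):
    """Nearest-neighbour 2x upsampling of an (H, W, C) map."""
    return x.repeat(2, axis=0).repeat(2, axis=1)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def boundary_head(feats, proj_w, fuse_w, out_w):
    """Top-down boundary head.

    feats:  four maps (H/4 .. H/32, shallow to deep), each (H_l, W_l, C_l)
    proj_w: four matrices (C_l, C_b) projecting to a common width C_b
    fuse_w: three matrices (2 * C_b, C_b) applied after concatenation
    out_w:  matrix (C_b, 1) producing the single boundary channel
    """
    projected = [f @ w for f, w in zip(feats, proj_w)]
    fused = projected[3]                      # start at the deepest stage
    for l in (2, 1, 0):                       # move towards high resolution
        merged = np.concatenate([up2(fused), projected[l]], axis=-1)
        fused = merged @ fuse_w[l]
    fused = up2(up2(fused))                   # stand-in for two transposed convs
    return sigmoid(fused @ out_w)[..., 0]     # (H, W) boundary probabilities


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    sizes, widths, c_b = [16, 8, 4, 2], [8, 16, 32, 64], 4
    feats = [rng.normal(size=(s, s, c)) for s, c in zip(sizes, widths)]
    proj_w = [rng.normal(size=(c, c_b)) * 0.1 for c in widths]
    fuse_w = [rng.normal(size=(2 * c_b, c_b)) * 0.1 for _ in range(3)]
    out_w = rng.normal(size=(c_b, 1)) * 0.1
    print(boundary_head(feats, proj_w, fuse_w, out_w).shape)
```

With stage-1 features at one quarter of the input resolution, the two final 2× steps bring the map back to the full $H \times W$ grid.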

Following previous open-vocabulary segmentation methods [17,18], we generate the image–text cost volume from the output of CLIP’s image encoder. The output feature map $F_v \in \mathbb{R}^{h \times w \times D}$ serves as the final aligned visual representation, where $D$ represents the CLIP latent dimension. Meanwhile, given an arbitrary set of $C$ category names, we adopt the prompt template strategy to generate textual descriptions for each category. Feeding these descriptions into the CLIP text encoder yields the text embeddings $E \in \mathbb{R}^{C \times P \times D}$, where $P$ denotes the number of prompt templates per category.

To compute the similarity between dense visual features and text embeddings, we calculate the cosine similarity between $F_v$ and $E$ at each spatial location $(x, y)$:

$$S(x, y, c, p) = \frac{F_v(x, y) \cdot E(c, p)}{\lVert F_v(x, y) \rVert \, \lVert E(c, p) \rVert}$$

where $c$ and $p$ represent the indices of the category and the prompt template, respectively. Consequently, we obtain the initial multi-template cost volume $S \in \mathbb{R}^{h \times w \times C \times P}$. To condense this representation for the subsequent decoding layers, a convolution layer is employed to aggregate information along the template dimension, projecting it into a structured feature representation $F_{cv} \in \mathbb{R}^{h \times w \times C \times D_{cv}}$, where $D_{cv}$ denotes the channel dimension of the cost volume feature, and $C$ denotes the number of open-vocabulary categories.
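The cost volume computation amounts to a normalized dot product between every pixel feature and every prompt embedding. The NumPy sketch below illustrates this; the function names, the toy shapes, and the choice to model the template aggregation as a single linear map over the template axis are assumptions for illustration only.

```python
import numpy as np


def cost_volume(visual, text, eps=1e-8):
    """Cosine similarity between pixel features and prompt embeddings.

    visual: (h, w, D) dense image features
    text:   (C, P, D) embeddings for C categories and P templates
    returns (h, w, C, P)
    """
    v = visual / (np.linalg.norm(visual, axis=-1, keepdims=True) + eps)
    t = text / (np.linalg.norm(text, axis=-1, keepdims=True) + eps)
    return np.einsum("hwd,cpd->hwcp", v, t)


def aggregate_templates(cost, weight):
    """Project the template axis P to D_cv channels with a (P, D_cv) matrix.

    This treats the templates as input channels of a 1x1 convolution, which
    is an assumed reading of the aggregation layer.
    """
    return np.einsum("hwcp,pd->hwcd", cost, weight)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    visual = rng.normal(size=(24, 24, 512))
    text = rng.normal(size=(150, 80, 512))
    cost = cost_volume(visual, text)
    print(cost.shape, aggregate_templates(cost, rng.normal(size=(80, 128))).shape)
```

Because both inputs are L2-normalized first, each entry lies in $[-1, 1]$, and a pixel feature that is a positive multiple of a prompt embedding scores 1 for that category and template.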

3.3. Boundary-Constrained Decoding Strategy

In semantic segmentation, boundary information plays a critical role in improving the discriminability of object contours. In open-vocabulary scenarios, however, using boundary information to dynamically steer feature fusion during decoding remains largely unexplored. Therefore, in addition to incorporating the multi-scale features extracted in the previous section, we propose a boundary-constrained decoding strategy that converts the explicitly predicted boundary map into spatial attention. This attention guides the decoder’s focus toward boundary regions during decoding, thus achieving accurate boundary reconstruction.

The detailed architecture of our boundary-constrained decoder is visualized in Figure 4. The decoding process commences from the most semantically enriched image–text alignment feature $F_{cv}$, progressively restoring the spatial resolution through multiple decoding stages. Formally, let $X_i \in \mathbb{R}^{h_i \times w_i \times d_i}$ denote the input feature at the $i$-th decoding stage, where $i \in \{1, 2, 3\}$. For the first stage ($i = 1$), $X_1$ is initialized from the cost volume feature $F_{cv}$. Across the three stages, the spatial resolution is progressively restored such that $h_{i+1} = 2h_i$ and $w_{i+1} = 2w_i$, while the channel dimension is halved at each subsequent stage: $d_{i+1} = d_i / 2$.

To extract fine-grained textures and high-frequency geometric details of object boundaries, we first employ a depthwise separable convolution to perform independent spatial filtering on the input features. This operation decouples standard convolution into depthwise and pointwise components, significantly reducing computational overhead while forcing each channel to focus on local spatial structures:

$$\tilde{X}_i = \mathrm{DWConv}(X_i)$$

where $X_i$ is the input feature of the $i$-th decoding stage, $\tilde{X}_i$ represents the result after depthwise separable convolution, and $\mathrm{DWConv}(\cdot)$ denotes the depthwise separable convolution operation. Experiments on the kernel size setting can be found in Section 4.3.3.

Subsequently, to enhance semantic representation while maintaining the spatial structure, a Feed-Forward Network (MLP) is utilized for non-linear cross-channel information fusion. The MLP consists of two linear transformation layers and a GELU activation function. Specifically, the first linear layer maps the features into a higher-dimensional space with non-linear activation, followed by a second linear layer that restores the original dimensions:

$$M_i = W_2\, \delta(W_1 \tilde{X}_i)$$

where $M_i$ denotes the output feature map of the MLP module, $W_1$ and $W_2$ represent the linear transformations, and $\delta$ denotes the GELU activation function.
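As a minimal sketch of these two steps, the NumPy code below implements a depthwise convolution (one spatial filter per channel), a pointwise $1 \times 1$ mixing step, and a two-layer MLP with GELU. The zero padding, stride of 1, the tanh approximation of GELU, and the omission of biases and normalization are simplifying assumptions; all function names are hypothetical.

```python
import numpy as np


def depthwise_conv(x, kernels):
    """Filter each channel of x (H, W, C) with its own (k, k) kernel.

    kernels has shape (k, k, C) with odd k; zero padding keeps H and W.
    """
    k = kernels.shape[0]
    pad = k // 2
    padded = np.pad(x, ((pad, pad), (pad, pad), (0, 0)))
    height, width, _ = x.shape
    out = np.zeros(x.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + height, dx:dx + width, :] * kernels[dy, dx, :]
    return out


def depthwise_separable_conv(x, dw_kernels, pw_weights):
    """Depthwise spatial filtering followed by pointwise (1x1) channel mixing."""
    return depthwise_conv(x, dw_kernels) @ pw_weights


def gelu(x):
    """Tanh approximation of the GELU activation."""
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x ** 3)))


def mlp(x, w1, w2):
    """Expand channels with w1, apply GELU, project back with w2."""
    return gelu(x @ w1) @ w2


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(size=(12, 12, 8))
    dw = rng.normal(size=(3, 3, 8))
    pw = rng.normal(size=(8, 8))
    y = depthwise_separable_conv(x, dw, pw)
    print(mlp(y, rng.normal(size=(8, 32)), rng.normal(size=(32, 8))).shape)
```

The saving comes from the factorization: for a 3 × 3 kernel and 8 channels, the separable form uses 3·3·8 + 8·8 = 136 weights, compared with 3·3·8·8 = 576 for a standard convolution.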

Simultaneously, the original boundary probability map $B$ is adjusted to the resolution of the current decoding stage via downsampling. It is then transformed into a spatial attention weight map $A_i$ through a convolution and Sigmoid normalization:

$$A_i = \sigma\big(\mathrm{Conv}(\mathrm{Down}_i(B))\big)$$

where $\sigma$ denotes the Sigmoid function and $\mathrm{Down}_i(\cdot)$ represents the downsampling operation. A different downsampling factor is adopted at each stage: at the $i$-th stage, it downsamples $H$ and $W$ to the resolutions $h_i$ and $w_i$ of that stage’s input feature, respectively.

This attention map is subsequently broadcast and applied to the original input features via element-wise multiplication within a residual connection. This mechanism implements a selective gating strategy that constrains the semantic flow using boundary priors. Here, $Z_i$ denotes the boundary-constrained gated feature map, ⊙ denotes element-wise multiplication, and $\mathrm{Broadcast}(\cdot)$ signifies the dimension broadcasting operation that expands $A_i$ across the channel dimension.

Subsequently, the multi-scale encoder feature is first aligned in resolution and channel dimension to produce $F_i^{a}$. This feature is then concatenated with the upsampled gated representation and fused via a convolution, achieving an effective fusion of local spatial details and global semantic context:

$$X_{i+1} = \mathrm{Conv}\big(\mathrm{Concat}(\mathrm{Up}(Z_i),\, F_i^{a})\big)$$

where $\mathrm{Up}(\cdot)$ denotes bilinear interpolation upsampling with a factor of 2 ($2\times$ spatial upsampling), and $\mathrm{TConv}(\cdot)$, used when aligning the encoder feature, denotes a transposed convolution with stride = 2 that also performs $2\times$ upsampling.
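One decoding stage can be sketched end to end in NumPy as follows. Several choices here are assumptions made only to obtain a runnable example: the gate is written as `x + m * a` (input plus attention-weighted MLP output), average pooling is used for downsampling, a scalar weight and bias stand in for the convolution on the single-channel boundary map, nearest-neighbour repetition replaces bilinear upsampling, and the fusing convolution is a $1 \times 1$ projection. The function names are hypothetical.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def avg_pool(boundary, factor):
    """Downsample an (H, W) map by an integer factor using block averages."""
    height, width = boundary.shape
    blocks = boundary.reshape(height // factor, factor, width // factor, factor)
    return blocks.mean(axis=(1, 3))


def boundary_attention(boundary, factor, weight=1.0, bias=0.0):
    """Turn the full-resolution boundary map into a stage-level attention map."""
    return sigmoid(weight * avg_pool(boundary, factor) + bias)


def gated_stage(x, m, boundary, factor, enc, fuse_w):
    """One boundary-constrained decoding stage (illustrative form only).

    x:      stage input (h, w, d)
    m:      MLP output for the same stage (h, w, d)
    enc:    encoder feature already aligned to (2h, 2w, d_enc)
    fuse_w: (d + d_enc, d_out) matrix acting as the fusing 1x1 convolution
    """
    attention = boundary_attention(boundary, factor)[..., None]  # broadcast over channels
    gated = x + m * attention                                    # assumed residual gate
    upsampled = gated.repeat(2, axis=0).repeat(2, axis=1)        # 2x spatial upsampling
    return np.concatenate([upsampled, enc], axis=-1) @ fuse_w


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(size=(4, 4, 8))
    m = rng.normal(size=(4, 4, 8))
    boundary = rng.uniform(size=(64, 64))
    enc = rng.normal(size=(8, 8, 8))
    print(gated_stage(x, m, boundary, 16, enc, rng.normal(size=(16, 4))).shape)
```

In this form, locations with high boundary probability let more of the refined signal through, while the residual path keeps the original stage input intact everywhere.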

These operations are executed iteratively across the three decoding stages ($i \in \{1, 2, 3\}$), where feature resolution and semantic discriminability are concurrently enhanced. Upon completion of these stages, the top-layer feature $X_{\text{out}}$ (the output of the third stage) reaches half of the original image resolution. Finally, a lightweight segmentation head $\mathrm{Head}(\cdot)$ maps these features into the pixel-level semantic prediction map $\hat{Y}$:

$$\hat{Y} = \mathrm{Head}(X_{\text{out}})$$

3.4. Multi-Task Hybrid Loss

To facilitate end-to-end optimization, we formulate a multi-task hybrid loss function that jointly supervises semantic region generation and geometric boundary delineation. The overarching objective is to harmonize semantic fidelity and boundary crispness, fostering a mutually beneficial learning paradigm between the two sub-tasks.

For region-level semantic consistency, the segmentation prediction is penalized by a combined loss $\mathcal{L}_{seg}$. Specifically, a standard pixel-wise cross-entropy loss $\mathcal{L}_{ce}$ is applied to secure local classification accuracy. Concurrently, a Dice loss $\mathcal{L}_{dice}$ is integrated to maximize the overlap between the prediction and the ground truth. This safeguards the topological completeness of the extracted regions and mitigates the severe foreground–background class imbalance problem. The compound segmentation loss $\mathcal{L}_{seg}$ combines these two terms, both computed between $\hat{Y}$ and $Y$, which represent the predicted segmentation mask and the corresponding ground-truth label, respectively.
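For readers who want to see the two region-level terms side by side, here is a small NumPy sketch of a pixel-wise cross-entropy and a per-class soft Dice loss. Summing the two with unit weights in `segmentation_loss` is an assumption, as are the smoothing constants and the function names; the sketch takes softmax probabilities as input rather than logits.

```python
import numpy as np


def cross_entropy(probs, labels, eps=1e-8):
    """Mean negative log-probability of the true class.

    probs: (H, W, C) softmax outputs; labels: (H, W) integer class ids.
    """
    height, width = labels.shape
    rows = np.arange(height)[:, None]
    cols = np.arange(width)[None, :]
    picked = probs[rows, cols, labels]
    return float(-np.log(picked + eps).mean())


def dice_loss(probs, labels, eps=1e-6):
    """One minus the soft Dice coefficient, averaged over classes."""
    onehot = np.eye(probs.shape[-1])[labels]            # (H, W, C)
    intersection = (probs * onehot).sum(axis=(0, 1))
    denominator = probs.sum(axis=(0, 1)) + onehot.sum(axis=(0, 1))
    dice = (2.0 * intersection + eps) / (denominator + eps)
    return float(1.0 - dice.mean())


def segmentation_loss(probs, labels):
    """Unit-weight sum of both terms (the weighting is an assumption)."""
    return cross_entropy(probs, labels) + dice_loss(probs, labels)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    labels = rng.integers(0, 3, size=(8, 8))
    logits = rng.normal(size=(8, 8, 3))
    probs = np.exp(logits) / np.exp(logits).sum(axis=-1, keepdims=True)
    print(segmentation_loss(probs, labels))
```

The cross-entropy term scores each pixel independently, whereas the Dice term is computed per class over the whole map, so a small region contributes as much as a large one.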

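Meanwhile, to sharpen the object contours and enforce boundary continuity, we impose a dedicated boundary loss $\mathcal{L}_{bd}$. Given that boundary pixels constitute only a fraction of the entire image, a weighted binary cross-entropy loss is deployed to prevent the optimization from being dominated by massive background pixels:

$$\mathcal{L}_{bd} = -\frac{1}{N} \sum_{n=1}^{N} \Big[ w_{pos}\, B^{gt}_n \log B_n + w_{neg}\, (1 - B^{gt}_n) \log (1 - B_n) \Big]$$

where $B_n$ is the predicted boundary probability at pixel $n$, $B^{gt}$ denotes the binary edge map derived from the ground-truth label $Y$, and $N$ is the total pixel count. The balancing weights $w_{pos}$ and $w_{neg}$ ensure that the network remains highly sensitive to sparse boundary signals.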
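The boundary supervision can be illustrated with a NumPy sketch that derives a binary edge map from a label map and evaluates a weighted binary cross-entropy against it. The edge rule (a pixel is an edge if a horizontal or vertical neighbour carries a different label) and the inverse-frequency weights in `balance_weights` are assumptions for illustration; how the edge map and the weights are actually obtained is not specified in this section.

```python
import numpy as np


def edge_map(labels):
    """Mark pixels that differ from a horizontal or vertical neighbour."""
    edge = np.zeros(labels.shape, dtype=bool)
    vertical = labels[1:, :] != labels[:-1, :]
    horizontal = labels[:, 1:] != labels[:, :-1]
    edge[1:, :] |= vertical      # lower pixel of each differing pair
    edge[:-1, :] |= vertical     # upper pixel of each differing pair
    edge[:, 1:] |= horizontal    # right pixel of each differing pair
    edge[:, :-1] |= horizontal   # left pixel of each differing pair
    return edge.astype(float)


def balance_weights(target):
    """Hypothetical inverse-frequency weights (w_pos, w_neg)."""
    positive_fraction = float(target.mean())
    return 1.0 - positive_fraction, positive_fraction


def weighted_bce(pred, target, w_pos, w_neg, eps=1e-8):
    """Weighted binary cross-entropy averaged over all pixels."""
    pred = np.clip(pred, eps, 1.0 - eps)
    loss = -(w_pos * target * np.log(pred)
             + w_neg * (1.0 - target) * np.log(1.0 - pred))
    return float(loss.mean())


if __name__ == "__main__":
    labels = np.zeros((8, 8), dtype=int)
    labels[2:6, 2:6] = 1
    target = edge_map(labels)
    w_pos, w_neg = balance_weights(target)
    print(weighted_bce(np.full((8, 8), 0.5), target, w_pos, w_neg))
```

With inverse-frequency weights, the rarer class (usually the boundary pixels) receives the larger weight, so a predictor that outputs "no boundary" everywhere is penalized heavily.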

By aggregating these task-specific constraints, the total loss function for training our CLIP-HBD is defined as:

$$\mathcal{L}_{total} = \mathcal{L}_{seg} + \lambda\, \mathcal{L}_{bd}$$

where $\mathcal{L}_{seg}$ is the segmentation loss, $\mathcal{L}_{bd}$ is the boundary loss, and $\lambda$ is a hyperparameter balancing the primary segmentation task and the auxiliary boundary detection task. This joint optimization strategy avoids the limitations of a single supervision signal, leveraging both semantic and geometric cues to drive the network toward learning more robust and spatially precise feature representations. A detailed analysis of this hybrid loss is provided in Section 4.3.4.