1. Introduction

Popular music lyrics occupy a unique position at the intersection of linguistic creativity and mass culture. Unlike many other written genres, commercially successful song lyrics must simultaneously satisfy aesthetic, affective, and formal constraints, conveying emotional content within the phonological, rhythmic, and structural demands imposed by musical composition. These competing pressures make lyrics a particularly rich corpus for examining the statistical and linguistic regularities that emerge at the boundary between constrained and expressive language use [1,2]. Yet despite the global reach of streaming platforms and the extraordinary scale of the data they generate, quantitative linguistic study of popular lyrics has remained largely fragmented along disciplinary lines: music information retrieval (MIR), computational linguistics, and the physics of complex systems have each developed analytical traditions that rarely speak to one another [3,4,5].

The computational analysis of sentiment and emotion in song lyrics has a substantial history, much of it focused on automatic mood classification within music information retrieval and recommendation systems [6,7]. Early approaches relied on bag-of-words representations and domain-specific lexicons, with VADER (Valence Aware Dictionary and sEntiment Reasoner [8]) becoming a widely used baseline due to its strong performance on short, informal social-media text. However, VADER was constructed exclusively from English-language data, meaning that its application to non-English lyrics is methodologically suspect: the lexicon fails to recognize sentiment-bearing vocabulary in other languages, typically producing artificially negative scores when tokens are simply unlisted rather than genuinely negative. This limitation is rarely acknowledged in the music sentiment literature, which has largely conducted analyses within single-language corpora [1,3], often without explicitly addressing potential cross-linguistic measurement artifacts.

The development of multilingual transformer models, particularly multilingual BERT variants that can be fine-tuned for sentiment classification, has made it possible to revisit cross-linguistic comparisons with considerably greater methodological rigor [9]. Pre-trained on text from over 100 languages and fine-tuned for star-rating prediction on product reviews spanning English, Dutch, German, French, Italian, and Spanish, multilingual BERT [10,11] can produce sentiment estimates that are genuinely comparable across languages, rather than reflecting the coverage asymmetries of English-centric lexicons. An open empirical question is how much of the cross-linguistic sentiment variation reported in prior work survives when an equitable multilingual model is applied, with direct implications for theories linking language typology to the emotional character of popular music.

In parallel, a largely independent line of research has applied tools from statistical physics to the analysis of natural language structure. Zipf’s law, the observation that word frequency is approximately inversely proportional to word rank, has been documented extensively across multiple languages [12,13,14], and its behavior in constrained registers such as song lyrics has received growing but still limited attention [15]. At the same time, detrended fluctuation analysis (DFA [16]) and its multifractal extension (MF-DFA [17]) have revealed persistent long-range correlations in literary texts [18,19,20], indicating that natural language carries structured temporal memory at multiple scales [4]. Whether these properties extend to the highly constrained and repetitive register of popular song lyrics remains unexplored.

The present study brings these three strands of inquiry together in an integrated cross-linguistic analysis of Spotify chart lyrics across English, Spanish, and German. Using a corpus of 2023 unique tracks drawn from 54 weekly Top 200 chart snapshots (2019–2021), we address four interrelated questions: (1) Do multilingual BERT-based sentiment models produce cross-linguistically consistent estimates compared to the English-centric VADER lexicon [8]? (2) Do lyric word frequency distributions adhere to Zipf’s law [12,13,21], and do they vary in slope and lexical richness across languages? (3) Do lyrics exhibit long-range correlations and multifractal scaling [16,17,18], and are these properties shared across the three languages examined or language-specific? (4) How concentrated is the within-Top-200 streaming distribution, and does this within-chart concentration vary across English, Spanish, and German language markets?

By combining transformer-based sentiment analysis with complexity science methods in a single large-scale cross-linguistic corpus, this study provides a methodologically rigorous account of the statistical properties of commercially successful song lyrics, with implications for computational musicology, cross-linguistic NLP, and the broader study of language use under formal compositional constraints.

3. Materials and Methods

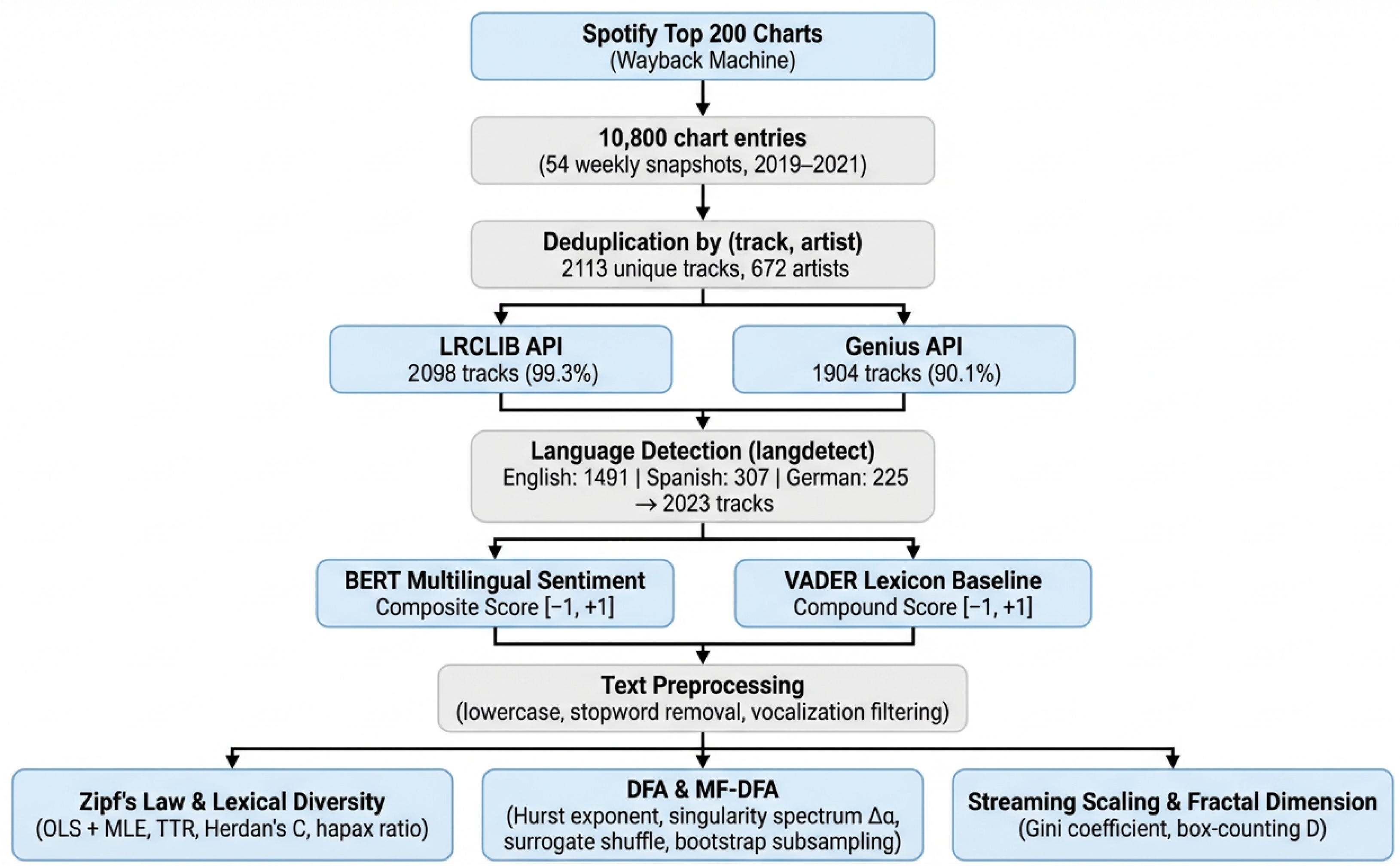

Figure 1 provides an overview of the complete analytical pipeline, from data collection through sentiment and complexity analyses.

3.1. Data Collection

Chart performance data were obtained from Spotify’s historical weekly Top 200 charts via the Wayback Machine internet archive (web.archive.org). The Common Data Index (CDX) API was queried for archived snapshots of the spotifycharts.com download endpoints across seven regional markets: Global, United States, United Kingdom, Germany, France, Spain, and Poland. The collection targeted the period 2019–2023; however, no usable archival snapshots of the spotifycharts.com download endpoints were retrieved beyond 2021. The retrieved dataset therefore effectively spans 2019–2021 and comprises 54 weekly chart snapshots distributed unevenly across three years: 2019 ($n = 31$ snapshots, 6200 chart entries), 2020 ($n = 17$ snapshots, 3400 entries), and 2021 ($n = 6$ snapshots, 1200 entries), yielding 10,800 chart entries in total (200 entries per snapshot). All subsequent references to the temporal coverage of the corpus refer to this 2019–2021 effective window. Each entry contained the track name, artist name, chart position (1–200), and weekly stream count.

To construct a corpus of unique songs, duplicate entries arising from tracks appearing across multiple weekly snapshots were removed on the basis of the (track name, artist name) combination, retaining the first occurrence. This yielded 2113 unique tracks by 672 distinct artists, which constitute the deduplicated full corpus. The analytical subset of 2023 tracks used in all sentiment and complexity analyses corresponds to tracks classified as English, Spanish, or German by the language detection step (Section 3.3). The remaining 90 tracks comprised songs in other detected languages or tracks classified as unknown because lyrics were not retrieved. Throughout the remainder of this paper, “deduplicated full corpus” refers to the 2113 tracks and “analytical corpus” to the 2023 English, Spanish, and German tracks. The retained stream count for each track reflects a single weekly snapshot rather than a cumulative, peak, or average popularity measure; stream counts in the deduplicated full corpus ranged from 284,556 to 65,873,080.
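The deduplication rule described above (keep the first chart entry for each track name and artist name pair) can be sketched as follows. This is a minimal illustration rather than the authors’ code: the dictionary keys (`track`, `artist`, `week`, `streams`) and the toy entries are hypothetical, and the input is assumed to be ordered chronologically.

```python
def deduplicate_first(entries):
    """Keep the first entry seen for each (track, artist) pair.

    `entries` is assumed to be a chronologically ordered list of dicts;
    the field names are hypothetical and chosen for illustration only.
    """
    seen = set()
    unique = []
    for entry in entries:
        key = (entry["track"], entry["artist"])
        if key not in seen:
            seen.add(key)
            unique.append(entry)
    return unique


# Toy chart data: "Song A" appears in two weekly snapshots.
chart = [
    {"track": "Song A", "artist": "X", "week": "2019-01-04", "streams": 900},
    {"track": "Song B", "artist": "Y", "week": "2019-01-04", "streams": 500},
    {"track": "Song A", "artist": "X", "week": "2019-01-11", "streams": 1200},
]
unique_tracks = deduplicate_first(chart)
```

Because only the first occurrence is retained, the stream count kept for "Song A" is the one from its earliest snapshot, which is why the retained counts are single-week values rather than peak or cumulative figures.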

3.2. Lyrics Retrieval

Lyrics were retrieved from two independent sources to support both lexicon-based and transformer-based sentiment analyses. The primary source was LRCLIB (lrclib.net), a community-maintained lyrics database accessed via its public REST API without authentication. For each of the 2113 unique tracks, a search query was issued using track name and artist name. LRCLIB returned matching lyrics for 2098 tracks (99.29% of the deduplicated full corpus), while 6 tracks were identified as instrumental and 9 were not found. Median lyrics length was 2047 characters (range: 46–7772 characters). Only one track had lyrics shorter than 100 characters.

As a secondary source, the Genius API (genius.com) was queried independently for the same 2113 tracks. Genius returned lyrics for 1904 tracks (90.11%), with 209 tracks unmatched. The Genius-sourced lyrics served as the basis for a lexicon-based sentiment baseline using the VADER sentiment analyzer [8], while the LRCLIB-sourced lyrics were reserved for the transformer-based sentiment analysis described in Section 3.6. Delays of 0.3 s per request (LRCLIB) and 0.5 s per request (Genius) were imposed to comply with API usage policies.

3.3. Language Detection

Language identification was performed on the LRCLIB-sourced lyrics using the langdetect Python library, version 1.0.9 [30], which implements a Naïve Bayes classifier trained on Wikipedia character n-gram profiles. Prior to detection, lyrics were preprocessed by removing common non-lexical vocalizations (e.g., “uh,” “yeah,” “oh no”), non-alphabetic characters, and excess whitespace. The detector’s random seed was fixed to ensure reproducibility.

A total of 18 distinct languages were identified across the corpus. English was the dominant language ($n = 1491$; 70.56%), followed by Spanish ($n = 307$; 14.53%) and German ($n = 225$; 10.65%). These three languages jointly accounted for 2023 tracks (95.74% of the deduplicated full corpus). The remaining 90 tracks comprised 75 tracks assigned to 15 additional detected languages, each represented by fewer than 30 tracks, and 15 tracks (0.71%) classified as “unknown” because no lyrics were retrieved.

3.4. Baseline Sentiment Analysis (VADER)

A lexicon-based sentiment baseline was established using the VADER (Valence Aware Dictionary and sEntiment Reasoner) sentiment analyzer [8], applied to Genius-sourced lyrics. VADER computes four scores for each text: a positive proportion (pos), negative proportion (neg), neutral proportion (neu), and a normalized compound score ranging from $-1$ (most negative) to $+1$ (most positive). Following established practice in music sentiment research, songs were classified as positive if pos > neg, and negative otherwise. This baseline provides a reference against which the transformer-based BERT analysis (Section 3.6) is compared.

3.5. Data Integration and Language Filtering

The VADER sentiment scores derived from Genius lyrics (Section 3.4) were integrated with the LRCLIB-based lyrics corpus (Section 3.2) via a two-stage encoding-normalized merge on (track name, artist name). The first stage applied Unicode NFKD decomposition with diacritical mark removal to both datasets; the second stage stripped all non-alphanumeric characters to resolve residual mismatches arising from character encoding discrepancies between data sources (e.g., “ROSALÍA” vs. “ROSAL?A”). This procedure matched 1833 of the 1904 Genius-scored tracks (96.27%); the remaining 71 tracks could not be linked due to track-level mismatches across sources.
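The two-stage key normalization can be illustrated with the sketch below. It is one plausible reading, not the authors’ implementation: the function names are hypothetical, and the second stage is assumed to keep only ASCII letters and digits, so that an accented character and its mis-encoded replacement (such as “?”) both disappear from the key.

```python
import unicodedata


def stage1_key(text):
    """Stage 1: NFKD-decompose, drop combining marks, and casefold."""
    decomposed = unicodedata.normalize("NFKD", text)
    without_marks = "".join(
        ch for ch in decomposed if not unicodedata.combining(ch)
    )
    return without_marks.casefold().strip()


def stage2_key(text):
    """Stage 2 (assumed): keep only ASCII letters and digits.

    Operating on the composed (NFC) form means an accented letter is
    dropped entirely, matching a source where it was replaced by debris.
    """
    composed = unicodedata.normalize("NFC", text).casefold()
    return "".join(ch for ch in composed if ch.isascii() and ch.isalnum())


def match_stage(left, right):
    """Return which stage links two names, or None if neither does."""
    if stage1_key(left) == stage1_key(right):
        return "stage1"
    if stage2_key(left) == stage2_key(right):
        return "stage2"
    return None


print(match_stage("Rosalía", "ROSALIA"))   # linked by diacritic removal
print(match_stage("ROSALÍA", "ROSAL?A"))   # linked only by the fallback
```

The fallback key is deliberately lossy, so it is applied only to records left unmatched by the first stage; a stricter pipeline would also compare the track-name key before accepting a stage-two artist match.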

For all subsequent analyses, the corpus was restricted to the three dominant languages: English ($n = 1491$), Spanish ($n = 307$), and German ($n = 225$), yielding an analytical subset of 2023 tracks (95.74% of the deduplicated full corpus). Of these, 1771 had VADER sentiment scores (English: 1312; Spanish: 246; German: 213).

3.6. Multilingual BERT Sentiment Scoring

To address the inherent limitations of applying the English-centric VADER lexicon to multilingual lyrics, a transformer-based sentiment analysis was conducted using the nlptown/bert-base-multilingual-uncased-sentiment model [9]. This model, fine-tuned for multilingual sentiment classification on product reviews across six languages (including English, Spanish, and German), outputs a probability distribution over five sentiment classes (1-star to 5-star). Sentiment was scored on the original, unprocessed lyrics rather than the stopword-removed text used in downstream lexical analyses, as BERT’s contextual attention mechanism relies on grammatical structure and function words for accurate inference [10].

Two continuous sentiment indices were derived from the five-class probability vector. The BERT Composite Score was computed as the dot product of the probability vector with a fixed five-element weight vector, yielding a bounded, signed score analogous to VADER’s compound score, where negative values indicate negative sentiment and positive values indicate positive sentiment. The BERT Weighted Average was computed as the dot product with a second weight vector, divided by 5, producing a normalized score. Tracks with fewer than 50 words in the original lyrics were excluded to ensure sufficient textual input for reliable transformer inference, and input texts were truncated at the model’s maximum context window of 512 tokens; for longer songs, only the opening portion was scored. To quantify the impact of this truncation, a robustness check was performed in which every track was additionally scored by splitting its full lyrics into ≤500-token chunks, scoring each chunk independently, and averaging the per-chunk composite scores; results are reported in Section 4.3.1. All inference was performed in evaluation mode (no gradient computation) using PyTorch 2.6.0+cu124 and Hugging Face Transformers 4.57.3, with automatic device selection.
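The following sketch shows how a five-class probability vector can be collapsed into the two indices, and how chunk-level composites can be averaged for the truncation check. The weight vectors are assumptions chosen for illustration (a symmetric vector from -1 to 1 for the composite, and the star values 1 to 5 for the weighted average); the exact vectors used in the study are not reproduced here. The `score_chunk` argument is a hypothetical callable standing in for the model’s forward pass.

```python
# Assumed weights: symmetric around the 3-star class, giving a score in [-1, 1].
COMPOSITE_WEIGHTS = (-1.0, -0.5, 0.0, 0.5, 1.0)
# Assumed weights: the star values themselves, later divided by 5.
STAR_WEIGHTS = (1, 2, 3, 4, 5)


def weighted_score(probs, weights):
    """Dot product of a class-probability vector with a weight vector."""
    if len(probs) != len(weights):
        raise ValueError("probs and weights must have the same length")
    return sum(p * w for p, w in zip(probs, weights))


def composite(probs):
    """Signed index; negative values indicate negative sentiment."""
    return weighted_score(probs, COMPOSITE_WEIGHTS)


def weighted_average(probs):
    """Expected star rating divided by 5."""
    return weighted_score(probs, STAR_WEIGHTS) / 5.0


def chunk_tokens(tokens, max_len=500):
    """Split a token list into consecutive chunks of at most max_len."""
    return [tokens[i:i + max_len] for i in range(0, len(tokens), max_len)]


def chunk_averaged_composite(tokens, score_chunk, max_len=500):
    """Average the composite score over all chunks of one track.

    `score_chunk` is a hypothetical callable mapping a token chunk to a
    five-class probability vector (for example, a wrapped model call).
    """
    chunks = chunk_tokens(tokens, max_len)
    if not chunks:
        raise ValueError("cannot score an empty token list")
    scores = [composite(score_chunk(chunk)) for chunk in chunks]
    return sum(scores) / len(scores)
```

Averaging gives every chunk equal weight regardless of its length, so a short final chunk counts as much as a full one; weighting by chunk length would be a reasonable alternative design.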

A separate preprocessing step generated a cleaned lyrics column for use in the subsequent fractal and complexity analyses (Section 3.7). This cleaning involved lowercasing, removal of URLs and non-alphabetic characters (preserving language-specific accented characters), elimination of repeated word patterns, and removal of multilingual stopwords (English, Spanish, and German) including domain-specific vocalizations common in song lyrics (e.g., “yeah,” “oh,” “uh”). The cleaned text was retained in the output dataset but was not used as input to the BERT model.

External Validation on Lyric-Domain Mood Annotations

Because the multilingual BERT model used here was fine-tuned on product reviews rather than song lyrics, an additional external validation was performed against an independent, lyric-specific gold standard. We used the MoodyLyrics dataset [31], which provides mood annotations for 2595 English-language tracks distributed across four categories (happy, relaxed, sad, angry) drawn from the four quadrants of Russell’s valence–arousal model. Lyrics were retrieved via the Genius API; 2485 tracks (95.8%) returned a match, of which 2444 met the same ≥50-word threshold used for the main analysis. Each track was scored with the same BERT pipeline (Section 3.6), and the BERT composite score was compared against the MoodyLyrics valence label, defined as positive for happy and relaxed and as negative for sad and angry. Two complementary analyses were conducted. First, we computed binary classification metrics treating the BERT composite as a positive/negative classifier (a positive composite predicts positive valence). Second, we treated the BERT composite as a continuous score and computed its rank correlation (Spearman $\rho$) with the four-class mood label and the point-biserial correlation with the binary valence label. Per-quadrant means provided a fine-grained check on whether BERT’s score ordering matched the underlying valence–arousal structure of the gold standard.
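The two validation analyses can be sketched in plain Python as below. This is an illustration under stated assumptions rather than the study’s evaluation code: the zero threshold for the sign classifier and the toy scores and labels are hypothetical. The point-biserial correlation is computed as the Pearson correlation between a continuous score and a 0/1 label, and Spearman’s rank correlation as the Pearson correlation of tie-averaged ranks.

```python
import math


def pearson(x, y):
    """Pearson correlation; with a 0/1 variable this is point-biserial."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while (end + 1 < len(order)
               and values[order[end + 1]] == values[order[start]]):
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation via Pearson on tie-averaged ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def sign_accuracy(scores, labels, threshold=0.0):
    """Share of tracks where (score > threshold) matches the 0/1 label."""
    hits = sum((s > threshold) == bool(l) for s, l in zip(scores, labels))
    return hits / len(scores)


# Hypothetical composite scores and binary valence labels (1 = positive).
scores = [0.6, 0.2, -0.1, -0.7, 0.3, -0.4]
valence = [1, 1, 0, 0, 0, 1]
accuracy = sign_accuracy(scores, valence)
r_pb = pearson(scores, valence)
```

For the four-class comparison, the mood categories would first need an ordinal coding before `spearman` is applied; the coding to use is a modelling choice that this sketch leaves open.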

3.7. Zipf’s Law and Power-Law Analysis

The word frequency distributions of the lyrics corpus were analyzed for adherence to Zipf’s law, which predicts a power-law relationship between word rank and frequency such that the frequency of the $r$-th ranked word is proportional to $r^{-\alpha}$ [12]. For each language subcorpus, lyrics were tokenized by lowercasing, removing non-alphabetic characters (preserving language-specific accented characters: ä, ö, ü, ß, á, é, í, ó, ú, ñ), and filtering tokens shorter than two characters. Word frequencies were computed and sorted in descending order, and the Zipf exponent $\alpha$ was estimated via ordinary least squares (OLS) regression of $\log f(r)$ on $\log r$. Goodness of fit was assessed by $R^2$ and the standard error of the slope estimate.
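A minimal sketch of the tokenization and OLS rank-frequency fit is given below. It follows the steps described above but is not the authors’ code: the function names are hypothetical, natural logarithms are assumed (the slope does not depend on the base), and the toy token list is constructed so that frequency is exactly proportional to 1/rank.

```python
import math
import re
from collections import Counter

# Letters kept during tokenization, including the accented characters listed.
TOKEN_RE = re.compile(r"[a-zäöüßáéíóúñ]+")


def tokenize(text):
    """Lowercase, keep alphabetic runs, drop tokens under two characters."""
    return [t for t in TOKEN_RE.findall(text.lower()) if len(t) >= 2]


def ols(x, y):
    """Return slope, intercept, R^2 and slope standard error for y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((b - intercept - slope * a) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - mean_y) ** 2 for b in y)
    r_squared = 1.0 - ss_res / ss_tot
    std_err = math.sqrt(ss_res / (n - 2) / sxx)
    return slope, intercept, r_squared, std_err


def zipf_fit(tokens):
    """Estimate the Zipf exponent as minus the log-log OLS slope."""
    freqs = sorted(Counter(tokens).values(), reverse=True)
    log_rank = [math.log(r) for r in range(1, len(freqs) + 1)]
    log_freq = [math.log(f) for f in freqs]
    slope, _, r_squared, std_err = ols(log_rank, log_freq)
    return -slope, r_squared, std_err


# Toy corpus with frequencies 60, 30, 20, 15, 12, 10 (that is, 60 / rank).
demo_tokens = []
for rank, freq in enumerate([60, 30, 20, 15, 12, 10], start=1):
    demo_tokens += [f"w{rank}"] * freq
alpha, r_squared, std_err = zipf_fit(demo_tokens)
```

On this constructed example the fitted exponent is 1 up to floating-point error. On real lyrics, OLS on log-log data weights the sparse high-rank tail heavily, which is one reason a maximum likelihood estimate is a useful complement.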

To supplement the OLS Zipf analysis, maximum likelihood estimates of the scaling exponent $\hat{\alpha}$ and lower bound $x_{\min}$ were obtained using the powerlaw Python package [32]. The MLE estimates are reported alongside the OLS estimates, but likelihood-ratio comparisons against alternative distributions were not retained because the corresponding diagnostic outputs were unavailable. Additionally, vocabulary richness was quantified using four complementary metrics: the type–token ratio (TTR), Herdan’s C (a log-corrected variant of TTR that partially controls for corpus size), Brunet’s W, and the hapax legomena ratio (proportion of word types occurring exactly once). These metrics provide converging evidence on lexical diversity independent of the Zipf exponent and are less sensitive to differences in subcorpus size than the raw TTR.
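The four richness metrics can be computed from a token list as sketched below. The definitions used are the conventional ones and are stated here as assumptions, since the text does not spell them out: TTR is $V/N$, Herdan’s C is $\log V / \log N$, Brunet’s W is $N^{V^{-0.172}}$ with the customary constant 0.172, and the hapax ratio is the share of types occurring once ($N$ tokens, $V$ types).

```python
import math
from collections import Counter


def richness(tokens):
    """Vocabulary richness metrics for a list of at least two tokens."""
    if len(tokens) < 2:
        raise ValueError("need at least two tokens")
    counts = Counter(tokens)
    n_tokens = len(tokens)
    n_types = len(counts)
    hapax = sum(1 for c in counts.values() if c == 1)
    return {
        "ttr": n_types / n_tokens,
        "herdan_c": math.log(n_types) / math.log(n_tokens),
        # Conventional Brunet constant; lower W indicates a richer vocabulary.
        "brunet_w": n_tokens ** (n_types ** -0.172),
        "hapax_ratio": hapax / n_types,
    }


# Seven tokens, four types, two of which occur exactly once.
metrics = richness("la la la love you love me".split())
```

Raw TTR falls as a text grows, so comparing it across subcorpora of very different sizes can mislead; the log-based measures dampen but do not remove that dependence.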

3.8. Multifractal Detrended Fluctuation Analysis and Long-Range Dependence

To characterize the scaling and long-range dependence properties of the lyrics corpus, detrended fluctuation analysis (DFA [16]) and its multifractal generalization (MF-DFA [17]) were applied to word-level time series constructed from each language subcorpus. Two types of series were analyzed: a word-length series, in which each word was represented by its character count, and a word frequency rank series, in which each word was replaced by the natural logarithm of its corpus-wide frequency rank. These representations capture complementary structural aspects of text: the word-length series reflects phonotactic and morphological patterns, while the rank series captures the sequential deployment of common versus rare vocabulary.

Standard DFA estimates a single Hurst exponent $H$ from the scaling of the root-mean-square fluctuation function $F(s)$ across segment sizes $s$, where $H > 0.5$ indicates persistent long-range correlations and $H = 0.5$ corresponds to an uncorrelated random process. The cumulative profile was computed by subtracting the series mean and applying a cumulative sum. Segments were detrended using a first-order polynomial fit, and the fluctuation function was computed across 20 logarithmically spaced scales. $H$ was estimated as the slope of $\log F(s)$ versus $\log s$ via ordinary least squares.
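The DFA procedure just described can be sketched in plain Python as follows. This is an illustrative implementation under assumptions not stated in the text: non-overlapping segments taken from the start of the profile only, a minimum scale of 10 points, and a maximum scale of one quarter of the series length. The function names are hypothetical.

```python
import math
import random


def ols_slope(x, y):
    """Slope of the least-squares line of y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return sxy / sxx


def detrended_variance(segment):
    """Mean squared residual around a first-order (linear) fit."""
    n = len(segment)
    slope = ols_slope(list(range(n)), segment)
    mean_t, mean_v = (n - 1) / 2, sum(segment) / n
    return sum((v - mean_v - slope * (i - mean_t)) ** 2
               for i, v in enumerate(segment)) / n


def dfa_hurst(series, n_scales=20, min_scale=10):
    """Estimate H as the slope of log F(s) against log s (DFA-1)."""
    n = len(series)
    mean = sum(series) / n
    profile, running = [], 0.0
    for value in series:            # cumulative sum of the centred series
        running += value - mean
        profile.append(running)
    ratio = (n // 4) / min_scale    # assumed largest scale: n / 4
    scales = sorted({round(min_scale * ratio ** (k / (n_scales - 1)))
                     for k in range(n_scales)})
    log_s, log_f = [], []
    for s in scales:
        n_segments = n // s
        variances = [detrended_variance(profile[j * s:(j + 1) * s])
                     for j in range(n_segments)]
        log_s.append(math.log(s))
        log_f.append(math.log(math.sqrt(sum(variances) / n_segments)))
    return ols_slope(log_s, log_f)


# Sanity check on synthetic data: white noise and its running sum.
rng = random.Random(0)
noise = [rng.gauss(0.0, 1.0) for _ in range(4096)]
walk, total = [], 0.0
for step in noise:
    total += step
    walk.append(total)
h_noise = dfa_hurst(noise)   # expected to lie near 0.5
h_walk = dfa_hurst(walk)     # expected to lie near 1.5
```

For lyrics, the input would be a word-length series such as `[len(w) for w in tokens]` or the log-rank series described above, concatenated over a language subcorpus.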

MF-DFA extends this framework by computing a generalized fluctuation function $F_q(s)$ for a range of moment orders $q$ in steps of 0.5, yielding the generalized Hurst exponent $h(q)$. The singularity spectrum $f(\alpha)$, where $\alpha$ here denotes the singularity (Hölder) exponent, was obtained via a Legendre transform, and the spectrum width $\Delta\alpha$ was used as the primary measure of multifractality. Two validation procedures were employed: (1) a surrogate shuffle test (50 permutations per series) to confirm that the observed scaling reflects genuine long-range dependence rather than distributional artifacts, and (2) a bootstrap subsampling test (100 iterations, with a fixed number of songs drawn per language) to control for unequal subcorpus sizes when comparing across languages, with pairwise Mann–Whitney U tests for statistical comparison.
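For reference, the quantities named above are related as follows in the standard MF-DFA formulation of [17]. The notation is the conventional one and is assumed here rather than taken from the study: $F^2(\nu, s)$ is the detrended variance of segment $\nu$ at scale $s$, and $N_s$ is the number of segments.

```latex
\begin{align}
F_q(s) &= \left\{ \frac{1}{N_s} \sum_{\nu=1}^{N_s}
          \left[ F^2(\nu, s) \right]^{q/2} \right\}^{1/q}
          \sim s^{h(q)}, \qquad q \neq 0, \\
F_0(s) &= \exp\left\{ \frac{1}{2 N_s} \sum_{\nu=1}^{N_s}
          \ln F^2(\nu, s) \right\} \sim s^{h(0)}, \\
\tau(q) &= q\, h(q) - 1, \\
\alpha &= \frac{d\tau}{dq}, \qquad f(\alpha) = q\,\alpha - \tau(q), \\
\Delta\alpha &= \alpha_{\max} - \alpha_{\min}.
\end{align}
```

For $q = 2$ the first expression reduces to standard DFA, so $h(2)$ coincides with the Hurst exponent $H$ for stationary series. A monofractal series has $h(q)$ independent of $q$ and therefore $\Delta\alpha \approx 0$.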

3.9. Streaming Distribution Scaling and Box-Counting Fractal Dimension

The streaming counts available in the present corpus reflect a single weekly snapshot per track (the first occurrence retained during deduplication; Section 3.1) drawn from the Top 200 chart only. They therefore do not represent peak, cumulative, or average popularity, and they truncate the long tail of less-streamed releases that never entered the Top 200. The analyses in this section should accordingly be understood as descriptors of the internal concentration of weekly streaming activity within the Top 200, not as estimates of the population-level distribution of song popularity. Within this restricted scope, we regressed log stream count on log rank within each language subcorpus to obtain a within-chart streaming exponent for descriptive comparison with the lexical Zipf exponent estimated in Section 3.7. Concentration of streaming activity was further quantified using the Gini coefficient, where values closer to 1 indicate greater inequality (i.e., a small number of tracks capturing a disproportionate share of streams). Additionally, box-counting fractal dimension was computed on two representations: (1) the one-dimensional distribution of word lengths (character counts per token), and (2) the two-dimensional log-rank versus log-frequency scatter of word types. For each representation, the number of occupied boxes $N(\varepsilon)$ was counted across 20 logarithmically spaced box sizes $\varepsilon$, and the fractal dimension $D$ was estimated as the slope of $\log N(\varepsilon)$ versus $\log(1/\varepsilon)$ via ordinary least squares.
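The Gini coefficient and a one-dimensional box count can be sketched as below. Both functions are illustrative and rest on assumptions: the Gini coefficient uses the standard sorted-rank formula for non-negative values, and the box-counting routine assumes points already scaled to the interval [0, 1) and takes the box sizes as an argument. The function names and toy inputs are hypothetical.

```python
import math


def gini(values):
    """Gini coefficient of non-negative values (0 means perfect equality)."""
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        raise ValueError("need at least one positive value")
    weighted = sum((i + 1) * x for i, x in enumerate(xs))
    return 2.0 * weighted / (n * total) - (n + 1) / n


def box_counting_dimension(points, box_sizes):
    """Slope of log N(eps) against log(1/eps) for 1-D points in [0, 1)."""
    log_inv_eps, log_count = [], []
    for eps in box_sizes:
        occupied = {math.floor(p / eps) for p in points}
        log_inv_eps.append(math.log(1.0 / eps))
        log_count.append(math.log(len(occupied)))
    n = len(box_sizes)
    mean_x = sum(log_inv_eps) / n
    mean_y = sum(log_count) / n
    sxx = sum((x - mean_x) ** 2 for x in log_inv_eps)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(log_inv_eps, log_count))
    return sxy / sxx


# Toy checks: equal streams, fully concentrated streams, and a filled line.
equal = gini([100, 100, 100, 100])
concentrated = gini([0, 0, 0, 1000])
line = [i / 1024 for i in range(1024)]
sizes = [2.0 ** -k for k in range(1, 7)]
dim_line = box_counting_dimension(line, sizes)
```

With n tracks the maximum attainable Gini value is (n - 1)/n, reached when one track holds all streams. A densely filled interval yields a box-counting dimension near 1, which is the sense in which a near-linear scatter carries little independent information.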

5. Discussion

This study set out to bridge three largely independent research traditions—sentiment analysis, statistical physics of language, and music information retrieval—in a single cross-linguistic analysis of popular song lyrics. The results yield several findings with implications for each of these fields.

The most striking result concerns the dramatic revision of cross-linguistic sentiment patterns when moving from the English-centric VADER lexicon to a multilingual BERT model. The 1.003-point gap between English and German under VADER collapsed to just 0.127 points under BERT, confirming that the extreme negativity scores previously attributed to non-English lyrics were largely measurement artifacts arising from VADER’s inability to recognize sentiment-bearing vocabulary outside English. This finding carries a cautionary methodological message for the broader computational musicology literature: studies that have applied English-centric lexicons to multilingual corpora without cross-validating against language-equitable models may have reported spurious cross-linguistic differences [3,25]. The residual cross-linguistic variation under BERT, with Spanish lyrics slightly more negative than English or German, may reflect genuine cultural or genre-level differences in lyrical content, though domain transfer limitations of the BERT model (fine-tuned on product reviews rather than song lyrics) prevent strong conclusions on this point [10].

The complexity-science results reveal a striking degree of cross-linguistic consistency in the structural organization of popular music lyrics within the three languages examined here. English, Spanish, and German all exhibited Zipfian word frequency distributions, persistent long-range correlations (none of the 50 shuffled surrogates exceeded the observed values), and broad multifractal spectra. Critically, the bootstrap subsampling test demonstrated that the apparent cross-linguistic differences in multifractal spectrum width were not statistically significant when corpus size was controlled, suggesting that, within this set, multifractal scaling is a shared rather than language-specific structural property of commercially successful lyrics. This extends the findings of Montemurro and Pury [18] and Drożdż et al. [19], who documented similar properties in literary prose, to the more constrained register of song lyrics, and is consistent with the interpretation that songwriting constraints (melodic structure, verse–chorus repetition, rhyme schemes) impose long-range organizational patterns that transcend individual languages. We emphasize, however, that the three languages analyzed here all belong to the Indo-European family and that English is heavily over-represented relative to Spanish and German; whether the same patterns hold in typologically distant languages, in non-chart popular music, or in larger and more balanced corpora cannot be determined from the present data.

Where the languages did differ was in lexical diversity: German exhibited the shallowest Zipf exponent, highest type–token ratio (0.099), and largest hapax ratio (50.3%), while English showed the steepest exponent and lowest diversity. These differences are consistent with German’s productive compound word formation system [13] and suggest that cross-linguistic variation in lyrics is better explained by linguistic typology than by the constraints of songwriting per se.

Read together, the structural and lexical findings invite a more concrete interpretation of what the chart-lyric register actually looks like as a written form. The Zipf exponents observed here sit clearly above the value of approximately 1 typically reported for literary prose [13,21], meaning that a small number of high-frequency words carries proportionally more of the text than in long-form writing. Operationally, this is the statistical signature of the chorus-driven structure of popular songs: a short refrain that recurs many times, interspersed with verses that introduce new vocabulary at a much lower rate. The same compositional fact appears, from a different angle, in the persistent long-range correlations measured by DFA. A Hurst exponent above 0.5 means that the choice of word at one point in a song is statistically informative about word choices several stanzas away; that is, the lyrics are not locally independent but cohere as an extended sequence, even after controlling for surface repetition. The broad multifractal spectra indicate that this temporal coherence is not produced by a single mechanism: rare and frequent words follow distinct scaling regimes, consistent with songs in which a stable lexical core (function words, refrain vocabulary) coexists with thematically clustered open-class words that arrive in bursts. The cross-linguistic divergence in lexical diversity admits a similarly concrete reading: German’s higher hapax ratio (50.3%, against 43.3% for English) is unlikely to reflect more “varied” songwriting in any aesthetic sense, and is more parsimoniously explained by morphology, since German’s productive nominal compounding inflates the type count for what would, in English, be expressed as multiword phrases. From the standpoint of language use, the joint pattern of steep Zipf slopes, persistent long-range memory, and broad multifractality places chart lyrics close to literary prose in their structural organization while remaining lexically far more compressed, supporting the view that the lyric register is not a degenerate or impoverished form of language but a constrained register whose departures from prose are interpretable in terms of compositional function rather than linguistic deficit.

Several limitations should be acknowledged. First, the corpus, while substantial (2023 tracks), is drawn exclusively from Spotify Top 200 charts across a limited temporal window (effectively 2019–2021), and may not generalize to non-chart music, historical periods, or languages beyond English, Spanish, and German. Relatedly, the streaming counts retained for each track represent a single weekly Top 200 snapshot rather than a peak, cumulative, or average measure of popularity, and the long tail of less-streamed releases that never entered the Top 200 is truncated by construction; the within-chart streaming exponents and Gini coefficients reported in Section 4.6 should therefore be interpreted as descriptors of internal Top 200 concentration rather than as evidence of a population-level power-law popularity distribution. Second, the BERT model used for sentiment analysis was fine-tuned on product reviews rather than song lyrics. To quantify the resulting domain-transfer cost, we externally validated the model against the MoodyLyrics gold standard [31] (Section 4.3.2); BERT achieved 71.5% accuracy, and the per-quadrant score ordering (happy > relaxed > angry > sad) matched the expected valence ranking, with the AUC and the Spearman rank correlation against the four-class mood labels reported in that section. These values indicate that BERT’s signal is genuinely informative on lyric data, while leaving room for improvement via a domain-adapted classifier. Third, BERT’s 512-token input limit meant that the primary sentiment scores reflected only the opening window of each track, and 65.8% of analyzed lyrics exceeded this limit (with substantially higher truncation rates for Spanish, 83.6%, and German, 82.7%, than for English, 59.6%, given that Spanish and German lyrics in the corpus tended to be longer). To quantify the resulting bias, we re-scored every track by averaging BERT composites across all ≤500-token chunks of the full lyrics (Section 4.3.1); the chunk-averaged and opening-window scores correlated strongly, both overall and within each language, and agreed on positive/negative valence for the large majority of tracks. Crucially, the by-language means shifted only slightly in all three languages, leaving the cross-linguistic comparisons reported above qualitatively unchanged. We retain the opening-window scores as the primary measurement, but note that German lyrics, which had the highest median token count (663) and the highest sign-flip rate (17.3%), are the most sensitive to truncation, and that more sophisticated long-document approaches (e.g., hierarchical pooling, Longformer-style attention) would be a useful direction for future work. Fourth, the German bootstrap subsample constitutes the full German subcorpus, meaning that the narrow variance of the German bootstrap estimates reflects minimal rather than zero sampling variability. Finally, the box-counting fractal dimension of the rank–frequency scatter is geometrically trivial given the near-linear Zipf relationship and does not add independent information.

Future work should expand the linguistic coverage to typologically diverse languages (e.g., tonal languages, agglutinative languages), incorporate larger and temporally balanced corpora, and explore domain-adapted sentiment models fine-tuned specifically on song lyrics. The integration of audio features with textual complexity measures represents another promising direction for understanding the multimodal structure of popular music.