Semantic Segmentation-Based Identification and Quantitative Analysis of Cross-Sectional Quality Features in Luzhou-Flavor Liquor Daqu

Abstract

1. Introduction

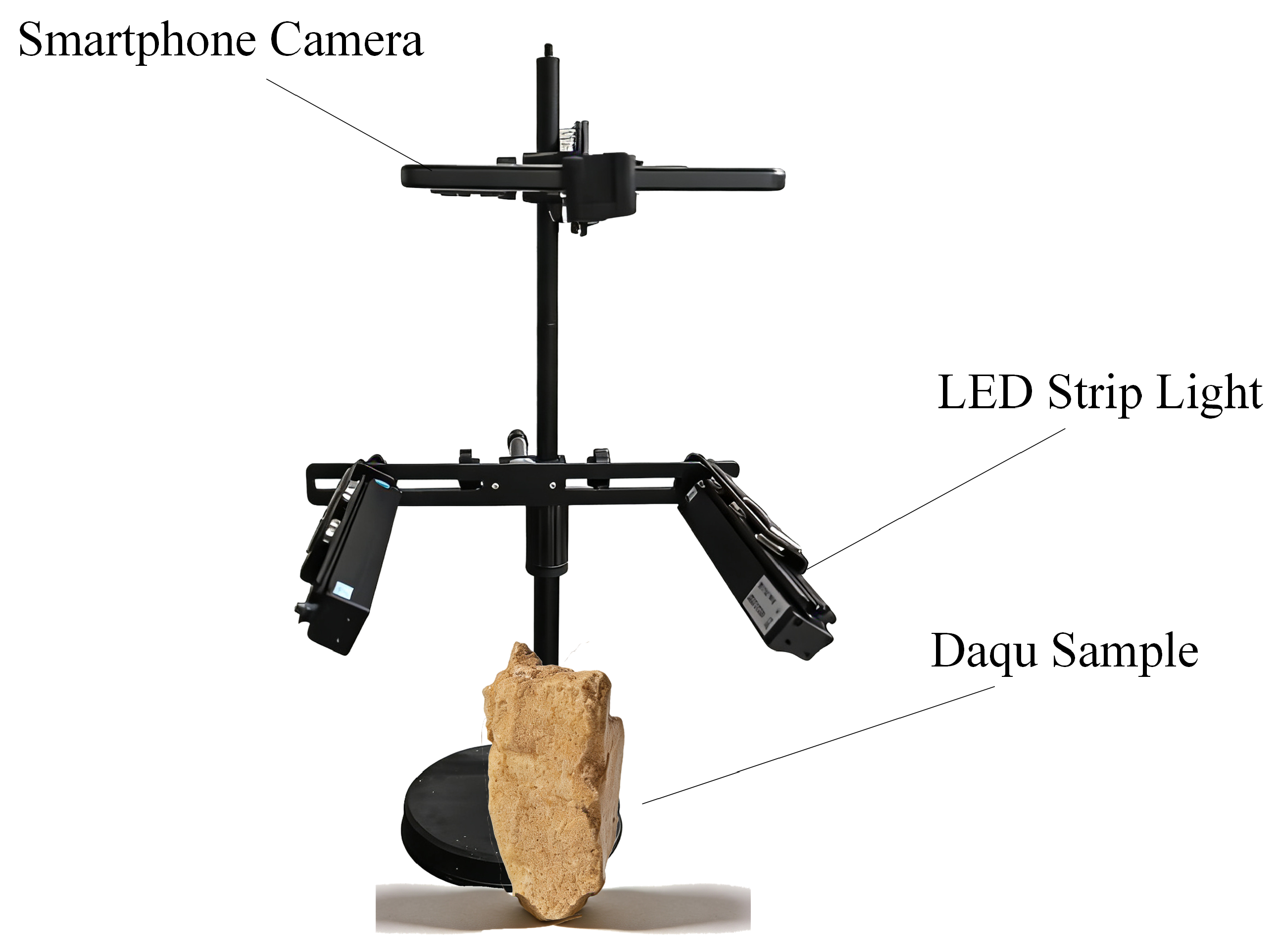

2. Materials and Methods

2.1. Sample Collection

2.2. Image Annotation and Preprocessing

- (i) Geometric transformations: random horizontal and vertical flipping (), rotation (), scaling (0.8–1.2), and random cropping;

- (ii) Photometric and color perturbations: controlled adjustments of brightness, contrast, and saturation to emulate changes in illumination and color cast, enhancing model adaptability to varying lighting conditions;

- (iii) Spatial composite augmentation: inspired by the Mosaic strategy, combining patches from multiple images to increase structural diversity and background complexity;

- (iv) Small-object targeted augmentation: designed specifically for low-proportion, fine-grained targets such as fissures and plaques, involving mask-guided local magnification and random cropping to increase the model’s exposure to small objects and improve segmentation precision.
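The four augmentation families can be sketched as follows. This is a minimal NumPy/SciPy sketch under stated assumptions, not the authors' pipeline: the flip probabilities, the restriction of rotation to multiples of 90°, the photometric jitter ranges, the crop sizes, and all function names are hypothetical, and label IDs 3 and 4 are assumed to denote fissure and plaque. The point it illustrates is that geometric operations must be applied identically to the image and its label mask (with nearest-neighbour interpolation on the mask so no invalid class IDs appear), whereas photometric perturbations change the image only.

```python
import numpy as np
from scipy import ndimage


def geometric_augment(image, mask, rng):
    """Random flips, rotation and 0.8-1.2 scaling, applied identically to image and mask."""
    if rng.random() < 0.5:  # horizontal flip (probability 0.5 is an assumption)
        image, mask = image[:, ::-1], mask[:, ::-1]
    if rng.random() < 0.5:  # vertical flip
        image, mask = image[::-1], mask[::-1]
    k = int(rng.integers(0, 4))  # assumption: rotation restricted to multiples of 90 degrees
    image, mask = np.rot90(image, k), np.rot90(mask, k)
    image, mask = np.ascontiguousarray(image), np.ascontiguousarray(mask)
    s = rng.uniform(0.8, 1.2)  # scaling range stated in the text
    image = ndimage.zoom(image, (s, s, 1), order=1)  # bilinear interpolation for RGB
    mask = ndimage.zoom(mask, (s, s), order=0)  # nearest neighbour keeps labels valid
    return image, mask


def photometric_augment(image, rng):
    """Brightness, contrast and saturation jitter on the image only; the mask is untouched."""
    img = image.astype(np.float32)
    img = img + rng.uniform(-25, 25)  # brightness shift (hypothetical range)
    mean = img.mean()
    img = (img - mean) * rng.uniform(0.8, 1.2) + mean  # contrast about the global mean
    gray = img.mean(axis=2, keepdims=True)
    img = gray + (img - gray) * rng.uniform(0.8, 1.2)  # saturation: scale distance from grey
    return np.clip(img, 0, 255).astype(np.uint8)


def crop_around(image, mask, size, cy, cx):
    """size x size window centred on (cy, cx) where possible, shifted to stay inside the image."""
    h, w = mask.shape
    top = int(np.clip(cy - size // 2, 0, h - size))
    left = int(np.clip(cx - size // 2, 0, w - size))
    return image[top:top + size, left:left + size], mask[top:top + size, left:left + size]


def mosaic(pairs, tile, rng):
    """2x2 Mosaic-style composite built from random tile x tile crops of four samples."""
    img_out = np.zeros((2 * tile, 2 * tile, 3), dtype=np.uint8)
    msk_out = np.zeros((2 * tile, 2 * tile), dtype=np.uint8)
    for k, (img, msk) in enumerate(pairs[:4]):
        r, c = divmod(k, 2)
        cy, cx = int(rng.integers(msk.shape[0])), int(rng.integers(msk.shape[1]))
        img_c, msk_c = crop_around(img, msk, tile, cy, cx)
        img_out[r * tile:(r + 1) * tile, c * tile:(c + 1) * tile] = img_c
        msk_out[r * tile:(r + 1) * tile, c * tile:(c + 1) * tile] = msk_c
    return img_out, msk_out


def small_object_augment(image, mask, target_classes, size, rng):
    """Crop around a random minority-class pixel, then magnify the crop back to full resolution."""
    ys, xs = np.nonzero(np.isin(mask, target_classes))
    if len(ys) == 0:  # no fissure/plaque pixels: fall back to a plain random crop
        cy, cx = int(rng.integers(mask.shape[0])), int(rng.integers(mask.shape[1]))
    else:
        i = int(rng.integers(len(ys)))
        cy, cx = int(ys[i]), int(xs[i])
    img_c, msk_c = crop_around(image, mask, size, cy, cx)
    fy, fx = mask.shape[0] / size, mask.shape[1] / size
    return (ndimage.zoom(img_c, (fy, fx, 1), order=1),
            ndimage.zoom(msk_c, (fy, fx), order=0))
```

For a 256 × 256 input, `small_object_augment(image, mask, target_classes=[3, 4], size=64, rng=np.random.default_rng(0))` returns a 4× magnified view around a randomly chosen fissure or plaque pixel, which raises the share of small-object pixels seen per training sample.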

2.3. Construction of Image Segmentation Models

2.4. Evaluation Metrics

3. Results

3.1. Selection of Cross-Sectional Features and Parameter Extraction

- (1) Plaque Area Ratio: Microbial plaques are contamination regions caused by undesired microorganisms and are an important factor in evaluating the sanitary condition of Daqu. Common contaminants include *Penicillium*, *Monascus*, *Aspergillus flavus*, and *Aspergillus niger* [36]. These microorganisms often proliferate when the Daqu block cools to room temperature while retaining high internal moisture, forming dominant colonies. In industrial evaluation, the proportion of plaque area in the cross-section is routinely used as an indicator of Daqu quality and potential microbial risk. The plaque area ratio is defined as:

  $$R_{\text{plaque}} = \frac{N_{\text{plaque}}}{N_{\text{Daqu}}} \times 100\%$$

  where $N_{\text{plaque}}$ denotes the number of pixels belonging to plaque regions, and $N_{\text{Daqu}}$ denotes the total number of pixels within the Daqu cross-section.

- (2) Pizhang Thickness: The pizhang refers to the outer layer of Daqu formed during fermentation and storage, consisting primarily of partially ungelatinized starch on the Daqu surface [12]. Its thickness is strongly associated with ventilation properties, moisture retention capacity, and fermentation stability. The fire cycle is a ring-like structure formed by Maillard reactions between the surface and middle layers during temperature rise [37], and it is commonly used as a practical visual boundary separating the pizhang from the inner Daqu region. In this study, pizhang thickness is computed as the mean, over all pixels on the outer boundary of the fire cycle, of each pixel's minimum Euclidean distance to the external contour of the Daqu:

  $$\bar{T} = \frac{1}{|P|} \sum_{p \in P} \min_{e \in E} d(p, e) \tag{7}$$

  where $P$ is the set of boundary pixels of the fire cycle region, $|P|$ is the number of such pixels, $E$ is the set of outer contour pixels of the Daqu, and $d(p, e)$ denotes the Euclidean distance between pixel $p$ and pixel $e$. Averaging over all boundary pixels reduces the influence of extreme points and provides a robust measure of pizhang thickness.

- (3) Fissure Length: Fissures are common structural defects formed during the production and storage of Daqu [38]. Their number and length reflect the structural stability of the block. Fissure formation is influenced by moisture content, drying conditions, and internal stress distribution. Excessive fissuring may compromise mechanical strength and disrupt the diffusion of gases and moisture within the Daqu, thereby affecting microbial community dynamics. Total fissure length is computed as:

  $$L_{\text{total}} = \sum_{i=1}^{n} l_i$$

  where $l_i$ is the length of the $i$-th fissure, obtained via connected-component analysis and skeletonization of the fissure region, and $n$ is the total number of fissures. This skeleton-based approach reduces measurement variability caused by irregular fissure boundaries.
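The three parameters can be computed from a predicted label mask roughly as follows. This is a minimal sketch, not the authors' code, assuming hypothetical label IDs (0 background, 1 Daqu body, 2 fire cycle, 3 fissure, 4 plaque). Further assumptions: the Daqu cross-section in the plaque ratio is taken as all non-background pixels; the outer boundary of the fire cycle is obtained by filling the ring's interior and taking the edge of the filled region; the inner minimum over $E$ is evaluated with a Euclidean distance transform rather than explicit pairwise distances (the result is the same); and skeletonization uses Zhang–Suen thinning, with each $l_i$ approximated by the number of skeleton pixels in the $i$-th connected component.

```python
import numpy as np
from scipy import ndimage

# Hypothetical label IDs (order follows the per-class IoU tables).
BACKGROUND, DAQU, FIRE_CYCLE, FISSURE, PLAQUE = 0, 1, 2, 3, 4


def boundary(region):
    """Pixels of a binary region that touch its complement (4-connected erosion)."""
    return region & ~ndimage.binary_erosion(region)


def plaque_area_ratio(mask):
    """R_plaque = N_plaque / N_Daqu * 100; assumes the cross-section is every non-background pixel."""
    n_section = np.count_nonzero(mask != BACKGROUND)
    return 100.0 * np.count_nonzero(mask == PLAQUE) / max(n_section, 1)


def pizhang_thickness(mask):
    """Eq. (7): mean over p in P of min_e d(p, e), in pixels; 0.0 when no fire cycle is present."""
    fire = mask == FIRE_CYCLE
    if not fire.any():
        return 0.0
    P = boundary(ndimage.binary_fill_holes(fire))                # outer edge of the fire-cycle ring
    E = boundary(ndimage.binary_fill_holes(mask != BACKGROUND))  # outer contour of the Daqu
    dist_to_E = ndimage.distance_transform_edt(~E)  # each pixel's distance to the nearest E pixel
    return float(dist_to_E[P].mean())


def zhang_suen_skeleton(binary):
    """Zhang-Suen thinning of a 2-D boolean array (one common skeletonization method)."""
    img = np.pad(binary.astype(np.uint8), 1)  # zero border so every pixel has 8 neighbours
    changed = True
    while changed:
        changed = False
        for step in (0, 1):
            c = img[1:-1, 1:-1]  # view: writes below update img in place
            # Neighbours P2..P9, clockwise starting from north.
            P2, P3, P4, P5 = img[:-2, 1:-1], img[:-2, 2:], img[1:-1, 2:], img[2:, 2:]
            P6, P7, P8, P9 = img[2:, 1:-1], img[2:, :-2], img[1:-1, :-2], img[:-2, :-2]
            nbrs = [P2, P3, P4, P5, P6, P7, P8, P9]
            B = sum(n.astype(int) for n in nbrs)  # number of foreground neighbours
            A = sum(((a == 0) & (b == 1)).astype(int)  # 0->1 transitions around the ring
                    for a, b in zip(nbrs, nbrs[1:] + nbrs[:1]))
            if step == 0:
                cond = (P2 * P4 * P6 == 0) & (P4 * P6 * P8 == 0)
            else:
                cond = (P2 * P4 * P8 == 0) & (P2 * P6 * P8 == 0)
            remove = (c == 1) & (B >= 2) & (B <= 6) & (A == 1) & cond
            if remove.any():
                c[remove] = 0
                changed = True
    return img[1:-1, 1:-1].astype(bool)


def fissure_lengths(mask):
    """Per-fissure lengths l_i (skeleton pixel counts) and their total."""
    fissure = mask == FISSURE
    labels, n = ndimage.label(fissure, structure=np.ones((3, 3)))  # 8-connected fissures
    skeleton = zhang_suen_skeleton(fissure)
    lengths = [int(np.count_nonzero(skeleton & (labels == i))) for i in range(1, n + 1)]
    return lengths, sum(lengths)
```

Counting skeleton pixels gives integer lengths in pixel units; weighting diagonal steps by √2 would be a slightly more accurate alternative.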

3.2. Model Training Performance

3.3. Ablation Study and Performance Analysis

- By integrating the Dice and Lovász losses with WCE, the model’s mIoU increased from 84.01% (M1) to 85.48% (M7).

- More importantly, the Dice coefficient rose from 83.76% to 98.09%, suggesting that the composite loss encourages the model to focus on regional overlap and the structural integrity of minority classes.

- Comparing M7 and M8, adding data augmentation further raised the mIoU by 2.06 percentage points, to the highest value of 87.54%.

- The improvement is particularly pronounced for the “Fissure” category, whose IoU rose from 74.81% (M7) to 79.46% (M8).

- This improvement suggests that geometric and photometric perturbations, especially the small-object targeted augmentation, enriched the morphological diversity of the training set. This likely allowed U2-Net to learn more robust, invariant features and mitigated overfitting on the limited samples of fine-grained defects.
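The composite objective compared in the ablation (WCE, Dice, and Lovász terms) can be sketched as a forward computation in NumPy. This sketch assumes equal weights for the three terms, per-class weights supplied by the caller (for example, inverse class frequency), a soft multi-class Dice loss averaged over classes, and the Lovász-Softmax loss restricted to classes present in the target; none of these choices is confirmed by the text, the function names are hypothetical, and an actual training loop would use an autodiff framework rather than NumPy.

```python
import numpy as np


def softmax(logits):
    """Channel-wise softmax for logits of shape (C, H, W)."""
    z = logits - logits.max(axis=0, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=0, keepdims=True)


def weighted_ce(probs, target, class_weights, eps=1e-7):
    """Cross-entropy where each pixel is weighted by the weight of its true class."""
    p_true = np.take_along_axis(probs, target[None], axis=0)[0]
    w = class_weights[target]
    return float((w * -np.log(np.clip(p_true, eps, 1.0))).sum() / w.sum())


def soft_dice_loss(probs, target, eps=1e-6):
    """1 - mean over classes of the soft Dice coefficient."""
    onehot = np.eye(probs.shape[0])[target].transpose(2, 0, 1)
    inter = (probs * onehot).sum(axis=(1, 2))
    denom = probs.sum(axis=(1, 2)) + onehot.sum(axis=(1, 2))
    return float(1.0 - ((2 * inter + eps) / (denom + eps)).mean())


def lovasz_grad(gt_sorted):
    """Gradient of the Lovasz extension of the Jaccard loss w.r.t. errors sorted descending."""
    gts = gt_sorted.sum()
    intersection = gts - np.cumsum(gt_sorted)
    union = gts + np.cumsum(1.0 - gt_sorted)
    jaccard = 1.0 - intersection / union
    jaccard[1:] = jaccard[1:] - jaccard[:-1]
    return jaccard


def lovasz_softmax(probs, target):
    """Lovasz-Softmax loss averaged over the classes present in the target."""
    p = probs.reshape(probs.shape[0], -1)
    t = target.reshape(-1)
    losses = []
    for c in range(p.shape[0]):
        fg = (t == c).astype(float)
        if fg.sum() == 0:
            continue  # skip absent classes
        errors = np.abs(fg - p[c])
        order = np.argsort(-errors)
        losses.append(float(errors[order] @ lovasz_grad(fg[order])))
    return float(np.mean(losses))


def composite_loss(logits, target, class_weights, w=(1.0, 1.0, 1.0)):
    """Hypothetical equal-weight sum of the WCE, Dice and Lovasz-Softmax terms."""
    probs = softmax(logits)
    return (w[0] * weighted_ce(probs, target, class_weights)
            + w[1] * soft_dice_loss(probs, target)
            + w[2] * lovasz_softmax(probs, target))
```

The Dice term measures overlap per class independently of class size, and the Lovász term is a tractable surrogate of the per-class Jaccard (IoU) loss; both are plausible reasons why adding them benefits minority classes such as fissures.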

3.4. Extraction and Visualization of Key Morphological Parameters

- (a) Visualization of pizhang thickness: The average pizhang thickness was computed as the mean of the minimum Euclidean distances from each point on the outer boundary of the fire-cycle region (P) to the Daqu outer contour (E), following Equation (7). The measured distances were visualized using line markers in Figure 8a, and all values are reported in pixel units. This visualization highlights spatial variations in pizhang thickness, which may offer insight into the thermal response intensity and the maturity uniformity of the Daqu body.

- (b) Visualization of plaque area ratio: A pseudo-color mapping was applied to highlight plaque regions, with color gradients indicating their spatial distribution (Figure 8b). The model accurately identified plaque regions, and their area ratios were reported as percentages, allowing an intuitive assessment of contamination severity.

- (c) Fissure morphology and length analysis: Skeletonization was applied to the fissure regions to extract their main structural lines (Figure 8c). Fissure regions were marked with green lines, and their skeletons were drawn as solid white lines. The corresponding length parameters were also recorded in pixel units.
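Drawing the line markers in (a) requires, for each sampled boundary pixel $p$, the location of its nearest contour pixel $e$ and not only the distance. A minimal NumPy/SciPy sketch, assuming hypothetical label IDs (0 background, 1 Daqu body, 2 fire cycle, 3 fissure, 4 plaque), obtains both in one call via `scipy.ndimage.distance_transform_edt(..., return_indices=True)`. The flat magenta and green tints, the yellow markers, the sampling step, and the function names are illustrative choices; the color gradients and white skeleton lines used in Figure 8 are not reproduced.

```python
import numpy as np
from scipy import ndimage

BACKGROUND, DAQU, FIRE_CYCLE, FISSURE, PLAQUE = 0, 1, 2, 3, 4  # hypothetical label IDs


def _boundary(region):
    """Pixels of a binary region that touch its complement."""
    return region & ~ndimage.binary_erosion(region)


def pizhang_markers(mask, step=10):
    """(p, e, d) triples: sampled outer fire-cycle pixel p, nearest Daqu-contour pixel e, distance d."""
    P = _boundary(ndimage.binary_fill_holes(mask == FIRE_CYCLE))
    if not P.any():
        return []
    E = _boundary(ndimage.binary_fill_holes(mask != BACKGROUND))
    # Distances to the nearest E pixel plus that pixel's (row, col) coordinates.
    dist, (iy, ix) = ndimage.distance_transform_edt(~E, return_indices=True)
    ys, xs = np.nonzero(P)
    return [((int(y), int(x)), (int(iy[y, x]), int(ix[y, x])), float(dist[y, x]))
            for y, x in zip(ys[::step], xs[::step])]


def draw_segment(rgb, p, e, color):
    """Rasterise a straight line from p to e (row, col) by dense sampling."""
    n = max(abs(e[0] - p[0]), abs(e[1] - p[1])) + 1
    rows = np.rint(np.linspace(p[0], e[0], n)).astype(int)
    cols = np.rint(np.linspace(p[1], e[1], n)).astype(int)
    rgb[rows, cols] = color


def render(image, mask, alpha=0.5):
    """Tint plaques magenta and fissures green, then draw pizhang line markers in yellow."""
    out = image.astype(np.float32)
    for cls, color in ((PLAQUE, (255, 0, 255)), (FISSURE, (0, 255, 0))):
        sel = mask == cls
        out[sel] = (1 - alpha) * out[sel] + alpha * np.array(color, dtype=np.float32)
    out = out.astype(np.uint8)
    for p, e, _ in pizhang_markers(mask):
        draw_segment(out, p, e, (255, 255, 0))
    return out
```

The mean of the returned distances equals the thickness in Equation (7) when `step=1`; a larger step only thins out the drawn markers.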

4. Discussions and Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Jin, G.Y.; Zhu, Y.; Xu, Y. Mystery behind Chinese liquor fermentation. Trends Food Sci. Technol. 2017, 63, 18–28.
2. He, G.Q.; Huang, J.; Wu, C.D.; Jin, Y.; Zhou, R.Q. Bioturbation effect of fortified Daqu on microbial community and flavor metabolite in Chinese strong-flavor liquor brewing microecosystem. Food Res. Int. 2020, 129, 108851.
3. Hou, Q.; Wang, Y.; Qu, D.; Zhao, H.; Tian, L.; Zhou, J.; Liu, J.; Guo, Z. Microbial communities, functional, and flavor differences among three different-colored high-temperature Daqu: A comprehensive metagenomic, physicochemical, and electronic sensory analysis. Food Res. Int. 2024, 184, 114257.
4. Zhang, Y.D.; Shen, Y.; Niu, J.; Ding, F.; Ren, Y.; Chen, X.X.; Han, B.Z. Bacteria-induced amino acid metabolism involved in appearance characteristics of high-temperature Daqu. J. Sci. Food Agric. 2023, 103, 243–254.
5. Song, Z.L.; Li, Y.B.; Zhao, H.Y.; Liu, X.G.; Ding, H.L.; Ding, Q.S.; Ma, D.N.; Liu, S.P.; Mao, J. Application of computer vision techniques to fermented foods: An overview. Trends Food Sci. Technol. 2025, 160, 104982.
6. Sardari, H.; Firouz, M.S.; Hosseinpour, S.; Bohlol, P. Image-based discrimination of ultrasound-assisted frozen meat using deep learning. Future Foods 2025, 12, 100822.
7. Fan, S.X.; Li, J.B.; Zhang, Y.H.; Tian, X.; Wang, Q.Y.; He, X.; Zhang, C.; Huang, W.Q. On line detection of defective apples using computer vision system combined with deep learning methods. J. Food Eng. 2020, 286, 110102.
8. Davis, K.A.; Harris, C. Microbial evaluation of inoculated fresh meat utilizing imaging and computer vision. J. Anim. Sci. 2025, 103, 510–511.
9. Xia, Y.Y.; Tian, J.P.; Huang, D.; Wang, J.; He, K.L.; Xie, L.L.; Hu, X.J.; Yang, H.L. Hyperspectral Reconstruction in Combination With a Crown Porcupine Optimization-Optimized Support Vector Regression (CPO-SVR) Machine Learning Model for Predicting the Total Acid Content of Daqu. J. Food Process Eng. 2025, 48, e70172.
10. Huang, H.P.; Hu, X.J.; Tian, J.P.; Chen, P.; Huang, D. Multigranularity cascade forest algorithm based on hyperspectral imaging to detect moisture content in Daqu. J. Food Process Eng. 2021, 44, e13633.
11. Jiang, X.N.; Hu, X.J.; Huang, H.P.; Tian, J.P.; Bu, Y.H.; Huang, D.; Luo, H.B. Detecting total acid content quickly and accurately by combining hyperspectral imaging and an optimized algorithm method. J. Food Process Eng. 2021, 44, e13844.
12. Zhao, M.K.; Han, C.Y.; Xue, T.H.; Ren, C.; Nie, X.; Jing, X.; Hao, H.Y.; Liu, Q.F.; Jia, L.Y. Establishment of a Daqu Grade Classification Model Based on Computer Vision and Machine Learning. Foods 2025, 14, 668.
13. Wang, T.; Huang, R.L.; Wei, Z.; Xie, M.Z.; Chen, L.P.; Wang, W.T.; Yun, Y.H. Multimodal nondestructive testing of tender coconut water quality using spectroscopy, computer vision, and deep learning. Food Control 2026, 180, 111660.
14. Song, W.R.; Wang, H.; Yun, Y.H. Smartphone video imaging: A versatile, low-cost technology for food authentication. Food Chem. 2025, 462, 140911.
15. Ghosh, S.; Das, N.; Das, I.; Maulik, U. Understanding Deep Learning Techniques for Image Segmentation. ACM Comput. Surv. 2019, 52, 81.
16. Isensee, F.; Jaeger, P.F.; Kohl, S.A.A.; Petersen, J.; Maier-Hein, K.H. nnU-Net: A self-configuring method for deep learning-based biomedical image segmentation. Nat. Methods 2021, 18, 203–211.
17. Tian, L.; Zhong, X.R.; Chen, M. Semantic Segmentation of Remote Sensing Image Based on GAN and FCN Network Model. Sci. Program. 2021, 2021, 9491376.
18. Lukács, H.I.; Beregi, B.Z.; Porteleki, B.; Fischl, T.; Botzheim, J. Attention U-Net-based semantic segmentation for welding line detection. Sci. Rep. 2025, 15, 1314.
19. Bastian, M.; Angela, M.-A.; Constanza, A.; Eduardo, A. Lightweight DeepLabv3+ for Semantic Food Segmentation. Foods 2025, 14, 1306.
20. Cao, M.Y.; Tang, F.F.; Ji, P.; Ma, F.Y. Improved real-time semantic segmentation network model for crop vision navigation line detection. Front. Plant Sci. 2023, 14, 1140560.
21. Kong, X.Y.; Sun, X.H.; Wang, Y.Z.; Peng, R.Y.; Li, X.Y.; Yang, Y.H.; Lv, Y.R.; Tseng, S.P. Food Calorie Estimation System Based on Semantic Segmentation Network. Sens. Mater. 2023, 35, 2013–2033.
22. Gonzalez, B.; Garcia, G.; Velastin, S.A.; Gholamhosseini, H.; Tejeda, L.; Farias, G. Automated Food Weight and Content Estimation Using Computer Vision and AI Algorithms. Sensors 2024, 24, 7660.
23. Wen, Y.; Xue, J.L.; Sun, H.; Song, Y.; Lv, P.F.; Liu, S.H.; Chu, Y.Y.; Zhang, T.Y. High-precision target ranging in complex orchard scenes by utilizing semantic segmentation results and binocular vision. Comput. Electron. Agric. 2023, 215, 108440.
24. Nima, N.; Ali, R.; Hamed, S.; Soleiman, H. Detection of external defects of tomato crop using appearance parameters by convolutional neural networks. Future Foods 2025, 11, 100611.
25. Casado-García, A.; Domínguez, C.; García-Domínguez, M.; Heras, J.; Inés, A.; Mata, E.; Pascual, V. CLoDSA: A tool for augmentation in classification, localization, detection, semantic segmentation and instance segmentation tasks. BMC Bioinform. 2019, 20, 323.
26. Abu Alhaija, H.; Mustikovela, S.K.; Mescheder, L.; Geiger, A.; Rother, C. Augmented Reality Meets Computer Vision: Efficient Data Generation for Urban Driving Scenes. Int. J. Comput. Vis. 2018, 126, 961–972.
27. Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional Networks for Biomedical Image Segmentation. In Medical Image Computing and Computer-Assisted Intervention—MICCAI 2015; Navab, N., Hornegger, J., Wells, W.M., Frangi, A.F., Eds.; Springer International Publishing: Cham, Switzerland, 2015; pp. 234–241.
28. Roy, K.; Chaudhuri, S.S.; Pramanik, S. Deep learning based real-time Industrial framework for rotten and fresh fruit detection using semantic segmentation. Microsyst. Technol. 2021, 27, 3365–3375.
29. Zang, H.C.; Wang, C.S.; Zhao, Q.; Zhang, J.; Wang, J.M.; Zheng, G.Q.; Li, G.Q. Constructing segmentation method for wheat powdery mildew using deep learning. Front. Plant Sci. 2025, 16, 1524283.
30. Zhou, Z.; Siddiquee, M.M.R.; Tajbakhsh, N.; Liang, J. UNet++: A Nested U-Net Architecture for Medical Image Segmentation. In Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Support; Springer: Cham, Switzerland, 2018; Volume 11045, pp. 3–11.
31. Zhou, Z.W.; Siddiquee, M.M.R.; Tajbakhsh, N.; Liang, J.M. UNet++: Redesigning Skip Connections to Exploit Multiscale Features in Image Segmentation. IEEE Trans. Med. Imaging 2020, 39, 1856–1867.
32. Qin, X.B.; Zhang, Z.C.; Huang, C.Y.; Dehghan, M.; Zaiane, O.R.; Jagersand, M. U2-Net: Going deeper with nested U-structure for salient object detection. Pattern Recognit. 2020, 106, 107404.
33. Liu, Y.H.; Yao, J.; Lu, X.H.; Xie, R.P.; Li, L. DeepCrack: A deep hierarchical feature learning architecture for crack segmentation. Neurocomputing 2019, 338, 139–153.
34. Shelhamer, E.; Long, J.; Darrell, T. Fully Convolutional Networks for Semantic Segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 640–651.
35. Can, D.; Ruijie, G.A.O.; Yongwei, Z.; Lihong, M.; Miankun, W.; Pulin, L.I.U.; Jiahui, C.; Peiwen, F.A.N. Relationship between sensory indexes, physicochemical indexes, microbial community and volatile compounds in high-temperature Daqu. Food Ferment. Ind. 2022, 23, 78–85.
36. Wang, X.; Deng, X.; Wan, Q.; Luo, W.; Zhao, R.; Gao, Z.; Zhang, Y.; Zhang, D.; Yang, J. Isolation, identification and control of molds on the surface of medium-high-temperature Daqu. China Brew. 2024, 43, 90–94.
37. Xu, B.Y.; Xu, S.S.; Cai, J.; Sun, W.; Mu, D.D.; Wu, X.F.; Li, X.J. Analysis of the microbial community and the metabolic profile in medium-temperature Daqu after inoculation with Bacillus licheniformis and Bacillus velezensis. LWT 2022, 160, 113214.
38. Xin-hao, Z.; Dan-ping, H.; Jian-ping, T.; Dan, H. Research on the Daqu quality detection system based on machine vision. Food Mach. 2018, 4, 80–84.
39. Qilin, S.; Ruirui, Z.; Liping, C.; Linhuan, Z.; Hongming, Z.; Chunjiang, Z. Semantic segmentation and path planning for orchards based on UAV images. Comput. Electron. Agric. 2022, 200, 107222.
40. Chai, D.F.; Newsam, S.; Huang, J.F. Aerial image semantic segmentation using DCNN predicted distance maps. ISPRS J. Photogramm. Remote Sens. 2020, 161, 309–322.
41. Hu, Q.; Wang, K.K.; Ren, F.S.; Wang, Z.Y. Research on underwater robot ranging technology based on semantic segmentation and binocular vision. Sci. Rep. 2024, 14, 11844.

| Class | Total Instances ¹ | Images ² | Area Ratio (%) ³ |
|---|---|---|---|
| Daqu Body | 759 | 659 | 82.55 |
| Fire Cycle | 603 | 367 | 5.98 |
| Fissure | 497 | 329 | 1.05 |
| Plaque | 629 | 275 | 7.24 |
| Total | 2488 | 660 | 100.00 |

| Model | mIoU (%) | Dice (%) | PA (%) | Params (M) | FLOPs (G) |
|---|---|---|---|---|---|
| SegFormer | 79.23 ± 0.68 | 96.21 ± 0.44 | 98.91 ± 0.08 | 7.03 | 5.21 |
| U-Net | 78.32 ± 1.73 | 95.43 ± 0.57 | 96.49 ± 0.24 | 13.40 | 48.65 |
| U-Net++ | 76.48 ± 3.23 | 95.35 ± 0.58 | 96.25 ± 0.57 | 9.16 | 54.55 |
| U2-Net | 85.48 ± 0.39 | 98.09 ± 0.13 | 97.27 ± 0.10 | 44.07 | 74.38 |

| Model | Background (%) | Daqu (%) | Fire Cycle (%) | Fissure (%) | Plaque (%) |
|---|---|---|---|---|---|
| SegFormer | 94.28 ± 0.73 | 98.49 ± 0.04 | 91.10 ± 0.06 | 69.70 ± 1.61 | 61.18 ± 2.50 |
| U-Net | 97.68 ± 0.46 | 90.90 ± 0.46 | 70.37 ± 6.45 | 63.71 ± 4.04 | 68.94 ± 1.87 |
| U-Net++ | 97.30 ± 0.98 | 90.51 ± 1.23 | 67.25 ± 8.10 | 62.55 ± 4.83 | 64.82 ± 11.40 |
| U2-Net | 98.53 ± 0.18 | 92.62 ± 0.21 | 82.51 ± 0.65 | 74.81 ± 1.10 | 78.92 ± 0.62 |

| ID | Aug | WCE | Dice Loss | Lovász Loss | mIoU (%) | Dice (%) | PA (%) |
|---|---|---|---|---|---|---|---|
| M1 | - | ✓ | - | - | 84.01 ± 0.34 | 83.76 ± 0.78 | 97.35 ± 0.04 |
| M2 | - | - | ✓ | - | 83.59 ± 0.48 | 80.67 ± 0.63 | 96.86 ± 0.39 |
| M3 | - | - | - | ✓ | 83.17 ± 0.94 | 80.01 ± 1.19 | 97.27 ± 0.09 |
| M4 | ✓ | ✓ | - | - | 85.99 ± 0.45 | 97.70 ± 0.16 | 97.72 ± 0.02 |
| M5 | - | ✓ | ✓ | - | 84.32 ± 0.34 | 98.18 ± 0.09 | 97.37 ± 0.04 |
| M6 | ✓ | ✓ | ✓ | - | 87.05 ± 0.30 | 87.76 ± 0.23 | 97.66 ± 0.02 |
| M7 | - | ✓ | ✓ | ✓ | 85.48 ± 0.39 | 98.09 ± 0.13 | 97.27 ± 0.10 |
| M8 | ✓ | ✓ | ✓ | ✓ | 87.54 ± 0.17 | 98.30 ± 0.10 | 97.68 ± 0.03 |

| ID | Aug | WCE | Dice Loss | Lovász Loss | Background Val IoU (%) | Daqu Val IoU (%) | Fire Cycle Val IoU (%) | Fissure Val IoU (%) | Plaque Val IoU (%) |
|---|---|---|---|---|---|---|---|---|---|
| M1 | - | ✓ | - | - | 98.86 ± 0.11 | 92.76 ± 0.08 | 75.53 ± 1.59 | 73.16 ± 1.44 | 79.75 ± 0.46 |
| M2 | - | - | ✓ | - | 98.08 ± 0.71 | 91.74 ± 0.78 | 77.82 ± 1.04 | 72.23 ± 1.22 | 78.06 ± 1.55 |
| M3 | - | - | - | ✓ | 98.67 ± 0.19 | 92.57 ± 0.15 | 76.76 ± 1.56 | 76.17 ± 1.16 | 71.66 ± 3.49 |
| M4 | ✓ | ✓ | - | - | 99.12 ± 0.02 | 93.60 ± 0.07 | 81.75 ± 1.83 | 73.41 ± 1.71 | 82.07 ± 0.54 |
| M5 | - | ✓ | ✓ | - | 98.68 ± 0.08 | 92.94 ± 0.12 | 76.74 ± 1.31 | 74.01 ± 0.71 | 79.22 ± 0.70 |
| M6 | ✓ | ✓ | ✓ | - | 98.91 ± 0.05 | 93.45 ± 0.04 | 82.72 ± 1.33 | 77.56 ± 0.54 | 82.63 ± 0.30 |
| M7 | - | ✓ | ✓ | ✓ | 98.53 ± 0.18 | 92.62 ± 0.21 | 82.51 ± 0.65 | 74.81 ± 1.10 | 78.92 ± 0.62 |
| M8 | ✓ | ✓ | ✓ | ✓ | 99.02 ± 0.03 | 93.56 ± 0.09 | 82.95 ± 0.44 | 79.46 ± 0.84 | 82.72 ± 0.50 |

| Sample ID | (px) | (px) | Daqu (%) | Fire Cycle (%) | Plaque (%) | Ratio (%) |
|---|---|---|---|---|---|---|
| Daqu_5 | 0.00 | 1066 | 33.78 | 0.00 | 0.00 | 0.00 |
| Daqu_136 | 287.12 | 0 | 29.81 | 6.48 | 0.00 | 0.00 |
| Daqu_210 | 197.65 | 728 | 35.82 | 1.62 | 3.09 | 8.62 |
| Daqu_214 | 0.00 | 0 | 34.24 | 0.00 | 5.17 | 15.10 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Song, Z.; Dong, Y.; Wang, C.; Zhang, X.; Sun, A.; You, C.; Mao, J.; Liu, S. Semantic Segmentation-Based Identification and Quantitative Analysis of Cross-Sectional Quality Features in Luzhou-Flavor Liquor Daqu. Computers 2026, 15, 307. https://doi.org/10.3390/computers15050307


Chicago/Turabian Style: Song, Zheli, Yi Dong, Chao Wang, Xiu Zhang, Aibao Sun, Cuiping You, Jian Mao, and Shuangping Liu. 2026. "Semantic Segmentation-Based Identification and Quantitative Analysis of Cross-Sectional Quality Features in Luzhou-Flavor Liquor Daqu" Computers 15, no. 5: 307. https://doi.org/10.3390/computers15050307
