Artificial Intelligence for High-Availability Systems: A Comprehensive Review

Abstract

1. Introduction

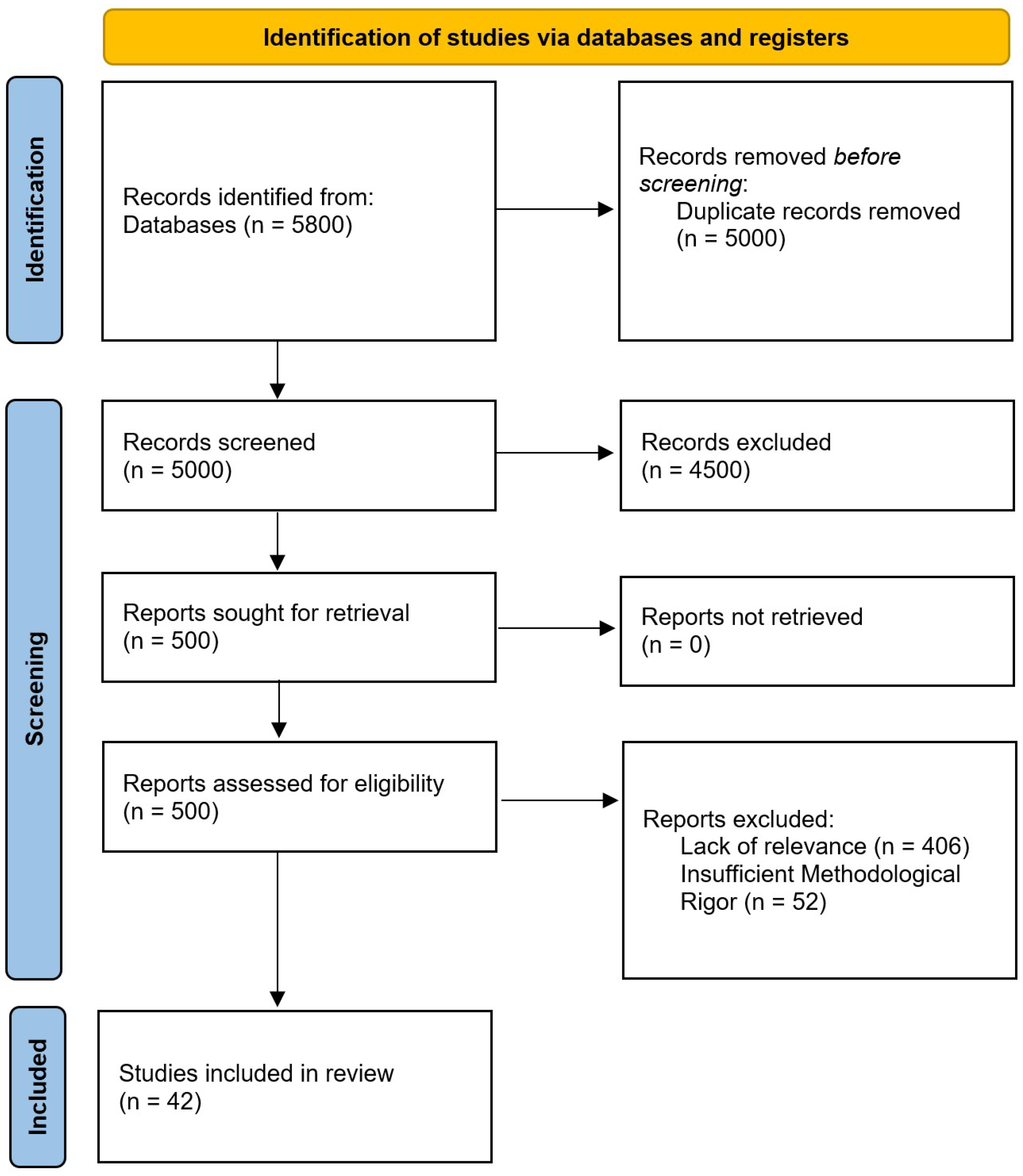

2. Review Research Method

2.1. Research Questions

- RQ1: What types of high-availability architecture adopt AI-based solutions for operational management?

- RQ2: Which AI techniques are employed for monitoring, fault detection, and fault prediction in HA systems?

- RQ3: How are AI-based methods used to support recovery processes and reduce service downtime in HA systems?

- RQ4: What are the main challenges and open research issues in applying AI to the operational management of HA systems?

2.2. Inclusion and Exclusion Criteria

- (I1) Studies explicitly addressing high availability, fault tolerance, or fault management in computing systems.

- (I2) Works proposing or evaluating AI-based techniques for monitoring, fault detection, fault prediction, or recovery.

- (I3) Peer-reviewed journal articles, conference papers, or book chapters.

- (I4) Publications from 2015 to 2025, reflecting the recent adoption of AI in HA systems.

- (I5) English-language publications.

- (E1) Studies lacking technical detail or experimental validation.

- (E2) Works not related to the operational management of HA systems.

- (E3) Non-peer-reviewed sources, such as white papers or technical reports.

- (E4) Duplicate studies.

- (E5) Publications without accessible full text.

2.3. Search Process

- ((high availability OR fault tolerance) AND (monitoring OR fault detection OR fault prediction OR recovery) AND (artificial intelligence OR machine learning OR deep learning))

- Publication venue and year.

- Target system architecture (Cloud, Edge, hybrid).

- AI techniques used.

- Operational focus (monitoring, fault detection, prediction, recovery).

- Evaluation metrics (availability, MTTR, MTTF, downtime).
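
The evaluation metrics listed above are related through the standard steady-state formula A = MTTF / (MTTF + MTTR). The following is a minimal sketch of that arithmetic; the function names and the 999 h / 1 h figures are illustrative assumptions, not values taken from the reviewed studies.

```python
def steady_state_availability(mttf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability A = MTTF / (MTTF + MTTR)."""
    if mttf_hours <= 0 or mttr_hours < 0:
        raise ValueError("MTTF must be positive and MTTR non-negative")
    return mttf_hours / (mttf_hours + mttr_hours)


def annual_downtime_hours(availability: float, hours_per_year: float = 8760.0) -> float:
    """Expected downtime per year implied by a given availability."""
    return (1.0 - availability) * hours_per_year


# Hypothetical service: fails on average every 999 h and takes 1 h to recover.
availability = steady_state_availability(mttf_hours=999.0, mttr_hours=1.0)
downtime = annual_downtime_hours(availability)
print(f"availability={availability:.4f}, downtime={downtime:.2f} h/year")
```

Under these assumed figures the service reaches "three nines" availability, which corresponds to roughly 8.76 hours of downtime per year. Halving MTTR has almost the same effect on availability as doubling MTTF, which is why recovery time is tracked alongside failure prediction.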

2.4. Quality Assessment

- Clarity of the research objectives;

- Adequacy of the methodology;

- Reproducibility of the results;

- Relevance to the research questions;

- Empirical validation (if applicable).

- 0 = low (criterion not satisfied or poorly addressed);

- 1 = medium (partially addressed);

- 2 = high (fully satisfied with clear methodological evidence).
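
The scoring scheme above can be sketched as a small helper. This is an illustration under stated assumptions: the total is taken to be the plain sum of the five criterion scores (0 to 10), the band thresholds follow the score ranges reported in this review's quality table (8–10 high, 6–7 medium, below 6 excluded), and the criterion keys and function names are hypothetical. How a non-applicable empirical-validation criterion is scored is not specified in the text, so the sketch simply requires all five scores.

```python
from typing import Dict

# Hypothetical keys for the five quality criteria listed above.
CRITERIA = (
    "objectives",
    "methodology",
    "reproducibility",
    "relevance",
    "empirical_validation",
)


def total_score(scores: Dict[str, int]) -> int:
    """Sum the per-criterion scores, each of which must be 0, 1, or 2."""
    missing = set(CRITERIA) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    for name in CRITERIA:
        if scores[name] not in (0, 1, 2):
            raise ValueError(f"score for {name!r} must be 0, 1, or 2")
    return sum(scores[name] for name in CRITERIA)


def quality_band(total: int) -> str:
    """Map a total score to the bands used in the quality table."""
    if total >= 8:
        return "high"
    if total >= 6:
        return "medium"
    return "excluded"


example = {
    "objectives": 2,
    "methodology": 2,
    "reproducibility": 1,
    "relevance": 2,
    "empirical_validation": 1,
}
print(total_score(example), quality_band(total_score(example)))
```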

2.5. Classification Rationale

- AI-based Monitoring and Fault Detection: studies focusing on real-time system observation, anomaly identification, and early fault detection.

- AI-based Fault Prediction: studies addressing the anticipation of failures to enable proactive intervention and preventive actions.

- AI-driven Recovery Mechanisms in HA Systems: studies covering automated remediation, system reconfiguration, and service restoration.

2.6. Cross-Study Synthesis and Research Trends

2.7. Trade-Offs Between Classical Machine Learning and Deep Learning Approaches

2.8. Integration of AI Models in Production-Grade HA Systems

2.9. Linking AI Techniques to High-Availability Management

- Fault detection: identification of anomalies or deviations from normal behavior;

- Fault diagnosis: classification and root-cause analysis of detected issues;

- Fault recovery and mitigation: support for automated or semi-automated corrective actions.

2.10. Limitations of Simulation-Based Validation

2.11. Security Considerations and Vulnerabilities

2.12. Search Strategies

3. AI-Based Monitoring and Fault Detection

3.1. Machine Learning Approaches

3.2. Deep Learning Approaches

3.3. Deployment Considerations in Cloud and Edge

3.4. Discussion

4. AI-Based Fault Prediction

4.1. Machine Learning Techniques

4.2. Deep Learning Approaches

4.3. Incorporating Software Defect Prediction

4.4. Application Scenarios

4.5. Performance Metrics and Evaluation

4.6. Comparative Analysis and Critical Commentary

5. AI-Driven Recovery Mechanisms

5.1. Automated Remediation Strategies

5.2. Adaptive Resource Management

5.3. Integration with AI Monitoring and Detection

5.4. Discussion

6. Research Questions Discussion

- RQ1: AI-based solutions for operational management are primarily adopted in high-availability architectures characterized by distribution, scalability requirements, and partial observability of internal states. In Cloud-native environments and large-scale data centers, AI is embedded within microservices and container-orchestrated infrastructures to enhance autoscaling, anomaly detection, and traffic engineering. These architectures typically rely on layered observability pipelines (metrics, logs, traces) feeding ML models that operate either centrally (control-plane intelligence) or in a distributed fashion close to execution nodes. In HPC systems, AI is integrated into job schedulers and log analysis frameworks to predict node failures, classify runtime anomalies, and optimize workload placement under reliability constraints [24]. In cyber–physical systems (CPSs) and Industrial IoT deployments, AI-enhanced HA architectures combine Edge-level inference for low-latency anomaly detection with Cloud-level aggregation for model retraining and long-term optimization [19]. Distributed storage systems adopting erasure coding incorporate predictive models to anticipate disk or node failures and proactively migrate fragments to preserve data availability [62]. Similarly, SDN-enabled Cloud networking architectures embed learned path-quality estimators within controllers to maintain performance and resilience under congestion or partial link failures [66]. Across these domains, AI adoption is most prevalent in architectures featuring (i) software-defined control planes, (ii) high telemetry availability, and (iii) orchestration layers capable of executing automated remediation actions, thus enabling closed-loop intelligent operational management.

- RQ2: AI for monitoring and fault detection in Cloud, CPS, and IoT environments tends to converge on a small set of recurring macro-techniques that differ mainly in (i) data processing, (ii) type of models, and (iii) where computation is placed. On the classical ML side, supervised pipelines based on feature engineering remain common because they are efficient and relatively interpretable: tree-based models such as decision trees and random forests are frequently used for anomaly detection and anomaly-type classification when deviations can be summarized into discriminative features [31], while boosted ensembles (e.g., XGBoost and C5.0) are often top performers for failure/performance prediction from infrastructure telemetry, especially when combined with explicit imbalance handling through over/under-sampling [23]. Linear models also appear as lightweight predictors or baselines in operational analytics, often coupled with periodic retraining to cope with drift in day-to-day deployments [33]. Complementary to purely discriminative learning, several works adopt residual- and statistics-driven detection, where faults are identified through model mismatch rather than directly learned labels: least-squares identified residual generators paired with a decision statistic support distributed fault detection in interconnected systems [32], and distance-based scoring (Mahalanobis distance) combined with adaptive EWMA smoothing and dynamic thresholds enables low-latency anomaly flagging in sensor networks [19]. 
Fault tolerance is also treated as a decision/optimization problem beyond detection: once faults are detected, data-driven predictive control can compute corrective inputs to recover performance [32], while in WSN-assisted IIoT routing, reinforcement learning combined with metaheuristic optimization is used to rapidly reconfigure routes under node/link faults while balancing delay and energy objectives [27].
Deep learning approaches are mainly adopted when the monitored signals are strongly sequential or weakly structured, as with logs and multivariate time series, which makes manual feature extraction brittle: temporal convolutional and attention-based detectors are used to operate online, sometimes preceded by multi-step forecasting to anticipate anomalies ahead of time [18], and transformer encoders adapted via domain/task pretraining support scalable log-based failure classification in HPC settings [24]. For time-series fault classification, sequence models such as multi-scale dilated BiLSTM with attention are used to enlarge receptive fields and focus on salient temporal segments [20]. In addition, hybrid convolutional–recurrent architectures (e.g., CNN–GRU/CNN–LSTM) are often selected in industrial monitoring settings where local temporal patterns and longer dependencies must both be captured, as shown in power grid fault classification from station telemetry [29]. Finally, robustness to missing data and sensor failures is often handled through prediction or generation, enabling monitoring continuity even when sensors drop out: time–frequency-informed GANs with LSTM generators reconstruct missing structural responses while preserving spectral characteristics [34], and compact 1D-CNN models can estimate key environmental variables or discretized states in IoT deployments to tolerate faulty readings [35].
Overall, classical ML dominates when features are well-defined and interpretability is important, statistical/residual methods provide controllable decision rules in distributed settings, and deep sequence or hybrid convolutional–recurrent models are preferred for complex temporal dependencies, unstructured logs, or scarce/rare fault signatures, albeit with higher deployment and maintenance complexity [18,19,29].

- RQ3: AI-based methods reduce service downtime in HA systems by coupling AI monitoring outputs to automated or semi-automated recovery actions. On the monitoring side, log/time-series models (e.g., transformer classifiers or TCN-based detectors, sometimes preceded by forecasting) detect and localize incidents earlier than static thresholds, while statistical/residual techniques provide explicit decision statistics for reliable triggering [18,24,32]. These signals feed recovery mechanisms that either execute pre-approved remediation (restart, isolate, rollback, failover) via an orchestrator, or adapt system behavior through controllers. For example, failure-time/type prediction can drive proactive repair and migration decisions in erasure-coded storage to prevent data unavailability [62]; residual-based detection can directly trigger fault-tolerant control inputs to contain fault propagation [32]; and in networked/IoT settings, anomaly confirmation combined with consensus can justify isolating faulty nodes, while RL-based routing reconfigures paths to maintain delivery under node/link failures [19,27]. In Cloud networking, learned path-quality scoring embedded in an SDN controller can reroute traffic away from congestion, acting as a rapid recovery from performance degradation [66]. Across these approaches, downtime is reduced by (i) anticipating failures, (ii) narrowing action scope to the affected components, and (iii) gating automation with confidence/validation to avoid disruptive false positives [63].

- RQ4: The main challenges and open research issues in applying AI to operational management of HA systems concern reliability guarantees, life cycle management, scalability, and trustworthiness. First, AI models introduce probabilistic decision making into infrastructures that traditionally rely on deterministic guarantees; ensuring bounded risk in anomaly detection and prediction remains an open problem, particularly under concept drift and non-stationary workloads [33]. Second, maintaining model validity over time requires continuous retraining, validation, and monitoring pipelines (MLOps integration), which increases architectural complexity and operational overhead. Third, scalability constraints arise when deep sequence or transformer-based models process high-volume telemetry streams, potentially conflicting with latency requirements of HA control loops [24]. Data sparsity for rare fault events further limits supervised approaches, motivating research into self-supervised, transfer, and few-shot learning paradigms. Explainability and accountability also remain critical challenges, especially in CPS and IIoT contexts where automated remediation may affect physical processes [32]. Security concerns—including adversarial manipulation of telemetry data or poisoning of training pipelines—represent an emerging research frontier, as compromising the AI layer may indirectly degrade availability policies. Finally, hybrid architectures that combine rule-based safeguards with learning-based adaptation are increasingly recognized as necessary to prevent the intelligence layer itself from becoming a single point of failure. Addressing these issues requires advances in robust learning, drift-aware adaptation, certifiable AI, and dependable AI–orchestrator co-design.
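
The distance-based scheme mentioned under RQ2 (Mahalanobis scoring, EWMA smoothing, and a dynamic threshold) can be sketched in plain Python as follows. This is a simplified illustration, not the method of [19]: the smoothing factor `alpha` is fixed rather than adaptive, the threshold is an assumed "mean plus k standard deviations" rule that tracks non-flagged scores, and all names, parameters, and sample data are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def mean_and_cov(rows: Sequence[Vector]) -> Tuple[List[float], List[List[float]]]:
    """Sample mean vector and unbiased covariance matrix of the rows."""
    n, d = len(rows), len(rows[0])
    mean = [sum(r[j] for r in rows) / n for j in range(d)]
    cov = [[sum((r[i] - mean[i]) * (r[j] - mean[j]) for r in rows) / (n - 1)
            for j in range(d)] for i in range(d)]
    return mean, cov


def solve(a: Sequence[Vector], b: Vector) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("covariance matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def mahalanobis(x: Vector, mean: Vector, cov: Sequence[Vector]) -> float:
    """sqrt((x - mean)^T cov^-1 (x - mean)), computed without an explicit inverse."""
    diff = [xi - mi for xi, mi in zip(x, mean)]
    weighted = solve(cov, diff)
    return math.sqrt(max(0.0, sum(d * w for d, w in zip(diff, weighted))))


def flag_anomalies(baseline: Sequence[Vector], stream: Sequence[Vector],
                   alpha: float = 0.5, beta: float = 0.05, k: float = 3.0) -> List[bool]:
    """Flag samples whose EWMA-smoothed distance exceeds mu + k * sigma."""
    mean, cov = mean_and_cov(baseline)
    scores = [mahalanobis(r, mean, cov) for r in baseline]
    mu = sum(scores) / len(scores)
    var = sum((s - mu) ** 2 for s in scores) / (len(scores) - 1)
    smoothed, flags = mu, []
    for row in stream:
        score = mahalanobis(row, mean, cov)
        smoothed = alpha * score + (1 - alpha) * smoothed  # EWMA of the score
        is_anomaly = smoothed > mu + k * math.sqrt(var)    # dynamic threshold
        flags.append(is_anomaly)
        if not is_anomaly:  # let the threshold follow slow drift of normal data
            delta = score - mu
            mu += beta * delta
            var = (1 - beta) * (var + beta * delta * delta)
    return flags


# Hypothetical (CPU %, latency ms) telemetry; the last two samples are a latency fault.
BASELINE = [(50, 100), (52, 104), (48, 97), (51, 101),
            (49, 99), (53, 103), (47, 98), (50, 102)]
STREAM = [(50, 101), (51, 102), (50, 112), (50, 113)]
print(flag_anomalies(BASELINE, STREAM))
```

Because the score is smoothed, a flag can persist for a few samples after the signal returns to normal; a smaller `alpha` suppresses isolated spikes at the cost of slower detection and slower release.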

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Bartlett, J.; Gray, J.; Horst, B. Fault tolerance in tandem computer systems. In The Evolution of Fault-Tolerant Computing: In the Honor of William C. Carter; Springer: Berlin/Heidelberg, Germany, 1987; pp. 55–76.

2. Somasekaram, P.; Calinescu, R.; Buyya, R. High-availability clusters: A taxonomy, survey, and future directions. J. Syst. Softw. 2022, 187, 111208.

3. Vega-Luna, J.; Salgado-Guzmán, G.; Cosme-Aceves, J.; Tapia-Vargas, V.; Andrade-González, E. Linux cluster programming for high availability with an Oracle instance. J. Appl. Res. Technol. 2025, 23, 108–119.

4. Hark, R.; Koziolek, H.; Yussupov, V.; Eskandani, N. Kubernetes High-Availability Software Architecture Options for Two-Node Clusters in IoT Applications. In Proceedings of the 2025 IEEE 22nd International Conference on Software Architecture Companion (ICSA-C), Stuttgart, Germany, 31 March–4 April 2025; IEEE: Piscataway, NJ, USA; pp. 69–76.

5. Malleni, S.S.; Sevilla, R.; Lema, J.C.; Bauer, A. Bridging Clusters: A Comparative Look at Multi-Cluster Networking Performance in Kubernetes. In Proceedings of the 16th ACM/SPEC International Conference on Performance Engineering, Toronto, ON, Canada, 5–9 May 2025; pp. 113–123.

6. Nguyen, T.N.; Lee, J.; Vitumbiko, M.; Kim, Y. A design and development of operator for logical kubernetes cluster over distributed clouds. In Proceedings of the NOMS 2024–2024 IEEE Network Operations and Management Symposium, Seoul, Republic of Korea, 6–10 May 2024; IEEE: Piscataway, NJ, USA; pp. 1–6.

7. Pereira, P.; Araujo, J.; Melo, C.; Santos, V.; Maciel, P. Analytical models for availability evaluation of edge and fog computing nodes. J. Supercomput. 2021, 77, 9905–9933.

8. Sangolli, D.R.; Ravindrarao, N.M.; Patil, P.C.; Palissery, T.; Liu, K. Enabling high availability edge computing platform. In Proceedings of the 2019 7th IEEE International Conference on Mobile Cloud Computing, Services, and Engineering (MobileCloud), Paris, France, 24–27 June 2019; IEEE Computer Society: Los Alamitos, CA, USA; pp. 85–92.

9. Swetha, R.; Thriveni, J.; Venugopal, K. Resource Utilization-Based Container Orchestration: Closing the Gap for Enhanced Cloud Application Performance. SN Comput. Sci. 2025, 6, 191.

10. Liu, X.; Cheng, B.; Wang, S. Availability-aware and energy-efficient virtual cluster allocation based on multi-objective optimization in cloud datacenters. IEEE Trans. Netw. Serv. Manag. 2020, 17, 972–985.

11. Sivakumar, J.; Salman, N.R.; Salman, F.R.; Salimova, H.R.; Ghimire, E. AI-driven cyber threat detection: Enhancing security through intelligent engineering systems. J. Inf. Syst. Eng. Manag. 2025, 10, 790–798.

12. Gbenle, P.; Abieba, O.A.; Owobu, W.O.; Onoja, J.P.; Daraojimba, A.I.; Adepoju, A.H.; Chibunna, U.B. A Conceptual Model for Scalable and Fault-Tolerant Cloud-Native Architectures Supporting Critical Real-Time Analytics in Emergency Response Systems. 2021. Available online: https://worldscientificnews.com/a-conceptual-model-for-scalable-and-fault-tolerant-cloud-native-architectures-supporting-critical-real-time-analytics-in-emergency-response-systems/ (accessed on 10 January 2026).

13. Toumi, N.; Bagaa, M.; Ksentini, A. Machine learning for service migration: A survey. IEEE Commun. Surv. Tutor. 2023, 25, 1991–2020.

14. Endo, P.T.; Rodrigues, M.; Gonçalves, G.E.; Kelner, J.; Sadok, D.H.; Curescu, C. High availability in clouds: Systematic review and research challenges. J. Cloud Comput. 2016, 5, 16.

15. Maciel, P.; Dantas, J.; Melo, C.; Pereira, P.; Oliveira, F.; Araujo, J.; Matos, R. A survey on reliability and availability modeling of edge, fog, and cloud computing. J. Reliab. Intell. Environ. 2022, 8, 227–245.

16. Hasan, M.; Goraya, M.S. Fault tolerance in cloud computing environment: A systematic survey. Comput. Ind. 2018, 99, 156–172.

17. Kitchenham, B. Procedures for Performing Systematic Reviews; Technical Report; Keele University: Keele, UK, 2004.

18. Guo, Y.; Sun, Y.; Xiong, P. Research on Online Log Anomaly Detection Model Based on Informer. Concurr. Comput. Pract. Exp. 2025, 37, e70300.

19. Boubiche, S.; Boubiche, D.E.; Toral-Cruz, H. ASOCIDA: Adaptive self-optimizing approach for anomaly detection and collaborative isolation in wireless sensor networks. Ad Hoc Netw. 2025, 178, 103959.

20. Selvaraj, C.; Justin, J. Automatic fault detection in self healing network using multiscale dilated bidirectional long short-term memory with attention mechanism. Signal Image Video Process. 2025, 19, 907.

21. Zhuang, N.; Ren, Z.; Yang, D.; Tian, X.; Wang, Y. A Knowledge-Guide Data-Driven Model with Selective Wavelet Kernel Fusion Neural Network for Gearbox Intelligent Fault Diagnosis. Sensors 2025, 25, 7656.

22. Tran, D.H.; Nguyen, V.L.; Nguyen, H.; Jang, Y.M. Self-Supervised Learning for Time-Series Anomaly Detection in Industrial Internet of Things. Electronics 2022, 11, 2146.

23. Kalaskar, C.; Thangam, S. Fault Tolerance of Cloud Infrastructure with Machine Learning. Cybern. Inf. Technol. 2023, 23, 26–50.

24. Bang, B.; Kim, J.; Song, U.; Lee, J.; Ma, J. HAMS: An AI-driven framework for real-time failure detection in HPC system logs. J. Supercomput. 2025, 81, 1364.

25. Gollapalli, M.; Al Metrik, M.; Alnajrani, B.; Alomari, A.; AlDawoud, S.; Almunsour, Y.; Abdulqader, M.; Aloup, K. Task Failure Prediction Using Machine Learning Techniques in the Google Cluster Trace Cloud Computing Environment. Math. Model. Eng. Probl. 2022, 9, 545–553.

26. Carter, A.; Imtiaz, S.; Naterer, G.F. Review of interpretable machine learning for process industries. Process Saf. Environ. Prot. 2023, 170, 647–659.

27. Kaur, G.; Chanak, P. An Intelligent Fault Tolerant Data Routing Scheme for Wireless Sensor Network-Assisted Industrial Internet of Things. IEEE Trans. Ind. Inform. 2023, 19, 5543–5553.

28. Zhao, X.; Guo, F.; Huang, A.; Ding, J.; Yan, C.; Yuan, W.; Su, Y.; Li, Q.; Zhang, Q. Adaptive resource management in dynamic Cyber–Physical Systems using Artificial Intelligence. Eng. Appl. Artif. Intell. 2025, 162, 112409.

29. Almasoudi, F.M. Enhancing Power Grid Resilience through Real-Time Fault Detection and Remediation Using Advanced Hybrid Machine Learning Models. Sustainability 2023, 15, 8348.

30. De la Cruz Cabello, M.; Prince Sales, T.; Machado, M.R. AIOps for log anomaly detection in the era of LLMs: A systematic literature review. Intell. Syst. Appl. 2025, 28, 200608.

31. Odyurt, U.; Pimentel, A.D.; Gonzalez Alonso, I. Improving the robustness of industrial Cyber–Physical Systems through machine learning-based performance anomaly identification. J. Syst. Archit. 2022, 131, 102716.

32. Li, B.; Li, W.; Yang, Y. Data-Driven Distributed Fault Detection and Fault-Tolerant Control for Large-Scale Systems: A Subspace Predictor-Assisted Integrated Design Scheme. IEEE Trans. Cybern. 2025, 55, 5346–5357.

33. Khomenko, Y.; Babichev, S. Modular IoT Architecture for Monitoring and Control of Office Environments Based on Home Assistant. IoT 2025, 6, 69.

34. Wang, Z.; Kachireddy, M.; Mondal, T.G.; Tang, W.; Jahanshahi, M.R. Time-frequency informed stacked long short-term memory-based generative adversarial network for missing data imputation in sensor networks. Eng. Appl. Artif. Intell. 2025, 155, 110973.

35. Mohammadhossein Shekarian, S.; Aminian, M.; Mohammad Fallah, A.; Akbary Moghaddam, V. AI-powered sensor fault detection for cost-effective smart greenhouses. Comput. Electron. Agric. 2024, 224, 109198.

36. Isern, J.; Jimenez-Perera, G.; Medina-Valdes, L.; Chaves, P.; Pampliega, D.; Ramos, F.; Barranco, F. A Cyber-Physical System for Integrated Remote Control and Protection of Smart grid Critical Infrastructures. J. Signal Process. Syst. 2023, 95, 1127–1140.

37. Comi, A.; Fotia, L.; Messina, F.; Rosaci, D.; Sarné, G.M. Grouptrust: Finding trust-based group structures in social communities. In Proceedings of the International Symposium on Intelligent and Distributed Computing, Paris, France, 21–23 September 2016; Springer: Cham, Switzerland; pp. 143–152.

38. Comi, A.; Fotia, L.; Messina, F.; Pappalardo, G.; Rosaci, D.; Sarné, G.M. Forming homogeneous classes for e-learning in a social network scenario. In Proceedings of the Intelligent Distributed Computing IX: Proceedings of the 9th International Symposium on Intelligent Distributed Computing–IDC’2015, Guimarães, Portugal, 21–23 September 2015; Springer: Cham, Switzerland; pp. 131–141.

39. Comi, A.; Fotia, L.; Messina, F.; Pappalardo, G.; Rosaci, D.; Sarné, G.M. An evolutionary approach for cloud learning agents in multi-cloud distributed contexts. In Proceedings of the 2015 IEEE 24th International Conference on Enabling Technologies: Infrastructure for Collaborative Enterprises, Larnaca, Cyprus, 15–17 June 2015; IEEE: Piscataway, NJ, USA; pp. 99–104.

40. Comi, A.; Fotia, L.; Messina, F.; Pappalardo, G.; Rosaci, D.; Sarné, G.M. Using semantic negotiation for ontology enrichment in e-learning multi-agent systems. In Proceedings of the 2015 Ninth International Conference on Complex, Intelligent, and Software Intensive Systems, Blumenau, Brazil, 8–10 July 2015; IEEE: Piscataway, NJ, USA; pp. 474–479.

41. Comi, A.; Fotia, L.; Messina, F.; Rosaci, D.; Sarné, G.M. A qos-aware, trust-based aggregation model for grid federations. In Proceedings of the OTM Confederated International Conferences “On the Move to Meaningful Internet Systems”, Amantea, Italy, 27–31 October 2014; Springer: Berlin/Heidelberg, Germany; pp. 277–294.

42. Mohammed, B.; Awan, I.; Ugail, H.; Younas, M. Failure prediction using machine learning in a virtualised HPC system and application. Clust. Comput. 2019, 22, 471–485.

43. Yang, H.; Kim, Y. Design and Implementation of Machine Learning-Based Fault Prediction System in Cloud Infrastructure. Electronics 2022, 11, 3765.

44. Asmawi, T.N.T.; Ismail, A.; Shen, J. Cloud failure prediction based on traditional machine learning and deep learning. J. Cloud Comput. 2022, 11, 47.

45. Albattah, W.; Alzahrani, M. Software defect prediction based on machine learning and deep learning techniques: An empirical approach. AI 2024, 5, 1743–1758.

46. Yu, H.; Zhang, Y.; Li, Q.; Wang, X.; Liu, Z.; Chen, Y. Research on software defect prediction based on machine learning. J. Chongqing Univ. 2025, 48, 10–21.

47. Pachouly, J.; Ahirrao, S.; Kotecha, K.; Selvachandran, G.; Abraham, A. A systematic literature review on software defect prediction using artificial intelligence: Datasets, Data Validation Methods, Approaches, and Tools. Eng. Appl. Artif. Intell. 2022, 111, 104773.

48. Ubal, C.; Di-Giorgi, G.; Contreras-Reyes, J.E.; Salas, R. Predicting the Long-Term Dependencies in Time Series Using Recurrent Artificial Neural Networks. Mach. Learn. Knowl. Extr. 2023, 5, 1340–1358.

49. Uppal, M.; Gupta, D.; Juneja, S.; Sulaiman, A.; Rajab, K.; Rajab, A.; Elmagzoub, M.A.; Shaikh, A. Cloud-Based Fault Prediction for Real-Time Monitoring of Sensor Data in Hospital Environment Using Machine Learning. Sustainability 2022, 14, 11667.

50. Borghesi, A.; Libri, A.; Benini, L.; Bartolini, A. Online Anomaly Detection in HPC Systems. In Proceedings of the IEEE International Conference on Artificial Intelligence Circuits and Systems (AICAS), Hsinchu, Taiwan, 18–20 March 2019; pp. 229–233.

51. Netti, A.; Kiziltan, Z.; Babaoglu, O.; Sîrbu, A.; Bartolini, A.; Borghesi, A. FINJ: A Fault Injection Tool for HPC Systems. In Proceedings of the Euro-Par 2018: Parallel Processing Workshops, Turin, Italy, 27–31 August 2018; Springer: Cham, Switzerland; Volume 11339, pp. 800–812.

52. Jorayeva, M.; Akbulut, A.; Catal, C.; Mishra, A. Machine Learning-Based Software Defect Prediction for Mobile Applications: A Systematic Literature Review. Sensors 2022, 22, 2551.

53. Yang, Y.; Wang, H. Random Forest-Based Machine Failure Prediction: A Performance Comparison. Appl. Sci. 2025, 15, 8841.

54. Ji, Y.; Gu, L.; Huang, H.; Wang, W.; Zhang, W. Research on fault prediction and management of charging station combined with deep learning model. Int. J. Low Carbon Technol. 2025, 20, 848–854.

55. Wang, L.; Zhu, Z.; Zhao, X. Dynamic predictive maintenance strategy for system remaining useful life prediction via deep learning ensemble method. Reliab. Eng. Syst. Saf. 2024, 245, 110012.

56. Akheel, M.; Anjanikar, A.; Navale, S.; Sairam, V.; Pareek, P.; Wagle, S.A.; Ansari, M.A. Proactive maintenance for water pumps: Multitask neural network for fault detection and RUL estimation. Life Cycle Reliab. Saf. Eng. 2025, 15, 249–260.

57. Abdu, A.; Zhai, Z.; Abdo, H.A.; Algabri, R.; Lee, S. Graph-Based Feature Learning for Cross-Project Software Defect Prediction. Comput. Mater. Contin. 2023, 77, 161–180.

58. Zhang, W.; Zhao, J.; Qin, G.; Wang, S. Cross-project defect prediction based on autoencoder with dynamic adversarial adaptation. Appl. Intell. 2025, 55, 324.

59. Bommala, H.; Udaya, M.V. Machine learning job failure analysis and prediction model for the cloud environment. High Confid. Comput. 2023, 3, 100165.

60. Rafique, S.H.; Abdallah, A.; Musa, N.S.; Murugan, T. Machine learning and deep learning techniques for internet of things network anomaly detection—Current research trends. Sensors 2024, 24, 1968.

61. Bellini, E.; D’Aniello, G.; Flammini, F.; Gaeta, R. Situation Awareness for Cyber Resilience: A review. Int. J. Crit. Infrastruct. Prot. 2025, 49, 100755.

62. Song, Y.; Zheng, P.; Tian, Y.; Wang, B. ACPR: Adaptive Classification Predictive Repair Method for Different Fault Scenarios. IEEE Access 2024, 12, 4631–4641.

63. Zota, R.D.; Bărbulescu, C.; Constantinescu, R. A Practical Approach to Defining a Framework for Developing an Agentic AIOps System. Electronics 2025, 14, 1775.

64. Toprani, D.; Madisetti, V.K. LLM Agentic Workflow for Automated Vulnerability Detection and Remediation in Infrastructure-as-Code. IEEE Access 2025, 13, 69175–69181.

65. Al-Na’amneh, Q.; Aljawarneh, M.; Hazaymih, R.; Alsarhan, A.; Alnafisah, K.; Alshammari, N.; Alshammari, S. Autonomous Self-Adaptation in the Cloud: ML-Heal’s Framework for Proactive Fault Detection and Recovery. Int. J. Adv. Comput. Sci. Appl. 2025, 16.

66. İpek, A.D.; Cicioğlu, M.; Çalhan, A. AIRSDN: AI based routing in software-defined networks for multimedia traffic transmission. Comput. Commun. 2025, 240, 108222.

67. Lv, J.; Babbar, H.; Rani, S. AI-Driven Resource Management for Energy-Efficient Aerial Computing in Large-Scale Healthcare SDN–IoT Systems. IEEE Internet Things J. 2025, 12, 23536–23549.

68. Acharya, D.B.; Kuppan, K.; Divya, B. Agentic AI: Autonomous intelligence for complex goals—A comprehensive survey. IEEE Access 2025, 13, 18912–18936.

| Score Range | Number of Studies | Interpretation |
|---|---|---|
| 8–10 | 24 | High quality |
| 6–7 | 18 | Medium quality |
| <6 | 0 | Excluded |

| AI Technique | Fault Detection | Diagnosis | Recovery | Support |
|---|---|---|---|---|
| Anomaly Detection [18,19,20] | ✓ | ✓ | | |
| Classification Models [21,22,23] | ✓ | ✓ | ✓ | |
| Time-series Forecasting [18,24,25,26] | ✓ | ✓ | | |
| Reinforcement Learning [27,28] | ✓ | ✓ | | |
| Hybrid Approaches [29] | ✓ | ✓ | ✓ | ✓ |

| Macro-Technique | Input | Models/Mechanisms | Main Use |
|---|---|---|---|
| Supervised ML | Telemetry/features | DT, RF [31]; XGBoost, C5.0 [23]; linear/ridge [33] | Fault/anomaly classification; interpretable drivers |
| Residual/statistical | I/O signals | LS residuals + decision-statistic test [32] | Fault decision via test statistic (distributed-friendly) |
| Distance/thresholds | Sensor streams | Mahalanobis–adaptive EWMA–thresholds [19] | Fast anomaly flagging; adaptive false-alarm control |
| Deep sequence models | Logs/time-series | TCN+attn (+forecast) [18]; Transformers [24]; dilated BiLSTM+attn [20]; CNN–GRU/CNN–LSTM hybrids [29] | Temporal anomaly detection and early warning; fault classification from sequential telemetry |
| Imputation/reconstruction | Gappy time-series | LSTM-GAN–PSD [34]; 1D-CNN surrogates [35] | Recover missing data to keep monitoring reliable |
| Detection–mitigation | Context–alarms | Predictive control [32]; RL–metaheuristic routing [27]; consensus/isolation [19]; remedial action enablement via fast classification [29] | Trigger recovery actions; limit propagation |

| Family | Advantages | Typical Limitations |
|---|---|---|
| Trees/ensembles (RF, boosting) | Strong performance on tabular telemetry; handles nonlinearities; some interpretability; efficient inference | Sensitive to labeling/preprocessing and imbalance choices [23]; may degrade under drift if not recalibrated [33] |
| Statistical/residual methods | Explicit decision statistic (thresholding); good for distributed/engineering settings [32]; controllable false alarms | May rely on structural assumptions; can be restrictive under complex topologies [32] |
| Deep sequence models (TCN/LSTM/Transformers; CNN–RNN hybrids) | Learns temporal features; strong on logs/time-series; supports early warning via forecasting [18,24]; effective fault classification from sequential telemetry with hybrid CNN–GRU/LSTM designs [29] | Heavier deployment; log parsing/template dependence; portability issues across sources [18,24]; may be station/system-specific and sensitive to rare-fault regimes without careful validation [29] |
| Generative/imputation models | Maintains monitoring under missing data; can preserve spectral/physical properties [34] | Additional component to train/monitor; sensitive to sensor placement/noise [34] |

| Metric | Description and Critical Commentary |
|---|---|
| Accuracy | Ratio of correctly classified instances to total instances. Misleading in imbalanced datasets where the majority class dominates. |
| Precision | Proportion of true positives among all positive predictions. High precision reduces unnecessary interventions. |
| Recall (Sensitivity) | Proportion of actual faults correctly detected. Critical in HA as missed faults may lead to outages. |
| F1-score | Harmonic mean of precision and recall. Provides a balanced measure, especially when both false positives and false negatives are important. |
| AUC–ROC | Evaluates classification quality across thresholds. Useful for selecting operating points in probabilistic models. |
| MCC | Balanced metric suitable for imbalanced datasets. Provides more reliable comparison across models than accuracy. |
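
The remark that accuracy is misleading under class imbalance can be checked with a short calculation. The sketch below computes the threshold-dependent metrics from the table out of confusion-matrix counts (AUC–ROC is omitted because it needs ranked scores rather than counts). The function name and the example counts are hypothetical: 1000 samples with 10 true faults.

```python
import math
from typing import Dict


def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """Accuracy, precision, recall, F1, and MCC from confusion-matrix counts.

    Ratios with a zero denominator are reported as 0.0 by convention.
    """
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1, "mcc": mcc}


# A trivial model that never predicts a fault on 1000 samples with 10 faults.
always_healthy = classification_metrics(tp=0, fp=0, fn=10, tn=990)

# A model that finds 8 of the 10 faults at the cost of 12 false alarms.
detector = classification_metrics(tp=8, fp=12, fn=2, tn=978)

for name, m in (("always_healthy", always_healthy), ("detector", detector)):
    print(name, {k: round(v, 3) for k, v in m.items()})
```

The trivial model scores 0.99 accuracy while detecting no faults at all (recall and MCC are both 0), whereas the useful detector has slightly lower accuracy but a recall of 0.8 and an MCC of about 0.56.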

| Metric | Use in HA Systems and Critical Insights |
|---|---|
| Lead Time | Time difference between prediction and actual fault. Longer lead times enable proactive interventions such as migration or resource reallocation. |
| Mean Time to Prediction (MTTP) | Average duration between fault detection and occurrence. Short MTTP may reduce operational benefit despite high classification accuracy. |
| Mean Time to Recovery (MTTR) Impact | Quantifies reduction in recovery time due to early fault prediction. Links predictive performance to measurable operational gains. |
| False Alarm Rate | Frequency of incorrect fault predictions. High rates can cause unnecessary remediation, wasting resources and potentially destabilizing HA systems. |
| Prediction Horizon Coverage | Range over which model predictions remain reliable. Longer horizons are desirable for strategic planning but may decrease precision. |
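
Two of the operational metrics above, lead time and false alarm rate, can be computed from alarm and fault timestamps. The sketch below makes several assumptions that the table does not fix: an alarm counts as correct if a fault occurs within a chosen `horizon` after it, the false alarm rate is taken as the share of alarms with no such fault (other definitions, such as FP/(FP+TN), are also common), and all names and timestamps are hypothetical.

```python
from statistics import mean
from typing import Dict, List, Optional, Sequence


def lead_times(alarm_times: Sequence[float], fault_times: Sequence[float],
               horizon: float) -> List[Optional[float]]:
    """For each alarm, the time to the first fault within the horizon, else None."""
    result: List[Optional[float]] = []
    for alarm in alarm_times:
        gaps = [fault - alarm for fault in fault_times
                if 0 <= fault - alarm <= horizon]
        result.append(min(gaps) if gaps else None)
    return result


def summarize(alarm_times: Sequence[float], fault_times: Sequence[float],
              horizon: float) -> Dict[str, Optional[float]]:
    """Mean lead time of correct alarms and share of alarms that were false."""
    leads = lead_times(alarm_times, fault_times, horizon)
    hits = [lead for lead in leads if lead is not None]
    return {
        "mean_lead_time": mean(hits) if hits else None,
        "false_alarm_share": (len(leads) - len(hits)) / len(leads) if leads else 0.0,
    }


# Hypothetical timestamps in seconds: three alarms, two faults, 120 s horizon.
ALARMS = [100, 400, 900]
FAULTS = [160, 1000]
report = summarize(ALARMS, FAULTS, horizon=120)
print(report)
```

In this example the alarms at 100 s and 900 s precede faults by 60 s and 100 s, so the mean lead time is 80 s, while the alarm at 400 s has no matching fault, giving a false alarm share of one third. Widening the horizon raises the share of alarms counted as correct but weakens the link between an alarm and the fault it is credited with.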


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Fotia, L.; Gaeta, R.; Messina, F.; Rosaci, D.; Sarné, G.M.L. Artificial Intelligence for High-Availability Systems: A Comprehensive Review. Computers 2026, 15, 231. https://doi.org/10.3390/computers15040231
