Weed Detection in Challenging Field Conditions: A Semi-Supervised Framework for Overcoming Shadow Bias and Data Scarcity

Abstract

1. Introduction

- A systematic comparative framework that benchmarks both quadrant-based classification (ResNet) and object detection (YOLO, RF-DETR), incorporating Grad-CAM interpretability analysis to diagnose “shadow bias” as a critical failure mode in agricultural vision systems.

- A diagnostic-driven semi-supervised learning pipeline that integrates unlabelled data through single-pass pseudo-labelling, demonstrating measurable improvements in recall and generalisation under challenging field conditions.

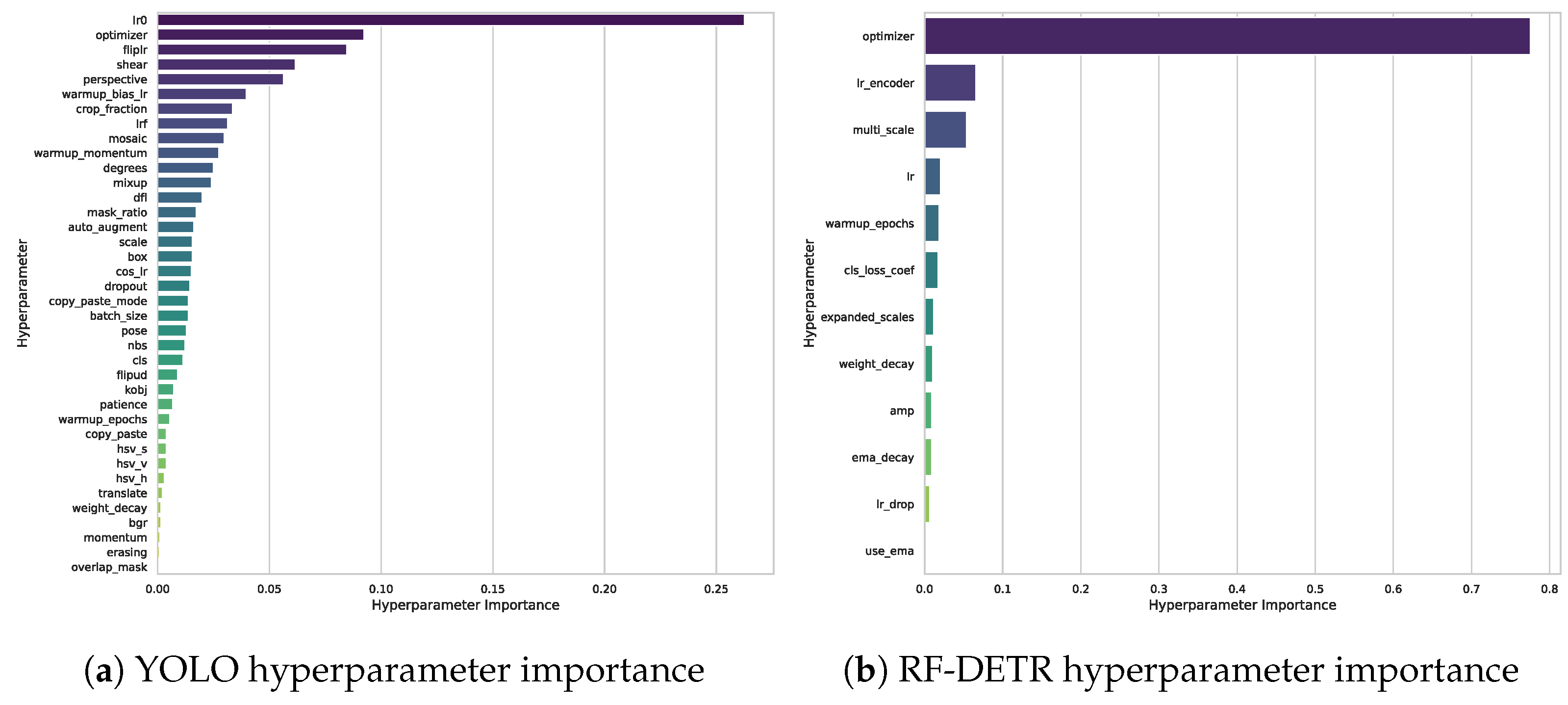

- A rigorous and transparent evaluation methodology, including explicit data leakage prevention, cross-architecture comparison with Optuna hyperparameter optimisation, and external validation on the public CropAndWeed benchmark.

2. Related Work

2.1. Classical and Early Machine Learning Approaches

2.2. Supervised Deep Learning for Weed Detection

2.3. Transformer-Based Architectures in Agriculture

2.4. Semi-Supervised Learning to Reduce Annotation Burden

3. Experiments

3.1. Dataset Curation and Preparation

3.1.1. Labelled Datasets: A and B

- Dataset A: Comprising images from paddocks ‘mw5_1330’ and ‘mw5_1331’, this set features relatively clear, well-lit conditions, serving as our baseline for model performance. Dataset A contains approximately 620 images at 4000 × 3000 pixel resolution, with 4442 sugarcane and 351 Guinea Grass bounding-box annotations, collected under predominantly sunny midday conditions.

- Dataset B: A more challenging compilation including images from ‘mw5_1327’ and ‘paddock_wt2’. Dataset B contains approximately 355 images with 506 sugarcane and 281 Guinea Grass annotations. This set is characterised by darker images, strong, inconsistent shadows, and higher visual similarity between crop and weed, designed specifically to test model robustness under adverse conditions. Quantitative illumination statistics are not available for these subsets; the qualitative distinction is supported by Figure 1 and the performance differential in our experiments.
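
The annotation counts above imply very different crop-to-weed ratios in the two datasets. The sketch below works through that arithmetic. The inverse-frequency weighting in `inverse_frequency_weights` is a common heuristic included for illustration only; it is an assumption and not necessarily the strategy used in Section 3.1.3.

```python
# Annotation counts as stated for Datasets A and B.
counts = {
    "A": {"sugarcane": 4442, "guinea_grass": 351},
    "B": {"sugarcane": 506, "guinea_grass": 281},
}


def imbalance_ratio(class_counts):
    """Sugarcane annotations per Guinea Grass annotation."""
    return class_counts["sugarcane"] / class_counts["guinea_grass"]


def inverse_frequency_weights(class_counts):
    """Hypothetical class weights: total / (num_classes * class_count)."""
    total = sum(class_counts.values())
    num_classes = len(class_counts)
    return {name: total / (num_classes * n) for name, n in class_counts.items()}


ratios = {name: imbalance_ratio(c) for name, c in counts.items()}
weights = {name: inverse_frequency_weights(c) for name, c in counts.items()}

for name in counts:
    print(f"Dataset {name}: ratio = {ratios[name]:.2f}, weights = {weights[name]}")
```

Under these counts, Dataset A has roughly 12.7 sugarcane boxes per Guinea Grass box, while Dataset B has roughly 1.8, so the weed class is far rarer in the well-lit set than in the shadowed one.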

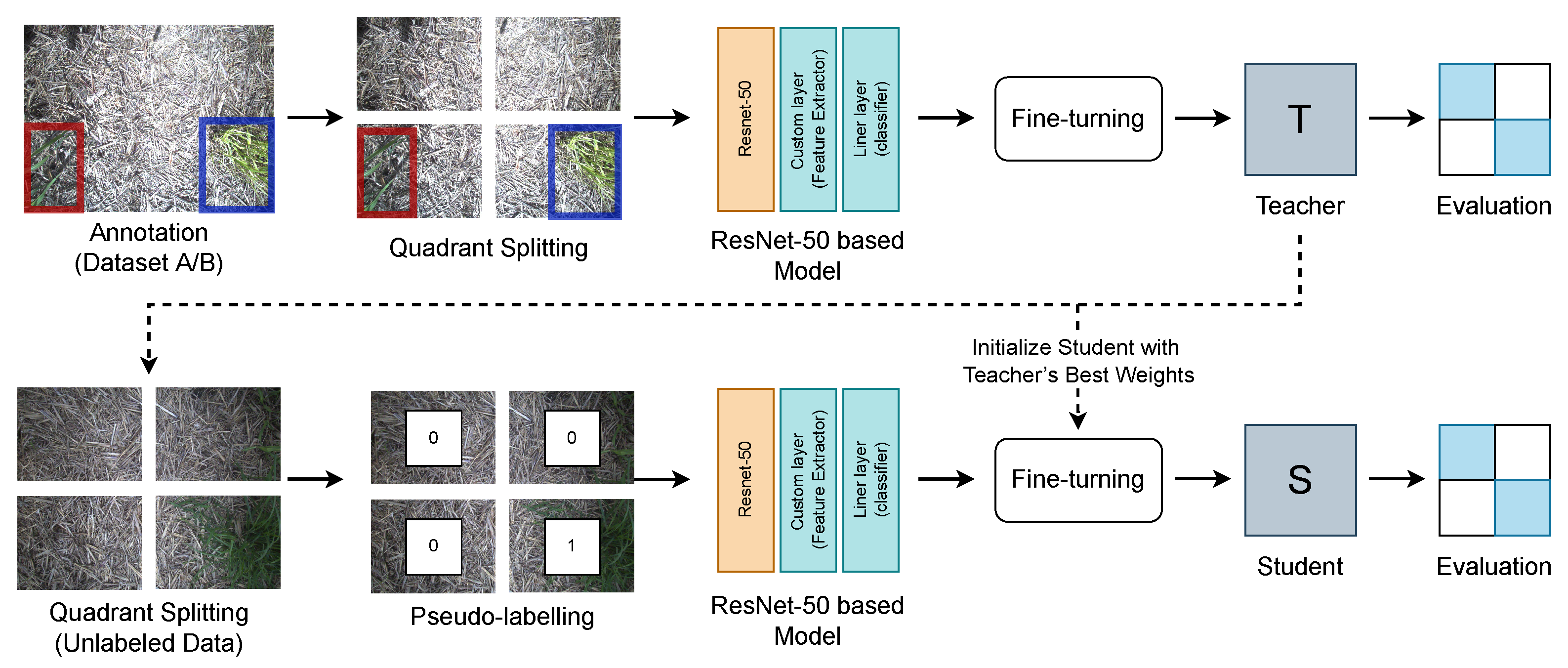

3.1.2. Quadrant Splitting and Label Generation for Classification

3.1.3. Handling Class Imbalance

3.1.4. Dataset Integrity and Validation Strategy

3.1.5. Public Benchmark Dataset for Method Validation

3.2. Supervised Learning Pipelines

3.2.1. Model Architectures

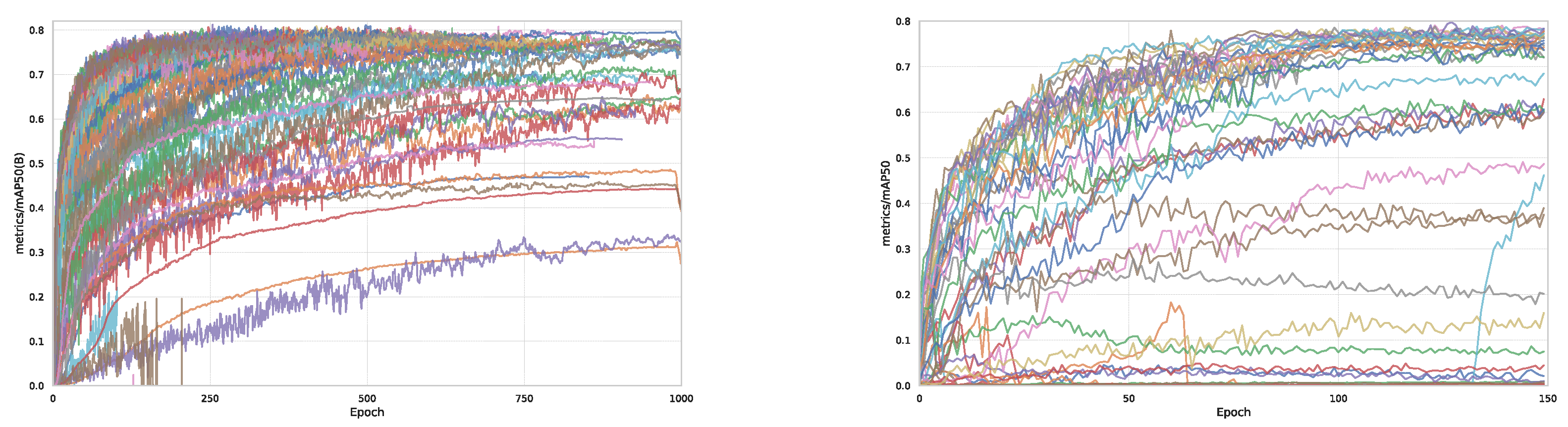

3.2.2. Implementation and Hyperparameter Optimisation

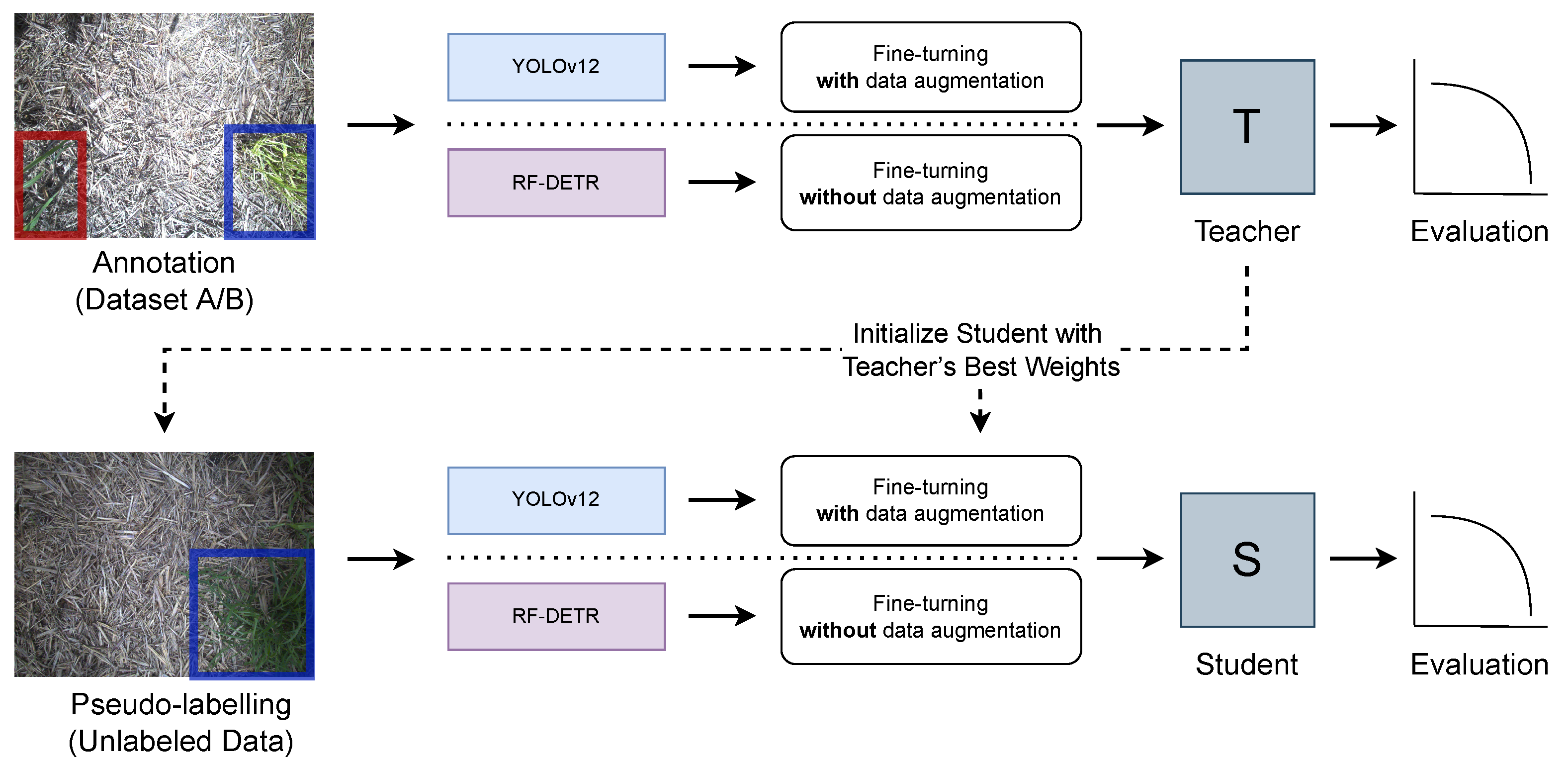

3.3. Semi-Supervised Learning Pipeline

- Train Teacher: A teacher model, $f_T$, is first trained exclusively on the labelled set $D_L$.

- Generate Pseudo-Labels: The teacher model is used to predict bounding boxes and class labels, $\hat{y}_j$, for each unlabelled image $x_j \in D_U$. Predictions were filtered using class-specific confidence thresholds: a single uniform threshold for YOLOv12-s, and a mixed strategy (separate thresholds for Guinea Grass and for sugarcane) for RF-DETR. Bounding boxes below a minimum pixel size were removed. This yielded approximately 8200 and 6500 pseudo-labelled images for YOLO and RF-DETR, respectively, from the unlabelled pool.

- Train Student: A final student model, $f_S$, is then trained on the combined dataset $D_L \cup D_U$. Its training objective is a weighted combination of the supervised loss on labelled data and the pseudo-supervised loss on unlabelled data:

  $$\mathcal{L} = \sum_{(x_i, y_i) \in D_L} \mathcal{L}_{det}\big(f_S(x_i), y_i\big) + \lambda \sum_{x_j \in D_U} \mathbb{1}\big[\mathrm{conf}(\hat{y}_j) > c\big]\, \mathcal{L}_{det}\big(f_S(x_j), \hat{y}_j\big)$$

  where $\mathcal{L}_{det}$ is the standard detection loss (e.g., from YOLO or DETR), $\lambda$ is a weighting hyperparameter, and $\mathbb{1}[\cdot]$ is an indicator function that includes an unlabelled sample only if its predicted confidence exceeds the threshold $c$. The weighting hyperparameter $\lambda$ was held fixed in all experiments at a value that prioritises the supervised loss. No EMA decay was used, as our single-pass pipeline employs a fixed teacher.
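
The filtering and weighting steps above can be sketched in a few lines of framework-free Python. This is a minimal illustration under stated assumptions, not the training code used in this work: the `Prediction` container, the function names, the reading of "minimum size" as the shorter box side, and every numeric value (thresholds, minimum side, weighting factor) are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    cls: str      # predicted class name
    conf: float   # teacher confidence in [0, 1]
    box: tuple    # (x_min, y_min, x_max, y_max) in pixels


def filter_pseudo_labels(preds, thresholds, min_side):
    """Keep teacher predictions that pass the class-specific confidence
    threshold and whose shorter side is at least `min_side` pixels."""
    kept = []
    for p in preds:
        width = p.box[2] - p.box[0]
        height = p.box[3] - p.box[1]
        if p.conf > thresholds[p.cls] and min(width, height) >= min_side:
            kept.append(p)
    return kept


def combined_loss(sup_losses, pseudo_losses, pseudo_confs, lam, c):
    """Supervised loss plus lam times the pseudo-supervised loss, counting
    only unlabelled samples whose confidence exceeds c."""
    supervised = sum(sup_losses)
    pseudo = sum(l for l, conf in zip(pseudo_losses, pseudo_confs) if conf > c)
    return supervised + lam * pseudo


# Placeholder values for illustration only (not the values used in the paper).
thresholds = {"guinea_grass": 0.5, "sugarcane": 0.7}
teacher_preds = [
    Prediction("guinea_grass", 0.62, (10, 10, 80, 90)),   # kept
    Prediction("sugarcane", 0.62, (100, 40, 300, 260)),   # below class threshold
    Prediction("sugarcane", 0.95, (5, 5, 9, 60)),         # too narrow
]
kept = filter_pseudo_labels(teacher_preds, thresholds, min_side=10)
loss = combined_loss([1.0, 2.0], [4.0, 6.0], [0.9, 0.3], lam=0.5, c=0.5)
print(len(kept), loss)
```

In a real pipeline the per-sample losses would come from the detector's own loss function; plain floats are used here only to make the indicator and the weighting visible.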

3.4. Evaluation Metrics

4. Results

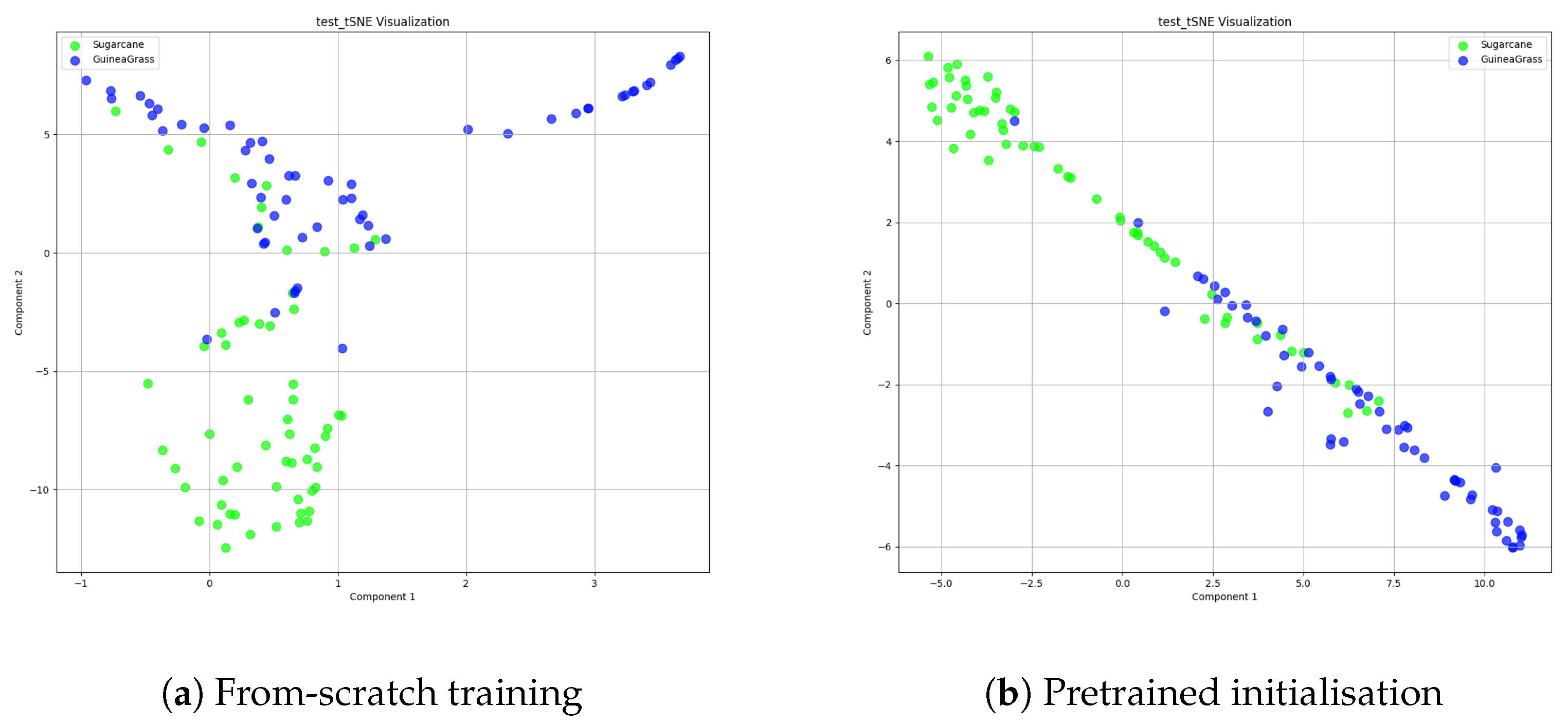

4.1. Fully Supervised Classification

4.1.1. Analysis of Model Behaviour

4.1.2. Diagnostic Finding: “Shadow Bias”

4.2. Semi-Supervised Classification Results

4.3. Object Detection Performance

4.3.1. Fully Supervised Detection Baselines

4.3.2. Semi-Supervised Detection Enhancements

4.3.3. Qualitative Analysis and Public Benchmark Validation

5. Discussion

5.1. From Classification to Detection: The Necessity of Spatial Awareness

5.2. Architectural Comparison: The Value of Specialisation vs. Potential of Transformers

5.3. The Practical Impact of Semi-Supervised Learning

5.4. Limitations

- Proprietary Dataset: Our field-collected dataset is not publicly available, limiting direct reproducibility. Validation on the public CropAndWeed benchmark partially mitigates this concern.

- Single-Pass SSL: We employed a single-pass pseudo-labelling strategy for computational efficiency, which may underperform more advanced iterative or consistency-based SSL methods.

- Unequal Architecture Tuning: Our RF-DETR models were not subjected to the same degree of augmentation and tuning as our YOLO models, meaning performance differences between architectures should be interpreted cautiously.

- Temporal and Environmental Scope: The dataset was collected at a single geographic location during a specific growth stage. Plant morphology, canopy density, and shadow patterns vary with phenological stage and seasonal conditions.

- Single Crop–Weed Pair: All primary experiments involve sugarcane and Guinea Grass only.

- No Statistical Significance Testing: Due to the computational cost of Optuna-based training, we do not report confidence intervals or multi-seed evaluations, though consistent improvements across architectures and benchmarks provide indirect robustness evidence.

- No Edge-Device Evaluation: Inference speed and power consumption on deployment hardware were not assessed.

5.5. Future Directions

- Creating and releasing a large-scale, high-density public benchmark for crop–weed detection in complex field conditions.

- Investigating advanced SSL techniques such as iterative teacher–student loops, consistency regularisation, and domain adaptation.

- Conducting a more exhaustive hyperparameter search for Transformer-based detectors with domain-specific augmentation strategies.

- Performing cross-season, cross-location, and multi-species validation to establish temporal and geographic robustness.

- Evaluating model quantisation and pruning for edge deployment on agricultural robots.

- Reporting multi-seed evaluations with confidence intervals for formal statistical validation.

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Oerke, E.C. Crop losses to pests. J. Agric. Sci. 2006, 144, 31–43.

- Azghadi, M.R.; Olsen, A.; Wood, J.; Saleh, A.; Calvert, B.; Granshaw, T.; Fillols, E.; Philippa, B. Precision robotic spot-spraying: Reducing herbicide use and enhancing environmental outcomes in sugarcane. Comput. Electron. Agric. 2025, 235, 110365.

- Lammie, C.; Olsen, A.; Carrick, T.; Rahimi Azghadi, M. Low-power and high-speed deep FPGA inference engines for weed classification at the edge. IEEE Access 2019, 7, 51171–51184.

- Redmon, J.; Farhadi, A. YOLOv3: An incremental improvement. arXiv 2018, arXiv:1804.02767.

- Carion, N.; Massa, F.; Synnaeve, G.; Usunier, N.; Kirillov, A.; Zagoruyko, S. End-to-End Object Detection with Transformers. In Proceedings of the European Conference on Computer Vision (ECCV); LNCS; Springer: Berlin/Heidelberg, Germany, 2020; Volume 12346, pp. 213–229.

- Guo, Z.; Cai, D.; Zhou, Y.; Xu, T.; Yu, F. Identifying rice field weeds from unmanned aerial vehicle remote sensing imagery using deep learning. Plant Methods 2024, 20, 105.

- Zhao, Y.; Lv, W.; Xu, S.; Wei, J.; Wang, G.; Dang, Q.; Liu, Y.; Chen, J. DETRs Beat YOLOs on Real-time Object Detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 17–21 June 2024; IEEE: Piscataway, NJ, USA, 2024; pp. 16965–16974.

- Saleh, A.; Olsen, A.; Wood, J.; Philippa, B.; Azghadi, M.R. WeedCLR: Weed contrastive learning through visual representations with class-optimized loss in long-tailed datasets. Comput. Electron. Agric. 2024, 227, 109526.

- Saleh, A.; Olsen, A.; Wood, J.; Philippa, B.; Azghadi, M.R. FieldNet: Efficient real-time shadow removal for enhanced vision in field robotics. Expert Syst. Appl. 2025, 279, 127442.

- Dyrmann, M.; Karstoft, H.; Midtiby, H.S. Plant species classification using deep convolutional neural network. Biosyst. Eng. 2016, 151, 72–80.

- Van Engelen, J.; Hoos, H. A survey on semi-supervised learning. Mach. Learn. 2020, 109, 373–440.

- Pérez-Ortiz, M.; Peña, J.; Gutiérrez, P.; Torres-Sánchez, J.; Hervás-Martínez, C.; López-Granados, F. A semi-supervised system for weed mapping in sunflower crops using unmanned aerial vehicles and a crop row detection method. Appl. Soft Comput. 2015, 37, 533–544.

- Nong, C.; Fan, X.; Wang, J. Semi-supervised learning for weed and crop segmentation using UAV imagery. Front. Plant Sci. 2022, 13, 927368.

- Saleh, A.; Olsen, A.; Wood, J.; Philippa, B.; Azghadi, M.R. Semi-supervised weed detection for rapid deployment and enhanced efficiency. Comput. Electron. Agric. 2025, 236, 110410.

- Torres-Sánchez, J.; López-Granados, F.; Peña, J.M. An automatic object-based method for optimal thresholding in UAV images: Application for vegetation detection in herbaceous crops. Comput. Electron. Agric. 2015, 114, 43–52.

- Wang, A.; Zhang, W.; Wei, X. A review on weed detection using ground-based machine vision and image processing techniques. Comput. Electron. Agric. 2019, 158, 226–240.

- Hasan, A.S.M.M.; Sohel, F.; Diepeveen, D.; Laga, H.; Jones, M.G.K. A survey of deep learning techniques for weed detection from images. Comput. Electron. Agric. 2021, 184, 106067.

- Ferreira, A.; Freitas, D.; Silva, G.; Pistori, H.; Folhes, M. Weed detection in soybean crops using ConvNets. Comput. Electron. Agric. 2017, 143, 314–324.

- Olsen, A.; Konovalov, D.A.; Philippa, B.; Ridd, P.; Wood, J.C.; Johns, J.; Banks, W.; Girgenti, B.; Kenny, O.; Whinney, J.; et al. DeepWeeds: A multiclass weed species image dataset for deep learning. Sci. Rep. 2019, 9, 2058.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; IEEE: Piscataway, NJ, USA, 2016; pp. 770–778.

- Saleem, M.H.; Potgieter, J.; Arif, K.M. Weed detection by faster RCNN model: An enhanced anchor box approach. Agronomy 2022, 12, 1580.

- Dang, F.; Chen, D.; Lu, Y.; Li, Z. YOLOWeeds: A novel benchmark of YOLO object detectors for multi-class weed detection in cotton production systems. Comput. Electron. Agric. 2023, 205, 107655.

- Peng, H.; Li, Z.; Zhou, Z.; Shao, Y. Weed detection in paddy field using an improved RetinaNet network. Comput. Electron. Agric. 2022, 199, 107179.

- Xu, Y.; Ren, S.; Li, L.; Wei, P.; Deng, H.; Wang, A.; Rao, Y. SP-DETR: Superior point weak semi-supervised DETR with teacher–student paradigm for crop and weed detection. Comput. Electron. Agric. 2025, 239, 111130.

- Islam, T.; Sarker, T.T.; Ahmed, K.R.; Rankrape, C.B.; Gage, K. WeedVision: Multi-Stage Growth and Classification of Weeds using DETR and RetinaNet for Precision Agriculture. arXiv 2025, arXiv:2502.14890.

- Robicheaux, P.; Gallagher, J.; Nelson, J.; Robinson, I. RF-DETR: A SOTA Real-Time Object Detection Model. 2025. Available online: https://blog.roboflow.com/rf-detr/ (accessed on 20 March 2025).

- Liu, T.; Jin, X.; Zhang, L.; Wang, J.; Chen, Y.; Hu, C.; Yu, J. Semi-supervised learning and attention mechanism for weed detection in wheat. Crop Prot. 2023, 174, 106389.

- Shorewala, S.; Ashfaque, A.; Sidharth, R.; Verma, U. Weed Density and Distribution Estimation for Precision Agriculture Using Semi-Supervised Learning. IEEE Access 2021, 9, 27971–27986.

- Steininger, D.; Trondl, A.; Croonen, G.; Simon, J.; Widhalm, V. The CropAndWeed Dataset: A Multi-Modal Learning Approach for Efficient Crop and Weed Manipulation. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Waikoloa, HI, USA, 2–7 January 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 3729–3738.

- Akiba, T.; Sano, S.; Yanase, T.; Ohta, T.; Koyama, M. Optuna: A next-generation hyperparameter optimization framework. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, Anchorage, AK, USA, 4–8 August 2019; Association for Computing Machinery: New York, NY, USA, 2019; pp. 2623–2631.

| Paddock ID | Sugarcane | Guinea Grass |
|---|---|---|
| paddock_A1 | 3605 | 239 |
| paddock_A2 | 837 | 112 |
| Dataset A | 4442 | 351 |
| paddock_B1 | 170 | 29 |
| paddock_B2 | 336 | 252 |
| Dataset B | 506 | 281 |

| ID | Dataset(s) | Training Strategy | Val F1 | Test F1 |
|---|---|---|---|---|
| SC1 | A Only | From Scratch | 0.96 | 0.88 |
| SC2 | A + B | From Scratch | 0.86 | 0.88 |
| SC3 | A + B | SC2 as Pretrained Init | 0.86 | 0.89 |

| ID | Training Strategy | Val F1 | Test F1 |
|---|---|---|---|
| SC3 | Fully Supervised (Best) | 0.86 | 0.89 |
| SSC1 | Semi-Supervised (Student) | 0.85 | 0.90 |

| ID | Model | mAP@50 | mAP@50-95 | Precision | Recall |
|---|---|---|---|---|---|
| SD26 | YOLOv12-s | 0.807 | 0.543 | 0.804 | 0.771 |
| SD27 | RF-DETR | 0.777 | 0.513 | 0.777 | 0.664 |

| ID | Model | mAP@50 | mAP@50-95 | Precision | Recall |
|---|---|---|---|---|---|
| SSD8 | YOLOv12-s | 0.828 | 0.529 | 0.814 | 0.782 |
| SSD10 | RF-DETR | 0.785 | 0.507 | 0.785 | 0.675 |
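
To make the comparison between the two detection tables explicit, the short sketch below computes the per-metric change for each architecture. It assumes, as the ID prefixes and the section structure suggest, that the SD rows are the fully supervised baselines and the SSD rows are the corresponding semi-supervised students evaluated on the same test split; the variable names are illustrative.

```python
metrics = ["mAP@50", "mAP@50-95", "Precision", "Recall"]

# Values copied from the two detection tables (SD26/SD27 and SSD8/SSD10).
supervised = {
    "YOLOv12-s": [0.807, 0.543, 0.804, 0.771],
    "RF-DETR": [0.777, 0.513, 0.777, 0.664],
}
semi_supervised = {
    "YOLOv12-s": [0.828, 0.529, 0.814, 0.782],
    "RF-DETR": [0.785, 0.507, 0.785, 0.675],
}

# Absolute change per metric: semi-supervised minus supervised.
deltas = {
    model: {
        m: round(ssl - sup, 3)
        for m, sup, ssl in zip(metrics, supervised[model], semi_supervised[model])
    }
    for model in supervised
}

for model, d in deltas.items():
    print(model, d)
```

On these figures, both architectures gain in mAP@50, precision, and recall (recall rises by 0.011 for each), while mAP@50-95 falls slightly (by 0.014 for YOLOv12-s and 0.006 for RF-DETR), which suggests that pseudo-labels help the models find more objects but do not tighten box localisation.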

| Training Method | Labelled Data Used | mAP@50 |
|---|---|---|
| Supervised Baseline | 10% | 0.90 |
| Our SSD Pipeline | 10% (+90% unlabelled) | 0.91 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Saleh, A.; Hatano, S.; Rahimi Azghadi, M. Weed Detection in Challenging Field Conditions: A Semi-Supervised Framework for Overcoming Shadow Bias and Data Scarcity. Computers 2026, 15, 171. https://doi.org/10.3390/computers15030171
