1. Introduction

Iron deficiency is one of the most widespread nutritional deficiencies worldwide. Despite its recognition as a public health concern, there is a lack of reliable prevalence data globally and regionally [1]. Serum ferritin concentration, an indicator of body iron stores, is recommended by WHO to assess population iron status in the absence of inflammation [2], but it also increases during inflammation, independently of iron status. Thus, when inflammation is not taken into account, the prevalence of iron deficiency is underestimated in populations with a high burden of infections [3]. Iron deficiency in pregnancy is associated with adverse health outcomes for both mother and infant, such as maternal mortality, preterm birth, being born small for gestational age, and low birth weight [4]. Accurate assessment of iron deficiency is necessary to evaluate nutrition interventions in pregnancy and infancy.

Several methods have been proposed to assess iron status in the presence of inflammation. Scientists from the Biomarkers Reflecting Inflammation and Nutrition Determinants of Anemia (BRINDA) project recently proposed the use of linear regression methods to adjust ferritin concentration for the effects of inflammation [5]. Previously proposed methods to assess iron status are: (a) correction factors for ferritin concentration [2,6,7]; (b) exclusion of subjects with inflammation from the analysis; and (c) increased cut-off values for serum ferritin concentration to define iron deficiency. All of these methods rely on concurrent inflammatory markers. C-reactive protein (CRP) is an acute phase protein that increases rapidly after the onset of inflammation and declines rapidly after cessation of inflammatory stimuli. By contrast, α1-acid glycoprotein (AGP) increases and declines slowly with these events.

To our knowledge, although the regression correction method proposed by the BRINDA group has been tested in children and women of reproductive age, no data have been reported so far on prevalence estimates of iron deficiency in pregnancy using this method. In this paper, we re-analyzed two datasets of Kenyan pregnant women—urban and rural—to assess to what extent this newly developed regression method adjusting for inflammation will change the assessment of ferritin concentrations and the prevalence of iron deficiency in pregnancy.

2. Materials and Methods

Study design and population: We used data from studies that were conducted from 2011 to 2014 in two distinct regions in Kenya: a rural area (Kisumu County, Kisumu, Kenya; henceforth referred to as the Kisumu data) and an urban area (Nairobi County, Nairobi, Kenya; henceforth referred to as the Nairobi data). Plasmodium infection is highly endemic in the Kisumu study area but is uncommon in the Nairobi study area due to its high altitude (around 1,650–1,800 m above sea level). In Nairobi, Plasmodium infection is mostly imported from other regions. The main results of these studies have been published elsewhere [8,9].

Rural area (Kisumu) data: The prenatal iron and malaria (PIMAL) study was a randomized placebo-controlled trial to measure the effect of antenatal iron supplementation on maternal Plasmodium infection risk, and on maternal and neonatal outcomes at delivery and one month post-partum. For the current study, we used data from samples collected at baseline. The study was conducted in the administrative areas of Ojolla, Kanyawegi, Osiri, and Rota Sub-Locations, Kisumu County, Kisumu, Kenya. Pregnant women were eligible if they were aged 15–45 years, had a singleton pregnancy with a gestational age of 13–23 weeks (determined by obstetric ultrasonography), and had a hemoglobin concentration >90 g/L. Venous blood samples were collected in EDTA tubes. Plasma concentrations of ferritin, soluble transferrin receptor, transferrin, CRP, and AGP were assessed on a Beckman Coulter Unicel DxC 880i analyzer at Meander Medical Centre, Amersfoort, The Netherlands [9]. For CRP, data below the assay limit of detection (LOD) of 1 mg/L were censored and reported by the laboratory as imputed values at LOD/2 (i.e., 0.5 mg/L). Plasmodium infection was indicated by the presence in plasma of Plasmodium antigens (histidine-rich protein-2, which is specific for P. falciparum; or lactate dehydrogenase specific to either P. falciparum or to non-falciparum human Plasmodium species; Access Bio rapid dipstick test), or the presence in erythrocytes of P. falciparum-specific DNA, as determined by quantitative polymerase chain reaction.

Urban area (Nairobi) data: The MNS 2014 study was a survey to assess micronutrient status, nutritional knowledge, and dietary patterns among pregnant women in their second trimester of pregnancy who attended antenatal clinics at Aga Khan Hospital, St. Mary’s Hospital, and Mama Lucy Hospital in Nairobi County, Kenya [8]. The three hospitals were purposely chosen to represent urban women of high, medium, and low socio-economic status, respectively. Subjects were sampled consecutively and proportionately to the daily turnover of women in their second pregnancy trimester at each of these three facilities, until the sample size for each facility was attained. Experienced research staff were trained on study-specific procedures of data collection, specimen handling, and analysis. Venous blood was collected in EDTA tubes. Plasmodium infection tests were done on site using rapid diagnostic tests specific for P. falciparum (histidine-rich protein 2). Serum concentrations of ferritin, soluble transferrin receptor, CRP, and AGP were measured by a multiplex enzyme immunoassay sandwich method with fluorescence detection [10]. No limit of detection was reported for CRP.

Sample size requirements were calculated for the original purposes of each study and are not reported here because they are not relevant to the present article.

2.1. Ethics and Registration

The PIMAL study was approved by independent ethics committees from London School of Hygiene and Tropical Medicine, UK, and the Kenyatta National Hospital/University of Nairobi, Kenya. It was registered at Clinicaltrials.gov (identifier: NCT 01308112). For re-analysis of the Kisumu data for the current article, the authors obtained additional approval from the Kenyatta National Hospital/University of Nairobi Ethical Review Board. The MNS 2014 study was approved by the Kenya Medical Research Institute Scientific and Ethics Review Unit (KEMRI/CPHR/SERU/2769; www.kemri.org) and the Aga Khan University Research Ethics Committee (2014/REC-53). Written informed consent was obtained from all study subjects in both studies.

2.2. Statistical Analysis

The following data, collected in the second pregnancy trimester, were used for this article: Kisumu 2011–2013 data and Nairobi 2014 data. Statistical Package for Social Sciences (SPSS) software version 22 and SAS 9.4 software (SAS Institute, Cary, NC, USA) were used for data analysis.

As per recommendations by the BRINDA group, we used the Internal Regression Correction (IRC) approach [10] to adjust for inflammation using CRP and AGP. The IRC approach uses linear regression to adjust a biomarker by the concentration of CRP or AGP on a continuous scale and Plasmodium infection as a dichotomous variable. Ferritin concentration was log-transformed to normalize its distribution and to stabilize its variance, and concentrations of CRP and AGP were log-transformed under the assumption that this would linearize their relationship with the log-transformed ferritin concentration. Thus, the following regression equation was applied to adjust individual ferritin concentrations:

$$\ln(\text{ferritin}_{adj}) = \ln(\text{ferritin}_{unadj}) - \beta_1\left[\ln(\text{CRP}) - \ln(\text{CRP}_{ref})\right] - \beta_2\left[\ln(\text{AGP}) - \ln(\text{AGP}_{ref})\right] - \beta_3 \cdot \text{Plasmodium}$$

where the subscripts adj and unadj refer to adjusted and unadjusted ferritin concentrations, β1, β2, and β3 are the regression coefficients for CRP, AGP, and Plasmodium infection, respectively, and the subscript ref refers to reference values that are recommended under the assumption that ferritin concentrations increase only when these inflammatory markers exceed this threshold value [5,11]. For CRP, the internal reference values were 0.5 mg/L and 1.0 mg/L for Kisumu and Nairobi, respectively. For AGP, the internal reference values were 0.5 g/L and 0.3 g/L for Kisumu and Nairobi, respectively. Multicollinearity between log-transformed CRP and AGP (ln-CRP and ln-AGP) was assessed on the basis of tolerance (>0.1) to determine whether it was appropriate to include all variables in the model. Because the BRINDA group did not report estimates for the regression coefficients for their meta-regression of data from pregnant women, we estimated these coefficients separately for the Kisumu and the Nairobi studies. Estimates for the regression coefficients were exponentiated to express associations in the original units of measurement. Iron deficiency was determined by applying a cut-off of <15 μg/L [2] to inflammation-corrected ferritin concentrations.
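The regression correction described above can be illustrated with a minimal sketch. It is not the authors' SPSS or SAS code; the function names (`solve_linear_system`, `ols`, `irc_adjust`) are hypothetical, and it assumes that the CRP and AGP terms are applied only when the marker exceeds its reference value and that uninfected women serve as the reference for the Plasmodium term.

```python
import math


def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def ols(predictors, y):
    """Ordinary least squares via the normal equations (intercept added here)."""
    x = [[1.0] + list(row) for row in predictors]
    k = len(x[0])
    xtx = [[sum(r[i] * r[j] for r in x) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(x, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def irc_adjust(ferritin, crp, agp, infected, crp_ref, agp_ref):
    """Return adjusted ferritin values and the coefficients (b1, b2, b3).

    Assumption: adjustment for CRP or AGP is applied only above the
    reference value; infection status is coded 0 (absent) or 1 (present).
    """
    ln_ferritin = [math.log(f) for f in ferritin]
    predictors = [
        [math.log(c), math.log(a), float(p)]
        for c, a, p in zip(crp, agp, infected)
    ]
    _, b1, b2, b3 = ols(predictors, ln_ferritin)
    adjusted = []
    for f, c, a, p in zip(ferritin, crp, agp, infected):
        ln_adj = math.log(f)
        if c > crp_ref:
            ln_adj -= b1 * (math.log(c) - math.log(crp_ref))
        if a > agp_ref:
            ln_adj -= b2 * (math.log(a) - math.log(agp_ref))
        ln_adj -= b3 * p
        adjusted.append(math.exp(ln_adj))
    return adjusted, (b1, b2, b3)


# Made-up illustrative values, not study data.
demo_ferritin = [12.0, 25.0, 40.0, 90.0, 150.0, 35.0]
demo_crp = [0.5, 1.0, 2.0, 8.0, 20.0, 4.0]
demo_agp = [0.5, 0.8, 0.6, 1.5, 2.0, 0.9]
demo_infected = [0, 0, 1, 1, 0, 1]
demo_adjusted, demo_betas = irc_adjust(
    demo_ferritin, demo_crp, demo_agp, demo_infected, crp_ref=0.5, agp_ref=0.5
)
deficient = [value < 15.0 for value in demo_adjusted]
```

The last line applies the <15 μg/L cut-off to the adjusted values, mirroring the final step of the paragraph above.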

As per BRINDA recommendations, the lowest deciles of CRP and AGP were set as internal reference values to avoid over-adjustment for low levels of inflammation, and adjustments were restricted to ferritin concentrations that corresponded to the CRP or AGP exceeding their lowest decile. Specific internal reference values were obtained for each of the groups described above. This was done on the basis of all values for CRP and AGP, as was suggested by the BRINDA group. We considered, however, that regression over censored independent variables is likely to result in biased estimators for the regression coefficients. For this reason, we also conducted an analysis of the Kisumu dataset with exclusion of observations with CRP values below the LOD. Although this truncation leads to a reduced sample size, and thus to a loss of efficiency, it has the advantages that the method is simple and that regression coefficients will be consistent, i.e., with increasing sample size, the estimates will tend towards the true value [12].
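As a rough sketch of the two data-preparation steps just described, the snippet below derives an internal reference value as the upper bound of the lowest decile and drops observations censored below the LOD. The function names and the record layout (a dict with a `crp` key) are hypothetical, and the choice of the "inclusive" quantile method is an assumption; statistical packages differ in how they interpolate percentiles.

```python
import statistics


def lowest_decile_reference(values):
    """Upper bound of the lowest decile (10th percentile) of a marker."""
    return statistics.quantiles(values, n=10, method="inclusive")[0]


def drop_censored(records, lod=1.0):
    """Keep only records whose CRP is at or above the limit of detection."""
    return [record for record in records if record["crp"] >= lod]


# Made-up CRP values (mg/L); 0.5 represents values imputed at LOD/2.
demo_crp = [0.5, 0.5, 0.5, 1.2, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
demo_reference = lowest_decile_reference(demo_crp)
demo_truncated = drop_censored([{"crp": value} for value in demo_crp])
```

When a sizeable share of values is imputed at LOD/2, the lowest decile coincides with the imputed value, which is consistent with the 0.5 mg/L CRP reference reported for Kisumu.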

The categorical Correction Factor (CF) approach, as proposed by Thurnham et al. [6], uses arithmetic CFs that are derived from the following 4-group inflammation-adjustment model: (1) reference (CRP concentration ≤5 mg/L and AGP concentration ≤1 g/L); (2) incubation (CRP concentration >5 mg/L and AGP concentration ≤1 g/L); (3) early convalescence (CRP concentration >5 mg/L and AGP concentration >1 g/L); and (4) late convalescence (CRP concentration ≤5 mg/L and AGP concentration >1 g/L). In addition, CFs were derived by grouping those with inflammation or Plasmodium infection into 2 groups, in which CRP, AGP, or Plasmodium infection were used independently of each other. Internal Correction Factors (ICFs) were then generated by dividing geometric mean (GM) ferritin values of the non-inflammation group by GM ferritin values of each inflammation group:

$$\text{ICF} = \frac{\text{GM ferritin}_{ref}}{\text{GM ferritin}_{inflam}}$$

where ref and inflam denote the reference group and the inflammation group, respectively.

Subsequently, raw ferritin values in individuals in the groups with raised inflammatory markers were multiplied by the ICFs matching their respective inflammation group to arrive at adjusted ferritin values. In line with the IRC approach, ICFs were calculated for each of the groups described above. To compare ferritin concentrations between Kisumu and Nairobi, after excluding cases with inflammation, a t-test was utilized to test the log-normal ratio of the geometric means and obtain corresponding 95% CIs.
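The four-group correction-factor procedure can be sketched as follows. This is an illustrative reading of the description above, not the authors' code: the function names are hypothetical, and the sketch assumes that every group, including the reference group, contains at least one observation.

```python
import statistics


def inflammation_group(crp, agp):
    """Assign one of the four groups using CRP >5 mg/L and AGP >1 g/L."""
    raised_crp = crp > 5.0
    raised_agp = agp > 1.0
    if raised_crp and not raised_agp:
        return "incubation"
    if raised_crp and raised_agp:
        return "early convalescence"
    if raised_agp:
        return "late convalescence"
    return "reference"


def internal_correction_factors(ferritin, crp, agp):
    """ICF per group: GM ferritin of reference / GM ferritin of the group."""
    by_group = {}
    for f, c, a in zip(ferritin, crp, agp):
        by_group.setdefault(inflammation_group(c, a), []).append(f)
    gm_reference = statistics.geometric_mean(by_group["reference"])
    return {
        group: gm_reference / statistics.geometric_mean(values)
        for group, values in by_group.items()
    }


def icf_adjust(ferritin, crp, agp):
    """Multiply each raw ferritin value by the ICF of its own group."""
    factors = internal_correction_factors(ferritin, crp, agp)
    return [
        f * factors[inflammation_group(c, a)]
        for f, c, a in zip(ferritin, crp, agp)
    ]


# Made-up illustrative values, not study data.
demo_ferritin = [10.0, 40.0, 20.0, 80.0, 60.0, 30.0]
demo_crp = [1.0, 2.0, 10.0, 12.0, 20.0, 3.0]
demo_agp = [0.5, 0.8, 0.7, 0.9, 1.5, 1.4]
demo_factors = internal_correction_factors(demo_ferritin, demo_crp, demo_agp)
demo_adjusted = icf_adjust(demo_ferritin, demo_crp, demo_agp)
```

By construction the reference group has a factor of 1, so its ferritin values are left unchanged.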

In the “exclusion” approach, individuals with inflammation (as defined by a CRP concentration >5 mg/L or AGP concentration >1 g/L, or both) or with Plasmodium infection were excluded from the analysis. The estimated prevalence of iron deficiency was then calculated among those remaining. The method used to calculate 95% CIs of prevalence estimates was Wilson’s score interval.

For the increased ferritin concentration approach, we defined iron deficiency as ferritin concentrations <15 μg/L or <30 μg/L in individuals without or with inflammation (CRP concentration >5 mg/L or AGP concentration >1 g/L, or both), respectively. Wilson’s score interval was used to calculate corresponding 95% CIs.
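The higher cut-off rule amounts to a two-threshold classification, sketched below. The function names are hypothetical, and the sketch follows the definition of inflammation given in the preceding paragraph (CRP >5 mg/L or AGP >1 g/L, or both).

```python
def is_iron_deficient(ferritin, crp, agp):
    """Apply <30 ug/L with inflammation and <15 ug/L without it."""
    inflamed = crp > 5.0 or agp > 1.0
    cutoff = 30.0 if inflamed else 15.0
    return ferritin < cutoff


def prevalence(records):
    """Proportion classified as iron deficient; records are (ferritin, crp, agp)."""
    flags = [is_iron_deficient(f, c, a) for f, c, a in records]
    return sum(flags) / len(flags)


# Made-up illustrative values, not study data.
demo_records = [(20.0, 10.0, 0.5), (20.0, 1.0, 0.5), (14.0, 1.0, 0.5), (30.0, 10.0, 2.0)]
demo_prevalence = prevalence(demo_records)
```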

Lastly, we reported unadjusted prevalence estimates for iron deficiency. Again, 95% CIs were obtained with Wilson’s score interval.
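For readers unfamiliar with it, Wilson's score interval for a proportion can be computed as in the sketch below. This uses the standard formula without continuity correction; whether the authors' software applied a continuity correction is not stated, so that choice is an assumption, and the function name is hypothetical.

```python
import math
from statistics import NormalDist


def wilson_interval(successes, n, confidence=0.95):
    """Wilson score interval for a binomial proportion (no continuity correction)."""
    if n <= 0:
        raise ValueError("n must be positive")
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)
    p = successes / n
    denominator = 1.0 + z * z / n
    centre = (p + z * z / (2.0 * n)) / denominator
    half_width = z * math.sqrt(p * (1.0 - p) / n + z * z / (4.0 * n * n)) / denominator
    return centre - half_width, centre + half_width


# Made-up counts for illustration: 45 iron-deficient women out of 100.
demo_lower, demo_upper = wilson_interval(45, 100)
```

Unlike the simple normal-approximation interval, the Wilson interval stays within 0 and 1 and remains usable when the observed proportion is close to either bound.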

4. Discussion

We aimed to compare estimates of iron status of Kenyan pregnant women, with circulating ferritin concentrations adjusted for inflammation using newly proposed methods by the BRINDA project, or using previously proposed adjustment methods. Application of linear regression methods to adjust circulating ferritin concentration for inflammation led to markedly decreased point estimates for ferritin concentration and increased estimates for the prevalence of iron deficiency. We observed higher estimates of the prevalence of iron deficiency after correcting for inflammation using the BRINDA (IRC) regression method compared to other conventional approaches. This was similar to observations in non-pregnant populations [5].

All examined indicators of iron status were affected by inflammation. As expected, the geometric mean of ferritin was lowest in women without inflammation, probably because ferritin is a positive acute-phase protein, which is markedly elevated during states of inflammation [13]. Plasmodium infection was associated with ferritin even after controlling for CRP and AGP (Figure S2). The criterion to compare and evaluate models is not to judge on their ability to increase estimates of the prevalence of iron deficiency, but rather to what extent they improve model fit (i.e., the ability to predict values as close as possible to the ones observed). When comparing nested models, the difference in model fit is indicated by the change in R² and corresponding p-value. For models that differ in the presence or absence of a single explanatory variable, the p-value for the change in R² is identical to the p-value that corresponds to the regression coefficient for that explanatory variable (in this case, Plasmodium infection). This is the reason why we believe that a model with CRP/AGP/Plasmodium is better than a similar model that excludes Plasmodium. Ignoring censored data resulted in substantial bias in the estimates of iron deficiency.
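The equivalence of the two p-values mentioned above is a general property of ordinary least squares rather than a feature of these data. As a sketch, write n for the number of observations and k for the number of explanatory variables in the full model (here k = 3: CRP, AGP, and Plasmodium infection); this notation is ours and is not used elsewhere in the article. The F statistic for the change in R² when one variable is added is then:

```latex
F = \frac{R^2_{\text{full}} - R^2_{\text{reduced}}}
         {\left(1 - R^2_{\text{full}}\right) / (n - k - 1)}
  = t_{\beta_3}^{2}
```

Because an F distribution with 1 and n − k − 1 degrees of freedom is the distribution of a squared t variable with n − k − 1 degrees of freedom, the p-value for the change in R² and the two-sided p-value for the coefficient of the added variable coincide.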

The correction of iron-status indicators for inflammation with the use of regression correction has been found to substantially change estimates of iron deficiency prevalence in both low and high infection burden settings [

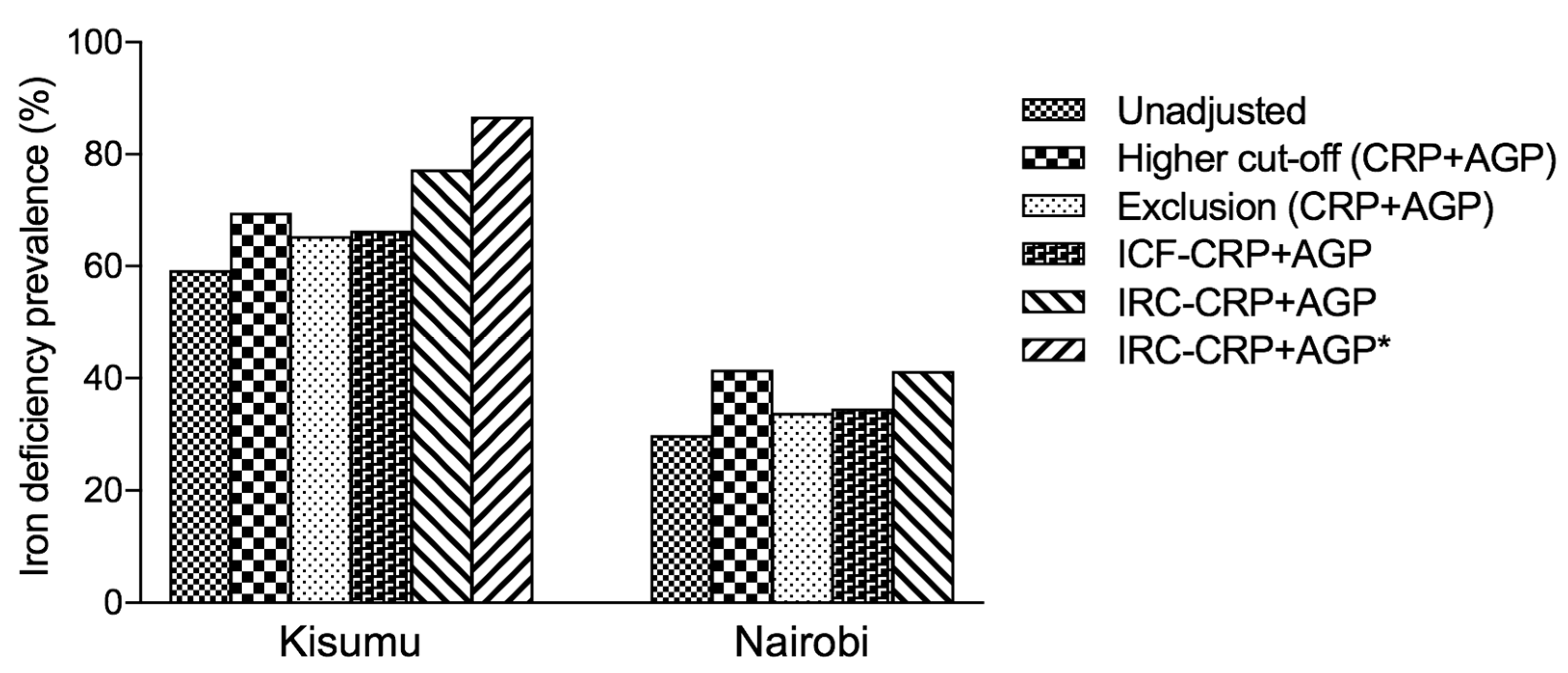

14]. Using AGP, on its own or in combination with CRP, led to higher prevalence estimates of iron deficiency (

Figure 1 and

Table 3). In our analysis, although the prevalence of iron deficiency was much higher after using the ICF approach, exclusion approach [

5] or higher cut-off compared to the unadjusted estimates, we found the prevalence of iron deficiency to be highest after using the IRC-CRP+AGP approach and was highest in Kisumu. However, for the exclusion approach, the greatest absolute change in the estimated prevalence of iron deficiency in subjects with CRP ≤ 5 mg/L was observed in pregnant women from Kisumu. A similar observation in the same population was made in subjects with AGP ≤ 1 g/L. Overall, the prevalence of iron deficiency increased in both Kisumu and Nairobi groups after using the

IRC approach to adjust for CRP or AGP.

Iron status indicators changed at low concentrations of CRP and AGP. This observation may suggest that continuous correction using a regression correction approach may better account for the full range and severity of inflammation than would the exclusion or correction-factor approaches that rely on dichotomous cutoffs to define inflammation [14]. The higher ferritin cut-off approach performed quite well with CRP in comparison with the IRC approach, though the latter was more consistent in its performance, meaning that it always gave the highest prevalence estimates of iron deficiency. In similar resource-poor, high-infection burden regions, CRP alone may be sufficient to adjust for inflammation when using the IRC approach, but there is value in using AGP whenever possible, as it does lead to higher estimates of the prevalence of iron deficiency. In addition, the exclusion approach led to a decrease in precision and may have introduced bias. The ICF approach as outlined by Thurnham et al. [6] led to lower estimates of iron deficiency prevalence than the IRC approach. We postulate that the ICF approach categories as currently defined may result in lower estimates of iron deficiency. For example, elevated CRP and AGP are now labelled as early convalescence but they also coexist in chronic infections such as Plasmodium infection. “Convalescence” suggests that a person is recovering from illness, but asymptomatic infections can also cause elevated CRP or AGP concentrations [6].

Iron deficiency anemia (IDA) in pregnancy is associated with adverse health outcomes for both mother and infant, such as maternal mortality, preterm birth, being born small for gestational age, and low birth weight, even if robust evidence is still lacking [15]. Assessing iron status in pregnant women is challenging for several reasons, which probably explains why only a limited number of studies are available. In addition to the classical pitfalls of measuring iron biomarkers, the increase in plasma volume during pregnancy dilutes blood markers such as hemoglobin and serum ferritin (SF), so that specific cut-offs need to be developed for this population. In addition, hepcidin, the master regulator of iron absorption, is suppressed during the last two trimesters of healthy pregnancies to permit both an increase in dietary iron absorption and mobilization of iron stores, even if a high level of inflammation may still induce hepcidin release during pregnancy [16]. Finally, inflammatory status fluctuates during pregnancy, being pro-inflammatory during the first and third trimesters and anti-inflammatory during the second trimester, which could influence SF concentrations. The analyses we have conducted here were based on women in their second trimester, so extrapolation to the first or third trimester of pregnancy is questionable.

To the best of our knowledge, this is the first attempt at an estimation of the prevalence of iron deficiency during pregnancy using the regression correction method proposed by the BRINDA group.

In rural Kenya, based on the Kenya National Micronutrient Survey of 2011, the prevalence of iron deficiency among pregnant women was 45.6% [17]. Compared to the new estimates based on the BRINDA IRC-CRP+AGP method (range: 70.0%–86.7%), this represents an underestimation of the prevalence of iron deficiency by 24.4 to 41.1 percentage points. The Kenya Demographic and Health Survey of 2014 indicated that only 8% of women aged 15–49 years with a live birth in the last five years received iron supplements for the recommended 90 days or more [18]. To prevent anemia and other pregnancy-related complications, there is an urgent need to scale up the development and monitoring of public health programs to improve the iron status of pregnant women. It is highly likely that similar trends in the prevalence of iron deficiency are the norm rather than the exception in most low and middle-income countries. The prevalence of iron deficiency is not matched by comprehensive and effective public health mitigation programs.

The current analysis used two comprehensive datasets on pregnant women from two diverse settings, rural and urban. When analyzing trial data, an important question (concerning the BRINDA method) is whether beta coefficients should be calculated within each intervention group, or whether they should be pooled for all groups. The source population of our datasets was quite unique in terms of inflammation and prevalence of Plasmodium infection. As such, specific correction factors, beta coefficients, and reference values were obtained for Kisumu and Nairobi separately, in order to take into account the differences that may exist between these groups. This increases the external validity of the findings and their general applicability. Furthermore, the datasets have a large sample size and contain high-quality laboratory analyses. The analysis also draws from primary data from two studies, each of which used a sampling scheme that was representative. Both datasets are comparable in that they applied similar laboratory methods for measuring the biomarkers of interest, focused on the second trimester of pregnancy, and were cross-sectional in their nature, but designed with a specific aim to look at iron deficiency prevalence in pregnancy. This comparability provides for a generalizable interpretation of the findings.

We have presented results of analyses of data from two markedly different populations (rural versus urban). Our data were selected on the basis of convenience (i.e., the availability of two datasets on pregnant women), and were cross-sectional in nature. Because the PIMAL study was a randomized controlled trial with a placebo arm, we applied the hemoglobin cut-off of >90 g/L to avoid recruiting women who would need medical intervention into the placebo arm of the trial. The application of this exclusion criterion may mean that the revised estimates of iron deficiency in Kisumu underestimate the true prevalence. We did not have a gold-standard measure of iron status to compare against (for example, bone marrow iron). Because of this, it is not clear whether CRP and AGP completely explain the relationship between ferritin and inflammation or, alternatively, whether the adjustment approaches over-adjust ferritin concentrations on the basis of a third unknown confounder. A comparison of adjustment approaches against a reference standard and the use of longitudinal data would further contribute to the evidence [19]. In the absence of such a reference standard, the linear regression method is probably the best method currently available. This observation shows the need to conduct micronutrient surveys in a harmonized fashion and the need to undertake longitudinal studies [11].