Cross-Layer Feature Fusion and Attention-Based Class Feature Alignment Network for Unsupervised Cross-Domain Remote Sensing Scene Classification

Highlights

- Global distribution alignment alone is insufficient for cross-domain remote sensing scene classification; class-level feature misalignment is a critical yet overlooked factor limiting unsupervised domain adaptation performance.

- The proposed cross-layer feature fusion and attention-based architecture significantly enhances scene representation learning and enables effective class-aware feature alignment across domains.

- Cross-domain adaptation in remote sensing should move beyond global feature distribution alignment and explicitly model class-level structures, as neglecting class-aware alignment can fundamentally limit generalization performance.

- Effective cross-domain scene classification requires joint optimization of multi-layer semantic representation and class-aware alignment, suggesting that future unsupervised domain adaptation architectures should integrate cross-layer feature fusion and adaptive attention mechanisms rather than relying on shallow feature matching.

Abstract

1. Introduction

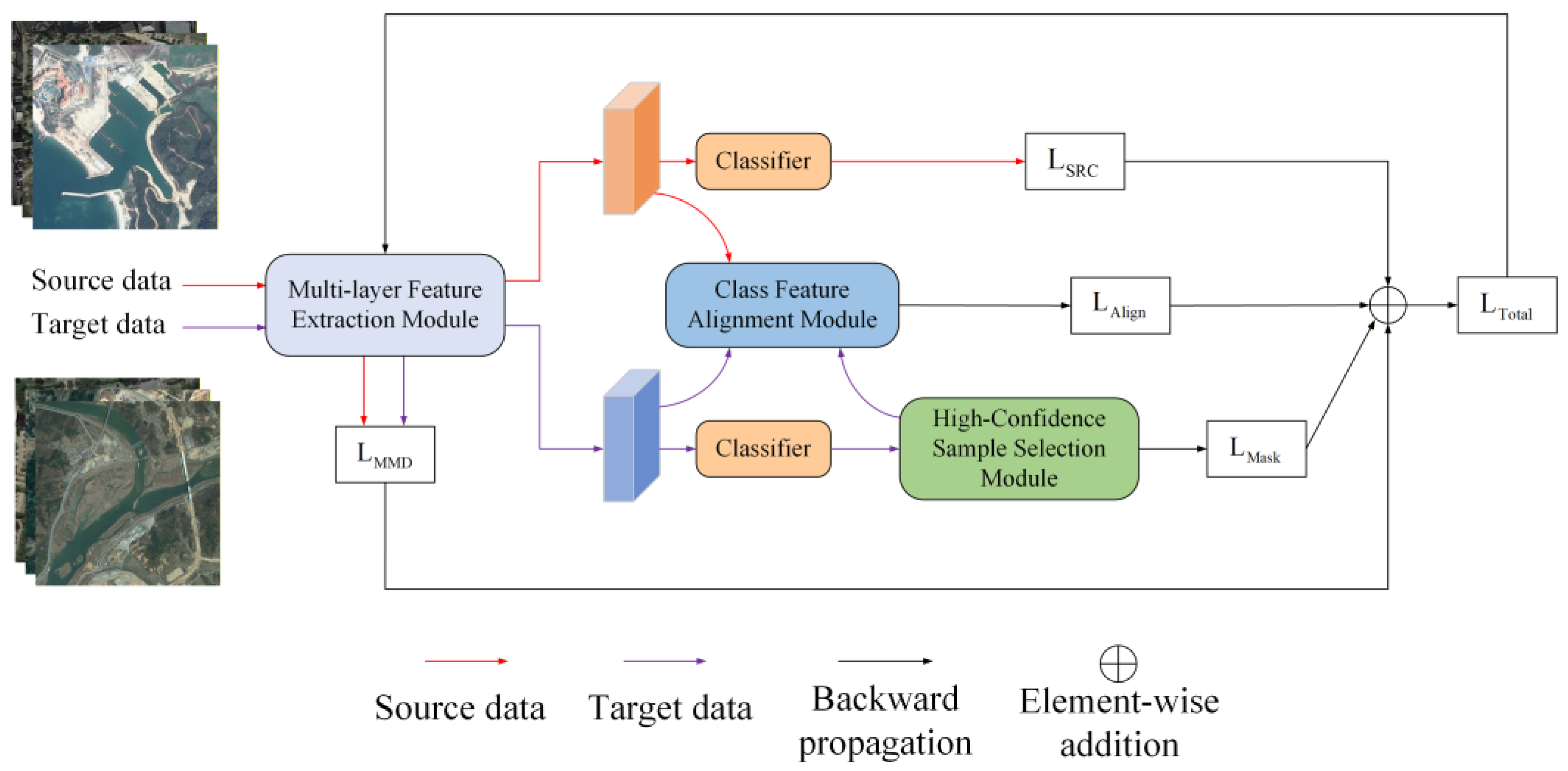

- The multi-layer feature extraction module (MFEM) consists of a CFFM, an MSDAM, and an FFOM. The CFFM explores the contextual correlations among different shallow features, the MSDAM enhances the key information in each feature layer, and the FFOM optimizes the final aggregated features to remove the redundant information caused by their semantic differences.

- A high-confidence sample selection module is introduced. It integrates evidence theory and information entropy to select target-domain samples, so that the pseudo-labels assigned to the selected high-confidence samples are reliable.

- A class feature alignment module based on a two-stage training strategy is proposed. Each stage uses its own memory bank mechanism to align features of the same class between the source and target domains, thereby improving cross-domain classification performance.

- Extensive cross-domain classification experiments on three datasets demonstrate the effectiveness of CFACA-NET.
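The high-confidence sample selection idea in the list above can be illustrated with a minimal sketch. It is not the authors' implementation: the evidence function (ReLU of the logits), the subjective-logic uncertainty `u = K / sum(alpha)`, both thresholds, and all function names are assumptions chosen for illustration. A target sample is kept only when its normalized prediction entropy and its evidential uncertainty are both low.

```python
import numpy as np


def softmax(logits):
    """Row-wise softmax with a max-shift for numerical stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def normalized_entropy(probs, eps=1e-12):
    """Shannon entropy of each row, scaled to [0, 1] by dividing by log(K)."""
    num_classes = probs.shape[1]
    entropy = -(probs * np.log(probs + eps)).sum(axis=1)
    return entropy / np.log(num_classes)


def evidential_uncertainty(logits):
    """Subjective-logic uncertainty u = K / sum(alpha) with alpha = evidence + 1.

    Assumption: evidence is ReLU(logits); the paper may define it differently.
    """
    evidence = np.maximum(logits, 0.0)
    alpha = evidence + 1.0
    return logits.shape[1] / alpha.sum(axis=1)


def select_high_confidence(logits, entropy_max=0.3, uncertainty_max=0.5):
    """Return indices and pseudo-labels of samples that pass both tests.

    Both thresholds are hypothetical values, not taken from the paper.
    """
    probs = softmax(logits)
    keep = (normalized_entropy(probs) <= entropy_max) & (
        evidential_uncertainty(logits) <= uncertainty_max
    )
    idx = np.flatnonzero(keep)
    return idx, probs[idx].argmax(axis=1)


# Toy target-domain logits for three samples and three classes.
logits = np.array([
    [9.0, 0.5, 0.2],  # sharply peaked prediction
    [1.0, 0.9, 1.1],  # nearly flat prediction
    [0.1, 8.0, 0.3],  # sharply peaked prediction
])
idx, labels = select_high_confidence(logits)
print(idx, labels)
```

In this toy batch, the nearly flat second sample is rejected by the entropy test, while the two peaked samples are retained with their arg-max classes as pseudo-labels.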

2. Related Works

2.1. Unsupervised Domain Adaptation

2.2. Attention Mechanism in Domain Adaptation

3. Materials and Methods

3.1. Multi-Layer Feature Extraction Module

3.2. High-Confidence Sample Selection Module

3.3. Class Feature Alignment Module

3.4. Overall Objective Function

4. Results

4.1. Cross-Domain Scene Classification Dataset

4.2. Experimental Setup

4.3. Comparison Experiments and Result Analysis

5. Discussion

5.1. Ablation Study

- (1) Net-0: Backbone.

- (2) Net-1: Backbone + CFFM.

- (3) Net-2: Backbone + CFFM + MSDAM.

- (4) Net-3: Backbone + CFFM + MSDAM + FFOM.

- (1) Loss-1: .

- (2) Loss-2: .

- (3) Loss-3: .

- (4) Loss-4: .

- (5) Loss-5: .

5.2. Hyperparameter Sensitivity Analysis

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Method | U→A | U→N | A→U | A→N | N→U | N→A | AA |
|---|---|---|---|---|---|---|---|
| DDC | 72.10 | 67.24 | 74.36 | 82.78 | 76.54 | 85.12 | 76.36 |
| JAN | 73.53 | 66.74 | 75.78 | 83.42 | 77.49 | 85.61 | 77.09 |
| DeepCORAL | 74.61 | 66.50 | 76.50 | 84.50 | 79.38 | 86.91 | 78.07 |
| BNM | 71.13 | 69.13 | 79.50 | 88.63 | 71.75 | 89.43 | 78.26 |
| MRAN | 73.26 | 68.48 | 76.53 | 86.71 | 77.64 | 88.62 | 78.54 |
| CDAN | 67.80 | 66.59 | 77.63 | 90.32 | 76.75 | 93.16 | 78.71 |
| AMRAN | 74.08 | 68.09 | 75.50 | 86.80 | 78.50 | 89.43 | 78.73 |
| DSAN | 74.65 | 74.86 | 73.88 | 87.05 | 78.25 | 88.83 | 79.58 |
| DeepMEDA | 75.18 | 75.84 | 73.75 | 89.70 | 76.63 | 89.08 | 80.03 |
| DATSNET | 76.26 | 73.89 | 82.57 | 87.76 | 88.13 | 94.23 | 83.81 |
| ADA-DDA | 77.78 | 74.76 | 87.50 | 90.70 | 89.63 | 91.98 | 85.39 |
| FCAN | 85.68 | 79.83 | 89.45 | 92.31 | 91.68 | 93.47 | 88.74 |
| SAMRA | 87.34 | 84.36 | 91.36 | 93.02 | 92.84 | 94.12 | 90.51 |
| SFMDA | 89.65 | 88.92 | 93.89 | 94.75 | 96.13 | 95.66 | 93.17 |
| EUDA | 91.77 | 90.86 | 94.00 | 95.23 | 97.88 | 96.03 | 94.30 |
| CFACA-NET | 92.94 | 91.64 | 94.62 | 95.75 | 98.75 | 96.35 | 95.01 |
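The AA column in the comparison table above appears to be the arithmetic mean of the six cross-domain task accuracies. That reading is an assumption, but it can be checked with a short calculation. The sketch below copies three rows from the table and compares the recomputed mean with the reported AA; the helper name `average_accuracy` is hypothetical.

```python
# Per-task accuracies (%) in the order U→A, U→N, A→U, A→N, N→U, N→A,
# followed by the AA value reported in the table.
rows = {
    "DDC": ([72.10, 67.24, 74.36, 82.78, 76.54, 85.12], 76.36),
    "SFMDA": ([89.65, 88.92, 93.89, 94.75, 96.13, 95.66], 93.17),
    "CFACA-NET": ([92.94, 91.64, 94.62, 95.75, 98.75, 96.35], 95.01),
}


def average_accuracy(task_accuracies):
    """Arithmetic mean over the cross-domain tasks (assumed meaning of AA)."""
    return sum(task_accuracies) / len(task_accuracies)


computed = {name: average_accuracy(accs) for name, (accs, _) in rows.items()}

for name, (_, reported) in rows.items():
    diff = abs(computed[name] - reported)
    print(f"{name}: computed {computed[name]:.4f}, reported {reported:.2f}, "
          f"difference {diff:.4f}")
```

For these three rows the recomputed mean agrees with the reported AA to within rounding at two decimal places.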

| Architecture | U→A | U→N | A→U | A→N | N→U | N→A | AA |
|---|---|---|---|---|---|---|---|
| MFEM with SE | 92.41 | 91.61 | 93.25 | 95.50 | 98.00 | 95.89 | 94.44 |
| MFEM with CBAM | 91.74 | 90.41 | 93.25 | 94.55 | 98.25 | 96.03 | 94.03 |
| MFEM with MSDAM | 92.94 | 91.64 | 94.62 | 95.75 | 98.75 | 96.35 | 95.01 |

| Method | Params (M) | GFLOPs | U→A | U→N | A→U | A→N | N→U | N→A | AA |
|---|---|---|---|---|---|---|---|---|---|
| Net-0 | 25.6 | 3.95 | 89.75 | 90.38 | 92.75 | 94.79 | 96.88 | 94.93 | 93.25 |
| Net-1 | 29.3 | 5.96 | 90.53 | 90.66 | 93.62 | 95.32 | 97.62 | 95.32 | 93.84 |
| Net-2 | 41.5 | 5.97 | 91.77 | 91.32 | 94.00 | 95.73 | 98.12 | 95.89 | 94.47 |
| Net-3 | 44.2 | 6.10 | 92.94 | 91.64 | 94.62 | 95.75 | 98.75 | 96.35 | 95.01 |
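To put the module ablation above in perspective, the cost of each variant can be expressed relative to the backbone (Net-0). The sketch below only rearranges numbers from the table; the dictionary layout and the helper `relative_increase` are illustrative assumptions.

```python
# Params (M), GFLOPs, and AA (%) copied from the ablation table above.
nets = {
    "Net-0": {"params_m": 25.6, "gflops": 3.95, "aa": 93.25},
    "Net-1": {"params_m": 29.3, "gflops": 5.96, "aa": 93.84},
    "Net-2": {"params_m": 41.5, "gflops": 5.97, "aa": 94.47},
    "Net-3": {"params_m": 44.2, "gflops": 6.10, "aa": 95.01},
}


def relative_increase(value, baseline):
    """Percentage increase of value over baseline."""
    return 100.0 * (value - baseline) / baseline


base = nets["Net-0"]
summary = {}
for name, stats in nets.items():
    summary[name] = {
        "params_pct": relative_increase(stats["params_m"], base["params_m"]),
        "gflops_pct": relative_increase(stats["gflops"], base["gflops"]),
        "aa_gain": stats["aa"] - base["aa"],  # gain in percentage points
    }
    s = summary[name]
    print(f"{name}: params +{s['params_pct']:.2f}%, "
          f"GFLOPs +{s['gflops_pct']:.2f}%, AA +{s['aa_gain']:.2f} points")
```

Under these figures, the full MFEM (Net-3) adds roughly 73% more parameters and 54% more GFLOPs than the backbone for an AA gain of 1.76 percentage points.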

| Method | U→A | U→N | A→U | A→N | N→U | N→A | AA |
|---|---|---|---|---|---|---|---|
| Loss-1 | 78.37 | 75.36 | 86.25 | 94.36 | 93.12 | 95.43 | 87.14 |
| Loss-2 | 86.45 | 80.45 | 89.88 | 95.07 | 94.62 | 94.57 | 90.17 |
| Loss-3 | 91.06 | 88.25 | 90.12 | 94.84 | 97.38 | 95.57 | 92.87 |
| Loss-4 | 89.89 | 89.52 | 91.50 | 95.68 | 98.00 | 95.89 | 94.06 |
| Loss-5 (ours) | 92.94 | 91.64 | 94.62 | 95.75 | 98.75 | 96.35 | 95.01 |

|  | U→A | U→N | A→U | A→N | N→U | N→A | AA |
|---|---|---|---|---|---|---|---|
| 0.2 | 89.93 | 89.48 | 91.50 | 95.45 | 96.00 | 95.32 | 92.94 |
| 0.3 | 91.95 | 90.00 | 91.88 | 95.54 | 97.88 | 94.65 | 93.65 |
| 0.4 | 92.30 | 90.68 | 92.38 | 95.14 | 97.25 | 96.38 | 94.02 |
| 0.5 | 92.94 | 91.64 | 94.62 | 95.75 | 98.75 | 96.35 | 95.01 |
| 0.6 | 92.87 | 92.29 | 93.38 | 95.59 | 98.38 | 96.10 | 94.77 |

|  |  | U→A | U→N | A→U | A→N | N→U | N→A | AA |
|---|---|---|---|---|---|---|---|---|
| 0.1 | 0.9 | 91.24 | 87.45 | 94.38 | 95.66 | 97.88 | 96.13 | 93.79 |
| 0.2 | 0.8 | 91.74 | 89.98 | 93.12 | 95.57 | 98.25 | 96.21 | 94.15 |
| 0.3 | 0.7 | 92.94 | 91.64 | 94.62 | 95.75 | 98.75 | 96.35 | 95.01 |
| 0.4 | 0.6 | 91.63 | 89.96 | 92.62 | 95.05 | 97.25 | 95.96 | 93.75 |
| 0.5 | 0.5 | 91.56 | 90.48 | 92.75 | 94.91 | 96.75 | 95.00 | 93.58 |

|  | Params (M) | U→A | U→N | A→U | A→N | N→U | N→A | AA |
|---|---|---|---|---|---|---|---|---|
| 8 | 37.6 | 92.16 | 90.95 | 94.03 | 95.12 | 97.82 | 95.61 | 94.28 |
| 16 | 40.7 | 92.53 | 91.27 | 94.21 | 95.36 | 98.56 | 95.82 | 94.63 |
| 32 | 44.2 | 92.94 | 91.64 | 94.62 | 95.75 | 98.75 | 96.35 | 95.01 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Wei, J.; Li, E.; Zhang, C. Cross-Layer Feature Fusion and Attention-Based Class Feature Alignment Network for Unsupervised Cross-Domain Remote Sensing Scene Classification. Remote Sens. 2026, 18, 859. https://doi.org/10.3390/rs18060859