STAIT: A Spatio-Temporal Alternating Iterative Transformer for Multi-Temporal Remote Sensing Image Cloud Removal

Highlights

- A novel Spatio-Temporal Alternating Iterative Transformer (STAIT) is proposed to explicitly model the dynamic dependencies in the multi-temporal remote sensing image cloud removal task.

- An efficient framework combining multi-level feature extraction and a weight-sharing decoder is designed to ensure high-quality, temporally consistent reconstruction.

- The method improves cloud removal accuracy over seven baselines in PSNR, SSIM, and SAM on both the STGAN and Sen2_MTC_New datasets, effectively restoring surface details obscured by thick clouds.

- It provides a robust and efficient solution for generating continuous remote sensing data, enhancing the reliability of Earth observation applications.

Abstract

1. Introduction

- We propose STAIT to explicitly model the inherent spatio-temporal correlations within multi-temporal data. STAIT alternates spatial and temporal attention iteratively, so that information is aggregated precisely from a global spatio-temporal context. This offers a more direct and effective way to understand and exploit the dynamic characteristics of remote sensing imagery.

- To address the difficulty of feature token extraction and the excessive model complexity arising from high-dimensional multi-temporal inputs, we devise an efficient feature token generator built on a group convolution-based multi-level structure. On the one hand, group convolutions reduce channel-wise computational redundancy and keep the model’s parameter count under control. On the other hand, the multi-level design captures feature tokens at various scales, strengthening the model’s representation ability.

- To ensure high temporal consistency in the final cloud-free result, we introduce a novel pixel reconstruction decoder where the parameters of the upsampling module are shared across all timesteps. This constraint is critical as it forces the model to learn a unified generation style, effectively eliminating stylistic and textural inconsistencies.
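The parameter saving from group convolutions mentioned above can be checked with simple arithmetic. The following is a minimal sketch, not the authors' generator: the function name `conv2d_param_count` and the layer sizes (64 input channels, 128 output channels, 3×3 kernel, 4 groups) are hypothetical values chosen only for illustration.

```python
def conv2d_param_count(in_channels, out_channels, kernel_size, groups=1, bias=True):
    """Count learnable parameters of a 2D convolution split into `groups` groups.

    Each output channel only sees in_channels / groups input channels,
    so the weight tensor shrinks by a factor of `groups`.
    """
    if in_channels % groups or out_channels % groups:
        raise ValueError("in_channels and out_channels must be divisible by groups")
    weights = (in_channels // groups) * out_channels * kernel_size * kernel_size
    return weights + (out_channels if bias else 0)


# Hypothetical layer sizes, for illustration only.
standard = conv2d_param_count(64, 128, 3, groups=1)
grouped = conv2d_param_count(64, 128, 3, groups=4)
print(standard, grouped)  # 73856 18560
```

With four groups the weight count drops by a factor of four, while the bias term is unchanged.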

2. Related Work

2.1. Multi-Temporal Remote Sensing Image Cloud Removal

2.2. Transformer in Remote Sensing Image

3. Methods

3.1. Problem Formulation and Motivation

3.2. Overall Framework

3.3. Feature Token Generator

3.4. Spatio-Temporal Alternating Iterative Transformer

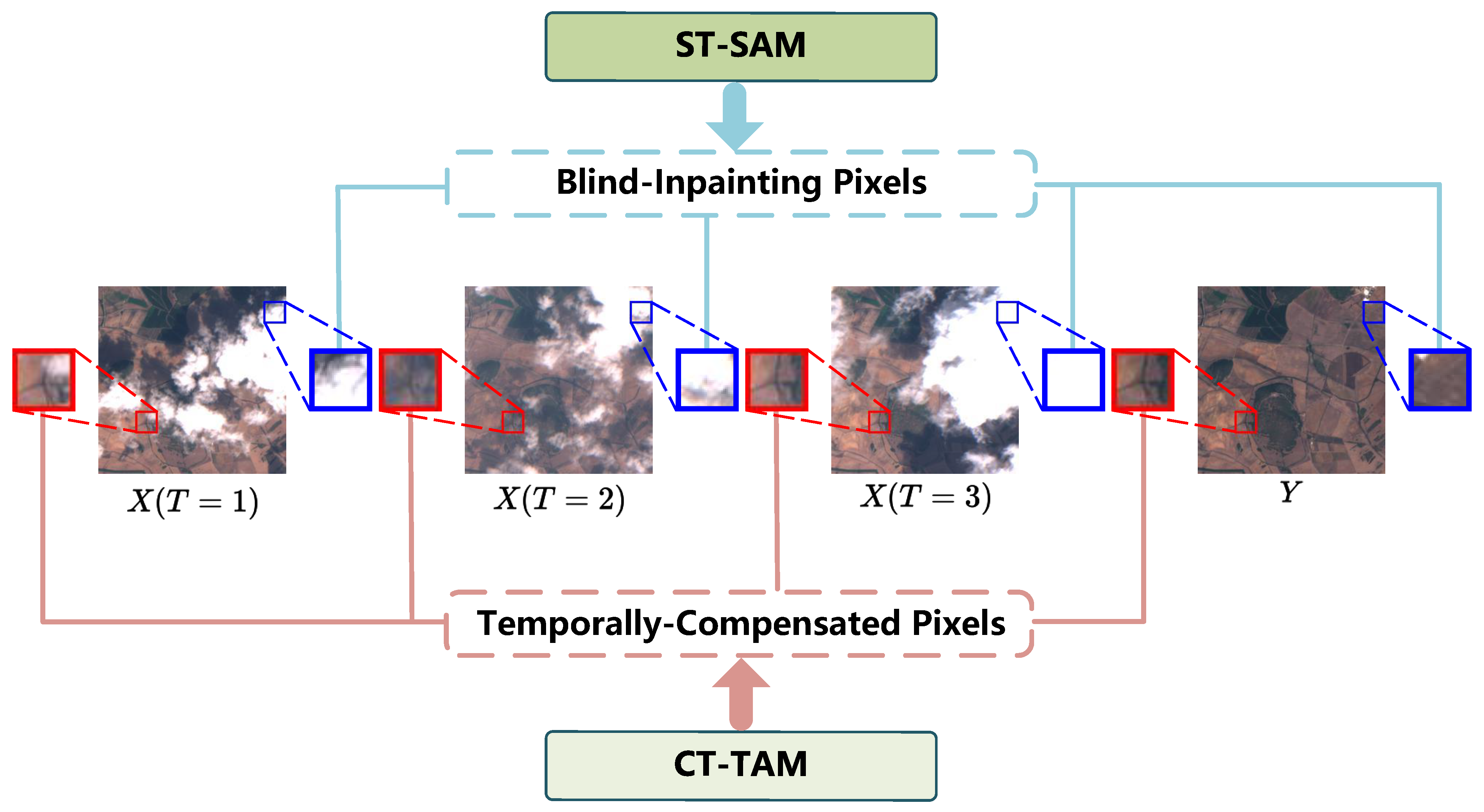

- ST-SAM Step: The primary function of this module is to learn correlations among different feature dimensions within a single timestep. By computing and aggregating this spatial contextual information, the module generates a spatially refined feature representation. This ensures that the network can comprehend and integrate the interactions between various tokens within each specific timestep. The process is described by the following equation:

- CT-TAM Step: Immediately afterwards, the ST-SAM output is passed to the Cross-Timestep Temporal Attention Module. This module focuses on capturing correlations between tokens at the same spatial location but across different timesteps. By mixing tokens from different time nodes, CT-TAM can identify and learn patterns and dependencies of feature evolution over time, thereby yielding a temporally refined feature representation. This process is also described by the following equation:

- Single-Timenode Spatial Attention Module. The primary function of ST-SAM is to enhance feature representations for the inpainting of degraded regions. It operates by exclusively leveraging the spatial dependencies among feature tokens within each individual time step. Given an input feature tensor, ST-SAM employs a shared self-attention mechanism to compute the internal feature similarities for each time node. This process enables the module to capture the contextual dependencies among spatial elements within a single time node, thereby optimizing the per-time-node feature representation prior to subsequent temporal processing. The process can be formulated as:

- Cross-Timenode Temporal Attention Module. CT-TAM is designed to capture correlations among feature tokens in the same spatial regions across different time nodes. Given the input feature tokens, we first partition the tokens of each time node into P distinct segments. Subsequently, for each segment index p, we concatenate the p-th segment from all time nodes to form a group of mixed tokens. This step, which we denote as token mixing, embodies the core mechanism of our approach. A self-attention mechanism is then applied to these mixed feature tokens, enabling the module to compute the cross-temporal relationships for that specific area. Finally, the inverse of the token mixing operation, named token demixing, is performed to restore the token representation to its original layout. The process can be formulated as:
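The alternation between ST-SAM and CT-TAM, together with token mixing and demixing, can be sketched in NumPy as follows. This is an illustrative sketch under stated assumptions, not the authors' implementation: the attention is parameter-free (learned query, key, and value projections, multiple heads, normalization, and feed-forward layers are omitted), the residual connections are assumed, each segment is taken to be a contiguous chunk of the token sequence, and all names (`token_mix`, `token_demix`, `st_sam`, `ct_tam`, `stait`, `num_segments`, `num_iters`) are hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(tokens):
    """Scaled dot-product self-attention with Q = K = V = tokens (no learned weights).

    tokens: (batch, n, d); attention is computed among the n tokens of each batch entry.
    """
    d = tokens.shape[-1]
    scores = tokens @ np.swapaxes(tokens, -1, -2) / np.sqrt(d)
    return softmax(scores, axis=-1) @ tokens


def token_mix(x, num_segments):
    """(T, N, D) -> (P, T * N/P, D): gather the p-th segment of every time node."""
    T, N, D = x.shape
    if N % num_segments:
        raise ValueError("N must be divisible by the number of segments")
    seg = N // num_segments
    x = x.reshape(T, num_segments, seg, D).transpose(1, 0, 2, 3)
    return x.reshape(num_segments, T * seg, D)


def token_demix(x, num_timesteps):
    """Inverse of token_mix: (P, T * N/P, D) -> (T, N, D)."""
    P, M, D = x.shape
    seg = M // num_timesteps
    x = x.reshape(P, num_timesteps, seg, D).transpose(1, 0, 2, 3)
    return x.reshape(num_timesteps, P * seg, D)


def st_sam(x):
    """Spatial attention among the tokens of each time node (residual assumed)."""
    return x + self_attention(x)


def ct_tam(x, num_segments):
    """Temporal attention over mixed tokens, followed by demixing (residual assumed)."""
    mixed = token_mix(x, num_segments)
    mixed = mixed + self_attention(mixed)
    return token_demix(mixed, x.shape[0])


def stait(x, num_segments, num_iters):
    """Alternate ST-SAM and CT-TAM for a fixed number of iterations."""
    for _ in range(num_iters):
        x = ct_tam(st_sam(x), num_segments)
    return x


# Toy input: 3 time nodes, 8 tokens per node, 4 channels (sizes are illustrative).
x = np.random.default_rng(0).standard_normal((3, 8, 4))
out = stait(x, num_segments=2, num_iters=4)
print(out.shape)  # (3, 8, 4)
```

Because `token_demix` exactly inverts `token_mix`, the temporal step leaves the token layout untouched, so the spatial and temporal modules can be stacked in any number of alternating iterations without reshaping in between.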

3.5. Pixel Reconstruction Decoder

3.6. Loss Function

4. Experiments

4.1. Datasets and Metrics

- STGAN is a multispectral dataset derived from the Sentinel-2 satellite. It contains 3130 image sequences from different geographical regions. Each sequence is composed of three cloudy images and a corresponding cloud-free reference image. All images include four spectral bands: Red, Green, Blue (RGB), and Near-Infrared (NIR). In our experiments, we adopted a random splitting strategy, partitioning the dataset into training, validation, and test sets at an 8:1:1 ratio.

- Sen2_MTC_New is structurally similar to STGAN, comprising a total of 3417 multispectral image sequences. Each sequence also consists of three cloudy images, one cloud-free reference image, and four spectral bands. To ensure a fair comparison with previous works, we strictly adhered to the standard partitioning scheme proposed in [40] to construct the training, validation, and test sets.
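The 8:1:1 random split described for STGAN can be sketched as below. This is a minimal illustration rather than the authors' script: the function name `split_indices` and the seed value are assumptions, since the text does not state how the randomness was controlled.

```python
import random


def split_indices(n_items, ratios=(8, 1, 1), seed=0):
    """Shuffle item indices and cut them into train/val/test by integer ratios."""
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)  # seed is a hypothetical choice
    total = sum(ratios)
    n_train = n_items * ratios[0] // total
    n_val = n_items * ratios[1] // total
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# 3130 is the number of STGAN sequences stated in the text.
train, val, test = split_indices(3130)
print(len(train), len(val), len(test))  # 2504 313 313
```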

4.2. Implementation Details

4.3. Experiments on STGAN Dataset

4.4. Experiments on Sen2_MTC_New Dataset

4.5. Efficiency Analysis

4.6. Analysis of Hyperparameters

4.7. Ablation Study on STAIT Module

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Stumpf, F.; Schneider, M.K.; Keller, A.; Mayr, A.; Rentschler, T.; Meuli, R.G.; Schaepman, M.; Liebisch, F. Spatial monitoring of grassland management using multi-temporal satellite imagery. Ecol. Indic. 2020, 113, 106201.

- Thürkow, F.; Lorenz, C.G.; Pause, M.; Birger, J. Advanced Detection of Invasive Neophytes in Agricultural Landscapes: A Multisensory and Multiscale Remote Sensing Approach. Remote Sens. 2024, 16, 500.

- Cao, B.; Kang, L.; Yang, S.; Tan, D.; Wen, X. Monitoring the Dynamic Changes in Urban Lakes Based on Multi-source Remote Sensing Images. In Communications in Computer and Information Science, Proceedings of the Geo-Informatics in Resource Management and Sustainable Ecosystem—Second International Conference, GRMSE 2014, Ypsilanti, MI, USA, 3–5 October 2014; Bian, F., Xie, Y., Eds.; Springer: Berlin/Heidelberg, Germany, 2014; Volume 482, pp. 68–78.

- Liu, J.; Zhang, Y.; Liu, C.; Liu, X. Monitoring Impervious Surface Area Dynamics in Urban Areas Using Sentinel-2 Data and Improved Deeplabv3+ Model: A Case Study of Jinan City, China. Remote Sens. 2023, 15, 1976.

- Gan, Z.; Guo, S.; Chen, C.; Zheng, H.; Hu, Y.; Su, H.; Wu, W. Tracking the 2D/3D Morphological Changes of Tidal Flats Using Time Series Remote Sensing Data in Northern China. Remote Sens. 2024, 16, 886.

- Derakhshan, S.; Cutter, S.L.; Wang, C. Remote Sensing Derived Indices for Tracking Urban Land Surface Change in Case of Earthquake Recovery. Remote Sens. 2020, 12, 895.

- He, S.; Hua, M.; Zhang, Y.; Du, X.; Zhang, F. Forward Modeling of Scattering Centers From Coated Target on Rough Ground for Remote Sensing Target Recognition Applications. IEEE Trans. Geosci. Remote Sens. 2024, 62, 2000617.

- Han, L.; Paoletti, M.E.; Tao, X.; Wu, Z.; Haut, J.M.; Li, P.; Pastor, R.; Plaza, A. Hash-Based Remote Sensing Image Retrieval. IEEE Trans. Geosci. Remote Sens. 2024, 62, 4411123.

- Hackstein, J.; Sumbul, G.; Clasen, K.N.; Demir, B. Exploring Masked Autoencoders for Sensor-Agnostic Image Retrieval in Remote Sensing. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5602914.

- Xu, S.; Ke, Q.; Peng, J.; Cao, X.; Zhao, Z. Pan-Denoising: Guided Hyperspectral Image Denoising via Weighted Represent Coefficient Total Variation. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5528714.

- Xu, S.; Cao, X.; Peng, J.; Ke, Q.; Ma, C.; Meng, D. Hyperspectral Image Denoising by Asymmetric Noise Modeling. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5545214.

- Xu, S.; Yu, C.; Peng, J.; Chen, S.; Cao, X.; Meng, D. Haar Nuclear Norms with Applications to Remote Sensing Imagery Restoration. IEEE Trans. Image Process. 2025, 34, 6879–6894.

- Xu, S.; Zhao, Z.; Bai, H.; Yu, C.; Peng, J.; Cao, X.; Meng, D. Hipandas: Hyperspectral image joint denoising and super-resolution by image fusion with the panchromatic image. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Honolulu, HI, USA, 19–24 October 2025; pp. 12002–12011.

- Lin, J.; Huang, T.; Zhao, X.; Chen, Y.; Zhang, Q.; Yuan, Q. Robust Thick Cloud Removal for Multitemporal Remote Sensing Images Using Coupled Tensor Factorization. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5406916.

- Chen, Y.; Weng, Q.; Tang, L.; Zhang, X.; Bilal, M.; Li, Q. Thick Clouds Removing From Multitemporal Landsat Images Using Spatiotemporal Neural Networks. IEEE Trans. Geosci. Remote Sens. 2022, 60, 4400214.

- Chen, Y.; Tang, L.; Yang, X.; Fan, R.; Bilal, M.; Li, Q. Thick Clouds Removal From Multitemporal ZY-3 Satellite Images Using Deep Learning. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2020, 13, 143–153.

- Li, L.; Huang, T.; Zheng, Y.; Zheng, W.; Lin, J.; Wu, G.; Zhao, X. Thick Cloud Removal for Multitemporal Remote Sensing Images: When Tensor Ring Decomposition Meets Gradient Domain Fidelity. IEEE Trans. Geosci. Remote Sens. 2023, 61, 5512414.

- Peng, H.; Huang, T.; Zhao, X.; Lin, J.; Wu, W.; Li, L. Deep Domain Fidelity and Low-Rank Tensor Ring Regularization for Thick Cloud Removal of Multitemporal Remote Sensing Images. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5409314.

- Zhang, Q.; Yuan, Q.; Li, Z.; Sun, F.; Zhang, L. Combined deep prior with low-rank tensor SVD for thick cloud removal in multitemporal images. ISPRS J. Photogramm. Remote Sens. 2021, 177, 161–173.

- Zheng, W.J.; Zhao, X.L.; Zheng, Y.B.; Lin, J.; Zhuang, L.; Huang, T.Z. Spatial-spectral-temporal connective tensor network decomposition for thick cloud removal. ISPRS J. Photogramm. Remote Sens. 2023, 199, 182–194.

- Xu, S.; Wang, J.; Wang, J. Fast Thick Cloud Removal for Multi-Temporal Remote Sensing Imagery via Representation Coefficient Total Variation. Remote Sens. 2024, 16, 152.

- Xu, S.; Peng, J.; Ji, T.; Cao, X.; Sun, K.; Fei, R.; Meng, D. Stacked Tucker Decomposition with Multi-Nonlinear Products for Remote Sensing Imagery Inpainting. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5533413.

- Xu, S.; Zhao, Z.; Cao, X.; Peng, J.; Zhao, X.; Meng, D.; Zhang, Y.; Timofte, R.; Gool, L.V. Parameterized Low-Rank Regularizer for High-Dimensional Visual Data. Int. J. Comput. Vis. 2025, 133, 8546–8569.

- Sarukkai, V.; Jain, A.; Uzkent, B.; Ermon, S. Cloud Removal in Satellite Images Using Spatiotemporal Generative Networks. In Proceedings of the IEEE Winter Conference on Applications of Computer Vision, WACV 2020, Snowmass, CO, USA, 1–5 March 2020; pp. 1785–1794.

- Hao, Y.; Jiang, W.; Liu, W.; Li, Y.; Liu, B. Selecting Information Fusion Generative Adversarial Network for Remote-Sensing Image Cloud Removal. IEEE Geosci. Remote Sens. Lett. 2023, 20, 6007605.

- Zhou, H.; Wang, Y.; Liu, W.; Tao, D.; Ma, W.; Liu, B. MSC-GAN: A Multistream Complementary Generative Adversarial Network with Grouping Learning for Multitemporal Cloud Removal. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5612014.

- Zou, X.; Li, K.; Xing, J.; Zhang, Y.; Wang, S.; Jin, L.; Tao, P. DiffCR: A Fast Conditional Diffusion Framework for Cloud Removal From Optical Satellite Images. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5612014.

- Zhao, X.; Jia, K. Cloud Removal in Remote Sensing Using Sequential-Based Diffusion Models. Remote Sens. 2023, 15, 2861.

- Jing, R.; Duan, F.; Lu, F.; Zhang, M.; Zhao, W. Denoising Diffusion Probabilistic Feature-Based Network for Cloud Removal in Sentinel-2 Imagery. Remote Sens. 2023, 15, 2217.

- Long, C.; Yang, J.; Guan, X.; Li, X. Thick Cloud Removal from Remote Sensing Images Using Double Shift Networks. In Proceedings of the 2021 IEEE International Geoscience and Remote Sensing Symposium IGARSS, Brussels, Belgium, 11–16 July 2021; pp. 2687–2690.

- Ebel, P.; Garnot, V.S.F.; Schmitt, M.; Wegner, J.D.; Zhu, X.X. UnCRtainTS: Uncertainty Quantification for Cloud Removal in Optical Satellite Time Series. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, CVPR 2023—Workshops, Vancouver, BC, Canada, 17–24 June 2023; pp. 2086–2096.

- Zi, Y.; Song, X.; Xie, F.; Jiang, Z. Thick Cloud Removal in Multitemporal Remote Sensing Images Using a Coarse-to-Fine Framework. IEEE Geosci. Remote Sens. Lett. 2024, 21, 6005605.

- Long, C.; Li, X.; Jing, Y.; Shen, H. Bishift Networks for Thick Cloud Removal with Multitemporal Remote Sensing Images. Int. J. Intell. Syst. 2023, 2023, 9953198.

- Liu, H.; Huang, B.; Cai, J. Thick Cloud Removal Under Land Cover Changes Using Multisource Satellite Imagery and a Spatiotemporal Attention Network. IEEE Trans. Geosci. Remote Sens. 2023, 61, 5601218.

- Li, Y.; Huang, Q.; Pei, X.; Jiao, L.; Shang, R. RADet: Refine Feature Pyramid Network and Multi-Layer Attention Network for Arbitrary-Oriented Object Detection of Remote Sensing Images. Remote Sens. 2020, 12, 389.

- Peng, D.; Zhang, Y.; Guan, H. End-to-End Change Detection for High Resolution Satellite Images Using Improved UNet++. Remote Sens. 2019, 11, 1382.

- He, N.; Fang, L.; Li, S.; Plaza, J.; Plaza, A. Skip-Connected Covariance Network for Remote Sensing Scene Classification. IEEE Trans. Neural Netw. Learn. Syst. 2020, 31, 1461–1474.

- Han, Q.; Zhi, X.; Hu, J.; Zhang, S.; Chen, W.; Huang, Y.; Jiang, S. StyleFormer: Spatial-Temporal Style Projecting Bidirectional Interactive Transformer for Change Detection. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5609016.

- Tang, X.; Li, M.; Ma, J.; Zhang, X.; Liu, F.; Jiao, L. EMTCAL: Efficient Multiscale Transformer and Cross-Level Attention Learning for Remote Sensing Scene Classification. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5626915.

- Huang, G.; Wu, P. CTGAN: Cloud Transformer Generative Adversarial Network. In Proceedings of the 2022 IEEE International Conference on Image Processing, ICIP 2022, Bordeaux, France, 16–19 October 2022; pp. 511–515.

- Wang, Z.; Bovik, A.C.; Sheikh, H.R.; Simoncelli, E.P. Image quality assessment: From error visibility to structural similarity. IEEE Trans. Image Process. 2004, 13, 600–612.

- Meraner, A.; Ebel, P.; Zhu, X.X.; Schmitt, M. Cloud removal in Sentinel-2 imagery using a deep residual neural network and SAR-optical data fusion. ISPRS J. Photogramm. Remote Sens. 2020, 166, 333–346.

- Ebel, P.; Xu, Y.; Schmitt, M.; Zhu, X. SEN12MS-CR-TS: A Remote Sensing Data Set for Multi-modal Multi-temporal Cloud Removal. arXiv 2022, arXiv:2201.09613.

- Zou, X.; Li, K.; Xing, J.; Tao, P.; Cui, Y. PMAA: A Progressive Multi-Scale Attention Autoencoder Model for High-Performance Cloud Removal from Multi-Temporal Satellite Imagery. In Proceedings of the European Conference on Artificial Intelligence; IOS Press: Amsterdam, The Netherlands, 2023; Volume 372, pp. 3165–3172.

| Methods | STGAN PSNR ↑ | STGAN SSIM ↑ | STGAN SAM ↓ | Sen2_MTC_New PSNR ↑ | Sen2_MTC_New SSIM ↑ | Sen2_MTC_New SAM ↓ |
|---|---|---|---|---|---|---|
| Median Filter | 10.495 | 0.441 | 7.756 | 9.485 | 0.404 | 11.658 |
| DSen2-CR | 25.559 | 0.788 | 3.801 | 17.417 | 0.576 | 7.378 |
| CTGAN | 25.211 | 0.776 | 4.079 | 18.308 | 0.609 | 6.857 |
| STGAN | 26.310 | 0.796 | 3.376 | 18.158 | 0.556 | 7.018 |
| CR-TS-Net | 26.309 | 0.800 | 3.346 | 18.597 | 0.616 | 6.892 |
| PMAA | 26.930 | 0.829 | 3.269 | 18.369 | 0.614 | 7.155 |
| UnCRtainTS | 27.244 | 0.821 | 3.112 | 18.770 | 0.631 | 6.528 |
| STAIT (Ours) | 27.340 | 0.831 | 2.949 | 18.868 | 0.640 | 6.392 |
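The PSNR and SAM columns in the comparison above follow standard definitions, which can be sketched as below. This is an assumption-laden illustration, not the authors' evaluation code: it assumes images scaled to [0, 1] (`data_range=1.0`), band-first arrays of shape (bands, H, W), and SAM reported in degrees. The function names are hypothetical, and SSIM is omitted for brevity.

```python
import numpy as np


def psnr(pred, target, data_range=1.0):
    """Peak signal-to-noise ratio in dB; data_range is the assumed max pixel value."""
    mse = np.mean((pred - target) ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(data_range ** 2 / mse))


def spectral_angle_deg(pred, target, eps=1e-8):
    """Mean spectral angle (degrees) between per-pixel band vectors.

    pred, target: arrays of shape (bands, H, W).
    """
    dot = np.sum(pred * target, axis=0)
    norms = np.linalg.norm(pred, axis=0) * np.linalg.norm(target, axis=0)
    cos = np.clip(dot / np.maximum(norms, eps), -1.0, 1.0)
    return float(np.degrees(np.arccos(cos)).mean())


# Toy check: a constant offset of 0.1 on a [0, 1] image gives MSE = 0.01.
target = np.full((4, 2, 2), 0.5)
pred = target + 0.1
print(round(psnr(pred, target), 3))  # 20.0
```

Higher PSNR is better, while a smaller spectral angle means the reconstructed spectrum points in nearly the same direction as the reference, which is why the table marks SAM with a downward arrow.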

Efficiency metrics (Params, FLOPs, Latency) and performance metrics (PSNR, SSIM, SAM):

| Methods | Params (M) | FLOPs (G) | Latency (ms) ↓ | PSNR ↑ | SSIM ↑ | SAM ↓ |
|---|---|---|---|---|---|---|
| DiffCR | 22.91 | 91.72 | 498.70 | 19.150 | 0.671 | 6.454 |
| STAIT-3 (Ours) | 7.68 | 118.75 | 6.55 | 18.880 | 0.653 | 6.324 |

Params, FLOPs, latency, and memory comparison:

| Methods | Params (M) | FLOPs (G) | Latency (ms) | Memory (MB) |
|---|---|---|---|---|
| DSen2-CR | 18.92 | 2478.73 | 44.77 | 333.39 |
| CTGAN | 642.92 | 1263.40 | 43.20 | 3319.50 |
| STGAN | 231.93 | 2186.51 | 34.25 | 1353.24 |
| CR-TS-Net | 38.39 | 15082.58 | 312.30 | 1575.43 |
| PMAA | 3.45 | 185.09 | 16.42 | 370.59 |
| UnCRtainTS | 0.56 | 167.10 | 38.90 | 785.32 |
| STAIT (Ours) | 7.68 | 118.81 | 6.68 | 574.44 |

Analysis of hyperparameter N (left four columns) and hyperparameter L (right four columns):

| N | PSNR ↑ | SSIM ↑ | SAM ↓ | L | PSNR ↑ | SSIM ↑ | SAM ↓ |
|---|---|---|---|---|---|---|---|
| 2 | 17.842 | 0.588 | 7.157 | 2 | 17.754 | 0.583 | 7.352 |
| 4 | 18.868 | 0.640 | 6.392 | 4 | 18.868 | 0.640 | 6.392 |
| 6 | 18.859 | 0.637 | 6.458 | 6 | 18.851 | 0.639 | 6.405 |
| 8 | 18.731 | 0.638 | 6.437 | 8 | 18.865 | 0.635 | 6.428 |

Ablation experiment:

| Exp | PSNR ↑ | SSIM ↑ | SAM ↓ |
|---|---|---|---|
| w/o STAIT | 17.535 | 0.541 | 7.662 |
| CT-TAM only | 18.112 | 0.577 | 7.395 |
| ST-SAM only | 17.631 | 0.538 | 7.928 |
| w/o Feature Token Generator | 18.331 | 0.614 | 6.883 |
| w/o Shared Decoder | 18.742 | 0.632 | 6.487 |
| Ours | 18.868 | 0.640 | 6.392 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Cui, Y.; Zhang, J.; Bai, H.; Zhao, Z.; Deng, L.; Xu, S.; Zhang, C. STAIT: A Spatio-Temporal Alternating Iterative Transformer for Multi-Temporal Remote Sensing Image Cloud Removal. Remote Sens. 2026, 18, 596. https://doi.org/10.3390/rs18040596
