DeltaVLM: Interactive Remote Sensing Image Change Analysis via Instruction-Guided Difference Perception

Highlights

- A novel interactive paradigm that supports multi-turn, instruction-guided exploration of changes in bi-temporal remote sensing images.

- We present ChangeChat-105k, a large-scale instruction-following dataset of 105K+ query–response pairs across six change analysis tasks, and DeltaVLM, a tailored vision language model.

- Enables natural-language monitoring of dynamic Earth processes for applications such as urban planning, environmental monitoring, and disaster response.

- Provides the first benchmark and architecture for interactive change interpretation in remote sensing, connecting visual detection with human-centered reasoning.

Abstract

1. Introduction

- We introduce RSICA, a novel task that integrates change captioning and VQA into a unified, interactive, and user-driven framework for analyzing bi-temporal RSIs.

- We present ChangeChat-105k, a large-scale RS instruction-following dataset covering diverse change-related tasks including captioning, classification, counting, localization, open-ended QA, and multi-turn dialog.

- We propose DeltaVLM, an innovative VLM architecture tailored to RSICA, which integrates a bi-temporal vision encoder, a visual difference perception module with CSRM, and an instruction-guided Q-former for dynamic, context-aware multi-task and multi-turn interactions.

- We conduct comprehensive evaluations showing that DeltaVLM outperforms existing VLM baselines on most metrics across the RSICA subtasks and is competitive with specialized RS change captioning models, validating its effectiveness in addressing complex change analysis challenges.

2. Related Work

2.1. Task-Specific Methods for Change Analysis

2.1.1. Change Detection

2.1.2. Change Captioning

2.2. Interactive Analysis via VQA

2.3. Unified VLMs

2.3.1. General-Purpose VLMs

2.3.2. VLMs for RS

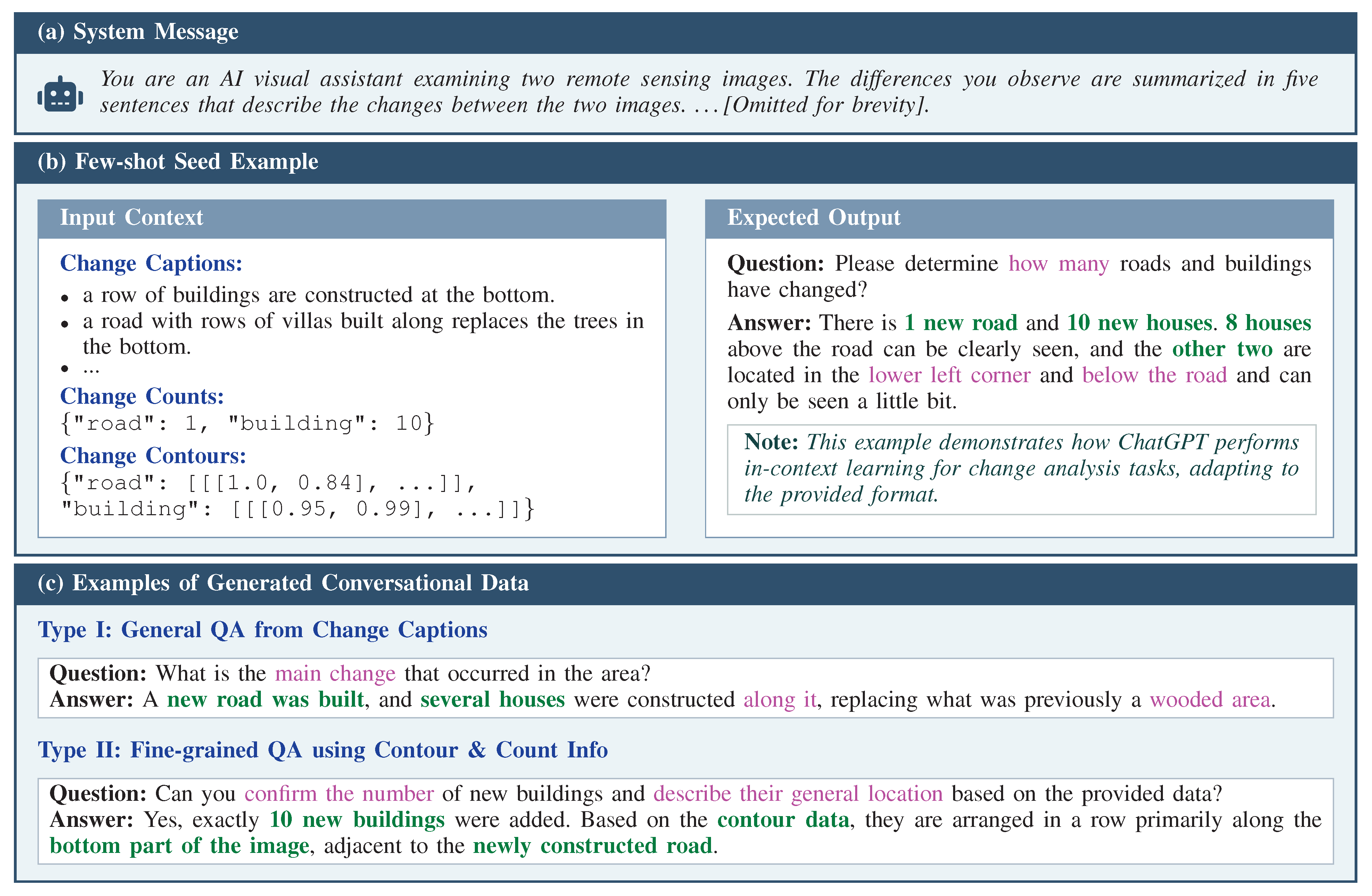

3. ChangeChat-105k Dataset

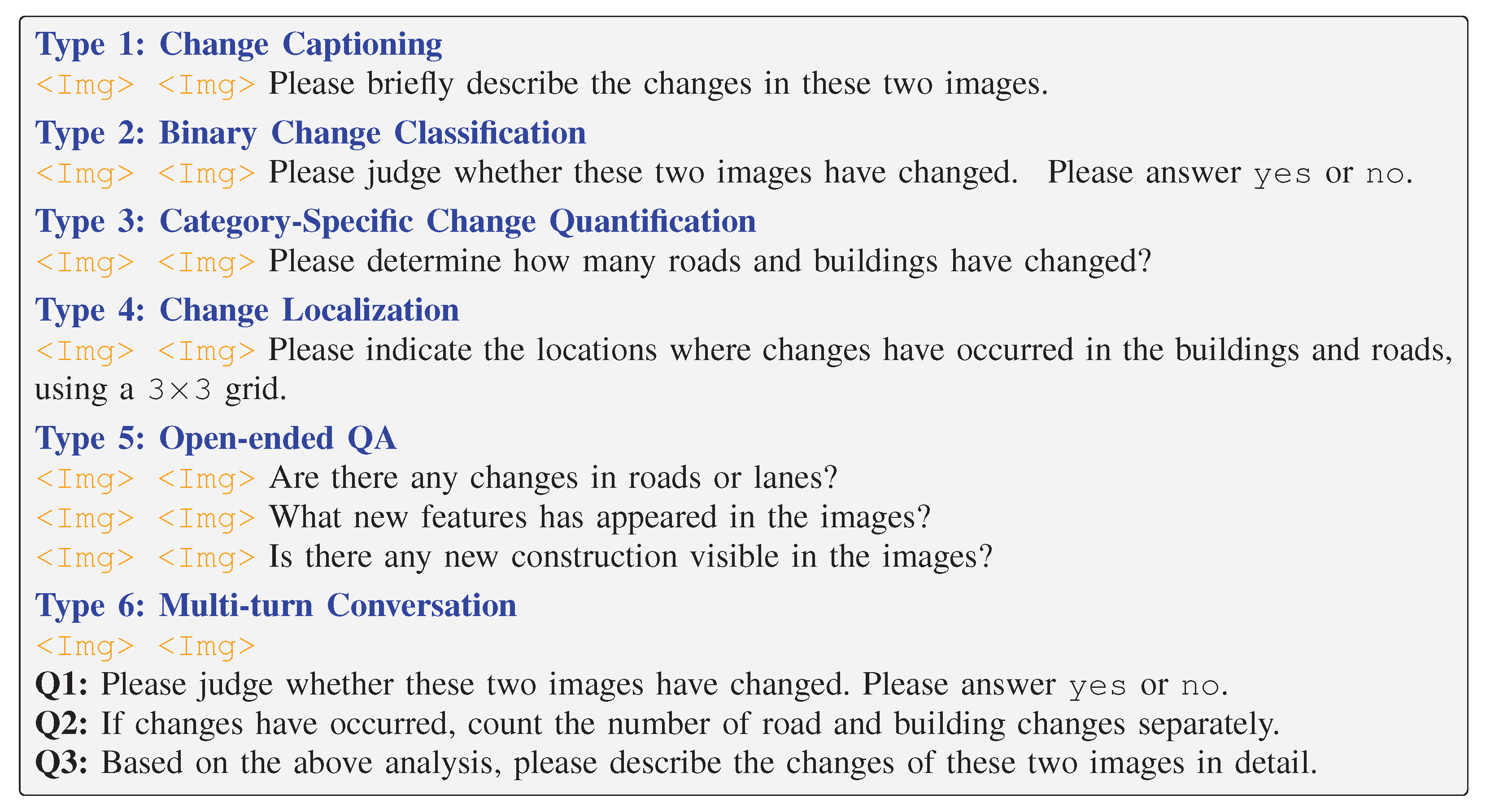

3.1. Change Captioning

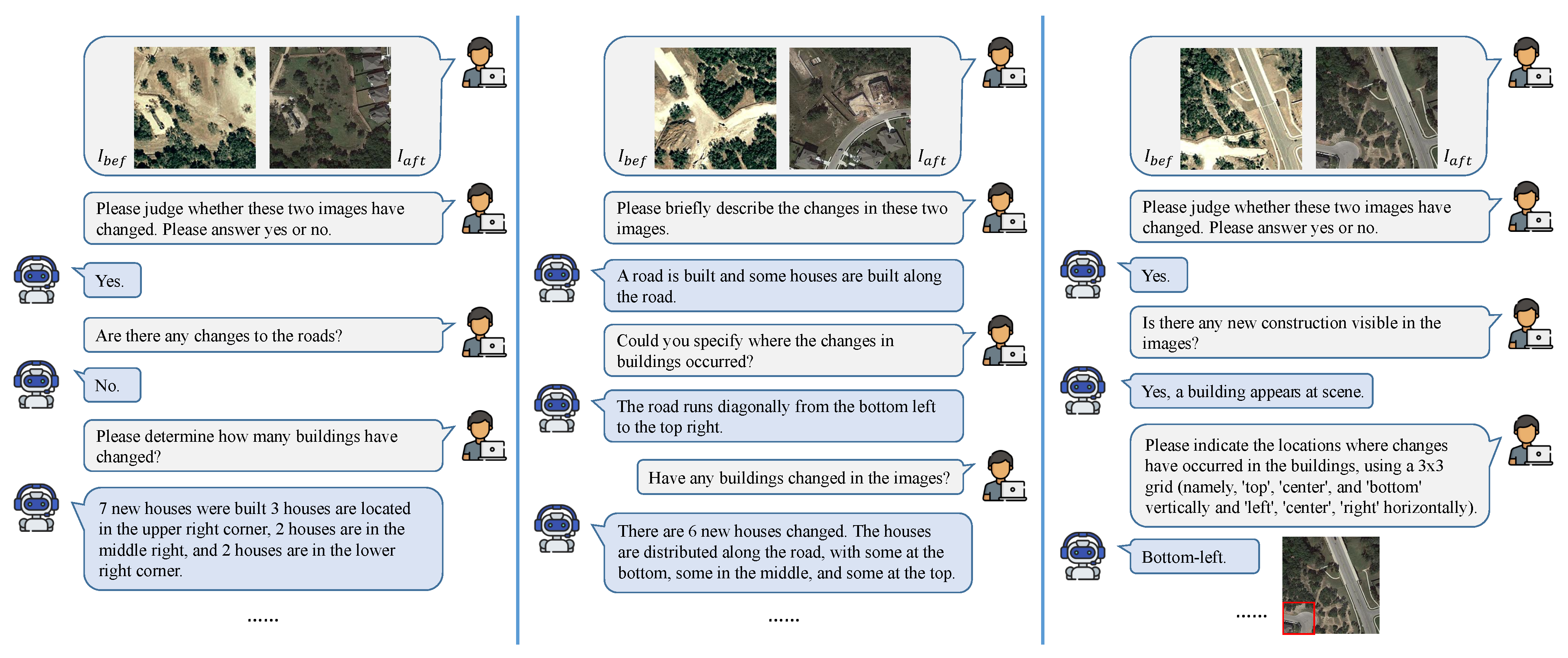

3.2. Binary Change Classification

3.3. Category-Specific Change Quantification

3.4. Change Localization

3.5. Open-Ended QA

3.6. Multi-Turn Conversation

4. Proposed Method

4.1. Overview

4.2. Bi-Temporal Vision Encoding

4.3. Instruction-Guided Difference Perception

4.3.1. Cross-Semantic Relation Measuring

4.3.2. Q-Former for Cross-Modal Alignment

4.4. LLM-Based Language Decoder

4.5. Training Objective

5. Results

5.1. Experimental Setup

5.1.1. Dataset

5.1.2. Implementation Details

5.1.3. Evaluation Metrics

- Binary Change Classification: We use accuracy, precision, recall, and the F1-score to measure the classification performance. The F1-score balances precision and recall, so a model cannot score well by always predicting only one of the change/no-change classes.

- Category-Specific Change Quantification: We use the mean absolute error (MAE) and root mean squared error (RMSE) to evaluate the counting accuracy, with the MAE capturing the average deviation and the RMSE penalizing larger errors.

- Change Localization: The change localization task requires the model to return the location of each change in a grid format, which makes it a multi-class classification problem. We use precision, recall, the F1-score, and the overall accuracy to evaluate the localization quality. Precision, recall, and the F1-score are computed using micro-averaging across all images.
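
The metrics listed above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the evaluation code used in the paper: the function names are hypothetical, "change" is assumed to be the positive class, and each image's localization answer is assumed to be a set of grid-cell labels (the `"A1"`-style labels are invented for the example). The overall accuracy for localization is omitted because its exact definition is not given in this section.

```python
import math
from typing import Dict, Sequence, Set, Tuple


def prf(tp: int, fp: int, fn: int) -> Dict[str, float]:
    """Precision, recall and F1 from raw counts (0.0 when a ratio is undefined)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Binary change classification; 1 = change (assumed positive class), 0 = no change."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {**prf(tp, fp, fn), "accuracy": (tp + tn) / len(pairs)}


def counting_errors(true_counts: Sequence[float],
                    pred_counts: Sequence[float]) -> Tuple[float, float]:
    """MAE and RMSE between predicted and reference object counts."""
    errors = [p - t for t, p in zip(true_counts, pred_counts)]
    mae = sum(abs(e) for e in errors) / len(errors)
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return mae, rmse


def micro_localization_metrics(true_cells: Sequence[Set[str]],
                               pred_cells: Sequence[Set[str]]) -> Dict[str, float]:
    """Micro-averaging: pool TP/FP/FN over all images, then compute P/R/F1 once."""
    tp = fp = fn = 0
    for truth, pred in zip(true_cells, pred_cells):
        tp += len(truth & pred)   # cells correctly reported as changed
        fp += len(pred - truth)   # cells reported but not changed
        fn += len(truth - pred)   # changed cells that were missed
    return prf(tp, fp, fn)


if __name__ == "__main__":
    print(binary_metrics([1, 1, 0, 0], [1, 0, 0, 1]))
    # One large miss out of four: the RMSE (2.0) exceeds the MAE (1.0).
    print(counting_errors([2, 2, 2, 2], [2, 2, 2, 6]))
    print(micro_localization_metrics([{"A1", "A2"}, {"B1"}], [{"A1"}, {"B1", "C3"}]))
```

In the counting example, a single error of four objects among otherwise exact predictions gives an MAE of 1.0 but an RMSE of 2.0, which is the sense in which the RMSE penalizes larger errors.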

5.2. Comparison with Baselines

5.2.1. Change Captioning

5.2.2. Binary Change Classification

5.2.3. Category-Specific Change Quantification

5.2.4. Change Localization

5.2.5. Open-Ended QA

5.2.6. Qualitative Analysis

6. Discussion

6.1. Ablation Analysis

6.2. Model Scale and Efficiency Analysis

6.3. Reliability of LLM-Generated Annotations

6.4. Limitations

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| RS | Remote Sensing |
| RSIs | Remote Sensing Images |
| VQA | Visual Question Answering |
| VLM | Vision Language Model |
| LLM | Large Language Model |
| RSICA | Remote Sensing Image Change Analysis |
| Bi-VE | Bi-Temporal Vision Encoder |
| IDPM | Instruction-Guided Difference Perception Module |
| CSRM | Cross-Semantic Relation Measuring |

References

1. Van Westen, C. Remote sensing for natural disaster management. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2000, 33, 1609–1617.

2. Chowdhury, R.R. Driving forces of tropical deforestation: The role of remote sensing and spatial models. Singap. J. Trop. Geogr. 2006, 27, 82–101.

3. Navalgund, R.R.; Jayaraman, V.; Roy, P. Remote sensing applications: An overview. Curr. Sci. 2007, 93, 1747–1766.

4. Bannari, A.; Morin, D.; Bénié, G.; Bonn, F. A theoretical review of different mathematical models of geometric corrections applied to remote sensing images. Remote Sens. Rev. 1995, 13, 27–47.

5. Ding, L.; Hong, D.; Zhao, M.; Chen, H.; Li, C.; Deng, J.; Yokoya, N.; Bruzzone, L.; Chanussot, J. A Survey of Sample-Efficient Deep Learning for Change Detection in Remote Sensing: Tasks, strategies, and challenges. IEEE Geosci. Remote Sens. Mag. 2025, 13, 164–189.

6. Qu, B.; Li, X.; Tao, D.; Lu, X. Deep semantic understanding of high resolution remote sensing image. In Proceedings of the 2016 International Conference on Computer, Information and Telecommunication Systems (CITS), Kunming, China, 6–8 July 2016; IEEE: Piscataway, NJ, USA, 2016; pp. 1–5.

7. Lobry, S.; Marcos, D.; Murray, J.; Tuia, D. RSVQA: Visual question answering for remote sensing data. IEEE Trans. Geosci. Remote Sens. 2020, 58, 8555–8566.

8. Liu, C.; Zhao, R.; Chen, H.; Zou, Z.; Shi, Z. Remote sensing image change captioning with dual-branch transformers: A new method and a large scale dataset. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5633520.

9. Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; Sutskever, I. Language models are unsupervised multitask learners. OpenAI Blog 2019, 1, 9.

10. Lu, J.; Batra, D.; Parikh, D.; Lee, S. ViLBERT: Pretraining Task-Agnostic Visiolinguistic Representations for Vision-and-Language Tasks. In Proceedings of the Advances in Neural Information Processing Systems, Vancouver, BC, Canada, 8–14 December 2019.

11. Wei, J.; Bosma, M.; Zhao, V.Y.; Guu, K.; Yu, A.W.; Lester, B.; Du, N.; Dai, A.M.; Le, Q.V. Finetuned language models are zero-shot learners. arXiv 2021, arXiv:2109.01652.

12. Hu, Y.; Yuan, J.; Wen, C.; Lu, X.; Liu, Y.; Li, X. RSGPT: A remote sensing vision language model and benchmark. ISPRS J. Photogramm. Remote Sens. 2025, 224, 272–286.

13. Kuckreja, K.; Danish, M.S.; Naseer, M.; Das, A.; Khan, S.; Khan, F.S. Geochat: Grounded large vision-language model for remote sensing. In Proceedings of the 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 16–22 June 2024; pp. 27831–27840.

14. Bazi, Y.; Bashmal, L.; Al Rahhal, M.M.; Ricci, R.; Melgani, F. RS-llava: A large vision-language model for joint captioning and question answering in remote sensing imagery. Remote Sens. 2024, 16, 1477.

15. Liu, H.; Li, C.; Wu, Q.; Lee, Y.J. Visual instruction tuning. Adv. Neural Inf. Process. Syst. 2023, 36, 34892–34916.

16. Rasti, B.; Scheunders, P.; Ghamisi, P.; Licciardi, G.; Chanussot, J. Noise reduction in hyperspectral imagery: Overview and application. Remote Sens. 2018, 10, 482.

17. OpenAI. ChatGPT: Optimizing Language Models for Dialogue. 2022. Available online: https://openai.com/blog/chatgpt (accessed on 19 May 2025).

18. Singh, A. Review article digital change detection techniques using remotely-sensed data. Int. J. Remote Sens. 1989, 10, 989–1003.

19. Nelson, R.F. Detecting forest canopy change due to insect activity using Landsat MSS. Photogramm. Eng. Remote Sens. 1983, 49, 1303–1314.

20. Nielsen, A.A.; Conradsen, K.; Simpson, J.J. Multivariate alteration detection (MAD) and MAF postprocessing in multispectral, bitemporal image data: New approaches to change detection studies. Remote Sens. Environ. 1998, 64, 19.

21. Serra, P.; Pons, X.; Sauri, D. Post-classification change detection with data from different sensors: Some accuracy considerations. Int. J. Remote Sens. 2003, 24, 3311–3340.

22. Wu, C.; Du, B.; Zhang, L. Slow feature analysis for change detection in multitemporal remote sensing images. IEEE Trans. Geosci. Remote Sens. 2013, 52, 2858–2874.

23. Nielsen, A.A. Regularized iteratively reweighted MAD method for change detection in multi-and hyperspectral data. IEEE Trans. Image Process. 2007, 16, 463–478.

24. Blaschke, T. Object based image analysis for remote sensing. ISPRS J. Photogramm. Remote Sens. 2010, 65, 2–16.

25. Daudt, R.C.; Le Saux, B.; Boulch, A. Fully convolutional siamese networks for change detection. In Proceedings of the 2018 25th IEEE International Conference on Image Processing (ICIP), Athens, Greece, 7–10 October 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 4063–4067.

26. Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Proceedings of the 18th International Conference Medical Image Computing and Computer-Assisted Intervention—MICCAI 2015, 5–9 October 2015; Springer: Cham, Switzerland, 2015; pp. 234–241.

27. Chen, H.; Qi, Z.; Shi, Z. Remote sensing image change detection with transformers. IEEE Trans. Geosci. Remote Sens. 2021, 60, 5607514.

28. Bandara, W.G.C.; Patel, V.M. A transformer-based siamese network for change detection. In Proceedings of the IGARSS 2022—2022 IEEE International Geoscience and Remote Sensing Symposium, Kuala Lumpur, Malaysia, 17–22 July 2022; IEEE: Piscataway, NJ, USA, 2022; pp. 207–210.

29. Chen, H.; Song, J.; Han, C.; Xia, J.; Yokoya, N. ChangeMamba: Remote sensing change detection with spatiotemporal state space model. IEEE Trans. Geosci. Remote Sens. 2024, 62, 4409720.

30. Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G. A simple framework for contrastive learning of visual representations. In Proceedings of the International Conference on Machine Learning, PMLR, Virtual, 13–18 July 2020; pp. 1597–1607.

31. Bao, H.; Dong, L.; Piao, S.; Wei, F. BEiT: BERT pre-training of image transformers. In Proceedings of the 10th International Conference on Learning Representations, Online, 5–29 April 2022.

32. Zheng, Z.; Zhong, Y.; Wang, J.; Ma, A.; Zhang, L. Building damage assessment for rapid disaster response with a deep object-based semantic change detection framework: From natural disasters to man-made disasters. Remote Sens. Environ. 2021, 265, 112636.

33. Chen, H.; Song, J.; Dietrich, O.; Broni-Bediako, C.; Xuan, W.; Wang, J.; Shao, X.; Wei, Y.; Xia, J.; Lan, C.; et al. BRIGHT: A globally distributed multimodal building damage assessment dataset with very-high-resolution for all-weather disaster response. Earth Syst. Sci. Data Discuss. 2025, 17, 6217–6253.

34. Hoxha, G.; Chouaf, S.; Melgani, F.; Smara, Y. Change captioning: A new paradigm for multitemporal remote sensing image analysis. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5627414.

35. You, Q.; Jin, H.; Wang, Z.; Fang, C.; Luo, J. Image captioning with semantic attention. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 4651–4659.

36. Sun, D.; Bao, Y.; Liu, J.; Cao, X. A lightweight sparse focus transformer for remote sensing image change captioning. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 18727–18738.

37. Liu, C.; Yang, J.; Qi, Z.; Zou, Z.; Shi, Z. Progressive scale-aware network for remote sensing image change captioning. In Proceedings of the IGARSS 2023—2023 IEEE International Geoscience and Remote Sensing Symposium, Pasadena, CA, USA, 16–21 July 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 6668–6671.

38. Liu, C.; Chen, K.; Chen, B.; Zhang, H.; Zou, Z.; Shi, Z. Rscama: Remote sensing image change captioning with state space model. IEEE Geosci. Remote Sens. Lett. 2024, 21, 6010405.

39. Liu, C.; Zhao, R.; Chen, J.; Qi, Z.; Zou, Z.; Shi, Z. A decoupling paradigm with prompt learning for remote sensing image change captioning. IEEE Trans. Geosci. Remote Sens. 2023, 61, 5622018.

40. Zhu, Y.; Li, L.; Chen, K.; Liu, C.; Zhou, F.; Shi, Z.X. Semantic-CC: Boosting Remote Sensing Image Change Captioning via Foundational Knowledge and Semantic Guidance. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5648916.

41. Noman, M.; Ahsan, N.; Naseer, M.; Cholakkal, H.; Anwer, R.M.; Khan, S.; Khan, F.S. CDCHAT: A large multimodal model for remote sensing change description. arXiv 2024, arXiv:2409.16261.

42. Antol, S.; Agrawal, A.; Lu, J.; Mitchell, M.; Batra, D.; Zitnick, C.L.; Parikh, D. Vqa: Visual question answering. In Proceedings of the 2015 IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 7–13 December 2015; pp. 2425–2433.

43. Zhang, Z.; Jiao, L.; Li, L.; Liu, X.; Chen, P.; Liu, F.; Li, Y.; Guo, Z. A spatial hierarchical reasoning network for remote sensing visual question answering. IEEE Trans. Geosci. Remote Sens. 2023, 61, 4400815.

44. Wang, J.; Zheng, Z.; Chen, Z.; Ma, A.; Zhong, Y. Earthvqa: Towards queryable earth via relational reasoning-based remote sensing visual question answering. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, BC, Canada, 26–27 February 2024; Volume 38, pp. 5481–5489.

45. Chappuis, C.; Zermatten, V.; Lobry, S.; Le Saux, B.; Tuia, D. Prompt-RSVQA: Prompting visual context to a language model for remote sensing visual question answering. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), New Orleans, LA, USA, 19–20 June 2022; pp. 1372–1381.

46. Yuan, Z.; Mou, L.; Zhu, X.X. Change-aware visual question answering. In Proceedings of the IGARSS 2022—2022 IEEE International Geoscience and Remote Sensing Symposium, Kuala Lumpur, Malaysia, 17–22 July 2022; IEEE: Piscataway, NJ, USA, 2022; pp. 227–230.

47. Li, J.; Li, D.; Xiong, C.; Hoi, S. Blip: Bootstrapping language-image pre-training for unified vision-language understanding and generation. In Proceedings of the 39th International Conference on Machine Learning Research, PMLR, Online, 28 November–9 December 2022; pp. 12888–12900.

48. Alayrac, J.B.; Donahue, J.; Luc, P.; Miech, A.; Barr, I.; Hasson, Y.; Lenc, K.; Mensch, A.; Millican, K.; Reynolds, M.; et al. Flamingo: A visual language model for few-shot learning. Adv. Neural Inf. Process. Syst. 2022, 35, 23716–23736.

49. Hurst, A.; Lerer, A.; Goucher, A.P.; Perelman, A.; Ramesh, A.; Clark, A.; Ostrow, A.; Welihinda, A.; Hayes, A.; Radford, A.; et al. Gpt-4o system card. arXiv 2024, arXiv:2410.21276.

50. Wang, P.; Bai, S.; Tan, S.; Wang, S.; Fan, Z.; Bai, J.; Chen, K.; Liu, X.; Wang, J.; Ge, W.; et al. Qwen2-VL: Enhancing vision-language model’s perception of the world at any resolution. arXiv 2024, arXiv:2409.12191.

51. GLM, T.; Zeng, A.; Xu, B.; Wang, B.; Zhang, C.; Yin, D.; Zhang, D.; Rojas, D.; Feng, G.; Zhao, H.; et al. ChatGLM: A family of large language models from glm-130b to glm-4 all tools. arXiv 2024, arXiv:2406.12793.

52. Team, G.; Georgiev, P.; Lei, V.I.; Burnell, R.; Bai, L.; Gulati, A.; Tanzer, G.; Vincent, D.; Pan, Z.; Wang, S.; et al. Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context. arXiv 2024, arXiv:2403.05530.

53. Wu, Z.; Chen, X.; Pan, Z.; Liu, X.; Liu, W.; Dai, D.; Gao, H.; Ma, Y.; Wu, C.; Wang, B.; et al. Deepseek-vl2: Mixture-of-experts vision-language models for advanced multimodal understanding. arXiv 2024, arXiv:2412.10302.

54. Zhang, Z.; Zhao, T.; Guo, Y.; Yin, J. RS5M and GeoRSCLIP: A large scale vision-language dataset and a large vision-language model for remote sensing. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5642123.

55. Dai, W.; Li, J.; Li, D.; Tiong, A.; Zhao, J.; Wang, W.; Li, B.; Fung, P.N.; Hoi, S. Instructblip: Towards general-purpose vision-language models with instruction tuning. Adv. Neural Inf. Process. Syst. 2023, 36, 49250–49267.

56. Zhan, Y.; Xiong, Z.; Yuan, Y. Skyeyegpt: Unifying remote sensing vision-language tasks via instruction tuning with large language model. ISPRS J. Photogramm. Remote Sens. 2025, 221, 64–77.

57. Liu, X.; Lian, Z. RSUniVLM: A unified vision language model for remote sensing via granularity-oriented mixture of experts. arXiv 2024, arXiv:2412.05679.

58. Deng, P.; Zhou, W.; Wu, H. Changechat: An interactive model for remote sensing change analysis via multimodal instruction tuning. In Proceedings of the ICASSP 2025—2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Hyderabad, India, 6–11 April 2025; IEEE: Piscataway, NJ, USA, 2025; pp. 1–5.

59. Liu, C.; Chen, K.; Zhang, H.; Qi, Z.; Zou, Z.; Shi, Z. Change-Agent: Towards Interactive Comprehensive Remote Sensing Change Interpretation and Analysis. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5635616.

60. Fang, Y.; Wang, W.; Xie, B.; Sun, Q.; Wu, L.; Wang, X.; Huang, T.; Wang, X.; Cao, Y. EVA: Exploring the Limits of Masked Visual Representation Learning at Scale. In Proceedings of the 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 17–24 June 2023; pp. 19358–19369.

61. Cho, K.; van Merrienboer, B.; Gülçehre, Ç.; Bahdanau, D.; Bougares, F.; Schwenk, H.; Bengio, Y. Learning Phrase Representations using RNN Encoder–Decoder for Statistical Machine Translation. In Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP), Doha, Qatar, 25–29 October 2014.

62. Zheng, L.; Chiang, W.L.; Sheng, Y.; Zhuang, S.; Wu, Z.; Zhuang, Y.; Lin, Z.; Li, Z.; Li, D.; Xing, E.; et al. Judging llm-as-a-judge with mt-bench and chatbot arena. Adv. Neural Inf. Process. Syst. 2023, 36, 46595–46623.

63. Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. Llama: Open and efficient foundation language models. arXiv 2023, arXiv:2302.13971.

64. Loshchilov, I.; Hutter, F. Decoupled weight decay regularization. In Proceedings of the International Conference on Learning Representations, New Orleans, LA, USA, 6–9 May 2019.

65. Papineni, K.; Roukos, S.; Ward, T.; Zhu, W.J. BLEU: A method for automatic evaluation of machine translation. In Proceedings of the 40th Annual Meeting on Association for Computational Linguistics, Philadelphia, PA, USA, 7–12 July 2002; pp. 311–318.

66. Banerjee, S.; Lavie, A. METEOR: An automatic metric for MT evaluation with improved correlation with human judgments. In Proceedings of the ACL Workshop on Intrinsic and Extrinsic Evaluation Measures for Machine Translation and/or Summarization, Ann Arbor, MI, USA, 29 June 2005; pp. 65–72.

67. Lin, C.Y. Rouge: A package for automatic evaluation of summaries. In Proceedings of the Text Summarization Branches Out, Barcelona, Spain, 25–26 July 2004; pp. 74–81.

68. Vedantam, R.; Zitnick, C.L.; Parikh, D. CIDEr: Consensus-based image description evaluation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 4566–4575.

| Instruction Type | Source Data | Generation Method | Response Format | Train | Test |
|---|---|---|---|---|---|
| Change Captioning | LEVIR-CC | Rule-based | Descriptive Text | 34,075 | 1929 |
| Binary Change Classification | LEVIR-MCI | Rule-based | Yes/No | 6815 | 1929 |
| Category-Specific Change Quantification | LEVIR-MCI | Rule-based | Object Count | 6815 | 1929 |
| Change Localization | LEVIR-MCI | Rule-based | Grid Location | 6815 | 1929 |
| Open-Ended QA | Derived | GPT-assisted | Q&A Pair | 26,600 | 7527 |
| Multi-Turn Conversation | Derived | GPT-assisted | Dialog | 6815 | 1929 |
| Total | – | – | – | 87,935 | 17,172 |
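
As a quick consistency check, the per-task counts in the table above can be summed to confirm the reported totals and the "105K+" figure quoted in the Highlights. The snippet below is only an arithmetic sketch; the dictionary name `splits` and the variable names are illustrative, and the numbers are copied from the table.

```python
# (train, test) query-response pair counts per instruction type, copied from the table.
splits = {
    "Change Captioning": (34075, 1929),
    "Binary Change Classification": (6815, 1929),
    "Category-Specific Change Quantification": (6815, 1929),
    "Change Localization": (6815, 1929),
    "Open-Ended QA": (26600, 7527),
    "Multi-Turn Conversation": (6815, 1929),
}

train_total = sum(train for train, _ in splits.values())
test_total = sum(test for _, test in splits.values())

print(train_total)               # 87935
print(test_total)                # 17172
print(train_total + test_total)  # 105107
```

The sums match the Total row (87,935 training and 17,172 test pairs), giving 105,107 pairs overall.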

| Category | Method | BLEU-1 | BLEU-2 | BLEU-3 | BLEU-4 | METEOR | ROUGE-L | CIDEr |
|---|---|---|---|---|---|---|---|---|
| RS Change Captioning Models | PromptCC [39] | 83.66 | 75.73 | 69.10 | 63.54 | 38.82 | 73.72 | 136.44 |
| | PSNet [37] | 83.86 | 75.13 | 67.89 | 62.11 | 38.80 | 73.60 | 132.62 |
| | RSICCFormer [8] | 84.72 | 76.27 | 68.87 | 62.77 | 39.61 | 74.12 | 134.12 |
| | SFT [36] | 84.56 | 75.87 | 68.64 | 62.87 | 39.93 | 74.69 | 137.05 |
| | RSCaMa [38] | 85.79 | 77.99 | 71.04 | 65.24 | 39.91 | 75.24 | 136.56 |
| VLMs | Deepseek-VL2 [53] | 40.94 | 34.50 | 30.26 | 25.68 | 19.48 | 54.37 | 101.79 |
| | Gemini-1.5-Pro [52] | 45.68 | 33.59 | 25.53 | 19.01 | 22.64 | 56.25 | 91.37 |
| | GLM-4V-Plus [51] | 35.59 | 24.26 | 18.54 | 13.85 | 20.13 | 54.39 | 93.16 |
| | GPT-4o [49] | 46.03 | 33.09 | 24.66 | 18.05 | 22.50 | 56.49 | 90.92 |
| | Qwen-VL-Plus [50] | 41.31 | 33.19 | 27.96 | 22.95 | 18.04 | 51.24 | 92.99 |
| | RSUniVLM [57] | 82.07 | 72.56 | 63.94 | 56.27 | 36.10 | 74.07 | 138.61 |
| | DeltaVLM | 85.78 | 77.15 | 69.24 | 62.51 | 39.47 | 75.01 | 136.72 |

| Method | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|
| Deepseek-VL2 [53] | 59.72 | 97.95 | 19.81 | 32.96 |
| Gemini-1.5-Pro [52] | 83.83 | 84.03 | 83.51 | 83.77 |
| GLM-4V-Plus [51] | 79.83 | 88.38 | 68.67 | 77.29 |
| GPT-4o [49] | 84.81 | 83.58 | 86.62 | 85.07 |
| Qwen-VL-Plus [50] | 58.22 | 73.65 | 25.52 | 37.90 |
| RSUniVLM [57] | 91.24 | 93.63 | 88.49 | 90.99 |
| DeltaVLM | 93.99 | 96.29 | 91.49 | 93.83 |

| Method | Roads MAE | Roads RMSE | Buildings MAE | Buildings RMSE |
|---|---|---|---|---|
| Deepseek-VL2 [53] | 0.58 | 0.95 | 4.17 | 8.84 |
| Gemini-1.5-Pro [52] | 0.58 | 1.25 | 2.56 | 8.71 |
| GLM-4V-Plus [51] | 0.82 | 1.62 | 2.05 | 4.61 |
| GPT-4o [49] | 0.49 | 1.00 | 1.86 | 4.57 |
| Qwen-VL-Plus [50] | 0.90 | 1.50 | 4.41 | 9.03 |
| RSUniVLM [57] | – | – | – | – |
| DeltaVLM | 0.24 | 0.70 | 1.32 | 2.89 |

| Category | Method | Precision (%) | Recall (%) | F1-Score (%) | Accuracy (%) |
|---|---|---|---|---|---|
| Roads | Deepseek-VL2 [53] | 30.07 | 10.43 | 15.49 | 64.90 |
| | Gemini-1.5-Pro [52] | 43.01 | 40.55 | 41.74 | 48.63 |
| | GLM-4V-Plus [51] | 21.99 | 33.32 | 26.49 | 6.79 |
| | GPT-4o [49] | 30.44 | 27.01 | 28.62 | 33.85 |
| | Qwen-VL-Plus [50] | 15.42 | 1.40 | 2.56 | 67.19 |
| | RSUniVLM [57] | 42.04 | 89.93 | 57.29 | 10.99 |
| | DeltaVLM | 69.63 | 66.32 | 67.94 | 70.92 |
| Buildings | Deepseek-VL2 [53] | 61.98 | 14.52 | 23.52 | 57.59 |
| | Gemini-1.5-Pro [52] | 65.71 | 51.75 | 57.90 | 45.62 |
| | GLM-4V-Plus [51] | 38.98 | 57.83 | 46.57 | 17.11 |
| | GPT-4o [49] | 55.63 | 33.70 | 41.98 | 41.47 |
| | Qwen-VL-Plus [50] | 22.23 | 20.78 | 21.48 | 7.26 |
| | RSUniVLM [57] | 66.95 | 96.36 | 79.00 | 23.28 |
| | DeltaVLM | 77.79 | 80.22 | 78.99 | 65.53 |

| Method | BLEU-1 | BLEU-2 | BLEU-3 | BLEU-4 | METEOR | ROUGE-L | CIDEr |
|---|---|---|---|---|---|---|---|
| Deepseek-VL2 [53] | 40.57 | 30.16 | 23.84 | 19.51 | 22.26 | 40.01 | 170.08 |
| Gemini-1.5-Pro [52] | 28.76 | 19.46 | 13.68 | 9.88 | 19.66 | 32.03 | 85.45 |
| GLM-4V-Plus [51] | 31.46 | 22.31 | 16.66 | 12.80 | 21.32 | 35.93 | 129.48 |
| GPT-4o [49] | 29.47 | 20.34 | 14.56 | 10.71 | 20.42 | 33.08 | 93.71 |
| Qwen-VL-Plus [50] | 20.66 | 11.70 | 6.70 | 4.25 | 14.26 | 22.83 | 31.75 |
| RSUniVLM [57] | 14.10 | 7.12 | 4.37 | 2.84 | 9.75 | 33.36 | 70.54 |
| DeltaVLM | 43.25 | 32.53 | 25.71 | 20.87 | 21.65 | 49.24 | 203.34 |

| Method | BLEU-1 | BLEU-2 | BLEU-3 | BLEU-4 | METEOR | ROUGE-L | CIDEr |
|---|---|---|---|---|---|---|---|
| w/o CSRM | 64.42 | 56.52 | 53.08 | 51.40 | 29.31 | 60.54 | 101.92 |
| w/o Bi-VE FT | 84.24 | 75.62 | 67.91 | 61.40 | 39.29 | 74.73 | 134.76 |
| DeltaVLM | 85.78 | 77.15 | 69.24 | 62.51 | 39.47 | 75.01 | 136.72 |

| Method | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|
| w/o CSRM | 50.13 | 75.00 | 0.31 | 0.62 |
| w/o Bi-VE FT | 90.57 | 99.49 | 81.54 | 89.62 |
| DeltaVLM | 93.99 | 96.29 | 91.49 | 93.83 |

| Category | Method | Total Params | Trainable Params |
|---|---|---|---|
| RS Change Captioning Models | PromptCC [39] | 408.58 M | 196.28 M |
| | PSNet [37] | 319.76 M | 231.53 M |
| | RSICCFormer [8] | 172.80 M | 81.51 M |
| | SFT [36] | 647 M | 647 M |
| | RSCaMa [38] | 176.90 M | 176.90 M |
| VLMs | Deepseek-VL2 [53] | 27 B | 27 B |
| | RSUniVLM [57] | ∼1.0 B | ∼1.0 B |
| | DeltaVLM | ∼8.2 B | 288 M |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Deng, P.; Zhou, W.; Wu, H. DeltaVLM: Interactive Remote Sensing Image Change Analysis via Instruction-Guided Difference Perception. Remote Sens. 2026, 18, 541. https://doi.org/10.3390/rs18040541
