CSSA: A Cross-Modal Spatial–Semantic Alignment Framework for Remote Sensing Image Captioning

Highlights

- A novel remote sensing image captioning framework based on cross-modal spatial–semantic alignment (CSSA) is designed, which utilizes a multi-branch cross-modal contrastive learning (MCCL) mechanism to effectively narrow the representation gap between image and text.

- A dynamic geometry Transformer (DG-former) is designed to utilize spatial geometry information in remote sensing image scenes with scattered objects, realizing spatial alignment.

- Compared to discrete text, an image is fine-grained and contains more noise, making it more challenging to perceive the semantic alignment. CSSA significantly improves the semantic fidelity and spatial coherence of generated captions by explicitly modeling the alignment between visual regions and textual phrases, which is particularly beneficial for complex and heterogeneous remote sensing scenes.

- By incorporating geometric priors through the DG-former, the model achieves superior generalization in scenes with sparse, irregularly distributed objects, which are common in real-world remote sensing applications, thereby advancing the integration of spatial reasoning into image captioning.

Abstract

1. Introduction

- How to perceive the semantic relationship between image and text? Compared to discrete text, RSIs are fine-grained and contain more noise, making it more challenging to perceive the semantic alignment. However, a matched RSI and text should share similar semantics and lie close together in the semantic space, while image–text pairs with different meanings should be pushed far apart. Hence, we can use the text representation to directly guide the extraction of the visual representation, achieving semantic consistency between the two modalities.

- How to introduce geometry information to achieve spatial alignment? The global self-attention mechanism of the Transformer can model relationships between any two positions within an RSI, which suits RSIs that contain many scattered objects with complex relationships. However, the one-dimensional positional encoding used by Transformers is unsuitable for two-dimensional visual feature maps, leading to suboptimal capture of the spatial structure.

- Considering the sparsity and noise of RSIs, we propose a cross-modal spatial–semantic alignment (CSSA) framework for the RSIC task to learn consistent representations between image and text.

- To realize semantic alignment, we propose a multi-branch cross-modal contrastive learning (MCCL) mechanism, which narrows the modality gap between image and text in the representation space.

- A dynamic geometry Transformer (DG-former) is proposed to utilize spatial geometry information in RSI scenes with scattered objects, realizing spatial alignment.

- To demonstrate the effectiveness of our method, we conduct extensive experiments on three remote sensing image captioning datasets, achieving state-of-the-art performance.
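The idea of pulling matched image–text pairs together and pushing mismatched pairs apart can be illustrated with a generic symmetric contrastive (InfoNCE-style) loss. This is only a minimal single-branch sketch and not the MCCL mechanism itself, whose multi-branch design is not detailed in this excerpt; the function names, the toy embeddings and the temperature of 0.07 are assumptions made for illustration.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cross_entropy_diag(logits):
    """Mean cross-entropy where the correct class of row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum - row[i]
    return total / len(logits)

def contrastive_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric image-to-text and text-to-image contrastive loss (assumed form)."""
    img = [l2_normalize(v) for v in image_emb]
    txt = [l2_normalize(v) for v in text_emb]
    # Similarity of every image to every text, scaled by the temperature.
    logits = [[sum(a * b for a, b in zip(i, t)) / temperature for t in txt]
              for i in img]
    logits_t = [list(col) for col in zip(*logits)]  # text-to-image direction
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(logits_t))

# Toy embeddings (hypothetical): pair i of images matches pair i of texts.
images = [[1.0, 0.0], [0.0, 1.0]]
aligned = contrastive_loss(images, [[0.9, 0.1], [0.1, 0.9]])
shuffled = contrastive_loss(images, [[0.1, 0.9], [0.9, 0.1]])
print(f"aligned={aligned:.4f} shuffled={shuffled:.4f}")
```

The loss is small when each image is closest to its own caption and large when the captions are swapped, which is the behavior the semantic-alignment question above asks for.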

2. Related Work

3. Proposed Method

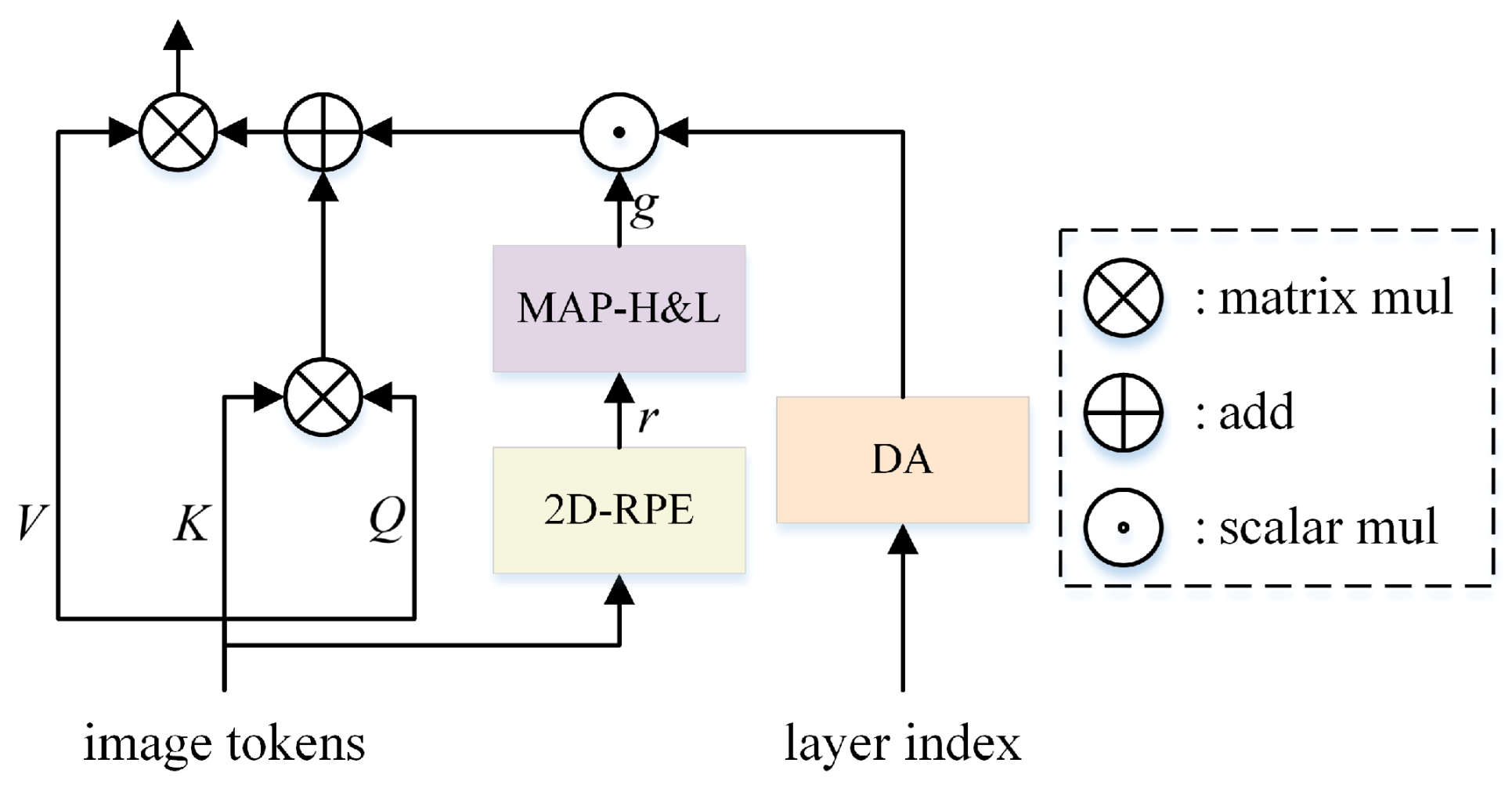

3.1. Dynamic Geometry Transformer

3.2. Dynamic Geometry Positional Encoding Module
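The subsections below name three decay variants (exponential, logarithmic and cosine), but their formulas are not included in this excerpt. As a purely illustrative sketch, the following shows how a weight that decays with the two-dimensional distance between feature-map cells could be computed. The functional forms, the parameter `lam` and all function names are assumptions, not the definitions used by the DGP module.

```python
import math

def grid_distance(p, q):
    """Euclidean distance between two (row, col) cells of a 2D feature map."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

def exp_decay(d, lam=1.0):
    """Assumed exponential form: weight shrinks as exp(-lam * d)."""
    return math.exp(-lam * d)

def log_decay(d):
    """Assumed logarithmic form: weight shrinks as 1 / (1 + log(1 + d))."""
    return 1.0 / (1.0 + math.log1p(d))

def cos_decay(d, d_max):
    """Assumed cosine form: half-cosine falling from 1 at d=0 to 0 at d=d_max."""
    return 0.5 * (1.0 + math.cos(math.pi * min(d, d_max) / d_max))

def decay_matrix(height, width, decay):
    """Pairwise decay weights between all cells of a height x width grid."""
    cells = [(r, c) for r in range(height) for c in range(width)]
    return [[decay(grid_distance(p, q)) for q in cells] for p in cells]

# A 3 x 3 grid: the farthest pair of cells is the two opposite corners.
d_max = grid_distance((0, 0), (2, 2))
weights = decay_matrix(3, 3, lambda d: cos_decay(d, d_max))
print(len(weights), len(weights[0]))
```

Unlike a one-dimensional position index, the distance here depends on both the row and the column offset, so two cells that are vertically adjacent are treated as close even though they are far apart in a flattened sequence.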

3.2.1. Exponential Decay

3.2.2. Logarithmic Decay

3.2.3. Cosine Decay

3.3. Text Decoder

3.3.1. Text Decoder in MCCL

3.3.2. Text Decoder in RSIC

3.4. Training Strategy and Loss Function

3.4.1. MCCL Stage

3.4.2. RSIC Stage

4. Experiments

4.1. Datasets

- Sydney-Captions: The Sydney-Captions dataset is based on the Sydney dataset, with five descriptive sentences annotated per RSI. It contains 613 images covering seven types of ground objects: residential, airport, meadow, river, ocean, industrial and runway. Each image is 500 × 500 pixels.

- UCM-Captions: The UCM-Captions dataset is based on the UC-Merced land use dataset, with five descriptive sentences per image. The dataset contains 21 categories of ground objects, each with 100 images. Each RSI is 256 × 256 pixels.

- RSICD: RSICD is the largest RSIC dataset. Its RSIs are collected from Google Earth, Baidu Map, MapABC and Tianditu, and are fixed at 224 × 224 pixels with various spatial resolutions. The total number of RSIs is 10,921, with five sentence descriptions per image.
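The dataset sizes above imply the following nominal image and caption counts. This is a small arithmetic sketch that uses only the figures stated in the list; the variable names are illustrative.

```python
CAPTIONS_PER_IMAGE = 5  # stated for all three datasets

image_counts = {
    "Sydney-Captions": 613,
    "UCM-Captions": 21 * 100,  # 21 categories with 100 images each
    "RSICD": 10_921,
}

# Nominal number of captions per dataset.
caption_counts = {name: n * CAPTIONS_PER_IMAGE for name, n in image_counts.items()}

for name, n_images in image_counts.items():
    print(f"{name}: {n_images} images, {caption_counts[name]} captions")
```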

4.2. Evaluation Metrics
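Of the metrics reported in the result tables, BLEU-n is built on a clipped (modified) n-gram precision between a generated caption and its reference captions. The sketch below computes only that precision; the full BLEU score additionally combines several n-gram orders with a geometric mean and a brevity penalty, which are omitted here. The example sentences are made up for illustration and do not come from any of the datasets.

```python
from collections import Counter

def ngrams(tokens, n):
    """All contiguous n-grams of a token list, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def modified_precision(candidate, references, n):
    """Clipped n-gram precision: each candidate n-gram counts at most as
    often as it appears in the single reference that contains it most."""
    cand_counts = Counter(ngrams(candidate, n))
    if not cand_counts:
        return 0.0
    max_ref = Counter()
    for ref in references:
        for gram, count in Counter(ngrams(ref, n)).items():
            max_ref[gram] = max(max_ref[gram], count)
    clipped = sum(min(count, max_ref[gram]) for gram, count in cand_counts.items())
    return clipped / sum(cand_counts.values())

# Hypothetical candidate caption and two hypothetical reference captions.
candidate = "many planes are parked near the airport".split()
references = [
    "several planes are parked at the airport".split(),
    "an airport with many planes".split(),
]
p1 = modified_precision(candidate, references, 1)
p2 = modified_precision(candidate, references, 2)
print(f"unigram precision={p1:.4f} bigram precision={p2:.4f}")
```

Here six of the seven candidate unigrams and four of the six candidate bigrams appear in a reference, so the precisions are 6/7 and 4/6.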

4.3. Experimental Settings

4.4. Comparison with Other Methods

- SAT [48] utilizes a CNN to extract image features. It employs a spatial attention mechanism to select relevant image regions, which are subsequently translated into natural language sentences using an LSTM model.

- FC(SM)-ATT + LSTM [21] incorporates high-level attribute information from a CNN to guide the attention layer, which can choose the most informative context vectors.

- Structured-ATT [4] integrates an RSI object segmentation map into a structured attention mechanism, enabling selective attention to essential object contours.

- GVFGA + LSGA [22] filters out redundant feature components and irrelevant information in the attention mechanism by exploiting GVFGA and LSGA mechanisms.

- RASG [10] combines stacked LSTMs with a recurrent attention mechanism, which leverages the recurrence property of LSTM to capture the relevance between image and text.

- Word–Sentence [13] is a two-stage method, which divides the image captioning task into word extraction and sentence generation.

- JTTS [14] is also a two-stage approach, where the initial stage involves a multi-label classification task, followed by the fusion of predictions during the captioning stage.

- VRTMM [11] employs a variational autoencoder to generate semantic vision features and utilizes a Transformer to generate sentences.

- The CNN + Transformer [12] framework uses a CNN as an encoder to extract image features and a Transformer as a decoder to generate sentences.

- TrTr-CMR [28] integrates a Swin Transformer as an encoder for multi-scale visual features, while a Transformer decoder generates a well-formed sentence for an RSI.

- KE [29] effectively captures the intrinsic semantic information of remote sensing categories for entity embeddings and relationship embeddings. In the decoder stage, combining the visual features of RSIs with structural information on embedded knowledge improves the detail expressiveness of the generated descriptions.

4.4.1. Quantitative Comparison

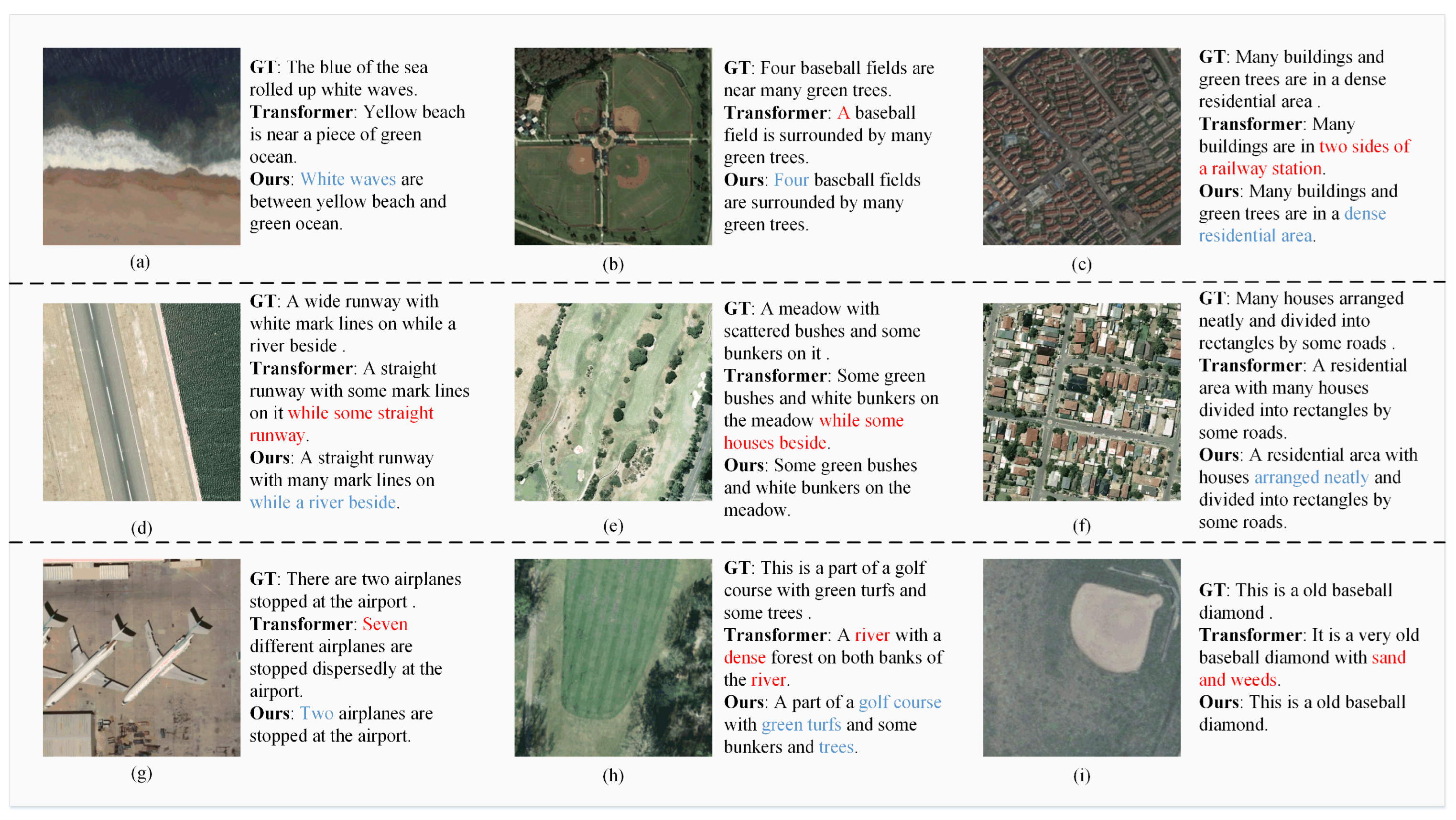

4.4.2. Qualitative Comparison

5. Discussion

5.1. Ablation Study

- DGP: This component dynamically augments geometry information based on the number of layers.

- MCCL: This component facilitates the acquisition of robust image representations, thereby reducing the semantic gap between image and text.

5.1.1. Quantitative Comparison

5.1.2. Qualitative Comparison

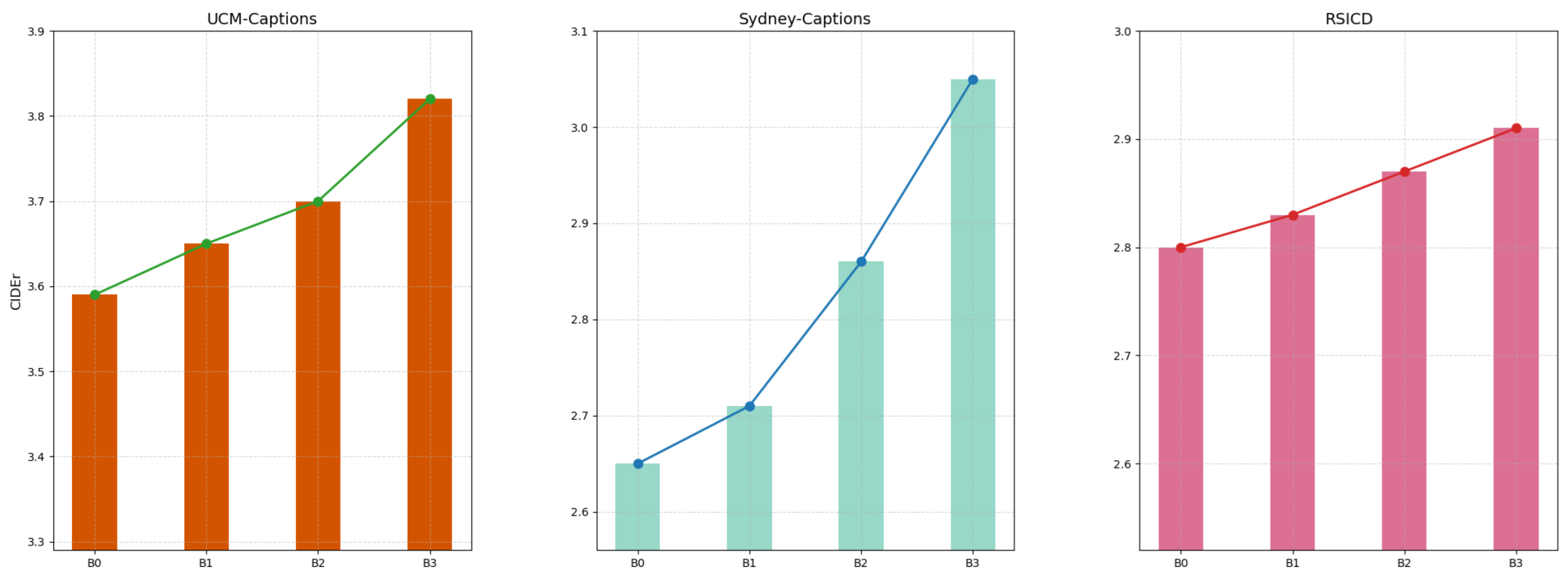

5.2. Parameter Sensitivity Analysis

5.2.1. DGP Parameter

5.2.2. MCCL Parameter

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| RSIC | Remote sensing image captioning. |

| RSI | Remote sensing image. |

| CNN | Convolutional neural network. |

| LSTM | Long short-term memory. |

| MCA | Multi-head cross attention. |

| CSSA | Cross-modal spatial-semantic alignment framework. |

| MCCL | Multi-branch cross-modal contrastive learning mechanism. |

| MGA | Multi-head dynamic geometry enhancement attention. |

| DGP | Dynamic geometry positional encoding module. |

| BLEU | Bilingual evaluation understudy. |

| ROUGE | Recall-oriented understudy for gisting evaluation—Longest. |

| METEOR | Metric for evaluation of translation with explicit ordering. |

| CIDEr | Consensus-based image description evaluation. |

| The result of the i-th MGA head. | |

| Dynamic geometry positional encoding information. | |

| The relative positional encoding. | |

| The original RSI features in the MCCL stage. | |

| The enhanced RSI features in the MCCL stage. | |

| The text augmentation result. | |

| The original text features in the MCCL stage. | |

| The enhanced text features in the MCCL stage. | |

| T | The maximum length of the ground-truth sentence. |

| The word generated at time step t. |

References

1. Cheng, G.; Yang, C.; Yao, X.; Guo, L.; Han, J. When deep learning meets metric learning: Remote sensing image scene classification via learning discriminative CNNs. IEEE Trans. Geosci. Remote Sens. 2018, 56, 2811–2821.

2. Wang, G.; Zhang, X.; Peng, Z.; Jia, X.; Tang, X.; Jiao, L. MOL: Towards accurate weakly supervised remote sensing object detection via Multi-view nOisy Learning. ISPRS J. Photogramm. Remote Sens. 2023, 196, 457–470.

3. Nie, J.; Wang, C.; Yu, S.; Shi, J.; Lv, X.; Wei, Z. MIGN: Multiscale Image Generation Network for Remote Sensing Image Semantic Segmentation. IEEE Trans. Multimed. 2022, 25, 5601–5613.

4. Zhao, R.; Shi, Z.; Zou, Z. High-resolution remote sensing image captioning based on structured attention. IEEE Trans. Geosci. Remote Sens. 2021, 60, 5603814.

5. Zhang, X.; Li, Y.; Wang, X.; Liu, F.; Wu, Z.; Cheng, X.; Jiao, L. Multi-Source Interactive Stair Attention for Remote Sensing Image Captioning. Remote Sens. 2023, 15, 579.

6. Zhao, K.; Xiong, W. Exploring region features in remote sensing image captioning. Int. J. Appl. Earth Obs. Geoinf. 2024, 127, 103672.

7. Li, Y.; Zhang, X.; Zhang, T.; Wang, G.; Wang, X.; Li, S. A patch-level region-aware module with a multi-label framework for remote sensing image captioning. Remote Sens. 2024, 16, 3987.

8. Zhang, Z.; Diao, W.; Zhang, W.; Yan, M.; Gao, X.; Sun, X. LAM: Remote sensing image captioning with label-attention mechanism. Remote Sens. 2019, 11, 2349.

9. Li, Y.; Zhang, X.; Cheng, X.; Tang, X.; Jiao, L. Learning consensus-aware semantic knowledge for remote sensing image captioning. Pattern Recognit. 2024, 145, 109893.

10. Li, Y.; Zhang, X.; Gu, J.; Li, C.; Wang, X.; Tang, X.; Jiao, L. Recurrent attention and semantic gate for remote sensing image captioning. IEEE Trans. Geosci. Remote Sens. 2021, 60, 5608816.

11. Shen, X.; Liu, B.; Zhou, Y.; Zhao, J.; Liu, M. Remote sensing image captioning via Variational Autoencoder and Reinforcement Learning. Knowl.-Based Syst. 2020, 203, 105920.

12. Zhuang, S.; Wang, P.; Wang, G.; Wang, D.; Chen, J.; Gao, F. Improving remote sensing image captioning by combining grid features and transformer. IEEE Geosci. Remote Sens. Lett. 2021, 19, 6504905.

13. Wang, Q.; Huang, W.; Zhang, X.; Li, X. Word–sentence framework for remote sensing image captioning. IEEE Trans. Geosci. Remote Sens. 2020, 59, 10532–10543.

14. Ye, X.; Wang, S.; Gu, Y.; Wang, J.; Wang, R.; Hou, B.; Giunchiglia, F.; Jiao, L. A Joint-Training Two-Stage Method For Remote Sensing Image Captioning. IEEE Trans. Geosci. Remote Sens. 2022, 60, 4709616.

15. Cheng, K.; Liu, J.; Mao, R.; Wu, Z.; Cambria, E. CSA-RSIC: Cross-Modal Semantic Alignment for Remote Sensing Image Captioning. IEEE Geosci. Remote Sens. Lett. 2025, 22, 6012305.

16. Qu, B.; Li, X.; Tao, D.; Lu, X. Deep semantic understanding of high resolution remote sensing image. In Proceedings of the 2016 International Conference on Computer, Information and Telecommunication Systems (CITS), Kunming, China, 6–8 July 2016; IEEE: New York, NY, USA, 2016; pp. 1–5.

17. Lu, X.; Wang, B.; Zheng, X.; Li, X. Exploring models and data for remote sensing image caption generation. IEEE Trans. Geosci. Remote Sens. 2017, 56, 2183–2195.

18. Shi, Z.; Zou, Z. Can a machine generate humanlike language descriptions for a remote sensing image? IEEE Trans. Geosci. Remote Sens. 2017, 55, 3623–3634.

19. Huang, W.; Wang, Q.; Li, X. Denoising-based multiscale feature fusion for remote sensing image captioning. IEEE Geosci. Remote Sens. Lett. 2020, 18, 436–440.

20. Ma, X.; Zhao, R.; Shi, Z. Multiscale methods for optical remote-sensing image captioning. IEEE Geosci. Remote Sens. Lett. 2020, 18, 2001–2005.

21. Zhang, X.; Wang, X.; Tang, X.; Zhou, H.; Li, C. Description generation for remote sensing images using attribute attention mechanism. Remote Sens. 2019, 11, 612.

22. Zhang, Z.; Zhang, W.; Yan, M.; Gao, X.; Fu, K.; Sun, X. Global visual feature and linguistic state guided attention for remote sensing image captioning. IEEE Trans. Geosci. Remote Sens. 2021, 60, 5615216.

23. Li, Y.; Fang, S.; Jiao, L.; Liu, R.; Shang, R. A multi-level attention model for remote sensing image captions. Remote Sens. 2020, 12, 939.

24. Lu, X.; Wang, B.; Zheng, X. Sound active attention framework for remote sensing image captioning. IEEE Trans. Geosci. Remote Sens. 2019, 58, 1985–2000.

25. Cui, W.; Wang, F.; He, X.; Zhang, D.; Xu, X.; Yao, M.; Wang, Z.; Huang, J. Multi-scale semantic segmentation and spatial relationship recognition of remote sensing images based on an attention model. Remote Sens. 2019, 11, 1044.

26. Wang, S.; Ye, X.; Gu, Y.; Wang, J.; Meng, Y.; Tian, J.; Hou, B.; Jiao, L. Multi-label semantic feature fusion for remote sensing image captioning. ISPRS J. Photogramm. Remote Sens. 2022, 184, 1–18.

27. Ren, Z.; Gou, S.; Guo, Z.; Mao, S.; Li, R. A mask-guided transformer network with topic token for remote sensing image captioning. Remote Sens. 2022, 14, 2939.

28. Wu, Y.; Li, L.; Jiao, L.; Liu, F.; Liu, X.; Yang, S. TrTr-CMR: Cross-Modal Reasoning Dual Transformer for Remote Sensing Image Captioning. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5643912.

29. Cheng, K.; Cambria, E.; Liu, J.; Chen, Y.; Wu, Z. KE-RSIC: Remote Sensing Image Captioning Based on Knowledge Embedding. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2025, 18, 4286–4304.

30. Wang, B.; Lu, X.; Zheng, X.; Li, X. Semantic descriptions of high-resolution remote sensing images. IEEE Geosci. Remote Sens. Lett. 2019, 16, 1274–1278.

31. Sumbul, G.; Nayak, S.; Demir, B. SD-RSIC: Summarization-driven deep remote sensing image captioning. IEEE Trans. Geosci. Remote Sens. 2020, 59, 6922–6934.

32. Wang, B.; Zheng, X.; Qu, B.; Lu, X. Retrieval topic recurrent memory network for remote sensing image captioning. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2020, 13, 256–270.

33. Fu, K.; Li, Y.; Zhang, W.; Yu, H.; Sun, X. Boosting memory with a persistent memory mechanism for remote sensing image captioning. Remote Sens. 2020, 12, 1874.

34. Li, X.; Zhang, X.; Huang, W.; Wang, Q. Truncation cross entropy loss for remote sensing image captioning. IEEE Trans. Geosci. Remote Sens. 2020, 59, 5246–5257.

35. Hoxha, G.; Melgani, F. A novel SVM-based decoder for remote sensing image captioning. IEEE Trans. Geosci. Remote Sens. 2021, 60, 5404514.

36. Radford, A.; Narasimhan, K.; Salimans, T.; Sutskever, I. Improving Language Understanding by Generative Pre-Training; OpenAI: San Francisco, CA, USA, 2018.

37. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

38. Yang, Q.; Ni, Z.; Ren, P. Meta captioning: A meta learning based remote sensing image captioning framework. ISPRS J. Photogramm. Remote Sens. 2022, 186, 190–200.

39. Song, R.; Zhao, B.; Yu, L. Enhanced CLIP-GPT Framework for Cross-Lingual Remote Sensing Image Captioning. IEEE Access 2024, 13, 904–915.

40. Lin, Q.; Wang, S.; Ye, X.; Wang, R.; Yang, R.; Jiao, L. CLIP-based grid features and masking for remote sensing image captioning. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 18, 2631–2642.

41. Zhang, Y.; Jiang, H.; Miura, Y.; Manning, C.D.; Langlotz, C.P. Contrastive learning of medical visual representations from paired images and text. In Proceedings of the Machine Learning for Healthcare Conference, PMLR, Durham, NC, USA, 5–6 August 2022; pp. 2–25.

42. Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning transferable visual models from natural language supervision. In Proceedings of the International Conference on Machine Learning, PMLR, Virtual Event, 18–24 July 2021; pp. 8748–8763.

43. Yang, Y.; Newsam, S. Bag-of-visual-words and spatial extensions for land-use classification. In Proceedings of the 18th SIGSPATIAL International Conference on Advances in Geographic Information Systems, San Jose, CA, USA, 2–5 November 2010; pp. 270–279.

44. Banerjee, S.; Lavie, A. METEOR: An automatic metric for MT evaluation with improved correlation with human judgments. In Proceedings of the ACL Workshop on Intrinsic and Extrinsic Evaluation Measures for Machine Translation and/or Summarization, Ann Arbor, MI, USA, 29 June 2005; pp. 65–72.

45. Lin, C. ROUGE: A package for automatic evaluation of summaries. In Text Summarization Branches Out; Association for Computational Linguistics: Barcelona, Spain, 2004.

46. Vedantam, R.; Zitnick, C.; Parikh, D. CIDEr: Consensus-based image description evaluation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 4566–4575.

47. Anderson, P.; Fernando, B.; Johnson, M.; Gould, S. SPICE: Semantic propositional image caption evaluation. In Proceedings of the 14th European Conference, Amsterdam, The Netherlands, 11–14 October 2016; pp. 382–398.

48. Xu, K.; Ba, J.; Kiros, R.; Cho, K.; Courville, A.; Salakhudinov, R.; Zemel, R.; Bengio, Y. Show, attend and tell: Neural image caption generation with visual attention. In Proceedings of the International Conference on Machine Learning, PMLR, San Diego, CA, USA, 9–12 May 2015; pp. 2048–2057.

| Method (Sydney-Captions) | BLEU-1 | BLEU-2 | BLEU-3 | BLEU-4 | METEOR | ROUGE | CIDEr | SPICE |
|---|---|---|---|---|---|---|---|---|
| SAT [48] | 0.7905 | 0.7020 | 0.6232 | 0.5477 | 0.3925 | 0.7206 | 2.2013 | - |
| FC-ATT + LSTM [21] | 0.8076 | 0.7160 | 0.6276 | 0.5544 | 0.4099 | 0.7144 | 2.2033 | - |
| SM-ATT + LSTM [21] | 0.8143 | 0.7351 | 0.6586 | 0.5806 | 0.4111 | 0.7195 | 2.3021 | - |
| SAT(LAM) [8] | 0.7405 | 0.6550 | 0.5904 | 0.5304 | 0.3689 | 0.6814 | 2.3519 | 0.4308 |
| Structured-ATT [4] | 0.7795 | 0.7019 | 0.6392 | 0.5861 | 0.3954 | 0.7299 | 2.3791 | - |
| GVFGA + LSGA [22] | 0.7681 | 0.6846 | 0.6145 | 0.5504 | 0.3866 | 0.7030 | 2.4522 | 0.4532 |
| RASG [10] | 0.8000 | 0.7217 | 0.6531 | 0.5909 | 0.3908 | 0.7218 | 2.6311 | 0.4301 |
| Word–Sentence [13] | 0.7891 | 0.7094 | 0.6317 | 0.5625 | 0.4181 | 0.6922 | 2.0411 | - |
| JTTS [14] | 0.8492 | 0.7797 | 0.7137 | 0.6496 | 0.4457 | 0.7660 | 2.8010 | 0.4679 |
| VRTMM [11] | 0.7443 | 0.6723 | 0.6172 | 0.5699 | 0.3748 | 0.6698 | 2.5285 | - |
| CNN + Transformer [12] | 0.8100 | 0.7320 | 0.6500 | 0.5710 | - | 0.7490 | 2.5670 | - |
| TrTr-CMR [28] | 0.8270 | 0.6994 | 0.6002 | 0.5199 | 0.3803 | 0.7220 | 2.2728 | - |
| KE [29] | 0.8550 | 0.7810 | 0.6450 | 0.5800 | 0.4530 | 0.7280 | 2.9440 | - |
| CSSA (Ours) | 0.8406 | 0.7777 | 0.7138 | 0.6544 | 0.4555 | 0.7839 | 3.0501 | 0.5050 |

| Method (UCM-Captions) | BLEU-1 | BLEU-2 | BLEU-3 | BLEU-4 | METEOR | ROUGE | CIDEr | SPICE |
|---|---|---|---|---|---|---|---|---|
| SAT [48] | 0.7993 | 0.7355 | 0.6790 | 0.6244 | 0.4174 | 0.7441 | 3.0038 | - |
| FC-ATT + LSTM [21] | 0.8135 | 0.7502 | 0.6849 | 0.6352 | 0.4173 | 0.7504 | 2.9958 | - |
| SM-ATT + LSTM [21] | 0.8154 | 0.7575 | 0.6936 | 0.6458 | 0.4240 | 0.7632 | 3.1864 | - |
| SAT(LAM) [8] | 0.8195 | 0.7764 | 0.7485 | 0.7161 | 0.4837 | 0.7908 | 3.6171 | 0.5024 |
| Structured-ATT [4] | 0.8538 | 0.8035 | 0.7572 | 0.7149 | 0.4632 | 0.8141 | 3.3489 | - |
| GVFGA + LSGA [22] | 0.8319 | 0.7657 | 0.7103 | 0.6596 | 0.4436 | 0.7845 | 3.3270 | 0.4853 |
| RASG [10] | 0.8518 | 0.7925 | 0.7432 | 0.6976 | 0.4571 | 0.8072 | 3.3887 | 0.4891 |
| Word–Sentence [13] | 0.7931 | 0.7237 | 0.6671 | 0.6202 | 0.4395 | 0.7132 | 2.7871 | - |
| JTTS [14] | 0.8696 | 0.8224 | 0.7788 | 0.7376 | 0.4906 | 0.8364 | 3.7102 | 0.5231 |
| VRTMM [11] | 0.8394 | 0.7785 | 0.7283 | 0.6828 | 0.4527 | 0.8026 | 3.4948 | - |
| CNN + Transformer [12] | 0.8340 | 0.7720 | 0.7180 | 0.6730 | - | 0.7700 | 3.3150 | - |
| TrTr-CMR [28] | 0.8156 | 0.7091 | 0.6220 | 0.5469 | 0.3978 | 0.7442 | 2.4742 | - |
| KE [29] | 0.8990 | 0.8290 | 0.7860 | 0.7170 | 0.4950 | 0.8430 | 3.7660 | - |
| CSSA (Ours) | 0.8911 | 0.8469 | 0.8037 | 0.7598 | 0.4870 | 0.8474 | 3.8248 | 0.5428 |

| Method (RSICD) | BLEU-1 | BLEU-2 | BLEU-3 | BLEU-4 | METEOR | ROUGE | CIDEr | SPICE |
|---|---|---|---|---|---|---|---|---|
| SAT [48] | 0.7336 | 0.6129 | 0.5190 | 0.4402 | 0.3549 | 0.6419 | 2.2486 | - |
| FC-ATT + LSTM [21] | 0.7459 | 0.6250 | 0.5338 | 0.4574 | 0.3395 | 0.6333 | 2.3664 | - |
| SM-ATT + LSTM [21] | 0.7571 | 0.6336 | 0.5385 | 0.4612 | 0.3513 | 0.6458 | 2.3563 | - |
| SAT(LAM) [8] | 0.6753 | 0.5537 | 0.4686 | 0.4026 | 0.3254 | 0.5823 | 2.585 | 0.4636 |
| Structured-ATT [4] | 0.7016 | 0.5614 | 0.4648 | 0.3934 | 0.3291 | 0.5706 | 1.7031 | - |
| GVFGA + LSGA [22] | 0.6779 | 0.5600 | 0.4781 | 0.4165 | 0.3285 | 0.5929 | 2.6012 | 0.4683 |
| RASG [10] | 0.7729 | 0.6651 | 0.5782 | 0.5062 | 0.3626 | 0.6691 | 2.7549 | 0.4719 |
| Word–Sentence [13] | 0.7240 | 0.5861 | 0.4933 | 0.4250 | 0.3197 | 0.6260 | 2.0629 | - |
| JTTS [14] | 0.7893 | 0.6795 | 0.5893 | 0.5135 | 0.3773 | 0.6823 | 2.7958 | 0.4877 |
| VRTMM [11] | 0.7813 | 0.6721 | 0.5645 | 0.5123 | 0.3737 | 0.6713 | 2.7150 | - |
| CNN + Transformer [12] | 0.7740 | 0.6680 | 0.5810 | 0.5100 | - | 0.6780 | 2.7730 | - |
| TrTr-CMR [28] | 0.6201 | 0.3937 | 0.2671 | 0.1932 | 0.2399 | 0.4859 | 0.7518 | - |
| KE [29] | 0.7910 | 0.6790 | 0.5910 | 0.5170 | 0.3810 | 0.6910 | 2.8320 | - |
| CSSA (Ours) | 0.8131 | 0.7016 | 0.6021 | 0.5275 | 0.3997 | 0.6932 | 2.9056 | 0.4988 |

| Dataset | DGP | MCCL | BLEU-1 | BLEU-2 | BLEU-3 | BLEU-4 | METEOR | ROUGE | CIDEr | SPICE |
|---|---|---|---|---|---|---|---|---|---|---|
| Sydney-Captions | × | × | 0.8368 | 0.7525 | 0.6683 | 0.5874 | 0.4160 | 0.7471 | 2.6533 | 0.4357 |
| Sydney-Captions | ✓ | × | 0.8128 | 0.7510 | 0.6906 | 0.6319 | 0.4315 | 0.7497 | 2.8862 | 0.4868 |
| Sydney-Captions | × | ✓ | 0.8333 | 0.7608 | 0.6879 | 0.6178 | 0.4364 | 0.7782 | 2.8773 | 0.4642 |
| Sydney-Captions | ✓ | ✓ | 0.8406 | 0.7777 | 0.7138 | 0.6544 | 0.4555 | 0.7839 | 3.0501 | 0.5050 |
| UCM-Captions | × | × | 0.8600 | 0.8106 | 0.7637 | 0.7188 | 0.4628 | 0.8041 | 3.5912 | 0.4848 |
| UCM-Captions | ✓ | × | 0.8700 | 0.8252 | 0.7782 | 0.7322 | 0.4712 | 0.8192 | 3.6639 | 0.5024 |
| UCM-Captions | × | ✓ | 0.8770 | 0.8278 | 0.7801 | 0.7338 | 0.4772 | 0.8326 | 3.6781 | 0.5208 |
| UCM-Captions | ✓ | ✓ | 0.8911 | 0.8469 | 0.8037 | 0.7598 | 0.4870 | 0.8474 | 3.8248 | 0.5428 |
| RSICD | × | × | 0.7839 | 0.6711 | 0.5812 | 0.5074 | 0.3666 | 0.6680 | 2.7963 | 0.4800 |
| RSICD | ✓ | × | 0.7938 | 0.6818 | 0.5909 | 0.5136 | 0.3776 | 0.6853 | 2.8367 | 0.4891 |
| RSICD | × | ✓ | 0.7632 | 0.6528 | 0.5634 | 0.4902 | 0.3915 | 0.6915 | 2.8390 | 0.5005 |
| RSICD | ✓ | ✓ | 0.8131 | 0.7016 | 0.6021 | 0.5275 | 0.3997 | 0.6932 | 2.9056 | 0.4988 |
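To make the ablation results easier to read, the CIDEr gains of each configuration over the baseline (neither DGP nor MCCL) can be computed directly from the table. The sketch below copies the CIDEr column only; the dictionary keys are illustrative labels, not names used in the paper.

```python
# CIDEr scores taken from the ablation table above.
cider = {
    "Sydney-Captions": {"baseline": 2.6533, "dgp": 2.8862, "mccl": 2.8773, "full": 3.0501},
    "UCM-Captions": {"baseline": 3.5912, "dgp": 3.6639, "mccl": 3.6781, "full": 3.8248},
    "RSICD": {"baseline": 2.7963, "dgp": 2.8367, "mccl": 2.8390, "full": 2.9056},
}

# Absolute CIDEr gain of each configuration over the baseline, per dataset.
gains = {
    dataset: {
        config: round(score - scores["baseline"], 4)
        for config, score in scores.items()
        if config != "baseline"
    }
    for dataset, scores in cider.items()
}

for dataset, g in gains.items():
    print(f"{dataset}: DGP +{g['dgp']:.4f}, MCCL +{g['mccl']:.4f}, both +{g['full']:.4f}")
```

On all three datasets the gain from using both components is larger than the gain from either component alone.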

| Decay | Init. Params | CIDEr (UCM-Captions) | CIDEr (Sydney-Captions) | CIDEr (RSICD) |
|---|---|---|---|---|
| Exp | 0.0 | 3.5924 | 2.7801 | 2.8144 |
| Exp | 0.5 | 3.6398 | 2.7912 | 2.8542 |
| Exp | 1.0 | 3.6639 | 2.8862 | 2.8367 |
| Exp | 2.0 | 3.6483 | 2.8176 | 2.8587 |
| Log | - | 3.6476 | 2.8735 | 2.8325 |
| Cos | - | 3.6539 | 2.8746 | 2.8426 |
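The best decay setting per dataset can be read off the sensitivity table with a short script. The values are copied from the table above; the tuple layout and variable names are illustrative choices.

```python
# (decay type, initial parameter or None, CIDEr per dataset), from the table above.
results = [
    ("Exp", 0.0, {"UCM-Captions": 3.5924, "Sydney-Captions": 2.7801, "RSICD": 2.8144}),
    ("Exp", 0.5, {"UCM-Captions": 3.6398, "Sydney-Captions": 2.7912, "RSICD": 2.8542}),
    ("Exp", 1.0, {"UCM-Captions": 3.6639, "Sydney-Captions": 2.8862, "RSICD": 2.8367}),
    ("Exp", 2.0, {"UCM-Captions": 3.6483, "Sydney-Captions": 2.8176, "RSICD": 2.8587}),
    ("Log", None, {"UCM-Captions": 3.6476, "Sydney-Captions": 2.8735, "RSICD": 2.8325}),
    ("Cos", None, {"UCM-Captions": 3.6539, "Sydney-Captions": 2.8746, "RSICD": 2.8426}),
]

datasets = ["UCM-Captions", "Sydney-Captions", "RSICD"]

# For each dataset, keep the (decay, init) pair with the highest CIDEr.
best = {ds: max(results, key=lambda r: r[2][ds])[:2] for ds in datasets}

for ds, (decay, init) in best.items():
    print(f"{ds}: best decay={decay}, init={init}")
```

Exponential decay with an initial parameter of 1.0 gives the highest CIDEr on UCM-Captions and Sydney-Captions, while an initial parameter of 2.0 is slightly better on RSICD.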

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Han, X.; Wu, Z.; Li, Y.; Zhang, X.; Wang, G.; Hou, B. CSSA: A Cross-Modal Spatial–Semantic Alignment Framework for Remote Sensing Image Captioning. Remote Sens. 2026, 18, 522. https://doi.org/10.3390/rs18030522
