Frequency Domain and Gradient-Spatial Multi-Scale Swin KANsformer for Remote Sensing Scene Classification

Highlights

- We propose the FG-Swin KANsformer, which exploits frequency domain and gradient prior information and integrates KAN into the Swin Transformer to strengthen nonlinear modeling, improving both the use of original image information and feature extraction.

- The FG-Swin KANsformer achieves higher classification accuracy than numerous mainstream CNN- and Transformer-based models on multiple remote sensing scene classification benchmarks (UCM, AID, and NWPU).

- The model draws on both the original image and global semantic information, integrating fine details with multi-scale spatial relationships to provide a reliable model for remote sensing scene classification.

- The model is scalable and offers a new Transformer-based approach to feature extraction for other remote sensing tasks.

Abstract

1. Introduction

- Insufficient utilization of frequency domain information: Traditional Transformer-based models typically perform patch embedding directly on raw pixel inputs and therefore do not fully exploit frequency domain features, which capture both high-frequency texture details and low-frequency global structures.

- Inadequate modeling of gradient and structural priors: Most existing studies focus on capturing contextual semantics with self-attention mechanisms or convolutional operations and neglect structural priors such as gradients and edges. As a result, models struggle with scenes that have blurred class boundaries or dense small targets, which reduces classification accuracy.

- Limited expressive capability of feedforward networks: MLPs have restricted nonlinear mapping capability and struggle to model the complex, highly variable visual patterns present in remote sensing (RS) imagery. In scenarios involving multi-scale objects and high interclass similarity, this limitation often leads to insufficient feature extraction.

- We design a new remote sensing scene classification (RSSC) model named FG-Swin KANsformer. Built on the Swin Transformer, it adds a DCT module for frequency domain feature enhancement and a GSFE module for gradient-spatial structure enhancement, and it replaces the MLP in the Swin Transformer Blocks with KAN. This design strengthens both the input features and the deep nonlinear modeling, improving the overall performance of RSSC.

- A frequency- and spatial-gradient-aware feature construction mechanism is introduced to improve the quality of input representations. The DCT module decomposes input images into high- and low-frequency components, which are then adaptively fused to balance global structural information against fine-grained texture features. Meanwhile, the GSFE module uses multi-scale convolutional filters and the Sobel operator to extract discriminative gradient and spatial structural features, yielding more expressive feature embeddings that capture edges, shapes, and contextual dependencies.

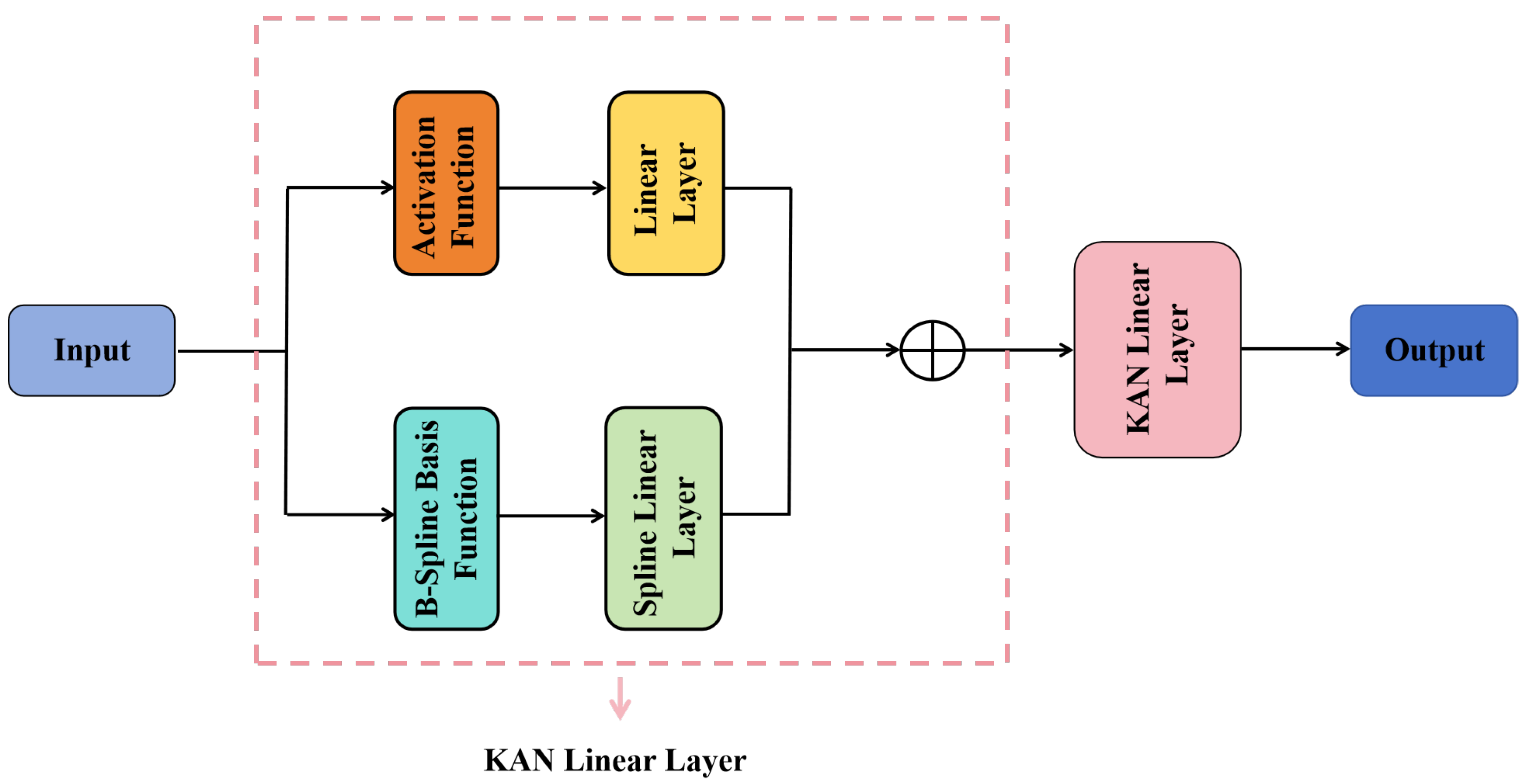

- To enhance nonlinear modeling capability, a KAN-augmented feedforward layer is constructed by replacing the standard MLP in the Swin Transformer Blocks with KAN. This modification improves the FG-Swin KANsformer’s nonlinear function approximation and its representation of complex features, leading to more effective deep semantic modeling and higher classification accuracy.
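The KAN-for-MLP substitution in the last contribution can be pictured with a toy layer. The sketch below is only an illustration, not the authors' implementation. It assumes the usual KAN formulation, in which every input-output edge carries its own learnable univariate function, and it swaps the B-spline basis of the original KAN for Gaussian bumps to stay short. The class name `TinyKANLayer`, its parameters, and the plain-Python style are hypothetical choices made for readability.

```python
import math
import random


def silu(x: float) -> float:
    """SiLU base activation, x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


class TinyKANLayer:
    """Toy KAN-style layer: one learnable 1-D function per (input, output) edge.

    Assumption: Gaussian bumps stand in for the B-spline basis of the original KAN.
    """

    def __init__(self, in_dim, out_dim, num_basis=5, x_min=-2.0, x_max=2.0, seed=0):
        rng = random.Random(seed)
        step = (x_max - x_min) / (num_basis - 1)
        self.centers = [x_min + k * step for k in range(num_basis)]
        self.width = step
        # coef[j][i][k]: weight of basis function k on the edge input i -> output j
        self.coef = [[[rng.gauss(0.0, 0.1) for _ in range(num_basis)]
                      for _ in range(in_dim)] for _ in range(out_dim)]
        # base_w[j][i]: weight of the SiLU residual branch on the same edge
        self.base_w = [[rng.gauss(0.0, 0.1) for _ in range(in_dim)]
                       for _ in range(out_dim)]

    def edge(self, j, i, x):
        """Evaluate the univariate function phi_{j,i}(x) attached to one edge."""
        basis = [math.exp(-((x - c) / self.width) ** 2) for c in self.centers]
        learned = sum(w * b for w, b in zip(self.coef[j][i], basis))
        return self.base_w[j][i] * silu(x) + learned

    def forward(self, x):
        """y_j = sum_i phi_{j,i}(x_i): sums of edge functions, no fixed activation."""
        return [sum(self.edge(j, i, xi) for i, xi in enumerate(x))
                for j in range(len(self.coef))]


# Usage: map a 4-dimensional token feature to 3 outputs.
layer = TinyKANLayer(in_dim=4, out_dim=3)
out = layer.forward([0.1, -0.5, 1.2, 0.0])
```

The contrast with an MLP is where the nonlinearity lives: an MLP applies a fixed activation after a learned linear map, whereas here the learned parameters shape the per-edge functions themselves.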

2. Related Work

2.1. Transformer-Based Models for RSSC

2.2. Kolmogorov–Arnold Networks (KAN)

2.3. Feature Enhancement Method Based on Frequency Domain and Gradients in Image Analysis

3. Methods

3.1. Overview

3.2. Feature Enhancement Module

3.2.1. DCT Module
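As a rough illustration of the frequency split that the Introduction attributes to the DCT module, the sketch below separates a small square image into low- and high-frequency parts and recombines them. It assumes an orthonormal 2-D DCT-II, a triangular low-pass mask (`u + v < cutoff`), and fixed scalar fusion weights standing in for the adaptive fusion. None of these choices, nor the function names, is taken from the paper.

```python
import math


def dct_matrix(n):
    """Orthonormal DCT-II matrix C (C times its transpose is the identity)."""
    rows = []
    for k in range(n):
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        rows.append([scale * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
                     for i in range(n)])
    return rows


def matmul(a, b):
    """Plain matrix product of two lists of lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(m):
    return [list(row) for row in zip(*m)]


def split_frequencies(img, cutoff):
    """Return (low, high) spatial-domain components of a square image."""
    n = len(img)
    c = dct_matrix(n)
    ct = transpose(c)
    coeffs = matmul(matmul(c, img), ct)            # forward 2-D DCT: C X C^T
    low_coeffs = [[coeffs[u][v] if u + v < cutoff else 0.0 for v in range(n)]
                  for u in range(n)]               # keep only low frequencies
    low = matmul(matmul(ct, low_coeffs), c)        # inverse 2-D DCT: C^T Y C
    high = [[img[r][s] - low[r][s] for s in range(n)] for r in range(n)]
    return low, high


def fuse(low, high, w_low, w_high):
    """Weighted recombination; fixed weights are a stand-in for adaptive fusion."""
    return [[w_low * lo + w_high * hi for lo, hi in zip(lr, hr)]
            for lr, hr in zip(low, high)]


# Usage: a 4 x 4 image with a bright right half.
image = [[0.0, 0.0, 1.0, 1.0] for _ in range(4)]
low_part, high_part = split_frequencies(image, cutoff=2)
enhanced = fuse(low_part, high_part, w_low=1.0, w_high=1.5)  # boost fine detail
```

Because the high-frequency part is defined as the residual, `low_part + high_part` reproduces the input exactly, so equal weights of 1.0 leave the image unchanged.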

3.2.2. GSFE Module
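The Introduction states that the GSFE module combines multi-scale convolutional filters with the Sobel operator. The sketch below covers only the Sobel part: a gradient-magnitude map over the interior pixels of a grayscale image. It uses plain Python lists and no padding, and the function names are hypothetical. The multi-scale convolution branch is omitted because its configuration is not given in this text.

```python
import math

# Standard 3 x 3 Sobel kernels for horizontal and vertical intensity changes.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def correlate3x3(img, kernel):
    """Slide a 3 x 3 kernel over the image interior (output shrinks by 2 per axis)."""
    h, w = len(img), len(img[0])
    out = []
    for r in range(1, h - 1):
        row = []
        for c in range(1, w - 1):
            row.append(sum(kernel[i][j] * img[r - 1 + i][c - 1 + j]
                           for i in range(3) for j in range(3)))
        out.append(row)
    return out


def sobel_magnitude(img):
    """Gradient magnitude sqrt(gx^2 + gy^2) at every interior pixel."""
    gx = correlate3x3(img, SOBEL_X)
    gy = correlate3x3(img, SOBEL_Y)
    return [[math.hypot(a, b) for a, b in zip(row_x, row_y)]
            for row_x, row_y in zip(gx, gy)]


# Usage: a vertical step edge between the second and third columns.
step_edge = [[0.0, 0.0, 1.0, 1.0] for _ in range(4)]
edges = sobel_magnitude(step_edge)  # 2 x 2 map, strong response along the edge
```

A map like this responds to boundaries regardless of absolute brightness, which is the kind of structural prior the second challenge in the Introduction says is missing from attention-only models.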

3.3. KAN Module in Swin Transformer Blocks

4. Results

4.1. Dataset

4.2. Training Settings

4.3. Comparison Experiments

4.3.1. Experimental Results of UCM Dataset

4.3.2. Experimental Results of AID

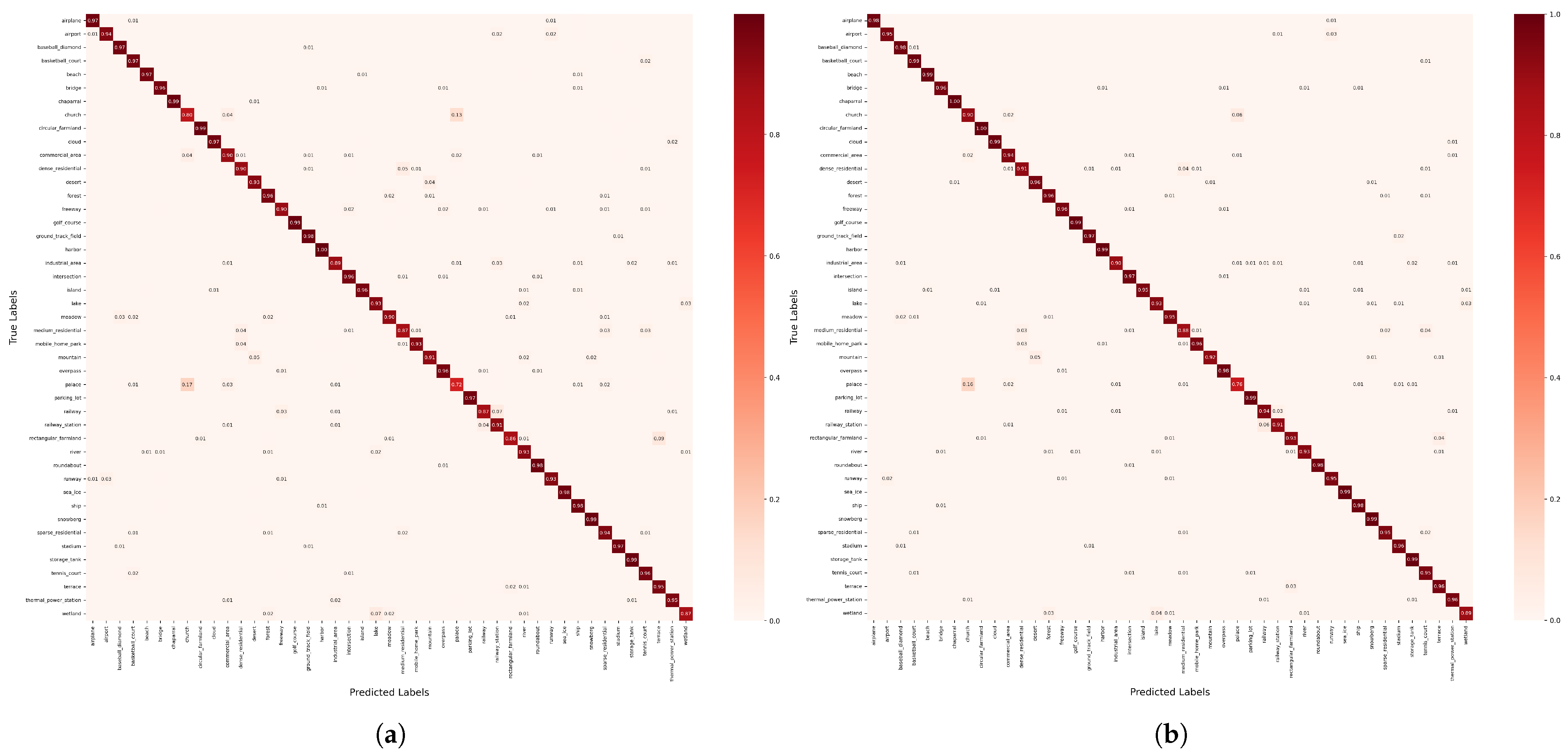

4.3.3. Experimental Results of NWPU Dataset

5. Discussion

5.1. Ablation Study

5.2. Evaluation of Size of Models

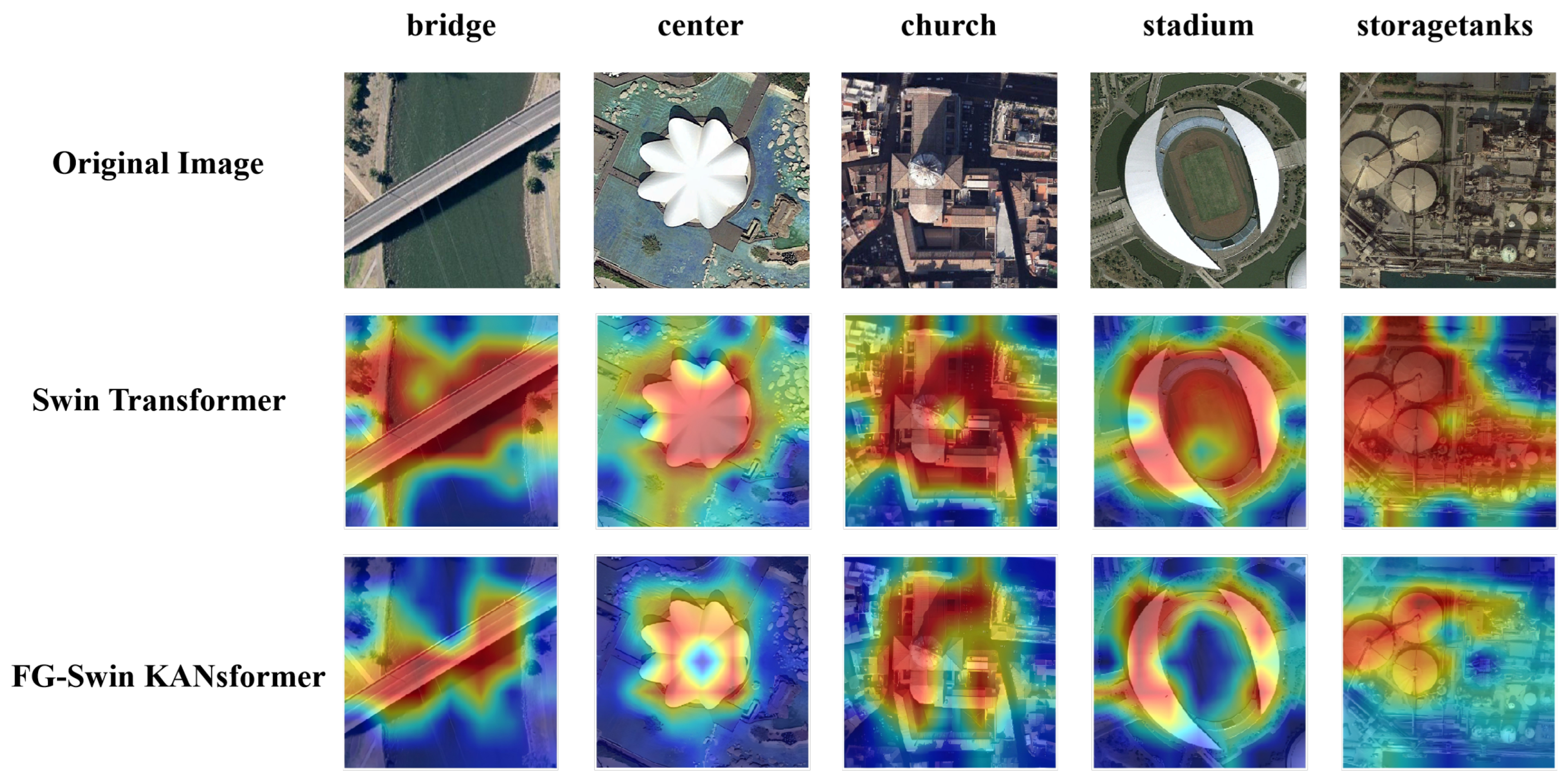

5.3. Visualization Study

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Dataset | Total Images | Classes | Images per Class | Spatial Resolution | Image Size (pixels) |
|---|---|---|---|---|---|
| UCM | 2100 | 21 | 100 | 0.3 m | 256 × 256 |
| AID | 10,000 | 30 | 220–420 | 0.5–8 m | 600 × 600 |
| NWPU | 31,500 | 45 | 700 | 0.2–30 m | 256 × 256 |
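The comparison tables report accuracy at fixed training ratios (for example 50% and 80%), which implies splitting each dataset into training and test subsets. The sketch below shows one way to do that, assuming a class-stratified random split. The exact protocol is not stated in this text, and the function name and seed handling are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(labels, train_ratio, seed=0):
    """Split sample indices so every class contributes the same training fraction."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, test = [], []
    for label in sorted(by_class):
        idxs = by_class[label][:]
        rng.shuffle(idxs)
        n_train = round(train_ratio * len(idxs))
        train.extend(idxs[:n_train])
        test.extend(idxs[n_train:])
    return train, test


# Usage with UCM-shaped labels: 21 classes x 100 images = 2100 samples.
ucm_labels = [cls for cls in range(21) for _ in range(100)]
train_idx, test_idx = stratified_split(ucm_labels, train_ratio=0.5)
# 50 images per class go to training, i.e. 1050 training and 1050 test samples.
```

Repeating the split with different seeds and averaging the accuracies is one common way to obtain "mean ± standard deviation" entries of the kind shown in the result tables.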

| Method | OA (%), UCM, 50% Training Ratio | OA (%), UCM, 80% Training Ratio |
|---|---|---|
| GoogLeNet | 92.70 ± 0.60 | 94.31 ± 0.89 |
| VGG | 94.05 ± 0.64 | 96.58 ± 0.41 |
| ResNet | 96.05 ± 0.65 | 97.75 ± 0.24 |
| MSA-Network | 97.80 ± 0.33 | 98.96 ± 0.21 |
| PCNet | 98.71 ± 0.22 | 99.25 ± 0.37 |
| GCSANet | 98.32 ± 0.71 | 99.31 ± 0.56 |
| EMSCNet | 98.70 ± 0.46 | 99.44 ± 0.16 |
| MDRCN | 98.57 ± 0.19 | 99.64 ± 0.12 |
| PiT-S | 95.83 ± 0.39 | 98.33 ± 0.50 |
| EMTCAL | 98.67 ± 0.16 | 99.57 ± 0.28 |
| CSAT | 95.72 ± 0.23 | 97.86 ± 0.16 |
| SCViT | 98.90 ± 0.19 | 99.57 ± 0.31 |
| LDBST | 98.76 ± 0.29 | 99.52 ± 0.24 |
| STMSF | 99.01 ± 0.31 | 99.58 ± 0.23 |
| SceneFormer | - | 99.00 ± 0.28 |
| FG-Swin KANsformer (ours) | 99.51 ± 0.13 | 99.65 ± 0.16 |

| Method | OA (%), AID, 20% Training Ratio | OA (%), AID, 50% Training Ratio |
|---|---|---|
| GoogLeNet | 83.44 ± 0.40 | 86.39 ± 0.55 |
| VGG | 92.76 ± 0.10 | 95.37 ± 0.09 |
| ResNet | 93.23 ± 0.12 | 94.12 ± 0.19 |
| MSA-Network | 93.53 ± 0.21 | 96.01 ± 0.43 |
| PCNet | 95.53 ± 0.16 | 96.76 ± 0.25 |
| GCSANet | 95.96 ± 0.38 | 97.53 ± 0.32 |
| EMSCNet | 95.13 ± 0.10 | 96.96 ± 0.10 |
| MDRCN | 93.64 ± 0.19 | 95.66 ± 0.18 |
| PiT-S | 90.51 ± 0.57 | 94.17 ± 0.36 |
| EMTCAL | 94.69 ± 0.14 | 96.41 ± 0.23 |
| CSAT | 92.55 ± 0.28 | 95.44 ± 0.17 |
| SCViT | 95.56 ± 0.17 | 96.98 ± 0.16 |
| LDBST | 95.10 ± 0.09 | 96.84 ± 0.20 |
| STMSF | 96.15 ± 0.16 | 97.51 ± 0.37 |
| SceneFormer | 96.14 ± 0.16 | - |
| MSCN | 95.86 ± 0.16 | 97.46 ± 0.12 |
| ACTFormer | 96.29 ± 0.19 | 97.56 ± 0.24 |
| FG-Swin KANsformer (ours) | 96.86 ± 0.16 | 97.93 ± 0.25 |

| Method | OA (%), NWPU, 10% Training Ratio | OA (%), NWPU, 20% Training Ratio |
|---|---|---|
| GoogLeNet | 76.19 ± 0.38 | 78.48 ± 0.26 |
| VGG | 87.14 ± 0.17 | 90.64 ± 0.14 |
| ResNet | 89.20 ± 0.14 | 92.12 ± 0.09 |
| MSA-Network | 90.38 ± 0.17 | 93.52 ± 0.21 |
| PCNet | 92.64 ± 0.13 | 94.59 ± 0.07 |
| GCSANet | 93.39 ± 0.39 | 93.95 ± 0.36 |
| EMSCNet | 92.16 ± 0.07 | 94.08 ± 0.20 |
| MDRCN | 91.59 ± 0.29 | 93.82 ± 0.17 |
| PiT-S | 85.85 ± 0.18 | 89.91 ± 0.19 |
| EMTCAL | 91.63 ± 0.19 | 93.65 ± 0.12 |
| CSAT | 89.70 ± 0.18 | 93.06 ± 0.16 |
| SCViT | 92.72 ± 0.04 | 94.66 ± 0.10 |
| LDBST | 90.83 ± 0.11 | 93.56 ± 0.07 |
| STMSF | 92.88 ± 0.16 | 94.95 ± 0.11 |
| SceneFormer | 92.51 ± 0.18 | - |
| MSCN | 92.64 ± 0.09 | 94.59 ± 0.11 |
| ACTFormer | 92.85 ± 0.14 | 94.76 ± 0.21 |
| FG-Swin KANsformer (ours) | 93.62 ± 0.15 | 95.27 ± 0.10 |

| ST | KAN | GSFE | DCT | AID-20% | NWPU-10% |
|---|---|---|---|---|---|
| ✓ | 92.22 ± 0.14 | 88.60 ± 0.21 | |||
| ✓ | ✓ | 96.07 ± 0.18 | 93.02 ± 0.11 | ||
| ✓ | ✓ | ✓ | 96.61 ± 0.13 | 93.38 ± 0.14 | |
| ✓ | ✓ | ✓ | 96.58 ± 0.19 | 93.40 ± 0.11 | |
| ✓ | ✓ | ✓ | ✓ | 96.86 ± 0.16 | 93.62 ± 0.15 |

| Method | Parameters (M) | FLOPs (G) | OA (%) on AID (20% Training Ratio) |
|---|---|---|---|
| ViT-Base | 85.67 | 16.86 | 91.16 ± 0.41 |
| VGG-VD-16 | 138.4 | 15.5 | 87.18 ± 0.29 |
| SwinT-Base | 86.71 | 15.17 | 92.22 ± 0.14 |
| FG-Swin KANsformer (Ours) | 69.37 | 14.98 | 96.86 ± 0.16 |
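The model-size figures are easier to compare as relative changes. The short script below does that arithmetic on the values copied from the table above. The dictionary layout and the helper name `compare` are illustrative only, and the script adds no measurement beyond what the table states.

```python
# Values copied from the model-size table: parameters in millions, FLOPs in G,
# and mean overall accuracy (%) on AID with a 20% training ratio.
MODELS = {
    "ViT-Base": (85.67, 16.86, 91.16),
    "VGG-VD-16": (138.4, 15.5, 87.18),
    "SwinT-Base": (86.71, 15.17, 92.22),
    "FG-Swin KANsformer": (69.37, 14.98, 96.86),
}


def compare(model, baseline):
    """Relative savings and accuracy gain of `model` with respect to `baseline`."""
    params, flops, oa = MODELS[model]
    base_params, base_flops, base_oa = MODELS[baseline]
    return {
        "param_reduction_pct": 100.0 * (base_params - params) / base_params,
        "flops_reduction_pct": 100.0 * (base_flops - flops) / base_flops,
        "oa_gain_points": oa - base_oa,
    }


for name in MODELS:
    if name != "FG-Swin KANsformer":
        stats = compare("FG-Swin KANsformer", name)
        print(name, {key: round(value, 2) for key, value in stats.items()})
```

Against SwinT-Base, the backbone it builds on, this works out to roughly 20% fewer parameters, about 1.3% fewer FLOPs, and a 4.64-point gain in mean OA.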

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhu, X.; Huang, J.; Wang, H. Frequency Domain and Gradient-Spatial Multi-Scale Swin KANsformer for Remote Sensing Scene Classification. Remote Sens. 2026, 18, 517. https://doi.org/10.3390/rs18030517
