Contrastive–Transfer-Synergized Dual-Stream Transformer for Hyperspectral Anomaly Detection

Highlights

- CTDST-HAD achieves an average AUC of 0.988 across nine real hyperspectral datasets, outperforming ten state-of-the-art methods on average; its AUC stays above 0.95 even in complex near-ground jungle scenes.

- Ablation experiments show that the contrastive–transfer two-stage pre-training, physics-based VAE augmentation, adaptive EWC, and focal loss each contribute 1.5–3.2 AUC points, so none of them can be removed without losing accuracy.

- The model does not need to be retrained for each hyperspectral image and can be applied directly for fast inference, providing a scalable paradigm for real-time, low-cost hyperspectral anomaly detection.

- The strategy of combining physics guidance with transfer learning can be extended to other remote sensing tasks, offering a general approach to intelligent interpretation when annotations are scarce.

Abstract

1. Introduction

2. Related Work

2.1. Vision Transformer

2.2. Autoencoder

3. Proposed Method

3.1. Separate Pre-Training

3.1.1. Spatial Stream

3.1.2. Spectral Stream

3.2. Synergistic Fine-Tuning

| Algorithm 1: Concatenate spatial–spectral features |
|---|
| Input: HSI cube H |
| Output: Fused feature map F |
| 1. Spatial stream |
| I_rgb ← PCA(H, 3) |
| P ← split_into_patches(I_rgb, size = n) |
| F_spa ← SpatialEncoder(P) |
| F_spa ← upsample(F_spa, (M, N)) |
| 2. Spectral stream |
| for i = 1 to M do |
| for j = 1 to N do |
| F_spe(i, j) ← SpectralEncoder(W) |
| end for |
| end for |
| 3. Fusion |
| F ← concat(F_spa, F_spe, axis = −1) |
| 4. return F |
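The data flow of Algorithm 1 can be sketched in NumPy as follows. This is only an illustration of the tensor shapes, not the authors' implementation: both encoders are replaced by random linear maps, the spectral encoder is assumed to take each pixel's full spectrum as its input W, upsampling is nearest-neighbour, and the names `fuse_features`, `patch_size`, `dim`, and `seed` are hypothetical.

```python
import numpy as np


def pca_to_rgb(cube):
    """Project an (M, N, B) cube onto its first three principal components."""
    m, n_cols, bands = cube.shape
    pixels = cube.reshape(-1, bands).astype(np.float64)
    pixels -= pixels.mean(axis=0)
    # Rows of vt are the principal axes of the centred pixel spectra.
    _, _, vt = np.linalg.svd(pixels, full_matrices=False)
    return (pixels @ vt[:3].T).reshape(m, n_cols, 3)


def split_into_patches(image, size):
    """Cut an (M, N, C) image into non-overlapping size x size patches."""
    rows, cols = image.shape[0] // size, image.shape[1] // size
    image = image[: rows * size, : cols * size]
    grid = image.reshape(rows, size, cols, size, -1).transpose(0, 2, 1, 3, 4)
    return grid.reshape(rows, cols, -1)  # one flattened vector per patch


def upsample(features, shape):
    """Nearest-neighbour upsampling of a (rows, cols, d) grid to (M, N, d)."""
    m, n_cols = shape
    r = np.arange(m) * features.shape[0] // m
    c = np.arange(n_cols) * features.shape[1] // n_cols
    return features[r][:, c]


def fuse_features(cube, patch_size=8, dim=16, seed=0):
    """Return an (M, N, 2 * dim) map of concatenated spatial and spectral features."""
    rng = np.random.default_rng(seed)
    m, n_cols, bands = cube.shape

    # 1. Spatial stream: PCA -> patches -> (stand-in) encoder -> upsample.
    patches = split_into_patches(pca_to_rgb(cube), patch_size)
    w_spa = rng.standard_normal((patches.shape[-1], dim))  # placeholder encoder
    f_spa = upsample(patches @ w_spa, (m, n_cols))

    # 2. Spectral stream: the double loop over (i, j) collapses into one matmul
    #    because the placeholder encoder is linear and acts per pixel.
    w_spe = rng.standard_normal((bands, dim))  # placeholder encoder
    f_spe = cube @ w_spe

    # 3. Fusion along the feature axis.
    return np.concatenate([f_spa, f_spe], axis=-1)
```

With a 64 × 48 × 20 cube and the default arguments, the fused map has shape (64, 48, 32): every pixel carries 16 spatial features shared with its patch plus 16 features of its own spectrum.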

4. Experiment and Analysis

4.1. Datasets and Parameter Settings

4.1.1. Universal Visual Dataset

4.1.2. Hyperspectral Dataset

4.1.3. Environment and Model Settings

4.2. Ablation Experiment

4.2.1. Quality of Stage Training

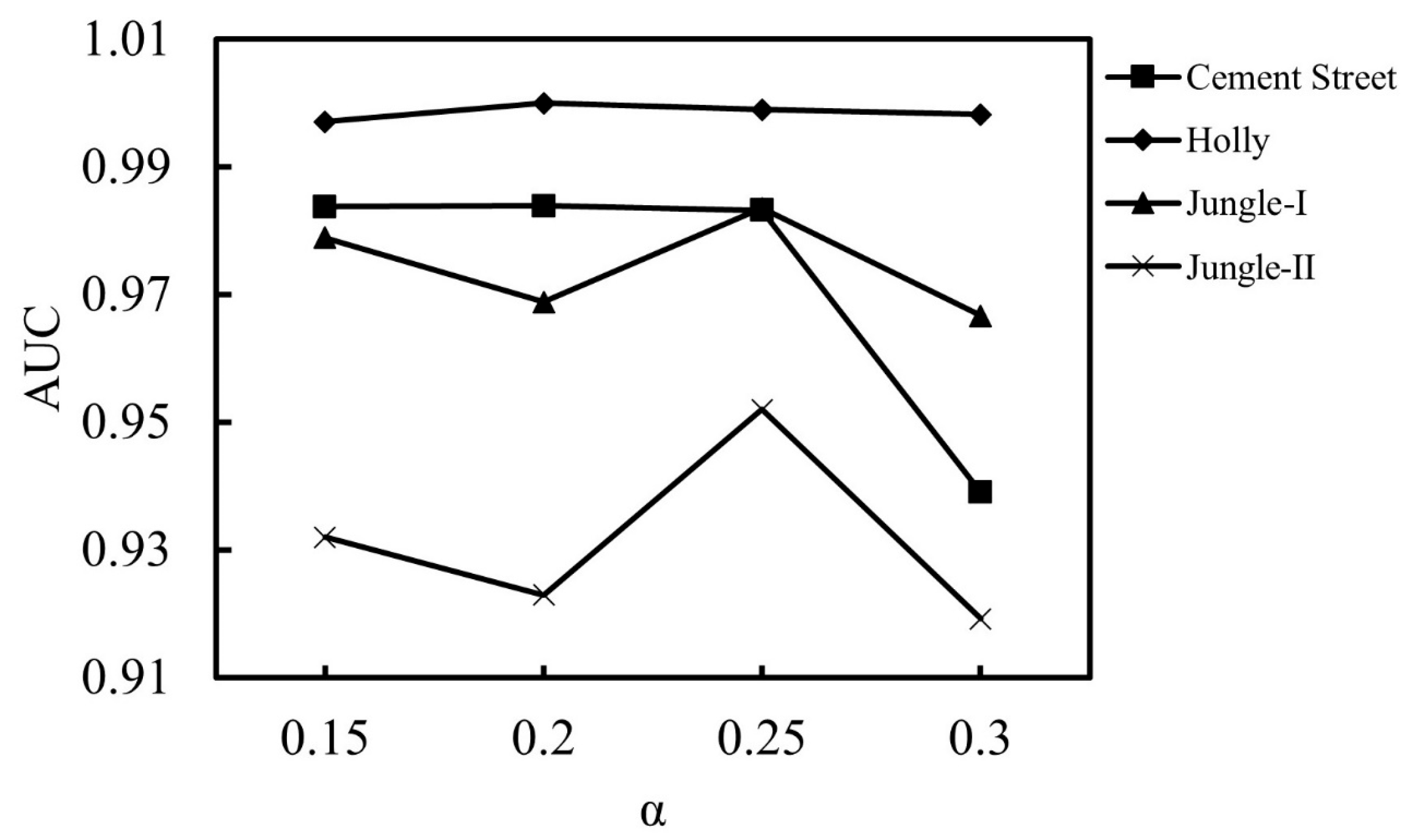

4.2.2. The Influence of Adaptive EWC Weights

4.2.3. The Impact of R-L-Enhanced VAE

4.2.4. The Impact of Focal Loss

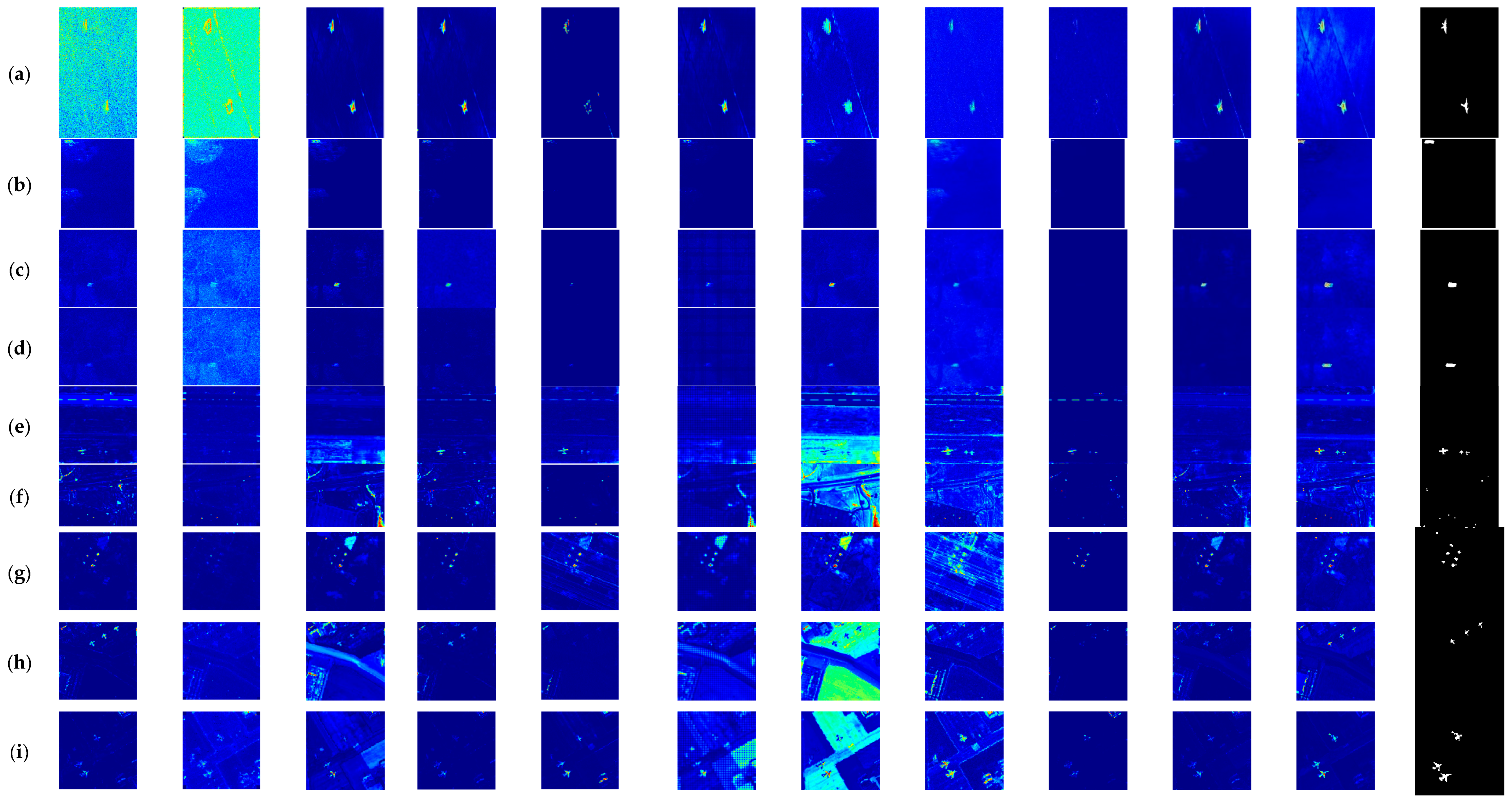

4.3. Comparative Experiment

4.4. Running Time and Model Complexity

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Liu, P.; Bu, Y.; Zhao, Y.; Kong, S.G. Progressive self-supervised framework for anomaly detection in hyperspectral images. Eng. Appl. Artif. Intell. 2025, 156, 111151.

- Li, X.; Shang, W. Hyperspectral anomaly detection based on spectral similarity variability feature. Sensors 2024, 24, 5664.

- Zhao, D.; Zhang, H.; Huang, K.; Zhu, X.; Arun, P.V.; Jiang, W.; Li, S.; Pei, X.; Zhou, H. SASU-Net: Hyperspectral video tracker based on spectral adaptive aggregation weighting and scale updating. Expert Syst. Appl. 2025, 272.

- Jiang, W.; Zhao, D.; Wang, C.; Yu, X.; Arun, P.V.; Asano, Y.; Xiang, P.; Zhou, H. Hyperspectral video object tracking with cross-modal spectral complementary and memory prompt network. Knowl.-Based Syst. 2025, 330, 114595.

- Zhao, D.; Wang, M.; Huang, K.; Zhong, W.; Arun, P.V.; Li, Y.; Asano, Y.; Wu, L.; Zhou, H. OCSCNet-Tracker: Hyperspectral video tracker based on octave convolution and spatial-spectral capsule network. Remote Sens. 2025, 17, 693.

- Zhao, X.; Liu, K.; Wang, X.; Zhao, S.; Gao, K.; Lin, H.; Zong, Y.; Li, W. Tensor adaptive reconstruction cascaded with global and local feature fusion for hyperspectral target detection. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2025, 18, 607–620.

- Gan, Y.; Li, X.; Wu, S.; Wang, M. MACNet: A multiscale attention-guided contextual network for hyperspectral anomaly detection. IEEE Geosci. Remote Sens. Lett. 2025, 22, 5508905.

- Xu, M.; Zhang, J.; Liu, S.; Sheng, H. Hyperspectral anomaly detection based on adaptive background dictionary construction and collaborative representation. Int. J. Remote Sens. 2024, 45, 3349–3369.

- Zhao, D.; Yan, W.; You, M.; Zhang, J.; Arun, P.V.; Jiao, C.; Wang, Q.; Zhou, H. Hyperspectral anomaly detection based on empirical mode decomposition and local weighted contrast. IEEE Sens. J. 2024, 24, 33847–33861.

- He, K.; Jiang, Z.; Liu, B.; Zhang, X. Fast hyperspectral image anomaly detection based on orthogonal projection. Laser Optoelectron. Prog. 2024, 61, 233–240.

- Zhu, X.; Zhang, H.; Hu, B.; Huang, K.; Arun, P.V.; Jia, X.; Zhao, D.; Wang, Q.; Zhou, H.; Yang, S. DSP-Net: A dynamic spectral-spatial joint perception network for hyperspectral target tracking. IEEE Geosci. Remote Sens. Lett. 2023, 20, 5510905.

- Zhao, D.; Zhong, W.; Ge, M.; Jiang, W.; Zhu, X.; Arun, P.V.; Zhou, H. SiamBSI: Hyperspectral video tracker based on band correlation grouping and spatial-spectral information interaction. Infrared Phys. Technol. 2025, 151, 106063.

- Fu, X.; Zhang, T.; Cheng, J.; Jia, S. MMR-HAD: Multiscale Mamba reconstruction network for hyperspectral anomaly detection. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5516914.

- Reed, I.; Yu, X. Adaptive multiple-band CFAR detection of an optical pattern with unknown spectral distribution. IEEE Trans. Acoust. Speech Signal Process. 1990, 38, 1760–1770.

- Kwon, H.; Der, S.Z.; Nasrabadi, N.M. Adaptive anomaly detection using subspace separation for hyperspectral imagery. Opt. Eng. 2003, 1, 3342–3351.

- Kwon, H.; Nasrabadi, N. Kernel RX-algorithm: A nonlinear anomaly detector for hyperspectral imagery. IEEE Trans. Geosci. Remote Sens. 2005, 43, 388–397.

- Banerjee, A.; Burlina, P.; Meth, R. Fast hyperspectral anomaly detection via SVDD. In Proceedings of the 2007 IEEE International Conference on Image Processing, San Antonio, TX, USA, 16 September–19 October 2007; IEEE: New York, NY, USA, 2007; pp. 101–104.

- Tu, B.; Yang, X.; Li, N.; Zhou, C.; He, D. Hyperspectral anomaly detection via density peak clustering. Pattern Recognit. Lett. 2020, 129, 144–149.

- Ling, Q.; Guo, Y.; Lin, Z.; An, W. A Constrained Sparse Representation Model for Hyperspectral Anomaly Detection. IEEE Trans. Geosci. Remote Sens. 2019, 57, 2358–2371.

- Li, W.; Du, Q. Collaborative Representation for Hyperspectral Anomaly Detection. IEEE Trans. Geosci. Remote Sens. 2015, 53, 1463–1474.

- Yang, Y.; Zhang, J.; Liu, D.; Wu, X. Low-rank and sparse matrix decomposition with background position estimation for hyperspectral anomaly detection. Infrared Phys. Technol. 2019, 96, 213–227.

- Chang, C.-I.; Cao, H.; Song, M. Orthogonal subspace projection target detector for hyperspectral anomaly detection. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2021, 14, 4915–4932.

- Yang, Y.; Zhang, J.; Song, S.; Zhang, C.; Liu, D. Low-rank and sparse matrix decomposition with orthogonal subspace projection-based background suppression for hyperspectral anomaly detection. IEEE Geosci. Remote Sens. Lett. 2020, 17, 1378–1382.

- Kang, X.; Zhang, X.; Li, S.; Li, K.; Li, J.; Benediktsson, J.A. Hyperspectral Anomaly Detection With Attribute and Edge-Preserving Filters. IEEE Trans. Geosci. Remote Sens. 2017, 55, 5600–5611.

- Hu, X.; Xie, C.; Fan, Z.; Duan, Q.; Zhang, D.; Jiang, L.; Wei, X.; Hong, D.; Li, G.; Zeng, X.; et al. Hyperspectral anomaly detection using deep learning: A review. Remote Sens. 2022, 14, 1973.

- Bati, E.; Çalışkan, A.; Koz, A.; Alatan, A.A. Hyperspectral anomaly detection method based on auto-encoder. Proc. SPIE 2015, 9643, 96430S.

- Xie, W.; Lei, J.; Liu, B.; Li, Y.; Jia, X. Spectral constraint adversarial autoencoders approach to feature representation in hyperspectral anomaly detection. Neural Netw. 2019, 119, 222–234.

- Ojha, N.; Sinha, I.K.; Singh, K.P. VAE-AD: Unsupervised variational autoencoder for anomaly detection in hyperspectral images. In Neural Information Processing; Communications in Computer and Information Science; Tanveer, M., Agarwal, S., Ozawa, S., Ekbal, A., Jatowt, A., Eds.; Springer: Singapore, 2023; Volume 1794, pp. 127–139.

- Wang, S.; Wang, X.; Zhang, L.; Zhong, Y. Auto-AD: Autonomous hyperspectral anomaly detection network based on fully convolutional autoencoder. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5503314.

- Fan, G.; Ma, Y.; Mei, X.; Fan, F.; Huang, J.; Ma, J. Hyperspectral Anomaly Detection With Robust Graph Autoencoders. IEEE Trans. Geosci. Remote Sens. 2022, 60, 1–14.

- Liu, H.; Su, X.; Shen, X.; Zhou, C.; Chen, X.; Zhou, X. BiGSeT: Binary mask-guided separation training for DNN-based hyperspectral anomaly detection. arXiv 2023.

- He, Z.; He, D.; Xiao, M.; Lou, A.; Lai, G. Convolutional Transformer-Inspired Autoencoder for Hyperspectral Anomaly Detection. IEEE Geosci. Remote Sens. Lett. 2023, 20, 1–5.

- Lian, J.; Wang, L.; Sun, H.; Huang, H. GT-HAD: Gated Transformer for Hyperspectral Anomaly Detection. IEEE Trans. Neural Netw. Learn. Syst. 2025, 36, 3631–3645.

- Wu, Z.; Wang, B. Transformer-Based Autoencoder Framework for Nonlinear Hyperspectral Anomaly Detection. IEEE Trans. Geosci. Remote Sens. 2024, 62, 1–15.

- Cheng, X.; Huo, Y.; Lin, S.; Dong, Y.; Zhao, S.; Zhang, M.; Wang, H. Deep feature aggregation network for hyperspectral anomaly detection. IEEE Trans. Instrum. Meas. 2024, 73, 5033016.

- Liu, H.; Su, X.; Shen, X.; Zhou, X. MSNet: Self-supervised multiscale network with enhanced separation training for hyperspectral anomaly detection. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5520313.

- Wang, D.; Zhuang, L.; Gao, L.; Sun, X.; Zhao, X. Global feature-injected blind-spot network for hyperspectral anomaly detection. IEEE Geosci. Remote Sens. Lett. 2024, 21, 5509305.

- Fu, X.; Jia, S.; Zhuang, L.; Xu, M.; Zhou, J.; Li, Q. Hyperspectral anomaly detection via deep plug-and-play denoising CNN regularization. IEEE Trans. Geosci. Remote Sens. 2021, 59, 9553–9568.

- Lin, S.; Zhang, M.; Cheng, X.; Shi, L.; Gamba, P.; Wang, H. Dynamic low-rank and sparse priors constrained deep autoencoders for hyperspectral anomaly detection. IEEE Trans. Instrum. Meas. 2024, 73, 2500518.

- Xiang, P.; Ali, S.; Zhang, J.; Jung, S.K.; Zhou, H. Pixel-associated autoencoder for hyperspectral anomaly detection. Int. J. Appl. Earth Obs. Geoinf. 2024, 129, 103816.

- Chen, W.; Zhi, X.; Jiang, S.; Huang, Y.; Han, Q.; Zhang, W. DWSDiff: Dual-window spectral diffusion for hyperspectral anomaly detection. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5504617.

- He, X.; An, W.; Wang, Y.; Ling, Q.; Li, M.; Lin, Z.; Zhou, S. CWIMamba: Cross-scale windowed integration state space model for hyperspectral anomaly detection. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5527820.

- Zhao, D.; Hu, B.; Jiang, W.; Zhong, W.; Arun, P.V.; Cheng, K.; Zhao, Z.; Zhou, H. Hyperspectral video tracker based on spectral difference matching reduction and deep spectral target perception features. Opt. Lasers Eng. 2025, 194, 109124.

- Zhao, D.; Zhang, H.; Arun, P.V.; Jiao, C.; Zhou, H.; Xiang, P.; Cheng, K. SiamSTU: Hyperspectral video tracker based on spectral spatial angle mapping enhancement and state aware template update. Infrared Phys. Technol. 2025, 150, 105919.

- Li, L.; Wu, Z.; Wang, B. Hyperspectral anomaly detection via merging total variation into low-rank representation. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 14894–14907.

- Xiang, P.; Zhang, J.; Qi, S.; Jung, S.K.; Zhou, H.; Zhao, D. Hyperspectral anomaly detection using Taylor expansion and weighted irregular block filter. Infrared Phys. Technol. 2025, 150, 105942.

- Wu, Y.; Li, Z.; Zhao, B.; Song, Y.; Zhang, B. Transfer learning of spatial features from high-resolution RGB images for large-scale and robust hyperspectral remote sensing target detection. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5505732.

- Zou, Q.; Zhou, J.; Ma, Y.; Luo, M. Random projection-based sub-pixel target detection for hyperspectral image with t-distribution background. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5538218.

- Sun, X.; Zhuang, L.; Gao, L.; Gao, H.; Sun, X.; Zhang, B. A parameter-free topological disassembly-guided method for hyperspectral target detection. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2025, 18, 17875–17888.

- Li, C.; Wang, R.; Chen, Z.; Gao, H.; Xu, S. Transformer-inspired stacked-GAN for hyperspectral target detection. Int. J. Remote Sens. 2024, 45, 4961–4982.

- Jiang, W.; Zhong, W.; Arun, P.V.; Xiang, P.; Zhao, D. SRTE-Net: Spectral-spatial similarity reduction and reorganized texture encoding for hyperspectral video tracking. IEEE Signal Process. Lett. 2025, 32, 3390–3394.

- Liu, Y.; Jiang, K.; Xie, W.; Zhang, J.; Li, Y.; Fang, L. Hyperspectral anomaly detection with self-supervised anomaly prior. Neural Netw. 2025, 187, 107294.

- Zhao, D.; Zhou, L.; Li, Y.; He, W.; Arun, P.V.; Zhu, X.; Hu, J. Visibility estimation via near-infrared bispectral real-time imaging in bad weather. Infrared Phys. Technol. 2024, 136, 105008.

- Wang, D.; Ren, L.; Sun, X.; Gao, L.; Chanussot, J. Nonlocal and local feature-coupled self-supervised network for hyperspectral anomaly detection. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2025, 18, 6981–6993.

- Zhao, D.; Zhu, X.; Zhang, Z.; Arun, P.V.; Cao, J.; Wang, Q.; Zhou, H.; Jiang, H.; Hu, J.; Qian, K. Hyperspectral video target tracking based on pixel-wise spectral matching reduction and deep spectral cascading texture features. Signal Process. 2023, 209, 109033.

- Sun, X.; Zhang, Y.; Dong, Y.; Du, B. Contrastive self-supervised learning-based background reconstruction for hyperspectral anomaly detection. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5504312.

- Wang, Z.; Ma, D.; Yue, G.; Li, B.; Cong, R.; Wu, Z. Self-supervised hyperspectral anomaly detection based on finite spatialwise attention. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5502918.

- Li, M.; Fu, Y.; Zhang, T.; Wen, G. Supervise-assisted self-supervised deep-learning method for hyperspectral image restoration. IEEE Trans. Neural Netw. Learn. Syst. 2025, 36, 7331–7344.

- Gao, L.; Wang, D.; Zhuang, L.; Sun, X.; Huang, M.; Plaza, A. BS3LNet: A new blind-spot self-supervised learning network for hyperspectral anomaly detection. IEEE Trans. Geosci. Remote Sens. 2023, 61, 5504218.

- Hu, M.; Wu, C.; Zhang, L. HyperNet: Self-supervised hyperspectral spatial-spectral feature understanding network for hyperspectral change detection. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5543017.

- Li, W.; Wu, G.; Du, Q. Transferred Deep Learning for Anomaly Detection in Hyperspectral Imagery. IEEE Geosci. Remote Sens. Lett. 2017, 14, 597–601.

- Guan, P.; Lam, E.Y. Progressive self-supervised pretraining for hyperspectral image classification. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5517713.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is all you need. arXiv 2017.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.H.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv 2020.

- Zhuang, H.; Yan, Y.; He, R.; Zeng, Z. Class incremental learning with analytic learning for hyperspectral image classification. J. Frankl. Inst. 2024, 361, 107285.

| Spatial encoder parameter | Value | Spectral encoder parameter | Value |
|---|---|---|---|
| image_size | 224 | spectral_band | 89 |
| patch_size | 32 | window_size | 40 |
| feature_dim | 128 | feature_dim | 128 |
| depth | 6 | depth | 5 |
| dropout | 0.1 | dropout | 0.1 |
| emb_dropout | 0.1 | emb_dropout | 0.1 |
| head_dim | 64 | head_dim | 16 |
| heads | 16 | heads | 6 |
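The encoder settings above can be collected into a small configuration object, which also makes a few derived quantities explicit. This is a sketch under two assumptions that the table itself does not state: that the spatial encoder tiles the input into non-overlapping square patches, and that the attention projection width equals `heads × head_dim`, as in common ViT implementations. The class and property names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EncoderConfig:
    """Transformer encoder hyperparameters taken from the settings table."""

    feature_dim: int
    depth: int
    heads: int
    head_dim: int
    dropout: float = 0.1
    emb_dropout: float = 0.1

    @property
    def attention_inner_dim(self) -> int:
        # Assumed width of the concatenated attention heads.
        return self.heads * self.head_dim


spatial = EncoderConfig(feature_dim=128, depth=6, heads=16, head_dim=64)
spectral = EncoderConfig(feature_dim=128, depth=5, heads=6, head_dim=16)

IMAGE_SIZE, PATCH_SIZE = 224, 32
# Assumed non-overlapping tiling: a 7 x 7 grid of patches per image.
num_spatial_tokens = (IMAGE_SIZE // PATCH_SIZE) ** 2

print(num_spatial_tokens, spatial.attention_inner_dim, spectral.attention_inner_dim)
```

Under these assumptions the spatial stream sees 49 patch tokens per image, and both streams project to the same 128-dimensional feature space before fusion.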

| Module | Layer | Dimensions |
|---|---|---|
| Encoder | FC + ReLU | [89,128] |
| Encoder | FC + ReLU | [128,64] |
| Encoder | FC + ReLU | [64,32] |
| Encoder | FC_mean, FC_var | [32,3], [32,3] |
| Encoder | Reparametrize | [6,3] |
| R-L-Enhanced Decoder | z → K | [3,2] |
| R-L-Enhanced Decoder | K⊕f | [2,89] |
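The encoder half of this table can be sketched in NumPy to make the layer shapes and the reparameterization step concrete. The sketch assumes that `FC_var` outputs a log-variance (a common convention, not confirmed by the table), uses random untrained weights, and omits the R-L-enhanced decoder because its physical model is not specified here. All function names are hypothetical.

```python
import numpy as np

HIDDEN_SIZES = [89, 128, 64, 32]  # FC + ReLU stack from the table
LATENT_DIM = 3


def _dense(rng, n_in, n_out):
    """Random (untrained) weights and zero bias for one fully connected layer."""
    return rng.standard_normal((n_in, n_out)) * np.sqrt(2.0 / n_in), np.zeros(n_out)


def init_encoder(rng):
    hidden = [_dense(rng, a, b) for a, b in zip(HIDDEN_SIZES[:-1], HIDDEN_SIZES[1:])]
    return {
        "hidden": hidden,
        "mean": _dense(rng, HIDDEN_SIZES[-1], LATENT_DIM),
        "log_var": _dense(rng, HIDDEN_SIZES[-1], LATENT_DIM),
    }


def encode(params, spectra):
    """Map (n, 89) spectra to the mean and log-variance of a 3-D latent."""
    h = spectra
    for w, b in params["hidden"]:
        h = np.maximum(h @ w + b, 0.0)  # FC + ReLU
    w_mu, b_mu = params["mean"]
    w_lv, b_lv = params["log_var"]
    return h @ w_mu + b_mu, h @ w_lv + b_lv


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps
```

The `[6,3]` entry in the table corresponds to this last step: three means and three variance terms go in, and one three-dimensional latent sample comes out.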

| Parameter | Value |
|---|---|
| Dimension | 20.3 cm (W) × 14.0 cm (H) × 41.7 cm (D) |
| Wavelength range | 450 nm to 800 nm |
| Bandwidth (typical) | 1.5 nm (at 450 nm), 3.5 nm (at 800 nm) |
| Accuracy | ±1 nm (estimated temperature variance ±5 °C) |
| Repeatability | ±0.5 nm |
| Out-of-band rejection | 1:10⁻³ |
| Numerical aperture | 0.05 |
| Imaging (entrance aperture to CCD image plane) | 1:1 image |
| Switching speed | <100 μs |
| Operating temperature range | 10 °C to 35 °C |
| Camera type | Electron-multiplying charge-coupled device |
| Camera cooling | Internal thermoelectric cooler |
| Minimum camera cooling temperature (air cooling at 25 °C ambient) | −60 °C |
| Camera controller card | PC plug-in card |
| Camera cooler power input | 7.5 VDC |
| AC input (supplied cooler power module) | 100 VAC to 240 VAC, 50–60 Hz |
| Typical case operating temperature rise (above ambient) | 5 °C |

| Regularization | Cement Street | Holly | Jungle-I | Jungle-II |
|---|---|---|---|---|
| Adaptive EWC | 0.9832 | 0.999 | 0.9835 | 0.952 |
| EWC | 0.9612 | 0.9533 | 0.9701 | 0.9299 |
| No constraints | 0.9574 | 0.9424 | 0.9669 | 0.9208 |
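For context, the plain EWC baseline in this comparison adds a quadratic penalty that anchors fine-tuned weights to their pre-trained values, weighted by a diagonal Fisher information estimate. The sketch below shows only that standard penalty; the adaptive weighting used by CTDST-HAD is not reproduced because its rule is not given here, and the function names and the regularization strength `lam` are illustrative assumptions.

```python
import numpy as np


def diagonal_fisher(per_sample_grads):
    """Estimate the diagonal Fisher information as the mean squared gradient.

    per_sample_grads has shape (n_samples, n_params) and is assumed to hold
    gradients of the log-likelihood evaluated at the pre-trained weights.
    """
    return np.mean(np.square(per_sample_grads), axis=0)


def ewc_penalty(theta, theta_star, fisher, lam):
    """Standard EWC term: (lam / 2) * sum_i F_i * (theta_i - theta*_i)^2."""
    return 0.5 * lam * float(np.sum(fisher * (theta - theta_star) ** 2))


# Toy usage with two parameters and two samples.
grads = np.array([[1.0, 2.0], [3.0, 0.0]])
fisher = diagonal_fisher(grads)
penalty = ewc_penalty(np.array([1.0, 1.0]), np.zeros(2), fisher, lam=2.0)
```

During fine-tuning this penalty would be added to the task loss, so parameters with large Fisher values are kept close to their pre-trained state while the rest remain free to adapt.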

| Augmentation | Cement Street | Holly | Jungle-I | Jungle-II | Average |
|---|---|---|---|---|---|
| Random channel masking | 0.9632 | 0.9797 | 0.9468 | 0.9296 | 0.9548 |
| Spectral Gaussian noise | 0.9744 | 0.9832 | 0.9587 | 0.9398 | 0.9640 |
| Random intensity scaling | 0.9623 | 0.9713 | 0.9479 | 0.9299 | 0.9529 |
| Random wavelength shift | 0.9583 | 0.9788 | 0.9435 | 0.9198 | 0.9501 |
| R-L-enhanced VAE | 0.9832 | 0.999 | 0.9835 | 0.952 | 0.9794 |
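The four generic augmentations that the R-L-enhanced VAE is compared against can be sketched as simple operations on a batch of spectra of shape (n, bands). The exact settings used in the experiments are not given here, so every default below (`mask_ratio`, `sigma`, the scaling range, `max_shift`) is an illustrative assumption, as are the function names.

```python
import numpy as np


def random_channel_masking(spectra, rng, mask_ratio=0.1):
    """Zero out a random subset of bands (the same bands for every spectrum)."""
    out = spectra.copy()
    n_masked = int(round(mask_ratio * spectra.shape[1]))
    bands = rng.choice(spectra.shape[1], size=n_masked, replace=False)
    out[:, bands] = 0.0
    return out


def spectral_gaussian_noise(spectra, rng, sigma=0.01):
    """Add independent zero-mean Gaussian noise to every band."""
    return spectra + rng.normal(0.0, sigma, size=spectra.shape)


def random_intensity_scaling(spectra, rng, low=0.9, high=1.1):
    """Multiply each spectrum by one random scalar."""
    scale = rng.uniform(low, high, size=(spectra.shape[0], 1))
    return spectra * scale


def wavelength_shift(spectra, shift):
    """Resample each spectrum `shift` bands further along the band axis.

    np.interp holds the edge values, so bands shifted past either end are
    filled with the first or last sample rather than wrapping around.
    """
    idx = np.arange(spectra.shape[1], dtype=float)
    return np.stack([np.interp(idx + shift, idx, row) for row in spectra])


def random_wavelength_shift(spectra, rng, max_shift=2.0):
    """Apply one random (possibly fractional) band shift to the whole batch."""
    return wavelength_shift(spectra, rng.uniform(-max_shift, max_shift))
```

All four transforms are label-free perturbations of the measured spectrum, which is the point of contrast with a generative augmentation that models how the spectrum is formed.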

| Loss | Cement Street | Holly | Jungle-I | Jungle-II | Average |
|---|---|---|---|---|---|
| Focal loss | 0.9832 | 0.999 | 0.9835 | 0.952 | 0.9794 |
| BCE loss | 0.9711 | 0.9981 | 0.9633 | 0.9213 | 0.9635 |
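The difference between the two losses compared above can be seen in a few lines. The sketch uses the widely cited binary focal loss form, −α_t (1 − p_t)^γ log p_t, with α = 0.25 and γ = 2 as placeholder defaults; the values actually used for CTDST-HAD are not stated here, so treat them as assumptions.

```python
import numpy as np


def bce_loss(p, y, eps=1e-7):
    """Mean binary cross-entropy for predicted probabilities p and labels y."""
    p = np.clip(p, eps, 1.0 - eps)
    return float(np.mean(-(y * np.log(p) + (1 - y) * np.log(1.0 - p))))


def focal_loss(p, y, alpha=0.25, gamma=2.0, eps=1e-7):
    """Mean binary focal loss: -alpha_t * (1 - p_t)^gamma * log(p_t)."""
    p = np.clip(p, eps, 1.0 - eps)
    p_t = np.where(y == 1, p, 1.0 - p)            # probability of the true class
    alpha_t = np.where(y == 1, alpha, 1.0 - alpha)
    return float(np.mean(-alpha_t * (1.0 - p_t) ** gamma * np.log(p_t)))


# A confidently correct pixel is down-weighted far more than a misclassified one.
easy, hard, anomaly = np.array([0.9]), np.array([0.1]), np.array([1])
ratio_easy = focal_loss(easy, anomaly) / bce_loss(easy, anomaly)
ratio_hard = focal_loss(hard, anomaly) / bce_loss(hard, anomaly)
```

Because anomalies occupy very few pixels, this down-weighting of easy background pixels is the usual motivation for preferring focal loss over BCE in such imbalanced settings.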

| Method | Parameter Settings | Source |
|---|---|---|
| RX [13] | - | - |
| CRD [19] | Outer window size: 11; inner window size: 9; regularization coefficient: 0.1 | Original paper |
| RGAE [29] | Regularization parameter: 0.01; number of superpixels: 150; number of hidden layer nodes: 20 | Author’s open code |
| Auto-AD [28] | Number of channels: 5; loss change threshold: 1.5 × 10⁻⁵ | Author’s open code |
| CTA [31] | Learning rate: 1 × 10⁻⁴; total training epochs: 100 | Author’s open code |
| GT-HAD [32] | Embedding dimension: 64; patch size: 3 | Author’s open code |
| TAEF [33] | Low-pass filter bandwidth: 7; total training epochs: 20; learning rate: 5 × 10⁻³ | Original paper |
| DFAN-HAD [34] | Latent layer dimension: 64; learning rate: 1 × 10⁻⁴ | Author’s open code |
| MSNet [35] | Number of selected bands: 64; regularization coefficient in loss: 1 × 10⁻³ | Author’s open code |
| PUNNet [36] | PD stride factor: 2; number of NAFNet blocks: 4; dilation factor: 2; loss function: L1 loss | Author’s open code |
| CTDST-HAD | Regularization: adaptive EWC; loss function: focal loss | - |

| Dataset | RX [13] | CRD [19] | RGAE [29] | Auto-AD [28] | CTA [31] | GT-HAD [32] | TAEF [33] | DFAN-HAD [34] | MSNet [35] | PUNNet [36] | CTDST-HAD |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Cement Street | 0.7505 | 0.7693 | 0.9893 | 0.9887 | 0.9506 | 0.9895 | 0.9845 | 0.8574 | 0.5734 | 0.9889 | 0.9832 |
| Holly | 0.9978 | 0.9947 | 0.9993 | 0.9935 | 0.9454 | 0.9988 | 0.9980 | 0.9975 | 0.7289 | 0.9996 | 0.999 |
| Jungle-I | 0.8693 | 0.8622 | 0.9072 | 0.8785 | 0.6553 | 0.9104 | 0.9015 | 0.9640 | 0.7704 | 0.9194 | 0.9835 |
| Jungle-II | 0.9214 | 0.8753 | 0.9134 | 0.9006 | 0.7596 | 0.8824 | 0.9074 | 0.9141 | 0.7874 | 0.9103 | 0.952 |
| Gulfport | 0.9521 | 0.9593 | 0.7484 | 0.9429 | 0.9890 | 0.6162 | 0.5724 | 0.9943 | 0.9875 | 0.9209 | 0.9983 |
| HYDICE | 0.9855 | 0.8970 | 0.7633 | 0.8922 | 0.9946 | 0.6224 | 0.6855 | 0.9934 | 0.9809 | 0.8982 | 0.9921 |
| Texas Coast | 0.9906 | 0.9948 | 0.9822 | 0.9887 | 0.9891 | 0.9353 | 0.9850 | 0.9904 | 0.9977 | 0.9856 | 0.9921 |
| San Diego-I | 0.9118 | 0.9532 | 0.9891 | 0.9949 | 0.9657 | 0.6012 | 0.3234 | 0.9583 | 0.9623 | 0.9833 | 0.9963 |
| San Diego-II | 0.9404 | 0.9467 | 0.9918 | 0.9789 | 0.9893 | 0.7813 | 0.7120 | 0.9877 | 0.9811 | 0.9760 | 0.9993 |
| Average | 0.9244 | 0.9169 | 0.9204 | 0.9510 | 0.9154 | 0.8153 | 0.7855 | 0.9619 | 0.8633 | 0.9536 | 0.9884 |
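As a reminder of how a single AUC entry is obtained, the sketch below computes the area under the ROC curve from per-pixel anomaly scores and a binary ground-truth mask using the rank (Mann–Whitney) formulation, with tied scores given average ranks. It also recomputes the CTDST-HAD average from the nine per-dataset values listed in the table. The function name and the toy scores are illustrative, not taken from the experiments.

```python
import numpy as np


def roc_auc(scores, labels):
    """Area under the ROC curve via the Mann-Whitney U statistic."""
    scores = np.asarray(scores, dtype=float).ravel()
    labels = np.asarray(labels).ravel().astype(bool)
    # Average 1-based rank for each distinct score value (handles ties).
    _, inverse, counts = np.unique(scores, return_inverse=True, return_counts=True)
    avg_rank = np.cumsum(counts) - (counts - 1) / 2.0
    ranks = avg_rank[inverse]
    n_pos = int(labels.sum())
    n_neg = labels.size - n_pos
    u_stat = ranks[labels].sum() - n_pos * (n_pos + 1) / 2.0
    return float(u_stat / (n_pos * n_neg))


# Toy example: four pixels, two of them anomalous.
toy_auc = roc_auc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1])

# CTDST-HAD column of the table above, in row order.
ctdst_auc = [0.9832, 0.999, 0.9835, 0.952, 0.9983, 0.9921, 0.9921, 0.9963, 0.9993]
ctdst_mean = float(np.mean(ctdst_auc))
```

In the toy example one of the four anomaly/background pairs is ranked in the wrong order, so the AUC is 0.75; the column mean rounds to the 0.9884 reported in the Average row.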

| Dataset | RX [13] | CRD [19] | RGAE [29] | Auto-AD [28] | CTA [31] | GT-HAD [32] | TAEF [33] | DFAN-HAD [34] | MSNet [35] | PUNNet [36] | CTDST-HAD |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Cement Street | 0.4759 | 0.5441 | 0.3643 | 0.3729 | 0.2272 | 0.3354 | 0.3446 | 0.2267 | 0.0685 | 0.3299 | 0.5279 |
| Holly | 0.3477 | 0.4156 | 0.3256 | 0.1801 | 0.0957 | 0.1995 | 0.4006 | 0.3619 | 0.0752 | 0.3031 | 0.4902 |
| Jungle-I | 0.1484 | 0.2773 | 0.2542 | 0.2419 | 0.0261 | 0.1097 | 0.3232 | 0.2099 | 0.0051 | 0.2506 | 0.3973 |
| Jungle-II | 0.1111 | 0.2797 | 0.0821 | 0.0836 | 0.0273 | 0.0497 | 0.1255 | 0.2358 | 0.0051 | 0.0313 | 0.3509 |
| Gulfport | 0.0727 | 0.0887 | 0.0988 | 0.2456 | 0.3333 | 0.0744 | 0.2242 | 0.5121 | 0.2328 | 0.1177 | 0.5374 |
| HYDICE | 0.2330 | 0.2975 | 0.1118 | 0.2686 | 0.2965 | 0.0426 | 0.3148 | 0.6304 | 0.1186 | 0.3033 | 0.6871 |
| Texas Coast | 0.3117 | 0.1293 | 0.3764 | 0.3372 | 0.4608 | 0.2149 | 0.5359 | 0.5484 | 0.3176 | 0.3682 | 0.4130 |
| San Diego-I | 0.0789 | 0.1153 | 0.2146 | 0.3821 | 0.0456 | 0.1125 | 0.1825 | 0.2261 | 0.0343 | 0.1367 | 0.4512 |
| San Diego-II | 0.1768 | 0.2151 | 0.1661 | 0.1281 | 0.2168 | 0.2350 | 0.3631 | 0.5167 | 0.0678 | 0.1184 | 0.3454 |
| Average | 0.2174 | 0.2625 | 0.2215 | 0.2489 | 0.1922 | 0.1526 | 0.3127 | 0.3853 | 0.1028 | 0.2177 | 0.4667 |

| Dataset | RX [13] | CRD [19] | RGAE [29] | Auto-AD [28] | CTA [31] | GT-HAD [32] | TAEF [33] | DFAN-HAD [34] | MSNet [35] | PUNNet [36] | CTDST-HAD |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Cement Street | 0.3661 | 0.4532 | 0.0152 | 0.0166 | 0.0065 | 0.0169 | 0.0765 | 0.1217 | 0.0514 | 0.0371 | 0.1489 |
| Holly | 0.0472 | 0.1684 | 0.0101 | 0.0065 | 0.0051 | 0.0059 | 0.0280 | 0.0855 | 0.0051 | 0.0083 | 0.0622 |
| Jungle-I | 0.0531 | 0.1985 | 0.0113 | 0.0585 | 0.0051 | 0.0265 | 0.0464 | 0.0945 | 0.0051 | 0.0099 | 0.0712 |
| Jungle-II | 0.0429 | 0.2016 | 0.0075 | 0.0099 | 0.0051 | 0.0190 | 0.0381 | 0.1133 | 0.0051 | 0.0057 | 0.0794 |
| Gulfport | 0.0247 | 0.0401 | 0.0761 | 0.0409 | 0.0324 | 0.0683 | 0.2101 | 0.1036 | 0.0096 | 0.0383 | 0.0564 |
| HYDICE | 0.0351 | 0.0270 | 0.0611 | 0.0299 | 0.0055 | 0.0462 | 0.2197 | 0.1262 | 0.0069 | 0.0402 | 0.0692 |
| Texas Coast | 0.0554 | 0.0118 | 0.0197 | 0.0101 | 0.0416 | 0.0277 | 0.0719 | 0.1512 | 0.0052 | 0.0159 | 0.0393 |
| San Diego-I | 0.0405 | 0.0584 | 0.0971 | 0.0145 | 0.0144 | 0.0909 | 0.3145 | 0.0646 | 0.0069 | 0.0194 | 0.0409 |
| San Diego-II | 0.0589 | 0.0808 | 0.0676 | 0.0093 | 0.0120 | 0.1195 | 0.2053 | 0.0878 | 0.0070 | 0.0097 | 0.0141 |
| Minimum | 0.0247 | 0.0118 | 0.0075 | 0.0065 | 0.0051 | 0.0059 | 0.0280 | 0.0646 | 0.0051 | 0.0057 | 0.0141 |

| Dataset | RX [13] | CRD [19] | RGAE [29] | Auto-AD [28] | CTA [31] | GT-HAD [32] | TAEF [33] | DFAN-HAD [34] | MSNet [35] | PUNNet [36] | CTDST-HAD |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Cement Street | 0.28 | 100.34 | 484.3 | 166.22 | 12.71 | 3.37 | 71.37 | 31.53 | 79.94 | 181.82 | 6.67 |
| Holly | 0.56 | 192.13 | 1184.38 | 139.24 | 24.53 | 6.7 | 129.25 | 41.32 | 85.11 | 182.89 | 8.98 |
| Jungle-I | 1.85 | 628.59 | 7870.62 | 397.00 | 83.33 | 21.5 | 385.57 | 1851.31 | 357.04 | 586.82 | 24.36 |
| Gulfport | 0.06 | 17.45 | 63.13 | 68.15 | 1.79 | 0.30 | 7.30 | 36.12 | 20.05 | 89.40 | 2.20 |
| HYDICE | 0.04 | 13.81 | 48.03 | 43.74 | 1.20 | 0.20 | 6.39 | 29.29 | 27.06 | 73.00 | 2.91 |
| Texas Coast | 0.05 | 17.41 | 63.90 | 57.10 | 1.91 | 0.30 | 7.09 | 45.83 | 20.46 | 61.02 | 3.31 |
| San Diego-I | 0.07 | 23.23 | 74.33 | 47.26 | 2.66 | 0.37 | 7.56 | 71.63 | 21.12 | 98.75 | 3.80 |
| San Diego-II | 0.05 | 17.33 | 62.21 | 43.83 | 1.74 | 0.30 | 7.31 | 45.52 | 19.63 | 85.14 | 3.26 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Deng, L.; Ying, J.; Wang, Q.; Cheng, Y.; Zhou, B. Contrastive–Transfer-Synergized Dual-Stream Transformer for Hyperspectral Anomaly Detection. Remote Sens. 2026, 18, 516. https://doi.org/10.3390/rs18030516
