Compilation of a Nationwide River Image Dataset for Identifying River Channels and River Rapids via Deep Learning

Highlights

- A new dataset of 281,024 river images from across the United States, with metadata and labeled subsets to support hydrologic research, is made publicly available.

- Segmentation and classification models show strong performance in detecting rivers and rapids, which could enable expansion of existing inventories of these key geomorphic features.

- The dataset enables new machine learning approaches for characterizing rivers via remote sensing, including advanced river segmentation and detection of rapids.

- The framework supports a range of hydrologic applications, including discharge estimation, habitat assessment, resource management, and recreation planning.

Abstract

1. Introduction

2. Data Construction

2.1. Google Maps Application Programming Interface (API)

2.2. Metadata

2.3. Annotation Process

3. Experiments

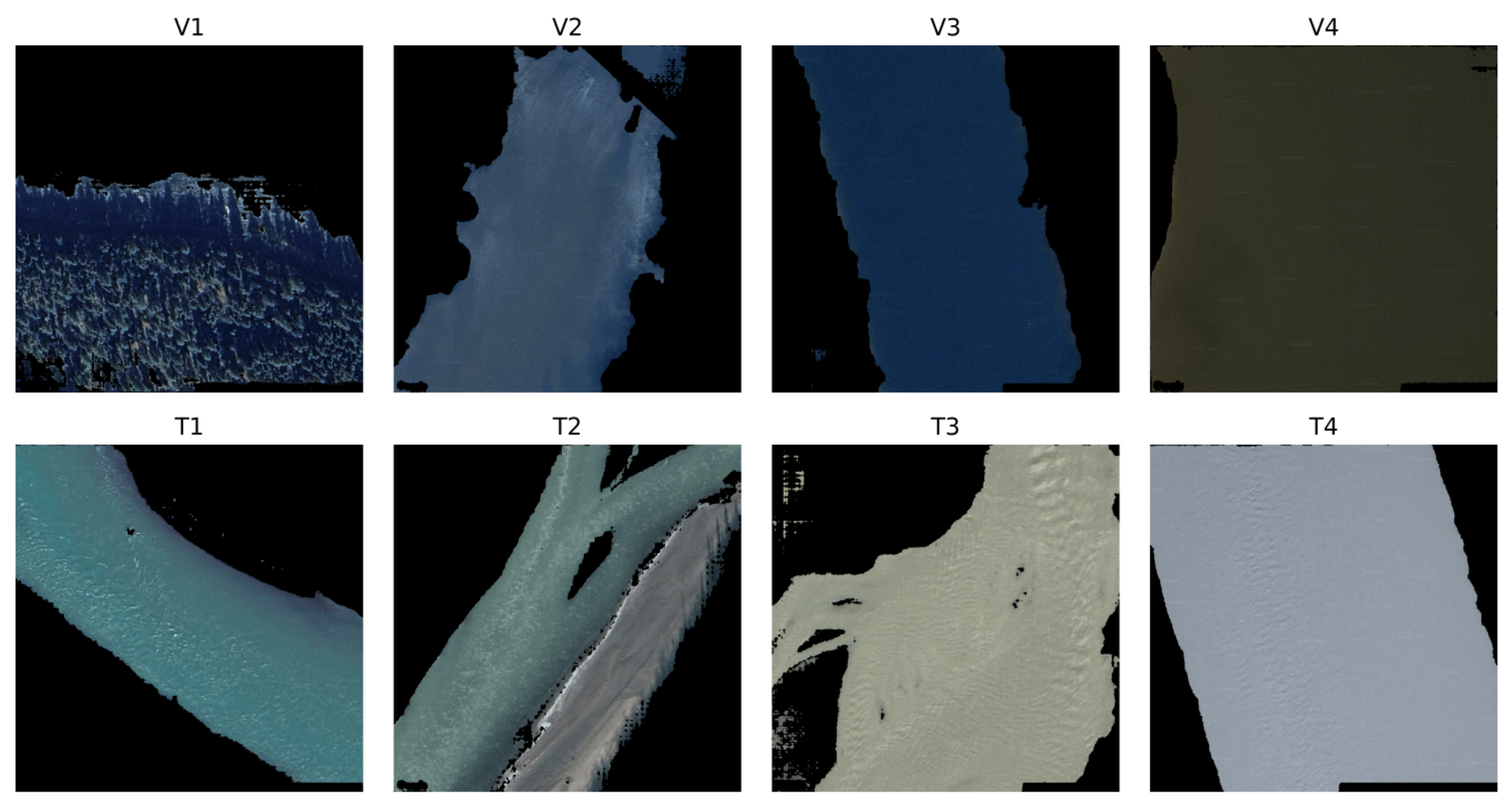

3.1. Segmentation Model

3.1.1. Model and Losses

3.1.2. Implementation Details

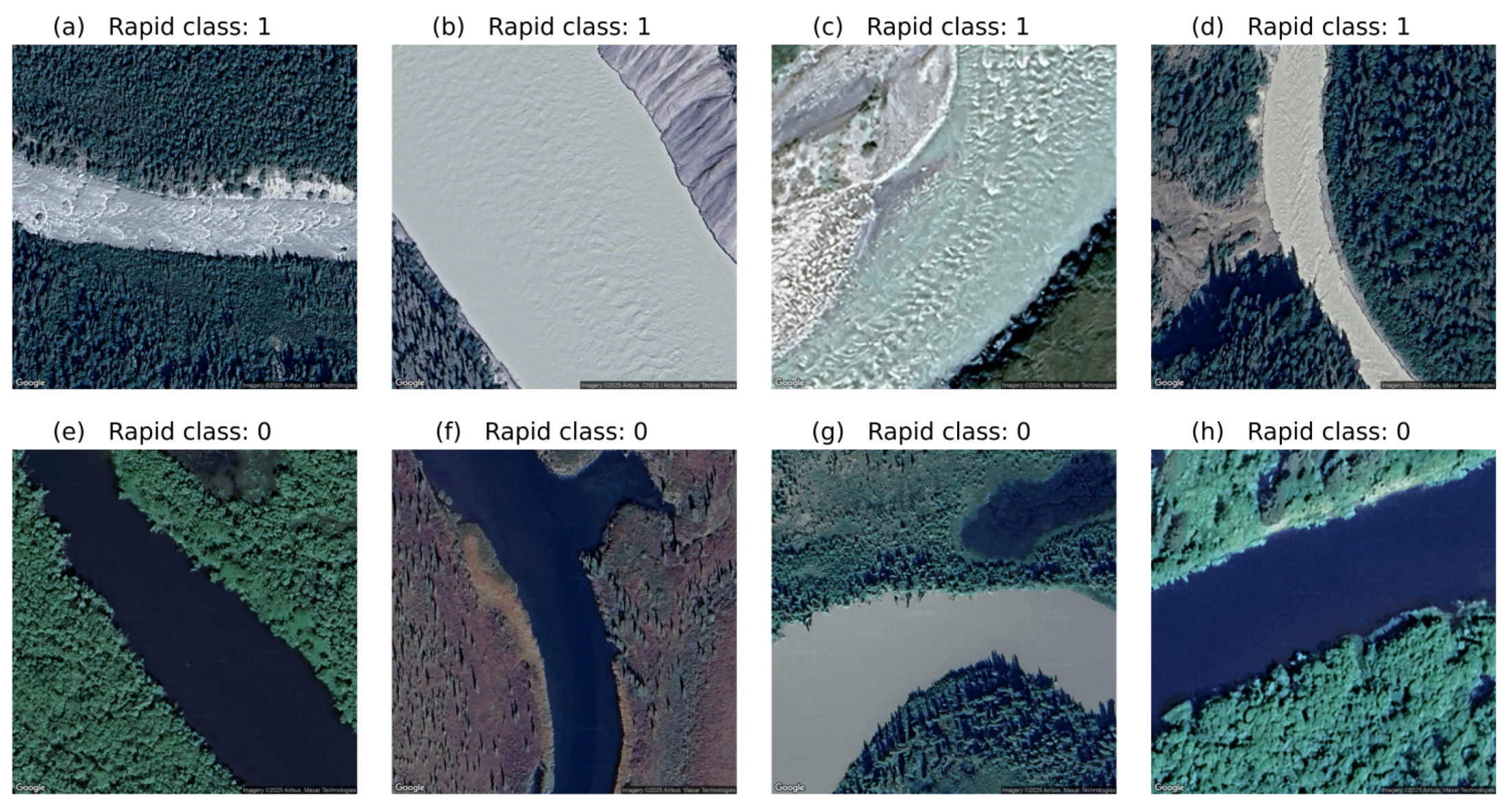

3.2. Classification of River Rapids

3.2.1. Data Preprocessing

1. Resize: Each image is rescaled to 480 × 480 pixels.

2. Data Augmentation (training only): Random vertical and horizontal flips and color jitter (brightness, contrast, saturation).

3. Normalization: RGB (red-green-blue) channels are standardized using the default ResNet means and standard deviations.
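The flip and normalization steps above can be illustrated with a minimal, dependency-free sketch. It is not the authors' pipeline: resizing and color jitter are omitted, the flip probability `p=0.5` is an assumption, and the channel statistics are the ImageNet values commonly paired with pretrained ResNet weights, assumed here to be the "default ResNet" values.

```python
import random

# ImageNet channel statistics commonly used with pretrained ResNet weights
# (assumed to be the "default ResNet" means and standard deviations).
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def hflip(image):
    """Mirror an image (list of rows of RGB tuples) left to right."""
    return [row[::-1] for row in image]


def vflip(image):
    """Mirror an image top to bottom."""
    return image[::-1]


def augment(image, rng, p=0.5):
    """Apply random flips; intended for training images only."""
    if rng.random() < p:
        image = hflip(image)
    if rng.random() < p:
        image = vflip(image)
    return image


def normalize(image):
    """Scale 8-bit RGB values to [0, 1], then standardize each channel."""
    return [
        [
            tuple(
                (value / 255.0 - mean) / std
                for value, mean, std in zip(pixel, IMAGENET_MEAN, IMAGENET_STD)
            )
            for pixel in row
        ]
        for row in image
    ]


# Tiny 2 x 2 stand-in for an image already resized to 480 x 480 pixels.
toy = [[(255, 0, 0), (0, 255, 0)], [(0, 0, 255), (255, 255, 255)]]
train_input = normalize(augment(toy, random.Random(0)))
eval_input = normalize(toy)  # no augmentation for validation or test images
```

Keeping augmentation separate from normalization makes it straightforward to disable the former for validation and test images, as the list above specifies.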

3.2.2. Model Architecture

- Hidden layer: 1024 units, ReLU activation.

- Hidden layer: 512 units, ReLU activation, dropout.

- Output layer: linear projection to rapid classes.

3.2.3. Classifier Model Evaluation

3.2.4. Training Procedure

- Optimizer: AdamW with decoupled weight decay

  - Backbone learning rate:

  - Classifier learning rate:

  - Weight decay:

- Scheduler: ReduceLROnPlateau (factor = 0.1; patience = 5 epochs on validation F1)

- Mixed-precision: enabled via torch.cuda.amp

- Early stopping: patience = 10 epochs monitored on validation F1

- Batch size: 32

- Epochs: up to 50
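The interaction between the plateau scheduler and early stopping listed above can be traced with a small simulation. This is a simplified stand-in written for illustration, not PyTorch's `ReduceLROnPlateau` and not the authors' training loop. The learning rates in `PLACEHOLDER_LRS` are hypothetical values chosen for the demonstration and are not taken from the paper; mixed precision and batching are not modeled.

```python
class PlateauScheduler:
    """Simplified plateau scheduler that monitors a metric to be maximized."""

    def __init__(self, lrs, factor=0.1, patience=5):
        self.lrs = dict(lrs)
        self.factor = factor
        self.patience = patience
        self.best = float("-inf")
        self.bad_epochs = 0

    def step(self, metric):
        if metric > self.best:
            self.best = metric
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        # Reduce every learning rate once the metric has stalled
        # for more than `patience` consecutive epochs.
        if self.bad_epochs > self.patience:
            self.lrs = {name: lr * self.factor for name, lr in self.lrs.items()}
            self.bad_epochs = 0


def simulate_training(val_f1_per_epoch, lrs, max_epochs=50, stop_patience=10):
    """Replay a validation F1 history through the scheduler and early stopping."""
    scheduler = PlateauScheduler(lrs)
    best_f1, best_epoch, stale, epochs_run = float("-inf"), None, 0, 0
    for epoch, f1 in enumerate(val_f1_per_epoch[:max_epochs]):
        epochs_run = epoch + 1  # a real loop would train and validate here
        scheduler.step(f1)
        if f1 > best_f1:
            best_f1, best_epoch, stale = f1, epoch, 0
        else:
            stale += 1
            if stale >= stop_patience:
                break  # early stopping on validation F1
    return best_epoch, epochs_run, scheduler.lrs


# Hypothetical learning rates, for illustration only.
PLACEHOLDER_LRS = {"backbone": 1e-5, "classifier": 1e-3}
f1_history = [0.80, 0.85, 0.90] + [0.89] * 30
best_epoch, epochs_run, final_lrs = simulate_training(f1_history, PLACEHOLDER_LRS)
```

With this toy history, validation F1 peaks at the third epoch, the learning rates are cut once by a factor of 0.1, and training halts after 10 further epochs without improvement, well short of the 50-epoch cap.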

3.3. Classification Input Architectures

3.3.1. Baseline

3.3.2. Masked Inputs

3.3.3. Active Learning

3.4. Data Splitting

- Test set: All images associated with Alaskan HUCs.

- Validation set: A random sample of HUC4s across the conterminous US selected via a constrained random walk, optimized to ensure that approximately 20% of the remaining dataset was held out for validation.

- Training set: All other HUC4s not allocated to test or validation.
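A watershed-level split of this kind might be sketched as follows. The sketch replaces the constrained random walk with a plain shuffle-and-accumulate selection, so it is a simplification rather than the authors' procedure. It also assumes that Alaskan HUC4s are those whose codes begin with "19" (HUC2 region 19), and the per-watershed image counts are hypothetical.

```python
import random


def split_by_huc4(image_counts, val_fraction=0.20, seed=0):
    """Assign whole HUC4 watersheds to train, validation, or test.

    image_counts maps a HUC4 code (string) to its number of images.
    """
    # Assumption: Alaskan watersheds fall in HUC2 region 19.
    test = {huc for huc in image_counts if huc.startswith("19")}
    remaining = sorted(huc for huc in image_counts if huc not in test)
    total = sum(image_counts[huc] for huc in remaining)

    # Simplified selection: shuffle, then add watersheds to validation
    # until roughly val_fraction of the remaining images are held out.
    rng = random.Random(seed)
    rng.shuffle(remaining)
    val, held_out = set(), 0
    for huc in remaining:
        if held_out >= val_fraction * total:
            break
        val.add(huc)
        held_out += image_counts[huc]

    train = set(remaining) - val
    return train, val, test


# Hypothetical image counts per HUC4, for illustration only.
counts = {
    "0101": 100, "0204": 150, "0305": 120, "1002": 200,
    "1401": 80, "1707": 150, "1901": 90, "1902": 60,
}
train, val, test = split_by_huc4(counts)
```

Because whole watersheds are assigned to a single partition, no image in the validation or test set shares a HUC4 with any training image.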

4. Results

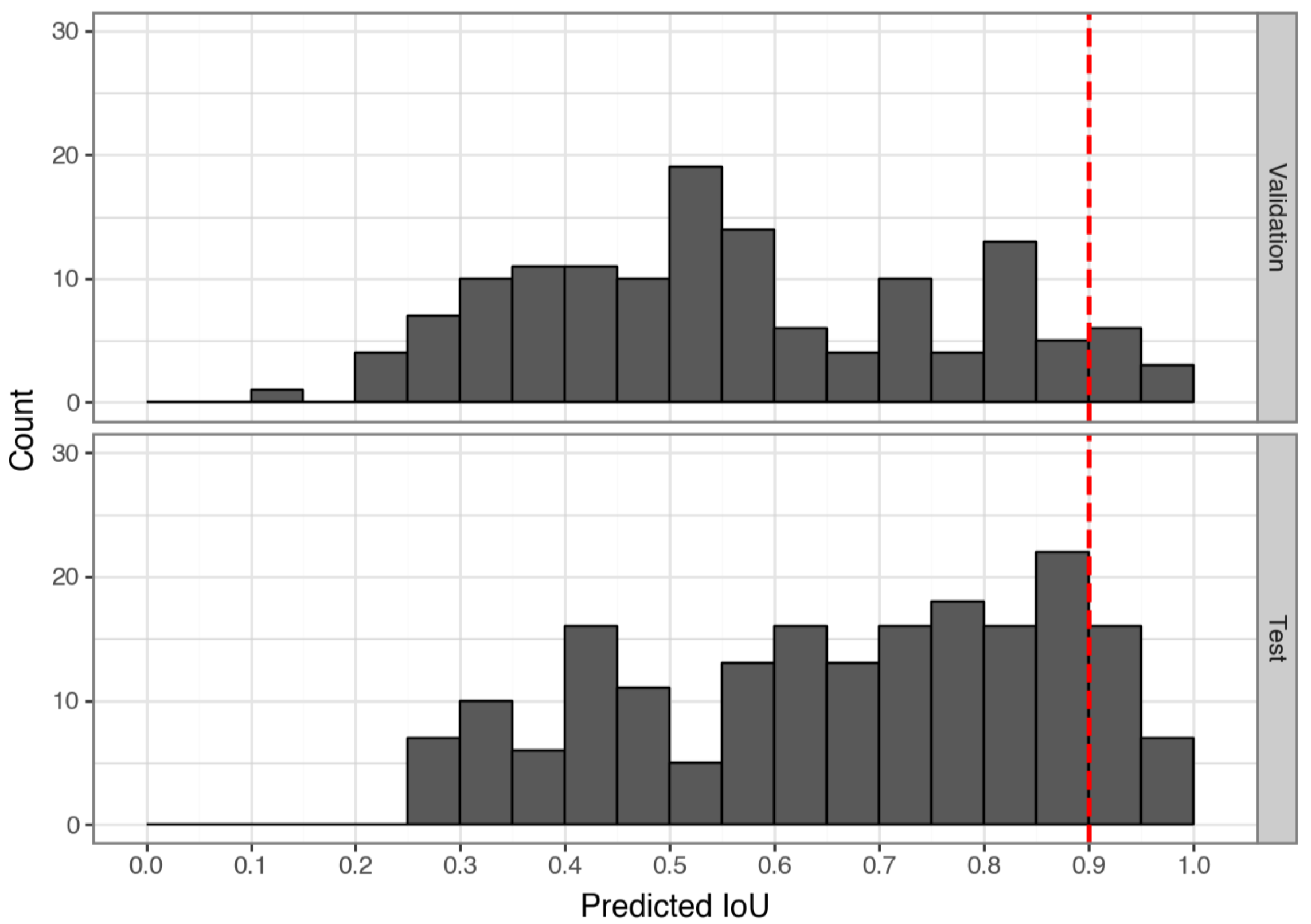

4.1. Results for Segmentation Model

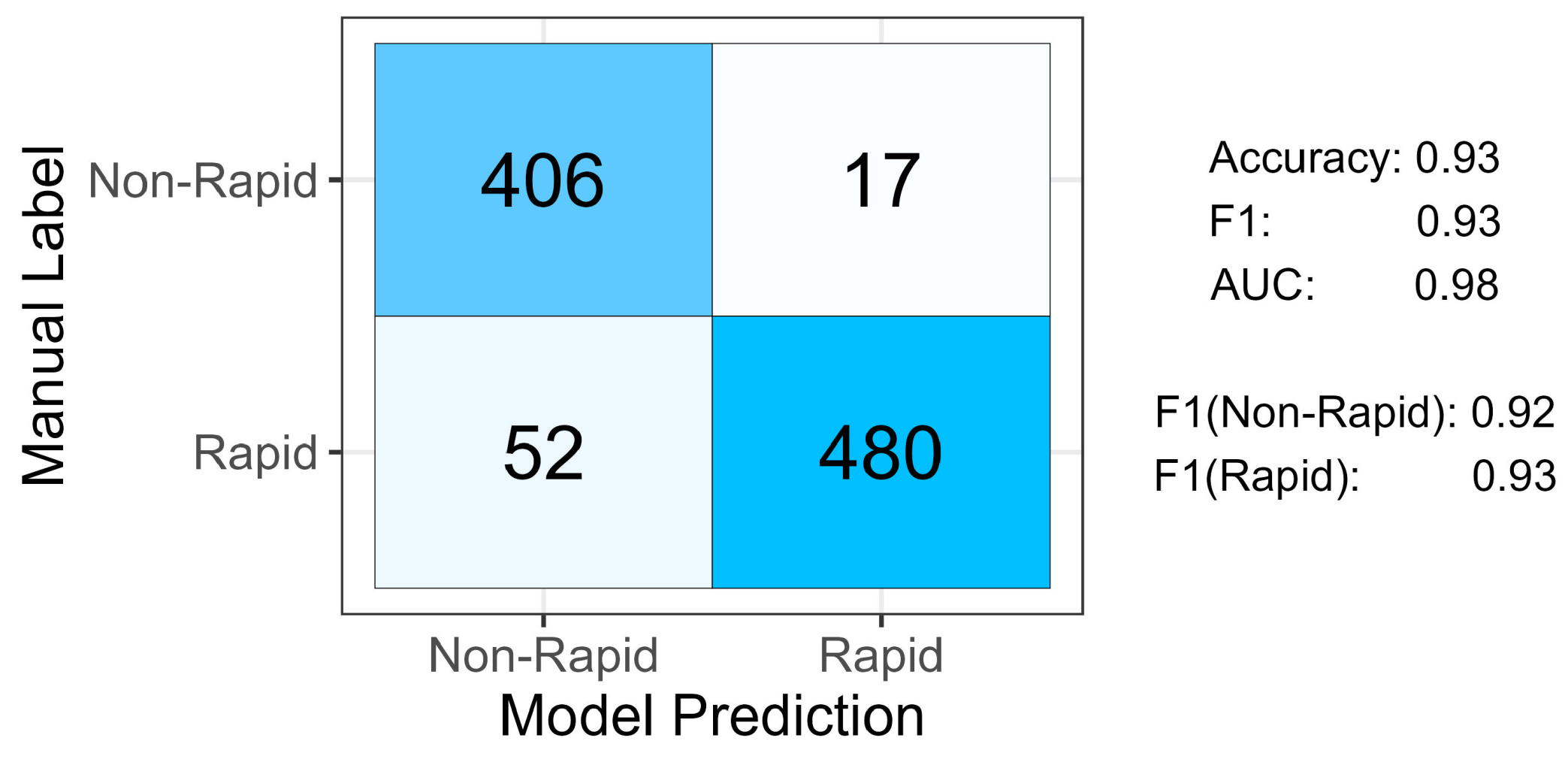

4.2. Results for Classification of River Rapids

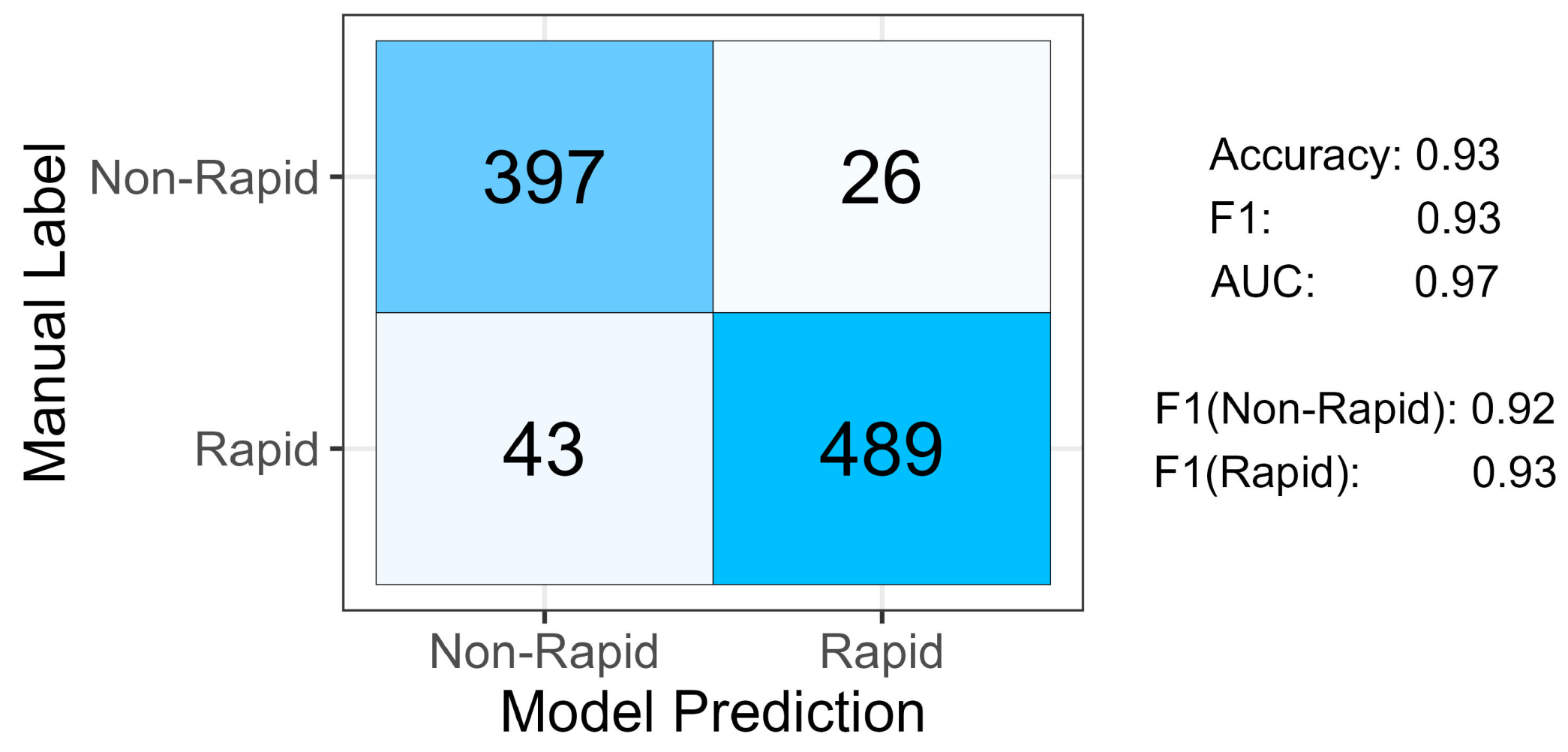

4.2.1. Baseline Rapid Model Results

4.2.2. Mask and Active Learning Results

5. Discussion

5.1. Limitations

5.2. Applications and Extensions

6. Conclusions

1. An API-based approach allowed for highly automated retrieval of images extracted at a regular interval along flowlines throughout the conterminous US and Alaska and from the locations of known rapids. The resulting database consists of 281,024 images obtained from the Google Maps Static API and made publicly available as the Compilation of Images from River Reaches across the United States (CIRRUS) [27]. CIRRUS includes a subset of images with manually annotated river masks and labels for the presence or absence of river rapids that could support further development and application of approaches for characterizing rivers via remote sensing. As a potential starting point for such efforts, we make the workflow developed for this study accessible by including our code in the data release along with the images.

2. To rigorously evaluate model performance, we split the data at the watershed level into not only training and validation sets but also an independent test set from a completely distinct area. This approach avoided spatial data leakage and allowed for a more robust assessment of model generalizability across geographic domains.

3. The segmentation model we developed for identifying river pixels within images led to a mean test score of 0.57, which increased to 0.89 when only those images with high confidence were considered. These results suggest that highly automated extraction of rivers from standard, readily available satellite and aerial images is feasible and potentially promising.

4. Our baseline CNN model for detecting rapids yielded overall accuracy and F1 scores of 0.93, implying that this approach could facilitate more extensive, less labor-intensive inventory and monitoring of river rapids.

5. The framework established herein could help to support numerous hydrologic applications, including non-contact streamflow measurement, habitat mapping, water resource management, and river-oriented recreation.
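As an illustration of the API-based retrieval summarized in the first conclusion, a request URL for the Google Maps Static API could be assembled as in the sketch below. This is not the workflow released with CIRRUS. The `size` and `maptype` values are assumptions, `YOUR_API_KEY` is a placeholder, and `static_map_url` is a hypothetical helper name. The zoom level of 18 is one of the two levels recorded in the dataset metadata.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

STATIC_MAPS_ENDPOINT = "https://maps.googleapis.com/maps/api/staticmap"


def static_map_url(latitude, longitude, zoom, api_key, size="640x640"):
    """Build a Static API request URL for one image center (hypothetical helper)."""
    params = {
        "center": f"{latitude:.6f},{longitude:.6f}",
        "zoom": zoom,
        "size": size,            # assumed image size in pixels
        "maptype": "satellite",  # assumed map type for aerial/satellite imagery
        "key": api_key,
    }
    return f"{STATIC_MAPS_ENDPOINT}?{urlencode(params)}"


# Example point and placeholder key; no request is sent here.
url = static_map_url(36.1, -112.1, zoom=18, api_key="YOUR_API_KEY")
query = parse_qs(urlsplit(url).query)
```

Generating one such URL per sampled flowline point would keep the retrieval step fully scriptable, in the spirit of the automated approach described above.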

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| API | Application Programming Interface |
| AUC | Area Under the Receiver Operating Characteristic Curve |
| CIRRUS | Compilation of Images from River Reaches across the United States |
| CNN | Convolutional Neural Network |
| GPU | Graphics Processing Unit |
| GSD | Ground Sampling Distance |
| HR | High Resolution |
| HUC | Hydrologic Unit Code |
| NHD | National Hydrography Dataset |
| NPS | National Park Service |
| OSM | OpenStreetMap |
| RGB | red-green-blue |
| SAM2 | Segment Anything Model 2 |
| SHA-1 | Secure Hash Algorithm-1 |
| URL | Uniform Resource Locator |
| US | United States |
| USGS | U.S. Geological Survey |

References

1. Newson, M.D.; Newson, C.L. Geomorphology, Ecology and River Channel Habitat: Mesoscale Approaches to Basin-Scale Challenges. Prog. Phys. Geogr. 2000, 24, 195–217.

2. Fausch, K.D.; Torgersen, C.E.; Baxter, C.V.; Li, H.W. Landscapes to Riverscapes: Bridging the Gap between Research and Conservation of Stream Fishes. Bioscience 2002, 52, 483–498.

3. Marcus, W.A.; Fonstad, M.A. Remote Sensing of Rivers: The Emergence of a Subdiscipline in the River Sciences. Earth Surf. Processes Landforms 2010, 35, 1867–1872.

4. Carbonneau, P.; Fonstad, M.A.; Marcus, W.A.; Dugdale, S.J. Making Riverscapes Real. Geomorphology 2012, 137, 74–86.

5. Piégay, H.; Arnaud, F.; Belletti, B.; Bertrand, M.; Bizzi, S.; Carbonneau, P.; Dufour, S.; Liébault, F.; Ruiz-Villanueva, V.; Slater, L. Remotely Sensed Rivers in the Anthropocene: State of the Art and Prospects. Earth Surf. Processes Landforms 2020, 45, 157–188.

6. Zavadil, E.A.; Stewardson, M.J.; Turner, M.E.; Ladson, A.R. An Evaluation of Surface Flow Types as a Rapid Measure of Channel Morphology for the Geomorphic Component of River Condition Assessments. Geomorphology 2012, 139–140, 303–312.

7. Milan, D.J.; Heritage, G.L.; Large, A.R.G.; Entwistle, N.S. Mapping Hydraulic Biotopes Using Terrestrial Laser Scan Data of Water Surface Properties. Earth Surf. Processes Landforms 2010, 35, 918–931.

8. Woodget, A.S.; Visser, F.; Maddock, I.P.; Carbonneau, P.E. The Accuracy and Reliability of Traditional Surface Flow Type Mapping: Is It Time for a New Method of Characterizing Physical River Habitat? River Res. Appl. 2016, 32, 1902–1914.

9. Hedger, R.D.; Gosselin, M.P. Automated Fluvial Hydromorphology Mapping from Airborne Remote Sensing. River Res. Appl. 2023, 39, 1889–1901.

10. Valman, S.J.; Boyd, D.S.; Carbonneau, P.E.; Johnson, M.F.; Dugdale, S.J. An AI approach to operationalise global daily PlanetScope satellite imagery for river water masking. Remote Sens. Environ. 2024, 301, 113932.

11. Lee, S.; Kong, Y.; Lee, T. Development of Deep Intelligence for Automatic River Detection (RivDet). Remote Sens. 2025, 17, 346.

12. Zhang, X.; Liu, Q.; Gui, D.; Zhao, J.; Chen, Y.; Liu, Y.; Martínez-Valderrama, J. Enhanced River Connectivity Assessment Across Larger Areas Through Deep Learning with Dam Detection. Hydrol. Processes 2025, 39, e70063.

13. Grimmer, G.; Wenger, R.; Forestier, G.; Chardon, V. Automatic detection of in-stream river wood from random forest machine learning and exogenous indices using very high-resolution aerial imagery. Environ. Model. Softw. 2025, 190, 106460.

14. Dawson, M.; Dawson, H.; Gurnell, A.; Lewin, J.; Macklin, M.G. AI-assisted interpretation of changes in riparian woodland from archival aerial imagery using Meta’s segment anything model. Earth Surf. Processes Landforms 2025, 50, e6053.

15. Harlan, M.E.; Gleason, C.J.; Flores, J.A.; Langhorst, T.M.; Roy, S. Mapping and Characterizing Arctic Beaded Streams through High Resolution Satellite Imagery. Remote Sens. Environ. 2023, 285, 113378.

16. Legleiter, C.J.; Grant, G.; Bae, I.; Fasth, B.; Yager, E.; White, D.C.; Hempel, L.; Harlan, M.E.; Leonard, C.; Dudley, R. Remote Sensing of River Discharge Based on Critical Flow Theory. Geophys. Res. Lett. 2025, 52, e2025GL114851.

17. James, K. Rafting the Grand Canyon. 2025. Available online: https://www.visitarizona.com/like-a-local/grand-canyon-river-trip (accessed on 19 August 2025).

18. National Park Service. Whitewater—Gauley River National Recreation Area (U.S. National Park Service)—nps.gov. 2025. Available online: https://www.nps.gov/gari/planyourvisit/whitewater.htm (accessed on 19 August 2025).

19. National Park Service. Visitor Use Data—Social Science (U.S. National Park Service)—nps.gov. 2025. Available online: https://www.nps.gov/subjects/socialscience/visitor-use-statistics-dashboard.htm (accessed on 19 August 2025).

20. Brinkerhoff, C.B.; Gleason, C.J.; Zappa, C.J.; Raymond, P.A.; Harlan, M.E. Remotely Sensing River Greenhouse Gas Exchange Velocity Using the SWOT Satellite. Glob. Biogeochem. Cycles 2022, 36, e2022GB007419.

21. U.S. Geological Survey. National Hydrography Dataset Plus High Resolution; U.S. Geological Survey Data Release; U.S. Geological Survey: Reston, VA, USA, 2022.

22. U.S. Geological Survey. 3D Hydrography Dataset Program; U.S. Geological Survey Data Release; U.S. Geological Survey: Reston, VA, USA, 2025.

23. Legleiter, C.J.; Bae, I.; Fasth, B.; Grant, G.; Yager, E.; White, D.; Hempel, L.; Harlan, M.; Leonard, C. Image-Based Measurements and Gage Records Used to Test a Method for Inferring River Discharge from Remotely Sensed Data Based on Critical Flow Theory; U.S. Geological Survey Data Release; U.S. Geological Survey: Reston, VA, USA, 2024.

24. R Core Team. R: A Language and Environment for Statistical Computing; Version 4.4.0; R Core Team: Vienna, Austria, 2025; Available online: https://www.R-project.org (accessed on 2 October 2025).

25. Vaughan, D.; Dancho, M. furrr: Apply Mapping Functions in Parallel Using Futures, R package version 0.3.1; R Core Team: Vienna, Austria, 2022. Available online: https://furrr.futureverse.org/ (accessed on 10 January 2026).

26. Csárdi, G. dotenv: Load Environment Variables from ’.env’, R package version 1.0.3, 2021. Available online: https://cran.r-project.org/web/packages/dotenv/index.html (accessed on 10 January 2026).

27. Legleiter, C.J.; Bladen, K.; Brimhall, N. Compilation of Images from Rivers Reaches Across the United States (CIRRUS); U.S. Geological Survey Data Release; U.S. Geological Survey: Reston, VA, USA, 2025.

28. McHugh, M.L. Interrater reliability: The kappa statistic. Biochem. Medica 2012, 22, 276–282.

29. Gwet, K.L. Handbook of Inter-Rater Reliability: The Definitive Guide to Measuring the Extent of Agreement Among Raters, 4th ed.; Advanced Analytics, LLC: Gaithersburg, MD, USA, 2014.

30. Ravi, N.; Gabeur, V.; Hu, Y.T.; Hu, R.; Ryali, C.; Ma, T.; Khedr, H.; Rädle, R.; Rolland, C.; Gustafson, L.; et al. SAM 2: Segment Anything in Images and Videos. arXiv 2024, arXiv:2408.00714.

31. Paszke, A.; Gross, S.; Massa, F.; Lerer, A.; Bradbury, J.; Chanan, G.; Killeen, T.; Lin, Z.; Gimelshein, N.; Antiga, L.; et al. PyTorch: An Imperative Style, High-Performance Deep Learning Library. In Advances in Neural Information Processing Systems 32; Wallach, H., Larochelle, H., Beygelzimer, A., d’Alché Buc, F., Fox, E., Garnett, R., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2019; pp. 8024–8035.

32. Smith, L.N. Cyclical Learning Rates for Training Neural Networks. In Proceedings of the 2017 IEEE Winter Conference on Applications of Computer Vision (WACV), Santa Rosa, CA, USA, 24–31 March 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 464–472.

33. Howard, J.; Ruder, S. Universal Language Model Fine-tuning for Text Classification. arXiv 2018, arXiv:1801.06146.

34. Masters, D.; Luschi, C. Revisiting small batch training for deep neural networks. arXiv 2018, arXiv:1804.07612.

35. Prechelt, L. Early Stopping—But When? In Neural Networks: Tricks of the Trade; Springer: Berlin/Heidelberg, Germany, 1998; pp. 55–69.

36. Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

37. Zhou, Z.; Zheng, Y.; Ye, H.; Pu, J.; Sun, G. Satellite image scene classification via convnet with context aggregation. In Proceedings of the Pacific Rim Conference on Multimedia, Hefei, China, 21–22 September 2018; Springer: Berlin/Heidelberg, Germany, 2018; pp. 329–339.

38. Bilotta, G.; Bibbò, L.; Meduri, G.M.; Genovese, E.; Barrile, V. Deep Learning Innovations: ResNet Applied to SAR and Sentinel-2 Imagery. Remote Sens. 2025, 17, 1961.

39. He, K.; Zhang, X.; Ren, S.; Sun, J. Identity mappings in deep residual networks. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016; Springer: Berlin/Heidelberg, Germany, 2016; pp. 630–645.

40. Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Li, F.-F. Imagenet: A large-scale hierarchical image database. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009; IEEE: Piscataway, NJ, USA, 2009; pp. 248–255.

41. Lewis, D.D.; Gale, W.A. A sequential algorithm for training text classifiers. In Proceedings of the 17th Annual International ACM SIGIR Conference on Research and Development in Information Retrieval, Dublin, Ireland, 3–6 July 1994; Springer: Berlin/Heidelberg, Germany, 1994; pp. 3–12.

42. Settles, B. Active Learning Literature Survey; Computer Sciences Technical Report 1648; University of Wisconsin-Madison: Madison, WI, USA, 2009.

43. Santos-Fernandez, E.; Huser, R.; Castruccio, S.; Stephenson, A.G.; Sisson, S.A. Spatially balanced sampling via Gaussian processes. Bayesian Anal. 2021, 16, 1179–1207.

44. Konyushkova, K.; Sznitman, R.; Fua, P. Learning active learning from data. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; Curran Associates Inc.: Red Hook, NY, USA, 2017; Volume 30.

45. Roberts, D.R.; Bahn, V.; Ciuti, S.; Boyce, M.S.; Elith, J.; Guillera-Arroita, G.; Hauenstein, S.; Lahoz-Monfort, J.J.; Schröder, B.; Thuiller, W.; et al. Cross-validation strategies for data with temporal, spatial, hierarchical, or phylogenetic structure. Ecography 2017, 40, 913–929.

46. Hartmann, D.; Gravey, M.; Price, T.D.; Nijland, W.; de Jong, S.M. Surveying Nearshore Bathymetry Using Multispectral and Hyperspectral Satellite Imagery and Machine Learning. Remote Sens. 2025, 17, 291.

47. Meyer, H.; Reudenbach, C.; Wöllauer, S.; Nauss, T. Importance of spatial predictor variable selection in machine learning applications–Moving from data reproduction to spatial prediction. Ecol. Model. 2019, 411, 108815.

48. Marmanis, D.; Schindler, K.; Wegner, J.D.; Galliani, S.; Datcu, M.; Stilla, U. Classification with an edge: Improving semantic image segmentation with boundary detection. ISPRS J. Photogramm. Remote Sens. 2018, 135, 158–172.

49. Bischke, B.; Helber, P.; Folz, J.; Borth, D.; Dengel, A. Multi-task learning for segmentation of building footprints with deep neural networks. In Proceedings of the 2019 IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 22–25 September 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 1480–1484.

50. Kervadec, H.; Bouchtiba, J.D.I.; Desrosiers, C.; Ayed, I.B. Boundary loss for highly unbalanced segmentation. arXiv 2018, arXiv:1812.07032.

51. Milletari, F.; Navab, N.; Ahmadi, S.A. V-net: Fully convolutional neural networks for volumetric medical image segmentation. In Proceedings of the 2016 Fourth International Conference on 3D Vision (3DV), Stanford, CA, USA, 25–28 October 2016; IEEE: Piscataway, NJ, USA, 2016; pp. 565–571.

52. Cohen, J. A coefficient of agreement for nominal scales. Educ. Psychol. Meas. 1960, 20, 37–46.

53. Krippendorff, K. Estimating the reliability, systematic error, and random error of interval data. Educ. Psychol. Meas. 1970, 30, 61–70.

| Field | Description |
|---|---|
| image | Image filename (serves as Primary Key) |
| name | River or watershed identifier |
| latitude | Latitude coordinate of the image center in decimal degrees |
| longitude | Longitude coordinate of the image center in decimal degrees |
| zoom | Zoom level at image capture (18 or 19) |
| huc2 | The distinct major hydrological region for an image |
| huc4 | The distinct hydrological sub-region for an image |
| api_timestamp | Timestamp when the image was retrieved from the Maps API |
| mask | Label denoting existence of a manually annotated segmentation mask (0 = no, 1 = yes) |
| river_class | Manually annotated label indicating river presence (0 = no, 1 = yes) |
| rapid_class | Manually annotated label indicating rapid presence (0 = no, 1 = yes) |
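To show how the metadata fields in this table might be used to select labeled subsets, the sketch below reads a small in-memory CSV with the same column names. The rows, filenames, and timestamp format are hypothetical, and the use of an empty string for images without a manual label is an assumption about the encoding rather than a documented property of the data release.

```python
import csv
import io

# Hypothetical rows using the field names from the metadata table.
# Assumption: an empty rapid_class or river_class means "not annotated".
SAMPLE_CSV = """image,name,latitude,longitude,zoom,huc2,huc4,api_timestamp,mask,river_class,rapid_class
img_a.png,Example River A,44.05,-121.31,18,17,1707,2025-01-01T00:00:00Z,1,1,0
img_b.png,Example River B,38.07,-81.08,19,05,0505,2025-01-01T00:05:00Z,0,1,1
img_c.png,Example River C,61.20,-149.90,18,19,1902,2025-01-01T00:10:00Z,0,,
"""


def load_metadata(handle):
    """Read metadata rows into a list of dictionaries keyed by field name."""
    return list(csv.DictReader(handle))


def with_masks(rows):
    """Images that have a manually annotated segmentation mask."""
    return [row for row in rows if row["mask"] == "1"]


def labeled_for_rapids(rows):
    """Images that carry a manual rapid/no-rapid label."""
    return [row for row in rows if row["rapid_class"] in ("0", "1")]


rows = load_metadata(io.StringIO(SAMPLE_CSV))
mask_images = [row["image"] for row in with_masks(rows)]
rapid_images = [row["image"] for row in labeled_for_rapids(rows)]
```

Because `image` serves as the primary key, the resulting filename lists can be joined directly against the image files or against model predictions.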

| Model | Train | Validation | Test |
|---|---|---|---|
| SAM2 | 555 | 138 | 192 |
| Baseline CNN | 2485 (899:1586) | 618 (293:325) | 955 (423:532) |
| Masked Inputs CNN | 2995 (1197:1798) | 618 (293:325) | 955 (423:532) |
| Active Learning CNN | 2759 (1087:1672) | 751 (385:367) | 955 (423:532) |

| Model | Accuracy | F1-Score | AUC |
|---|---|---|---|
| Baseline | 0.93 | 0.93 | 0.98 |
| Masked Inputs | 0.93 | 0.93 | 0.97 |
| Active Learning | 0.92 | 0.92 | 0.98 |
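For readers less familiar with the three metrics in this table, the sketch below computes them for a toy set of labels and predicted probabilities. The data are invented for illustration. The 0.5 decision threshold and the use of a binary F1 score for the rapid class are assumptions, since the averaging convention behind the reported values is not stated in this section.

```python
def accuracy_and_f1(y_true, y_pred):
    """Accuracy and binary F1 for the positive (rapid) class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    denominator = 2 * tp + fp + fn
    f1 = 2 * tp / denominator if denominator else 0.0
    return accuracy, f1


def roc_auc(y_true, scores):
    """AUC as the chance that a random positive outscores a random negative."""
    positives = [s for t, s in zip(y_true, scores) if t == 1]
    negatives = [s for t, s in zip(y_true, scores) if t == 0]
    # Ties between a positive and a negative score count as half a win.
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


# Toy labels (1 = rapid present) and predicted probabilities.
y_true = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.2, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 threshold

accuracy, f1 = accuracy_and_f1(y_true, y_pred)
auc = roc_auc(y_true, scores)
```

Accuracy and F1 depend on the chosen threshold, whereas AUC summarizes ranking quality across all thresholds, which is why the two kinds of metric can differ for the same model.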

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Brimhall, N.; Bladen, K.K.; Kerby, T.; Legleiter, C.J.; Swapp, C.; Fluckiger, H.; Bahr, J.; Roberts, M.; Hart, K.; Stegman, C.L.; et al. Compilation of a Nationwide River Image Dataset for Identifying River Channels and River Rapids via Deep Learning. Remote Sens. 2026, 18, 375. https://doi.org/10.3390/rs18020375


APA Style: Brimhall, N., Bladen, K. K., Kerby, T., Legleiter, C. J., Swapp, C., Fluckiger, H., Bahr, J., Roberts, M., Hart, K., Stegman, C. L., Bean, B. L., & Moon, K. R. (2026). Compilation of a Nationwide River Image Dataset for Identifying River Channels and River Rapids via Deep Learning. Remote Sensing, 18(2), 375. https://doi.org/10.3390/rs18020375