4.1. Datasets and Evaluation Metrics

In this work, we use FarmSeg-VL [49], the first fine-grained image-text dataset specifically constructed for spatiotemporal farmland segmentation. FarmSeg-VL provides rich language descriptions that explicitly encode farmland shape, spatial distribution, phenological state, surrounding environmental elements, and regional topographic characteristics. These descriptions address the limitations of conventional label-only remote sensing datasets in modeling spatial relationships and seasonal dynamics. The textual descriptions also specify the imaging month and season of each sample, covering all four seasons (spring, summer, autumn, and winter) and different agricultural phenological stages. This seasonal information enables the model to learn season-aware features and supports evaluation across varying temporal conditions. The dataset is built through a semi-automatic annotation pipeline that combines high semantic quality with efficient caption generation. It covers eight major agricultural regions across China, spans approximately 4300 km², and has a spatial resolution ranging from 0.5 m to 2 m. All images are cropped into 512 × 512 patches to retain detailed spatial structures such as field boundaries and vegetation textures. The dataset contains 15,821 training samples, 4512 validation samples, and 2272 test samples, with test samples drawn from all eight regions: the Northeast China Plain, the Huang-Huai-Hai Plain, the Northern Arid and Semi-Arid Region, the Loess Plateau, the Yangtze River Middle and Lower Reaches Plain, South China, the Sichuan Basin, and the Yungui Plateau. FarmSeg-VL thus serves as a comprehensive benchmark for evaluating both traditional deep learning methods and modern vision-language models in farmland segmentation.
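The split sizes and resolution range above imply a few derived quantities: a total of 22,605 samples split roughly 70/20/10 into training, validation, and test sets, and a ground footprint per 512 × 512 patch of 256 m (at 0.5 m resolution) to 1024 m (at 2 m) on a side. The snippet below only reproduces this arithmetic from the reported figures; the function names are illustrative.

```python
# Split sizes and patch size as reported for FarmSeg-VL.
SPLITS = {"train": 15821, "val": 4512, "test": 2272}
PATCH_PX = 512  # side length of each cropped patch, in pixels


def split_fractions(splits):
    """Return each split's share of the total number of samples."""
    total = sum(splits.values())
    return {name: count / total for name, count in splits.items()}


def patch_footprint_m(resolution_m, patch_px=PATCH_PX):
    """Ground side length (metres) covered by one square patch."""
    return patch_px * resolution_m


if __name__ == "__main__":
    print("total samples:", sum(SPLITS.values()))
    for name, frac in split_fractions(SPLITS).items():
        print(f"{name}: {frac:.1%}")
    for res in (0.5, 2.0):
        print(f"{res} m/pixel -> {patch_footprint_m(res):.0f} m per patch side")
```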

In this study, four widely adopted metrics are used to assess our model on the farmland segmentation task: Pixel Accuracy (ACC), Mean Intersection over Union (mIoU), Mean Dice coefficient (mDice), and Recall. Together, they capture complementary aspects of segmentation quality, from pixel-level correctness to region-level overlap and class-wise recognition capability, and provide a balanced evaluation of the model’s effectiveness. The mathematical definitions of these metrics are given as follows:
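For reference, the conventional definitions can be written in terms of a per-class confusion matrix. Assuming K classes (here farmland and background), with TP_k, FP_k, and FN_k the true positives, false positives, and false negatives of class k and N the total number of pixels: ACC = Σ_k TP_k / N, IoU_k = TP_k / (TP_k + FP_k + FN_k), Dice_k = 2TP_k / (2TP_k + FP_k + FN_k), and Recall_k = TP_k / (TP_k + FN_k), with mIoU, mDice, and Recall averaged over the K classes. Averaging Recall over both classes, rather than reporting farmland recall alone, is an assumption. The minimal pure-Python sketch below computes these quantities; it is illustrative and not the evaluation code used in this work.

```python
from typing import Dict, List


def confusion_matrix(y_true: List[int], y_pred: List[int], num_classes: int = 2) -> List[List[int]]:
    """cm[t][p] counts pixels whose ground-truth class is t and predicted class is p."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        cm[t][p] += 1
    return cm


def segmentation_metrics(cm: List[List[int]]) -> Dict[str, float]:
    """ACC, mIoU, mDice, and class-averaged Recall from a confusion matrix."""
    k = len(cm)
    total = sum(sum(row) for row in cm)
    tp = [cm[c][c] for c in range(k)]
    fp = [sum(cm[r][c] for r in range(k)) - tp[c] for c in range(k)]  # column sum minus diagonal
    fn = [sum(cm[c]) - tp[c] for c in range(k)]  # row sum minus diagonal

    def safe(num: float, den: float) -> float:
        # A class that is absent and never predicted scores 0 here; many
        # toolkits instead skip such classes when averaging.
        return num / den if den else 0.0

    iou = [safe(tp[c], tp[c] + fp[c] + fn[c]) for c in range(k)]
    dice = [safe(2 * tp[c], 2 * tp[c] + fp[c] + fn[c]) for c in range(k)]
    recall = [safe(tp[c], tp[c] + fn[c]) for c in range(k)]
    return {
        "ACC": safe(sum(tp), total),
        "mIoU": sum(iou) / k,
        "mDice": sum(dice) / k,
        "Recall": sum(recall) / k,
    }


if __name__ == "__main__":
    # Toy flattened masks: 1 = farmland, 0 = background.
    cm = confusion_matrix([1, 1, 1, 0, 0, 0, 1, 0], [1, 1, 0, 0, 0, 1, 1, 0])
    print(segmentation_metrics(cm))
```

In practice these counts are accumulated over all test pixels before averaging, so the reported scores are not simple means of per-image values.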

4.2. Implementation Details

All experiments are conducted on NVIDIA A6000 GPUs, using DeepSpeed for distributed training and memory optimization. The multimodal backbone is initialized from the LLaVA-Llama-2-13B-Chat-Lightning-Preview model, and the visual encoder is the DINOv3-ViT-H/16 variant, loaded from its official pretrained checkpoint. During training, the DINOv3 vision tower and the multimodal projection layers remain frozen; only the language model and the segmentation-related modules, including the mask decoder and the text-guided fusion layers, are updated. For parameter-efficient tuning, the language model is adapted through LoRA adapters with rank r = 16, scaling factor α = 32, and dropout rate 0.1, injected into selected linear layers. The AdamW optimizer is used with a learning rate of 3 × 10⁻⁴, a weight decay of 0.01, and β1 = 0.9 and β2 = 0.95. The learning rate is warmed up linearly over the first 100 iterations and then decayed with DeepSpeed’s WarmupDecayLR scheduler. Training runs for 10 epochs of 1000 optimization steps each, with a micro-batch size of 2 per GPU and gradient accumulation over 10 iterations. Input images are resized to 1024 × 1024 pixels, and the maximum text sequence length is 512 tokens. BF16 mixed-precision training is enabled to improve computational efficiency, and gradient clipping at 1.0 is applied for training stability. The overall training objective combines a cross-entropy loss for language modeling, a binary cross-entropy loss for mask prediction, and a Dice loss, weighted by 1.0, 2.0, and 0.5, respectively. Data loading follows a hybrid sampling strategy that mixes segmentation samples with explanatory text according to predefined sampling rates. During validation, full-resolution predictions are generated and saved for qualitative analysis. Model selection is based on validation mIoU, and all compared methods use identical data partitions to ensure fair and consistent evaluation.
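The optimizer, schedule, precision, and loss settings above can be gathered into a DeepSpeed-style configuration. The sketch below is a hedged reconstruction, not the authors’ configuration file: it assumes that the 10 × 1000 steps are optimizer steps (so WarmupDecayLR decays over 10,000 steps) and that warm-up starts from a learning rate of 0. Because the GPU count is not reported, the effective batch size is expressed as a function of a hypothetical `num_gpus`. Key names follow DeepSpeed’s JSON configuration format, and `total_loss` simply writes out the stated weighted sum.

```python
import json

# Values stated in the text.
EPOCHS = 10
STEPS_PER_EPOCH = 1000
MICRO_BATCH_PER_GPU = 2
GRAD_ACCUM_STEPS = 10
LR = 3e-4


def build_deepspeed_config(total_steps: int) -> dict:
    """DeepSpeed-style config dict; warmup_min_lr = 0.0 is an assumption."""
    return {
        "train_micro_batch_size_per_gpu": MICRO_BATCH_PER_GPU,
        "gradient_accumulation_steps": GRAD_ACCUM_STEPS,
        "optimizer": {
            "type": "AdamW",
            "params": {"lr": LR, "weight_decay": 0.01, "betas": [0.9, 0.95]},
        },
        "scheduler": {
            "type": "WarmupDecayLR",
            "params": {
                "total_num_steps": total_steps,
                "warmup_min_lr": 0.0,
                "warmup_max_lr": LR,
                "warmup_num_steps": 100,
                "warmup_type": "linear",
            },
        },
        "bf16": {"enabled": True},
        "gradient_clipping": 1.0,
    }


def samples_per_optimizer_step(num_gpus: int) -> int:
    """Effective batch size; num_gpus is hypothetical (not reported)."""
    return MICRO_BATCH_PER_GPU * GRAD_ACCUM_STEPS * num_gpus


def total_loss(ce: float, bce: float, dice: float,
               w_ce: float = 1.0, w_bce: float = 2.0, w_dice: float = 0.5) -> float:
    """Weighted sum of language-modeling CE, mask BCE, and mask Dice losses."""
    return w_ce * ce + w_bce * bce + w_dice * dice


if __name__ == "__main__":
    cfg = build_deepspeed_config(EPOCHS * STEPS_PER_EPOCH)
    print(json.dumps(cfg, indent=2))
    print("samples per optimizer step on 8 GPUs:", samples_per_optimizer_step(8))
```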

4.3. Comparisons with Other Methods

To comprehensively evaluate the effectiveness of our approach, we compare it with a diverse set of segmentation baselines covering general semantic segmentation models, remote-sensing-oriented architectures, and vision-language models tailored for farmland understanding. DeepLab-v3 [50] represents the general-purpose semantic segmentation frameworks widely adopted for natural images and provides a strong baseline for assessing general perceptual and feature-aggregation capabilities. DCSwin [51], UNetFormer [52], DOCNet [53], and LOGCAN++ [54] are designed specifically for remote sensing imagery and thus offer competitive comparisons under domain-specific conditions such as complex terrain, spatial heterogeneity, and high-resolution farmland structures. Finally, FSVLM [16] is a vision-language segmentation model tailored for farmland that combines SAM’s segmentation capabilities with the LLaVA multimodal framework through an “embedding as mask” paradigm, explicitly incorporating textual knowledge about farmland attributes and spatiotemporal patterns. By comparing against methods spanning general, domain-specific, and multimodal paradigms, we show that our model remains competitive in general segmentation scenarios while excelling at capturing farmland-specific structural characteristics and leveraging language-driven priors, highlighting its robustness and semantic understanding.

Tables 1–8 present the quantitative comparison results across the eight geographical regions of China. Our method achieves the best mIoU in seven of the eight regions, demonstrating consistent advantages over existing approaches. Specifically, it attains mIoU scores of 87.22%, 77.38%, 86.44%, 84.07%, 84.09%, 91.28%, 95.08%, and 94.95% on the Yangtze River Middle and Lower Reaches Plain, South China Areas, Sichuan Basin, Yungui Plateau, Northern Arid and Semi-arid Region, Northeast China Plain, Loess Plateau, and Huang-Huai-Hai Plain, respectively. The most substantial improvements are observed in the northern agricultural regions, where our model surpasses the second-best method by 1.20% mIoU on the Loess Plateau and 1.54% mIoU on the Huang-Huai-Hai Plain. These regions are characterized by large-scale, regular farmland parcels with clear geometric boundaries, where our FECF mechanism effectively preserves high-frequency boundary details during feature fusion. In the Sichuan Basin, FSVLM achieves the best performance (86.52% mIoU), outperforming our method (86.44% mIoU) by only 0.08%. This marginal difference suggests that the two vision-language approaches are comparably effective in this region, and a gap of this size is likely within run-to-run variance.

Among the compared methods, UNetFormer and LOGCAN++ are consistently strong competitors, particularly in northern regions with regular farmland patterns. UNetFormer achieves the second-best mIoU on the Loess Plateau (93.88%) and the Northeast China Plain (91.19%), while LOGCAN++ performs well in the Northern Arid and Semi-arid Region (83.72% mIoU). However, these methods degrade in southern regions characterized by fragmented and irregularly shaped parcels; in the South China Areas, for example, UNetFormer achieves 76.71% mIoU and DOCNet 76.94%, compared with our 77.38%. DeepLab-v3, as a general semantic segmentation baseline, performs relatively poorly in most regions, achieving 78.27% mIoU in the Yangtze River Middle and Lower Reaches Plain, 62.29% in the South China Areas, and 84.56% in the Huang-Huai-Hai Plain, which indicates its limited capacity to handle diverse and complex agricultural landscapes without domain-specific adaptation. The vision-language baseline FSVLM performs inconsistently across regions: it achieves the best result in the Sichuan Basin (86.52% mIoU) but underperforms elsewhere, for example in the Yangtze River Middle and Lower Reaches Plain (84.14%) and the South China Areas (74.52%). DCSwin also varies considerably, performing adequately in the Huang-Huai-Hai Plain (88.96% mIoU) but struggling in the South China Areas (64.38%). In contrast, our method remains robust and consistent across all eight regions despite their diverse terrain characteristics. UGAA enables the model to adjust the fusion strength between textual and visual modalities dynamically according to alignment confidence, while FECF preserves boundary-relevant high-frequency details. Together, these two mechanisms address the text-visual correspondence ambiguity and limited boundary precision that constrain existing methods, yielding consistently high performance both in northern regions with regular field patterns and in southern regions with fragmented, irregularly shaped parcels.
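The margins quoted in this comparison are plain differences of the reported mIoU values, in percentage points. The short check below recomputes several of them using only numbers given in the text; for each region the competitor listed is the one the text cites, which is not necessarily the runner-up in that region.

```python
# mIoU (%) quoted in the text: region -> (ours, competitor name, competitor mIoU).
REPORTED_MIOU = {
    "Loess Plateau": (95.08, "UNetFormer", 93.88),
    "Northeast China Plain": (91.28, "UNetFormer", 91.19),
    "Northern Arid and Semi-arid Region": (84.09, "LOGCAN++", 83.72),
    "South China Areas": (77.38, "DOCNet", 76.94),
    "Sichuan Basin": (86.44, "FSVLM", 86.52),
}


def margin_pp(region: str) -> float:
    """Our mIoU minus the quoted competitor's, in percentage points (2 decimals)."""
    ours, _name, theirs = REPORTED_MIOU[region]
    return round(ours - theirs, 2)


if __name__ == "__main__":
    for region, (_, name, _) in REPORTED_MIOU.items():
        print(f"{region}: {margin_pp(region):+.2f} pp vs {name}")
```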

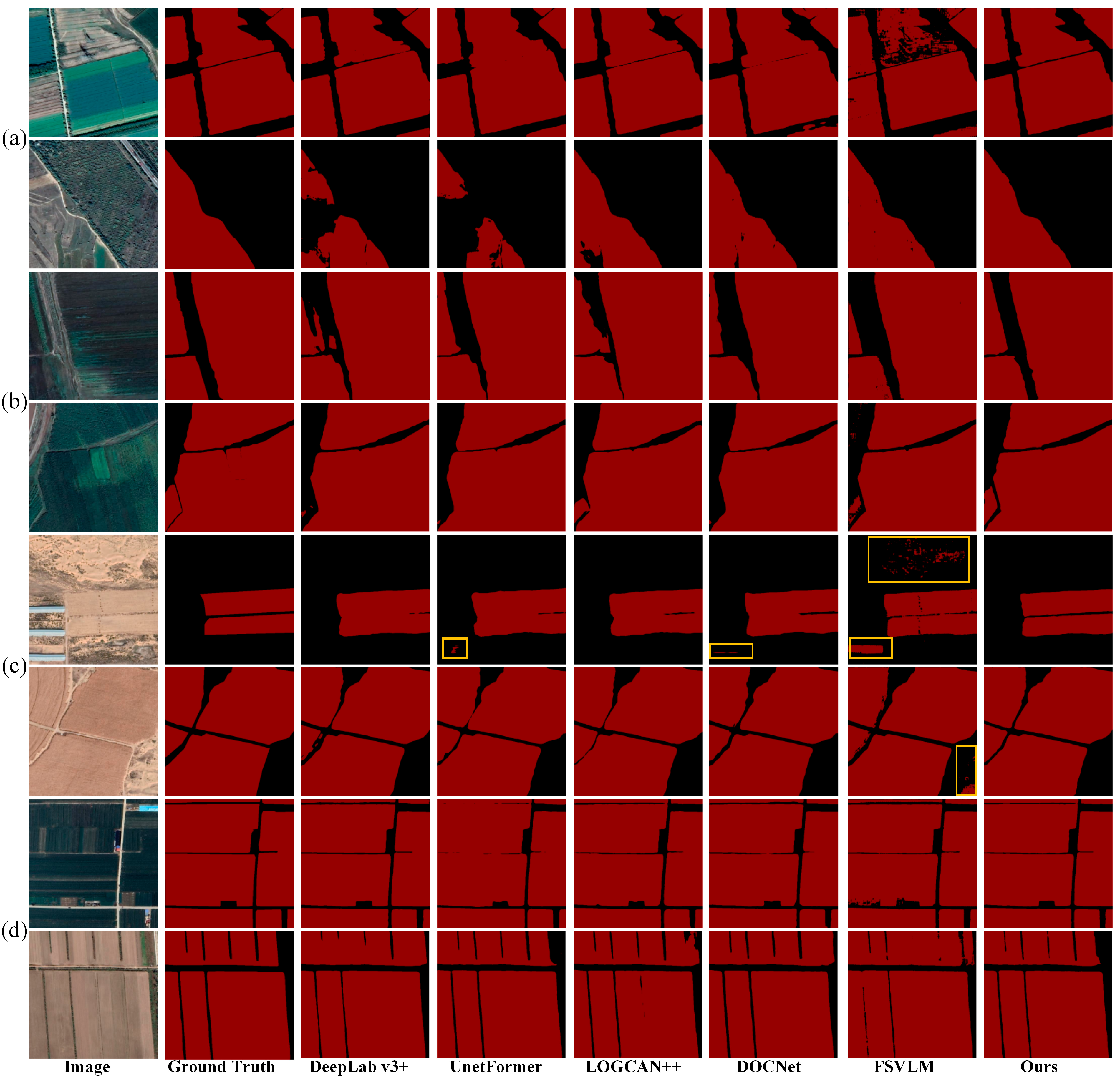

To complement the quantitative evaluation, Figure 4 and Figure 5 visualize segmentation results on representative samples from all eight regions. Yellow boxes highlight false positive regions, where a method incorrectly classifies non-farmland areas as farmland. The southern agricultural regions, characterized by small, fragmented farmland parcels embedded in complex surroundings, pose significant challenges for accurate segmentation (Figure 4). In the Yangtze River Middle and Lower Reaches Plain samples (row a), DeepLab-v3, UNetFormer, and LOGCAN++ produce similar false positive predictions in areas adjacent to farmland boundaries in the first sample, whereas our method correctly resolves these ambiguous regions through UGAA. In the second sample, most compared methods fail to extract the farmland parcels entirely; only FSVLM and our method segment the farmland regions successfully, and FSVLM still produces fragmented false positive artifacts. In the South China Areas samples (row b), every compared method except ours suffers from either false positive predictions or incomplete extraction, whereas our model leverages UGAA to extract farmland completely and accurately by differentiating it from visually similar surroundings. In the Sichuan Basin samples (row c), although FSVLM achieves the highest quantitative accuracy in this region, it fails to extract the farmland parcels completely in the first sample under complex surrounding conditions, whereas our method remains robust. Both FSVLM and our method perform well on the second sample. In the Yungui Plateau samples (row d), the other methods either generate false positive predictions or produce incomplete extraction results, whereas our method achieves accurate and complete farmland segmentation by dynamically adjusting the fusion strength between textual and visual modalities based on alignment confidence.

The northern agricultural regions present different challenges, primarily involving large-scale farmland parcels for which precise boundary delineation and prevention of boundary adhesion become critical (Figure 5). In the Northern Arid and Semi-arid Region samples (row a), our method delineates boundaries precisely while keeping adjacent farmland parcels clearly separated. In contrast, DeepLab-v3, UNetFormer, LOGCAN++, and DOCNet suffer from boundary adhesion, incorrectly merging neighboring parcels, while FSVLM produces severe fragmentation artifacts. In the Northeast China Plain samples (row b), our method not only segments the boundaries of large-scale farmland parcels accurately but also extracts small farmland regions at the edges that other methods fail to detect. The Loess Plateau samples (row c) are particularly challenging because sparse vegetation and arid soil conditions give farmland and non-farmland regions similar visual characteristics. Other methods produce noticeable boundary adhesion or misalignment in areas with ambiguous land-cover transitions, whereas our method separates adjacent parcels with precise boundaries. The Huang-Huai-Hai Plain samples (row d) further confirm that our method achieves accurate boundary segmentation, while some competing methods incorrectly classify adjacent non-farmland areas as farmland. These qualitative observations corroborate the quantitative results, indicating that UGAA and FECF improve both farmland recognition accuracy and boundary precision, particularly in preventing boundary adhesion between adjacent parcels and in detecting small farmland regions across diverse geographical and agricultural conditions.

4.4. Ablation Experiments

To validate the effectiveness of each proposed component, we conduct comprehensive ablation experiments on the FarmSeg-VL test set. Specifically, we evaluate the contribution of the UGAA module and the FECF mechanism by progressively adding them to the baseline model. The baseline model consists of the LLaVA multimodal backbone, DINOv3 visual encoder, and DPT-based decoder without our proposed cross-modal fusion modules.

The quantitative results of the ablation study are summarized in Table 6. The baseline model achieves an mIoU of 87.69%, an Accuracy of 93.46%, an mDice of 93.43%, and a Recall of 93.42%. Adding only the FECF mechanism yields a modest improvement, raising mIoU to 88.03% (+0.34%), which indicates that frequency-domain fusion helps preserve boundary-relevant high-frequency details during cross-modal feature integration. Adding only the UGAA module yields a larger improvement, reaching an mIoU of 89.01% (+1.32%), an Accuracy of 94.20%, an mDice of 94.18%, and a Recall of 94.15%. This gain supports our hypothesis that dynamically estimating text-visual alignment confidence and adaptively modulating fusion strength is crucial for handling the inherent ambiguity of farmland descriptions. Combining both modules gives the best performance, with an mIoU of 89.66% (+1.97%), showing that the two modules are complementary: UGAA handles semantic alignment uncertainty, while FECF preserves geometric boundary details. Although the numerical improvements may appear modest, in fine-grained farmland segmentation even small mIoU gains can correspond to visibly better boundary precision, as the qualitative results in Figure 6 show. Moreover, as shown in Table 9, the additional computational cost of UGAA and FECF is small. The full model has 68.2 M trainable parameters, an increase of 5.7 M (9.1%) over the 62.5 M baseline, while the computational cost remains virtually unchanged at 15.86 TFLOPs. This small overhead reflects the lightweight design of the two modules.
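The relative gains and the parameter overhead quoted above follow directly from the reported numbers. A small check, using only values stated in the text:

```python
# Values reported in the ablation study (mIoU in %, trainable parameters in millions).
ABLATION_MIOU = {"baseline": 87.69, "FECF only": 88.03, "UGAA only": 89.01, "full model": 89.66}
TRAINABLE_PARAMS_M = {"baseline": 62.5, "full model": 68.2}


def gains_over_baseline(scores):
    """mIoU gain of each variant over the baseline, in percentage points."""
    base = scores["baseline"]
    return {name: round(score - base, 2) for name, score in scores.items() if name != "baseline"}


def parameter_overhead(params):
    """Absolute (M) and relative (%) parameter increase of the full model."""
    extra = params["full model"] - params["baseline"]
    return round(extra, 1), round(100.0 * extra / params["baseline"], 1)


if __name__ == "__main__":
    print(gains_over_baseline(ABLATION_MIOU))
    print(parameter_overhead(TRAINABLE_PARAMS_M))
```

As a side note on the arithmetic, the combined gain (1.97) is larger than the sum of the two individual gains (0.34 + 1.32 = 1.66).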

To further validate the quantitative findings, Figure 6 provides qualitative comparisons of segmentation results across the different model configurations. The first row demonstrates the effectiveness of FECF in boundary delineation. As highlighted by the yellow boxes, the baseline model and the UGAA-only variant produce irregular, imprecise boundaries at field margins. In contrast, the configurations that include FECF (FECF-only and the full model) produce noticeably sharper and more accurate boundaries. We attribute this improvement to the frequency-domain decomposition in FECF, which explicitly preserves high-frequency boundary details that conventional spatial-domain fusion tends to smooth out. Narrow vegetation strips and subtle elevation changes along field edges are better captured through selective enhancement of boundary-relevant spectral components.
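The argument above rests on separating features into low- and high-frequency components. The exact FECF formulation is not given in this section, so the sketch below is only a hypothetical 1-D illustration of the general idea: an ideal low-pass cutoff on a discrete Fourier transform splits a signal into two bands, and a step edge (a stand-in for a field boundary) shows up mostly in the high band. The fusion rule in `frequency_aware_fuse` (blend the low bands of visual and text-guided features, keep the visual high band with a gain) is an assumption chosen for illustration, not the paper’s method.

```python
import cmath
from typing import List, Tuple


def dft(x: List[float]) -> List[complex]:
    """Naive O(n^2) discrete Fourier transform."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n)) for k in range(n)]


def idft(spectrum: List[complex]) -> List[float]:
    """Inverse DFT, keeping the real part (inputs here are real signals)."""
    n = len(spectrum)
    return [
        (sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n).real
        for t in range(n)
    ]


def split_bands(x: List[float], cutoff: int) -> Tuple[List[float], List[float]]:
    """Ideal filter: frequencies within `cutoff` of DC (both signs) form the low band."""
    n = len(x)
    spec = dft(x)
    low_spec = [spec[k] if min(k, n - k) <= cutoff else 0j for k in range(n)]
    high_spec = [spec[k] - low_spec[k] for k in range(n)]
    return idft(low_spec), idft(high_spec)


def frequency_aware_fuse(visual, text_guided, cutoff=2, alpha=0.5, high_gain=1.0):
    """Hypothetical fusion: blend low bands, keep the visual high band (edges)."""
    v_low, v_high = split_bands(visual, cutoff)
    t_low, _ = split_bands(text_guided, cutoff)
    return [(1 - alpha) * vl + alpha * tl + high_gain * vh
            for vl, tl, vh in zip(v_low, t_low, v_high)]


if __name__ == "__main__":
    step = [0.0] * 8 + [1.0] * 8  # a 1-D "field boundary" at index 8
    low, high = split_bands(step, cutoff=2)
    print("high band at edge:", round(high[8], 3), "mid-plateau:", round(high[12], 3))
```

With `high_gain > 1`, the same structure would amplify edges relative to smooth regions, which is one simple way to realize "selective enhancement" of boundary-relevant components.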

The second row of Figure 6 illustrates the advantage of UGAA in extracting farmland whose visual features resemble those of the surrounding areas. The yellow boxes mark farmland parcels that share spectral and textural characteristics with adjacent regions, which makes them difficult to extract from visual features alone. The baseline model and the FECF-only variant fail to extract these parcels, producing incomplete segmentation with missing fields. The UGAA-equipped configurations (UGAA-only and the full model), by contrast, identify and extract these challenging regions by leveraging text-guided semantic understanding. When visual features alone cannot distinguish farmland from its surroundings, UGAA increases the contribution of textual guidance according to the estimated alignment confidence, allowing the model to recognize parcels that would otherwise be missed because of visual ambiguity.

To gain deeper insight into the internal mechanism, Figure 7 visualizes feature representations at different stages of the decoder. Columns three to six display the four-dimensional feature maps output by the decoder before our cross-modal fusion modules, which serve as the basis for dense prediction. These features capture multi-scale spatial information but have limited discriminability at boundaries and in areas with similar visual appearance. The last column shows the enhanced feature maps after processing by both UGAA and FECF. The enhanced features exhibit substantially better boundary definition, with clearer separation between adjacent farmland parcels and more distinct activations at field edges. They are also more consistent within homogeneous farmland regions while keeping sharp transitions at boundaries, indicating that the dual-module design addresses both semantic alignment and geometric detail preservation. This comparison confirms that the proposed modules transform the raw decoder features into more discriminative representations that are better suited to accurate farmland parcel segmentation.

To investigate the sensitivity of UGAA to text prompt variations and to analyze the contribution of the language model branch, we conduct experiments under three conditions: detailed descriptions from the FarmSeg-VL dataset; a simple task instruction (“Segment the farmland.”); and no text prompt, where the entire language model branch is removed and only the DINOv3 encoder and decoder are trained. As shown in Table 10, the model with detailed prompts achieves 89.66% mIoU, outperforming simple prompts (89.12%) by 0.54% and the no-prompt variant (87.46%) by 2.20%. Similar trends hold for the other metrics: compared with the no-prompt variant, detailed prompts improve Accuracy by 1.17%, mDice by 1.25%, and Recall by 0.88%. The gap between detailed and simple prompts is relatively modest (0.54% mIoU), whereas the gap between simple prompts and no prompt is larger (1.66% mIoU), indicating that the language model branch provides substantial semantic guidance for farmland segmentation.

To provide deeper insight into how prompt complexity affects segmentation quality, Figure 8 presents qualitative comparisons. Three representative cases illustrate the benefits of detailed prompts. In the first case, a description mentioning “farmland with greenhouses” enables correct identification of greenhouse-covered agricultural areas that the simple prompt fails to recognize. In the second case, shape-related descriptions help distinguish farmland from visually similar non-agricultural regions, reducing false positive predictions. In the third case, descriptions of the road conditions between fields yield clearer boundary delineation and avoid the over-segmentation observed with the simple prompt.

The no-prompt variant also clarifies the role of visual features when text information is unavailable. With the language model branch removed, the model relies entirely on DINOv3 visual features, and the resulting 87.46% mIoU shows that DINOv3 provides a strong foundation for farmland recognition. Nevertheless, the 2.20% improvement from adding detailed text prompts indicates that linguistic guidance improves the model’s ability to disambiguate challenging cases. The comparatively small drop with simple prompts can be attributed to the uncertainty estimation in UGAA: when textual descriptions lack discriminative semantic information, the module estimates higher uncertainty and reduces the fusion weight of text-guided features, so the model relies more heavily on the DINOv3 visual features. This adaptive behavior helps keep segmentation performance stable even when text descriptions are ambiguous or uninformative.
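The gating behavior described above can be illustrated with a minimal sketch. Here the alignment confidence is a stand-in computed from the cosine similarity between a visual feature and a text embedding (an assumption; UGAA’s actual uncertainty estimator is not detailed in this section), uncertainty is one minus that confidence, and the text-guided features are added with a weight that falls as uncertainty rises. All function names are hypothetical.

```python
import math
from typing import List, Tuple


def alignment_confidence(visual: List[float], text: List[float]) -> float:
    """Stand-in confidence: cosine similarity rescaled from [-1, 1] to [0, 1]."""
    dot = sum(v * t for v, t in zip(visual, text))
    norm_v = math.sqrt(sum(v * v for v in visual))
    norm_t = math.sqrt(sum(t * t for t in text))
    if norm_v == 0.0 or norm_t == 0.0:
        return 0.0  # no usable text signal: treat as fully uncertain
    return (dot / (norm_v * norm_t) + 1.0) / 2.0


def uncertainty_gated_fusion(
    visual: List[float], text_guided: List[float], text_embedding: List[float]
) -> Tuple[List[float], float]:
    """Add text-guided features with a weight that shrinks as uncertainty grows."""
    uncertainty = 1.0 - alignment_confidence(visual, text_embedding)
    weight = 1.0 - uncertainty  # high uncertainty -> small text contribution
    fused = [v + weight * t for v, t in zip(visual, text_guided)]
    return fused, uncertainty


if __name__ == "__main__":
    visual, text_guided = [1.0, 0.0], [0.5, 0.5]
    for emb in ([1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, 0.0]):
        fused, u = uncertainty_gated_fusion(visual, text_guided, emb)
        print(emb, "uncertainty:", u, "fused:", fused)
```

When the text embedding is uninformative (zero) or misaligned, the gate closes and the output falls back to the visual features, mirroring the fallback to DINOv3 features described above.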

These ablation results collectively demonstrate that both UGAA and FECF contribute essential and complementary capabilities to our framework. UGAA provides robust handling of text-visual alignment uncertainty prevalent in farmland descriptions, while FECF ensures precise preservation of boundary details critical for accurate parcel delineation. The synergistic combination of these two modules enables our full model to achieve superior segmentation performance across diverse agricultural landscapes.

4.5. Limitation Analysis

While UGFF-VLM performs strongly across diverse agricultural regions, we identify a notable limitation in urban-rural fringe areas where farmland and built-up regions are interspersed, as illustrated in Figure 9. In such peri-urban landscapes, several factors challenge the model: small farmland parcels are fragmented by roads, buildings, and other infrastructure; vegetation in residential courtyards and gardens shares spectral characteristics with crops; and the complex spatial arrangement increases boundary ambiguity. As shown in Figure 9a–c, these conditions lead to false positive predictions in which non-agricultural green spaces are misclassified as farmland, boundary merging between adjacent micro-parcels, and irregular segmentation where farmland edges blend into the surrounding vegetation.

These failure cases suggest that the current model struggles with the high heterogeneity and fragmentation characteristic of urban-rural transition zones. Future work could address this limitation by incorporating spatial context reasoning about land use patterns, integrating building footprint information as auxiliary constraints, and developing specific training strategies for peri-urban agricultural landscapes.