HyperNCMD: A Scene-Adaptive Clutter Measurement Density Estimator for Radar Tracking via Hypernetworks and Normalizing Flows

Highlights

- We propose HyperNCMD, a scene-adaptive clutter measurement density (CMD) estimator that employs a hypernetwork to dynamically generate normalizing flow parameters conditioned on scene representations, enabling fast adaptation to unseen environments without full retraining.

- HyperNCMD leverages Random Fourier Features (RFFs) and a proposed ISAB-LSTM module to encode spatio-temporal information from raw radar measurements, and further improves adaptation to novel environments via Feature-wise Linear Modulation (FiLM)-based test-time fine-tuning.

- HyperNCMD demonstrates strong robustness and estimation accuracy across spatially and temporally varying clutter, highlighting the benefit of hypernetwork-driven parameter generation for adaptive radar CMD modeling.

- The proposed framework provides a scalable and deployment-friendly solution for CMD estimation, enabling more reliable clutter distribution modeling for multi-target tracking (MTT) and downstream radar perception in complex environments.

Abstract

1. Introduction

- Environmental Perception: Beyond tracking, CMD estimation can reveal latent structural cues embedded in clutter measurements. In highway environments, for example, ref. [11] exploits persistent clutter reflections to extract road contours, which can serve as geometric priors for downstream perception tasks such as vehicle tracking [12].

- Scene-adaptive CMD estimation via hypernetworks. A hypernetwork-based architecture is designed to generate NF parameters conditioned on scene embeddings, enabling rapid adaptation to previously unseen environments with minimal tuning and facilitating knowledge transfer across scenes (see Section 4.1.2 and Section 4.1.3).

- Temporal-aware scene encoding. A Temporal Set Transformer [18]-based encoder is proposed to capture spatio-temporal variations in radar clutter. Raw radar measurements are embedded using Random Fourier Features (RFFs) [19], and an ISAB-LSTM architecture models spatio-temporal dynamics to produce compact yet expressive scene-level representations (see Section 4.1.1).

- Spatio-temporal data augmentation for point-based radar measurements. Two lightweight augmentation strategies—Coordinate Flip and Random Context Length Truncation—are introduced to enhance robustness and generalization for spatio-temporal radar data (see Section 4.2.2).

- Efficient test-time adaptation via lightweight fine-tuning. A Feature-wise Linear Modulation (FiLM) [20]-based fine-tuning mechanism is developed to enable efficient adaptation to unseen scenes at test time, achieving a favorable trade-off between accuracy and computational efficiency (see Section 4.3.1).

2. Background

2.1. CMD Modeling

2.2. Neural Density Estimation

2.3. Hypernetwork Architecture

3. Problem Statement

4. Proposed Method

4.1. The HyperNCMD Model

4.1.1. Scene Feature Extractor

- (1) Random Fourier Feature Mapping

- (2) ISAB-LSTM Module

- (3) Mean Pooling

- (4) Temporal Attention Pooling
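As a rough illustration of the first and third steps, the sketch below lifts raw 2-D measurements into Random Fourier Features and mean-pools them into a permutation-invariant frame feature. It assumes the cosine form of Rahimi and Recht [19]; the helper names `make_rff`, `rff_map`, and `mean_pool`, the bandwidth `sigma`, and the feature count are hypothetical and are not taken from the paper.

```python
import math
import random

def make_rff(in_dim, num_features, sigma=1.0, seed=0):
    """Draw the random projection (assumed Gaussian) and phase offsets once."""
    rng = random.Random(seed)
    W = [[rng.gauss(0.0, 1.0 / sigma) for _ in range(in_dim)]
         for _ in range(num_features)]
    b = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(num_features)]
    return W, b

def rff_map(x, W, b):
    """Map one measurement x to sqrt(2/D) * cos(W x + b)."""
    scale = math.sqrt(2.0 / len(W))
    return [scale * math.cos(sum(w * xi for w, xi in zip(row, x)) + bi)
            for row, bi in zip(W, b)]

def mean_pool(features):
    """Average per-point features; the result ignores point order."""
    n = len(features)
    return [sum(col) / n for col in zip(*features)]

# Hypothetical frame of three 2-D clutter measurements.
W, b = make_rff(in_dim=2, num_features=64, sigma=0.5)
frame = [(0.1, 0.2), (0.4, -0.3), (-0.7, 0.9)]
frame_feature = mean_pool([rff_map(p, W, b) for p in frame])
```

Because the pooled vector does not depend on the order of the measurements, it is a natural input for set-based encoders such as the ISAB blocks named above.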

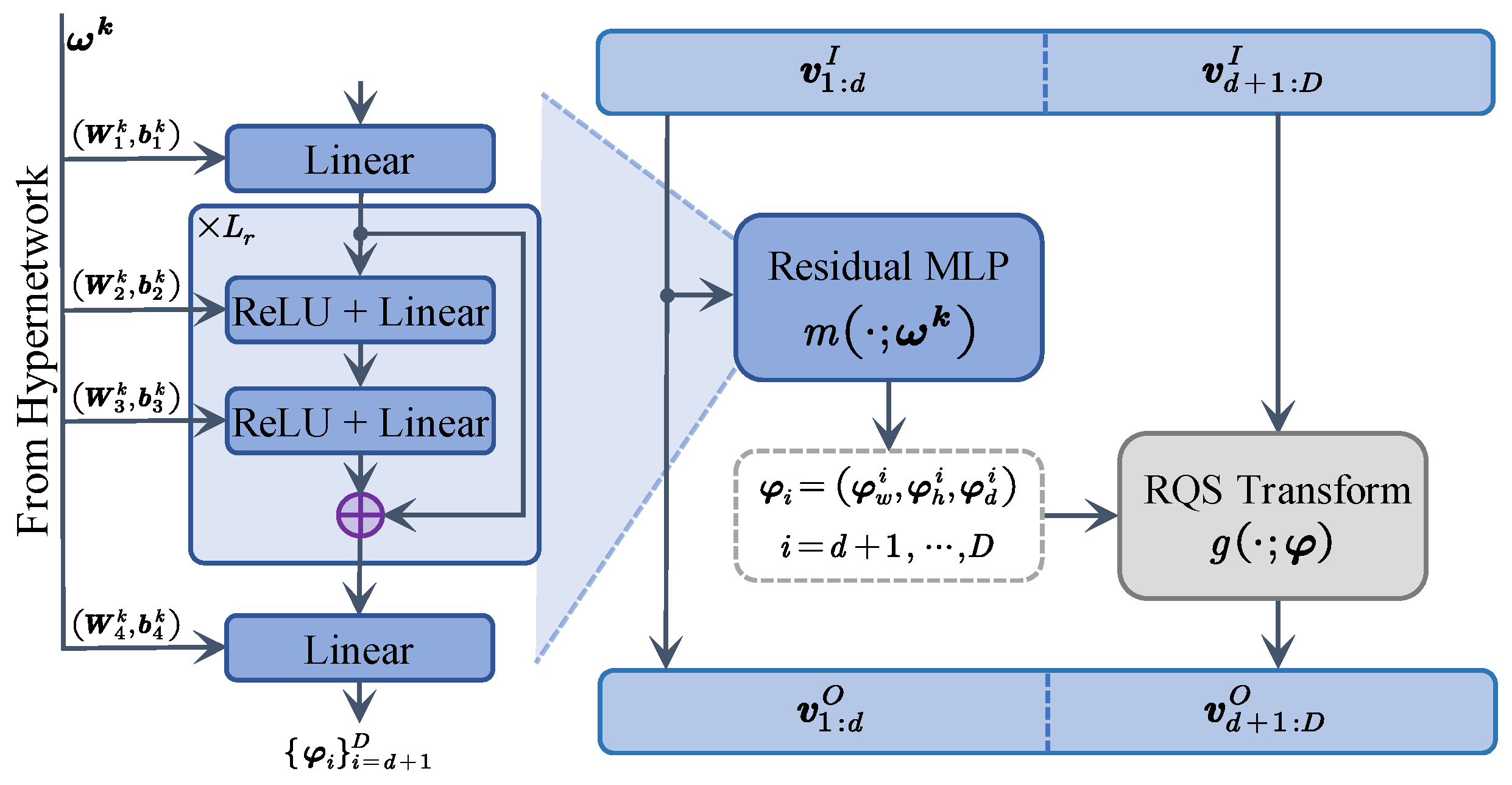

4.1.2. Hypernetwork-Based Parameter Generator

4.1.3. Parameterized Normalizing Flow Model
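To make the idea of hypernetwork-generated flow parameters concrete, the following minimal sketch maps a scene embedding to the parameters of a single element-wise affine flow and evaluates the resulting log-density. Everything in it is an illustrative assumption rather than the HyperNCMD architecture: the linear hypernetwork `hyper_W`, the one-layer affine flow, and all dimensions are hypothetical.

```python
import math
import random

def make_hypernetwork(embed_dim, data_dim, seed=0):
    """Hypothetical linear hypernetwork: one weight row per generated flow parameter."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 0.1) for _ in range(embed_dim)]
            for _ in range(2 * data_dim)]

def generate_flow_params(hyper_W, embedding):
    """Return (log_scale, shift) of an element-wise affine flow for this scene."""
    out = [sum(w * e for w, e in zip(row, embedding)) for row in hyper_W]
    d = len(out) // 2
    return out[:d], out[d:]

def flow_log_prob(x, log_scale, shift):
    """Log-density of x under z = (x - shift) * exp(-log_scale), standard normal base."""
    logp = 0.0
    for xi, s, t in zip(x, log_scale, shift):
        z = (xi - t) * math.exp(-s)
        # Base log-probability plus the log-determinant term log|dz/dx| = -s.
        logp += -0.5 * (z * z + math.log(2.0 * math.pi)) - s
    return logp

# Two hypothetical scene embeddings yield two different densities
# from the same hypernetwork weights.
hyper_W = make_hypernetwork(embed_dim=4, data_dim=2)
scene_a = [1.0, 0.0, 0.5, -0.5]
scene_b = [-1.0, 2.0, 0.0, 0.3]
point = [0.2, -0.1]
lp_a = flow_log_prob(point, *generate_flow_params(hyper_W, scene_a))
lp_b = flow_log_prob(point, *generate_flow_params(hyper_W, scene_b))
```

The point of the sketch is that only the embedding changes between scenes; the hypernetwork weights are shared, which is what allows adaptation without retraining a separate flow per scene.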

4.2. Training

4.2.1. Loss Function

- Context set, which provides past observations for generating a scene-level context embedding.

- Query set, used to evaluate the NLL under the NF model.
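The context/query split can be sketched as follows. This is a simplified stand-in, not the training loss of the paper: a diagonal Gaussian fitted to the context points replaces the encoder, hypernetwork, and flow, and taking the frame immediately after the context window as the query set is an assumption. All function names are hypothetical.

```python
import math

def split_context_query(frames, context_len):
    """First context_len frames form the context set; the next frame is the query set."""
    return frames[:context_len], frames[context_len]

def fit_gaussian(context):
    """Stand-in for encoder + hypernetwork + flow: per-axis mean and std of context points."""
    pts = [p for frame in context for p in frame]
    dim = len(pts[0])
    mean = [sum(p[d] for p in pts) / len(pts) for d in range(dim)]
    std = [max(math.sqrt(sum((p[d] - mean[d]) ** 2 for p in pts) / len(pts)), 1e-6)
           for d in range(dim)]
    return mean, std

def log_prob(x, mean, std):
    """Log-density of one point under the diagonal Gaussian."""
    return sum(-0.5 * ((xi - m) / s) ** 2 - math.log(s) - 0.5 * math.log(2.0 * math.pi)
               for xi, m, s in zip(x, mean, std))

def nll_loss(query, mean, std):
    """Average negative log-likelihood over the query points."""
    return -sum(log_prob(x, mean, std) for x in query) / len(query)

# Hypothetical sequence of three frames, each holding two 2-D measurements.
frames = [[(0.0, 0.1), (0.2, -0.1)],
          [(0.1, 0.0), (-0.2, 0.2)],
          [(0.05, 0.05), (0.1, -0.05)]]
context, query = split_context_query(frames, context_len=2)
loss = nll_loss(query, *fit_gaussian(context))
```

In the actual model the density would come from the normalizing flow whose parameters are generated from the context embedding; only the averaging of the query NLL carries over directly.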

4.2.2. Data Augmentation
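The two augmentations named in the contributions, Coordinate Flip and Random Context Length Truncation, might look roughly like the sketch below. It assumes 2-D measurements in a sensor-centred frame, mirroring about the coordinate axes, and truncation that keeps the most recent frames; the function names, the 0.5 flip probability, and `min_len` are hypothetical choices.

```python
import random

def coordinate_flip(frames, flip_x, flip_y):
    """Mirror every measurement about the chosen axes."""
    sx = -1.0 if flip_x else 1.0
    sy = -1.0 if flip_y else 1.0
    return [[(sx * x, sy * y) for (x, y) in frame] for frame in frames]

def random_context_truncation(frames, min_len, rng):
    """Keep only the most recent frames, with a randomly drawn context length."""
    keep = rng.randint(min_len, len(frames))  # both bounds inclusive
    return frames[-keep:]

def augment(context, query, rng, min_len=1):
    """Apply the same flip to context and query, then truncate the context."""
    flip_x, flip_y = rng.random() < 0.5, rng.random() < 0.5
    context = coordinate_flip(context, flip_x, flip_y)
    query = coordinate_flip([query], flip_x, flip_y)[0]
    return random_context_truncation(context, min_len, rng), query

# Hypothetical usage on a three-frame context and a one-point query.
rng = random.Random(0)
context = [[(1.0, 2.0)], [(3.0, -4.0)], [(5.0, 6.0)]]
aug_context, aug_query = augment(context, [(0.5, 0.5)], rng)
```

The flip is applied jointly to the context and query sets so that the density being evaluated stays geometrically consistent with the observations that condition it.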

4.2.3. Training Procedure

4.3. Inference

4.3.1. Fine-Tuning with FiLM

4.3.2. Inference Pipeline

5. Simulation Experiments

5.1. Experimental Setup

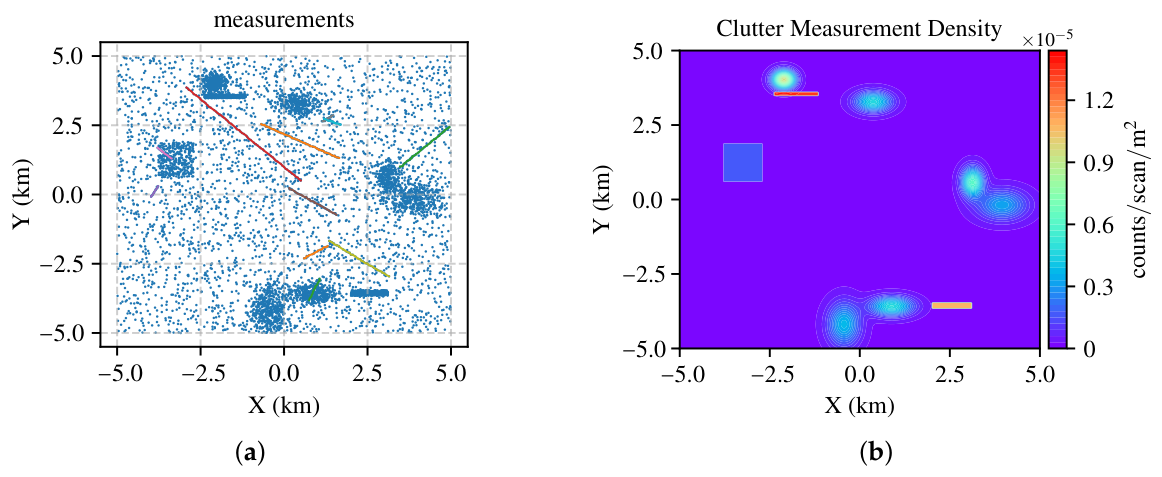

5.1.1. Datasets

5.1.2. Evaluation Metrics

5.1.3. Baselines

5.1.4. Implementation Details

5.2. Results and Analysis

5.2.1. CMD Evaluation Results

5.2.2. MTT Evaluation Results

5.2.3. Ablation Studies and Visualization

- (i) ISAB + Temporal Mean Pooling, which aggregates contextual frames via simple averaging without temporal modeling;

- (ii) ISAB-LSTM + Temporal Mean Pooling, which incorporates an LSTM to capture sequential dependencies;

- (iii) ISAB-LSTM + Temporal Attention Pooling, which further introduces a temporal attention mechanism to adaptively emphasize informative frames; and

- (iv) ISAB + LSTM + Temporal Attention Pooling, which directly aggregates temporal information at the spatial point level using an LSTM.
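The difference between the two temporal pooling variants compared above can be sketched in a few lines. This is a generic dot-product attention pooling with a single query vector, assumed here for illustration; the paper's exact attention form is not reproduced, and `query_vec` stands in for a learned parameter.

```python
import math

def temporal_mean_pool(frame_feats):
    """Average frame features with equal weight for every frame."""
    n = len(frame_feats)
    return [sum(col) / n for col in zip(*frame_feats)]

def temporal_attention_pool(frame_feats, query_vec):
    """Weight frames by a softmax over scaled dot-product scores, then sum."""
    dim = len(query_vec)
    scores = [sum(q * f for q, f in zip(query_vec, feat)) / math.sqrt(dim)
              for feat in frame_feats]
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    pooled = [sum(w * feat[d] for w, feat in zip(weights, frame_feats))
              for d in range(dim)]
    return pooled, weights

# Hypothetical features for three frames.
feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mean_feat = temporal_mean_pool(feats)
attn_feat, attn_weights = temporal_attention_pool(feats, [4.0, 4.0])
```

With an all-zero query vector the attention weights become uniform and the result coincides with mean pooling, so variant (iii) can be read as a learned generalization of variant (ii).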

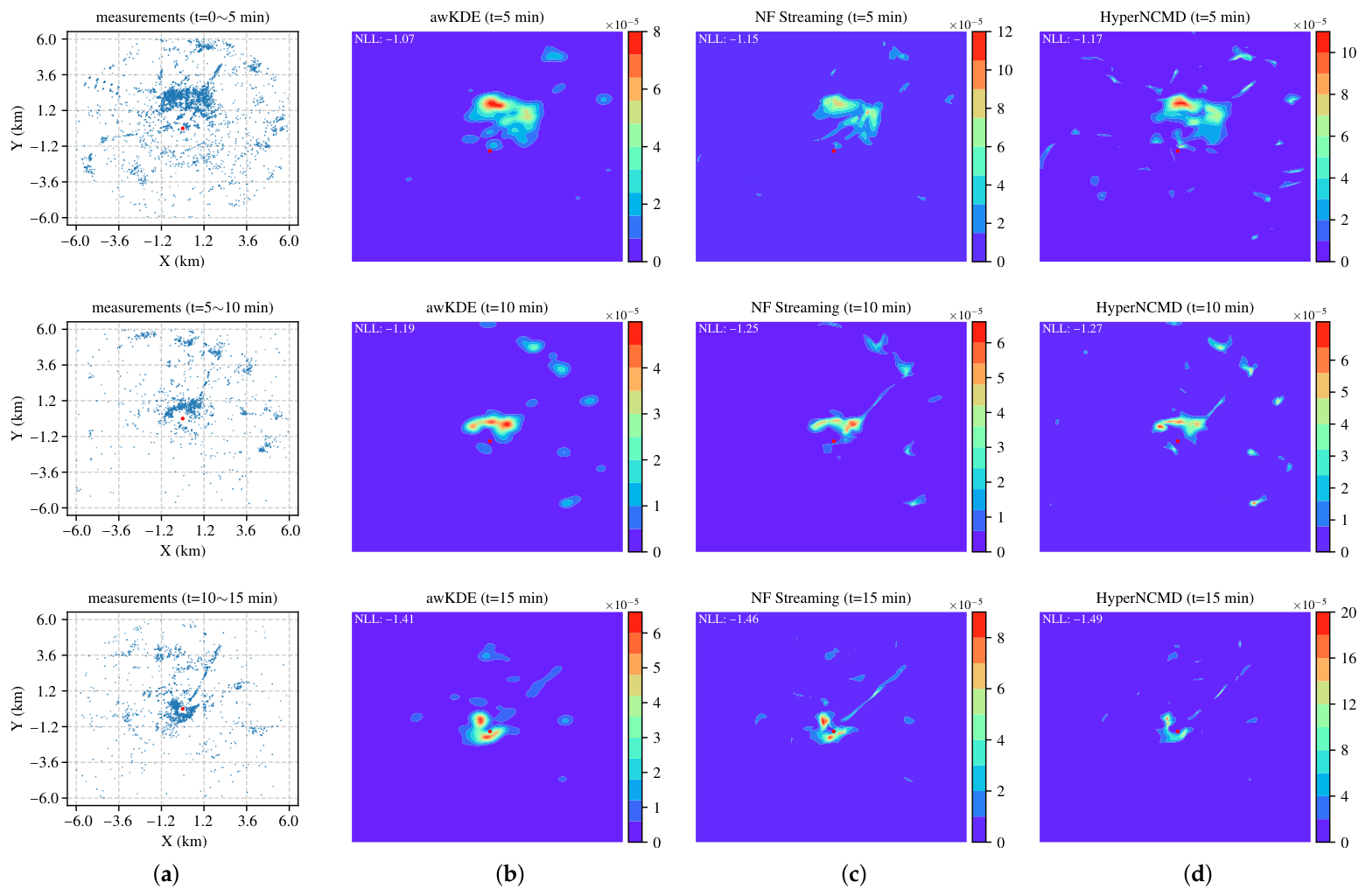

6. Real-World Experiments

6.1. Experimental Setup

6.1.1. Data Collection

6.1.2. Evaluation Metrics

6.1.3. Implementation Details

6.2. Results and Analysis

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A

Appendix A.1. Training Details for HyperNCMD

Algorithm A1. Training Procedure for HyperNCMD

Input: training set; initial parameters; learning rate; number of epochs; batch size.

Output: trained parameters.

Appendix A.2. Test-Time Fine-Tuning Details

Algorithm A2. Test-Time Fine-Tuning with FiLM

Input: test scene data; pretrained parameters; initial FiLM parameters; learning rate; adaptation steps.

Output: adapted FiLM parameters.
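The inputs and output listed for Algorithm A2 suggest a loop in which only the FiLM scale and shift are updated while the pretrained parameters stay frozen. The sketch below illustrates that pattern under strong simplifying assumptions: the frozen network is reduced to a fixed feature vector `h`, the modulated features are used directly as the mean of a unit-variance Gaussian, and gradients are written out by hand. None of these choices are taken from the paper.

```python
import math

def film(h, gamma, beta):
    """Feature-wise linear modulation: gamma * h + beta, element by element."""
    return [g * hi + b for g, hi, b in zip(gamma, h, beta)]

def nll(data, mu):
    """Average NLL of the data under a unit-variance Gaussian centred at mu."""
    const = 0.5 * len(mu) * math.log(2.0 * math.pi)
    return sum(0.5 * sum((x - m) ** 2 for x, m in zip(p, mu)) + const
               for p in data) / len(data)

def finetune_film(data, h, gamma, beta, lr=0.1, steps=200):
    """Gradient descent on the NLL with respect to gamma and beta only; h is frozen."""
    gamma, beta = list(gamma), list(beta)
    for _ in range(steps):
        mu = film(h, gamma, beta)
        for d in range(len(h)):
            grad_mu = sum(mu[d] - p[d] for p in data) / len(data)
            gamma[d] -= lr * grad_mu * h[d]  # chain rule: d mu / d gamma = h
            beta[d] -= lr * grad_mu          # chain rule: d mu / d beta = 1
    return gamma, beta

# Hypothetical frozen features and a small test scene.
h = [0.5, -1.0]
test_scene = [(1.0, 2.0), (1.2, 1.8), (0.8, 2.2)]
gamma0, beta0 = [1.0, 1.0], [0.0, 0.0]
gamma, beta = finetune_film(test_scene, h, gamma0, beta0)
```

Restricting the update to two small vectors is what keeps this kind of adaptation cheap: the number of trainable values scales with the feature width rather than with the size of the pretrained model.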

References

- Musicki, D.; Suvorova, S.; Morelande, M.; Moran, B. Clutter map and target tracking. In Proceedings of the 7th International Conference on Information Fusion, Philadelphia, PA, USA, 25–28 July 2005; Volume 1, pp. 69–76.

- Musicki, D.; Evans, R. Clutter map information for data association and track initialization. IEEE Trans. Aerosp. Electron. Syst. 2004, 40, 387–398.

- Fahad, A.M.; Saritha, D.S. Survey on Clutter Spatial Intensity Estimation Methods for Target Tracking. J. Adv. Inf. Fusion 2022, 17, 3–13.

- Luo, X.; Zhang, B.; Liu, J.; Lin, H.; Yu, J. Researches on the method of clutter suppression in radar data processing. Syst. Eng. Electron. 2016, 38, 37–44.

- Bar-Shalom, Y.; Li, X.R. Multitarget-Multisensor Tracking: Principles and Techniques; YBS Publications: Storrs, CT, USA, 1995.

- Reid, D. An Algorithm for Tracking Multiple Targets. IEEE Trans. Autom. Control 1979, 24, 843–854.

- Challa, S.; Morelande, M.R.; Musicki, D.; Evans, R.J. Fundamentals of Object Tracking; Cambridge University Press: Cambridge, UK, 2011.

- Lv, N.; Lian, F.; Han, C. Unknown clutter estimation by FMM approach in multitarget tracking algorithm. Math. Probl. Eng. 2014, 2014, 938242.

- Chen, X.; Tharmarasa, R.; Kirubarajan, T.; McDonald, M. Online clutter estimation using a Gaussian kernel density estimator for multitarget tracking. IET Radar Sonar Navig. 2015, 9, 1–9.

- Wang, B.; Wang, X. Bandwidth selection for weighted kernel density estimation. arXiv 2007, arXiv:0709.1616.

- Cao, Z.; Yang, J.; Sun, W.; Lu, X.; Tan, K.; Dai, Z.; Yu, W.; Gu, H. Online Clutter Measurement Modeling for Surveillance Radar Tracking via Normalizing Flows. IEEE Trans. Instrum. Meas. 2025, 74, 1–18.

- Li, T. Single-Road-Constrained Positioning Based on Deterministic Trajectory Geometry. IEEE Commun. Lett. 2019, 23, 80–83.

- Li, X.R.; Li, N. Integrated Real-Time Estimation of Clutter Density for Tracking. IEEE Trans. Signal Process. 2000, 48, 2797–2805.

- Mahler, R. CPHD and PHD Filters for Unknown Backgrounds I: Dynamic Data Clustering. In Proceedings of the SPIE Defense, Security, and Sensing, Orlando, FL, USA, 14–15 April 2009; Volume 7330, pp. 140–151.

- Mahler, R. CPHD and PHD Filters for Unknown Backgrounds II: Multitarget Filtering in Dynamic Clutter. In Proceedings of the SPIE Defense, Security, and Sensing, Orlando, FL, USA, 14–15 April 2009; Volume 7330, pp. 152–163.

- Chen, X.; Tharmarasa, R.; Pelletier, M.; Kirubarajan, T. Integrated Clutter Estimation and Target Tracking Using Poisson Point Processes. IEEE Trans. Aerosp. Electron. Syst. 2012, 48, 1210–1235.

- Kobyzev, I.; Prince, S.J.; Brubaker, M.A. Normalizing flows: An introduction and review of current methods. IEEE Trans. Pattern Anal. Mach. Intell. 2020, 43, 3964–3979.

- Lee, J.; Lee, Y.; Kim, J.; Kosiorek, A.; Choi, S.; Teh, Y.W. Set Transformer: A Framework for Attention-Based Permutation-Invariant Neural Networks. In Proceedings of the 36th International Conference on Machine Learning (ICML), Long Beach, CA, USA, 9–15 June 2019; pp. 3744–3753.

- Rahimi, A.; Recht, B. Random Features for Large-Scale Kernel Machines. In Proceedings of the 20th Advances in Neural Information Processing Systems (NeurIPS), Vancouver, BC, Canada, 3–6 December 2007; pp. 1177–1184.

- Perez, E.; Strub, F.; de Vries, H.; Dumoulin, V.; Courville, A.C. FiLM: Visual Reasoning With a General Conditioning Layer. In Proceedings of the 32nd AAAI Conference on Artificial Intelligence (AAAI), New Orleans, LA, USA, 2–7 February 2018; pp. 3942–3951.

- Papamakarios, G.; Nalisnick, E.; Rezende, D.J.; Mohamed, S.; Lakshminarayanan, B. Normalizing flows for probabilistic modeling and inference. J. Mach. Learn. Res. 2021, 22, 1–64.

- Dinh, L.; Krueger, D.; Bengio, Y. NICE: Non-linear Independent Components Estimation. In Proceedings of the 3rd International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015.

- Dinh, L.; Sohl-Dickstein, J.; Bengio, S. Density estimation using Real NVP. In Proceedings of the 5th International Conference on Learning Representations (ICLR), Toulon, France, 24–26 April 2017.

- Papamakarios, G.; Murray, I.; Pavlakou, T. Masked Autoregressive Flow for Density Estimation. In Proceedings of the 31st Advances in Neural Information Processing Systems (NeurIPS), Long Beach, CA, USA, 4–9 December 2017; pp. 2338–2347.

- Durkan, C.; Bekasov, A.; Murray, I.; Papamakarios, G. Neural Spline Flows. In Proceedings of the 32nd Advances in Neural Information Processing Systems (NeurIPS), Vancouver, BC, Canada, 8–14 December 2019; pp. 7509–7520.

- Ha, D.; Dai, A.M.; Le, Q.V. HyperNetworks. In Proceedings of the 5th International Conference on Learning Representations (ICLR), Toulon, France, 24–26 April 2017.

- Rusu, A.A.; Rao, D.; Sygnowski, J.; Vinyals, O.; Pascanu, R.; Osindero, S.; Hadsell, R. Meta-Learning with Latent Embedding Optimization. In Proceedings of the 7th International Conference on Learning Representations (ICLR), New Orleans, LA, USA, 6–9 May 2019.

- von Oswald, J.; Henning, C.; Sacramento, J.; Grewe, B.F. Continual Learning with Hypernetworks. In Proceedings of the 8th International Conference on Learning Representations (ICLR), Addis Ababa, Ethiopia, 26–30 April 2020.

- Brock, A.; Lim, T.; Ritchie, J.M.; Weston, N. SMASH: One-Shot Model Architecture Search Through HyperNetworks. In Proceedings of the 6th International Conference on Learning Representations (ICLR), Vancouver, BC, Canada, 30 April–3 May 2018.

- Oh, G.; Valois, J.S. HCNAF: Hyper-Conditioned Neural Autoregressive Flow and Its Application for Probabilistic Occupancy Map Forecasting. In Proceedings of the 2020 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 14538–14547.

- Spurek, P.; Zieba, M.; Tabor, J.; Trzcinski, T. HyperFlow: Representing 3D Objects as Surfaces. arXiv 2020, arXiv:2006.08710.

- Grathwohl, W.; Chen, R.T.; Bettencourt, J.; Sutskever, I.; Duvenaud, D. FFJORD: Free-Form Continuous Dynamics for Scalable Reversible Generative Models. In Proceedings of the 7th International Conference on Learning Representations (ICLR), New Orleans, LA, USA, 6–9 May 2019.

- Sun, J.; Wang, Z.; Li, J.; Lu, C. Unified and Fast Human Trajectory Prediction Via Conditionally Parameterized Normalizing Flow. IEEE Robot. Autom. Lett. 2022, 7, 842–849.

- Fons, E.; Sztrajman, A.; El-Laham, Y.; Iosifidis, A.; Vyetrenko, S. HyperTime: Implicit Neural Representation for Time Series. arXiv 2022, arXiv:2208.05836.

- Kosiorek, A.R.; Strathmann, H.; Zoran, D.; Moreno, P.; Schneider, R.; Mokrá, S.; Rezende, D.J. NeRF-VAE: A Geometry Aware 3D Scene Generative Model. In Proceedings of the 38th International Conference on Machine Learning (ICML), Virtual Event, 18–24 July 2021; pp. 5742–5752.

- Zaheer, M.; Kottur, S.; Ravanbakhsh, S.; Poczos, B.; Salakhutdinov, R.R.; Smola, A.J. Deep Sets. In Proceedings of the 30th Advances in Neural Information Processing Systems (NeurIPS), Long Beach, CA, USA, 4–9 December 2017; pp. 3391–3401.

- Qi, C.R.; Su, H.; Mo, K.; Guibas, L.J. PointNet: Deep Learning on Point Sets for 3D Classification and Segmentation. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 77–85.

- Hochreiter, S.; Schmidhuber, J. Long Short-Term Memory. Neural Comput. 1997, 9, 1735–1780.

- Tancik, M.; Srinivasan, P.; Mildenhall, B.; Fridovich-Keil, S.; Raghavan, N.; Singhal, U.; Ramamoorthi, R.; Barron, J.; Ng, R. Fourier Features Let Networks Learn High Frequency Functions in Low Dimensional Domains. In Proceedings of the 33rd Advances in Neural Information Processing Systems (NeurIPS), Virtual, 6–12 December 2020; pp. 7537–7547.

- Shi, X.; Chen, Z.; Wang, H.; Yeung, D.Y.; Wong, W.K.; Woo, W.C. Convolutional LSTM Network: A machine learning approach for precipitation nowcasting. In Proceedings of the 29th Advances in Neural Information Processing Systems (NeurIPS), Montreal, QC, Canada, 7–12 December 2015; pp. 802–810.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. In Proceedings of the 30th Advances in Neural Information Processing Systems (NeurIPS), Long Beach, CA, USA, 4–9 December 2017; pp. 5998–6008.

- Ba, J.L.; Kiros, J.R.; Hinton, G.E. Layer Normalization. arXiv 2016, arXiv:1607.06450.

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-Excitation Networks. In Proceedings of the 2018 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 7132–7141.

- Kingma, D.P.; Dhariwal, P. Glow: Generative Flow with Invertible 1x1 Convolutions. In Proceedings of the 31st Advances in Neural Information Processing Systems (NeurIPS), Montréal, QC, Canada, 3–8 December 2018; pp. 10236–10245.

- Huynh-Thu, Q.; Ghanbari, M. Scope of validity of PSNR in image/video quality assessment. Electron. Lett. 2008, 44, 800–801.

- Wang, Z.; Bovik, A.; Sheikh, H.; Simoncelli, E. Image quality assessment: From error visibility to structural similarity. IEEE Trans. Image Process. 2004, 13, 600–612.

- Lewis, J. Fast normalized cross-correlation. Vis. Interface 1995, 10, 120–123.

- Kullback, S.; Leibler, R. On information and sufficiency. Ann. Math. Stat. 1951, 22, 79–86.

- Bernardin, K.; Stiefelhagen, R. Evaluating multiple object tracking performance: The clear mot metrics. EURASIP J. Image Video Process. 2008, 2008, 246309.

- Musicki, D.; Morelande, M. Non Parametric Target Tracking in Non Uniform Clutter. In Proceedings of the 7th International Conference on Information Fusion, Philadelphia, PA, USA, 25–28 July 2005; Volume 1, pp. 48–53.

- Song, T.L.; Musicki, D. Adaptive Clutter Measurement Density Estimation for Improved Target Tracking. IEEE Trans. Aerosp. Electron. Syst. 2011, 47, 1457–1466.

- Kingma, D.P.; Ba, J. Adam: A Method for Stochastic Optimization. In Proceedings of the 3rd International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015.

- Loshchilov, I.; Hutter, F. SGDR: Stochastic Gradient Descent with Warm Restarts. In Proceedings of the 5th International Conference on Learning Representations (ICLR), Toulon, France, 24–26 April 2017.

- Paszke, A.; Gross, S.; Massa, F.; Lerer, A.; Bradbury, J.; Chanan, G.; Killeen, T.; Lin, Z.; Gimelshein, N.; Antiga, L.; et al. PyTorch: An Imperative Style, High-Performance Deep Learning Library. In Proceedings of the 33rd Advances in Neural Information Processing Systems (NeurIPS), Vancouver, BC, Canada, 8–14 December 2019; pp. 8024–8035.

- van der Maaten, L.; Hinton, G. Visualizing Data using t-SNE. J. Mach. Learn. Res. 2008, 9, 2579–2605.

- Yang, J.; Lu, X.; Dai, Z.; Yu, W.; Tan, K. A Cylindrical Phased Array Radar System for UAV Detection. In Proceedings of the 6th International Conference on Intelligent Computing and Signal Processing (ICSP), Xi’an, China, 9–11 April 2021; pp. 894–898.

| Dataset ID | Evaluation Task | Clutter Type | Duration | #Scenes |

|---|---|---|---|---|

| DS-I | CMD | Static | 50 s | 1000 |

| DS-II | CMD | Dynamic | 100 s | 1000 |

| DS-III | MTT | Static | 150 s | 500 |

| DS-IV | MTT | Dynamic | 150 s | 500 |

| Methods | Platform | RMSE | MAE | PSNR | SSIM | NCC | KLD | Time |

|---|---|---|---|---|---|---|---|---|

| HistSpatial [1] | CPU | 8.68 ± 4.91 | 2.32 ± 0.58 | 30.39 ± 4.45 | 0.90 ± 0.04 | 0.74 ± 0.14 | 0.30 ± 0.13 | 16.4 ± 2.72 |

| SCMDE [51] | CPU | 9.62 ± 5.50 | 2.61 ± 0.58 | 29.51 ± 4.40 | 0.88 ± 0.04 | 0.73 ± 0.13 | 0.32 ± 0.12 | 226.1 ± 57.7 |

| FMM [8] | GPU | 9.93 ± 4.29 | 2.89 ± 0.32 | 28.90 ± 5.64 | 0.76 ± 0.13 | 0.76 ± 0.10 | 0.99 ± 0.44 | 581.9 ± 107.3 |

| KDE [9] | GPU | 7.66 ± 3.97 | 3.17 ± 0.87 | 31.35 ± 4.44 | 0.78 ± 0.08 | 0.82 ± 0.08 | 0.82 ± 0.58 | 1024.0 ± 273.7 |

| awKDE [10] | GPU | 8.13 ± 5.96 | 2.32 ± 0.43 | 31.35 ± 4.13 | 0.85 ± 0.08 | 0.81 ± 0.09 | 0.41 ± 0.11 | 0.14 ± 0.73 |

| NF Streaming [11] | GPU | 6.56 ± 3.50 | 1.89 ± 0.35 | 32.76 ± 5.16 | 0.89 ± 0.05 | 0.86 ± 0.07 | 0.25 ± 0.07 | 950.1 ± 33.4 |

| HyperNCMD (w/o FiLM) | GPU | 6.43 ± 4.48 | 1.15 ± 0.35 | 33.4 ± 5.19 | 0.96 ± 0.02 | 0.88 ± 0.07 | 0.11 ± 0.06 | 68.1 ± 10.7 |

| HyperNCMD (w/ FiLM) | GPU | 6.12 ± 3.95 | 1.16 ± 0.32 | 33.7 ± 5.35 | 0.96 ± 0.02 | 0.89 ± 0.06 | 0.11 ± 0.05 | 227.3 ± 10.0 |

| Methods | Platform | RMSE | MAE | PSNR | SSIM | NCC | KLD | Time |

|---|---|---|---|---|---|---|---|---|

| HistSpatial [1] | CPU | 9.10 ± 5.13 | 2.37 ± 0.60 | 29.7 ± 4.12 | 0.90 ± 0.04 | 0.72 ± 0.13 | 0.31 ± 0.14 | 17.6 ± 2.82 |

| SCMDE [51] | CPU | 9.48 ± 5.03 | 2.61 ± 0.57 | 29.2 ± 4.33 | 0.88 ± 0.05 | 0.72 ± 0.13 | 0.32 ± 0.13 | 177.5 ± 51.3 |

| FMM [8] | GPU | 10.24 ± 4.51 | 2.95 ± 0.33 | 28.28 ± 5.33 | 0.75 ± 0.14 | 0.74 ± 0.10 | 1.02 ± 0.48 | 634.2 ± 106.5 |

| KDE [9] | GPU | 8.10 ± 4.65 | 3.86 ± 2.64 | 30.67 ± 4.12 | 0.76 ± 0.08 | 0.80 ± 0.08 | 0.49 ± 0.21 | 1029.0 ± 268.7 |

| awKDE [10] | GPU | 8.01 ± 5.23 | 2.34 ± 0.41 | 31.00 ± 4.08 | 0.84 ± 0.08 | 0.80 ± 0.09 | 0.41 ± 0.12 | 0.23 ± 1.79 |

| NF Streaming [11] | GPU | 6.97 ± 3.74 | 1.93 ± 0.33 | 31.90 ± 4.75 | 0.89 ± 0.06 | 0.84 ± 0.07 | 0.26 ± 0.07 | 912.7 ± 54.6 |

| HyperNCMD (w/o FiLM) | GPU | 6.64 ± 4.15 | 1.21 ± 0.35 | 32.63 ± 4.71 | 0.96 ± 0.02 | 0.86 ± 0.07 | 0.13 ± 0.06 | 113.2 ± 41.2 |

| HyperNCMD (w/ FiLM) | GPU | 6.40 ± 3.84 | 1.21 ± 0.32 | 32.87 ± 4.81 | 0.96 ± 0.02 | 0.87 ± 0.06 | 0.12 ± 0.05 | 232.1 ± 9.2 |

| Methods | MOTA | MOTP | FTR | P | R | FP | FN | IDS | Frag |

|---|---|---|---|---|---|---|---|---|---|

| HistSpatial [1] | 92.52 | 86.09 | 0.140 | 96.33 | 96.23 | 5822 | 5981 | 62 | 6441 |

| SCMDE [51] | 92.46 | 86.09 | 0.121 | 96.80 | 95.67 | 5021 | 6869 | 58 | 6397 |

| FMM [8] | 80.01 | 86.06 | 0.546 | 86.48 | 94.92 | 23,526 | 8053 | 45 | 6476 |

| awKDE [10] | 94.75 | 86.06 | 0.066 | 98.24 | 96.52 | 2733 | 5500 | 72 | 6432 |

| NF Streaming [11] | 95.35 | 86.08 | 0.052 | 98.63 | 96.75 | 2131 | 5141 | 78 | 6436 |

| HyperNCMD (w/ FiLM) | 95.39 | 86.08 | 0.049 | 98.70 | 96.72 | 2015 | 5188 | 78 | 6433 |

| Exact | 95.47 | 86.07 | 0.051 | 98.64 | 96.86 | 2118 | 4959 | 80 | 6465 |

| Methods | MOTA | MOTP | FTR | P | R | FP | FN | IDS | Frag |

|---|---|---|---|---|---|---|---|---|---|

| HistSpatial [1] | 91.67 | 86.20 | 0.164 | 95.71 | 96.01 | 6833 | 6332 | 55 | 6360 |

| SCMDE [51] | 91.86 | 86.19 | 0.131 | 96.52 | 95.33 | 5450 | 7405 | 56 | 6300 |

| FMM [8] | 70.83 | 86.18 | 0.85 | 80.04 | 94.40 | 37,061 | 8822 | 30 | 6356 |

| awKDE [10] | 94.20 | 86.20 | 0.082 | 97.84 | 96.37 | 3371 | 5741 | 61 | 6385 |

| NF Streaming [11] | 95.06 | 86.29 | 0.057 | 98.50 | 96.57 | 2331 | 5417 | 64 | 6382 |

| HyperNCMD (w/ FiLM) | 95.20 | 86.20 | 0.052 | 98.62 | 96.59 | 2133 | 5391 | 67 | 6386 |

| Exact | 95.49 | 86.20 | 0.050 | 98.67 | 96.85 | 2068 | 4988 | 72 | 6397 |

| Stage | Module Added | PSNR | NCC | KLD | ΔPSNR |

|---|---|---|---|---|---|

| 0 | ISAB (Baseline) | 27.00 | 0.310 | 0.766 | – |

| 1 | +RFFs | 29.66 | 0.716 | 0.296 | +2.66 |

| 2 | +Temporal Modeling | 29.82 | 0.739 | 0.265 | +0.16 |

| 3 | +SE Block | 30.02 | 0.749 | 0.253 | +0.20 |

| 4 | +Coordinate Flip | 31.11 | 0.794 | 0.205 | +1.09 |

| 5 | +Rand. Ctx. Length | 31.22 | 0.798 | 0.196 | +0.11 |

| Ctx. Len | Module | PSNR | NCC | KLD |

|---|---|---|---|---|

| 10 | ISAB + Temporal Mean | 30.76 | 0.779 | 0.220 |

| 10 | ISAB-LSTM + Temporal Mean | 30.95 | 0.788 | 0.212 |

| 10 | ISAB-LSTM + Temporal Attention | 31.01 | 0.789 | 0.209 |

| 10 | ISAB + LSTM + Temporal Attention | 30.64 | 0.766 | 0.229 |

| 20 | ISAB + Temporal Mean | 31.05 | 0.789 | 0.202 |

| 20 | ISAB-LSTM + Temporal Mean | 31.22 | 0.798 | 0.196 |

| 20 | ISAB-LSTM + Temporal Attention | 31.34 | 0.803 | 0.191 |

| 20 | ISAB + LSTM + Temporal Attention | 30.94 | 0.782 | 0.210 |

| 30 | ISAB + Temporal Mean | 30.92 | 0.778 | 0.208 |

| 30 | ISAB-LSTM + Temporal Mean | 31.07 | 0.787 | 0.203 |

| 30 | ISAB-LSTM + Temporal Attention | 31.32 | 0.802 | 0.190 |

| 30 | ISAB + LSTM + Temporal Attention | 30.96 | 0.782 | 0.208 |

| Avg. | ISAB + Temporal Mean | 30.91 | 0.782 | 0.210 |

| Avg. | ISAB-LSTM + Temporal Mean | 31.08 | 0.791 | 0.204 |

| Avg. | ISAB-LSTM + Temporal Attention | 31.22 | 0.798 | 0.196 |

| Avg. | ISAB + LSTM + Temporal Attention | 30.84 | 0.777 | 0.215 |

| Method | Avg. NLL | ΔNLL (vs. awKDE) |

|---|---|---|

| awKDE [10] | −0.965 ± 0.35 | baseline |

| NF Streaming [11] | −0.981 ± 0.39 | +1.66% |

| HyperNCMD (Pretrain, no fine-tuning) | −0.724 ± 0.27 | – |

| HyperNCMD (Pretrain, fine-tuned) | −0.989 ± 0.39 | +2.49% |

| HyperNCMD (Retrain, no fine-tuning) | −1.004 ± 0.38 | +4.04% |

| HyperNCMD (Retrain, fine-tuned) | −1.066 ± 0.43 | +10.5% |
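The percentages in the last column of the table above are consistent with a relative NLL improvement measured against the awKDE baseline. The definition below is inferred from the tabulated values, not quoted from the paper, and is shown with two worked rows.

```latex
\[
\Delta_{\mathrm{NLL}}
  = \frac{\mathrm{NLL}_{\mathrm{awKDE}} - \mathrm{NLL}_{\mathrm{method}}}
         {\lvert \mathrm{NLL}_{\mathrm{awKDE}} \rvert} \times 100\%
\]
\[
\text{NF Streaming: } \frac{-0.965 - (-0.981)}{0.965} \times 100\% \approx 1.66\%,
\qquad
\text{HyperNCMD (Retrain, fine-tuned): } \frac{-0.965 - (-1.066)}{0.965} \times 100\% \approx 10.5\%
\]
```

Under the same definition the pretrained model without fine-tuning (−0.724) would score below the baseline, which matches the dash in that row.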

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Cao, Z.; Yang, J.; Sun, W.; Lu, X.; Tan, K.; Dai, Z.; Yu, W.; Gu, H. HyperNCMD: A Scene-Adaptive Clutter Measurement Density Estimator for Radar Tracking via Hypernetworks and Normalizing Flows. Remote Sens. 2026, 18, 1541. https://doi.org/10.3390/rs18101541
