Sky-GVIO: Enhanced GNSS/INS/Vision Navigation with FCN-Based Sky Segmentation in Urban Canyon

Abstract

1. Introduction

- Adaptive Sky-view Image Segmentation: We introduce adaptive sky-view image segmentation based on fully convolutional networks (FCNs) that can adjust to varying lighting conditions, addressing a key limitation of traditional methods;

- Integration of Sky-GNSS/INS/Vision: We propose an integrated model that tightly couples GNSS, INS, and vision. In addition, we extend the S−NDM method to this model (named Sky−GVIO), providing a comprehensive approach to vehicle positioning in challenging urban canyon environments;

- Performance Evaluation: We comprehensively evaluate S−NDM within both GNSS pseudorange and carrier-phase positioning frameworks, which shows its applicability across different GNSS-based integrated positioning techniques;

- Open-Source Sky-view Image Dataset: An open-source repository of sky-view images, including the training and testing data, is provided at https://github.com/whuwangjr/sky-view-images, accessed on 27 July 2024, contributing a valuable dataset to the research community and mitigating the lack of available resources in this field.
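
The segmentation and S−NDM contributions above rest on one basic check: a satellite counts as line-of-sight (LOS) when its direction, projected into the upward-facing sky-view image, falls on a pixel that the segmentation labels as sky. The sketch below shows only that check, under explicit assumptions that do not come from the paper. It assumes an ideal equidistant fish-eye model (r = f·θ), an optical axis pointing at the zenith, and north at the top of the image. The function names `project_to_fisheye` and `is_los` are hypothetical. It is not the authors' S−NDM implementation or calibration.

```python
import numpy as np

def project_to_fisheye(az_deg, el_deg, cx, cy, f_pix):
    """Map satellite azimuth/elevation to (row, col) in an upward-looking fish-eye image.

    Assumed (illustrative) camera model: ideal equidistant projection r = f * theta,
    theta = zenith angle, optical axis at the zenith, north at the top of the image.
    For a camera looking up, east then appears on the LEFT side of the image.
    """
    theta = np.deg2rad(90.0 - el_deg)   # zenith angle (rad)
    r = f_pix * theta                   # radial distance from the principal point (px)
    az = np.deg2rad(az_deg)             # azimuth, clockwise from north
    row = cy - r * np.cos(az)           # northward component moves the point up
    col = cx - r * np.sin(az)           # eastward component moves the point left
    return row, col

def is_los(sky_mask, az_deg, el_deg, cx, cy, f_pix):
    """True if the satellite projects onto a sky pixel (LOS), False if NLOS/blocked."""
    if el_deg <= 0.0:
        return False                    # below the horizon
    row, col = project_to_fisheye(az_deg, el_deg, cx, cy, f_pix)
    r_i, c_i = int(round(float(row))), int(round(float(col)))
    h, w = sky_mask.shape
    if not (0 <= r_i < h and 0 <= c_i < w):
        return False                    # outside the imaged field of view
    return bool(sky_mask[r_i, c_i])     # sky_mask: True = sky (e.g., a binarized FCN output)
```

As a usage example, consider a 101×101 toy mask whose left columns (the east side) are blocked by a "building". With `f_pix = 50 / (np.pi / 2)`, the horizon maps to the image border. A satellite at azimuth 90° and elevation 30° then lands on a non-sky pixel and is flagged NLOS, while the same elevation at azimuth 270° is LOS. In practice, the intrinsic and extrinsic calibration of the real lens would replace the ideal projection.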

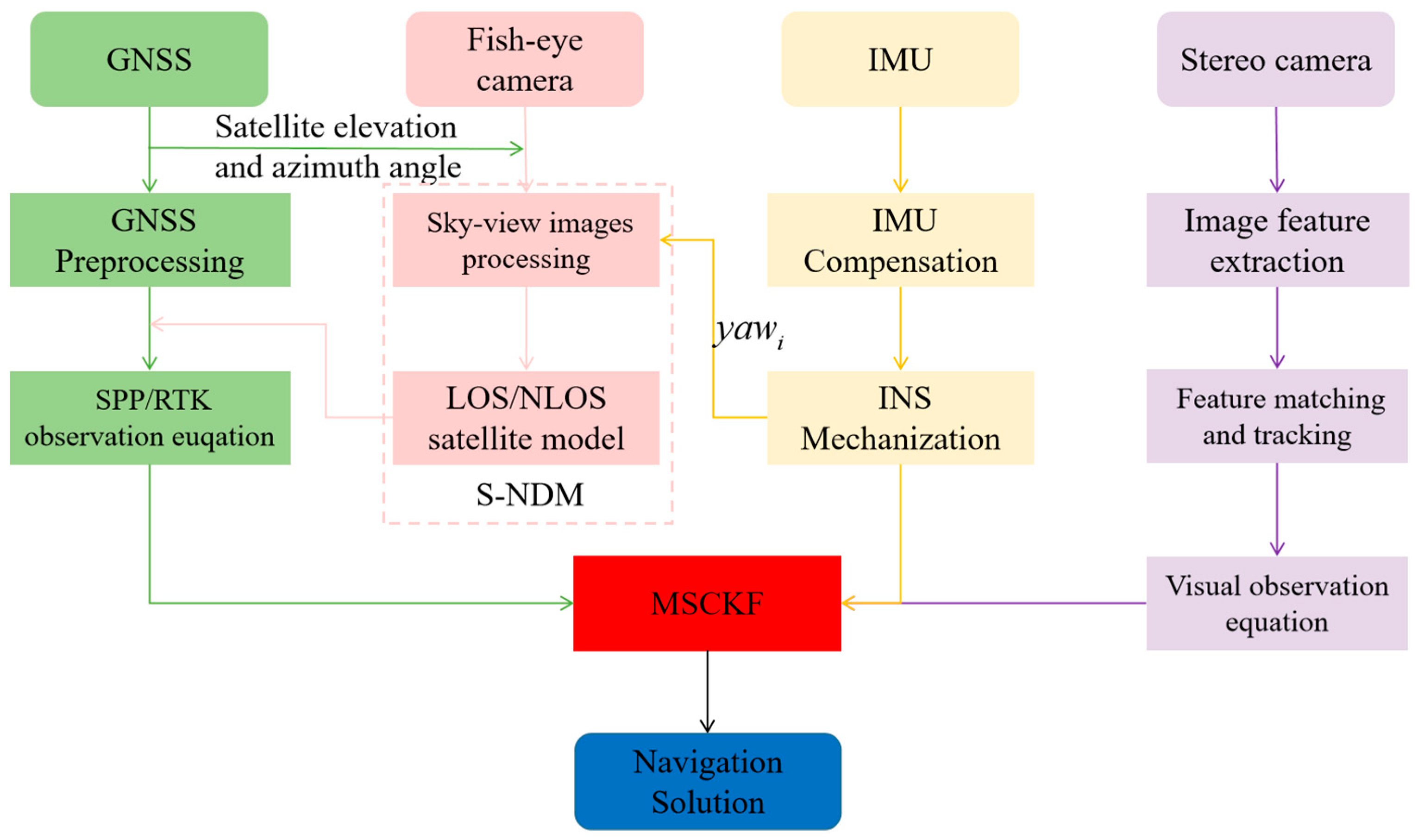

2. System Overview

2.1. Sky-View Image Segmentation

2.2. Tightly Coupled GNSS/INS/Vision Integration Model

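- (1) GNSS Observation Model: The original pseudorange and carrier-phase observation equations in GNSS positioning are expressed as follows:

  $$
  \begin{aligned}
  P_r^s &= \rho_r^s + c\,(dt_r - dt^s) + I_r^s + T_r^s + \varepsilon_P \\
  \Phi_r^s &= \rho_r^s + c\,(dt_r - dt^s) - I_r^s + T_r^s + \lambda N_r^s + \varepsilon_\Phi
  \end{aligned}
  $$

  where $P_r^s$ and $\Phi_r^s$ represent the pseudorange and carrier phase (both in meters), respectively. The superscript $s$ and subscript $r$ refer to the satellite and receiver, respectively. $\rho_r^s$ denotes the geometric distance between the phase centers of the receiver and satellite antennas. $dt_r$ and $dt^s$ represent the receiver and satellite clock offsets, respectively, and $c$ is the speed of light. $I_r^s$ and $T_r^s$ refer to the ionospheric and tropospheric delays, respectively. $\lambda$ represents the carrier wavelength, and $N_r^s$ represents the carrier-phase ambiguity. $\varepsilon_P$ and $\varepsilon_\Phi$ represent the pseudorange noise and carrier-phase noise, respectively.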

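- (2) INS Dynamic Model: Because the measurements of a low-cost IMU are noisy, the Coriolis and centrifugal terms caused by Earth's rotation are neglected in the IMU formulation. The inertial measurements can be modeled [21] in the IMU body (b) frame as follows:

  $$
  \begin{aligned}
  \hat{\mathbf{a}}_t &= \mathbf{a}_t + \mathbf{b}_{a_t} + \left(\mathbf{R}_{b_t}^{n}\right)^{\mathsf T}\mathbf{g}^n + \mathbf{n}_a \\
  \hat{\boldsymbol{\omega}}_t &= \boldsymbol{\omega}_t + \mathbf{b}_{\omega_t} + \mathbf{n}_\omega
  \end{aligned}
  $$

  where $\hat{\mathbf{a}}_t$ and $\hat{\boldsymbol{\omega}}_t$ are the IMU outputs at time $t$, and $\mathbf{a}_t$ and $\boldsymbol{\omega}_t$ are the linear acceleration and angular velocity of the IMU sensor. $\mathbf{b}_{a_t}$ and $\mathbf{b}_{\omega_t}$ are the biases of the accelerometer and gyroscope at time $t$, respectively. The noise terms are assumed to be zero-mean Gaussian, with $\mathbf{n}_a \sim \mathcal{N}(\mathbf{0}, \boldsymbol{\sigma}_a^2)$ and $\mathbf{n}_\omega \sim \mathcal{N}(\mathbf{0}, \boldsymbol{\sigma}_\omega^2)$. $\mathbf{R}_{b_t}^{n}$ denotes the rotation matrix from the IMU body (b) frame to the n frame, and $\mathbf{g}^n$ is the gravity vector in the n frame.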

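- (3) Visual Measurement Model: The core idea of the well-known MSCKF is to impose geometric constraints among multiple camera states by exploiting the same visual feature points observed from multiple camera poses. Following this concept, we establish the visual model. For a visual feature point observed by a stereo camera at time $t$, its observation model [33] on the normalized projection planes of the left and right cameras can be represented as follows:

  $$
  \mathbf{z}_t =
  \begin{bmatrix} u_0 \\ v_0 \\ u_1 \\ v_1 \end{bmatrix}
  =
  \begin{bmatrix} X_0 / Z_0 \\ Y_0 / Z_0 \\ X_1 / Z_1 \\ Y_1 / Z_1 \end{bmatrix}
  + \mathbf{n}_z
  $$

  where the subscripts 0 and 1 denote the left and right cameras, respectively. $(u_i, v_i)$ are the coordinates of the feature point on the normalized image plane, and $\mathbf{n}_z$ is the visual measurement noise. $\mathbf{p}^{c_i} = [X_i, Y_i, Z_i]^{\mathsf T}$ is the feature position in the camera (c) frame, which can be expressed as follows:

  $$
  \mathbf{p}^{c_0} = \left(\mathbf{R}_{c_0}^{n}\right)^{\mathsf T}\left(\mathbf{p}^{n} - \mathbf{p}_{c_0}^{n}\right),
  \qquad
  \mathbf{p}^{c_1} = \mathbf{R}_{c_0}^{c_1}\,\mathbf{p}^{c_0} + \mathbf{t}_{c_0}^{c_1}
  $$

  where $\mathbf{R}_{c_0}^{n}$ and $\mathbf{p}_{c_0}^{n}$ are the rotation matrix and position of the left camera in the n frame, respectively. $\mathbf{R}_{c_0}^{c_1}$ and $\mathbf{t}_{c_0}^{c_1}$ are the rotation matrix and translation vector from the left camera to the right camera, which can be accurately calibrated in advance [34]. $\mathbf{p}^{n}$ and $\mathbf{p}^{c_0}$ are the positions of the visual feature point in the n frame and the left c frame, respectively. We adopted the method proposed by [35] to construct the visual reprojection error between relative camera poses. The visual state vector is described as follows:

  $$
  \delta\mathbf{x}_c = \left[\delta\boldsymbol{\theta}_{c_1}^{\mathsf T},\ \delta\mathbf{p}_{c_1}^{\mathsf T},\ \ldots,\ \delta\boldsymbol{\theta}_{c_N}^{\mathsf T},\ \delta\mathbf{p}_{c_N}^{\mathsf T}\right]^{\mathsf T}
  $$

  where $\delta\boldsymbol{\theta}_{c_i}$ and $\delta\mathbf{p}_{c_i}$ represent the rotation and position errors at time $t_i$, respectively, and $N$ is the total number of camera poses (rotations and translations) in the sliding window. The reprojection residual of a visual measurement can be expressed as follows:

  $$
  \mathbf{r}_z = \mathbf{z} - \hat{\mathbf{z}} \approx \mathbf{H}_c\,\delta\mathbf{x}_c + \mathbf{n}_z
  $$

  where $\mathbf{z}$ and $\hat{\mathbf{z}}$ represent the observed and reprojected visual measurements, respectively, and $\mathbf{H}_c$ is the Jacobian matrix with respect to the involved camera states. Further details can be found in [35].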

- (4) State and Measurement Model of the Tightly Coupled GNSS/INS/Vision: This paper employs MSCKF for tightly coupled GNSS/INS/Vision integration. Based on the sensor models introduced above, the complete state model of the tightly coupled GNSS/INS/Vision integration is as follows:
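
The pseudorange and carrier-phase observation equations in item (1) can be evaluated directly to form predicted observations. The sketch below is minimal and assumes that both observations are expressed in meters, that the ambiguity is given in cycles, and that noise terms are passed in explicitly. The names `ObsTerms` and `predict_observations` are hypothetical. The ionospheric term enters the two equations with opposite signs, so the noise-free geometry-free combination P − Φ reduces to 2I − λN. This gives a quick self-check.

```python
from dataclasses import dataclass

C = 299_792_458.0  # speed of light in vacuum (m/s)

@dataclass
class ObsTerms:
    """Hypothetical container for the model terms of one receiver-satellite pair."""
    rho: float         # geometric range between antenna phase centers (m)
    dt_r: float        # receiver clock offset (s)
    dt_s: float        # satellite clock offset (s)
    iono: float        # ionospheric delay on the pseudorange (m)
    tropo: float       # tropospheric delay (m)
    wavelength: float  # carrier wavelength (m)
    ambiguity: float   # carrier-phase ambiguity (cycles)

def predict_observations(t: ObsTerms, eps_p: float = 0.0, eps_phi: float = 0.0):
    """Return (pseudorange, carrier phase) in meters following the two model equations."""
    clock = C * (t.dt_r - t.dt_s)
    # The code measurement is delayed by the ionosphere ...
    pseudorange = t.rho + clock + t.iono + t.tropo + eps_p
    # ... while the carrier phase is advanced by the same amount, plus the ambiguity term
    phase = t.rho + clock - t.iono + t.tropo + t.wavelength * t.ambiguity + eps_phi
    return pseudorange, phase
```

For example, with the GPS L1 wavelength `C / 1575.42e6` (about 0.19 m), `predict_observations` returns values whose difference equals `2 * iono - wavelength * ambiguity` to floating-point precision. The clock, tropospheric and geometric terms all cancel in that difference.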

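The stereo measurement model in item (3) can be written in a few lines of NumPy. The feature is first rotated from the n frame into the left camera frame, then transferred to the right camera with the calibrated extrinsics, and finally divided by depth to reach the normalized plane. This is a sketch under the frame conventions stated in item (3). The function and argument names (for example `R_c0_n` for the left-camera rotation into the n frame) are hypothetical. The sketch omits the Jacobian computation that the text defers to [35].

```python
import numpy as np

def stereo_projection(p_f_n, R_c0_n, p_c0_n, R_c0_c1, t_c0_c1):
    """Predicted normalized-plane measurement z_hat = [u0, v0, u1, v1].

    p_f_n   : feature position in the n frame, shape (3,)
    R_c0_n  : rotation of the left camera in the n frame (c0 -> n), shape (3, 3)
    p_c0_n  : position of the left camera in the n frame, shape (3,)
    R_c0_c1 : rotation from the left to the right camera frame, shape (3, 3)
    t_c0_c1 : translation from the left to the right camera frame, shape (3,)
    """
    p_c0 = R_c0_n.T @ (p_f_n - p_c0_n)   # feature expressed in the left camera frame
    p_c1 = R_c0_c1 @ p_c0 + t_c0_c1      # feature expressed in the right camera frame
    if p_c0[2] <= 0.0 or p_c1[2] <= 0.0:
        raise ValueError("feature lies behind one of the cameras")
    return np.array([p_c0[0] / p_c0[2], p_c0[1] / p_c0[2],
                     p_c1[0] / p_c1[2], p_c1[1] / p_c1[2]])

def reprojection_residual(z_obs, p_f_n, R_c0_n, p_c0_n, R_c0_c1, t_c0_c1):
    """Residual r = z - z_hat that the filter linearizes around the camera states."""
    return np.asarray(z_obs, dtype=float) - stereo_projection(
        p_f_n, R_c0_n, p_c0_n, R_c0_c1, t_c0_c1)
```

As a usage example, with identity rotations, the left camera at the origin, a 0.1 m baseline (`t_c0_c1 = [-0.1, 0, 0]`) and a feature at (1, 2, 10) m, the predicted measurement is [0.1, 0.2, 0.09, 0.2]. Feeding that vector back as the observation gives a zero residual.
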
2.3. The Sky-View Image-Aided GNSS NLOS Detection and Mitigation Method (S−NDM)

3. Experiments

3.1. Experiment Description

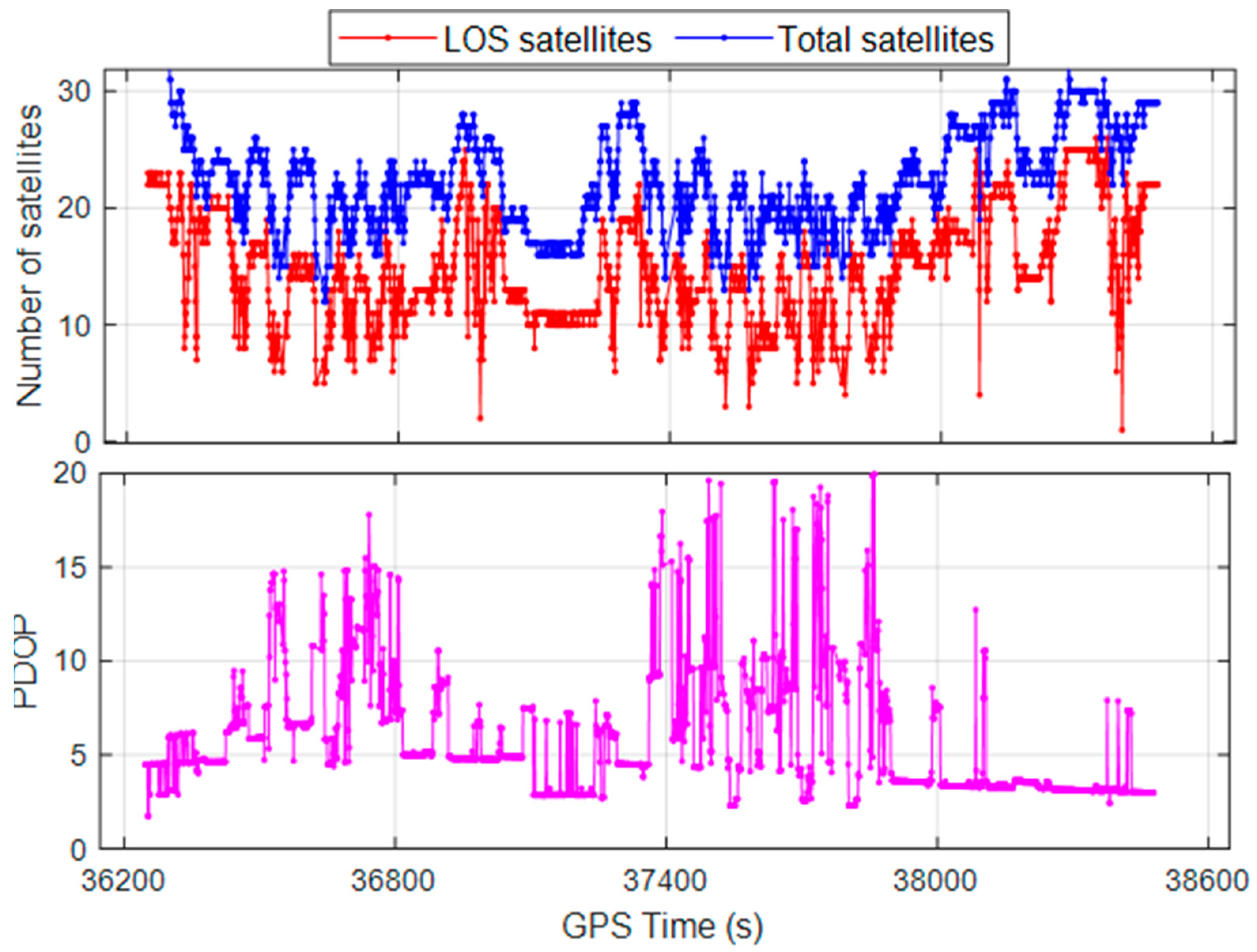

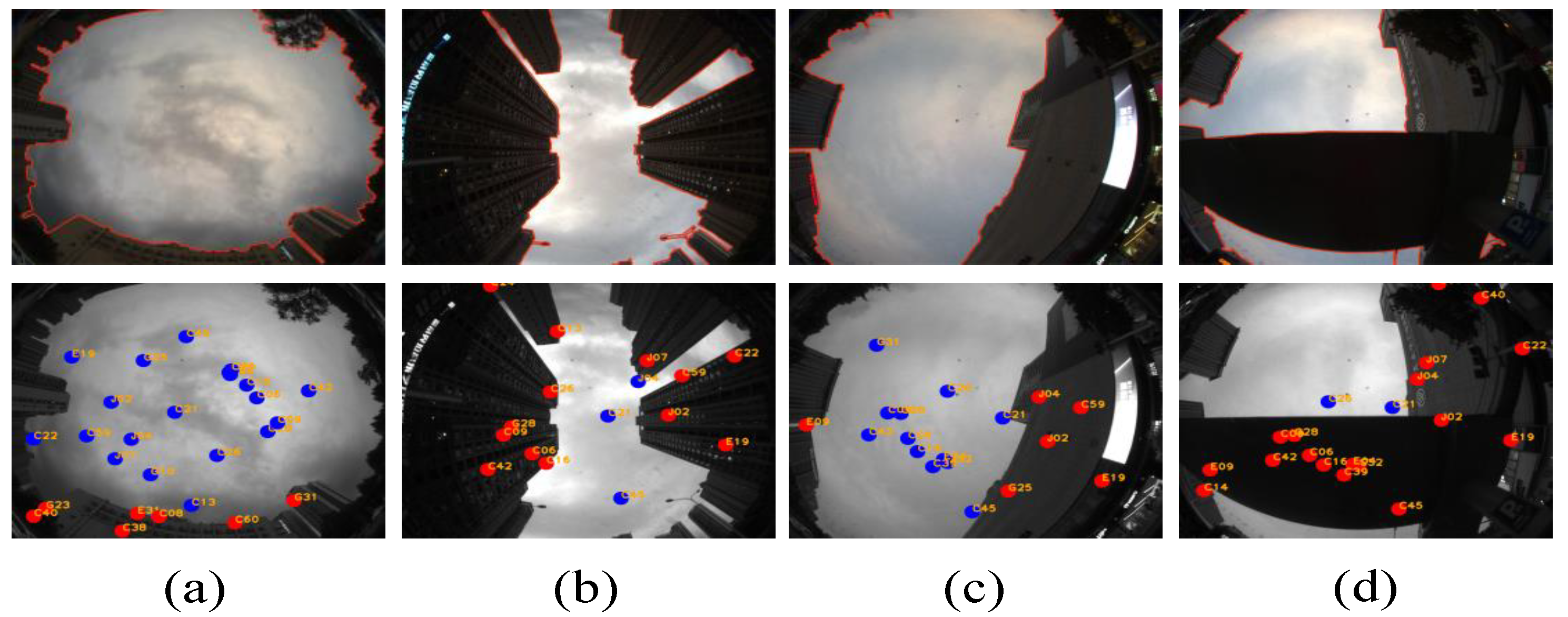

3.2. The Results of Sky-View Image Segmentation and GNSS NLOS Detection

3.3. The Quantitative Analysis of Sky-View Image Segmentation

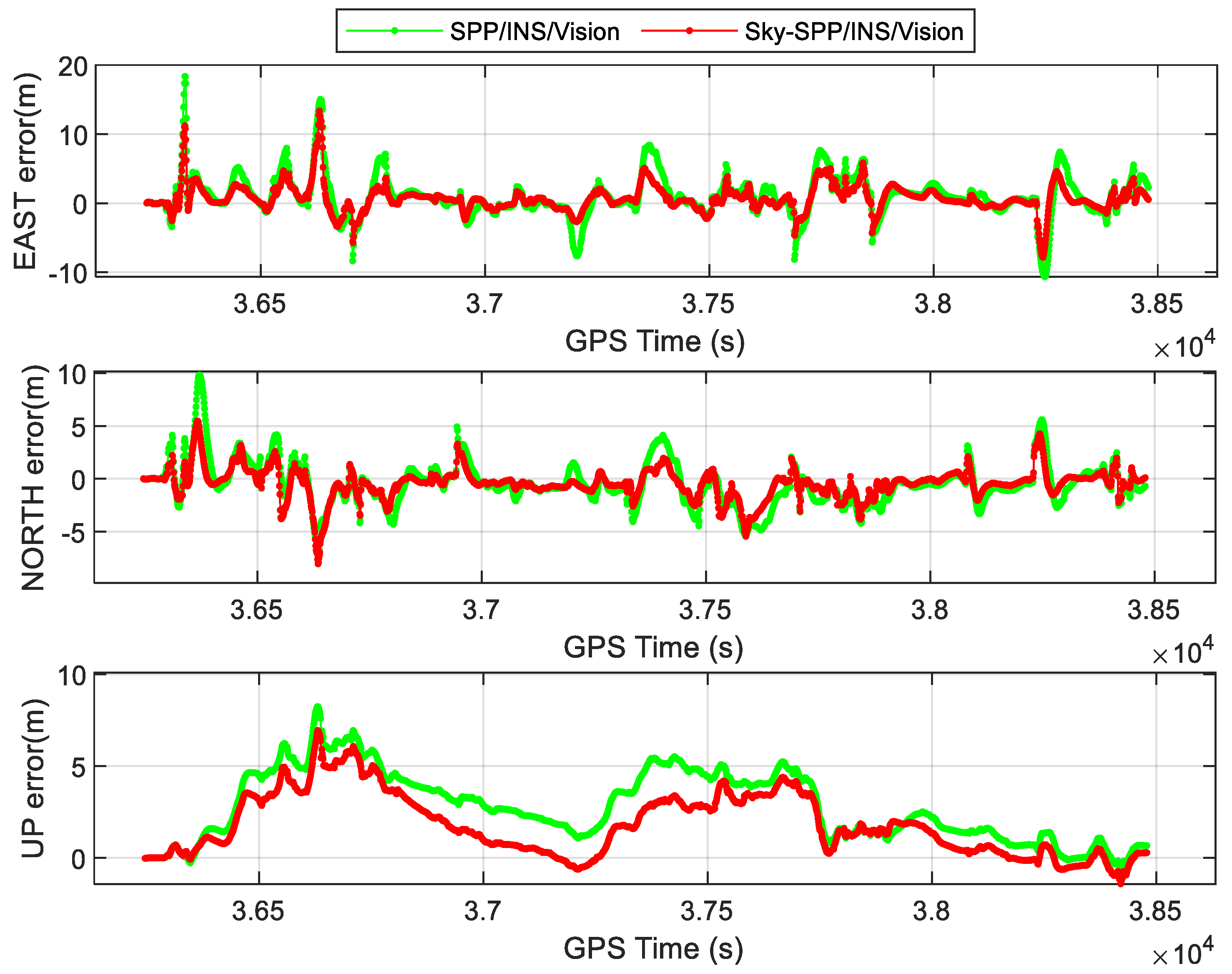

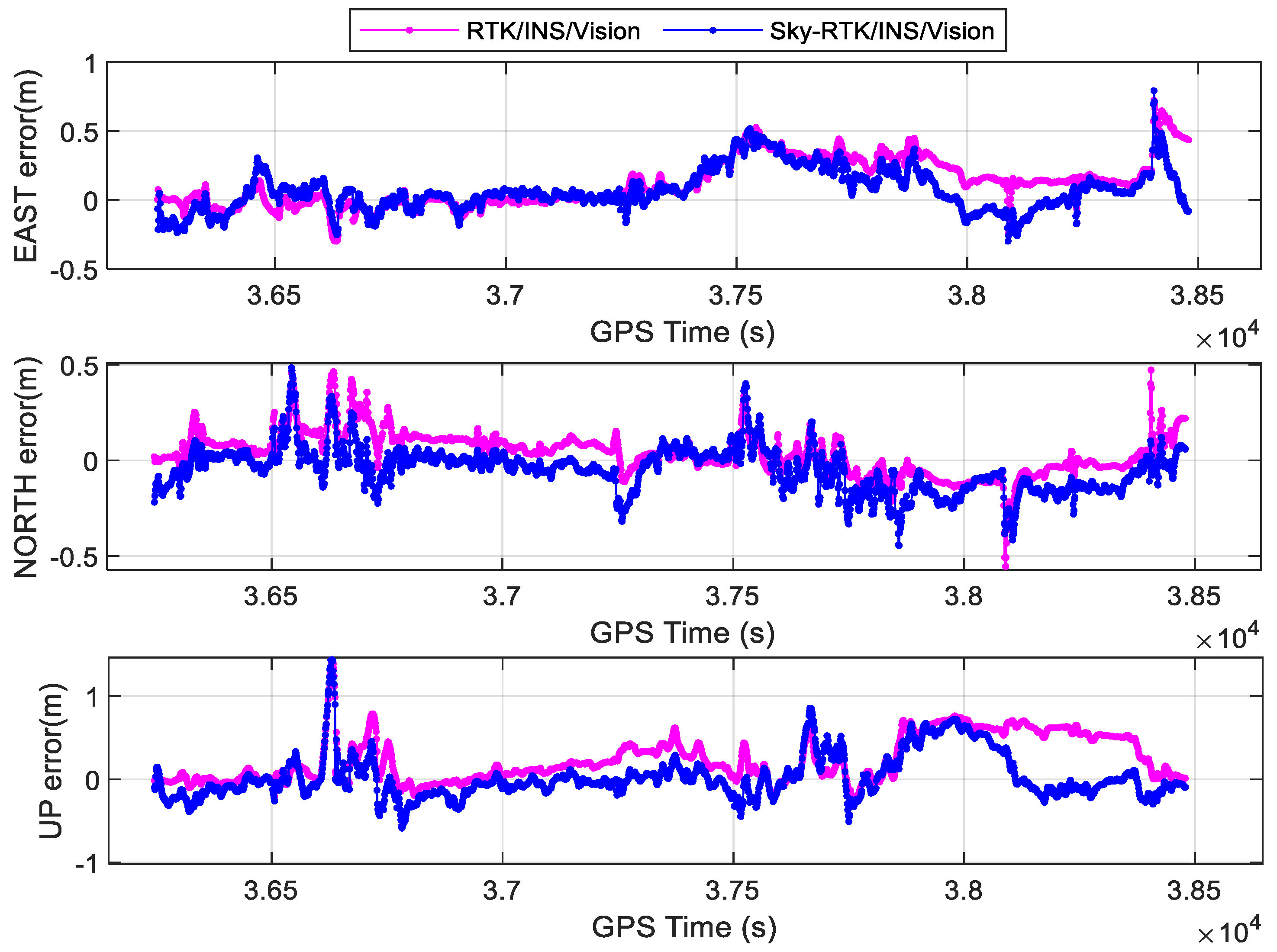

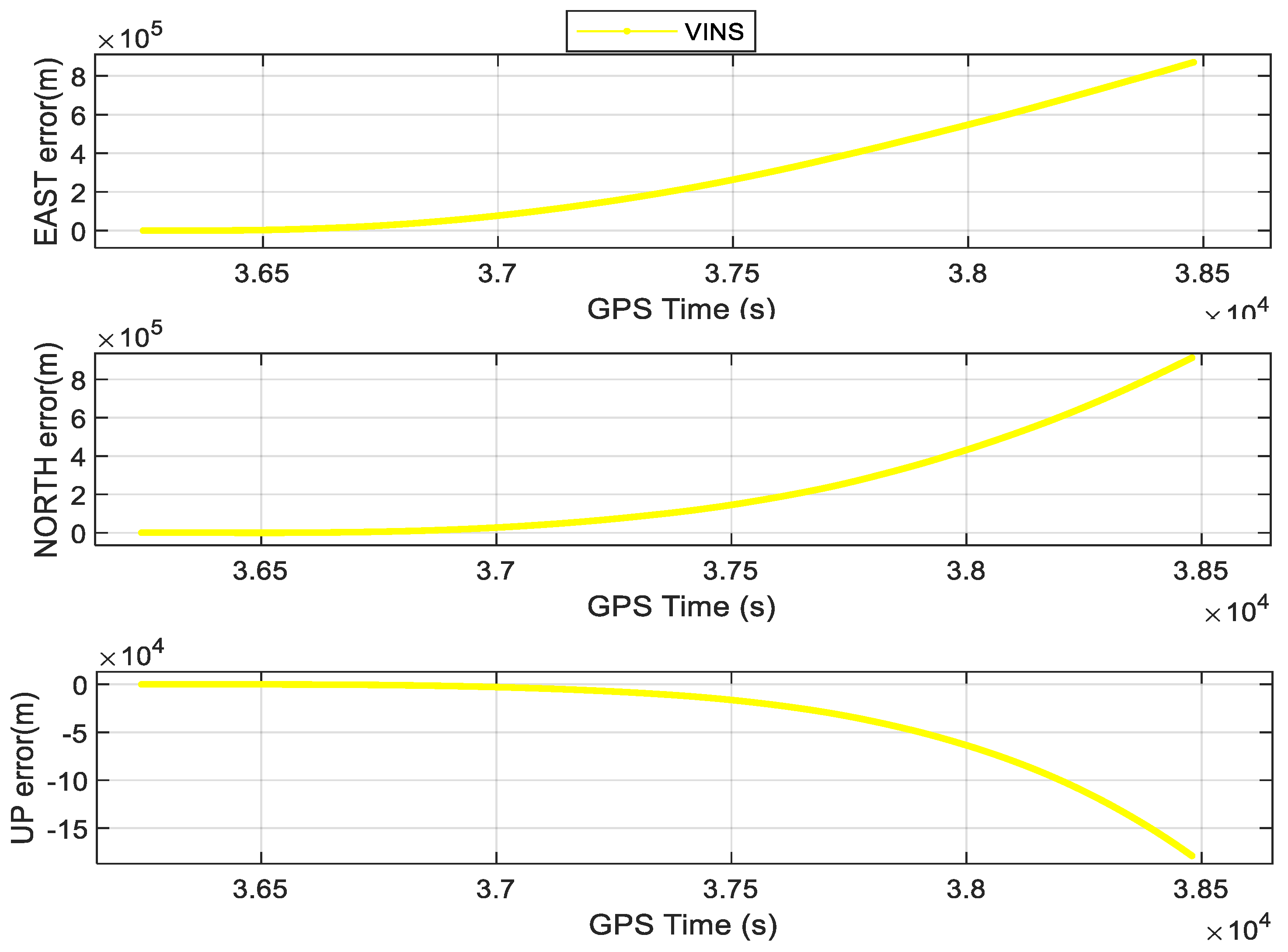

3.4. The Experimental Results of Positioning

4. Conclusions

- (1) Enhance the use of fish-eye camera data beyond GNSS NLOS detection, potentially by integrating fish-eye camera observations into the proposed model;

- (2) Reach centimeter-level accuracy: by adding prior information (such as high-precision maps), the whole system could become more robust and achieve higher positioning accuracy.

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Godha, S.; Cannon, M.E. GPS/MEMS INS integrated system for navigation in urban areas. GPS Solut. 2007, 11, 193–203.

- Li, T.; Zhang, H.; Gao, Z.; Chen, Q.; Niu, X. High-accuracy positioning in urban environments using single-frequency multi-GNSS RTK/MEMS-IMU integration. Remote Sens. 2018, 10, 205.

- Niu, X.; Dai, Y.; Liu, T.; Chen, Q.; Zhang, Q. Feature-based GNSS positioning error consistency optimization for GNSS/INS integrated system. GPS Solut. 2023, 27, 89.

- Chen, K.; Chang, G.; Chen, C. GINav: A MATLAB-based software for the data processing and analysis of a GNSS/INS integrated navigation system. GPS Solut. 2021, 25, 108.

- Xu, B.; Wang, P.; He, Y.; Chen, Y.; Chen, Y.; Zhou, M. Leveraging structural information to improve point line visual-inertial odometry. IEEE Robot. Autom. Lett. 2022, 7, 3483–3490.

- He, Y.; Xu, B.; Ouyang, Z.; Li, H. A rotation-translation-decoupled solution for robust and efficient visual-inertial initialization. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 739–748.

- Chen, Y.; Xu, B.; Wang, B.; Na, J.; Yang, P. GNSS Reconstrainted Visual–Inertial Odometry System Using Factor Graphs. IEEE Geosci. Remote Sens. Lett. 2023, 20, 1–5.

- Qin, T.; Li, P.; Shen, S. Vins-mono: A robust and versatile monocular visual-inertial state estimator. IEEE Trans. Robot. 2018, 34, 1004–1020.

- Liao, J.; Li, X.; Wang, X.; Li, S.; Wang, H. Enhancing navigation performance through visual-inertial odometry in GNSS-degraded environment. GPS Solut. 2021, 25, 50.

- Cao, S.; Lu, X.; Shen, S. GVINS: Tightly coupled GNSS–visual–inertial fusion for smooth and consistent state estimation. IEEE Trans. Robot. 2022, 38, 2004–2021.

- Mourikis, A.I.; Roumeliotis, S.I. A multi-state constraint Kalman filter for vision-aided inertial navigation. In Proceedings of the 2007 IEEE International Conference on Robotics and Automation, Rome, Italy, 10–14 April 2007.

- Li, T.; Zhang, H.; Gao, Z.; Niu, X.; El-sheimy, N. Tight fusion of a monocular camera, MEMS-IMU, and single-frequency multi-GNSS RTK for precise navigation in GNSS-challenged environments. Remote Sens. 2019, 11, 610.

- Groves, P.D.; Jiang, Z.; Skelton, B.; Cross, P.A.; Lau, L.; Adane, Y.; Kale, I. Novel multipath mitigation methods using a dual-polarization antenna. In Proceedings of the 23rd International Technical Meeting of The Satellite Division of the Institute of Navigation (ION GNSS 2010), Portland, OR, USA, 21–24 September 2010.

- Liu, S.; Li, D.; Li, B.; Wang, F. A compact high-precision GNSS antenna with a miniaturized choke ring. IEEE Antennas Wirel. Propag. Lett. 2017, 16, 2465–2468.

- Gupta, I.J.; Weiss, I.M.; Morrison, A.W. Desired features of adaptive antenna arrays for GNSS receivers. Proc. IEEE 2016, 104, 1195–1206.

- Won, D.H.; Ahn, J.; Lee, S.-W.; Lee, J.; Sung, S.; Park, H.-W.; Park, J.-P.; Lee, Y.J. Weighted DOP with consideration on elevation-dependent range errors of GNSS satellites. IEEE Trans. Instrum. Meas. 2012, 61, 3241–3250.

- Groves, P.D.; Jiang, Z. Height aiding, C/N0 weighting and consistency checking for GNSS NLOS and multipath mitigation in urban areas. J. Navig. 2013, 66, 653–669.

- Wen, W.; Zhang, G.; Hsu, L.-T. Exclusion of GNSS NLOS receptions caused by dynamic objects in heavy traffic urban scenarios using real-time 3D point cloud: An approach without 3D maps. In Proceedings of the 2018 IEEE/ION Position, Location and Navigation Symposium (PLANS), Monterey, CA, USA, 23–26 April 2018.

- Wen, W.W.; Zhang, G.; Hsu, L.-T. GNSS NLOS exclusion based on dynamic object detection using LiDAR point cloud. IEEE Trans. Intell. Transp. Syst. 2019, 22, 853–862.

- Wang, L.; Groves, P.D.; Ziebart, M.K. GNSS shadow matching: Improving urban positioning accuracy using a 3D city model with optimized visibility scoring scheme. NAVIGATION J. Inst. Navig. 2013, 60, 195–207.

- Hsu, L.-T.; Gu, Y.; Kamijo, S. 3D building model-based pedestrian positioning method using GPS/GLONASS/QZSS and its reliability calculation. GPS Solut. 2016, 20, 413–428.

- Suzuki, T.; Kubo, N. N-LOS GNSS signal detection using fish-eye camera for vehicle navigation in urban environments. In Proceedings of the 27th International Technical Meeting of the Satellite Division of the Institute of Navigation (ION GNSS+ 2014), Tampa, FL, USA, 8–12 September 2014.

- Wen, W.; Bai, X.; Kan, Y.C.; Hsu, L.T. Tightly coupled GNSS/INS integration via factor graph and aided by fish-eye camera. IEEE Trans. Veh. Technol. 2019, 68, 10651–10662.

- Meguro, J.-i.; Murata, T.; Takiguchi, J.I.; Amano, Y.; Hashizume, T. GPS multipath mitigation for urban area using omnidirectional infrared camera. IEEE Trans. Intell. Transp. Syst. 2009, 10, 22–30.

- Cohen, A.; Meurie, C.; Ruichek, Y.; Marais, J.; Flancquart, A. Quantification of gnss signals accuracy: An image segmentation method for estimating the percentage of sky. In Proceedings of the 2009 IEEE International Conference on Vehicular Electronics and Safety (ICVES), Pune, India, 11–12 November 2009.

- Attia, D.; Meurie, C.; Ruichek, Y.; Marais, J. Counting of satellites with direct GNSS signals using Fisheye camera: A comparison of clustering algorithms. In Proceedings of the 2011 14th International IEEE Conference on Intelligent Transportation Systems (ITSC), Washington, DC, USA, 5–7 October 2011.

- Wang, J.; Liu, J.; Zhang, S.; Xu, B.; Luo, Y.; Jin, R. Sky-view images aided NLOS detection and suppression for tightly coupled GNSS/INS system in urban canyon areas. Meas. Sci. Technol. 2023, 35, 025112.

- Vijay, P.; Patil, N.C. Gray scale image segmentation using OTSU Thresholding optimal approach. J. Res. 2016, 2, 20–24.

- Dhanachandra, N.; Manglem, K.; Chanu, Y.J. Image segmentation using K-means clustering algorithm and subtractive clustering algorithm. Procedia Comput. Sci. 2015, 54, 764–771.

- Soltani-Nabipour, J.; Khorshidi, A.; Noorian, B. Lung tumor segmentation using improved region growing algorithm. Nucl. Eng. Technol. 2020, 52, 2313–2319.

- Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Xu, B.; Zhang, S.; Kuang, K.; Li, X. A unified cycle-slip, multipath estimation, detection and mitigation method for VIO-aided PPP in urban environments. GPS Solut. 2023, 27, 59.

- Furgale, P.; Rehder, J.; Siegwart, R. Unified temporal and spatial calibration for multi-sensor systems. In Proceedings of the 2013 IEEE/RSJ International Conference on Intelligent Robots and Systems, Tokyo, Japan, 3–7 November 2013; pp. 1280–1286.

- Sun, K.; Mohta, K.; Pfrommer, B.; Watterson, M.; Liu, S.; Mulgaonkar, Y.; Taylor, C.J.; Kumar, V. Robust stereo visual inertial odometry for fast autonomous flight. IEEE Robot. Autom. Lett. 2018, 3, 965–972.

- Rehder, J.; Nikolic, J.; Schneider, T.; Hinzmann, T.; Siegwart, R. Extending kalibr: Calibrating the extrinsics of multiple IMUs and of individual axes. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), Stockholm, Sweden, 16–21 May 2016.

- Sola, J. Quaternion kinematics for the error-state Kalman filter. arXiv 2017, arXiv:1711.02508.

- Herrera, A.M.; Suhandri, H.F.; Realini, E.; Reguzzoni, M.; de Lacy, M.C. goGPS: Open-source MATLAB software. GPS Solut. 2016, 20, 595–603.

- Bradski, G. The OpenCV Library. Dr. Dobb’s J. Softw. Tools 2000, 120, 122–125. Available online: https://opencv.org (accessed on 4 April 2023).

| IMU Sensor | Grade | Sampling Rate (Hz) | Gyro. Bias Stability (°/h) | Acc. Bias Stability (mGal) | Angular Random Walk (°/√h) | Velocity Random Walk (m/s/√h) |
|---|---|---|---|---|---|---|
| SPAN-ISA-100C | Tactical | 200 | 0.05 | 100 | 0.005 | 0.018 |
| ADIS-16470 | MEMS | 100 | 8 | 1300 | 0.34 | 0.18 |
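
The IMU specifications above are given in datasheet units. A filter implementing the INS model needs SI values, for example when setting the noise levels σ_a and σ_ω. The conversion below is a minimal sketch. It relies on 1 mGal = 1e-5 m/s², √h = 60 √s, and the common approximation that a white-noise density becomes a per-sample standard deviation by multiplying with √(sampling rate). How the paper actually tuned its filter is not stated, so the helper names and this mapping are assumptions.

```python
import math

DEG2RAD = math.pi / 180.0
MGAL = 1e-5        # 1 mGal = 1e-5 m/s^2
SQRT_HOUR = 60.0   # sqrt(1 h) = sqrt(3600 s) = 60 sqrt(s)

def datasheet_to_si(gyro_bias_deg_h, acc_bias_mgal, arw_deg_sqrt_h, vrw_m_s_sqrt_h):
    """Convert the table's datasheet units to SI (hypothetical helper)."""
    return {
        "gyro_bias_rad_s": gyro_bias_deg_h * DEG2RAD / 3600.0,     # deg/h -> rad/s
        "acc_bias_m_s2": acc_bias_mgal * MGAL,                     # mGal -> m/s^2
        "arw_rad_sqrt_s": arw_deg_sqrt_h * DEG2RAD / SQRT_HOUR,    # deg/sqrt(h) -> rad/sqrt(s)
        "vrw_m_s_sqrt_s": vrw_m_s_sqrt_h / SQRT_HOUR,              # m/s/sqrt(h) -> m/s/sqrt(s)
    }

def discrete_std(noise_density, rate_hz):
    """Per-sample standard deviation of white noise with the given density."""
    return noise_density * math.sqrt(rate_hz)

# ADIS-16470 row of the table, sampled at 100 Hz
adis = datasheet_to_si(8.0, 1300.0, 0.34, 0.18)
sigma_w = discrete_std(adis["arw_rad_sqrt_s"], 100.0)  # gyro noise per sample (rad/s)
sigma_a = discrete_std(adis["vrw_m_s_sqrt_s"], 100.0)  # accel noise per sample (m/s^2)
```

For the ADIS-16470 this gives an accelerometer bias stability of 0.013 m/s², a velocity random walk of 0.003 m/s/√s and, at 100 Hz, about 0.03 m/s² of accelerometer noise per sample. Low-cost IMUs are often tuned with noise values inflated above such datasheet figures.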

| Methods | K-means | Otsu | Region Growing | Ours |
|---|---|---|---|---|
| FPS | 0.34 | 5.47 | 3.69 | 10.85 |
| Accuracy | 49.50% | 36.45% | 44.96% | 98.54% |

| Category | Method | East RMSE (m) | North RMSE (m) | Up RMSE (m) |
|---|---|---|---|---|
| Ours | SPP/INS/Vision | 3.24 | 2.14 | 3.39 |
| | Sky−SPP/INS/Vision | 2.07 | 1.51 | 2.47 |
| | RTK/INS/Vision | 0.21 | 0.13 | 0.36 |
| | Sky−RTK/INS/Vision | 0.16 | 0.11 | 0.27 |
| Others | VINS−mono | - | - | - |
| | GVINS | 2.50 | 1.75 | 2.82 |
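
The benefit of the sky-view-aided variants can be quantified from the position RMSE table above as a per-axis relative reduction, 100·(RMSE_base − RMSE_sky)/RMSE_base. The short script below simply recomputes this from the tabulated numbers. It uses ASCII hyphens in the method keys, and the helper name `reduction_percent` is hypothetical.

```python
# Position RMSE (m) per axis (East, North, Up), copied from the table above
RMSE = {
    "SPP/INS/Vision":     (3.24, 2.14, 3.39),
    "Sky-SPP/INS/Vision": (2.07, 1.51, 2.47),
    "RTK/INS/Vision":     (0.21, 0.13, 0.36),
    "Sky-RTK/INS/Vision": (0.16, 0.11, 0.27),
}

def reduction_percent(baseline, improved):
    """Per-axis relative RMSE reduction in percent, rounded to one decimal."""
    return tuple(round(100.0 * (b - i) / b, 1) for b, i in zip(baseline, improved))

for mode in ("SPP", "RTK"):
    base, sky = RMSE[f"{mode}/INS/Vision"], RMSE[f"Sky-{mode}/INS/Vision"]
    print(mode, reduction_percent(base, sky))
# SPP (36.1, 29.4, 27.1)
# RTK (23.8, 15.4, 25.0)
```

On these numbers, the sky-view aiding reduces the East/North/Up RMSE by roughly 27 to 36% in the pseudorange (SPP) configuration and by roughly 15 to 25% in the carrier-phase (RTK) configuration.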

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Wang, J.; Xu, B.; Liu, J.; Gao, K.; Zhang, S. Sky-GVIO: Enhanced GNSS/INS/Vision Navigation with FCN-Based Sky Segmentation in Urban Canyon. Remote Sens. 2024, 16, 3785. https://doi.org/10.3390/rs16203785

Wang J, Xu B, Liu J, Gao K, Zhang S. Sky-GVIO: Enhanced GNSS/INS/Vision Navigation with FCN-Based Sky Segmentation in Urban Canyon. Remote Sensing. 2024; 16(20):3785. https://doi.org/10.3390/rs16203785

Chicago/Turabian Style: Wang, Jingrong, Bo Xu, Jingnan Liu, Kefu Gao, and Shoujian Zhang. 2024. "Sky-GVIO: Enhanced GNSS/INS/Vision Navigation with FCN-Based Sky Segmentation in Urban Canyon" Remote Sensing 16, no. 20: 3785. https://doi.org/10.3390/rs16203785

APA Style: Wang, J., Xu, B., Liu, J., Gao, K., & Zhang, S. (2024). Sky-GVIO: Enhanced GNSS/INS/Vision Navigation with FCN-Based Sky Segmentation in Urban Canyon. Remote Sensing, 16(20), 3785. https://doi.org/10.3390/rs16203785