A CNN- and Self-Attention-Based Maize Growth Stage Recognition Method and Platform from UAV Orthophoto Images

Abstract

1. Introduction

2. Materials and Methods

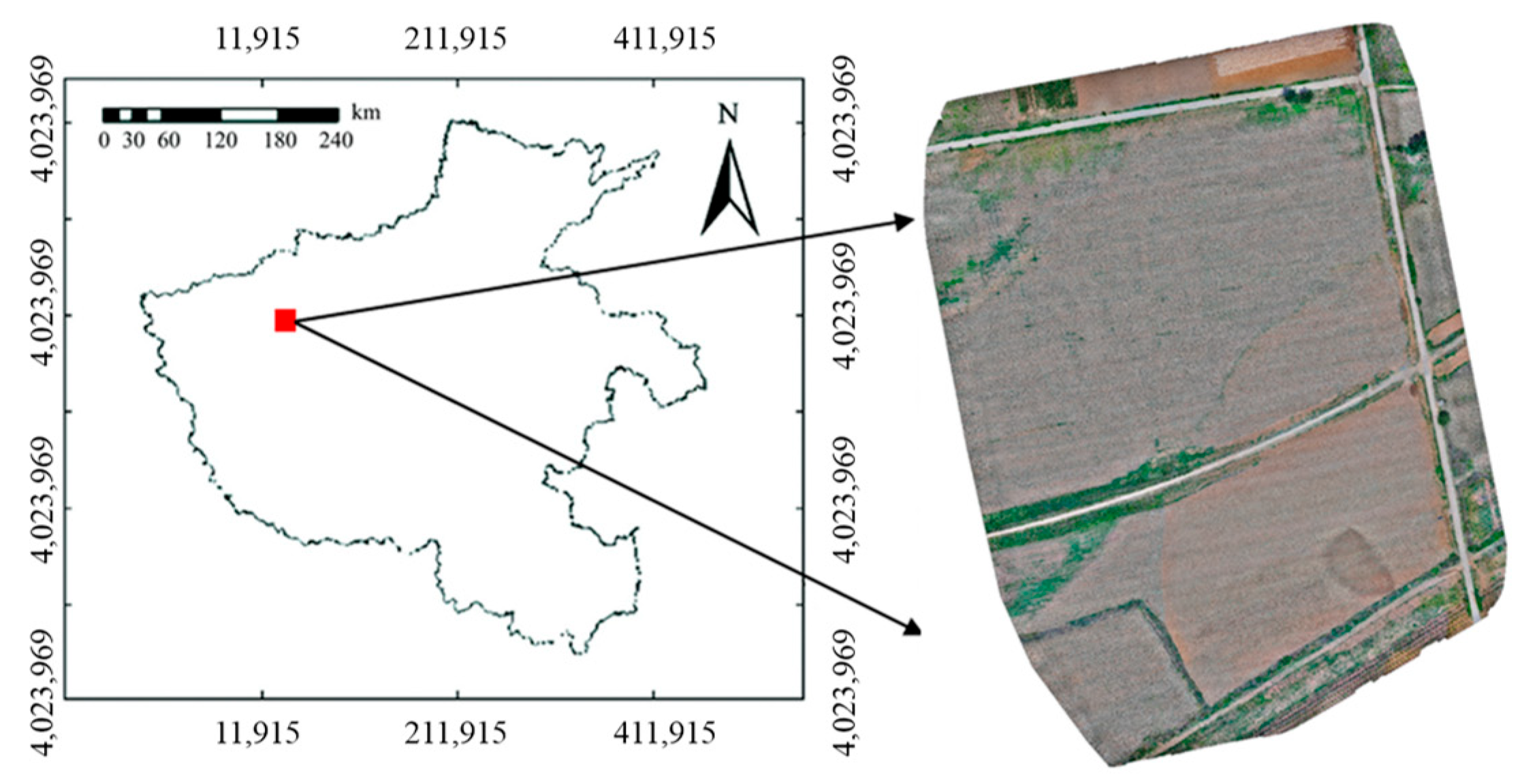

2.1. Date Acquisition and Datasets

2.1.1. Image Data Acquisition

2.1.2. Image Pre-Processing and Dataset Construction

2.2. Maize Growth Stage Recognition Method and Platform

2.2.1. Adaptation of Classic Models

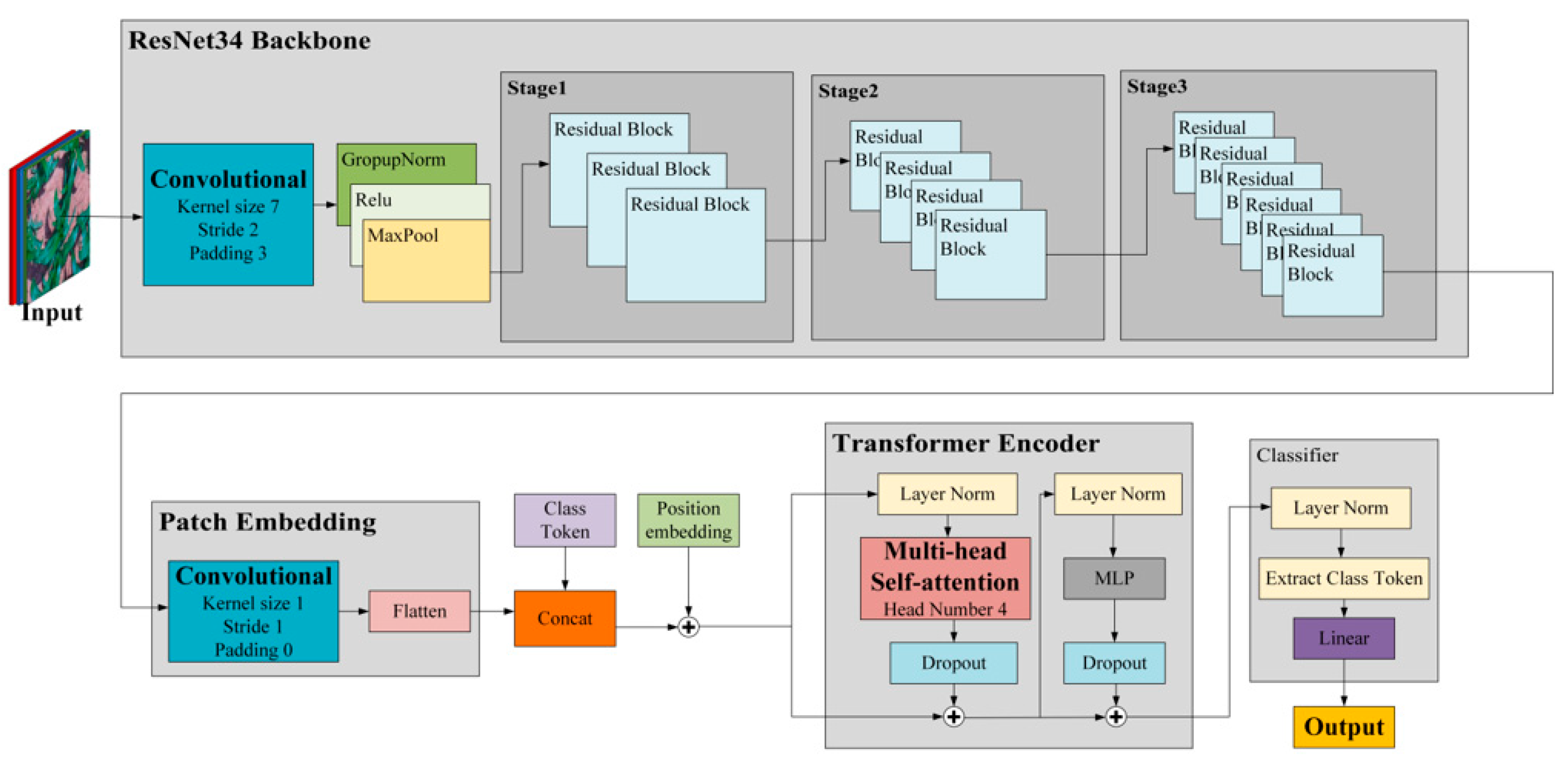

2.2.2. The Proposed Hybrid Model: MaizeHT

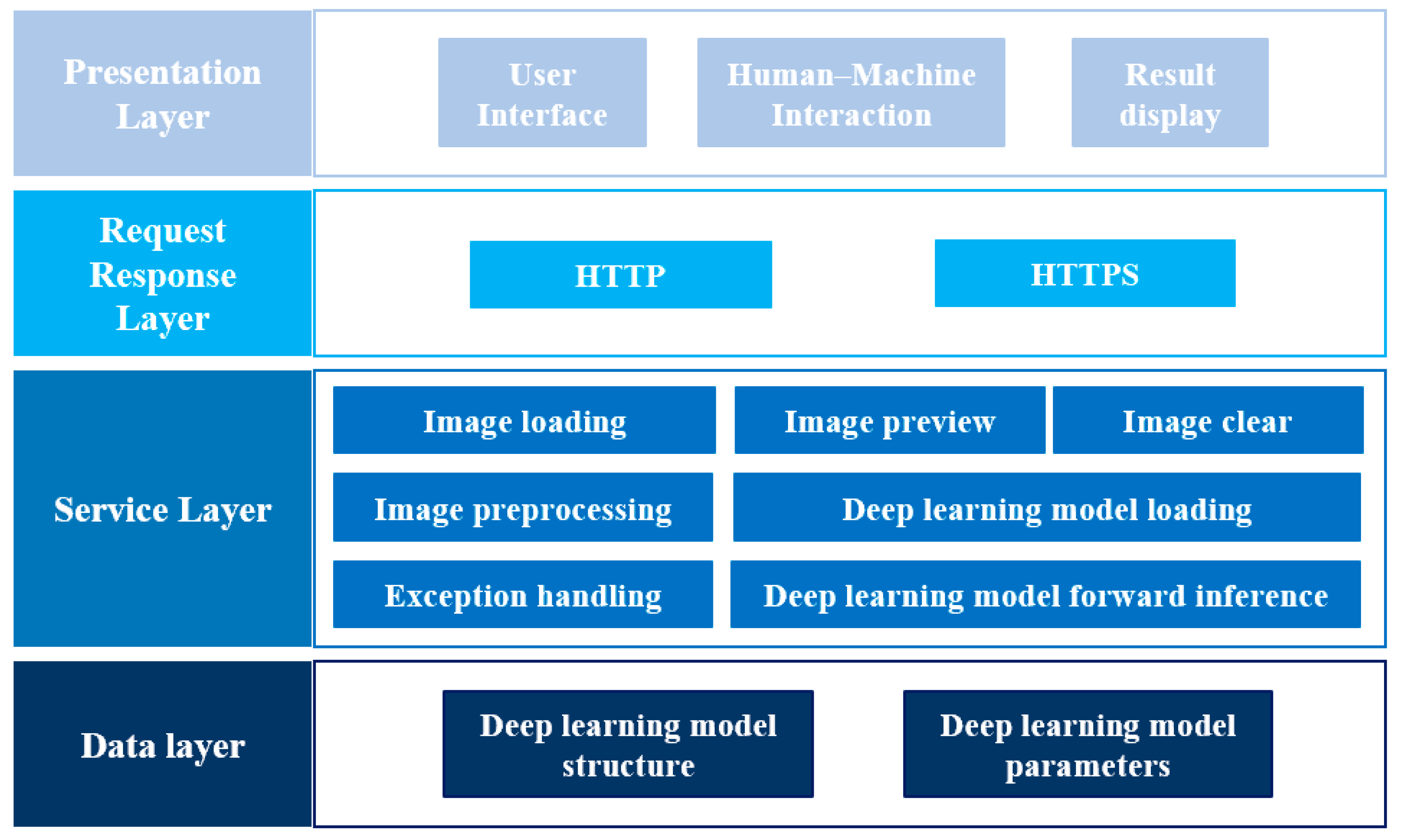

2.2.3. Intelligent Recognition Platform

2.3. Experiment and Evaluation Metrics

2.3.1. Experimental Steps

2.3.2. Evaluation Metrics

3. Results and Discussion

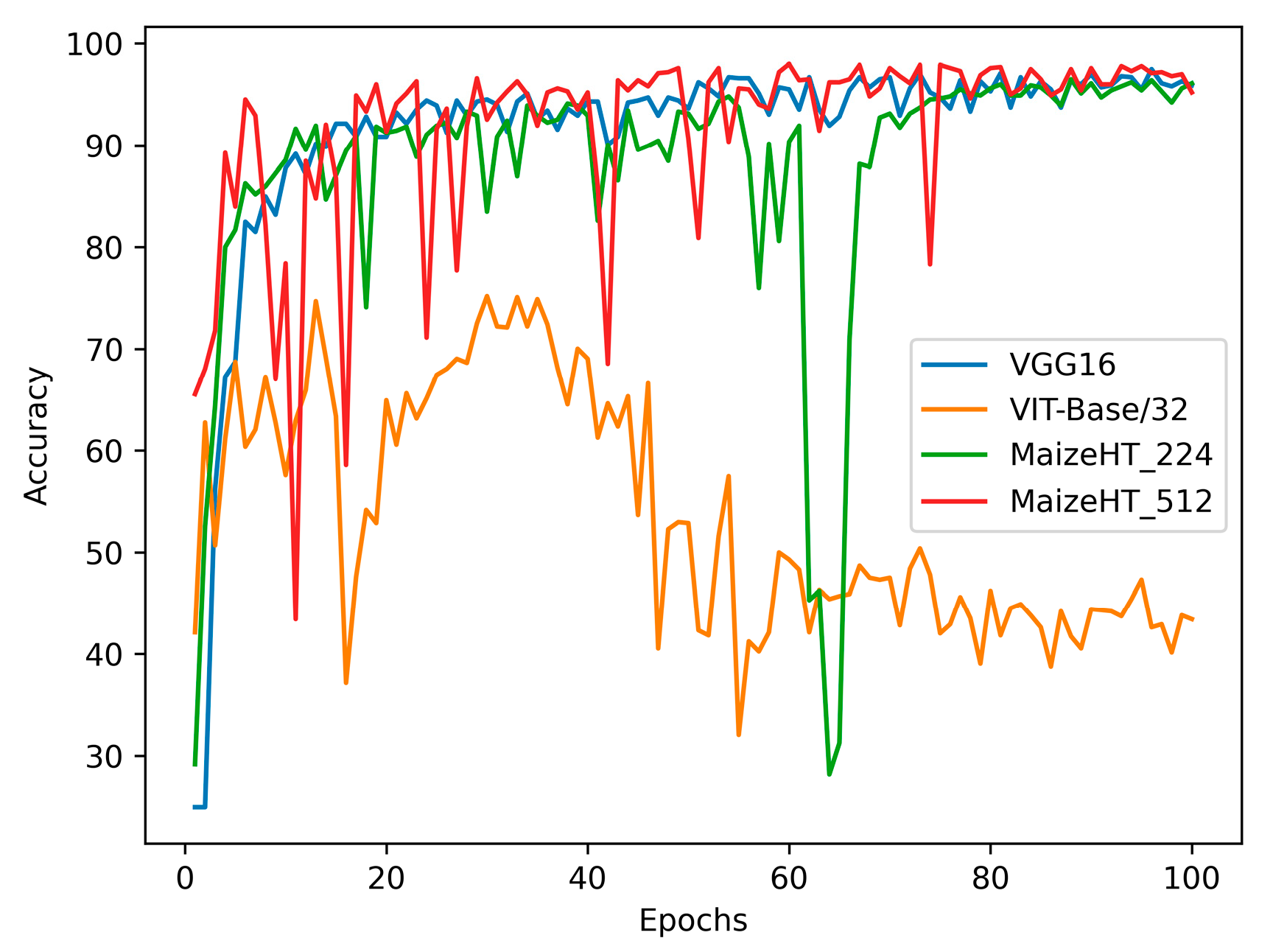

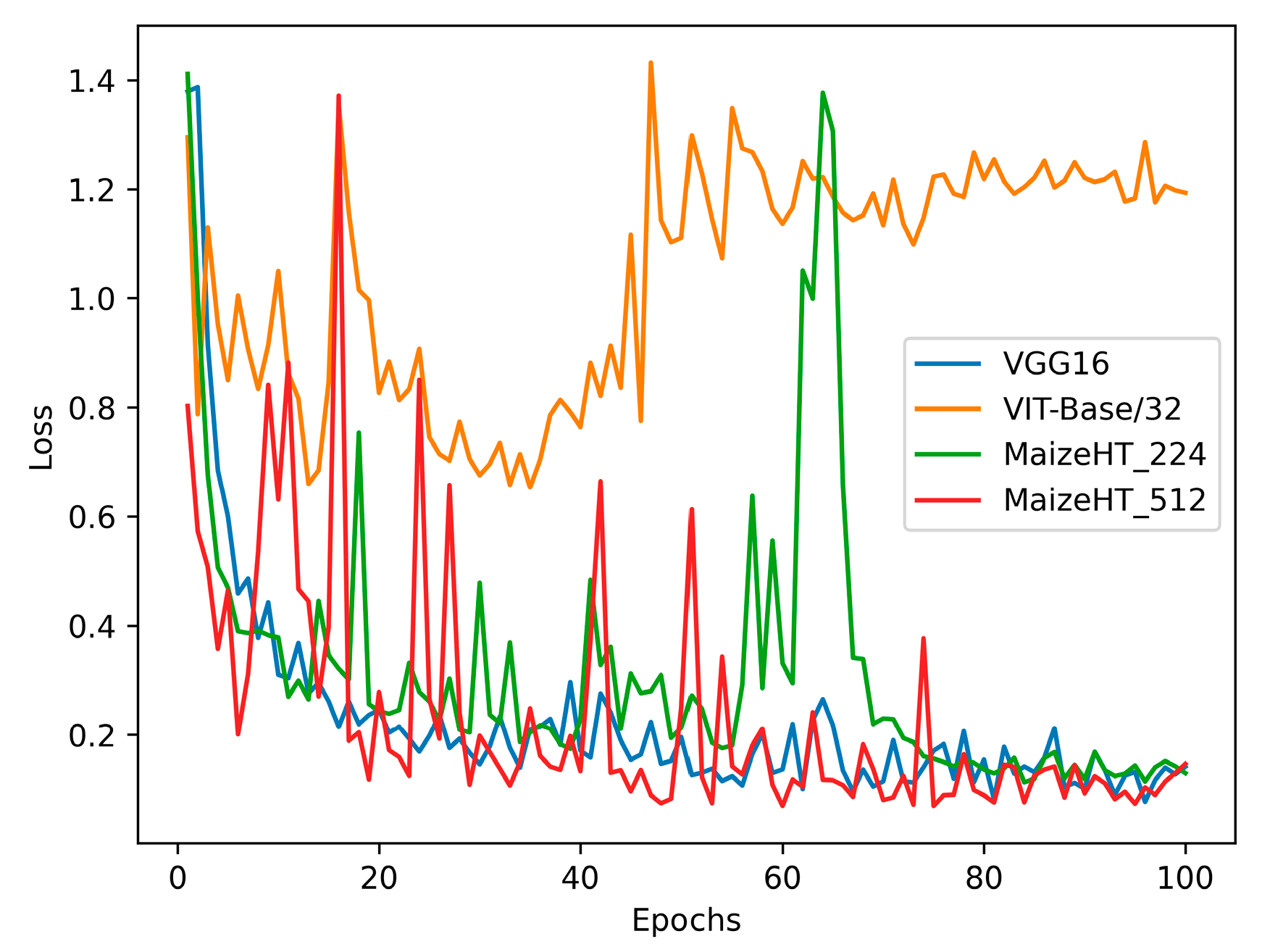

3.1. Model Performance Analysis Results

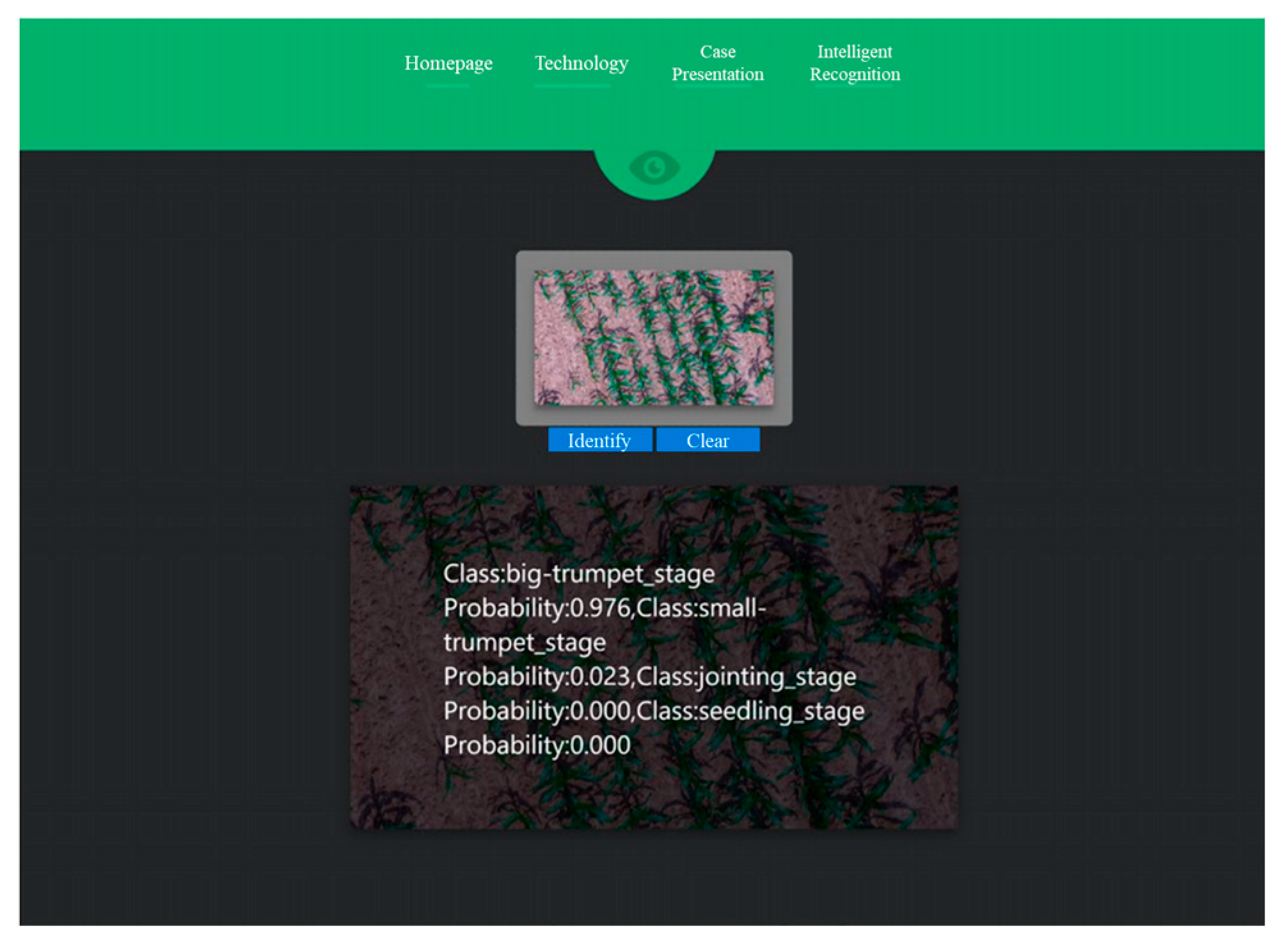

3.2. Platform Application Results

4. Conclusions and Future Work

4.1. Conclusions

4.2. Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Sharma, V.; Tripathi, A.K.; Mittal, H. Technological revolutions in smart farming: Current trends, challenges & future directions. Comput. Electron. Agric. 2022, 201, 107217. [Google Scholar] [CrossRef]

- Pathan, M.; Patel, N.; Yagnik, H.; Shah, M. Artificial cognition for applications in smart agriculture: A comprehensive review. Artif. Intell. Agric. 2020, 4, 81–95. [Google Scholar] [CrossRef]

- Luo, Y.; Cai, X.; Qi, J.; Guo, D.; Che, W. FPGA–accelerated CNN for real-time plant disease identification. Comput. Electron. Agric. 2023, 207, 107715. [Google Scholar] [CrossRef]

- Hridoy, R.H.; Tarek Habib, M.; Sadekur Rahman, M.; Uddin, M.S. Deep Neural Networks-Based Recognition of Betel Plant Diseases by Leaf Image Classification. In Evolutionary Computing and Mobile Sustainable Networks; Lecture Notes on Data Engineering and Communications Technologies; Springer: Singapore, 2022; pp. 227–241. [Google Scholar]

- Xu, W.; Li, W. Deep residual neural networks with feature recalibration for crop image disease recognition. Crop Prot. 2024, 176, 106488. [Google Scholar] [CrossRef]

- Zou, K.; Liao, Q.; Zhang, F.; Che, X.; Zhang, C. A segmentation network for smart weed management in wheat fields. Comput. Electron. Agric. 2022, 202, 107303. [Google Scholar] [CrossRef]

- Jiang, H.; Zhang, C.; Qiao, Y.; Zhang, Z.; Zhang, W.; Song, C. CNN feature based graph convolutional network for weed and crop recognition in smart farming. Comput. Electron. Agric. 2020, 174, 105450. [Google Scholar] [CrossRef]

- Pathak, H.; Igathinathane, C.; Howatt, K.; Zhang, Z. Machine learning and handcrafted image processing methods for classifying common weeds in corn field. Smart Agric. Technol. 2023, 5, 100249. [Google Scholar] [CrossRef]

- Carlier, A.; Dandrifosse, S.; Dumont, B.; Mercatoris, B. Wheat Ear Segmentation Based on a Multisensor System and Superpixel Classification. Plant Phenomics 2022, 2022, 9841985. [Google Scholar] [CrossRef]

- Madec, S.; Jin, X.; Lu, H.; De Solan, B.; Liu, S.; Duyme, F.; Heritier, E.; Baret, F. Ear density estimation from high resolution RGB imagery using deep learning technique. Agric. For. Meteorol. 2019, 264, 225–234. [Google Scholar] [CrossRef]

- Vega, F.A.; Ramírez, F.C.; Saiz, M.P.; Rosúa, F.O. Multi-temporal imaging using an unmanned aerial vehicle for monitoring a sunflower crop. Biosyst. Eng. 2015, 132, 19–27. [Google Scholar] [CrossRef]

- Yang, M.-D.; Tseng, H.-H.; Hsu, Y.-C.; Tsai, H.P. Semantic Segmentation Using Deep Learning with Vegetation Indices for Rice Lodging Identification in Multi-date UAV Visible Images. Remote Sens. 2020, 12, 633. [Google Scholar] [CrossRef]

- Biabi, H.; Abdanan Mehdizadeh, S.; Salehi Salmi, M. Design and implementation of a smart system for water management of lilium flower using image processing. Comput. Electron. Agric. 2019, 160, 131–143. [Google Scholar] [CrossRef]

- Niu, Y.; Han, W.; Zhang, H.; Zhang, L.; Chen, H. Estimating maize plant height using a crop surface model constructed from UAV RGB images. Biosyst. Eng. 2024, 241, 56–67. [Google Scholar] [CrossRef]

- Tsouros, D.C.; Bibi, S.; Sarigiannidis, P.G. A Review on UAV-Based Applications for Precision Agriculture. Information 2019, 10, 349. [Google Scholar] [CrossRef]

- Lee, C.-J.; Yang, M.-D.; Tseng, H.-H.; Hsu, Y.-C.; Sung, Y.; Chen, W.-L. Single-plant broccoli growth monitoring using deep learning with UAV imagery. Comput. Electron. Agric. 2023, 207, 107739. [Google Scholar] [CrossRef]

- Xu, Y.; Zhao, B.; Zhai, Y.; Chen, Q.; Zhou, Y. Maize Diseases Identification Method Based on Multi-Scale Convolutional Global Pooling Neural Network. IEEE Access 2021, 9, 27959–27970. [Google Scholar] [CrossRef]

- Ahila Priyadharshini, R.; Arivazhagan, S.; Arun, M.; Mirnalini, A. Maize leaf disease classification using deep convolutional neural networks. Neural Comput. Appl. 2019, 31, 8887–8895. [Google Scholar] [CrossRef]

- An, J.; Li, W.; Li, M.; Cui, S.; Yue, H. Identification and Classification of Maize Drought Stress Using Deep Convolutional Neural Network. Symmetry 2019, 11, 256. [Google Scholar] [CrossRef]

- Yue, Y.; Li, J.-H.; Fan, L.-F.; Zhang, L.-L.; Zhao, P.-F.; Zhou, Q.; Wang, N.; Wang, Z.-Y.; Huang, L.; Dong, X.-H. Prediction of maize growth stages based on deep learning. Comput. Electron. Agric. 2020, 172, 105351. [Google Scholar] [CrossRef]

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. Commun. ACM 2017, 60, 84–90. [Google Scholar] [CrossRef]

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556. [Google Scholar]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778. [Google Scholar]

- Huang, G.; Liu, Z.; Van der Maaten, L. Densely connected convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 4700–4708. [Google Scholar]

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 1–9. [Google Scholar]

- Tan, M.; Le, Q.V. Efficientnet: Rethinking model scaling for convolutional neural networks. In Proceedings of the International Conference on Machine Learning (PMLR), Long Beach, CA, USA, 9–15 June 2019; pp. 6105–6114. [Google Scholar]

- Tan, M.; Le, Q.V. Efficientnetv2: Smaller models and faster training. In Proceedings of the International Conference on Machine Learning (PMLR), Virtual, 18–24 July 2021; pp. 10096–10106. [Google Scholar]

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv 2020, arXiv:2010.11929. [Google Scholar] [CrossRef]

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical Vision Transformer using Shifted Windows. arXiv 2021, arXiv:2103.14030. [Google Scholar] [CrossRef]

- Ioffe, S.; Szegedy, C. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In Proceedings of the 32nd International Conference on Machine Learning (PMLR), Lille, France, 6–11 July 2015; pp. 448–456. [Google Scholar]

- Wu, Y.; He, K. Group normalization. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 3–19. [Google Scholar]

- Ba, J.L.; Kiros, J.R.; Hinton, G.E. Layer normalization. arXiv 2016, arXiv:1607.06450. [Google Scholar] [CrossRef]

- Kingma, D.P.; Ba, J.L. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980. [Google Scholar] [CrossRef]

| Growth Stage | Training Dataset | Validation Dataset | Test Dataset |

|---|---|---|---|

| Seedling | 2012 | 249 | 251 |

| Jointing | 2010 | 250 | 250 |

| Small trumpet | 2004 | 251 | 250 |

| Big trumpet | 2006 | 254 | 253 |

| Configuration | Parameter |

|---|---|

| CPU | Intel Xeon Gold 6142 |

| GPU | Nvidia RTX 3080 |

| Operating system | Ubuntu 18.04 |

| Accelerated environment | CUDA11.2 cuDNN8.1.1 |

| Development environment | Pycharm2021.1 |

| Random access memory | 27.1 GB |

| Video memory | 10.5 GB |

| PyTorch version | v1.10 |

| Stage | Precision | Recall | Specificity | F1 Score |

|---|---|---|---|---|

| Seedling | 0.976 | 0.976 | 0.992 | 0.976 |

| Jointing | 0.972 | 0.964 | 0.991 | 0.968 |

| Small trumpet | 0.957 | 0.980 | 0.985 | 0.968 |

| Big trumpet | 0.980 | 0.964 | 0.993 | 0.972 |

| Stage | Precision | Recall | Specificity | F1 Score |

|---|---|---|---|---|

| Seedling | 0.996 | 0.984 | 0.999 | 0.990 |

| Jointing | 0.996 | 0.980 | 0.999 | 0.988 |

| Small trumpet | 0.973 | 0.996 | 0.991 | 0.984 |

| Big trumpet | 0.984 | 0.988 | 0.995 | 0.986 |

| Algorithms | Input Resolution | Params (M) | Accuracy (%) | Loss | FLOPs (G) |

|---|---|---|---|---|---|

| CNN | |||||

| AlexNet | 224 × 224 | 25.551 | 97.81 | 0.07843 | 0.633 |

| VGG16 | 224 × 224 | 21.138 | 96.31 | 0.15340 | 14.311 |

| VGG16 | 512 × 512 | 21.138 | 97.81 | 0.06835 | 74.743 |

| ResNet18 | 224 × 224 | 11.706 | 97.31 | 0.07815 | 1.694 |

| ResNet34 | 224 × 224 | 21.815 | 98.21 | 0.06974 | 3.419 |

| ResNet50 | 512 × 512 | 25.617 | 99.48 | 0.01923 | 19.997 |

| DenseNet121 | 512 × 512 | 7.217 | 99.23 | 0.03678 | 13.939 |

| GoogLeNet | 512 × 512 | 5.863 | 99.46 | 0.01909 | 7.318 |

| EfficientNet_V1 (B6) | 512 × 512 | 40.745 | 99.24 | 0.02252 | 16.601 |

| EfficientNet_V2 (L) | 512 × 512 | 117.239 | 99.60 | 0.01848 | 59.755 |

| Self-attention | |||||

| Vision Transformer (base-p16) | 224 × 224 | 85.611 | 97.51 | 0.06673 | 15.691 |

| Vision Transformer (base-p32) | 224 × 224 | 87.381 | 98.41 | 0.05135 | 4.063 |

| Vision Transformer (large-p16) | 224 × 224 | 303.002 | 98.21 | 0.06088 | 55.550 |

| Swin-Transformer (tiny) | 224 × 224 | 27.474 | 97.61 | 0.06904 | 4.051 |

| Swin-Transformer (small) | 224 × 224 | 48.750 | 97.61 | 0.06139 | 7.927 |

| Swin-Transformer (base) | 224 × 224 | 86.626 | 97.71 | 0.06968 | 14.087 |

| MaizeHT_224 (proposed) | 224 × 224 | 15.446 | 97.71 | 0.10176 | 4.148 |

| MaizeHT_512 (proposed) | 512 × 512 | 15.446 | 98.71 | 0.04638 | 5.416 |

| Algorithms | Input Resolution | Train | Validation | Test | |||

|---|---|---|---|---|---|---|---|

| Accuracy (%) | Loss | Accuracy (%) | Loss | Accuracy (%) | Loss | ||

| CNN | |||||||

| AlexNet | 224 × 224 | 96.89 | 0.08802 | 97.21 | 0.08293 | 97.81 | 0.07843 |

| VGG16 | 224 × 224 | 98.63 | 0.04460 | 95.71 | 0.19580 | 96.31 | 0.15340 |

| VGG16 | 512 × 512 | 98.63 | 0.06064 | 97.21 | 0.10111 | 97.81 | 0.06835 |

| ResNet18 | 224 × 224 | 95.80 | 0.11380 | 96.51 | 0.10940 | 97.31 | 0.07815 |

| ResNet34 | 224 × 224 | 96.28 | 0.10350 | 96.02 | 0.10790 | 98.21 | 0.06974 |

| ResNet50 | 512 × 512 | 99.23 | 0.02630 | 98.90 | 0.04082 | 99.48 | 0.01923 |

| DenseNet121 | 512 × 512 | 97.82 | 0.06604 | 98.71 | 0.05063 | 99.23 | 0.03678 |

| GoogLeNet | 512 × 512 | 98.63 | 0.03910 | 98.51 | 0.04487 | 99.46 | 0.01909 |

| EfficientNet_V1 (B6) | 512 × 512 | 99.23 | 0.02016 | 98.80 | 0.03989 | 99.24 | 0.02252 |

| EfficientNet_V2 (L) | 512 × 512 | 99.61 | 0.00922 | 98.61 | 0.05329 | 99.60 | 0.01848 |

| Self-attention | |||||||

| Vision Transformer (base-p16) | 224 × 224 | 98.64 | 0.04666 | 97.21 | 0.07104 | 97.51 | 0.06673 |

| Vision Transformer (base-p32) | 224 × 224 | 98.44 | 0.05128 | 97.41 | 0.09522 | 98.41 | 0.05135 |

| Vision Transformer (large-p16) | 224 × 224 | 98.82 | 0.03576 | 97.61 | 0.06434 | 98.21 | 0.06088 |

| Swin-Transformer (tiny) | 224 × 224 | 97.88 | 0.06934 | 97.41 | 0.08803 | 97.61 | 0.06904 |

| Swin-Transformer (small) | 224 × 224 | 98.26 | 0.05437 | 97.21 | 0.09485 | 97.61 | 0.06139 |

| Swin-Transformer (base) | 224 × 224 | 98.29 | 0.05546 | 98.11 | 0.06973 | 97.71 | 0.06968 |

| MaizeHT_224 (proposed) | 224 × 224 | 97.39 | 0.08262 | 96.41 | 0.1139 | 97.71 | 0.10176 |

| MaizeHT_512 (proposed) | 512 × 512 | 98.06 | 0.06283 | 97.81 | 0.0731 | 98.71 | 0.04638 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Ni, X.; Wang, F.; Huang, H.; Wang, L.; Wen, C.; Chen, D. A CNN- and Self-Attention-Based Maize Growth Stage Recognition Method and Platform from UAV Orthophoto Images. Remote Sens. 2024, 16, 2672. https://doi.org/10.3390/rs16142672

Ni X, Wang F, Huang H, Wang L, Wen C, Chen D. A CNN- and Self-Attention-Based Maize Growth Stage Recognition Method and Platform from UAV Orthophoto Images. Remote Sensing. 2024; 16(14):2672. https://doi.org/10.3390/rs16142672

Chicago/Turabian StyleNi, Xindong, Faming Wang, Hao Huang, Ling Wang, Changkai Wen, and Du Chen. 2024. "A CNN- and Self-Attention-Based Maize Growth Stage Recognition Method and Platform from UAV Orthophoto Images" Remote Sensing 16, no. 14: 2672. https://doi.org/10.3390/rs16142672

APA StyleNi, X., Wang, F., Huang, H., Wang, L., Wen, C., & Chen, D. (2024). A CNN- and Self-Attention-Based Maize Growth Stage Recognition Method and Platform from UAV Orthophoto Images. Remote Sensing, 16(14), 2672. https://doi.org/10.3390/rs16142672