1. Introduction

Forecasting precipitation at short- and mid-term horizons (also known as rain nowcasting) is important for real-life problems. For instance, the World Meteorological Organization recently set out concrete applications in agricultural management, aviation, and the management of severe meteorological events [1]. Rain nowcasting requires a quick and reliable forecast of a process that is highly non-stationary at a local scale. Because of strong constraints on computing time, operational short-term precipitation forecasting systems are very simple in their design. To our knowledge, there are two main types of operational approaches, both based on radar imagery. Methods based on storm cell tracking [2,3,4,5] try to match image structures (storm cells, obtained by thresholding) seen between two successive acquisitions. Matching criteria are based on the similarity and proximity of these structures. Once the correspondences and displacements have been established, the position of these cells is extrapolated to the desired time horizon. The second category relies on the estimation of a dense field of apparent velocities at each pixel of the image, modeled by the optical flow [6,7]. The forecast is also obtained by extrapolation in time, through advection of the last observation by the apparent velocity field.

Over the past few years, machine learning has proved able to address rain nowcasting and has been applied in several regions [8,9,10,11,12,13]. More recently, new neural network architectures have been used: in [14], a PredNet [15] is adapted to predict rain in the region of Kyoto. In [16], a U-Net architecture [17] is used for rain nowcasting of low- to middle-intensity rainfalls in the region of Seattle. The key idea in these works is to train a neural network on sequences of consecutive rain radar images in order to predict the rainfall at a subsequent time. Although rain nowcasting based on deep learning is widely used, it is driven by observed radar or satellite images alone. In this work, we propose an algorithm merging meteorological forecasts with observed radar data to improve these predictions.

Météo-France (the French national weather service) recently released MeteoNet [18], a database that provides a large number of meteorological parameters on the French territory. The data available are as diverse as rainfalls (acquired by Doppler radars of the Météo-France network), the outcomes of two meteorological models (large-scale ARPEGE and finer-scale AROME), topographical masks, and so on. The outcomes of the weather forecast model AROME include hourly forecasts of wind velocity. Considering that advection is a prominent factor in the evolution of precipitation, we chose to include wind as a significant additional predictor.

The forecasts of a neural network are based on a set of parameters weighting the features of its inputs. A training procedure adjusts the network's parameters to emphasize the weights on the features that are significant for the network's predictions. The deep learning model used in this work is a shallow U-Net architecture [17], known for its skill in image processing [19]. Moreover, this architecture is flexible enough to easily add relevant inputs, which is an interesting property for data fusion. Two networks were trained on the data of MeteoNet restricted to the region of Brest in France. Their inputs were sequences of rain radar images and wind forecasts five minutes apart over an hour, and their targets were rain radar images at a horizon of 30 min for the first neural network and 1 h for the second. An accurate regression of rainfall is an ill-posed problem, mainly because the dataset is imbalanced and heavily skewed towards null and small values. We chose to transform the problem into a classification problem, similarly to the work in [16]. This approach is relevant given the potential uses of rain nowcasting, especially in predicting flash flooding, in aviation, and in agriculture, where the exact measurement of rain is not as important as the reaching of a threshold [1]. We split the rain data into several classes depending on the precipitation rate. A major issue faced during the training is rain scarcity. Given that an overwhelming number of images correspond to a clear sky, the training dataset is imbalanced in favor of null rainfalls, which makes it quite difficult for a neural network to extract significant features during training. We present a method of data over-sampling to address this issue.

We compared our model to the persistence model, which consists of taking the last rain radar image of an input sequence as the prediction (though simplistic, this model is frequently used in rain nowcasting [11,12,16]), and to an operational, optical flow-based rain nowcasting system [20]. We also compared the neural network merging radar images and wind forecasts to a similar neural network trained using only rain radar images as inputs.

2. Problem Statement

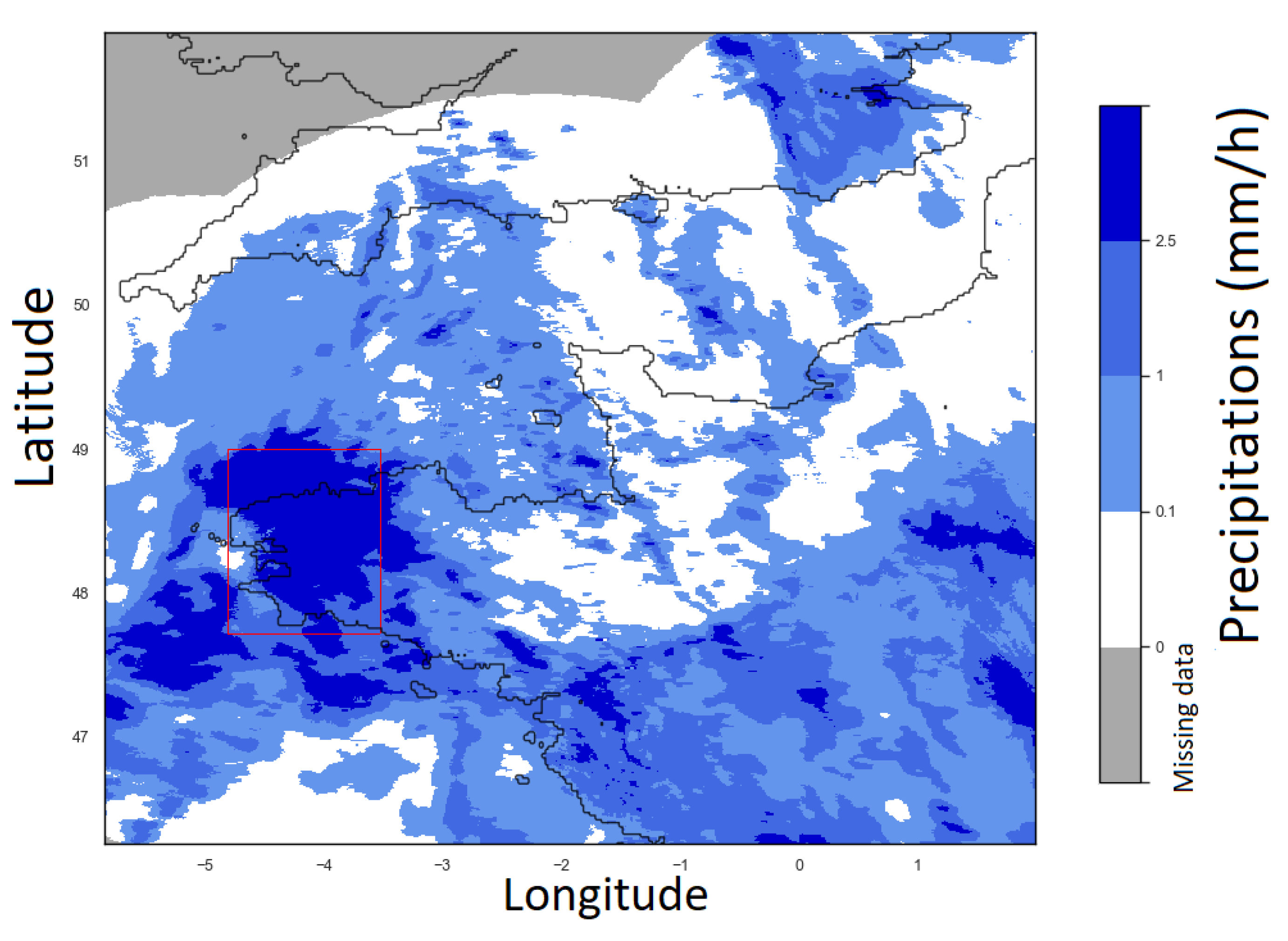

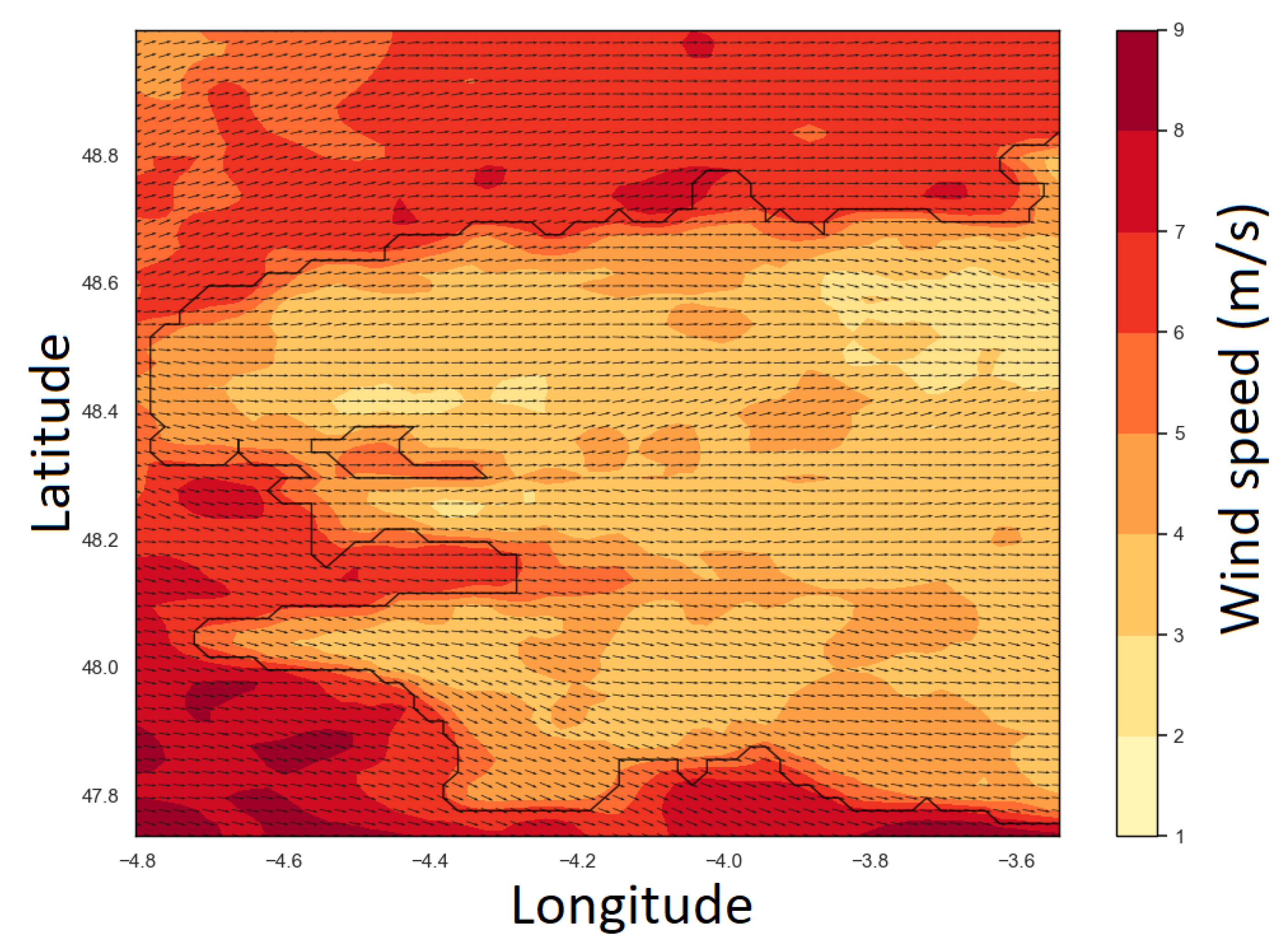

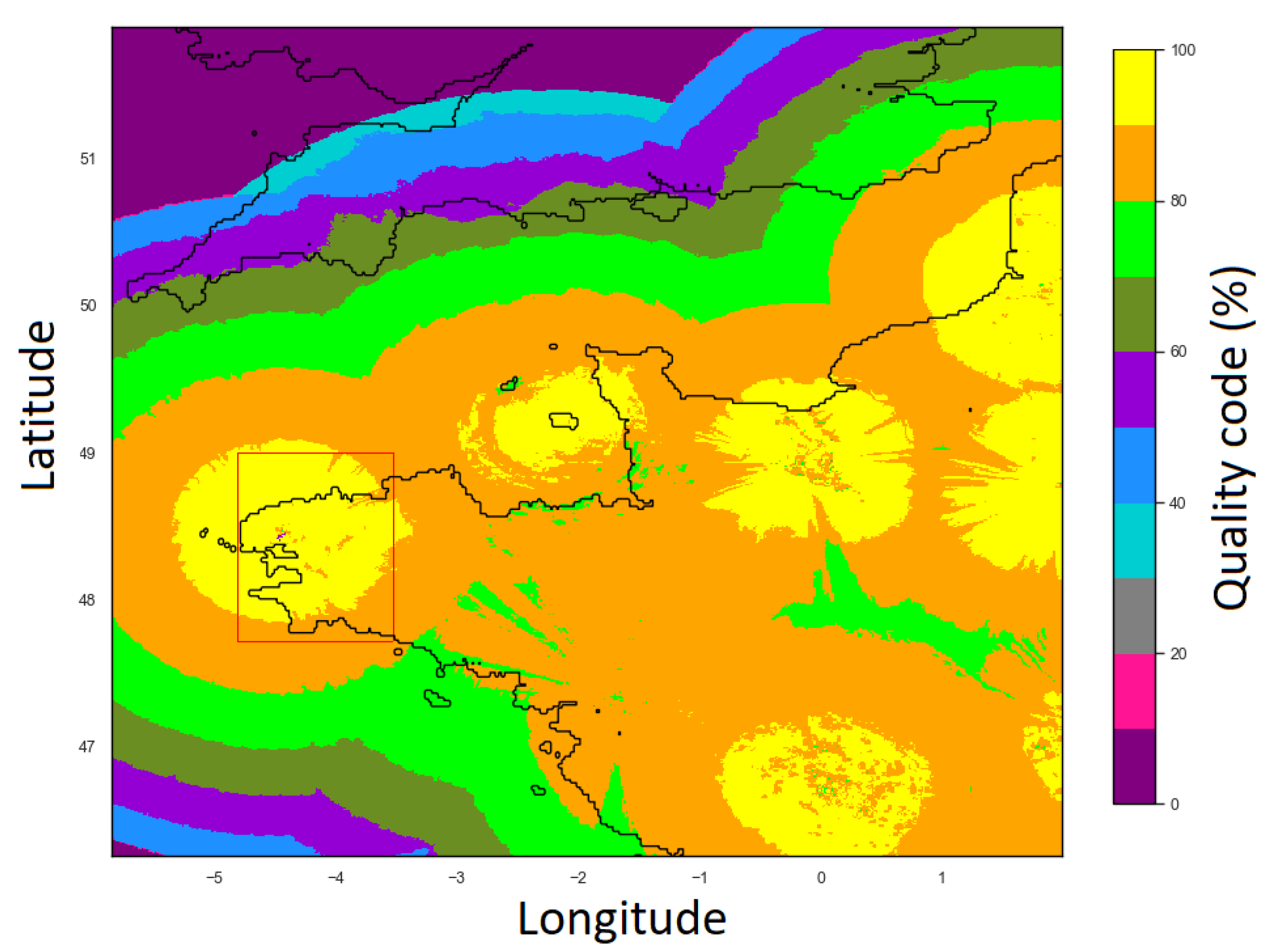

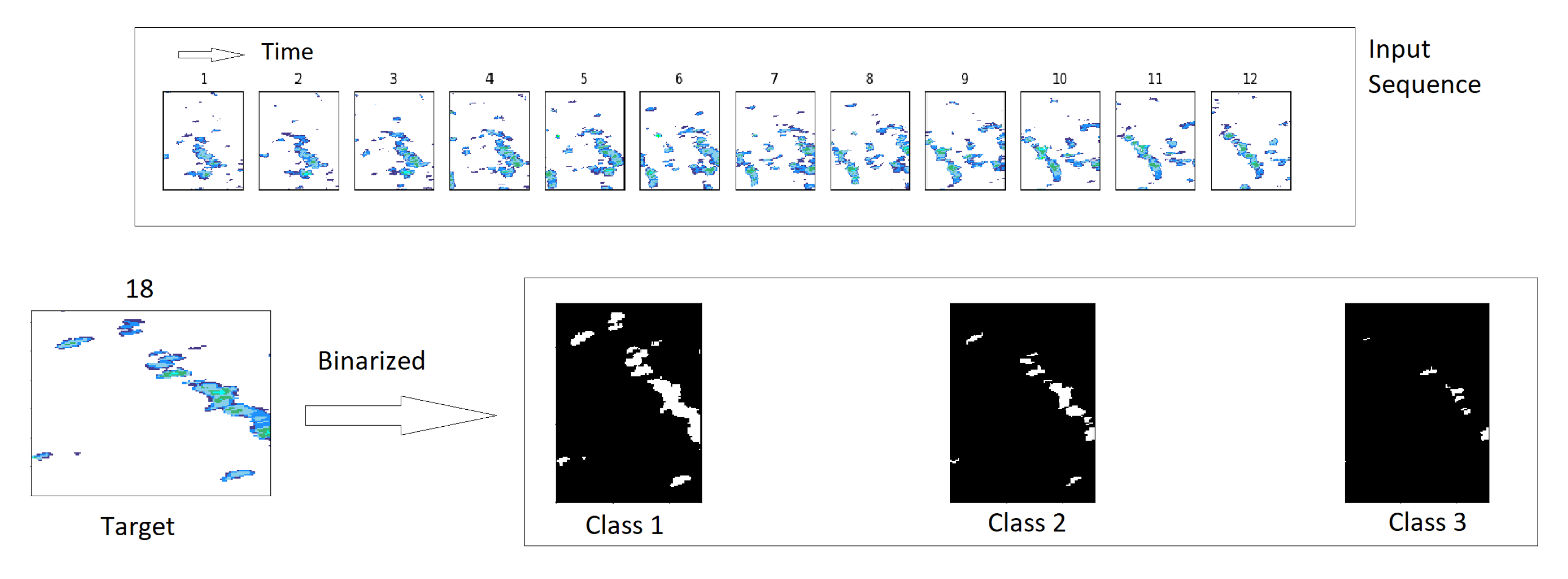

Two types of images are used: rain radar images (also referred to as rainfall maps, see Figure 1) providing for each pixel the accumulation of rainfall over 5 min, and wind maps (see Figure 2) providing for each pixel the 10 m wind velocity components U and V. Both rain and wind data will be detailed further in Section 3.

Each meteorological parameter (rainfall, wind velocity U, and wind velocity V) is available across metropolitan France at regular time steps. The images are stacked along the temporal axis. Each pixel is indexed by three indices $(i, j, k)$: $i$ and $j$ index space and map a data point to its longitude $lon_i$ and latitude $lat_j$, respectively; $k$ indexes time and maps a data point to its time step $t_k$. In the following, time and spatial resolutions are assumed to be constant: 0.01 degrees spatially and 5 min temporally.

We define $\mathrm{CRF}_{i,j,k}$ as the cumulative rainfall between times $t_{k-1}$ and $t_k$ at longitude $lon_i$ and latitude $lat_j$. We define $U_{i,j,k}$ and $V_{i,j,k}$, respectively, as the horizontal (West to East) and vertical (South to North) components of the wind velocity vector at $t_k$, longitude $lon_i$, and latitude $lat_j$. Finally, we define $M_{i,j,k} = (\mathrm{CRF}_{i,j,k}, U_{i,j,k}, V_{i,j,k})$ as the vector stacking all data. Given a sequence of MeteoNet data $(M_{k-s+1}, \dots, M_k)$, where $s \in \mathbb{N}$ is the length of the sequence, the target of our study would ideally be to forecast the rainfall at a subsequent time, $\mathrm{CRF}_{k+p}$, where $p \in \mathbb{N}$ is the lead time step.

As stated before, we have chosen to transform this regression problem into a classification problem. To define the classes, we consider a set of $N_L$ ordered threshold values $\tau_1 < \dots < \tau_{N_L}$. These values split the interval $[0, +\infty[$ into $N_L$ classes defined as follows: for $m \in \{1, \dots, N_L\}$, class $C_m$ is defined by $C_m = \{x \in [0, +\infty[ \; : \; x \ge \tau_m\}$. A pixel belongs to a class if the rainfall accumulated between the time $t_{k-1}$ and the time $t_k$ reaches the threshold associated with this class. Splitting the cumulative rainfalls into these $N_L$ classes converts the regression task from directly predicting $\mathrm{CRF}_{i,j,k+p}$ to determining to which classes $\mathrm{CRF}_{i,j,k+p}$ belongs. The classes are embedded, i.e., if a value $\mathrm{CRF}_{i,j,k}$ belongs to $C_m$, then it also belongs to $C_n$ for all $1 \le n < m$. Therefore, a prediction can belong to several classes. This type of problem with embedded classes is formalized as a multi-label classification problem, and it is often transformed into $N_L$ binary classification problems using the binary relevance method [21]. Therefore, we will train $N_L$ binary classifiers; classifier $m$ determines the probability that $\mathrm{CRF}_{i,j,k+p}$ exceeds the threshold $\tau_m$.

Knowing $(M_{k-s+1}, \dots, M_k)$, the classifier $m$ will estimate the probability $P^m_{i,j,k}$ that the cumulative rainfall $\mathrm{CRF}_{i,j,k+p}$ belongs to $C_m$:

$$P^m_{i,j,k} = \mathbb{P}\left(\mathrm{CRF}_{i,j,k+p} \in C_m \mid M_{k-s+1}, \dots, M_k\right),$$

with $P^m_{i,j,k}$ reaching 1 if $\mathrm{CRF}_{i,j,k+p}$ surely belongs to class $C_m$. Ultimately, every value in the sequence of probabilities $(P^m_{i,j,k})_{m \in \{1, \dots, N_L\}}$ that is above 0.5 marks that data point as belonging to the corresponding class $C_m$. When no classifier satisfies $P^m_{i,j,k} \ge 0.5$, no rain is predicted.
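The embedded classes and the 0.5 decision rule can be illustrated with a minimal NumPy sketch. The threshold values, the use of "greater than or equal" at the class boundary, and the function names are assumptions made for illustration; they are not taken from the paper.

```python
import numpy as np

# Hypothetical thresholds (same unit as the rainfall maps); not the paper's values.
THRESHOLDS = np.array([0.1, 1.0, 2.5])

def to_class_maps(crf, thresholds=THRESHOLDS):
    """Return a (n_classes, H, W) array of 0/1 maps, one per threshold.

    Assumption: a pixel is in class m when its rainfall is >= threshold m.
    """
    crf = np.asarray(crf, dtype=float)
    return (crf[None, :, :] >= thresholds[:, None, None]).astype(np.uint8)

def predicted_classes(probabilities, cutoff=0.5):
    """Mark class m as predicted wherever classifier m outputs at least cutoff."""
    return (np.asarray(probabilities) >= cutoff).astype(np.uint8)

rain = np.array([[0.0, 0.5], [1.2, 3.0]])
targets = to_class_maps(rain)
# Because the classes are embedded, summing over the class axis gives
# the index of the highest class each pixel belongs to (0 means no rain).
highest_class = targets.sum(axis=0)
```

A pixel whose rainfall exceeds the highest threshold is labeled 1 in every channel, which is what makes the problem multi-label rather than multi-class.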

4. Method

4.1. Definition of the Datasets

The whole database is split into training, validation, and test sets. The years 2016 and 2017 are used for the training set. For the year 2018, one week out of two is used in the validation set and the other one in the test set. Before splitting, one hour of data is removed at each cut between two consecutive weeks to prevent data leakage [24]. The splitting process is done on the whole year to assess the seasonal effects of the prediction in both validation and test sets. The training set is used to optimize the so-called trainable parameters of the neural network (see Section 4.3), the validation set is used to tune the hyperparameters (see Table 2), and the test set is finally used to estimate the final scores presented in Section 5.

The inputs of the network are sequences of MeteoNet images: 12 images collected five minutes apart over an hour are concatenated to create an input. Because we use three types of images ($\mathrm{CRF}$, $U$, and $V$), the dimension of the inputs is $36 \times H \times W$ (12 rainfall maps, 12 wind maps $U$, 12 wind maps $V$), where $H \times W$ is the size in pixels of the study area. In the formalism defined in Section 2, $s = 12$.

Each input sequence is associated with its prediction target, which is the rainfall map $p$ time steps after the last image of the input sequence, thresholded with the $N_L$ different thresholds. The dimension of the target is $N_L \times H \times W$. Channel $m$ is composed of binary values equal to 1 if $\mathrm{CRF}_{i,j,k+p}$ belongs to class $m$, and 0 otherwise. These class maps are noted $T^m_{i,j,k}$.

An example of an input sequence and its target is given in Figure 6.

If the input sequence or its target contains undefined data (due to an acquisition problem), or if the last image of the input sequence does not contain any rain (the sky is completely clear), the sequence is set aside and is not considered. Each input corresponds to a distinct hour: there is no overlap between the different inputs. Note that overlapping sequences could be an option to increase the size of the training set, even though it can result in overfitting. It has not been used here, as the actual training set contains 16,837 sequences, which is considered large enough. The validation set contains 4293 sequences and the test set contains 4150 sequences.
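The construction and filtering of the input sequences can be sketched as follows. This is an illustrative NumPy version only: it assumes that undefined data are encoded as NaN and that each sequence is stored as an array of shape (12, H, W); the function names are hypothetical.

```python
import numpy as np

SEQ_LEN = 12  # 12 images, five minutes apart (one hour)

def build_input(rain_seq, u_seq, v_seq):
    """Concatenate rain, U and V sequences, each (12, H, W), into (36, H, W)."""
    return np.concatenate([rain_seq, u_seq, v_seq], axis=0)

def is_usable(rain_seq, u_seq, v_seq, target):
    """Reject sequences with undefined data or a rain-free last radar image.

    Assumption: undefined pixels are stored as NaN.
    """
    arrays = (rain_seq, u_seq, v_seq, target)
    if any(np.isnan(a).any() for a in arrays):
        return False
    return bool((rain_seq[-1] > 0).any())

rng = np.random.default_rng(0)
rain = rng.random((SEQ_LEN, 8, 8))        # toy rainfall maps
u = rng.normal(size=(SEQ_LEN, 8, 8))      # toy wind component U
v = rng.normal(size=(SEQ_LEN, 8, 8))      # toy wind component V
target = rng.random((8, 8))
x = build_input(rain, u, v)
```

Stacking the three parameters along the channel axis is what allows additional predictors to be added later without changing the rest of the pipeline.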

4.1.1. Dealing with Rain Scarcity: Oversampling

Oversampling consists of selecting a subset of sequences of the training base and duplicating them so that they appear several times in each epoch of the training phase (see Section 4.3 for details on epochs and the training phase).

The main issue in rain nowcasting is to tackle rain scarcity, which causes imbalanced classes. Indeed, in the training base, 92.6% of the pixels do not have rain at all (see Table 1). Therefore, the last class is the most underrepresented in the dataset and thus it will be the most difficult to predict. An oversampling procedure is thus proposed to balance this underrepresentation. Note that the validation and test sets are left untouched so that they consistently represent reality during the evaluation of the performance.

Currently, sequences whose target contains an instance of the last class represent roughly one-third of the training base (see Figure 4 and Table 1). These sequences are duplicated until their proportion in the training set reaches a chosen parameter $\eta \in [0, 1]$. In practice, this parameter is chosen to be greater than the original proportion of the last class ($\eta > 1/3$ in this case).

It is worth noting that the oversampling acts image-wise and does not compensate for the unbalanced representation of classes between pixels in each image. The impact and tuning of the parameter $\eta$ are discussed in Section 6.3.
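As an illustration of the duplication step, the sketch below computes how many copies of rare sequences are needed for their share to reach a target proportion, here called `eta`. If $n$ is the number of sequences, $r$ the number of rare ones, and $d$ the number of added duplicates, the condition $(r + d)/(n + d) \ge \eta$ gives $d \ge (\eta n - r)/(1 - \eta)$. Drawing the duplicates uniformly at random is an assumption; the paper does not state how duplicated sequences are chosen.

```python
import math
import random

def extra_copies_needed(n_total, n_rare, eta):
    """Number of duplicated rare sequences needed so that their share reaches eta."""
    if not 0 <= eta < 1:
        raise ValueError("eta must lie in [0, 1)")
    return max(0, math.ceil((eta * n_total - n_rare) / (1 - eta)))

def oversample(indices, rare_indices, eta, seed=0):
    """Return the training indices with rare ones duplicated at random (assumption)."""
    rng = random.Random(seed)
    extra = extra_copies_needed(len(indices), len(rare_indices), eta)
    return list(indices) + [rng.choice(rare_indices) for _ in range(extra)]

all_idx = list(range(9))
rare_idx = [0, 1, 2]          # one third of this toy training base
sampled = oversample(all_idx, rare_idx, eta=0.5)
share = sum(i in rare_idx for i in sampled) / len(sampled)
```

Only the training indices are resampled here, in line with the choice of leaving the validation and test sets untouched.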

4.1.2. Data Normalization

Data used as inputs to train and validate the neural network are first normalized. The normalization procedure for the rain is the following. After computing the maximum cumulative rainfall over the training dataset, $\max(\mathrm{CRF})$, the following transformation is applied to each data point:

$$\mathrm{CRF}_{i,j,k} \leftarrow \frac{\log\left(1 + \mathrm{CRF}_{i,j,k}\right)}{\log\left(1 + \max(\mathrm{CRF})\right)}.$$

This invertible normalization function brings the dataset into the range $[0, 1]$ while spreading out the values closest to 0.

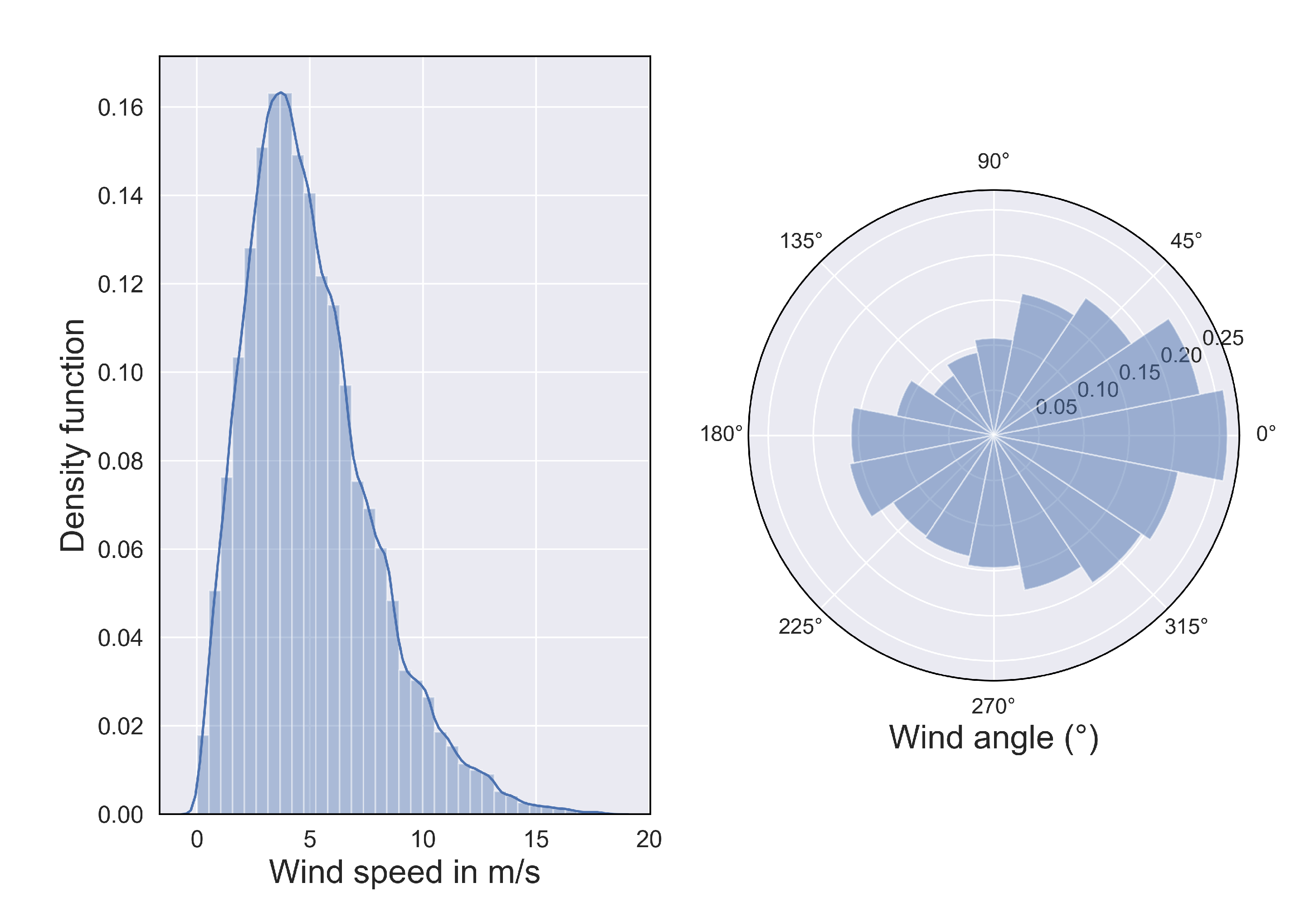

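As for wind data, considering that $U$ and $V$ follow a Gaussian distribution, with $(\mu_U, \sigma_U)$ and $(\mu_V, \sigma_V)$, respectively, the mean and the standard deviation of each wind component over the overall training set, we apply

$$U_{i,j,k} \leftarrow \frac{U_{i,j,k} - \mu_U}{\sigma_U}, \qquad V_{i,j,k} \leftarrow \frac{V_{i,j,k} - \mu_V}{\sigma_V}.$$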
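The two normalizations can be sketched in NumPy as follows. The rain transform is assumed here to have the logarithmic form $\log(1 + x) / \log(1 + \max)$, which is one invertible function that maps $[0, \max]$ onto $[0, 1]$ and spreads out small values; the function names are hypothetical.

```python
import numpy as np

def normalize_rain(crf, crf_max):
    """Map rainfall from [0, crf_max] to [0, 1] with a log transform (assumed form)."""
    return np.log1p(crf) / np.log1p(crf_max)

def denormalize_rain(x, crf_max):
    """Inverse of normalize_rain."""
    return np.expm1(x * np.log1p(crf_max))

def standardize(wind, mean, std):
    """Center and scale a wind component with training-set statistics."""
    return (wind - mean) / std

crf = np.array([0.0, 0.5, 10.0, 50.0])
crf_max = 50.0                      # would be computed on the training set only
x = normalize_rain(crf, crf_max)
wind = np.array([1.0, 2.0, 3.0])
z = standardize(wind, wind.mean(), wind.std())
```

The statistics (`crf_max`, `mean`, `std`) should come from the training set and then be reused unchanged on the validation and test sets.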

4.2. Network Architecture

A convolutional neural network (CNN) is a feedforward neural network stacking several layers: each layer uses the output of the previous layer to calculate its own output, it is a well-established method in computer vision [

25]. We decided to use a specific type of CNN, the U-Net architecture [

17], due to its successes in image segmentation. We chose to perform a temporal embedding through a convolutional model, rather than a Long Short-Term Memory (LSTM) or other recurrent architectures used in other studies (such as [

8,

9]), given that the phenomenon to be predicted is considered to have no memory (also called Markovian process). However, the inclusion of previous time steps remains warranted: the full state of the system is not observed, and the temporal coherence of the time series constraints our prediction to better fit the real rainfall trajectory. The details of the selected architecture are presented in

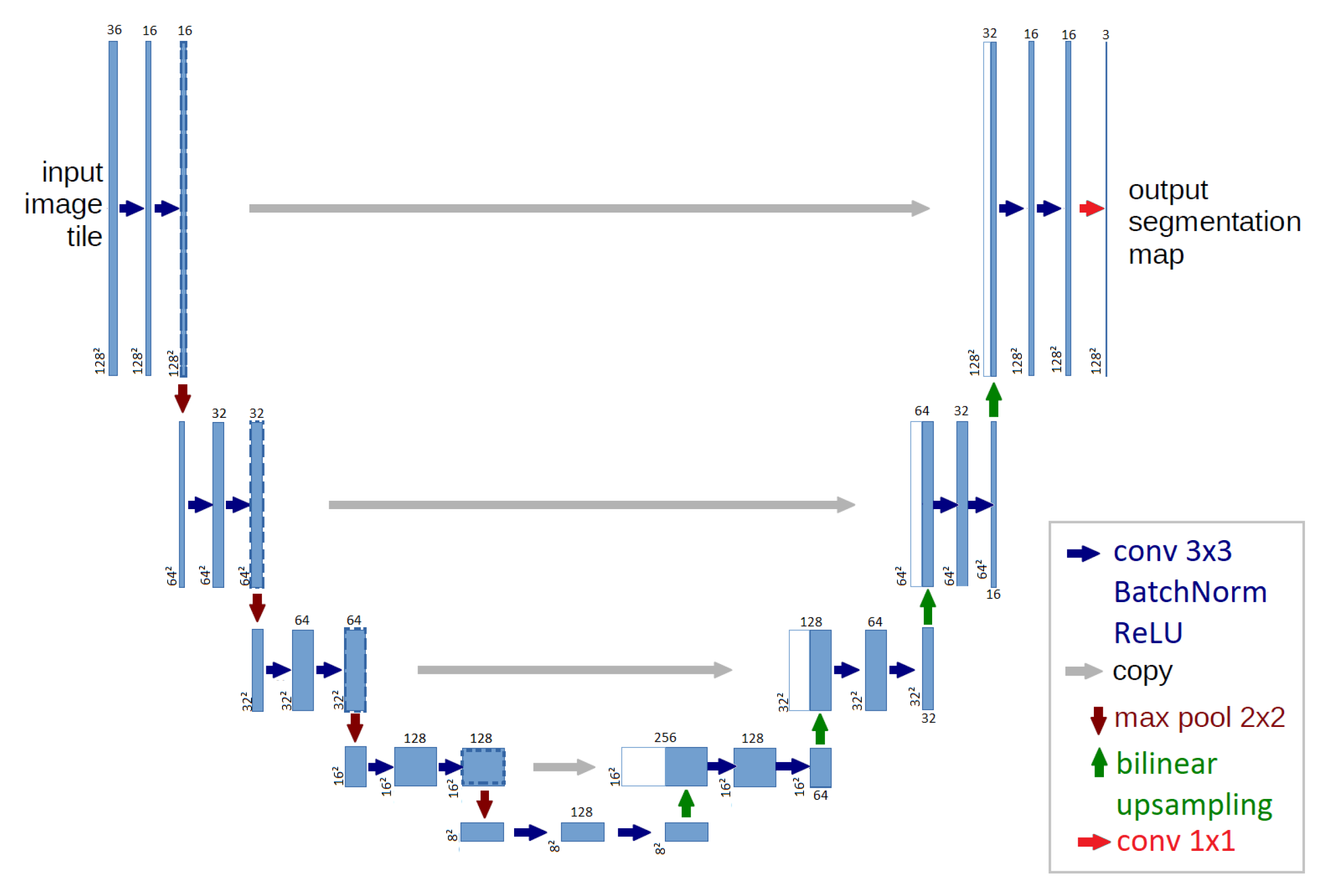

Figure 7.

Like any U-Net network, the architecture is composed of a contracting path, also known as the encoder, and an expanding path, also known as the decoder. The encoding path starts with two convolutional layers. Then, it is composed of four consecutive cells, each being a succession of a max-pooling layer (red arrows in Figure 7, detailed hereafter) followed by two convolutional layers (blue arrows in Figure 7). Note that each convolutional layer used in this architecture is followed by a Batch-norm [26] layer and a rectified linear unit (ReLU) [27] (Batch-norm and ReLU are detailed further down). At the bottom of the network, two convolutional layers are applied. Then the decoding path is composed of four consecutive cells, each being a succession of a bilinear upsampling layer (green arrow in Figure 7, detailed hereafter) followed by two convolutional layers. Finally, a $1 \times 1$ convolutional layer (red arrow in Figure 7) combined with an activation function maps the output of the last cell to the segmentation map. The operation and the aim of each layer are now detailed.

4.2.1. Convolutional Layers

Convolutional layers perform a convolution by a kernel of size $3 \times 3$; a padding of 1 is used to preserve the input size. The parameters of the convolutions are learned during the training phase. Each convolutional layer in this architecture is followed by a Batch-norm and a ReLU layer.

A Batch-norm layer re-centers and re-scales its inputs to ensure that the mean is close to 0 and the standard deviation is close to 1. Batch-norm helps the network to train faster and to be more stable [26]. For an input batch $\mathrm{Batch}$, the output is $y = \gamma \, \frac{\mathrm{Batch} - E[\mathrm{Batch}]}{\sqrt{V[\mathrm{Batch}] + \epsilon}} + \beta$, where $\gamma$ and $\beta$ are trainable parameters, $E[\mathrm{Batch}]$ is the average, and $V[\mathrm{Batch}]$ is the variance of the input batch. In our architecture, the constant $\epsilon$ is set to $10^{-5}$.

A ReLU layer, standing for rectified linear unit, applies the following nonlinear function: $f(x) = \max(0, x)$. Adding nonlinearities enables the network to model non-linear relations between the input and output images.
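To make the Batch-norm and ReLU operations concrete, here is a minimal NumPy sketch of the forward pass in training mode. It assumes a batch of shape (N, C, H, W) normalized per channel and a default `eps` of 1e-5 (the PyTorch default for batch normalization); it does not track the running statistics that a framework layer would use at inference time.

```python
import numpy as np

def batch_norm(batch, gamma, beta, eps=1e-5):
    """Normalize a (N, C, H, W) batch per channel, then scale and shift."""
    mean = batch.mean(axis=(0, 2, 3), keepdims=True)
    var = batch.var(axis=(0, 2, 3), keepdims=True)
    normalized = (batch - mean) / np.sqrt(var + eps)
    return gamma.reshape(1, -1, 1, 1) * normalized + beta.reshape(1, -1, 1, 1)

def relu(x):
    """Rectified linear unit: max(0, x) applied element-wise."""
    return np.maximum(x, 0.0)

rng = np.random.default_rng(0)
batch = rng.normal(loc=3.0, scale=2.0, size=(4, 2, 5, 5))
normed = batch_norm(batch, gamma=np.ones(2), beta=np.zeros(2))
activated = relu(normed)
```

With `gamma = 1` and `beta = 0` the output of `batch_norm` has approximately zero mean and unit standard deviation in each channel, which is the re-centering and re-scaling described above.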

4.2.2. Image Sample

To upsample or subsample the images, two types of layers are considered.

The max-pooling layer is used to reduce the size of the feature maps in the encoding part. It browses the input with a $2 \times 2$ filter and maps each patch to its maximum. It reduces the size of the image by a factor of 2 between each level of the encoding path. It also helps to prevent overfitting by reducing the number of parameters to be optimized during the training.

The bilinear upsampling layer is used to increase the size of the feature maps in the decoding part. It performs a bilinear interpolation of the input, so that the output is twice the size of the input.
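Both resampling operations can be sketched on a single 2-D feature map with NumPy. The pooling below uses non-overlapping 2×2 patches; the upsampling uses separable linear interpolation with aligned corners, which is only one of several conventions used by deep learning frameworks and is an assumption here.

```python
import numpy as np

def max_pool_2x2(image):
    """Map each non-overlapping 2x2 patch of an (H, W) image to its maximum."""
    h, w = image.shape
    patches = image[:h - h % 2, :w - w % 2].reshape(h // 2, 2, w // 2, 2)
    return patches.max(axis=(1, 3))

def bilinear_upsample_2x(image):
    """Double the size of an (H, W) image by separable linear interpolation."""
    h, w = image.shape
    rows = np.linspace(0, h - 1, 2 * h)   # sample positions, corners aligned
    cols = np.linspace(0, w - 1, 2 * w)
    # Interpolate along the columns of every row, then along the rows.
    along_cols = np.array([np.interp(cols, np.arange(w), r) for r in image])
    return np.array([np.interp(rows, np.arange(h), c) for c in along_cols.T]).T

img = np.arange(16, dtype=float).reshape(4, 4)
pooled = max_pool_2x2(img)          # shape (2, 2)
upsampled = bilinear_upsample_2x(img)  # shape (8, 8)
```

Four successive poolings divide each spatial dimension by 16, and the four upsampling cells of the decoder bring the feature maps back to the input resolution.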

4.2.3. Skip Connections

Skip connections (in gray in Figure 7) are the trademark of the U-Net architecture. The output of an encoding cell is stacked with the output of a decoding cell of the same dimension, and the stacking is used as input for the next decoding cell. Therefore, skip connections carry some information from the encoding path to the decoding path; they thus help to prevent the vanishing gradient problem [28] and make it possible to preserve some small-scale features of the encoding path.

4.2.4. Output Layer

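The final layer is a convolutional layer with a $1 \times 1$ kernel. The dimension of its output is $N_L \times H \times W$, where $H \times W$ is the size of the maps in pixels: there is one channel for each class. For a given point $(i, j, k)$, we define the score $S^m_{i,j,k}$ as the output of channel $m$ ($m \in \{1, \dots, N_L\}$). This output $S^m_{i,j,k}$ is then transformed using the sigmoid function to obtain the probability $P^m_{i,j,k}$:

$$P^m_{i,j,k} = \frac{1}{1 + e^{-S^m_{i,j,k}}},$$

where $P^m_{i,j,k}$ is defined in Section 2. Note that, following the definition of the classes in Table 1, one point can belong to several classes.

Finally, the output is said to belong to the class $m$ if $P^m_{i,j,k} \ge 0.5$.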

4.3. Network Training

We call $\theta$ the vector of length $N_\theta$ containing the trainable parameters (also named weights) that are to be determined through the training procedure. The training process consists of splitting the training dataset into several batches, successively feeding the batches into the network, calculating the distance between the predictions and the targets via a loss function, and finally, based on the calculated loss, updating the network weights using an optimization algorithm. The training procedure is repeated over several epochs (one epoch being completed when the entire training set has gone through the network) and aims at minimizing the loss function.

For a given input sequence $(M_{k-s+1}, \dots, M_k)$, we define the binary cross-entropy loss function [29] $\mathcal{L}_k(\theta)$ comparing the output $P^m_{i,j,k}$ to its target $T^m_{i,j,k}$:

$$\mathcal{L}_k(\theta) = -\frac{1}{N_L N_p} \sum_{m=1}^{N_L} \sum_{i,j} \left[ T^m_{i,j,k} \log P^m_{i,j,k} + \left(1 - T^m_{i,j,k}\right) \log\left(1 - P^m_{i,j,k}\right) \right],$$

where $N_p$ is the number of pixels in a map.

This loss is averaged across the batch $B$, then a regularization term is added:

$$J(\theta) = \frac{1}{|B|} \sum_{k \in B} \mathcal{L}_k(\theta) + \delta \, \lVert \theta \rVert_2^2.$$

The first term measures the discrepancy between the targeted value and the predicted value, and the second term is a square regularization (also called Tikhonov or $\ell_2$-regularization) aiming at preventing overfitting and distributing the weights more evenly. The importance of this regularization in the training process is weighted by the factor $\delta$.
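The regularized loss can be illustrated with the following NumPy sketch. It assumes that the cross-entropy is averaged over classes and pixels (the usual "mean" reduction) and that the regularization weight, called `delta` here, multiplies the squared Euclidean norm of the weights; the clipping constant `eps` is a numerical safeguard added for the example.

```python
import numpy as np

def binary_cross_entropy(prob, target, eps=1e-7):
    """Mean binary cross-entropy between predicted probabilities and 0/1 targets."""
    prob = np.clip(prob, eps, 1 - eps)   # avoid log(0)
    return float(-np.mean(target * np.log(prob) + (1 - target) * np.log(1 - prob)))

def regularized_loss(batch_probs, batch_targets, weights, delta):
    """Batch-averaged cross-entropy plus an L2 penalty on the weights."""
    data_term = np.mean([binary_cross_entropy(p, t)
                         for p, t in zip(batch_probs, batch_targets)])
    return float(data_term + delta * np.sum(weights ** 2))

probs = np.full((3, 4, 4), 0.5)      # an uninformative prediction for 3 classes
targets = np.ones((3, 4, 4))
weights = np.array([1.0, -2.0])      # stand-in for the network parameters
loss = regularized_loss([probs], [targets], weights, delta=0.1)
```

In a framework such as PyTorch the L2 term is often obtained through the optimizer's weight decay option rather than being added to the loss explicitly; both routes penalize large weights.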

The optimization algorithm used is Adam [30] (standing for Adaptive Moment Estimation), which is a stochastic gradient descent algorithm. The recommended parameters are used: $\beta_1 = 0.9$, $\beta_2 = 0.999$, and $\epsilon = 10^{-8}$.

Moreover, to prevent an exploding gradient, the gradient clipping technique is used. It consists of re-scaling the gradient when it becomes too large, so that its norm remains bounded.
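A single optimization step combining gradient clipping and the Adam update can be sketched in NumPy as below. Clipping by global norm and the `max_norm` value are assumptions (the paper does not state which clipping variant or threshold is used); the toy objective $\lVert \theta \rVert^2$ only serves to exercise the update.

```python
import numpy as np

def clip_by_norm(grad, max_norm):
    """Re-scale grad so that its Euclidean norm does not exceed max_norm."""
    norm = np.linalg.norm(grad)
    if norm > max_norm:
        return grad * (max_norm / norm)
    return grad

def adam_step(theta, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update; t is the 1-based step counter."""
    m = beta1 * m + (1 - beta1) * grad          # first-moment estimate
    v = beta2 * v + (1 - beta2) * grad ** 2     # second-moment estimate
    m_hat = m / (1 - beta1 ** t)                # bias corrections
    v_hat = v / (1 - beta2 ** t)
    theta = theta - lr * m_hat / (np.sqrt(v_hat) + eps)
    return theta, m, v

theta = np.array([3.0, -4.0])
m = np.zeros_like(theta)
v = np.zeros_like(theta)
for t in range(1, 2001):
    grad = clip_by_norm(2.0 * theta, max_norm=1.0)   # gradient of ||theta||^2
    theta, m, v = adam_step(theta, grad, m, v, t, lr=0.01)
```

Clipping changes only the length of the gradient, not its direction, so it limits the size of an update without altering where the update points.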

The training procedure for the two neural networks is the following.

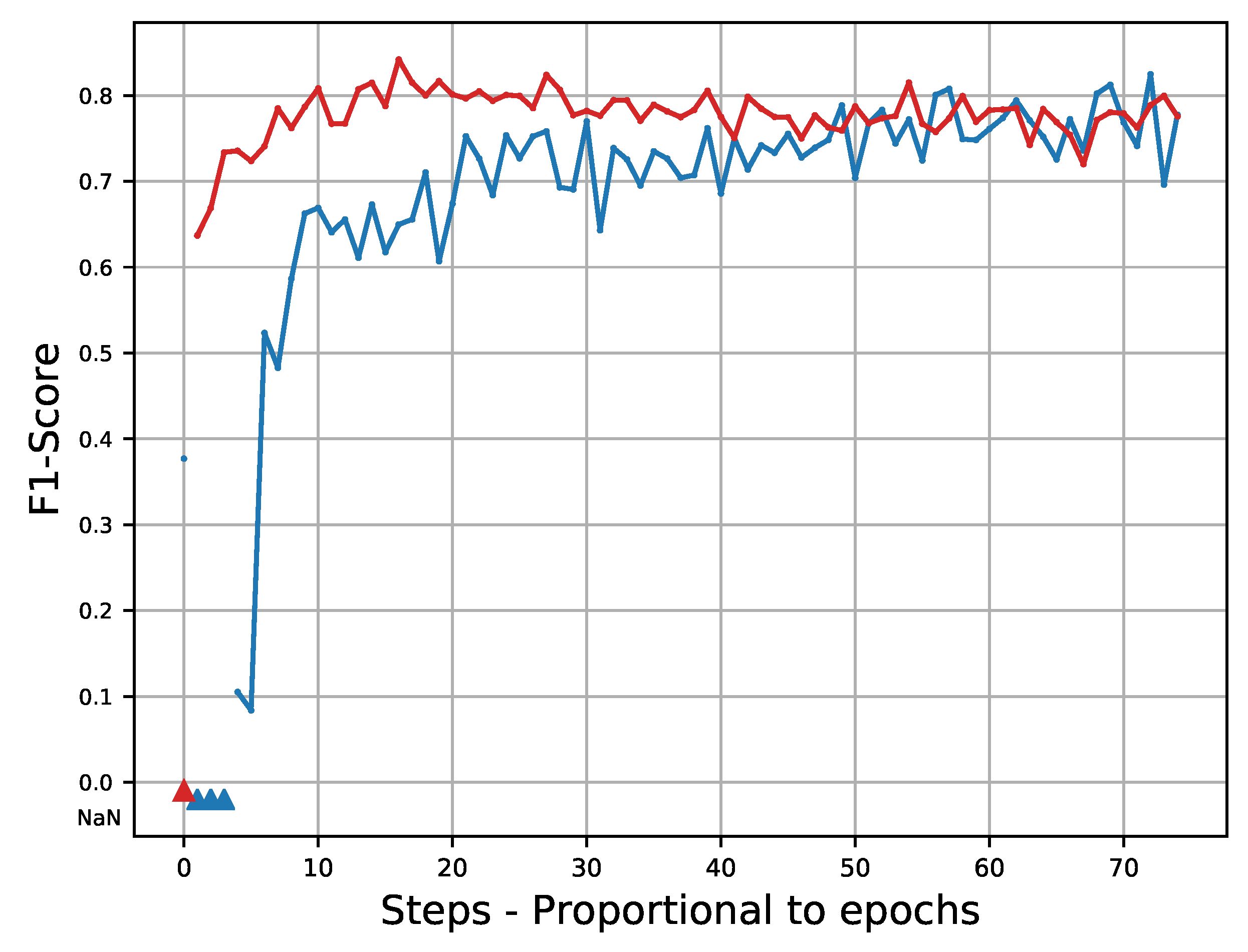

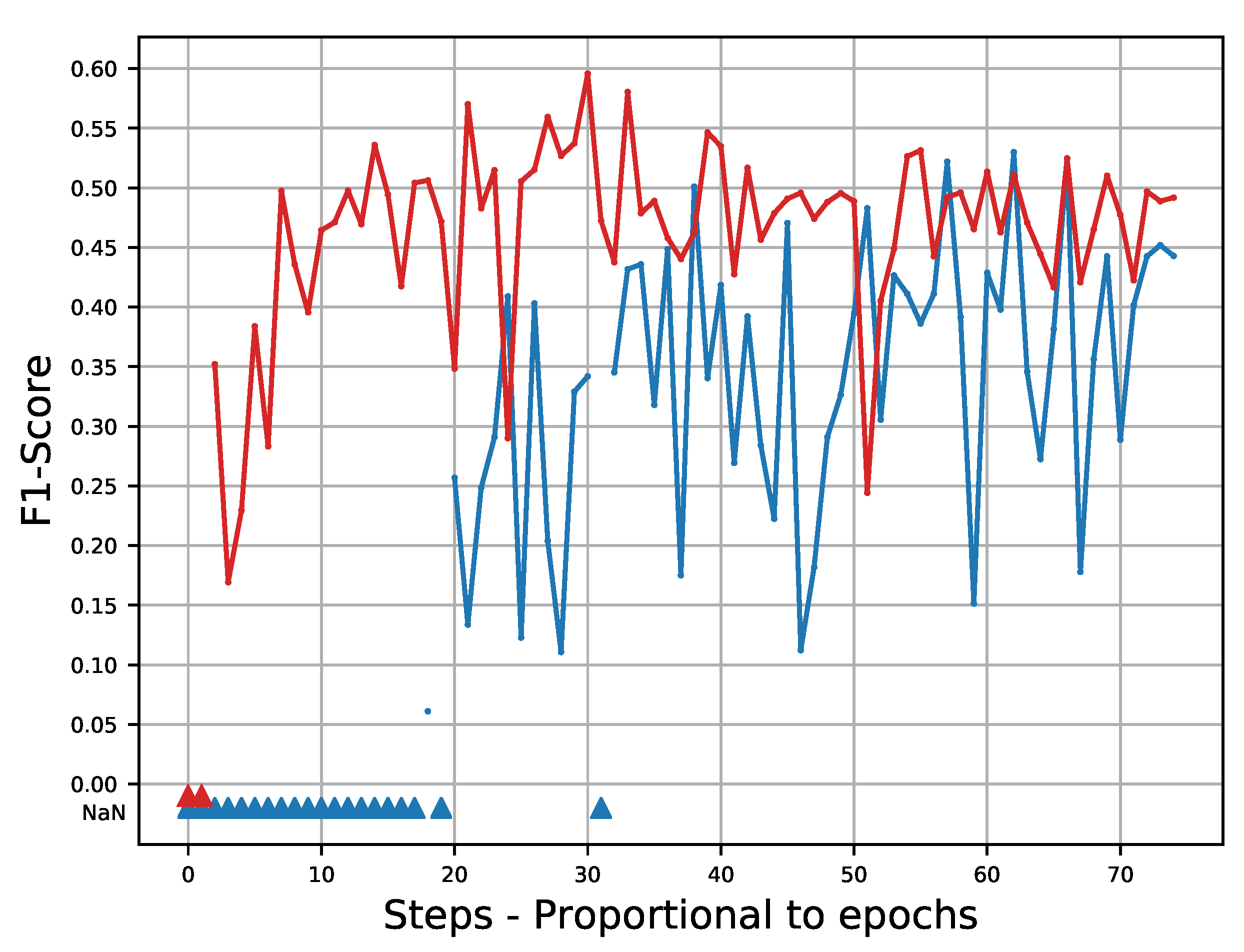

The network whose horizon time is 30 min is trained for 20 epochs. Initially, the learning rate is set to 0.0008 and after 4 epochs it is reduced to 0.0001. After epoch 13, the validation F1-score (the F1-score is defined in Section 4.4) no longer increases. We selected the weights obtained after epoch 13 because their F1-score is the highest on the validation set.

The network whose horizon time is 1 h is trained for 20 epochs. Initially, the learning rate is set to 0.0008 and after 4 epochs it is reduced to 0.0001. After epoch 17, the validation F1-score no longer increases. We selected the weights of epoch 17 because their F1-score is the highest on the validation set.

The network is particularly sensitive to hyperparameters, specifically the learning rate, the batch size, and the percentage of oversampling. The tuning of the oversampling percentage is detailed in Section 4.1.1. The other hyperparameters used to train our models are presented in Table 2.

Neural networks were implemented and trained using PyTorch 1.5.1 on a computer with an Intel(R) Xeon(R) E5-2695 v4 CPU (2.10 GHz) and a PNY Tesla P100 GPU (12 GB).

4.4. Scores

Among several metrics presented in the literature [8,31], the F1-score, the Threat Score (TS), and the BIAS have been selected. As our algorithm is a multi-label classification problem, each of the $N_L$ classifiers is assessed independently of the others. For a given input sequence $(M_{k-s+1}, \dots, M_k)$, we compare the output ($P^m_{i,j,k}$ thresholded by 0.5) to its target $T^m_{i,j,k}$. Because it is a binary classification, four possible outcomes can be obtained:

True Positive ($TP_m$) when the classifier correctly predicts the occurrence of an event (also called a hit).

True Negative ($TN_m$) when the classifier correctly predicts the absence of an event.

False Positive ($FP_m$) when the classifier predicts the occurrence of an event that has not occurred (also called a false alarm).

False Negative ($FN_m$) when the classifier predicts the absence of an event that has occurred (also called a miss).

On the one hand, we can define the threat score and the BIAS:

$$TS_m = \frac{TP_m}{TP_m + FP_m + FN_m}, \qquad BIAS_m = \frac{TP_m + FP_m}{TP_m + FN_m}.$$

TS ranges from 0 to 1, where 0 is the worst possible classification and 1 is a perfect classifier. BIAS ranges from 0 to $+\infty$; a value of 1 corresponds to a non-biased classifier. A score under 1 means that the classifier underestimates the rain and a score greater than 1 means that the classifier overestimates the rain.

On the other hand, we can define the precision and the recall:

$$\mathrm{Precision}_m = \frac{TP_m}{TP_m + FP_m}, \qquad \mathrm{Recall}_m = \frac{TP_m}{TP_m + FN_m}.$$

Note that, in theory, if the classifier is predicting 0 for all the data (i.e., no rain), the precision is not defined because its denominator is null. Nevertheless, as the evaluation is done over all the samples of the validation or test dataset, this situation hardly occurs in practice.

Based on those definitions, the $F_1$-score for the classifier $m$, $F_{1,m}$, can be defined as the harmonic mean between the precision and the recall:

$$F_{1,m} = 2 \, \frac{\mathrm{Precision}_m \times \mathrm{Recall}_m}{\mathrm{Precision}_m + \mathrm{Recall}_m}.$$

Precision, recall, and $F_1$-score range from 0 to 1, where 0 is the worst possible classification and 1 is a perfect classifier.

All these scores will be computed on the test dataset to assess our models’ performance.
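The scores follow directly from the four outcome counts. The sketch below computes them for one binary classifier from flattened prediction and target maps, using the standard definitions of the threat score, bias, precision, recall, and F1-score; the function names and the toy data are illustrative.

```python
import numpy as np

def confusion_counts(pred, target):
    """Count hits, correct negatives, false alarms and misses for binary maps."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    tp = int(np.sum(pred & target))
    tn = int(np.sum(~pred & ~target))
    fp = int(np.sum(pred & ~target))
    fn = int(np.sum(~pred & target))
    return tp, tn, fp, fn

def scores(pred, target):
    """Return TS, BIAS, precision, recall and F1.

    Raises ZeroDivisionError when a denominator is null
    (for example when nothing is predicted and nothing is observed).
    """
    tp, tn, fp, fn = confusion_counts(pred, target)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "TS": tp / (tp + fp + fn),
        "BIAS": (tp + fp) / (tp + fn),
        "precision": precision,
        "recall": recall,
        "F1": 2 * precision * recall / (precision + recall),
    }

pred = [1, 1, 1, 0, 0, 0, 0, 0]
target = [1, 1, 0, 1, 1, 0, 0, 0]
result = scores(pred, target)   # 2 hits, 1 false alarm, 2 misses
```

In this toy case the bias is 0.75, below 1, because three rainy pixels are predicted while four are observed.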

4.5. Baseline

We briefly present the optical flow method used in Section 5 as a baseline. If $I$ is a sequence of images (in our case, a succession of $\mathrm{CRF}$ maps), the optical flow assumes the advection of $I$ by velocity $W = (W_x, W_y)$ at pixel $(x, y)$ and time $t$:

$$\frac{\partial I}{\partial t}(x, y, t) + W(x, y, t)^T \, \nabla I(x, y, t) = 0, \tag{13}$$

where $\nabla$ is the gradient operator and $T$ the transpose operator, i.e., $W^T \nabla I = W_x \frac{\partial I}{\partial x} + W_y \frac{\partial I}{\partial y}$. Recovering velocity $W$ from images $I$ by inverting Equation (13) is an ill-posed problem. The classic approach [32] is to restrict the space of solutions to smooth functions using Tikhonov regularization. To estimate the velocity map at time $t$, denoted $W(\cdot, \cdot, t)$, the following cost function is minimized:

$$E(W) = \int_\Omega \left( \frac{\partial I}{\partial t} + W^T \nabla I \right)^2 dx \, dy + \alpha \int_\Omega \left( \lVert \nabla W_x \rVert^2 + \lVert \nabla W_y \rVert^2 \right) dx \, dy, \tag{14}$$

where $\Omega$ stands for the image domain. Regularization is driven by the hyperparameter $\alpha$. The gradient is easily derived using the calculus of variations. As the cost function $E$ is convex, standard convex optimization tools can be used to obtain the solution. This approach is known to be limited to small displacements. A solution to this issue is to use a data assimilation approach as described in [20]. Once the velocity field $W$ has been estimated, the last observation $I_{last}$ is transported (Equation (15)) to the desired temporal horizon. Because the dynamics of thunderstorm cells are nonstationary, the velocity should also be transported by itself (Equation (16)). Finally, the following system of equations is integrated in time up to the desired temporal horizon $t_h$:

$$\frac{\partial I}{\partial t} + W^T \nabla I = 0, \tag{15}$$

$$\frac{\partial W}{\partial t} + \left( W^T \nabla \right) W = 0, \tag{16}$$

and provides the forecast $I(t_h)$. Equations (15) and (16) are both approximated using an Euler and semi-Lagrangian scheme.
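The transport step can be illustrated with a simple semi-Lagrangian scheme in NumPy: each pixel looks up the value found at its departure point, one time step upstream, using bilinear interpolation. This is a generic sketch and not the operational system of [20]; the velocity units (pixels per time step), the clamping at the image border, and the function names are assumptions.

```python
import numpy as np

def bilinear_sample(field, x, y):
    """Sample a (H, W) field at fractional pixel positions, clamped to the domain."""
    h, w = field.shape
    x = np.clip(x, 0, w - 1)
    y = np.clip(y, 0, h - 1)
    x0 = np.minimum(np.floor(x).astype(int), w - 2)
    y0 = np.minimum(np.floor(y).astype(int), h - 2)
    fx, fy = x - x0, y - y0
    top = (1 - fx) * field[y0, x0] + fx * field[y0, x0 + 1]
    bottom = (1 - fx) * field[y0 + 1, x0] + fx * field[y0 + 1, x0 + 1]
    return (1 - fy) * top + fy * bottom

def semi_lagrangian_step(image, wx, wy, dt):
    """Advance the image and the velocity field by one time step of length dt."""
    h, w = image.shape
    yy, xx = np.mgrid[0:h, 0:w].astype(float)
    x_dep, y_dep = xx - dt * wx, yy - dt * wy    # departure points
    return (bilinear_sample(image, x_dep, y_dep),
            bilinear_sample(wx, x_dep, y_dep),   # velocity transported by itself
            bilinear_sample(wy, x_dep, y_dep))

def forecast(image, wx, wy, n_steps, dt=1.0):
    """Integrate the transport of the last observation over n_steps steps."""
    for _ in range(n_steps):
        image, wx, wy = semi_lagrangian_step(image, wx, wy, dt)
    return image

last_obs = np.zeros((8, 8))
last_obs[2, 2] = 1.0                  # a single rainy pixel
wx = np.ones((8, 8))                  # one pixel per step along the column axis
wy = np.zeros((8, 8))
predicted = forecast(last_obs, wx, wy, n_steps=3)
```

With a uniform velocity of one pixel per step, the rainy pixel simply moves three columns to the right; with a non-uniform field, the interpolation smooths the transported structure slightly at every step.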

5. Results

According to the training procedure defined in

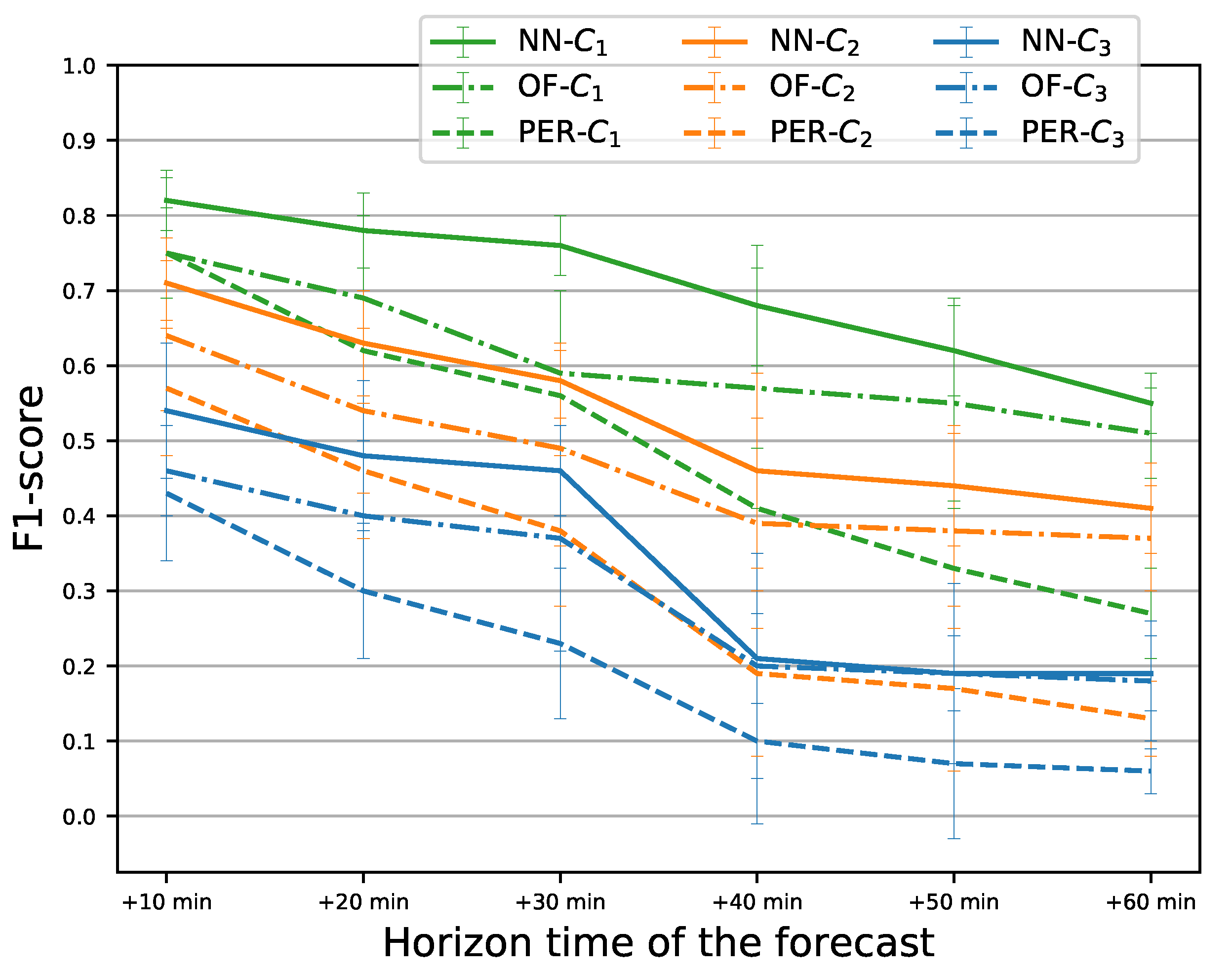

Section 4.3, we trained several neural networks. Using both wind maps and rainfall maps as inputs, a neural network was trained for predictions at a lead time of 30 min and another one for predictions at a lead time of 1 h. Using only rainfall maps as inputs, a neural network was trained for predictions at a lead time of 30 min and another one for predictions at a lead time of 1 h; these two neural networks provide comparison models and are used to assess the impact of wind on the forecasts. The results are compared with the naive baseline given by the persistence model, which consists of taking the last rainfall map of an input sequence of prediction and to the optical flow approach.
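For reference, the persistence baseline amounts to a one-line function. The sketch below assumes the rainfall sequence is stored as an array of shape (12, H, W) in chronological order.

```python
import numpy as np

def persistence_forecast(rain_sequence):
    """Use the last observed rainfall map as the forecast, whatever the lead time."""
    return np.array(rain_sequence[-1], copy=True)

rain_sequence = np.arange(12 * 4 * 4, dtype=float).reshape(12, 4, 4)
forecast_30min = persistence_forecast(rain_sequence)
forecast_1h = persistence_forecast(rain_sequence)   # identical by construction
```

Because the forecast does not depend on the lead time, any skill gained by a model over persistence measures how well that model captures the displacement and evolution of the rain cells.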

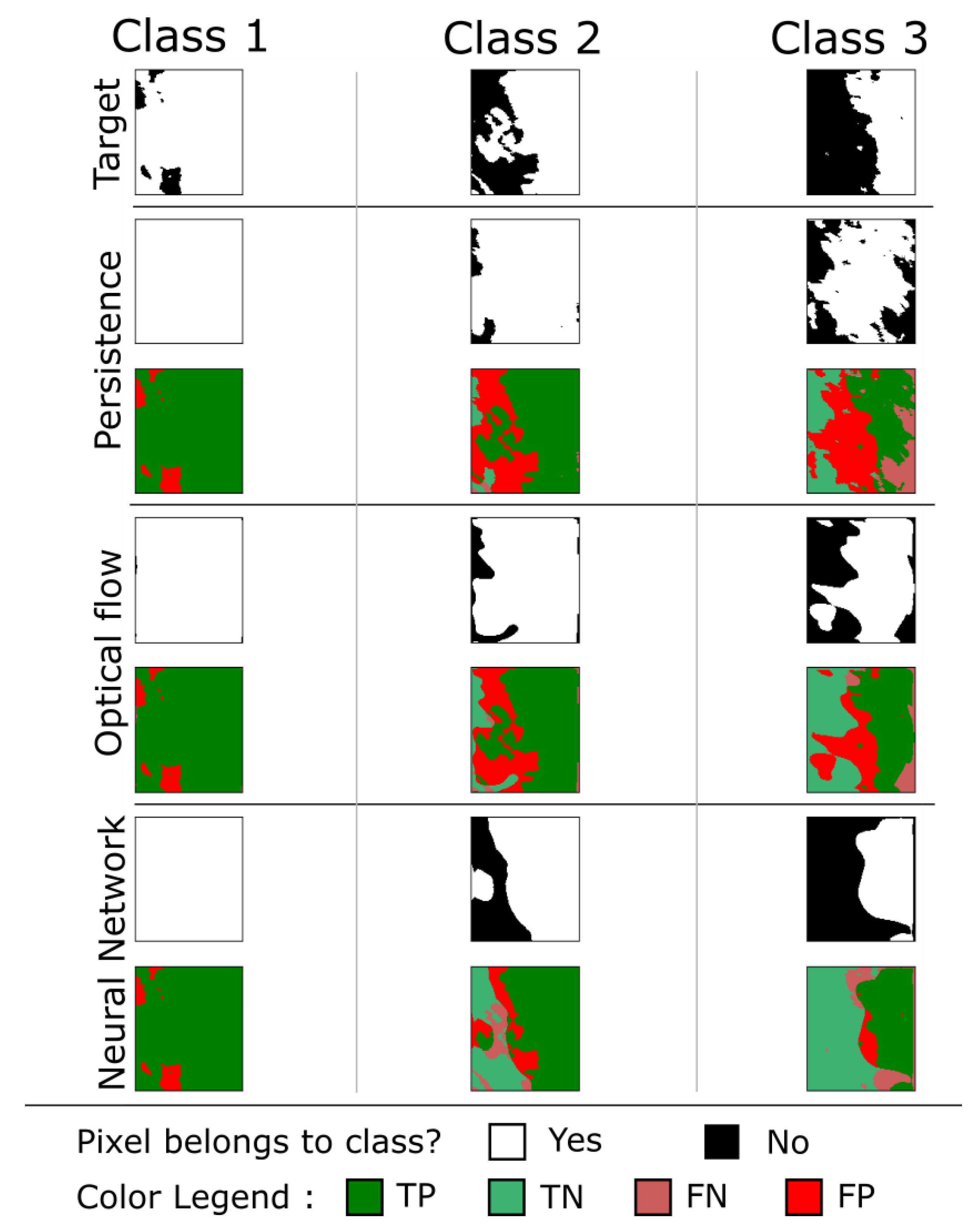

Figure 8 and Figure 9 present two examples of prediction at 30 min made by the neural network trained using rainfalls and wind. The forecast is compared to its target, to the persistence, and to the optical flow. The comparison shows that the network is able to model advection well enough to be quite close to the target. The algorithm seems unable to predict the small scales, resulting in smooth borders that express the uncertainty of the retrieval for these features. This is expected, given that, at small scales, rainfalls are usually related to processes other than the advection of the rain cell (e.g., intensive convection).

Using the method proposed in [33], the results, presented in Table 3, are calculated on the test set, and 100 bootstrapped samples are used to calculate means and standard deviations of the F1-score. First, it can be noticed that the neural net using both rainfall maps and wind (denoted NN in Table 3) outperforms the baselines (PER: persistence; OF: optical flow) on both the F1-score and the TS. The difference is significant for the F1-score at a lead time of 30 min. For class 3, the 1-hour prediction presents a similar performance for the neural network and the optical flow. This is not surprising because the optical flow is sensitive to the contrast of the structures and responds better when this contrast is large, which is the case for pixels belonging to class 3.
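A bootstrap estimate of the mean and standard deviation of the F1-score can be sketched as follows. The exact procedure of [33] is not reproduced here: this version assumes that whole test sequences are resampled with replacement and that the F1-score of each resample is computed from the summed counts, using the equivalent form $F_1 = 2TP / (2TP + FP + FN)$.

```python
import numpy as np

def f1_from_counts(tp, fp, fn):
    """F1-score from outcome counts; returns 0.0 when there is no positive at all."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom > 0 else 0.0

def bootstrap_f1(tp, fp, fn, n_boot=100, seed=0):
    """Mean and standard deviation of F1 over n_boot resamples of the sequences.

    tp, fp, fn: per-sequence counts (1-D arrays of equal length).
    """
    tp, fp, fn = (np.asarray(a) for a in (tp, fp, fn))
    rng = np.random.default_rng(seed)
    values = []
    for _ in range(n_boot):
        idx = rng.integers(0, len(tp), size=len(tp))   # resample with replacement
        values.append(f1_from_counts(tp[idx].sum(), fp[idx].sum(), fn[idx].sum()))
    return float(np.mean(values)), float(np.std(values))

# Toy per-sequence counts for one classifier.
tp = [4, 0, 7, 2, 5, 1]
fp = [1, 2, 0, 3, 1, 0]
fn = [3, 1, 2, 2, 0, 4]
mean_f1, std_f1 = bootstrap_f1(tp, fp, fn)
```

The standard deviation obtained this way gives a rough scale against which a difference between two models can be judged.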

In contrast, the bias (BIAS) is significantly lower than 1 for the neural network, which indicates that the neural network predicts on average less rain than observed. This is likely due to the imbalance of the classes within each image, which is not fully compensated by the oversampling procedure. This is confirmed by the fact that class 3, which is the most underrepresented in the training set, has the lowest bias.

The performance of the neural net using only the rainfall images as inputs (denoted NN/R) is also reported in Table 3. It can be seen that adding the wind to the inputs provides a significant improvement of the F1-score in all cases (with a maximum of 10% for class 3 at a lead time of 30 min). The improvement is even greater for higher classes, which are the most difficult to predict. However, the difference is less significant for a lead time of 1 h. Quite interestingly, adding the wind to the inputs also reduces the bias of the neural network, suggesting that having adequate predictors is a way to both improve the skill and reduce the bias.

7. Conclusions and Future Work

This work aims at studying the impact of merging rain radar images with wind forecasts to predict rainfalls in the near future. With a few meteorological parameters used as inputs, our model can forecast rainfalls with satisfactory results, outperforming the results obtained using only the radar images (without using the wind velocity).

The problem is transformed into a classification problem by defining classes corresponding to an increasing quantity of rainfall. To overcome the imbalanced distribution of these classes, we perform an oversampling of the highest class, which is the least frequent in the database. The F1-score calculated on the highest class for forecasts at a horizon time of 30 min is 45%. Our model has been compared to a basic persistence model and to an approach based on optical flow, and it outperformed both. Furthermore, it outperforms the same architecture trained using only rainfalls by up to 10%; therefore, this paper can be considered as a proof of concept that data fusion has a significant positive impact on rain nowcasting.

An interesting direction for future work would be to fuse the inputs with another determining parameter, such as the orography, which could help overcome some of the limits observed for 1 h predictions of the class corresponding to the highest rainfall. The optical flow provided promising results, and it would be interesting to investigate its inclusion through a scheme combining deep learning and data assimilation.