Estimating Tree Diameters from an Autonomous Below-Canopy UAV with Mounted LiDAR

Abstract

1. Introduction

2. Materials and Methods

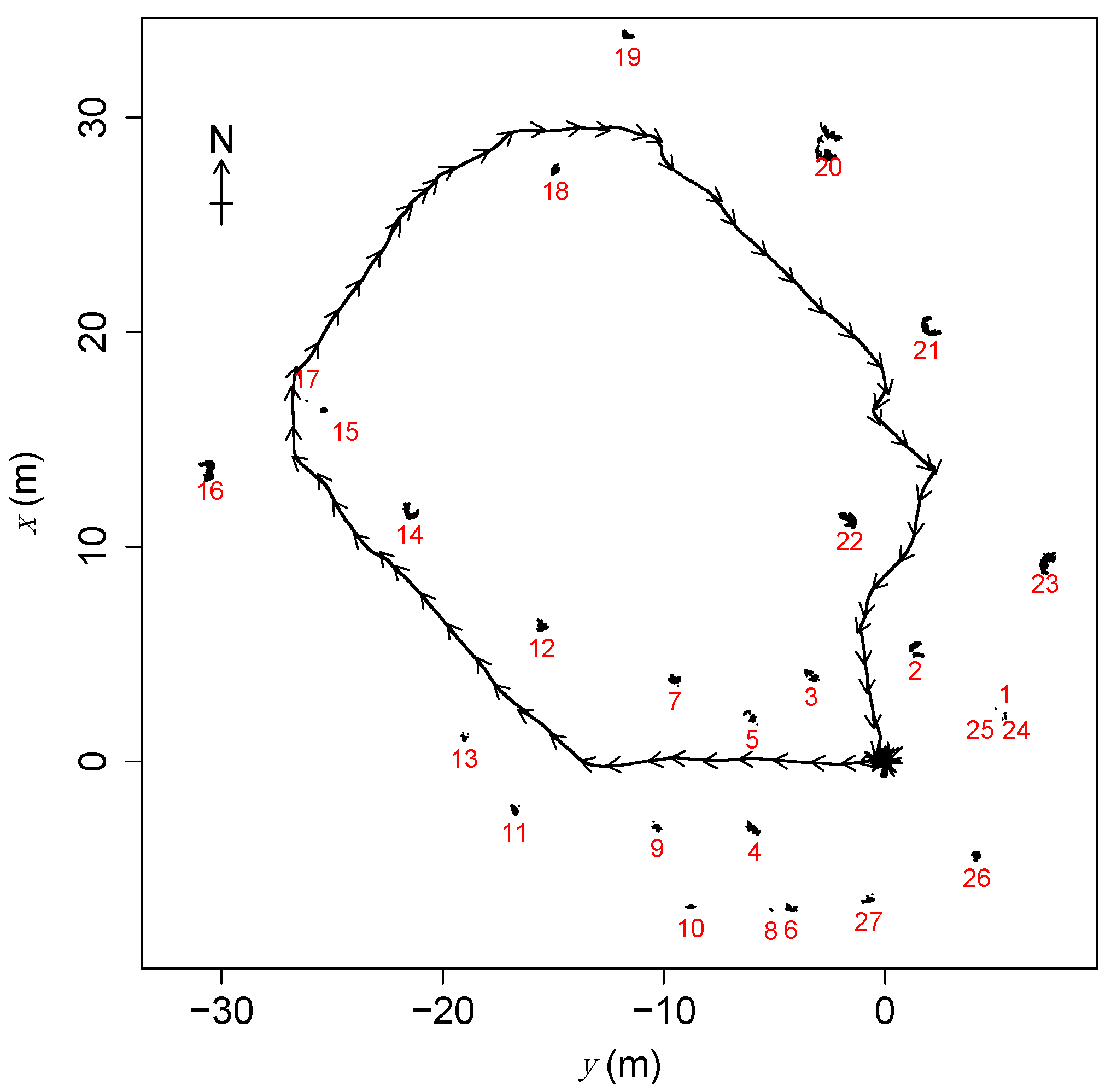

2.1. UAV Survey

2.2. Data Analysis

- (1) First, scans with fewer than eight pulses reporting finite distances to a target were discarded. Such scans contained too little information for reliable comparison with other scans, and thus for reliable estimation of the UAV position. To limit the computational load, we used at most 16 scans per cluster, equally spaced in time among those available.

- (2)

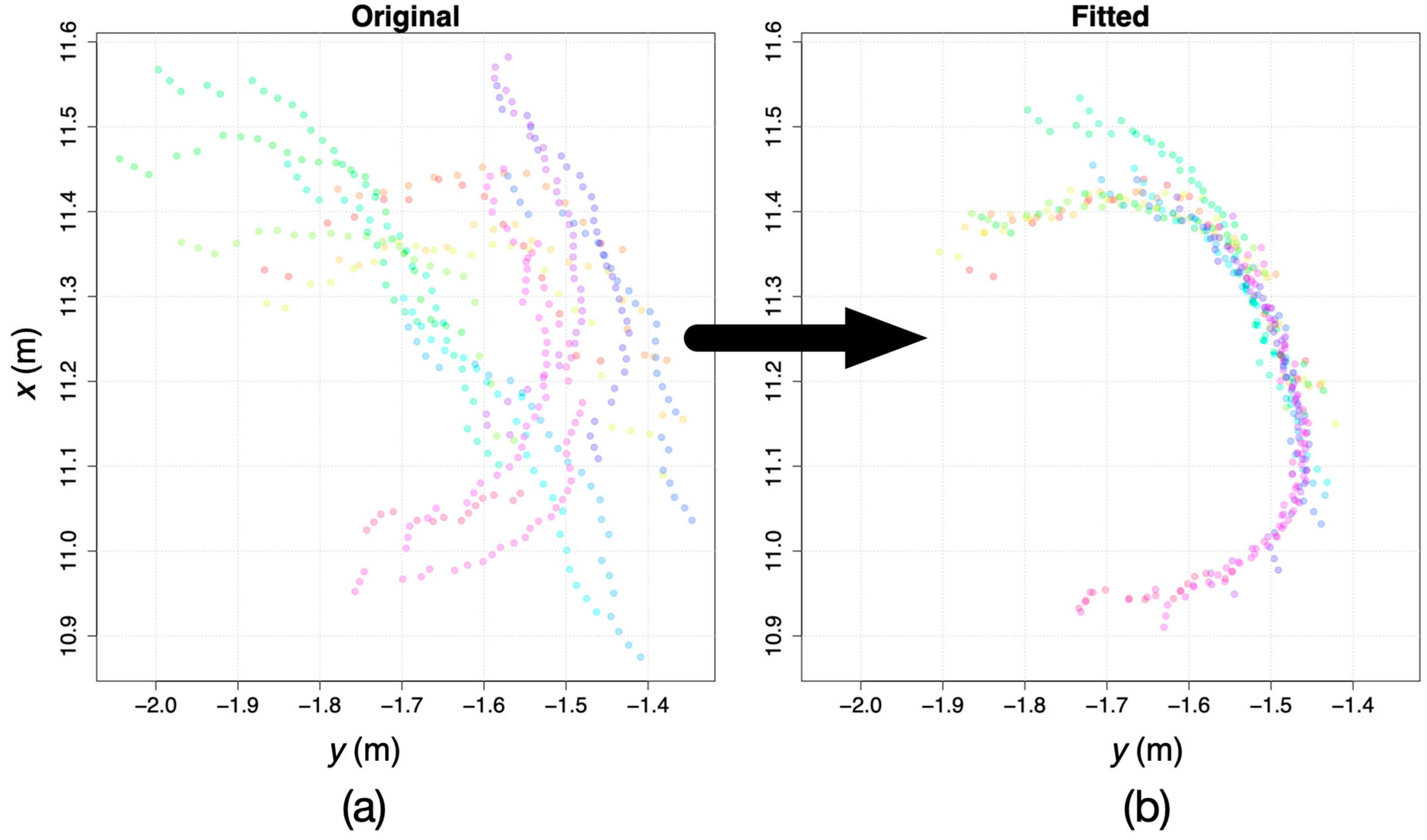

- The two scans closest together in time were merged by adjusting the estimated UAV horizontal-plane coordinates of the second scan () so that the length of the minimal spanning tree of the merged tree was minimized (if there was more than one candidate for this pair of scans, the pair was chosen randomly from among candidate pairs). The minimization was achieved with the optimx() function in R with the L-BFGS-B method with two fitting parameters and , and bounds of ±ϵ on the two parameters, where m was the estimated maximum error in the UAV position from the SLAM algorithm based on preliminary visual inspection of the overlaid scans. The starting estimates for and were chosen randomly from the intervals and . The algorithm repeated the call to optimx() 100 times with different starting values for the parameter estimates, to ensure the global minimum was found in each case.

- (3) Step 2 was repeated until all scans had been merged.

- (4) A circle was fitted to the resulting merged points using Pratt’s method [34], and the cluster was accepted as a physical tree if the resulting fitted circle had a diameter of less than 1 m, if the circular standard deviation of points was greater than (indicating that a sufficient arc of the putative trunk had been scanned), and if the value was greater than .

- (5) If the cluster was accepted as a physical tree in step 4, the diameter of the fitted circle was taken as the estimated diameter of the tree ().
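Steps (1) to (3) can be sketched in Python as follows. This is a hypothetical illustration, not the authors' code. The original analysis used optimx() in R with the L-BFGS-B method. This sketch instead swaps in a simple bounded compass (pattern) search so that it runs with the standard library alone. In Python, scipy.optimize.minimize(method="L-BFGS-B", bounds=...) would be the closer analogue. Only the values 8, 16 and 100 come from the text. Everything else is an assumption of the sketch:

- the function names;
- the scan format: a time-ordered list of horizontal (x, y) points per scan, with None for pulses that returned no finite distance;
- starting the first restart from the unadjusted SLAM estimate;
- treating a merged group's time as the mean of its scan times;
- shifting each later group as a rigid unit.

The value of eps (ϵ) is not given here and must be supplied by the caller.

```python
import math
import random
from typing import Callable, List, Optional, Sequence, Tuple

Point = Tuple[float, float]


def select_scans(scans: Sequence[Sequence[Optional[Point]]], min_hits: int = 8,
                 max_scans: int = 16) -> List[List[Point]]:
    """Step (1): drop scans with < min_hits finite returns (None = no return), then keep
    at most max_scans (>= 2) scans equally spaced in time. Scans assumed time-ordered."""
    kept = [[p for p in s if p is not None] for s in scans]
    kept = [s for s in kept if len(s) >= min_hits]
    n = len(kept)
    if n <= max_scans:
        return kept
    # Integer indices spread evenly over 0..n-1; distinct because (n-1)/(max_scans-1) > 1.
    return [kept[i * (n - 1) // (max_scans - 1)] for i in range(max_scans)]


def mst_length(pts: Sequence[Point]) -> float:
    """Total edge length of the Euclidean minimum spanning tree (Prim, O(n^2))."""
    n = len(pts)
    if n < 2:
        return 0.0
    in_tree, best, total = [False] * n, [math.inf] * n, 0.0
    best[0] = 0.0  # best[v] = shortest known edge from v to the growing tree
    for _ in range(n):
        u = min((i for i in range(n) if not in_tree[i]), key=best.__getitem__)
        in_tree[u] = True
        total += best[u]
        for v in range(n):
            if not in_tree[v]:
                best[v] = min(best[v], math.dist(pts[u], pts[v]))
    return total


def compass_search(f: Callable[[Sequence[float]], float], x0: Sequence[float],
                   lo: float, hi: float, step: float, tol: float = 1e-4):
    """Bounded compass (pattern) search; a derivative-free stand-in for L-BFGS-B."""
    x, fx = list(x0), f(x0)
    while step > tol:
        improved = False
        for d in range(len(x)):
            for sign in (1.0, -1.0):
                cand = list(x)
                cand[d] = min(hi, max(lo, cand[d] + sign * step))
                fc = f(cand)
                if fc < fx:  # accept strict improvements only
                    x, fx, improved = cand, fc, True
        if not improved:
            step /= 2.0
    return x, fx


def align_pair(a: List[Point], b: List[Point], eps: float, n_starts: int = 100,
               rng: Optional[random.Random] = None) -> Tuple[Point, float]:
    """Step (2): shift (dx, dy), each within +/-eps, for the later scan b that
    minimises the MST length of the merged points, using n_starts restarts."""
    rng = rng or random.Random()

    def cost(s: Sequence[float]) -> float:
        return mst_length(a + [(x + s[0], y + s[1]) for x, y in b])

    # First start: the unadjusted SLAM estimate; the rest: uniform within the bounds.
    starts = [(0.0, 0.0)] + [(rng.uniform(-eps, eps), rng.uniform(-eps, eps))
                             for _ in range(n_starts - 1)]
    best_shift, best_len = (0.0, 0.0), math.inf
    for s0 in starts:
        s, length = compass_search(cost, s0, -eps, eps, step=eps / 2)
        if length < best_len:
            best_shift, best_len = (s[0], s[1]), length
    return best_shift, best_len


def merge_scans(scans: List[List[Point]], times: List[float], eps: float,
                n_starts: int = 100, seed: int = 0) -> List[Point]:
    """Step (3): merge the two groups closest in time until one group remains.
    Assumed: a group's time is the mean of its scan times; the later group moves rigidly."""
    rng = random.Random(seed)
    groups = [([t], list(p)) for t, p in zip(times, scans)]
    while len(groups) > 1:
        t_mean = [sum(ts) / len(ts) for ts, _ in groups]
        pairs = [(i, j) for i in range(len(groups)) for j in range(i + 1, len(groups))]
        gap = {p: abs(t_mean[p[0]] - t_mean[p[1]]) for p in pairs}
        g_min = min(gap.values())
        i, j = rng.choice([p for p in pairs if gap[p] == g_min])  # random tie-break
        if t_mean[i] > t_mean[j]:
            i, j = j, i  # j is the later group, which gets shifted
        (dx, dy), _ = align_pair(groups[i][1], groups[j][1], eps, n_starts, rng)
        merged = (groups[i][0] + groups[j][0],
                  groups[i][1] + [(x + dx, y + dy) for x, y in groups[j][1]])
        groups = [g for k, g in enumerate(groups) if k not in (i, j)] + [merged]
    return groups[0][1] if groups else []
```

Because the MST length is only piecewise smooth in the shift, any local optimizer can stall at a kink or a local minimum. The restarts are what make the search reasonably robust. Prim's O(n²) algorithm is adequate for small clusters. For larger point sets, scipy.sparse.csgraph.minimum_spanning_tree would be a faster option.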
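Steps (4) and (5) can be sketched in Python as below. Again, this is a hypothetical illustration rather than the authors' implementation.

Pratt's method [34] minimises the algebraic residuals of A(x² + y²) + Bx + Cy + D = 0 subject to the constraint B² + C² − 4AD = 1. This sketch solves that problem by Newton iteration on its characteristic polynomial, starting from zero, which is one common way to implement it.

The sketch also makes two assumptions about the acceptance test:

- "Circular standard deviation" is taken to mean √(−2 ln R̄), where R̄ is the mean resultant length of the bearings of the points about the fitted centre.
- The threshold for that quantity is not recoverable from this text, so it is the caller-supplied parameter min_circ_sd.

The third acceptance criterion is omitted because the quantity it tests is not recoverable either. Only the 1 m diameter limit comes from the text. The function names are illustrative.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def pratt_circle_fit(pts: Sequence[Point], tol: float = 1e-12,
                     max_iter: int = 20) -> Tuple[float, float, float]:
    """Pratt's algebraic circle fit via Newton iteration on its characteristic
    polynomial. Returns (centre_x, centre_y, radius)."""
    n = len(pts)
    xm = sum(p[0] for p in pts) / n
    ym = sum(p[1] for p in pts) / n
    xs = [p[0] - xm for p in pts]  # centre the data for numerical stability
    ys = [p[1] - ym for p in pts]
    zs = [x * x + y * y for x, y in zip(xs, ys)]

    def mean(vals):
        return sum(vals) / n

    mxx = mean([x * x for x in xs])
    myy = mean([y * y for y in ys])
    mxy = mean([x * y for x, y in zip(xs, ys)])
    mxz = mean([x * z for x, z in zip(xs, zs)])
    myz = mean([y * z for y, z in zip(ys, zs)])
    mzz = mean([z * z for z in zs])
    mz = mxx + myy
    cov_xy = mxx * myy - mxy * mxy
    a2 = 4 * cov_xy - 3 * mz * mz - mzz
    a1 = mzz * mz + 4 * cov_xy * mz - mxz * mxz - myz * myz - mz ** 3
    a0 = (mxz * mxz * myy + myz * myz * mxx - mzz * cov_xy
          - 2 * mxz * myz * mxy + mz * mz * cov_xy)
    # Newton's method from x = 0 on P(x) = a0 + a1 x + a2 x^2 + 4 x^4.
    x, p_x = 0.0, math.inf
    for _ in range(max_iter):
        p_old, p_x = p_x, a0 + x * (a1 + x * (a2 + 4 * x * x))
        if abs(p_x) > abs(p_old):
            x = 0.0  # iteration diverging: fall back to x = 0
            break
        dp = a1 + x * (2 * a2 + 16 * x * x)
        if dp == 0:
            break
        x_old, x = x, x - p_x / dp
        if abs(x - x_old) <= tol * abs(x):
            break
    else:
        x = 0.0  # no convergence within max_iter
    x = max(x, 0.0)
    det = x * x - x * mz + cov_xy
    if det == 0:
        raise ValueError("degenerate point set (e.g. collinear points)")
    cx = (mxz * (myy - x) - myz * mxy) / det / 2
    cy = (myz * (mxx - x) - mxz * mxy) / det / 2
    return cx + xm, cy + ym, math.sqrt(cx * cx + cy * cy + mz + 2 * x)


def circular_sd(pts: Sequence[Point], cx: float, cy: float) -> float:
    """Circular standard deviation sqrt(-2 ln R) of the point bearings about (cx, cy)."""
    angles = [math.atan2(y - cy, x - cx) for x, y in pts]
    c = sum(math.cos(t) for t in angles) / len(angles)
    s = sum(math.sin(t) for t in angles) / len(angles)
    r_bar = min(math.hypot(c, s), 1.0)  # mean resultant length, clipped for rounding
    return math.inf if r_bar == 0 else math.sqrt(-2 * math.log(r_bar))


def assess_cluster(pts: Sequence[Point], min_circ_sd: float,
                   max_diameter: float = 1.0) -> Tuple[bool, float]:
    """Steps (4)-(5): fit a circle; accept if diameter < max_diameter and the circular SD
    of the points exceeds min_circ_sd. Returns (accepted, fitted diameter)."""
    cx, cy, r = pratt_circle_fit(pts)
    diameter = 2 * r
    accepted = diameter < max_diameter and circular_sd(pts, cx, cy) > min_circ_sd
    return accepted, diameter
```

A cluster covering only a narrow arc yields bearings that are tightly bunched about the fitted centre, so its circular standard deviation is small. This is how the second criterion screens out putative trunks that were not scanned around a sufficient arc.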

2.3. Manual Survey

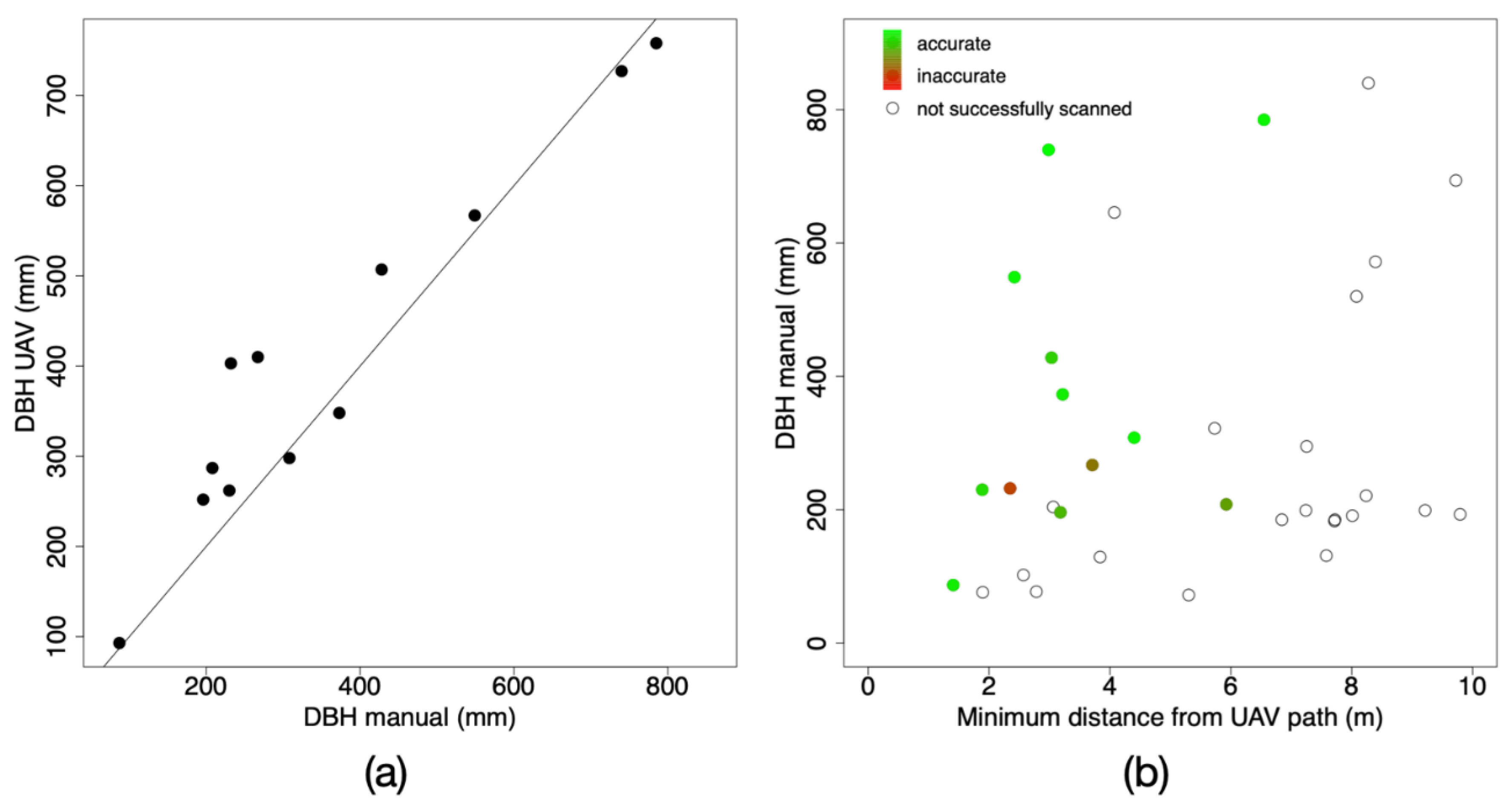

3. Results

4. Discussion

Supplementary Materials

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Guimarães, N.; Pádua, L.; Marques, P.; Silva, N.; Peres, E.; Sousa, J.J. Forestry remote sensing from unmanned aerial vehicles: A review focusing on the data, processing and potentialities. Remote Sens. 2020, 12, 1046.

- Kellner, J.R.; Armston, J.; Birrer, M.; Cushman, K.C.; Duncanson, L.; Eck, C.; Falleger, C.; Imbach, B.; Král, K.; Krůček, M.; et al. New opportunities for forest remote sensing through ultra-high-density drone lidar. Surv. Geophys. 2019, 40, 959–977.

- Chen, S.W.; Nardari, G.V.; Lee, E.S.; Qu, C.; Liu, X.; Romero, R.A.F.; Kumar, V. Sloam: Semantic lidar odometry and mapping for forest inventory. IEEE Robot. Autom. Lett. 2020, 5, 612–619.

- Hyyti, H.; Visala, A. Feature based modeling and mapping of tree trunks and natural terrain using 3D laser scanner measurement system. In Proceedings of the 8th IFAC Symposium on Intelligent Autonomous Vehicles, Gold Coast, Australia, 26–28 June 2013; pp. 248–255.

- Miettinen, M.; Öhman, M.; Visala, A.; Forsman, P. Simultaneous localisation and mapping for forest harvesters. In Proceedings of the IEEE International Conference on Robotics and Automation, Roma, Italy, 10–14 April 2007.

- Forsman, P.; Halme, A. 3-D mapping of natural environments with trees by means of mobile perception. IEEE Trans. Robot. 2005, 21, 482–490.

- McDaniel, M.W.; Nishihata, T.; Brooks, C.A.; Salesses, P.; Iagnemma, K. Terrain classification and identification of tree stems using ground-based LiDAR. J. Field Robot. 2012, 29, 891–910.

- Tsubouchi, T.; Asano, A.; Mochizuki, T.; Kandou, S.; Shiozawa, K.; Matsumoto, M.; Tomimura, S.; Nakanishi, S.; Mochizuki, A.; Chiba, Y.; et al. Forest 3D mapping and tree sizes measurement for forest management based on sensing technology for mobile robots. In Proceedings of the Field and Service Robotics, Matsushima, Japan, 16–19 July 2013; pp. 357–368.

- Watt, P.J.; Donoghue, D.N.M. Measuring forest structure with terrestrial laser scanning. Int. J. Remote Sens. 2005, 26, 1437–1446.

- Jones, A.R.; Segaran, R.R.; Clarke, K.D.; Waycott, M.; Goh, W.S.H.; Gillanders, B.M. Estimating mangrove tree biomass and carbon content: A comparison of forest inventory techniques and drone imagery. Front. Mar. Sci. 2020, 6, 784.

- Swinfield, T.; Lindsell, J.A.; Williams, J.V.; Harrison, R.D.; Gemita, E.; Schönlieb, C.B.; Coomes, D.A. Accurate measurement of tropical forest canopy heights and aboveground carbon using structure from motion. Remote Sens. 2019, 11, 928.

- Lin, Y.; Hyyppä, J.; Jaakkola, A. Mini-UAV-borne LiDAR for fine-scale mapping. Geosci. Remote Sens. Lett. 2011, 3, 426–430.

- Wulder, M.A.; White, J.C.; Nelson, R.F.; Næsset, E.; Ørka, H.O.; Coops, N.C.; Hilker, T.; Bater, C.W.; Gobakken, T. Lidar sampling for large-area forest characterization: A review. Remote Sens. Environ. 2012, 121, 196–209.

- Asner, G.P.; Mascaro, J. Mapping tropical forest carbon: Calibrating plot estimates to a simple LiDAR metric. Remote Sens. Environ. 2014, 140, 614–624.

- Iglhaut, J.; Cabo, C.; Puliti, S.; Piermattei, L.; O’Connor, J.; Rosette, J. Structure from motion photogrammetry in forestry: A review. Curr. For. Rep. 2019, 5, 155–168.

- Cushman, K.C.; Kellner, J.R. Prediction of forest aboveground net primary production from high-resolution vertical leaf-area profiles. Ecol. Lett. 2019, 22, 538–546.

- Wallace, L.; Lucieer, A.; Watson, C.; Turner, D. Development of a UAV-LiDAR System with Application to Forest Inventory. Remote Sens. 2012, 4, 1519–1543.

- Zahawi, R.A.; Dandois, J.P.; Holl, K.D.; Nadwodny, D.; Reid, J.L.; Ellis, E.C. Using lightweight unmanned aerial vehicles to monitor tropical forest recovery. Biol. Conserv. 2015, 186, 287–295.

- Patenaude, G.; Hill, R.; Milne, R.; Gaveau, D.; Briggs, B.; Dawson, T. Quantifying forest above ground carbon content using LiDAR remote sensing. Remote Sens. Environ. 2004, 93, 368–380.

- Jaakkola, A.; Hyyppä, J.; Yu, X.; Kukko, A.; Kaartinen, H.; Liang, X.; Hyyppä, H.; Wang, Y. Autonomous collection of forest field reference--the outlook and a first step with UAV laser scanning. Remote Sens. 2017, 9, 785.

- Krůček, M.; Král, K.; Cushman, K.; Missarov, A.; Kellner, J.R. Supervised segmentation of ultra-high-density drone lidar for large-area mapping of individual trees. Remote Sens. 2020, 12, 3260.

- Wilson, E.O.; Peter, F.M.; Raven, P.H. Our diminishing tropical forests. In Biodiversity; Wilson, E.O., Peter, F.M., Eds.; National Academy Press: Washington, DC, USA, 1988.

- Sullivan, M.J.P.; Lewis, S.L.; Affum-Baffoe, K.; Castilho, C.; Costa, F.; Sanchez, A.C.; Ewango, C.E.N.; Hubau, W.; Marimon, B.; Monteagudo-Mendoza, A.; et al. Long-term thermal sensitivity of Earth’s tropical forests. Science 2020, 368, 869–874.

- Chisholm, R.A.; Cui, J.; Lum, S.K.Y.; Chen, B.M. UAV LiDAR for below-canopy forest surveys. J. Unmanned Veh. Syst. 2013, 1, 61–68.

- Hyyppä, E.; Hyyppä, J.; Hakala, T.; Kukko, A.; Wulder, M.A.; White, J.C.; Pyörälä, J.; Yu, X.; Wang, Y.; Virtanen, J.-P. Under-canopy UAV laser scanning for accurate forest field measurements. ISPRS J. Photogramm. Remote Sens. 2020, 164, 41–60.

- Zaffar, M.; Ehsan, S.; Stolkin, R.; Maier, K.M. Sensors, SLAM and long-term autonomy: A review. In Proceedings of the 2018 NASA/ESA Conference on Adaptive Hardware and Systems, Edinburgh, UK, 6–9 August 2018; pp. 285–290.

- Li, J.; Bi, Y.; Lan, M.; Qin, H.; Shan, M.; Lin, F.; Chen, B.M. Real-time simultaneous localization and mapping for UAV: A survey. In Proceedings of the International Micro Air Vehicle Competition and Conference, Beijing, China, 17–21 October 2016; pp. 237–242.

- Bachrach, A.; Prentice, S.; He, R.; Roy, N. RANGE-Robust Autonomous Navigation in GPS-Denied Environments. J. Field Robot. 2011, 28, 644–666.

- Ryding, J.; Williams, E.; Smith, M.J.; Eichhorn, M.P. Assessing handheld mobile laser scanners for forest surveys. Remote Sens. 2015, 7, 1095–1111.

- Taheri, H.; Xia, Z.C. SLAM; definition and evolution. Eng. Appl. Artif. Intell. 2021, 97, 104032.

- Liao, F.; Lai, S.; Hu, Y.; Cui, J.; Wang, J.L.; Teo, R.; Lin, F. 3D motion planning for UAVs in GPS-denied unknown forest environment. In Proceedings of the 2016 IEEE Intelligent Vehicles Symposium (IV), Gothenburg, Sweden, 19–22 June 2016; pp. 246–251.

- Gao, F.; Wu, W.; Gao, W.; Shen, S. Flying on point clouds: Online trajectory generation and autonomous navigation for quadrotors in cluttered environments. J. Field Robot. 2019, 36, 710–733.

- Giusti, A.; Guzzi, J.; Cireşan, D.C.; He, F.-L.; Rodríguez, J.P.; Fontana, F.; Faessler, M.; Forster, C.; Schmidhuber, J.; Di Caro, G. A machine learning approach to visual perception of forest trails for mobile robots. IEEE Robot. Autom. Lett. 2015, 1, 661–667.

- Pratt, V. Direct least-squares fitting of algebraic surfaces. SIGGRAPH Comput. Graph. 1987, 21, 145–152.

- Condit, R.; Ashton, P.S.; Manokaran, N.; LaFrankie, J.V.; Hubbell, S.P.; Foster, R.B. Dynamics of the forest communities at Pasoh and Barro Colorado: Comparing two 50-ha plots. Philos. Trans. R. Soc. Lond. Ser. B Biol. Sci. 1999, 354, 1739–1748.

- Williams, J.; Schönlieb, C.-B.; Swinfield, T.; Lee, J.; Cai, X.; Qie, L.; Coomes, D.A. 3D segmentation of trees through a flexible multiclass graph cut algorithm. IEEE Trans. Geosci. Remote Sens. 2019, 58, 754–776.

| Object Number | DBH Manual (mm) | DBH UAV (mm) | Minimum Distance from UAV Path (m) |
|---|---|---|---|
| 2 | 232 | 403 | 2.3 |
| 4 | 196 | 252 | 3.2 |
| 7 | 267 | 410 | 3.7 |
| 12 | 373 | 348 | 3.2 |
| 14 | 549 | 567 | 2.4 |
| 15 | 87 | 93 | 1.4 |
| 18 | 230 | 262 | 1.9 |
| 19 | 308 | 298 | 4.4 |
| 21 | 740 | 727 | 3.0 |
| 22 | 428 | 507 | 3.0 |
| 23 | 785 | 758 | 6.5 |
| 26 | 208 | 287 | 5.9 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Chisholm, R.A.; Rodríguez-Ronderos, M.E.; Lin, F. Estimating Tree Diameters from an Autonomous Below-Canopy UAV with Mounted LiDAR. Remote Sens. 2021, 13, 2576. https://doi.org/10.3390/rs13132576
