1. Introduction

The inertial navigation system (INS) and global navigation satellite system (GNSS) are two of the main and most important approaches for providing position and attitude information for geographical references [

1]. The INS has high accuracy in a short period of time not affected by the environment. However, a standalone INS solution will degrade over time, because of the large errors in the raw measurements of inertial measurement units (IMU) [

2]. In this study, the signal from GNSS is adopted to provide high precision navigation solutions. Under decent visibility conditions, GNSS can provide continuous and accurate navigation information over a long period of time. However, GNSS alone cannot give reliable positions all of the time, as the satellite signal may be blocked or corrupted as a result of high buildings, viaducts, tunnels, mountains, multi-path reflections, and bad weather conditions [

3,

4,

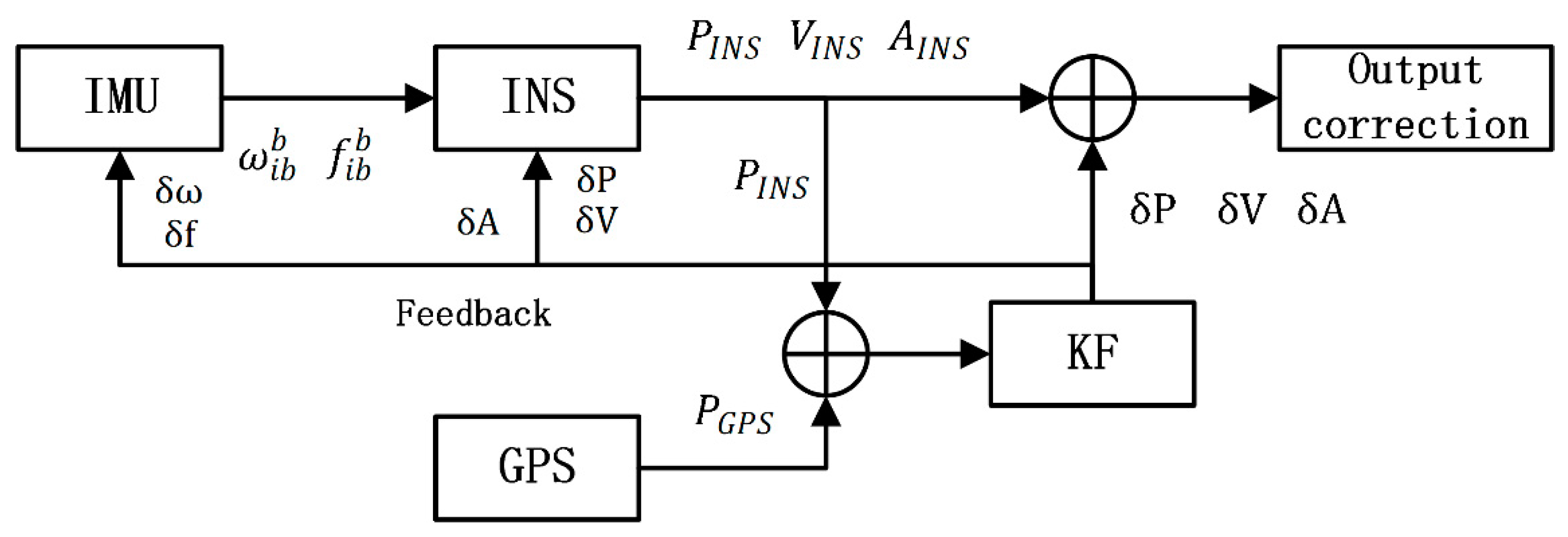

5]. Because of their complementary properties, INS and GNSS are commonly integrated by a Kalman filter (KF) for providing continuous and high precision navigation [

3,

4,

5,

6]. In an INS/GNSS system, GNSS aids INS by estimating its errors in KF [

7,

8]. Besides, INS connects the GNSS signal gap and helps the GNSS signal reacquisition after an outage [

9,

10]. However, when the GNSS signal is blocked, KF cannot update information from the GNSS measurements. Meanwhile, the INS/GNSS system changes into a pure mode, whose position error will diverge over a period of time [

2,

3,

4,

11]. Therefore, an improved fusion algorithm needs to be explored so as to improve the INS navigation performance when the GNSS signal is lost.

With the development of artificial intelligence (AI) and big data, many AI methods have been explored to improve navigation accuracy during GNSS outages [12,13,14,15,16,17,18]. Artificial neural networks (ANN) are now the most widely used methods for modeling complex nonlinear problems, and many researchers have built neural networks for aiding INS when the GNSS signal is lost. Rashad and his team first used a radial basis function neural network to model the INS position and the position error between INS and GNSS [12,13]. El-Sheimy used time, velocity, and yaw as inputs to model the position error and velocity, showing a more stable and accurate result [14]. In later works, an improved autoregressive delay-dependent neural network model was applied. Its input features are the current and one-step-past position and velocity from the IMU and INS, while the output is the INS position error [15]. Another method, based on an ensemble model, has been explored to improve the generalization of the algorithm by combining many weak learners into a strong learner [7,15,17]. Furthermore, the support vector machine and genetic algorithm were explored to overcome the over-fitting and local-minimum problems of neural networks [18]. More recently, factor graph optimization has been widely used for multi-sensor fusion in autonomous systems [19]. This method uses a factor graph model to represent the joint probability distribution function. An efficient incremental inference algorithm over the factor graph is then applied, which yields a near-optimal inertial navigation system.

However, almost all of the above methods are based on a static neural network, like the radial basis function neural network (RBF) or multilayer perceptron neural network (MLP). The conception of all above approaches is to model the current and past one-step INS information by training the AI model when the GNSS signal is available. Almost all of the existing INS aiding algorithms try to improve the navigation solutions during GNSS outages by predicting the INS errors using previous INS outputs. The major drawback of which is that they cannot store more past vehicle dynamic information when dealing with a time serial data [

20]. Therefore, under the condition of a long period of GNSS outages, any of above INS aiding algorithms may not have the capability of providing accurate and stable navigation results [

21]. Furthermore, the main shortcoming of the MLP with a sliding window is the rapid increase of computing complexity, as the number of neurons in the input layer has to be equal to the number of past samples [

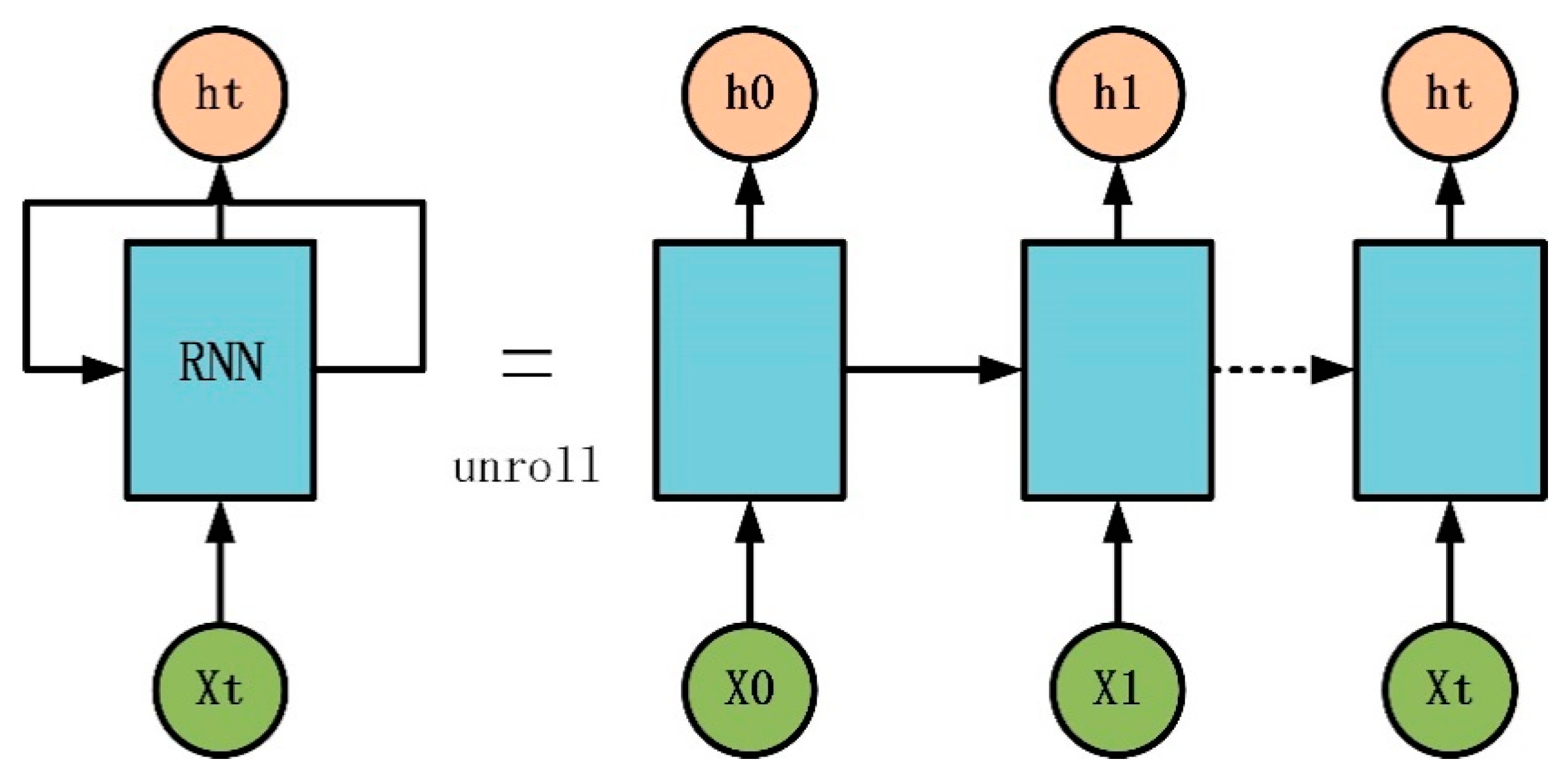

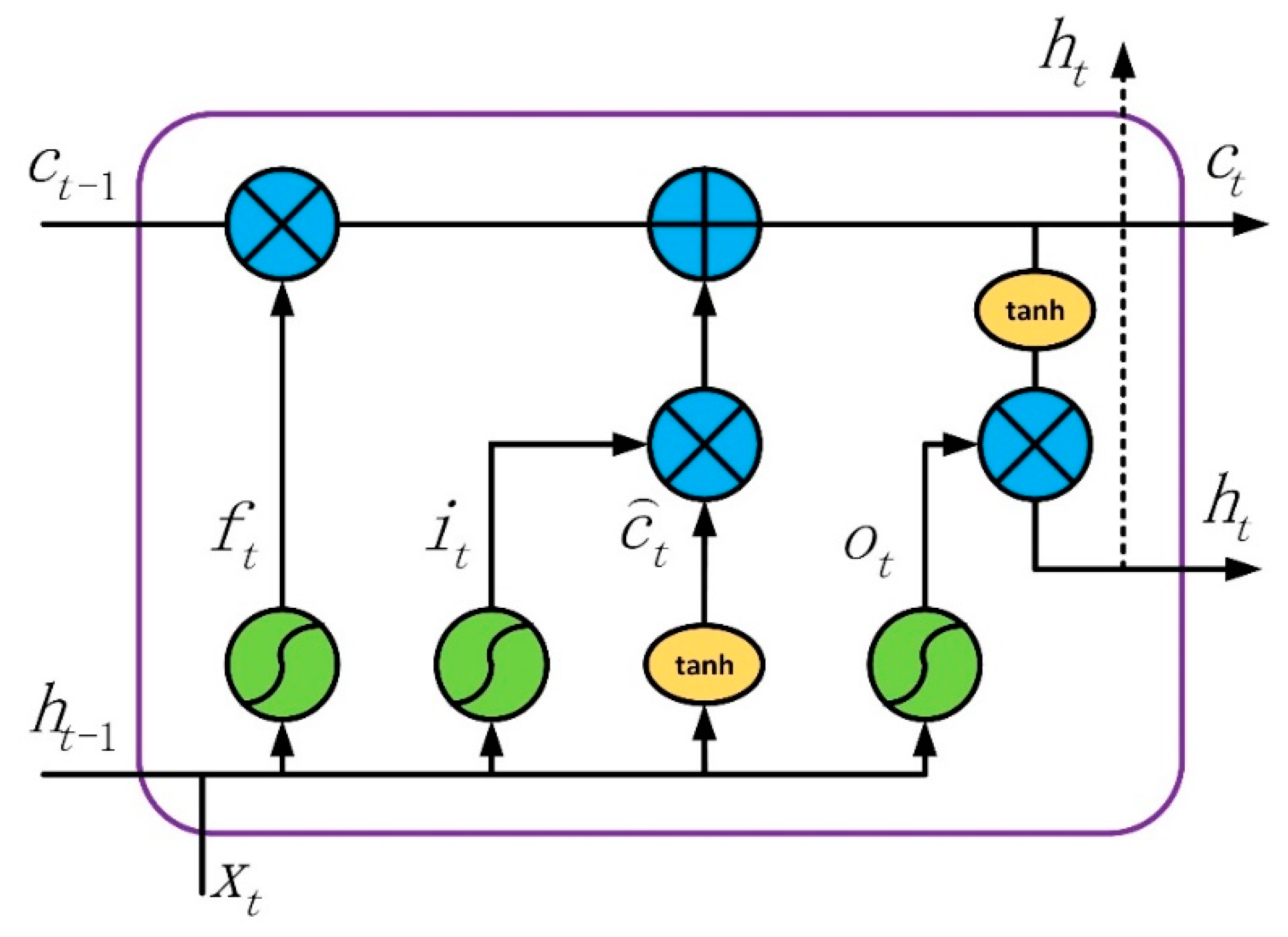

22]. For all of the above reasons, this study suggests that a LSTM neural network identifying a nonlinear dynamic process can solve the above drawbacks of those models, which have capabilities to perform highly nonlinear dynamic mapping and store past information [

23]. LSTM is also a basic neuron in the recurrent neural network (RNN), which can select and store significant information [

24], and has been widely used in a variety dynamic process, such as natural language process (NPL) [

25] and time serial prediction [

26,

27].

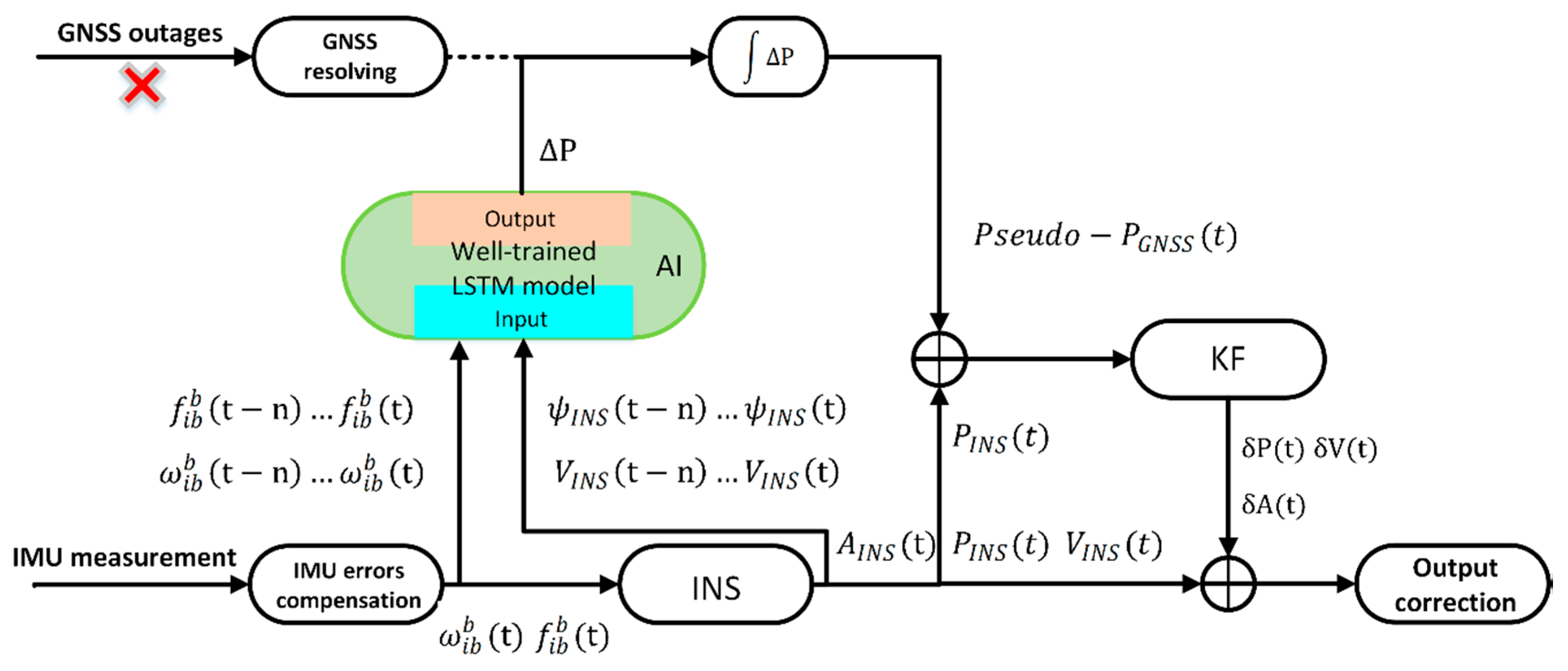

To maintain good INS performance during GNSS outages, a novel AI method based on LSTM is proposed to overcome the drawbacks of the methods discussed above. When the GNSS signal is available, the INS velocity and yaw and the IMU outputs are used as the input features for training the LSTM model, while the output of the model is the position increment of the GNSS. Once the GNSS signal is lost for a short time, the INS information is fed into the LSTM model to generate pseudo GNSS increments. After accumulating the pseudo GNSS increments, the pseudo GNSS position is sent to the KF to correct the INS navigation results. Real test data are used to evaluate the proposed LSTM algorithm, which is also compared with the traditional MLP algorithm. The test results demonstrate that the LSTM algorithm improves navigation accuracy more than the MLP algorithm during long GNSS outages.

The rest of the paper is organized as follows. Section 2 introduces the simple INS/GNSS loosely coupled navigation system. The LSTM architecture and training method for time-series prediction are illustrated in Section 3. Section 4 presents an improved model of the LSTM fusion algorithm for aiding INS. The actual road experiments are presented and discussed in Section 5. Finally, Section 6 presents the conclusions.

4. LSTM Algorithm Aiding the INS/GNSS Navigation System during GNSS Outages

The main idea of an AI-aided INS/GNSS integrated system is to learn the mathematical relationship between the navigation information and the vehicle dynamics data so as to maintain high navigation accuracy during GNSS outages. Generally, the navigation information is either the position error between INS and GNSS or the position increment of GNSS. The vehicle dynamics data are outputs of the INS, such as velocity, attitude, specific force, and angular rate.

Recently, several AI models have been explored to find this relationship. Almost all of them can be divided into three classes according to their outputs. The first relates the INS information to the position error between GNSS and INS in order to estimate that error [36]. The second relates the INS information to the state vector of the KF [37]. Both of these models share a problem: the estimated quantity mixes the errors of the INS and the GNSS.

If the position error between GNSS and INS is used as the model output, it can be calculated from Equation (28), whose terms are the GNSS position measurement, the GNSS position measurement noise, the position calculated from INS, the position error of INS, the true GNSS position, and the true INS position. In Equation (28), the calculated position error between GNSS and INS includes not only the error from GNSS but also the error from INS.

The position increment of GNSS is calculated in Equation (29).

In Equation (29), the position increment of GNSS between adjacent epochs includes only the GNSS position measurement noise. The measurement noises at adjacent times can be considered to follow the same probability distribution with the same variance, so their contribution to the increment is very small. Because the INS navigation error propagates over time, and the INS/GNSS system integrated by the KF cannot be perfectly accurate, the INS error is larger than this noise contribution. Thus, the position error between GNSS and INS is less accurate than the GNSS position increment.

Using a target that includes the errors of both GNSS and INS introduces additional errors and reduces the prediction accuracy. To avoid mixing the errors of INS and GNSS, a model that estimates and predicts the increment of the GNSS position is selected, and its output is then used to generate the pseudo GNSS position.

In this paper, the third class, which relates the INS information to the GNSS position increment, is adopted as the model framework because it avoids mixing the errors of INS and GNSS. The output of this model is the position increment, which can be obtained from the differential equations of INS [38,39]. From Equations (2)–(5), which describe the position update procedure of INS, it can be concluded that the position increment over a time interval is a function of the velocity over that interval.

The velocity update procedure in INS is given in Equations (31)–(34). From these equations, it can be concluded that the velocity is a function of several quantities, including the incremental outputs of the accelerometer and gyroscope, which are calculated in Equations (35) and (36).

Then, the parameters related to the velocity can be rewritten in terms of the output of the accelerometer unit, the output of the gyro, the angular rate of the Earth frame relative to the inertial frame, the angular rate of the navigation frame relative to the Earth frame, the velocity, and gravity. The superscript n indicates that these vectors are projected into the navigation frame n.

The direction cosine matrix that transforms coordinates from the b-frame to the n-frame is calculated from the pitch, roll, and yaw. The angular rate of the Earth frame relative to the inertial frame and the angular rate of the navigation frame relative to the Earth frame can be calculated from the latitude, longitude, and velocity.
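As an illustration of the quantities just described, the following minimal sketch computes a body-to-navigation direction cosine matrix and the two angular rates using standard textbook forms. It assumes a north-east-down (NED) navigation frame, a yaw-pitch-roll (Z-Y-X) Euler sequence, and WGS-84 constants; the paper's own frame convention and equation layout are not recoverable from the text, so the function names and argument order here are hypothetical.

```python
import numpy as np

OMEGA_E = 7.292115e-5   # WGS-84 Earth rotation rate, rad/s (assumed constant set)
A = 6378137.0           # WGS-84 semi-major axis, m
E2 = 6.69437999014e-3   # WGS-84 first eccentricity squared


def dcm_body_to_nav(roll, pitch, yaw):
    """Body-to-navigation DCM for a Z-Y-X (yaw, pitch, roll) sequence, angles in rad."""
    cr, sr = np.cos(roll), np.sin(roll)
    cp, sp = np.cos(pitch), np.sin(pitch)
    cy, sy = np.cos(yaw), np.sin(yaw)
    return np.array([
        [cp * cy, sr * sp * cy - cr * sy, cr * sp * cy + sr * sy],
        [cp * sy, sr * sp * sy + cr * cy, cr * sp * sy - sr * cy],
        [-sp,     sr * cp,                cr * cp],
    ])


def earth_rate_ned(lat):
    """Angular rate of the Earth frame w.r.t. the inertial frame, resolved in NED."""
    return OMEGA_E * np.array([np.cos(lat), 0.0, -np.sin(lat)])


def transport_rate_ned(lat, height, v_north, v_east):
    """Angular rate of the navigation frame w.r.t. the Earth frame, resolved in NED."""
    s2 = np.sin(lat) ** 2
    r_m = A * (1.0 - E2) / (1.0 - E2 * s2) ** 1.5   # meridian radius of curvature
    r_n = A / np.sqrt(1.0 - E2 * s2)                # prime-vertical radius of curvature
    return np.array([
        v_east / (r_n + height),
        -v_north / (r_m + height),
        -v_east * np.tan(lat) / (r_n + height),
    ])
```

With small pitch and roll, as is typical for a land vehicle, the matrix returned by `dcm_body_to_nav` is dominated by the yaw terms, and for vehicle speeds both angular rates are orders of magnitude smaller than typical gyro outputs.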

According to Equations (30)–(40), the position increment is mainly influenced by the quantities introduced above. For land vehicles, the pitch and roll in the body frame are very small, so the direction cosine matrix is mainly influenced by the yaw. From Equations (39) and (40), the angular rate of the navigation frame relative to the Earth frame is mainly related to the latitude, longitude, and velocity, while the angular rate of the Earth frame relative to the inertial frame is mainly determined by the Earth rotation rate. During a GNSS outage, the latitude, longitude, and Earth rotation rate do not change much, so they have an imperceptible impact on these two angular rates. In conclusion, the main factors that influence the position increment are the velocity, the specific force, the angular rate, and the yaw, which is why they are chosen as the input features.

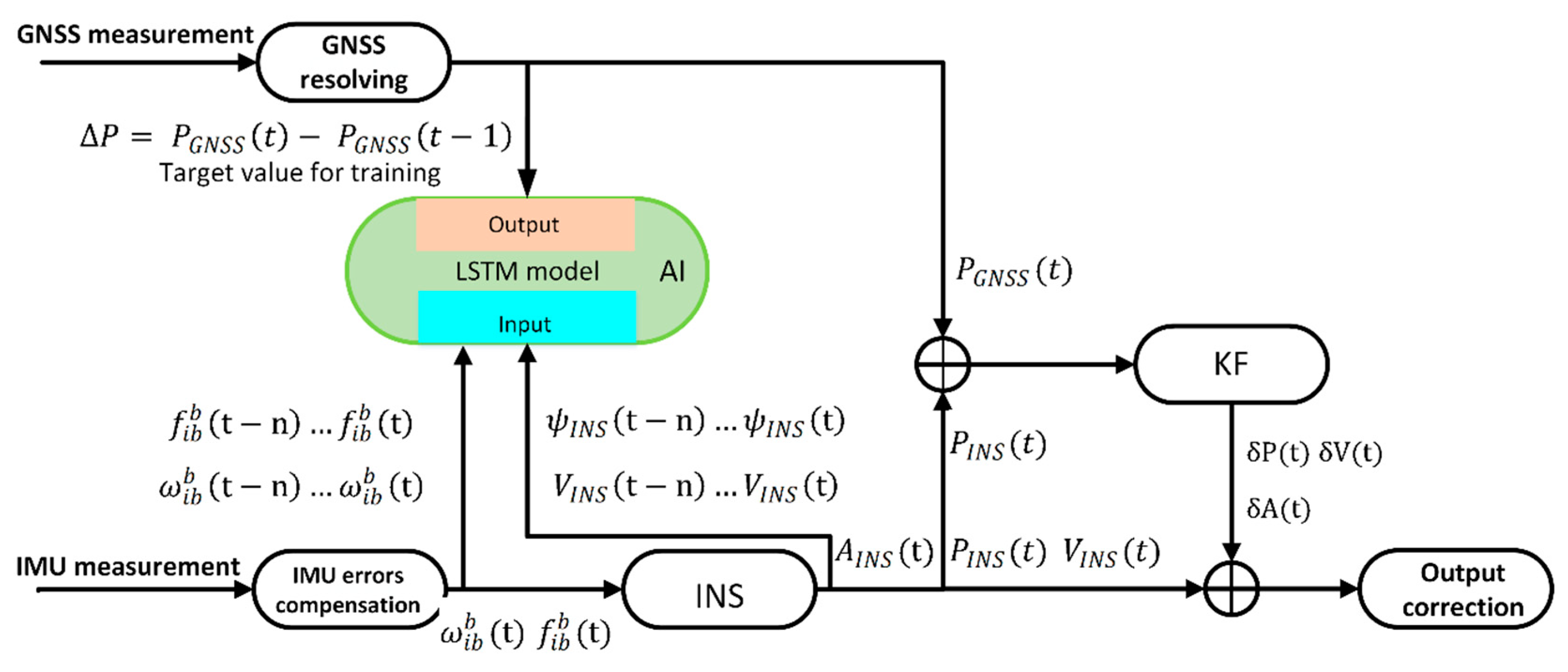

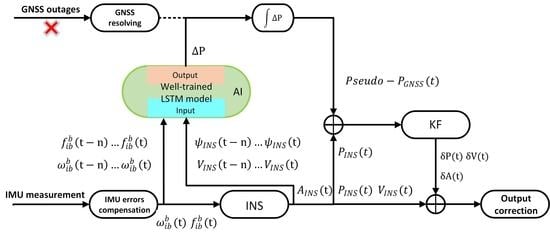

Figure 4 and Figure 5 illustrate the process and architecture of the LSTM network aiding an INS/GNSS integrated navigation system. In the figures, separate symbols denote the velocity, position, and attitude, with a subscript indicating whether the data come from GNSS or INS. Further symbols denote the errors estimated by the KF and the value of the yaw.

When the GNSS signal is available, the AI module works in training mode, as shown in Figure 4. The position measurements from GNSS and INS are fed into the KF as inputs to estimate the INS errors, which is simply the loosely coupled mode. At the same time, the specific force and angular rate from the IMU, as well as the velocity and yaw from the INS, are fed into the AI module as input features. The position increment from the GNSS measurement is adopted as the target value for computing the loss function. In training mode, the AI module tries to find a relationship between the GNSS position increment and the specific force, angular rate, velocity, and yaw. When the GNSS signal is lost, the system changes to prediction mode, as indicated in Figure 5. Under these conditions, the specific force, angular rate, velocity, and yaw can still be obtained from the INS and are fed into the well-trained AI module to predict the position increment. By accumulating all of the predicted increments from the beginning of the prediction, the pseudo GNSS position can be obtained at each time. The pseudo GNSS position is then fed into the KF as a substitute for the original GNSS position, so the hybrid system maintains continuous navigation during GNSS outages.
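The accumulation step in prediction mode can be sketched as a running sum. This is a minimal illustration, not the authors' implementation: it assumes the predicted increments and the last valid GNSS fix are expressed in the same coordinates and units, and the function name `pseudo_gnss_positions` is hypothetical.

```python
import numpy as np


def pseudo_gnss_positions(last_gnss_position, predicted_increments):
    """Accumulate 1 Hz predicted position increments onto the last valid GNSS fix.

    last_gnss_position: shape (3,), the last position received before the outage.
    predicted_increments: shape (n_epochs, 3), one model output per GNSS epoch.
    Returns an array of shape (n_epochs, 3) with one pseudo position per epoch.
    """
    last = np.asarray(last_gnss_position, dtype=float)
    increments = np.asarray(predicted_increments, dtype=float)
    return last + np.cumsum(increments, axis=0)
```

Because each pseudo position is a sum of all earlier predictions, any bias in the predicted increments accumulates over the outage, which is consistent with the errors growing with outage length.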

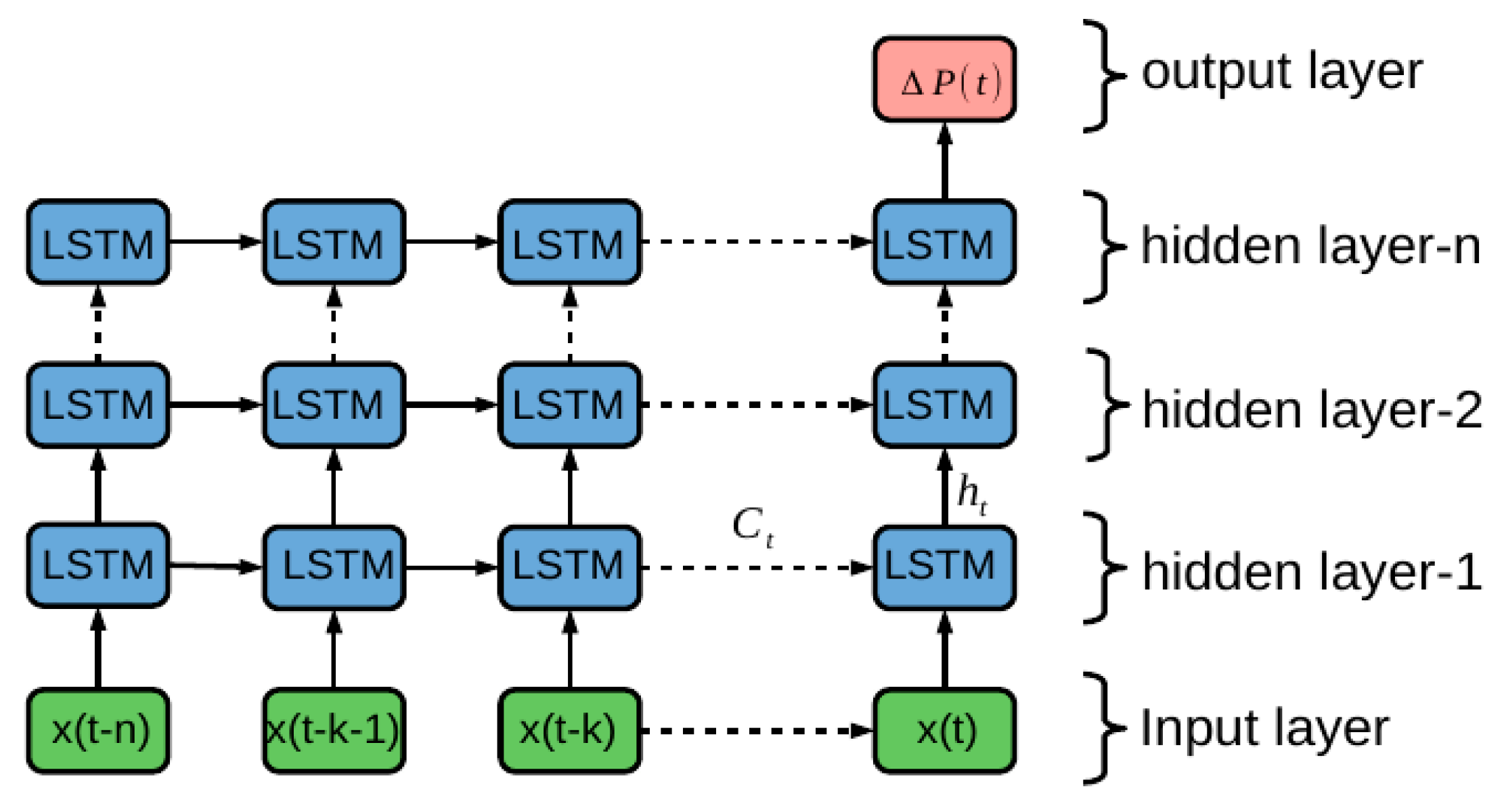

Figure 6 illustrates the inner details of the AI module. At each time, a sequence of input vectors is fed into the AI module, where each vector is a feature vector of {specific force, angular rate, velocity, yaw} and the number of time steps is 4. The value of the last state in the last layer is then sent to a fully-connected layer with a LeakyReLU activation function [40], whose output is the output of the AI module, i.e., the predicted position increment. As Figure 4, Figure 5 and Figure 6 show, the LSTM is used to generate the pseudo-GNSS position, so its output rate is 1 Hz, the same as the GNSS output rate.
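The forward pass of such a module can be sketched in plain NumPy: stacked LSTM layers process the input sequence, and the last hidden state of the last layer goes through a fully-connected layer with a LeakyReLU activation. This is a sketch under assumptions, not the trained network: the gate ordering (input, forget, candidate, output), the LeakyReLU slope of 0.01, the weight initialization, and all function names are hypothetical choices.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def lstm_layer(x_seq, W, U, b):
    """Run one LSTM layer over a sequence.

    x_seq: (T, n_in); W: (4H, n_in); U: (4H, H); b: (4H,). Returns (T, H).
    """
    H = U.shape[1]
    h, c = np.zeros(H), np.zeros(H)
    outputs = []
    for x_t in x_seq:
        z = W @ x_t + U @ h + b
        i = sigmoid(z[:H])               # input gate
        f = sigmoid(z[H:2 * H])          # forget gate
        g = np.tanh(z[2 * H:3 * H])      # candidate cell state
        o = sigmoid(z[3 * H:])           # output gate
        c = f * c + i * g                # cell state carries past information
        h = o * np.tanh(c)
        outputs.append(h)
    return np.stack(outputs)


def leaky_relu(x, alpha=0.01):
    return np.where(x > 0, x, alpha * x)


def predict_increment(x_seq, layers, W_out, b_out):
    """Stacked LSTM layers, then a dense layer on the last hidden state."""
    seq = np.asarray(x_seq, dtype=float)
    for W, U, b in layers:
        seq = lstm_layer(seq, W, U, b)
    return leaky_relu(W_out @ seq[-1] + b_out)


def init_layer(n_in, hidden, rng, scale=0.1):
    """Hypothetical random initialization, for demonstration only."""
    return (rng.normal(scale=scale, size=(4 * hidden, n_in)),
            rng.normal(scale=scale, size=(4 * hidden, hidden)),
            np.zeros(4 * hidden))
```

For example, two layers with 64 hidden units and a sequence of 4 time steps give one three-component output per call, matching the 1 Hz output rate described above.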

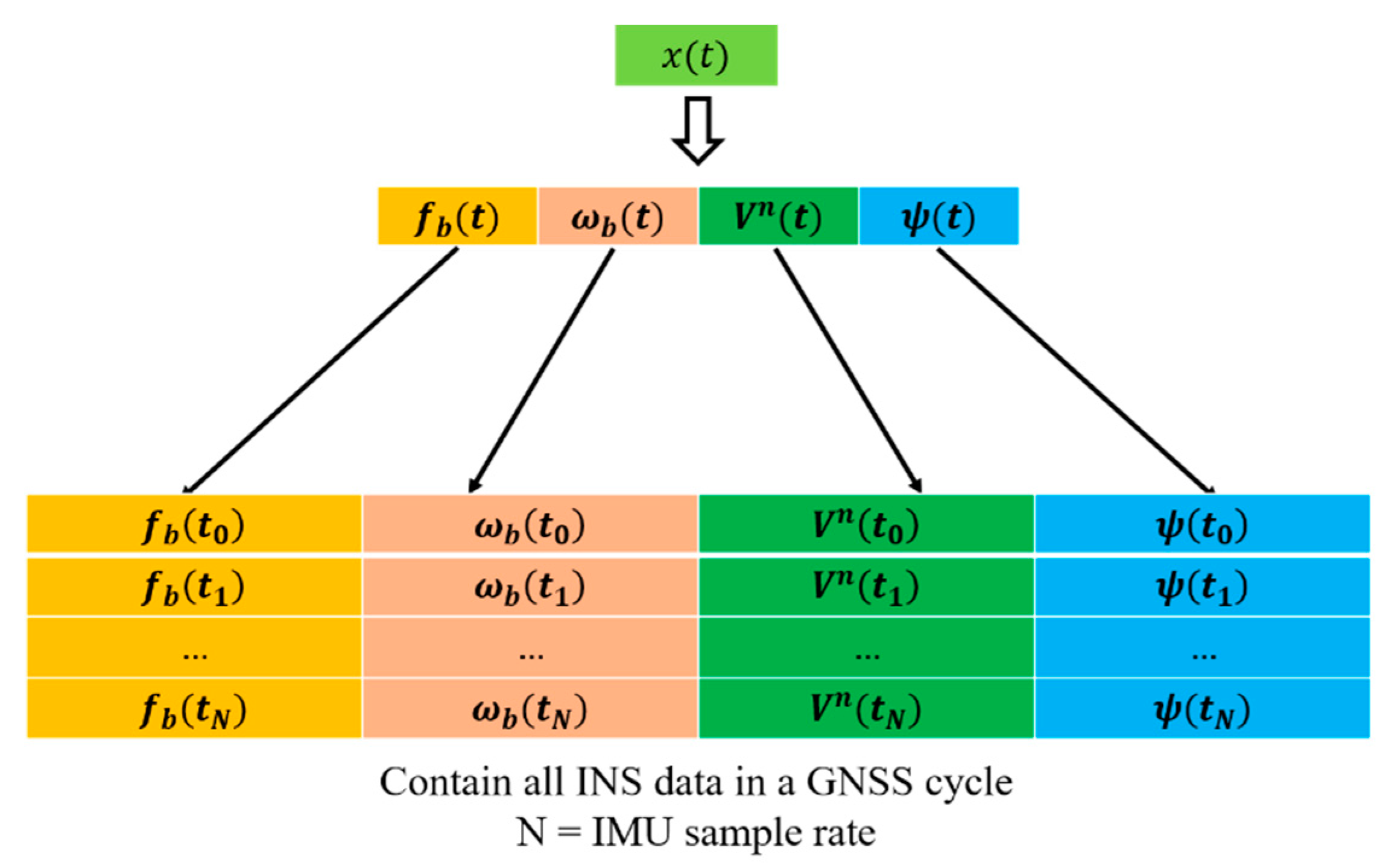

For the input features, all INS data within a GNSS cycle are used to predict the position increment. The elements of each input vector, namely the specific force, angular rate, velocity, and yaw, all come from the INS, whose output rate is higher than that of GNSS. As illustrated in Figure 7, all data in a GNSS cycle are combined into one input vector. For example, if the sample rate of the IMU is 50 Hz, the 50 groups of {specific force, angular rate, velocity, yaw} obtained in a GNSS cycle are combined into one input vector. The input vectors of the current and the three preceding GNSS cycles are then used as input features to predict the position increment. Therefore, with a GNSS rate of 1 Hz, the number of past INS samples needed is four times the IMU sample rate.
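The construction of the input sequences can be sketched as follows. The sketch assumes the INS samples arrive as a dense array at the IMU rate, aligned so that each block of `imu_rate_hz` rows covers exactly one GNSS cycle; the function name and the number of feature columns are hypothetical.

```python
import numpy as np


def build_lstm_inputs(ins_samples, imu_rate_hz=50, time_steps=4):
    """Group INS samples into per-cycle vectors and sliding windows of cycles.

    ins_samples: (n_samples, n_features) at the IMU rate.
    Returns (n_windows, time_steps, imu_rate_hz * n_features); window k ends
    at GNSS cycle k + time_steps - 1.
    """
    samples = np.asarray(ins_samples, dtype=float)
    n_cycles = samples.shape[0] // imu_rate_hz
    if n_cycles < time_steps:
        raise ValueError("not enough INS data for one full window")
    n_features = samples.shape[1]
    # Flatten all samples of one GNSS cycle into a single input vector.
    per_cycle = samples[: n_cycles * imu_rate_hz].reshape(
        n_cycles, imu_rate_hz * n_features)
    # Each window holds the current cycle and the (time_steps - 1) before it.
    windows = [per_cycle[i - time_steps + 1: i + 1]
               for i in range(time_steps - 1, n_cycles)]
    return np.stack(windows)
```

With a 50 Hz IMU and four time steps, each window spans 200 INS samples, which matches the statement that four times the IMU sample rate of past data is needed.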

The LSTM algorithm was proposed to deal with sequential processes, and vehicle dynamics are such a process. The next position has a strong relationship with the current and previous information, as illustrated previously. A traditional static neural network can perform nonlinear mapping well, but it cannot handle sequential processes as well as a recurrent neural network. Therefore, the LSTM architecture is adopted in place of the traditional neural network to find the relationship between the position increment and the specific force, angular rate, velocity, and yaw, and it also appears to perform better than the static neural network. The test results are presented in the next section.

5. Road Experimental Testing Results

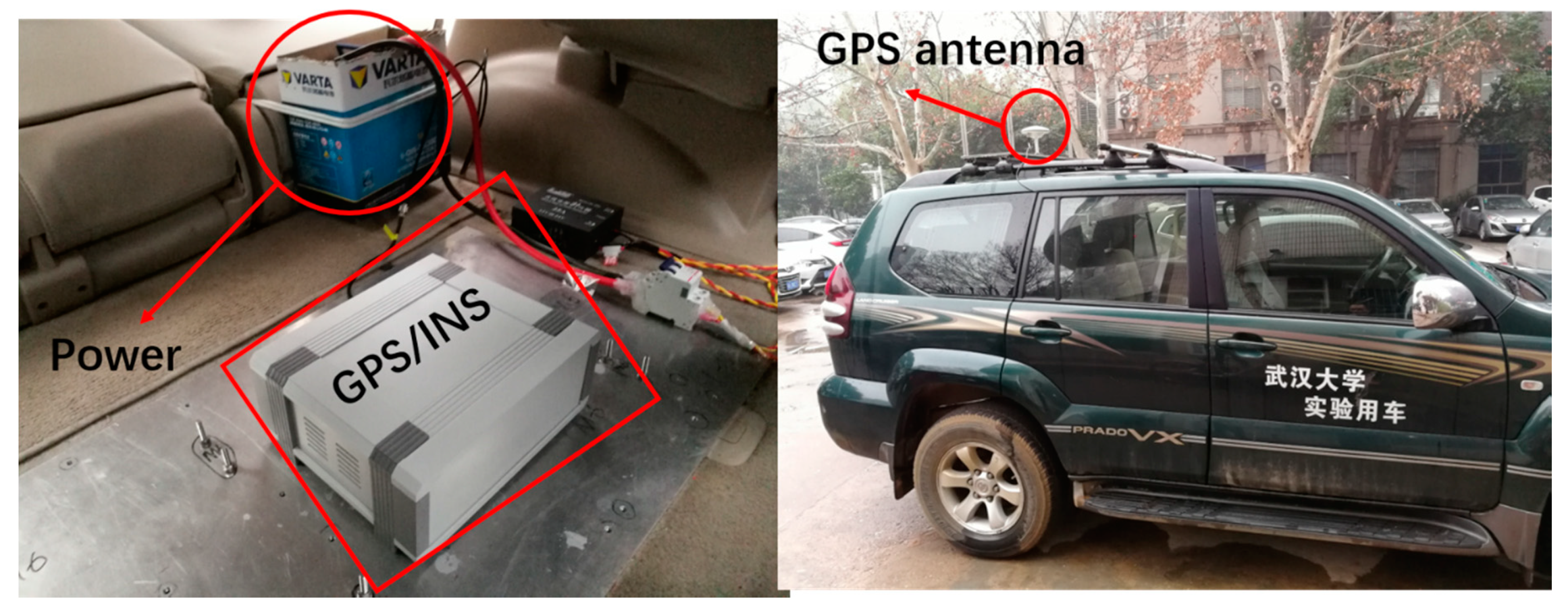

The test data acquisition platform is shown in Figure 8, and the sensor specifications are described in Table 1. The ICM-20602 is a low-cost six-axis MEMS (microelectromechanical systems) motion-tracking device, while the Ublox-M8P is a high-precision GNSS module. The reference navigation solution, i.e., the true position, velocity, and attitude, was recorded by a loosely coupled GNSS/INS system with RTK during the vehicle experiment. After all of the experimental data were collected, GNSS signal outages were simulated in software to evaluate the performance of the different neural network algorithms. The aim of this study is to improve the navigation performance of a low-cost IMU during GNSS outages by using an AI model based on LSTM.

The proposed LSTM-based method for aiding the INS/GNSS integrated system was tested and analyzed in both training and prediction modes, whose procedures are illustrated in Figure 4 and Figure 5, respectively. The parameter tuning and the influence of the number of time steps and hidden units in the LSTM model are also explored. The proposed AI model is trained and tested at different times.

5.1. Data Description

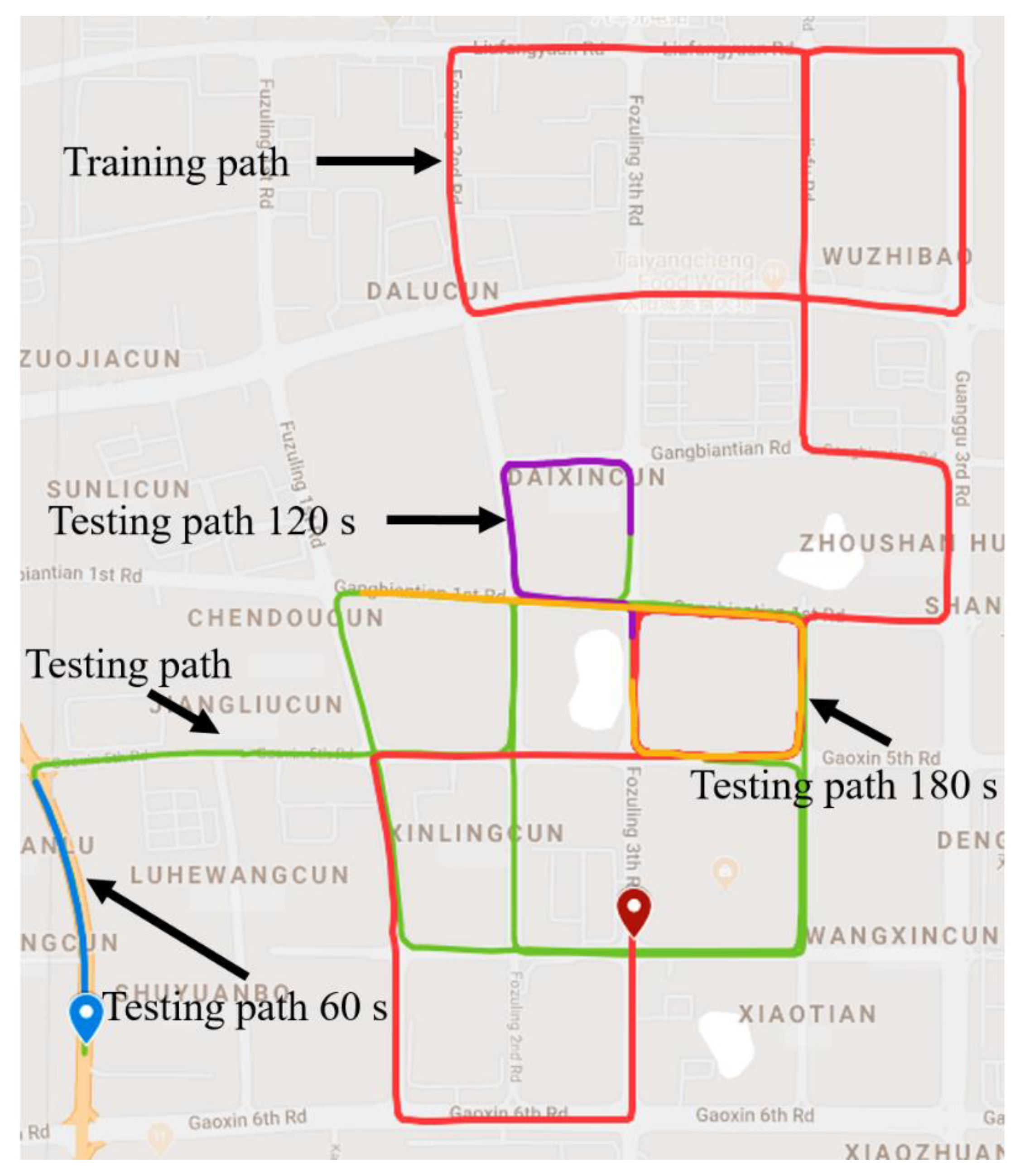

The trajectory of this experiment is shown in Figure 9. It was collected in Wuchang, Hubei Province, China. The sampling rates of the INS and GNSS are 50 Hz and 1 Hz, respectively. The red icon and blue icon represent the start point and end point, respectively. Along the red line, the whole system works in loosely coupled mode. There, the GNSS data and INS data were integrated to maintain continuous and high-precision navigation results, while the LSTM-based model was being trained. The input features of the LSTM module include the specific force, angular rate, yaw, and velocity at the current and past seconds, while the position increments calculated from the GNSS serve as the output.

When the vehicle travelled along the three parts of the test path where GNSS outages were simulated, the navigation results depended only on pure INS reckoning.

In the experiment, the trajectory data from INS and GNSS (in Figure 9) were split into two parts: the first part of 4400 s, marked by the red line, is used as the training set; the second part of 1400 s, marked by the green line, is used as the test set. Three parts of the test path, lasting 60 s, 120 s, and 180 s and marked in Figure 9, were selected to evaluate the performance of the proposed algorithm. When a GNSS signal is available, all of the data from INS are used to train the AI model. Once the GNSS signal is unavailable, the well-trained model substitutes for the GNSS and supplies the position information to the Kalman filter so as to decrease the errors of INS. All of the data were scaled to between −1 and 1 to accelerate training.

In a typical machine learning application, the data set is usually iterated over many times using a gradient descent algorithm. However, navigation is a real-time application, so in practice the data have to be normalized using a section of road data. In this experiment, the off-line mode is used to explore the feasibility of neural network methods for improving the INS navigation solution when the GNSS signal is lost. Furthermore, different features have different numerical ranges, and normalization and standardization are mainly used to make the computation better conditioned. For example, when two input variables differ greatly in magnitude, using both of them at the same time may cause numerical errors. Therefore, the input data need to be scaled so that training is numerically stable and converges quickly.
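Scaling each feature to the range from −1 to 1 can be sketched with a per-feature min-max transform. The sketch assumes the minimum and maximum are estimated on the training data only and then reused for later data; the class name `MinMaxScaler11` is hypothetical, and later values outside the training range will fall outside [−1, 1].

```python
import numpy as np


class MinMaxScaler11:
    """Per-feature min-max scaling to [-1, 1], fitted on training data."""

    def fit(self, x):
        x = np.asarray(x, dtype=float)
        self.lo = x.min(axis=0)
        self.hi = x.max(axis=0)
        # Avoid division by zero for features that are constant in training.
        self.span = np.where(self.hi > self.lo, self.hi - self.lo, 1.0)
        return self

    def transform(self, x):
        return 2.0 * (np.asarray(x, dtype=float) - self.lo) / self.span - 1.0

    def inverse_transform(self, y):
        return (np.asarray(y, dtype=float) + 1.0) / 2.0 * self.span + self.lo
```

The inverse transform is needed on the output side, since a model trained on scaled position increments predicts scaled values that must be mapped back to metres before accumulation.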

5.2. Design the LSTM Network

The AI model comprises two LSTM layers and a fully-connected layer activated by a softmax function. When designing an LSTM network, several hyperparameters need careful tuning, namely (1) the number of hidden units and (2) the number of time steps. Setting too many hidden units or too many time steps makes the algorithm take longer to converge and may lead to over-fitting. The values of the other parameters used in the experiment are shown in Table 2.

Of the training set, 20% is reserved as a validation set for adjusting the model parameters; that is, 80% of the training set is used to train the model and the remaining 20% is used to evaluate its performance. The criterion for selecting parameters is the root mean square error (RMSE) on the validation set; a smaller value generally means better generalization ability. In this study, a grid search is adopted to find the best parameter combination: three time-step lengths (1, 4, and 8) and three numbers of LSTM hidden units (32, 64, and 128) are evaluated, giving nine combinations.
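The selection procedure can be sketched as an exhaustive loop over the nine combinations, keeping the one with the lowest validation RMSE. In this sketch, `train_and_validate` is a hypothetical callback standing in for training a model with the given settings and returning its validation RMSE; it is not part of any library.

```python
import itertools

import numpy as np


def rmse(y_true, y_pred):
    """Root mean square error over all elements."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))


def grid_search(train_and_validate, time_steps=(1, 4, 8), hidden_units=(32, 64, 128)):
    """Evaluate every (time_steps, hidden_units) pair and return the best one.

    train_and_validate(ts, hu) must return the validation RMSE for that pair.
    """
    results = {}
    for ts, hu in itertools.product(time_steps, hidden_units):
        results[(ts, hu)] = train_and_validate(ts, hu)
    best = min(results, key=results.get)
    return best, results
```

In practice the callback would also record the training time, so that two settings with similar RMSE can be separated by cost.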

The training time and validation RMSE were calculated and compared for each combination, and the final parameters were selected from the best of these combinations. The experimental results are displayed in Table 3, which indicates that setting the time steps and hidden units to 4 and 64, respectively, gives the LSTM algorithm the lowest RMSE. A low RMSE implies high confidence in the prediction method. The differences in prediction performance across time steps and hidden units are related to over-fitting, which produces large RMSE values.

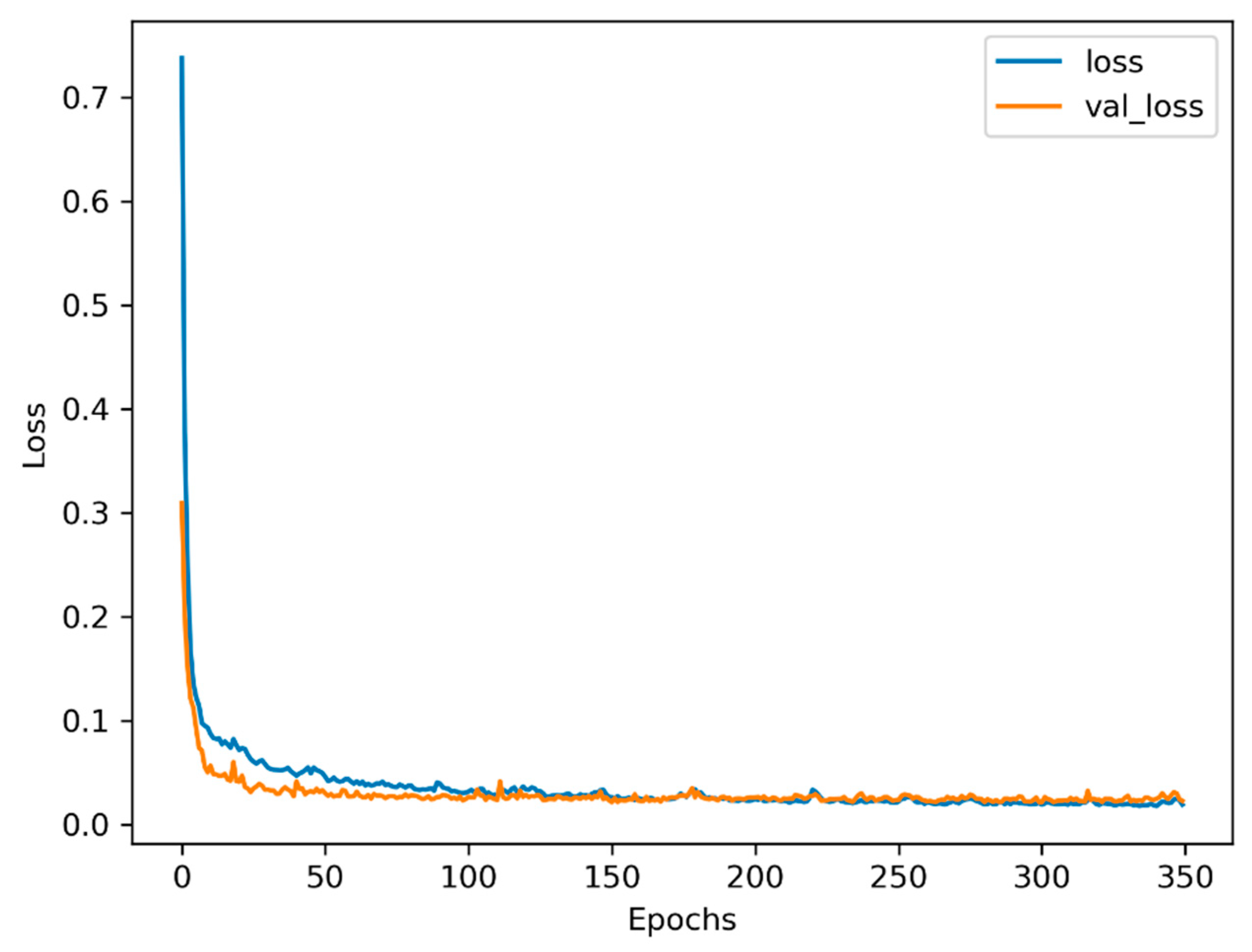

Figure 10 shows how the losses vary with epochs during the training process. The training and validation losses decrease to their minimum after about 150 epochs and then flatten, which suggests that early stopping would save time without losing any performance.
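A patience-based early-stopping rule of the kind suggested here can be sketched as follows. The patience and minimum-improvement values are illustrative assumptions, not settings reported in the paper, and the function name is hypothetical.

```python
def early_stopping_epoch(val_losses, patience=10, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses.

    Training stops at the first epoch where the loss has not improved by more
    than min_delta for `patience` consecutive epochs; if that never happens,
    stop_epoch is the last epoch.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            best_epoch = epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    return len(val_losses) - 1, best_epoch
```

The weights saved at `best_epoch` would be the ones kept for prediction, so stopping shortly after the loss curve flattens costs no accuracy.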

5.3. Experiment Results

In this section, the navigation performance of the LSTM model is compared with that of MLP. The MLP uses a time window of length four to predict the future position increment, and its input features are the same as those of the LSTM, namely the specific force, angular rate, velocity, and yaw. To match the hidden units of the LSTM, the MLP has two hidden layers, each with 64 hidden units, in addition to the input and output layers. The algorithms were implemented with Python 3.6 and TensorFlow 1.9, a well-known deep learning framework developed by Google [41], and trained with the Adam optimizer [42].

In the training procedure of the above algorithms (LSTM and MLP), the 4400 s training set, including the above features (specific force, angular rate, velocity, and yaw), is used to train the MLP and LSTM models, respectively. Part of the test set containing the same features is then used to assess the trained models by comparing how well they predict the position increments, where the position increment consists of latitude, longitude, and height components. The comparison results are shown in Figure 11 and summarized in Table 4.

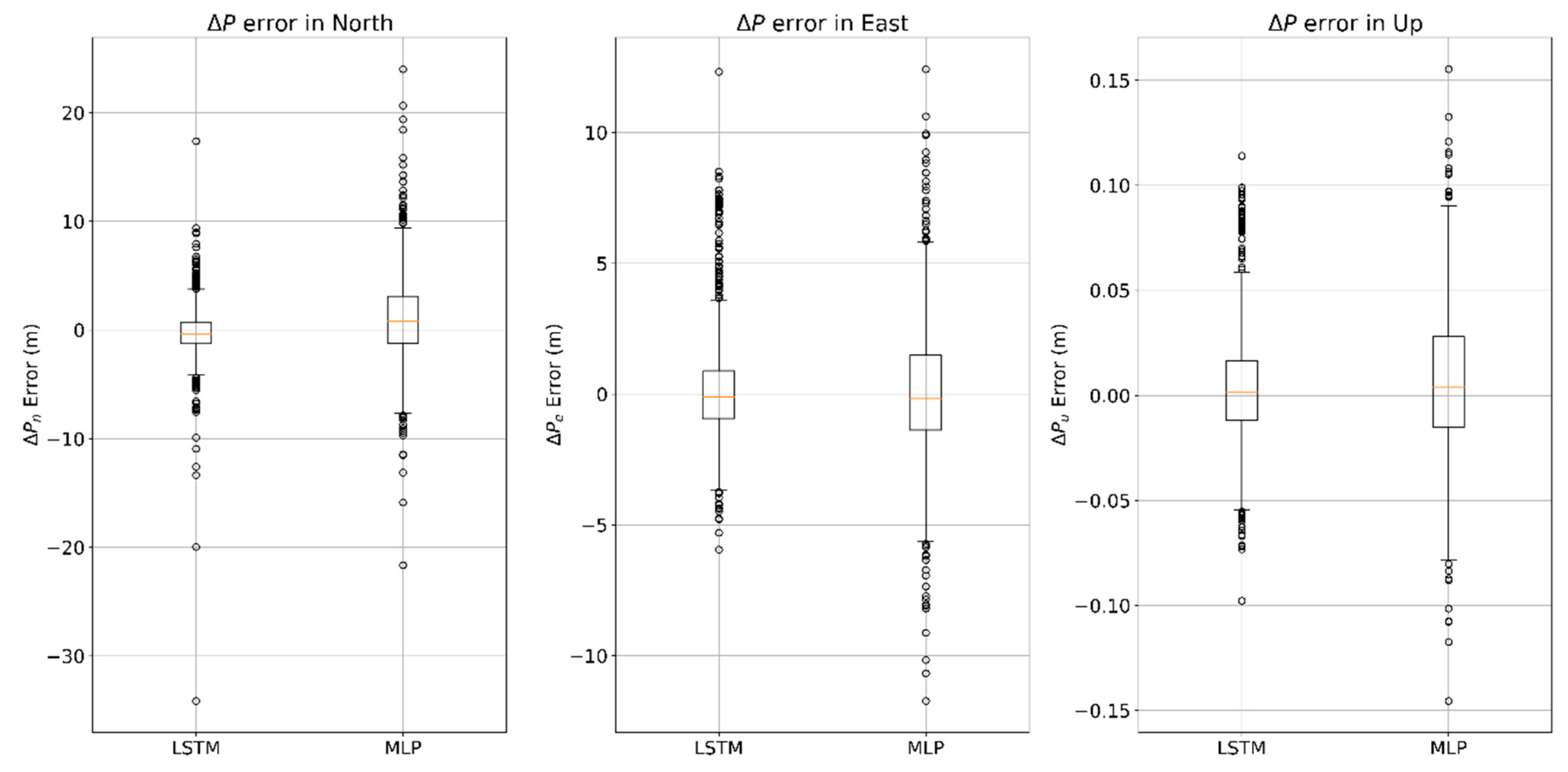

The distributions of the prediction error of the MLP and LSTM algorithms are shown in Figure 11. The mean absolute errors (MAE) of the predicted position increments in the north direction for LSTM and MLP are 1.573 m and 3.053 m, respectively, with standard deviations of 2.506 m and 4.109 m. In the east direction, the MAEs are 1.422 m and 1.965 m, respectively, with standard deviations of 2.138 m and 2.686 m. Table 4 shows that the LSTM algorithm predicts the position increment more reliably and stably than the traditional MLP algorithm.
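The two statistics reported here can be computed per direction as in the following minimal sketch. It assumes the error is defined as prediction minus reference and that the standard deviation is the population form (`ddof=0`); the paper does not state either choice, and the function name is hypothetical.

```python
import numpy as np


def error_stats(predicted, reference):
    """Per-column mean absolute error and standard deviation of the error.

    predicted, reference: arrays of shape (n_epochs, n_directions), in metres.
    """
    err = np.asarray(predicted, dtype=float) - np.asarray(reference, dtype=float)
    return {
        "mae": np.mean(np.abs(err), axis=0),
        "std": np.std(err, axis=0),   # population standard deviation (ddof=0)
    }
```

Reporting both values is useful because the MAE summarizes the typical size of the error, while the standard deviation shows how widely the errors are spread around their mean.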

To compare the performance of the different models during GNSS outages more specifically, three test paths of different lengths (60 s, 120 s, and 180 s) were selected. Because the application is land vehicles, the position and velocity in the north and east directions, as well as the heading, were selected to show how the different algorithms improve the navigation results during GNSS outages.

5.3.1. 60-s Outage Experiment Results

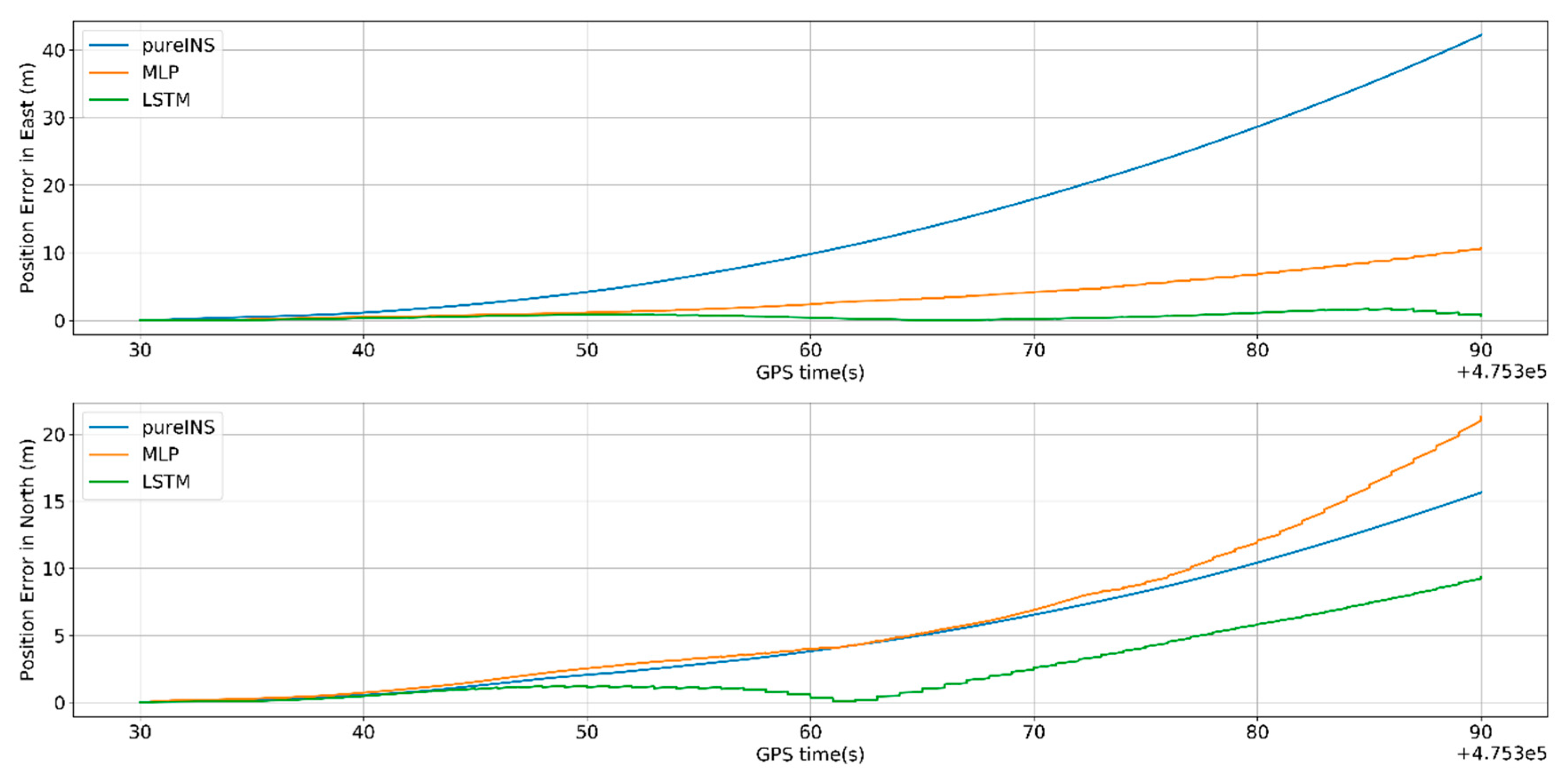

From

Figure 12,

Figure 13 and

Figure 14 show the navigation results among different algorithms during 60 s GNSS outages. Before 475,330 s, where the GNSS signal is still available, the whole system works on loosely coupled mode, while three algorithms show the same results. When the GNSS signal is assumed outages, and the navigation performance varies in three different methods.

In

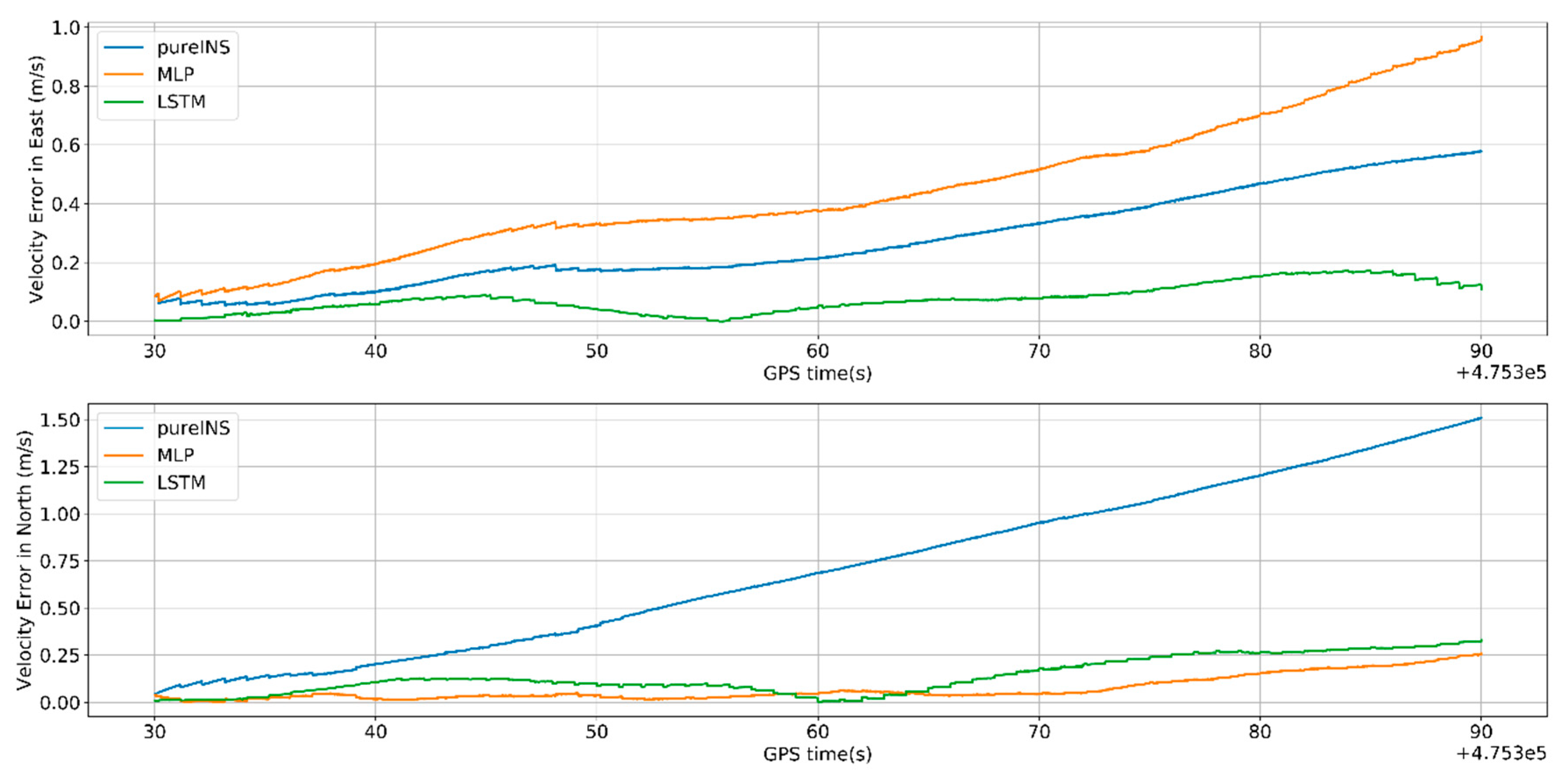

Figure 12 and

Figure 13, the proposed LSTM algorithm obviously outperforms the MLP algorithm and pure INS. The navigation errors of all three methods gradually deteriorates with time during the GNSS signal outages. In

Figure 12, the max position error in east of pure INS, MLP, and LSTM is 42.4, 10.7, and 1.5 m; meanwhile, the max position error in north is 15.6, 21.1, and 9.25 m, respectively. In

Figure 13 the max velocity error in east of pure INS, MLP, and LSTM is 0.58, 0.95, and 0.12 m/s, meanwhile the velocity error in north is 1.5, 0.24, and 0.32 m/s, respectively. In

Figure 14, the heading error among three algorithms is 1.15°, 0.95°, and 0.18°. All the results are summarized in

Table 5. At 475,390 s, the system turns to the loosely coupled mode, and navigation error quickly converges both in three methods.

Figure 15 indicates that LSTM can greatly decrease the navigation error of pure INS. At the end of the GNSS outage, the LSTM algorithm achieves an 80% improvement in position error compared with the pure INS mode.

5.3.2. 120-s Outage Experiment Results

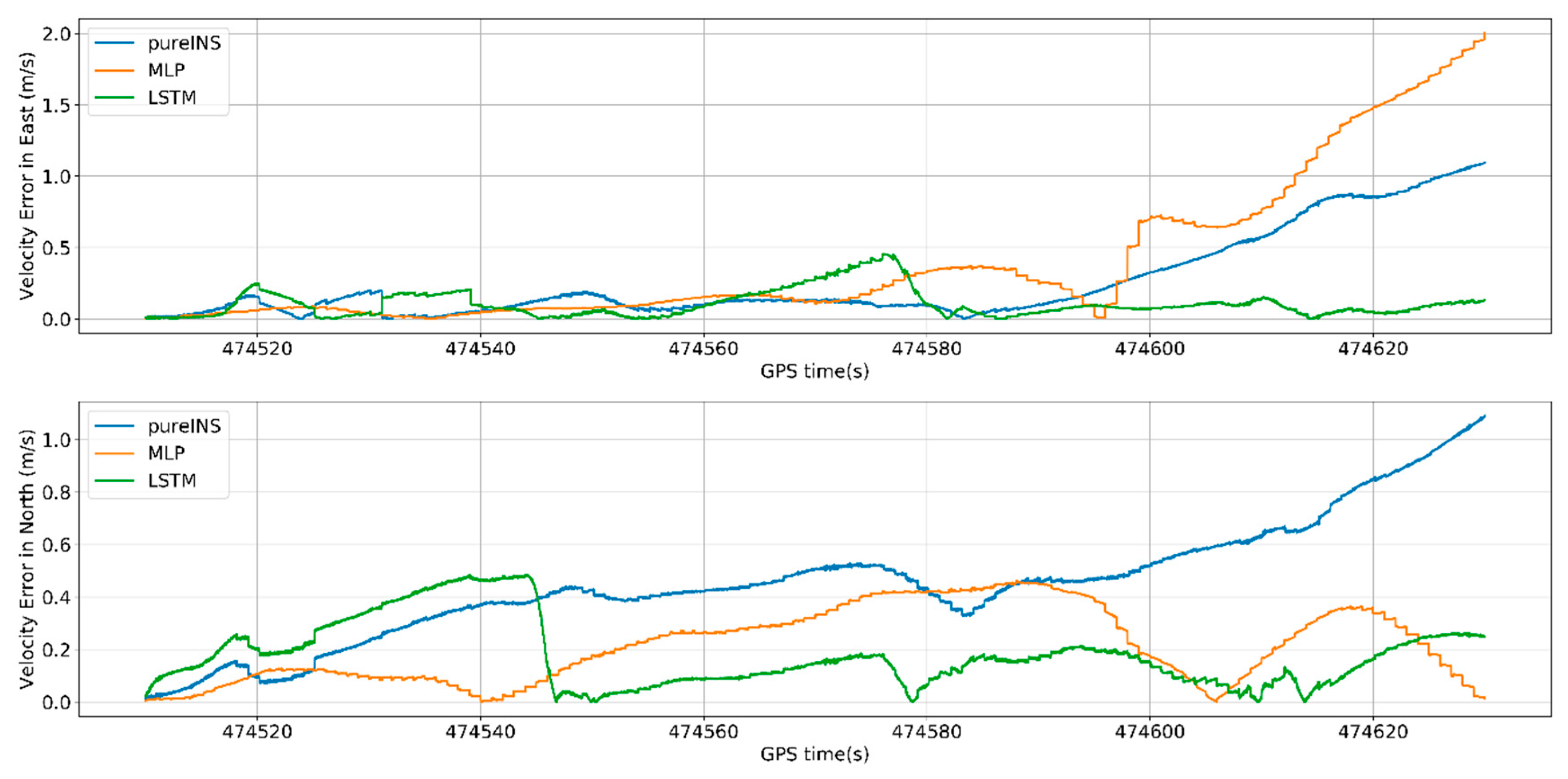

In this section, a longer GNSS outage and a more complicated scene were selected to test the performance of the different algorithms. Figure 16, Figure 17 and Figure 18 show the navigation results of the different algorithms during a 120 s GNSS outage.

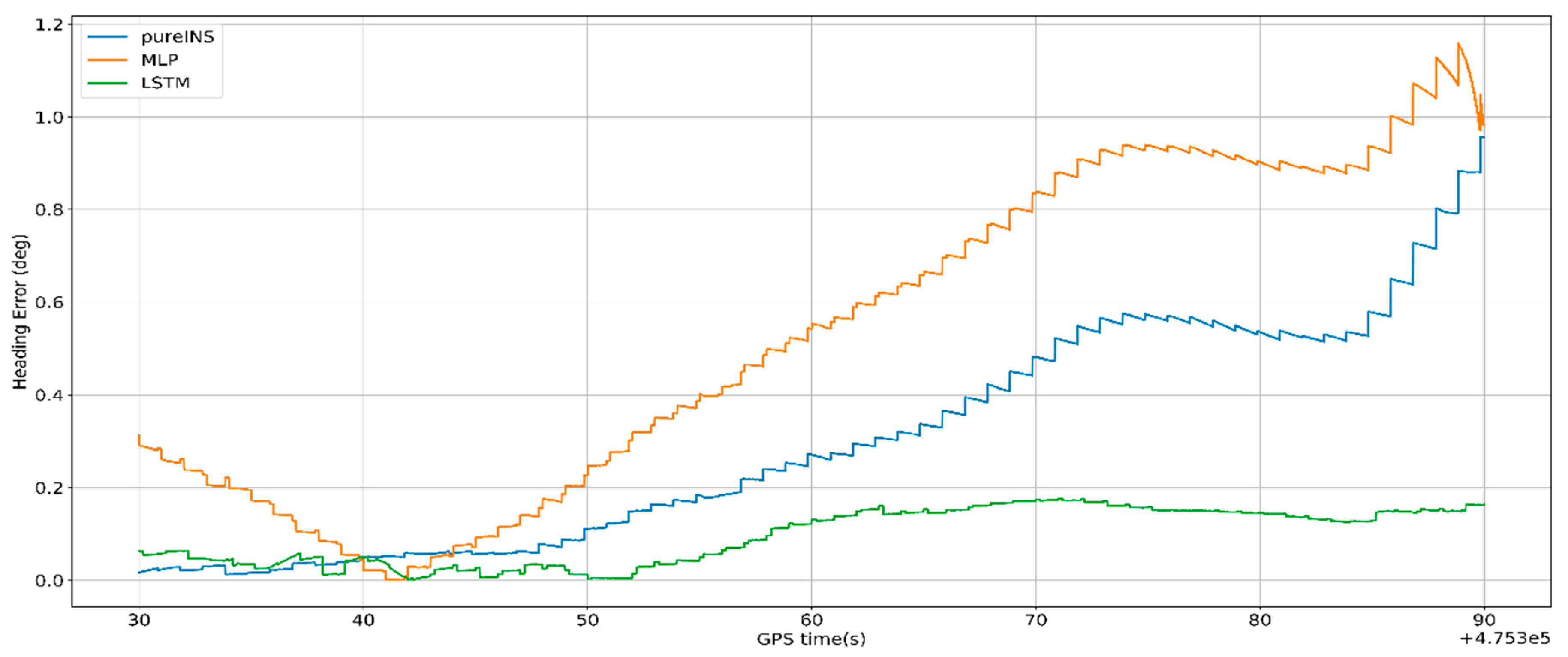

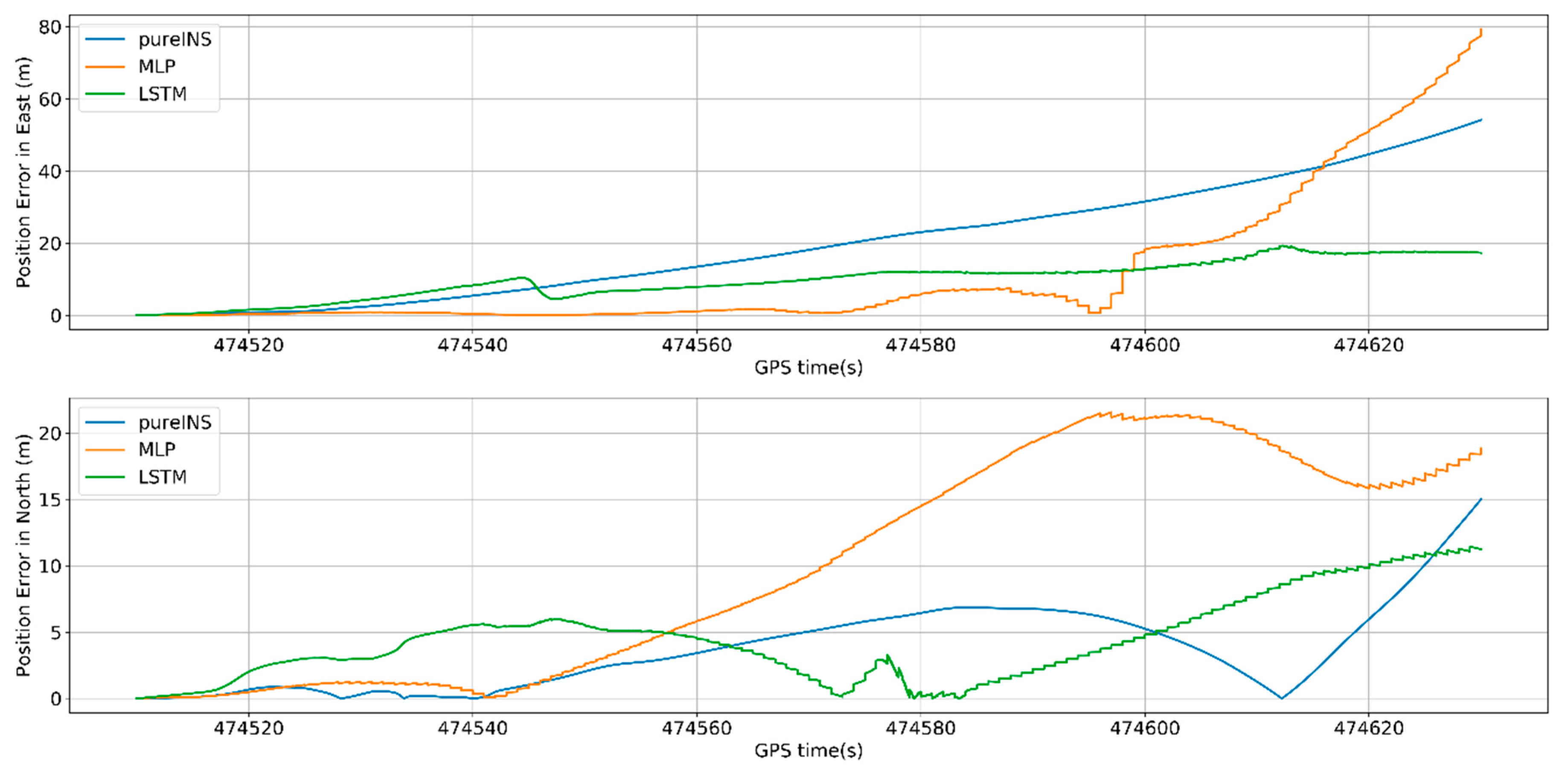

In Figure 16, at 474,630 s, the maximum position errors in the east direction for pure INS, MLP, and LSTM are 54.2, 79.7, and 19.4 m, while the maximum position errors in the north direction are 14.9, 18.6, and 11.3 m. In Figure 17, the maximum velocity errors in the east direction for pure INS, MLP, and LSTM are 1.1, 2.0, and 0.46 m/s, while the maximum velocity errors in the north direction are 1.1, 0.45, and 0.32 m/s. In Figure 18, the heading errors of the three algorithms are 0.63°, 0.62°, and 0.27°. All the results are summarized in Table 6.

Figure 16 and Figure 17 show that LSTM performs worse in the north direction during the first 40 s, but over the last 80 s it performs better than MLP for position and velocity in both directions. Averaged over time, LSTM also performs better than MLP.

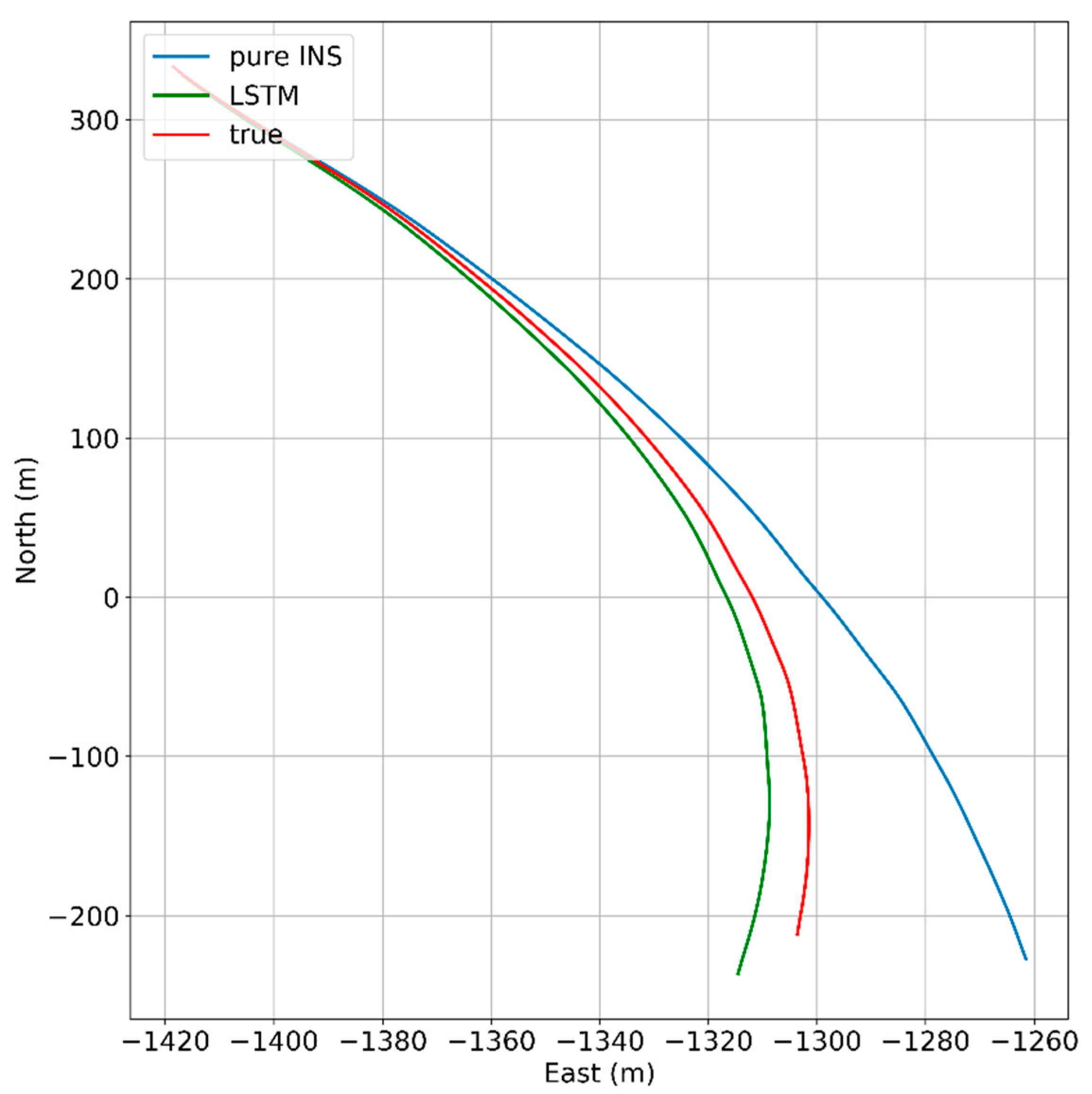

In Figure 19, with a longer GNSS outage and a complicated scene with three benches, the performance of all algorithms is reduced, but LSTM still achieves higher navigation accuracy than pure INS. At the end of the GNSS outage, the LSTM algorithm achieves a 68.5% improvement in position error compared with the pure INS.

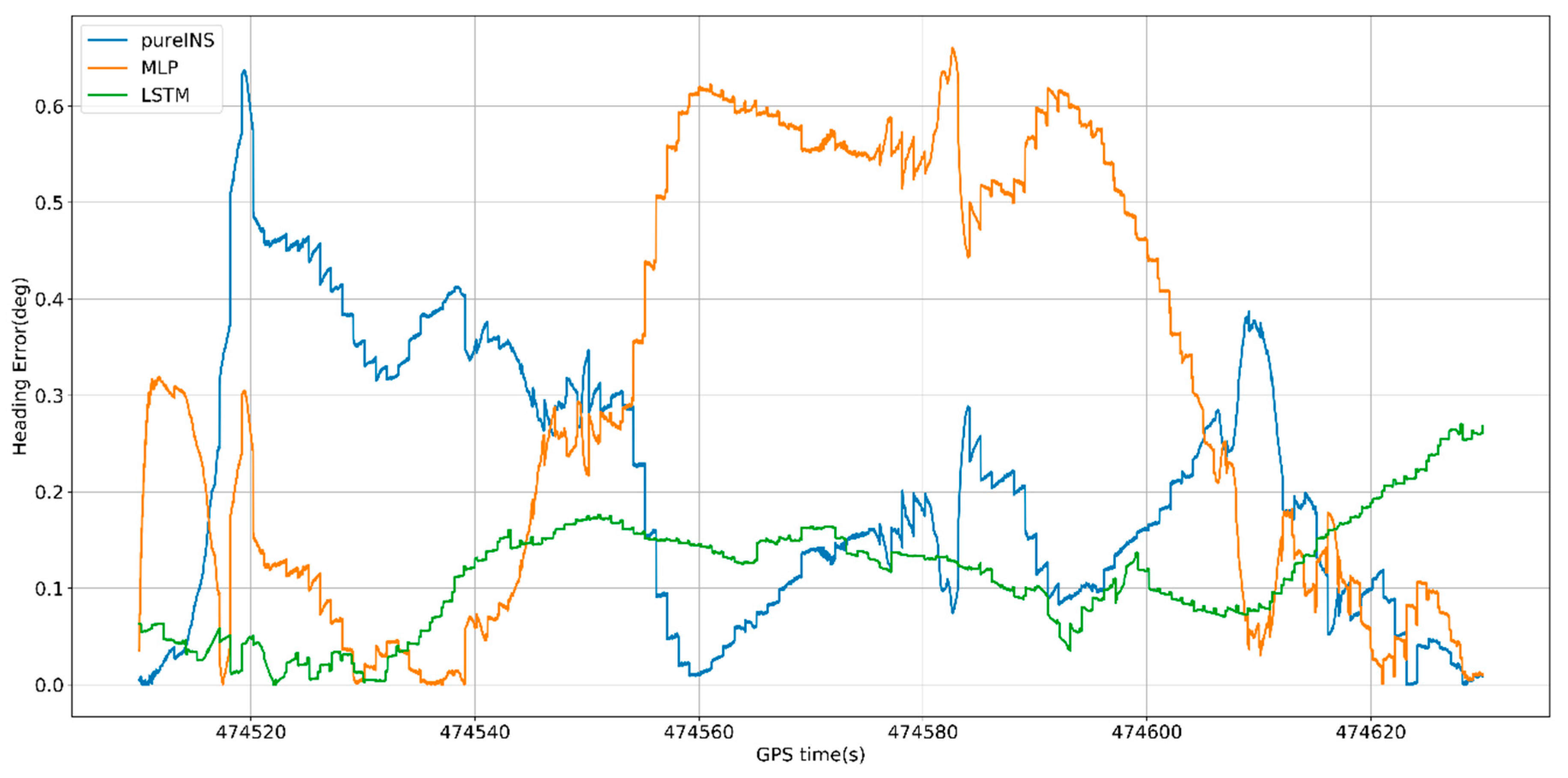

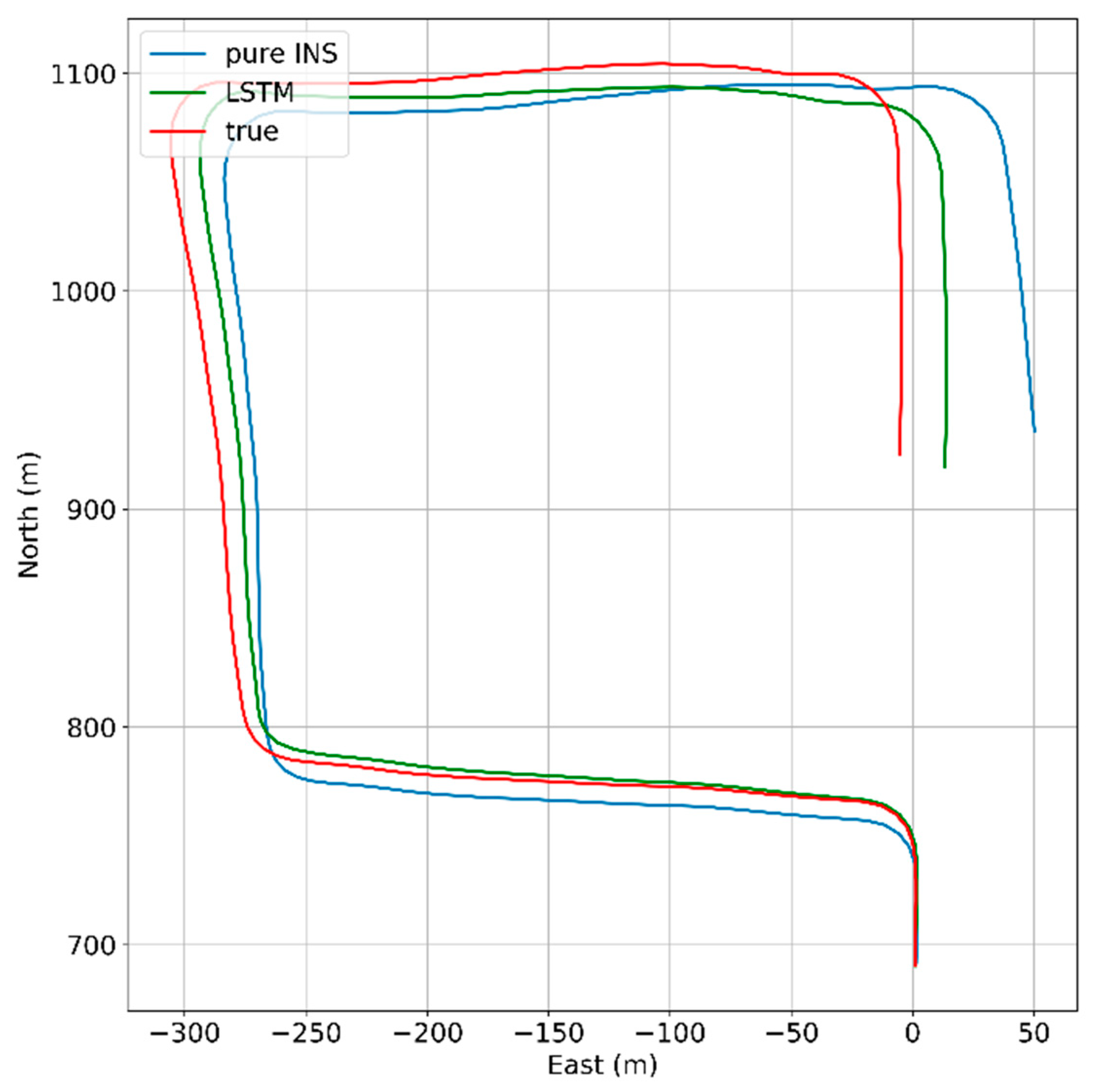

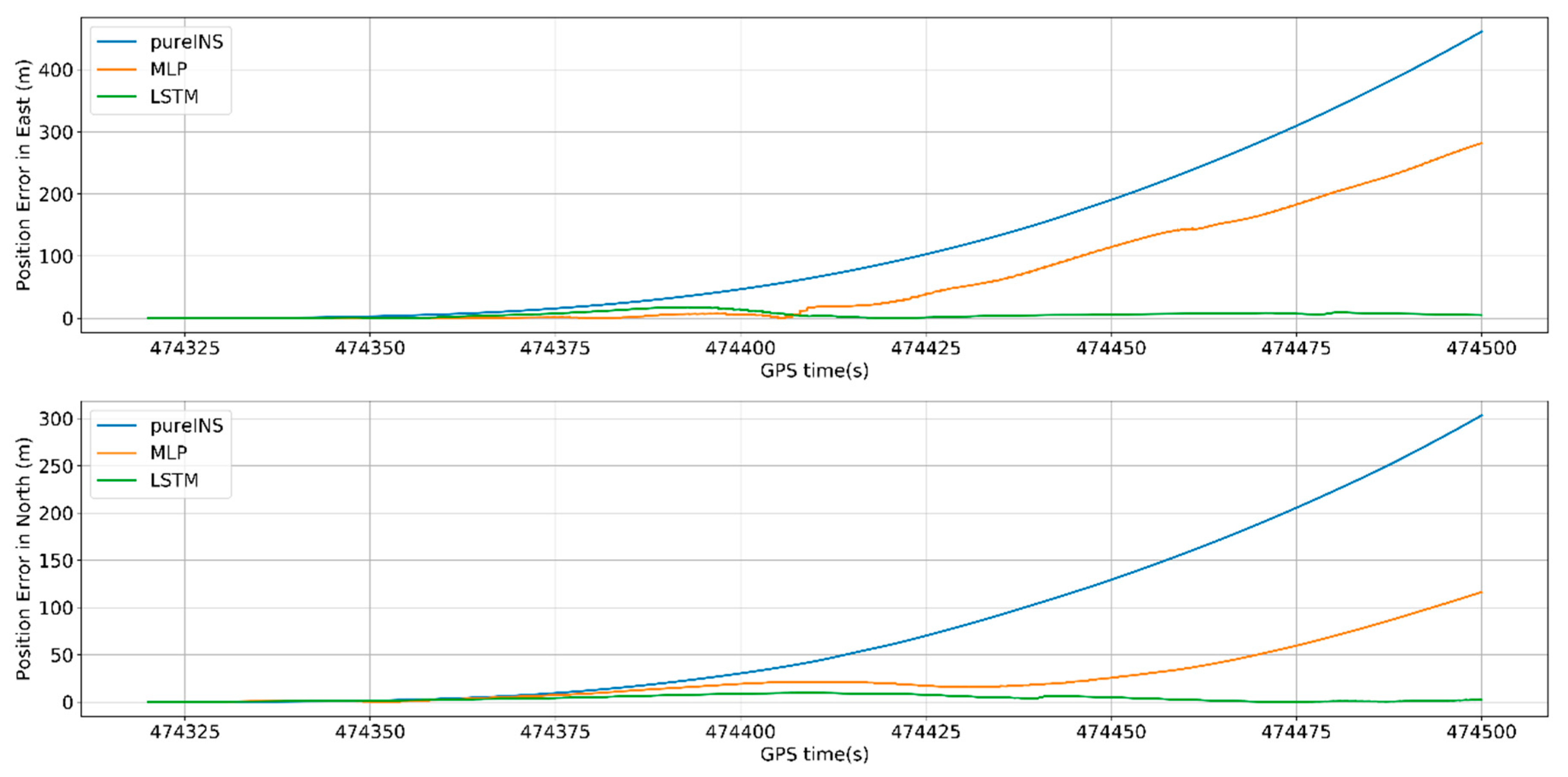

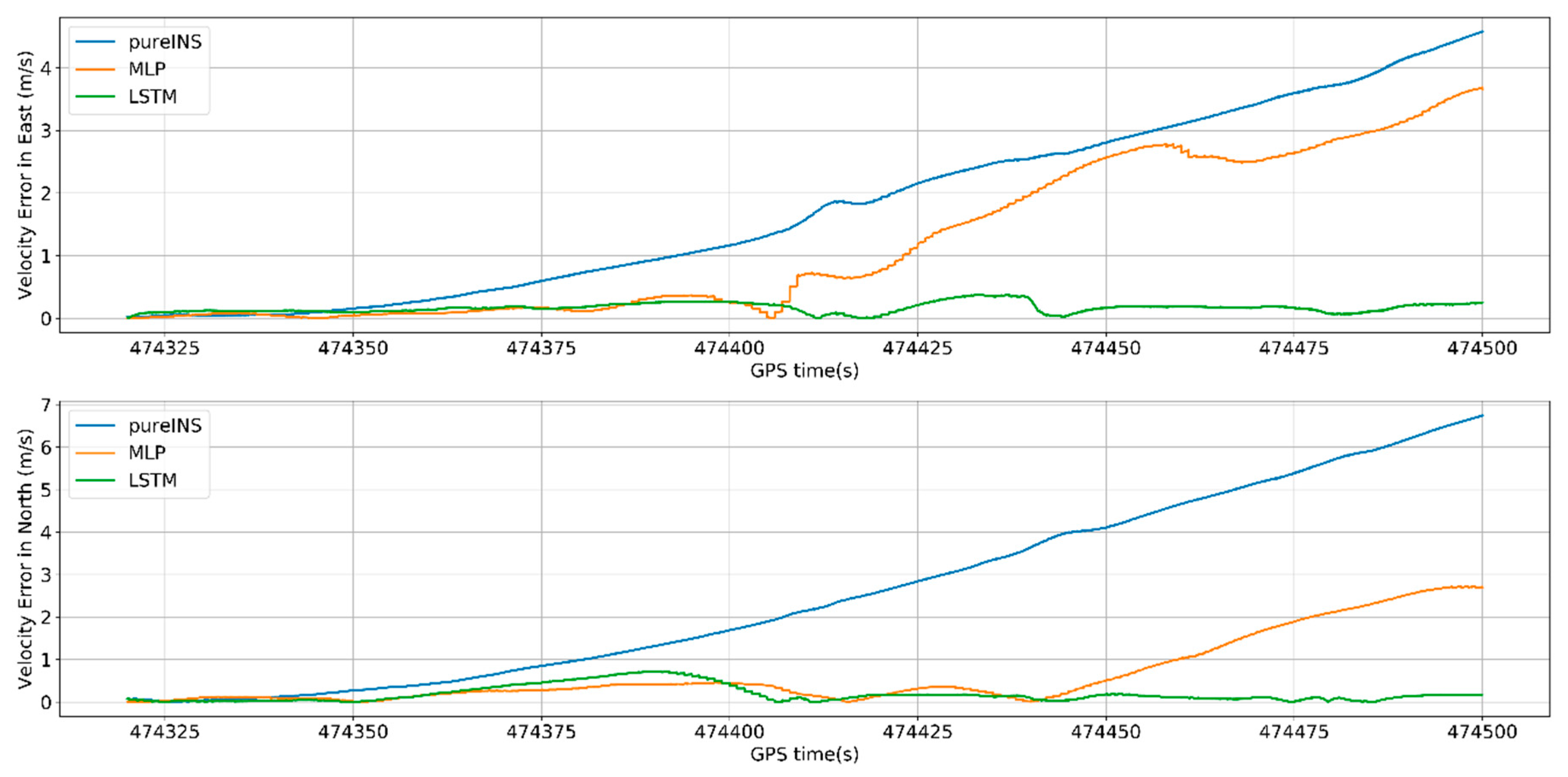

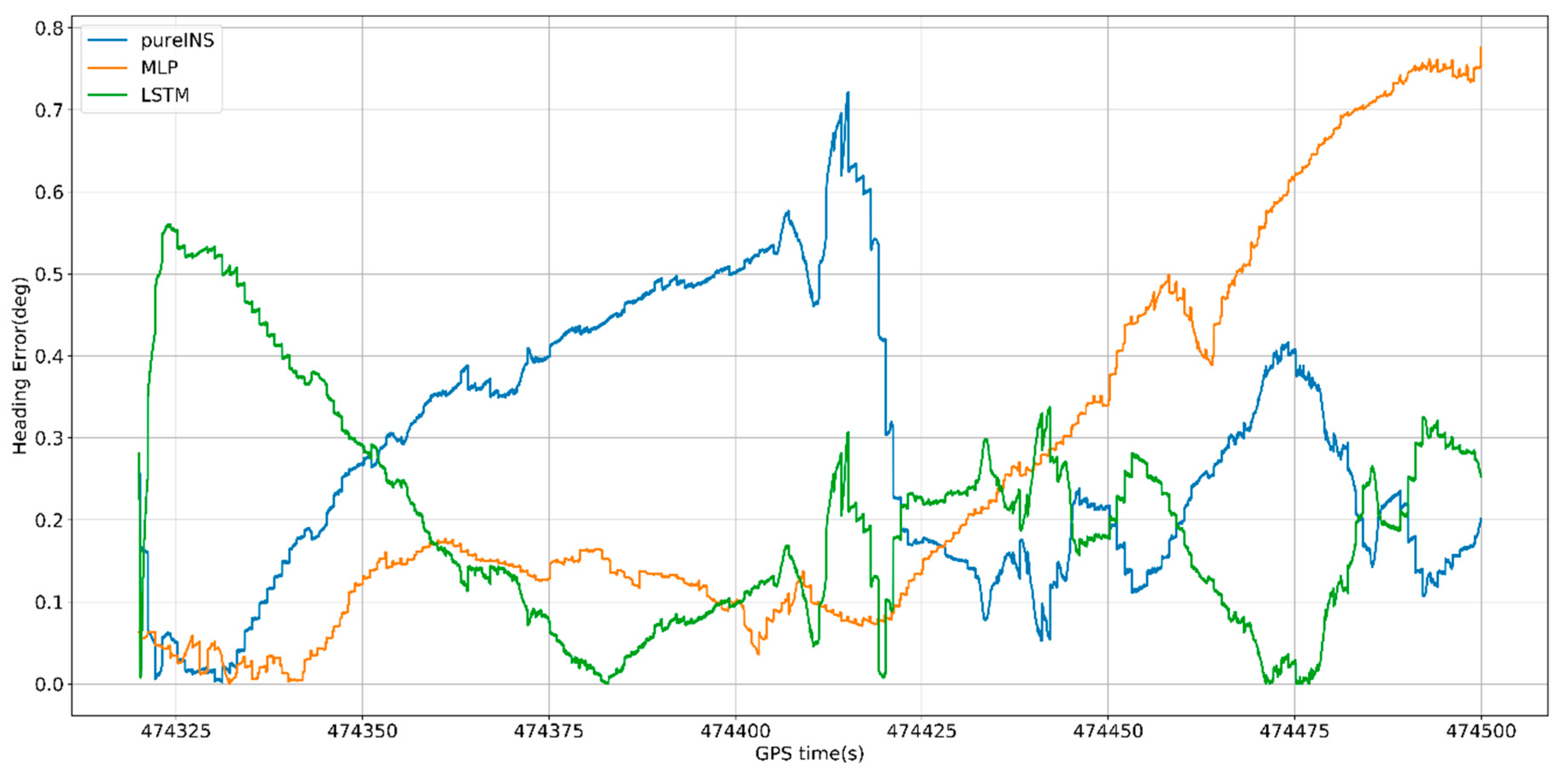

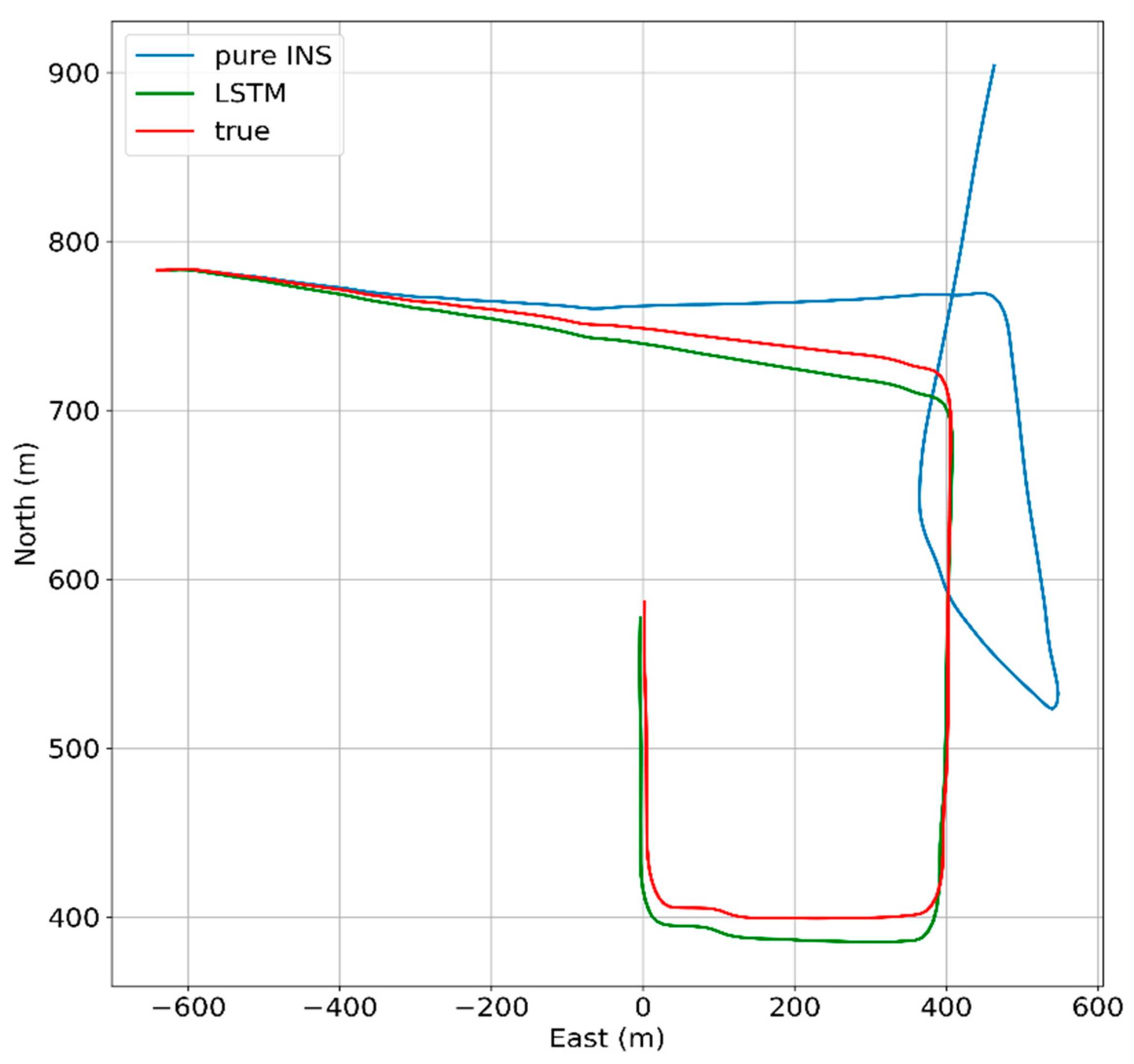

5.3.3. 180-s Outage Experiment Results

In this section, a 180 s GNSS outage was selected to test the performance of the different algorithms. Figure 20, Figure 21 and Figure 22 show the navigation results of the different algorithms during the 180 s GNSS outage.

In Figure 20, the proposed LSTM algorithm is clearly better than the MLP algorithm and pure INS. At 474,500 s, the maximum position errors in the east direction for pure INS, MLP, and LSTM are 460, 280, and 17 m, while the maximum position errors in the north direction are 303, 115, and 10 m. In Figure 21, the maximum velocity errors in the east direction for pure INS, MLP, and LSTM are 4.6, 3.7, and 0.4 m/s, while the maximum velocity errors in the north direction are 6.7, 2.8, and 0.7 m/s. In Figure 22, the heading errors of the three algorithms are 0.7°, 0.75°, and 0.55°. All the results are summarized in Table 7.

In Figure 23, with a 180 s GNSS outage and a complicated scene with three benches, the performance of all algorithms is reduced, but LSTM still reduces the navigation errors. At the end of the GNSS outage, the LSTM algorithm achieves a 95.5% improvement in position error compared with the pure INS.

5.4. Experiment Summary

When the GNSS signal is not available, the whole system turns to prediction mode. The measurements from INS, namely the specific force, velocity, yaw, and angular rate, are sent to the well-trained AI-based model to predict the position increments. Accumulating these position increments gives the pseudo-GNSS position, which substitutes for the true GNSS position; the difference between it and the INS position forms part of the input vector of the Kalman filter. Finally, the Kalman filter corrects the INS errors to obtain the corrected position, attitude, and velocity. The test results indicate that the MLP algorithm, which cannot capture the time dependency between current and past vehicle dynamics, is not a proper model for aiding INS during GNSS signal outages. When the GNSS signal is lost, the LSTM algorithm obtains a more accurate navigation result because of its capability of constructing the relationship between future and past information.
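The way a pseudo-GNSS position enters the filter can be sketched with a standard Kalman measurement update on an error state. This is a generic illustration under assumptions: the error-state vector is taken to start with the three position-error components, the measurement is the INS position minus the pseudo-GNSS position, and the function name and state layout are hypothetical rather than the filter defined elsewhere in the paper.

```python
import numpy as np


def kf_position_update(x, P, p_ins, p_pseudo_gnss, R):
    """One Kalman measurement update driven by a pseudo-GNSS position.

    x: error state of length n (first 3 entries: position error); P: (n, n)
    covariance; p_ins, p_pseudo_gnss: positions of shape (3,); R: (3, 3)
    measurement noise covariance.
    """
    x = np.asarray(x, dtype=float)
    n = x.shape[0]
    H = np.hstack([np.eye(3), np.zeros((3, n - 3))])   # observe position error only
    z = np.asarray(p_ins, dtype=float) - np.asarray(p_pseudo_gnss, dtype=float)
    S = H @ P @ H.T + R                                # innovation covariance
    K = P @ H.T @ np.linalg.inv(S)                     # Kalman gain
    x_new = x + K @ (z - H @ x)
    P_new = (np.eye(n) - K @ H) @ P
    return x_new, P_new
```

The corrected position is then the INS position minus the estimated position error. Since the pseudo measurement is a model prediction rather than a true fix, a larger `R` than for real GNSS measurements may be appropriate.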