Qualifications of Rice Growth Indicators Optimized at Different Growth Stages Using Unmanned Aerial Vehicle Digital Imagery

Abstract

1. Introduction

2. Materials and Methods

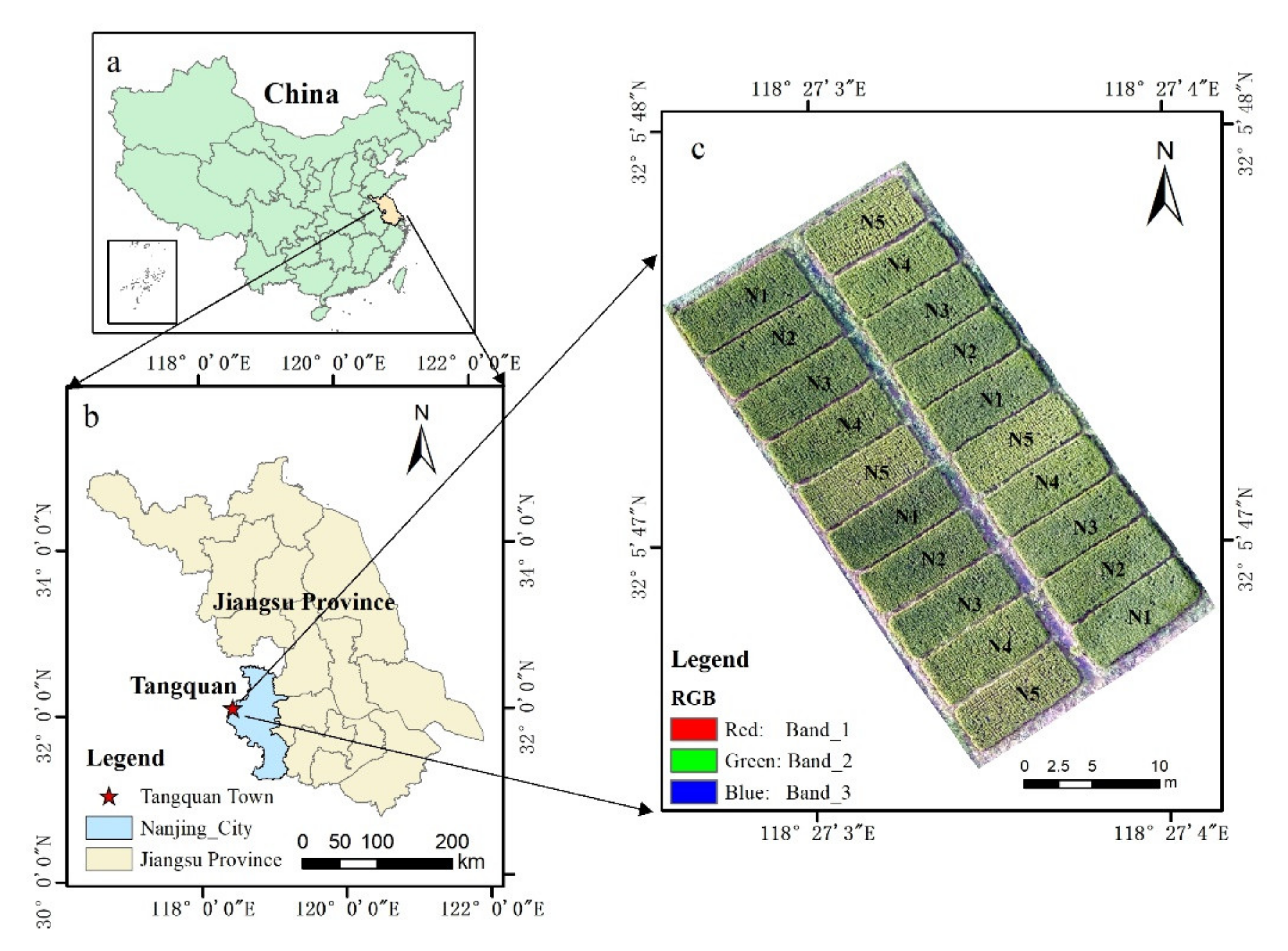

2.1. Study Area

2.2. Field Data Collection

2.3. UAV Data Acquisition

2.4. UAV Image Processing and Index Extraction

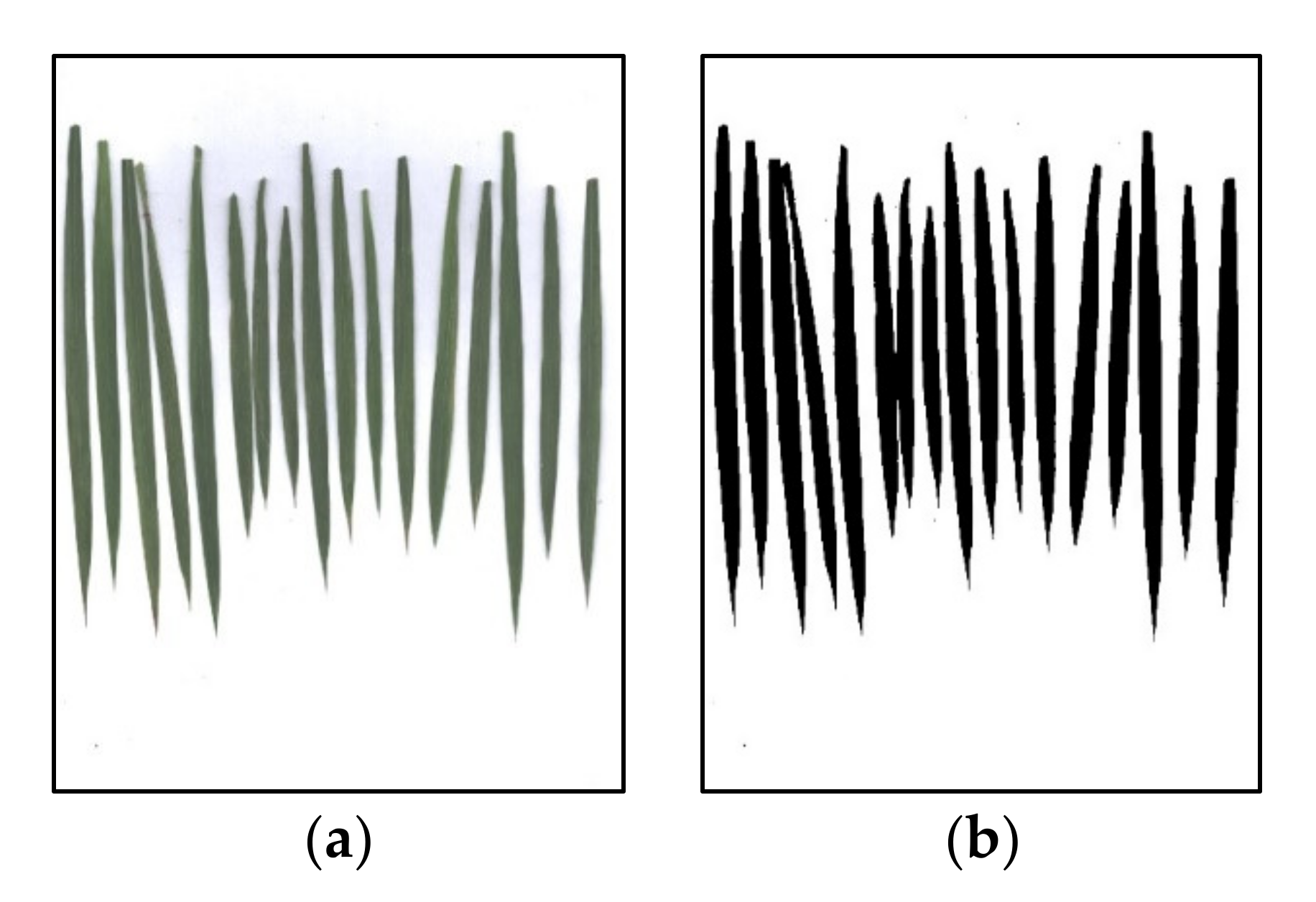

2.5. Image Processing

2.5.1. Optimal Index Method

2.5.2. OS Method

2.5.3. Model Optimization

2.5.4. Method Verification

3. Results

3.1. Correlation between Rice Growth Indicators and UAV-Based VIs at Different Growth Stages

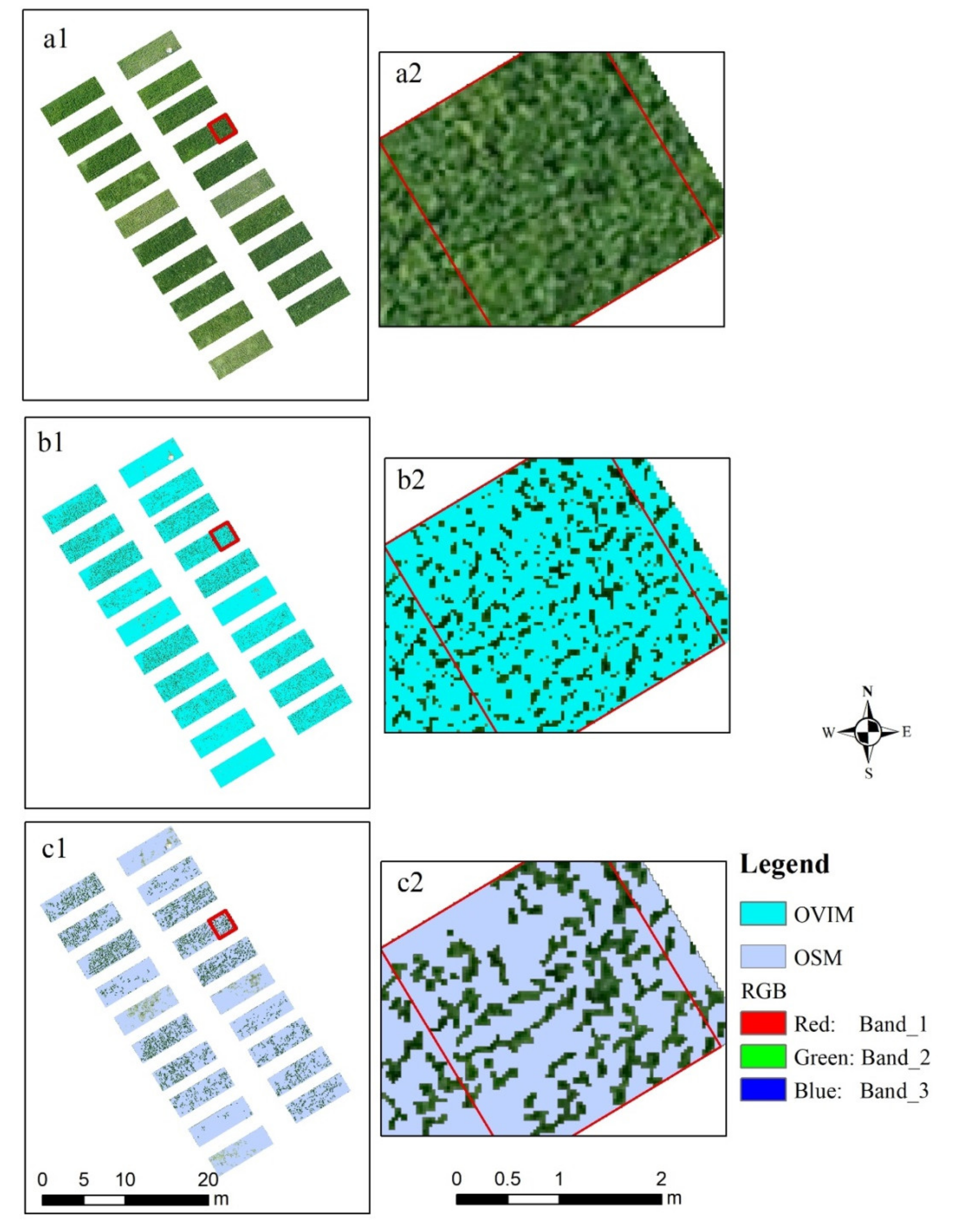

3.2. Image Noncanopy Pixel Removal

3.3. Estimation Model of Key Growth Indicators and OVI Using Different Methods

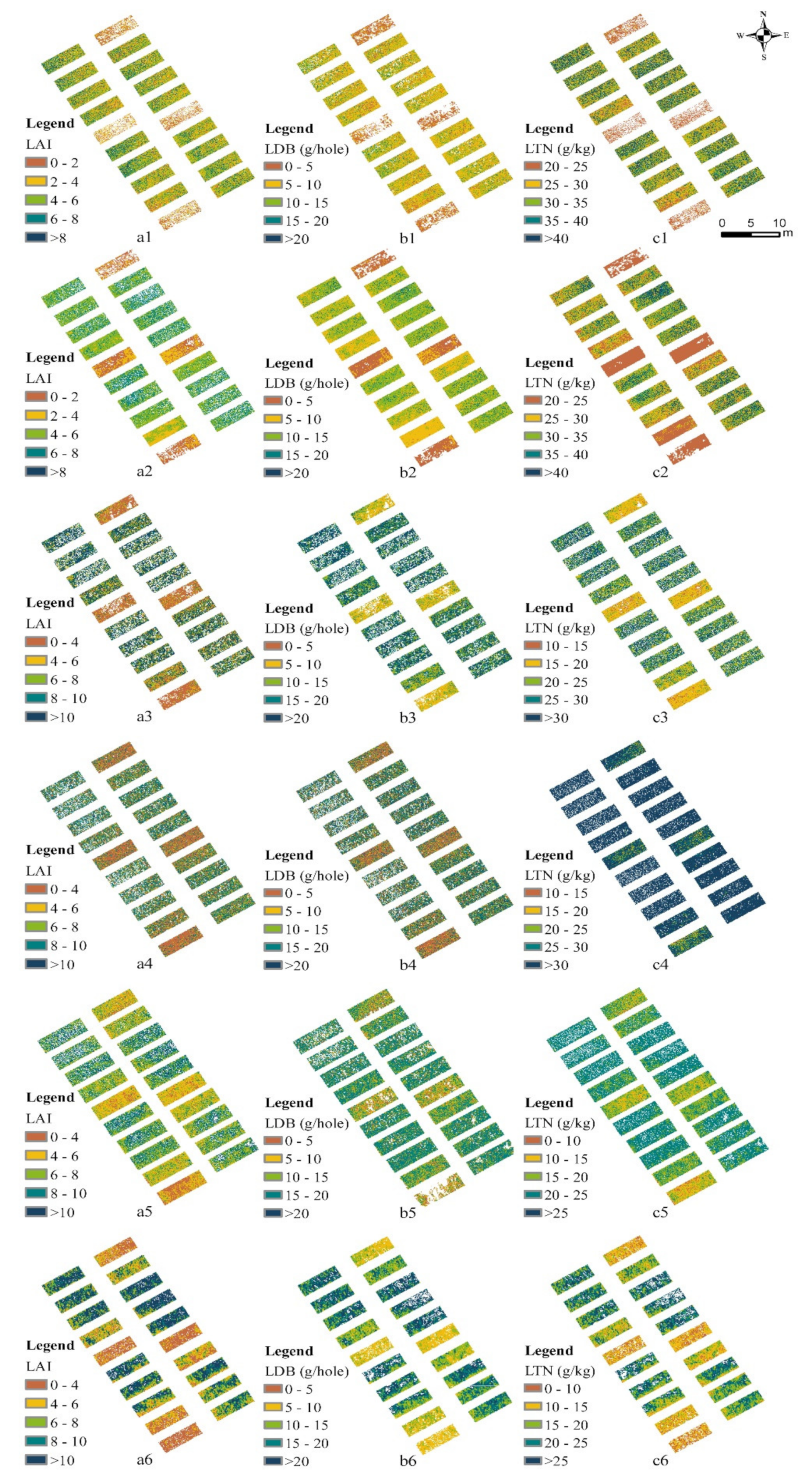

3.4. Estimation Results of Rice Growth Indicators Using a Simple Model Database

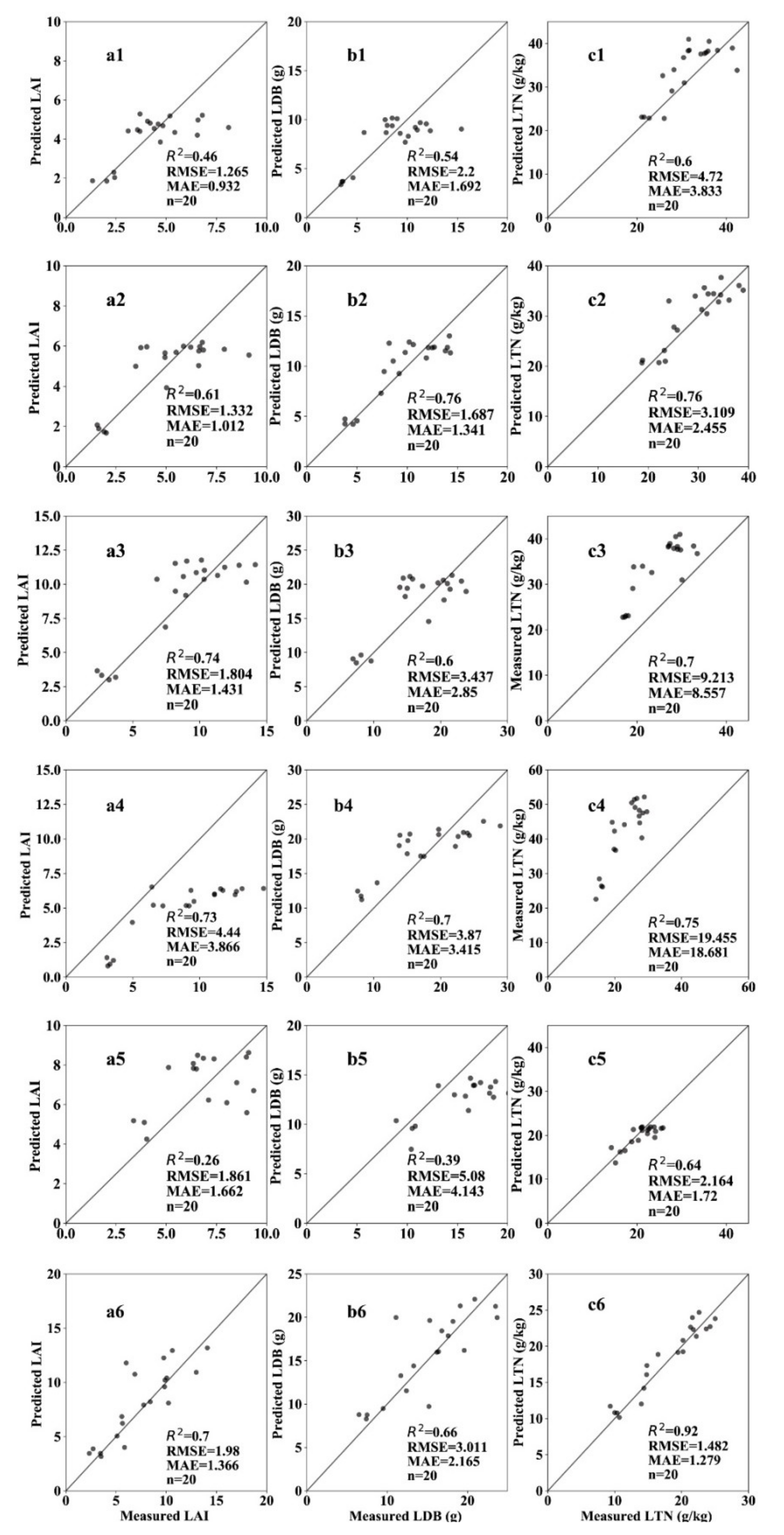

3.5. Validation of Estimation Results of Key Growth Indicators in Different Growth Stages

4. Discussion

4.1. Simple Model Database for Estimating Rice Growth Indicators

4.2. Feasibility of Monitoring Rice Growth Indicators Using UAVs

4.3. Different Methods to Remove Noncanopy Pixels

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Li, P.; Zhang, X.; Wang, W.; Zheng, H.; Yao, X.; Tian, Y.; Zhu, Y.; Cao, W.; Chen, Q.; Cheng, T. Estimating aboveground and organ biomass of plant canopies across the entire season of rice growth with terrestrial laser scanning. Int. J. Appl. Earth Obs. Geoinf. 2020, 91, 102–132.

2. Yang, B.; Wang, M.; Sha, Z.; Wang, B.; Chen, J.; Yao, X.; Cheng, T.; Cao, W.; Zhu, Y. Evaluation of aboveground nitrogen content of winter wheat using digital imagery of unmanned aerial vehicles. Sensors 2019, 19, 4416.

3. Zhang, N.; Wang, M.; Wang, N. Precision agriculture—A worldwide overview. Comput. Electron. Agric. 2002, 36, 113–132.

4. Qiu, Z.; Liu, H.; Zhang, X.; Meng, L.; Xu, M.; Pan, Y.; Bao, Y.; Yu, S. Analysis of spatiotemporal variation of site-specific management zones in a topographic relief area over a period of six years using image segmentation and satellite data. Can. J. Remote Sens. 2019, 45, 746–758.

5. Xu, X.; Teng, C.; Zhao, Y.; Du, Y.; Zhao, C.; Yang, G.; Jin, X.; Song, X.; Gu, X.; Casa, R.; et al. Prediction of wheat grain protein by coupling multisource remote sensing imagery and ECMWF data. Remote Sens. 2020, 12, 1349.

6. Canisius, F.; Fernandes, R. ALOS PALSAR L-band polarimetric SAR data and in situ measurements for leaf area index assessment. Remote Sens. Lett. 2012, 3, 221–229.

7. Gahrouei, O.R.; McNairn, H.; Hosseini, M.; Homayouni, S. Estimation of crop biomass and leaf area index from multitemporal and multispectral imagery using machine learning approaches. Can. J. Remote Sens. 2020, 46, 1712–7971.

8. Li, W.; Niu, Z.; Wang, C.; Huang, W.; Chen, H.; Gao, S.; Li, D.; Muhammad, S. Combined use of airborne LiDAR and satellite GF-1 data to estimate leaf area index, height, and aboveground biomass of maize during peak growing season. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2015, 8, 4489–4501.

9. Tsui, O.W.; Coops, N.C.; Wulder, M.A.; Marshall, P.L.; McCardle, A. Using multi-frequency radar and discrete-return LiDAR measurements to estimate above-ground biomass and biomass components in a coastal temperate forest. ISPRS J. Photogramm. Remote Sens. 2012, 69, 121–133.

10. Zhu, X.; Liu, D. Improving forest aboveground biomass estimation using seasonal Landsat NDVI time-series. ISPRS J. Photogramm. Remote Sens. 2015, 102, 222–231.

11. Battude, M.; Al Bitar, A.; Morin, D.; Cros, J.; Huc, M.; Marais Sicre, C.; Le Dantec, V.; Demarez, V. Estimating maize biomass and yield over large areas using high spatial and temporal resolution Sentinel-2 like remote sensing data. Remote Sens. Environ. 2016, 184, 668–681.

12. Duan, T.; Chapman, S.C.; Guo, Y.; Zheng, B. Dynamic monitoring of NDVI in wheat agronomy and breeding trials using an unmanned aerial vehicle. Field Crop. Res. 2017, 210, 71–80.

13. Li, S.; Ding, X.; Kuang, Q.; Ata-Ui-Karim, S.T.; Cheng, T.; Liu, X.; Tian, Y.; Zhu, Y.; Cao, W.; Cao, Q. Potential of UAV-based active sensing for monitoring rice leaf nitrogen status. Front. Plant Sci. 2018, 9, 1834.

14. Lu, N.; Wang, W.; Zhang, Q.; Li, D.; Yao, X.; Tian, Y.; Zhu, Y.; Cao, W.; Baret, F.; Liu, S.; et al. Estimation of nitrogen nutrition status in winter wheat from unmanned aerial vehicle based multi-angular multispectral imagery. Front. Plant Sci. 2019, 10, 1601.

15. Zheng, H.; Cheng, T.; Li, D.; Yao, X.; Tian, Y.; Cao, W.; Zhu, Y. Combining unmanned aerial vehicle (UAV)-based multispectral imagery and ground-based hyperspectral data for plant nitrogen concentration estimation in rice. Front. Plant Sci. 2018, 9, 936.

16. Zheng, H.; Li, W.; Jiang, J.; Liu, Y.; Cheng, T.; Tian, Y.; Zhu, Y.; Cao, W.; Zhang, Y.; Yao, X. A comparative assessment of different modeling algorithms for estimating leaf nitrogen content in winter wheat using multispectral images from an unmanned aerial vehicle. Remote Sens. 2018, 10, 2026.

17. Herrmann, I.; Bdolach, E.; Montekyo, Y.; Rachmilevitch, S.; Townsend, P.A.; Karnieli, A. Assessment of maize yield and phenology by drone-mounted superspectral camera. Precis. Agric. 2020, 21, 51–76.

18. Zhou, X.; Zheng, H.B.; Xu, X.Q.; He, J.Y.; Ge, X.K.; Yao, X.; Cheng, T.; Zhu, Y.; Cao, W.X.; Tian, Y.C. Predicting grain yield in rice using multi-temporal vegetation indices from UAV-based multispectral and digital imagery. ISPRS J. Photogramm. Remote Sens. 2017, 130, 246–255.

19. Zheng, H.; Cheng, T.; Zhou, M.; Li, D.; Yao, X.; Tian, Y.; Cao, W.; Zhu, Y. Improved estimation of rice aboveground biomass combining textural and spectral analysis of UAV imagery. Precis. Agric. 2018, 20, 611–629.

20. Lu, N.; Zhou, J.; Han, Z.; Li, D.; Cao, Q.; Yao, X.; Tian, Y.; Zhu, Y.; Cao, W.; Cheng, T. Improved estimation of aboveground biomass in wheat from RGB imagery and point cloud data acquired with a low-cost unmanned aerial vehicle system. Plant Methods 2019, 15, 17.

21. Tilly, N.; Aasen, H.; Bareth, G. Fusion of plant height and vegetation indices for the estimation of barley biomass. Remote Sens. 2015, 7, 11449–11480.

22. Li, W.; Niu, Z.; Chen, H.; Li, D.; Wu, M.; Zhao, W. Remote estimation of canopy height and aboveground biomass of maize using high-resolution stereo images from a low-cost unmanned aerial vehicle system. Ecol. Indic. 2016, 67, 637–648.

23. Han, L.; Yang, G.; Dai, H.; Yang, H.; Xu, B.; Feng, H.; Li, Z.H.; Yang, X. Fuzzy clustering of maize plant-height patterns using time series of UAV remote-sensing images and variety traits. Front. Plant Sci. 2019, 10, 926.

24. Han, L.; Yang, G.; Dai, H.; Xu, B.; Yang, H.; Feng, H.; Li, Z.H.; Yang, X.D. Modeling maize above-ground biomass based on machine learning approaches using UAV remote-sensing data. Plant Methods 2019, 15, 10.

25. Liang, W.; Cen, H.; Zhu, J.; Zhang, J.; Zhu, Y.; Sun, D.; Du, X.; Zhai, L.; Weng, H.; Li, Y.; et al. Grain yield prediction of rice using multi-temporal UAV-based RGB and multispectral images and model transfer: a case study of small farmlands in the south of China. Agric. For. Meteorol. 2020, 291, 108096.

26. Wang, W.; Yao, X.; Yao, X.F.; Tian, Y.C.; Liu, X.J.; Ni, J.; Cao, W.X.; Zhu, Y. Estimating leaf nitrogen concentration with three-band vegetation indices in rice and wheat. Field Crop. Res. 2012, 129, 90–98.

27. Zha, H.; Miao, Y.; Wang, T.; Li, Y.; Zhang, J.; Sun, W.; Feng, Z.; Kusnierek, K. Improving unmanned aerial vehicle remote sensing-based rice nitrogen nutrition index prediction with machine learning. Remote Sens. 2020, 12, 215.

28. Li, B.; Xu, X.; Zhang, L.; Han, J.; Bian, C.; Li, G.; Liu, J.; Jin, L. Above-ground biomass estimation and yield prediction in potato by using UAV-based RGB and hyperspectral imaging. ISPRS J. Photogramm. Remote Sens. 2020, 162, 161–172.

29. Zheng, H.; Cheng, T.; Li, D.; Zhou, X.; Yao, X.; Tian, Y.; Cao, W.; Zhu, Y. Evaluation of RGB, color-infrared and multispectral images acquired from unmanned aerial systems for the estimation of nitrogen accumulation in rice. Remote Sens. 2018, 10, 824.

30. Wang, Y.; Wang, D.; Zhang, G.; Wang, J. Estimating nitrogen status of rice using the image segmentation of G-R thresholding method. Field Crop. Res. 2013, 149, 33–39.

31. Mohan, M.; Silva, C.; Klauberg, C.; Jat, P.; Catts, G.; Cardil, A.; Hudak, A.; Dia, M. Individual tree detection from unmanned aerial vehicle (UAV) derived canopy height model in an open canopy mixed conifer forest. Forests 2017, 8, 340.

32. Zhou, C.; Ye, H.; Xu, Z.; Hu, J.; Shi, X.; Hua, S.; Yue, J.; Yang, G. Estimating maize-leaf coverage in field conditions by applying a machine learning algorithm to UAV remote sensing images. Appl. Sci. 2019, 9, 2389.

33. Pekkarinen, A. A method for the segmentation of very high spatial resolution images of forested landscapes. Int. J. Remote Sens. 2002, 23, 2817–2836.

34. Schiewe, J. Integration of multi-sensor data for landscape modeling using a region-based approach. ISPRS J. Photogramm. Remote Sens. 2003, 57, 371–379.

35. Stow, D.; Lopez, A.; Lippitt, C.; Hinton, S.; Weeks, J. Object-based classification of residential land use within Accra, Ghana based on QuickBird satellite data. Int. J. Remote Sens. 2007, 28, 5167–5173.

36. Gamanya, R.; De Maeyer, P.; De Dapper, M. Object-oriented change detection for the city of Harare, Zimbabwe. Expert Syst. Appl. 2009, 36, 571–588.

37. Liu, H.J.; Whiting, M.L.; Ustin, S.L.; Zarco-Tejada, P.J.; Huffman, T.; Zhang, X.L. Maximizing the relationship of yield to site-specific management zones with object-oriented segmentation of hyperspectral images. Precis. Agric. 2018, 19, 348–364.

38. Martha, T.R.; Kerle, N.; Jetten, V.; van Westen, C.J.; Kumar, K.V. Characterizing spectral, spatial and morphometric properties of landslides for semi-automatic detection using object-oriented methods. Geomorphology 2010, 116, 24–36.

39. Tong, Q.; Shan, J.; Zhu, B.; Ge, X.; Sun, X.; Liu, Z. Object-oriented coastline classification and extraction from remote sensing imagery. In Remote Sensing of the Environment: 18th National Symposium on Remote Sensing of China, Wuhan, China, 20–23 October 2012; International Society for Optics and Photonics: Bellingham, WA, USA, 2014.

40. Louhaichi, M.; Borman, M.M.; Johnson, D.E. Spatially located platform and aerial photography for documentation of grazing impacts on wheat. Geocarto Int. 2008, 16, 65–70.

41. Tucker, C.J. Red and photographic infrared linear combinations for monitoring vegetation. Remote Sens. Environ. 1979, 8, 127–150.

42. Bendig, J.; Yu, K.; Aasen, H.; Bolten, A.; Bennertz, S.; Broscheit, J.; Gnyp, M.L.; Bareth, G. Combining UAV-based plant height from crop surface models, visible, and near infrared vegetation indices for biomass monitoring in barley. Int. J. Appl. Earth Obs. Geoinf. 2015, 39, 79–87.

43. Meyer, G.E.; Neto, J.C. Verification of color vegetation indices for automated crop imaging applications. Comput. Electron. Agric. 2008, 63, 282–293.

44. Verrelst, J.; Schaepman, M.E.; Koetz, B.; Kneubühler, M. Angular sensitivity analysis of vegetation indices derived from CHRIS/PROBA data. Remote Sens. Environ. 2008, 112, 2341–2353.

45. Bo, L.; Jian, C. Segmentation algorithm of high resolution remote sensing images based on LBP and statistical region merging. In Proceedings of the 2012 International Conference on Audio, Language, and Image Processing, Shanghai, China, 16–18 July 2012; Institute of Electrical and Electronics Engineers: Piscataway, NJ, USA, 2012.

46. Benz, U.C.; Hofmann, P.; Willhauck, G.; Lingenfelder, I.; Heynen, M. Multi-resolution, object-oriented fuzzy analysis of remote sensing data for GIS-ready information. ISPRS J. Photogramm. Remote Sens. 2004, 58, 239–258.

47. Frohn, R.C.; Autrey, B.C.; Lane, C.R.; Reif, M. Segmentation and object-oriented classification of wetlands in a karst Florida landscape using multi-season Landsat-7 ETM+ imagery. Int. J. Remote Sens. 2011, 32, 1471–1489.

48. Woebbecke, D.M.; Meyer, G.E.; Bargen, K.V.; Mortensen, D.A. Color indices for weed identification under various soil, residue, and lighting conditions. Trans. ASAE 1995, 38, 259–269.

49. Gamanya, R.; Maeyer, P.D.; Dapper, M.D. An automated satellite image classification design using object-oriented segmentation algorithms: A move towards standardization. Expert Syst. Appl. 2007, 32, 616–624.

50. Dhawan, A.P. Image segmentation and feature extraction. In Principles and Advanced Methods in Medical Imaging and Image Analysis; World Scientific Publishing Company: Singapore, 2015.

51. Wang, Y.; Qi, Q.; Jiang, L.; Liu, Y. Hybrid remote sensing image segmentation considering intersegment homogeneity and intersegment heterogeneity. IEEE Geosci. Remote Sens. Lett. 2019, 17, 1–5.

| Fertilization Treatment | Compound Fertilizer (kg) | Urea (kg) | Controlled-Release Urea (kg) | Proportion of Controlled-Release N |
|---|---|---|---|---|
| N1 | 0 | 0 | 0 | 0 |
| N2 | 3 | 0.4 | 0 | 0 |
| N3 | 3 | 0.42 | 0.68 | 30% |
| N4 | 3 | 0.21 | 0.9 | 40% |
| N5 | 3 | 0 | 1.12 | 50% |

| Indicator | Date | Mean | Median | Standard Deviation | Variance | Kurtosis | Skewness | Min | Max |
|---|---|---|---|---|---|---|---|---|---|
| LAI | 14 July 2019 | 4.38 | 4.20 | 1.78 | 3.18 | −0.32 | 0.31 | 1.33 | 8.10 |
| LAI | 26 July 2019 | 5.07 | 5.25 | 2.16 | 4.68 | −0.70 | −0.22 | 1.56 | 9.12 |
| LAI | 12 August 2019 | 8.68 | 8.99 | 3.51 | 12.29 | −0.50 | −0.47 | 2.34 | 14.15 |
| LAI | 27 August 2019 | 8.70 | 9.27 | 3.72 | 13.86 | −1.19 | −0.24 | 3.06 | 14.77 |
| LAI | 8 September 2019 | 7.36 | 7.24 | 2.28 | 5.18 | −0.85 | −0.23 | 3.37 | 10.76 |
| LAI | 27 September 2019 | 7.53 | 7.31 | 3.36 | 11.28 | −0.76 | 0.19 | 2.33 | 14.09 |
| LDB (g hole⁻¹) | 14 July 2019 | 8.62 | 8.75 | 3.22 | 10.37 | −0.23 | −0.09 | 3.40 | 15.40 |
| LDB (g hole⁻¹) | 26 July 2019 | 9.72 | 10.00 | 3.52 | 12.42 | −1.04 | −0.37 | 3.80 | 14.30 |
| LDB (g hole⁻¹) | 12 August 2019 | 16.41 | 16.55 | 5.25 | 27.57 | −0.76 | −0.51 | 6.90 | 23.80 |
| LDB (g hole⁻¹) | 27 August 2019 | 17.67 | 17.25 | 6.30 | 39.73 | −0.92 | −0.05 | 7.60 | 28.90 |
| LDB (g hole⁻¹) | 8 September 2019 | 16.47 | 16.65 | 4.16 | 17.35 | −0.42 | −0.16 | 8.90 | 24.10 |
| LDB (g hole⁻¹) | 27 September 2019 | 15.10 | 15.75 | 5.11 | 26.14 | −0.77 | −0.09 | 6.50 | 23.70 |
| LTN (g kg⁻¹) | 14 July 2019 | 31.40 | 31.53 | 6.11 | 37.37 | −0.61 | −0.03 | 21.07 | 42.44 |
| LTN (g kg⁻¹) | 26 July 2019 | 29.28 | 30.93 | 6.17 | 38.06 | −1.08 | −0.23 | 18.77 | 38.93 |
| LTN (g kg⁻¹) | 12 August 2019 | 25.24 | 27.27 | 5.56 | 30.94 | −1.42 | −0.33 | 16.83 | 33.50 |
| LTN (g kg⁻¹) | 27 August 2019 | 23.26 | 25.47 | 5.08 | 25.81 | −1.31 | −0.48 | 14.39 | 29.62 |
| LTN (g kg⁻¹) | 8 September 2019 | 20.96 | 21.17 | 3.28 | 10.78 | −0.37 | −0.59 | 14.25 | 25.80 |
| LTN (g kg⁻¹) | 27 September 2019 | 17.86 | 19.83 | 5.19 | 26.96 | −1.31 | −0.36 | 9.34 | 25.01 |

| Date of UAV Flights and Sampling | Growth Stage |
|---|---|
| 14 July 2019 | Tillering stage |
| 26 July 2019 | Early jointing stage |
| 12 August 2019 | Late jointing stage |
| 27 August 2019 | Heading stage |
| 8 September 2019 | Flowering stage |
| 27 September 2019 | Filling stage |

| Name | Index | Formulation | References |
|---|---|---|---|
| Green Leaf Index | GLI | $\mathrm{GLI} = (2g - r - b)/(2g + r + b)$ | [40] |
| Green Red Vegetation Index | GRVI | $\mathrm{GRVI} = (g - r)/(g + r)$ | [41] |
| Modified Green Red Vegetation Index | MGRVI | $\mathrm{MGRVI} = (g^2 - r^2)/(g^2 + r^2)$ | [42] |
| Excess Green minus Excess Red | ExGR | $\mathrm{ExGR} = (2g - r - b) - (1.4r - g)$ | [21] |
| Excess Red Vegetation Index | ExR | $\mathrm{ExR} = 1.4r - g$ | [43] |
| Red Green Ratio Index | RGRI | $\mathrm{RGRI} = r/g$ | [44] |
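
The six indices in the table above can be computed directly from the mean red, green, and blue values of a plot. The following is a minimal sketch, not the authors' processing chain. It assumes that r, g, and b are normalized chromatic coordinates (each band divided by R + G + B), which this section does not state explicitly, and it writes GLI with the numerator 2g − r − b as defined by Louhaichi et al. The function name `visible_band_indices` is hypothetical.

```python
def visible_band_indices(red, green, blue):
    """Return the six RGB vegetation indices for one pixel or plot mean.

    Assumption: r, g, b are the band values normalized by their sum.
    """
    total = red + green + blue
    if total <= 0 or green <= 0:
        raise ValueError("band values must be positive for these indices")

    # Normalized chromatic coordinates (assumed, see lead-in).
    r, g, b = red / total, green / total, blue / total

    exr = 1.4 * r - g            # Excess Red
    exg = 2 * g - r - b          # Excess Green
    return {
        "GLI": (2 * g - r - b) / (2 * g + r + b),
        "GRVI": (g - r) / (g + r),
        "MGRVI": (g ** 2 - r ** 2) / (g ** 2 + r ** 2),
        "ExGR": exg - exr,
        "ExR": exr,
        "RGRI": r / g,
    }


if __name__ == "__main__":
    # Illustrative digital numbers only, not data from the study.
    for name, value in visible_band_indices(90, 140, 70).items():
        print(f"{name}: {value:.4f}")
```

The ratio indices (GLI, GRVI, MGRVI, RGRI) give the same value with or without the normalization step, whereas ExR and ExGR depend on it, so the normalization assumption matters mainly for those two.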

| Indicator | Growth Stage | Optimal VI | Optimal Model | $R^2$ |
|---|---|---|---|---|
| LDB | Tillering stage | GLI | $y = 20.38x^{0.6508}$ | 0.795 |
| LDB | Early jointing stage | RGRI | $y = 2.95x^{-2.4}$ | 0.853 |
| LDB | Late jointing stage | MGRVI | $y = 39.30x^{1.0989}$ | 0.673 |
| LDB | Heading stage | MGRVI | $y = 77.27x^{1.9317}$ | 0.871 |
| LDB | Flowering stage | MGRVI | $y = -672.5x^2 + 308.3x - 16.9$ | 0.475 |
| LDB | Filling stage | ExR | $y = 70.90 \times 10^{-12.63x}$ | 0.680 |
| LAI | Tillering stage | GLI | $y = 10.24x^{0.6278}$ | 0.631 |
| LAI | Early jointing stage | MGRVI | $y = 11.17x^{0.9598}$ | 0.752 |
| LAI | Late jointing stage | MGRVI | $y = 28.73x^{1.6529}$ | 0.852 |
| LAI | Heading stage | GRVI | $y = -1444.7x^2 + 544.7x - 40.5$ | 0.602 |
| LAI | Flowering stage | MGRVI | $y = 22.53x^{0.7219}$ | 0.366 |
| LAI | Filling stage | ExR | $y = 66.33 \times 10^{-17.94x}$ | 0.757 |
| LTN | Tillering stage | RGRI | $y = 21.32x^{-0.75}$ | 0.677 |
| LTN | Early jointing stage | ExR | $y = 0.42x^{-1.917}$ | 0.848 |
| LTN | Late jointing stage | GRVI | $y = 12.11 \times 10^{2.6839x}$ | 0.719 |
| LTN | Heading stage | MGRVI | $y = 66.94x^{0.9714}$ | 0.746 |
| LTN | Flowering stage | GRVI | $y = -1468.5x^2 + 384.5x - 2.3$ | 0.668 |
| LTN | Filling stage | ExR | $y = 314.1x^2 - 282.8x + 48.6$ | 0.915 |
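
The stage-specific models above lend themselves to a small lookup structure, in the spirit of the simple model database named in Section 3.4. The sketch below encodes only the LDB rows and reads the flattened exponents as power, base-10 exponential, and quadratic forms, which is an interpretation of the table rather than something the text confirms. The names `LDB_MODELS` and `estimate_ldb` are hypothetical.

```python
def power(a, b):
    """Power model y = a * x**b (defined here for x > 0 only)."""
    def model(x):
        if x <= 0:
            raise ValueError("power model needs a positive index value")
        return a * x ** b
    return model


def exp10(a, b):
    """Base-10 exponential model y = a * 10**(b * x)."""
    return lambda x: a * 10 ** (b * x)


def quadratic(a, b, c):
    """Quadratic model y = a * x**2 + b * x + c."""
    return lambda x: a * x ** 2 + b * x + c


# Growth stage -> (optimal VI, fitted model), coefficients from the table.
LDB_MODELS = {
    "Tillering stage": ("GLI", power(20.38, 0.6508)),
    "Early jointing stage": ("RGRI", power(2.95, -2.4)),
    "Late jointing stage": ("MGRVI", power(39.30, 1.0989)),
    "Heading stage": ("MGRVI", power(77.27, 1.9317)),
    "Flowering stage": ("MGRVI", quadratic(-672.5, 308.3, -16.9)),
    "Filling stage": ("ExR", exp10(70.90, -12.63)),
}


def estimate_ldb(stage, vi_value):
    """Return (index name, estimated LDB in g per hole) for a growth stage."""
    vi_name, model = LDB_MODELS[stage]
    return vi_name, model(vi_value)


if __name__ == "__main__":
    # The index value 0.6 is illustrative, not a measurement from the study.
    name, ldb = estimate_ldb("Early jointing stage", 0.6)
    print(f"{name} = 0.6 -> LDB of about {ldb:.2f} g per hole")
```

Keying the lookup on growth stage keeps the choice of index and the choice of model form together, so a caller only needs the acquisition date's stage and the matching index value.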

| Indicator | Growth Stage | UAV-VI | Model 1 (No Processing) | $R^2$ (No Processing) | Model 2 (Optimal Index Method) | $R^2$ (Optimal Index Method) | Model 3 (Object-Oriented Segmentation Method) | $R^2$ (Object-Oriented Segmentation Method) |
|---|---|---|---|---|---|---|---|---|
| LDB | Tillering stage | GLI | $y = 20.38x^{0.6508}$ | 0.795 | $y = 31.37x^{0.9823}$ | 0.818 | $y = 24.40x^{0.7844}$ | 0.829 |
| LDB | Early jointing stage | RGRI | $y = 2.95x^{-2.4}$ | 0.853 | $y = 2.55x^{-2.842}$ | 0.881 | $y = 2.83x^{-2.494}$ | 0.876 |
| LDB | Late jointing stage | MGRVI | $y = 39.30x^{1.0989}$ | 0.673 | $y = 49.13x^{1.279}$ | 0.687 | $y = 51.73x^{1.357}$ | 0.702 |
| LDB | Heading stage | RGRI | $y = -1482.3x^2 + 2081.2x - 709.0$ | 0.588 | $y = -2412.5x^2 + 3497.7x - 1246.6$ | 0.648 | $y = -2186.1x^2 + 3150.6x - 1114.3$ | 0.626 |
| LDB | Flowering stage | MGRVI | $y = -672.55x^2 + 308.3x - 16.9$ | 0.475 | $y = -913.2x^2 + 386.2x - 22.8$ | 0.510 | $y = -1138.1x^2 + 486.8x - 33.6$ | 0.552 |
| LDB | Filling stage | ExR | $y = 70.90 \times 10^{-12.63x}$ | 0.680 | $y = 90.667 \times 10^{-14.45x}$ | 0.747 | $y = 101.20 \times 10^{-15.16x}$ | 0.755 |
| LAI | Tillering stage | GLI | $y = 10.24x^{0.6278}$ | 0.631 | $y = 15.10x^{0.9435}$ | 0.688 | $y = 11.67x^{0.7425}$ | 0.677 |
| LAI | Early jointing stage | MGRVI | $y = 11.17x^{0.9598}$ | 0.752 | $y = 13.33x^{1.1497}$ | 0.803 | $y = 11.54x^{1.0217}$ | 0.790 |
| LAI | Late jointing stage | MGRVI | $y = 28.73x^{1.6529}$ | 0.852 | $y = 40.55x^{1.9319}$ | 0.868 | $y = 43.35x^{2.0367}$ | 0.875 |
| LAI | Heading stage | GRVI | $y = -1444.7x^2 + 544.7x - 40.5$ | 0.602 | $y = -2056.8x^2 + 709.4x - 51.0$ | 0.688 | $y = -1762.3x^2 + 626.8x - 45.6$ | 0.697 |
| LAI | Flowering stage | MGRVI | $y = 22.53x^{0.7219}$ | 0.366 | $y = 30.83x^{0.8767}$ | 0.433 | $y = 29.65x^{0.8765}$ | 0.422 |
| LAI | Filling stage | ExR | $y = 66.33 \times 10^{-17.94x}$ | 0.757 | $y = 95.01 \times 10^{-20.59x}$ | 0.818 | $y = 100.3 \times 10^{-20.93x}$ | 0.777 |
| LTN | Tillering stage | RGRI | $y = 21.319x^{-0.75}$ | 0.677 | $y = 20.051x^{-0.865}$ | 0.704 | $y = 20.80x^{-0.795}$ | 0.704 |
| LTN | Early jointing stage | ExR | $y = 0.42x^{-1.917}$ | 0.848 | $y = 0.27x^{-2.135}$ | 0.857 | $y = 0.44x^{-1.9}$ | 0.861 |
| LTN | Late jointing stage | GRVI | $y = 12.11 \times 10^{2.6839x}$ | 0.719 | $y = 10.59 \times 10^{3.618x}$ | 0.737 | $y = 10.32 \times 10^{3.6643x}$ | 0.735 |
| LTN | Heading stage | MGRVI | $y = 66.94x^{0.9714}$ | 0.746 | $y = 214.8x^{1.2114}$ | 0.800 | $y = 204.1x^{1.1947}$ | 0.799 |
| LTN | Flowering stage | GRVI | $y = -1468.5x^2 + 384.5x - 2.3$ | 0.668 | $y = -1570.0x^2 + 400.3x - 2.60$ | 0.717 | $y = -2480.6x^2 + 584.8x - 11.6$ | 0.720 |
| LTN | Filling stage | ExR | $y = 314.05x^2 - 282.8x + 48.6$ | 0.915 | $y = 464.6x^2 - 341.1x + 53.8$ | 0.930 | $y = 904.9x^2 - 470.6x + 63.2$ | 0.931 |
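
To compare the three processing options in the table above, one simple approach is to tabulate the reported R² values and pick the highest per growth stage. The sketch below does this for the LDB rows only, using the R² values exactly as listed. The names `R2_LDB`, `best_method`, and `mean_r2` are hypothetical, and ranking by R² alone is an illustrative choice, not the validation procedure of the study.

```python
# Reported R2 for LDB, per growth stage and noncanopy-removal option.
R2_LDB = {
    "Tillering stage": {"No processing": 0.795, "Optimal index": 0.818, "Object-oriented segmentation": 0.829},
    "Early jointing stage": {"No processing": 0.853, "Optimal index": 0.881, "Object-oriented segmentation": 0.876},
    "Late jointing stage": {"No processing": 0.673, "Optimal index": 0.687, "Object-oriented segmentation": 0.702},
    "Heading stage": {"No processing": 0.588, "Optimal index": 0.648, "Object-oriented segmentation": 0.626},
    "Flowering stage": {"No processing": 0.475, "Optimal index": 0.510, "Object-oriented segmentation": 0.552},
    "Filling stage": {"No processing": 0.680, "Optimal index": 0.747, "Object-oriented segmentation": 0.755},
}


def best_method(r2_by_method):
    """Return the option with the highest reported R2 for one stage."""
    return max(r2_by_method, key=r2_by_method.get)


def mean_r2(table, method):
    """Average the reported R2 of one option over all growth stages."""
    values = [row[method] for row in table.values()]
    return sum(values) / len(values)


if __name__ == "__main__":
    for stage, row in R2_LDB.items():
        print(f"{stage}: {best_method(row)}")
    for method in ("No processing", "Optimal index", "Object-oriented segmentation"):
        print(f"{method}: mean R2 = {mean_r2(R2_LDB, method):.3f}")
```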

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Qiu, Z.; Xiang, H.; Ma, F.; Du, C. Qualifications of Rice Growth Indicators Optimized at Different Growth Stages Using Unmanned Aerial Vehicle Digital Imagery. Remote Sens. 2020, 12, 3228. https://doi.org/10.3390/rs12193228
