A CNN-Based Pan-Sharpening Method for Integrating Panchromatic and Multispectral Images Using Landsat 8

Abstract

1. Introduction

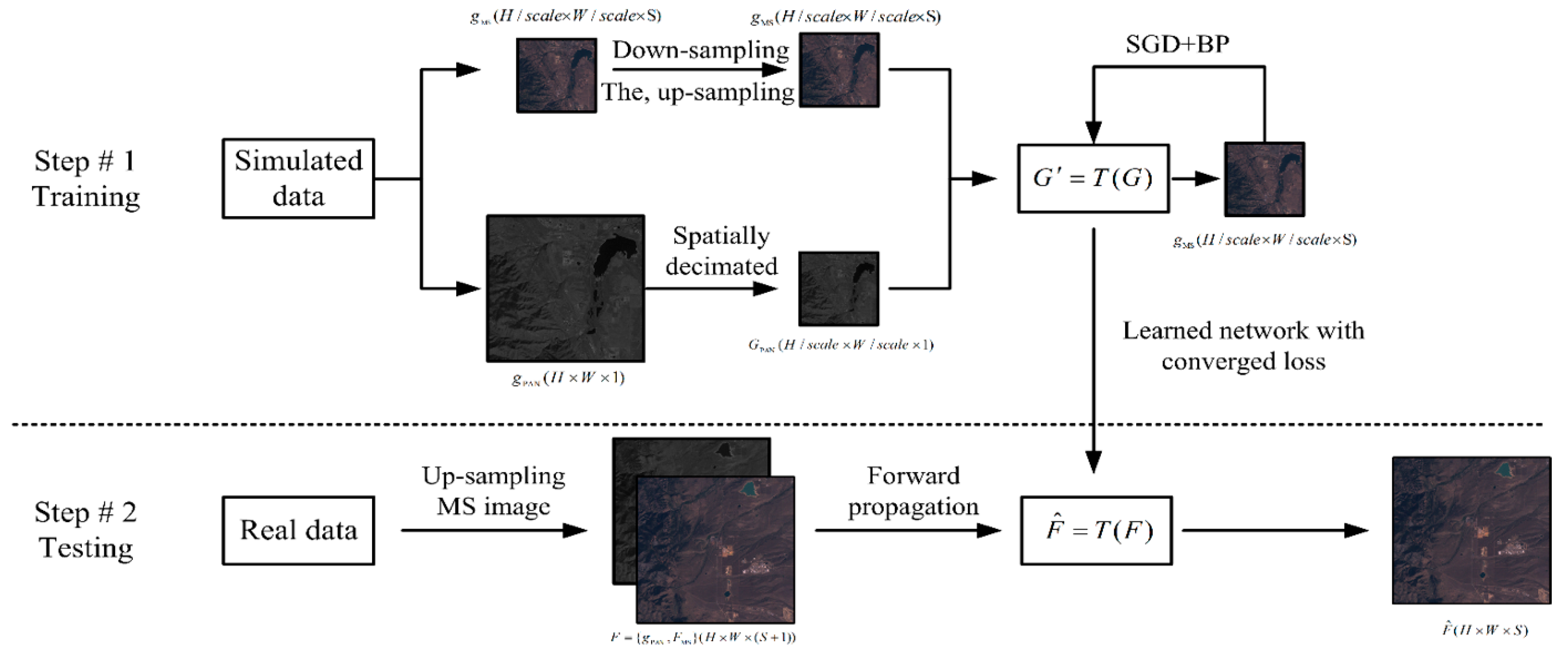

2. Proposed CNN-Based Pan-Sharpening Method

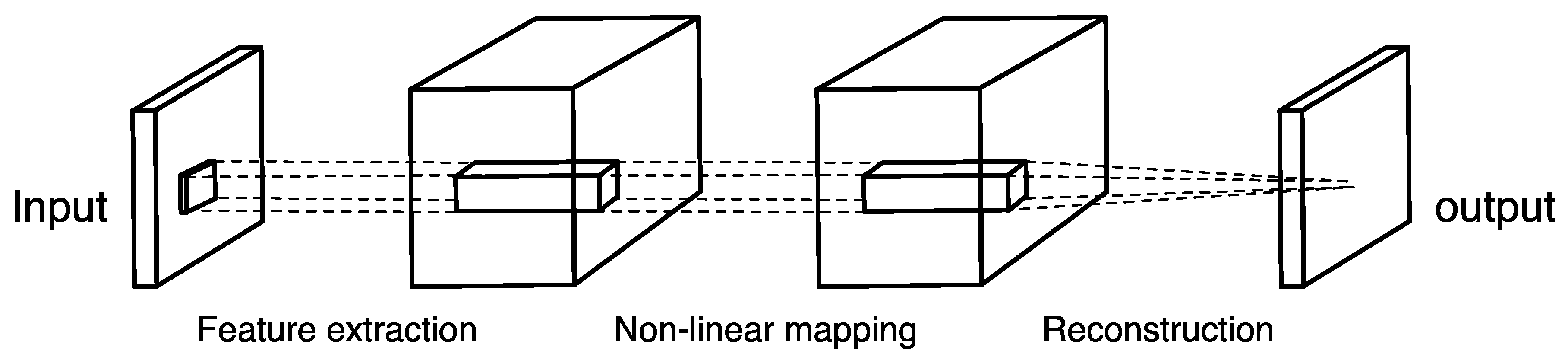

2.1. Pan-Sharpening Structure

- (1) Feature extraction. The main task is to extract overlapping image blocks from the low-resolution input images and represent each block as a high-dimensional vector, which is equivalent to convolving the image blocks with a set of filters.

- (2) Nonlinear mapping relationship construction. The nonlinear mapping transforms the feature vectors of the previous layer from the low-resolution space to the high-resolution space.

- (3) Reconstruction. The overlapping high-resolution image blocks are averaged to produce the final image.
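The three stages above can be sketched as a three-layer convolutional forward pass. This is a minimal illustration in NumPy, not the authors' implementation: the filter counts (64, 32), the kernel sizes (9, 1, 5), the random initialisation, and the choice to stack the upsampled multispectral bands with the panchromatic band as input are all assumptions borrowed from common SRCNN-style designs. The helper names `conv2d_same`, `init_params`, and `pansharpen_forward` are hypothetical.

```python
import numpy as np


def conv2d_same(x, w, b):
    """Naive 'same' convolution (cross-correlation, as in most CNN libraries).

    x: (H, W, C_in) image, w: (k, k, C_in, C_out) filters with odd k, b: (C_out,) bias.
    """
    k = w.shape[0]
    pad = k // 2
    # Replicate border pixels so the output keeps the input's spatial size.
    xp = np.pad(x, ((pad, pad), (pad, pad), (0, 0)), mode="edge")
    h, wd, _ = x.shape
    out = np.zeros((h, wd, w.shape[3]))
    for i in range(k):
        for j in range(k):
            # Each kernel tap contributes a shifted copy of the input,
            # mixed across channels by a (C_in, C_out) matrix.
            out += xp[i:i + h, j:j + wd, :] @ w[i, j]
    return out + b


def relu(x):
    return np.maximum(x, 0.0)


def init_params(rng, c_in, c_out, n1=64, n2=32, k1=9, k2=1, k3=5):
    """Small random weights; layer sizes are assumed, not taken from the paper."""
    shapes = [(k1, k1, c_in, n1), (k2, k2, n1, n2), (k3, k3, n2, c_out)]
    return [(rng.normal(0.0, 1e-3, s), np.zeros(s[-1])) for s in shapes]


def pansharpen_forward(ms_up, pan, params):
    """ms_up: (H, W, B) multispectral bands already upsampled to the PAN grid.
    pan: (H, W) panchromatic band. Returns an (H, W, B) estimate."""
    x = np.concatenate([ms_up, pan[..., None]], axis=-1)
    (w1, b1), (w2, b2), (w3, b3) = params
    features = relu(conv2d_same(x, w1, b1))       # (1) feature extraction
    mapped = relu(conv2d_same(features, w2, b2))  # (2) nonlinear mapping
    return conv2d_same(mapped, w3, b3)            # (3) reconstruction


rng = np.random.default_rng(0)
ms_up = rng.random((16, 16, 4))
pan = rng.random((16, 16))
params = init_params(rng, c_in=5, c_out=4)
fused = pansharpen_forward(ms_up, pan, params)
print(fused.shape)
```

In this formulation the averaging of overlapping blocks in step (3) is not a separate operation: a final convolution whose kernel spans several neighbouring positions combines the overlapping predictions, so the weights of that averaging are learned along with the rest of the network.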

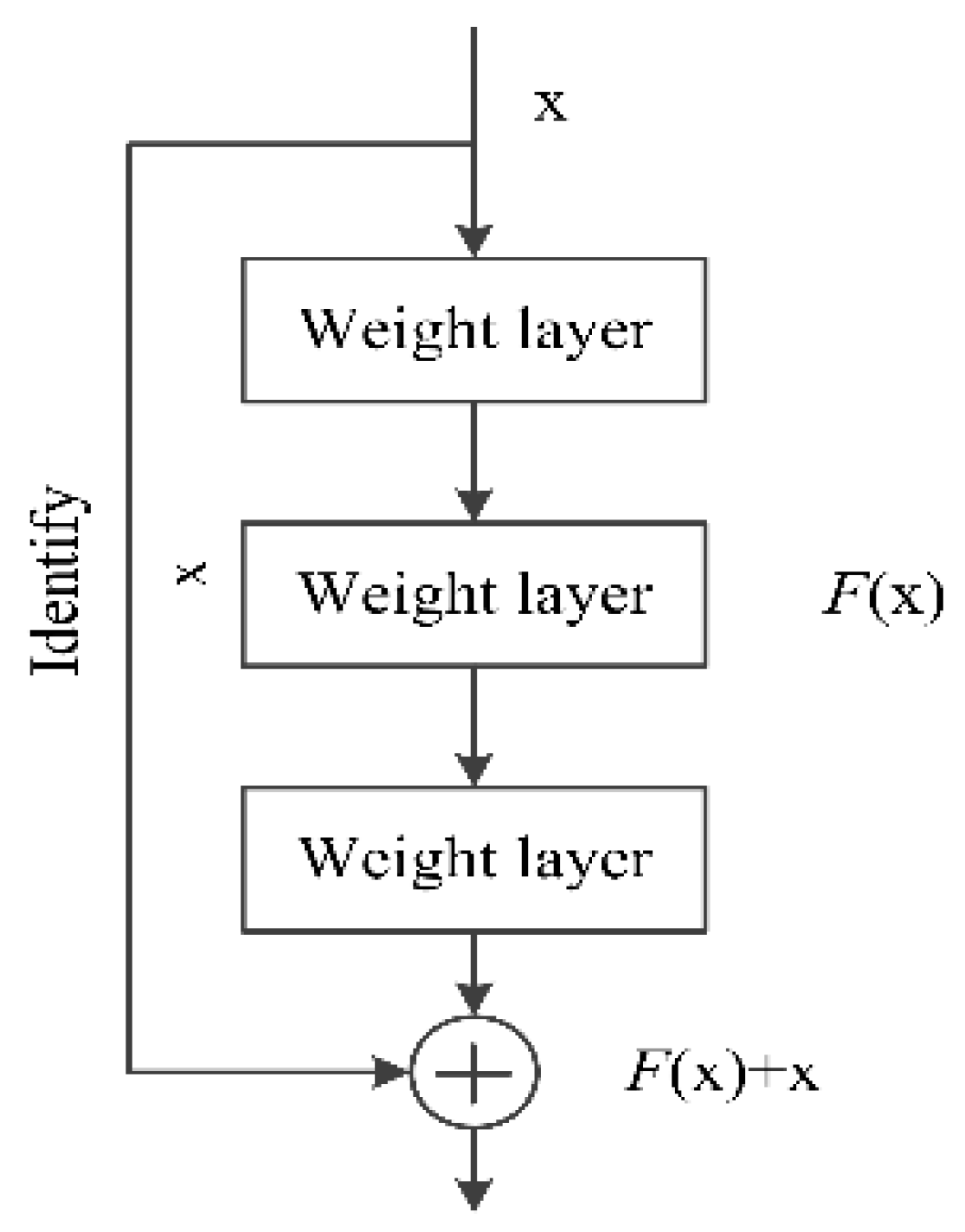

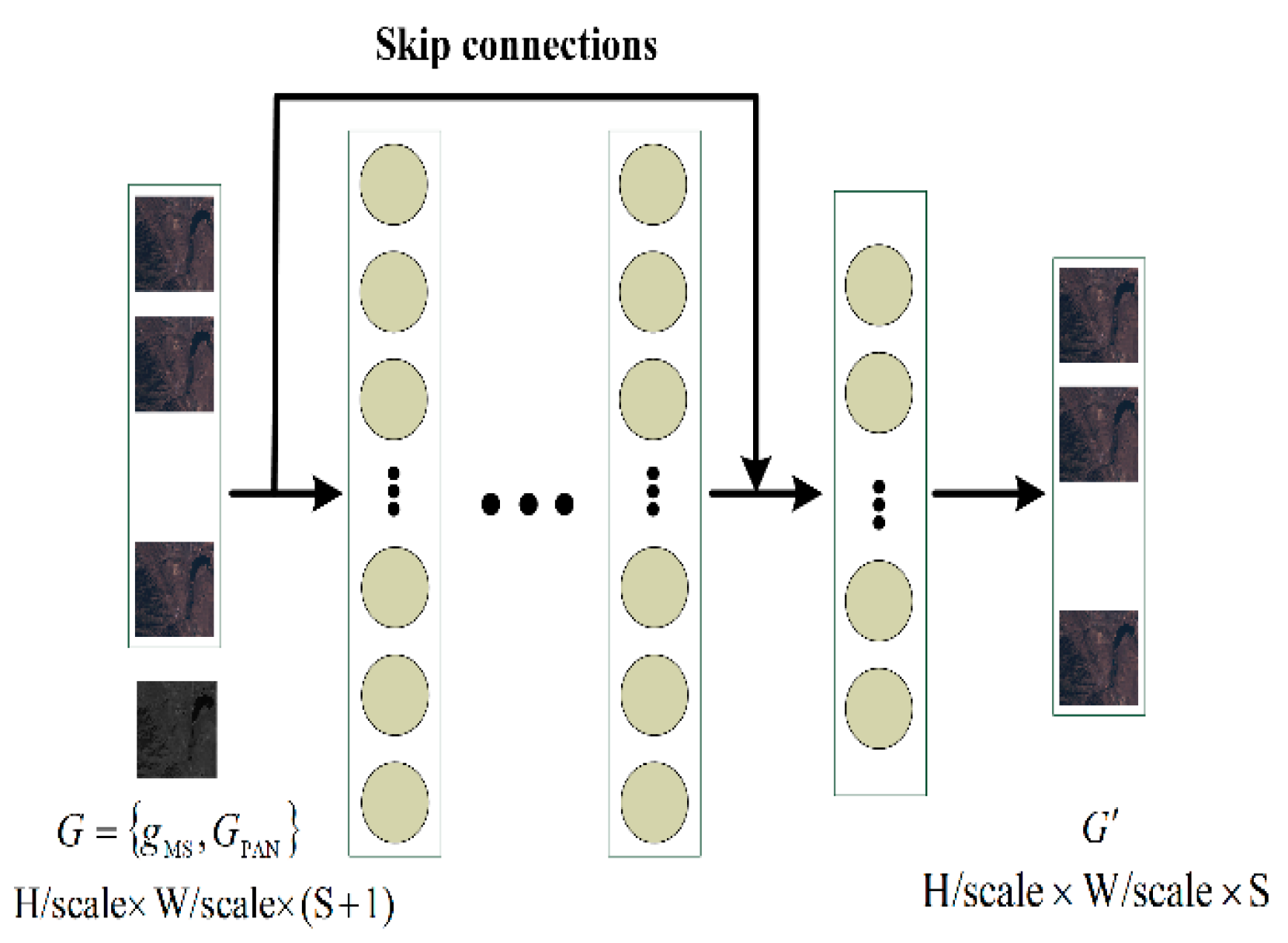

2.2. ResNet-Based Architecture

3. Experimentation and Analysis

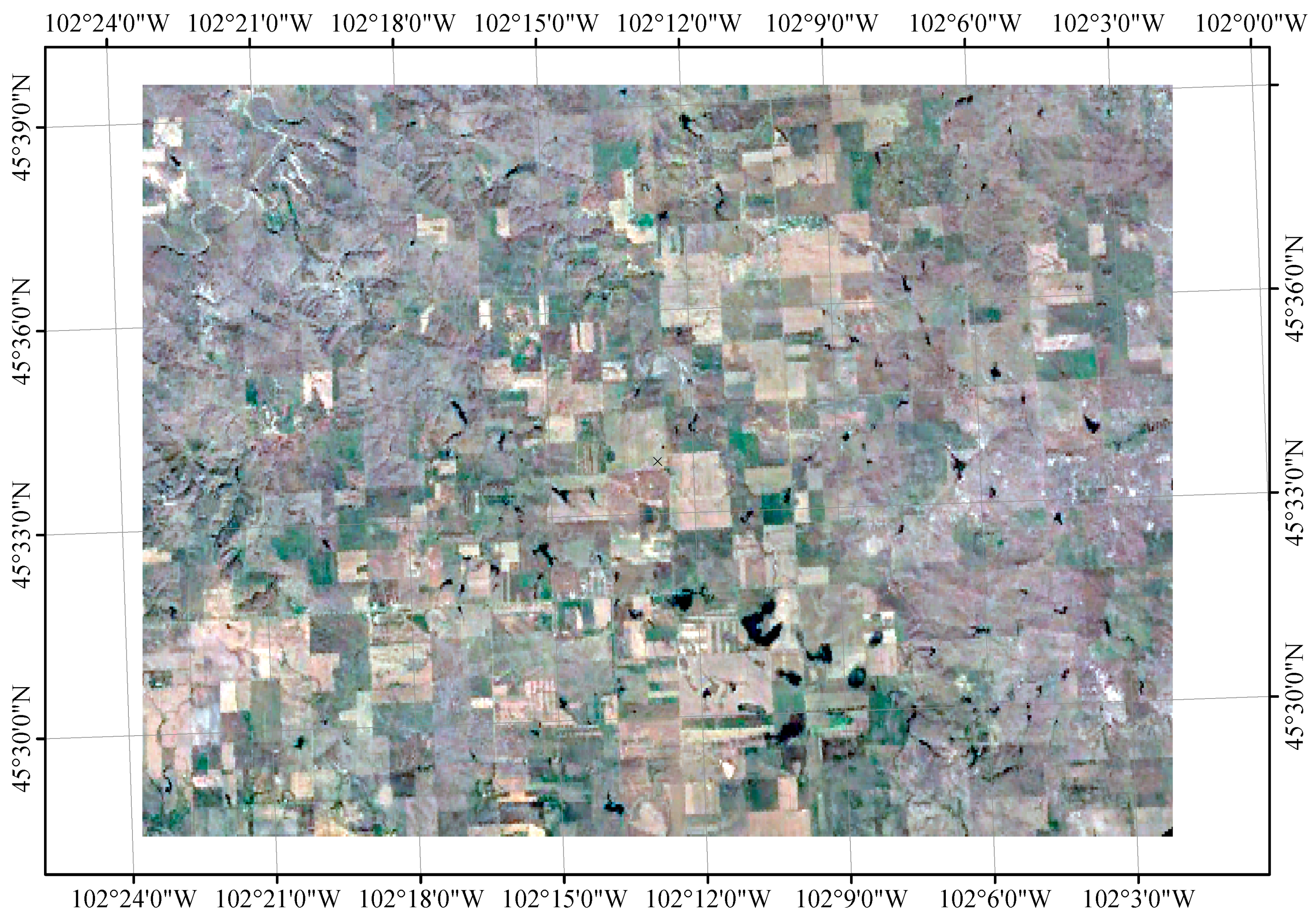

3.1. Experimental Dataset and Evaluation Metric

3.2. Implementation

3.3. Effects of Network Parameters on the Pan-Sharpening Results

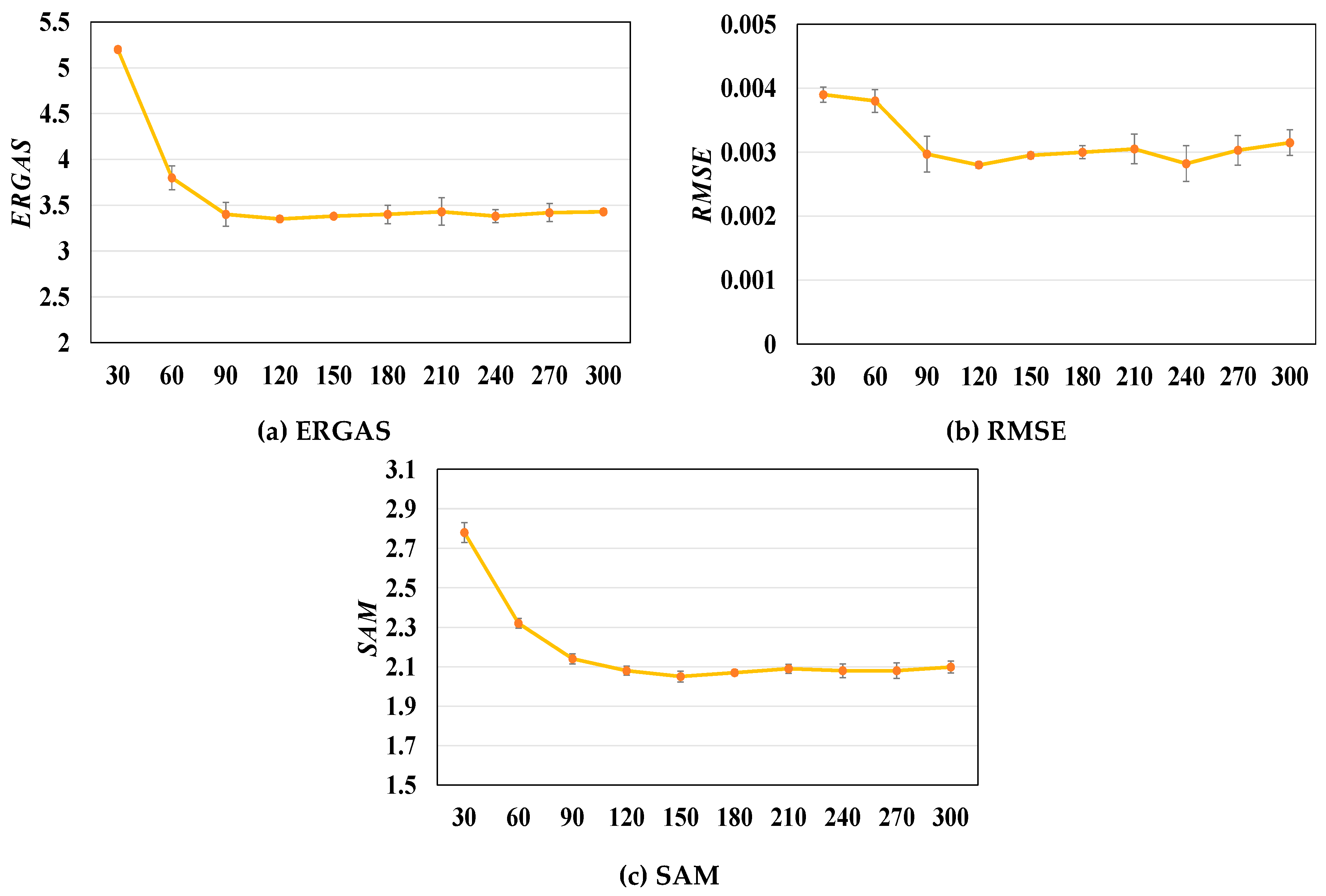

3.3.1. Role of the Number of Training Epochs in the Pan-Sharpening Results

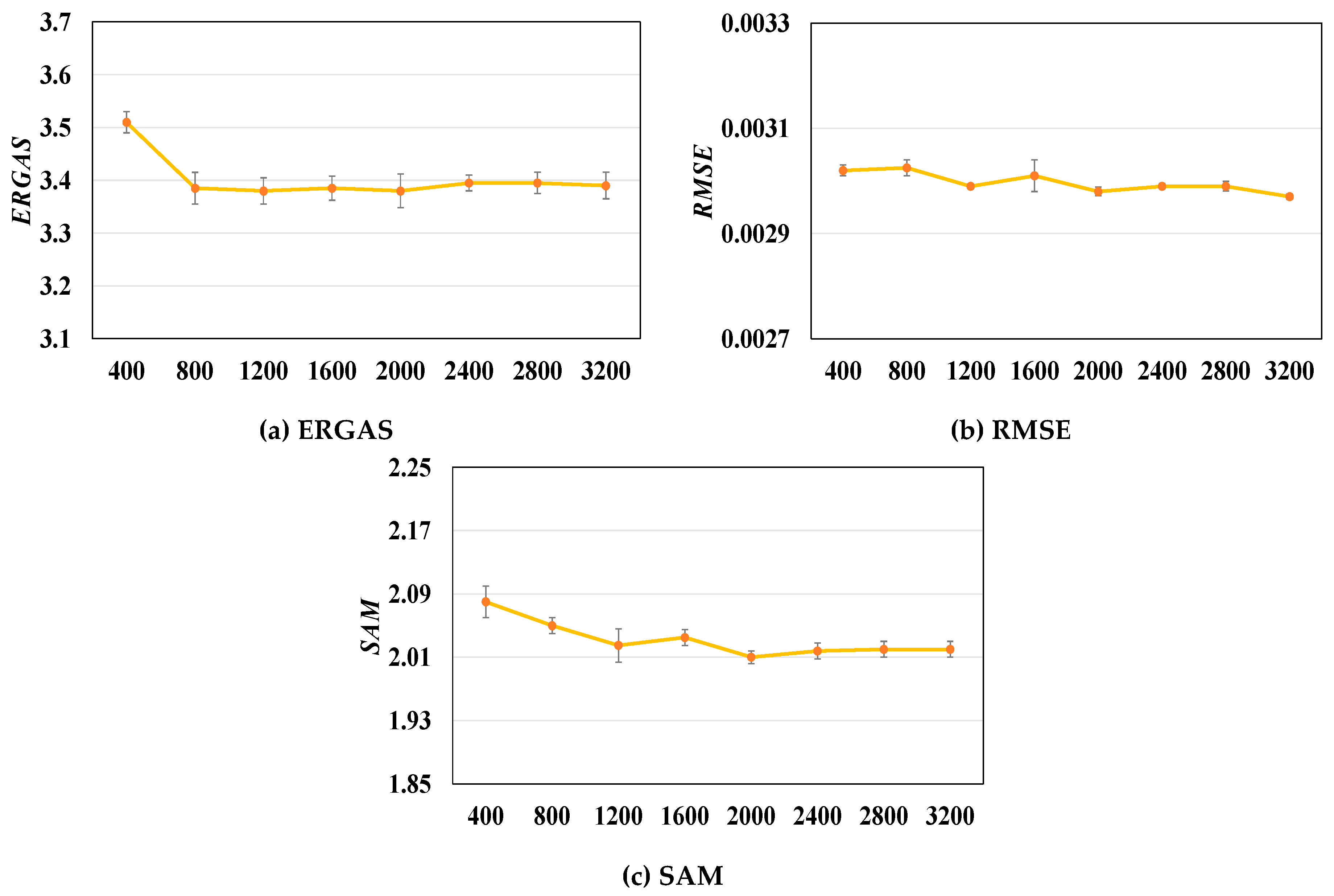

3.3.2. Role of the Number of Training Patches in the Pan-Sharpening Results

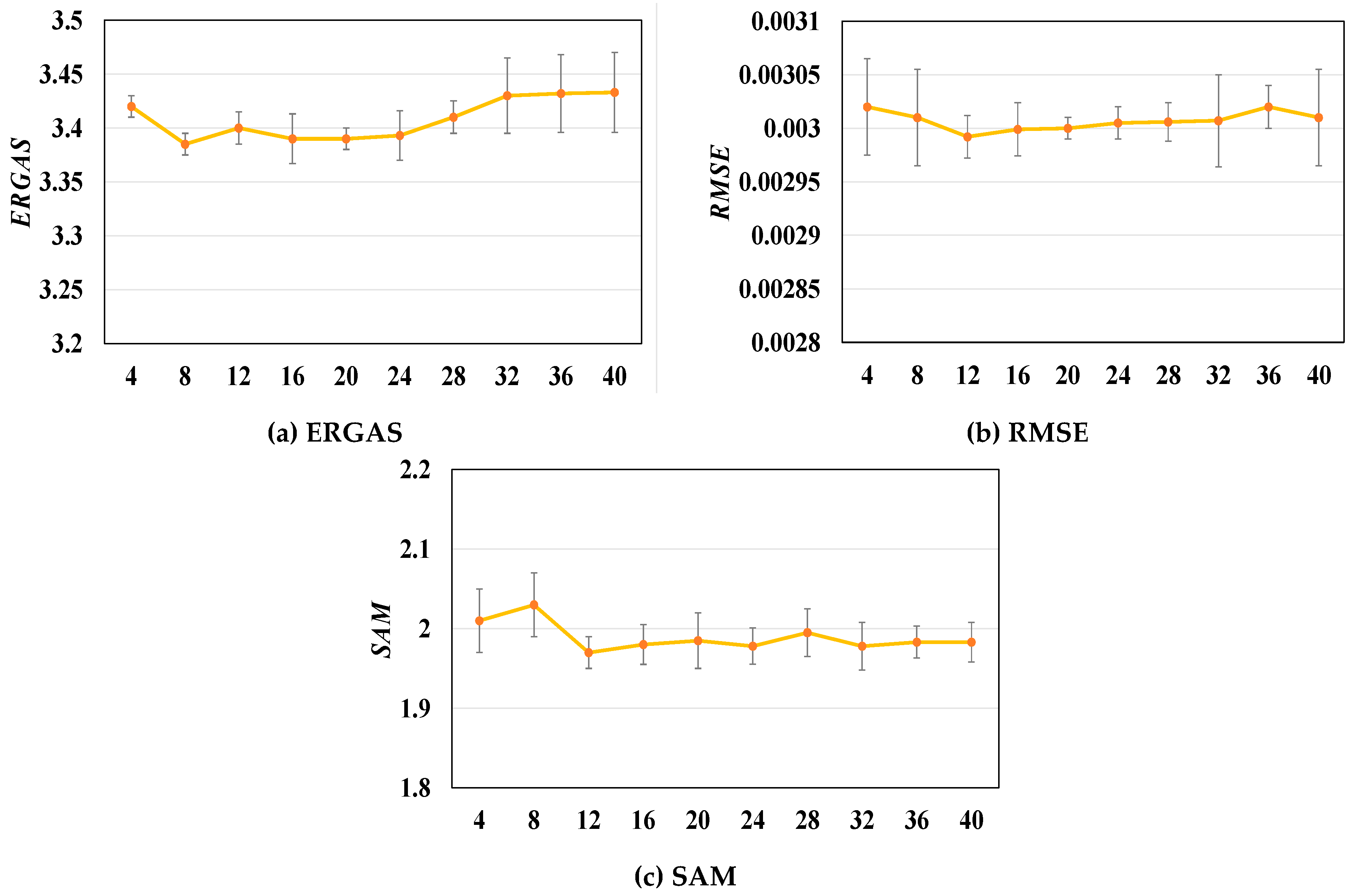

3.3.3. Role of the Size of Patches in the Pan-Sharpening Results

3.4. Comparison with Other Existing Methods, Quantitatively and Qualitatively

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Spectral Band | Wavelength (nm) | Resolution (m/pixel) |
|---|---|---|
| Band 1: Coastal/Aerosol | 433–453 | 30 |
| Band 2: Blue | 450–515 | 30 |
| Band 3: Green | 525–600 | 30 |
| Band 4: Red | 630–680 | 30 |
| Band 5: Near-Infrared | 845–885 | 30 |
| Band 6: Short-Wavelength Infrared | 1560–1660 | 30 |
| Band 7: Short-Wavelength Infrared | 2100–2300 | 30 |
| Band 8: Panchromatic | 500–680 | 15 |
| Band 9: Cirrus | 1360–1390 | 30 |

| Method | ERGAS | RMSE | SAM |
|---|---|---|---|
| BT | 3.531 | 0.0042 | 2.582 |
| HIS | 4.272 | 0.0074 | 3.420 |
| PCA | 4.589 | 0.0059 | 3.842 |
| WD | 3.652 | 0.0050 | 2.745 |
| P + XS | 4.746 | 0.0067 | 3.211 |
| GF | 3.236 | 0.0039 | 2.442 |
| PNN | 3.201 | 0.0036 | 2.299 |
| Ours | 3.199 | 0.0031 | 2.121 |
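For readers who want to compute the three metrics in the table above, the following sketch uses their commonly cited definitions; lower is better for all three. It is an illustration under assumptions rather than the evaluation code used here: the exact normalisation and units behind the reported values are not stated in this section, SAM is returned in degrees, and the default `ratio=0.5` simply reflects the 15 m panchromatic and 30 m multispectral pixel sizes of Landsat 8. The function names are hypothetical.

```python
import numpy as np


def rmse(ref, fused):
    """Root-mean-square error over all pixels and bands."""
    return float(np.sqrt(np.mean((ref - fused) ** 2)))


def sam_degrees(ref, fused, eps=1e-12):
    """Spectral angle mapper: mean angle between per-pixel spectra, in degrees.

    ref, fused: (H, W, B) arrays; the band axis is last.
    """
    dot = np.sum(ref * fused, axis=-1)
    norms = np.linalg.norm(ref, axis=-1) * np.linalg.norm(fused, axis=-1)
    # eps avoids division by zero; clip guards arccos against rounding error.
    cos = np.clip(dot / (norms + eps), -1.0, 1.0)
    return float(np.degrees(np.mean(np.arccos(cos))))


def ergas(ref, fused, ratio=0.5):
    """ERGAS = 100 * ratio * sqrt(mean_k (RMSE_k / mean_k)^2).

    ratio is the PAN pixel size divided by the MS pixel size (assumed 15/30).
    """
    band_rmse = np.sqrt(np.mean((ref - fused) ** 2, axis=(0, 1)))
    band_mean = np.mean(ref, axis=(0, 1))
    return float(100.0 * ratio * np.sqrt(np.mean((band_rmse / band_mean) ** 2)))


# Toy check: a reference image and a copy with a constant offset of 0.1.
ref = np.ones((4, 4, 3))
fused = ref + 0.1
print(rmse(ref, fused), sam_degrees(ref, fused), ergas(ref, fused))
```

RMSE and ERGAS measure radiometric error (ERGAS normalises each band's error by that band's mean), while SAM measures spectral distortion and is insensitive to a uniform scaling of each pixel's spectrum.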

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Li, Z.; Cheng, C. A CNN-Based Pan-Sharpening Method for Integrating Panchromatic and Multispectral Images Using Landsat 8. Remote Sens. 2019, 11, 2606. https://doi.org/10.3390/rs11222606