1. Introduction

The world population is expected to reach 9.1 billion people by 2050. This will require the production of 3 billion tons of cereals annually, up from today’s nearly 2.1 billion tons for both food and animal feed [1]. Therefore, the use of conservation agricultural methods is necessary to protect water and soil, which are the main resources for agricultural production [2,3].

Conservation tillage systems are an important conservation strategy in agriculture. Residues from the previous crop are left on the soil surface in order to protect the soil from water and wind erosion [4]. A residue cover has several important advantages: it increases the soil organic matter [5], speeds up enzymatic activities [6], decreases the soil temperature [7], and reduces the water consumption [8]. In conservation tillage systems, at least 30% of the previous crop residues are left on the soil surface after tillage and planting operations [9]. Depending on the amount of soil surface residue (SSR), conservation tillage systems include minimum tillage (ridge tillage and mulch tillage) and no-tillage. In a no-tillage system, the seeding operation is carried out directly into the standing stubble of the previous crops [9].

Various field measurement methods have been developed to estimate the SSR, including line transect, photo comparison, and computational methods. While line transect is a highly accurate field method, it is hardly applicable to large areas due to the time and labor costs. Therefore, several studies have aimed to utilize recent developments in remote sensing instruments (satellite, airborne, and unmanned aerial vehicle (UAV)-based sensors) to estimate the SSR [10,11,12,13,14]. To this end, due to the absorption properties of the SSR, the region around 2100 nm of the electromagnetic spectrum has been studied [15,16]. In this region, the presence of lignin, cellulose, and other saccharides in the external wall of the residue allows distinguishing the SSR signal from soil and vegetation signals [17]. Several methods have been developed for deriving the SSR from spectral images. The majority of approaches rely on the brightness of individual pixels, while object-based image analysis (OBIA) takes other factors, such as the texture, color, and geometry of the resulting objects, into consideration in addition to brightness.

Several laboratory and field studies have been conducted using per-pixel methods for the identification and mapping of the SSR. van-Deventer et al. [18] developed multispectral Landsat-based indices, including the simple tillage index (STI) and normalized difference tillage index (NDTI), for classifying tillage practices based on the percentage of residue cover. They found that bands 5 and 7 are suitable for the estimation of the SSR because they cover the region near 2100 nm. Earlier studies applied the NDTI and STI indices to multispectral Landsat 6, 7, and 8 images [19,20,21] and obtained accurate results.

Daughtry et al. [22] developed a cellulose absorption index (CAI) from hyper-spectral AVIRIS data as another tillage index and found it to be superior to multispectral Landsat 6 tillage indices. The lignin cellulose index and the shortwave infrared normalized difference residue index were two other multispectral tillage indices based on advanced spaceborne thermal emission and reflection radiometer data, which are superior to the Landsat-based tillage indices, but not as good as the CAI in terms of mapping and characterizing the SSR [23]. Jin et al. [21] increased the accuracy of the detection of SSR by integrating Landsat-8 based tillage indices and gray level co-occurrence matrix (GLCM) textural features.

Pacheco and McNairn [24] obtained coefficient of determination ($R^2$) values of 0.58–0.78 when identifying corn, small grains, and soybean residues from the soil using a pixel-based spectral unmixing analysis method. Sudheer et al. [25] applied an artificial neural network model to detect and map the SSR using Landsat-5 data and obtained overall accuracies of 0.74–0.91 for experimental fields. Bocco et al. [26] estimated corn SSR with an $R^2$ value of 0.95 using an artificial neural network model from Landsat-7 data.

Given this state of the art in the literature, we believe that applying OBIA to SSR mapping is novel, and we investigate its potential in the remainder of this article. Over recent years, the number of applications that conceptually aim for objects (still built on the information of the underlying pixels) has risen quickly. Blaschke et al. [27] identified a high number of relevant publications that use OBIA concepts and even claim that this concept and its instantiation at a particular order of scale (the geographic, as opposed to applications in medical imaging or cell biology) is a new paradigm in remote sensing. For this level of scale and geodomain, this paradigm is also referred to as geographic object-based image analysis (GEOBIA). In essence, an OBIA process typically groups similar pixels within an image through an image segmentation approach by either merging pixels or by splitting the image iteratively. Both strategies, as well as other segmentation approaches not discussed herein, result in relatively homogeneous image objects, where ‘relatively’ means compared to their surroundings. In the classification step, objects are assigned to a particular class based on a set of classification rules.

There have been very few studies on the application of OBIA for identifying and mapping the SSR. Najafi et al. [28] applied OBIA and Landsat-8 images to classify the SSR into the following three classes: SSR < 30%, SSR 30%–60%, and SSR > 60%.

OBIA is a field within remote sensing and image processing that bridges to geographic information science (GIScience). In this article, we highlight two groups of classification approaches based on fuzzy object-based image analysis, namely, a) membership functions and b) nearest neighbor (NN). From its onset, OBIA has often been associated with fuzzy methods, where objects are assigned to a particular class based on fuzzy relations and rules. Many studies illustrate how to assign particular objects to classes based on the fuzzy membership values obtained for each object class and on fuzzy rules that combine several such membership values [29,30,31,32,33].

OBIA methods have already been used to identify landslides, debris-covered glaciers, and vegetation [34]. Kalantar et al. [35] applied an OBIA method to identify land cover features using spectral UAV images. They found that OBIA performed well in comparison to decision tree and support vector machine methods. The accuracy of the results of OBIA is strongly influenced by the selection of fuzzy operators and membership functions [36]. The nearest neighbor (NN) classifier algorithm aims to classify images based on similarities of object values in the determined features [37,38]. Yu et al. [39] applied an object-based NN classification for land cover mapping using high-resolution UAV spectral images. They considered 52 object-based features in terms of their spectral properties, texture features, topography, and object geometry in a feature space. Blaschke et al. [37] also investigated the capability of OBIA and NN classification algorithms (spectral, GLCM textural features, and geometry features) for detecting and identifying landslide locations with a semi-automated approach. The capability of the NN method was also reported in other studies [40,41].

Based on the above-mentioned justifications, the objectives of this study are a) to describe a novel method based on fuzzy OBIA for extracting and mapping SSR and tillage intensity, b) to compare the capability of Landsat-8 and Sentinel-2A satellite images for mapping the residue cover, and c) to investigate the accuracy of per-pixel and object-based image analysis approaches and their respective indices and algorithms for residue cover assessment.

2. Materials and Methods

2.1. Study Area

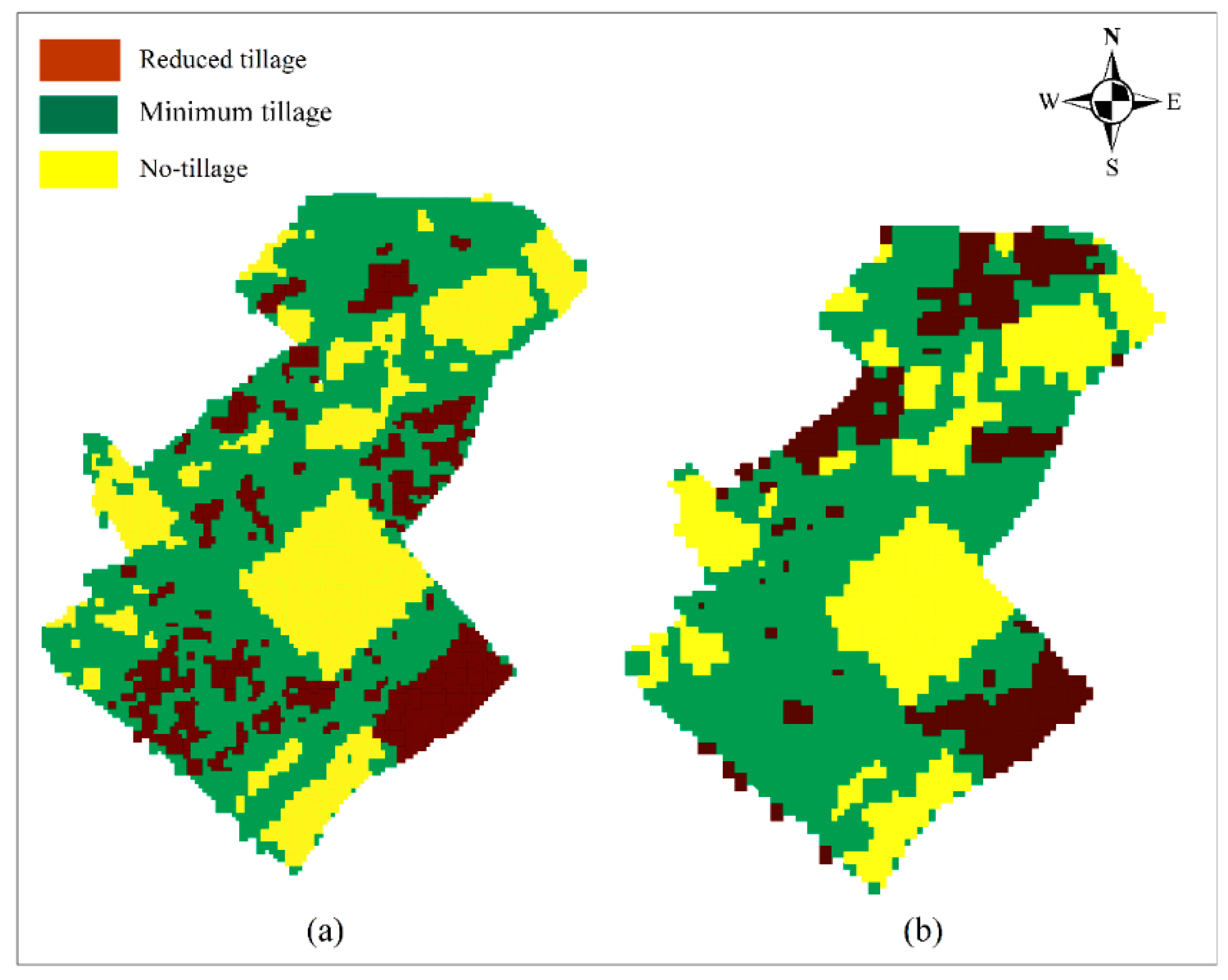

Ground truth data of tillage and planting operations were collected within an agricultural area operated by the Dryland Agricultural Research Institute of Iran’s East Azerbaijan province located at 46° 27′ 29″ E, 37° 15′ 36″ N. The cropping system at the study site was composed of wheat, pea, and forage crops. Different conservation methods and intensive tillage methods were carried out in the study area as tillage/planting practices. As a result, a wide range of SSR levels from full residue cover to bare soil was available across the study area (Figure 1). In this article, we investigate the agricultural system of the study area with respect to environmental issues such as water scarcity and soil erosion. The outcome of this research shall serve as an input for analyzing the efficiency of conservation tillage systems.

2.2. Field Measurements

Between 5 and 15 October 2017, the SSR was measured at the experimental fields of the study area through line transects. We used a 30 m measuring rope, which was divided into 100 intervals of 30 cm each by black markings. At each sampling location, the rope was first stretched diagonally (45°) across the rows and the number of markings intersecting the SSR was counted. The rope was then stretched across the rows again, this time perpendicular to the first direction, and the markings were counted a second time. The percentage of the SSR was calculated as the average number of markings from the two counts. The exact position of each sampling location was obtained by a global positioning system (GPS) measurement in the field. A total of 153 points were measured with this line transect method over the study area of 450 hectares.
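The line transect calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' field protocol code: the function name `line_transect_ssr` and the example counts are hypothetical, and the sketch assumes that each of the 100 markings contributes one percentage point.

```python
def line_transect_ssr(count_diagonal, count_perpendicular, n_markings=100):
    """Percent residue cover from two rope counts at one sampling location.

    Each count is the number of rope markings that intersect residue.
    The function name and signature are illustrative assumptions.
    """
    for count in (count_diagonal, count_perpendicular):
        if not 0 <= count <= n_markings:
            raise ValueError("a count must lie between 0 and n_markings")
    mean_hits = (count_diagonal + count_perpendicular) / 2
    return 100.0 * mean_hits / n_markings


# Hypothetical counts: 42 markings on the diagonal pass, 38 on the perpendicular pass.
ssr_percent = line_transect_ssr(42, 38)
```

With a rope of 100 markings the average count is already a percentage; the explicit division only matters if a rope with a different number of markings is used.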

2.3. Remote Sensing Data

Two sources of satellite images were utilized in this study, namely Sentinel-2A and Landsat-8. A Sentinel-2A image from 9 October 2017 and a Landsat-8 image from 16 October 2017 were acquired. We used the ENVI 5.3 software for all image preprocessing tasks, including radiometric and atmospheric corrections. We selected bands 2, 3, 4, 5, 6, and 7 of the Landsat-8 image, which have a spatial resolution of 30 m, and used them together with bands 5, 6, 7, 8a, 11, and 12 of the Sentinel-2A image, which have a spatial resolution of 20 m.

2.4. Soil Surface Residue Identification: Workflow

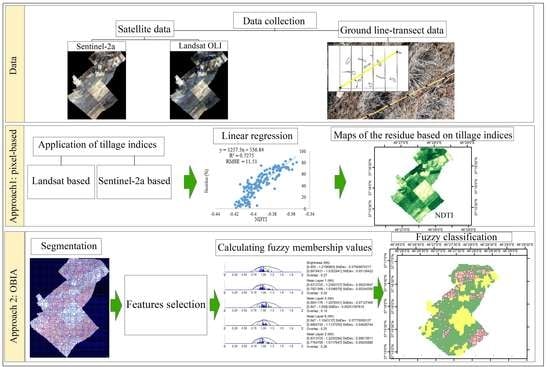

For the Landsat-8 and Sentinel-2A data, two different approaches were applied to map the SSR (Figure 2), namely, a) a common per-pixel method, which relies on the linear regression between tillage indices and line transect field measurements, and b) a classification based on fuzzy OBIA methods. In general, a pixel-based approach estimates the residue cover continuously using tillage indices, whereas the OBIA method classifies the residue cover into discrete levels. Both methods have advantages for estimating the residue. However, because the final objective is to determine the applied tillage methods in a region (which depend on the percentage of residue cover that is left on the field after tillage and planting practices), this study focuses in particular on object-based classification methods.
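To make the per-pixel approach concrete, the following sketch fits a straight line between a tillage index and line transect measurements and reports the coefficient of determination. The five sample pairs are invented for illustration only and are not data from this study; `np.polyfit` is used here as one possible least-squares routine, not necessarily the tool used by the authors.

```python
import numpy as np

# Hypothetical paired samples (NOT study data): tillage index value at each
# field point and the SSR (%) measured there by line transect.
index_values = np.array([0.02, 0.05, 0.08, 0.11, 0.14])
ssr_measured = np.array([12.0, 27.0, 41.0, 58.0, 72.0])

# Ordinary least-squares fit of SSR = slope * index + intercept.
slope, intercept = np.polyfit(index_values, ssr_measured, deg=1)
ssr_predicted = slope * index_values + intercept

# Coefficient of determination of the fitted line.
ss_res = np.sum((ssr_measured - ssr_predicted) ** 2)
ss_tot = np.sum((ssr_measured - ssr_measured.mean()) ** 2)
r_squared = 1.0 - ss_res / ss_tot
```

Once fitted, the same slope and intercept can be applied to every pixel of an index image to obtain a continuous residue cover map.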

2.5. Tillage Indices

The spectral specifications of Sentinel-2A and Landsat-8 images are shown in Table 1. As described in Section 3.2, we only used six bands from each satellite to map the residue.

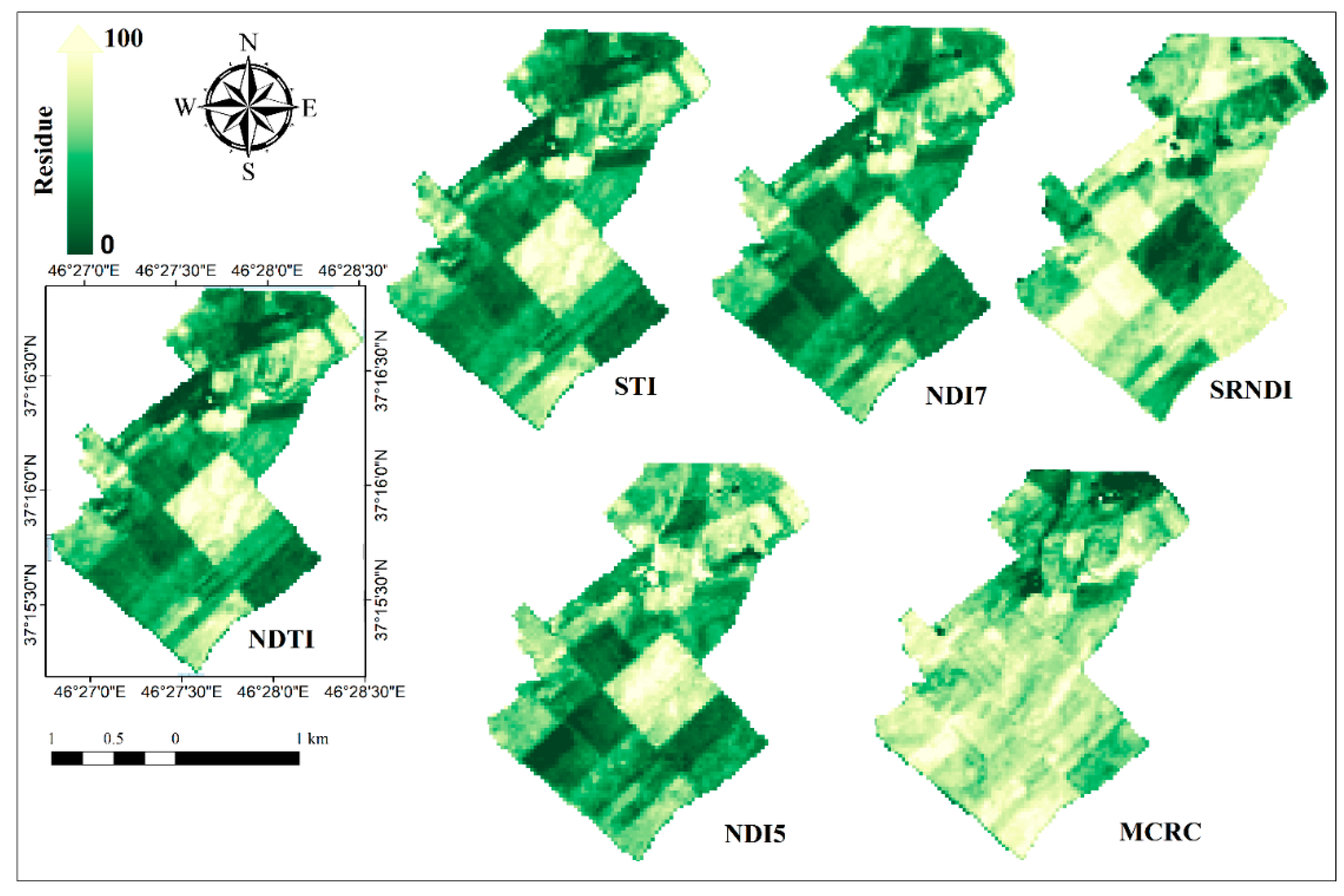

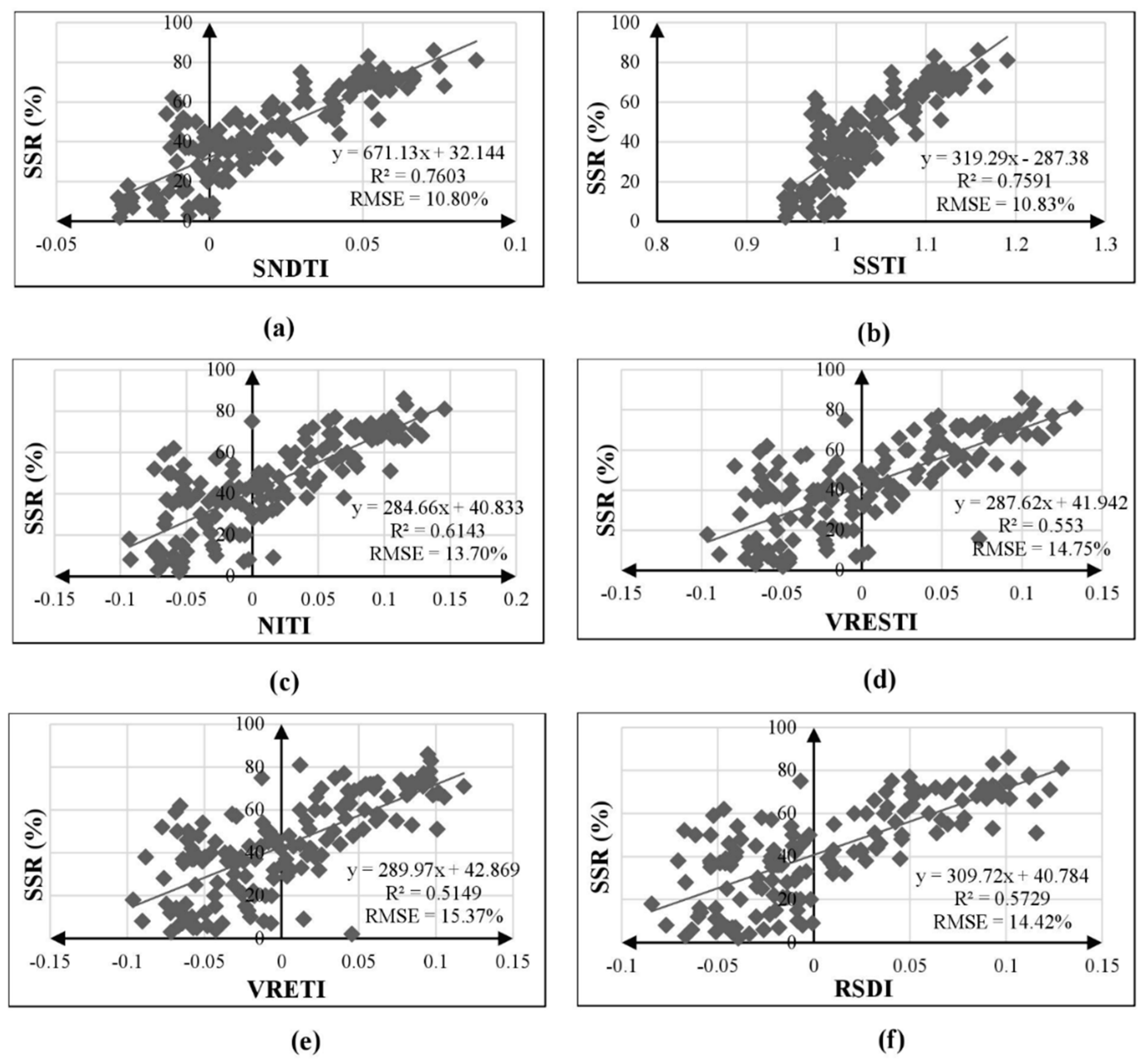

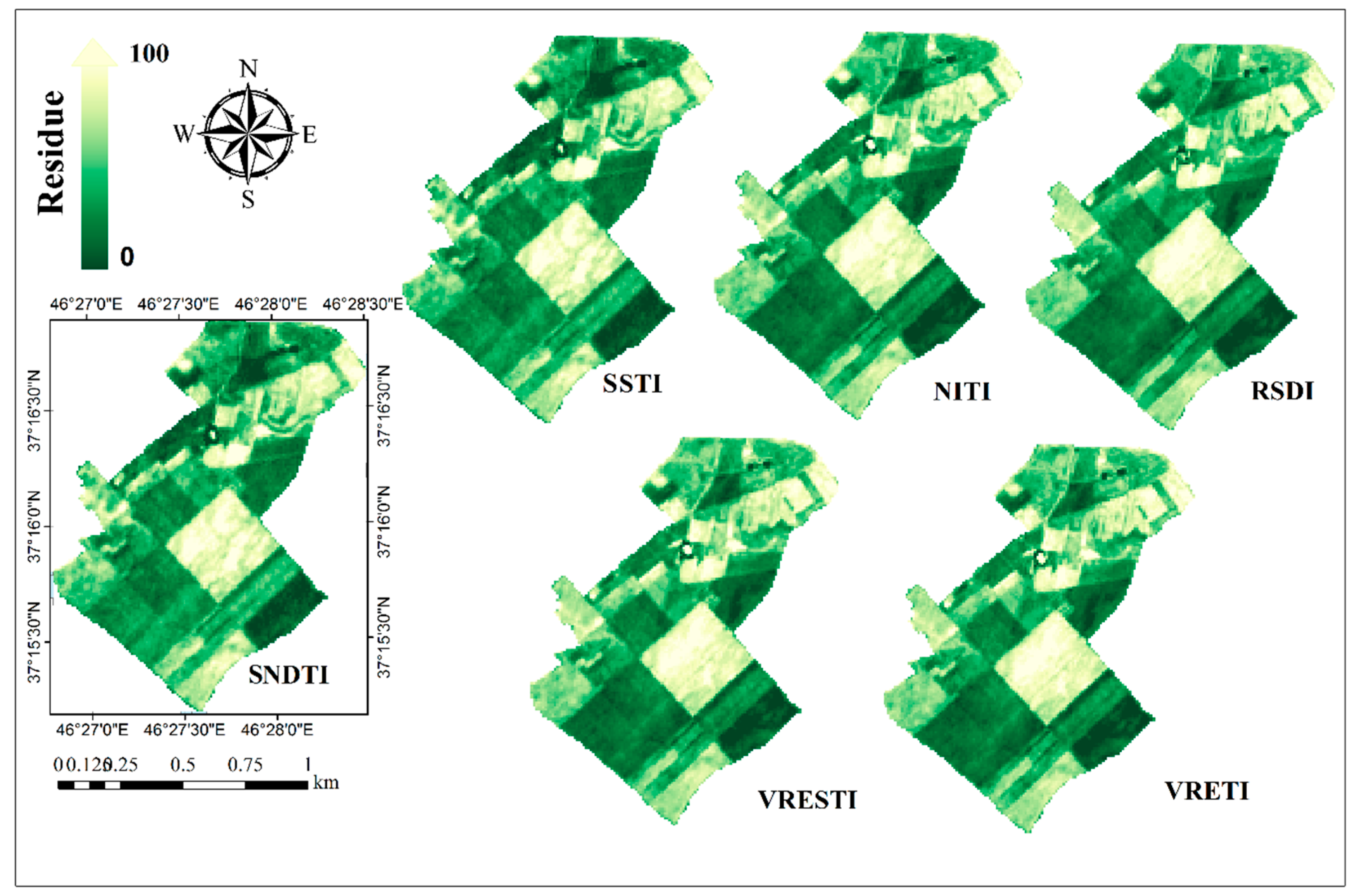

To calculate the relationship between spectral properties and residue cover, two categories of indices were considered for Landsat-8 (Table 2) based on the results of the literature review, and comparable indices were developed for Sentinel-2A (Table 3).

Due to the wavelength similarities of Landsat-8 bands 6 and 7 and Sentinel-2A bands 11 and 12, we created analogous indices for Sentinel-2A images, named Sentinel NDTI (SNDTI) and Sentinel STI (SSTI).
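As an illustration of how such indices are computed, the sketch below uses the commonly cited definitions of the two indices, NDTI = (SWIR1 − SWIR2) / (SWIR1 + SWIR2) and STI = SWIR1 / SWIR2, where SWIR1 and SWIR2 stand for Landsat-8 bands 6 and 7 or Sentinel-2A bands 11 and 12. Tables 2 and 3 are not reproduced here, so these formulas should be read as an assumption about their content; the function names and reflectance values are hypothetical.

```python
import numpy as np


def ndti(swir1, swir2):
    """Normalized difference tillage index; NaN where the denominator is zero."""
    swir1 = np.asarray(swir1, dtype=float)
    swir2 = np.asarray(swir2, dtype=float)
    denominator = swir1 + swir2
    result = np.full(denominator.shape, np.nan)
    np.divide(swir1 - swir2, denominator, out=result, where=denominator != 0)
    return result


def sti(swir1, swir2):
    """Simple tillage index (band ratio); NaN where the second band is zero."""
    swir1 = np.asarray(swir1, dtype=float)
    swir2 = np.asarray(swir2, dtype=float)
    result = np.full(swir2.shape, np.nan)
    np.divide(swir1, swir2, out=result, where=swir2 != 0)
    return result


# Hypothetical surface reflectance values for two pixels.
band_swir1 = np.array([0.30, 0.25])  # Landsat-8 band 6 or Sentinel-2A band 11
band_swir2 = np.array([0.20, 0.25])  # Landsat-8 band 7 or Sentinel-2A band 12

ndti_values = ndti(band_swir1, band_swir2)
sti_values = sti(band_swir1, band_swir2)
```

The same two functions serve both sensors; only the input bands change, which is the reasoning behind defining SNDTI and SSTI by analogy.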

2.6. Object-Based Image Analysis

2.6.1. Image Segmentation

Image segmentation is typically the first step in an OBIA workflow. It clusters relatively homogenous pixels into objects [42,43,44]. Multi-resolution segmentation is the most common image segmentation process in OBIA and it serves the objective to derive “relatively homogeneous regions” by a global optimization heuristic [42]. It is a bottom-up region-merging algorithm that merges adjacent pixels with similar specifications to create initial image objects. It then merges similar objects together to produce larger objects. This is carried out as long as the internal heterogeneity (color, texture, and shape) of produced objects does not exceed user-defined thresholds [37,42,45,46,47,48].

These multi-resolution segmentation processes usually apply the three parameters of scale, color, and shape. The scale parameter value is not equal to the sizes of the resulting objects, but strongly influences their sizes. A high scale parameter value allows for a high heterogeneity within image objects and tends to result in larger segments. Conversely, a low scale parameter value results in a high homogeneity within image objects and in smaller, more numerous image objects [28,44,49]. Drǎguţ et al. [47] developed methods for estimating appropriate scale parameters prior to the segmentation step. Color and shape are additional parameters in multi-resolution segmentation: color influences the spectral and textural homogeneity of the image objects, while shape influences the resulting objects in terms of their smoothness and compactness. Based on the method of Drǎguţ et al. and initial trials, we used color and shape parameters of 0.4 and 0.5, respectively, for Landsat-8 and Sentinel-2A images. The segmentation processes are illustrated in Figure 3.

2.6.2. Basic Fuzzy Concepts Used in OBIA

In mathematics, two general logics are distinguished, namely, binary and fuzzy. While binary is a two-valued logic that considers only the values $\{0, 1\}$ for each object, fuzzy is a multi-valued logic that considers the interval $[0, 1]$ for each member. The fuzzy set theory was introduced by Zadeh [50] to investigate uncertainty using linguistic terms instead of common numerical variables. As discussed in the literature review, most OBIA studies use fuzzy rule-based classifications and employ either membership functions or a nearest neighbor classifier (for differences and advantages of both methods, see [51]).

In a fuzzy object-based image analysis procedure, the characteristics of each image object can be qualified by a fuzzy feature space and described by a membership function. A fuzzy rule is a set of instructions that establishes a correspondence between the features that describe each object and the class.

Membership Function Method

Membership functions assign fuzzy values to a predefined feature space for each object class using a particular membership function (larger than, smaller than, singleton, Gaussian, about range, and full range) [29,36,46]. Features with high membership values (depending on the test conditions) are selected for classification [33,52,53]. The classification operator is another important factor that affects classification accuracy since it determines how several rules are combined. The most frequently used operators are simple ‘AND’ and ‘OR’ functions, but more complex operators can also be defined.

In the present study, the membership function method is applied to both Landsat-8 and Sentinel-2A images through the following steps: a) determining the number of classes, b) selecting feature space(s), c) calculating fuzzy membership values for each object class, and d) applying the classification algorithm.
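The membership function steps can be sketched as follows. This is a simplified illustration under stated assumptions: the linear "larger than" ramp, the use of minimum and maximum as the ‘AND’ and ‘OR’ operators (the conventional Zadeh operators), and all thresholds and feature values are hypothetical and are not taken from the rule set used in this study.

```python
import numpy as np


def larger_than(values, low, high):
    """Linear 'larger than' membership: 0 at or below low, 1 at or above high."""
    values = np.asarray(values, dtype=float)
    return np.clip((values - low) / (high - low), 0.0, 1.0)


def smaller_than(values, low, high):
    """Mirror image of larger_than: 1 at or below low, 0 at or above high."""
    return 1.0 - larger_than(values, low, high)


def fuzzy_and(*memberships):
    """Fuzzy 'AND' as the element-wise minimum of the membership values."""
    return np.min(np.stack(memberships), axis=0)


def fuzzy_or(*memberships):
    """Fuzzy 'OR' as the element-wise maximum of the membership values."""
    return np.max(np.stack(memberships), axis=0)


# Hypothetical feature values for three image objects.
object_ndti = np.array([0.02, 0.10, 0.16])
object_brightness = np.array([130.0, 110.0, 150.0])

# Hypothetical rule for a "high residue" class: NDTI is high AND brightness is high.
mu_ndti = larger_than(object_ndti, low=0.05, high=0.15)
mu_brightness = larger_than(object_brightness, low=100.0, high=140.0)
mu_high_residue_and = fuzzy_and(mu_ndti, mu_brightness)
mu_high_residue_or = fuzzy_or(mu_ndti, mu_brightness)
```

In a full classification, each object would receive such a combined membership value for every class and be assigned to the class with the highest value.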

Basic Concepts of NN

Classifiers are often grouped into the following: (i) parametric classifiers, which require a learning/training phase for the classifier parameters and are also known as learning-based approaches, and (ii) nonparametric classifiers, which require no learning/training phase for the determination of classifier parameters [54]. The classification decision in nonparametric classifiers is based directly on the data. In object-based image analysis, NN is a popular nonparametric classifier that relies on estimating the distance to the nearest (most similar) image objects in the database. There are several advantages to using nonparametric classifiers compared to parametric methods, in particular, that a) a learning/training phase is not required, and b) nonparametric classifiers can easily handle a large number of classes. Parametric classifiers require training of parameters, which may take several days for large dynamic databases, while for nonparametric classifiers changing classes/training sets is straightforward [55]. In addition, for validating the results, NN classifiers provide almost unlimited capabilities for a classification system, which can be extended to other areas by selecting training samples [54]. The NN method in this study consists of the following three main steps [56]: a) determining the feature space, b) training the system with line transect field measurements, and c) applying the classification algorithm.
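The three NN steps can be illustrated with a plain nearest neighbor rule in feature space. This sketch assumes Euclidean distance and features already scaled to a comparable range; OBIA software may standardize distances differently and derive fuzzy membership from them. The function name, feature values, and sample labels are hypothetical.

```python
import numpy as np


def nn_classify(train_features, train_labels, object_features):
    """Assign each image object the label of its nearest training sample."""
    train = np.asarray(train_features, dtype=float)
    objects = np.asarray(object_features, dtype=float)
    # Pairwise Euclidean distances, shape (n_objects, n_training_samples).
    distances = np.linalg.norm(objects[:, None, :] - train[None, :, :], axis=2)
    nearest = np.argmin(distances, axis=1)
    return [train_labels[index] for index in nearest]


# Steps a) and b): hypothetical two-dimensional feature space (values scaled
# to [0, 1]) with one training sample per class from field measurements.
training_features = [[0.1, 0.2], [0.5, 0.5], [0.9, 0.8]]
training_labels = ["SSR < 30%", "SSR 30%-60%", "SSR > 60%"]

# Step c): classify three unlabeled image objects.
unlabeled_objects = [[0.15, 0.25], [0.80, 0.90], [0.45, 0.60]]
predicted_classes = nn_classify(training_features, training_labels, unlabeled_objects)
```

Because no parameters are fitted, adding a class or exchanging training samples only means editing the two training lists.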

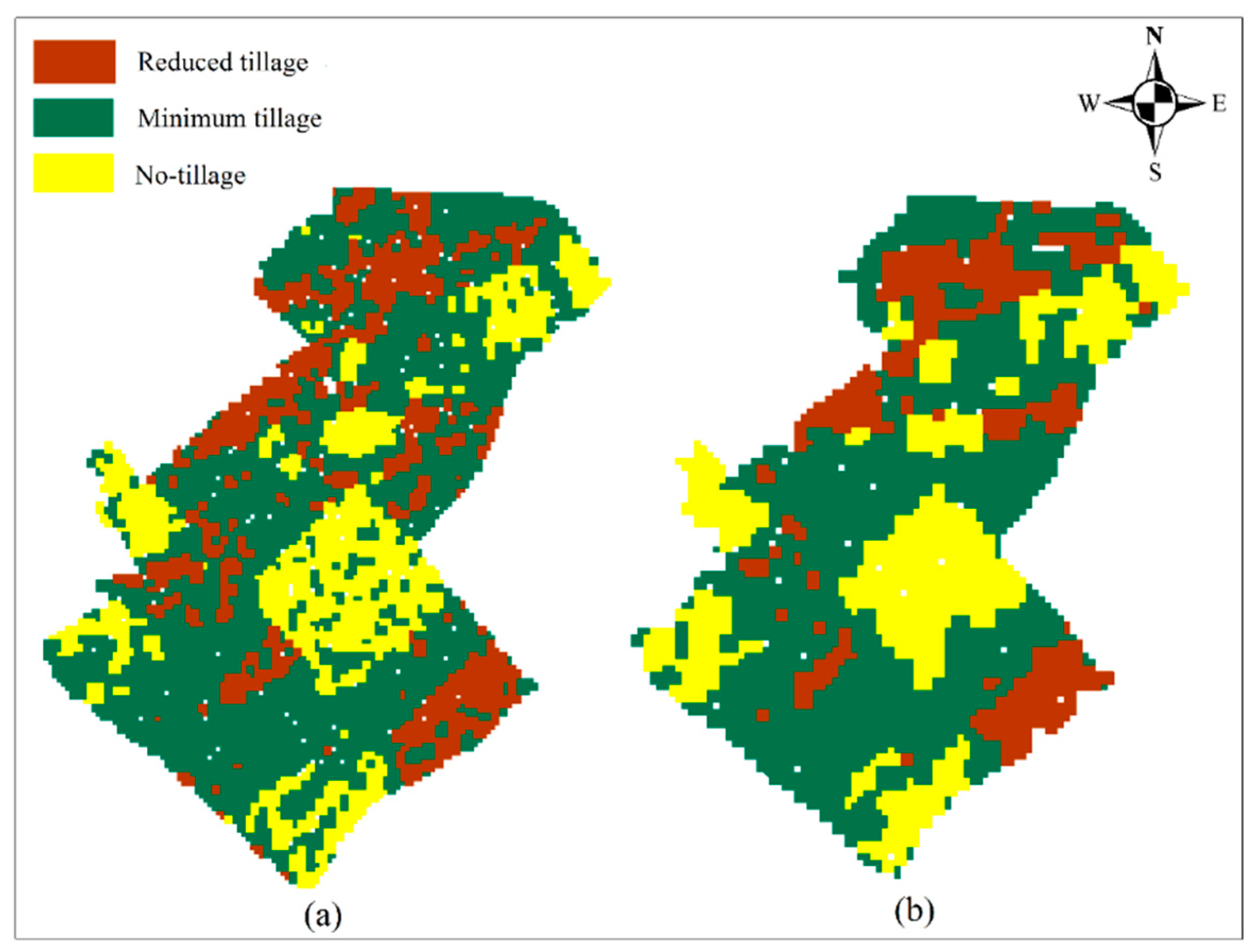

2.7. Classes and Features Space

For the classification of SSR and tillage intensity, three classes were considered in this study: SSR < 30% (reduced tillage), SSR 30%–60% (minimum tillage), and SSR > 60% (no tillage). Three different groups of features, namely, mean features, tillage features, and GLCM textural features, were applied to both methods (membership functions and NN). The features were selected based on the results of the literature review.

2.7.1. Mean Features

In this study, band ratio, brightness, and maximum difference (Max. Diff) were considered as the mean features for both Sentinel-2A and Landsat-8 data. The band ratio included bands 2, 3, 4, 5, 6, and 7 of Landsat-8 data with a resolution of 30 m and bands 5, 6, 7, 8a, 11, and 12 of Sentinel-2A data with a resolution of 20 m. The brightness of an object represents the quality of lightness or darkness within each image object [41,57,58]. Due to the contrast between soil and residue, lightness or darkness may differ from one object to another. Accordingly, increasing the SSR within an object increases the brightness of the object, while an increasing amount of soil within an object decreases the brightness of the object.

$$B = \frac{1}{n}\sum_{i=1}^{n} \bar{c}_i$$

where $B$ is the brightness of an image object, $\sum_{i=1}^{n} \bar{c}_i$ is the sum of the mean object brightness in the visible bands, and $n$ is the number of corresponding spectral bands.

In OBIA, Max. Diff (MD) for each image object is defined as the absolute difference between the minimum object mean and the maximum object mean in the visible bands divided by the mean object brightness [37].
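The two mean features defined in this section can be computed directly from the per-band mean values of an image object. The sketch below follows the verbal definitions of brightness and Max. Diff given in the text; the function names and the three band means are hypothetical.

```python
import numpy as np


def object_brightness(band_means):
    """Brightness: average of the object's mean values over the selected bands."""
    return float(np.mean(np.asarray(band_means, dtype=float)))


def object_max_diff(band_means):
    """Max. Diff: |largest band mean - smallest band mean| divided by brightness."""
    means = np.asarray(band_means, dtype=float)
    return float(abs(means.max() - means.min()) / means.mean())


# Hypothetical mean values of one image object in three bands.
example_band_means = [60.0, 80.0, 100.0]
example_brightness = object_brightness(example_band_means)
example_max_diff = object_max_diff(example_band_means)
```

For these values the brightness is 80 and the Max. Diff is 40 / 80 = 0.5.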

2.7.2. Tillage Features

In the literature review, we discussed different tillage spectral indices which aim to distinguish the SSR from soil. These indices might vary depending on the type of spectral image (multispectral and hyper-spectral) and the characteristics of the sensor. We used the indices described in Section 3.2, Table 2, and Table 3.

2.7.3. Textural Features

Textural features based on a gray level co-occurrence matrix (GLCM) were first introduced by Haralick et al. [59]. The initial 23 textural features were decreased to eight major features (Table 4) in [60]. Textural features are calculated based on the distance and angle between pairs of pixels that are located in a window. The accurate extraction of textural features in a pixel-based image analysis method depends on the size of the window, while in an object-based approach, the results of segmentation create objects with different shapes and sizes. These objects, which are considered as windows, can illustrate the real shape and size of the land cover objects [61].

The main assumption in the computation of object-based GLCM textural features concerns the probability that a pair of pixels with particular brightness values occurs together. The GLCM is a square matrix in which each element represents the number of pixel pairs with a given combination of gray levels. The GLCM texture considers the relationship between two pixels at a time. These two pixels are called the reference and neighbor pixels and they are at a distance d and an angle θ to each other [62]. The directions of analysis for GLCM can be horizontal (0°), vertical (90°), or diagonal (45° and 135°).
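The construction of a GLCM for one direction can be sketched with a small, explicitly looped example. This is an illustrative implementation under stated assumptions: it counts ordered (reference, neighbor) pairs without making the matrix symmetric, it works on a rectangular array rather than on the irregular pixel set of an image object, and the 4 × 4 test image with four gray levels is hypothetical.

```python
import numpy as np

# (row, column) step from the reference pixel to its neighbor for each angle,
# with rows increasing downward as in image coordinates.
OFFSETS = {0: (0, 1), 45: (-1, 1), 90: (-1, 0), 135: (-1, -1)}


def glcm(image, angle, levels, distance=1):
    """Count co-occurring gray-level pairs at the given angle and distance."""
    img = np.asarray(image, dtype=int)
    step_row, step_col = OFFSETS[angle]
    d_row, d_col = step_row * distance, step_col * distance
    counts = np.zeros((levels, levels), dtype=int)
    n_rows, n_cols = img.shape
    for row in range(n_rows):
        for col in range(n_cols):
            n_row, n_col = row + d_row, col + d_col
            # Skip neighbors that fall outside the image.
            if 0 <= n_row < n_rows and 0 <= n_col < n_cols:
                counts[img[row, col], img[n_row, n_col]] += 1
    return counts


# Hypothetical 4 x 4 image with gray levels 0..3.
test_image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 2, 2, 2],
    [2, 2, 3, 3],
]

glcm_horizontal = glcm(test_image, angle=0, levels=4)
# Normalizing the counts gives the co-occurrence probabilities from which
# textural features are derived.
glcm_probabilities = glcm_horizontal / glcm_horizontal.sum()
```

Row i and column j of `glcm_horizontal` hold the number of times a pixel with gray level i has a right-hand neighbor with gray level j.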

2.8. Accuracy Assessment

2.8.1. Overall Accuracy

The estimation of the error matrix is the most common method for estimating the accuracy of the classification results. To analyze the classification quality, the error matrix compares the classification results with ground truth data. The overall accuracy is the key factor to evaluate the accuracy of the classified map. It can be calculated as the area of the correctly classified sample objects divided by the total area of sample objects (Equation (3)) [27].

$$OA = \frac{\sum_{i=1}^{N} p_{ii}}{\sum_{i=1}^{N}\sum_{j=1}^{N} p_{ij}} \qquad (3)$$

where $i$ is the row number, $j$ is the column number, $p_{ij}$ is the normalized value in the cell, and $N$ is the number of rows or columns.

2.8.2. User Accuracy

The user accuracy is the accuracy from the point of view of a map user. It demonstrates how the class on the map will actually be present on the ground. The user accuracy is calculated from the number of correctly identified objects in a given map class divided by the number of claimed objects to be in that map class (Equation (4)) [27].

2.8.3. Producer Accuracy

The producer accuracy is the accuracy from the point of view of a map maker. It demonstrates how the real objects on the ground are correctly shown on the classified map. It is also calculated from the number of correctly identified objects in the reference plots of a given class divided by the number actually in that reference class (Equation (5)) [27].

2.8.4. Kappa Statistics

The kappa coefficient is a statistical measure of the accuracy of an image classification process. It is robust because it discounts the agreement that occurs by chance in the classification. The range of the kappa coefficient is from −1 to 1. A value of 1 indicates that the raters are in complete agreement [63]. A value of 0 indicates no agreement between the raters beyond what is expected by chance. A negative kappa coefficient indicates agreement worse than that occurring by chance. The kappa coefficient is expressed as:

$$\kappa = \frac{p_o - p_e}{1 - p_e}$$

where $p_o$ is the probability of relative observed agreement between raters and $p_e$ is the probability of a chance agreement.
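The four accuracy measures of this section can all be derived from one error matrix, as the following sketch shows. It assumes that rows hold the map (classified) classes and columns hold the reference classes, and that cells contain object counts rather than areas; the function name and the 3 × 3 matrix are hypothetical and do not report results of this study.

```python
import numpy as np


def accuracy_metrics(error_matrix):
    """Overall, user, and producer accuracy plus kappa from an error matrix.

    Assumed layout: rows = map (classified) classes, columns = reference classes.
    """
    matrix = np.asarray(error_matrix, dtype=float)
    total = matrix.sum()
    correct = np.diag(matrix)
    row_totals = matrix.sum(axis=1)  # objects claimed to be in each map class
    col_totals = matrix.sum(axis=0)  # objects actually in each reference class

    overall = correct.sum() / total
    user = correct / row_totals
    producer = correct / col_totals

    observed = overall                                   # observed agreement
    chance = np.sum(row_totals * col_totals) / total**2  # chance agreement
    kappa = (observed - chance) / (1.0 - chance)
    return overall, user, producer, kappa


# Hypothetical error matrix for the three SSR classes.
example_matrix = [
    [20, 5, 0],
    [5, 30, 5],
    [0, 5, 30],
]
overall_accuracy, user_accuracy, producer_accuracy, kappa = accuracy_metrics(example_matrix)
```

For this matrix the overall accuracy is 0.8, the chance agreement is 0.345, and kappa is therefore about 0.69, which shows how kappa falls below the overall accuracy once chance agreement is discounted.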

5. Conclusions

Due to the water and soil erosion problems caused by agricultural activities, the use of conservation tillage methods has increasingly been recommended in recent years as an appropriate alternative to intensive tillage methods. Conservation tillage methods typically leave the previous crop residues, or parts of them, on the soil surface. It is well demonstrated that this practice can significantly reduce water consumption, especially in arid and semi-arid regions.

Intensive tillage methods that burn or bury previous crop residues are at odds with sustainable agricultural approaches. Today, conservation tillage methods are widely used, and a wide range of resources (machines, pesticides, and specialists) is available for them. Thus, knowing the percentage of residue on the soil surface in a large agricultural area is very informative for agricultural organizations in planning support programs and providing the necessary resources. From a precision farming point of view, satellite images can be used for fast and accurate SSR estimation in order to distinguish conventional and conservation tillage practices on the fields with lower time and labor costs. The aim of this study was to provide a fast, inexpensive, and precise solution to map and characterize the residue left on the soil surface after tillage and planting practices. We found that satellite remote sensing data can be used to distinguish areas under conservation tillage from those under intensive tillage methods. To this end, we also designed a novel and successful fuzzy object-based approach to estimate SSR and map tillage intensity and then compared it with per-pixel methods. Results indicated that, in general, the remote sensing-based methods can provide appropriate information on the applied tillage methods to technical experts, farmers, and decision makers to improve conservation management efficiency in a region, although the results differ slightly between the methods used.

Overall, among the applied approaches (pixel-based and object-based), OBIA is more applicable for decision makers than pixel-based methods (continuous residue cover mapping) because it classifies the SSR into individual classes. When comparing the two OBIA classification strategies, the membership function classifier yielded the highest accuracies for residue mapping. In terms of the comparison of the two satellites used, Sentinel-2A data yielded better SSR mapping results than Landsat-8 data for both pixel-based (tillage indices) and object-based (membership functions and NN) approaches. This is attributed to the better spatial resolution of the Sentinel-2A images, which resolve more detail and thereby increase accuracy.