Abstract

The Advanced Satellite with New system ARchitecture for Observation-2 (ASNARO-2), which carries the X-band Synthetic Aperture Radar (XSAR), was launched on 17 January 2018 and is expected to be used to supplement data provided by larger satellites. Land cover classification is one of the most common applications of remote sensing, and the results provide a reliable resource for agricultural field management and estimating potential harvests. This paper describes the results of the first experiments in which ASNARO-2 XSAR data were applied for agricultural crop classification. In previous studies, Sentinel-1 C-SAR data have been widely utilized to identify crop types. Comparisons between ASNARO-2 XSAR and Sentinel-1 C-SAR using data obtained in June and August 2018 were conducted to identify five crop types (beans, beetroot, maize, potato, and winter wheat), and the combination of these data was also tested. To assess the potential for accurate crop classification, some radar vegetation indices were calculated from the backscattering coefficients for two dates. In addition, the potential of each type of SAR data was evaluated using four popular supervised learning models: Support vector machine (SVM), random forest (RF), multilayer feedforward neural network (FNN), and kernel-based extreme learning machine (KELM). The combination of ASNARO-2 XSAR and Sentinel-1 C-SAR data was effective, and overall classification accuracies of 85.4 ± 1.8% were achieved using SVM.

1. Introduction

Agricultural practices determine the level of food production, and increases in agricultural output are essential for global political and social stability and equity [1]. Cultivated land has been developed and managed through a range of social actions and policies to meet this need [2]. Cropland mapping is necessary for estimating the amount and type of crops harvested and supporting the management of agricultural fields. A system of individual income support for farmers has been adopted in Japan, and some local governments use manual surveys to document field properties such as crop type and location [3]. Recently, more efficient methods of cropland mapping have become necessary to reduce costs, and as a result the application of remote sensing techniques based on satellite data has received considerable attention.

Tokachi Plain is one of Japan’s foremost food production regions, and beans, beetroot, maize, potatoes, and winter wheat are its predominant crops. A number of studies have shown that optical remote sensing data can be used to produce maps with high spatial and spectral resolutions [4] and are effective for gathering various types of biomass information, such as leaf chlorophyll content [5] and leaf area index (LAI) [6]. Indeed, Landsat series data have proven effective for identifying crop types with a high level of accuracy [7,8], and red-edge and shortwave infrared reflectance data are useful for improving crop monitoring over large areas [8,9,10]. However, the quality of optical remote sensing data depends on atmospheric influences and weather conditions.

Substantial information about soil and vegetation parameters has been obtained through microwave remote sensing, and this type of technique is increasingly being used to manage land and water resources for agricultural applications [11,12,13,14]. Synthetic aperture radar (SAR) systems offer a large amount of information about soil moisture, crop height, and crop cover rate, which are useful for monitoring plant phenology [12,15]. Furthermore, since SARs are not subject to atmospheric influences or weather conditions, they can be used for multi-temporal analysis in monsoon areas. Previous studies have shown that the C-band is the most effective frequency for agricultural applications because of its sensitivity to structural properties, crop growth stages, and soil moisture conditions [16,17]. More opportunities were provided to obtain C-band SAR data with the recent launches of Sentinel-1A and Sentinel-1B by the European Space Agency (ESA) in 2014 and 2016, respectively. These data, which are distributed free of charge, have an average revisit time of two days between 0 and 45 degrees latitude [18]. As a result, Sentinel-1 data have been widely used for land cover classification [19,20,21], monitoring of phenology [22], and biomass or production estimation. The interferometric wide-swath (IW) mode that offers VV (vertical transmit and receive) and VH (vertical transmit, horizontal receive) polarization data is normally used as the default acquisition mode [23,24].

In addition to C-band SARs, the high sensitivity of the sigma naught of X-band sensors has been confirmed, and the potential of the X-band for identifying and forecasting crop growth using indices such as LAI has been widely demonstrated [25,26]. For example, TerraSAR-X/TanDEM-X (Germany), SEOSAR/Paz (Spain), COSMO-SkyMed (Italy), and RISAT-2 (India) have been launched or planned. The backscattering coefficient of agricultural fields is expressed as a function of the geometry and dielectric properties of the target and the amount of biomass, and the use of multi-temporal SAR data within a vegetation period is effective for clarifying the change in scattering pattern with crop growth [27]. The Advanced Satellite with New system ARchitecture for Observation-2 (ASNARO-2), which carries an X-band SAR (XSAR), was launched on 17 January 2018 by Nippon Electric Company (NEC), Japan [28], and the data it has provided have been made available and distributed by Japan Space Imaging (http://www.spaceimaging.co.jp/en/). In addition, the Vietnam Academy of Science and Technology has finalized a deal to purchase a radar satellite that possesses the same specifications as ASNARO-2 from Japan for climate and natural disaster observations. ASNARO-2, a minisatellite that features a Next Generation Star bus and can offer very-high-resolution imagery in Spotlight mode (1.0 m resolution), is expected to be used to supplement the data provided by larger satellites [29]. We therefore evaluated the potential of ASNARO-2 XSAR Spotlight-mode data, on their own and in combination with Sentinel-1 C-SAR data, for generating crop maps. To improve classification accuracy, we also considered some radar indices (RIs) calculated from the backscattering coefficients for two different dates, namely indices based on differences (Ds), simple ratios (SRs), and normalized differences (NDs) [30].

The use of machine learning algorithms in classification is essential for generating high-quality crop maps from remote sensing data. A support vector machine (SVM) with a Gaussian kernel function is one of the most effective classification approaches [31], and some previous studies have demonstrated its strong performance in the identification of soil and crop types [32,33]. The random forests (RF) approach is another algorithm for classification and regression using remote sensing data [34,35], and it exhibits similar performance to SVMs in terms of classification accuracy and training time [36]. In addition, some studies have demonstrated the advantage of an extreme learning machine (ELM) with a Gaussian radial basis function (RBF) kernel [37,38], and a multilayer feedforward neural network (FNN) has also been applied to remote sensing data for land cover classification [39]. We compared the performance of these widely used algorithms. Although grid-search strategies have been used to optimize the hyperparameters of these algorithms [40], these could be poor choices for configuring algorithms for new datasets. We therefore used Bayesian optimization in this study, since it allows sequential optimization of hyperparameters when the objective is a noisy, expensive black-box function [41].

Within this framework, the main objective of the present study was to evaluate the potential of ASNARO-2 data for crop-type classification using machine learning algorithms.

2. Materials and Methods

2.1. Study Area

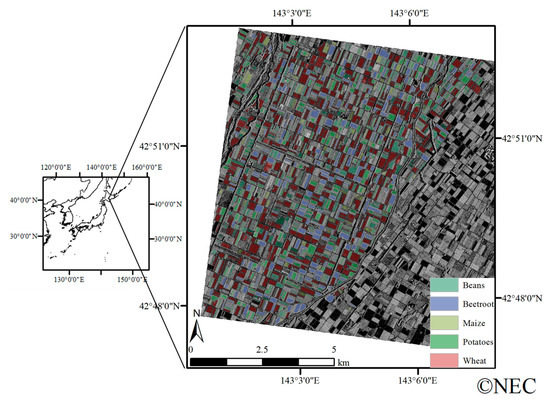

The study area is the farming area located in the town of Memuro, Hokkaido, Japan (143°00′30″ to 143°08′00″E, 42°47′58″ to 42°53′06″N; Figure 1), which is situated on the western Tokachi Plain. The climate is characterized as a continental humid climate, with warm summers, cold winters, an average annual temperature of 6 °C, and an annual precipitation of 920 mm.

Figure 1.

Cultivated crops based on manual surveys conducted by Tokachi Nosai and the Advanced Satellite with New system ARchitecture for Observation-2 (ASNARO-2) X-band Synthetic Aperture Radar (XSAR) horizontal transmit and receive (HH) polarization data (looking direction: Right) acquired during descending passes on 28 June 2018.

Although there are six main crop types on the western Tokachi Plain, one of these—grass—is poorly represented in this study area. The remaining five (beans, beetroot, maize, potato, and winter wheat) were therefore featured in this study. Beetroot and potatoes are transplanted between late April and early May, while beans and maize are sown in mid-May (Figure 2). Winter wheat is the most widely cultivated crop in this study area and is sown in the previous year. The harvesting periods are from late September to early November for beans, November for beetroots, late August to September for potatoes, and from late July to early August for winter wheat.

Figure 2.

Crop calendar and growth stages in the study area.

2.2. Reference Data

Field location and attribute data, including crop type and area, were obtained based on manual surveys conducted by Tokachi Nosai (Obihiro, Hokkaido) and recorded in a polygon shape file. A total of 1805 fields (367 bean fields, 310 beetroot fields, 148 maize fields, 451 potato fields, and 529 winter wheat fields) covered the area in 2018. Field size was 0.27–9.03 ha (median 1.70 ha) for beans, 1.56–8.60 ha (median 2.15 ha) for beetroot, 0.46–7.08 ha (median 1.46 ha) for maize, 0.17–8.57 ha (median 1.53 ha) for potatoes, and 0.19–14.51 ha (median 2.40 ha) for wheat.

2.3. Satellite Data

ASNARO-2 has a sun-synchronous (dawn–dusk) near-circular orbit at an altitude of 504 km and provides X-band SAR data with a 1-day cycle over Japan (in emergencies). The local time of the descending node is 06:00 a.m., to ensure sufficient battery charging time, and X-band SAR data can be obtained via three imaging modes: Spotlight, Stripmap, and ScanSAR. We used the Spotlight mode in this study and obtained the data at a spatial resolution of 1.0 m along a 10 km swath. We used the HH (horizontal transmit and receive) polarization data acquired during descending passes on 28 June and 9 August 2018 (Table 1).

Table 1.

Characteristics of the satellite data.

Sentinel-1 follows a sun-synchronous, near-polar, circular orbit at a height of 693 km, with a 12-day repeat cycle. The satellite is equipped with a C-band imager (C-SAR) at 5.405 GHz with an incidence angle between 20° and 45°. There are four imaging modes (Stripmap [SM], Interferometric Wide swath [IW], Extra Wide swath [EW], and Wave [WV]), but we used IW mode, which offers VV and VH polarization data and is commonly used as the default acquisition mode. We used data acquired during ascending passes on 21 June and 8 August 2018 (Table 1). Data were downloaded from the ESA Data Hub (https://scihub.copernicus.eu/dhus/) as Ground Range-detected (GRD) products, which are focused, multi-looked, calibrated, and projected to ground range prior to download. Sigma naught and gamma naught are trigonometric transformations of radar brightness on a logarithmic scale, and sigma naught values are used for monitoring phenology and other vegetation-related parameters [25,42]. Thus, our sigma naught values were calculated from XSAR and C-SAR data. Data were orthorectified using the 10 m mesh DEM produced by the Geospatial Information Authority of Japan (GSI) and the Earth Gravitational Model 2008 (EGM2008).
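The relationship between radar brightness, sigma naught, and gamma naught mentioned above can be illustrated with a minimal sketch. It assumes the common convention in which sigma naught and gamma naught are obtained from radar brightness (beta naught, in linear power units) using the sine and tangent of the incidence angle, followed by conversion to decibels. The function names and the sample values are hypothetical and are not taken from the processing chain used in this study.

```python
import math


def beta0_to_sigma0_gamma0(beta0_linear, incidence_deg):
    """Convert radar brightness (linear power) to sigma naught and gamma naught.

    Assumed convention: sigma0 = beta0 * sin(theta), gamma0 = beta0 * tan(theta),
    where theta is the incidence angle.
    """
    theta = math.radians(incidence_deg)
    sigma0 = beta0_linear * math.sin(theta)
    gamma0 = beta0_linear * math.tan(theta)
    return sigma0, gamma0


def to_db(value_linear):
    """Express a linear backscatter value on a logarithmic (decibel) scale."""
    return 10.0 * math.log10(value_linear)


# Hypothetical radar brightness and incidence angle, for illustration only.
sigma0, gamma0 = beta0_to_sigma0_gamma0(0.2, 35.0)
print(to_db(sigma0), to_db(gamma0))
```

Under this convention, gamma naught equals sigma naught divided by the cosine of the incidence angle, so the two quantities differ by a constant offset in decibels for a fixed angle.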

Some radar indices (RI), such as the normalized radar backscatter soil moisture index (NBMI), which is expressed as the ND between two backscattering coefficients at different times, have been applied to estimating soil moisture [43], vegetation biomass [44], and the vegetation water content [45]. In addition to ND, Ds and SRs were also considered in this study, and these indices were calculated using sigma naught:

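$$D = \sigma^0_{\mathrm{June}} - \sigma^0_{\mathrm{August}}$$

$$SR = \frac{\sigma^0_{\mathrm{June}}}{\sigma^0_{\mathrm{August}}}$$

$$ND = \frac{\sigma^0_{\mathrm{June}} - \sigma^0_{\mathrm{August}}}{\sigma^0_{\mathrm{June}} + \sigma^0_{\mathrm{August}}}$$

where $\sigma^0_{\mathrm{June}}$ and $\sigma^0_{\mathrm{August}}$ are sigma naught values acquired in June and August, respectively. To compensate for spatial variability, to avoid problems related to uncertainty in georeferencing, and to remove spike noise, average SAR data values were calculated for each field and observation from the field polygons (shape file format) using QGIS software (version 2.18.27).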
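As a rough illustration of the three radar indices (difference, simple ratio, and normalized difference), the following sketch computes them for one field from its mean sigma naught on the two dates. The ordering of the operands (June first, August second), the function name, and the sample values are assumptions made for this example; whether the indices were computed from decibel or linear values is not stated in the text.

```python
def radar_indices(sigma0_june, sigma0_august):
    """Return the difference (D), simple ratio (SR) and normalized difference (ND).

    Assumes June is the first operand and August the second, and that the
    August value and the sum of the two values are non-zero.
    """
    difference = sigma0_june - sigma0_august
    simple_ratio = sigma0_june / sigma0_august
    normalized_difference = difference / (sigma0_june + sigma0_august)
    return {"D": difference, "SR": simple_ratio, "ND": normalized_difference}


# Hypothetical field-averaged sigma naught values in dB, for illustration only.
indices = radar_indices(-12.0, -8.0)
print(indices)
```

Because each index is computed per field from values that have already been averaged, the same function can be applied independently to every polarization (HH, VH, VV).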

2.4. Classification Procedure

Jeffries–Matusita (J–M) distances [46], which range from 0 to 2.0 and indicate the degree to which two crop types are statistically separated, were calculated to compare the SAR data among crop types. In general, if the J–M value is greater than 1.9, then separation is good, and if it is between 1.7 and 1.9, then separation is fairly good. Subsequently, crop classifications were conducted using the following three datasets: Case 1, X-band HH polarization data from ASNARO-2 XSAR and C-band VH/VV polarization data from Sentinel-1B combined; Case 2, X-band HH polarization data from ASNARO-2 XSAR; and Case 3, C-band VH/VV polarization data from Sentinel-1B.
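The J–M distance can be sketched for a single feature (for example, field-averaged sigma naught from one dataset) as follows. This is a minimal example that assumes each crop class is approximately Gaussian, so that the Bhattacharyya distance has a closed form; the function name and the sample values are hypothetical, and the implementation used in this study may differ.

```python
import math
from statistics import mean, variance


def jeffries_matusita(sample_a, sample_b):
    """Univariate J-M distance between two classes, assuming Gaussian classes.

    JM = 2 * (1 - exp(-B)), where B is the Bhattacharyya distance.
    The result ranges from 0 (identical) to 2 (fully separable).
    """
    m1, m2 = mean(sample_a), mean(sample_b)
    v1, v2 = variance(sample_a), variance(sample_b)
    bhattacharyya = (
        (m1 - m2) ** 2 / (4.0 * (v1 + v2))
        + 0.5 * math.log((v1 + v2) / (2.0 * math.sqrt(v1 * v2)))
    )
    return 2.0 * (1.0 - math.exp(-bhattacharyya))


# Hypothetical sigma naught samples (dB) for two crop types.
crop_a = [-20.0, -19.5, -20.5, -20.2]
crop_b = [-5.0, -5.5, -4.5, -5.2]
print(jeffries_matusita(crop_a, crop_b))
```

For several features at once, the same definition applies with mean vectors and covariance matrices in place of the scalar means and variances.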

In order to handle overfitting and underfitting, a stratified random sampling approach was used to divide the data into three datasets (Table 2): A training set (50%), used to fit the models; a validation set (25%), used to estimate the prediction error associated with model selection; and a test set (25%), used to assess the generalization error in the final selected model [47]. This procedure was repeated 10 times (hereafter referred to as rounds 1–10) to ensure robust results.
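The repeated stratified split can be sketched as below. This is an illustrative implementation using only the Python standard library, not the procedure used in the study (which was carried out in R); the function name, the use of the round number as the random seed, and the rounding of class sizes are assumptions, so the resulting set sizes need not match Table 2 exactly.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.5, 0.25, 0.25), seed=0):
    """Split sample indices into training, validation and test sets per class."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    train, validation, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_validation = round(fractions[1] * len(indices))
        train += indices[:n_train]
        validation += indices[n_train:n_train + n_validation]
        test += indices[n_train + n_validation:]  # remainder goes to the test set
    return train, validation, test


# Field counts per crop as reported in Section 2.2.
counts = {"beans": 367, "beetroot": 310, "maize": 148, "potato": 451, "wheat": 529}
labels = [crop for crop, n in counts.items() for _ in range(n)]

# Ten independent rounds, each with a different seed.
rounds = [stratified_split(labels, seed=r) for r in range(1, 11)]
```

Splitting within each crop class keeps the class proportions roughly equal across the three sets, which matters here because maize has far fewer fields than winter wheat.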

Table 2.

Number of fields in each of the three datasets.

The four most widely applied machine learning algorithms, SVM, RF, FNN, and the kernel-based ELM (KELM), were used for crop classification based on the satellite data in R software (version 3.5.0) [48].

SVMs categorize data with maximum separation margins [49] and have been used with kernels to fit nonlinear models [50]. A Gaussian RBF kernel, which has two hyperparameters that control the flexibility of the classifier (the regularization parameter, C, and the kernel bandwidth, γ), has been used in numerous previous studies [33,51,52,53]. With respect to the boundaries of classes, higher C values lead to higher penalties for inseparable points, which sometimes results in over-fitting, and smaller C values lead to under-fitting. The γ value defines the reach of a single training example; small values indicate a ‘far’ reach, and large values indicate a ‘close’ reach.
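The effect of γ on the reach of a training example can be seen from the kernel itself. The sketch below assumes the widely used parameterization K(x, y) = exp(−γ‖x − y‖²); some libraries use a different symbol or scaling for the bandwidth, and the feature values are hypothetical.

```python
import math


def rbf_kernel(x, y, gamma):
    """Gaussian RBF kernel, assuming the form exp(-gamma * ||x - y||^2)."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * squared_distance)


# Two hypothetical fields described by two sigma naught features (dB).
field_a = (-12.0, -18.0)
field_b = (-10.0, -17.0)

# A small gamma keeps the similarity high ('far' reach);
# a large gamma makes it decay quickly ('close' reach).
for gamma in (0.01, 1.0):
    print(gamma, rbf_kernel(field_a, field_b, gamma))
```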

RF builds multiple trees based on random bootstrapped samples of the training data [54], and the nodes of each tree are split using the best split variable from a group of randomly selected variables [55]. The output is determined by a majority vote based on the trees. Two hyperparameters, the number of trees (ntree) and the number of variables used to split the nodes (mtry), are usually optimized, but the way in which the best split for a node is chosen can also increase the classification accuracy [56,57,58]. Three additional hyperparameters were therefore considered: The minimum number of unique cases in a terminal node (nodesize), the maximum depth of tree growth (nodedepth), and the number of random splits (nsplit).

FNN, which is a neural network trained with a back-propagation learning algorithm, is the most popular type of neural network. The first layer is called the input layer, the last, the output layer, and the layers in between are hidden layers [59]. Dropout was also used, since it has been shown to improve classification performance [60]. We optimized seven hyperparameters in this study: Number of hidden layers (num_layer), number of units (num_unit), dropout ratio (dropout) for each layer, learning rate (learning.rate), momentum (momentum), batch size (batch.size), and number of iterations of training data needed to train the model (num.round). An ELM is also expressed as a single hidden-layer FNN. However, a vast number of nonlinear nodes and the hidden layer bias are defined randomly in this algorithm, and the hyperparameters are the regularization coefficient (Cr) and the kernel parameter (Kp) when an RBF kernel is applied.

2.5. Accuracy Assessment

The crop maps that were generated were evaluated based on overall accuracy (OA), the kappa index, quantity disagreement (QD), allocation disagreement (AD), and the F1 score, which is calculated from producer accuracy (PA) and user accuracy (UA). QD and AD are much more useful for summarizing a cross-tabulation matrix than the kappa index of agreement, and their sum indicates the total disagreement [61].
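These measures can all be derived from a single confusion matrix, as in the sketch below. It assumes that rows hold the classified (map) categories and columns the reference categories, and that sample proportions are used directly as estimates; the function name and the example matrix are hypothetical and do not reproduce any result from this study.

```python
def accuracy_measures(matrix):
    """Compute OA, kappa, QD, AD and per-class F1 from a square confusion matrix.

    Assumes rows = classified categories and columns = reference categories.
    """
    k = len(matrix)
    n = sum(sum(row) for row in matrix)
    p = [[cell / n for cell in row] for row in matrix]
    row_total = [sum(p[g]) for g in range(k)]
    col_total = [sum(p[i][g] for i in range(k)) for g in range(k)]

    oa = sum(p[g][g] for g in range(k))
    expected = sum(row_total[g] * col_total[g] for g in range(k))
    kappa = (oa - expected) / (1.0 - expected)

    # Quantity and allocation disagreement; their sum equals 1 - OA.
    qd = 0.5 * sum(abs(row_total[g] - col_total[g]) for g in range(k))
    ad = 0.5 * sum(
        2.0 * min(row_total[g] - p[g][g], col_total[g] - p[g][g]) for g in range(k)
    )

    f1 = []
    for g in range(k):
        ua = p[g][g] / row_total[g] if row_total[g] > 0 else 0.0  # user accuracy
        pa = p[g][g] / col_total[g] if col_total[g] > 0 else 0.0  # producer accuracy
        f1.append(2.0 * ua * pa / (ua + pa) if ua + pa > 0 else 0.0)
    return {"OA": oa, "kappa": kappa, "QD": qd, "AD": ad, "F1": f1}


# Hypothetical three-class confusion matrix, for illustration only.
example = [[45, 4, 1],
           [3, 30, 2],
           [2, 6, 7]]
result = accuracy_measures(example)
print(result)
```

The identity QD + AD = 1 − OA provides a quick consistency check on reported values.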

McNemar’s test [62] was used to identify whether there were significant differences between the classification results; a χ2 value greater than 3.84 indicates a significant difference between two classification results at the 5% significance level (95% confidence level).
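A minimal sketch of McNemar's test for two classifiers evaluated on the same test fields is given below. It assumes the form of the statistic without continuity correction, which is based only on the fields that exactly one of the two classifiers labels correctly; the function name and the example labels are hypothetical.

```python
def mcnemar_chi_square(reference, predicted_a, predicted_b):
    """McNemar chi-square statistic (no continuity correction, 1 degree of freedom)."""
    only_a = 0  # fields classified correctly by A but not by B
    only_b = 0  # fields classified correctly by B but not by A
    for truth, a, b in zip(reference, predicted_a, predicted_b):
        if a == truth and b != truth:
            only_a += 1
        elif a != truth and b == truth:
            only_b += 1
    if only_a + only_b == 0:
        return 0.0
    return (only_a - only_b) ** 2 / (only_a + only_b)


# Hypothetical labels for 20 test fields ("w" = wheat, "p" = potato).
reference = ["w"] * 20
predicted_a = ["w"] * 18 + ["p"] * 2
predicted_b = ["p"] * 12 + ["w"] * 8

chi_square = mcnemar_chi_square(reference, predicted_a, predicted_b)
print(chi_square, chi_square > 3.84)
```

Fields on which both classifiers agree (both right or both wrong) do not contribute to the statistic, which is why two classifiers with similar overall accuracy can still differ significantly.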

3. Results and Discussion

3.1. Separability Assessments

ASNARO-2 XSAR data acquired on 28 June (X-HH-0628) had relatively high J–M distances (>1.7) for three combinations of crops: Beans–potatoes, beetroot–wheat, and potatoes–wheat (Figure 3). For four of the remaining combinations (beans–maize, beans–potatoes, beetroot–potatoes, and maize–potatoes), the J–M distances were <1.0.

Figure 3.

Jeffries–Matusita distances for sigma naught. In the legend, the first letter of each dataset name indicates the frequency of the microwaves, the next two letters indicate the polarization type, and the last four numbers indicate the observation date.

RIs were calculated using the backscattering coefficients obtained from the ASNARO-2 XSAR data from the two dates and were effective in distinguishing beans–potatoes, beetroot–potatoes, maize–potatoes, and potatoes–wheat, with J–M distance values of >1.9 (Figure 4). In particular, it is notable that the RIs were able to distinguish beans–potatoes, beetroot–potatoes, and maize–potatoes, since their J–M distance values were <1.0 when only the original sigma naught values were used. The growth of the potatoes was inhibited by chemicals in July to facilitate easy harvesting, with a resultant decrease in the backscattering of the X-band. In contrast, this backscattering increased with the growth of beetroots and maize. Wheat had already been harvested by mid-August, and the backscattering of the X-band from winter wheat fields was similar to that from bare fields. These facts contributed to the good separability and confirmed the advantages of RIs for identifying crop types based on ASNARO-2 XSAR data.

Figure 4.

Jeffries–Matusita distances for radar-based vegetation indices using backscattering coefficients obtained for two dates in 2018. In the legend, the first letter indicates the frequency of the microwaves, the next two letters indicate the polarization type, and the last one or two letters indicate the type of index.

In contrast, for Sentinel-1 C-SAR data, all J–M distance values were <1.0. Vertically polarized microwaves have less penetration than horizontally polarized waves over mature wheat fields [14], and their intensity is decreased via absorption by the vertical structure of dense, narrow stems [25,63]. The main scattering pattern was surface scattering and the intensity was low in August, with the result that the differences in sigma naught were small. The RIs from the C-SAR data were therefore not effective in identifying winter wheat. Although it was also difficult to distinguish bean, beetroot, and maize fields, their scattering patterns were different: They have a relatively high allocation of volume and single- and double-bounce scattering due to their structures [30]. Polarimetric analysis could therefore be used in future research for the identification of these crops. XSAR can provide single-polarization data, whereas there are few opportunities to obtain HH or HV polarization data from C-SAR. Horizontally polarized waves, however, can penetrate deeper into crop canopies.

3.2. Accuracy Assessment

For all the algorithms, the combination of ASNARO-2 XSAR and Sentinel-1 C-SAR data was effective in improving the classification accuracy, and the classification results from Case 1 were superior to those from Cases 2 and 3 (Table 3 and Table 4). Of the four algorithms, SVM exhibited the best performance and achieved an overall accuracy of 0.854 ± 0.018 (kappa = 0.810 ± 0.023; AD + QD = 0.146 ± 0.018). Although the overall accuracy of every algorithm was >0.8, the differences among them were significant (p < 0.05; Table 4).

Table 3.

Accuracy of the four classification algorithms used in this study: Support vector machine (SVM), random forest (RF), multilayer feedforward neural network (FNN), and kernel-based extreme learning machine (KELM). PA: Producer accuracy; UA: User accuracy; OA: Overall accuracy; AD: Allocation disagreement; QD: Quantity disagreement. Case 1: X-band HH polarization data from ASNARO-2 XSAR and C-band vertical transmit, horizontal receive (VH)/ vertical transmit and receive (VV) polarization data from Sentinel-1B combined; Case 2: X-band HH polarization data from ASNARO-2 XSAR; Case 3: C-band VH/VV polarization data from Sentinel-1B.

Table 4.

Chi-square values from McNemar’s test. A chi-square value of ≥3.84 indicates a significant difference (p < 0.05) between two classification results. SVM: Support vector machine; RF: Random forest; KELM: Kernel-based extreme learning machine; FNN: Multilayer feedforward neural network. Case 1: X-band HH polarization data from ASNARO-2 XSAR and C-band VH/VV polarization data from Sentinel-1B combined; Case 2: X-band HH polarization data from ASNARO-2 XSAR; Case 3: C-band VH/VV polarization data from Sentinel-1B.

In particular, based on average F1 scores, the classification accuracy for bean, maize, and potato fields was improved by combining the two datasets (Case 1): For Cases 1, 2, and 3, respectively, the F1 scores were 0.817 (SVM), 0.682 (RF), and 0.671 (SVM) for beans; 0.519 (SVM), 0.397 (FNN), and 0.214 (FNN) for maize; and 0.815 (RF), 0.728 (FNN), and 0.676 (SVM) for potatoes. This indicates that ASNARO-2 can be used effectively for crop classification to supplement the data provided by larger satellites.

Many studies have been undertaken in different regions to classify crop types using remote sensing data (Table 5). Some of these focused on similar cultivation styles and crops (e.g., corn, soybean, beetroots, potatoes, and wheat) to this study, although none focused on exactly the same area or crop types as our study. We obtained similar accuracies to these studies, although some authors have reported higher accuracies using optical remote sensing data, which was not consistently available for this study area. Geographic object-based image analysis (GEOBIA) is a promising technique for mapping croplands, and some studies have confirmed its potential, although very fine resolutions (<1 m) are required for good results [64]. Since XSAR can provide remote sensing images with a resolution of 1.0 m, we are planning to evaluate the potential of GEOBIA with XSAR data in the future.

Table 5.

Summary of overall accuracy obtained in other studies.

3.3. Misclassified Fields with Respect to Field Area

XSAR data were superior to C-SAR data for identifying beetroot and potato fields, but there were no noticeable differences between them in the identification of bean and maize fields (Figure 5). Although XSAR data were effective in identifying wheat fields smaller than 2.0 ha, C-SAR data were better for identifying those larger than 2.0 ha. Combining the two types of data decreased the number of misclassified fields. In total, 36.4% of the misclassified fields were smaller than 1.0 ha, and 41.8% were 1.0–2.0 ha in area. Therefore, restricting the analysis to fields above a minimum area could improve the reliability of the classification maps. Another approach to improving classification accuracy is the use of more SAR data scenes, since these data offer a significant amount of information related to vegetation parameters, crop height, and cover rate [12,26,71,72], which are affected by the timing of seeding, transplanting, and harvesting. This could be useful for clarifying the differences in phenology among the five crops.

Figure 5.

Relationship between field area and number of misclassified fields for (a): Case 1, (b): Case 2, and (c): Case 3. Case 1: X-band HH polarization data from ASNARO-2 XSAR and C-band VH/VV polarization data from Sentinel-1B combined; Case 2: X-band HH polarization data from ASNARO-2 XSAR; Case 3: C-band VH/VV polarization data from Sentinel-1B.

4. Conclusions

Optical satellite data are affected by atmospheric and weather conditions, and the availability of data may thus be limited for Asian monsoon areas. In contrast, synthetic aperture radar (SAR) systems are not subject to atmospheric influences or weather conditions and can offer a significant amount of information related to plant phenology. As a result, Sentinel-1A and Sentinel-1B C-SAR data have proved valuable for managing agricultural fields. However, their resolution is not sufficient for monitoring some types of Japanese fields. In this study, we evaluated the potential of also using ASNARO-2 XSAR data for crop-type classification through machine learning algorithms (SVM, RF, FNN, and KELM), using SAR data acquired in June and August.

Combining XSAR and C-SAR data was effective for improving the classification accuracy, and an overall accuracy of 0.854 ± 0.018 (kappa = 0.810 ± 0.023; AD + QD = 0.146 ± 0.018) was achieved using SVM. The results of this study verify the validity of this remote sensing method, demonstrate its strong potential for crop classification, and suggest that the use of data from both satellites could be expanded in future.

Author Contributions

All analyses and the writing of the paper were performed by the author.

Funding

This research was supported by JSPS KAKENHI [grant number 19K06313].

Acknowledgments

The author would like to thank Hiroshi Tani from Hokkaido University, Tokachi Nosai for providing the field data, and the Japan Space Imaging Corporation and Nippon Electric Company for providing the ASNARO-2 XSAR images.

Conflicts of Interest

The author declares no conflicts of interest.

References

- Tilman, D.; Cassman, K.G.; Matson, P.A.; Naylor, R.; Polasky, S. Agricultural sustainability and intensive production practices. Nature 2002, 418, 671–677. [Google Scholar] [CrossRef] [PubMed]

- Wardlow, B.D.; Egbert, S.L. Large-area crop mapping using time-series MODIS 250 m NDVI data: An assessment for the U.S. Central Great Plains. Remote Sens. Environ. 2008, 112, 1096–1116. [Google Scholar] [CrossRef]

- Ministry of Agriculture, Forestry and Fisheries. Available online: http://www8.cao.go.jp/space/comittee/dai36/siryou3-5.pdf (accessed on 1 April 2019).

- Sarker, L.R.; Nichol, J.E. Improved forest biomass estimates using ALOS AVNIR-2 texture indices. Remote Sens. Environ. 2011, 115, 968–977. [Google Scholar] [CrossRef]

- Darvishzadeh, R.; Skidmore, A.; Abdullah, H.; Cherenet, E.; Ali, A.; Wang, T.; Nieuwenhuis, W.; Heurich, M.; Vrieling, A.; O’Connor, B.; et al. Mapping leaf chlorophyll content from Sentinel-2 and RapidEye data in spruce stands using the invertible forest reflectance model. Int. J. Appl. Earth Obs. Geoinf. 2019, 79, 58–70. [Google Scholar] [CrossRef]

- Darvishzadeh, R.; Wang, T.; Skidmore, A.; Vrieling, A.; O’Connor, B.; Gara, T.W.; Ens, B.J.; Paganini, M. Analysis of Sentinel-2 and RapidEye for Retrieval of Leaf Area Index in a Saltmarsh Using a Radiative Transfer Model. Remote Sens. 2019, 11, 671. [Google Scholar] [CrossRef]

- Useya, J.; Chen, S.B. Comparative Performance Evaluation of Pixel-Level and Decision-Level Data Fusion of Landsat 8 OLI, Landsat 7 ETM+ and Sentinel-2 MSI for Crop Ensemble Classification. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2018, 11, 4441–4451. [Google Scholar] [CrossRef]

- Sonobe, R.; Yamaya, Y.; Tani, H.; Wang, X.; Kobayashi, N.; Mochizuki, K.-I. Evaluating metrics derived from Landsat 8 OLI imagery to map crop cover. Geocarto Int. 2018, 34, 839–855. [Google Scholar] [CrossRef]

- Eitel, J.U.H.; Long, D.S.; Gessler, P.E.; Smith, A.M.S. Using in-situ measurements to evaluate the new RapidEye (TM) satellite series for prediction of wheat nitrogen status. Int. J. Remote Sens. 2007, 28, 4183–4190. [Google Scholar] [CrossRef]

- Roy, D.; Wulder, M.; Loveland, T.; Woodcock, C.E.; Allen, R.; Anderson, M.; Helder, D.; Irons, J.; Johnson, D.; Kennedy, R.; et al. Landsat-8: Science and product vision for terrestrial global change research. Remote Sens. Environ. 2014, 145, 154–172. [Google Scholar] [CrossRef]

- Zhang, X.; Wu, B.; Ponce-Campos, G.E.; Zhang, M.; Chang, S.; Tian, F. Mapping up-to-Date Paddy Rice Extent at 10 M Resolution in China through the Integration of Optical and Synthetic Aperture Radar Images. Remote Sens. 2018, 10, 1200. [Google Scholar] [CrossRef]

- Sonobe, R.; Tani, H. Application of the Sahebi model using ALOS/PALSAR and 66.3 cm long surface profile data. Int. J. Remote Sens. 2009, 30, 6069–6074. [Google Scholar] [CrossRef]

- Xu, J.; Li, Z.; Tian, B.; Huang, L.; Chen, Q.; Fu, S. Polarimetric analysis of multi-temporal RADARSAT-2 SAR images for wheat monitoring and mapping. Int. J. Remote Sens. 2014, 35, 3840–3858. [Google Scholar] [CrossRef]

- Sonobe, R.; Tani, H.; Wang, X.; Kobayashi, N.; Shimamura, H. Winter Wheat Growth Monitoring Using Multi-temporal TerraSAR-X Dual-polarimetric Data. Jpn. Agric. Res. Q. JARQ 2014, 48, 471–476. [Google Scholar] [CrossRef]

- Joerg, H.; Pardini, M.; Hajnsek, I.; Papathanassiou, K.P. Sensitivity of SAR Tomography to the Phenological Cycle of Agricultural Crops at X-, C-, and L-band. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2018, 11, 3014–3029. [Google Scholar] [CrossRef]

- Bouvet, A.; Le Toan, T. Use of ENVISAT/ASAR wide-swath data for timely rice fields mapping in the Mekong River Delta. Remote Sens. Environ. 2011, 115, 1090–1101. [Google Scholar] [CrossRef]

- McNairn, H.; Champagne, C.; Shang, J.; Holmstrom, D.; Reichert, G. Integration of optical and Synthetic Aperture Radar (SAR) imagery for delivering operational annual crop inventories. ISPRS J. Photogramm. Remote Sens. 2009, 64, 434–449. [Google Scholar] [CrossRef]

- Zonno, M.; Bordoni, F.; Matar, J.; de Almeida, F.Q.; Sanjuan-Ferrer, M.J.; Younis, M.; Rodriguez-Cassola, M.; Krieger, G. Sentinel-1 Next Generation: Trade-offs and Assessment of Mission Performance. In Proceedings of the ESA Living Planet Symposium, Milan, Italy, 13–17 May 2019. [Google Scholar]

- Sun, C.; Bian, Y.; Zhou, T.; Pan, J. Using of Multi-Source and Multi-Temporal Remote Sensing Data Improves Crop-Type Mapping in the Subtropical Agriculture Region. Sensors 2019, 19, 2401. [Google Scholar] [CrossRef] [PubMed]

- Mercier, A.; Betbeder, J.; Rumiano, F.; Baudry, J.; Gond, V.; Blanc, L.; Bourgoin, C.; Cornu, G.; Ciudad, C.; Marchamalo, M.; et al. Evaluation of Sentinel-1 and 2 Time Series for Land Cover Classification of Forest–Agriculture Mosaics in Temperate and Tropical Landscapes. Remote Sens. 2019, 11, 979. [Google Scholar] [CrossRef]

- Sonobe, R.; Yamaya, Y.; Tani, H.; Wang, X.; Kobayashi, N.; Mochizuki, K.-I. Assessing the suitability of data from Sentinel-1A and 2A for crop classification. GIScience Remote Sens. 2017, 54, 918–938. [Google Scholar] [CrossRef]

- Stendardi, L.; Karlsen, S.R.; Niedrist, G.; Gerdol, R.; Zebisch, M.; Rossi, M.; Notarnicola, C. Exploiting Time Series of Sentinel-1 and Sentinel-2 Imagery to Detect Meadow Phenology in Mountain Regions. Remote Sens. 2019, 11, 542. [Google Scholar] [CrossRef]

- Clauss, K.; Ottinger, M.; Leinenkugel, P.; Kuenzer, C. Estimating rice production in the Mekong Delta, Vietnam, utilizing time series of Sentinel-1 SAR data. Int. J. Appl. Earth Obs. Geoinf. 2018, 73, 574–585. [Google Scholar] [CrossRef]

- Ndikumana, E.; Minh, D.H.T.; Nguyen, H.D.; Baghdadi, N.; Courault, D.; Hossard, L.; El Moussawi, I. Estimation of Rice Height and Biomass Using Multitemporal SAR Sentinel-1 for Camargue, Southern France. Remote Sens. 2018, 10, 1394.

- Fontanelli, G.; Paloscia, S.; Zribi, M.; Chahbi, A. Sensitivity analysis of X-band SAR to wheat and barley leaf area index in the Merguellil Basin. Remote Sens. Lett. 2013, 4, 1107–1116.

- McNairn, H.; Jiao, X.; Pacheco, A.; Sinha, A.; Tan, W.; Li, Y. Estimating canola phenology using synthetic aperture radar. Remote Sens. Environ. 2018, 219, 196–205.

- Costa, M.P.F. Use of SAR satellites for mapping zonation of vegetation communities in the Amazon floodplain. Int. J. Remote Sens. 2004, 25, 1817–1835.

- National Research and Development Agency and Japan Aerospace Exploration Agency (JAXA). Launch Result, Epsilon-3 with ASNARO-2 Aboard. Available online: https://global.jaxa.jp/press/2018/01/20180118_epsilon3.html (accessed on 5 June 2019).

- Japan EO Satellite Service, Ltd. (JEOSS). Japan EO Satellite Service, Ltd. (JEOSS) Announces the Start of Commercial Operation. Available online: https://jeoss.co.jp/press/japan-eo-satellite-service-ltd-jeoss-announces-the-start-of-commercial-operation/ (accessed on 5 June 2019).

- Sonobe, R. Parcel-Based Crop Classification Using Multi-Temporal TerraSAR-X Dual Polarimetric Data. Remote Sens. 2019, 11, 1148.

- Burges, C.J. A Tutorial on Support Vector Machines for Pattern Recognition. Data Min. Knowl. Discov. 1998, 2, 121–167.

- Foody, G.; Mathur, A. A relative evaluation of multiclass image classification by support vector machines. IEEE Trans. Geosci. Remote Sens. 2004, 42, 1335–1343.

- Sonobe, R.; Tani, H.; Wang, X.; Kobayashi, N.; Shimamura, H. Discrimination of crop types with TerraSAR-X-derived information. Phys. Chem. Earth Parts A B C 2015, 2–13.

- Biau, G.; Scornet, E. A random forest guided tour. TEST 2016, 25, 197–227.

- Sonobe, R.; Sano, T.; Horie, H. Using spectral reflectance to estimate leaf chlorophyll content of tea with shading treatments. Biosyst. Eng. 2018, 175, 168–182.

- Pal, M. Random forest classifier for remote sensing classification. Int. J. Remote Sens. 2005, 26, 217–222.

- Pal, M.; Maxwell, A.E.; Warner, T.A. Kernel-based extreme learning machine for remote-sensing image classification. Remote Sens. Lett. 2013, 4, 853–862.

- Sonobe, R.; Tani, H.; Wang, X.F. An experimental comparison between KELM and CART for crop classification using Landsat-8 OLI data. Geocarto Int. 2017, 32, 128–138.

- Cooner, A.J.; Shao, Y.; Campbell, J.B. Detection of Urban Damage Using Remote Sensing and Machine Learning Algorithms: Revisiting the 2010 Haiti Earthquake. Remote Sens. 2016, 8, 868.

- Puertas, O.L.; Brenning, A.; Meza, F.J. Balancing misclassification errors of land cover classification maps using support vector machines and Landsat imagery in the Maipo river basin (Central Chile, 1975–2010). Remote Sens. Environ. 2013, 137, 112–123.

- Bergstra, J.; Bengio, Y. Random Search for Hyper-Parameter Optimization. J. Mach. Learn. Res. 2012, 13, 281–305.

- Monsivais-Huertero, A.; Liu, P.W.; Judge, J. Phenology-Based Backscattering Model for Corn at L-Band. IEEE Trans. Geosci. Remote Sens. 2018, 56, 4989–5005.

- Shoshany, M.; Svoray, T.; Curran, P.J.; Foody, G.M.; Perevolotsky, A. The relationship between ERS-2 SAR backscatter and soil moisture: Generalization from a humid to semi-arid transect. Int. J. Remote Sens. 2000, 21, 2337–2343.

- Betbeder, J.; Fieuzal, R.; Philippets, Y.; Ferro-Famil, L.; Baup, F. Contribution of multitemporal polarimetric synthetic aperture radar data for monitoring winter wheat and rapeseed crops. J. Appl. Remote Sens. 2016, 10, 026020.

- Kim, Y.; Jackson, T.; Bindlish, R.; Lee, H.; Hong, S. Radar Vegetation Index for Estimating the Vegetation Water Content of Rice and Soybean. IEEE Geosci. Remote Sens. Lett. 2012, 9, 564–568.

- Richards, J.A. Remote Sensing Digital Image Analysis; Springer: Berlin/Heidelberg, Germany, 2013.

- Hastie, T.; Tibshirani, R.; Friedman, J. The Elements of Statistical Learning: Data Mining, Inference, and Prediction, 2nd ed.; Springer: New York, NY, USA, 2009; p. 745.

- R Core Team. R: A Language and Environment for Statistical Computing. Available online: https://www.R-project.org/ (accessed on 5 June 2019).

- Cortes, C.; Vapnik, V. Support-Vector Networks. Mach. Learn. 1995, 20, 273–297.

- Aizerman, M.; Braverman, E.; Rozonoer, L. Theoretical foundations of the potential function method in pattern recognition learning. Autom. Remote Control 1964, 25, 821–837.

- Melgani, F.; Bruzzone, L. Classification of hyperspectral remote sensing images with support vector machines. IEEE Trans. Geosci. Remote Sens. 2004, 42, 1778–1790.

- Camps-Valls, G.; Bruzzone, L. Kernel-based methods for hyperspectral image classification. IEEE Trans. Geosci. Remote Sens. 2005, 43, 1351–1362.

- Kavzoglu, T.; Colkesen, I. A kernel functions analysis for support vector machines for land cover classification. Int. J. Appl. Earth Obs. Geoinf. 2009, 11, 352–359.

- Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32.

- Liaw, A.; Wiener, M. Classification and regression by randomForest. R News 2002, 2, 18–22.

- Ishwaran, H.; Kogalur, U.B. Random survival forests for R. R News 2007, 7, 25–31.

- Ishwaran, H.; Kogalur, U.B.; Blackstone, E.H.; Lauer, M.S. Random survival forests. Ann. Appl. Stat. 2008, 2, 841–860.

- Sonobe, R.; Yamaya, Y.; Tani, H.; Wang, X.; Kobayashi, N.; Mochizuki, K.I. Mapping crop cover using multi-temporal Landsat 8 OLI imagery. Int. J. Remote Sens. 2017, 38, 4348–4361.

- Svozil, D.; Kvasnička, V.; Pospichal, J. Introduction to multi-layer feed-forward neural networks. Chemom. Intell. Lab. Syst. 1997, 39, 43–62.

- Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: A Simple Way to Prevent Neural Networks from Overfitting. J. Mach. Learn. Res. 2014, 15, 1929–1958.

- Pontius, R.G.; Millones, M. Death to Kappa: Birth of quantity disagreement and allocation disagreement for accuracy assessment. Int. J. Remote Sens. 2011, 32, 4407–4429.

- McNemar, Q. Note on the sampling error of the difference between correlated proportions or percentages. Psychometrika 1947, 12, 153–157.

- Macelloni, G.; Paloscia, S.; Pampaloni, P.; Marliani, F.; Gai, M. The relationship between the backscattering coefficient and the biomass of narrow and broad leaf crops. IEEE Trans. Geosci. Remote Sens. 2001, 39, 873–884.

- Baker, B.A.; Warner, T.A.; Conley, J.F.; McNeil, B.E. Does spatial resolution matter? A multi-scale comparison of object-based and pixel-based methods for detecting change associated with gas well drilling operations. Int. J. Remote Sens. 2013, 34, 1633–1651.

- Lv, T.T.; Liu, C. Study on extraction of crop information using time-series MODIS data in the Chao Phraya Basin of Thailand. Adv. Space Res. 2010, 45, 775–784.

- Avci, Z.D.U.; Sunar, F. Process-based image analysis for agricultural mapping: A case study in Turkgeldi region, Turkey. Adv. Space Res. 2015, 56, 1635–1644.

- Guarini, R.; Bruzzone, L.; Santoni, M.; Dini, L. Analysis on the Effectiveness of Multi-Temporal COSMO-SkyMed Images for Crop Classification. In Proceedings of the Conference on Image and Signal Processing for Remote Sensing XXI, Toulouse, France, 21–23 September 2015.

- Goodin, D.G.; Anibas, K.L.; Bezymennyi, M. Mapping land cover and land use from object-based classification: An example from a complex agricultural landscape. Int. J. Remote Sens. 2015, 36, 4702–4723.

- Azar, R. Assessing in-season crop classification performance using satellite data: A test case in Northern Italy. Eur. J. Remote Sens. 2016, 49, 361–380.

- Kussul, N.; Lemoine, G.; Gallego, F.J.; Skakun, S.V.; Lavreniuk, M.; Shelestov, A.Y. Parcel-Based Crop Classification in Ukraine Using Landsat-8 Data and Sentinel-1A Data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2016, 9, 2500–2508.

- Gao, Q.; Zribi, M.; Escorihuela, M.J.; Baghdadi, N.; Segui, P.Q. Irrigation Mapping Using Sentinel-1 Time Series at Field Scale. Remote Sens. 2018, 10, 1495.

- Amazirh, A.; Merlin, O.; Er-Raki, S.; Gao, Q.; Rivalland, V.; Malbeteau, Y.; Khabba, S.; Escorihuela, M.J. Retrieving surface soil moisture at high spatio-temporal resolution from a synergy between Sentinel-1 radar and Landsat thermal data: A study case over bare soil. Remote Sens. Environ. 2018, 211, 321–337.

© 2019 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).