4.1. Validation

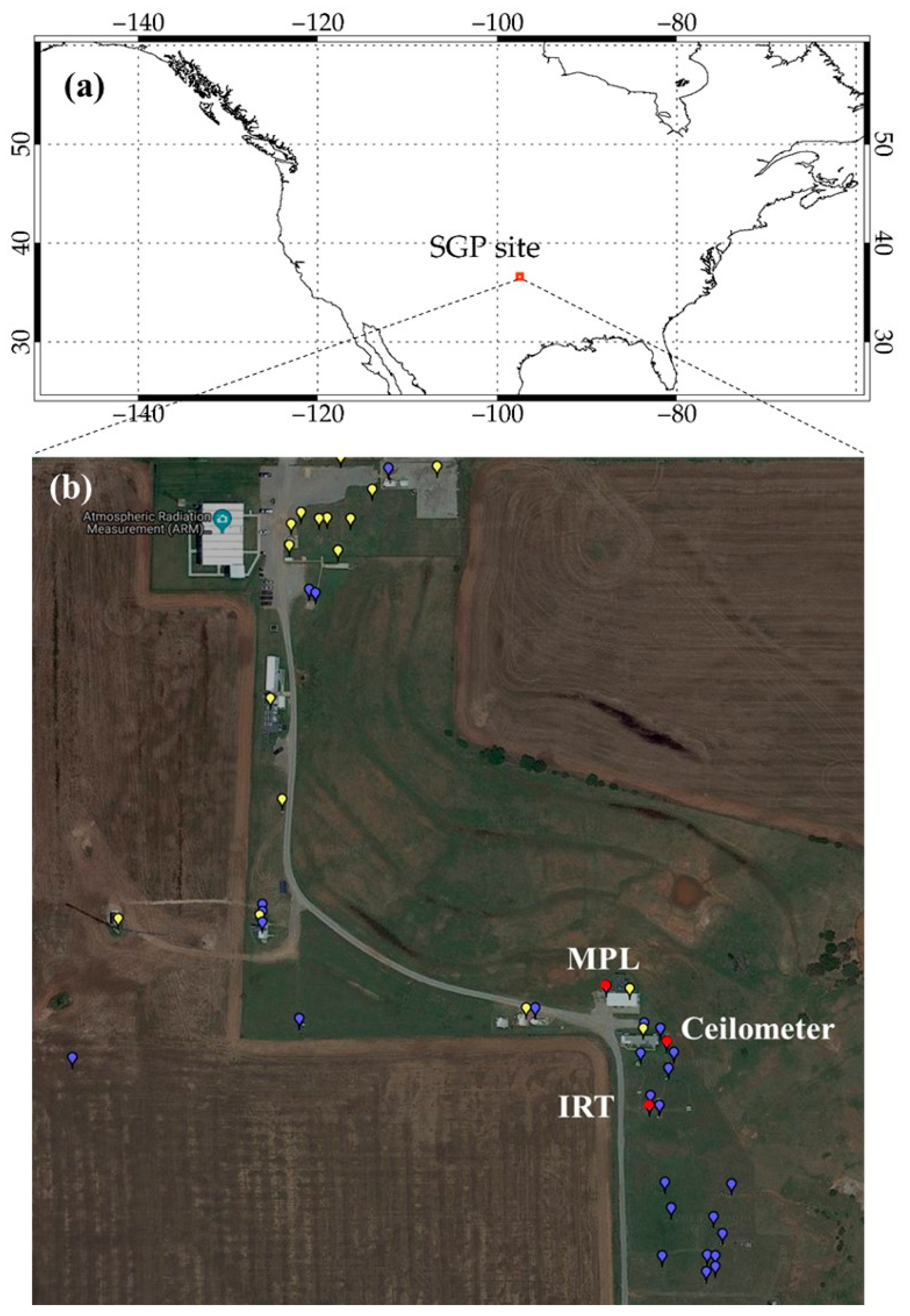

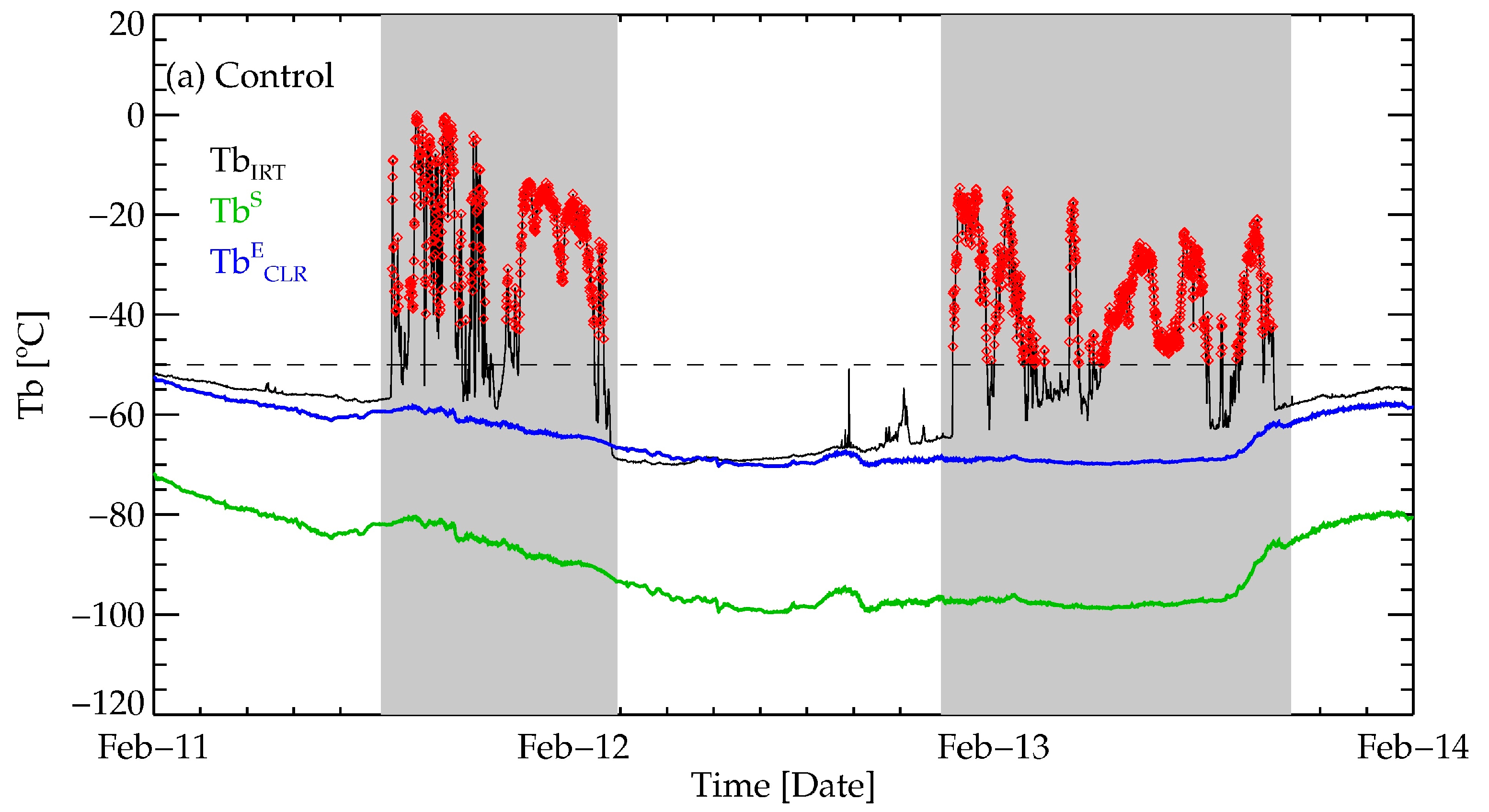

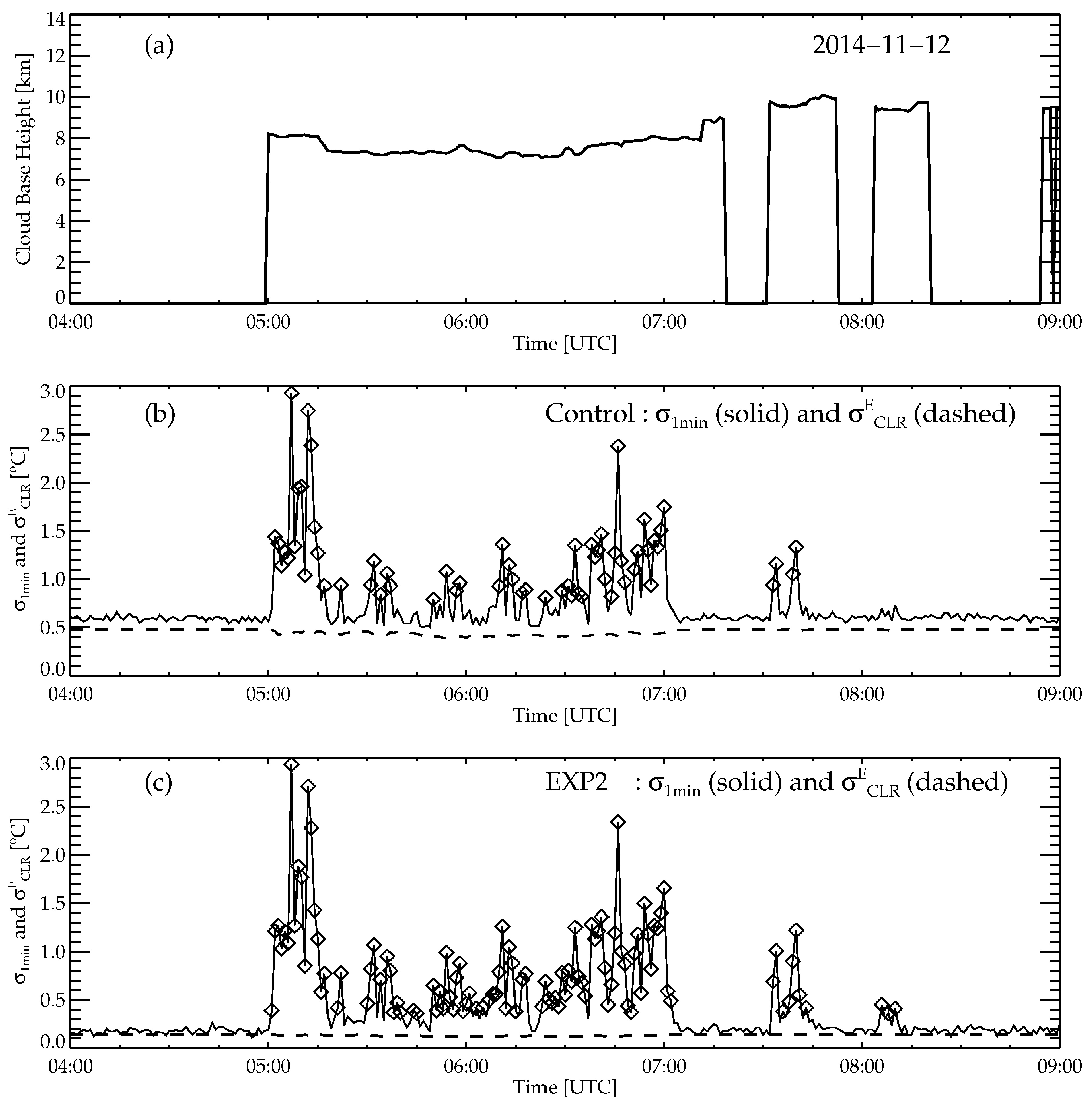

The characteristics of the algorithm performance are analyzed through comparison with the reference data, namely the ceilometer and MPL measurements. The validation uses a period different from that of the algorithm development: one year, from November 2014 to October 2015. The IRT data used for the validation were processed to have the same specifications as the corresponding datasets used for the algorithm development. The validation results are shown first for the Control with the two reference datasets, followed by the results for the different experimental datasets, cloud altitudes, and seasons. Through the analysis of the validation results, the effects of the different types of IRT data on cloud detection are characterized.

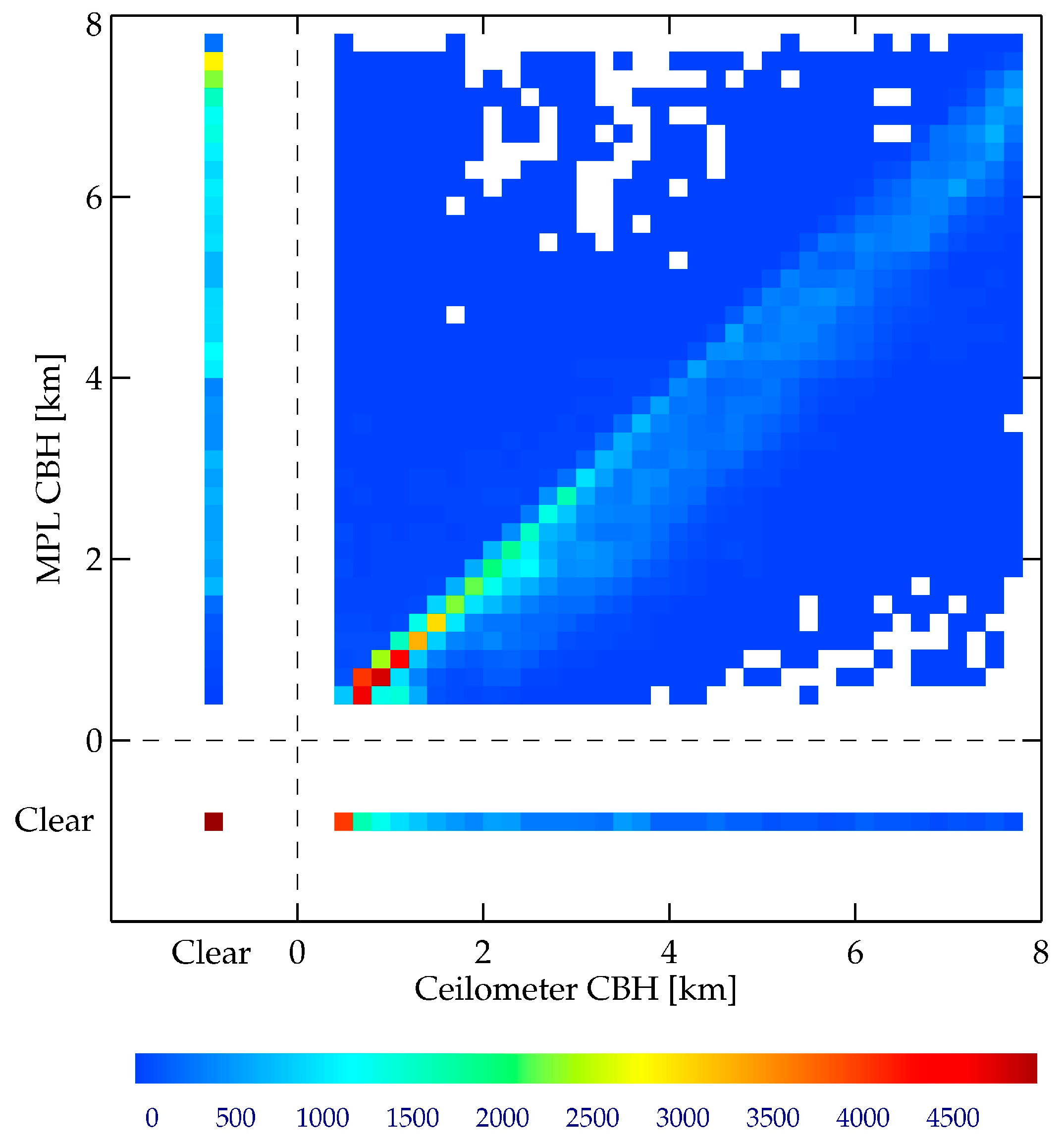

First, the overall performance of the Control is summarized into a contingency table,

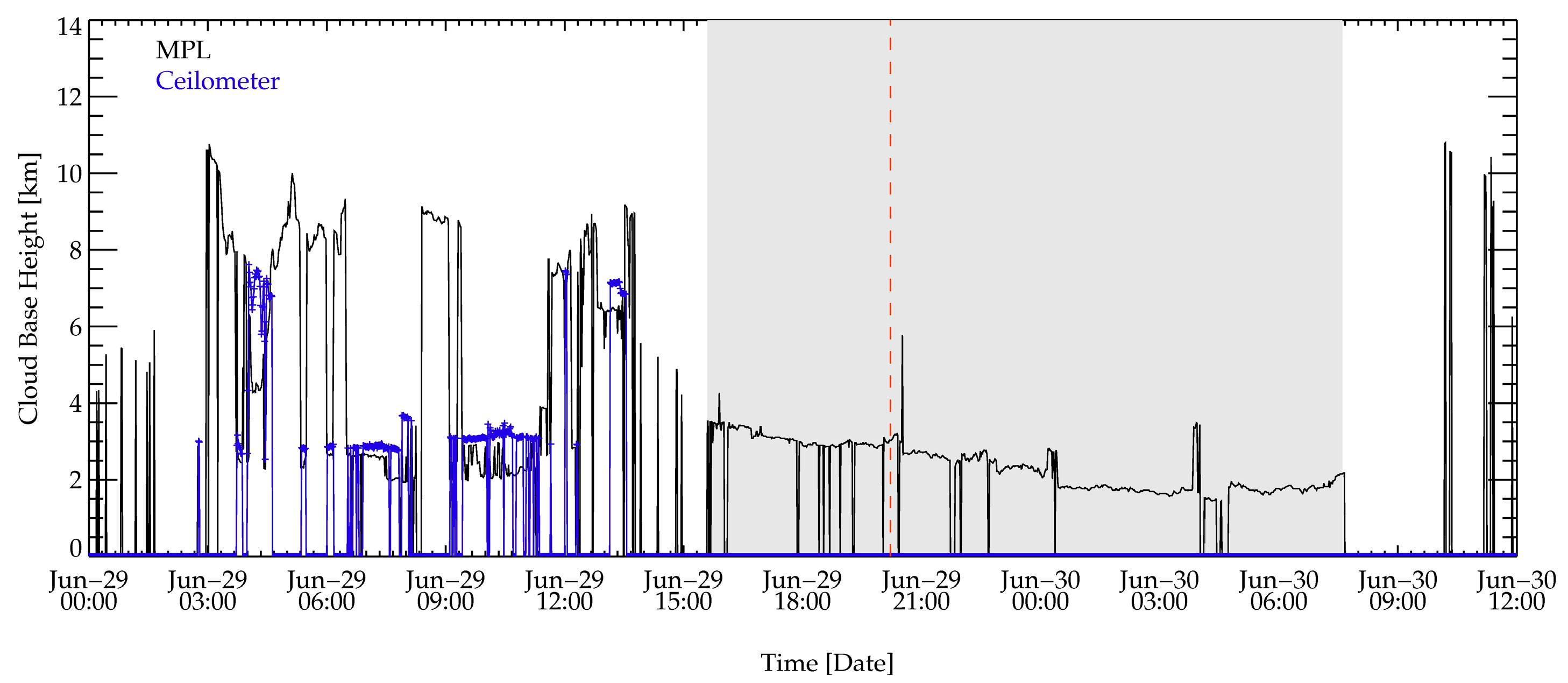

Table 6, which shows the number of cases for the four categories (hit, miss, false alarm, and correct negative) in comparison with the measurements of the ceilometer and MPL. Successful detection includes hits and correct negatives, while misses and false alarms correspond to detection failure. For the ceilometer-based validation, the success rate is better than 93%, while it drops to about 73% for the MPL-based validation. This large decrease is due to an increase in both false alarms and misses, especially misses. The percentage of misses increases from 3% for the ceilometer-based validation to 17% for the MPL-based validation. This increase is mainly due to the better detectability of high clouds by the MPL (see Appendix B). Consider, for example, a case of high clouds that are not detected by the ceilometer but are detected by the MPL, and assume that the clouds are also not detected by the IRT. The comparison result is then a correct negative with the ceilometer-based validation but a miss with the MPL-based validation. Indeed, the difference in the number of correct negatives between the ceilometer-based and MPL-based validations is 67,059, which is the same as the difference in misses between the two references. Moreover, about 70% of the misses in the MPL-based validation are caused by high clouds that the ceilometer could not detect.
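The reclassification described above can be illustrated with a minimal sketch. The function name `contingency` and the toy flags are hypothetical and are not data from the study; the sketch only assumes that each observation has been reduced to a boolean cloud flag for the IRT and for each reference instrument.

```python
from collections import Counter

def contingency(detected, reference):
    """Count the four contingency categories for paired boolean cloud flags."""
    labels = {
        (True, True): "hit",
        (False, True): "miss",
        (True, False): "false_alarm",
        (False, False): "correct_negative",
    }
    counts = Counter(labels[(bool(d), bool(r))] for d, r in zip(detected, reference))
    return {name: counts.get(name, 0) for name in labels.values()}

# Hypothetical toy flags (True means "cloud"); not values from the study.
irt        = [True, False, False, True, False]
ceilometer = [True, False, False, True, False]
mpl        = [True, True,  False, True, False]  # the MPL also sees one high cloud

vs_ceilometer = contingency(irt, ceilometer)
vs_mpl = contingency(irt, mpl)
```

In this toy case, the one high cloud seen only by the MPL moves a single observation from the correct-negative category to the miss category, which mirrors why the two differences quoted in the text are equal.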

Likewise, the false alarms in the MPL-based validation, which also increased compared with the ceilometer-based validation (from 20,808 to 52,502 points), are due to the characteristics of the reference instrument, the MPL. The difference in the number of hits between the two reference instruments is 31,694, which is the same as the difference in false alarms. The majority of the false alarms with the MPL-based validation (about 65%) occur when the CBH is below 500 m, which corresponds to the lower boundary of MPL cloud detection [24,26]. Therefore, the increased false alarms with the MPL-based validation originate from limitations of the reference data, not from limitations of the detection algorithm or of the IRT.

The algorithm performance for the different experimental datasets is analyzed using two derived scores: POD (probability of detection) and FAR (false alarm ratio). POD represents correct detection when the event actually occurs, and is thus estimated as the number of hits divided by the number of occurrences (i.e., hits plus misses). FAR represents incorrect detection, and is thus estimated as the number of false alarms divided by the number of detections (i.e., false alarms plus hits).
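As a minimal sketch, the two scores defined above can be written directly from the contingency counts. The function names and the example counts are hypothetical and chosen only for illustration; returning NaN for an empty denominator is also an assumption, not something specified in the text.

```python
def pod(hits, misses):
    """Probability of detection: hits / (hits + misses)."""
    occurrences = hits + misses
    return hits / occurrences if occurrences else float("nan")

def far(hits, false_alarms):
    """False alarm ratio: false alarms / (false alarms + hits)."""
    detections = hits + false_alarms
    return false_alarms / detections if detections else float("nan")

# Hypothetical counts, not taken from Table 6.
example_pod = pod(hits=80, misses=20)
example_far = far(hits=80, false_alarms=20)
```

Note that POD depends only on hits and misses, while FAR depends only on hits and false alarms, so a change of reference instrument can move the two scores independently.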

Table 7 shows the two scores for the four experimental datasets using the two reference datasets. Overall, the characteristics of the validation results in terms of the reference data are the same as those of the Control: PODs are higher and FARs are lower for the ceilometer-based validation than for the MPL-based validation. The larger FAR, as large as about 25%, is evident with the MPL-based validation and is due to the increase in false alarms caused by the limited detection of low clouds by the MPL. It is interesting to note that the highest FAR with the ceilometer-based validation, that of EXP2, is due to the increased false alarms from high clouds resulting from the limited capability of the ceilometer, rather than to limitations of the algorithm.

The root cause of the large POD difference between the validations with the two reference datasets is clearly identified when PODs are estimated for the different cloud altitudes, as summarized in Table 8. When the clouds are at low altitude, the POD values are quite similar, all higher than 97%, regardless of the reference data (with a slightly better POD for the ceilometer-based validation due to lower false alarms). However, with increasing cloud altitude, the POD values decrease significantly, especially for high clouds, although the decrease depends on the experimental dataset. The POD with the MPL-based validation shows the worst value of 32% for EXP1 and the best value of 52.2% for EXP2. This rather large difference between the two experimental datasets is due to the difference in the temporal test, which will be shown later. Here, it is important to note that the validation of cloud detection for high clouds should be performed with reference data from instruments that are capable of detecting high clouds, such as the MPL used in the current study.

Table 8 also shows the different performances of the experimental datasets. EXP2, which has the full dynamic range and a lower sampling rate, shows the best performance, while the Control and EXP3 show similar performance, followed by EXP1, regardless of the reference data. In general, EXP2 shows the best performance for all of the cloud layers, and its advantage grows with increasing cloud altitude. EXP1, which has a limited dynamic range and a higher sampling rate, shows the worst performance for all of the cloud layers. Although less prominent than in the MPL-based validation, the results from the ceilometer-based validation show similar characteristics. Thus, EXP2 outperforms the other datasets regardless of cloud layer and reference data, which calls for a more detailed analysis, especially of each spectral and temporal test.

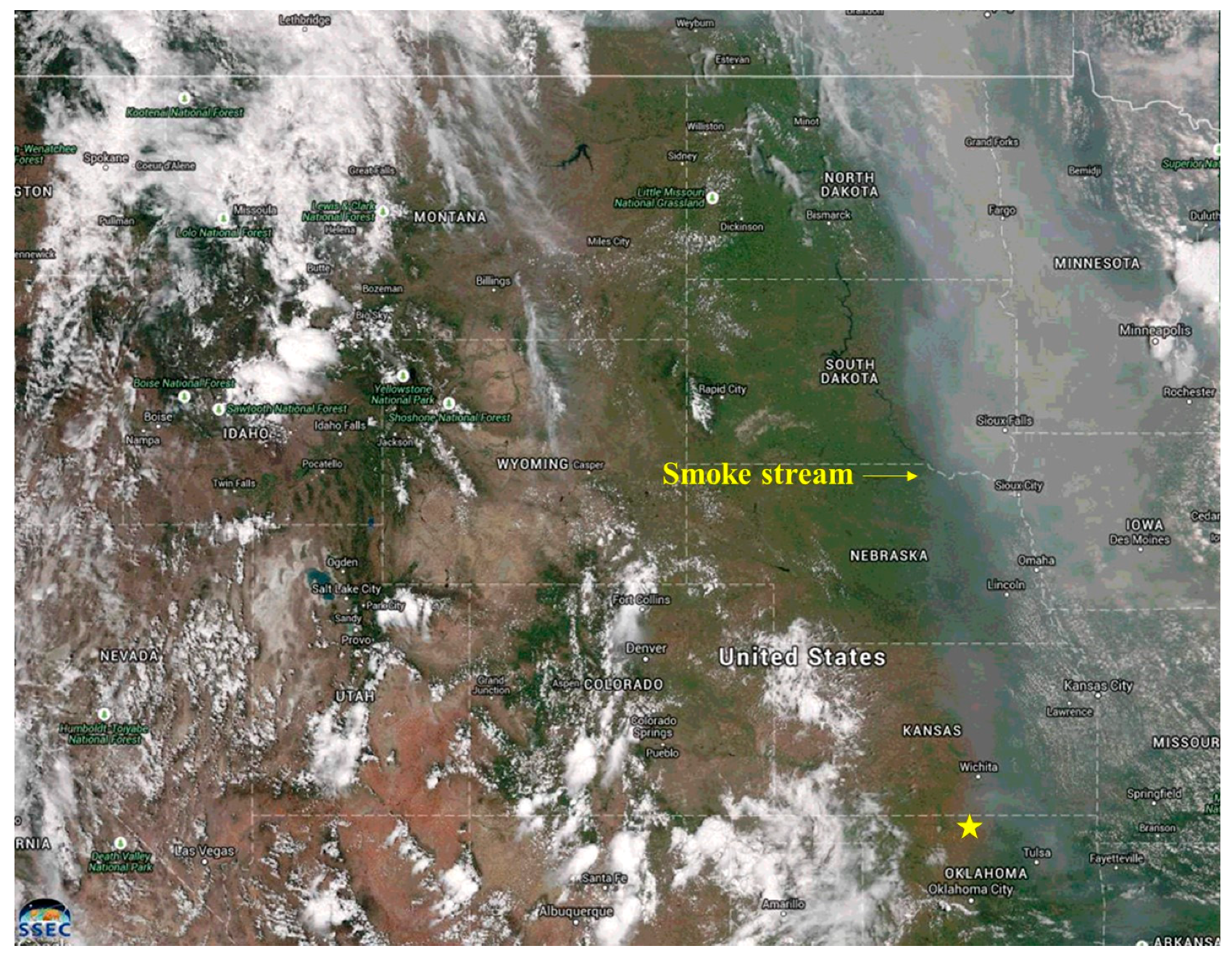

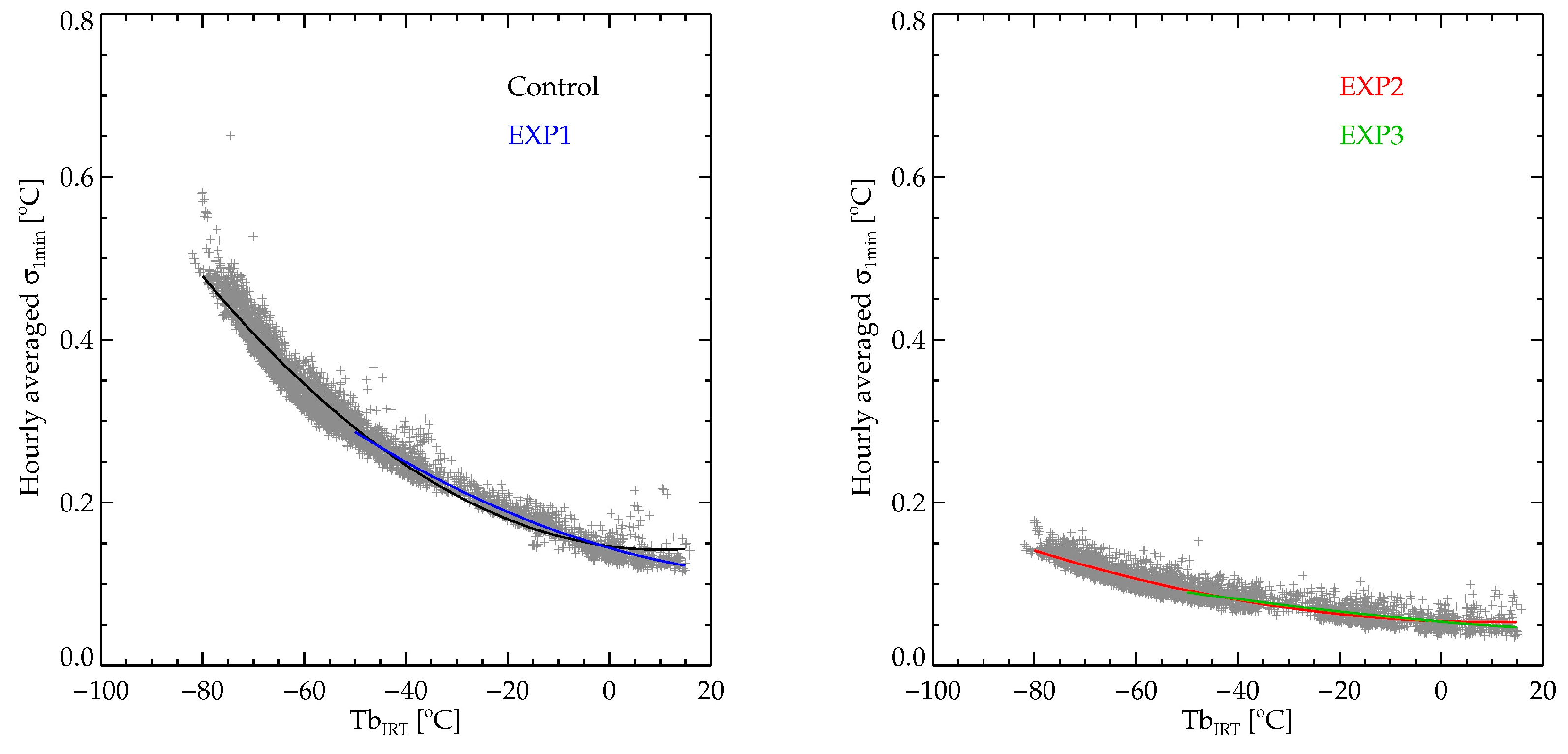

As shown in the algorithm development in Section 3, the spectral and temporal tests are sensitive to the dynamic range and NEdT, respectively. Since both are dependent on the measured Tb, they also depend on the atmospheric conditions. Thus, the authors first check the POD performance under different atmospheric conditions. To represent dissimilar atmospheric conditions, the authors use two different seasons: the warm and moist summer, and the cold and dry winter. Here, the two seasons are grouped based on the TSFC and e measured at the SGP site: June to September (JJAS) for the summer, and November to February (NDJF) for the winter.
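The seasonal grouping can be expressed as a simple lookup on the calendar month. This is only a sketch: the function name `season_of` is hypothetical, and leaving the remaining months unassigned (returning `None`) is an assumption about how the transition months are treated.

```python
SUMMER_MONTHS = {6, 7, 8, 9}    # JJAS, as defined in the text
WINTER_MONTHS = {11, 12, 1, 2}  # NDJF, as defined in the text

def season_of(month):
    """Return 'summer', 'winter', or None for a calendar month (1-12)."""
    if month in SUMMER_MONTHS:
        return "summer"
    if month in WINTER_MONTHS:
        return "winter"
    return None  # transition months are not used in the seasonal comparison
```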

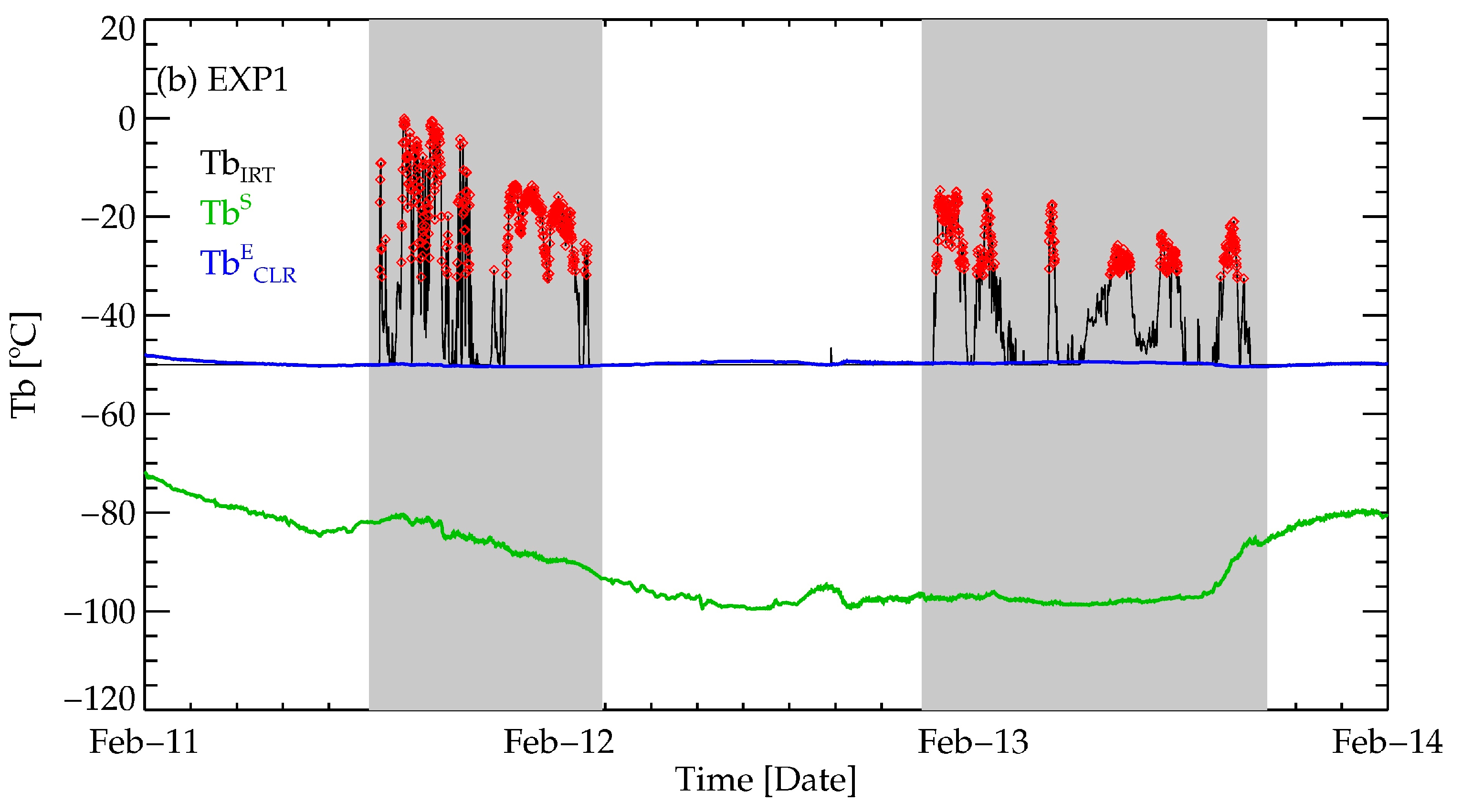

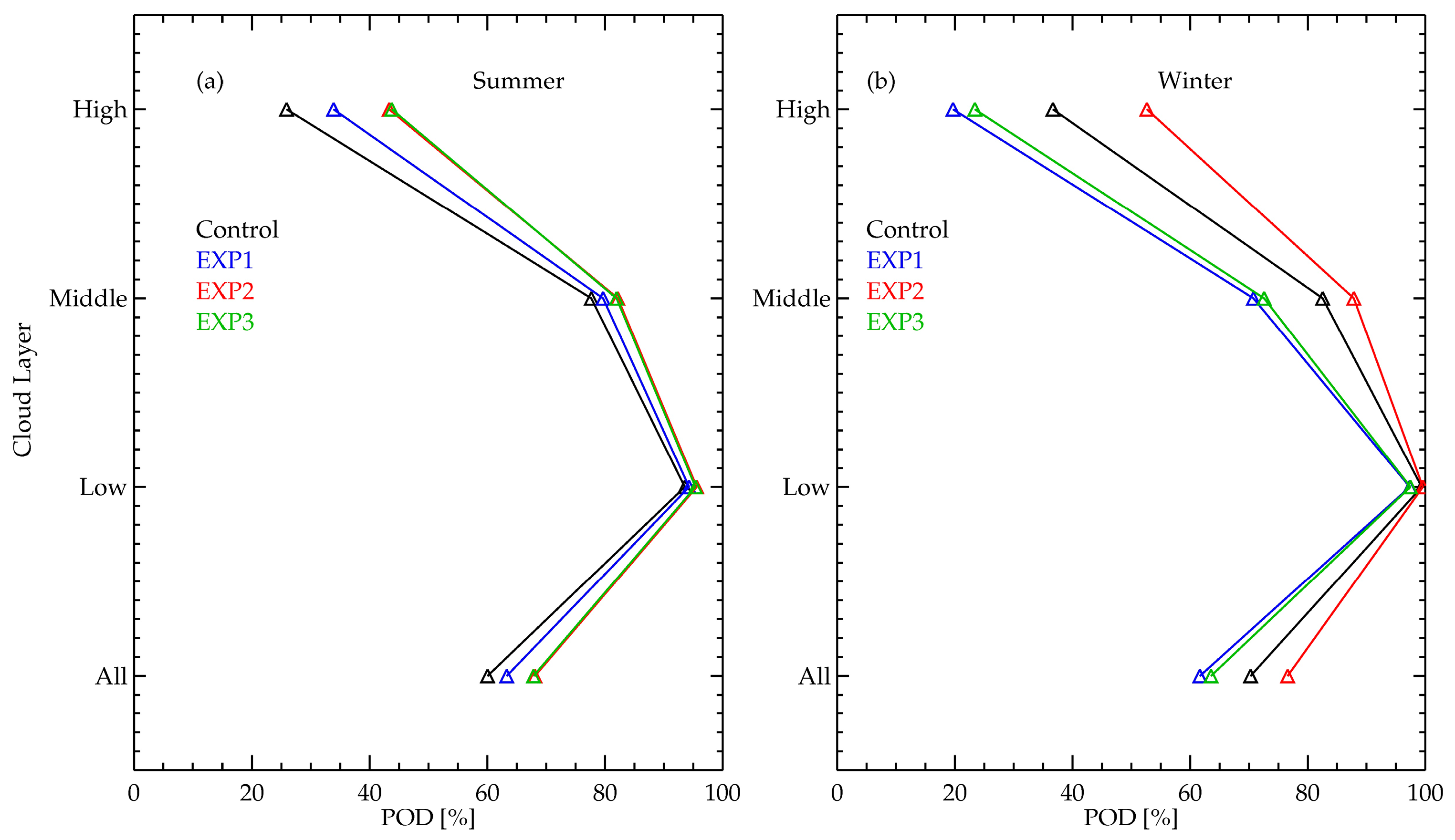

Figure 7 shows the POD values of the four experimental datasets with the MPL-based validation for the two seasons and the different cloud layers.

Overall, the POD scores decrease with increasing cloud altitude, regardless of season and experimental dataset, which is the same as the results from the yearlong data. However, the performances with respect to the different seasons and experimental datasets show distinct characteristics. During the summer, for example, EXP2 and EXP3 (which have the lower NEdT) show almost the same POD scores at all of the cloud layers, followed by EXP1 and the Control. Conversely, during the winter, EXP2 still shows the best POD performance, but the Control performs second best, followed by EXP3 and EXP1 (the last two having the same limited dynamic range). It is interesting to note that there is a rather large performance difference between the two top performers, EXP2 and the Control, even though they have the same dynamic range. This is due to the performance difference in the temporal test, which will be shown later. To summarize, during the summer, NEdT, and thus the temporal test, is the key to differentiating the detection performance. During the winter, the dynamic range, and thus the spectral test, is the key, while the temporal test plays an important role when the dynamic range is the same.

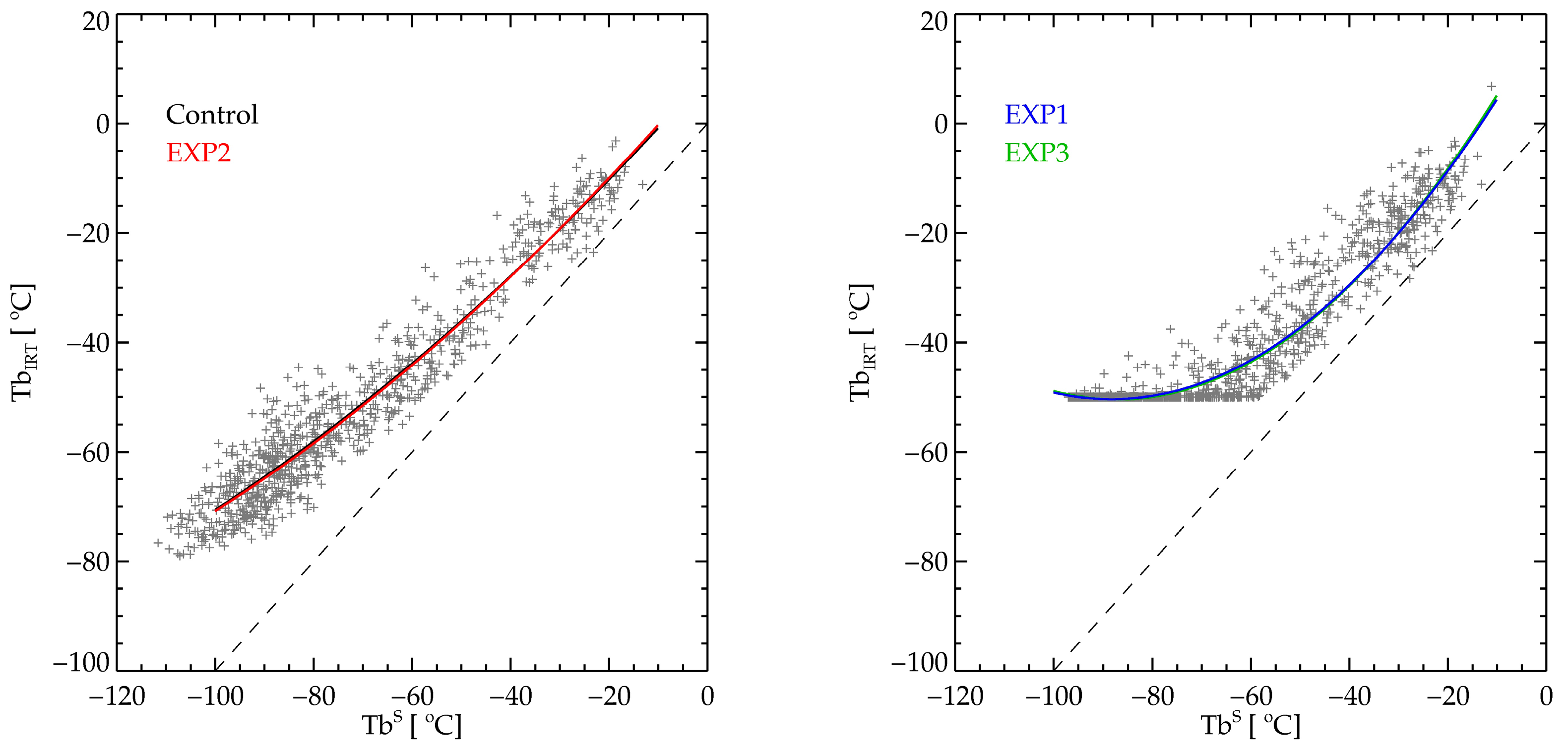

The reasons for such performance characteristics are traced back to the combined effects of the IRT data type, the characteristics of the algorithm tests, and the atmospheric conditions. First, during the summer, the clear sky Tb is rather warm, and thus the estimated TbECLR values for all four experimental datasets are almost the same (see Figure 3). Furthermore, the Tb contrast between the clouds and the clear sky is reduced due to the warmer clear sky Tb. This is especially true for high-altitude, thin clouds in a warm and humid atmosphere [13,29]. Overall, during the warm and humid summer, the spectral test for cold clouds that are still warmer than −50 °C becomes less effective than the temporal test [3,8]. Thus, the POD difference among the four experimental datasets is mainly determined by the temporal test, which performs better with the lower NEdT. As a result, EXP2 and EXP3 equally outperform the other two experimental datasets.

During the winter, the IRT data with the limited dynamic range (EXP1 and EXP3) show the worst performance, because the actual TbIRT could be well below −50 °C in many cases. In those cases, the measured TbIRT is −50 °C, even though the actual Tb of the cloudy or clear sky is colder than −50 °C. At the same time, due to the cold and dry atmospheric conditions, the estimated TbECLR would be about −50 °C. The combination of the two effects results in the failure of the spectral test, because the cloudy TbIRT would be almost the same as TbECLR. The limited dynamic range also causes a problem for the temporal test, because the actual temporal variability of the cloudy Tb is smeared out into the constant value of −50 °C. Thus, the dramatic reduction of POD for EXP1 and EXP3 compared with EXP2 is due to the limited performance of both the spectral and temporal tests. Finally, the POD difference between EXP2 and the Control, which have the same dynamic range, is mostly due to the performance difference of the temporal test caused by the NEdT difference.
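The effect of the limited dynamic range on both tests can be illustrated with a minimal sketch. The Tb values and the TbECLR estimate below are hypothetical. Using the standard deviation as the measure of temporal variability and the difference TbIRT minus TbECLR as the spectral contrast are simplifying assumptions, not the exact tests of Section 3.

```python
import statistics

LOWER_LIMIT_C = -50.0  # lower bound of the limited dynamic range, from the text

def clip_to_range(tb_series, lower=LOWER_LIMIT_C):
    """Emulate a sensor that cannot report Tb colder than the lower bound."""
    return [max(tb, lower) for tb in tb_series]

# Hypothetical wintertime cloudy-sky Tb values in deg C (not data from the study).
actual_tb = [-62.0, -58.5, -64.0, -55.0, -60.5]
measured_tb = clip_to_range(actual_tb)

# Temporal variability before and after clipping (assumed metric: standard deviation).
actual_variability = statistics.pstdev(actual_tb)
measured_variability = statistics.pstdev(measured_tb)

# Spectral contrast against a hypothetical clear-sky estimate of about -50 deg C.
tb_eclr = -50.0
contrast = [tb - tb_eclr for tb in measured_tb]
```

Because every value in this toy series is colder than −50 °C, the clipped series is constant: its variability is zero and its contrast against TbECLR is zero, so neither test would have any signal to work with.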

Finally, the POD performance for each test, season, and experimental dataset for the high clouds is summarized in Table 9. First, regardless of season and experimental dataset, the largest POD contribution is from the temporal test, confirming the important role of the temporal test in detecting high clouds. For the temporal test, the experimental datasets with the better NEdT, EXP2 and EXP3, show the better POD values, even though EXP3 has the limited dynamic range. However, the much smaller POD value of EXP3 during the winter is due to the limited dynamic range, which smears out the temporal variability and thus makes the temporal test ineffective. Second, in the case of the spectral test, both the dynamic range and the fitting uncertainty play a role. During the summer, for example, which has less Tb contrast due to the humid and warmer lower atmosphere, EXP1 and EXP3 perform better than the others, which have the larger fitting uncertainty (see Table 4). During the winter, in contrast, the Control shows a much better POD than EXP1 and EXP3, which have a limited dynamic range. Here, it is noteworthy that EXP2 shows a smaller POD than the Control for the spectral test (4.3% versus 8.0%), while EXP2 shows a much larger POD value for both tests (12.5% versus 8.3%). Thus, the total PODs of the spectral test and both tests for the two experimental datasets are almost the same (about 16%).
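The closing arithmetic can be checked with a short sketch. The four percentages are the ones quoted in the text, assigned on the assumption that the smaller spectral-only value (4.3%) belongs to EXP2, as the wording states; the dictionary layout and variable names are hypothetical.

```python
# POD contributions (percent) quoted in the text for high clouds.
pod_contrib = {
    "EXP2":    {"spectral": 4.3, "both": 12.5},
    "Control": {"spectral": 8.0, "both": 8.3},
}

# Sum of the spectral-only and both-tests contributions for each dataset.
totals = {name: round(sum(parts.values()), 1) for name, parts in pod_contrib.items()}
```

The two sums differ by only half a percentage point, consistent with the statement that the combined contributions are almost the same.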

4.2. Discussion

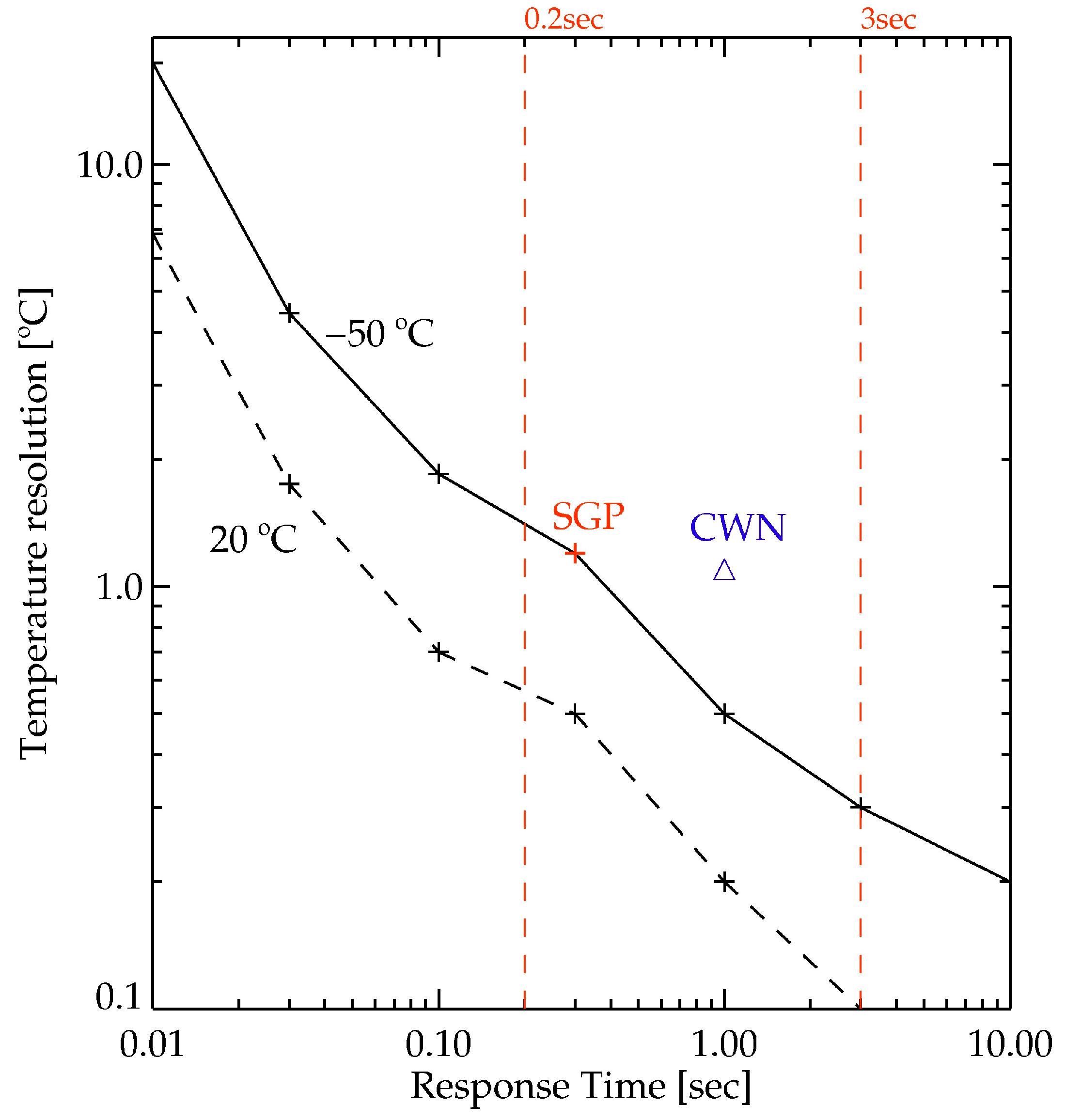

The results from the algorithm development and validation are used to characterize the relationship between the characteristics of the IRT data and cloud detection. First, a sufficient dynamic range of the IRT is shown to be a necessary condition for an accurate and realistic estimation of TbECLR, especially for cold atmospheric conditions. In the case of cold clouds, the dataset that has a limited dynamic range with a better NEdT shows an inferior performance compared with the dataset with the full dynamic range. Conversely, if the dynamic range is sufficient, the reduced sampling rate, which improves the NEdT performance, is shown to be highly important for high-altitude clouds. Thus, the best detection performance for the worst situation (high, thin clouds with a warm and humid lower atmosphere) is achieved when the IRT data have the best NEdT performance with the full dynamic range.
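One way to see why a lower sampling rate can improve NEdT is to treat each low-rate sample as the average of several high-rate samples. The sketch below rests on assumptions that are not stated in the text: the noise is independent, zero-mean, and Gaussian, and the low-rate data are formed by simple block averaging. The noise level and block size are hypothetical.

```python
import random
import statistics

def block_average(samples, n):
    """Average consecutive, non-overlapping blocks of n samples."""
    return [sum(samples[i:i + n]) / n for i in range(0, len(samples) - n + 1, n)]

rng = random.Random(0)   # fixed seed so the sketch is reproducible
NOISE_SIGMA = 1.0        # hypothetical per-sample noise (K)
BLOCK_SIZE = 4           # hypothetical ratio of high to low sampling rate

raw = [rng.gauss(0.0, NOISE_SIGMA) for _ in range(40000)]
averaged = block_average(raw, BLOCK_SIZE)

raw_noise = statistics.pstdev(raw)       # close to NOISE_SIGMA
avg_noise = statistics.pstdev(averaged)  # close to NOISE_SIGMA / sqrt(BLOCK_SIZE)
```

Under these assumptions, averaging four samples roughly halves the noise, at the cost of a fourfold reduction in temporal resolution.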

One thing to note about the algorithm performance is the fitting uncertainty of the TbECLR estimation, which is used as a threshold for the detection test. Although the Control dataset covers the full dynamic range, its performance for the summer is the worst due to its larger fitting uncertainty (5.0 °C), which is much larger than that of EXP1 (3.3 °C), which has the limited dynamic range. The increased fitting uncertainty is mainly due to the increase of NEdT with decreasing Tb. As the dynamic range extends toward cold Tb, the number of data points with higher NEdT increases, and thus the fitting uncertainty increases. Therefore, it is important to have a dataset with sufficient NEdT performance and the full dynamic range to improve both the spectral and temporal tests.

Even though cloud detection could be improved with better NEdT performance, the performance for high clouds still leaves room for improvement. Concerning the instrumentation, it is highly recommended to improve the NEdT performance, especially at the colder Tb, which would require a substantial improvement in the noise reduction measures. Alternatively, regarding algorithm improvement, there are at least two areas to be investigated further. The first is to improve the accuracy of TbECLR by utilizing better information on atmospheric water vapor. Currently, the surface humidity is used as a proxy for the total atmospheric water content, or total precipitable water (TPW), in the TbECLR estimation. When the surface humidity does not properly represent TPW, the estimated TbECLR is in error, and consequently the detection results are also in error. Thus, the utilization of TPW from a collocated instrument, such as a microwave radiometer or GPS observation, will be further investigated [30]. Another possibility lies in improving the threshold values used in both tests by utilizing the detection results, for example, by re-evaluating the temporal variability using the measured clear sky Tb. Furthermore, the whole process ought to be performed in the radiance domain instead of the Tb domain, in order to resolve the non-linearity problem in the digitization of the input signal.
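The non-linearity between radiance and Tb can be illustrated with the Planck function. The sketch below is a monochromatic approximation under stated assumptions: the 10.5 µm wavelength is a hypothetical band center and not the actual IRT spectral response, and the function names are illustrative only.

```python
import math

H = 6.62607015e-34      # Planck constant (J s)
C = 2.99792458e8        # speed of light (m/s)
K_B = 1.380649e-23      # Boltzmann constant (J/K)
WAVELENGTH_M = 10.5e-6  # hypothetical band center; not the actual IRT response

def planck_radiance(tb_celsius, wavelength=WAVELENGTH_M):
    """Blackbody spectral radiance (W m^-2 sr^-1 m^-1) at brightness temperature Tb."""
    t_kelvin = tb_celsius + 273.15
    x = H * C / (wavelength * K_B * t_kelvin)
    return 2.0 * H * C**2 / wavelength**5 / math.expm1(x)

def radiance_per_degree(tb_celsius, delta=0.5):
    """Central-difference estimate of the radiance change per 1 deg C change in Tb."""
    upper = planck_radiance(tb_celsius + delta)
    lower = planck_radiance(tb_celsius - delta)
    return (upper - lower) / (2.0 * delta)

warm_sensitivity = radiance_per_degree(20.0)
cold_sensitivity = radiance_per_degree(-50.0)

# How much larger the Tb step for a fixed radiance step is at -50 deg C than at +20 deg C.
step_ratio = warm_sensitivity / cold_sensitivity
```

Under these assumptions, a fixed radiance increment corresponds to a Tb increment that is between two and three times larger at −50 °C than at +20 °C, which is why uniform digitization of the signal produces a coarser effective Tb resolution for cold scenes.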

Finally, it is quite important to use proper reference data for an accurate validation of algorithm performance, especially for high-altitude and optically thin clouds. When a ceilometer with limited detection capability for high clouds is used for the algorithm validation, the estimated POD is erroneously higher than the actual performance obtained from the comparison with the MPL data.