An Automatic Random Forest-OBIA Algorithm for Early Weed Mapping between and within Crop Rows Using UAV Imagery

Abstract

1. Introduction

2. Materials and Methods

2.1. Study Site and UAV Flights

2.2. DSM and Orthomosaic Generation

2.3. Image Analysis Algorithm Description

2.3.1. Plot Field Analysis

- Object height calculation: A chessboard segmentation algorithm was used to divide the DSM of every sub-parcel into 0.5 m squares. The minimum height above sea level within each square was then calculated to build the digital terrain model (DTM), i.e., a representation of the terrain height. Next, the orthomosaic, containing both spectral and height information, was segmented using the multiresolution segmentation algorithm (MRS) [31] (Figure 4). MRS is a bottom-up segmentation algorithm based on a pairwise region-merging technique, in which the image is subdivided into homogeneous objects according to several operator-defined parameters (scale, color/shape and smoothness/compactness). The scale parameter was fixed at 17, the value obtained in a previous study in which a large set of herbaceous-crop plot images was tested with a tool for optimizing the scale value, following [32]. This tool was implemented as a generic tool for the eCognition software to parameterize multi-scale image segmentation, making GEOBIA analysis objective and automatable. In addition, the shape and compactness parameters were fixed at 0.4 and 0.5, respectively, values well suited to vegetation detection in UAV imagery [33]. Once the objects were created, their height above the terrain was extracted from the Crop Height Model (CHM), which was calculated by subtracting the DTM from the DSM.

- Shadow removal: Shadows were removed using the brightness feature, an overall intensity measurement, because shadows are less bright than both vegetation and bare soil [34]. Based on previous studies, the 15th percentile of brightness was used as the threshold to accurately select and remove shadow objects (Figure 4).

- Vegetation thresholding: Once homogeneous objects had been created by MRS, vegetation objects (crop and weeds) were separated from bare soil using the NIR/G band ratio. This ratio is easy to implement, was designed to detect structural and color differences among land classes [35], and is insensitive to soil effects. Differences in this ratio have been used to separate vegetation from bare soil because NIR reflectance is higher for vegetation than for bare soil, whereas vegetation and bare soil show more similar values in a broad green waveband; dividing NIR by G therefore enhances these differences [36]. The optimum ratio value for discriminating vegetation was determined with an automatic, iterative thresholding approach based on the Otsu method [37], implemented in eCognition following Torres-Sánchez et al. [28].

- Crop row detection: A new image object level was created to determine the main orientation of the crop rows. At this level, a merging operation was performed to create elongated vegetation objects following the shape of a crop row: two candidate vegetation objects were merged only if the length/width ratio of the target object increased after the merge. Next, the largest object whose orientation was close to that of the rows was classified as a seed object belonging to a crop row. Finally, the seed object was grown in both directions along the row orientation, and this merging loop was repeated until all the crop rows reached the parcel limits. The procedure is fully described in Peña et al. [38]. Once a stripe had been classified as a sunflower (or cotton) crop row, the distance between rows was used to mask the adjacent stripes, so that weed infestations would not be misclassified as crop rows (Figure 4).

- Sample pre-classification: Once the vegetation objects had been identified, the algorithm searched every sub-parcel for potential crop and weed samples. Inside each crop row, vegetation objects taller than the average height of the plants in that row were pre-classified as crop. All vegetation objects outside the crop rows were pre-classified as weeds (Figure 4). Weeds growing inside the rows, regardless of their height, could then be correctly classified in the next step.
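The object height calculation described above (chessboard DTM, CHM = DSM − DTM, then per-object height) can be sketched with NumPy. This is a minimal, hypothetical illustration rather than the authors' eCognition rule set: it assumes the DSM is a 2-D array in metres with square pixels of known size, assigns every pixel of a 0.5 m square that square's minimum elevation (the text does not say whether the DTM is interpolated between squares), and takes object labels from any prior segmentation standing in for MRS. All function names are illustrative.

```python
import numpy as np


def chessboard_dtm(dsm, pixel_size_m, square_m=0.5):
    """Approximate a DTM: every pixel of a square tile gets the tile's minimum DSM elevation.

    Assumption: no interpolation between squares (piecewise-constant terrain).
    """
    block = max(1, int(round(square_m / pixel_size_m)))  # tile edge length in pixels
    dtm = np.empty_like(dsm, dtype=float)
    rows, cols = dsm.shape
    for r in range(0, rows, block):
        for c in range(0, cols, block):
            tile = dsm[r:r + block, c:c + block]
            dtm[r:r + block, c:c + block] = np.nanmin(tile)
    return dtm


def crop_height_model(dsm, dtm):
    """CHM = DSM - DTM: height of each pixel above the estimated terrain."""
    return dsm - dtm


def object_heights(chm, labels):
    """Mean CHM height of each segment; `labels` is an integer image from a prior segmentation."""
    return {int(i): float(chm[labels == i].mean()) for i in np.unique(labels)}


# Toy 4 x 4 DSM (m above sea level) with 0.25 m pixels, i.e. 2 x 2-pixel squares.
dsm = np.array([[100.0, 100.2, 101.0, 101.0],
                [100.1, 100.5, 101.3, 101.0],
                [ 99.0,  99.0, 100.0, 100.4],
                [ 99.2,  99.8, 100.0, 100.1]])
labels = np.array([[1, 1, 2, 2],
                   [1, 1, 2, 2],
                   [3, 3, 4, 4],
                   [3, 3, 4, 4]])

dtm = chessboard_dtm(dsm, pixel_size_m=0.25)
chm = crop_height_model(dsm, dtm)
heights = object_heights(chm, labels)
print({k: round(v, 3) for k, v in heights.items()})  # {1: 0.2, 2: 0.075, 3: 0.25, 4: 0.125}
```

Taking the minimum per square presumes that at least some bare soil is visible in every 0.5 m square, which is plausible at early growth stages but would bias the DTM upwards under a closed canopy.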
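The shadow-removal rule (objects darker than the 15th percentile of brightness are treated as shadow) can be sketched as follows. Assumptions, not taken from the paper: each object is summarised by the mean value of each spectral band; brightness is the unweighted mean of those band means (eCognition's brightness feature also allows layer weights); and objects exactly at the threshold count as shadow (`<=`). The function names are hypothetical.

```python
import numpy as np


def object_brightness(band_means):
    """Brightness per object as the unweighted mean of its band means.

    `band_means` has one row per object and one column per spectral band.
    Assumption: equal weights for all bands.
    """
    return np.asarray(band_means, dtype=float).mean(axis=1)


def shadow_mask(band_means, percentile=15.0):
    """Flag objects whose brightness is at or below the given percentile (assumption: '<=')."""
    brightness = object_brightness(band_means)
    threshold = np.percentile(brightness, percentile)
    return brightness <= threshold, threshold


# Ten toy objects with three bands each; their brightness values are 10, 20, ..., 100.
band_means = np.repeat(np.arange(10, 101, 10)[:, None], 3, axis=1)
is_shadow, thr = shadow_mask(band_means)
print(round(float(thr), 2), int(is_shadow.sum()))  # 23.5 2
```

Because the threshold is a percentile of the field's own objects, it adapts to each flight's illumination, but it also always flags roughly 15% of objects, whether or not that many shadows are present.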
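The vegetation-thresholding step can be illustrated with a small NumPy version of Otsu's method applied to per-object NIR/G values. This is only a sketch: the paper relies on the automatic, iterative eCognition implementation following Torres-Sánchez et al. [28], whereas the 256-bin histogram, the toy ratio values and the function names (`nir_g_ratio`, `otsu_threshold`) here are assumptions.

```python
import numpy as np


def nir_g_ratio(nir_mean, green_mean):
    """Per-object NIR/G ratio from the mean NIR and green values of each object."""
    return np.asarray(nir_mean, dtype=float) / np.asarray(green_mean, dtype=float)


def otsu_threshold(values, nbins=256):
    """Return the cut that maximises the between-class variance of a 1-D sample (Otsu's method).

    Assumption: a 256-bin histogram of the object values.
    """
    hist, edges = np.histogram(np.asarray(values, dtype=float), bins=nbins)
    centers = (edges[:-1] + edges[1:]) / 2
    w0 = np.cumsum(hist)                # objects in bins 0..i (class 0 for a cut after bin i)
    w1 = w0[-1] - w0                    # objects in the remaining bins (class 1)
    s0 = np.cumsum(hist * centers)
    m0 = s0 / np.maximum(w0, 1)         # class means, guarding against empty classes
    m1 = (s0[-1] - s0) / np.maximum(w1, 1)
    between = w0 * w1 * (m0 - m1) ** 2  # proportional to the between-class variance
    return edges[int(np.argmax(between)) + 1]


# Toy objects: three bare-soil-like and three vegetation-like NIR/G ratios.
ratio = nir_g_ratio([90, 100, 110, 290, 300, 310], [100, 100, 100, 100, 100, 100])
t = otsu_threshold(ratio)
is_vegetation = ratio > t
print(is_vegetation.tolist())  # [False, False, False, True, True, True]
```

Using counts instead of probabilities scales the between-class variance by a constant, so the selected cut is the same as in the textbook formulation.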
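The merging criterion used for crop row detection (two vegetation objects are merged only if the target's length/width ratio increases) can be sketched as below. eCognition derives length/width from its own object geometry; here, as a simplifying assumption, length and width are the pixel extents of an object along and across a known row orientation, and all names are hypothetical. Seed selection and the full row-growing loop of Peña et al. [38] are not reproduced.

```python
import numpy as np


def length_width_ratio(pixels, row_angle_rad):
    """Extent along the row direction divided by extent across it, for (x, y) pixel coordinates.

    Assumption: length/width approximated by pixel extents along/across a known row angle.
    """
    p = np.asarray(pixels, dtype=float)
    along_dir = np.array([np.cos(row_angle_rad), np.sin(row_angle_rad)])
    across_dir = np.array([-np.sin(row_angle_rad), np.cos(row_angle_rad)])
    along, across = p @ along_dir, p @ across_dir
    length = along.max() - along.min() + 1  # +1 so a single pixel has extent 1
    width = across.max() - across.min() + 1
    return length / width


def accept_merge(target, candidate, row_angle_rad):
    """Merge only if the merged object is more elongated than the target was on its own."""
    before = length_width_ratio(target, row_angle_rad)
    after = length_width_ratio(np.vstack([target, candidate]), row_angle_rad)
    return after > before


row = [(0, 0), (1, 0), (2, 0)]                           # short strip along the x axis
print(accept_merge(row, [(3, 0), (4, 0)], 0.0))          # True: extends the strip lengthwise
print(accept_merge(row, [(1, 1), (1, 2), (1, 3)], 0.0))  # False: widens the strip
```

The rule favours candidates lying in line with the row, which is why weeds beside a row tend not to be absorbed into it.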
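The sample pre-classification rule can be written as a short function. It is a hypothetical sketch: each vegetation object is assumed to carry the identifier of the crop row it falls in (or `None` when it lies between rows) and its mean height from the CHM; the row average is assumed to be the unweighted mean of that row's object heights; and in-row objects that are not taller than the average are left unassigned, since the text defers them to the next classification step.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class VegetationObject:
    obj_id: int
    row_id: Optional[int]  # crop row containing the object; None if between rows
    height_m: float        # mean height above terrain taken from the CHM


def preclassify(objects: List[VegetationObject]) -> Dict[int, str]:
    """Label sample candidates as 'crop' or 'weed'; the remaining objects stay 'unassigned'."""
    heights_by_row: Dict[int, List[float]] = {}
    for obj in objects:
        if obj.row_id is not None:
            heights_by_row.setdefault(obj.row_id, []).append(obj.height_m)
    # Assumption: row average = unweighted mean of the row's object heights.
    row_mean = {row: mean(hs) for row, hs in heights_by_row.items()}

    labels: Dict[int, str] = {}
    for obj in objects:
        if obj.row_id is None:
            labels[obj.obj_id] = "weed"        # all vegetation outside the crop rows
        elif obj.height_m > row_mean[obj.row_id]:
            labels[obj.obj_id] = "crop"        # taller than its row's average
        else:
            labels[obj.obj_id] = "unassigned"  # left for the next classification step
    return labels


objects = [
    VegetationObject(1, 1, 0.10), VegetationObject(2, 1, 0.15), VegetationObject(3, 1, 0.35),
    VegetationObject(4, 2, 0.40), VegetationObject(5, 2, 0.20), VegetationObject(6, None, 0.05),
]
print(preclassify(objects))
# {1: 'unassigned', 2: 'unassigned', 3: 'crop', 4: 'crop', 5: 'unassigned', 6: 'weed'}
```

Computing the average per row, rather than per field, keeps the crop samples meaningful when plant size varies across the plot.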

2.3.2. RF Training Set Selection and Classification

2.3.3. Classification Enhancement

2.3.4. Prescription Map Generation

2.4. Crop Height Validation

2.5. Weed Detection Validation

3. Results and Discussion

3.1. OBIA-Based Crop Height Estimations

3.2. Automatic RF Training Set Selection and Classification

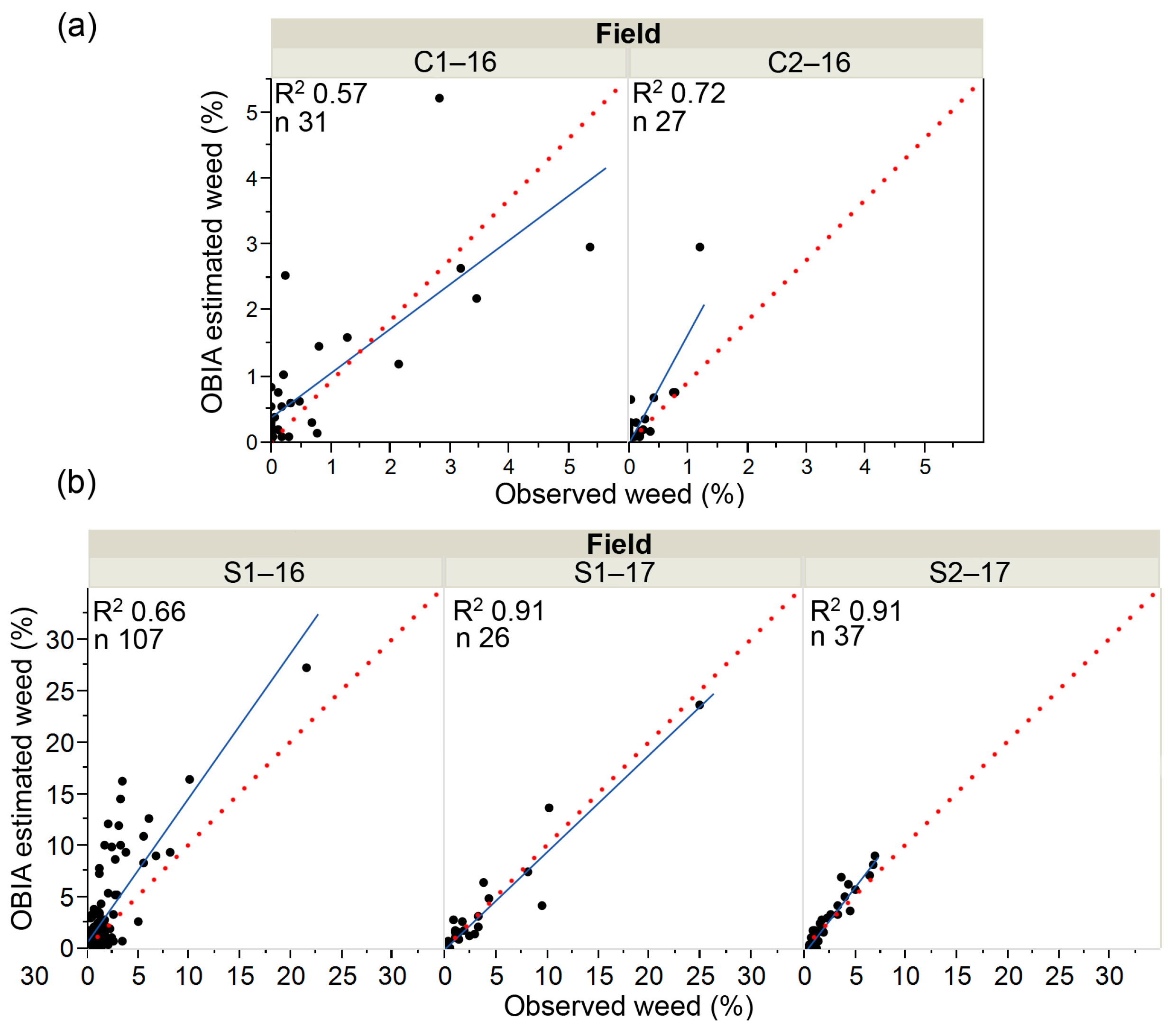

3.3. Weed Detection

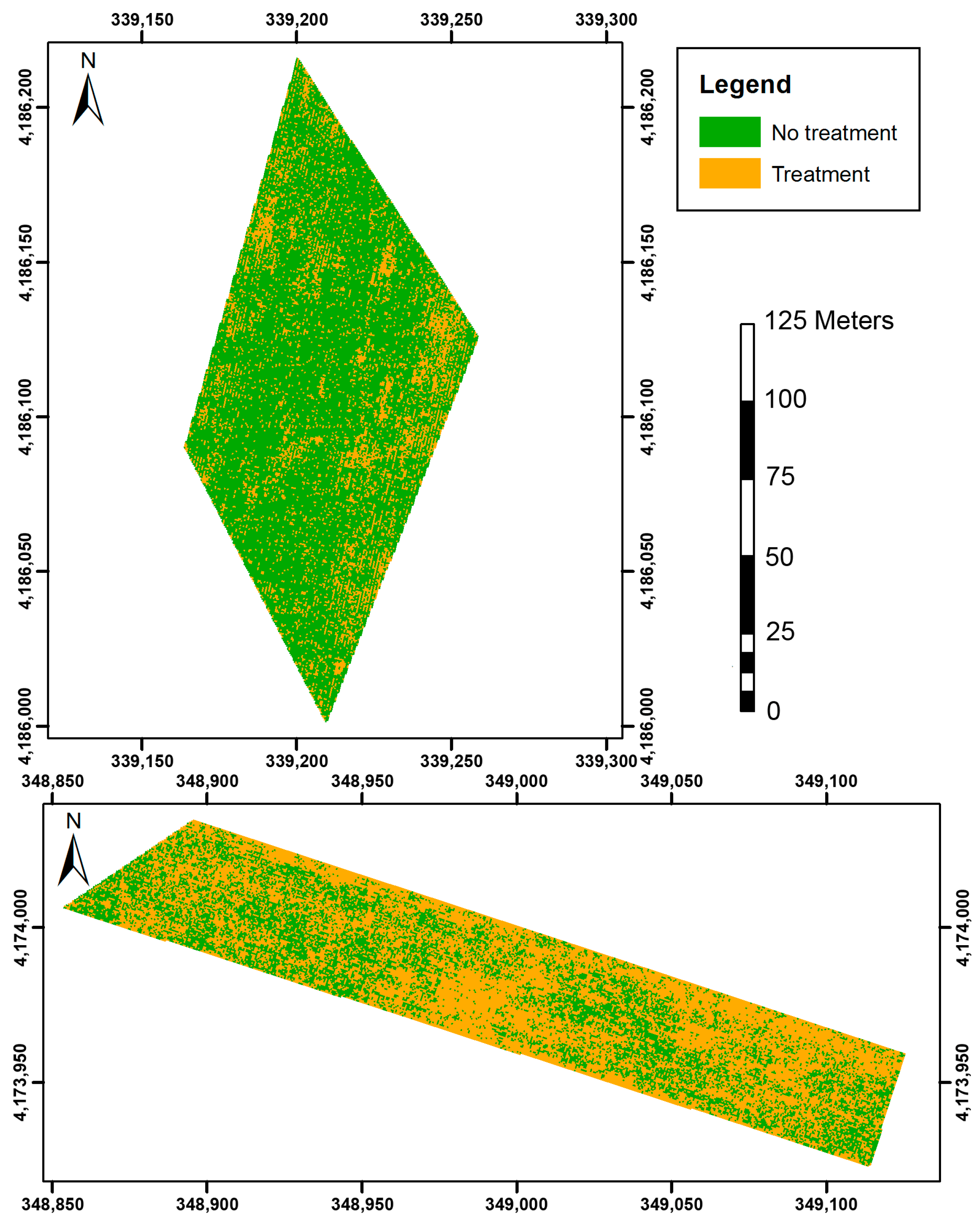

3.4. Prescription Maps

4. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

References

- Peña, J.M.; Torres-Sánchez, J.; Serrano-Pérez, A.; de Castro, A.I.; López-Granados, F. Quantifying efficacy and limits of unmanned aerial vehicle (UAV) technology for weed seedling detection as affected by sensor resolution. Sensors 2015, 15, 5609–5626.

- Castaldi, F.; Pelosi, F.; Pascucci, S.; Casa, R. Assessing the potential of images from unmanned aerial vehicles (UAV) to support herbicide patch spraying in maize. Precis. Agric. 2017, 18, 76–94.

- Pérez-Ortiz, M.; Peña, J.M.; Gutiérrez, P.A.; Torres-Sánchez, J.; Hervás-Martínez, C.; López-Granados, F. A semi-supervised system for weed mapping in sunflower crops using unmanned aerial vehicles and a crop row detection method. Appl. Soft Comput. 2015, 37, 533–544.

- López-Granados, F. Weed detection for site-specific weed management: Mapping and real-time approaches. Weed Res. 2011, 51, 1–11.

- López-Granados, F.; Torres-Sánchez, J.; de Castro, A.I.; Serrano-Pérez, A.; Mesas-Carrascosa, F.J.; Peña, J.M. Object-based early monitoring of a grass weed in a grass crop using high resolution UAV imagery. Agron. Sustain. Dev. 2016, 36, 67.

- Nex, F.; Remondino, F. UAV for 3D mapping applications: A review. Appl. Geomat. 2013, 6, 1–15.

- Bendig, J.; Bolten, A.; Bennertz, S.; Broscheit, J.; Eichfuss, S.; Bareth, G. Estimating biomass of barley using crop surface models (CSMs) derived from UAV-based RGB imaging. Remote Sens. 2014, 6, 10395–10412.

- Geipel, J.; Link, J.; Claupein, W. Combined spectral and spatial modeling of corn yield based on aerial images and crop surface models acquired with an unmanned aircraft system. Remote Sens. 2014, 6, 10335.

- Iqbal, F.; Lucieer, A.; Barry, K.; Wells, R. Poppy crop height and capsule volume estimation from a single UAS flight. Remote Sens. 2017, 9, 647.

- Ostos, F.; de Castro, A.I.; Pistón, F.; Torres-Sánchez, J.; Peña, J.M. High-throughput phenotyping of bioethanol potential in cereals by using multi-temporal UAV-based imagery. Front. Plant Sci. 2018. under review.

- Peña, J.M.; Torres-Sánchez, J.; de Castro, A.I.; Kelly, M.; López-Granados, F. Weed mapping in early-season maize fields using object-based analysis of unmanned aerial vehicle (UAV) images. PLoS ONE 2013, 8, e77151.

- Blaschke, T.; Hay, G.J.; Kelly, M.; Lang, S.; Hofmann, P.; Addink, E.; Queiroz Feitosa, R.; van der Meer, F.; van der Werff, H.; van Coillie, F.; et al. Geographic object-based image analysis—Towards a new paradigm. ISPRS J. Photogramm. Remote Sens. 2014, 87, 180–191.

- López-Granados, F.; Torres-Sánchez, J.; Serrano-Pérez, A.; de Castro, A.I.; Mesas-Carrascosa, F.J.; Peña, J.M. Early season weed mapping in sunflower using UAV technology: Variability of herbicide treatment maps against weed thresholds. Precis. Agric. 2016, 17, 183–199.

- Pérez-Ortiz, M.; Peña, J.M.; Gutiérrez, P.A.; Torres-Sánchez, J.; Hervás-Martínez, C.; López-Granados, F. Selecting patterns and features for between- and within- crop-row weed mapping using UAV-imagery. Expert Syst. Appl. 2016, 47, 85–94.

- Ghimire, B.; Rogan, J.; Galiano, V.R.; Panday, P.; Neeti, N. An evaluation of bagging, boosting, and random forests for land-cover classification in Cape Cod, Massachusetts, USA. GISci. Remote Sens. 2012, 49, 623–643.

- Rodriguez-Galiano, V.F.; Ghimire, B.; Rogan, J.; Chica-Olmo, M.; Rigol-Sanchez, J.P. An assessment of the effectiveness of a random forest classifier for land-cover classification. ISPRS J. Photogramm. Remote Sens. 2012, 67, 93–104.

- Belgiu, M.; Drăguţ, L. Random forest in remote sensing: A review of applications and future directions. ISPRS J. Photogramm. Remote Sens. 2016, 114, 24–31.

- Du, P.; Samat, A.; Waske, B.; Liu, S.; Li, Z. Random forest and rotation forest for fully polarized SAR image classification using polarimetric and spatial features. ISPRS J. Photogramm. Remote Sens. 2015, 105, 38–53.

- Ho, T.K. Random Decision Forests. In Proceedings of the 3rd International Conference on Document Analysis and Recognition, Montreal, QC, Canada, 14–16 August 1995; pp. 278–282.

- Breiman, L. Random Forests. Mach. Learn. 2001, 45, 5–32.

- Li, M.; Ma, L.; Blaschke, T.; Cheng, L.; Tiede, D. A systematic comparison of different object-based classification techniques using high spatial resolution imagery in agricultural environments. Int. J. Appl. Earth Obs. 2016, 49, 87–98.

- Chan, J.C.W.; Beckers, P.; Spanhove, T.; Borre, J.V. An evaluation of ensemble classifiers for mapping Natura 2000 heathland in Belgium using spaceborne angular hyperspectral (CHRIS/Proba) imagery. Int. J. Appl. Earth Obs. 2012, 18, 13–22.

- Foody, G.M. Thematic map comparison: Evaluating the statistical significance of differences in classification accuracy. Photogramm. Eng. Remote Sens. 2004, 70, 627–633.

- Pal, M. Random forest classifier for remote sensing classification. Int. J. Remote Sens. 2005, 26, 217–222.

- Lottes, P.; Khanna, R.; Pfeifer, J.; Siegwart, R.; Stachniss, C. UAV-based crop and weed classification for smart farming. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 3024–3031.

- Ma, L.; Cheng, L.; Li, M.; Liu, Y.; Ma, X. Training set size, scale, and features in Geographic Object-based image analysis of very high resolution unmanned aerial vehicle imagery. ISPRS J. Photogramm. Remote Sens. 2015, 102, 14–27.

- Meier, U. BBCH Monograph: Growth Stages for Mono- and Dicotyledonous Plants, 2nd ed.; Blackwell Wiss.-Verlag: Berlin, Germany, 2001.

- Torres-Sánchez, J.; López-Granados, F.; Serrano, N.; Arquero, O.; Peña, J.M. High-throughput 3-D monitoring of agricultural-tree plantations with unmanned aerial vehicle (UAV) Technology. PLoS ONE 2015, 10, e0130479.

- AESA. Aerial Work—Legal Framework. Available online: http://www.seguridadaerea.gob.es/LANG_EN/cias_empresas/trabajos/rpas/marco/default.aspx (accessed on 13 December 2017).

- Dandois, J.P.; Ellis, E.C. High spatial resolution three-dimensional mapping of vegetation spectral dynamics using computer vision. Remote Sens. Environ. 2013, 136, 259–276.

- Baatz, M.; Schäpe, A. Multiresolution Segmentation: An Optimization Approach for High Quality Multi-Scale Image Segmentation. 2000. Available online: http://www.ecognition.com/sites/default/files/405_baatz_fp_12.pdf (accessed on 6 November 2017).

- Drăguţ, L.; Csillik, O.; Eisank, C.; Tiede, D. Automated parameterisation for multi-scale image segmentation on multiple layers. ISPRS J. Photogramm. Remote Sens. 2014, 88, 119–127.

- Torres-Sánchez, J.; López-Granados, F.; Peña, J.M. An automatic object-based method for optimal thresholding in UAV images: Application for vegetation detection in herbaceous crops. Comput. Electron. Agric. 2015, 114, 43–52.

- eCognition. Definiens Developer 9.2: Reference Book; Definiens AG: Munich, Germany, 2016.

- De Castro, I.A.; Ehsani, R.; Ploetz, R.; Crane, J.H.; Abdulridha, J. Optimum spectral and geometric parameters for early detection of laurel wilt disease in avocado. Remote Sens. Environ. 2015, 171, 33–44.

- Peña, J.M.; López-Granados, F.; Jurado-Expósito, M.; García-Torres, L. Mapping Ridolfia segetum patches in sunflower crop using remote sensing. Weed Res. 2007, 47, 164–172.

- Otsu, N. A Threshold Selection Method from Gray-Level Histograms. IEEE Trans. Syst. Man Cybern. 1979, 9, 62–66.

- Peña, J.M.; Kelly, M.; de Castro, A.I.; López-Granados, F. Object-based approach for crop row characterization in UAV images for site-specific weed management. In Proceedings of the 4th International Conference on Geographic Object-Based Image Analysis (GEOBIA), Rio de Janeiro, Brazil, 7–9 May 2012; pp. 426–430.

- Williams, G.J. Rattle: A data mining GUI for R. R. J. 2009, 1, 45–55.

- Gonzalez-de-Santos, P.; Ribeiro, A.; Fernandez-Quintanilla, C.; Lopez-Granados, F.; Brandstoetter, M.; Tomic, S.; Pedrazzi, S.; Peruzzi, A.; Pajares, G.; Kaplanis, G.; et al. Fleets of robots for environmentally-safe pest control in agriculture. Precis. Agric. 2017, 18, 574–614.

- Whiteside, T.G.; Maier, S.F.; Boggs, G.S. Area-based and location-based validation of classified image objects. Int. J. Appl. Earth Obs. Geoinform. 2014, 28, 117–130.

- Longchamps, L.; Panneton, B.; Simard, M.J.; Leroux, G.D. An imagery-based weed cover threshold established using expert knowledge. Weed Sci. 2014, 62, 177–185.

- Chu, T.; Chen, R.; Landivar, J.A.; Maeda, M.M.; Yang, C.; Starek, M.J. Cotton growth modeling and assessment using unmanned aircraft system visual-band imagery. J. Appl. Remote Sens. 2016, 10, 036018.

- Watanabe, K.; Guo, W.; Arai, K.; Takanashi, H.; Kajiya-Kanegae, H.; Kobayashi, M.; Yano, K.; Tokunaga, T.; Fujiwara, T.; Tsutsumi, N.; et al. High-throughput phenotyping of sorghum plant height using an unmanned aerial vehicle and its application to genomic prediction modeling. Front. Plant Sci. 2017, 8, 421.

- Varela, S.; Assefa, Y.; Prasad, P.V.V.; Peralta, N.R.; Griffin, T.W.; Sharda, A.; Ferguson, A.; Ciampitti, I.A. Spatio-temporal evaluation of plant height in corn via unmanned aerial systems. J. Appl. Remote Sens. 2017, 11, 036013.

- Colditz, R.R. An evaluation of different training sample allocation schemes for discrete and continuous land cover classification using decision tree-based algorithms. Remote Sens. 2015, 7, 9655–9681.

- Vetrivel, A.; Gerke, M.; Kerle, N.; Vosselman, G. Identification of damage in buildings based on gaps in 3D point clouds from very high resolution oblique airborne images. ISPRS J. Photogramm. Remote Sens. 2015, 105, 61–78.

- Yu, Q.; Gong, P.; Clinton, N.; Biging, G.; Kelly, M.; Schirokauer, D. Classification with airborne high spatial resolution remote sensing imagery. Photogramm. Eng. Remote Sens. 2006, 72, 799–811.

- Gibson, K.D.; Dirk, R.; Medlin, C.R.; Johnston, L. Detection of weed species in soybean using multispectral digital images. Weed Technol. 2004, 18, 742–749.

- De Castro, A.I.; López Granados, F.; Jurado-Expósito, M. Broad-scale cruciferous weed patch classification in winter wheat using QuickBird imagery for in-season site-specific control. Precis. Agric. 2013, 14, 392–413.

- Pages, E.R.; Cerrudo, D.; Westra, P.; Loux, M.; Smith, K.; Foresman, C.; Wright, H.; Swanton, C.J. Why early season weed control is important in maize. Weed Sci. 2014, 60, 423–430.

- O’Donovan, J.T.; de St. Remy, E.A.; O’Sullivan, P.A.; Dew, D.A.; Sharma, A.K. Influence of the relative time of emergence of wild oat (Avena fatua) on yield loss of barley (Hordeum vulgare) and wheat (Triticum aestivum). Weed Sci. 1985, 33, 498–503.

- Swanton, C.J.; Mahoney, K.J.; Chandler, K.; Gulden, R.H. Integrated weed management: Knowledge based weed management systems. Weed Sci. 2008, 56, 168–172.

- Knezevic, S.Z.; Evans, S.P.; Blankenship, E.E.; Acker, R.C.V.; Lindquist, J.L. Critical period for weed control: The concept and data analysis. Weed Sci. 2002, 50, 773–786.

- Judge, C.A.; Neal, J.C.; Derr, J.F. Response of Japanese Stiltgrass (Microstegium vimineum) to Application Timing, Rate, and Frequency of Postemergence Herbicides. Weed Technol. 2005, 19, 912–917.

- Wiles, L.J. Beyond patch spraying: Site-specific weed management with several herbicides. Precis. Agric. 2009, 10, 277–290.

- Hamouz, P.; Hamouzová, K.; Holec, J.; Tyšer, L. Impact of site-specific weed management on herbicide savings and winter wheat yield. Plant Soil Environ. 2013, 59, 101–107.

- Gerhards, R.; Oebel, H. Practical experiences with a system for site-specific weed control in arable crops using real-time image analysis and GPS-controlled patch spraying. Weed Res. 2006, 46, 185–193.

- Chauhan, B.S.; Singh, R.G.; Mahajan, G. Ecology and management of weeds under conservation agriculture: A review. Crop Prot. 2012, 38, 57–65.

- Dunan, C.M.; Westra, P.; Schweizer, E.E.; Lybecker, D.W.; Moore, F.D., III. The concept and application of early economic period threshold: The case of DCPA in onions (Allium cepa). Weed Sci. 1995, 43, 634–639.

- Weaver, S.E.; Kropff, M.J.; Groeneveld, R.M.W. Use of ecophysiological models for crop—Weed interference: The critical period of weed interference. Weed Sci. 1992, 40, 302–307.

- García-Santillan, I.; Pajares, G. On-line crop/weed discrimination through the Mahalanobis distance from images in maize fields. Biosyst. Eng. 2018, 166, 28–43.

- Barreda, J.; Ruíz, A.; Ribeiro, A. Seguimiento Visual de Líneas de Cultivo (Visual Tracking of Crop Rows). Master’s Thesis, Universidad de Murcia, Murcia, Spain, 2009.

- Slaughter, D.C.; Giles, D.K.; Downey, D. Autonomous robotic weed control systems: A review. Comput. Electron. Agric. 2008, 61, 63–78.

- Andújar, D.; Escola, A.; Dorado, J.; Fernandez-Quintanilla, C. Weed discrimination using ultrasonic sensors. Weed Res. 2011, 51, 543–547.

| Crop | Name | Flight Date | Crop Row Separation (m) | Area (ha) | Weed Validation Frames |
|---|---|---|---|---|---|
| Sunflower | S1–16 | June 2016 | 0.8 | 4.22 | 107 |
| Sunflower | S1–17 | May 2017 | 0.7 | 1.03 | 26 |
| Sunflower | S2–17 | May 2017 | 0.7 | 1.56 | 37 |
| Cotton | C1–16 | June 2016 | 0.95 | 1.13 | 31 |
| Cotton | C2–16 | June 2016 | 0.95 | 1.05 | 27 |

Training Set Data: selected training objects per class (%) * and selected training area over total field area Φ

| Field | Flight Altitude (m) | Objects: Bare Soil (%) * | Objects: Crop (%) * | Objects: Shadow (%) * | Objects: Weed (%) * | Area: Bare Soil Φ | Area: Crop Φ | Area: Shadow Φ | Area: Weed Φ | Area: Total Φ |
|---|---|---|---|---|---|---|---|---|---|---|
| C1–16 | 30 | 23 | 28 | 27 | 22 | 1.0 | 0.9 | 0.7 | 0.5 | 3.0 |
| C2–16 | 30 | 23 | 28 | 28 | 22 | 0.6 | 0.5 | 0.5 | 0.3 | 1.8 |
| S1–16 | 30 | 19 | 28 | 30 | 23 | 1.4 | 1.1 | 1.1 | 0.8 | 4.2 |
| S1–16 | 60 | 17 | 32 | 36 | 16 | 2.6 | 2.0 | 1.4 | 0.5 | 6.5 |
| S1–17 | 30 | 21 | 28 | 29 | 23 | 1.5 | 1.3 | 1.2 | 0.9 | 4.9 |
| S1–17 | 60 | 18 | 30 | 33 | 19 | 2.9 | 1.7 | 1.7 | 0.8 | 7.1 |
| S2–17 | 30 | 20 | 28 | 29 | 23 | 1.4 | 1.4 | 1.4 | 1.0 | 5.2 |
| S2–17 | 60 | 18 | 30 | 33 | 19 | 2.5 | 1.8 | 1.7 | 0.9 | 6.8 |

| Crop | Field | WdA (%) |
|---|---|---|
| Cotton | C1–16 | 84.0 |
| Cotton | C2–16 | 63.0 |
| Sunflower | S1–16 | 59.1 |
| Sunflower | S1–17 | 87.9 |
| Sunflower | S2–17 | 81.1 |

| Crop | Field | Herbicide Saving (%) |
|---|---|---|
| Cotton | C1–16 | 60 |
| Cotton | C2–16 | 79 |
| Sunflower | S1–16 | 27 |
| Sunflower | S1–17 | 37 |
| Sunflower | S2–17 | 28 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

De Castro, A.I.; Torres-Sánchez, J.; Peña, J.M.; Jiménez-Brenes, F.M.; Csillik, O.; López-Granados, F. An Automatic Random Forest-OBIA Algorithm for Early Weed Mapping between and within Crop Rows Using UAV Imagery. Remote Sens. 2018, 10, 285. https://doi.org/10.3390/rs10020285
