Intra-Season Crop Height Variability at Commercial Farm Scales Using a Fixed-Wing UAV

Abstract

1. Introduction

2. Materials and Methods

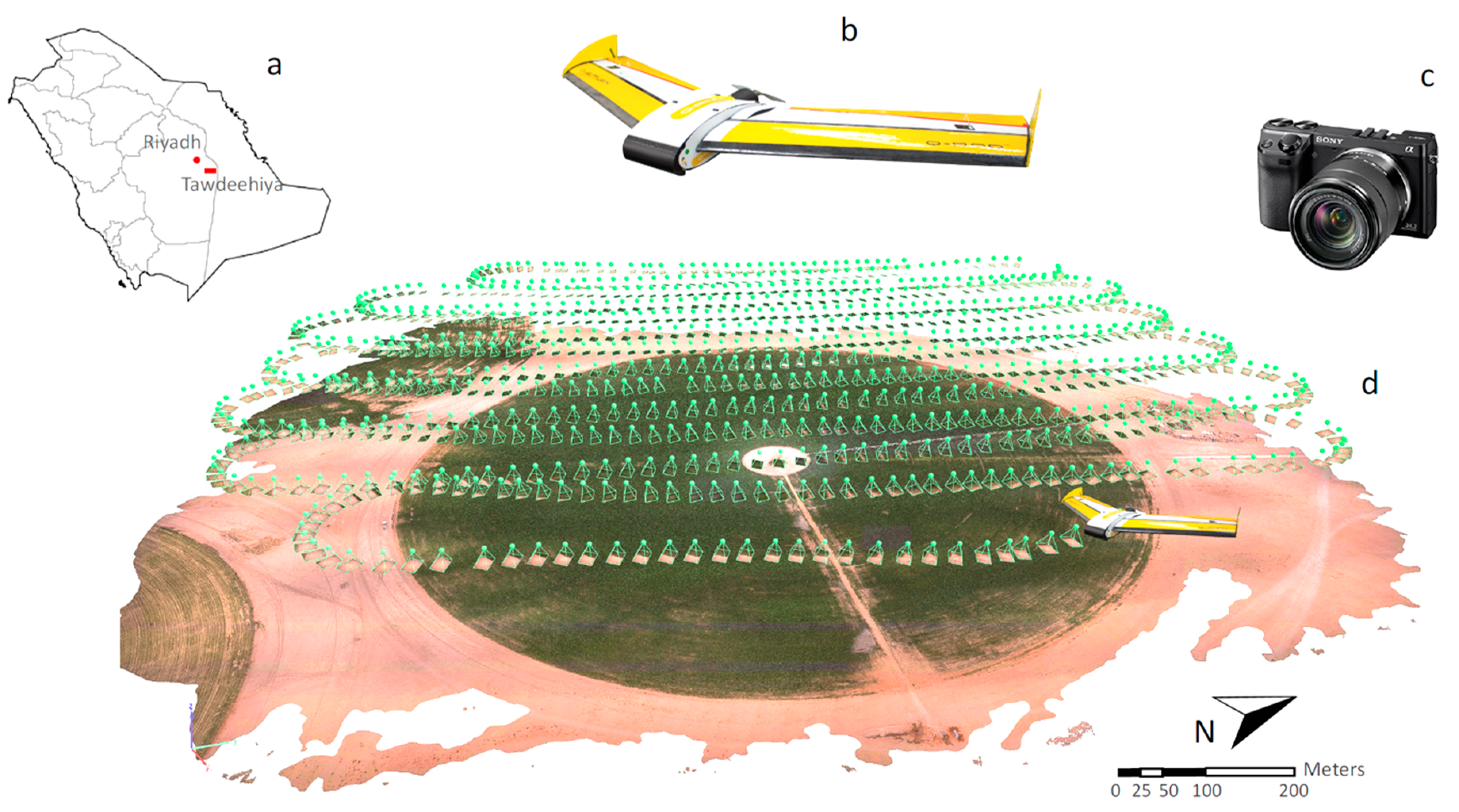

2.1. Study Site and Field Conditions

2.2. Description of UAV Flight Control and Sensor Payload

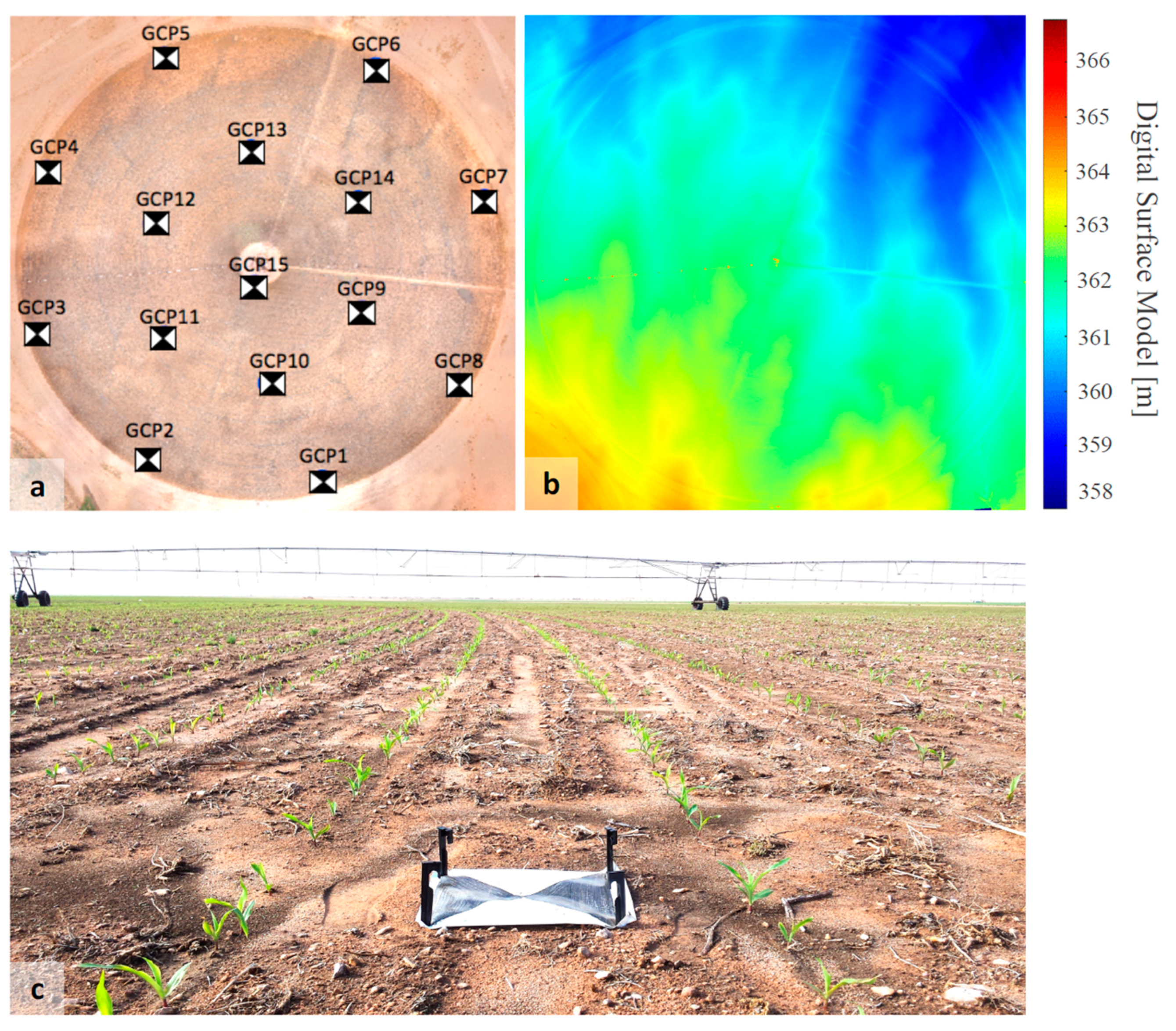

2.3. Image Processing: Georeferencing Using Computer Vision Approaches

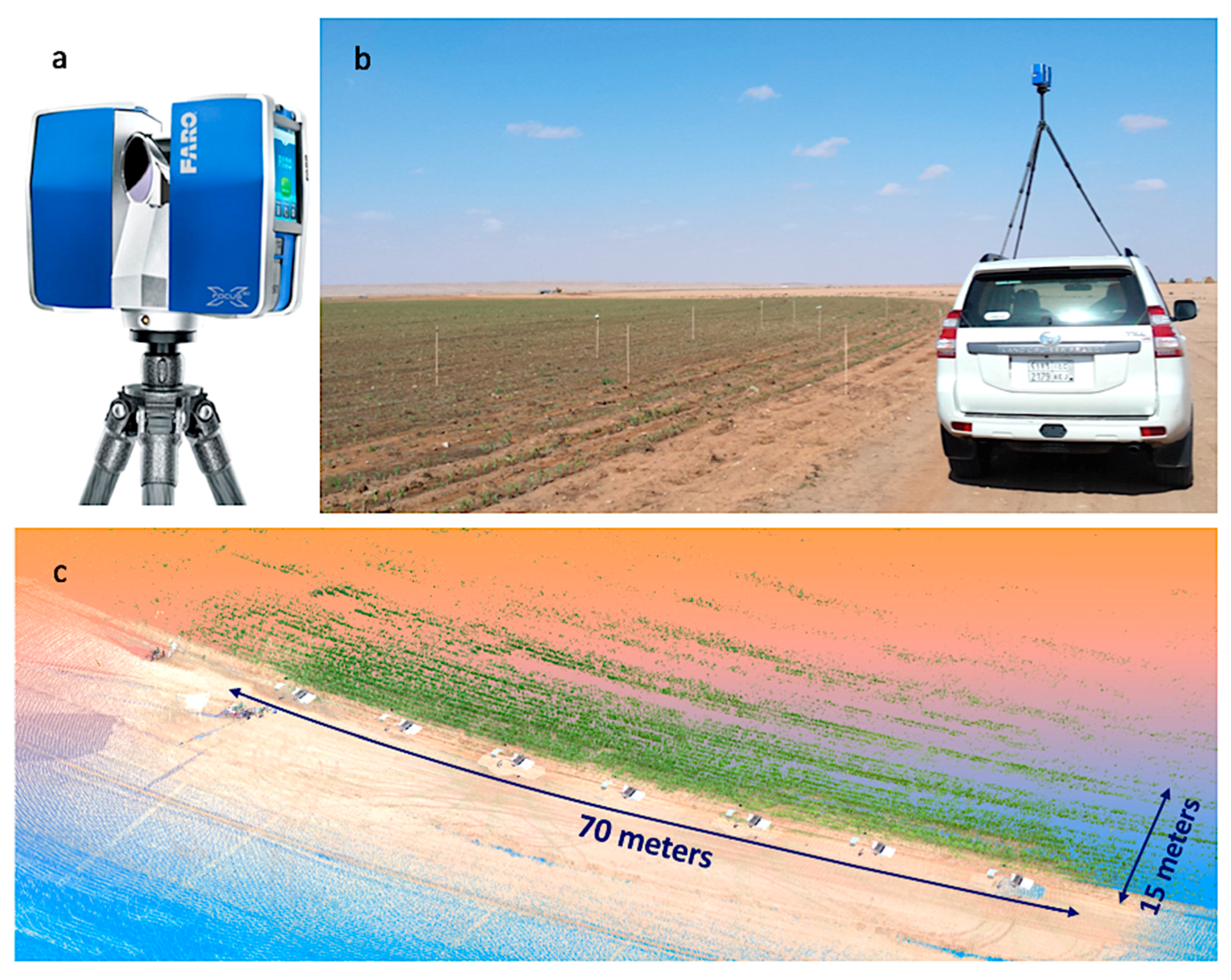

2.4. Crop Height Evaluation with LiDAR Data

3. Results and Discussion

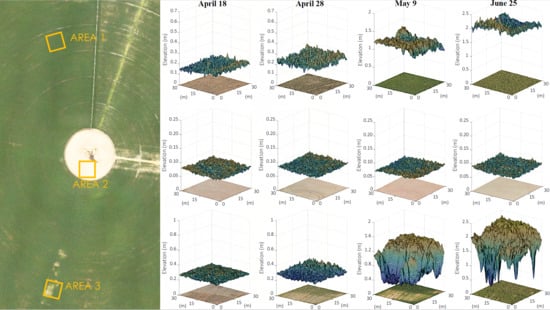

3.1. Crop Height Determination with UAV Point Cloud

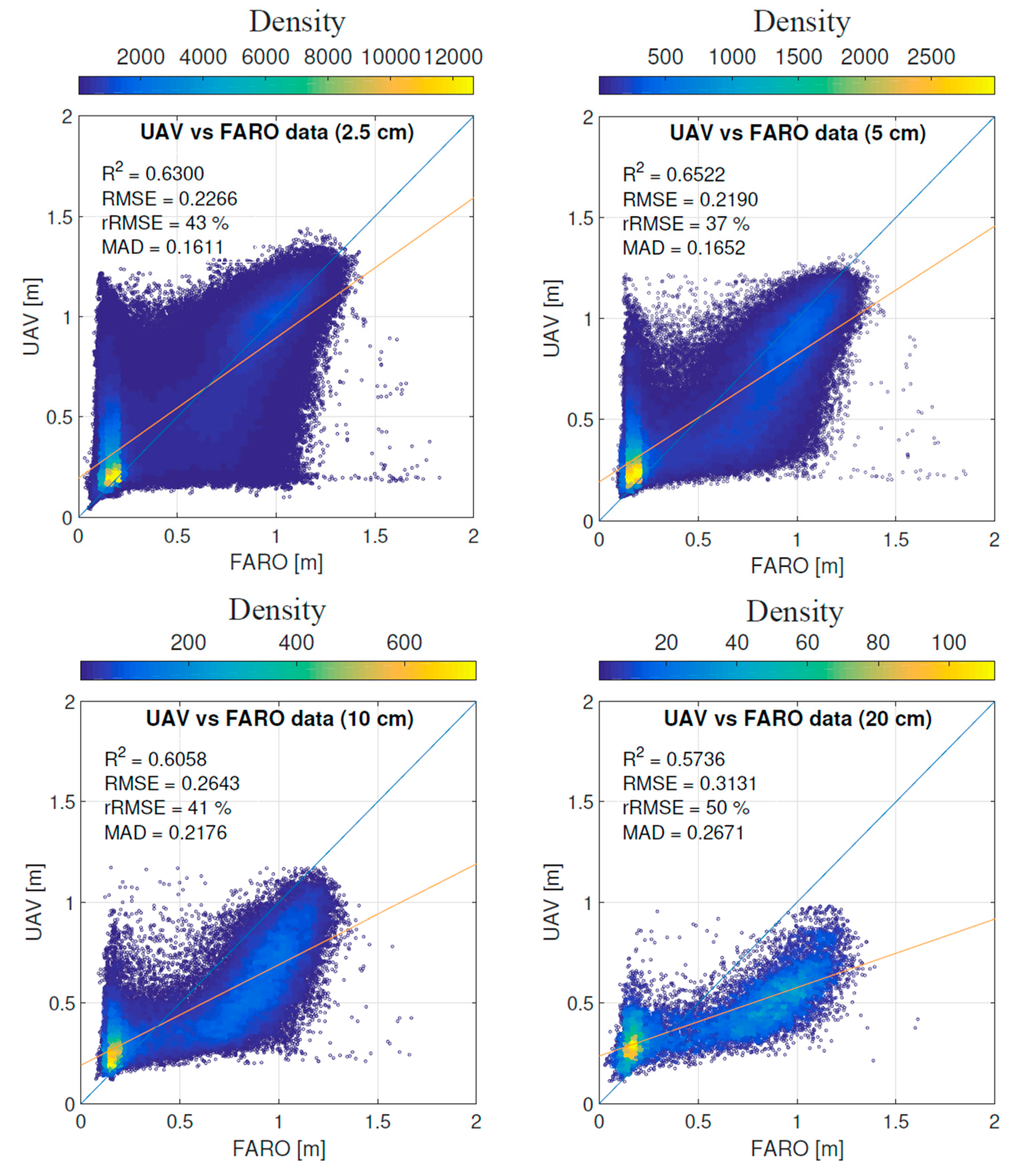

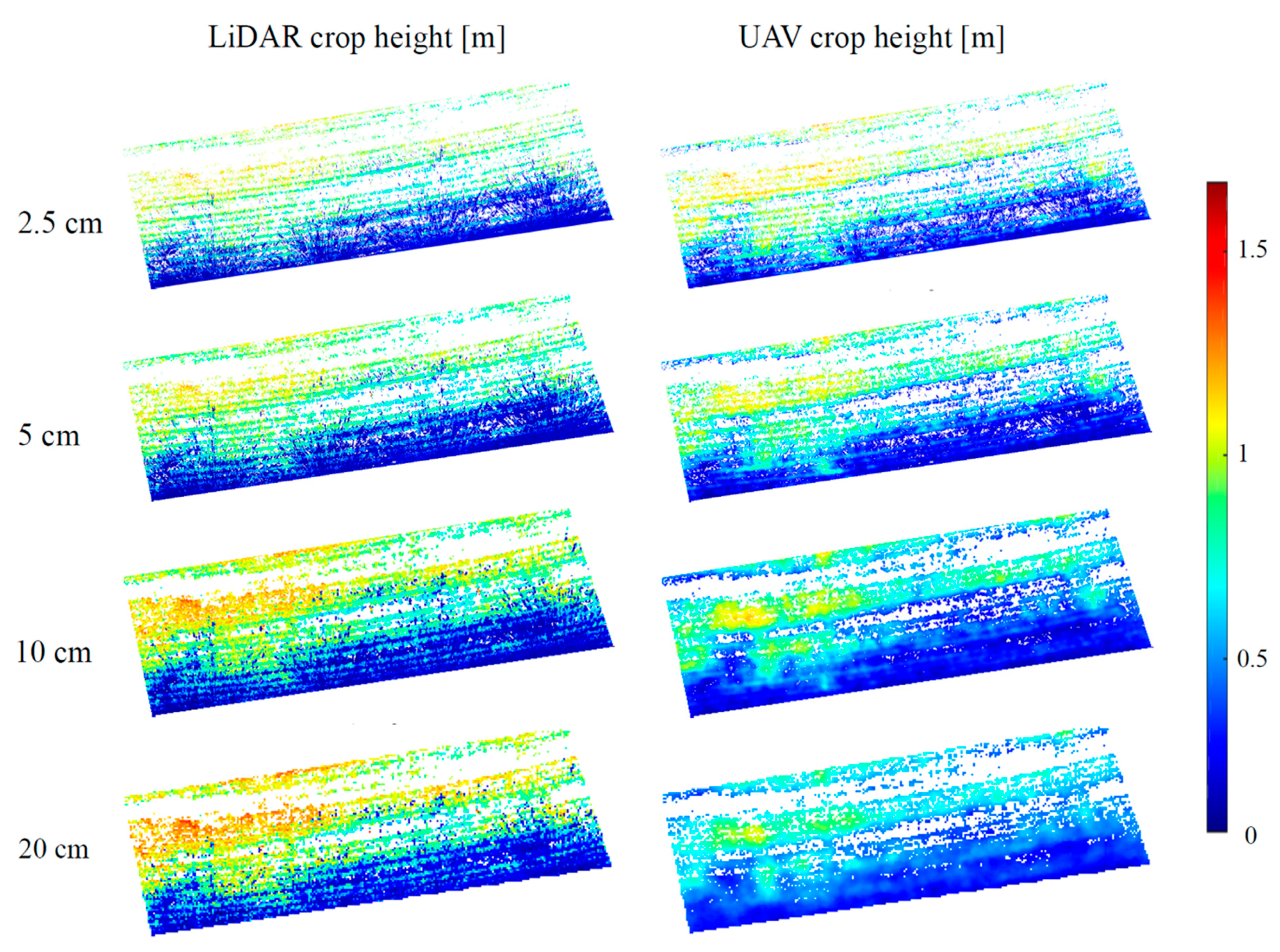

3.2. Evaluation of UAV-Based Retrievals with LiDAR Scans

3.3. Application and Limitations

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| Flight Date (2016) | DOY | Images | Resolution (pixels) | Flight Time | GCP Elevation Range (m) | Min. GSD (cm/pixel) |
|---|---|---|---|---|---|---|
| 4 April | 95 | 892 | 6000 × 4000 | 10:57 a.m. | 359.595–363.971 | 2.47 |
| 18 April | 109 | 868 | 6000 × 3150 | 10:47 a.m. | 359.492–363.984 | 2.52 |
| 28 April | 119 | 882 | 6000 × 4000 | 10:51 a.m. | 359.924–363.999 | 2.50 |
| 9 May | 130 | 812 | 6000 × 4000 | 10:30 a.m. | 360.274–364.004 | 2.56 |
| 25 June | 177 | 813 | 6000 × 4000 | 10:12 a.m. | 360.987–364.104 | 2.49 |
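
The DOY column can be recomputed from the flight dates as a quick consistency check. This minimal sketch uses only Python's standard `datetime` module. The list name `FLIGHT_DATES` and the helper `day_of_year` are illustrative names, not taken from the study. Because 2016 is a leap year, every DOY after February is one day later than it would be in a common year.

```python
from datetime import date

# Flight dates from the table above (all in 2016, a leap year).
FLIGHT_DATES = [
    date(2016, 4, 4),
    date(2016, 4, 18),
    date(2016, 4, 28),
    date(2016, 5, 9),
    date(2016, 6, 25),
]


def day_of_year(d: date) -> int:
    """Return the 1-based day of year (DOY) for a calendar date."""
    return d.timetuple().tm_yday


if __name__ == "__main__":
    for d in FLIGHT_DATES:
        print(d.isoformat(), day_of_year(d))
```

For example, 4 April falls on DOY 95 in 2016 but on DOY 94 in the non-leap year 2015.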

| Model | Dense Cloud Quality | Alignment Accuracy/Depth Filtering | DSM Resolution (cm/pixel) | Total Processing Time (h) |
|---|---|---|---|---|
| 1 | Ultra-High | High/Disabled | 2.5 | 64 |
| 2 | High | High/Disabled | 5 | 24 |
| 3 | Medium | High/Disabled | 10 | 9 |
| 4 | Low | High/Disabled | 20 | 6 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Ziliani, M.G.; Parkes, S.D.; Hoteit, I.; McCabe, M.F. Intra-Season Crop Height Variability at Commercial Farm Scales Using a Fixed-Wing UAV. Remote Sens. 2018, 10, 2007. https://doi.org/10.3390/rs10122007