Disease Diagnosis in Smart Healthcare: Innovation, Technologies and Applications

Abstract

1. Introduction

2. Emerging Optimization Algorithms and Machine Learning Algorithms

2.1. Optimization Algorithms

2.1.1. Evolutionary Optimization

2.1.2. Stochastic Optimization

2.1.3. Combinatorial Optimization

2.2. Machine Learning Algorithms

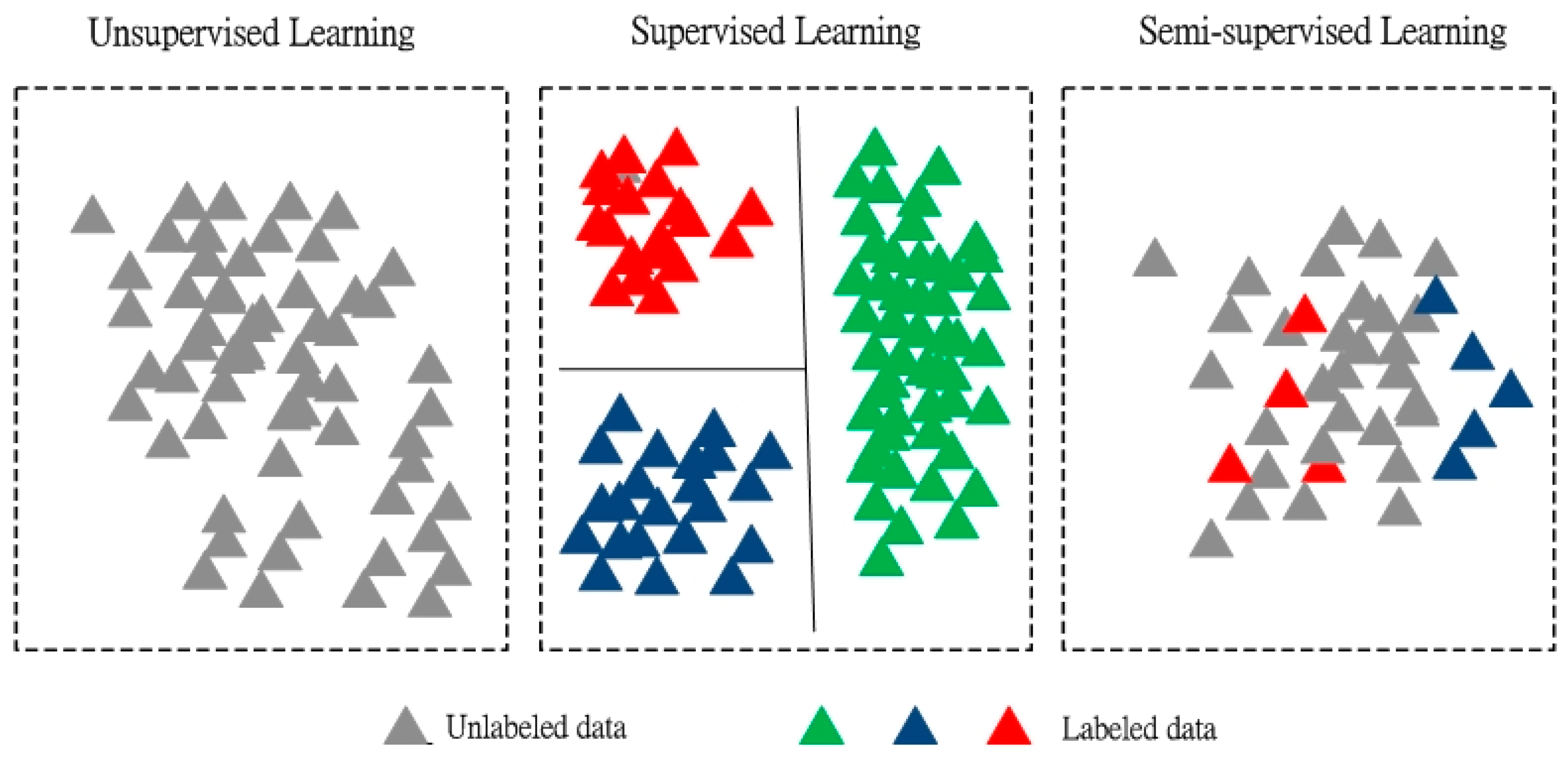

2.2.1. Unsupervised Learning

2.2.2. Supervised Learning

2.2.3. Semi-Supervised Learning

2.2.4. Reinforcement Learning

3. Smart Healthcare Applications

3.1. Cardiovascular Diseases

3.2. Diabetes Mellitus

3.3. Alzheimer’s Disease and Other Forms of Dementia

4. Challenges in Smart Healthcare

4.1. Privacy

4.2. Pilot Studies and Real Projects

4.3. Communication between Data Scientists and Medical Personnel

4.4. No Free Lunch Theorem

4.5. Increased Short-Term to Medium-Term Expenditure

5. Conclusions

Author Contributions

Conflicts of Interest

References

- Kondepudi, S.N.; Ramanarayanan, V.; Jain, A.; Singh, G.N.; Nitin Agarwal, N.K.; Kumar, R.; Singh, R.; Bergmark, P.; Hashitani, T.; Gemma, P.; et al. Smart Sustainable Cities: An Analysis of Definitions; International Telecommunication Union: Geneva, Switzerland, 2014. [Google Scholar]

- Scheffler, R.; Cometto, G.; Tulenko, K.; Bruckner, T.; Liu, J.; Keuffel, E.L.; Preker, A.; Stilwell, B.; Brasileiro, J.; Campbell, J. Health Workforce Requirements for Universal Health Coverage and the Sustainable Development Goals; World Health Organization: Geneva, Switzerland, 2016. [Google Scholar]

- Beard, J.; Ferguson, L.; Marmot, M.; Nash, P.; Phillips, D.; Staudinge, U.; Dua, T.; Saxena, S.; Ogawa, H.; Petersen, P.E.; et al. World Report on Ageing and Health 2015; World Health Organization: Geneva, Switzerland, 2015. [Google Scholar]

- Total Expenditure on Health as a Percentage of Gross Domestic product (US$); Global Health Observatory (GHO) Data; World Health Organization: Geneva, Switzerland, 2017; Available online: http://www.who.int/gho/health_financing/total_expenditure/en/ (accessed on 15 September 2017).

- Du, G.; Sun, C. Location planning problem of service centers for sustainable home healthcare: Evidence from the empirical analysis of Shanghai. Sustainability 2015, 7, 15812–15832. [Google Scholar] [CrossRef]

- Castro, M.D.F.; Mateus, R.; Serôdio, F.; Bragança, L. Development of benchmarks for operating costs and resources consumption to be used in healthcare building sustainability assessment methods. Sustainability 2015, 7, 13222–13248. [Google Scholar] [CrossRef]

- Momete, D.C. Building a sustainable healthcare model: A cross-country analysis. Sustainability 2016, 8, 836. [Google Scholar] [CrossRef]

- Friedman, G.J. Selective Feedback Computers for Engineering Synthesis and Nervous System Analogy. Master’s Thesis, University of California, Los Angeles, CA, USA, 1956. [Google Scholar]

- Friedberg, R.M. A Learning Machine: Part I. IBM J. Res. Dev. 1958, 2, 2–13. [Google Scholar] [CrossRef]

- Fogel, D.B. Evolutionary Computation: Toward a New Philosophy of Machine Intelligence; Wiley-IEEE Press: Hoboken, NJ, USA, 2006. [Google Scholar]

- Bäck, T. Selective Pressure in Evolutionary Algorithms: A Characterization of Selection Methods. In Proceedings of the First IEEE Conference on Evolutionary Computation, Orlando, FL, USA, 27–29 June 1994; IEEE Press: Piscataway, NJ, USA, 1994. [Google Scholar]

- Deb, K. Multi-objective optimization. In Search Methodologies; Springer: Berlin, Germany, 2014; pp. 403–449. [Google Scholar]

- Zhang, W.; Cao, K.; Liu, S.; Huang, B. A multi-objective optimization approach for health-care facility location-allocation problems in highly developed cities such as Hong Kong. Comput. Environ. Urban Syst. 2016, 59, 220–230. [Google Scholar] [CrossRef]

- Wen, T.; Zhang, Z.; Qiu, M.; Wu, Q.; Li, C. A Multi-Objective Optimization Method for Emergency Medical Resources Allocation. J. Med. Imaging Health Inform. 2017, 7, 393–399. [Google Scholar] [CrossRef]

- Karaman, S.; Ekici, B.; Cubukcuoglu, C.; Koyunbaba, B.K.; Kahraman, I. Design of rectangular façade modules through computational intelligence. In Proceedings of the 2017 IEEE Congress Evolutionary Computation (CEC), San Sebastián, Spain, 5–8 June 2017; IEEE Press: Piscataway, NJ, USA, 2017. [Google Scholar]

- Du, G.; Liang, X.; Sun, C. Scheduling Optimization of Home Health Care Service Considering Patients’ Priorities and Time Windows. Sustainability 2017, 9, 253. [Google Scholar] [CrossRef]

- Elkady, S.K.; Abdelsalam, H.M. A modified multi-objective particle swarm optimisation algorithm for healthcare facility planning. Int. J. Bus. Syst. Res. 2016, 10, 1–22. [Google Scholar] [CrossRef]

- Marti, K. Stochastic Optimization Methods; Springer: Berlin, Germany, 2008. [Google Scholar]

- Martin, B.; Correia, M.; Cruz, J. A certified Branch & Bound approach for reliability-based optimization problems. J. Glob. Optim. 2017, 1–24. [Google Scholar] [CrossRef]

- Duan, L.; Li, G.; Cheng, A.; Sun, G.; Song, K. Multi-objective system reliability-based optimization method for design of a fully parametric concept car body. Eng. Optim. 2017, 49, 1247–1263. [Google Scholar] [CrossRef]

- Zhang, L.Z. A reliability-based optimization of membrane-type total heat exchangers under uncertain design parameters. Energy 2016, 101, 390–401. [Google Scholar] [CrossRef]

- Rostami, M.A.; Kavousi-Fard, A.; Niknam, T. Expected cost minimization of smart grids with plug-in hybrid electric vehicles using optimal distribution feeder reconfiguration. IEEE Trans. Ind. Inform. 2015, 11, 388–397. [Google Scholar] [CrossRef]

- Noor, E.; Flamholz, A.; Bar-Even, A.; Davidi, D.; Milo, R.; Liebermeister, W. The protein cost of metabolic fluxes: Prediction from enzymatic rate laws and cost minimization. PLoS Comput. Biol. 2016, 12, e1005167. [Google Scholar] [CrossRef] [PubMed]

- Yong, K.L.; Nguyen, H.V.; Cajucom-Uy, H.Y.; Foo, V.; Tan, D.; Finkelstein, E.A.; Mehta, J.S. Cost minimization analysis of precut cornea grafts in Descemet stripping automated endothelial keratoplasty. Medicine 2016, 95. [Google Scholar] [CrossRef] [PubMed]

- Saadouli, H.; Jerbi, B.; Dammak, A.; Masmoudi, L.; Bouaziz, A. A stochastic optimization and simulation approach for scheduling operating rooms and recovery beds in an orthopedic surgery department. Comput. Ind. Eng. 2015, 80, 72–79. [Google Scholar] [CrossRef]

- Legrain, A.; Fortin, M.A.; Lahrichi, N.; Rousseau, L.M. Online stochastic optimization of radiotherapy patient scheduling. Health Care Manag. Sci. 2015, 18, 110–123. [Google Scholar] [CrossRef] [PubMed]

- Bagheri, M.; Devin, A.G.; Izanloo, A. An application of stochastic programming method for nurse scheduling problem in real word hospital. Comput. Ind. Eng. 2016, 96, 192–200. [Google Scholar] [CrossRef]

- Omar, E.R.; Garaix, T.; Augusto, V.; Xie, X. A stochastic optimization model for shift scheduling in emergency departments. Health Care Manag. Sci. 2015, 18, 289–302. [Google Scholar] [CrossRef]

- Saremi, A.; Jula, P.; ElMekkawy, T.; Wang, G.G. Bi-criteria appointment scheduling of patients with heterogeneous service sequences. Expert Syst. Appl. 2015, 42, 4029–4041. [Google Scholar] [CrossRef]

- Graham, R.L.; Hell, P. On the history of the minimum spanning tree problem. Ann. Hist. Comput. 1985, 7, 43–57. [Google Scholar] [CrossRef]

- Dorigo, M.; Gambardella, L.M. Ant colonies for the travelling salesman problem. Biosystems 1997, 43, 73–81. [Google Scholar] [CrossRef]

- Cardoen, B.; Demeulemeester, E.; Beliën, J. Operating room planning and scheduling: A literature review. Eur. J. Oper. Res. 2010, 201, 921–932. [Google Scholar] [CrossRef]

- Malik, M.M.; Khan, M.; Abdallah, S. Aggregate capacity planning for elective surgeries: A bi-objective optimization approach to balance patients waiting with healthcare costs. Oper. Res. Health Care 2015, 7, 3–13. [Google Scholar] [CrossRef]

- Hsia, R.Y.; Kothari, A.H.; Srebotnjak, T.; Maselli, J. Health care as a “market good”? Appendicitis as a case study. Arch. Intern. Med. 2012, 172, 818–819. [Google Scholar] [CrossRef] [PubMed]

- Denoyel, V.; Alfandari, L.; Thiele, A. Optimizing healthcare network design under Reference Pricing and parameter uncertainty. Eur. J. Oper. Res. 2017, 263, 996–1006. [Google Scholar] [CrossRef]

- Heching, A.; Hooker, J.N. Scheduling home hospice care with logic-based Benders decomposition. In Proceedings of the International Conference on AI and OR Techniques in Constraint Programming for Combinatorial Optimization Problems, Banff, AB, Canada, 29 May–1 June 2016; Springer International Publishing: Cham, Switzerland, 2016. [Google Scholar]

- Papp, D.; Bortfeld, T.; Unkelbach, J. A modular approach to intensity-modulated arc therapy optimization with noncoplanar trajectories. Phys. Med. Biol. 2015, 60. [Google Scholar] [CrossRef] [PubMed]

- Jemai, J.; Chaieb, M.; Mellouli, K. The home care scheduling problem: A modeling and solving issue. In Proceedings of the 2013 5th International Conference on Modeling, Simulation and Applied Optimization (ICMSAO), Hammamet, Tunisia, 28–30 April 2013; IEEE Press: Piscataway, NJ, USA, 2013. [Google Scholar]

- Corpet, F. Multiple sequence alignment with hierarchical clustering. Nucleic Acids Res. 1988, 16, 10881–10890. [Google Scholar] [CrossRef] [PubMed]

- Tarabalka, Y.; Benediktsson, J.A.; Chanussot, J. Spectral-spatial classification of hyperspectral imagery based on partitional clustering techniques. IEEE Trans. Geosci. Remote Sens. 2009, 47, 2973–2987. [Google Scholar] [CrossRef]

- Wallstrom, G.L.; Hogan, W.R. Unsupervised clustering of over-the-counter healthcare products into product categories. J. Biomed. Inform. 2007, 40, 642–648. [Google Scholar] [CrossRef] [PubMed]

- Fong, A.; Clark, L.; Cheng, T.; Franklin, E.; Fernandez, N.; Ratwani, R.; Parker, S.H. Identifying influential individuals on intensive care units: Using cluster analysis to explore culture. J. Nurs. Manag. 2017, 25, 384–391. [Google Scholar] [CrossRef] [PubMed]

- Lim, S.; Tucker, C.S.; Kumara, S. An unsupervised machine learning model for discovering latent infectious diseases using social media data. J. Biomed. Inform. 2017, 66, 82–94. [Google Scholar] [CrossRef] [PubMed]

- Sipes, T.; Jiang, S.; Moore, K.; Li, N.; Karimabadi, H.; Barr, J.R. Anomaly Detection in Healthcare: Detecting Erroneous Treatment Plans in Time Series Radiotherapy Data. Int. J. Semant. Comput. 2014, 8, 257–278. [Google Scholar] [CrossRef]

- Haque, S.A.; Rahman, M.; Aziz, S.M. Sensor anomaly detection in wireless sensor networks for healthcare. Sensors 2015, 15, 8764–8786. [Google Scholar] [CrossRef] [PubMed]

- Kadri, F.; Harrou, F.; Chaabane, S.; Sun, Y.; Tahon, C. Seasonal ARMA-based SPC charts for anomaly detection: Application to emergency department systems. Neurocomputing 2016, 173, 2102–2114. [Google Scholar] [CrossRef]

- Khan, F.A.; Haldar, N.; Ali, A.; Iftikhar, M.; Zia, T.; Zomaya, A. A Continuous Change Detection Mechanism to Identify Anomalies in ECG Signals for WBAN-based Healthcare Environments. IEEE Access 2017, 5, 13531–13544. [Google Scholar] [CrossRef]

- Ordóñez, F.J.; de Toledo, P.; Sanchis, A. Sensor-based Bayesian detection of anomalous living patterns in a home setting. Pers. Ubiquitous Comput. 2015, 19, 259–270. [Google Scholar] [CrossRef]

- Huang, Z.; Dong, W.; Ji, L.; Yin, L.; Duan, H. On local anomaly detection and analysis for clinical pathways. Artif. Intell. Med. 2015, 65, 167–177. [Google Scholar] [CrossRef] [PubMed]

- Fukumizu, K.; Bach, F.R.; Jordan, M.I. Dimensionality reduction for supervised learning with reproducing kernel Hilbert spaces. J. Mach. Learn. Res. 2004, 5, 73–99. [Google Scholar]

- Geman, S.; Bienenstock, E.; Doursat, R. Neural networks and the bias/variance dilemma. Neural Netw. 2008, 4, 1–58. [Google Scholar] [CrossRef]

- Nettleton, D.F.; Orriols-Puig, A.; Fornells, A. A study of the effect of different types of noise on the precision of supervised learning techniques. Artif. Intell. Rev. 2010, 33, 275–306. [Google Scholar] [CrossRef]

- Akata, Z.; Perronnin, F.; Harchaoui, Z.; Schmid, C. Good practice in large-scale learning for image classification. IEEE Trans. Pattern Anal. Mach. Intell. 2014, 36, 507–520. [Google Scholar] [CrossRef] [PubMed]

- Lewis, D.D.; Catlett, J. Heterogeneous uncertainty sampling for supervised learning. In Proceedings of the Eleventh International Conference on Machine Learning, New Brunswick, NJ, USA, 10–13 July 1994; Morgan Kaufmann Publishers: San Francisco, CA, USA, 1994. [Google Scholar]

- Unler, A.; Murat, A.; Chinnam, R.B. MR 2 PSO: A maximum relevance minimum redundancy feature selection method based on swarm intelligence for support vector machine classification. Inf. Sci. 2011, 181, 4625–4641. [Google Scholar] [CrossRef]

- Raducanu, B.; Dornaika, F. A supervised non-linear dimensionality reduction approach for manifold learning. Pattern Recognit. 2012, 45, 2432–2444. [Google Scholar] [CrossRef]

- Zhang, M.; Yang, L.; Ren, J.; Ahlgren, N.A.; Fuhrman, J.A.; Sun, F. Prediction of virus-host infectious association by supervised learning methods. BMC Bioinform. 2017, 18. [Google Scholar] [CrossRef] [PubMed]

- Cai, Y.; Tan, X.; Tan, X. Selective weakly supervised human detection under arbitrary poses. Pattern Recognit. 2017, 65, 223–237. [Google Scholar] [CrossRef]

- Ichikawa, D.; Saito, T.; Ujita, W.; Oyama, H. How can machine-learning methods assist in virtual screening for hyperuricemia? A healthcare machine learning approach. J. Biomed. Inform. 2016, 64, 20–24. [Google Scholar] [CrossRef] [PubMed]

- Muhammad, G. Automatic speech recognition using interlaced derivative pattern for cloud based healthcare system. Clust. Comput. 2015, 18, 795–802. [Google Scholar] [CrossRef]

- Wang, Y.; Wu, S.; Li, D.; Mehrabi, S.; Liu, H. A Part-Of-Speech term weighting scheme for biomedical information retrieval. J. Biomed. Inform. 2016, 63, 379–389. [Google Scholar] [CrossRef] [PubMed]

- Aydın, E.A.; Kaya Keleş, M. Breast cancer detection using K-nearest neighbors data mining method obtained from the bow-tie antenna dataset. Int. J. RF Microw. Comput. Aided Eng. 2017, 27, e21098. [Google Scholar] [CrossRef]

- Li, H.; Luo, M.; Luo, J.; Zheng, J.; Zeng, R.; Du, Q.; Ouyang, N. A discriminant analysis prediction model of non-syndromic cleft lip with or without cleft palate based on risk factors. BMC Pregnancy Childbirth 2016, 16, 368. [Google Scholar] [CrossRef] [PubMed]

- Li, J.; Fong, S.; Mohammed, S.; Fiaidhi, J.; Chen, Q.; Tan, Z. Solving the under-fitting problem for decision tree algorithms by incremental swarm optimization in rare-event healthcare classification. J. Med. Imaging Health Inform. 2016, 6, 1102–1110. [Google Scholar] [CrossRef]

- Miranda, E.; Irwansyah, E.; Amelga, A.Y.; Maribondang, M.M.; Salim, M. Detection of cardiovascular disease risk’s level for adults using naive Bayes classifier. Healthcare Inform. Res. 2016, 22, 196–205. [Google Scholar] [CrossRef] [PubMed]

- Choi, E.; Schuetz, A.; Stewart, W.F.; Sun, J. Using recurrent neural network models for early detection of heart failure onset. J. Am. Med. Inform. Assoc. 2016, 24, 361–370. [Google Scholar] [CrossRef] [PubMed]

- Hsu, W.C.; Lin, L.F.; Chou, C.W.; Hsiao, Y.T.; Liu, Y.H. EEG classification of imaginary lower limb stepping movements based on fuzzy support vector machine with Kernel-induced membership function. Int. J. Fuzzy Syst. 2017, 19, 566–579. [Google Scholar] [CrossRef]

- Gu, B.; Sheng, V.S.; Tay, K.Y.; Romano, W.; Li, S. Incremental support vector learning for ordinal regression. IEEE Trans. Neural Netw. Learn. Syst. 2015, 26, 1403–1416. [Google Scholar] [CrossRef] [PubMed]

- Eckardt, M.; Brettschneider, C.; Bussche, H.; König, H.H. Analysis of health care costs in elderly patients with multiple chronic conditions using a finite mixture of generalized linear models. Health Econ. 2017, 26, 582–599. [Google Scholar] [CrossRef] [PubMed]

- Hahne, J.M.; Biessmann, F.; Jiang, N.; Rehbaum, H.; Farina, D.; Meinecke, F.C.; Parra, L.C. Linear and nonlinear regression techniques for simultaneous and proportional myoelectric control. IEEE Trans. Neural Syst. Rehabil. Eng. 2014, 22, 269–279. [Google Scholar] [CrossRef] [PubMed]

- Wongchaisuwat, P.; Klabjan, D.; Jonnalagadda, S.R. A Semi-Supervised Learning Approach to Enhance Health Care Community-Based Question Answering: A Case Study in Alcoholism. JMIR Med. Inform. 2016, 4. [Google Scholar] [CrossRef] [PubMed]

- Albalate, A.; Minker, W. Semi-Supervised and Unsupervised Machine Learning: Novel Strategies; John Wiley & Sons: Hoboken, NJ, USA, 2013. [Google Scholar]

- Shi, D.; Zurada, J.; Guan, J. A Neuro-fuzzy System with Semi-supervised Learning for Bad Debt Recovery in the Healthcare Industry. In Proceedings of the 2015 48th Hawaii International Conference on System Sciences (HICSS), Kauai, HI, USA, 5–8 January 2015; IEEE Press: Piscataway, NJ, USA, 2015. [Google Scholar]

- Reitmaier, T.; Sick, B. The responsibility weighted Mahalanobis kernel for semi-supervised training of support vector machines for classification. Inform. Sci. 2015, 323, 179–198. [Google Scholar] [CrossRef]

- Wang, Y.; Chen, S.; Xue, H.; Fu, Z. Semi-supervised classification learning by discrimination-aware manifold regularization. Neurocomputing 2015, 147, 299–306. [Google Scholar] [CrossRef]

- Nie, L.; Zhao, Y.L.; Akbari, M.; Shen, J.; Chua, T.S. Bridging the vocabulary gap between health seekers and healthcare knowledge. IEEE Trans. Knowl. Data Eng. 2015, 27, 396–409. [Google Scholar] [CrossRef]

- Cvetković, B.; Kaluža, B.; Gams, M.; Luštrek, M. Adapting activity recognition to a person with Multi-Classifier Adaptive Training. J. Ambient Intell. Smart Environ. 2015, 7, 171–185. [Google Scholar] [CrossRef]

- Jin, L.; Xue, Y.; Li, Q.; Feng, L. Integrating human mobility and social media for adolescent psychological stress detection. In Proceedings of the International Conference on Database Systems for Advanced Applications, Dallas, TX, USA, 16–19 April 2016; Springer: Cham, Switzerland, 2016. [Google Scholar]

- Ashfaq, R.A.R.; Wang, X.Z.; Huang, J.Z.; Abbas, H.; He, Y.L. Fuzziness based semi-supervised learning approach for intrusion detection system. Inf. Sci. 2017, 378, 484–497. [Google Scholar] [CrossRef]

- Zhang, X.; Guan, N.; Jia, Z.; Qiu, X.; Luo, Z. Semi-supervised projective non-negative matrix factorization for cancer classification. PLoS ONE 2015, 10, e0138814. [Google Scholar] [CrossRef] [PubMed]

- Yan, Y.; Chen, L.; Tjhi, W.C. Fuzzy semi-supervised co-clustering for text documents. Fuzzy Sets Syst. 2013, 215, 74–89. [Google Scholar] [CrossRef]

- Go, A.S.; Mozaffarian, D.; Roger, V.L.; Benjamin, E.J.; Berry, J.D.; Borden, W.B.; Franco, S. Executive summary: Heart disease and stroke statistics—2013 update: A report from the American Heart Association. Circulation 2013, 127, 143–152. [Google Scholar] [CrossRef] [PubMed]

- Thompson, P.D.; Buchner, D.; Piña, I.L.; Balady, G.J.; Williams, M.A.; Marcus, B.H.; Fletcher, G.F. Exercise and physical activity in the prevention and treatment of atherosclerotic cardiovascular disease. Circulation 2003, 107, 3109–3116. [Google Scholar] [CrossRef] [PubMed]

- Ohira, T.; Iso, H. Cardiovascular disease epidemiology in Asia. Circ. J. 2013, 77, 1646–1652. [Google Scholar] [CrossRef] [PubMed]

- Feigin, V.L.; Norrving, B.; George, M.G.; Foltz, J.L.; Roth, G.A.; Mensah, G.A. Prevention of stroke: A strategic global imperative. Nat. Rev. Neurol. 2016, 12, 501–512. [Google Scholar] [CrossRef] [PubMed]

- Wannamethee, S.G.; Shaper, A.G. Physical activity and cardiovascular disease. In Seminars in Vascular Medicine; Thieme Medical Publishers: New York, NY, USA, 2002. [Google Scholar]

- Luna, A.B.D. Clinical Electrocardiography: A Textbook; Wiley-Blackwell: Oxford, UK, 2012. [Google Scholar]

- Macfarlane, P.W.; Edenbrandy, L.; Pahlm, O. 12-Lead Vectorcardiography; Butterworth Heinemann: Oxford, UK, 1995. [Google Scholar]

- Odinaka, I.; Lai, P.H.; Kaplan, A.D.; O’Sullivan, J.A.; Sirevaag, E.J.; Rohrbaugh, J.W. ECG biometric recognition: A comparative analysis. IEEE Trans. Inf. Forensics Secur. 2012, 7, 1812–1824. [Google Scholar] [CrossRef]

- Tripathy, R.K.; Sharma, L.N.; Dandapat, S. A new way of quantifying diagnostic information from multilead electrocardiogram for cardiac disease classification. Healthcare Technol. Lett. 2014, 1, 98–103. [Google Scholar] [CrossRef] [PubMed]

- Rahman, Q.A.; Tereshchenko, L.G.; Kongkatong, M.; Abraham, T.; Abraham, M.R.; Shatkay, H. Utilizing ECG-based heartbeat classification for hypertrophic cardiomyopathy identification. IEEE Trans. Nanobiosci. 2015, 14, 505–512. [Google Scholar] [CrossRef] [PubMed]

- Melillo, P.; De Luca, N.; Bracale, M.; Pecchia, L. Classification tree for risk assessment in patients suffering from congestive heart failure via long-term heart rate variability. IEEE J. Biomed. Health Inform. 2013, 17, 727–733. [Google Scholar] [CrossRef] [PubMed]

- Vafaie, M.H.; Ataei, M.; Koofigar, H.R. Heart diseases prediction based on ECG signals’ classification using a genetic-fuzzy system and dynamical model of ECG signals. Biomed. Signal Process. Control 2014, 14, 291–296. [Google Scholar] [CrossRef]

- Acharya, U.R.; Fujita, H.; Adam, M.; Lih, O.S.; Sudarshan, V.K.; Hong, T.J.; San, T.R. Automated characterization and classification of coronary artery disease and myocardial infarction by decomposition of ECG signals: A comparative study. Inf. Sci. 2017, 377, 17–29. [Google Scholar] [CrossRef]

- Oster, J.; Behar, J.; Sayadi, O.; Nemati, S.; Johnson, A.E.; Clifford, G.D. Semisupervised ECG ventricular beat classification with novelty detection based on switching Kalman filters. IEEE Trans. Biomed. Eng. 2015, 62, 2125–2134. [Google Scholar] [CrossRef] [PubMed]

- Li, P.; Wang, Y.; He, J.; Wang, L.; Tian, Y.; Zhou, T.S.; Li, J.S. High-Performance Personalized Heartbeat Classification Model for Long-Term ECG Signal. IEEE Trans. Biomed. Eng. 2017, 64, 78–86. [Google Scholar] [CrossRef] [PubMed]

- Acharya, U.R.; Oh, S.L.; Hagiwara, Y.; Tan, J.H.; Adam, M.; Gertych, A.; San, T.R. A deep convolutional neural network model to classify heartbeats. Comput. Biol. Med. 2017, 89, 389–396. [Google Scholar] [CrossRef] [PubMed]

- Elhaj, F.A.; Salim, N.; Harris, A.R.; Swee, T.T.; Ahmed, T. Arrhythmia recognition and classification using combined linear and nonlinear features of ECG signals. Comput. Methods Programs Biomed. 2016, 127, 52–63. [Google Scholar] [CrossRef] [PubMed]

- Haldar, N.A.H.; Khan, F.A.; Ali, A.; Abbas, H. Arrhythmia classification using Mahalanobis distance based improved Fuzzy C-Means clustering for mobile health monitoring systems. Neurocomputing 2017, 220, 221–235. [Google Scholar] [CrossRef]

- Global Report on Diabetes; World Health Organization: Geneva, Switzerland, 2016; Available online: http://apps.who.int/iris/bitstream/10665/204871/1/9789241565257_eng.pdf (accessed on 15 September 2017).

- Guariguata, L.; Whiting, D.R.; Hambleton, I.; Beagley, J.; Linnenkamp, U.; Shaw, J.E. Global estimates of diabetes prevalence for 2013 and projections for 2035. Diabetes Res. Clin. Pract. 2014, 103, 137–149. [Google Scholar] [CrossRef] [PubMed]

- Rodger, W. Non-insulin-dependent (type II) diabetes mellitus. Can. Med. Assoc. J. 1991, 145, 1571–1581. [Google Scholar]

- Han, L.; Luo, S.; Yu, J.; Pan, L.; Chen, S. Rule extraction from support vector machines using ensemble learning approach: An application for diagnosis of diabetes. IEEE J. Biomed. Health Inform. 2015, 19, 728–734. [Google Scholar] [CrossRef] [PubMed]

- Zheng, T.; Xie, W.; Xu, L.; He, X.; Zhang, Y.; You, M.; Chen, Y. A machine learning-based framework to identify type 2 diabetes through electronic health records. Int. J. Med. Inform. 2017, 97, 120–127. [Google Scholar] [CrossRef] [PubMed]

- Ganji, M.F.; Abadeh, M.S. A fuzzy classification system based on ant colony optimization for diabetes disease diagnosis. Expert Syst. Appl. 2011, 38, 14650–14659. [Google Scholar] [CrossRef]

- Lee, B.J.; Ku, B.; Nam, J.; Pham, D.D.; Kim, J.Y. Prediction of fasting plasma glucose status using anthropometric measures for diagnosing type 2 diabetes. IEEE J. Biomed. Health Inform. 2014, 18, 555–561. [Google Scholar] [CrossRef] [PubMed]

- Lee, B.J.; Kim, J.Y. Identification of type 2 diabetes risk factors using phenotypes consisting of anthropometry and triglycerides based on machine learning. IEEE J. Biomed. Health Inform. 2016, 20, 39–46. [Google Scholar] [CrossRef] [PubMed]

- Ling, S.H.; San, P.P.; Nguyen, H.T. Non-invasive hypoglycemia monitoring system using extreme learning machine for Type 1 diabetes. ISA Trans. 2016, 64, 440–446. [Google Scholar] [CrossRef] [PubMed]

- Vyas, R.; Bapat, S.; Jain, E.; Karthikeyan, M.; Tambe, S.; Kulkarni, B.D. Building and analysis of protein-protein interactions related to diabetes mellitus using support vector machine, biomedical text mining and network analysis. Comput. Biol. Chem. 2016, 65, 37–44. [Google Scholar] [CrossRef] [PubMed]

- Li, C.M.; Du, Y.C.; Wu, J.X.; Lin, C.H.; Ho, Y.R.; Lin, Y.J.; Chen, T. Synchronizing chaotification with support vector machine and wolf pack search algorithm for estimation of peripheral vascular occlusion in diabetes mellitus. Biomed. Signal Process. Control 2014, 9, 45–55. [Google Scholar] [CrossRef]

- Rau, H.H.; Hsu, C.Y.; Lin, Y.A.; Atique, S.; Fuad, A.; Wei, L.M.; Hsu, M.H. Development of a web-based liver cancer prediction model for type II diabetes patients by using an artificial neural network. Comput. Methods Programs Biomed. 2016, 125, 58–65. [Google Scholar] [CrossRef] [PubMed]

- Marateb, H.R.; Mansourian, M.; Faghihimani, E.; Amini, M.; Farina, D. A hybrid intelligent system for diagnosing microalbuminuria in type 2 diabetes patients without having to measure urinary albumin. Comput. Biol. Med. 2014, 45, 34–42. [Google Scholar] [CrossRef] [PubMed]

- Gaugler, J.; James, B.; Johnson, T.; Weuve, J. 2017 Alzheimer’s Disease Facts and Figures; Alzheimer’s Association: Chicago, IL, USA, 2017; Available online: https://www.alz.org/documents_custom/2017-facts-and-figures.pdf (accessed on 15 September 2017).

- Rizzi, L.; Rosset, I.; Roriz-Cruz, M. Global epidemiology of dementia: Alzheimer’s and vascular types. BioMed Res. Int. 2014, 2014, 908915. [Google Scholar] [CrossRef] [PubMed]

- Armañanzas, R.; Iglesias, M.; Morales, D.A.; Alonso-Nanclares, L. Voxel-Based Diagnosis of Alzheimer’s Disease Using Classifier Ensembles. IEEE J. Biomed. Health Inform. 2017, 21, 778–784. [Google Scholar] [CrossRef] [PubMed]

- Chen, Y.; Sha, M.; Zhao, X.; Ma, J.; Ni, H.; Gao, W.; Ming, D. Automated detection of pathologic white matter alterations in Alzheimer’s disease using combined diffusivity and kurtosis method. Psychiatry Res. Neuroimaging 2017, 264, 35–45. [Google Scholar] [CrossRef] [PubMed]

- Tanaka, H.; Adachi, H.; Ukita, N.; Ikeda, M.; Kazui, H.; Kudo, T.; Nakamura, S. Detecting Dementia through Interactive Computer Avatars. IEEE J. Transl. Eng. Health Med. 2017, 5, 1–11. [Google Scholar] [CrossRef] [PubMed]

- Zhang, Y.; Dong, Z.; Phillips, P.; Wang, S.; Ji, G.; Yang, J.; Yuan, T.F. Detection of subjects and brain regions related to Alzheimer’s disease using 3D MRI scans based on eigenbrain and machine learning. Front. Comput. Neurosci. 2015, 9. [Google Scholar] [CrossRef] [PubMed]

- Moradi, E.; Pepe, A.; Gaser, C.; Huttunen, H.; Tohka, J.; Alzheimer’s Disease Neuroimaging Initiative. Machine learning framework for early MRI-based Alzheimer’s conversion prediction in MCI subjects. Neuroimage 2015, 104, 398–412. [Google Scholar] [CrossRef] [PubMed]

- Beheshti, I.; Demirel, H.; Matsuda, H. Classification of Alzheimer’s disease and prediction of mild cognitive impairment-to-Alzheimer’s conversion from structural magnetic resource imaging using feature ranking and a genetic algorithm. Comput. Biol. Med. 2017, 83, 109–119. [Google Scholar] [CrossRef] [PubMed]

- Doan, N.T.; Engvig, A.; Zaske, K.; Persson, K.; Lund, M.J.; Kaufmann, T.; Barca, M.L. Distinguishing early and late brain aging from the Alzheimer’s disease spectrum: Consistent morphological patterns across independent samples. NeuroImage 2017, 158, 282–295. [Google Scholar] [CrossRef] [PubMed]

- Shi, B.; Chen, Y.; Zhang, P.; Smith, C.D.; Liu, J. Nonlinear feature transformation and deep fusion for Alzheimer's Disease staging analysis. Pattern Recognit. 2017, 63, 487–498. [Google Scholar] [CrossRef]

- Billones, C.D.; Demetria, O.J.L.D.; Hostallero, D.E.D.; Naval, P.C. DemNet: A convolutional neural network for the detection of Alzheimer’s disease and mild cognitive impairment. In Proceedings of the 2016 IEEE Region 10 Conference (TENCON), Singapore, 22–25 November 2016; IEEE Press: Piscataway, NJ, USA, 2016. [Google Scholar]

- Morabito, F.C.; Campolo, M.; Ieracitano, C.; Ebadi, J.M.; Bonano, L.; Bramanti, A.; Desalvo, S.; Mammone, N.; Bramanti, P. Deep convolutional neural networks for classification of mild cognitive impaired and Alzheimer’s disease patients from scalp EEG recordings. In Proceedings of the 2016 IEEE 2nd International Forum on Research and Technologies for Society and Industry Leveraging a Better Tomorrow (RTSI), Bologna, Italy, 7–9 September 2016; IEEE Press: Piscataway, NJ, USA, 2016. [Google Scholar]

- Martínez-Ballesteros, M.; García-Heredia, J.M.; Nepomuceno-Chamorro, I.A.; Riquelme-Santos, J.C. Machine learning techniques to discover genes with potential prognosis role in Alzheimer’s disease using different biological sources. Inf. Fusion 2017, 36, 114–129. [Google Scholar] [CrossRef]

- Miao, Y.; Jiang, H.; Liu, H.; Yao, Y.D. An Alzheimers disease related genes identification method based on multiple classifier integration. Comput. Methods Programs Biomed. 2017, 150, 107–115. [Google Scholar] [CrossRef] [PubMed]

- Global Tuberculosis Report 2013; World Health Organization: Geneva, Switzerland, 2013; Available online: http://apps.who.int/iris/bitstream/10665/91355/1/9789241564656_eng.pdf (accessed on 15 September 2017).

- Lacerda, S.N.B.; de Abreu Temoteo, R.C.; de Figueiredo, T.M.R.M.; de Luna, F.D.T.; de Sousa, M.A.N.; de Abreu, L.C.; Fonseca, F.L.A. Individual and social vulnerabilities upon acquiring tuberculosis: A literature systematic review. Int. Arch. Med. 2014, 7, 35. [Google Scholar] [CrossRef] [PubMed]

- Hwang, L.Y.; Grimes, C.Z.; Beasley, R.P.; Graviss, E.A. Latent tuberculosis infections in hard-to-reach drug using population-detection, prevention and control. Tuberculosis 2009, 89, S41–S45. [Google Scholar] [CrossRef]

- Sulis, G.; Roggi, A.; Matteelli, A.; Raviglione, M.C. Tuberculosis: Epidemiology and control. Mediterr. J. Hematol. Infect. Dis. 2014, 6. [Google Scholar] [CrossRef] [PubMed]

- Sergeev, R.S.; Kavaliou, I.; Sataneuski, U.; Gabrielian, A.; Rosenthal, A.; Tartakovsky, M.; Tuzikov, A. Genome-wide Analysis of MDR and XDR Tuberculosis from Belarus: Machine-learning Approach. IEEE/ACM Trans. Comput. Biol. Bioinform. 2017. [Google Scholar] [CrossRef] [PubMed]

- Melendez, J.; van Ginneken, B.; Maduskar, P.; Philipsen, R.H.; Reither, K.; Breuninger, M.; Sánchez, C.I. A novel multiple-instance learning-based approach to computer-aided detection of tuberculosis on chest X-rays. IEEE Trans. Med. Imaging 2015, 34, 179–192. [Google Scholar] [CrossRef] [PubMed]

- Alcantara, M.F.; Cao, Y.; Liu, C.; Liu, B.; Brunette, M.; Curioso, W.H. Improving tuberculosis diagnostics using deep learning and mobile health technologies among resource-poor communities in Perú. Smart Health 2017, 1, 66–76. [Google Scholar] [CrossRef]

- Lopes, U.K.; Valiati, J.F. Pre-trained convolutional neural networks as feature extractors for tuberculosis detection. Comput. Biol. Med. 2017, 89, 135–143. [Google Scholar] [CrossRef] [PubMed]

- Yahiaoui, A.; Er, O.; Yumusak, N. A new method of automatic recognition for tuberculosis disease diagnosis using support vector machines. Biomed. Res. 2017, 28, 4208–4212. [Google Scholar]

- Évora, L.H.R.A.; Seixas, J.M.; Kritski, A.L. Neural network models for supporting drug and multidrug resistant tuberculosis screening diagnosis. Neurocomputing 2017, 265, 116–126. [Google Scholar] [CrossRef]

- Thompson, E.G.; Du, Y.; Malherbe, S.T.; Shankar, S.; Braun, J.; Valvo, J.; Shenai, S. Host blood RNA signatures predict the outcome of tuberculosis treatment. Tuberculosis 2017, 107, 48–58. [Google Scholar] [CrossRef] [PubMed]

- Sambarey, A.; Devaprasad, A.; Mohan, A.; Ahmed, A.; Nayak, S.; Swaminathan, S.; Vyakarnam, A. Unbiased Identification of Blood-based Biomarkers for Pulmonary Tuberculosis by Modeling and Mining Molecular Interaction Networks. EBioMedicine 2017, 15, 112–126. [Google Scholar] [CrossRef] [PubMed]

- Mamiya, H.; Schwartzman, K.; Verma, A.; Jauvin, C.; Behr, M.; Buckeridge, D. Towards probabilistic decision support in public health practice: Predicting recent transmission of tuberculosis from patient attributes. J. Biomed. Inform. 2015, 53, 237–242. [Google Scholar] [CrossRef] [PubMed]

- de O. Souza Filho, J.B.; de Seixas, J.M.; Galliez, R.; de Bragança Pereira, B.; de Q Mello, F.C.; dos Santos, A.M.; Kritski, A.L. A screening system for smear-negative pulmonary tuberculosis using artificial neural networks. Int. J. Infect. Dis. 2016, 49, 33–39. [Google Scholar] [CrossRef]

- Rockafellar, R.T. Lagrange multipliers and optimality. SIAM Rev. 1993, 35, 183–238. [Google Scholar] [CrossRef]

- Bellman, R. Dynamic Programming; Dover Publications: New York, NY, USA, 2013. [Google Scholar]

- Bertsekas, D.P. Nonlinear Programming; Athena Scientific: Belmont, MA, USA, 1999. [Google Scholar]

- Glover, F.W.; Kochenberger, G.A. Handbook of Metaheuristics; Kluwer Academic Publishers: New York, NY, USA, 2006. [Google Scholar]

- Steiner, M.T.A.; Datta, D.; Neto, R.J.S.; Scarpin, C.T.; Figueira, J.R. Multi-objective optimization in partitioning the healthcare system of Parana State in Brazil. Omega 2015, 52, 53–64. [Google Scholar] [CrossRef]

- Samuel, A.L. Some studies in machine learning using the game of checkers. IBM J. Res. Dev. 1959, 3, 210–229. [Google Scholar] [CrossRef]

- Lanza, J.; Sotres, P.; Sánchez, L.; Galache, J.A.; Santana, J.R.; Gutiérrez, V.; Muñoz, L. Managing Large Amounts of Data Generated by a Smart City Internet of Things Deployment. Int. J. Semant. Web Inf. Syst. 2016, 12, 22–42. [Google Scholar] [CrossRef]

- Assaf, A.; Senart, A.; Troncy, R. Towards an Objective Assessment Framework for Linked Data Quality: Enriching Dataset Profiles with Quality Indicators. Int. J. Semant. Web Inf. Syst. 2016, 12, 111–133. [Google Scholar] [CrossRef]

- Bertsekas, D.P.; Rheinboldt, W. Constrained Optimization and Lagrange Multiplier Methods; Athena Scientific: Belmont, MA, USA, 1982. [Google Scholar]

- Silver, D.; Huang, A.; Maddison, C.J.; Guez, A.; Sifre, L.; Van Den Driessche, G.; Schrittwieser, J.; Antonoglou, I.; Panneershelvam, V.; Lanctot, M.; et al. Mastering the game of Go with deep neural networks and tree search. Nature 2016, 484–489. [Google Scholar] [CrossRef] [PubMed]

- Schmidhuber, J. Deep learning in neural networks: An overview. Neural Netw. 2015, 61, 85–117. [Google Scholar] [CrossRef] [PubMed]

- Gatti, C. Design of Experiments for Reinforcement Learning; Springer: New York, NY, USA, 2014. [Google Scholar]

- Si, J. Handbook of Learning and Approximate Dynamic Programming; John Wiley & Sons: Hoboken, NJ, USA, 2004. [Google Scholar]

- Kaelbling, L.P.; Littman, M.L.; Moore, A.W. Reinforcement learning: A survey. J. Artif. Intell. Res. 1996, 4, 237–285. [Google Scholar]

- Liu, Y.; Logan, B.; Liu, N.; Xu, Z.; Tang, J.; Wang, Y. Deep Reinforcement Learning for Dynamic Treatment Regimes on Medical Registry Data. In Proceedings of the 2017 IEEE International Conference on Healthcare Informatics (ICHI), Park City, UT, USA, 23–26 August 2017; IEEE Press: Piscataway, NJ, USA, 2017. [Google Scholar]

- Shakshuki, E.M.; Reid, M.; Sheltami, T.R. Dynamic healthcare interface for patients. Procedia Comput. Sci. 2015, 63, 356–365. [Google Scholar] [CrossRef]

- Jagodnik, K.; Thomas, P.; Bogert, A.V.D.; Branicky, M.; Kirsch, R. Training an Actor-Critic Reinforcement Learning Controller for Arm Movement Using Human-Generated Rewards. IEEE Trans. Neural Syst. Rehabil. Eng. 2017, 25, 1892–1905. [Google Scholar] [CrossRef] [PubMed]

- Istepanian, R.S.; Philip, N.Y.; Martini, M.G. Medical QoS provision based on reinforcement learning in ultrasound streaming over 3.5G wireless systems. IEEE J. Sel. Areas Commun. 2009, 27. [Google Scholar] [CrossRef]

- Global Health Estimates 2015: Deaths by Cause, Age, Sex, by Country and by Region, 2000–2015; World Health Organization: Geneva, Switzerland, 2016; Available online: http://www.who.int/healthinfo/global_burden_disease/estimates/en/index1.html (accessed on 15 September 2017).

- Makoul, G.; Curry, R.H.; Tang, P.C. The use of electronic medical records: Communication patterns in outpatient encounters. J. Am. Med. Inform. Assoc. 2001, 8, 610–615. [Google Scholar] [CrossRef] [PubMed]

- Westin, A.; Krane, D.; Capps, K.; Peterson, T.; Kliner, S. Making IT Meaningful: How Consumers Value and Trust Health IT Survey; National Partnership for Women & Families: Washington, DC, USA, 2012; Available online: http://go.nationalpartnership.org/site/DocServer/HIT_Making_IT_Meaningful_National_Partnership_February_2.pdf (accessed on 15 September 2017).

- Sweeney, L. Computational disclosure control for medical microdata: The Datafly system. In Record Linkage Techniques 1997: Proceedings of an International Workshop and Exposition; The National Academies Press: Washington, DC, USA, 1997; pp. 442–453. [Google Scholar]

- Chang, C.C.; Li, Y.C.; Huang, W.H. TFRP: An efficient microaggregation algorithm for statistical disclosure control. J. Syst. Softw. 2007, 80, 1866–1878. [Google Scholar] [CrossRef]

- Yang, J.J.; Li, J.Q.; Niu, Y. A hybrid solution for privacy preserving medical data sharing in the cloud environment. Future Gener. Comput. Syst. 2015, 43, 74–86. [Google Scholar] [CrossRef]

- Liu, K.; Kargupta, H.; Ryan, J. Random projection-based multiplicative data perturbation for privacy preserving distributed data mining. IEEE Trans. Knowl. Data Eng. 2006, 18, 92–106. [Google Scholar] [CrossRef]

- Fung, B.C.; Wang, K.; Yu, P.S. Anonymizing classification data for privacy preservation. IEEE Trans. Knowl. Data Eng. 2007, 19, 711–725. [Google Scholar] [CrossRef]

- Loukides, G.; Gkoulalas-Divanis, A.; Malin, B. Anonymization of electronic medical records for validating genome-wide association studies. Proc. Natl. Acad. Sci. USA 2010, 107, 7898–7903. [Google Scholar] [CrossRef] [PubMed]

- Turner, J.R. The role of pilot studies in reducing risk on projects and programmes. Int. J. Proj. Manag. 2005, 23, 1–6. [Google Scholar] [CrossRef]

- Zingg, W.; Holmes, A.; Dettenkofer, M.; Goetting, T.; Secci, F.; Clack, L.; Allegranzi, B.; Magiorakos, A.; Pittet, D. Hospital organization, management, and structure for prevention of health-care-associated infection: A systematic review and expert consensus. Lancet Infect. Dis. 2015, 15, 212–224. [Google Scholar] [CrossRef]

- Lichtenberg, F.R. The effect of government funding on private industrial research and development: A re-assessment. J. Ind. Econ. 1987, 97–104. [Google Scholar] [CrossRef]

- vom Brocke, J.; Lippe, S. Managing collaborative research projects: A synthesis of project management literature and directives for future research. Int. J. Proj. Manag. 2015, 33, 1022–1039. [Google Scholar] [CrossRef]

- Forkner-Dunn, J. Internet-based patient self-care: The next generation of health care delivery. J. Med. Internet Res. 2005, 5. [Google Scholar] [CrossRef] [PubMed]

- James, C. Global Status of Commercialized Biotech/GM Crops; The International Service for the Acquisition of Agri-biotech Applications (ISAAA): Metro Manila, Philippines, 2016; Available online: http://africenter.isaaa.org/wp-content/uploads/2017/06/ISAAA-Briefs-No-52.pdf (accessed on 15 September 2017).

- Huesch, M.D.; Mosher, T.J. Using It or Losing It? The Case for Data Scientists inside Health Care. 2017. Available online: http://catalyst.nejm.org/case-data-scientists-inside-health-care/ (accessed on 1 December 2017).

- Oakden-Rayner, L. Artificial Intelligence Won’t Replace Doctors Soon But It Can Help with Diagnosis. 2017. Available online: http://www.abc.net.au/news/2017-09-19/ai-wont-replace-doctors-soon-but-it-can-help-diagnosis/8960530 (accessed on 1 December 2017).

- Wolpert, D.H. The Supervised Learning No-Free Lunch; Springer: London, UK, 2002. [Google Scholar]

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444. [Google Scholar] [CrossRef] [PubMed]

| Disease | Deaths in 2000 (’000) | Deaths in 2005 (’000) | Deaths in 2010 (’000) | Deaths in 2015 (’000) |
|---|---|---|---|---|
| Cardiovascular diseases | 14,375 | 15,338 | 16,570 | 17,631 |
| Diabetes mellitus | 945 | 1133 | 1333 | 1570 |
| Alzheimer’s disease and other forms of dementia | 649 | 843 | 1147 | 1534 |
| Tuberculosis | 1666 | 1573 | 1406 | 1373 |

| Work | Diseases | Methodology | TF | Ns | Se (%) | Sp (%) | OA (%) |
|---|---|---|---|---|---|---|---|
| [90] | HC + Ca + MI + Hy + Dy | LS; SVM | NFF | 65 | / | / | 90.34 |
| [91] | HC + Ca | RF; SVM | FF | 221 | 87 | 92 | 94 |
| [92] | HC + CHF | CART | FF | 41 | 93.3 | 63.6 | / |
| [93] | HC + CHF + VT + AF | FL; GA | NFF | 300 | / | / | 93.34 |
| [94] | HC + CA + MI | KNN | NFF | 207 | 99.7 | 98.5 | 98.5 |
| [95] | HB | SKF; SVM | HF | 48 | / | / | 98.3 |
| [96] | HB | NN | FF | 17 | / | / | 95 |
| [97] | HB | CNN | NFF | 47 | 96.71 | 91.64 | 93.47 |
| [98] | HB | NN; SVM | NFF | 48 | 98.91 | 97.85 | 98.91 |
| [99] | HB | FCM | FF | 48 | / | / | 81.21 |

| Work | Applications | Methodology | Ns | Performance |
|---|---|---|---|---|
| [103] | Diagnosis of type 2 diabetes | RF; SVM | 7913 | Precision = 94.2%; Recall = 93.97% |
| [104] | Diagnosis of type 2 diabetes | DT; KNN; NBC; LR; RF; SVM | 300 | Average AUC = 98% |
| [105] | Diagnosis of type 2 diabetes | ACO; FL | 768 | Accuracy = 84.24% |
| [106] | Prediction of fasting plasma glucose status | LR; NBC | 4870 | AUC (female) = 0.74; AUC (male) = 0.68 |
| [107] | Analysis of predictive power of hypertriglyceridemic waist phenotype for type 2 diabetes | LR; NBC | 11,937 | Waist-to-hip ratio + triglyceride (men): AUC = 0.653; rib-to-hip ratio + triglyceride (women): AUC = 0.73 |
| [108] | Detection of hypoglycemic episodes in children with type 1 diabetes | NN | 16 | Sensitivity = 78%; Specificity = 60% |
| [109] | Prediction of type 2 diabetes related proteins | SVM | 1296 | Accuracy = 78.2% |
| [110] | Prediction of peripheral vascular occlusion in type 2 diabetes | SVM; WPS | 33 | Accuracy = 100% |
| [111] | Prediction of the development of liver cancer in type 2 diabetes | LR; NN | 2060 | Sensitivity = 75.7%; Specificity = 75.5% |
| [112] | Detection of microalbuminuria in type 2 diabetes | FL; LR; PSO | 200 | Sensitivity = 95%; Specificity = 85%; Accuracy = 92% |

| Work | Applications | Methodology | Ns | Performance |
|---|---|---|---|---|
| [115] | Diagnosis of Alzheimer’s disease | NBC; RF; RLO; RS; SVM | 27 | Accuracy = 97.14% |
| [116] | Diagnosis of Alzheimer’s disease | SVM | 53 | Accuracy = 96.23% |
| [117] | Diagnosis of dementias | LR; SVM | 29 | Accuracy = 93% |
| [118] | Detection of Alzheimer’s disease related regions | SVM | 126 | Accuracy = 92.36% |
| [119] | Predicting mild cognitive impairment patients for conversion to Alzheimer’s disease | LDS | 164 | AUC = 0.7661 |
| [120] | Predicting mild cognitive impairment patients for conversion to Alzheimer’s disease | GA; SVM | 458 | Sensitivity = 76.92%; Specificity = 73.23%; Accuracy = 75% |
| [121] | Identification of dissociable multivariate morphological patterns | LC | 801 | AUC = 0.93 |
| [122] | Identification for Alzheimer’s disease and mild cognitive impairment | EM; SVM | 338 | Alzheimer’s disease: sensitivity = 84.86%, specificity = 91.69%, accuracy = 88.73%; Mild cognitive impairment: sensitivity = 79.07%, specificity = 82.7%, accuracy = 80.91% |
| [123] | Identification for Alzheimer’s disease and mild cognitive impairment | DCNN | 900 | Alzheimer’s disease: sensitivity = 98.89%, specificity = 97.78%, accuracy = 98.33%; Mild cognitive impairment: sensitivity = 92.23%, specificity = 91.11%, accuracy = 92.12% |
| [124] | Identification for Alzheimer’s disease and mild cognitive impairment | DCNN | 142 | Alzheimer’s disease: sensitivity = 85%, specificity = 82%, accuracy = 85%; Mild cognitive impairment: sensitivity = 84%, specificity = 81%, accuracy = 85% |
| [125] | Identification of genes related to Alzheimer’s disease | DT; QAR | 33 | 90 genes are related to Alzheimer’s disease |
| [126] | Identification of genes related to Alzheimer’s disease | ELM; RF; SVM | 31 | Sensitivity = 78.77%; Specificity = 83.1%; Accuracy = 74.67% |

| Work | Applications | Methodology | Ns | Performance |
|---|---|---|---|---|
| [131] | Identification of drug resistance-associated mutations in tuberculosis | LMM; LR; MOSS | 144 | Accuracy of over 90% in selected 9 drugs |
| [132] | Detection of tuberculosis | KNN; SVM | 917; 869; 850 | KNN: AUC = 0.84; 0.78; 0.82. SVM: AUC = 0.88; 0.79; 0.85 |
| [133] | Detection of tuberculosis | CNN | 4701 | Accuracy = 62.07% |
| [134] | Detection of tuberculosis | CNN | 138; 662 | Accuracy = 82.6%; 92.6%. AUC = 84.7%; 92.6% |
| [135] | Detection of tuberculosis | SVM | 150 | Accuracy = 96.68% |
| [136] | Detection of multidrug-resistant tuberculosis | NN | 280 | Sensitivity = 95.1%; Specificity = 85% |
| [137] | Prediction of tuberculosis treatment failure | Xpert; Response5 | 153 | Sensitivity = 83–100%; Specificity = 26–100% |
| [138] | Identification between tuberculosis and HIV | Filtering | 54 | Accuracy = 79–93% |
| [139] | Predicting recent transmission of tuberculosis | LR | 1552 | Sensitivity = 53%; Specificity = 67% |
| [140] | Detection of smear-negative pulmonary tuberculosis | MLP | 136 | Sensitivity = 100%; Specificity = 80%; Accuracy = 88%; AUC = 91.8% |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Chui, K.T.; Alhalabi, W.; Pang, S.S.H.; Pablos, P.O.d.; Liu, R.W.; Zhao, M. Disease Diagnosis in Smart Healthcare: Innovation, Technologies and Applications. Sustainability 2017, 9, 2309. https://doi.org/10.3390/su9122309
