Resource-Governed BDA Adoption for Resilient Supply-Chain Operations: Qualitative Evidence from the Malaysian Manufacturing Industry

Abstract

1. Introduction

Research Gap and Study Focus

- RQ1: “What organizational resources—and governance arrangements—are critical to adopting and embedding BDA in manufacturing supply chains to strengthen resilience?”

- RQ2: “What challenges do manufacturing firms encounter when adopting BDA for resilient supply chain operations?”

- RQ3: “What resilience benefits, in terms of decision-latency timers (Time-to-Detect/Decide/Reconfigure/Recover), do manufacturing supply chains realize following BDA adoption?”
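The four decision-latency timers in RQ3 can be made concrete with a small calculation over the milestones of one disruption episode. The Python sketch below is illustrative only. It assumes that each timer is the interval between consecutive milestones (onset to detection, detection to decision, decision to reconfiguration, reconfiguration to recovery). This is our operationalization, not a definition given in the study. The `DisruptionEpisode` type, its fields, and the timestamps are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class DisruptionEpisode:
    """Hypothetical milestone timestamps for one disruption episode."""
    onset: datetime         # disruption begins (e.g., supplier dispatch stops)
    detected: datetime      # first actionable signal reaches the firm
    decided: datetime       # an authorized owner commits to a response
    reconfigured: datetime  # the response is enacted (e.g., in ERP/MES/WMS)
    recovered: datetime     # performance returns to its pre-disruption level


def decision_latency_timers(ep: DisruptionEpisode) -> dict[str, timedelta]:
    """Return stage-wise timers (assumed operationalization: consecutive intervals)."""
    milestones = [ep.onset, ep.detected, ep.decided, ep.reconfigured, ep.recovered]
    # Reject episodes whose milestones are out of chronological order.
    if any(later < earlier for earlier, later in zip(milestones, milestones[1:])):
        raise ValueError("milestones must be in chronological order")
    names = ["time_to_detect", "time_to_decide", "time_to_reconfigure", "time_to_recover"]
    return {
        name: later - earlier
        for name, earlier, later in zip(names, milestones, milestones[1:])
    }


if __name__ == "__main__":
    # Illustrative timestamps only; these are not data from the study.
    episode = DisruptionEpisode(
        onset=datetime(2024, 3, 1, 8, 0),
        detected=datetime(2024, 3, 1, 14, 0),
        decided=datetime(2024, 3, 3, 9, 0),
        reconfigured=datetime(2024, 3, 4, 9, 0),
        recovered=datetime(2024, 3, 10, 9, 0),
    )
    for name, delta in decision_latency_timers(episode).items():
        print(f"{name}: {delta.total_seconds() / 3600:.1f} h")
```

Stage-wise intervals were chosen so that a delay at one stage, such as a slow approval, is not masked by gains at another. Cumulative timers measured from onset would be an equally plausible reading, and would require only a running sum over the same milestones.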

2. Literature Review

2.1. Big Data Analytics

2.2. Supply Chain Resilience

2.3. BDA Adoption for Resilient Supply Chains

3. Research Methodology

3.1. Research Design and Rationale

3.2. Data Collection

3.3. Data Analysis

4. Findings

4.1. RQ1: Organizational Resources for BDA Adoption

4.1.1. Technological Infrastructure

“We started collecting sensor data from our production lines, and with the help of a cloud-based platform, we enabled live analytics. That changed how we looked at our operations—BDA became an actual capability, not just an idea”.

“Our procurement and warehouse systems are now connected through a unified data layer. That’s what allows our analytics models to run without delay and supports real-time decisions”.

4.1.2. Skilled Human Capital

“We invested in software tools and sensors, but the real progress came when we had people who could read the data, make sense of it, and convert it into action”.

“We started training sessions last year for our planning and logistics team on basic analytics tools, just so they can start thinking in a data-driven way. When your own people are confident in the data and can explain it, it builds trust across the organization and pushes others to use it”.

4.1.3. Organizational Culture and Data-Driven Mindset

“It’s not only about having the system; it’s about changing the way we think. Top management and even middle managers need to rely on data more than gut feeling”.

“We are moving from gut-based decisions to dashboard-driven insights. That shift in mindset has made our teams more responsive and confident in handling uncertainties. Supply chain issues are rarely isolated. We need input from finance, operations, and even marketing. Our data-sharing culture helps us respond faster and align strategies”.

4.1.4. Strategic Investment in Analytical Tools

“Just having the data is not enough; we needed to invest in software that helps make sense of it”.

“The challenge was not just buying the tool, but choosing the right one that our team could learn and use effectively without too much customization”.

4.1.5. Clear Objectives and Outputs for BDA Adoption

“You need to have clarity—what data you want, why you need it, and what exactly you expect it to deliver in terms of insights”.

“You don’t start with the tools. You start with the question you want answered. Then you find the data and tool that fits”.

4.1.6. Top Management Support

“Our analytics journey really took off once the CEO started asking data-driven questions. It sent a clear message to all departments that BDA was not optional—it was a priority”.

“Our leadership allowed us to test analytics solutions in one plant before scaling. That helped us learn without pressure. Without that support, we wouldn’t have tried anything new”.

4.1.7. Data Governance and Quality Management

“We created a cross-functional data governance team to define data standards and ensure quality across our systems. That investment paid off, especially when we needed real-time insights during COVID-related disruptions”.

“With multiple vendors and digital partners, we can’t afford loose ends. We need to know how our data is managed, secured, and used—otherwise, BDA becomes a liability instead of an asset”.

4.1.8. External Collaboration and Information-Sharing Culture

“We built an API-based link with our key suppliers and logistics companies… That’s the kind of visibility BDA makes possible—when everyone’s connected”.

“If only the data team has access and others can’t view or understand it, then what’s the point of calling it a data-driven system?”

4.1.9. Analytical Capability and BDA Maturity

“What most companies do when they purchase analytics software is believe that that is the answer. However, unless you are familiar with how to put the correct questions and read the results, the software is not fully used”.

“We moved beyond reporting. Our systems now trigger automated alerts and scenario simulations. It’s not just about dashboards—it’s about integrating analytics into how we respond and plan”.

4.2. RQ3: Benefits Realized After BDA Adoption

4.2.1. Internal End-to-End Supply Chain Visibility and Real-Time Monitoring

“We use a shared analytics platform with suppliers and customers in real time. If a shipment gets stuck upstream, our local line is alerted right away. That kind of transparency didn’t exist before”.

4.2.2. Predictive Risk Sensing, Early Warning, and Contingency Activation

“We installed IoT sensors across our production floor and logistics vehicles. The moment there’s a deviation—like temperature exceeding threshold in storage or abnormal idle time in equipment—we receive alerts through our BDA dashboard. This helps us act before small issues become crises”.

“One of our suppliers in China experienced flooding, and because our system flagged irregular dispatch patterns early, we preemptively switched to our alternate supplier before delays affected our production”.

4.2.3. Improved Operational Efficiency and Cost Optimization

“With analytics, we can now visualize every part of our operations—from inbound logistics to final delivery. Idle inventory, delayed suppliers, costly routes—it’s all visible, and we act on it instantly”.

“We don’t wait for equipment to fail anymore. Analytics alerts us before a breakdown. It’s saved us countless hours and repair costs”.

4.2.4. Collaborative Visibility and Supplier Network Integration

“We have integrated analytics with some key vendors. They can see our forecasts and stock status, and we can see theirs. During COVID, this transparency helped us receive supplies on time while others struggled. That mutual insight has become our strength”.

4.2.5. Data-Driven Innovation and Strategic Agility

“We are no longer relying only on past performance reports; now we use real-time dashboards and predictive models. It helps us change production plans and inventory strategies quickly if we sense something changing in demand or logistics. That agility was never possible before BDA”.

“Some of our best innovations came when we shared our analytics reports with our key suppliers. They were surprised to see how their delays impacted our lead time. Now, we work together using shared dashboards”.

“We saw the signals, but approvals for supplier switches or expediting took days. By then the window had passed”.

4.2.6. Competitive Advantage Through Analytics-Driven Positioning

“In today’s volatile market, data is a weapon. If you’re not using analytics to make faster and smarter decisions, you’ll be left behind. We’ve seen companies collapse during disruptions simply because they couldn’t predict or react fast enough”.

“We were previously reactive, waiting for problems to occur before fixing them. Now we are ahead of the game. With predictive analytics, we can plan better, source more competitively, and adjust pricing based on real-time demand”.

4.2.7. Enhanced Product Traceability and Compliance Management

“With BDA tools in place, we can now trace every part of a component back to its source. If there’s a defect reported by a customer, we no longer have to shut down an entire batch—we can identify the exact line and even the machine settings that produced the part”.

“Traceability is not optional. BDA gives us the ability to demonstrate full visibility across our supply chain—from raw material to finished product delivery. That’s a huge asset for both compliance and resilience”.

4.2.8. Rapid Scenario Planning and Disruption Simulation

“The real advantage of big data is not just knowing what happened, but asking what will happen. We build scenarios using data from past disruptions—like floods or transport strikes—and simulate their impact. This helps us allocate safety stock, assign alternative suppliers, and even modify production schedules before the crisis hits”.

4.2.9. Advanced Supplier Risk Monitoring and Diversification Strategies

“Before adopting analytics, we were largely reactive… Now we analyze delivery lead times, quality scores, and even financial risk indicators… we can act fast to avoid delays”.

“We used analytics to evaluate the resilience of our Tier 2 suppliers… we preemptively increased buffer stock and switched to a local supplier… That decision saved us millions”.

4.2.10. Dynamic Capacity Allocation and Adaptive Production Prioritization

“When the raw material shipment got delayed during the port congestion in Penang, we used analytics to quickly identify which products could still be produced with available inventory and which orders could be rescheduled without affecting our service-level agreements. This kind of agility was impossible without real-time data insights”.

“During the pandemic, traditional forecasting models collapsed. But using big data tools, we integrated real-time distributor data and social signals to sense demand drops and quickly reduced overproduction. It saved millions in inventory holding costs”.

4.3. RQ2: Barriers/Challenges in BDA Adoption

4.3.1. Lack of Data Integration and Infrastructure Readiness

“We still operate multiple systems that don’t communicate. Sometimes, we end up running analytics manually in Excel”.

“We tried to pilot predictive alerts, but the data pipeline kept breaking—different IDs, different time stamps, and no API from the old MES. By the time we reconciled files, the window to act had passed”.

4.3.2. Skill Gaps and Human Capital Deficiency

“Even if we buy the best tools, we still need people who can operate them. Most of our staff are good with ERP, not analytics”.

“Even when we find someone with strong data skills, they don’t stay long—tech companies outbid us”.

4.3.3. Organizational Resistance to Change and Cultural Inertia

“We’ve been doing things manually for 20 years. Convincing people that analytics can outperform their gut feeling is not easy”.

“Technology isn’t the issue—it’s people. Some staff see analytics as a threat, not a tool. Adoption only improved when we started celebrating small wins and involving them in the process”.

4.3.4. Poor Data Quality and Governance

“We do have data—but it sits in different systems: Excel, legacy ERP, even paper reports. When we try to combine them, it’s a nightmare. You can’t trust whether the numbers are accurate or current”.

“Every department collects data in its own way—some with SAP, others with homegrown systems, and some still manually. Before big data, we need to clean and unify our sources”.

4.3.5. Cost and Resource Constraints

“Implementing BDA isn’t just about buying software—you need servers, licenses, consultants, analysts, and training. The costs add up fast, and for SMEs, every dollar has to be justified”.

“People think dashboards work like magic, but the real work is cleaning, standardizing, and managing data—and we didn’t have the resources or staff to handle it consistently”.

4.3.6. Lack of Inter-Departmental Collaboration

“Our IT department collects the data, but the supply chain team doesn’t always understand how to use it. Production has their own systems and only shares data if asked. So, the data remains underutilized”.

“Each department has its own KPIs, its own priorities. There’s no centralized strategy to analyze and act on data collectively”.

4.3.7. Lack of Awareness and Interest

“There’s a general lack of urgency. Many managers still think data analytics is just for IT or R&D—they don’t realize how it could help solve real supply chain issues like stockouts or delays”.

“We had dashboards and analytics software installed, but nobody used them—not because they weren’t useful, but because people didn’t know what to look for or how to interpret the data”.

4.3.8. Privacy and Security

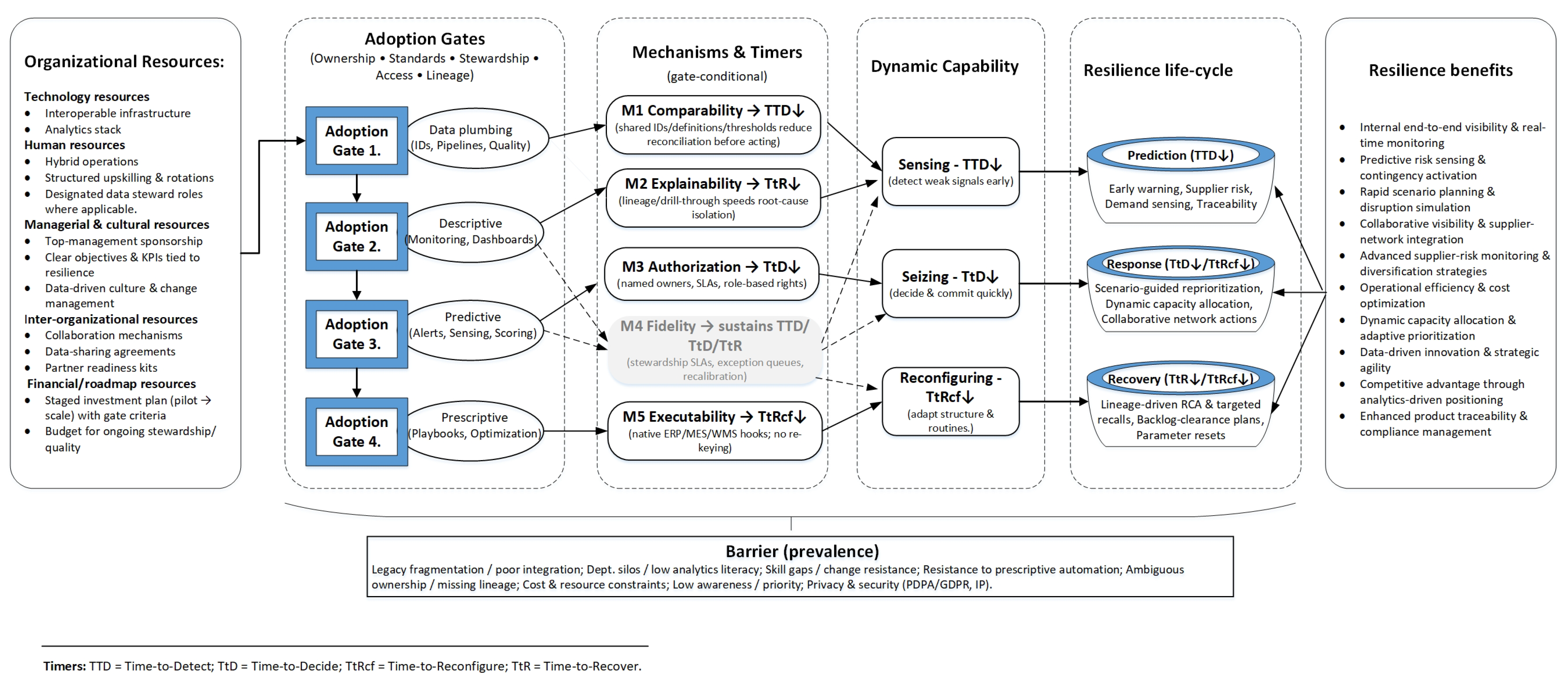

Cross-Case Explanatory Memo: When Mechanisms Are Absent

1. Visibility without ownership (G2 present, M3 Authorization absent): alerts appear but lack named owners/rights, so TtD does not fall; teams revert to e-mails and spreadsheets.

2. Signals without fidelity (G2–G3 present, M4 Fidelity weak): untuned thresholds and missing stewardship produce false positives and alert fatigue; TTD/TtD improvements decay over time.

3. Recommendations without executability (M5 Executability absent at G4): optimization outputs cannot be enacted in ERP/MES/WMS; TtRcf remains high despite analytics.
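The "signals without fidelity" mechanism can be illustrated with a toy threshold alert of the kind participants described for storage temperatures (Section 4.2.2). The Python sketch below uses hypothetical readings and incident labels, not study data. It measures alert precision, meaning the share of alerts that coincide with a genuine incident, at two thresholds. The function name and its inputs are illustrative assumptions.

```python
from typing import Sequence


def alert_precision(
    readings: Sequence[float], incidents: Sequence[bool], threshold: float
) -> tuple[int, float]:
    """Count alerts (reading > threshold) and the share that match a true incident.

    `readings` and `incidents` are hypothetical, index-aligned series: one sensor
    reading per interval, and whether a genuine incident occurred in that interval.
    """
    if len(readings) != len(incidents):
        raise ValueError("readings and incidents must be the same length")
    # Keep the incident label of every interval that would have raised an alert.
    alerts = [is_incident for value, is_incident in zip(readings, incidents) if value > threshold]
    if not alerts:
        return 0, 0.0
    return len(alerts), sum(alerts) / len(alerts)


if __name__ == "__main__":
    # Illustrative storage-temperature readings (deg C); True marks a genuine excursion.
    readings = [4.1, 4.3, 5.2, 4.0, 6.8, 5.1, 4.2, 7.3, 5.4, 4.4]
    incidents = [False, False, False, False, True, False, False, True, False, False]
    for threshold in (5.0, 6.0):
        count, precision = alert_precision(readings, incidents, threshold)
        print(f"threshold {threshold}: {count} alerts, precision {precision:.2f}")
```

In this toy series the lower threshold raises five alerts, of which only two are genuine, while the higher threshold raises two alerts, both genuine. In real data a higher threshold can also miss incidents, so tuning trades precision against missed detections. Periodically re-checking precision in this way is one possible stewardship routine; it is offered as an assumption, not as a practice reported by participants.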

5. BDA Adoption Framework: A Resource-Governance Overlay

6. Discussion

6.1. Theoretical Implications

6.2. Practical/Managerial Implications

7. Limitations and Future Research

8. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A. Semi-Structured Interview Guide

Appendix A.1. Intro Script (2–3 min)

Appendix A.2. Shared Understanding (No Leading, Optional)

Appendix A.3. Guide Structure

Section A. Background and role (warm-up)

- A1. Please describe your current role and responsibilities. (Probe: decision rights; analytics involvement) [Context]

- A2. Briefly outline your organization’s supply chain (key products, nodes/tiers, major partners). [Context]

- A3. What are the main data/analytics systems or tools used in supply-chain processes? (Probe: ERP/MES/SCM suites; data lakes; visual analytics) [Context]

Section B. Adoption storyline

- B1. Can you walk me through a specific BDA initiative in the supply chain, from initiation to its current status? (Probe: trigger, scope, milestones) [RQ1/RQ2]

- B2. Which stakeholders were involved, and how were responsibilities divided? (Probe: cross-functional team; partners) [RQ1]

Section C. Organizational resources (human, data, tech, and governance) [RQ1]

- C1. What people resources were essential (skills, roles, training)? (Probe: domain experts, data engineers, product owners; internal vs. external)

- C2. What data resources were critical (sources, quality, standards, stewardship)? (Probe: cross-tier sharing; master data)

- C3. What technology resources were required (platforms, integration, OT/IT convergence)? (Probe: latency/throughput; cloud/edge)

- C4. What governance arrangements helped (policies, decision rights, funding, KPIs)? (Probe: privacy/security; interoperability agreements)

- C5. How were these resources mobilized and sequenced over time? (Probe: quick wins; capability building; hiring vs. upskilling)

Section D. Barriers and workarounds (interdependencies) [RQ2]

- D1. What were the main adoption barriers? (Prompts: legacy integration; data quality; skills; vendor lock-in; standards; change resistance; ROI)

- D2. Which barriers were interdependent (one causing or amplifying another)? (Probe: examples of “barrier cascades”)

- D3. How did you address or sequence these? (Probe: pilots; governance fixes; contracts with partners; minimum viable datasets)

- D4. What would you do differently next time? (Probe: resourcing, stakeholder engagement, architecture)

Section E. Resilience mechanisms and outcomes [RQ3]

- E1. Where, if anywhere, did BDA improve visibility? (Probe: earlier signals; inventory/flow transparency; supplier risk)

- E2. Where did BDA improve responsiveness/reconfiguration? (Probe: scenario planning; S&OP; dynamic allocation; expediting)

- E3. Where did BDA shorten recovery time? (Probe: incident post-mortems; learning loops; automation handoffs)

- E4. Could you describe a recent disruption and how BDA changed the response? (Probe: before/after; evidence; limits)

- E5. Any unintended consequences or risks introduced by BDA? (Probe: model brittleness; bias; over-reliance)

Section F. Cross-tier collaboration and scaling

- F1. How did partner data-sharing and readiness affect adoption? (Probe: contracts, standards, incentives) [RQ1/RQ2]

- F2. What governance or operating model supported scaling across plants/tiers? (Probe: centers of excellence; playbooks; funding)

Section G. Wrap-up

- G1. Top three lessons learned for adopting BDA to support resilience?

- G2. If you could advise a peer starting now, what would you prioritize first?

References

- Su, X.; Zeng, W.; Zheng, M.; Jiang, X.; Lin, W.; Xu, A. Big data analytics capabilities and organizational performance: The mediating effect of dual innovations. Eur. J. Innov. Manag. 2022, 25, 1142–1160. [Google Scholar] [CrossRef]

- Babalghaith, R.; Aljarallah, A. Factors Affecting Big Data Analytics Adoption in Small and Medium Enterprises. Inf. Syst. Front. 2024, 26, 2165–2187. [Google Scholar] [CrossRef]

- Liu, Y.; Xu, X. Industry 4.0 and cloud manufacturing: A comparative analysis. J. Manuf. Sci. Eng. 2017, 139, 034701. [Google Scholar] [CrossRef]

- Sagiroglu, S.; Sinanc, D. Big data: A review. In Proceedings of the 2013 International Conference on Collaboration Technologies and Systems (CTS), San Diego, CA, USA, 20–24 May 2013; IEEE: Piscataway, NJ, USA, 2013; pp. 42–47. [Google Scholar]

- Grover, V.; Chiang, R.H.; Liang, T.P.; Zhang, D. Creating strategic business value from big data analytics: A research framework. J. Manag. Inf. Syst. 2018, 35, 388–423. [Google Scholar] [CrossRef]

- Wamba, S.; Gunasekaran, A.; Akter, S.; Ren, S.; Dubey, R.; Childe, S. Big data analytics and firm performance: Effects of dynamic capabilities. J. Bus. Res. 2020, 120, 328–337. [Google Scholar] [CrossRef]

- Chen, D.Q.; Preston, D.S.; Swink, M. How big data analytics affects supply chain decision-making: An empirical analysis. J. Assoc. Inf. Syst. 2021, 22, 1224–1244. [Google Scholar] [CrossRef]

- Goes, P.B. Editor’s Comments: Big Data and IS Research. Mis Q. 2014, 38, iii–viii. [Google Scholar] [CrossRef]

- Ivanov, D.; Dolgui, A. Viability of intertwined supply networks: Extending the supply chain resilience angles towards survivability. A position paper motivated by COVID-19 outbreak. Int. J. Prod. Res. 2020, 58, 2904–2915. [Google Scholar] [CrossRef]

- Dubey, R.; Gunasekaran, A.; Childe, S.J.; Bryde, D.J.; Giannakis, M.; Foropon, C.; Roubaud, D.; Hazen, B.T. Big data analytics and artificial intelligence pathway to operational performance under the effects of entrepreneurial orientation and environmental dynamism: A study of manufacturing organisations. Int. J. Prod. Econ. 2020, 226, 107599. [Google Scholar] [CrossRef]

- Pettit, T.J.; Croxton, K.L.; Fiksel, J. The evolution of resilience in supply chain management: A retrospective on ensuring supply chain resilience. J. Bus. Logist. 2019, 40, 56–65. [Google Scholar] [CrossRef]

- Bahrami, M.; Shokouhyar, S. The role of big data analytics capabilities in bolstering supply chain resilience and firm performance: A dynamic capability view. Inf. Technol. People 2022, 35, 1621–1651. [Google Scholar] [CrossRef]

- Maheshwari, S.; Gautam, P.; Jaggi, C.K. Role of Big Data Analytics in supply chain management: Current trends and future perspectives. Int. J. Prod. Res. 2021, 59, 1875–1900. [Google Scholar] [CrossRef]

- Bag, S.; Dhamija, P.; Luthra, S.; Huisingh, D. How big data analytics can help manufacturing companies strengthen supply chain resilience in the context of the COVID-19 pandemic. Int. J. Logist. Manag. 2023, 34, 1141–1164. [Google Scholar] [CrossRef]

- JayaLakshmi, G.; Pandey, D.; Pandey, B.K.; Kaur, P.; Mahajan, D.A.; Dari, S.S. Smart big data collection for intelligent supply chain improvement. In AI and Machine Learning Impacts in Intelligent Supply Chain; IGI Global Scientific Publishing: Hershey, PA, USA, 2024; pp. 180–195. [Google Scholar]

- Liao, H.Y.; Hsu, P.C.; Wang, Y.C. Information systems adoption and knowledge performance: Roles of adoption, capabilities, and absorptive capacity. Heliyon 2023, 9, e12847. [Google Scholar] [CrossRef]

- Rogers, D.L. The Digital Transformation Playbook: Rethink your Business for the Digital Age; Columbia University Press: New York, NY, USA, 2016. [Google Scholar]

- Srinivasan, R.; Swink, M. An investigation of visibility and flexibility as complements to supply chain analytics: An organizational information processing theory perspective. Prod. Oper. Manag. 2018, 27, 1849–1867. [Google Scholar] [CrossRef]

- Akter, S.; Bandara, R.; Hani, U.; Wamba, S.F.; Foropon, C.; Papadopoulos, T. Analytics-based decision-making for service systems: A qualitative study and agenda for future research. Int. J. Inf. Manag. 2019, 48, 85–95. [Google Scholar] [CrossRef]

- Mikalef, P.; Pappas, I.O.; Krogstie, J.; Giannakos, M. Big data analytics capabilities: A systematic literature review and research agenda. Inf. Syst.-Bus. Manag. 2018, 16, 547–578. [Google Scholar] [CrossRef]

- Agrawal, K. Investigating the Determinants of Big Data Analytics (BDA) Adoption in Asian Emerging Economies. Master’s Thesis, Aalto University School of Business, Helsinki, Finland, 2015. [Google Scholar]

- Ramanathan, R.; Philpott, E.; Duan, Y.; Cao, G. Adoption of business analytics and impact on performance: A qualitative study in retail. Prod. Plan. Control 2017, 28, 985–998. [Google Scholar] [CrossRef]

- Maroufkhani, P.; Tseng, M.L.; Iranmanesh, M.; Ismail, W.K.W.; Khalid, H. Big data analytics adoption: Determinants and performances among small to medium-sized enterprises. Int. J. Inf. Manag. 2020, 54, 102190. [Google Scholar] [CrossRef]

- Tajul Urus, S.; Rahmat, F.; Othman, I.W.; Syed Mustapha Nazri, S.N.F.; Abdul Rasit, Z. Application of the technology-organization-environment framework on big data analytics deployment in manufacturing and service. Asia-Pac. Manag. Account. J. (APMAJ) 2024, 19, 57–92. [Google Scholar]

- Xu, J.; Pero, M.E.P. A resource orchestration perspective of organizational big data analytics adoption: Evidence from supply chain planning. Int. J. Phys. Distrib. Logist. Manag. 2023, 53, 71–97. [Google Scholar] [CrossRef]

- Maroufkhani, P.; Iranmanesh, M.; Ghobakhloo, M. Determinants of big data analytics adoption in small and medium-sized enterprises (SMEs). Ind. Manag. Data Syst. 2023, 123, 278–301. [Google Scholar] [CrossRef]

- Raj, R.; Kumar, S.; Jeyaraj, A. Antecedents and outcomes of big data adoption in supply chain: A meta-analysis. Am. Bus. Rev. 2024, 27, 15. [Google Scholar] [CrossRef]

- Babalghaith, A.; Aljarallah, N. Big data analytics adoption in SMEs: Evidence from Saudi Arabia. Inf. Syst. Front. 2024, 26, 923–940. [Google Scholar] [CrossRef]

- Huong, T.T.; Azmat, F.; Hadeed, H. Exploring big data analytics adoption for sustainable manufacturing supply chains: Insights from a TOE-guided systematic review. Clean. Logist. Supply Chain. 2025, 14, 100256. [Google Scholar] [CrossRef]

- Vafaei-Zadeh, A.; Thanabalan, P.; Hanifah, H.; Ramayah, T. Big data analytics adoption and supply chain resilience: Evidence from Malaysian manufacturing firms. Inf. Syst. Front. 2025, 27, 455–476. [Google Scholar]

- Anwar, M.; Zong, Z.; Mendiratta, A.; Yaqub, M.Z. Antecedents of big data analytics adoption and its impact on decision quality and environmental performance of SMEs in the recycling sector. Technol. Forecast. Soc. Change 2024, 203, 123468. [Google Scholar] [CrossRef]

- Waqar, M.; Paracha, Z. Big data analytics adoption in developing economies: Empirical evidence from Pakistan’s private sector. Foresight 2024, 26, 1–22. [Google Scholar] [CrossRef]

- El-Haddadeh, R.; Weerakkody, V.; Irani, Z.; Fosso, S.W.; Babar, M.A. Big data analytics adoption and value creation in supply chains: A resource-based view and machine learning approach. Bus. Process Manag. J. 2025, 31, 37–56. [Google Scholar] [CrossRef]

- Alorfi, A.; Alsaadi, A. Barriers to adopting big data analytics in manufacturing supply chains: An interpretive structural modelling approach. Systems 2025, 13, 250. [Google Scholar] [CrossRef]

- Aldossari, A.; Mokhtar, U.A.; Ghani, A.T.A. Empowering Saudi Manufacturing Small and Medium Enterprises: A Framework for Big Data Analytics Adoption and Its Impact on Decision-Making. SAGE Open 2025, 15, 21582440251369162. [Google Scholar] [CrossRef]

- Iftikhar, A.; Ali, I.; Arslan, A.; Tarba, S. Digital innovation, data analytics, and supply chain resiliency: A bibliometric-based systematic literature review. Ann. Oper. Res. 2024, 333, 825–848. [Google Scholar] [CrossRef]

- Al-shanableh, A.; Alzyoud, M.; Alomar, S.; Kilani, Y.; Nashnush, E.; Al-Hawary, S.; Al-Momani, A. Factors affecting big data analytics adoption in SME supply chains: Evidence from Jordan. Int. J. Data Netw. Sci. 2024, 8, 321–332. [Google Scholar] [CrossRef]

- Hamed, T.; Dandan, S.M.; Farah, A.A.; Barakat, S.A. Organisational factors affecting big data analytics adoption in supply chain operations: Evidence from Saudi Arabia. J. Transp. Supply Chain. Manag. 2024, 18, a842. [Google Scholar] [CrossRef]

- Waqar, J.; Paracha, O.S. Antecedents of big data analytics (BDA) adoption in private firms: A sequential explanatory approach. Foresight 2024, 26, 805–843. [Google Scholar] [CrossRef]

- Xu, J.; Pero, M.; Fabbri, M. Unfolding the link between big data analytics and supply chain planning. Technol. Forecast. Soc. Change 2023, 196, 122805. [Google Scholar] [CrossRef]

- Cui, Y.; Kara, S.; Chan, F. Manufacturing big data ecosystem: A systematic literature review. J. Manuf. Syst. 2020, 54, 101861. [Google Scholar] [CrossRef]

- Zamani, E.D.; Smyth, C.; Gupta, S.; Dennehy, D. Artificial intelligence and big data analytics for supply chain resilience: A systematic literature review. Ann. Oper. Res. 2022, 322, 119–149. [Google Scholar] [CrossRef]

- Aldossari, S.; Alharbi, F.; Alzahrani, F. Factors Influencing the Adoption of Big Data Analytics: A Systematic Literature Review on SMEs. SAGE Open 2023, 13, 21582440231217902. [Google Scholar] [CrossRef]

- Moktadir, M.A.; Kumar, A.; Ali, S.M.; Paul, S.; Sultana, R.; Rehman Khan, S. Barriers to big data analytics in manufacturing supply chains: A case study from Bangladesh. Comput. Ind. Eng. 2019, 128, 1063–1075. [Google Scholar] [CrossRef]

- Raut, R.; Narwane, V.; Kumar Mangla, S.; Yadav, V.S.; Narkhede, B.E.; Luthra, S. Unlocking causal relations of barriers to big data analytics in manufacturing firms. Ind. Manag. Data Syst. 2021, 121, 1939–1968. [Google Scholar] [CrossRef]

- Dubey, R.; Bryde, D.J.; Blome, C.; Gunasekaran, A.; Papadopoulos, T. Empirical investigation of data analytics capability and organizational flexibility as complements to supply chain resilience. Int. J. Prod. Res. 2021, 59, 110–128. [Google Scholar] [CrossRef]

- Jiang, B.; Xu, L.; Ahn, H. Antecedents of predictive analytics capability for supply chain resilience. Int. J. Prod. Econ. 2024, 257, 108787. [Google Scholar]

- Addo-Tenkorang, R.; Helo, P.T. Big data applications in operations/supply-chain management: A literature review. Comput. Ind. Eng. 2016, 101, 528–543. [Google Scholar] [CrossRef]

- Lau, R.Y.; Zhao, J.L.; Chen, G.; Guo, X. Big data commerce. Inf. Manag. 2016, 53, 929–943. [Google Scholar] [CrossRef]

- Mahmoudi, A.; Deng, X.; Javed, S.A.; Yuan, J. Large-scale multiple criteria decision-making with missing values: Project selection through TOPSIS-OPA. J. Ambient. Intell. Humaniz. Comput. 2021, 12, 9341–9362. [Google Scholar] [CrossRef]

- Gantz, J.; Reinsel, D. The Digital Universe in 2020: Big Data, Bigger Digital Shadows, and Biggest Growth in the Far East. IDC 2012, 2007, 1–16. [Google Scholar]

- Popovič, A.; Hackney, R.; Tassabehji, R.; Castelli, M. The impact of big data analytics on firms’ high value business performance. Inf. Syst. Front. 2018, 20, 209–222. [Google Scholar] [CrossRef]

- Choi, T.M.; Wallace, S.W.; Wang, Y. Big data analytics in operations management. Prod. Oper. Manag. 2018, 27, 1868–1883. [Google Scholar] [CrossRef]

- Alidrisi, H. Measuring the environmental maturity of the supply chain finance: A big data-based multi-criteria perspective. Logistics 2021, 5, 22. [Google Scholar] [CrossRef]

- Gunasekaran, A.; Papadopoulos, T.; Dubey, R.; Wamba, S.F.; Childe, S.J.; Hazen, B.; Akter, S. Big data and predictive analytics for supply chain and organizational performance. J. Bus. Res. 2017, 70, 308–317. [Google Scholar] [CrossRef]

- Wamba, S.F.; Dubey, R.; Gunasekaran, A.; Akter, S. The performance effects of big data analytics and supply chain ambidexterity: The moderating effect of environmental dynamism. Int. J. Prod. Econ. 2020, 222, 107498. [Google Scholar] [CrossRef]

- Nguyen, T.; Li, Z.; Spiegler, V.; Ieromonachou, P.; Lin, Y. Big data analytics in supply chain management: A state-of-the-art literature review. Comput. Oper. Res. 2018, 98, 254–264. [Google Scholar] [CrossRef]

- Lee, I. Big Data Analytics in Supply Chain Management: A Review. Appl. Sci. 2022, 12, 17. [Google Scholar]

- Wang, G.; Gunasekaran, A.; Ngai, E.W.; Papadopoulos, T. Big data analytics in logistics and supply chain management: Certain investigations for research and applications. Int. J. Prod. Econ. 2016, 176, 98–110. [Google Scholar] [CrossRef]

- Tiwari, S.; Wee, H.M.; Daryanto, Y. Big data analytics in supply chain management between 2010 and 2016: Insights to industries. Comput. Ind. Eng. 2018, 115, 319–330. [Google Scholar] [CrossRef]

- Demchenko, Y.; Grosso, P.; Membrey, P. Addressing Big Data Issues in Scientific Data Infrastructure. In Proceedings of the 2013 International Conference on Collaboration Technologies and Systems (CTS), San Diego, CA, USA, 20–24 May 2013; IEEE: Piscataway, NJ, USA, 2013; pp. 48–55. [Google Scholar]

- Jhang-Li, J.H.; Chang, C.W. Analyzing the operation of cloud supply chain: Adoption barriers and business model. Electron. Commer. Res. 2017, 17, 627–660. [Google Scholar] [CrossRef]

- Gamage, P. New development: Leveraging ‘big data’ analytics in the public sector. Public Money Manag. 2016, 36, 385–390. [Google Scholar] [CrossRef]

- Kache, F.; Seuring, S. Challenges and opportunities of digital information at the intersection of Big Data Analytics and supply chain management. Int. J. Oper. Prod. Manag. 2017, 37, 10–36. [Google Scholar] [CrossRef]

- Ren, S.J.; Wamba, S.F.; Akter, S.; Dubey, R.; Childe, S.J. Modelling quality dynamics, business value and firm performance in a big data analytics environment. Int. J. Prod. Res. 2017, 55, 5011–5026. [Google Scholar] [CrossRef]

- Yu, W.; Zhao, G.; Liu, Q.; Song, Y. Role of big data analytics capability in developing integrated hospital supply chains and operational flexibility: An organizational information processing theory perspective. Technol. Forecast. Soc. Change 2021, 163, 120417. [Google Scholar] [CrossRef]

- Legenvre, H.; Hameri, A.P. The emergence of data sharing along complex supply chains. Int. J. Oper. Prod. Manag. 2024, 44, 292–297. [Google Scholar] [CrossRef]

- Erboz, G.; Yumurtacı Hüseyinoğlu, I.Ö.; Szegedi, Z. The partial mediating role of supply chain integration between Industry 4.0 and supply chain performance. Supply Chain. Manag. Int. J. 2022, 27, 538–559. [Google Scholar] [CrossRef]

- Wamba, S.F.; Akter, S.; Edwards, A.; Chopin, G.; Gnanzou, D. How ‘big data’can make big impact: Findings from a systematic review and a longitudinal case study. Int. J. Prod. Econ. 2015, 165, 234–246. [Google Scholar] [CrossRef]

- Zhao, K.; Zuo, Z.; Blackhurst, J.V. Modelling supply chain adaptation for disruptions: An empirically grounded complex adaptive systems approach. J. Oper. Manag. 2019, 65, 190–212. [Google Scholar] [CrossRef]

- Scholten, K.; Sharkey Scott, P.; Fynes, B. Building routines for non-routine events: Supply chain resilience learning mechanisms and their antecedents. Supply Chain. Manag. Int. J. 2019, 24, 430–442. [Google Scholar] [CrossRef]

- Ribeiro, J.P.; Barbosa-Povoa, A. Supply Chain Resilience: Definitions and quantitative modelling approaches—A literature review. Comput. Ind. Eng. 2018, 115, 109–122. [Google Scholar] [CrossRef]

- Liu, H.; Lu, F.; Shi, B.; Hu, Y.; Li, M. Big data and supply chain resilience: Role of decision-making technology. Manag. Decis. 2023, 61, 2792–2808. [Google Scholar] [CrossRef]

- Christopher, M.; Peck, H. Building the resilient supply chain. Int. J. Logist. Manag. 2004, 15, 1–13. [Google Scholar] [CrossRef]

- Sheffi, Y.; Rice, J.B. A supply chain view of the resilient enterprise. MIT Sloan Manag. Rev. 2005, 47, 41–48. [Google Scholar]

- Dolgui, A.; Ivanov, D.; Sokolov, B. Ripple effect in the supply chain: An analysis and recent literature. Int. J. Prod. Res. 2018, 56, 414–430. [Google Scholar] [CrossRef]

- Ivanov, D. Digital twins, the ripple effect, and resilience in supply chains: Comparative analysis. IFAC-PapersOnLine 2019, 52, 1672–1678. [Google Scholar] [CrossRef]

- Simchi-Levi, D.; Schmidt, W.; Wei, Y. From superstorms to factory fires: Managing unpredictable supply-chain disruptions. Harv. Bus. Rev. 2014, 92, 96–101. [Google Scholar]

- Simchi-Levi, D. Find the Weak Link in Your Supply Chain. Harv. Bus. Rev. 2015, 93, 72–80. [Google Scholar]

- Sahlmueller, T.; Hellingrath, B. Measuring the resilience of supply chain networks. In Proceedings of the 19th International Conference on Information Systems for Crisis Response and Management (ISCRAM), Tarbes, France, 22–25 May 2022. [Google Scholar]

- Teece, D.J. Explicating Dynamic Capabilities: The Nature and Microfoundations of (Sustainable) Enterprise Performance. Strateg. Manag. J. 2007, 28, 1319–1350. [Google Scholar] [CrossRef]

- Ponomarov, S.Y.; Holcomb, M.C. Understanding the concept of supply chain resilience. Int. J. Logist. Manag. 2009, 20, 124–143. [Google Scholar] [CrossRef]

- Xu, Z.; Elomri, A.; Kerbache, L.; El Omri, A. Impacts of COVID-19 on global supply chains: Facts and perspectives. IEEE Eng. Manag. Rev. 2020, 48, 153–166. [Google Scholar] [CrossRef]

- Dubey, R.; Gunasekaran, A.; Bryde, D.; Dwivedi, Y.; Papadopoulos, T. Impact of big data analytics on supply chain resilience: Mediating role of visibility. Ann. Oper. Res. 2021, 302, 241–261. [Google Scholar]

- Zhao, N.; Hong, J.; Lau, K.H. Impact of supply chain digitalization on supply chain resilience and performance: A multi-mediation model. Int. J. Prod. Econ. 2023, 259, 108817. [Google Scholar] [CrossRef]

- Bag, S.; Wood, L.; Xu, L. Predictive analytics for building supply chain resilience. Ann. Oper. Res. 2023, 325, 271–293. [Google Scholar]

- Cooper, R.B.; Zmud, R.W. Information Technology Implementation Research: A Technological Diffusion Approach. Manag. Sci. 1990, 36, 123–139. [Google Scholar] [CrossRef]

- Khatri, V.; Brown, C.V. Designing Data Governance. Commun. Acm 2010, 53, 148–152. [Google Scholar] [CrossRef]

- Otto, B. Data Governance. Int. J. IT/Bus. Alignment Gov. 2011, 2, 4–11. [Google Scholar]

- Hazen, B.T.; Boone, C.A.; Ezell, J.D.; Jones-Farmer, L.A. Data Quality for Data Science, Predictive Analytics, and Big Data in Supply Chain Management. Int. J. Prod. Econ. 2014, 154, 72–80. [Google Scholar] [CrossRef]

- Shen, Z.M.; Sun, Y. Strengthening supply chain resilience during COVID-19: A case study of JD. com. J. Oper. Manag. 2023, 69, 359–383. [Google Scholar] [CrossRef]

- Culot, G.; Orzes, G.; Sartor, M.; Nassimbeni, G. The Data-Sharing Conundrum in the Era of Digital Transformation: Revisiting Established Theory. Supply Chain. Manag. Int. J. 2024, 29, 1–27.

- Jorzik, N. Industrial Data Sharing and Data Readiness: A Law and Economics Perspective. Eur. J. Law Econ. 2024, 57, 181–205.

- Gabellini, M.; Civolani, L.; Ronchi, M.; Naldi, L.D.; Regattieri, A. Data Spaces in Manufacturing and Supply Chains. Appl. Sci. 2025, 15, 5802.

- Panetto, H.; Molina, A. Enterprise Integration and Interoperability in Manufacturing Systems: Trends and Issues. Comput. Ind. 2008, 59, 641–646.

- Bousdekis, A.; Lepenioti, K.; Loumos, V.; Mantzari, E.; Apostolou, D.; Mentzas, G. Enterprise Integration and Interoperability for Big-Data-Driven Processes in Industry 4.0: A Systematic Literature Review. Front. Big Data 2021, 4, 624575.

- Tracy, S.J. A phronetic iterative approach to data analysis in qualitative research. Qual. Res. 2018, 19, 61–76.

- Rowley, J. Conducting research interviews. Manag. Res. Rev. 2012, 35, 260–271.

- Kallio, H.; Pietilä, A.M.; Johnson, M.; Kangasniemi, M. Systematic methodological review: Developing a framework for a qualitative semi-structured interview guide. J. Adv. Nurs. 2016, 72, 2954–2965.

- Eisenhardt, K.M. Building theories from case study research. Acad. Manag. Rev. 1989, 14, 532–550.

- Yin, R.K. Case Study Research and Applications, 6th ed.; Sage: Thousand Oaks, CA, USA, 2018.

- Ministry of International Trade and Industry (MITI). Industry4WRD: National Policy on Industry 4.0; Policy Document; Ministry of International Trade and Industry (MITI): Kuala Lumpur, Malaysia, 2018.

- Guest, G.; Bunce, A.; Johnson, L. How many interviews are enough? An experiment with data saturation and variability. Field Methods 2006, 18, 59–82.

- Hennink, M.M.; Kaiser, B.N.; Marconi, V.C. Code saturation versus meaning saturation. Qual. Health Res. 2017, 27, 591–608.

- Hagaman, A.K.; Wutich, A. How many interviews are enough to identify metathemes in multisited and cross-cultural research? Another perspective on Guest, Bunce, and Johnson’s (2006) landmark study. Field Methods 2017, 29, 23–41.

- Hennink, M.; Kaiser, B.N. Sample sizes for saturation in qualitative research: A systematic review of empirical tests. Soc. Sci. Med. 2022, 292, 114523.

- Yin, R.K. Applications of Case Study Research; Sage Publications: Thousand Oaks, CA, USA, 2011.

- Gosling, J.; Purvis, L.; Naim, M.M. Supply chain flexibility as a determinant of supplier selection. Int. J. Prod. Econ. 2010, 128, 11–21.

- Jha, A.K.; Agi, M.A.; Ngai, E.W. A note on big data analytics capability development in supply chain. Decis. Support Syst. 2020, 138, 113382.

- Kirchherr, J.; Charles, K. Enhancing the sample diversity of snowball samples. Sustain. Sci. 2018, 13, 1381–1391.

- Guest, G.; Namey, E.; Chen, M. A simple method to assess and report thematic saturation. PLoS ONE 2020, 15, e0232076.

- Yin, R.K. Case Study Research: Design and Methods; Sage: Thousand Oaks, CA, USA, 2009; Volume 5.

- Braun, V.; Clarke, V. Using thematic analysis in psychology. Qual. Res. Psychol. 2006, 3, 77–101.

- Saldaña, J. The Coding Manual for Qualitative Researchers, 3rd ed.; Sage: Thousand Oaks, CA, USA, 2016.

- Castleberry, A.; Nolen, A. Thematic analysis of qualitative research data: Is it as easy as it sounds? Curr. Pharm. Teach. Learn. 2018, 10, 807–815.

- Braun, V.; Clarke, V. Toward good practice in thematic analysis. APA Open 2022, 2, e2129597.

- O’Connor, C.; Joffe, H. Intercoder reliability in qualitative research: Debates and practical guidelines. Int. J. Qual. Methods 2020, 19, 1–13.

- Lincoln, Y.S. Naturalistic Inquiry; Sage: Thousand Oaks, CA, USA, 1985; Volume 75.

- Nowell, L.S.; Norris, J.M.; White, D.E.; Moules, N.J. Thematic analysis: Striving to meet trustworthiness criteria. Int. J. Qual. Methods 2017, 16, 1–13.

- Miles, M.B.; Huberman, A.M.; Saldaña, J. Qualitative Data Analysis: A Methods Sourcebook, 3rd ed.; Sage: Thousand Oaks, CA, USA, 2014.

- Mikalef, P.; Boura, M.; Lekakos, G.; Krogstie, J. Big data analytics and firm performance: Findings from a mixed-method approach. J. Bus. Res. 2019, 98, 261–276.

- Belhadi, A.; Bouzon, M.; Khan, S. Traceability and big data analytics for sustainable supply chains. J. Clean. Prod. 2024, 409, 137129.

- Demchenko, Y.; Grosso, P.; De Laat, C.; Membrey, P. Big Data architecture framework and components. In Proceedings of the 2013 International Conference on Collaboration Technologies and Systems (CTS), San Diego, CA, USA, 20–24 May 2013; pp. 104–112.

- Kumar, R.R.; Raj, A. Big data adoption and performance: Mediating mechanisms of innovation, supply chain integration and resilience. Supply Chain. Manag. Int. J. 2025, 30, 67–85.

- Galbraith, J.R. Organization design: An information processing view. Interfaces 1974, 4, 28–36.

- Dyer, J.H.; Singh, H. The relational view: Cooperative strategy and sources of interorganizational competitive advantage. Acad. Manag. Rev. 1998, 23, 660–679.

- Poppo, L.; Zenger, T. Do formal contracts and relational governance function as substitutes or complements? Strateg. Manag. J. 2002, 23, 707–725.

- Mikalef, P.; Pappas, I.O.; Krogstie, J.; Pavlou, P.A. Big data and business analytics: A research agenda for realizing business value. Inf. Manag. 2020, 57, 103237.

- Ivanov, D. Supply chain viability and the COVID-19 pandemic: A conceptual and formal generalisation of four major adaptation strategies. Int. J. Prod. Res. 2021, 59, 3535–3552.

- Sivarajah, U.; Kamal, M.M.; Irani, Z.; Weerakkody, V. Critical analysis of Big Data challenges and analytical methods. J. Bus. Res. 2017, 70, 263–286.

- Chenger, J.; Lin, Y.; Liu, X. Leveraging big data analytics for resilient global supply chains under data privacy risks. Technovation 2023, 121, 102656.

- Rose, S.; Borchert, O.; Mitchell, S.; Connelly, S. Zero Trust Architecture; Technical Report Special Publication 800-207; National Institute of Standards and Technology (NIST): Gaithersburg, MD, USA, 2020.

- Kairouz, P.; McMahan, H.B.; Avent, B.; Bellet, A.; Bennis, M.; Bhagoji, A.N.; Bonawitz, K.; Charles, Z.; Cormode, G.; Cummings, R.; et al. Advances and open problems in federated learning. Found. Trends Mach. Learn. 2021, 14, 1–210.

- Hardjono, T.; Pentland, A.S. Data Cooperatives: Towards a Foundation for Decentralized Personal Data Management. arXiv 2019, arXiv:1905.08819.

| Sr. | Reference | Supply Chain Context | Industry/Country | Methodology | Framework or Theory Used | Key Enablers/Resources | Benefits Reported | Challenges/Barriers |
|---|---|---|---|---|---|---|---|---|
| 1 | Aldossari et al. (2025) [35] | Supply chains; decision-making | Manufacturing SMEs/Saudi Arabia | Survey (n = 384) | TOE + DOI + Institutional theory | Security, compatibility, complexity, adaptability; top management support; relative advantage; IT infrastructure; training; government incentives; competitive pressure | Decision-making effectiveness; operational efficiency; competitive performance | Limited expertise; high costs; integration barriers; data/privacy concerns |
| 2 | Alorfi & Alsaadi (2025) [34] | Operations/supply chain | Manufacturing/Saudi Arabia | Interpretive Structural Modelling (ISM) | ISM (barrier hierarchy) | | Decision support, operational improvements | Security and privacy; limited data infrastructure; skills/data literacy; cost/resource constraints; regulatory/compliance; lack of standards; low awareness; interoperability; data ownership/access; analytics complexity; lack of use cases |
| 3 | El-Haddadeh et al. (2025) [33] | SC value-chain processes | Multi-sector/UK | Survey (n = 369) | RBV | Process-level value drivers; resource orchestration | Adoption ‘success’ via value-chain improvements; customer-centric value | SME constraints (finance, infrastructure, knowledge); alignment to organization size |
| 4 | Huong, Azmat & Hadeed (2025) [29] | Sustainable supply chains | Manufacturing | Systematic literature review (64 studies) | TOE + Triple Bottom Line | TOE-organized enablers: data governance and quality; organizational readiness; external/partner pressures | Economic, environmental and social (TBL) benefits; transparency and integration | Integration with legacy systems; skill shortages; cultural resistance; cost |
| 5 | Vafaei-Zadeh et al. (2025) [30] | Supply chains | Manufacturing/Malaysia | Cross-sectional survey | TOE + Dynamic Capabilities + RBV | IT/data infrastructure; leadership commitment; digital maturity; integration readiness | Agility; visibility; sustainability-related performance | Cost; cultural resistance; vendor dependence; integration challenges |
| 6 | Al-shanableh et al. (2024) [37] | Supply chains | SMEs/Jordan | Survey (n = 388) | TOE + DOI + TAM | Relative advantage; compatibility; top management support; perceived usefulness; security | Improved decision-making; competitiveness | Complexity; financial readiness; resource constraints |
| 7 | Anwar et al. (2024) [31] | Supply chains | SMEs (recycling sector)/China | Cross-sectional survey (n = 317) | TOE + RBV + DOI | Green economic incentives; green SC information integration; green process innovation; organizational readiness | Improved decision quality; higher environmental performance | Complexity; resource limitations; data/security concerns |
| 8 | Babalghaith & Aljarallah (2024) [2] | SC operations, performance | SMEs/Saudi Arabia | Survey (n = 233) | TOE + RBV + DOI | Top management support; organizational readiness; data-driven culture; compatibility | Financial, market and process performance improvements | Complexity; uncertainty; need for data-driven culture |
| 9 | Hamed et al. (2024) [38] | Supply chain operations | M&L Enterprises/Saudi Arabia | Survey of 402 practitioners | Organizational factors model | Top management support, IT expertise, organizational resources | Narrative positioning that BDA improves SC operations | Resistance to change tested as moderator; general cultural/data concerns discussed |
| 10 | Raj, Kumar & Jeyaraj (2024) [27] | SC capabilities and performance | Cross-industry/30 countries | Meta-analysis (133 studies) | Synthesizes TOE antecedents; moderator analysis | Top management support; data quality; infrastructure; skills; environmental pressure | SC integration, collaboration, CRM, innovation, operational and overall performance | Contextual heterogeneity by industry and economic region |
| 11 | Waqar & Paracha (2024) [39] | Decision-making; digital transformation | Telecom, IT, agriculture, e-commerce/Pakistan | Mixed methods: 156 surveys + 10 interviews | TOE + Diffusion of Innovation (DOI) | Perceived benefits; top management support | Improved decision quality, efficiency, competitiveness | Culture and skills gaps; policy/regulatory and financial constraints; integration and data quality |

| Analytics Type | Purpose | BDA Models |
|---|---|---|
| Descriptive Analytics | Recognize issues by analyzing and presenting the current state to answer “What is happening now?” | Statistics, Characterization, Discrimination, Visualization |
| Predictive Analytics | Project and forecast future states based on historical and current data | Clustering, Classification, Forecasting, Association, Regression, Semantic Analysis |
| Prescriptive Analytics | Determine and evaluate alternative courses of action using algorithms and optimization/simulation | Simulation, Optimization |
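The three analytics types in the table can be made concrete with a small worked example. The minimal Python sketch below applies each type to a hypothetical weekly demand series: the descriptive step summarizes the current state, the predictive step fits an ordinary least-squares linear trend to forecast next week, and the prescriptive step picks an order quantity by evaluating average holding and shortage costs over a few demand scenarios. The demand figures, cost rates, the ±10% scenario band, and the function names (`describe`, `forecast_next`, `best_order_quantity`) are illustrative assumptions, not data or methods from this study.

```python
from statistics import mean, stdev

# Hypothetical weekly demand in units (illustrative only, not study data).
demand = [120, 135, 128, 150, 162, 158, 171, 180]


def describe(series):
    """Descriptive analytics: summarize what is happening now."""
    return {"mean": mean(series), "stdev": stdev(series), "latest": series[-1]}


def forecast_next(series):
    """Predictive analytics: least-squares linear trend, forecast one step ahead."""
    n = len(series)
    xs = range(n)
    x_bar, y_bar = mean(xs), mean(series)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, series)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    return intercept + slope * n


def best_order_quantity(scenarios, candidates, holding_cost=2.0, shortage_cost=8.0):
    """Prescriptive analytics: choose the candidate order quantity with the
    lowest average holding-plus-shortage cost over equally likely scenarios.
    The cost rates are assumed values for illustration."""

    def avg_cost(q):
        return mean(
            holding_cost * max(q - d, 0) + shortage_cost * max(d - q, 0)
            for d in scenarios
        )

    return min(candidates, key=avg_cost)


summary = describe(demand)
point = forecast_next(demand)
# Assumed uncertainty band: the point forecast plus or minus 10%.
scenarios = [0.9 * point, point, 1.1 * point]
order = best_order_quantity(scenarios, candidates=range(150, 221, 5))
print(summary, round(point, 1), order)
```

The linear trend and the three-scenario cost search are deliberately simple stand-ins; the predictive step could use any of the models listed in the table (e.g., regression or classification), and a production prescriptive step would typically rely on a formal optimization or simulation model.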

| Sr. No. | Position in Firm | Gender | Experience (Years) | Firm Size | Firm Type |
|---|---|---|---|---|---|
| 1 | Operation Manager | Man | 27 | >1000 | Electronics Manufacturing |
| 2 | Supply Chain Specialist | Woman | 20 | 251–500 | Food Manufacturing |
| 3 | Supply Chain Manager | Woman | 10 | 251–500 | Industrial Equipment Manufacturing |
| 4 | Production Manager | Man | 17 | 500–1000 | Precision Equipment Manufacturer |
| 5 | Supply Chain Manager | Man | 19 | >1000 | Plastic Goods Manufacturing |
| 6 | Supply Chain Manager | Man | 11 | 101–250 | Chemical Manufacturing Company |
| 7 | Production Manager | Man | 15 | >1000 | Electrical Products |
| 8 | Operation Manager | Man | 12 | 101–250 | Automotive Parts Manufacturer |
| 9 | Supply Chain Manager | Woman | 25 | 251–500 | Precision Engineering Firm |
| 10 | Supply Chain Manager | Man | 21 | 251–500 | FMCG Firm |
| 11 | Operation Manager | Woman | 22 | >1000 | Electrical Products |
| 12 | Global SC Expert | Woman | 19 | >1000 | Automotive Manufacturing |
| 13 | Operation Manager | Man | 16 | 500–1000 | Food Manufacturing |
| 14 | Supply Chain Manager | Man | 30 | >1000 | Automotive Components Manufacturer |
| 15 | Managing Director | Man | 28 | 101–250 | Furniture Manufacturing |
| 16 | Production Manager | Man | 13 | 500–1000 | Food Manufacturing |

| Themes | Sub-Themes | Supporting Quotes | Literature Alignment |
|---|---|---|---|
| Organizational Resources | Technological Infrastructure | “We had to modernize our entire IT backbone to even begin collecting data”. | [121] |
| | Skilled Human Capital | “There’s a gap in talent who understand both data and supply chain processes”. | [19] |
| | Data-Driven Culture | “Getting people to adopt data culture is harder than implementing the tools”. | [6] |
| | Strategic Investment | “Without financial commitment, we can’t scale analytics”. | [5] |
| | Clear Objectives | “We had to define what success looks like before starting analytics projects”. | [6] |
| | Top Management Support | “Management’s support gave us the greenlight and confidence to proceed”. | [7] |
| | Data Governance and Quality | “Our analytics was useless without clean, well-structured data”. | [7] |
| | Collaboration and Information-Sharing | “We share and receive insights regularly with key partners”. | [15] |
| | Analytical Capability and Maturity | “Analytics maturity took us years of learning and platform building”. | [6] |
| Benefits of BDA Adoption | Internal End-to-End SC Visibility | “We can now monitor our supply chain in real time across regions”. | [47,84] |
| | Predictive Risk Sensing | “We simulated delays and adjusted shipments before they became a problem”. | [84,86] |
| | Operational Efficiency and Cost Optimization | “Analytics showed us where we were overstocking and wasting costs”. | [55] |
| | Integration and Collaboration | “Better data meant tighter collaboration with suppliers”. | [15,66] |
| | Data-Driven Innovation and Agility | “We tested new models faster because analytics helped us see gaps”. | [86,122] |
| | Competitive Advantage | “Analytics gave us an edge when negotiating with clients”. | [5,86] |
| | Traceability and Compliance | “We track every unit from origin to delivery with better accuracy now”. | [7,122] |
| | Scenario Planning and Simulation | “We model scenarios and create contingency plans faster”. | [47,86] |
| | Advanced Supplier Risk Monitoring | “Analytics warned us early about vendor unreliability”. | [15,84] |
| | Dynamic Capacity Allocation | “We rebalance production on-the-fly based on real-time analytics”. | [86] |
| Barriers/Challenges | Data Integration and Infrastructure Readiness Issues | “Our legacy systems just couldn’t connect and integrate analytics”. | [123] |
| | Skill Gaps and Human Capital Deficiency | “We had good tools but lacked people who knew how to use them”. | [6,19] |
| | Resistance to Change and Cultural Inertia | “People were afraid analytics would replace them”. | [6] |
| | Poor Data Quality and Governance | “Data was siloed, messy, and lacked consistency”. | [7] |
| | Cost and Resource Constraints | “We couldn’t justify BDA until ROI was proven”. | [5] |
| | Lack of Inter-Departmental Collaboration | “Departments were not aligned in their data goals”. | [15] |
| | Lack of Awareness and Interest | “Many thought analytics was only IT’s job”. | [6] |
| | Privacy and Security | “Cybersecurity was a big concern with open data systems”. | [124] |

| Mechanism (M) | Primary Gate(s) | Observable Timer Outcome |
|---|---|---|
| M1 Comparability (shared IDs/definitions/thresholds) | G1–G2 (plumbing, descriptive) | TTD↓ (fewer reconciliations before acting) |
| M2 Explainability (lineage, drill-through, transformation trails) | G2 | TtR↓ (faster root-cause isolation, targeted holds) |
| M3 Authorization (named owners, SLAs, role-based rights) | G3 (predictive alerting) | TtD↓ (alerts → authorized actions) |
| M4 Fidelity (stewardship SLAs, exception queues, recalibration) | G2–G3 | Sustains/improves TTD/TtD/TtR over time |
| M5 Executability (native system hooks, API connectors) | G4 (prescriptive) | TtRcf↓ (changes executed without re-keying) |
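The timer outcomes in the table can be operationalized as elapsed intervals between timestamped events in a disruption log. The Python sketch below assumes hypothetical event names and timer boundaries: Time-to-Detect runs from disruption onset to the first alert, Time-to-Decide from that alert to an authorized action, Time-to-Reconfigure from authorization to executed plan changes, and Time-to-Recover from onset to restored service. These boundaries, the event names, the helper `make_incident`, and the incident data are assumptions for illustration, not the study's operational definitions; comparing median timers across incidents before and after adoption shows one way a "↓" outcome could be read.

```python
from datetime import datetime, timedelta
from statistics import median

# Hypothetical timer boundaries as (start event, end event). The event names
# and boundaries are illustrative assumptions, not the study's definitions.
TIMERS = {
    "time_to_detect": ("disruption_onset", "alert_raised"),
    "time_to_decide": ("alert_raised", "action_authorized"),
    "time_to_reconfigure": ("action_authorized", "plan_executed"),
    "time_to_recover": ("disruption_onset", "service_restored"),
}


def make_incident(onset, detect, decide, reconfigure, recover):
    """Build a hypothetical incident log from durations in hours.

    detect, decide and reconfigure are sequential; recover is measured from onset.
    """
    t0 = datetime.fromisoformat(onset)
    return {
        "disruption_onset": t0,
        "alert_raised": t0 + timedelta(hours=detect),
        "action_authorized": t0 + timedelta(hours=detect + decide),
        "plan_executed": t0 + timedelta(hours=detect + decide + reconfigure),
        "service_restored": t0 + timedelta(hours=recover),
    }


def timers_for(incident):
    """Return every timer for one incident, in hours."""
    return {
        name: (incident[end] - incident[start]) / timedelta(hours=1)
        for name, (start, end) in TIMERS.items()
    }


def median_timers(incidents):
    """Median of each timer across a set of incidents."""
    per_incident = [timers_for(i) for i in incidents]
    return {name: median(t[name] for t in per_incident) for name in TIMERS}


# Hypothetical incidents before and after BDA adoption (not study data).
before = [
    make_incident("2024-01-08T06:00", 30, 24, 48, 160),
    make_incident("2024-03-11T09:00", 36, 20, 40, 150),
]
after = [
    make_incident("2024-09-02T06:00", 4, 6, 12, 60),
    make_incident("2024-11-04T09:00", 2, 4, 10, 50),
]

pre, post = median_timers(before), median_timers(after)
for name in TIMERS:
    print(f"{name}: {pre[name]:.1f} h -> {post[name]:.1f} h")
```

Medians are used rather than means because, in a small incident sample, a single prolonged disruption can dominate the average.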

| Barrier (prevalence) | G1 | G2 | G3 | G4 | Sensing | Seizing | Reconfig. |
|---|---|---|---|---|---|---|---|
| Legacy fragmentation/poor integration (n = 15) | ✓ | ✓ | |||||
| Ambiguous ownership/missing lineage (n = 13) | ✓ | ✓ | ✓ | ||||
| Department silos/low analytics literacy (n = 15) | ✓ | ✓ | |||||
| Skill gaps/change resistance (n = 15) | ✓ | ✓ | |||||
| Resistance to prescriptive decisioning (n = 14) | ✓ | ✓ | ✓ | ||||
| Cost and resource constraints (n = 16) | ✓ | ✓ | ✓ | ✓ | |||
| Low awareness/priority (n = 15) | ✓ | ✓ | |||||
| Privacy and security (PDPA/GDPR, IP) (n = 13) | ✓ | ✓ |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Yasmeen, G.; Anthonysamy, L.; Ojo, A.O. Resource-Governed BDA Adoption for Resilient Supply-Chain Operations: Qualitative Evidence from Malaysian Manufacturing Industry. Sustainability 2025, 17, 9620. https://doi.org/10.3390/su17219620

Yasmeen G, Anthonysamy L, Ojo AO. Resource-Governed BDA Adoption for Resilient Supply-Chain Operations: Qualitative Evidence from Malaysian Manufacturing Industry. Sustainability. 2025; 17(21):9620. https://doi.org/10.3390/su17219620

Chicago/Turabian Style: Yasmeen, Ghazala, Lilian Anthonysamy, and Adedapo Oluwaseyi Ojo. 2025. "Resource-Governed BDA Adoption for Resilient Supply-Chain Operations: Qualitative Evidence from Malaysian Manufacturing Industry" Sustainability 17, no. 21: 9620. https://doi.org/10.3390/su17219620

APA Style: Yasmeen, G., Anthonysamy, L., & Ojo, A. O. (2025). Resource-Governed BDA Adoption for Resilient Supply-Chain Operations: Qualitative Evidence from Malaysian Manufacturing Industry. Sustainability, 17(21), 9620. https://doi.org/10.3390/su17219620