Review on Millimeter-Wave Radar and Camera Fusion Technology

Abstract

1. Introduction

2. Materials and Methods

2.1. MMW Radar and Camera Information Fusion Technology

2.1.1. Definition of MMW Radar and Camera Information Fusion

2.1.2. MMW Radar and Camera Information Fusion Architecture

2.1.3. Layers of MMW Radar and Camera Data Fusion

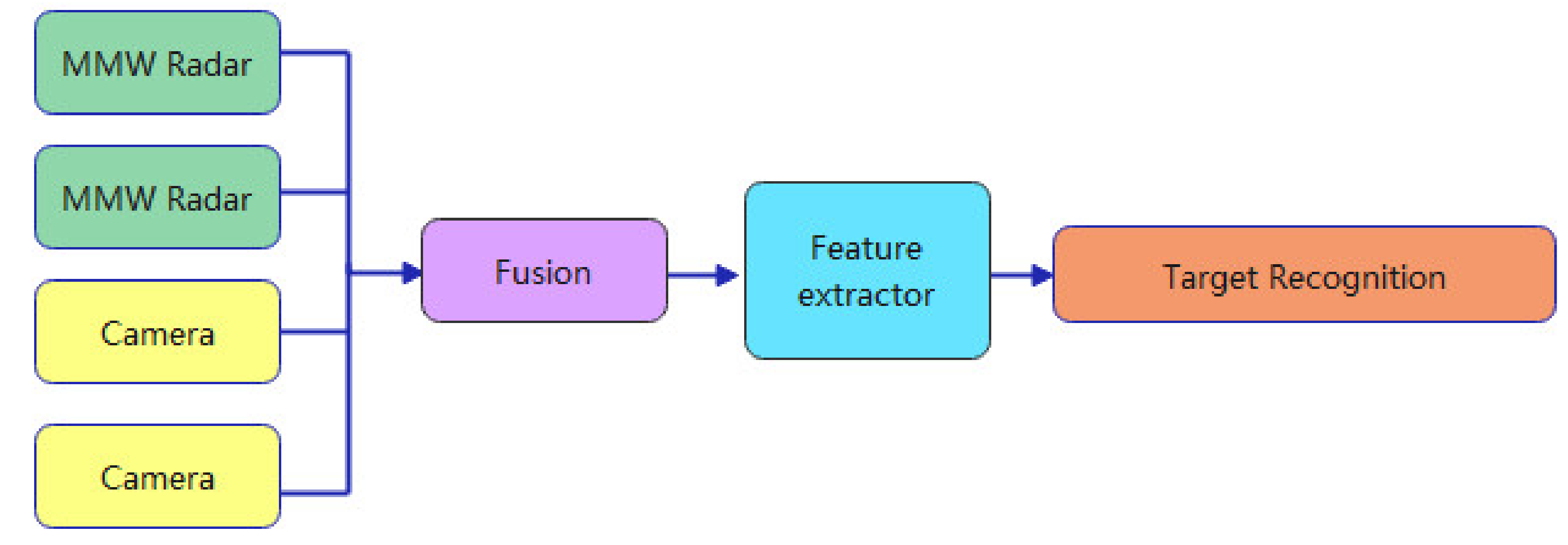

- Low-level fusion, also known as data-level fusion, combines raw data from several sources to produce new raw data.

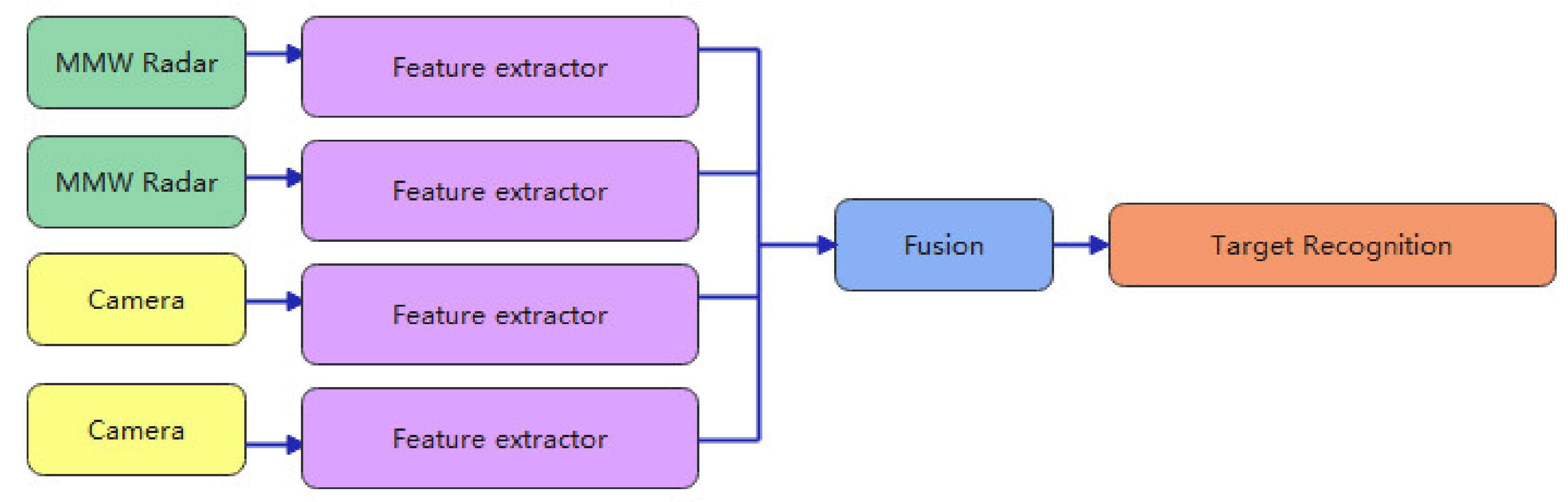

- Medium-level fusion, also known as target-level fusion, combines features such as edges, corners, lines, and texture parameters into a feature map that is then processed further.

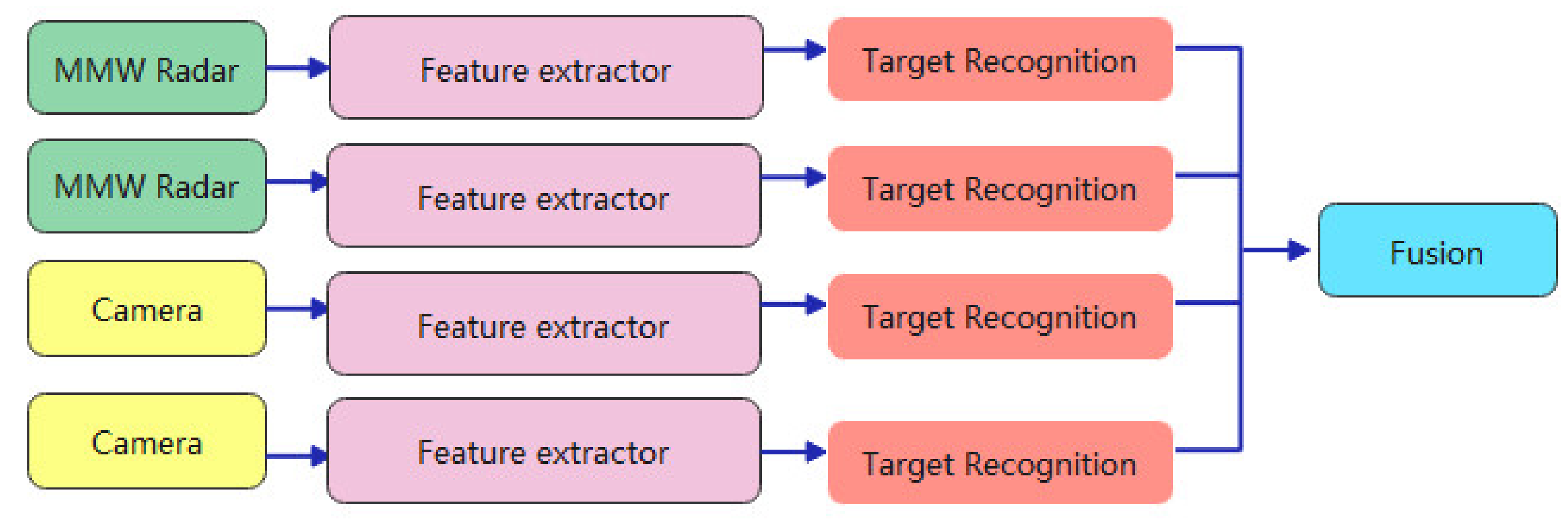

- High-level fusion, also known as decision-level fusion, has each input source produce its own decision and then combines all of the decisions.
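The three levels listed above can be contrasted with a toy sketch. The function names, the use of channel stacking and concatenation, and the weighted vote with a 0.5 threshold are all illustrative assumptions, not methods taken from the works reviewed here.

```python
import numpy as np


def data_level_fusion(radar_raw, camera_raw):
    """Data level: stack two raw arrays of equal shape into one new raw array."""
    return np.stack([radar_raw, camera_raw], axis=0)


def feature_level_fusion(radar_features, camera_features):
    """Feature level: concatenate per-sensor feature vectors into one vector."""
    return np.concatenate([radar_features, camera_features])


def decision_level_fusion(decisions, weights):
    """Decision level: weighted vote over per-sensor binary decisions.

    decisions: 1 if the sensor reports a target, 0 otherwise.
    weights:   assumed (hypothetical) trust placed in each sensor.
    """
    decisions = np.asarray(decisions, dtype=float)
    weights = np.asarray(weights, dtype=float)
    score = float(np.dot(weights, decisions) / weights.sum())
    return score >= 0.5
```

For example, `decision_level_fusion([1, 0], [0.7, 0.3])` accepts the target because the more trusted sensor reported it, while swapping the weights rejects it.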

Data-Level Fusion

Target-Level Fusion

Decision-Level Fusion

2.2. MMW Radar and Camera Data Fusion Process

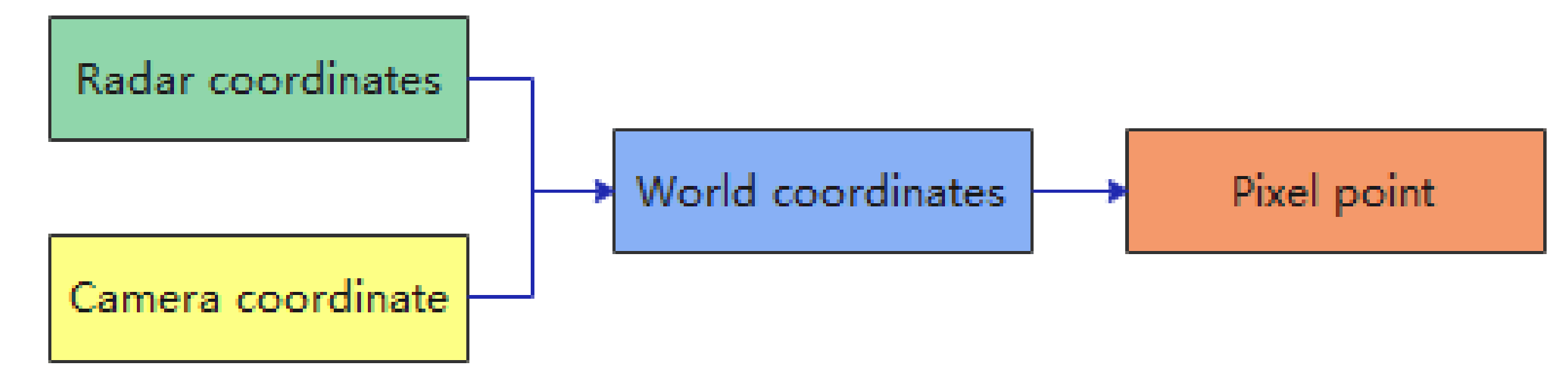

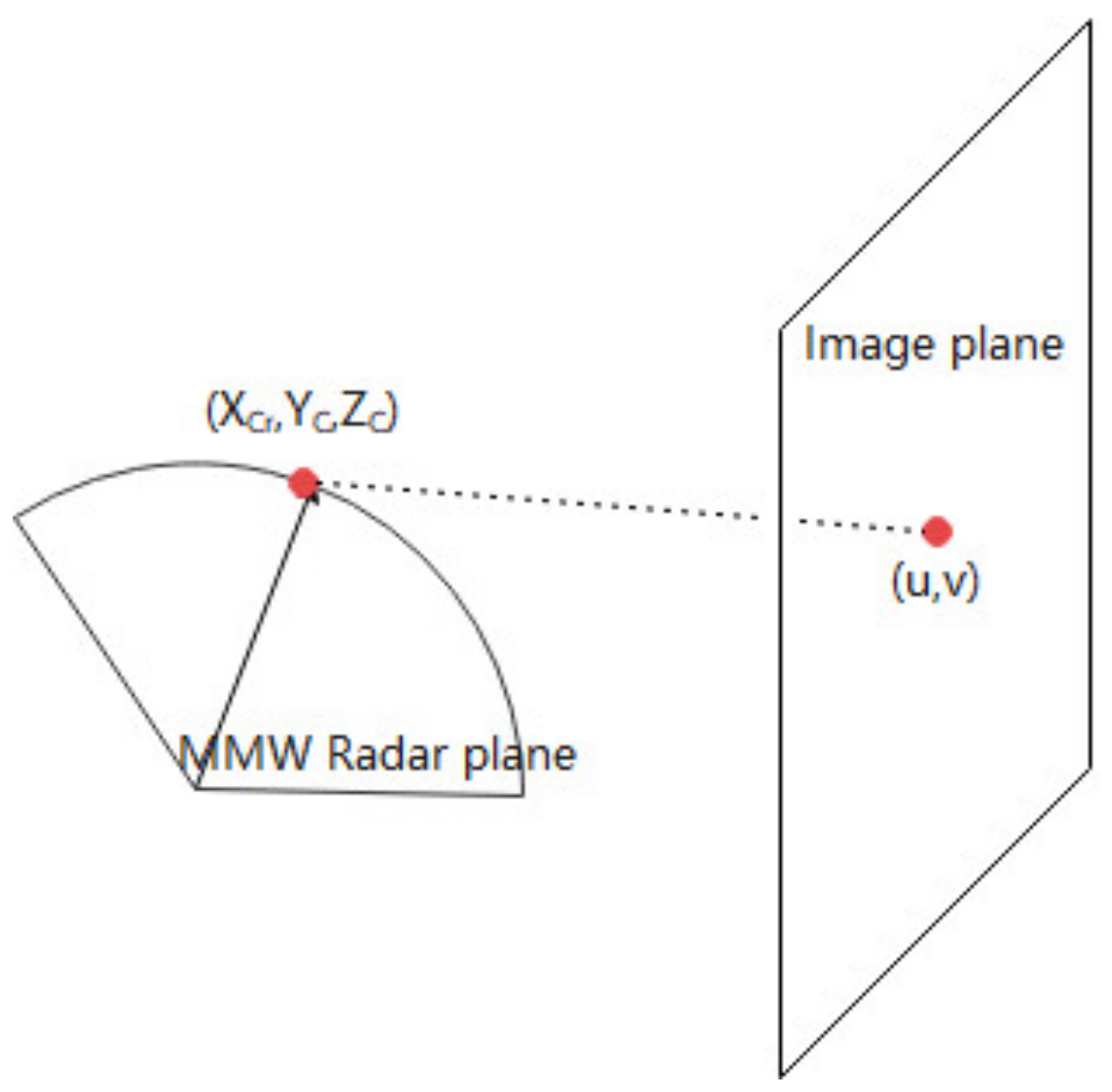

2.2.1. Spatial Fusion of MMW Radar and Vision Sensors

MMW Radar Coordinate System to Camera Coordinate System Conversion

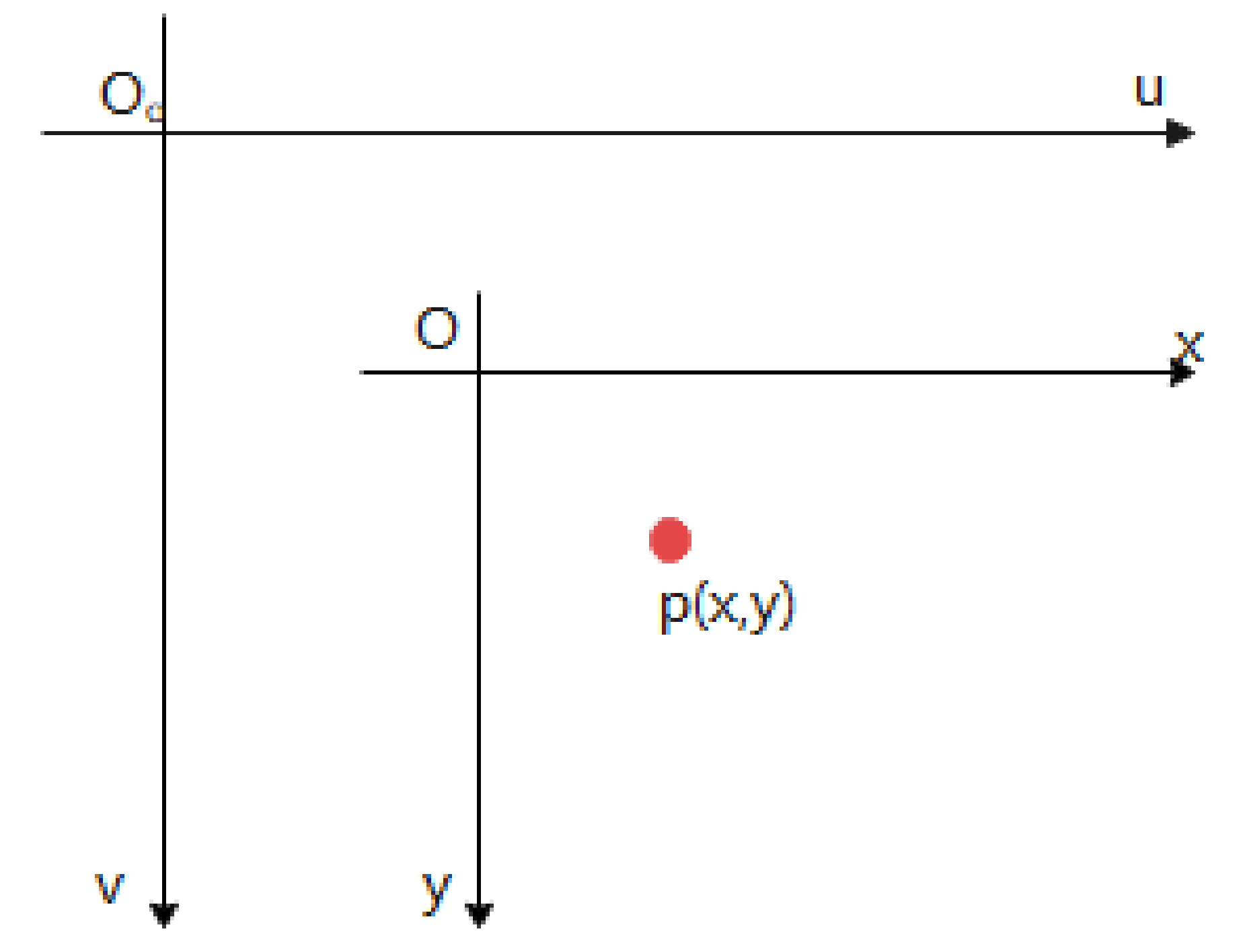

Conversion of Camera and Image Coordinate System

Conversion of Image and Pixel Coordinate Systems

2.2.2. Camera Calibration

Traditional Camera Calibration

- Direct linear calibration method

- RAC two-step method

- Zhengyou Zhang plane calibration

Camera Self-Calibration

Calibration Based on Active Vision

2.2.3. Combined MMW Radar and Camera Calibration

Traditional Joint Calibrations

- Geometric projection

- Four-point calibration method

- The detection range of radar is generally larger than 100 m, so more points are needed to solve the mapping function;

- Any significant target in the camera image occupies an area rather than a single point, so choosing one point within that area introduces an error, and more point pairs are needed to reduce it;

- If the devices are moved, they must be recalibrated.
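The need for more than four point pairs can be illustrated with a least-squares fit. The sketch below assumes, purely for illustration, that the mapping from radar ground-plane coordinates to pixel coordinates is a planar homography estimated by the direct linear transformation; `fit_homography` and `project` are hypothetical names, and the source does not state which mapping function is used.

```python
import numpy as np


def fit_homography(radar_xy, pixel_uv):
    """Least-squares homography H such that pixel ~ H @ [x, y, 1].

    Needs at least four matched pairs; extra pairs average out the error
    made when picking a single point inside a target's image area.
    """
    radar_xy = np.asarray(radar_xy, dtype=float)
    pixel_uv = np.asarray(pixel_uv, dtype=float)
    if len(radar_xy) < 4 or len(radar_xy) != len(pixel_uv):
        raise ValueError("need at least four matched point pairs")
    rows = []
    for (x, y), (u, v) in zip(radar_xy, pixel_uv):
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y, -u])
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y, -v])
    # The right singular vector for the smallest singular value minimises |A h|.
    _, _, vt = np.linalg.svd(np.asarray(rows))
    h = vt[-1].reshape(3, 3)
    return h / h[2, 2]


def project(h, radar_xy):
    """Map radar points (x, y) to pixel coordinates (u, v) with homography h."""
    pts = np.column_stack([radar_xy, np.ones(len(radar_xy))])
    uvw = pts @ h.T
    return uvw[:, :2] / uvw[:, 2:3]
```

Because the fitted matrix depends on the relative pose of the two devices, it has to be estimated again whenever either device is moved.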

Direct Calibration

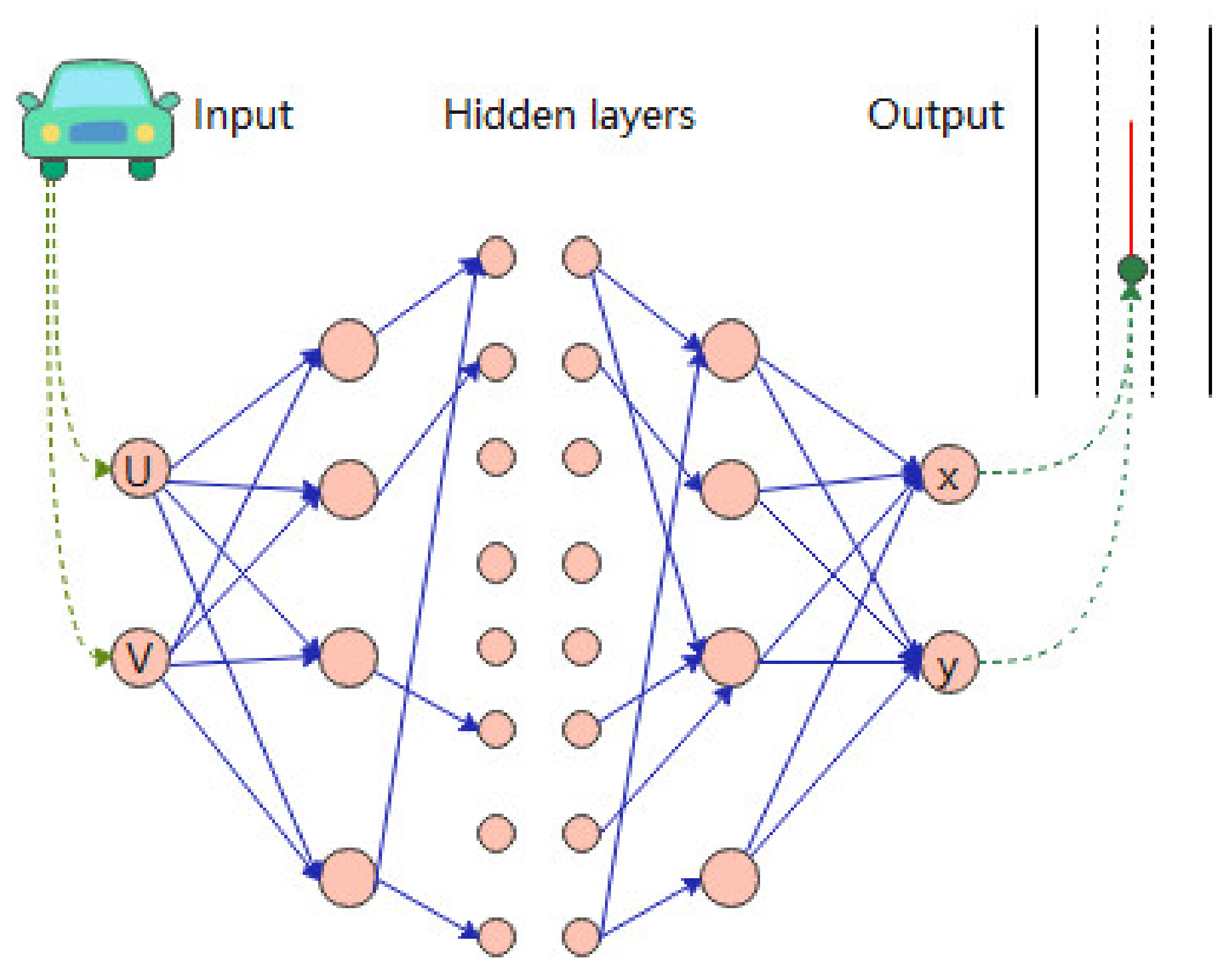

Intelligent Calibration

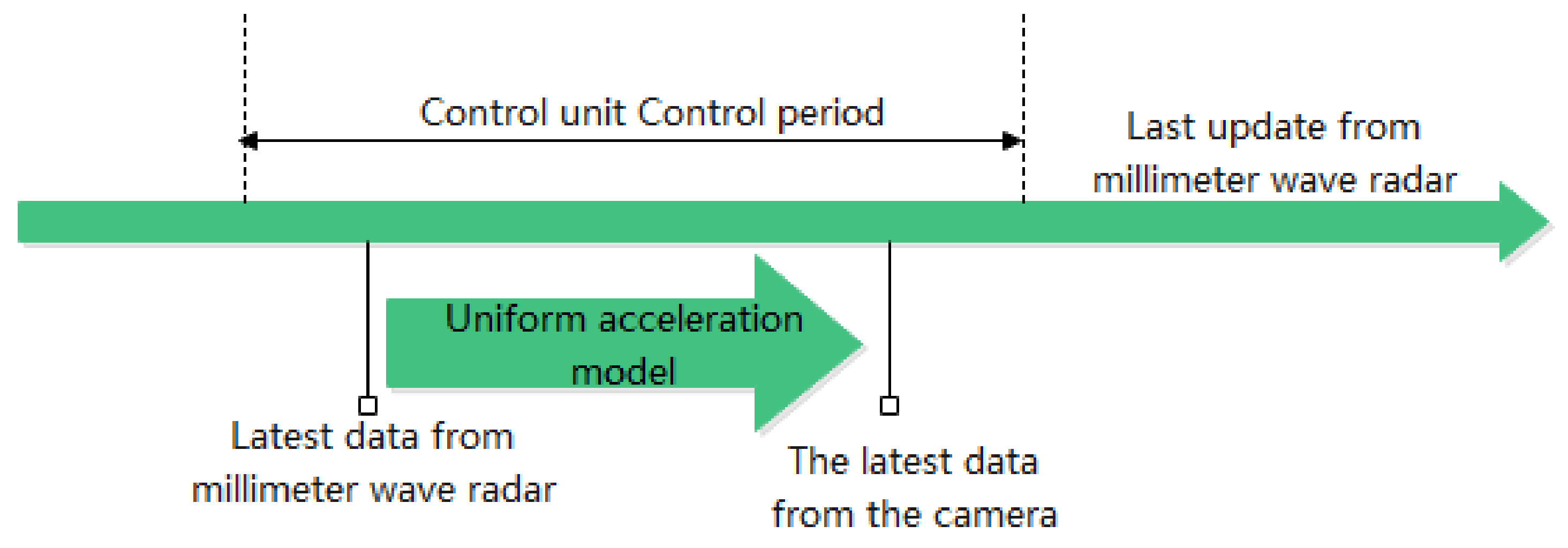

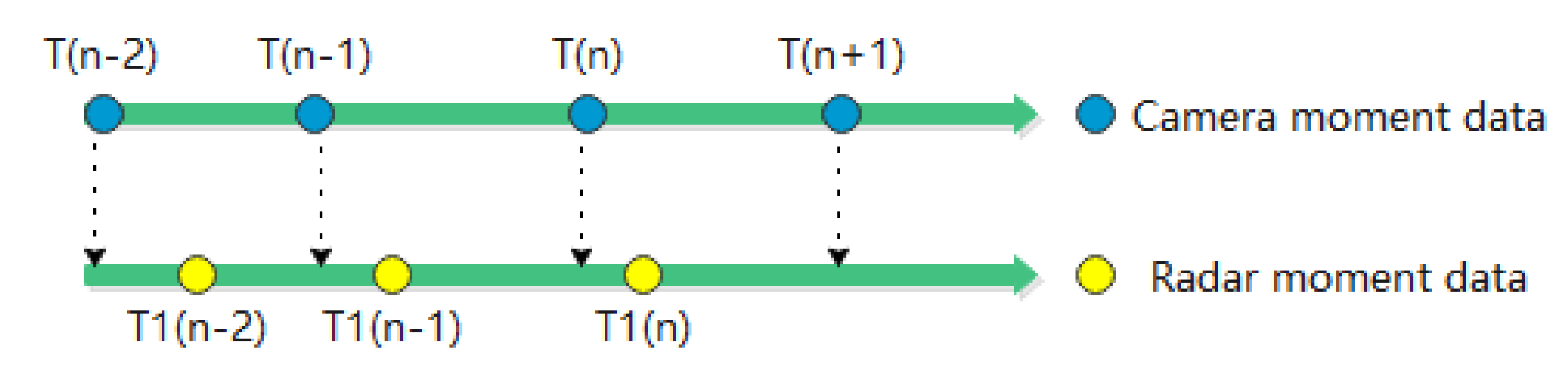

2.2.4. Temporal Fusion of MMW Radar and Vision Sensors

Hardware Synchronisation

Software Synchronisation

2.2.5. MMW Radar and Image Data Information Correlation

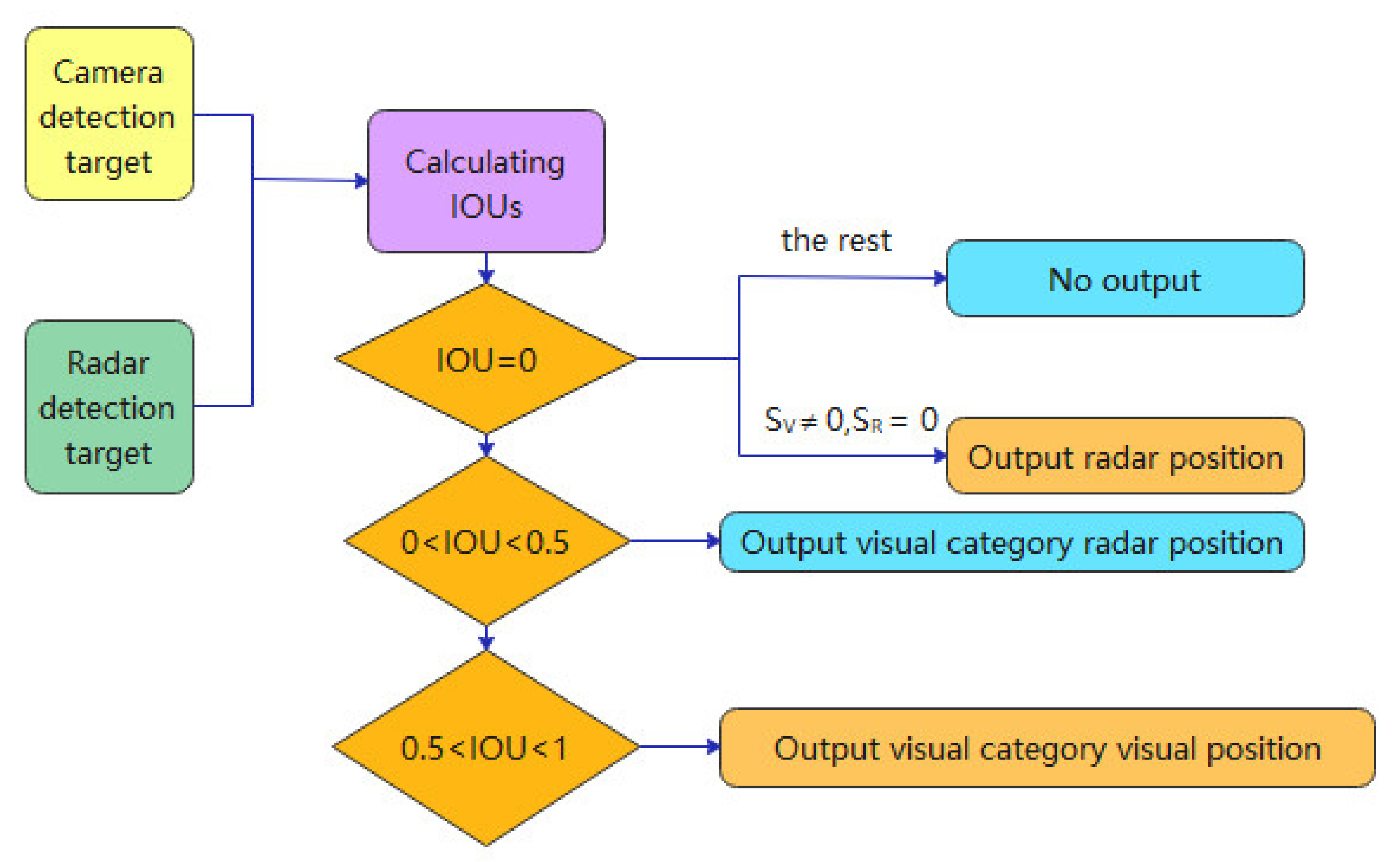

IoU Discrimination

Based on the Bipartite Graph Matching Principle

Typical Data Association Algorithms

- Joint Probabilistic Data Association (JPDA)

- Multiple Hypothesis Tracking (MHT)

2.3. MMW Radar and Camera Information Fusion Algorithm

2.3.1. Traditional Information Fusion Algorithms

Weighted Average Method

Least Squares Method

Kalman Filtering Algorithm and Its Variants

Cluster Analysis

- The output of the function lies between 0 and 1: when the two points are very close the output value is large, and when the two points are very far apart the output value is small;

- The function decreases progressively as the distance between the two points grows;

- When the two points coincide (distance is 0) the output is 1, and when the distance between the two points is infinite the output is 0;

- The function is continuous.
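One function that satisfies all four properties is the Gaussian kernel of the Euclidean distance. It is used here only as an assumed example; the source does not say which function is intended, and the name `similarity` and the parameter `sigma` are hypothetical.

```python
import math


def similarity(p, q, sigma=1.0):
    """Gaussian similarity exp(-d^2 / (2 * sigma^2)) between points p and q.

    d is the Euclidean distance. The value is 1 when the points coincide,
    falls continuously as d grows, and tends to 0 as d tends to infinity.
    """
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    d2 = sum((a - b) ** 2 for a, b in zip(p, q))
    return math.exp(-d2 / (2.0 * sigma ** 2))
```

The width `sigma` sets how quickly the similarity falls off, so it would need to be tuned to the measurement noise of the sensors being clustered.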

Fuzzy Theory

Neural Network-Based Algorithms

Bayesian Approach

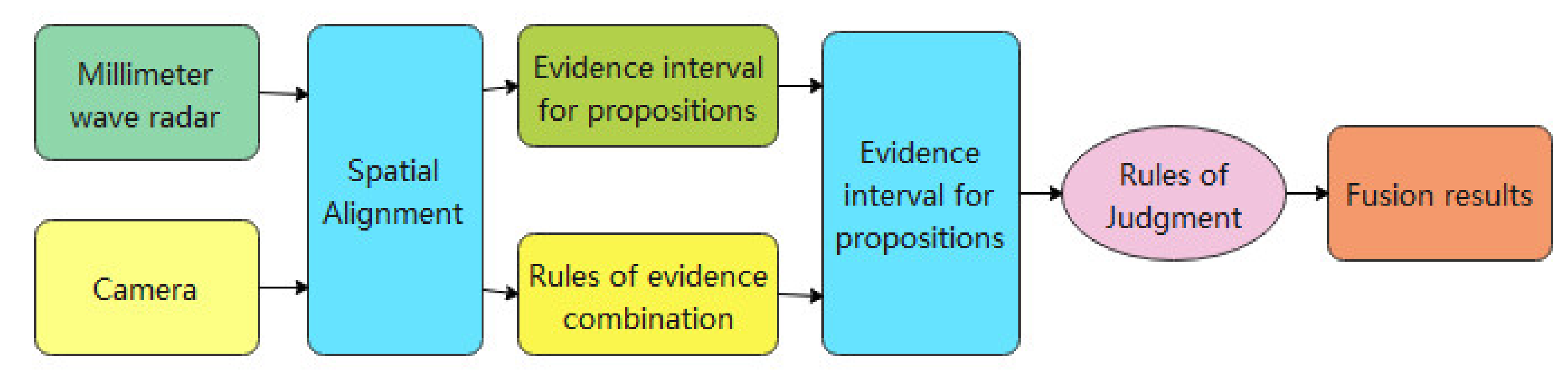

D-S Method

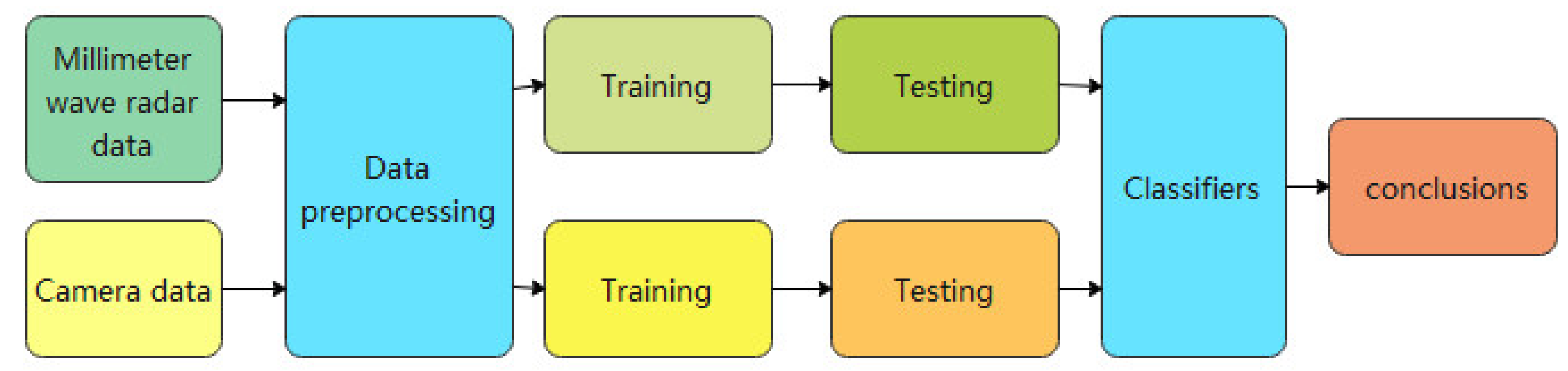

2.3.2. Deep Learning-Based Information Fusion Algorithms

CNN-Based Multi-Sensor Data Fusion Model

DLSTM-Based Data Fusion Model

3. Results

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Fusion Structure | Definition | Advantages | Disadvantages |
|---|---|---|---|
| Centralised fusion | The raw data obtained by each MMW radar and camera is sent directly to the central processor for fusion processing, which allows for real-time fusion. | Low information loss, since the original data is largely preserved; simple structure; high data processing accuracy; relatively flexible algorithms; fast fusion. | The MMW radars and cameras are independent of one another, and their data flows directly to the fusion centre without any link between them. Transmission requires a large communication bandwidth, and the fusion centre needs high computing power. The fusion centre carries a heavy computing and communication load, so the system has poor fault tolerance and lower reliability. |
| Distributed fusion | Each MMW radar and camera processes its raw data locally, makes an initial prediction, and sends its result to the fusion centre, which fuses the results to obtain the final target output. | Each MMW radar and camera can estimate global information, which reduces the transmission and computing pressure on the fusion centre. Failure of any one MMW radar or camera does not crash the system, so reliability and fault tolerance are high. With its low communication bandwidth requirements, fast computation, reliability, and continuity, this architecture has become more popular with researchers in recent years. | Each MMW radar and camera requires its own application processor, which increases size and power consumption; the central processor can access only the processed object data from the individual sensors, not the raw data. |
| Hybrid fusion | A mixture of the centralised and distributed architectures: some sensors use centralised fusion and the remaining sensors use distributed fusion. Measurements from multiple sensors for each target are combined into a hybrid measurement, which is then used to update the full data. | Retains the advantages of both the centralised and distributed architectures, allowing flexibility in meeting task requirements in different situations and a high degree of usability. | The structure is more complex, which increases the communication and computational load; it places high demands on the structural design for communication bandwidth and computing power, and the system is unstable. |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zhou, Y.; Dong, Y.; Hou, F.; Wu, J. Review on Millimeter-Wave Radar and Camera Fusion Technology. Sustainability 2022, 14, 5114. https://doi.org/10.3390/su14095114
