Power to the Learner: Towards Human-Intuitive and Integrative Recommendations with Open Educational Resources

Abstract

1. Introduction

2. Related Work

2.1. Opportunities and Challenges in Scaling Personalised Education

2.2. Scalable Content Representation

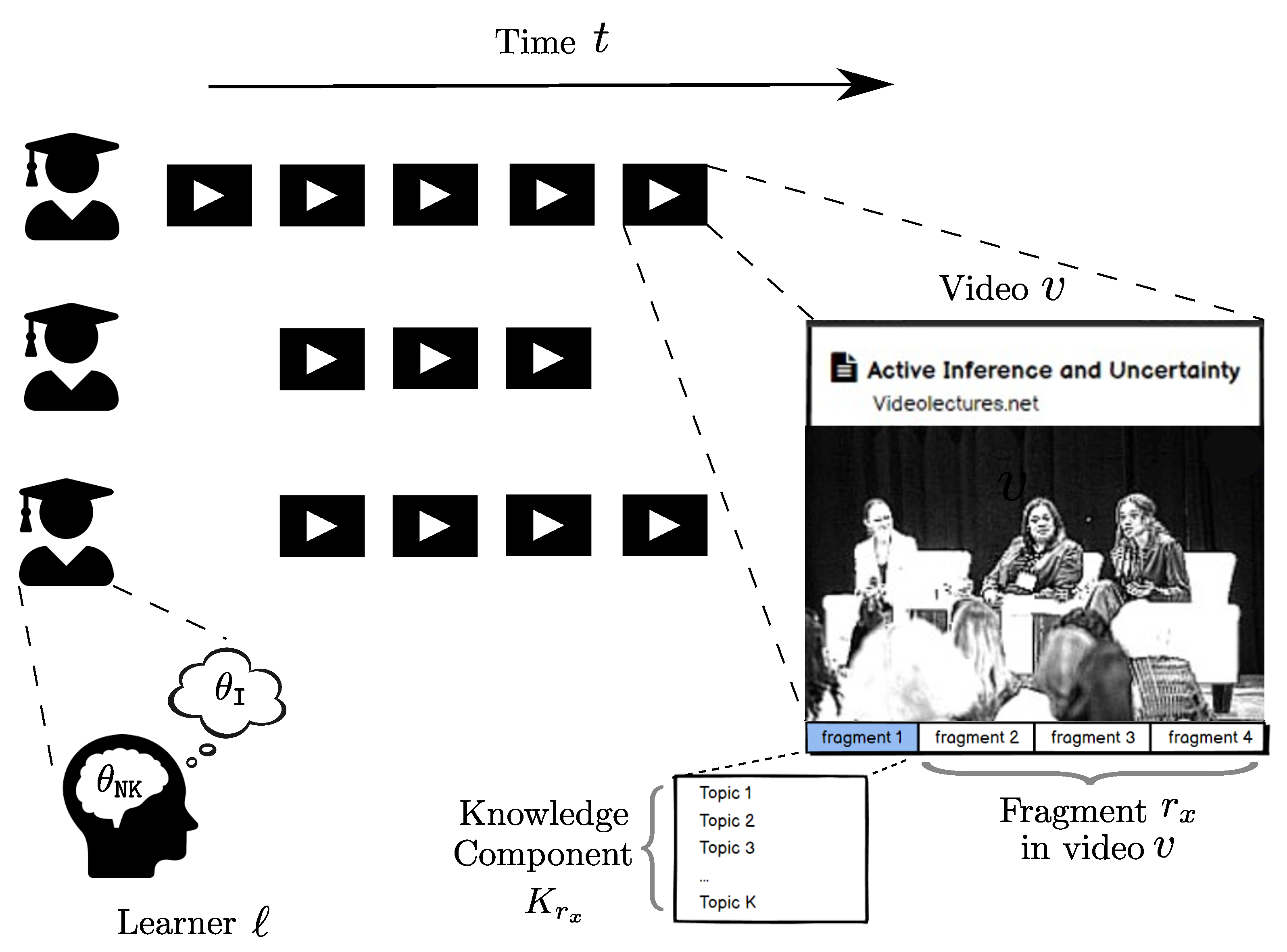

Fragments of Content

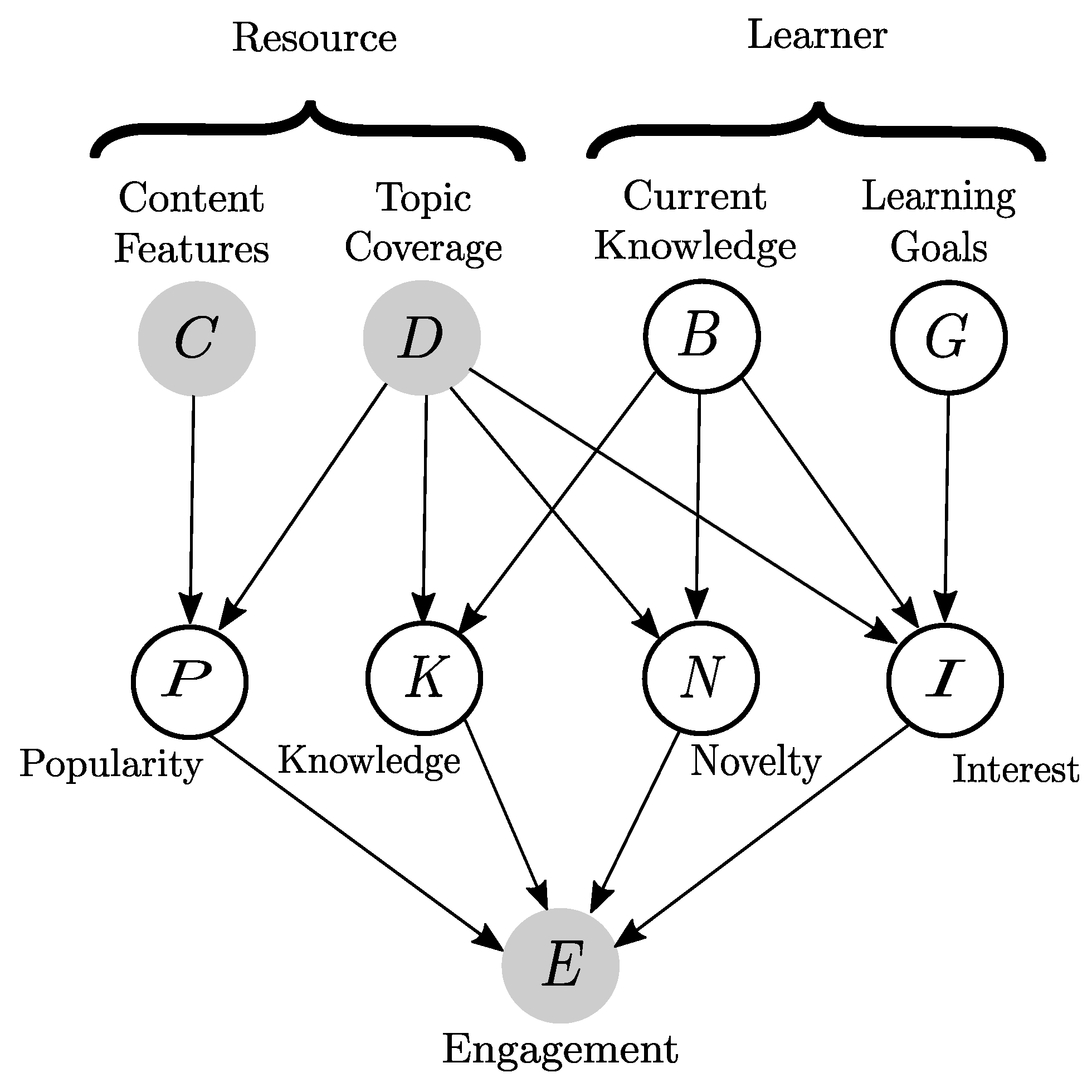

2.3. Learner Interest, Novelty, Knowledge, and Content Popularity

2.4. Combining Predictions

3. Integrative and Personalised Educational Recommendations

3.1. Problem Setting

3.2. Data

3.3. Baseline Models

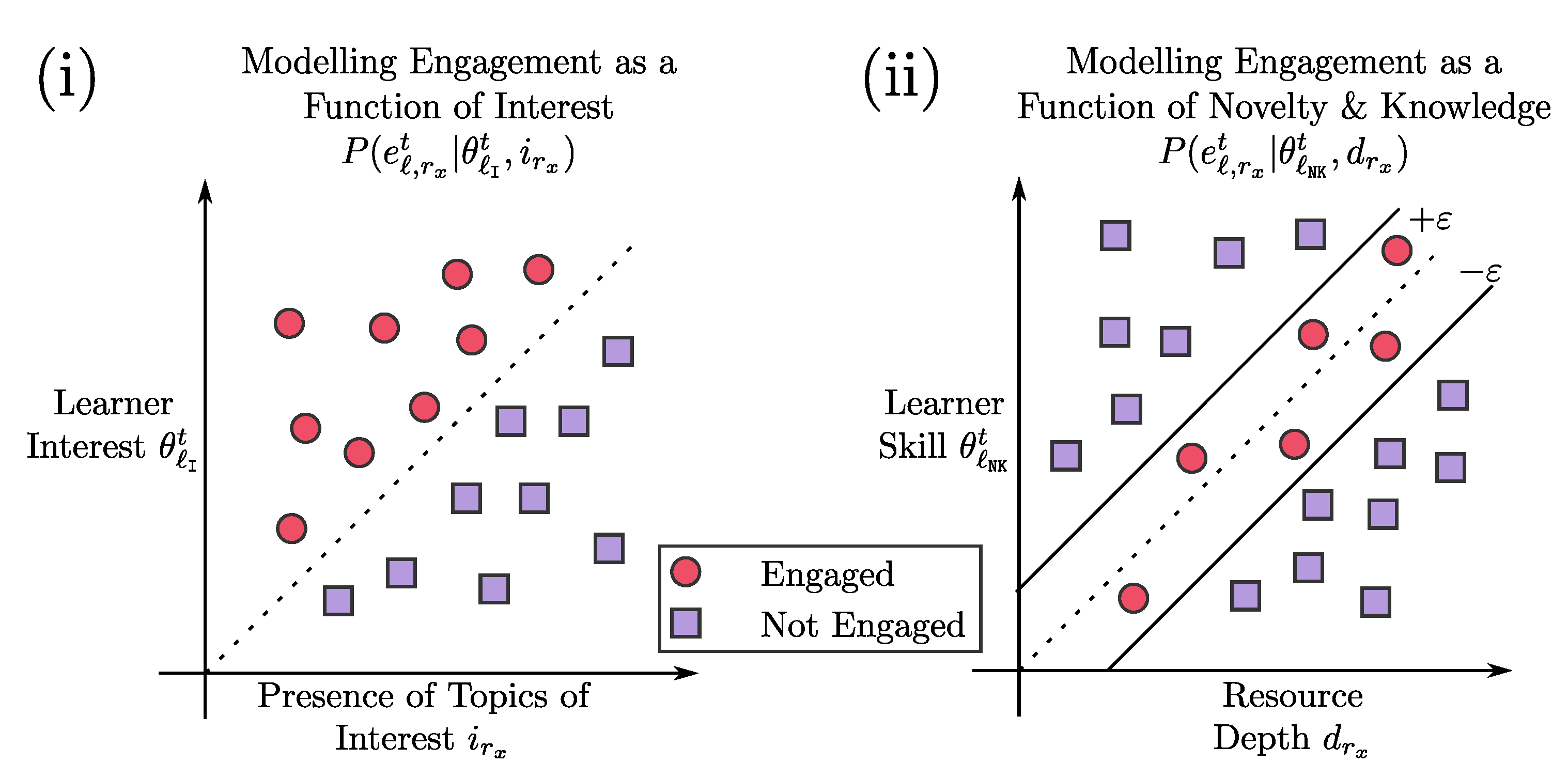

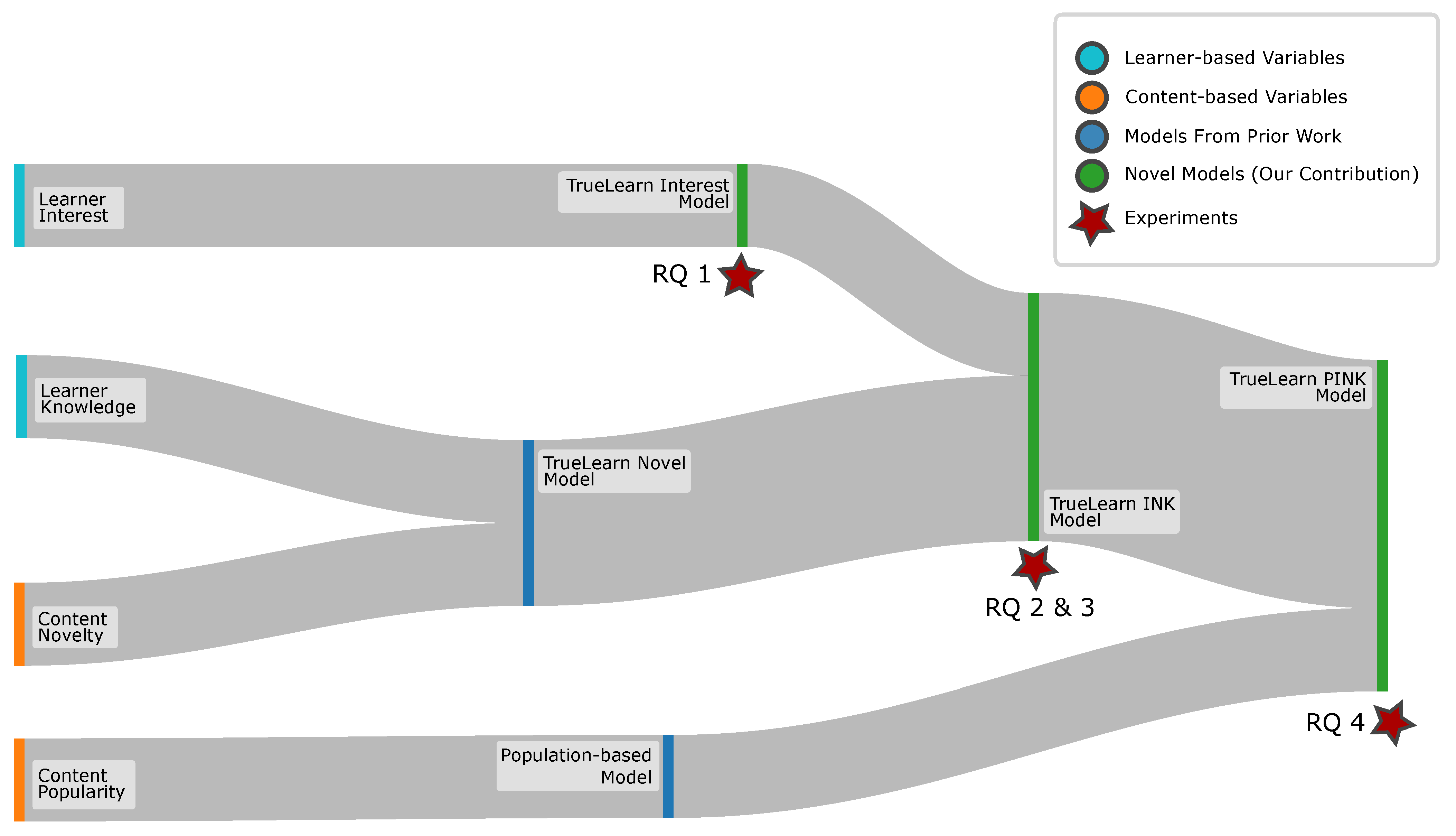

3.4. Learner Interest

3.4.1. Interest Tracing Model

3.4.2. TrueLearn Interest Model

3.5. Combining Interest, Novelty, and Knowledge: TrueLearn INK Model

- Probabilistic Combination of Outcomes: Using probability theory to combine the two predictions;

- Meta-Learner: Learning how to weigh the two predictions to obtain a more accurate final engagement prediction.
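The two combining strategies can be sketched in Python under some assumptions. Each base model (TrueLearn Interest, and TrueLearn Novel for knowledge and novelty) is assumed to output a probability of engagement, and the two outputs are treated as independent. The AND rule requires both conditions to hold, the OR rule accepts either one, and the meta-learner is a plain logistic regression fitted by gradient descent. All function names are hypothetical. The logistic learner stands in for the existing meta-learners (Logistic, Perceptron) in the results tables. It is not Meta-TrueLearn, whose details cannot be recovered from this text.

```python
import math
from typing import Sequence, Tuple


def combine_and(p_interest: float, p_novel: float) -> float:
    """P(engage) when BOTH conditions must hold (independence assumed)."""
    return p_interest * p_novel


def combine_or(p_interest: float, p_novel: float) -> float:
    """P(engage) when EITHER condition suffices (inclusion-exclusion, independence assumed)."""
    return p_interest + p_novel - p_interest * p_novel


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic_meta(
    pairs: Sequence[Tuple[float, float]],
    labels: Sequence[int],
    lr: float = 0.5,
    epochs: int = 2000,
) -> Tuple[float, float, float]:
    """Learn a weight per base prediction plus a bias via batch gradient descent on log-loss.

    Hypothetical stand-in for a generic meta-learner, not the paper's Meta-TrueLearn.
    """
    w_i = w_n = b = 0.0
    n = len(labels)
    for _ in range(epochs):
        g_i = g_n = g_b = 0.0
        for (p_i, p_n), y in zip(pairs, labels):
            err = sigmoid(w_i * p_i + w_n * p_n + b) - y  # d(log-loss)/dz
            g_i += err * p_i
            g_n += err * p_n
            g_b += err
        w_i -= lr * g_i / n
        w_n -= lr * g_n / n
        b -= lr * g_b / n
    return w_i, w_n, b


if __name__ == "__main__":
    print(combine_and(0.8, 0.5))  # engagement requires both signals
    print(combine_or(0.8, 0.5))   # engagement requires either signal
    # Toy history in which engagement follows the knowledge/novelty prediction only.
    pairs = [(0.9, 0.1), (0.2, 0.9), (0.8, 0.2), (0.1, 0.8)]
    w_i, w_n, b = fit_logistic_meta(pairs, [0, 1, 0, 1])
    print(w_i < 0 < w_n)  # the meta-learner up-weights the informative model
```

In the toy run, the meta-learner gives a positive weight to the knowledge/novelty prediction. It gives a negative weight to the interest prediction, which is anti-correlated with engagement in this toy data. The fixed rules cannot adapt like this. Because the product never exceeds either input, AND predicts fewer engagements and therefore gives lower recall. OR does the reverse.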

3.5.1. Using Probabilistic Combination with Existing Meta-Learners

3.5.2. Meta-TrueLearn

3.6. Combining Population-Based Prior (P + INK): TrueLearn PINK Model

3.6.1. TrueLearn PINK (Switching)

Algorithm 1 Hybrid Recommender TrueLearn PINK using Switching

Require: …, …

Require: … ▹ upper ceiling of …

Ensure: …

for … do

if … then ▹ … scenario

… ▹ estimate from population-based predictor

else if … then

… ▹ estimate from personalised model

end if

end for
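The switching logic of Algorithm 1 can be sketched in Python. The sketch reads the "upper ceiling" in the second Require line as a cap on how many of a learner's events the population-based predictor scores before the personalised model takes over. That reading is an assumption, and the parameter name `max_population_events` and the predictor signatures are hypothetical. The condition of the algorithm's `else if` cannot be recovered here, so the sketch uses a plain `else`. Online updates of the personalised model are also omitted.

```python
from typing import Callable, Dict, Iterable, List, Tuple

# (learner_id, resource_id) -> estimated probability of engagement
Predictor = Callable[[str, str], float]


def switching_predict(
    events: Iterable[Tuple[str, str]],
    population_model: Predictor,
    personal_model: Predictor,
    max_population_events: int = 1,
) -> List[Tuple[str, float]]:
    """Route each event to the population-based or the personalised predictor.

    max_population_events is a hypothetical stand-in for the "upper ceiling" in
    Algorithm 1: a learner's first max_population_events events go to the
    population-based model (cold start), later ones to the personalised model.
    """
    events_seen: Dict[str, int] = {}
    predictions: List[Tuple[str, float]] = []
    for learner, resource in events:
        n_seen = events_seen.get(learner, 0)
        if n_seen < max_population_events:
            # cold-start scenario: too little history for this learner
            predictions.append(("population", population_model(learner, resource)))
        else:
            # enough history: use the personalised model
            predictions.append(("personal", personal_model(learner, resource)))
        events_seen[learner] = n_seen + 1
    return predictions


if __name__ == "__main__":
    population = lambda learner, resource: 0.9  # hypothetical population-based score
    personal = lambda learner, resource: 0.2    # hypothetical personalised score
    print(switching_predict([("a", "x"), ("a", "y"), ("b", "x")], population, personal))
    # [('population', 0.9), ('personal', 0.2), ('population', 0.9)]
```

With the default ceiling of one event, only each learner's first event goes to the population-based predictor. This is the case that the "Predicting First Event" columns of the results isolate.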

3.6.2. TrueLearn PINK (Meta)

3.7. Experiments

- RQ 1: How well do the interest models perform?

- RQ 2: How well do different combining mechanisms perform with TrueLearn INK?

- RQ 3: Does combining the individual models lead to superior performance?

- RQ 4: Does combining the population-based component in early stage prediction further improve performance?

3.8. Evaluation Metrics
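The results tables report accuracy (Acc.), precision (Prec.), recall (Rec.) and F1 for binary engagement predictions. The sketch below shows the standard definitions and returns them as percentages. It computes pooled metrics only. The tables' F1 is not always the harmonic mean of the listed precision and recall: for the AND row, 2PR/(P+R) ≈ 63.8, whereas the table reports 61.68. This suggests the scores are aggregated (for example, averaged per learner) in a way this text does not specify. The function name and the percentage convention are assumptions.

```python
from typing import Dict, Sequence


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall and F1 (as percentages) for 0/1 engagement labels."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("y_true and y_pred must be non-empty and of equal length")
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = len(y_true) - tp - fp - fn  # assumes labels are strictly 0 or 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "acc": 100 * (tp + tn) / len(y_true),
        "prec": 100 * precision,
        "rec": 100 * recall,
        "f1": 100 * f1,
    }


if __name__ == "__main__":
    # An "always engage" predictor: recall is 100 and precision equals accuracy.
    print(binary_metrics([1, 0, 1, 1, 0], [1, 1, 1, 1, 1]))
```

When every event is predicted as engaging, precision equals the share of positive events, which is also the accuracy. The TrueLearn INK first-event row (Acc. = Prec. = 44.21, Rec. = 100.0) fits this pattern.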

4. Results and Discussion

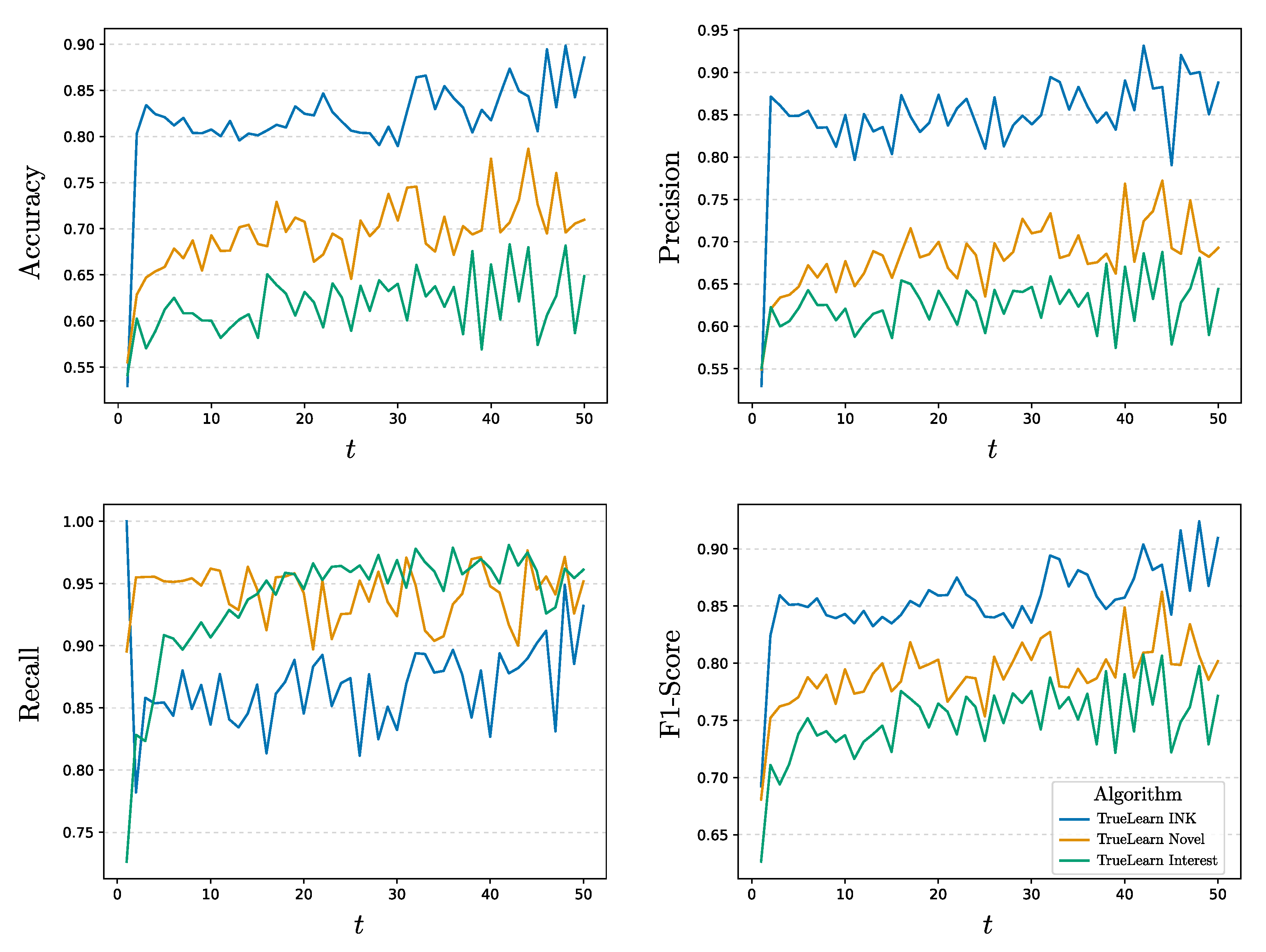

4.1. Predictive Performance of TrueLearn Interest (RQ 1)

4.1.1. On Performance of Model

4.1.2. TrueLearn Interest vs. TrueLearn Novel

4.2. Predictive Performance of TrueLearn INK (RQ 2 and 3)

Meta-Weights and Topic Sparsity

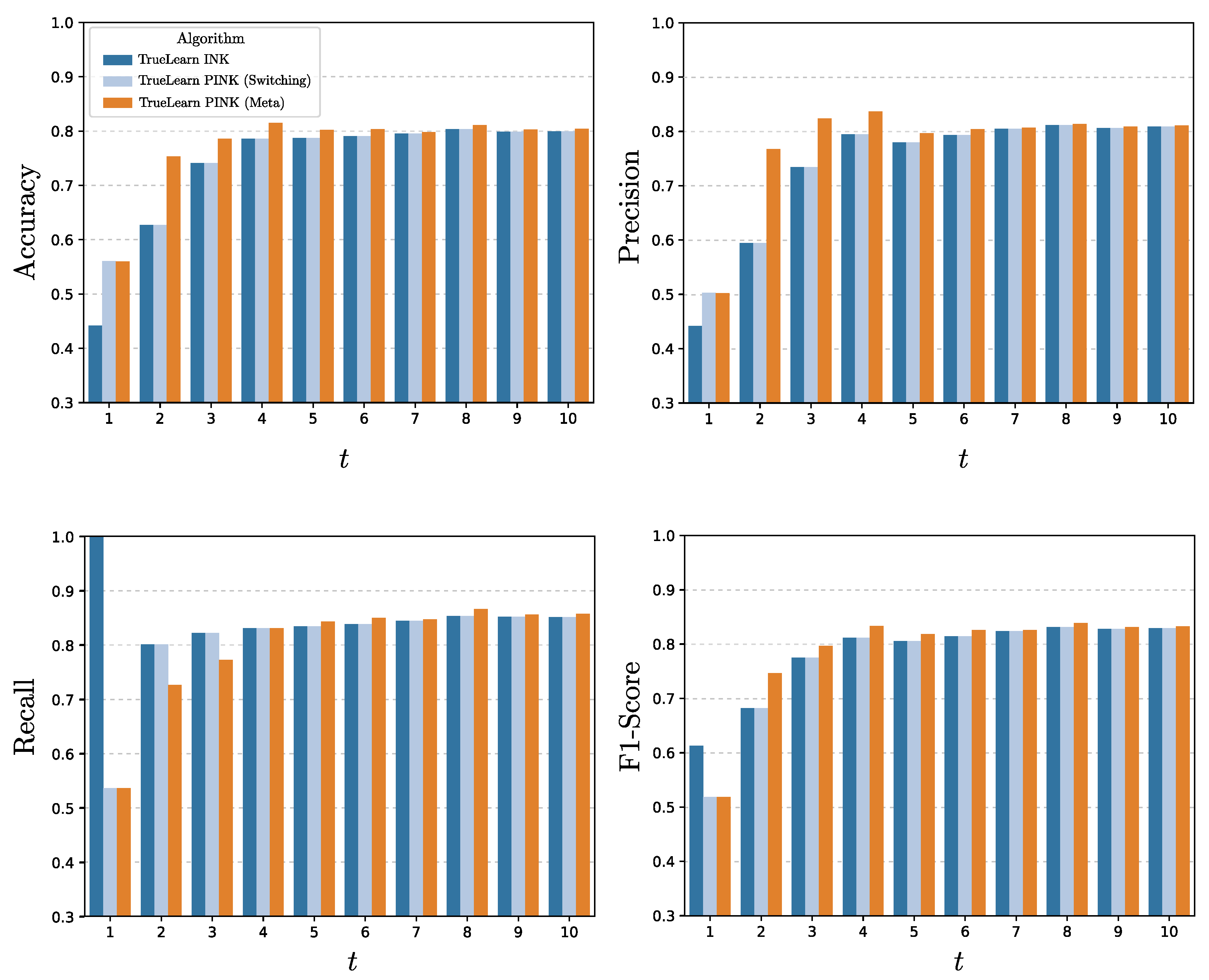

4.3. TrueLearn PINK: Addressing the Cold-Start Issue for TrueLearn INK (RQ 4)

Impact of the Population-Based Model

4.4. Opportunities and Limitations

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| SDG | Sustainable Development Goal |
| AI | Artificial Intelligence |
| EDM | Educational Data Mining |
| ITS | Intelligent Tutoring Systems |
| EdRecSys | Educational Recommendation Systems |
| OER | Open Educational Resources |
| MOOC | Massive Open Online Courses |
| TF | Term Frequency |
| TFIDF | Term Frequency-Inverse Document Frequency |
| KT | Knowledge Tracing |
| IRT | Item Response Theory |
| KC | Knowledge Components |
| LDA | Latent Dirichlet Allocation |
| INK | Interest, Novelty, Knowledge |
| PINK | Popularity, Interest, Novelty, Knowledge |
| AMD | Advanced Micro Devices |
| CPU | Central Processing Unit |
| RAM | Random Access Memory |
| GB | Gigabyte |


|  | Algorithm | Acc. | Prec. | Rec. | F1 |
|---|---|---|---|---|---|
| Baseline Models | Cosine | 55.08 | 57.86 | 58.45 | 54.06 |
|  |  | 55.46 | 57.81 | 60.36 | 55.03 |
|  |  | 64.05 | 57.85 | 72.76 | 61.22 |
|  | TF(Binary) | 55.19 | 56.71 | 66.60 | 57.38 |
|  | TF(Cosine) | 55.11 | 56.75 | 65.95 | 57.11 |
|  | TFIDF(Cosine) | 41.80 | 31.70 | 9.05 | 10.67 |
| Our New Proposals | Interest Tracing | 47.95 | 52.05 | 37.24 | 38.96 |
|  | TrueLearn Interest | 57.70 | 56.83 | 78.74 (*) | 62.50 (*) |

| Algorithm | Acc. | Prec. | Rec. | F1 |
|---|---|---|---|---|
| Best Baselines from Table 1 |  |  |  |  |
| TF(Binary) | 55.19 | 56.71 | 66.60 | 57.38 |
|  | 64.05 | 57.85 | 72.76 | 61.22 |
| TrueLearn Models in Isolation |  |  |  |  |
| TrueLearn Interest | 57.70 | 56.83 | 78.74 | 62.50 |
| TrueLearn Novel | 64.40 | 58.42 | 80.15 | 65.12 |
| TrueLearn INK Models (Our New Proposals) |  |  |  |  |
| AND | 65.33 (*) | 58.70 (*) | 69.80 | 61.68 |
| OR | 56.74 | 56.74 | 88.92 (*) | 65.63 (*) |
| Logistic | 78.58 (*) | 64.07 (*) | 68.17 | 65.86 (*) |
| Perceptron | 78.56 (*) | 64.05 (*) | 68.58 | 66.04 (*) |
| Meta-TrueLearn | 78.71 (*) | 64.19 (*) | 68.62 | 66.14 (*) |

| Algorithm | First Event Acc. | First Event Prec. | First Event Rec. | First Event F1 | All Events Acc. | All Events Prec. | All Events Rec. | All Events F1 |
|---|---|---|---|---|---|---|---|---|
| Best Performing Model from Table 2 |  |  |  |  |  |  |  |  |
| TrueLearn INK | 44.21 | 44.21 | 100.0 | 61.32 | 76.26 | 63.36 | 69.30 | 65.84 |
| TrueLearn PINK Models (Our New Proposals) |  |  |  |  |  |  |  |  |
| Switching | 56.09 (*) | 50.32 (*) | 53.58 | 51.89 | 77.08 (*) | 63.92 (*) | 66.55 | 64.95 |
| Meta | 56.02 (*) | 50.25 (*) | 53.58 | 51.85 | 78.90 (*) | 64.88 (*) | 66.06 | 65.29 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Bulathwela, S.; Pérez-Ortiz, M.; Yilmaz, E.; Shawe-Taylor, J. Power to the Learner: Towards Human-Intuitive and Integrative Recommendations with Open Educational Resources. Sustainability 2022, 14, 11682. https://doi.org/10.3390/su141811682
