The Half-Truth Effect and Its Implications for Sustainability

Abstract

1. Introduction

“The lie which is half a truth is ever the blackest of lies.”[1] (St. 8).

2. Literature Review and Gap Analysis

3. The Half-Truth Effect

4. Sustainability and Misinformation

5. Countering the Half-Truth Effect through Poison Parasite Counter

6. Materials and Methods

6.1. Overview

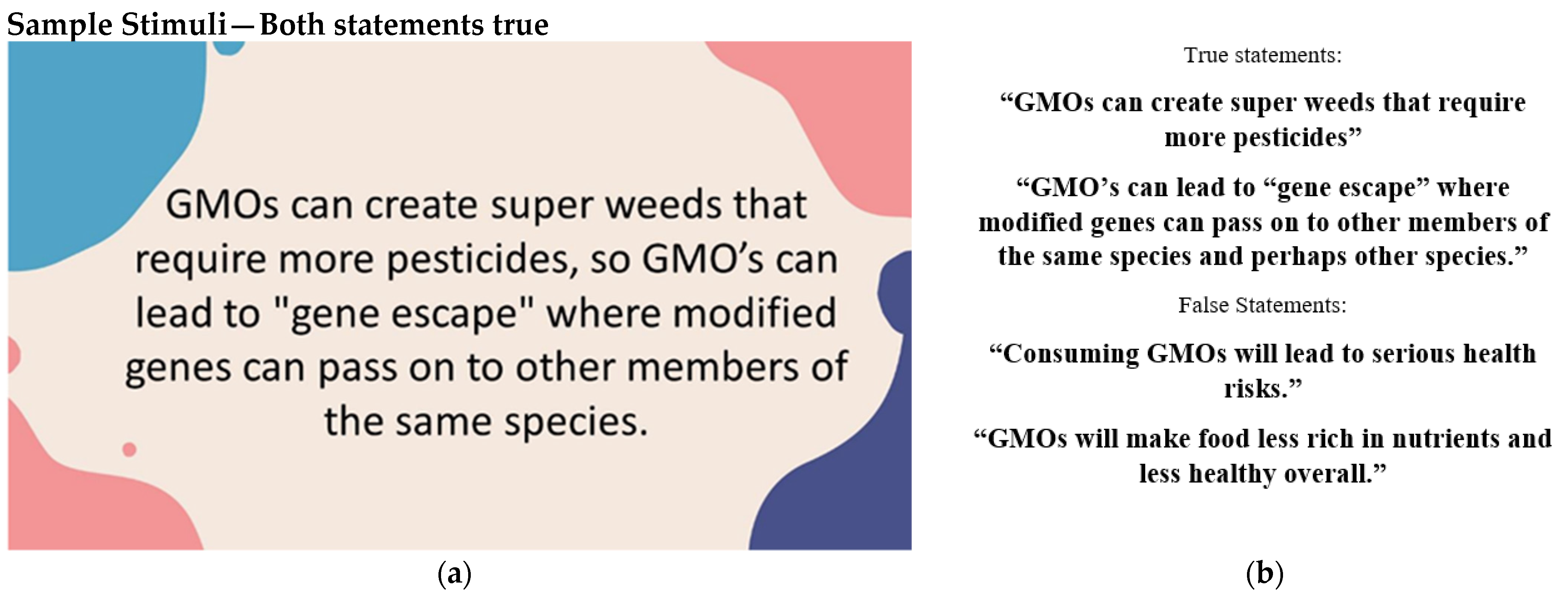

6.2. Pretest

7. Experimental Results

7.1. Overview of Experiments

7.2. Experiment 1: Participants and Design

7.3. Experiment 1: Procedure

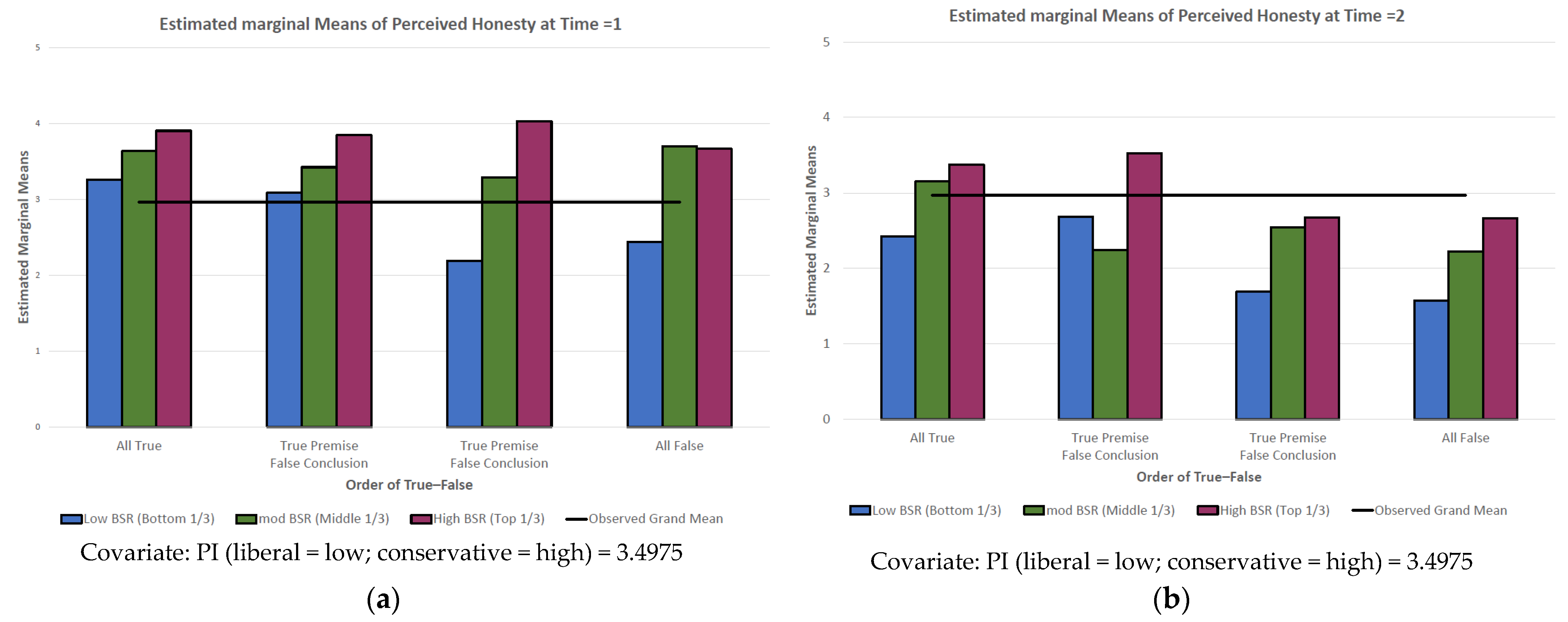

7.4. Experiment 1: Results and Discussion

7.5. Experiment 2: Participants and Design

7.6. Experiment 2: Procedure

7.7. Experiment 2: Results

8. Discussion

“That a lie which is half a truth is ever the blackest of lies, That a lie which is all a lie may be met and fought with outright, But a lie which is part a truth is a harder matter to fight.”[1] (St. 8).

9. Theoretical and Practical Implications, Limitations, and Future Research

10. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

Appendix B

References

- Tennyson, L.A. The Grandmother. 1864. Available online: https://collections.vam.ac.uk/item/O198447/the-grandmother-photograph-cameron-julia-margaret/ (accessed on 30 March 2022).

- Lewandowsky, S. Climate Change Disinformation and how to Combat It. Annu. Rev. Public Health 2021, 42, 1–21.

- Treen, K.M.d.; Williams, H.T.P.; O’Neill, S.J. Online Misinformation about Climate Change. Wiley Interdiscip. Rev. Clim. Change 2020, 11, e665.

- Vicario, M.D.; Bessi, A.; Zollo, F.; Petroni, F.; Scala, A.; Caldarelli, G.; Stanley, H.E.; Quattrociocchi, W. The Spreading of Misinformation Online. Proc. Natl. Acad. Sci. USA 2016, 113, 554–559.

- Wang, Y.; McKee, M.; Torbica, A.; Stuckler, D. Systematic Literature Review on the Spread of Health-Related Misinformation on Social Media. Soc. Sci. Med. 2019, 240, 112552.

- Barthel, M.; Mitchell, A.; Holcomb, J. Many Americans Believe Fake News Is Sowing Confusion. 2016. Available online: https://www.pewresearch.org/journalism/2016/12/15/many-americans-believe-fake-news-is-sowing-confusion/ (accessed on 30 March 2022).

- Vosoughi, S.; Roy, D.; Aral, S. The Spread of True and False News Online. Science 2018, 359, 1146–1151.

- Hong, S.C. Presumed Effects of “Fake News” on the Global Warming Discussion in a Cross-Cultural Context. Sustainability 2020, 12, 2123.

- Kim, S.; Kim, S. The Crisis of Public Health and Infodemic: Analyzing Belief Structure of Fake News about COVID-19 Pandemic. Sustainability 2020, 12, 9904.

- Ries, M. The COVID-19 Infodemic: Mechanism, Impact, and Counter-Measures—A Review of Reviews. Sustainability 2022, 14, 2605.

- Scheufele, D.A.; Krause, N.M. Science Audiences, Misinformation, and Fake News. Proc. Natl. Acad. Sci. USA 2019, 116, 7662–7669.

- De Sousa, Á.F.L.; Schneider, G.; de Carvalho, H.E.F.; de Oliveira, L.B.; Lima, S.V.M.A.; de Sousa, A.R.; de Araújo, T.M.E.; Camargo, E.L.S.; Oriá, M.O.B.; Ramos, C.V.; et al. COVID-19 Misinformation in Portuguese-Speaking Countries: Agreement with Content and Associated Factors. Sustainability 2021, 14, 235.

- Farrell, J.; McConnell, K.; Brulle, R. Evidence-Based Strategies to Combat Scientific Misinformation. Nat. Clim. Change 2019, 9, 191–195.

- Larson, H.J. The Biggest Pandemic Risk? Viral Misinformation. Nature 2018, 562, 309.

- Loomba, S.; de Figueiredo, A.; Piatek, S.J.; de Graaf, K.; Larson, H.J. Measuring the Impact of COVID-19 Vaccine Misinformation on Vaccination Intent in the UK and USA. Nat. Hum. Behav. 2021, 5, 337–348.

- Van Der Linden, S.; Maibach, E.; Cook, J.; Leiserowitz, A.; Lewandowsky, S. Inoculating Against Misinformation. Science 2017, 358, 1141–1142.

- Cacciatore, M.A. Misinformation and Public Opinion of Science and Health: Approaches, Findings, and Future Directions. Proc. Natl. Acad. Sci. USA 2021, 118, 1.

- Charlton, E. Fake News: What It Is, and How to Spot It. 2019. Available online: https://europeansting.com/2019/03/06/fake-news-what-it-is-and-how-to-spot-it/ (accessed on 30 March 2022).

- Merriam-Webster. Half-Truth. In Merriam-Webster.Com Dictionary. 2022. Available online: https://www-merriam-webster-com.uc.idm.oclc.org/dictionary/half-truth (accessed on 30 March 2022).

- Pennycook, G.; Cheyne, J.A.; Barr, N.; Koehler, D.J.; Fugelsang, J.A. On the Reception and Detection of Pseudo-Profound Bullshit. Judgm. Decis. Mak. 2015, 10, 549–563.

- Cialdini, R.B.; Lasky-Fink, J.; Demaine, L.J.; Barrett, D.W.; Sagarin, B.J.; Rogers, T. Poison Parasite Counter: Turning Duplicitous Mass Communications into Self-Negating Memory-Retrieval Cues. Psychol. Sci. 2021, 32, 1811–1829.

- Xarhoulacos, C.; Anagnostopoulou, A.; Stergiopoulos, G.; Gritzalis, D. Misinformation Vs. Situational Awareness: The Art of Deception and the Need for Cross-Domain Detection. Sensors 2021, 21, 5496.

- Van Bavel, J.J.; Pereira, A. The Partisan Brain: An Identity-Based Model of Political Belief. Trends Cogn. Sci. 2018, 22, 213–224.

- Pereira, A.; Harris, E.; Van Bavel, J.J. Identity Concerns Drive Belief: The Impact of Partisan Identity on the Belief and Dissemination of True and False News. Group Processes Intergroup Relat. 2021, 136843022110300.

- Pennycook, G.; Rand, D.G. Lazy, Not Biased: Susceptibility to Partisan Fake News is Better Explained by Lack of Reasoning than by Motivated Reasoning. Cognition 2019, 188, 39–50.

- Fazio, L.K.; Pillai, R.M.; Patel, D. The Effects of Repetition on Belief in Naturalistic Settings. J. Exp. Psychol. Gen. 2022.

- Fazio, L.K. Repetition Increases Perceived Truth Even for Known Falsehoods. Collabra Psychol. 2020, 6, 38.

- Pennycook, G.; Cannon, T.D.; Rand, D.G. Prior Exposure Increases Perceived Accuracy of Fake News. J. Exp. Psychol. Gen. 2018, 147, 1865–1880.

- Begg, I.M.; Anas, A.; Farinacci, S. Dissociation of Processes in Belief: Source Recollection, Statement Familiarity, and the Illusion of Truth. J. Exp. Psychol. Gen. 1992, 121, 446–458.

- Unkelbach, C. Reversing the Truth Effect: Learning the Interpretation of Processing Fluency in Judgments of Truth. J. Exp. Psychol. Learn. Mem. Cogn. 2007, 33, 219–230.

- Sanchez, C.; Dunning, D. Cognitive and Emotional Correlates of Belief in Political Misinformation: Who Endorses Partisan Misbeliefs? Emotion 2021, 21, 1091–1102.

- Pennycook, G.; Rand, D.G. Who Falls for Fake News? The Roles of Bullshit Receptivity, Overclaiming, Familiarity, and Analytic Thinking. J. Pers. 2020, 88, 185–200.

- Bronstein, M.V.; Pennycook, G.; Bear, A.; Rand, D.G.; Cannon, T.D. Belief in Fake News is Associated with Delusionality, Dogmatism, Religious Fundamentalism, and Reduced Analytic Thinking. J. Appl. Res. Mem. Cogn. 2019, 8, 108–117.

- Gligorić, V.; Moreira da Silva, M.; Eker, S.; van Hoek, N.; Nieuwenhuijzen, E.; Popova, U.; Zeighami, G. The Usual Suspects: How Psychological Motives and Thinking Styles Predict the Endorsement of Well-Known and COVID-19 Conspiracy Beliefs. Appl. Cogn. Psychol. 2021, 35, 1171–1181.

- De keersmaecker, J.; Dunning, D.; Pennycook, G.; Rand, D.G.; Sanchez, C.; Unkelbach, C.; Roets, A. Investigating the Robustness of the Illusory Truth Effect Across Individual Differences in Cognitive Ability, Need for Cognitive Closure, and Cognitive Style. Personal. Soc. Psychol. Bull. 2020, 46, 204–215.

- Lerner, J.S.; Small, D.A.; Loewenstein, G. Heart Strings and Purse Strings: Carryover Effects of Emotions on Economic Decisions. Psychol. Sci. 2004, 15, 337–341.

- Kruglanski, A.W.; Shah, J.Y.; Pierro, A.; Mannetti, L. When Similarity Breeds Content: Need for Closure and the Allure of Homogeneous and Self-Resembling Groups. J. Pers. Soc. Psychol. 2002, 83, 648–662.

- Priester, J.R.; Petty, R.E. Source Attributions and Persuasion: Perceived Honesty as a Determinant of Message Scrutiny. Personal. Soc. Psychol. Bull. 1995, 21, 637–654.

- Priester, J.R.; Petty, R.E. The Influence of Spokesperson Trustworthiness on Message Elaboration, Attitude Strength, and Advertising Effectiveness. J. Consum. Psychol. 2003, 13, 408–421.

- Kruglanski, A.W.; Webster, D.M. Motivated Closing of the Mind: “Seizing” and “Freezing”. Psychol. Rev. 1996, 103, 263–283.

- Roets, A.; Kruglanski, A.W.; Kossowska, M.; Pierro, A.; Hong, Y. The Motivated Gatekeeper of our Minds: New Directions in Need for Closure Theory and Research. In Advances in Experimental Social Psychology; Elsevier Science & Technology: Waltham, MA, USA, 2015; Volume 52, pp. 221–283.

- Wyer, R.S.; Xu, A.J.; Shen, H. The Effects of Past Behavior on Future Goal-Directed Activity. In Advances in Experimental Social Psychology; Elsevier Science & Technology: Waltham, MA, USA, 2012; Volume 46, pp. 237–283.

- Xu, A.J.; Wyer, R.S. The Role of Bolstering and Counterarguing Mind-Sets in Persuasion. J. Consum. Res. 2012, 38, 920–932.

- Berg, J.; Dickhaut, J.; McCabe, K. Trust, Reciprocity, and Social History. Games Econ. Behav. 1995, 10, 122–142.

- Dechêne, A.; Stahl, C.; Hansen, J.; Wänke, M. The Truth about the Truth: A Meta-Analytic Review of the Truth Effect. Personal. Soc. Psychol. Rev. 2010, 14, 238–257.

- Hasher, L.; Goldstein, D.; Toppino, T. Frequency and the Conference of Referential Validity. J. Verbal Learn. Verbal Behav. 1977, 16, 107–112.

- Brown, A.S.; Nix, L.A. Turning Lies into Truths: Referential Validation of Falsehoods. J. Exp. Psychol. Learn. Mem. Cogn. 1996, 22, 1088–1100.

- Reber, R.; Schwarz, N. Effects of Perceptual Fluency on Judgments of Truth. Conscious. Cogn. 1999, 8, 338–342.

- Alter, A.L.; Oppenheimer, D.M. Uniting the Tribes of Fluency to Form a Metacognitive Nation. Personal. Soc. Psychol. Rev. 2009, 13, 219–235.

- Hassan, A.; Barber, S.J. The Effects of Repetition Frequency on the Illusory Truth Effect. Cogn. Res. Princ. Implic. 2021, 6, 38.

- Gigerenzer, G. External Validity of Laboratory Experiments: The Frequency-Validity Relationship. Am. J. Psychol. 1984, 97, 185–195.

- Fazio, L.K.; Brashier, N.M.; Payne, B.K.; Marsh, E.J. Knowledge does Not Protect Against Illusory Truth. J. Exp. Psychol. Gen. 2015, 144, 993–1002.

- Cook, J.; Oreskes, N.; Doran, P.T.; Anderegg, W.R.L.; Verheggen, B.; Maibach, E.W.; Carlton, J.S.; Lewandowsky, S.; Skuce, A.G.; Green, S.A.; et al. Consensus on Consensus: A Synthesis of Consensus Estimates on Human-Caused Global Warming. Environ. Res. Lett. 2016, 11, 48002–48008.

- Marlon, J.; Neyens, L.; Jefferson, M.; Howe, P.; Mildenberger, M.; Leiserowitz, A. Yale Climate Opinion Maps 2021. 2022. Available online: https://climatecommunication.yale.edu/visualizations-data/ycom-us/ (accessed on 30 March 2022).

- Butters, C. Myths and Issues about Sustainable Living. Sustainability 2021, 13, 7521.

- Piñeiro, V.; Arias, J.; Dürr, J.; Elverdin, P.; Ibáñez, A.M.; Kinengyere, A.; Opazo, C.M.; Owoo, N.; Page, J.R.; Prager, S.D. A Scoping Review on Incentives for Adoption of Sustainable Agricultural Practices and their Outcomes. Nat. Sustain. 2020, 3, 809–820.

- Teklewold, H.; Kassie, M.; Shiferaw, B. Adoption of Multiple Sustainable Agricultural Practices in Rural Ethiopia. J. Agric. Econ. 2013, 64, 597–623.

- Mannion, A.M.; Morse, S. Biotechnology in Agriculture: Agronomic and Environmental Considerations and Reflections Based on 15 Years of GM Crops. Prog. Phys. Geogr. 2012, 36, 747–763.

- Zilberman, D.; Holland, T.G.; Trilnick, I. Agricultural GMOs-What We Know and Where Scientists Disagree. Sustainability 2018, 10, 1514.

- Kamal, A.; Al-Ghamdi, S.G.; Koc, M. Revaluing the Costs and Benefits of Energy Efficiency: A Systematic Review. Energy Res. Soc. Sci. 2019, 54, 68–84.

- Pew Research Center. Genetically Modified Foods (GMOs) and Views on Food Safety; Pew Research Center: Washington, DC, USA, 2015.

- Ecker, U.K.H.; Lewandowsky, S.; Jayawardana, K.; Mladenovic, A. Refutations of Equivocal Claims: No Evidence for an Ironic Effect of Counterargument Number. J. Appl. Res. Mem. Cogn. 2019, 8, 98–107.

- Wahlheim, C.N.; Alexander, T.R.; Peske, C.D. Reminders of Everyday Misinformation Statements can Enhance Memory for and Beliefs in Corrections of those Statements in the Short Term. Psychol. Sci. 2020, 31, 1325–1339.

- Autry, K.S.; Duarte, S.E. Correcting the Unknown: Negated Corrections may Increase Belief in Misinformation. Appl. Cogn. Psychol. 2021, 35, 960–975.

- Berezow, A. The Pervasive Myth that GMOs Pose a Threat. 2013. Available online: https://www.usnews.com/debate-club/should-consumers-be-worried-about-genetically-modified-food/the-pervasive-myth-that-gmos-pose-a-threat (accessed on 30 March 2022).

- Alliance for Science. 10 Myths about GMOs. 2015. Available online: https://allianceforscience.cornell.edu/10-myths-about-gmos/ (accessed on 30 March 2022).

- Clarkson, J.J.; Chambers, J.R.; Hirt, E.R.; Otto, A.S.; Kardes, F.R.; Leone, C. The Self-Control Consequences of Political Ideology. Proc. Natl. Acad. Sci. USA 2015, 112, 8250–8253.

- Faul, F.; Erdfelder, E.; Buchner, A.; Lang, A. Statistical Power Analyses using G*Power 3.1: Tests for Correlation and Regression Analyses. Behav. Res. Methods 2009, 41, 1149–1160.

- Hayes, A.F. Introduction to Mediation, Moderation, and Conditional Process Analysis: A Regression-Based Approach; The Guilford Press: New York, NY, USA, 2021.

- Safire, W. The New Language of Politics: An Anecdotal Dictionary of Catchwords, Slogans, and Political Usage; Random House: New York, NY, USA, 1968; pp. xvi+528.

- Stekelenburg, A.V.; Schaap, G.J.; Veling, H.P.; Buijzen, M.A. Boosting Understanding and Identification of Scientific Consensus can Help to Correct False Beliefs. Psychol. Sci. 2021, 32, 1549–1565.

- Haugtvedt, C.P.; Wegener, D.T. Message Order Effects in Persuasion: An Attitude Strength Perspective. J. Consum. Res. 1994, 21, 205–218.

- Tormala, Z.L.; Clarkson, J.J. Assimilation and Contrast in Persuasion: The Effects of Source Credibility in Multiple Message Situations. Personal. Soc. Psychol. Bull. 2007, 33, 559–571.

- Nicolia, A.; Manzo, A.; Veronesi, F.; Rosellini, D. An Overview of the Last 10 Years of Genetically Engineered Crop Safety Research. Crit. Rev. Biotechnol. 2014, 34, 77–88.

- Waeber, P.O.; Stoudmann, N.; Langston, J.D.; Ghazoul, J.; Wilmé, L.; Sayer, J.; Nobre, C.; Innes, J.L.; Fernbach, P.; Sloman, S.A.; et al. Choices we make in Times of Crisis. Sustainability 2021, 13, 3578.

- Pronin, E.; Gilovich, T.; Ross, L. Objectivity in the Eye of the Beholder: Divergent Perceptions of Bias in Self Versus Others. Psychol. Rev. 2004, 111, 781–799.

| Truth–Honesty Mean (Std. Err.) | Premise True, Conclusion True (N = 74) | Premise True, Conclusion False (N = 73) | Premise False, Conclusion True (N = 69) | Premise False, Conclusion False (N = 71) |
|---|---|---|---|---|
| Time 1 (original stimulus) | 5.3 b (0.185) | 5.0 b (0.186) | 4.1 c (0.192) | 4.0 c (0.188) |
| Time 2 a (after de-biasing) | 4.5 b (0.197) | 4.6 b (0.198) | 3.7 c (0.204) | 3.6 c (0.201) |
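
As a purely descriptive reading of the table above, the following Python sketch recomputes two simple quantities from the reported cell means: the drop in rating from Time 1 to Time 2 in each condition, and the unweighted gap between true-premise and false-premise cells. The dictionary layout and function names are illustrative assumptions, not part of the original study, and nothing here reproduces the authors' statistical tests or says anything about significance.

```python
# Cell means transcribed from the table above (truth-honesty ratings).
# Keys are (premise, conclusion); this layout is a hypothetical convenience.
TIME1 = {
    ("true", "true"): 5.3,
    ("true", "false"): 5.0,
    ("false", "true"): 4.1,
    ("false", "false"): 4.0,
}
TIME2 = {
    ("true", "true"): 4.5,
    ("true", "false"): 4.6,
    ("false", "true"): 3.7,
    ("false", "false"): 3.6,
}


def drop_after_debiasing(before, after):
    """Return the Time 1 minus Time 2 mean for each condition, rounded to 0.1."""
    return {cond: round(before[cond] - after[cond], 1) for cond in before}


def premise_gap(means):
    """Unweighted mean of true-premise cells minus that of false-premise cells."""
    true_cells = [m for (premise, _), m in means.items() if premise == "true"]
    false_cells = [m for (premise, _), m in means.items() if premise == "false"]
    gap = sum(true_cells) / len(true_cells) - sum(false_cells) / len(false_cells)
    return round(gap, 2)


if __name__ == "__main__":
    for cond, drop in drop_after_debiasing(TIME1, TIME2).items():
        print(f"premise={cond[0]:5s} conclusion={cond[1]:5s} drop={drop}")
    print("premise gap, Time 1:", premise_gap(TIME1))
    print("premise gap, Time 2:", premise_gap(TIME2))
```

The gap is averaged without weighting by cell size (N = 69 to 74), which is a simplifying assumption; a weighted version would need the per-cell N values from the table header.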

| Truth–Honesty Mean (Std. Err.) | BSR Level | Premise True, Conclusion True (N = 74) | Premise True, Conclusion False (N = 73) g | Premise False, Conclusion True (N = 69) | Premise False, Conclusion False (N = 71) |
|---|---|---|---|---|---|
| Time 1 (original stimulus) | Low BSR (<1.4) | 3.3 a (0.226) | 3.1 a (0.237) | 2.2 b (0.241) | 2.4 b (0.211) |
| Time 1 (original stimulus) | Med. BSR (1.4–3.5) | 3.6 c (0.224) | 3.4 c (0.310) | 3.3 c (0.252) | 3.7 c (0.237) |
| Time 1 (original stimulus) | High BSR (>3.5) | 3.9 c (0.204) | 3.8 c (0.216) | 4.0 c (0.232) | 3.7 c (0.244) |
| Time 2 (after de-biasing) | Low BSR (<1.4) | 2.4 a (0.240) | 2.7 a (0.252) | 1.7 b (0.256) | 1.6 b (0.224) |
| Time 2 (after de-biasing) | Med. BSR (1.4–3.5) | 3.2 d (0.238) | 2.2 e (0.329) | 2.5 f (0.268) | 2.2 e (0.252) |
| Time 2 (after de-biasing) | High BSR (>3.5) | 3.4 a (0.216) | 3.5 a (0.230) | 2.7 b (0.246) | 2.7 b (0.259) |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Barchetti, A.; Neybert, E.; Mantel, S.P.; Kardes, F.R. The Half-Truth Effect and Its Implications for Sustainability. Sustainability 2022, 14, 6943. https://doi.org/10.3390/su14116943
