Abstract

Learning about artificial intelligence (AI) has become one of the most discussed topics in the field of education. It has also become important in contemporary education to propose a “general education” agenda that conveys the basics and ethics of AI, especially to students without an engineering background. The current study proposes a situated learning design for teaching this topic. Through a three-week lesson session and accompanying learning activities, the participants undertook hands-on tasks relating to AI. They were also given the opportunity to learn about the current characteristics of AI and how these bear on AI-related ethical issues or problems in daily life. A pre- and post-test design was used to compare the learning effects on different aspects of AI (i.e., AI understanding, cross-domain teamwork, AI attitudes, and AI ethics) among the participants. The study found a positive correlation among all the factors, as well as a strong link between AI understanding and attitudes on the one hand and AI ethics on the other. The implications of these findings are discussed, and suggestions are made for future revisions of the instructional design and for future research.

1. Introduction

Learning about artificial intelligence (AI) has become one of the most discussed topics in the field of education [1,2]. For students without a computer engineering background (i.e., non-engineering students), it is crucial to learn basic AI concepts so that they can picture a future AI-enriched world and the potential of AI applications. For such students, understanding how AI development requires cross-domain collaboration among experts with different backgrounds could be essential to understanding AI itself. In the same vein, learning about the ethical issues related to AI requires diverse perspectives from different fields of expertise. However, existing studies have noted that little is currently known about how to create a learning environment suited to teaching non-engineering students about AI and ethics [2,3,4,5,6,7,8,9,10].

Hence, incorporating AI learning into general education (or liberal education) is worthwhile, since general education focuses on the value of humanity and its impact on society [11]. It has become increasingly evident that solving authentic problems in the world requires not only the engineering sector but also cross-domain conversation and collaboration involving science, technology, and the humanities [12]. However, non-engineering students have displayed negative preconceptions about learning to use technology in interdisciplinary settings that presuppose a basic familiarity with programming principles [13]. Given the traditional subjects studied by non-engineering students (e.g., linguistics, arts, and literature), the rationale for cross-disciplinary learning about technology is not always understood [14,15], and technology has seldom been their primary interest or requirement. Accordingly, science, technology, engineering, and the basic logic of programming are generally absent from their higher-education curricula. There is thus a significant gap between the need for all students to learn about the prospects of AI in authentic life and the lack of understanding of, or unwillingness to learn about, technology among non-engineering students. The following sections discuss the rationale for this study and the relevant findings in the existing literature.

1.1. Scientific Introductory Courses in General Education

With modernization, the emphasis in university education has shifted toward the professional and practical. However, a focus on specialization, technology, and instrumentation alone is not conducive to the holistic development of university education. The core value of universities should be the provision of a holistic education that includes both general and scientific education [16]. General education has been subjected to close examination since its early days. Among its strengths is that it can enhance students’ creativity, comprehensive ability, judgment, critical thinking, and cognitive skills, enabling them to develop cross-disciplinary cooperation skills and mature personalities. It can also address larger questions, such as finding meaning and purpose in life, not only through courses on “culture” and “belief” but also by having students study the world from multiple disciplines and perspectives. Therefore, in addition to the humanities, science can be considered an important part of general education [17].

Scientific introductory courses in general education cover a wide range of fields, including physics, chemistry, biology, and earth sciences, with the main emphasis on the “scientific spirit” and “scientific literacy.” For non-engineering students, course materials that focus merely on “factual knowledge” can be fairly boring, which reduces their learning motivation [18]. Rather than defining scientific literacy in terms of specific learning outcomes, it is better to link such literacy to its application and experience in life and to use appropriate teaching methods and content to achieve this goal [19]. In science education, the nature of science, as proposed by philosophers of science, is often too abstract for students; relating it to daily life experiences can help them understand it [20] and thereby build a bridge between science and the humanities. Students can come to understand how career scientists think and identify the essence of problems, and how knowledge is transformed over time into technology that is applied in life today. Natural and other sciences need not be restricted to textbook knowledge but can be applied in life, which could help students rethink the meaning of the natural sciences and arouse their interest in learning [21]. For example, Liou et al. [22] investigated the influence of augmented reality (AR) and virtual reality (VR) in an astronomy course and showed that these technologies motivated and encouraged students.

1.2. Important Scientific Issues—AI

The change in learning style in science needs to be accompanied by a change in teaching content to keep pace with the times [23], and the most noticeable issue now is the development and application of AI. Following its conceptual definition by Alan Turing in the 1950s, AI technology has developed in several stages. In its earliest stage, researchers used symbols to define logical thinking and had computers apply large numbers of rules to make inferences, forming expert systems; it is now possible simply to provide a computer with large amounts of data so that it can learn and draw conclusions through machine learning [24]. The current development of AI centers on deep learning, which has dramatically improved the state of the art in speech recognition, visual object recognition, object detection, and many other domains, such as drug discovery and genomics [25]. With breakthroughs in algorithms and computing speed, AI technology has developed rapidly and is widely used in fields such as manufacturing, finance, communications, transportation, medical care, and education, making AI the most interdisciplinary scientific issue [10].

The applications of AI are not confined to a single discipline. Beyond information technology professionals, it is necessary to form interdisciplinary groups that bring together multiple professions to design AI applications, and the resulting applications should suit the needs of each field. Thus, introducing the concept of AI into courses across different professions will help train interdisciplinary teams, enabling members from different professions to develop good communication, coordination, and cooperation skills.

1.3. Ethics as a Social Scientific Issue (SSI) Element for an AI Course

AI literacy encompasses ethical issues. As technology develops, its application is usually accompanied by ethical and moral issues. Thus, for practitioners, it is important that the implementation of AI takes its ethical aspects into account [26]. In terms of curriculum design, it is necessary not only to help students become practitioners of AI but also to help them understand the moral, ethical, and philosophical impact that AI will have on society. Therefore, including curriculum topics drawn from sources such as movies and news headlines can stimulate discussion among students and help them think deeply about, and understand the importance of, AI ethics [27].

As AI technologies are increasingly applied in people’s daily lives, these applications affect everyone significantly. Consequently, a growing number of researchers are paying attention to how AI technology can be used properly in our lives. In recent years, particularly from 2016 to 2018, several studies have focused on the various ethical issues that arise in the use of AI, including transparency, fairness, responsibility, and sustainability [3,4].

A school curriculum that helps students correctly recognize contemporary scientific issues such as AI will help them develop their AI literacy. The development of literacy means not only the cultivation of knowledge and skills but also the formation and application of knowledge concepts in daily living. Thus, scientific literacy is the internalization of the knowledge and understanding of scientific concepts and processes in one’s lifestyle, including decision-making, participation in civic affairs, and economic production [28]. Hung et al. [29] showed that literacy can be learned by developing games, especially for acquiring disciplinary literacy in computer science. Although it is possible to develop AI literacy through game design, this approach is difficult to apply to non-engineering students. Because AI is an interdisciplinary technology, designers who apply it need to rely on cooperation with others and to have critical thinking and communication skills. Thus, future generations who encounter AI technology require an AI literacy that includes the ability to recognize and solve problems so that they can apply AI in their daily lives [30].

1.4. Situated Learning

The concept of situated learning has been developed and applied to explain how learning occurs in organizations [31]. Situated learning emphasizes that learning must take place through engaging in an authentic activity, and students search for a coherent interpretation of knowledge through interaction with real situations to build a complete body of knowledge [32]. Any kind of education that separates learning from context will result in students only acquiring fragmented knowledge and skills and will not allow them to apply what they have learned to solve everyday problems. Contextual learning emphasizes that learners should acquire knowledge and skills and develop rational and meaningful interpretations of knowledge through real activities in real social situations [33,34,35]. In the learning process, the focus of situated learning theory is on the “person-plus-the-surround”, which includes the learning environment, learning activities, and learning peers. In other words, learning is a continuous process of connecting meaning to knowledge, and learners are active constructors of knowledge in the whole environment. According to Winn [36], three main types of instructional design approaches may be adopted to achieve contextual learning goals: (1) designing learning activities as apprenticeships, (2) providing near-real-life learning experiences and transforming classroom learning activities into more realistic approaches, and (3) providing learners with a real-world learning experience.

In traditional education methods, the content of teaching was often abstract, and the need for more specific content was readily ignored. Using situational simulation at the right time in teaching allows students to understand where the knowledge they have learned can be applied [37]. Situated learning emphasizes how knowledge is used in real situations and provides students with simulated exercises and opportunities for cooperation. With the guidance of teachers, better learning results can be achieved [38]. In science education, situated teaching can connect students with actual social problems, thereby strengthening their educational experiences for future job opportunities [39].

Previous studies have confirmed that situated learning has had a good effect in fields such as nursing, medical treatment, language learning, and computer technology, since practice is important for learning and situated learning gives students more opportunities to practice [40,41,42,43]. For applied knowledge such as AI, situated learning can more effectively improve students’ learning effectiveness and help them understand how to apply AI in their life or workplace.

Since AI is an important scientific issue in this era, it has been identified as a teaching priority at different levels of educational institutions, from K-12 to departments in universities. While AI is a kind of technology for engineering students, it is more likely to be a tool for non-engineering ones. Hence, the designed AI lessons were incorporated into a general education course whose participants were all non-engineering students. This study aims not only to further the research on university students’ perceptions of AI but also to investigate the differences in students’ awareness of AI ethics before and after undertaking these courses, which will serve as a reference for future curriculum development and revision.

1.5. Purpose of the Current Study

The main purpose of this study was to design a situated-learning-based AI course and to examine whether this format of learning is effective in enhancing non-engineering students’ understanding of, and attitudes toward, AI. In addition, the study aimed to understand the impact of the designed course on learners’ awareness of AI ethical issues.

Thus, this research attempts to answer the following two questions:

- Does the present situated-learning-based course have an effect on students’ understanding of AI, AI teamwork, and attitudes toward AI?

- Does the present course enhance students’ awareness of AI ethical issues?

2. Methodology

Starting from the premise that non-engineering students should focus on applying AI techniques to solve their problems rather than on the underlying technology, we developed a situated-learning-based instructional design that aims to give these students an understanding of AI that can be put into practice through hands-on exercises. Figure 1 illustrates the research framework. This study also designed pre- and post-test surveys to evaluate the performance of students on different aspects of AI: AI understanding, AI teamwork, attitude toward AI, and awareness of AI ethical issues (AI ethics).

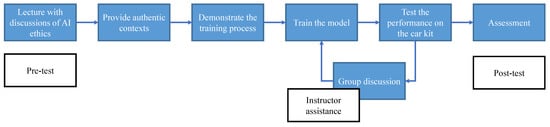

Figure 1.

The instructional design of this study.

2.1. Participants

This study involved 328 newly enrolled first-year students in non-engineering faculties at a university in northern Taiwan. The participants came from 13 classes in total, with 40–65 students per class. Regarding gender distribution, 108 of the students were male (32.9%) and 220 were female (67.1%). Of the students, 253 were from the Business School (77.1%), 44 were from the Design School (13.4%), 29 were from the School of Humanities and Education (8.8%), and 2 were from the School of Law (0.6%). The study was approved by the campus ethics committee, and all participants gave their consent to take part in the study.

2.2. Procedure

The proposed instructional design in this study was prepared as part of a regular 18-week general education course entitled “The Introduction of Science and Technology.” The AI lessons, which occupied three weeks in the middle of the course, consisted of three parts. First, an experienced instructor gave a lecture introducing the fundamental principles of AI, including some of the ethical issues arising in the use of AI, citing examples such as autonomous vehicles, public surveillance, and personal assistants (such as Siri and Google Assistant). Next, the instructor presented authentic AI application scenarios to learners and demonstrated how to achieve specific goals with AI tools (Figure 1).

The learners then tried to train an AI model by themselves to achieve the same goal, with the instructor helping them tackle any problems they encountered. Finally, an assessment session was held to evaluate their achievements in accomplishing the assigned tasks. According to Herrington and Oliver [38], knowledge is best acquired by learners if the learning environment includes nine elements of situated learning design. This study draws on their work in designing the elements of situated learning and the corresponding learning activities, as illustrated in Table 1.

Table 1.

Elements of situated learning and the corresponding learning activities.

To evaluate the potential contribution of the proposed course to the cultivation of AI ethics, a pre-test survey was administered before the course to ascertain the learners’ initial levels of AI understanding, AI teamwork, attitude toward AI, and awareness of AI ethical issues (AI ethics). The opening lecture was intended to give learners a common baseline of knowledge about AI. To deepen this knowledge, we presented a scenario involving an AI application: designing an autonomous vehicle. The scenario was framed as follows: If you had to design an autonomous vehicle, how would you make it drive safely? The first requirement is that it follow the road. The second is that it recognize road signs. Most importantly, it must stop if it “sees” potential obstacles, such as pedestrians or animals.

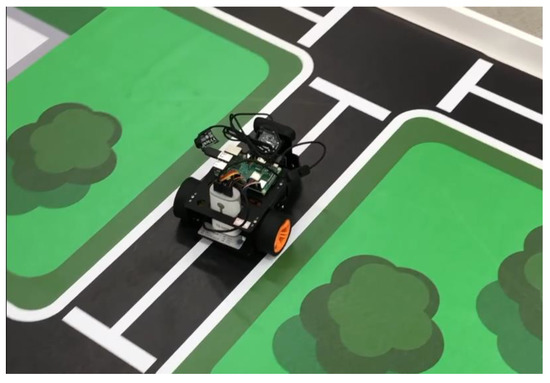

Because it was impossible to provide learners with real vehicles to undertake their exercises, this study used a motor-controlled car kit built on Raspberry Pi (see Figure 2 and Figure 3), on which it is possible to run computer programs. The goal of this exercise was to train an AI model to meet the aforementioned requirements and then apply the model to the car kit. The instructor first demonstrated how to prepare an AI model to recognize two different objects (which were cats and dogs in this study) and provided a series of instructions for learners to follow. The learners were then asked to collect road sign images to train their own AI models by utilizing the knowledge imparted by the instructor and applying the model to the car kit. When learners encountered problems during the exercise, such as the model’s inability to recognize an image correctly, the instructor helped them analyze the cause of the problem and provided possible solutions.

Figure 2.

The motor-controlled car kit built on Raspberry Pi used in this study.

Figure 3.

Actual teaching scenario in this study showing a discussion between a student and lecturers on improving the performance of the motor-controlled car kit built on Raspberry Pi.

Although AI has attracted a high level of interest in recent years, most non-engineering students have limited knowledge of the subject, because most AI discussions focus on technical improvements, which show people the results from its applications but not the rationale behind it or the manner in which such results are achieved. Thus, before starting the designed situated learning activities, students were taught about several important topics in the AI field: the history of AI and what scientists are trying to achieve, the definition of supervised learning and unsupervised learning, applications in AI, and ethical issues encountered in the development of AI. For this purpose, several professional instructors from the departments of Information and Computer Engineering, Information Management, and Electrical Engineering were enlisted to provide learners with a basic understanding of AI technology, including its objective, achievable goals, and current bottlenecks.

Following the introduction to the course, the instructors described an authentic context for the practical application of AI: designing the autonomous vehicle. They then asked the learners to design an AI model that satisfied certain requirements. In principle, designing such a model requires knowledge of a programming language, but this would make the task too difficult for non-engineering students. To overcome this problem, this study used a web service named “Custom Vision,” provided by Microsoft Azure. The service offers a web-based interface through which users can design and train their own models based on a convolutional neural network (CNN), a deep learning technique, through transfer learning [44]. The interface hides the implementation details of creating an AI model while giving users the flexibility to solve their own problems with the provided model. Thus, users need only upload an image dataset to complete an object-recognition task. In the first training activity, this study used a public dataset containing 25,000 images of two types of objects. In view of the limited instruction time, each student was provided with a smaller dataset for training and a separate set drawn from the original dataset for testing. The reduced training dataset contained two classes (i.e., images of cats and dogs), each consisting of 500 images, while the test dataset contained 20 images. The instructor then demonstrated an experiment and asked the students to repeat it by following these steps:

- Use 5 images from each class to train the model and then test the accuracy of the result using all images in the test dataset;

- Use 20 images from each class to train the model and then test the classification accuracy;

- Increase the number of images to train the model until all images in the test dataset are correctly classified.
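The effect the students observed in these steps can be reproduced in miniature. The following is a minimal sketch under stated assumptions, not the Custom Vision service or the study's dataset: each “image” is a hypothetical 20-number feature vector drawn around 0.0 (“cat”) or 1.0 (“dog”), the model is a toy nearest-centroid classifier rather than a CNN, and the test set mirrors the 20-image test set described above. It only illustrates how test accuracy tends to rise as more training images per class are used.

```python
import random


def make_images(label, n, rng, dim=20):
    """Hypothetical stand-in for image features: each 'image' is a vector of
    dim numbers drawn around 0.0 for class 0 ("cat") or 1.0 for class 1 ("dog")."""
    return [[rng.gauss(float(label), 1.0) for _ in range(dim)] for _ in range(n)]


def centroid(vectors):
    """Mean vector of a list of equal-length vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def train(per_class):
    """Toy nearest-centroid 'model': remember one mean vector per class."""
    return {label: centroid(images) for label, images in per_class.items()}


def predict(model, x):
    """Assign x to the class whose mean vector is closest."""
    return min(model, key=lambda label: sq_dist(model[label], x))


def accuracy(model, test_set):
    return sum(predict(model, x) == y for x, y in test_set) / len(test_set)


def learning_curve(sizes, seed=0):
    """Accuracy on a fixed 20-image test set for each per-class training size."""
    rng = random.Random(seed)
    pool = {label: make_images(label, 500, rng) for label in (0, 1)}
    test_set = [(x, label) for label in (0, 1) for x in make_images(label, 10, rng)]
    return {
        n: accuracy(train({label: imgs[:n] for label, imgs in pool.items()}), test_set)
        for n in sizes
    }


if __name__ == "__main__":
    for n, acc in learning_curve([5, 20, 100, 500]).items():
        print(f"{n:>3} training images per class -> test accuracy {acc:.2f}")
```

The exact numbers depend on the random seed; the point of the sketch is the trend, which matches what the students saw with real images: a model trained on only a handful of examples per class is unreliable, and accuracy stabilizes as the training set grows.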

In this training activity, students discovered that the trained model was highly inaccurate when only 10 training images were used, but its accuracy improved to almost 100% once more than 100 images were used for training. This exercise made them aware that an effective AI model requires sufficient training data. In addition, students were asked to perform an experiment that involved choosing a picture containing neither of the two classes, feeding this picture into the network, and observing the recognition result. The purpose of this exercise was to show students that an AI model can only output one of the classes it has been trained on. Thus, for the model to be able to distinguish between “true objects” and “none of the above,” it is necessary to provide additional images that do not contain any of the desired objects (called “negative samples”), place them in a separate category, and allow the model to learn to distinguish them from the correct ones.
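Why a cat/dog model must answer “cat” or “dog” even for an unrelated picture can be seen from how a classifier typically turns raw scores into probabilities. The sketch below assumes a softmax output layer, which is common for CNN classifiers but is not documented here for Custom Vision; the raw scores and the tag name "negative" are hypothetical values chosen for illustration.

```python
import math


def softmax(scores):
    """Turn raw class scores into probabilities that sum to 1 over the known classes."""
    top = max(scores.values())  # subtract the max for numerical stability
    exps = {label: math.exp(s - top) for label, s in scores.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}


def classify(scores):
    """Return the most probable known class and its probability."""
    probs = softmax(scores)
    label = max(probs, key=probs.get)
    return label, probs[label]


if __name__ == "__main__":
    # Hypothetical raw scores for a photo of a bicycle.
    print(classify({"cat": 0.2, "dog": 0.5}))                   # closed set: must pick cat or dog
    print(classify({"cat": 0.2, "dog": 0.5, "negative": 2.0}))  # a trained "negative" class can win
```

Because the probabilities are normalized over the known classes only, the two-class model has no way to say “none of the above”; adding a class trained on negative samples gives it that option.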

For the next training activity, students were divided into groups, and each group was provided with a motor-controlled car kit, as shown in Figure 2. The car kit was built on a Raspberry Pi running Raspbian OS so that it could execute programs, and the kits used in this study were equipped with USB cameras to capture images. We also provided two types of road signs indicating the direction in which the car should move: a left-turn sign and a right-turn sign. The students were then asked to design a model that could control the wheels under different circumstances; specifically, the car kit should turn right when it “sees” the right-turn sign and turn left when it “sees” the left-turn sign. To accomplish this task, students first had to collect images of the different road signs and upload them to the Custom Vision website to train a model. In addition, they were asked to consider how the car should react when it does not “see” any road signs or “sees” objects that are not road signs. For example, if the car stops at a crossroad, it should wait there, repeatedly checking whether there is a road sign in front of it, until it “sees” one. For this purpose, the students had to collect images that represented negative samples. Furthermore, if the car “sees” a specific object (e.g., a cat or a dog) while it is moving, it must stop immediately to avoid hitting that object, which related this practice to the previous exercise. After the design was finalized, teaching assistants helped the students deploy the model on the car kit. Given the higher level of technicality involved in this step, we intentionally avoided having the students perform it themselves. However, they could determine whether there was a problem with their model based on how the car kit behaved. If the model did not perform well enough (for example, if the car was unable to recognize a road sign correctly), the instructors helped them find solutions, such as collecting more data and retraining the model.
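The reactions the students had to specify can be written as a small decision rule that maps one camera prediction to a motor command. This is a hypothetical sketch, not the program deployed on the car kits: the tag names, the `Prediction` type, the command strings, and both confidence thresholds are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    tag: str            # hypothetical tag names, e.g. "left_turn", "cat", "negative"
    probability: float  # model confidence for that tag, between 0 and 1


OBSTACLE_TAGS = {"cat", "dog"}  # objects the car must never hit


def decide(pred, at_crossroad, sign_threshold=0.6, obstacle_threshold=0.3):
    """Map one camera prediction to a (hypothetical) motor command."""
    # Safety first: stop even on a fairly uncertain obstacle detection.
    if pred.tag in OBSTACLE_TAGS and pred.probability >= obstacle_threshold:
        return "stop"
    # Act on a road sign only when the model is confident about it.
    if pred.probability >= sign_threshold:
        if pred.tag == "left_turn":
            return "turn_left"
        if pred.tag == "right_turn":
            return "turn_right"
    # No sign, a negative sample, or an uncertain prediction:
    # keep waiting at a crossroad, otherwise keep following the road.
    return "wait" if at_crossroad else "forward"


if __name__ == "__main__":
    print(decide(Prediction("right_turn", 0.85), at_crossroad=True))
    print(decide(Prediction("dog", 0.40), at_crossroad=False))
```

Using a lower threshold for obstacles than for road signs is one possible design choice: a false stop is cheap, whereas missing a pedestrian or animal is not. It also shows why a well-designed rule can still fail when the underlying model misrecognizes a sign.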

During this training activity, the students realized the importance of designing a proper algorithm for the car kit to respond correctly. The exercise was undertaken in groups. Because the algorithms they designed could have flaws, teachers guided them in revising the algorithms through discussion. Even when an algorithm was well designed, the car kit could still react incorrectly if it misrecognized a road sign. Furthermore, the students were prevented from using the model they had trained in the first exercise to tackle the problems they encountered in the second exercise. Although the problems in the two exercises were similar, it is worth emphasizing that the current AI model was purpose-specific rather than generic. Hence, the learners had to train different models for different problems, even though the backbone of each model was the same.
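The idea of a shared backbone with purpose-specific models can be illustrated with a deliberately tiny example. In the sketch below, `backbone` is a hypothetical stand-in for a pretrained CNN feature extractor (it merely summarizes a three-pixel “image” as mean brightness and contrast), and each task gets its own nearest-centroid head trained on toy data. This is an illustration of the concept under those assumptions, not how Custom Vision implements transfer learning.

```python
def backbone(pixels):
    """Hypothetical frozen feature extractor shared by every task. A real backbone
    would be a pretrained CNN; here it summarises a tiny 'image' (a list of pixel
    intensities) as (mean brightness, contrast)."""
    mean = sum(pixels) / len(pixels)
    contrast = max(pixels) - min(pixels)
    return (mean, contrast)


def train_head(examples):
    """Train a task-specific head: the mean backbone feature for each label."""
    grouped = {}
    for pixels, label in examples:
        grouped.setdefault(label, []).append(backbone(pixels))
    return {
        label: tuple(sum(dim) / len(feats) for dim in zip(*feats))
        for label, feats in grouped.items()
    }


def predict(head, pixels):
    """Return the label whose mean feature is closest to the image's features."""
    feats = backbone(pixels)
    return min(head, key=lambda label: sum((a - b) ** 2 for a, b in zip(head[label], feats)))


# One shared backbone, two separately trained heads (toy data).
pets_head = train_head([([0.2, 0.3, 0.25], "cat"), ([0.7, 0.9, 0.8], "dog")])
signs_head = train_head([([0.1, 0.9, 0.1], "left_turn"), ([0.5, 0.5, 0.6], "right_turn")])
```

Feeding a road-sign image to `pets_head` can only ever return "cat" or "dog", which is why the model from the first exercise could not serve the second one, even though both heads sit on the same backbone.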

Following the completion of the activities, a post-test survey was administered to evaluate whether the course had a learning effect on students’ AI understanding, AI literacy, and awareness of AI ethical issues (AI ethics).

In summary, the designed learning activities began with an introduction to the basic concepts of AI, including a presentation of several applications that allowed the students to think about the development of AI and the approaches that could be adopted to solve practical problems with current AI models. After this first session, the students usually had some questions about current AI technologies. The next two learning activities gave them the opportunity to explore these questions by training their own AI models to solve certain problems. From these experiments, they became aware of several basic features of AI. First, training an AI model is a data-driven learning process, which means that sufficient data must be provided to make the model accurate. Second, the current AI model is task specific; in other words, because an existing model can rarely solve a new problem directly, humans must design an appropriate algorithm that solves the problem with the help of the AI model. Finally, even when properly designed, AI models still have limitations and can make wrong decisions. Thus, deciding how to deal with such errors remains an important task for humans.

2.3. Instruments

2.3.1. AI Understanding Scale (AI Understanding)

To measure learners’ levels of AI understanding, eight question items were designed and then revised following comments from three experts in relevant fields (two professors of computer science and one professor of education), all of whom agreed that the items were appropriate. During the course, these question items also served as knowledge points to ensure proper alignment with the lessons and learning activities. The AI understanding survey used a five-point Likert-type scale, with 1 corresponding to “strongly disagree” and 5 corresponding to “strongly agree.” This approach was taken because, like other areas of science, AI is in a constant state of development, and some statements about its current state might not hold in the future. Therefore, we used this set of questions to estimate the level of students’ current understanding of AI. The course instructors also used these questions as discussion topics during the course.

2.3.2. AI Literacy Scale

This study adapted an AI literacy scale developed by Lin et al. [45] to evaluate learners’ AI literacy. The scale used a five-point Likert-type format, with 1 corresponding to “strongly disagree” and 5 corresponding to “strongly agree.” To examine the construct validity of the scale, this study applied factor analysis. The Kaiser-Meyer-Olkin (KMO) value was 0.945 and Bartlett’s test of sphericity was significant (p < 0.001), suggesting that the dataset was suitable for factor analysis; the extracted factors explained 65.29% of the total variance. Two factors were extracted: (1) teamwork (4 items) and (2) attitude toward AI (8 items). The overall internal consistency reliability (Cronbach’s alpha) was 0.943, indicating good reliability. As illustrated in Figure 1, the pre-test survey was administered before the first learning activity, whereas the post-test was administered at the end of the designed course. Table 2 lists some question items that correspond to different dimensions and learning activities.
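Cronbach’s alpha, the reliability coefficient reported for this scale, compares the sum of the individual item variances with the variance of respondents’ total scores: for $k$ items, $\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_i \sigma_i^2}{\sigma_T^2}\right)$. The following minimal sketch computes it from raw Likert responses; the input layout (one list of item scores per respondent) is an assumption, and the sample data in the usage lines are hypothetical, not the study’s responses.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores
    (e.g. 1-5 Likert ratings). Sample variances are used throughout; the ratio
    is the same if population variances are used consistently instead."""
    k = len(responses[0])
    if k < 2 or any(len(r) != k for r in responses):
        raise ValueError("need at least 2 items and the same number per respondent")
    item_columns = list(zip(*responses))                 # scores grouped by item
    item_var_sum = sum(variance(col) for col in item_columns)
    total_var = variance([sum(r) for r in responses])    # variance of total scores
    return k / (k - 1) * (1 - item_var_sum / total_var)


if __name__ == "__main__":
    # Hypothetical responses: 4 students x 3 items that are answered identically,
    # i.e. perfectly consistent items.
    print(cronbach_alpha([[1, 1, 1], [3, 3, 3], [5, 5, 5], [2, 2, 2]]))
```

When every item moves in lockstep, alpha reaches its maximum of 1; values around 0.7 are conventionally treated as acceptable, and values above 0.9, such as the 0.943 reported here, as high.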

Table 2.

Link between AI learning activities, question items, and dimensions.

2.3.3. AI Ethics Awareness Scale (AI Ethics)

To understand learners’ awareness of the ethical issues in AI, this study developed an AI Ethics Awareness Scale with reference to the findings of Jobin et al. [3,4], using a five-point Likert-type scale, with 1 corresponding to “strongly disagree” and 5 corresponding to “strongly agree.” The scale contained 15 questions covering four dimensions: transparency (4 items), responsibility (3 items), justice (4 items), and benefit (4 items). The reliability (Cronbach’s alpha) of the overall scale exceeded 0.7, indicating acceptable reliability. Table 3 lists some examples of question items.
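Scoring a multi-dimensional Likert scale of this kind usually means averaging each respondent’s answers within each dimension. The sketch below assumes, purely for illustration, that the 15 items are ordered by dimension (the 4 transparency items first, then 3 responsibility, 4 justice, and 4 benefit items); the actual item order of the scale is not given here, and averaging is one common scoring choice rather than the study’s documented procedure.

```python
# Assumed item order: dimensions listed in sequence with their item counts.
DIMENSIONS = {"transparency": 4, "responsibility": 3, "justice": 4, "benefit": 4}


def subscale_means(answers):
    """Average one respondent's 1-5 answers within each AI ethics dimension."""
    expected = sum(DIMENSIONS.values())  # 15 items in total
    if len(answers) != expected:
        raise ValueError(f"expected {expected} answers, got {len(answers)}")
    scores, start = {}, 0
    for name, n_items in DIMENSIONS.items():
        block = answers[start:start + n_items]
        scores[name] = sum(block) / n_items
        start += n_items
    return scores


if __name__ == "__main__":
    # Hypothetical answers from one respondent.
    print(subscale_means([5, 4, 5, 4, 3, 3, 4, 4, 4, 5, 3, 2, 3, 2, 3]))
```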

Table 3.

Examples of question items within the four dimensions of AI ethics.

3. Results

The analyses undertaken to respond to each of the research questions raised in this work are presented below.

- Does the present situated-learning-based course have an effect on students’ understanding of AI, AI teamwork, and attitudes toward AI?

To ascertain the levels of AI understanding among learners following their participation in the designed AI course, a paired-samples t-test was applied. The comparison between the pre-test and post-test levels (see Table 4) showed that students’ mean AI understanding score increased from 4.02 to 4.13, with standard deviations of 0.60 and 0.62, respectively. The t-value was 2.99 (p = 0.003 < 0.01), indicating a significant difference between the pre-test and post-test scores. These results confirm that students’ levels of AI understanding improved significantly after the course. Hence, we can infer that the designed AI course can help enhance students’ understanding of AI. In addition, students’ performance in the two dimensions of teamwork and attitude toward AI also improved after the course (see Table 4). Previous studies have pointed out that hands-on activities are an important element that can effectively enhance students’ active learning and increase their learning effectiveness [46,47]. The experimental results in this study confirm this viewpoint, showing that students’ understanding of AI was improved through hands-on activities.
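A paired-samples t-test works on each student’s pre-to-post difference: $t = \bar{d} / (s_d / \sqrt{n})$ with $n - 1$ degrees of freedom, where $\bar{d}$ and $s_d$ are the mean and standard deviation of the differences. The minimal sketch below computes the statistic with the Python standard library; the input lists are hypothetical and are not the study’s data.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(pre, post):
    """Paired-samples t statistic and degrees of freedom for pre/post scores
    from the same students (pre[i] and post[i] belong to student i)."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need two equal-length lists with at least 2 students")
    diffs = [b - a for a, b in zip(pre, post)]
    sd = stdev(diffs)
    if sd == 0:
        raise ValueError("all differences are identical; t is undefined")
    t = mean(diffs) / (sd / sqrt(len(diffs)))
    return t, len(diffs) - 1


if __name__ == "__main__":
    # Hypothetical mean scores for four students before and after a course.
    print(paired_t([3.5, 4.0, 4.2, 3.8], [3.9, 4.1, 4.4, 4.0]))
```

Pairing matters because the same students answered both surveys: testing the differences removes between-student variation that an independent-samples test would leave in. If SciPy is available, `scipy.stats.ttest_rel(post, pre)` returns the same statistic together with a p-value.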

Table 4.

Results of the paired t-test analysis of students’ understanding of AI.

This study found that combining hands-on activities and group work can help non-engineering students enhance their perceptions of AI issues and strengthen their awareness of interdisciplinary collaborative learning. In completing AI-related tasks through group work, learners have the opportunity to recognize that cooperation is an important approach to such tasks, an awareness that is a crucial element of AI literacy. This finding echoes that of a previous study [48], which showed that non-engineering students’ perceptions of AI understanding, AI teamwork, and attitude toward AI can be positively enhanced through situated learning.

From the analysis of differences in AI understanding and AI literacy among students in different faculties, we found that the scores of students in the Business School improved significantly on both dimensions after the AI course. For students in the Design School and the School of Humanities and Education, the mean scores on these two dimensions also rose, but the improvement did not reach statistical significance. In other words, the gains for students in these faculties could not be confirmed statistically with the present data.

To determine whether AI understanding and AI literacy differed among students from different faculties after the course, an analysis of covariance (ANCOVA) was performed. Although students from the Business School had the highest scores among the four faculties in both AI understanding and AI literacy (see Table 5), the ANCOVA detected no significant difference among faculties on either measure (Table 6 and Table 7).
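The text does not state which covariate the ANCOVA used. A common choice in pre/post designs is the pre-test score, so the Python sketch below assumes that and compares faculties on post-test scores adjusted for pre-test scores. It uses the classical one-way ANCOVA formulas with a single covariate and a common regression slope across groups (the homogeneity-of-slopes assumption); the function names and toy data are hypothetical. The p-value for the resulting F can be read from an F distribution with (df_between, df_within) degrees of freedom, for example via `scipy.stats.f.sf(F, df_between, df_within)`.

```python
from statistics import mean


def _sums(xs, ys):
    """Sums of squares and cross-products about the means of xs and ys."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    syy = sum((y - my) ** 2 for y in ys)
    return sxx, sxy, syy


def one_way_ancova(groups):
    """F test for group differences in post-test scores, adjusted for pre-test.

    ASSUMPTION: the pre-test score is the covariate. groups maps a
    (hypothetical) faculty label to a list of (pre, post) pairs.
    Returns (F, df_between, df_within).
    """
    pre = [p for pairs in groups.values() for p, _ in pairs]
    post = [q for pairs in groups.values() for _, q in pairs]
    n, k = len(pre), len(groups)
    # Reduced model: one regression line for all students (faculty ignored).
    txx, txy, tyy = _sums(pre, post)
    ss_total_adj = tyy - txy ** 2 / txx
    # Full model: separate faculty intercepts, one pooled (common) slope.
    exx = exy = eyy = 0.0
    for pairs in groups.values():
        sxx, sxy, syy = _sums([p for p, _ in pairs], [q for _, q in pairs])
        exx, exy, eyy = exx + sxx, exy + sxy, eyy + syy
    ss_within_adj = eyy - exy ** 2 / exx
    # Extra variation explained by faculty membership after adjusting for pre-test.
    ss_between_adj = ss_total_adj - ss_within_adj
    df_between, df_within = k - 1, n - k - 1
    f = (ss_between_adj / df_between) / (ss_within_adj / df_within)
    return f, df_between, df_within


if __name__ == "__main__":
    # Toy (pre, post) pairs, not study data.
    groups = {
        "faculty_a": [(1, 2), (2, 3), (3, 5)],
        "faculty_b": [(1, 5), (2, 7), (3, 7)],
    }
    f, df1, df2 = one_way_ancova(groups)
    print(f"F({df1}, {df2}) = {f:.2f}")
```

With very small or unequal groups, as noted for some faculties in this study, df_within is small and large adjusted differences are needed before F becomes significant.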

Table 5.

Students’ performance in understanding of AI and AI literacy among different faculties.

Table 6.

Comparison of students’ performance in understanding AI among different faculties.

Table 7.

Comparison of students’ performance in AI literacy among different faculties.

- Does the present AI course enhance students’ awareness of AI ethical issues?

In view of the ethical issues that the maturation of AI technology raises for the broader society, this study used the question items of the AI Awareness Scale to estimate students’ awareness of the ethical issues in AI use. The results of the paired t-test showed that this awareness increased significantly after participation in the AI course (see Table 8). This finding suggests that the course activities helped students pay more attention to the ethical issues they should consider when applying AI technology in daily life.

Table 8.

Results of the paired t-test on students’ awareness of AI ethical issues.

The preceding analyses showed that students’ AI understanding, AI teamwork, attitude toward AI, and awareness of AI ethics were enhanced through the designed lessons. To establish the relationships among these dimensions, a Pearson correlation analysis was performed; the results are presented in Table 9. Awareness of AI ethical issues correlates significantly and positively with all three factors, namely AI understanding, teamwork, and attitude toward AI: students who scored higher on these factors also tended to report greater awareness of AI ethical issues.
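Pearson's r between two score lists is their covariance divided by the product of their standard deviations, and its significance is usually tested with t = r * sqrt((n - 2) / (1 - r^2)) on n - 2 degrees of freedom. The Python sketch below computes all pairwise coefficients among the four measures; the dictionary keys and toy scores are hypothetical, not the study's data. On Python 3.10 or later, `statistics.correlation` returns the same coefficient as `pearson_r`.

```python
from itertools import combinations
from math import sqrt
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equally long score lists."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def r_to_t(r, n):
    """t statistic (df = n - 2) for testing whether r differs from zero."""
    return r * sqrt((n - 2) / (1 - r * r))


def correlation_table(scores):
    """Pairwise Pearson r for a dict mapping measure name -> per-student scores."""
    return {(a, b): pearson_r(scores[a], scores[b]) for a, b in combinations(scores, 2)}


if __name__ == "__main__":
    # Toy scores for five students; the labels are hypothetical, not study data.
    scores = {
        "understanding": [3.8, 4.0, 4.2, 4.5, 4.1],
        "teamwork": [3.9, 4.1, 4.0, 4.6, 4.2],
        "attitude": [3.7, 4.2, 4.1, 4.4, 4.0],
        "ethics": [3.9, 4.1, 4.3, 4.6, 4.1],
    }
    for (a, b), r in correlation_table(scores).items():
        print(f"{a} vs {b}: r = {r:.2f}")
```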

Table 9.

Correlation between students’ AI understanding, AI teamwork, attitude toward AI, and awareness of AI ethical issues.

To identify the factors that predict students’ awareness of AI ethics, a regression analysis was performed. Students’ understanding of AI and their attitude toward AI together explained 71% of the variance in their awareness of AI ethical issues (Table 10). Both understanding of AI (Beta = 0.51, t = 12.79, p < 0.001) and attitude toward AI (Beta = 0.51, t = 10.37, p < 0.001) were significant predictors of awareness of AI ethical issues (see Table 11). That is, the higher a student’s AI understanding and attitude toward AI, the higher their awareness of AI ethical issues tends to be.
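With two predictors, the standardized regression coefficients (Beta), R², and the t statistic for each Beta can be computed directly from the three pairwise correlations. The Python sketch below assumes an ordinary least-squares model containing exactly the two predictors named above; the text does not state the estimation procedure (for example, whether a stepwise method was used), and the function names and toy data are hypothetical.

```python
from math import sqrt
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equally long score lists."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def two_predictor_betas(x1, x2, y):
    """Standardized OLS coefficients and R^2 for y regressed on x1 and x2."""
    r1y, r2y, r12 = pearson_r(x1, y), pearson_r(x2, y), pearson_r(x1, x2)
    denom = 1 - r12 ** 2  # zero if the two predictors are perfectly collinear
    beta1 = (r1y - r2y * r12) / denom
    beta2 = (r2y - r1y * r12) / denom
    r_squared = beta1 * r1y + beta2 * r2y  # share of variance in y explained
    return beta1, beta2, r_squared


def beta_t(beta, r_squared, r12, n):
    """t statistic (df = n - 3) for one standardized coefficient in a 2-predictor model."""
    se = sqrt((1 - r_squared) / ((n - 3) * (1 - r12 ** 2)))
    return beta / se


if __name__ == "__main__":
    # Toy scores, not study data: x1 = understanding, x2 = attitude, y = ethics awareness.
    x1 = [3.8, 4.0, 4.2, 4.5, 4.1, 3.9]
    x2 = [3.7, 4.2, 4.1, 4.4, 4.0, 3.6]
    y = [3.9, 4.1, 4.3, 4.6, 4.0, 3.8]
    b1, b2, r2 = two_predictor_betas(x1, x2, y)
    r12 = pearson_r(x1, x2)
    print(f"R^2 = {r2:.2f}, Beta1 = {b1:.2f} (t = {beta_t(b1, r2, r12, len(y)):.2f})")
```

Because both predictors share the same R² and predictor intercorrelation, their standard errors are identical in this two-predictor case, so differences in t come only from differences in Beta.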

Table 10.

Regression model summary.

Table 11.

Results of the regression analysis regarding students’ understanding of AI, AI literacy, and awareness of AI ethical issues.

The ethical issues of AI have received increasing attention in recent years, and this study finds that students’ AI understanding, teamwork, and attitude toward AI in a situated learning environment are significantly and positively correlated with their awareness of AI ethical issues. Furthermore, the results in Table 11 show that two of these factors, AI understanding and attitude toward AI, predict learners’ awareness of AI ethical issues.

4. Discussion and Conclusions

This study proposes a set of situated-learning-based course modules, in the form of lectures, case discussions, and hands-on activities, for students with non-engineering backgrounds to learn about AI. The findings show that the course effectively improved students’ AI understanding, AI teamwork, and attitudes toward AI. Moreover, their awareness of AI ethics correlates with these factors and could be effectively predicted by their AI understanding and attitude toward AI. Teamwork, however, was not an effective predictor. A possible reason is that cross-domain collaboration was not built into the design of the learning process, so the non-engineering students did not seek to collaborate in an “interdisciplinary teamwork” format when they encountered dilemmas regarding ethical issues. A future improvement to our AI lessons could therefore be a situated learning design that includes problem scenarios in which students practice cross-domain collaboration to solve AI-related issues.

We had hoped to assess students’ ability to define and discuss the problems they encountered with their AI car kit. In the future, it would be useful to create learning modules with different levels of difficulty in computer programming (in the present course, students did not write code themselves during lessons and activities), or modules tailored to students with different levels of “AI understanding” or different “AI attitudes.” We also found that students without an engineering background (and even some with such a background) enrolled in the general education course with different mindsets and for a variety of purposes. These are further, if minor, factors that could have influenced the results of this study.

This study examined the effects of the situated-learning-based AI course on students’ understanding, teamwork, and attitudes toward AI, as well as on their awareness of ethical issues in AI. When teaching AI, instructors normally place great emphasis on students’ engagement in AI tasks, their motivation to learn related content, and their learning performance. Whether using AI ethics as a set of instructional objectives in a situated learning scenario enhances the outcomes of AI learning would be a valuable line of inquiry for future research. Such a study would give a deeper understanding of the relationship between AI ethics and the cultivation of ethical awareness during students’ AI learning. General education is one appropriate way to cultivate students’ ethical awareness. For non-engineering students, it serves as a medium for exposure to important modern scientific issues such as AI. The current study expands the scope and purpose of existing introductory science courses in general education by including the subject of AI, thereby laying an empirical foundation for future suggestions regarding the instructional design of AI courses.

Moreover, this study compared the learning performance of students from different faculties. The mean scores for AI understanding and AI literacy were highest for students from the Business School, followed by those from the School of Humanities and Education and then the Design School, with the lowest scores for students from the School of Law. Although the mean scores differed somewhat across faculties, the covariance analysis did not show any significant difference in performance. One possible reason is the uneven distribution of students across the faculties covered by this study, with few students in some faculties (e.g., the School of Law and the Design School), which makes it harder to detect statistical differences in performance among faculties. Future research should therefore collect larger samples from different faculties to permit a broader understanding of differences in learning among them.

Finally, this study used quantitative data to examine the effects of the course on students’ AI learning. To achieve a clearer understanding of participants’ learning processes in AI courses, it would be more appropriate to apply learning process analysis techniques and to balance the distribution of participants across faculties. In addition, students from the Business School may have been exposed to AI in their business courses, such as Financial Technology (FinTech), in which AI is used to predict investment revenues. Hence, data from interviews with students about such prior exposure could help draw valid conclusions regarding the findings of this study.

Author Contributions

Conceptualization, C.-C.Y.; data curation, C.-H.L., P.-K.S., L.Y.W. and C.-C.Y.; formal analysis, C.-H.L., P.-K.S., L.Y.W. and C.-C.Y.; funding acquisition, L.Y.W. and C.-C.Y.; investigation, C.-H.L., P.-K.S., L.Y.W. and C.-C.Y.; methodology, C.-H.L., P.-K.S., L.Y.W. and C.-C.Y.; supervision, C.-C.Y. All authors have read and agreed to the published version of the manuscript.

Funding

This research was partially funded by the Ministry of Science and Technology, Taiwan (R.O.C.) and the Ministry of Education, Taiwan (R.O.C.) under grant nos. MOST 108-2511-H-033-003-MY2, MOST 108-2745-8-033-003-, MOST 109-2221-E-033-033-, and PGE1090426.

Data Availability Statement

In view of the policy governing internal institutional research funding, only partial data sharing is possible. Readers who wish to acquire the data used for this study should contact the authors.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Chiu, T.K.; Chai, C.-S. Sustainable Curriculum Planning for Artificial Intelligence Education: A Self-Determination Theory Perspective. Sustainability 2020, 12, 5568.

2. Goel, A. Editorial: AI Education for the World. AI Mag. 2017, 38, 3.

3. Hagendorff, T. The Ethics of AI Ethics: An Evaluation of Guidelines. Minds Mach. 2020, 30, 99–120.

4. Jobin, A.; Ienca, M.; Vayena, E. The global landscape of AI ethics guidelines. Nat. Mach. Intell. 2019, 1, 389–399.

5. Johnson, D.G. Can Engineering Ethics Be Taught; The Bridge: Malmö, Sweden, 2017; pp. 59–64.

6. King, T.C.; Aggarwal, N.; Taddeo, M.; Floridi, L. Artificial intelligence crime: An interdisciplinary analysis of foreseeable threats and solutions. Sci. Eng. Ethics 2020, 26, 89–120.

7. Leonelli, S. Locating ethics in data science: Responsibility and accountability in global and distributed knowledge production systems. Philos. Trans. R. Soc. A Math. Phys. Eng. Sci. 2016, 374, 20160122.

8. Pekka, A.-P.; Bauer, W.; Bergmann, U.; Bieliková, M.; Bonefeld-Dahl, C.; Bonnet, Y.; Bouarfa, L. The European Commission’s high-level expert group on artificial intelligence: Ethics guidelines for trustworthy AI. In Working Document for Stakeholders’ Consultation; Publications Office of the EU: Luxembourg, 2018; pp. 1–37.

9. Rosenberg, S. Why AI Is Still Waiting for Its Ethics Transplant. 2017. Available online: https://www.wired.com/story/why-ai-is-still-waiting-for-its-ethics-transplant/ (accessed on 11 September 2017).

10. Yang, X. Accelerated Move for AI Education in China. ECNU Rev. Educ. 2019, 2, 347–352.

11. Pedró, F.; Subosa, M.; Rivas, A.; Valverde, P. Artificial intelligence in education: Challenges and opportunities for sustainable development. In The Global Education 2030 Agenda; UNESCO Education Sector: Paris, France, 2019.

12. Katehi, L.; Pearson, G.; Feder, M. Engineering in K-12 Education Committee on K-12 Engineering Education; National Academy of Engineering and National Research Council of the National Academies: Washington, DC, USA, 2009; pp. 1–14.

13. Hu, C.-C.; Yeh, H.-C.; Chen, N.-S. Enhancing STEM competence by making electronic musical pencil for non-engineering students. Comput. Educ. 2020, 150, 103840.

14. Lau, C.; Lo, K.; Chan, S.; Ngai, G. From zero to one: Integrating engineering and non-engineering students in a service-learning engineering project. In Proceedings of the International Service-Learning Conference, Washington, DC, USA, 23–25 October 2016.

15. Lo, K.W.K.; Lau, C.K.; Chan, S.C.F.; Ngai, G. When non-engineering students work on an international service-learning engineering project—A case study. In Proceedings of the 2017 IEEE Global Humanitarian Technology Conference (GHTC), San Jose, CA, USA, 19–22 October 2017; pp. 1–7.

16. Pan, J.D.; Pan, H.M. Accomplishments of the Holistic Education Idea: An Example from Chung Yuan Christian University. Chung Yuan J. 2005, 33, 237–251.

17. Kirk-Kuwaye, M.; Sano-Franchini, D. Why do I have to take this course? How academic advisers can help students find personal meaning and purpose in general education. J. Gen. Educ. 2015, 64, 99–105.

18. Pintrich, P.R.; de Groot, E.V. Motivational and self-regulated learning components of classroom academic performance. J. Educ. Psychol. 1990, 82, 33–40.

19. DeBoer, G.E. Scientific literacy: Another look at its historical and contemporary meanings and its relationship to science education reform. J. Res. Sci. Teach. 2000, 37, 582–601.

20. Abd-El-Khalick, F.; Bell, R.L.; Lederman, N.G. The nature of science and instructional practice: Making the unnatural natural. Sci. Educ. 1998, 82, 417–436.

21. Glynn, S.M.; Aultman, L.P.; Owens, A.M. Motivation to learn in general education programs. J. Gen. Educ. 2005, 54, 150–170.

22. Liou, H.H.; Yang, S.J.; Chen, S.Y.; Tarng, W. The Influences of the 2D image-based augmented reality and virtual reality on student learning. J. Educ. Technol. Soc. 2017, 20, 110–121.

23. Huang, C.J. A study of using science news as general education program teaching materials. Nanhua Gen. Educ. Res. 2005, 2, 59–83.

24. Ongsulee, P. Artificial intelligence, machine learning and deep learning. In Proceedings of the 2017 15th International Conference on ICT and Knowledge Engineering (ICT&KE), Bangkok, Thailand, 22–24 November 2017; pp. 1–6.

25. LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

26. Goldsmith, J.; Burton, E. Why teaching ethics to AI practitioners is important. In Proceedings of the 31st AAAI Conference on Artificial Intelligence, San Francisco, CA, USA, 4–9 February 2017; pp. 4836–4840.

27. Burton, E.; Goldsmith, J.; Koenig, S.; Kuipers, B.; Mattei, N.; Walsh, T. Ethical Considerations in Artificial Intelligence Courses. AI Mag. 2017, 38, 22–34.

28. Maienschein, J. Scientific Literacy. Science 1998, 281, 917.

29. Hung, H.; Yang, J.; Tsai, Y. Student Game Design as a Literacy Practice: A 10-Year Review. J. Educ. Technol. Soc. 2020, 23, 50–63.

30. Konishi, Y. What is Needed for AI Literacy? In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems, New York, NY, USA, 25–30 April 2020.

31. Brown, J.S.; Duguid, P. Structure and Spontaneity: Knowledge and Organization. In Managing Industrial Knowledge: Creation, Transfer and Utilization; Sage: Thousand Oaks, CA, USA, 2012; pp. 44–67.

32. McLellan, H. Situated Learning Perspectives; Educational Technology: New York, NY, USA, 1996.

33. Brown, J.S.; Collins, A.; Duguid, P. Situated cognition and the culture of learning. Educ. Res. 1989, 18, 32–42.

34. Cleveland, B.W. Engaging Spaces: Innovative Learning Environments, Pedagogies and Student Engagement in the Middle Years of School; University of Melbourne: Melbourne, VC, Australia, 2011.

35. Goel, L.; Johnson, N.; Junglas, I.; Ives, B. Situated Learning: Conceptualization and Measurement. Decis. Sci. J. Innov. Educ. 2010, 8, 215–240.

36. Winn, W. Instructional design and situated learning: Paradox or partnership? Educ. Technol. 1993, 33, 16–21.

37. Anderson, J.R.; Reder, L.M.; Simon, H.A. Situated learning and education. Educ. Res. 1996, 25, 5–11.

38. Herrington, J.; Oliver, R. An instructional design framework for authentic learning environments. Educ. Technol. Res. Dev. 2000, 48, 23–48.

39. Sadler, T.D. Situated learning in science education: Socio-scientific issues as contexts for practice. Stud. Sci. Educ. 2009, 45, 1–42.

40. Cope, P.; Cuthbertson, P.; Stoddart, B. Situated learning in the practice placement. J. Adv. Nurs. 2000, 31, 850–856.

41. Artemeva, N.; Rachul, C.; O’Brien, B.; Varpio, L. Situated Learning in Medical Education. Acad. Med. 2017, 92, 134.

42. Comas-Quinn, A.; Mardomingo, R.; Valentine, C. Mobile blogs in language learning: Making the most of informal and situated learning opportunities. ReCALL 2009, 21, 96–112.

43. Ben-Ari, M. Situated Learning in Computer Science Education. Comput. Sci. Educ. 2004, 14, 85–100.

44. Zhang, K.; Cheng, H.D.; Zhang, B. Unified Approach to Pavement Crack and Sealed Crack Detection Using Preclassification Based on Transfer Learning. J. Comput. Civ. Eng. 2018, 32, 04018001.

45. Lin, C.H.; Wu, L.Y.; Wang, W.C.; Wu, P.L.; Cheng, S.Y. Development and validation of an instrument for AI-Literacy. In Proceedings of the 3rd Eurasian Conference on Educational Innovation (ECEI 2020), Ha Long Bay, Vietnam, 5–7 February 2020.

46. Yannier, N.; Hudson, S.E.; Koedinger, K.R. Active Learning is About More Than Hands-On: A Mixed-Reality AI System to Support STEM Education. Int. J. Artif. Intell. Educ. 2020, 30, 74–96.

47. Mater, N.R.; Hussein, M.J.H.; Salha, S.H.; Draidi, F.R.; Shaqour, A.Z.; Qatanani, N.; Affouneh, S. The effect of the integration of STEM on critical thinking and technology acceptance model. Educ. Stud. 2020, 1–17.

48. Hurson, A.R.; Sedigh, S.; Miller, L.; Shirazi, B. Enriching STEM education through personalization and teaching collaboration. In Proceedings of the 2011 IEEE International Conference on Pervasive Computing and Communications Workshops (PERCOM Workshops), Washington, DC, USA, 21–25 March 2011; pp. 543–549.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).