Abstract

Thinking skills are essential to achieve sustainable social development. Nonetheless, there is no specific instrument that assesses all of these skills as a whole. The present study aimed to design and validate a scale to assess complex thinking skills in adults. A 22-item scale with five response levels was created to assess the following aspects: analysis and problem solving, critical analysis, metacognition, systemic analysis, and creativity. This scale was validated with 626 university students from Peru. In total, 16 experts in the field helped to determine the content validity of the scale (Aiken’s V values higher than 0.8). The confirmatory factor analysis allowed the evaluation of the structure of the five factors theoretically proposed, and the goodness of fit indexes were satisfactory. One item was eliminated during the process, leaving a final scale of 21 items. The composite reliability for the different factors ranged between 0.794 and 0.867. The invariance between genders was also checked and the convergent validity was verified. The study concludes that the content validity, construct validity, convergent validity, and composite reliability levels of the COMPLEX-21 scale are appropriate.

1. Introduction

The development of complex thinking in citizens and communities is essential to achieve sustainable social development. An Australian study found that higher levels of complex thinking are positively associated with the prevention of fires in the community through better communication processes and more stable and less extreme attitudes [1]. The association between complex thinking and the development of math and natural sciences has also been established [2]. Several studies have assessed, from a complex point of view, the improvement in the medical treatment of patients with diseases such as HIV [3], and complex thinking has been proposed as a reference point to better understand diabetes [4]. Complex thinking also allows a better comprehension of the relationship between education and knowledge exchange [5]. Research carried out in the field of education based on complex thinking has improved student learning by helping students to develop skills for using computational programs [6].

In the last decades, progress in the field of intellectual or thinking skills [7] has allowed the design and validation of scales to assess these skills, with a special focus on critical thinking, creativity, metacognition, and problem solving, among others. Some of these instruments are general, meant to assess several skills at the same time (for example, the scale of Hanlon et al. [8] or the instrument of Peeters et al. [9]). Others are highly specific and aimed at assessing certain thinking skills, such as the scale of Tran et al. [10], which is focused on the assessment of creativity in lessons for students, or the A-E scale of Martinsen and Furnham [11] to assess aspects of motivation and problem solving. However, the number of instruments focused on the complex thinking construct itself within the socioformative framework is remarkably low or non-existent. No scales aimed at assessing the complex thinking construct are currently found on SCOPUS or Web of Science using the search equation: TITLE-ABS-KEY ((scale OR questionnaire OR rubric) AND (“complex thinking”) AND (validity OR reliability OR relevance)).

Thus, the aims of this study were: (1) to design a brief scale to assess the essential skills of complex thinking in one instrument by considering a socioformative approach and the suggestions of a group of experts in the field; (2) to test the content validity of the scale with the support of a group of experts from several institutions who assessed the appropriateness and writing of the instrument and their satisfaction with it; and (3) to determine the construct and convergent validity, as well as the reliability, of the new scale.

2. Literature Review

2.1. Complex Thinking as an Epistemological Approach

Complex thinking has several definitions, which need to be explained in any study of this kind. For Morin [12], who represents the most epistemological line, complex thinking consists of the study of social and environmental events in a continuous organization and reorganization process resulting from the connection between different elements and economic, political, sociological, and mythological dimensions [13]. To this author, what makes something complex is the network of elements and processes [13], not whether it is complicated or difficult [14]. Nonetheless, to understand the world in its totality, a complex mind is required because, otherwise, we could easily make assumptions about reality in a rigid, one-dimensional, linear, and separated way.

A well-organized or complex mind [15] is made up of certain skills such as contextualization, systemic analysis, flexibility, connection, and confrontation of uncertainty. These allow the human being to move away from one-dimensionality, linearity, reductionism, or unwillingness to change, which are typical characteristics of simplistic thinking.

2.2. Complex Thinking as Higher-Order Thinking

Another branch in the study of complex thinking is established by the philosophy of cognitive science and the contributions of Lipman [16], who defines it as higher-order thinking that connects critical and creative thinking with problem solving or addressing situations. Critical thinking, as Lipman understands it, refers to critical reasoning based on arguments, whereas creative thinking refers to the generation of ideas or actions in the non-discursive field. In daily life, both kinds of thinking have elements in common and must connect and complement each other. Studies in this field have found a relationship between the critical and creative processes [17,18,19,20]. This is supported by several neuroscience studies that show an interaction between certain cerebral areas that allow both kinds of thinking in human beings [21,22,23,24]. Simple thinking, unlike complex thinking, and according to Lipman, is mechanical and routine; it responds to algorithms and fails to connect different skills [15].

2.3. Complex Thinking as a Macro-Competence

Another branch in the study of complex thinking understands it as a multidimensional macro-competence consisting of other competencies or skills [25]. These competencies can be defined as “effective behaviors and skills to achieve or carry out successful projects in the future and which allow self-sustainable growth and a more equal development” [26] (p. 233). These competencies have been suggested as “a dynamic combination of knowledge, comprehension, skills, and capabilities” [27] (p. 3).

Recently, the consideration of complex thinking has changed the definition of competence [28]. For this reason, complex thinking has been included in the definition of competence, as can be observed in the approach of Cuadra-Martínez et al. [29], who define complex thinking as a “competence to develop, in which the student and future worker makes a non-naïve “use” (conscious, explicit and reflexive) of his theories by differentiating or connecting them when needed and according to the professional context” [29] (p. 25). Nonetheless, universities continue to teach a set of independent and unrelated skills. This can be easily observed in the implementation of the Tuning Educational Structures in Europe and Alfa Tuning Latin America Projects, which aim to equalize higher education in Europe and Latin America, respectively. In them, the competence of complex thinking is dispersed in a wide set of generic competencies such as the capacity for abstraction, analysis, and synthesis; critical and self-critical ability; the ability to act properly in new situations; creative ability; the ability to detect, deal with, and solve problems; and decision-making ability [30,31].

2.4. Complex Thinking as a Comprehensive Performance

The last line of research is that of socioformation, a curricular, didactic, and assessable approach that aims at educating citizens to face the future challenges of sustainable social development (DSS) [32,33]. Sustainable social development consists of a process in which the members of society enjoy better living conditions, allowing them, in turn, to prosper by means of economic welfare, collaborative work, inclusion, equity, health, and knowledge [34,35,36]. This in turn allows them to consider the construction of a new kind of society, the knowledge society [37]. The following features compose the DSS: (1) collaborative work is essential to creating communities that self-manage their development and consider the protection of the environment and biodiversity [38]; (2) problem solving is intended to promote science and technology to find renewable sources of energy and new means of production, construction, and transport [39]; (3) empowering the ethical project of life is intended to empower, in turn, the citizens to implement urgent actions for an improved quality of life and coexistence with each other and the environment [35]; and (4) people need to face the growing complexity of the new tasks arising from the emergence of artificial intelligence. To accomplish this, the development of certain skills such as creativity, innovation, and critical analysis needs to be promoted in people and communities [40].

Socioformation is a new educative, curricular, pedagogic, and assessable approach [41] to training citizens, organizations, and communities in sustainable social development. It entails implementing transversal and inclusive projects to overcome environmental challenges by means of ethical choices, collaborative work, entrepreneurship, knowledge co-creation, digital technology, and complex thinking. It is an alternative to other recent educative approaches such as social constructivism and meaningful learning, or even to connectivism. It is distinctive for its aim at the implementation of sustainability in the society, culture, economy, technology, environmental and educative processes.

In socioformation, complex thinking is an action aimed at solving contextual problems by connecting different kinds of knowledge, with creativity, critical thinking, systemic analysis, and metacognition, and by perceiving reality with flexibility, open-mindedness, and confrontation of uncertainty [42]. Socioformation features in the educative models of several Latin American universities, such as the Indo American University of Ecuador [43], the University Centre CIFE in Mexico [44], the National University Hermilio Valdizan in Peru [45], and the National University Federico Villarreal [46].

According to the socioformative approach, connecting critical analysis [47] with creativity is not enough to develop complex thinking. Three additional skills are necessary to direct the evaluation and development of complex thinking in the strict sense, namely, analysis and problem solving [48], metacognition, and systemic thinking [49]. The last of these focuses on the comprehension of contextual challenges and processes as dynamic systems demanding a multi-, inter-, and transdisciplinary approach.

2.5. Scales to Assess Complex Thinking

Several scales have been designed to assess some skills or dimensions of complex thinking; Table 1 shows some of the most recent ones. Some of the scales in Table 1 are general, meant to assess a wide range of skills, whereas others are more specific and focused on only one or two abilities. From these examples, the following conclusions can be drawn: (1) there are no scales aimed at assessing exclusively the complex thinking construct in itself; (2) there are no scales allowing the assessment of complex thinking together with the contributions of the socioformative approach; (3) most of the scales have more than 30 items, making them of limited practicality due to the excessive number of questions; and (4) systemic thinking is barely considered in the instruments designed up to now. Regarding this last conclusion, we focus now on the case of the Study Engagement Questionnaire (SEQ), designed by Kember and Leung [50] and adapted to the Spanish population by Gargallo et al. [51]. This scale assesses some of the dimensions of complex thinking such as critical thinking, creative thinking, self-managed learning, adaptability, and problem solving. Its purpose is to establish the elements involved in teaching and learning, but it does not account for systemic thinking or metacognition.

Table 1.

Recent scales to assess components of complex thinking.

3. Materials and Methods

3.1. Participants

In total, 626 university degree or pre-degree students from four public universities in Peru participated in the study. Of the participants, 64.1% were women and 35.9% were men. Their ages ranged between 16 and 33 years (M = 20.78, SD = 3.3). Additionally, 32.2% of the participants reported working alongside their university studies. The selection of the sample was non-probabilistic, by open call and email.

3.2. Procedure

An instrumental study was carried out around the design of a new instrument to evaluate complex thinking, based on the Likert scale. For this, four stages were carried out: (1) design and peer review of the instrument; (2) content validity analysis by expert judges; (3) study of construct validity by confirmatory factor analysis; and (4) analysis of the evidence of convergent and discriminant validity. The stages and participants are described below.

Stage 1. Design and peer review process. Based on the theoretical references described in the literature review (Morin’s epistemological approach, Lipman’s higher-order thinking, complex thinking as macro-competence, and the contributions of socioformation), the essential complex thinking skills that the new instrument had to address were identified. Some recent instruments on the subject were also analyzed (Table 1). Based on this, a draft of the instrument was made by the authors, which was later improved with the support of three experts in the area. The three experts had the following characteristics: (1) a doctorate in psychology, education, or social sciences; (2) more than 15 years of experience in the design and validation of instruments; and (3) at least five publications on cognitive processes.

Stage 2. Assessment of the content validity. After the scale was designed with the support of the experts, it was assessed by 16 judges with experience in the field of complex thinking. At this stage, every subscale was assessed with two indicators: appropriateness of the questions and clarity of the writing. A Likert-type scale with four levels from 1 to 4 (in which 1 is the lowest level and 4 the highest one) was used. The judges were also asked to make suggestions to improve the quality of each scale, such as adding or removing questions, improving the readability, and adding new scales.

Afterward, the 16 judges were asked to evaluate the instrument as a whole, with the same indicators of appropriateness and writing. At this stage, a third indicator was added, satisfaction, which was measured on a scale of 1 to 5 (from very low satisfaction (1) to high satisfaction (5)). To assess the judges’ level of agreement, Aiken’s V was used, and values higher than 0.8 were accepted [66]. All of the judges were previously contacted via email, through which they received all the necessary information regarding the purpose of the instrument and were asked to make suggestions to remove, add, or improve the questions. In selecting the judges, all were required to have provable experience in the field of complex thinking skills, to be researchers and university professors, to have at least one Master’s degree, to have published on the topic, and to have experience in the review or design of instruments of this nature. The features of the judges are described in Table 2.
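As an illustration of the agreement index used at this stage, the following minimal sketch computes Aiken’s V for a single item in its usual form, V = S / (n(c − 1)), where S is the sum of each rating minus the lowest possible rating, n is the number of judges, and c is the number of rating levels. The function name and the ratings are hypothetical and are not the study’s data.

```python
def aiken_v(ratings, lowest, highest):
    """Aiken's V for one item: sum(r - lowest) / (n * (highest - lowest))."""
    if highest <= lowest:
        raise ValueError("highest must be greater than lowest")
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < lowest or r > highest for r in ratings):
        raise ValueError("ratings must lie within [lowest, highest]")
    n = len(ratings)
    s = sum(r - lowest for r in ratings)
    return s / (n * (highest - lowest))


# Hypothetical ratings from 16 judges on the 1-4 appropriateness scale.
appropriateness = [4, 4, 3, 4, 4, 4, 3, 4, 4, 4, 4, 3, 4, 4, 4, 4]
v = aiken_v(appropriateness, lowest=1, highest=4)
print(f"Aiken's V = {v:.3f}; above the 0.8 cutoff: {v > 0.8}")
```

The same function could be applied to the satisfaction indicator by passing lowest=1 and highest=5.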

Table 2.

Data of the judges who participated in the content validity.

Stage 3. Construct validity. The whole sample of university students (n = 626) was used to carry out a confirmatory factor analysis with maximum likelihood estimation. This technique was used since there are theoretically five factors and the aim was to establish whether these factors were verified in the study. For this reason, two models were tested: the first one with four factors and the second one with five. Finally, reliability was estimated with Cronbach’s alpha [67] and the composite reliability coefficient [68]. In the same way, a factor invariance analysis by gender was carried out using a multigroup analysis. The confirmatory factor analysis was carried out in the AMOS 27 program.
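For reference, Cronbach’s alpha can be sketched from raw item scores as follows. This is only an illustrative implementation of the standard formula, alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores); the function name and the responses are hypothetical, and the study itself relied on dedicated statistical software.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """item_scores: one list per item, each holding the respondents' scores."""
    k = len(item_scores)
    if k < 2:
        raise ValueError("at least two items are required")
    n = len(item_scores[0])
    if any(len(item) != n for item in item_scores):
        raise ValueError("all items must have the same number of respondents")
    # Total score of each respondent across the k items.
    totals = [sum(responses) for responses in zip(*item_scores)]
    item_variance_sum = sum(variance(item) for item in item_scores)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Hypothetical responses of six students to three items (1-5 frequency scale).
items = [
    [4, 5, 3, 4, 2, 5],
    [4, 4, 3, 5, 2, 4],
    [5, 5, 2, 4, 3, 4],
]
print(f"alpha = {cronbach_alpha(items):.3f}")
```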

Stage 4. Convergent and discriminant validity. To assess the convergent validity of the model, that is to say, whether the constructs were effectively assessed by the instrument, three criteria were used. Firstly, the factor weight of the questions in their respective factors had to be higher than 0.50 [69]; secondly, the composite reliability index had to be higher than 0.70 [69]; and thirdly, the average variance extracted (AVE) had to be higher than 0.50 [70]. The sample of 626 students was used to determine the convergent validity. Additionally, the assessment of the discriminant validity was carried out with the same program, AMOS 27.
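The three convergent validity criteria can be illustrated with a short sketch that derives composite reliability (CR) and AVE from the standardized loadings of one factor, assuming uncorrelated errors so that each error variance is 1 − λ². The function names and the loadings are hypothetical and do not reproduce the values obtained in this study.

```python
def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    total = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)  # assumes standardized loadings
    return total ** 2 / (total ** 2 + error)


def average_variance_extracted(loadings):
    """AVE = mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def meets_convergent_criteria(loadings):
    """Loadings > 0.50, CR > 0.70, and AVE > 0.50, as listed in the text."""
    return (
        all(l > 0.50 for l in loadings)
        and composite_reliability(loadings) > 0.70
        and average_variance_extracted(loadings) > 0.50
    )


# Hypothetical standardized loadings for one four-item factor.
factor_loadings = [0.72, 0.78, 0.69, 0.75]
print(f"CR  = {composite_reliability(factor_loadings):.3f}")
print(f"AVE = {average_variance_extracted(factor_loadings):.3f}")
print("criteria met:", meets_convergent_criteria(factor_loadings))
```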

3.3. Ethical Aspects

The Mexican Law of Personal Data Protection was followed over the course of the research since the participants’ emails were requested in order to send them the instrument link. Additionally, all participants were informed of the aim of the study and each one signed an online consent letter. All participants were free to leave the process at any time and without consequences. After answering the questions, they were allowed to know the result of the research to benefit from the process and to implement improvements if needed. The study was approved by the Institutional Ethics Committee.

4. Results

4.1. Stage 1. Design and Peer Review Process

Table 3 describes the complex thinking skills chosen for the current study based on the review of scientific literature. A total of five complex thinking skills were chosen: problem solving, critical analysis, metacognition, systemic analysis, and creativity. Each of these skills was evaluated on a frequency scale from 1 to 5, in which 1 is “Never” and 5 “Always”. The Likert-type scale of 22 items is shown in Appendix A.

Table 3.

Skills assessed by the Complex Thinking Essential Skills Scale.

4.2. Stage 2. Content Validity

All 16 judges agreed on the suitable level of appropriateness and comprehension of the Complex Thinking Skills Scale regarding its five subscales and the instrument as a whole, as shown in Table 4. The Aiken’s V values were higher than 0.8 in the two aspects assessed on the five subscales. There was also agreement regarding the level of satisfaction with the global scale (V ≥ 0.8), thus supporting the content validity of the instrument [66] (Table 4). Some judges made suggestions to improve several aspects of the writing, and these were implemented prior to the application to the general sample.

Table 4.

Expert judgment results.

4.3. Stage 3. Construct Validity

Confirmatory factor analysis was used to validate the scale. The distribution of all items was close to normal, as assessed with the asymmetry (skewness) and kurtosis coefficients (Table 5) and the values proposed by Curran et al. [76]: the asymmetry and kurtosis coefficients were within the range of −1 to +1, and the sample had a normal distribution according to the Shapiro–Wilk test. With these data, it was possible to proceed with the factor analysis itself, and the suitability of maximum likelihood estimation was confirmed [77].
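The asymmetry and kurtosis screening described above can be sketched as follows. The sketch uses the plain moment-based estimators (statistical packages often apply small-sample corrections, so their output may differ slightly), and the function names and responses are hypothetical.

```python
def skewness_and_excess_kurtosis(values):
    """Moment-based skewness (m3 / m2^1.5) and excess kurtosis (m4 / m2^2 - 3)."""
    n = len(values)
    if n < 2:
        raise ValueError("at least two observations are required")
    mean = sum(values) / n
    m2 = sum((x - mean) ** 2 for x in values) / n
    if m2 == 0:
        raise ValueError("the values have zero variance")
    m3 = sum((x - mean) ** 3 for x in values) / n
    m4 = sum((x - mean) ** 4 for x in values) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


def within_normality_range(values, limit=1.0):
    """True when both coefficients fall between -limit and +limit."""
    skew, kurt = skewness_and_excess_kurtosis(values)
    return abs(skew) <= limit and abs(kurt) <= limit


# Hypothetical responses to one item on the 1-5 frequency scale.
responses = [3, 4, 4, 5, 3, 4, 2, 4, 5, 3, 4, 3, 4, 5, 2, 4]
skew, kurt = skewness_and_excess_kurtosis(responses)
print(f"skewness = {skew:.3f}, excess kurtosis = {kurt:.3f}")
print("within the -1 to +1 range:", within_normality_range(responses))
```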

Table 5.

Descriptive data of the sample.

The first step in the confirmatory factor analysis was to determine the factor weights, which are described in Table 6. All of the factor weights of the items were higher than 0.5, and hence are considered significant [69], except for item 6 (“Do you question the facts to find opportunity areas and to implement improvements?”) with a factor weight of only 0.425. For this reason, this item was eliminated and the confirmatory factor analysis was carried out again.

Table 6.

Factor loadings.

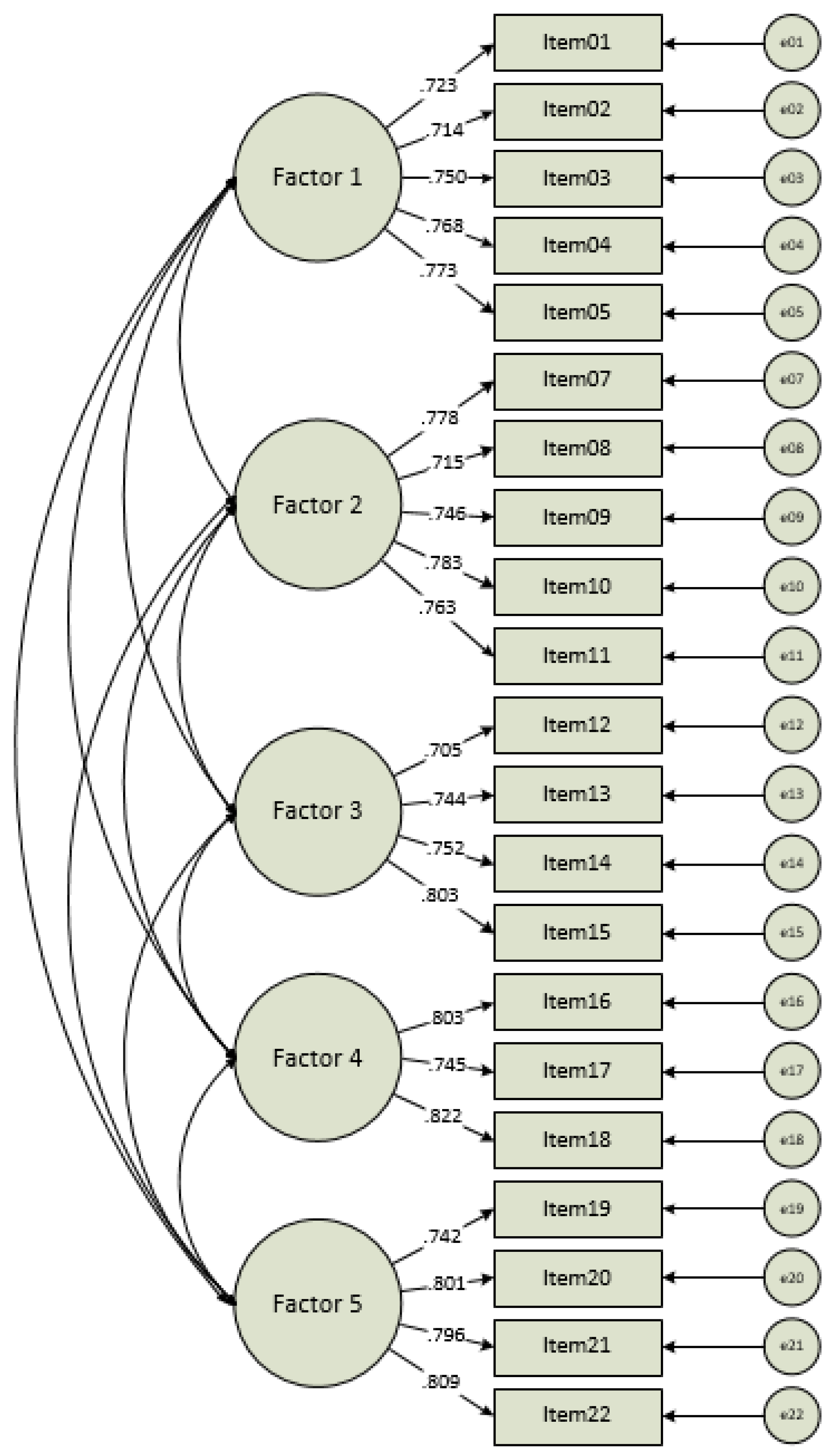

Next, the 5-factor model postulated in the instrument was tested. Table 7 shows the goodness of fit indexes; they were positive and confirmed the theoretical model proposed. Since there is a wide range of such indexes, the combination suggested by Hair et al. [69] was used: the reduced chi-square (χ²/df), the Tucker–Lewis index (TLI), the comparative fit index (CFI), and the root mean square error of approximation (RMSEA). Even using the strictest criteria [78], which require the TLI and CFI to be higher than 0.95 and the RMSEA lower than 0.06, the model still fits the data. It is important to point out that, even though the chi-square test was significant, this index is especially sensitive to sample size, unlike the TLI, for example, which makes it a less reliable index in this case [79] (Figure 1).
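To make the decision rule concrete, the sketch below computes the reduced chi-square and a common point estimate of the RMSEA, sqrt(max(χ² − df, 0) / (df(N − 1))), and compares the indexes against strict cutoffs. The input values are hypothetical (they are not those of Table 7), the cutoffs are passed as parameters, and some programs use N instead of N − 1 in the RMSEA formula.

```python
import math


def reduced_chi_square(chi2, df):
    """Chi-square divided by its degrees of freedom."""
    return chi2 / df


def rmsea(chi2, df, n):
    """Point estimate of the RMSEA for a sample of size n."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def meets_strict_cutoffs(tli, cfi, rmsea_value,
                         min_incremental=0.95, max_rmsea=0.06):
    """TLI and CFI above min_incremental and RMSEA below max_rmsea."""
    return tli > min_incremental and cfi > min_incremental and rmsea_value < max_rmsea


# Hypothetical fit results for a model estimated on 626 respondents.
chi2, df, n = 350.0, 179, 626
tli, cfi = 0.962, 0.968
estimate = rmsea(chi2, df, n)
print(f"chi2/df = {reduced_chi_square(chi2, df):.3f}, RMSEA = {estimate:.3f}")
print("strict cutoffs met:", meets_strict_cutoffs(tli, cfi, estimate))
```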

Table 7.

Fit measures.

Figure 1.

5-factor model of the Complex Thinking Essential Skills Scale. Factor 1 = problem solving; Factor 2 = critical analysis; Factor 3 = metacognition; Factor 4 = systemic analysis; and Factor 5 = creativity.

Finally, a multigroup analysis assessed the factor invariance of the instrument by gender. Following the most usual recommendations, configural, metric, and scalar invariance were estimated, while residual invariance was not tested due to its low practical value [80]. Using the method of Cheung and Rensvold [81], differences lower than 0.01 in the CFI index were not considered enough to reject the invariance hypothesis, and the differences between the RMSEA of the configural model and those of the metric and scalar models were not higher than 0.015 [82]. The results show no major differences between men and women (Table 8).
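The comparison between nested invariance models can be sketched as a simple check on the change in CFI and RMSEA relative to the configural model. The fit values below are hypothetical (they are not those of Table 8), and the default tolerances, 0.01 for the drop in CFI and 0.015 for the rise in RMSEA, are commonly cited cutoffs that are treated here as assumptions.

```python
def invariance_supported(cfi_base, cfi_constrained,
                         rmsea_base, rmsea_constrained,
                         max_cfi_drop=0.01, max_rmsea_rise=0.015):
    """True when the constrained model does not fit meaningfully worse."""
    cfi_drop = cfi_base - cfi_constrained
    rmsea_rise = rmsea_constrained - rmsea_base
    return cfi_drop < max_cfi_drop and rmsea_rise <= max_rmsea_rise


# Hypothetical multigroup results: (CFI, RMSEA) for each nested model.
configural = (0.962, 0.041)
constrained_models = {"metric": (0.960, 0.041), "scalar": (0.955, 0.043)}

for level, (cfi, rmsea_value) in constrained_models.items():
    supported = invariance_supported(configural[0], cfi, configural[1], rmsea_value)
    print(f"{level} invariance supported: {supported}")
```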

Table 8.

Factor invariance test.

4.4. Stage 4. Convergent Validity

Table 9 shows the results of the convergent validity. The average variance extracted was higher than 0.5 [83]. Additionally, the composite reliability was higher than 0.7, which is a positive indicator [84]. Thus, we conclude that the questions effectively assess the constructs established in each factor and demonstrate convergent validity.

Table 9.

Convergent validity of the model.

The results of the discriminant validity, which assesses whether the factors are clearly different from each other, were more complex. Discriminant validity was assessed using the method proposed by Fornell and Larcker [70], in which the square root of the average variance extracted of each factor must be higher than the correlations of that factor with the other factors. In this study, the correlation between the factors was high (see Figure 1) and, as could be expected, the correlation matrix showed values higher than the square root of the average variance extracted (Table 10). These results are mixed. However, this is not necessarily problematic, since the scale assesses related factors. Thus, its purpose is not to reach a specific conclusion about the differences between factors, but a conclusion about complex thinking practice itself.
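The Fornell and Larcker criterion described above can be sketched as a comparison between each inter-factor correlation and the square roots of the AVE of the two factors involved. The AVE values, correlations, and function name below are hypothetical and do not reproduce Table 10.

```python
import math


def fornell_larcker_violations(ave, correlations):
    """Return the factor pairs whose correlation reaches or exceeds sqrt(AVE).

    ave: dict mapping factor name to its AVE.
    correlations: dict mapping (factor_a, factor_b) to their correlation.
    """
    violations = []
    for (a, b), r in correlations.items():
        threshold = min(math.sqrt(ave[a]), math.sqrt(ave[b]))
        if abs(r) >= threshold:
            violations.append((a, b))
    return violations


# Hypothetical AVE values and inter-factor correlations for three factors.
ave = {"F1": 0.55, "F2": 0.60, "F3": 0.52}
correlations = {("F1", "F2"): 0.80, ("F1", "F3"): 0.50, ("F2", "F3"): 0.70}
print("pairs failing the criterion:", fornell_larcker_violations(ave, correlations))
```

An empty list would indicate that every factor shares more variance with its own items than with any other factor.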

Table 10.

Discriminant validity of the model.

5. Discussion

Complex thinking, in the socioformative approach, is a comprehensive performance that citizens and communities must carry out to achieve sustainable social development. This is the main challenge that humankind faces nowadays, and it encompasses other related challenges such as disease prevention and health promotion; quality of life and socioeconomic development; inclusive economy; housing; transport and education; and pollution prevention, mitigation of global warming, and biodiversity preservation. Therefore, developing this comprehensive performance would allow people to face the context and its problems with a systemic vision. One example is the current COVID-19 pandemic [85], which has highlighted the lack of training in complex thinking in many leaders and citizens. As a consequence, prevention efforts in many countries have not had the expected impact (see, for example, Roozenbeek et al. [86]). For this specific problem, the need to generate transdisciplinary actions has been suggested [87], which also applies to the climate crisis and other problems of humanity.

These are the reasons why the current research offers a new instrument to efficiently assess complex thinking skills in adults, named the Complex Thinking Essential Skills Scale (COMPLEX-21), and to better direct the educative actions of human talent. To this end, the analysis of a group of 16 experts in the field supported the content validity of the COMPLEX-21, with Aiken’s V values higher than 0.80 [66]. This means that this scale is a useful, appropriate, and understandable instrument for potential users.

Additionally, the construct validity of the instrument was demonstrated, since the five factors postulated at a theoretical level for complex thinking (problem solving, critical analysis, metacognition, systemic analysis, and creativity) were assessed, and the results showed that the goodness of fit indexes met the criteria established in this field [88]. In addition, most of the items had a suitable factor weight, higher than 0.5. There was only one question with an unsuitable factor weight, and it was eliminated from the instrument for this reason. Besides, the scale showed no difference by gender. Therefore, it can be recommended for practical use.

The major problem concerns the discriminant validity, in other words, the clear differentiation between the constructs assessed by each factor. This can be explained in several ways. A possible explanation might be that the five factors are closely related to each other and thus cannot be sufficiently separated. This would not be an isolated case (see Disabato et al. [89]; Gau [90]). Future studies of this scale will address this aspect to come to more decisive conclusions.

There is plenty of information and lots of proposals regarding complex thinking and its skills. Nonetheless, the instruments are quite general and do not address “Complex thinking” itself as a construct; many of these skills are assessed separately and systemic analysis does not seem to be that important. Nowadays, it is essential to assess these skills as a whole since complex thinking is increasingly a skill that every citizen must develop, including students, teachers, and university principals [43]. For this reason, the current study offers an appropriate scale with good initial psychometric properties to help meet this need in universities and encourage the practice of complex thinking in graduates. The end goal is the creation of a better and more environmentally friendly society.

In addition, the present study helps to understand the structure of complex thinking as a practice, just as socioformation proposes [41]: a new pedagogic approach, created with the support of leaders in education, community, and organizations to face the challenges of sustainable social development. Thus, the socioformative approach understands the complex thinking construct as a comprehensive performance to solve the problems in the way of sustainable social development. This is different from the assumption of the epistemological approach [12], which tends not to go beyond the philosophical plane; from higher-order thinking [15], which does not consider the improvement of life conditions and biodiversity; and from macro-competence [25], which does consider the skills, attitudes, and knowledge but does not go beyond a fragmented view of the concept, spends excessive time on the planning of processes, and has no connection to sustainable development. Nonetheless, the contributions of these three perspectives have been considered in the socioformative approach, but in the framework of a new proposal that is more focused on the social aspect and nature.

Increasingly, Ibero-American universities and educative institutions are implementing socioformation in their systems [43,44,45,46]. Thus, the instrument validated in the present study will contribute to its consolidation by allowing university students, teachers, and principals a better and more efficient validation of complex thinking as a practice (or comprehensive performance). The current study provides information about the practical structure of complex thinking, which is composed of five interconnected essential skills. Within the socioformation approach, and despite the changes taking place in higher education, it is essential to implement actions that promote complex thinking for all students. This is because many organizations keep insisting on teaching strategies that do not enhance complex thinking but rather the skills and abilities of simplistic thinking [48].

The COMPLEX-21 scale differs from other instruments assessing specific skills of complex thinking, such as the Study Engagement Questionnaire (SEQ) [50,51], the Watson–Glaser Critical Thinking Appraisal [52,53], and the California Critical Thinking Disposition Inventory [54,55], because these assess several dimensions of complex thinking but not the construct as a whole; in addition, these scales have a large number of items (around 70 items) and systemic analysis is relegated to the background.

The current scale has similar features to other instruments, such as the one used in the study carried out by Gargallo et al. [51], which assesses, as a whole, the learning–teaching process in the university to provide feedback to the teachers and institutions so they can improve these processes. In that study, 805 people from three Valencian universities were evaluated with a survey assessing the abilities of several students and the ability of the teacher to create a suitable learning environment. Another related instrument is the one designed by Amrina et al. [75], which assesses the competence of the students in achieving the development of logical, critical, and creative thinking by employing a Likert scale (1–5 points). One difference between the study carried out by Amrina et al. [75] and ours is that the COMPLEX-21 is designed for several fields, not only for math. Additionally, their research did not consider systemic thinking. The instrument of Amrina et al. [75] is similar to the COMPLEX-21 in that both assume that critical and creative thinking are necessary for the problem-solving process, just like the scales used by Heilat and Seifert [91], and Martinsen and Furnham [11]. Nonetheless, the COMPLEX-21 has its own features, such as its focus on complex thinking itself and not on the learning–teaching process.

The present study was exploratory; thus, it has limitations. Firstly, we considered only a subset of the possible skills of complex thinking due to the limited information available about this topic and the lack of agreement among experts, who considered the five skills described above as essential and who decided not to add new ones to the list. The same happened with the judges during the content validity stage, since three of these judges proposed more skills but did not reach agreement. Two of the proposed skills were conceptual analysis and knowledge management, which have been associated with complex thinking [92]. For future studies, we recommend including an analysis of these two skills and integrating them with specific items. Secondly, the validity process would have been improved by complementing it with other analyses, such as concurrent validity with other similar instruments [93], predictive validity, and test-retest stability [94]. These analyses were not carried out since this research was part of another study with university students. As a result, it was not possible to add further tests or tests on a larger subsample of students.

Although this is an exploratory study and further research is needed on the characteristics of the COMPLEX-21, some practical implications are described below: (1) the new scale will help to assess complex thinking as an integral performance from the framework of socioformation because, although complex thinking is considered a relevant axis for education [95], there are no instruments to evaluate this process from the references discussed throughout this study; (2) the COMPLEX-21 may contribute to reducing the high number of competencies studied in the training of citizens, since many skills integrated in complex thinking are addressed as separate competencies [30,31]; (3) the new instrument could help to determine more precisely the factors associated with better management to achieve sustainable social development, considering complex thinking as an integrative performance, as proposed from the socioformative approach; (4) based on the COMPLEX-21, the educational models of educational institutions and universities could gain clarity regarding this process and its associated factors; and (5) educational institutions could use the new scale to assess the level of development of complex thinking in students and thus implement actions that foster it, which is a purpose of various educational centers [43,44,45].

Author Contributions

Conceptualization, instrument design and statistical analysis: S.T.; bibliographic consultancy, application of instruments and article format: J.L.-N. Both authors have read and agreed to the published version of the manuscript.

Funding

This research was not externally funded.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

The ethical principles established by the Committee on Publication Ethics (COPE, 1997) were followed. The participants were properly informed about the study and signed a consent letter.

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflict of interest.

Appendix A. Complex Thinking Scale in University Students

The present instrument is aimed at assessing the development level of complex thinking skills as applied to facing problems in context, by considering five essential processes: problem-solving, critical analysis, metacognition, systemic analysis and creativity.

Instructions:

- There are 21 questions classified in five dimensions assessing complex thinking.

- Read each question carefully and choose the frequency of the process: never, practically never, sometimes, practically always, or always. Choose the most suitable option regarding what you've done in the last six months.

- All of the questions must be answered.

- Your answers are fully confidential.

- This is simply a self-evaluating instrument and it will allow you to assess your development level in complex thinking. This is not a personality or intelligence test.

- By answering the instrument, you agree to participate in the process. All of the information will be strictly confidential.

Table A1.

Complex Thinking Scale in University Students.

| Problem Solving | Never | Practically Never | Sometimes | Practically Always | Always |
|---|---|---|---|---|---|
| 1. Are you able to identify, detect and/or deal with a problem to be solved? | | | | | |
| 2. Do you understand what a problem is and the different aspects composing it, such as the need that must be solved, the context of the problem and the challenge to overcome? | | | | | |
| 3. Do you understand problems by establishing the causes and consequences, as well as the side effects and the appropriateness of possible solutions? | | | | | |
| 4. Do you propose alternatives to solve problems, analyze them, compare them to each other and then pick the best option while considering possible situations of uncertainty in the context? | | | | | |
| 5. While facing a problem, do you find the solution by analyzing the several factors, relating them to each other, taking into account the possible side consequences and considering the uncertainty elements? | | | | | |
| Critical Analysis | Never | Practically Never | Sometimes | Practically Always | Always |
| * 6. Do you question the facts to find opportunity areas and to implement improvements? | | | | | |
| 7. Do you verify information, taking into account the bibliographic resources and the facts of the context? | | | | | |
| 8. Do you analyze your own and other people's ideas, recognize the positive aspects and detect possible weak points to suggest new improvements? | | | | | |
| 9. Do you argue about situations and problems while avoiding generalizations and by assuming the possible weak points of your analysis? | | | | | |
| 10. Do you make your choices by considering both the positive and negative aspects of a situation and to achieve a specific goal? | | | | | |
| 11. Do you think and act in a flexible way and are you able to adapt to the situations of the context to resolve the problems? | | | | | |
| Metacognition | Never | Practically Never | Sometimes | Practically Always | Always |
| 12. Do you think about how you are going to carry out activities with the purpose of focusing on them, finishing them and achieving a specific purpose, while trying to correct possible errors? | | | | | |
| 13. Do you make changes in the way actions are carried out by thinking about them and do you correct your errors with the purpose of finishing the activities and achieving a specific goal? | | | | | |
| 14. Do you self-assess achievements and aspects to improve in the implementation of activities, and are you aware of the learning generated in order to use it in new situations? | | | | | |
| 15. Do you self-assess your moral actions, acknowledge your mistakes and make changes for the better in your actions? | | | | | |
| Systemic Analysis | Never | Practically Never | Sometimes | Practically Always | Always |
| 16. Do you face a problem from different points of view or perspectives while looking for their complementarity? | | | | | |
| 17. Do you intend to join forces with others to understand and solve problems of context more efficiently? | | | | | |
| 18. Do you intend to identify uncertain situations while addressing problems and do you face them with flexible strategies? | | | | | |
| Creativity | Never | Practically Never | Sometimes | Practically Always | Always |
| 19. Do you find solutions to the problems without letting yourself be carried away by tradition or authority? | | | | | |
| 20. Are you the one proposing solutions to the problems and are these different from the ones already established in the context and the reported ones in bibliography resources? | | | | | |
| 21. Do you change the way in which you explain and solve a problem, through a different synthesis, a question that changes the analysis or even a new solution? | | | | | |
| 22. Do you intend to make a great impact in the problem-solving process regarding what has been done up to now and by following new strategies? | | | | | |

Note: the question marked with * was eliminated from the scale as a result of the confirmatory factor analysis.
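As an illustration of how responses to the scale in Table A1 might be processed, the following Python sketch codes the five response options from 1 to 5 and averages them within each dimension. The numeric coding, the use of dimension means, and the names `RESPONSE_VALUES`, `DIMENSIONS` and `score_responses` are assumptions made for this example; the instrument itself does not prescribe a scoring rule. Item 6, which is marked with *, is left out.

```python
# Assumed numeric coding of the five response options (1-5). The appendix
# lists the labels only; these values are a common convention, not the
# authors' stated scoring rule.
RESPONSE_VALUES = {
    "never": 1,
    "practically never": 2,
    "sometimes": 3,
    "practically always": 4,
    "always": 5,
}

# Item numbers per dimension as printed in Table A1. Item 6 is marked
# with * (eliminated), so it is excluded, leaving 21 items.
DIMENSIONS = {
    "problem_solving": [1, 2, 3, 4, 5],
    "critical_analysis": [7, 8, 9, 10, 11],
    "metacognition": [12, 13, 14, 15],
    "systemic_analysis": [16, 17, 18],
    "creativity": [19, 20, 21, 22],
}


def score_responses(responses):
    """Return the mean coded response for each dimension.

    `responses` maps an item number to one of the five response labels.
    Every retained item must be answered, as the instructions require.
    """
    missing = [
        item
        for items in DIMENSIONS.values()
        for item in items
        if item not in responses
    ]
    if missing:
        raise ValueError(f"Unanswered items: {missing}")

    scores = {}
    for name, items in DIMENSIONS.items():
        values = [RESPONSE_VALUES[responses[i].strip().lower()] for i in items]
        scores[name] = sum(values) / len(values)
    return scores


# Hypothetical respondent who answers "Sometimes" to every retained item.
answers = {i: "Sometimes" for i in range(1, 23) if i != 6}
print(score_responses(answers))
```

Averaging within each dimension keeps the five scores on the same 1 to 5 range even though the dimensions contain different numbers of items; summed scores would be an equally plausible alternative.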

References

- Mylek, M.R.; Schirmer, J. Understanding acceptability of fuel management to reduce wildfire risk: Informing communication through understanding complexity of thinking. For. Policy Econ. 2020, 113, 102120. [Google Scholar] [CrossRef]

- Axpe, M.R.V. Ciencias de la complejidad vs. pensamiento complejo. Claves para una lectura crítica del concepto de cientificidad en Carlos Reynoso. Pensam. Rev. Investig. Inf. Filos. 2019, 75, 87–106. [Google Scholar]

- Costa, V.T.; Meirelles, B.H.S. Adherence to treatment of young adults living with HIV/AIDS from the perspective of complex thinking. Texto Context. Enferm. 2019, 28, 1–15. [Google Scholar] [CrossRef]

- Stoian, A.P.; Mitrofan, G.; Colceag, F.; Suceveanu, A.I.; Hainarosie, R.; Pituru, S.; Diaconu, C.C.; Timofte, D.; Nitipir, C.; Poiana, C.; et al. Oxidative Stress in Diabetes. A model of complex thinking applied in medicine. Rev. Chim. 2018, 69, 2515–2519. [Google Scholar] [CrossRef]

- Block, M. Complex Environment Calls for Complex Thinking: About Knowledge Sharing Culture. J. Rev. Glob. Econ. 2019, 8, 141–152. [Google Scholar] [CrossRef]

- Lin, Y.-T. Impacts of a flipped classroom with a smart learning diagnosis system on students’ learning performance, perception, and problem solving ability in a software engineering course. Comput. Hum. Behav. 2019, 95, 187–196. [Google Scholar] [CrossRef]

- Krevetzakis, E. On the Centrality of Physical/Motor Activities in Primary Education. J. Adv. Educ. Res. 2019, 4, 24–33. [Google Scholar] [CrossRef]

- Hanlon, J.P.; Prihoda, T.J.; Verrett, R.G.; Jones, J.D.; Haney, S.J.; Hendricson, W.D. Critical Thinking in Dental Students and Experienced Practitioners Assessed by the Health Sciences Reasoning Test. J. Dent. Educ. 2018, 82, 916–920. [Google Scholar] [CrossRef]

- Peeters, M.J.; Zitko, K.L.; Schmude, K.A. Development of Critical Thinking in Pharmacy Education. Innov. Pharm. 2016, 7. [Google Scholar] [CrossRef]

- Tran, T.B.L.; Ho, T.N.; MacKenzie, S.V.; Le, L.K. Developing assessment criteria of a lesson for creativity to promote teaching for creativity. Think. Ski. Creat. 2017, 25, 10–26. [Google Scholar] [CrossRef]

- Martinsen, Ø.L.; Furnham, A. Cognitive style and competence motivation in creative problem solving. Pers. Individ. Differ. 2019, 139, 241–246. [Google Scholar] [CrossRef]

- Morin, E. The Seven Knowledge Necessary for the Education of the Future; Santillana-Unesco: Paris, France, 1999. [Google Scholar]

- Morin, E. Introduction to Complex Thinking; Gedisa: Barcelona, Spain, 1995. [Google Scholar]

- Terrado, P.R. Aplicación de las teorías de la complejidad a la comprensión del territorio. Estud. Geogr. 2018, 79, 237–265. [Google Scholar] [CrossRef]

- Morin, E. La Mente Bien Ordenada. Repensar la Reforma. Reformar el Pensamiento; Seix Barral: Barcelona, Spain, 2000. [Google Scholar]

- Lipman, M. Pensamiento Complejo y Educación [Complex Thinking and Education]; Ediciones de la Torre: Madrid, Spain, 1997. [Google Scholar]

- Saremi, H.; Bahdori, S. The Relationship between Critical Thinking with Emotional Intelligence and Creativity among Elementary School Principals in Bojnord City, Iran. Int. J. Life Sci. 2015, 9, 33–40. [Google Scholar] [CrossRef]

- Klimenko, O.; Aristizábal, A.; Restrepo, C. Pensamiento crítico y creativo en la educación preescolar: Algunos aportes desde la neuropsicopedagogia. Katharsis 2019, 28, 59–89. [Google Scholar] [CrossRef]

- Hasan, R.; Lukitasari, M.; Utami, S.; Anizar, A. The activeness, critical, and creative thinking skills of students in the Lesson Study-based inquiry and cooperative learning. J. Pendidik. Biol. Indones. 2019, 5, 77–84. [Google Scholar] [CrossRef]

- Siburian, O.; Corebima, A.D.; Saptasari, M. The Correlation between Critical and Creative Thinking Skills on Cognitive Learning Results. Eurasian J. Educ. Res. 2019, 19, 1–16. [Google Scholar] [CrossRef]

- Avetisyan, N.; Hayrapetyan, L.R. Mathlet as a new approach for improving critical and creative thinking skills in mathematics. Int. J. Educ. Res. 2017, 12. Available online: https://cutt.ly/otxYbSB (accessed on 8 May 2021).

- Beaty, R.E.; Benedek, M.; Silvia, P.J.; Schacter, D.L. Creative Cognition and Brain Network Dynamics. Trends Cogn. Sci. 2016, 20, 87–95. [Google Scholar] [CrossRef]

- Asefi, M.; Imani, E. Effects of active strategic teaching model (ASTM) in creative and critical thinking skills of architecture students. Archnet-IJAR Int. J. Arch. Res. 2018, 12, 209–222. [Google Scholar] [CrossRef]

- Beaty, R.E.; Seli, P.; Schacter, D.L. Network neuroscience of creative cognition: Mapping cognitive mechanisms and individual differences in the creative brain. Curr. Opin. Behav. Sci. 2019, 27, 22–30. [Google Scholar] [CrossRef]

- Pacheco, C.S. Art Education for the Development of Complex Thinking Metacompetence: A Theoretical Approach. Int. J. Art Des. Educ. 2019, 39, 242–254. [Google Scholar] [CrossRef]

- Murrain, E.; Barrera, N.F.; Vargas, Y. Cuatro reflexiones sobre la docencia. Rev. Repert. Med. Cirugía 2017, 26, 242–248. [Google Scholar] [CrossRef]

- Puziol, J.K.P.; Barreyro, G.B. Alfa tuning Latin America project: The relationship between elaboration and implementation in participating Brazilian universities. Acta Sci. 2018, 40, e37338. [Google Scholar]

- Gomez, J.T.A. La competencia europeísta: Una competencia integradora. Bordón. Rev. Pedagog. 2015, 67, 35. [Google Scholar] [CrossRef]

- Cuadra-Martínez, D.J.; Castro, P.J.; Juliá, M.T. Tres Saberes en la Formación Profesional por Competencias: Integración de Teorías Subjetivas, Profesionales y Científicas. Form. Univ. 2018, 11, 19–30. [Google Scholar] [CrossRef]

- Palma, M.; Rios, I.D.L.; Miñán, E. Generic competences in engineering field: A comparative study between Latin America and European Union. Procedia Soc. Behav. Sci. 2011, 15, 576–585. [Google Scholar] [CrossRef]

- Beneitone, P.; Esquetini, C.; González, J.; Maletá, M.M.; Siufi, G.; Wagenaar, R. Reflexões e Perspectivas da Educação Superior na América Latina: Informe Final do Projeto Tuning—2004–2007; Universidad de Deusto: Bilbao, Spain, 2007. [Google Scholar]

- Anastacio, M.R. Proposals for Teacher Training in the Face of the Challenge of Educating for Sustainable Development: Beyond Epistemologies and Methodologies. In Universities and Sustainable Communities: Meeting the Goals of the Agenda 2030; Springer: Cham, Switzerland, 2020. [Google Scholar]

- Luna-Nemecio, J.; Tobón, S.; Juárez-Hernández, L.G. Sustainability-based on socioformation and complex thought or sustainable social development. Resour. Environ. Sustain. 2020, 2, 100007. [Google Scholar] [CrossRef]

- Luna-Nemecio, J. Para pensar el Desarrollo Social Sostenible: Múltiples Enfoques, un Mismo Objetivo. 2020. Available online: https://www.researchgate.net/profile/Josemanuel-Luna-Nemecio/publication/339628029_Para_pensar_el_desarrollo_social_sostenible_multiples_enfoques_un_mismo_objetivo/links/5e5d2bfaa6fdccbeba138607/Para-pensar-el-desarrollo-social-sostenible-multiples-enfoques-un-mismo-objetivo.pdf (accessed on 8 May 2021).

- Jarquín-Cisneros, L.M. How to generate ethical teachers through Socioformation to achieve Sustainable Social Development? Ecocience Int. J. 2019, 1, 29–32. [Google Scholar] [CrossRef]

- Santoyo-Ledesma, D. Approach to Sustainable Social Development and Human Talent Management in the context of socioformation. Ecocience Int. J. 2019, 1, 112–123. [Google Scholar] [CrossRef]

- Maury Mena, S.C.; Marín Escobar, J.C.; Ortiz Padilla, M.; Gravini Donado, M. Competencias genéricas en estudiantes de educación superior de una universidad privada de Barranquilla Colombia, desde la perspectiva del Proyecto Alfa Tuning América Latina y del Ministerio de Educación Nacional de Colombia (MEN). Rev. Espac. 2018, 39. Available online: https://cutt.ly/vtnRKmb (accessed on 8 May 2021).

- Batrićević, A.; Joldžić, V.; Stanković, V.; Paunović, N. Solving the Problems of Rural as Environmentally Desirable Segment of Sustainable Development. Econ. Anal. 2018, 51, 79–91. [Google Scholar] [CrossRef]

- Kung, C.-C.; Mu, J.E. Prospect of China’s renewable energy development from pyrolysis and biochar applications under climate change. Renew. Sustain. Energy Rev. 2019, 114, 109343. [Google Scholar] [CrossRef]

- Gherheș, V.; Obrad, C. Technical and Humanities Students’ Perspectives on the Development and Sustainability of Artificial Intelligence (AI). Sustainability 2018, 10, 3066. [Google Scholar] [CrossRef]

- Prado, R.A. La socioformación: Un enfoque de cambio educativo. Rev. Iberoam. Educ. 2018, 76, 57–82. [Google Scholar] [CrossRef]

- Martínez, J.E.; Tobón, S.; López, E. Complex Thought and Quality Accreditation of Curriculum in Online Higher Education. Adv. Sci. Lett. 2019, 25, 54–56. [Google Scholar] [CrossRef]

- Universidad Tecnológica Indoamérica. Modelo Educativo; Universidad Indoamérica: Quito, Ecuador, 2019; Available online: https://issuu.com/cife/docs/modelo_educativo_uti (accessed on 8 May 2021).

- CIFE. Modelo Educativo Socioformativo; CIFE: Morelos, Mexico, 2017; Available online: www.cife.edu.mx (accessed on 8 May 2021).

- Universidad Nacional Hermilio Valdizan. Modelo Educativo; Unheval: Huánuco, Peru, 2017. [Google Scholar]

- UNFV. Modelo Educativo de la UNFV, Socioformativo-Humanista; UNFV: Lima, Peru, 2017; Available online: https://issuu.com/cife/docs/modelo_educativo_unfv (accessed on 8 May 2021).

- Serrano, R.; Macias, W.; Rodriguez, K.; Amor, M.I. Validating a scale for measuring teachers’ expectations about generic competences in higher education. J. Appl. Res. High. Educ. 2019, 11, 439–451. [Google Scholar] [CrossRef]

- Burgos, J.A.B.; Salvador, M.R.A.; Narváez, H.O.P. Del pensamiento complejo al pensamiento computacional: Retos para la educación contemporánea. Sophía 2016, 2, 143. [Google Scholar] [CrossRef]

- Ortega-Carbajal, M.F.; Hernández-Mosqueda, J.S.; Tobón-Tobón, S. Análisis documental de la gestión del conocimiento mediante la cartografía conceptual. Ra Ximhai 2015, 11, 141–160. [Google Scholar] [CrossRef]

- Kember, D.; Leung, D.Y.P. Development of a questionnaire for assessing students’ perceptions of the teaching and learning environment and its use in quality assurance. Learn. Environ. Res. 2009, 12, 15–29. [Google Scholar] [CrossRef]

- Gargallo, B.; Suárez-Rodríguez, J.M.; Almerich, G.; Verde, I.; Iranzo, M.I.; Àngels, C. Validación del cuestionario SEQ en población universitaria española. Capacidades del alumno y entorno de enseñanza/aprendizaje. Anales Psicol. 2018, 34, 519–530. [Google Scholar] [CrossRef]

- Watson, G. Watson-Glaser Critical Thinking Appraisal; Psychological Corporation: San Antonio, TX, USA, 1980; Available online: https://cutt.ly/3tWodUY (accessed on 8 May 2021).

- D’Alessio, F.A.; Avolio, B.E.; Charles, V. Studying the impact of critical thinking on the academic performance of executive MBA students. Think. Ski. Creat. 2019, 31, 275–283. [Google Scholar] [CrossRef]

- Facione, P.; Facione, N. The California Critical Thinking Dispositions Inventory (CCTDI): And the CCTDI Test Manual; The California Academic Press: Milbrae, CA, USA, 1992. [Google Scholar]

- Bayram, D.; Kurt, G.; Atay, D. The Implementation of WebQuest-supported Critical Thinking Instruction in Pre-service English Teacher Education: The Turkish Context. Particip. Educ. Res. 2019, 6, 144–157. [Google Scholar] [CrossRef]

- Insight Assessment. California Critical Thinking Skills Test: CCTST Test Manual: “The Gold Standard” Test of Critical Thinking; California Academic Press: San Jose, CA, USA, 2013. [Google Scholar]

- Heilat, M.Q.; Seifert, T. Mental motivation, intrinsic motivation and their relationship with emotional support sources among gifted and non-gifted Jordanian adolescents. Cogent Psychol. 2019, 6, 1587131. [Google Scholar] [CrossRef]

- Kaufmann, G.; Martinsen, Ø. The AE Scale. Revised; Department of General Psychology, University of Bergen: Bergen, Norway, 1992; (revised 30 October 2020). [Google Scholar]

- Toapanta-Pinta, P.; Rosero-Quintana, M.; Salinas-Salinas, M.; Cruz-Cevallos, M.; Vasco-Morales, S. Percepción de los estudiantes sobre el proyecto integrador de saberes: Análisis métricos versus ordinales. Educ. Médica 2019. [Google Scholar] [CrossRef]

- Leach, S.; Immekus, J.C.; French, B.F.; Hand, B. The factorial validity of the Cornell Critical Thinking Tests: A multi-analytic approach. Think. Ski. Creat. 2020, 37, 100676. [Google Scholar] [CrossRef]

- Amrina, Z.; Desfitri, R.; Zuzano, F.; Wahyuni, Y.; Hidayat, H.; Alfino, J. Developing Instruments to Measure Students’ Logical, Critical, and Creative Thinking Competences for Bung Hatta University Students. Int. J. Eng. Technol. 2018, 7, 128–131. [Google Scholar] [CrossRef]

- Poondej, C.; Lerdpornkulrat, T. The reliability and construct validity of the critical thinking disposition scale. J. Psychol. Educ. Res. 2015, 23, 23–36. [Google Scholar]

- Said-Metwaly, S.; Kyndt, E.; Noortgate, W.V.D. The factor structure of the Verbal Torrance Test of Creative Thinking in an Arabic context: Classical test theory and multidimensional item response theory analyses. Think. Ski. Creat. 2020, 35, 100609. [Google Scholar] [CrossRef]

- Burin, D.I.; Gonzalez, F.M.; Barreyro, J.P.; Injoque-Ricle, I. Metacognitive regulation contributes to digital text comprehension in E-learning. Metacogn. Learn. 2020, 15, 391–410. [Google Scholar] [CrossRef]

- Gok, T. Development of problem solving strategy steps scale: Study of validation and reliability. Asia-Pac. Educ. Res. 2011, 20, 151–161. [Google Scholar]

- Penfield, R.D.; Giacobbi, J.P.R. Applying a Score Confidence Interval to Aiken’s Item Content-Relevance Index. Meas. Phys. Educ. Exerc. Sci. 2004, 8, 213–225. [Google Scholar] [CrossRef]

- Cronbach, L.J. Coefficient alpha and the internal structure of tests. Psychometrika 1951, 16, 297–334. [Google Scholar] [CrossRef]

- Raykov, T. Estimation of Composite Reliability for Congeneric Measures. Appl. Psychol. Meas. 1997, 21, 173–184. [Google Scholar] [CrossRef]

- Hair, J.F.; Black, W.; Babin, B.; Anderson, R. Multivariate Data Analysis, 7th ed.; Pearson: London, UK, 2014; Available online: https://files.pearsoned.de/inf/ext/9781292035116 (accessed on 8 May 2021).

- Fornell, C.; Larcker, D.F. Evaluating Structural Equation Models with Unobservable Variables and Measurement Error. J. Mark. Res. 1981, 18, 39. [Google Scholar] [CrossRef]

- Borgonovi, F.; Greiff, S. Societal level gender inequalities amplify gender gaps in problem solving more than in academic disciplines. Intelligence 2020, 79, 101422. [Google Scholar] [CrossRef]

- Fitriani, H.; Asy’Ari, M.; Zubaidah, S.; Mahanal, S. Exploring the Prospective Teachers’ Critical Thinking and Critical Analysis Skills. J. Pendidik. IPA Indones. 2019, 8, 379–390. [Google Scholar] [CrossRef]

- Flavell, J.H. Metacognition and cognitive monitoring: A new area of cognitive-developmental inquiry. Am. Psychol. 1979, 34, 906–911. [Google Scholar] [CrossRef]

- Velkovski, V. Application of the systemic analysis for solving the problems of dependent events in agricultural lands-characteristics and methodology. Trakia J. Sci. 2019, 17, 319–323. [Google Scholar] [CrossRef]

- Curran, P.J.; West, S.G.; Finch, J.F. The robustness of test statistics to nonnormality and specification error in confirmatory factor analysis. Psychol. Methods 1996, 1, 16–29. [Google Scholar] [CrossRef]

- Fabrigar, L.R.; Wegener, D.T.; Maccallum, R.C.; Strahan, E.J. Evaluating the use of exploratory factor analysis in psychological research. Psychol. Methods 1999, 4, 272–299. [Google Scholar] [CrossRef]

- Hu, L.T.; Bentler, P.M. Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Struct. Equ. Model. 1999, 6, 1–55. [Google Scholar] [CrossRef]

- Kaplan, D. Evaluating and Modifying Covariance Structure Models: A Review and Recommendation. Multivar. Behav. Res. 1990, 25, 137–155. [Google Scholar] [CrossRef] [PubMed]

- Putnick, D.L.; Bornstein, M.H. Measurement invariance conventions and reporting: The state of the art and future directions for psychological research. Dev. Rev. 2016, 41, 71–90. [Google Scholar] [CrossRef] [PubMed]

- Cheung, G.W.; Rensvold, R.B. Evaluating Goodness-of-Fit Indexes for Testing Measurement Invariance. Struct. Equ. Model. A Multidiscip. J. 2002, 9, 233–255. [Google Scholar] [CrossRef]

- Chen, F.F. Sensitivity of Goodness of Fit Indexes to Lack of Measurement Invariance. Struct. Equ. Model. A Multidiscip. J. 2007, 14, 464–504. [Google Scholar] [CrossRef]

- Wu, H.; Leung, S.-O. Can Likert Scales be Treated as Interval Scales?—A Simulation Study. J. Soc. Serv. Res. 2017, 43, 527–532. [Google Scholar] [CrossRef]

- Hair, J.F.; Black, W.C.; Babin, B.J.; Anderson, R.E. Multivariate Data Analysis: A Global Perspective; Prentice Hall: Upper Saddle River, NJ, USA, 2010. [Google Scholar]

- Luna-Nemecio, J. Determinaciones socioambientales del COVID-19 y vulnerabilidad económica, espacial y sanitario-institucional. Rev. Cienc. Soc. 2020, 26, 21–26. [Google Scholar] [CrossRef]

- Roozenbeek, J.; Schneider, C.R.; Dryhurst, S.; Kerr, J.; Freeman, A.L.J.; Recchia, G.; van der Bles, A.M.; van der Linden, S. Susceptibility to misinformation about COVID-19 around the world. R. Soc. Open Sci. 2020, 7, 1–15. [Google Scholar] [CrossRef]

- Lawrence, R.J. Responding to COVID-19: What’s the Problem? J. Hered. 2020, 97, 583–587. [Google Scholar] [CrossRef]

- Connelly, L.M. What Is Factor Analysis? Medsurg Nurs. 2019, 28, 322–330. [Google Scholar]

- Disabato, D.J.; Goodman, F.R.; Kashdan, T.B.; Short, J.L.; Jarden, A. Different types of well-being? A cross-cultural examination of hedonic and eudaimonic well-being. Psychol. Assess. 2016, 28, 471–482. [Google Scholar] [CrossRef] [PubMed]

- Gau, J.M. The Convergent and Discriminant Validity of Procedural Justice and Police Legitimacy: An Empirical Test of Core Theoretical Propositions. J. Crim. Justice 2011, 39, 489–498. [Google Scholar] [CrossRef]

- Cseh, M.; Crocco, O.S.; Safarli, C. Teaching for Globalization: Implications for Knowledge Management in Organizations. In Social Knowledge Management in Action; Springer: Berlin/Heidelberg, Germany, 2019; pp. 105–118. [Google Scholar]

- Knutson, J.S.; Friedl, A.S.; Hansen, K.M.; Hisel, T.Z.; Harley, M.Y. Convergent Validity and Responsiveness of the SULCS. Arch. Phys. Med. Rehabil. 2019, 100, 140–143.e1. [Google Scholar] [CrossRef] [PubMed]

- Vaughn, M.G.; Roberts, G.; Fall, A.-M.; Kremer, K.; Martinez, L. Preliminary validation of the dropout risk inventory for middle and high school students. Child. Youth Serv. Rev. 2020, 111, 104855. [Google Scholar] [CrossRef]

- Degener, S.; Berne, J. Complex Questions Promote Complex Thinking. Read. Teach. 2016, 70, 595–599. [Google Scholar] [CrossRef]

- Viguri, M. Science of complexity vs. complex thinking keys for a critical reading of the concept of scientific in Carlos Reynoso. Pensam. Rev. Investig. Inf. Filos. 2019, 75, 87–106. [Google Scholar] [CrossRef]

- Mara, J.; Lavandero, J.; Hernández, L.M. The educational model as the foundation of university action: The experience of the Technical University of Manabi, Ecuador. Rev. Cuba. Educ. Super. 2018, 37, 151–164. [Google Scholar]

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).