Validation and Psychometric Properties of the Gameplay-Scale for Educative Video Games in Spanish Children

Abstract

1. Introduction

2. Related Work

3. Materials and Methods

3.1. Participants and Design

3.2. Measures

3.3. Procedure

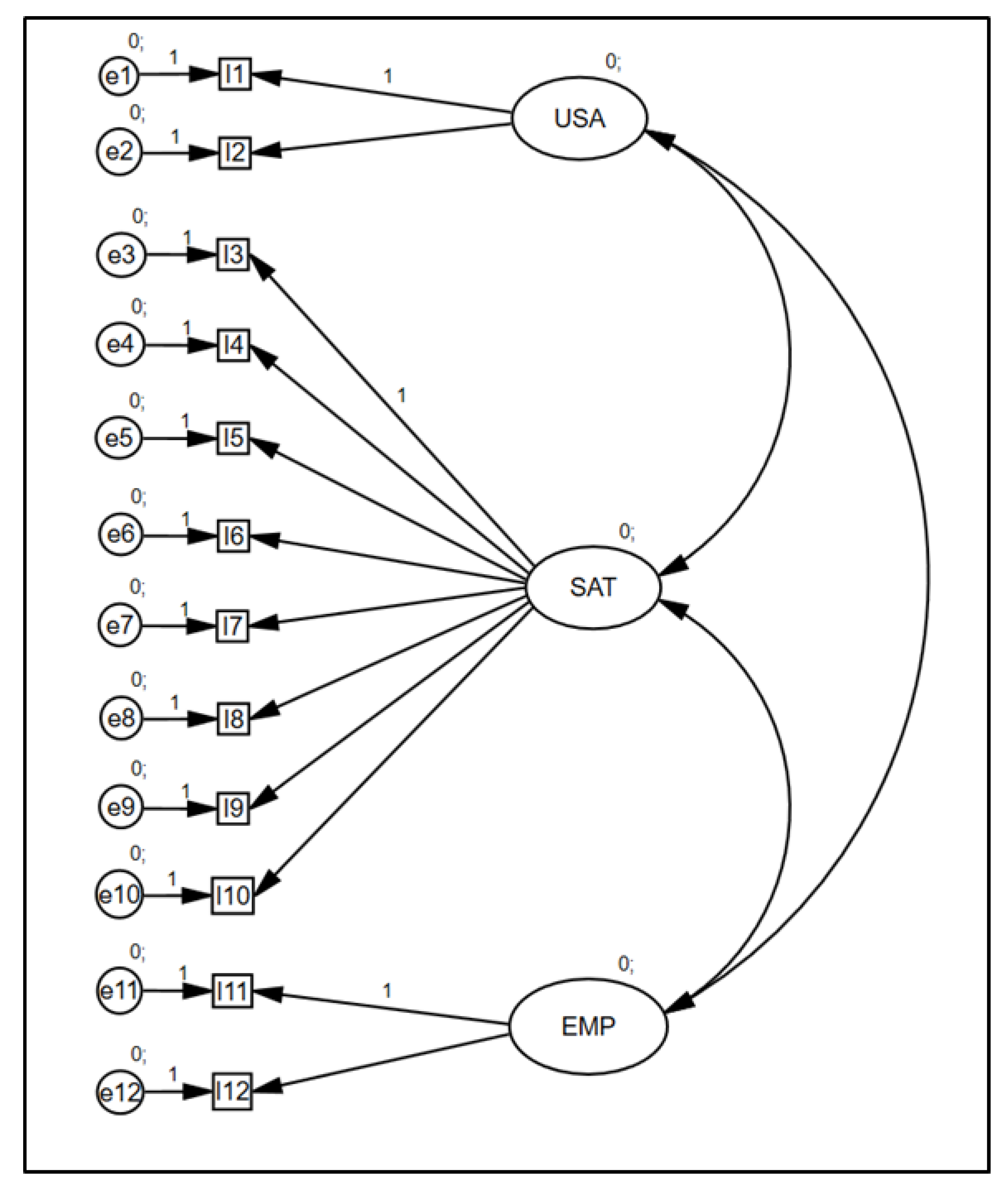

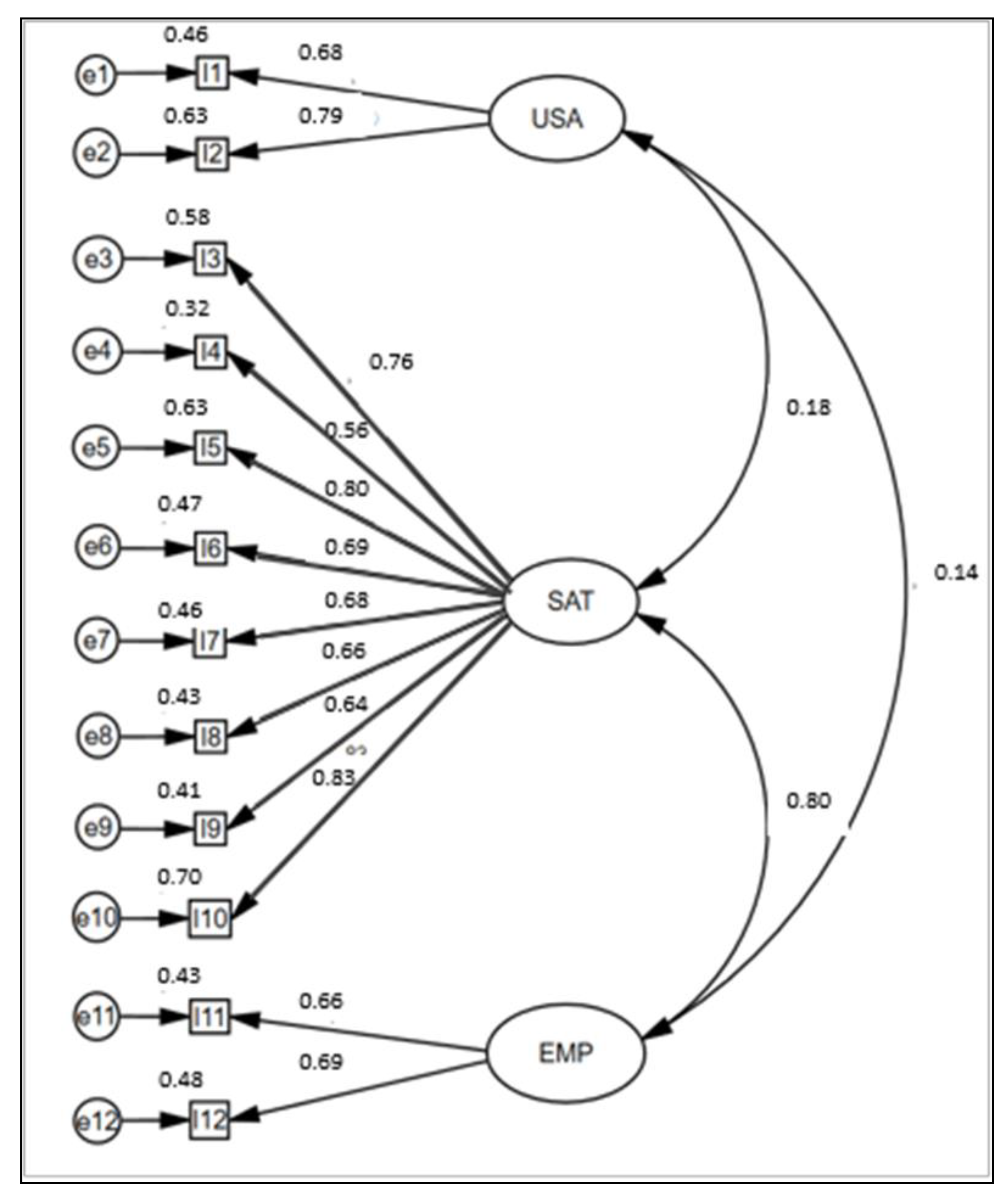

3.4. Statistical Analysis

4. Results

5. Discussion and Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Wang, Y.; Rajan, P.; Sankar, C.S.; Raju, P.K. Let Them Play: The Impact of Mechanics and Dynamics of a Serious Game on Student Perceptions of Learning Engagement. IEEE Trans. Learn. Technol. 2017, 10, 514–525.

- Dankbaar, M.E.; Richters, O.; Kalkman, C.J.; Prins, G.; Ten Cate, O.T.; Van Merrienboer, J.J.; Schuit, S.C. Comparative effectiveness of a serious game and an e-module to support patient safety knowledge and awareness. BMC Med. Educ. 2017, 17, 30–40.

- Johnsen, H.M.; Fossum, M.; Vivekananda-Schmidt, P.; Fruhling, A.; Slettebø, Å. Nursing students’ perceptions of a video-based serious game’s educational value: A pilot study. Nurs. Educ. Today 2018, 62, 62–68.

- Knight, J.F.; Carley, S.; Tregunna, B.; Jarvis, S.; Smithies, R.; De Freitas, S.; Mackway-Jones, K. Serious gaming technology in major incident triage training: A pragmatic controlled trial. Resuscitation 2010, 81, 1175–1179.

- Tan, A.J.Q.; Lee, C.C.S.; Lin, P.Y.; Cooper, S.; Lau, L.S.T.; Chua, W.L.; Liaw, S.Y. Designing and evaluating the effectiveness of a serious game for safe administration of blood transfusion: A randomized controlled trial. Nurs. Educ. Today 2017, 55, 38–44.

- Boyle, E.A.; Hainey, T.; Connolly, T.M.; Gray, G.; Earp, J.; Ott, M.; Pereira, J. An update to the systematic literature review of empirical evidence of the impacts and outcomes of computer games and serious games. Comput. Educ. 2016, 94, 178–192.

- Granic, I.; Lobel, A.; Engels, R.C. The benefits of playing video games. Am. Psychol. 2014, 69, 66–78.

- Phan, M.H.; Keebler, J.R.; Chaparro, B.S. The development and validation of the game user experience satisfaction scale (GUESS). Hum. Factors 2016, 58, 1217–1247.

- Ijsselsteijn, W.; De Kort, Y.; Poels, K. Characterising and Measuring User Experiences in Digital Games. In Proceedings of the ACE Conference’07, Salzburg, Austria, 13–15 June 2007; p. 27.

- Yap, K.; Yap, M.Y.; Ghani, M.Y.; Athreya, U.S. “Plants vs. Zombies”—Students’ Experiences with an In-House Pharmacy Serious Game. In Proceedings of the EDULEARN16, Barcelona, Spain, 4–6 July 2016; pp. 9049–9053.

- Blažič, A.J.; Cigoj, P.; Blažič, B.J. Serious game design for digital forensics training. In Proceedings of the Digital Information Processing, Data Mining, and Wireless Communications (DIPDMWC), Moscow, Russia, 6–8 July 2016; pp. 211–215.

- Callies, S.; Sola, N.; Beaudry, E.; Basque, J. An empirical evaluation of a serious simulation game architecture for automatic adaptation. In Proceedings of the 9th European Conference on Games Based Learning, Steinkjer, Norway, 8–9 October 2015; pp. 107–116.

- Martin-Dorta, N.; Sanchez-Berriel, I.; Bravo, M.; Hernández, J.; Saorin, J.L.; Contero, M. Virtual Blocks: A serious game for spatial ability improvement on mobile devices. Multim. Tools Appl. 2014, 73, 1575–1595.

- Duque, G.; Fung, S.; Mallet, L.; Posel, N.; Fleiszer, D. Learning while having fun: The use of video gaming to teach geriatric house calls to medical students. J. Am. Geriatr. Soc. 2008, 56, 1328–1332.

- Malhotra, Y.; Galletta, D. A multidimensional commitment model of volitional systems adoption and usage behavior. J. Manag. Inf. Syst. 2005, 22, 117–151.

- Guo, Y.M.; Klein, B.D. Beyond the test of the four channel model of flow in the context of online shopping. Communic. Assoc. Informat. Systems 2009, 24, 837–856.

- Koufaris, M. Applying the technology acceptance model and flow theory to online consumer behavior. Informat. Systems Res. 2002, 13, 205–223.

- Agarwal, R.; Karahanna, E. Time flies when you’re having fun: Cognitive absorption and beliefs about information technology usage. MIS Q. 2000, 24, 665–694.

- Johnsen, H.M.; Fossum, M.; Vivekananda-Schmidt, P.; Fruhling, A.; Slettebø, Å. Teaching clinical reasoning and decision-making skills to nursing students: Design, development, and usability evaluation of a serious game. Int. J. Med Inform. 2016, 94, 39–48.

- Butler, S.; Ahmed, D.T. Gamification to Engage and Motivate Students to Achieve Computer Science Learning Goals. In Proceedings of the Computational Science and Computational Intelligence (CSCI), Las Vegas, NV, USA, 15–17 December 2016; pp. 237–240.

- Kleinert, R.; Heiermann, N.; Wahba, R.; Chang, D.H.; Hölscher, A.H.; Stippel, D.L. Design, realization, and first validation of an immersive web-based virtual patient simulator for training clinical decisions in surgery. J. Surg. Educ. 2015, 72, 1131–1138.

- Lino, J.E.; Paludo, M.A.; Binder, F.V.; Reinehr, S.; Malucelli, A. Project management game 2D (PMG-2D): A serious game to assist software project managers training. In Proceedings of the Frontiers in Education Conference (FIE), El Paso, TX, USA, 21–24 October 2015; pp. 1–8.

- Lorenzini, C.; Faita, C.; Barsotti, M.; Carrozzino, M.; Tecchia, F.; Bergamasco, M. ADITHO–A Serious Game for Training and Evaluating Medical Ethics Skills. In International Conference on Entertainment Computing; Springer: Cham, Switzerland, 2015; pp. 59–71.

- Witmer, B.G.; Singer, M.J. Measuring presence in virtual environments: A presence questionnaire. Presence 1998, 7, 225–240.

- Brooke, J. SUS-A quick and dirty usability scale. Usability Eval. Ind. 1996, 189, 4–7.

- Dudzinski, M.; Greenhill, D.; Kayyali, R.; Nabhani, S.; Philip, N.; Caton, H.; Gatsinzi, F. The design and evaluation of a multiplayer serious game for pharmacy students. In European Conference on Games Based Learning; Academic Conferences International Limited: Porto, Portugal, 2013; p. 140.

- Canals, P.C.; Font, J.F.; Minguell, M.E.; Regàs, D.C. Design and Creation of a Serious Game: Legends of Girona. In Proceedings of the ICERI2013, Seville, Spain, 18–20 November 2013; pp. 3749–3757.

- Zhang, J.; Walji, M.F. TURF: Toward a unified framework of EHR usability. J. Biomed. Inform. 2011, 44, 1056–1067.

- Boletsis, C.; McCallum, S. Smartkuber: A serious game for cognitive health screening of elderly players. Games Health J. 2016, 5, 241–251.

- Vallejo, V.; Wyss, P.; Rampa, L.; Mitache, A.V.; Müri, R.M.; Mosimann, U.P.; Nef, T. Evaluation of a novel Serious Game based assessment tool for patients with Alzheimer’s disease. PLoS ONE 2017, 12, e0175999.

- Norman, K.L. GEQ (game engagement/experience questionnaire): A review of two papers. Interact. Comput. 2013, 25, 278–283.

- Brockmyer, J.H.; Fox, C.M.; Curtiss, K.A.; McBroom, E.; Burkhart, K.M.; Pidruzny, J.N. The development of the Game Engagement Questionnaire: A measure of engagement in video game-playing. J. Exper. Soc. Psychol. 2009, 45, 624–634.

- Gao, Z.; Zhang, P.; Podlog, L.W. Examining elementary school children’s level of enjoyment of traditional tag games vs. interactive dance games. Psych. Health Med. 2014, 19, 605–613.

- Harrington, B.; O’Connell, M. Video games as virtual teachers: Prosocial video game use by children and adolescents from different socioeconomic groups is associated with increased empathy and prosocial behaviour. Comput. Hum. Behav. 2016, 63, 650–658.

- Zurita-Ortega, F.; Medina-Medina, N.; Chacón-Cuberos, R.; Ubago-Jiménez, J.L.; Castro-Sánchez, M.; González-Valero, G. Validation of the “GAMEPLAY” questionnaire for the evaluation of sports activities. J. Hum. Sport Exerc. 2018, 13, S178–S188.

- Lope, R.P.; Arcos, J.R.; Medina-Medina, N.; Paderewski, P.; Gutiérrez-Vela, F.L. Design methodology for educational games based on graphical notations: Designing Urano. Entertain. Comput. 2017, 18, 1–14.

- Lorenzo-Seva, U.; Ferrando, P.J. FACTOR: A computer program to fit the exploratory factor analysis model. Behav. Res. Methods 2006, 38, 88–91.

- Hu, L.T.; Bentler, P.M. Fit indices in covariance structure modeling: Sensitivity to underparameterized model misspecification. Psychol. Methods 1998, 3, 424–453.

- Schmider, E.; Ziegler, M.; Danay, E.; Beyer, L.; Bühner, M. Is it really robust? Reinvestigating the robustness of ANOVA against violations of the normal distribution assumption. Methodology 2010, 6, 147–151.

- Poppelaars, M.; Lichtwarck-Aschoff, A.; Kleinjan, M.; Granic, I. The impact of explicit mental health messages in video games on players’ motivation and affect. Comput. Hum. Behav. 2018, 83, 16–23.

- Hartanto, A.; Toh, W.X.; Yang, H.J. Context counts: The different implications of weekday and weekend video gaming for academic performance in mathematics, reading, and science. Comput. Educ. 2018, 120, 51–63.

- Fernández-Revelles, A.B.; Zurita-Ortega, F.; Castañeda-Vázquez, C.; Martínez-Martínez, A.; Padial-Ruz, R.; Chacón-Cuberos, R. Videogame rating systems, narrative review. ESHPA Educ. Sport Health Phys. Act. 2018, 2, 62–74.

- Veraksa, A.N.; Bukhalenkova, D.A. Computer game-based technology in the development of preschoolers’ executive functions. Russ. Psychol. J. 2017, 14, 106–132.

- Hainey, T.; Connolly, T.M.; Boyle, E.A.; Wilson, A.; Razak, A. A systematic literature review of games-based learning empirical evidence in primary education. Comput. Educ. 2016, 102, 202–223.

- Turkay, S.; Hoffman, D.; Kinzer, C.K.; Chantes, P.; Vicari, C. Toward understanding the potential of games for learning: Learning theory, game design characteristics, and situating video games in classrooms. Comput. Sch. 2014, 31, 2–22.

- Chen, H.; Sun, H.C. The effects of active videogame feedback and practicing experience on children’s physical activity intensity and enjoyment. Games Health J. 2017, 6, 200–204.

- Thompson, D. Incorporating behavioral techniques into a serious videogame for children. Games Health J. 2017, 6, 75–86.

| Work | Main Objective | Population | Evaluated Attributes | How Is the Usability Evaluated? |
|---|---|---|---|---|
| Johnsen et al. [3] | To obtain the educational value in terms of face, content, and construct validity | Second-year nursing students | Realism/authenticity (face validity), alignment of content with curricula (content validity), ability to meet the learning objectives (construct validity), usability and preferences regarding future use | Learning to use, use, engagement and likeability |
| Tan et al. [5] | To validate the effectiveness of a serious game in improving the knowledge and confidence of nursing students on blood transfusion practice | Second-year nursing students | Effectiveness of learning with the game, immersion, teacher-learner interaction, learner-learner interaction, imagination, motivation, enhanced problem-solving capability | - |
| Dankbaar et al. [2] | To evaluate the effectiveness of serious games compared to more traditional formats such as e-modules | Fourth-year medical students | Knowledge, self-efficacy, motivation, stress and patient safety awareness | - |
| Yap, Yap, Bin Abdol Ghani, Yap, and Athreya [10] | To develop and evaluate an in-house role-playing game simulating various patient encounters in a futuristic post-apocalyptic world | Pharmacy undergraduates | Effectiveness of learning, comparison with lectures, preferences of game elements (storyline, resources, item grants, plot animations, vitality and life bars, experience points), and person perspective (collaborative and competitive aspects) | - |
| Blažič, Cigoj and Blažič [11] | To develop and evaluate a game for digital forensics training, incorporating learnability properties | Postgraduate students | Competency and cognitive abilities (knowledge, comprehension, application), expectations and motivation (confidence, satisfaction, relevance) and interaction and control | Interaction and control (attractiveness, being informed about the learning objectives, stimulating, guidance and feedback) |
| Callies, Sola, Beaudry, and Basque [12] | To evaluate a simulation game developed following a proposed architecture for automatic adaptation | College students | Learning and motivation (feeling of challenge, curiosity, control, feedback, focus, immersion, relevance) | Challenges (difficulty of challenges, sensation of game adaptability, sensation of pressure and fair challenges) and control (if the game permits making different choices) |
| Martin-Dorta et al. [13] | To present and evaluate a novel spatial instruction system for improving spatial abilities of engineering students | Engineering students | Improvement in spatial skills, interest generated by the game, recommendation to others and usability | Help, system speed, navigation through scenes, easy to learn, capable of solving task, interface friendliness |
| Knight et al. [4] | To evaluate the effectiveness of a serious game in the teaching of major incident triage by comparing it with traditional training methods | Medical students | Effectiveness of learning (tagging accuracy, step accuracy and time) | - |
| Duque, Fung, Mallet, Posel and Fleiszer [14] | To create a serious game to teach Geriatric House Calls to medical students | Medical students | Improvement in students’ knowledge using the game, students’ perceptions about the geriatric home visit and the use of video gaming in medical education | - |

| Work | Main Objective | Population | Evaluated Attributes | How Is the Usability Evaluated? |
|---|---|---|---|---|
| Wang et al. [1] | To determine the relations between the perceived usefulness, the perceived ease of use and the perceived goal clarity regarding concentration and enjoyment | College students | Usefulness and ease of use (based on Malhotra and Galletta [15]), goal clarity (based on Guo and Klein [16]), concentration (based on Koufaris [17]) and enjoyment (based on Agarwal and Karahanna [18]) | Ease of learning to use, flexibility of interaction, ease of use, ease of achieving objectives, clarity and comprehensibility of interaction (based on Malhotra and Galletta [15]) |
| Johnsen, Fossum, Vivekananda-Schmidt, Fruhling, and Slettebø [19] | Design, development and usability evaluation of a video-based serious game for teaching clinical reasoning and decision-making | Nursing students | Electronic Health Records Usability (based on Malhotra and Galletta [15]) | Usefulness (accomplishment of goals), usability (easy to learn, easy to use, and error-tolerant) and satisfaction (subjective impression of the users) |
| Butler and Ahmed [20] | To compare the user enjoyment in learning through a serious game for learning Computer Science as opposed to traditional classroom learning | Computer science students | Preferred learning methods, difficulty of Computer Science concepts, motivation using the serious game and fun using the game | - |
| Kleinert et al. [21] | To design and evaluate a simulator for training in surgery | Medical students | Acceptance (fun, frequency of use, use of computers, preferred learning medium), effectiveness and applicability | Ease of learning, ease of use and overall impression |
| Lino et al. [22] | To design and evaluate a serious educational game that aims to help train inexperienced software project managers | Software development professionals | Relevance, confidence, satisfaction, immersion, challenge, skills and competence, fun and knowledge (phases of the game and matters of software project management, motivation) | Difficulty in understanding the game, difficulty of activities, difficulty of materials and content (confidence) |
| Lorenzini, Faita, Barsotti, Carrozzino, Tecchia, and Bergamasco [23] | To provide and evaluate a technological tool for both evaluating and training ethical skills of medical staff personnel | Hospital residents | Sense of presence (control, sensory, distraction, realism) (based on Witmer and Singer [24]) and usability | System Usability Scale (SUS) (Brooke [25]) |
| Dudzinski et al. [26] | To identify a successful game design for a serious multiplayer game to be used in learning | Pharmacy students | Game preferences, games usage, study patterns, views about educational games and learning, and opinion about a prototype of a serious game about pharmacy challenges | Usefulness, interactivity, confidence, speed and feedback |
| Canals, Font, Minguell and Regàs [27] | To create and evaluate a serious game called “Legends of Girona” to be used in the classroom as a teaching resource | Young people (between 10 and 16 years) | Aspects of the game: images, graphics, scenes, characters, game plot, graphics, voices, music, texts; and use of the game | How the game works, playability, game stability, use of commands and controls, instructions given, speed, treatment of failures |

| Gameplay-Scale Item | Mean (M) | Standard Deviation (SD) | Variance (V) | Asymmetry (A) | Kurtosis (K) |
|---|---|---|---|---|---|
| V01. Indicate the degree to which it has been easy for you to learn to play | 3.56 | 0.898 | 0.807 | −0.185 | 0.250 |
| V02. Indicate the degree to which it has been easy for you to play | 3.46 | 0.875 | 0.766 | −0.112 | 0.536 |
| V03. Indicate to what extent you liked the artistic part of the game (graphics) | 3.49 | 1.072 | 1.149 | −0.315 | −0.553 |
| V04. Indicate to what extent you liked the artistic part of the game (sounds) | 3.20 | 1.132 | 1.281 | −0.229 | −0.527 |
| V05. Indicate how much you liked the story told in the game | 3.73 | 1.118 | 1.251 | −0.633 | −0.340 |
| V06. Indicate how much you liked the characters in the game | 3.25 | 1.110 | 1.232 | −0.308 | −0.516 |
| V07. Indicate how much you liked the challenges of the game | 3.68 | 1.072 | 1.149 | −0.589 | −0.320 |
| V08. Indicate to what extent you liked the way you interact with the game (pick and use objects, dialogue with characters, etc.) | 3.34 | 1.056 | 1.114 | −0.318 | −0.318 |
| V09. Indicate how much you liked the way the game is scored (using stickers) | 3.33 | 1.090 | 1.188 | −0.308 | −0.476 |
| V10. In summary, indicate to what extent you liked the game | 3.81 | 1.046 | 1.095 | −0.664 | −0.025 |
| V11. Indicate to what extent you understood how the protagonist feels | 3.52 | 0.972 | 0.946 | −0.416 | −0.126 |
| V12. Indicate to what extent you identified with the actions of the protagonist | 2.97 | 1.219 | 1.486 | 0.014 | −0.910 |
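
The descriptive table can be checked for internal consistency: if the V column is the variance, it should equal the square of the SD column up to rounding. The Python sketch below assumes that reading of the column labels, which the table itself does not spell out. The names `rows` and `variance_matches` are hypothetical, and the tolerance of 0.002 is an illustrative choice meant to absorb three-decimal rounding.

```python
# (SD, V) pairs copied from the descriptive table, items V01 to V12.
rows = {
    "V01": (0.898, 0.807),
    "V02": (0.875, 0.766),
    "V03": (1.072, 1.149),
    "V04": (1.132, 1.281),
    "V05": (1.118, 1.251),
    "V06": (1.110, 1.232),
    "V07": (1.072, 1.149),
    "V08": (1.056, 1.114),
    "V09": (1.090, 1.188),
    "V10": (1.046, 1.095),
    "V11": (0.972, 0.946),
    "V12": (1.219, 1.486),
}

# Assumed tolerance: SD and V are both rounded to three decimals.
TOL = 0.002


def variance_matches(sd: float, var: float, tol: float = TOL) -> bool:
    """Return True when SD squared agrees with the reported variance."""
    return abs(sd ** 2 - var) <= tol


# Collect every item whose reported variance disagrees with SD squared.
mismatches = [item for item, (sd, var) in rows.items()
              if not variance_matches(sd, var)]
print(mismatches)  # prints []
```

An empty list means every reported variance agrees with its standard deviation within the assumed rounding tolerance.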

| Variables | Rotated Factor Matrix: F1 | Rotated Factor Matrix: F2 | Rotated Factor Matrix: F3 | Load Factor Dimensions of GAMEPLAY-SCALE: F1 | Load Factor Dimensions of GAMEPLAY-SCALE: F2 | Load Factor Dimensions of GAMEPLAY-SCALE: F3 |
|---|---|---|---|---|---|---|
| V01 | −0.104 | 0.842 | −0.027 | | 0.842 | |
| V02 | −0.022 | 0.660 | −0.013 | | 0.660 | |
| V03 | 0.217 | 0.023 | 0.559 | | | 0.559 |
| V04 | −0.079 | 0.071 | 0.623 | | | 0.623 |
| V05 | 0.074 | 0.035 | 0.712 | | | 0.712 |
| V06 | 0.134 | 0.020 | 0.566 | | | 0.566 |
| V07 | −0.128 | −0.081 | 0.831 | | | 0.831 |
| V08 | 0.052 | 0.081 | 0.606 | | | 0.606 |
| V09 | −0.007 | −0.083 | 0.699 | | | 0.699 |
| V10 | 0.233 | −0.029 | 0.632 | | | 0.632 |
| V11 | 0.469 | 0.046 | 0.127 | 0.469 | | |
| V12 | 1.051 | −0.028 | −0.311 | 1.051 | | |
| Alpha (0.868) | 0.712 | 0.702 | 0.886 | | | |

| Relationship between Variables | R.W. Estimate | R.W. S.E. | R.W. C.R. | R.W. P | S.R.W. Estimate |
|---|---|---|---|---|---|
| I.1 ← USA | 1.000 | - | - | *** | 0.681 |
| I.2 ← USA | 1.136 | 0.399 | 2.847 | * | 0.794 |
| I.3 ← SAT | 1.000 | - | - | *** | 0.760 |
| I.4 ← SAT | 0.783 | 0.065 | 12.113 | *** | 0.564 |
| I.5 ← SAT | 1.091 | 0.062 | 17.710 | *** | 0.795 |
| I.6 ← SAT | 0.935 | 0.062 | 15.015 | *** | 0.686 |
| I.7 ← SAT | 0.893 | 0.060 | 14.832 | *** | 0.679 |
| I.8 ← SAT | 0.851 | 0.059 | 14.315 | *** | 0.657 |
| I.9 ← SAT | 0.856 | 0.062 | 13.913 | *** | 0.640 |
| I.10 ← SAT | 1.071 | 0.057 | 18.682 | *** | 0.834 |
| I.11 ← EMP | 1.000 | - | - | *** | 0.656 |
| I.12 ← EMP | 1.320 | 0.124 | 10.605 | *** | 0.690 |
| EMP ↔ SAT | 0.414 | 0.046 | 9.076 | *** | 0.800 |
| SAT ↔ USA | 0.090 | 0.036 | 2.524 | * | 0.181 |
| EMP ↔ USA | 0.056 | 0.030 | 1.867 | * | 0.144 |
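
In structural equation modelling output, the critical ratio (C.R.) is conventionally the unstandardized estimate divided by its standard error (S.E.). The table does not define C.R., so the Python sketch below assumes that convention and checks whether the reported values are consistent with it. The names `paths`, `critical_ratio` and `inconsistent_paths` are hypothetical, and the 2% relative tolerance is an illustrative allowance for the three-decimal rounding of S.E.

```python
# (estimate, S.E., reported C.R.) copied from the regression-weight table.
# Paths fixed at 1.000 (I.1, I.3, I.11) report no S.E. and are omitted.
paths = {
    "I.2 <- USA": (1.136, 0.399, 2.847),
    "I.4 <- SAT": (0.783, 0.065, 12.113),
    "I.5 <- SAT": (1.091, 0.062, 17.710),
    "I.6 <- SAT": (0.935, 0.062, 15.015),
    "I.7 <- SAT": (0.893, 0.060, 14.832),
    "I.8 <- SAT": (0.851, 0.059, 14.315),
    "I.9 <- SAT": (0.856, 0.062, 13.913),
    "I.10 <- SAT": (1.071, 0.057, 18.682),
    "I.12 <- EMP": (1.320, 0.124, 10.605),
    "EMP <-> SAT": (0.414, 0.046, 9.076),
    "SAT <-> USA": (0.090, 0.036, 2.524),
    "EMP <-> USA": (0.056, 0.030, 1.867),
}

# Assumed tolerance: the table rounds S.E. to three decimals.
REL_TOL = 0.02


def critical_ratio(estimate: float, std_error: float) -> float:
    """Assumed convention: C.R. = estimate / standard error."""
    return estimate / std_error


def inconsistent_paths(table: dict, rel_tol: float = REL_TOL) -> list:
    """Return the paths whose reported C.R. differs from estimate / S.E."""
    bad = []
    for name, (est, se, reported_cr) in table.items():
        if abs(critical_ratio(est, se) - reported_cr) > rel_tol * reported_cr:
            bad.append(name)
    return bad


print(inconsistent_paths(paths))  # prints []
```

Under these assumptions every reported C.R. is reproduced by the ratio of estimate to standard error, with the small residual differences attributable to rounding.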

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Zurita Ortega, F.; Medina Medina, N.; Gutiérrez Vela, F.L.; Chacón Cuberos, R. Validation and Psychometric Properties of the Gameplay-Scale for Educative Video Games in Spanish Children. Sustainability 2020, 12, 2283. https://doi.org/10.3390/su12062283