On Decision Makers’ Perceptions of What an Ecological Computer Model is, What It Does, and Its Impact on Limiting Model Acceptance

Abstract

1. Introduction

2. Material and Methods

3. Results

- An ecological computer model is perceived as a ‘black box’ whose functioning is poorly understood.

- Poor quality input data makes ecological computer models unreliable. Data may be poorly documented, sparse, out of date, or collected in a location of poor ecological and geographical relevance.

- Model results may not be trusted because ecological computer models can be manipulated to produce any outcome.

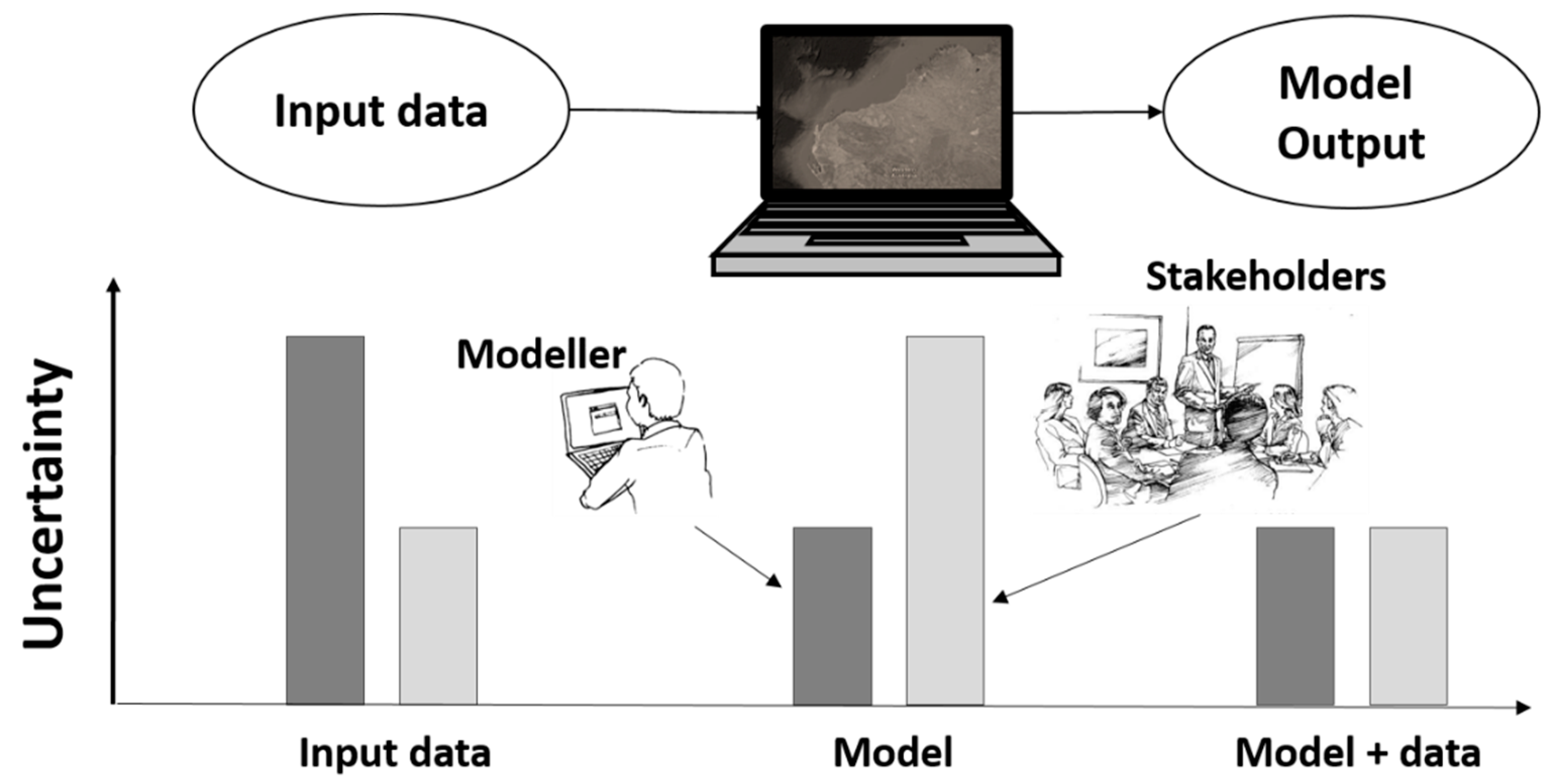

3.1. A Computer Model is a ‘Black Box’

3.2. Poor Quality Input Data Makes Computer Models Unreliable

3.3. Lack of Trust, Models Can Be Manipulated to Produce Any Outcome

4. Discussion

4.1. An Issue of Communication about Models?

4.2. Addressing Concerns about Computer Models

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Conflicts of Interest

| Participant | Responses |
|---|---|
| RP1 | “... a little computer... bits of information going in, all the bits of information are synthesised, brought together and then you use that to come up with answers or something”. |
| RP3 | “a tool which is computer-based and it’s mathematically driven I suspect. It’s a mathematically contrived algorithm which has been designed to help answer some questions. … A lot of people look at [a model] as a black box. They haven’t got a clue what’s in that black box.” |
| RP6 | “... almost an equation that has a whole series of inputs, and functions or factors that get inputted and spits out an answer at the other end”. |
| RP7 | “Computer modellers use as much information, or data, as they can get their hands on, to make predictions about different scenarios going into the future.” |
| M3 | “Models can get very complicated very quickly. People [are] unable to understand all the linkages in a complicated system”. |

| Participant | Responses |
|---|---|
| RP1 | “A good model relies on good information, so if you don’t have good information going in, you’re not going to have a good answer coming out.” |
| RP3 | “… a [model] output’s only as good as the input”. |
| RP4 | “It all depends on how good the information is going in.” |
| RP5 | “The level of data that you put in gives an indication of the accuracy of the outputs … it’s all about the data that goes into it.” |
| RP6 | “My biggest concern with models is … how recent is the data that has gone in ... models are fantastic when they are all fresh and new … 10 years ago the data might have been fantastic, today it’s out of date.” |
| M1 | “I am sceptical of models using only limited or surrogate data.” |
| M2 | “Limited data can make them [ecological models] difficult to interpret correctly.” |
| M4 | “Data must be of high quality for applied models.” |
| RS3 | “Quality data is potentially very important [for model confidence], especially if outputs are spurious.” |

| Participant | Responses |
|---|---|
| RP1 | “If people have a vested interest in wanting a decision and it is not the kind of information that the model’s supporting, they’re going to try and take the model less seriously.” |
| RP4 | “... you can get such big differences in the results depending on which group does it and which factors you use and all those sorts of things I think [a model] can be misused, and managers might be tempted, and contractors might be tempted to find the right answer, or the answer they think people want ...” |
| RP5 | “I’ve seen people in senior positions look at a model, disagree with the outputs and say that it’s providing the wrong scenario and then to ignore the whole modelling rather than to tweak the inputs and make them more accurate.” |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Boschetti, F.; Hughes, M.; Jones, C.; Lozano-Montes, H. On Decision Makers’ Perceptions of What an Ecological Computer Model is, What It Does, and Its Impact on Limiting Model Acceptance. Sustainability 2018, 10, 2767. https://doi.org/10.3390/su10082767