Adaptive Multidimensional Model for User Interface Quality Assessment

Abstract

1. Introduction

2. Related Work

3. Methodological Approach

3.1. Phase I: Conceptual Foundation and Dimensional Structuring

3.2. Phase II: Formal Specification and Calibration Logic

3.3. Phase III: Model Illustration and Functional Demonstration

4. Concept of an Adaptive Multidimensional Model

- Multidimensionality: the evaluation considers several interrelated dimensions;

- Integration of heterogeneous evaluation inputs: objective and subjective indicators are combined, because neither type is sufficient on its own;

- Adaptivity: the model adjusts evaluation priorities based on user characteristics.

4.1. Dimensions of the Model

4.1.1. Functional–Objective Dimension

- Interaction Efficiency quantifies the effort required to achieve goals, a core component of usability as defined by ISO 9241-11 [13];

- Reliability captures interface robustness and error-prevention capabilities, which are critical for maintaining user trust [30];

- Interface Consistency reflects the principle that predictable system behavior reduces learning effort and cognitive workload [3].

Methods for Functional–Objective Metrics Assessment

4.1.2. Cognitive–Perceptual Dimension

- Usability & Intuitiveness captures perceived ease of use and self-efficacy, which are key predictors of system acceptance [1];

- Clarity & Comprehensibility addresses the cognitive effort required to process information and relates to perceived cognitive load [35];

- Structural & Feedback Quality evaluates system transparency and the effectiveness of feedback mechanisms [30];

- Aesthetic & Emotional Satisfaction reflects the Aesthetic–Usability Effect, where visual appeal influences perceived quality and overall satisfaction [17].

Methods for Cognitive–Perceptual Metrics Assessment

4.1.3. Contextual–Individual Dimension (User-Centered)

- Domain Experience—prior knowledge of the subject matter or business logic, reflecting the maturity of users’ mental models [30];

- Digital Literacy—general proficiency with interface conventions and technological self-efficacy [1];

- Task-Context Complexity—typical complexity of performed tasks and the characteristics of the interaction environment (e.g., mobile vs. desktop) [31].

Methods for Contextual–Individual Metrics Assessment

4.2. Integrated Evaluation and Interpretation

- Personalized assessment of interface quality that reflects profile-specific sensitivities to different usability factors;

- Systematic comparison of interface performance across distinct user groups, highlighting where design solutions benefit or disadvantage particular profiles;

- Data-driven identification of interface elements that may require adaptation or redesign for specific user segments.

5. Formal Definition of the Proposed Model

5.1. Indicator Sets

- The set of objective indicators (e.g., task completion time, error rate);

- The set of subjective indicators (e.g., perceived usability, visual simplicity, satisfaction);

- The set of user-centered indicators (e.g., domain experience, digital literacy, task-context complexity).

5.2. Normalization of Indicators

5.3. Determination of Minimum and Maximum Values
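As a minimal sketch, assuming the normalization in Section 5.2 is min-max scaling to [0, 1] (which the two section headings suggest but this text does not confirm), the scaling and the choice of bounds could look as follows. The function names, the clipping of out-of-range values, and the sample data are hypothetical.

```python
from typing import Sequence, Tuple


def min_max_normalize(value: float, lo: float, hi: float,
                      higher_is_better: bool = True) -> float:
    """Scale a raw indicator to [0, 1]; invert cost-type indicators (time, errors)."""
    if hi <= lo:
        raise ValueError("hi must be greater than lo")
    clipped = min(max(value, lo), hi)  # keep out-of-range observations inside the bounds
    scaled = (clipped - lo) / (hi - lo)
    return scaled if higher_is_better else 1.0 - scaled


def empirical_bounds(observations: Sequence[float]) -> Tuple[float, float]:
    """Take the bounds from observed data (assumption: fixed theoretical bounds are an alternative)."""
    return min(observations), max(observations)


# Hypothetical task completion times in seconds; lower is better.
times = [30.0, 45.0, 60.0, 90.0]
lo, hi = empirical_bounds(times)
normalized = [min_max_normalize(t, lo, hi, higher_is_better=False) for t in times]
```

Inverting cost-type indicators keeps every normalized value on the same "higher is better" scale, so that later aggregation does not need to track the direction of each indicator.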

5.4. Context-Dependent User Representation and Profiling

5.5. Hierarchical Indicator Aggregation

5.6. Integrated Assessment Function

- The user profile (e.g., Beginner, Intermediate, Advanced);

- The adaptive weights assigned to each composite index, determined as a function of the user profile.
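As a hedged illustration, one form consistent with the description above is a profile-weighted sum over normalized composite indices. All symbols here are assumed for illustration and are not the original notation: $p$ is the user profile, $I^{O}_{i}$ and $I^{S}_{j}$ are the normalized objective and subjective composite indices, $w^{O}_{i}(p)$ and $w^{S}_{j}(p)$ are the adaptive weights, and $\alpha \in [0, 1]$ is a fixed balance between the objective and subjective parts.

$$
Q(p) = \alpha \sum_{i=1}^{m} w^{O}_{i}(p)\, I^{O}_{i} + (1 - \alpha) \sum_{j=1}^{n} w^{S}_{j}(p)\, I^{S}_{j},
\qquad \sum_{i=1}^{m} w^{O}_{i}(p) = \sum_{j=1}^{n} w^{S}_{j}(p) = 1 .
$$

Under these assumptions, $Q(p)$ stays in $[0, 1]$ whenever every index does, which keeps scores comparable across profiles.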

5.7. Calibration and Operationalization Principles

- Level 1: Baseline configuration

- Level 2: Expert-informed refinement

- Level 3: Data-driven refinement
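The Level 2 step can be sketched with the Analytic Hierarchy Process, which appears in the abbreviation list and reference list. Whether AHP is the method intended for expert-informed refinement is an assumption. The sketch uses the row geometric-mean approximation of AHP priorities rather than the principal eigenvector, and the comparison matrix is hypothetical.

```python
import math
from typing import List


def ahp_weights(pairwise: List[List[float]]) -> List[float]:
    """Approximate AHP priority weights with the row geometric-mean method."""
    n = len(pairwise)
    if n == 0 or any(len(row) != n for row in pairwise):
        raise ValueError("pairwise comparison matrix must be square and non-empty")
    geo_means = [math.prod(row) ** (1.0 / n) for row in pairwise]
    total = sum(geo_means)
    return [g / total for g in geo_means]


# Hypothetical expert judgments for three objective composite indices:
# efficiency is rated 2x as important as reliability and 4x as important as consistency.
comparisons = [
    [1.0, 2.0, 4.0],   # Interaction Efficiency
    [0.5, 1.0, 2.0],   # Reliability
    [0.25, 0.5, 1.0],  # Interface Consistency
]
weights = ahp_weights(comparisons)  # roughly 0.571, 0.286, 0.143
```

For a perfectly consistent matrix such as this one, the geometric-mean weights coincide with the eigenvector solution. With real expert input, a consistency check would normally precede their use.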

Synthesis of Calibration Levels

6. Illustrative Example: Profile-Dependent Weight Configuration

- Beginner users—greater emphasis on clear visual cues and straightforward interaction patterns. Higher weights may be assigned to clarity, intuitiveness, perceptual comfort, and aesthetic support to facilitate orientation during early interactions.

- Intermediate users—a more balanced distribution of priorities. With moderate familiarity, both usability-related and efficiency-related criteria remain relevant.

- Advanced users—stronger emphasis on efficiency and performance-oriented factors, such as rapid task completion and streamlined interaction flows. Cognitive–Perceptual factors may retain a secondary, but still meaningful, role.
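The three profiles above can be illustrated with a small weight table. This is a sketch only: the numeric weights, the selection of four composite indices, and the names `PROFILE_WEIGHTS` and `integrated_score` are hypothetical, and a plain weighted sum is assumed as the aggregation rule.

```python
from typing import Dict

# Hypothetical weights per profile over four composite indices; each row sums to 1.
PROFILE_WEIGHTS: Dict[str, Dict[str, float]] = {
    "Beginner": {"interaction_efficiency": 0.15, "reliability": 0.15,
                 "clarity_comprehensibility": 0.40, "aesthetic_emotional": 0.30},
    "Intermediate": {"interaction_efficiency": 0.25, "reliability": 0.25,
                     "clarity_comprehensibility": 0.25, "aesthetic_emotional": 0.25},
    "Advanced": {"interaction_efficiency": 0.40, "reliability": 0.30,
                 "clarity_comprehensibility": 0.20, "aesthetic_emotional": 0.10},
}


def integrated_score(indices: Dict[str, float], profile: str) -> float:
    """Weighted sum of normalized composite indices (each in [0, 1]) for one profile."""
    weights = PROFILE_WEIGHTS[profile]
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError(f"weights for {profile} must sum to 1")
    return sum(weights[name] * indices[name] for name in weights)


# Hypothetical interface: efficient and robust, but visually dense.
interface = {"interaction_efficiency": 0.9, "reliability": 0.8,
             "clarity_comprehensibility": 0.5, "aesthetic_emotional": 0.4}
scores = {profile: integrated_score(interface, profile) for profile in PROFILE_WEIGHTS}
```

With these made-up numbers, the same interface scores lowest for Beginner and highest for Advanced, which is the kind of profile-dependent divergence the weighting is meant to expose.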

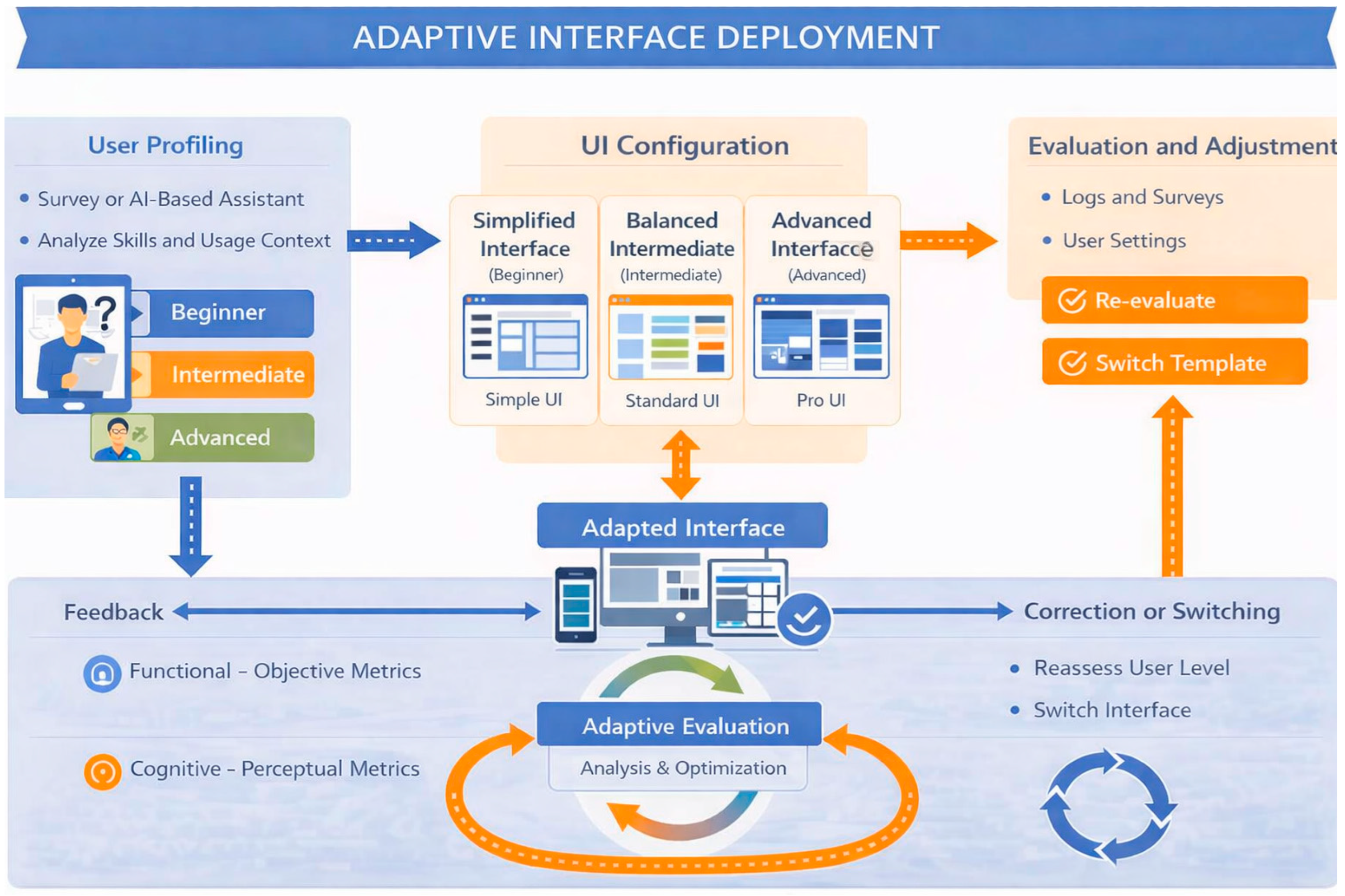

7. Illustrative Scenario: Dynamic Interface Adaptation

7.1. Adaptation Pathways

- Automatic Profile Inference—behavioral data are analyzed to infer the user’s profile and deploy the most suitable UI configuration.

- User-Controlled Configuration—users may manually select or adjust their preferred UI configuration, ensuring transparency and preserving autonomy in environments where personal preference or professional expertise is critical.

- Adviser-Based Suggestions—a recommendation mechanism periodically evaluates accumulated logs and survey responses. When behavioral evidence suggests that another configuration may better support the user, the system proposes trying an alternative template.

- Initial Onboarding—for first-time users, the system may rely on self-reported experience, goals, and preferences to generate an initial profile before behavioral data become available.
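The four pathways above can be combined in a single selection routine. The sketch below is one possible arrangement, not the described system: the thresholds, the `BehaviorSummary` fields, and the precedence order (manual choice, then behavioral inference, then onboarding data) are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional

MIN_SESSIONS = 5  # hypothetical number of sessions before behavioral inference is trusted


@dataclass
class BehaviorSummary:
    sessions: int
    error_rate: float      # errors per task, in [0, 1]
    shortcut_ratio: float  # share of actions performed via shortcuts, in [0, 1]


def infer_profile(b: BehaviorSummary) -> str:
    """Rule-based placeholder for automatic profile inference (illustrative thresholds)."""
    if b.error_rate < 0.05 and b.shortcut_ratio > 0.5:
        return "Advanced"
    if b.error_rate < 0.15:
        return "Intermediate"
    return "Beginner"


def select_profile(behavior: Optional[BehaviorSummary],
                   self_reported: str,
                   user_override: Optional[str] = None) -> str:
    """Pick the active profile: manual choice wins, then behavior, then onboarding data."""
    if user_override is not None:
        return user_override        # user-controlled configuration
    if behavior is None or behavior.sessions < MIN_SESSIONS:
        return self_reported        # initial onboarding
    return infer_profile(behavior)  # automatic profile inference


def suggest_switch(current: str, behavior: BehaviorSummary) -> Optional[str]:
    """Adviser-based suggestion: return a proposal, never apply it silently."""
    inferred = infer_profile(behavior)
    return inferred if inferred != current else None
```

Returning a proposal from `suggest_switch` instead of switching directly keeps the final decision with the user, in line with the emphasis on transparency and autonomy.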

7.2. Dynamic Deployment and Feedback Loop

8. Discussion and Implications

8.1. Comparison with Related Work

8.2. Practical and Design Implications

8.3. Limitations and Validation Roadmap

- Experimental Design and Data Collection: A representative interface and task set will be selected to enable the collection of objective performance metrics and subjective assessments.

- User Profiling and Model Application: Participants will be assigned to profiles based on their experience levels. To isolate the effect of adaptive profiling, the framework will be tested in two configurations: an adaptive version (using profile-dependent weighting) and a non-adaptive baseline (using fixed weights). In both cases, the balance between objective and subjective metrics will remain identical.

- Evaluation and Comparative Analysis: This phase will examine the evaluation outcomes to assess differences across user groups and the alignment between model outputs and users’ overall quality judgments. The goal is to determine whether adaptive weighting yields more consistent and profile-sensitive interpretations of interface quality compared to fixed schemes.
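The comparative analysis could, for example, measure how closely each configuration's output tracks participants' overall quality judgments. The sketch below uses Pearson correlation as the alignment measure, which is an assumption. The data are made-up numbers chosen only to exercise the function and are not results.

```python
import math
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation between two equal-length samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two samples of equal length >= 2")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / (sx * sy)


# Hypothetical data: overall quality ratings rescaled to [0, 1], and the model
# outputs for the same participants under the two configurations.
user_judgments = [0.2, 0.4, 0.6, 0.8]
adaptive_scores = [0.25, 0.45, 0.55, 0.85]
fixed_scores = [0.50, 0.40, 0.60, 0.55]

alignment_adaptive = pearson(user_judgments, adaptive_scores)
alignment_fixed = pearson(user_judgments, fixed_scores)
```

A rank-based measure such as Spearman correlation may be preferable when the judgments come from ordinal rating scales.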

8.4. Summary of Contribution

9. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| UI | User Interface |
| HCI | Human–Computer Interaction |
| KLM | Keystroke-Level Model |
| MOS | Mean Opinion Score |
| AMM | Adaptive Multidimensional Model |
| UX | User Experience |
| QoE | Quality of Experience |
| SUS | System Usability Scale |
| PSSUQ | Post-Study System Usability Questionnaire |
| UEQ | User Experience Questionnaire |
| MCDM | Multi-Criteria Decision-Making |
| AHP | Analytic Hierarchy Process |
| PACT | People, Activities, Contexts, Technologies |
| NASA-TLX | NASA Task Load Index |
| GOMS | Goals, Operators, Methods, and Selection rules |
| NHPP | Non-Homogeneous Poisson Process |
| AI | Artificial Intelligence |

References

- Davis, F.D. Perceived Usefulness, Perceived Ease of Use, and User Acceptance of Information Technology. MIS Q. 1989, 13, 319–340.

- Sonderegger, A.; Sauer, J. The influence of design aesthetics in usability testing: Effects on user performance and perceived usability. Appl. Ergon. 2010, 41, 403–410.

- Nielsen, J. Usability Engineering; Morgan Kaufmann: San Diego, CA, USA, 1993.

- Card, S.K.; Moran, T.P.; Newell, A. The Psychology of Human–Computer Interaction; Lawrence Erlbaum Associates: Hillsdale, NJ, USA, 1983.

- Bevan, N. Extending quality in use to provide a framework for usability measurement. In Human Centered Design; Springer: Berlin/Heidelberg, Germany, 2009; pp. 13–22.

- Raake, A.; Egger, S. Quality and Quality of Experience. In Quality of Experience: Advanced Concepts, Applications and Methods; Möller, S., Raake, A., Eds.; Springer: Cham, Switzerland, 2014; pp. 11–33.

- Möller, S.; Raake, A.; Fiedler, M.; Hossfeld, T.; Keimel, C.; Schatz, R. Towards a Holistic View of Quality of Experience. Dagstuhl Manifesto. In Dagstuhl Reports; Schloss Dagstuhl–Leibniz Center for Informatics: Wadern, Germany, 2017.

- Vermeeren, A.P.; Law, E.L.C.; Roto, V.; Obrist, M.; Hoonhout, J.; Väänänen-Vainio-Mattila, K. User experience evaluation methods: Current state and development needs. In Proceedings of the 6th Nordic Conference on Human-Computer Interaction; ACM: Reykjavik, Iceland, 2010; pp. 521–530.

- Purificato, I.; Narducci, F.; Musto, C.; Semeraro, G. User Modeling and Profiling: A Comprehensive Survey. arXiv 2024, arXiv:2402.09660.

- Ntoa, S. Usability and User Experience Evaluation in Intelligent Environments: A Review and Reappraisal. Int. J. Hum.-Comput. Interact. 2024, 41, 2829–2858.

- Fitts, P.M. The information capacity of the human motor system in controlling the amplitude of movement. J. Exp. Psychol. 1954, 47, 381–391.

- Hick, W.E. On the rate of gain of information. Q. J. Exp. Psychol. 1952, 4, 11–26.

- ISO 9241-11:2018; Ergonomics of Human–System Interaction—Part 11: Usability: Definitions and Concepts. ISO: Geneva, Switzerland; IEC: Geneva, Switzerland, 2018.

- Bangor, A.; Kortum, P.; Miller, J. Determining What Individual SUS Scores Mean: Adding an Adjective Rating Scale. J. Usability Stud. 2009, 4, 114–123.

- Lewis, J.R. Psychometric evaluation of the PSSUQ using data from five years of usability studies. Int. J. Hum.-Comput. Interact. 2002, 14, 463–488.

- Hart, S.; Staveland, L. Development of NASA TLX (Task Load Index): Results of empirical and theoretical research. In Human Mental Workload; Hancock, P.A., Meshkati, N., Eds.; North Holland: Amsterdam, The Netherlands, 1988; pp. 139–183.

- Tractinsky, N.; Katz, A.S.; Ikar, D. What is beautiful is usable: The impact of website aesthetics on perceived usability. Interact. Comput. 2000, 13, 127–145.

- Hassenzahl, M. User experience (UX): Towards an experiential perspective on product quality. In IHM’08: Proceedings of the 20th Conference on l’Interaction Homme-Machine; Association for Computing Machinery: New York, NY, USA, 2008.

- Borsci, S.; Federici, S.; Malizia, A. A Multidimensional Approach to Usability and UX Evaluation: A Critical Review. Behav. Inf. Technol. 2023, 42, 389–407.

- Mortazavi, E.; Doyon-Poulin, P.; Imbeau, D.; Taraghi, M.; Robert, J.-M. Exploring the Landscape of UX Subjective Evaluation Tools and UX Dimensions: A Systematic Literature Review (2010–2021). Interact. Comput. 2024, 36, iwae017.

- Saaty, T.L. The Analytic Hierarchy Process; McGraw-Hill: New York, NY, USA, 1980.

- OECD. Handbook on Constructing Composite Indicators: Methodology and User Guide; OECD Publishing: Paris, France, 2008.

- Triantaphyllou, E. Multi-Criteria Decision Making Methods: A Comparative Study; Kluwer Academic Publishers: Boston, MA, USA, 2000.

- Brdnik, A.; Heričko, M.; Šumak, B. Intelligent user interfaces and their evaluation: A systematic mapping study. Sensors 2022, 22, 5830.

- Carrera-Rivera, A.; Larrinaga, F.; Lasa, G.; Martínez-Arellano, G.; Unamuno, G. AdaptUI: A Framework for the Development of Adaptive User Interfaces in Smart Product–Service Systems. User Model. User-Adapt. Interact. 2024, 34, 1929–1980.

- Knijnenburg, B.P.; Willemsen, M.C.; Gantner, Z.; Soncu, H.; Newell, C. Evaluating Recommender Systems with User Experiments. In Recommender Systems Handbook; Ricci, F., Rokach, L., Shapira, B., Eds.; Springer: Boston, MA, USA, 2015; pp. 309–352.

- Wang, G.; Zhang, X.; Tang, S.; Zheng, H.; Zhao, B.Y. Unsupervised Clickstream Clustering for User Behavior Analysis. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems (CHI ’16), San Jose, CA, USA, 7–12 May 2016; ACM: New York, NY, USA, 2016; pp. 225–236.

- Hosmer, D.W.; Lemeshow, S.; Sturdivant, R.X. Applied Logistic Regression, 3rd ed.; Wiley: Hoboken, NJ, USA, 2013.

- Duda, R.O.; Hart, P.E.; Stork, D.G. Pattern Classification, 2nd ed.; Wiley: New York, NY, USA, 2001.

- Shneiderman, B.; Plaisant, C.; Cohen, M.; Jacobs, S.; Elmqvist, N.; Diakopoulos, N. Designing the User Interface: Strategies for Effective Human–Computer Interaction, 6th ed.; Pearson: Boston, MA, USA, 2016.

- Benyon, D. Designing Interactive Systems: A Comprehensive Guide to HCI, UX and Interaction Design, 4th ed.; Pearson Education Limited: Harlow, UK, 2019.

- Sauro, J.; Lewis, J.R. Quantifying the User Experience: Practical Statistics for User Research, 2nd ed.; Morgan Kaufmann: Cambridge, MA, USA, 2016.

- Subramaniam, H.; Zulzalil, H. Software quality assessment using flexibility: A systematic literature review. Int. Rev. Comput. Softw. 2012, 7, 5.

- Bass, L.; Clements, P.; Kazman, R. Software Architecture in Practice, 4th ed.; Addison-Wesley Professional: Boston, MA, USA, 2021.

- Sweller, J.; Ayres, P.; Kalyuga, S. Cognitive Load Theory; Springer: New York, NY, USA, 2011.

- Musa, J.D.; Iannino, A.; Okumoto, K. Software Reliability: Measurement, Prediction, Application; McGraw Hill: New York, NY, USA, 1987.

- Lyu, M.R. (Ed.) Handbook of Software Reliability Engineering; McGraw Hill: New York, NY, USA, 1996.

- Nielsen, J.; Molich, R. Heuristic evaluation of user interfaces. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Washington, DC, USA, 1–5 April 1990; pp. 249–256.

- Luo, X.; Li, Y.; Xu, J.; Zheng, Z.; Ying, F.; Huang, G. AI in medical questionnaires: Scoping review. J. Med. Internet Res. 2025, 27, e72398.

- Streijl, R.; Winkler, S.; Hands, D. Mean opinion score (MOS) revisited: Methods and applications, limitations and alternatives. Multimed. Syst. 2016, 22, 213–227.

| Approach/Model | Objective Indicators | Subjective Indicators | User Profiles | Adaptive Weighting | Multidimensional Structure |
|---|---|---|---|---|---|
| Classical Usability Metrics | ✔ | ✖ | ✖ | ✖ | ✖ |
| UX Questionnaire-Based Evaluation | ✖ | ✔ | ✖ | ✖ | ✖ |
| Composite UX/QoE Models | ✔ | ✔ | ✖ | ✖ | ✔ |
| Adaptive UI Systems | ✖ | ✖ | ✔ | ✔ | ✖ |
| Proposed Framework | ✔ | ✔ | ✔ | ✔ | ✔ |

| Composite Indices | Sub-Metrics | Description |
|---|---|---|
| Interaction Efficiency | Task completion time, Number of steps, Actions per task | Measures how quickly and easily users can complete tasks, minimizing effort and redundant actions |
| Reliability | Error rate, System failures, Incorrect states | Captures the frequency of errors and system instability, reflecting robustness of the interface |
| Interface Consistency | Repeatability of elements, Uniform behavior across screens | Evaluates whether design elements behave predictably, providing a coherent experience |

| Composite Indices | Sub-Metrics | Description |
|---|---|---|
| Usability & Intuitiveness | Usability, Perceived ease of use, Intuitiveness | Measures how naturally and predictably users can interact with the interface |
| Clarity & Comprehensibility | Clarity, Visual simplicity, Cognitive load | Evaluates how easily users understand information and navigate the interface without unnecessary mental effort |
| Structural & Feedback Quality | Logical hierarchy, Structural organization, Adequacy of feedback | Assesses how well the interface organizes content and communicates system status to the user |
| Aesthetic & Emotional Satisfaction | Emotional perception, Aesthetic satisfaction | Captures the emotional and aesthetic experience, influencing overall satisfaction and perceived reliability |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Naydenova, I.A.; Kovacheva, Z.S.; Georgiev, I.K. Adaptive Multidimensional Model for User Interface Quality Assessment. Future Internet 2026, 18, 261. https://doi.org/10.3390/fi18050261
