Enhancing Handball Analytics with Computer Vision and Machine Learning: An Exploratory Experiment

Abstract

1. Introduction

- The creation and release of a publicly available annotated image dataset for handball.

- The development of tools for automated analysis of handball matches.

- A discussion of key technological challenges in automated handball analysis.

- A foundation for future research on handball analytics and sports technology.

2. Background and Literature Review

2.1. Sports and Handball

2.2. Key Technologies for Handball Analysis Automation

- (a) IoT-based approaches:

- (b) Machine Learning (ML): ML techniques enable automatic learning from large datasets to recognize patterns, predict player performance, and assess injury risks. In the context of handball, ML can be applied to model player trajectories, predict goal-scoring probabilities, and optimize tactical strategies.

- (c) Computer Vision: Computer vision methods, often combined with ML, analyze images and videos to detect and track key entities such as players, referees, and the ball. Techniques proposed by Schrapf et al. [8] illustrate the potential of computer vision in approximating trajectories and identifying game events with high accuracy.

- (d) Integration of Technologies: The fusion of IoT, ML, and computer vision creates a comprehensive framework for automated handball analysis. Camera-based systems provide visual streams, sensor data capture ball velocity and movement, and ML models synthesize these inputs to generate actionable insights. Together, these technologies enable real-time decision support for coaches and referees, tactical evaluations for teams, and enhanced experiences for fans and broadcasters.

2.3. Literature Review

2.3.1. Existing Studies in Handball and Related Sports

2.3.2. Insights and Limitations

2.3.3. Gaps and Challenges

- Lack of datasets: Publicly available datasets for handball are rare, limiting the development of robust and generalizable models [12].

- Disconnect between research and practice: A gap persists between researchers and practitioners, as highlighted in interviews with coaches and players. This gap limits the practical adoption of technological solutions.

3. Approach

- Reducing the gap between coaches/players and researchers by conducting interviews with both groups to understand their needs, preferences, and challenges in using sports analytics.

- Data gathering and training of a machine learning model by collecting video data, extracting frames from it, and applying computer vision and deep learning techniques to extract relevant features and patterns.

- Brief review of camera technologies by exploring the current state-of-the-art and future trends in camera systems and sensors for sports analytics.

- qualitative interviews with coaches and players to capture practical needs and challenges;

- dataset development and training of computer vision models for object detection; and

- exploration of camera technologies to support depth-based analysis.
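The frame-extraction step of the dataset work can be sketched as follows. This is a minimal illustration rather than the tool used in the study: the function name `sample_frame_indices`, the fixed seed, and the example clip length are assumptions, and decoding the selected frames with a video library is deliberately left out.

```python
import random


def sample_frame_indices(total_frames: int, n_samples: int, seed: int = 0) -> list[int]:
    """Pick distinct frame indices uniformly at random, returned in playback order."""
    if total_frames <= 0:
        raise ValueError("total_frames must be positive")
    # Never ask for more frames than the video contains.
    n_samples = min(n_samples, total_frames)
    rng = random.Random(seed)  # seeded so the selection is reproducible
    return sorted(rng.sample(range(total_frames), n_samples))


# Hypothetical example: a 60 s clip at 25 frames per second, 10 frames to annotate.
indices = sample_frame_indices(total_frames=60 * 25, n_samples=10, seed=42)
print(indices)
```

Sampling at random rather than at a fixed stride reduces the number of near-identical consecutive frames sent to annotators, at the cost of losing temporal continuity between the selected images.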

3.1. Coach and Player Interviews

- current practices in performance analysis;

- challenges in data collection and interpretation; and

- desired analytics tools and outputs.

- Data collection: Coaches reported that manual data gathering is time-consuming and often discourages systematic analysis. Nevertheless, imperfect data were perceived as more valuable than no data.

- Psychological aspects: Coaches emphasized that player psychology strongly influences performance, requiring analytics that account for contextual and personal factors.

- Team-level over individual metrics: Data on team formations, collective effort, and tactical execution were considered more useful than individual statistics.

- Data quality gap: Compared with sports such as basketball (NBA), handball analytics suffer from limited reliability and poor-quality datasets.

- Multi-class annotation design: coaches’ emphasis on the need to simultaneously observe all match participants motivated annotating four entity classes (player, goalkeeper, referee, handball) rather than a simpler single-class approach.

- Open dataset priority: Interviewees’ identification of data scarcity as a key barrier motivated releasing the annotated dataset publicly.

- Transparent per-class reporting: the practitioners’ pragmatic view that imperfect data are more valuable than no data informed our decision to report per-class true positive rates honestly, including the low handball detection rate.

- Acknowledged gap: The focus on team-level and collective metrics highlighted that frame-level object detection is a necessary but not sufficient step; this gap is explicitly framed as a known limitation and future research direction in Sections 5.5 and 6.2.
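To make the four-class annotation design concrete, the sketch below converts a pixel-space bounding box into the normalized `class cx cy w h` line used by YOLO-style label files. The class order in `CLASS_IDS`, the helper name `to_yolo_line`, and the example box are assumptions for illustration; the export format actually used for the dataset is not stated in this section.

```python
# Hypothetical class-to-index mapping; the order is an assumption.
CLASS_IDS = {"player": 0, "goalkeeper": 1, "referee": 2, "handball": 3}


def to_yolo_line(label, x_min, y_min, x_max, y_max, img_w, img_h):
    """Convert a pixel box (corner format) to a normalized YOLO-style label line."""
    if not (0 <= x_min < x_max <= img_w and 0 <= y_min < y_max <= img_h):
        raise ValueError("box must lie inside the image and have positive area")
    cx = (x_min + x_max) / 2 / img_w   # box centre, as a fraction of image width
    cy = (y_min + y_max) / 2 / img_h   # box centre, as a fraction of image height
    w = (x_max - x_min) / img_w        # box width, normalized
    h = (y_max - y_min) / img_h        # box height, normalized
    return f"{CLASS_IDS[label]} {cx:.6f} {cy:.6f} {w:.6f} {h:.6f}"


# Made-up example: a 40 x 40 px ball in a 1280 x 720 frame.
line = to_yolo_line("handball", 600, 300, 640, 340, img_w=1280, img_h=720)
print(line)
```

In this made-up example the ball covers only about 3% of the frame width, which gives a sense of how small the handball class is compared with the three person classes.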

3.2. Dataset Development and Computer Vision Models

3.2.1. Data Extraction and Annotation

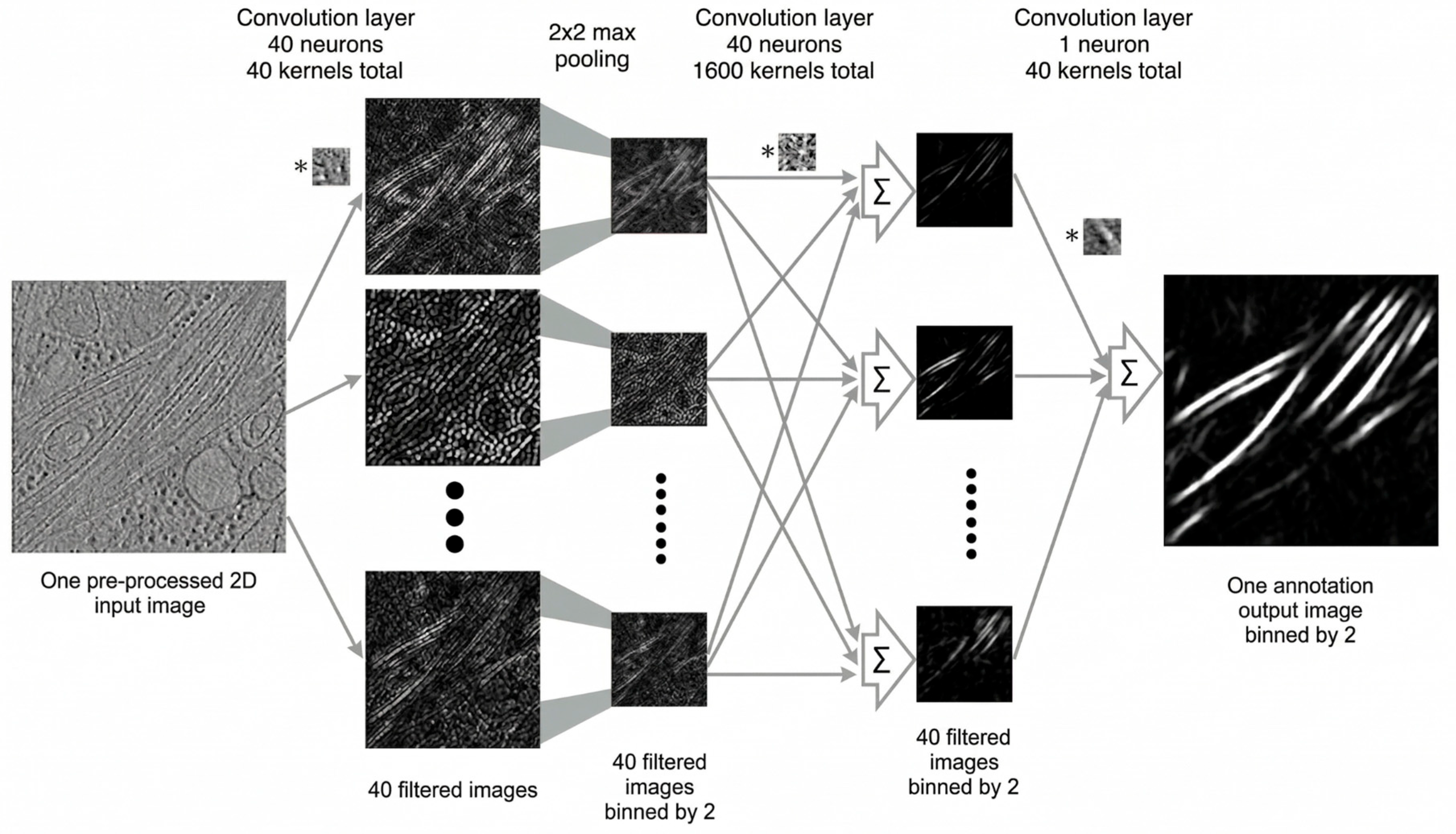

3.2.2. Model Selection and Training

3.3. Camera Technologies

- Event-based cameras: Event-based cameras capture pixel-level intensity changes asynchronously, rather than recording full frames. This enables efficient motion tracking with high temporal resolution. Prophesee [7] proposed such sensors that mimic human retinal activity. However, owing to their high cost and limited availability, event-based cameras were not used in this study but are considered promising for future research.

- Stereo cameras: Stereo cameras use two image sensors to estimate depth information from a scene. We used a Zed 2 camera [9], which is a passive stereo camera that can estimate depths up to 40 m. It also has a comprehensive Software Development Kit (SDK) that includes neural networks, Robot Operating System (ROS) integration, and an object detector. Stereo cameras enable the 3D reconstruction of a game.
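Depth estimation with a rectified stereo pair rests on the standard pinhole relation Z = f · B / d, where f is the focal length in pixels, B the baseline between the two lenses, and d the disparity in pixels. The sketch below illustrates that relation only; the calibration values are placeholders rather than measurements from the camera used in this study, and in practice the camera SDK performs this computation internally.

```python
def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth (metres) of a point seen by a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive; zero disparity means infinite depth")
    return focal_px * baseline_m / disparity_px


# Placeholder calibration values (assumptions, not measured from any camera).
FOCAL_PX = 1000.0   # focal length expressed in pixels
BASELINE_M = 0.12   # distance between the two lenses, in metres

for d in (60.0, 12.0, 3.0):
    z = depth_from_disparity(d, FOCAL_PX, BASELINE_M)
    print(f"disparity {d:5.1f} px -> depth {z:5.1f} m")
```

Because depth is inversely proportional to disparity, a one-pixel disparity error matters far more for distant objects than for objects close to the camera, which is relevant when covering a full handball court from a single viewpoint.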

3.4. Justification of the Approach and Limitations

- Mixed methods: Interviews ensured practical relevance, whereas computer vision experiments contributed technical rigor.

- Dataset focus: Creating a public dataset to address the scarcity of handball-specific data identified in prior studies.

- Model selection: YOLOv8, YOLO-NAS, and InternImage were chosen for their balance of accuracy, speed, and robustness to occlusion.

- Cameras: Stereo vision was prioritized owing to its affordability and accessibility compared with event-based sensors.

4. Implementation

4.1. Data Wrangling

4.2. Tools and Novel Software

4.3. Volunteering Campaign for Data Annotation

4.4. Models and Setup

4.4.1. Hardware Availability

4.4.2. Model Selection

- YOLOv8 [13]: A popular and fast object detection model developed by Ultralytics, which incorporates various enhancements over previous YOLO versions.

- YOLO-NAS: A variant of YOLO that uses neural architecture search (NAS) to automatically design a customized model architecture for object detection tasks.

- InternImage [16]: A state-of-the-art computer vision model that builds on deformable convolutions arranged in a transformer-like block design.

4.4.3. Models Training

4.5. Training Evaluation

- Intersection over Union (IoU);

- Confusion Matrix: especially the true-positive score for each category;

- Mean Average Precision (mAP) Score.
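The first two metrics can be illustrated with a short sketch: IoU between two boxes, and a per-class true-positive rate obtained by matching each ground-truth box to a same-class prediction. The greedy matching rule, the 0.5 threshold, the function names, and the sample boxes are all assumptions made for illustration; they are not the evaluation code used in this study, and mAP is not computed here.

```python
def iou(a, b):
    """IoU of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))  # overlap width
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))  # overlap height
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def true_positive_rate(ground_truth, predictions, iou_threshold=0.5):
    """Per-class share of ground-truth boxes matched by a same-class prediction.

    Both arguments are lists of (class_name, box). Each prediction can match
    at most one ground-truth box (greedy, in ground-truth order).
    """
    used = set()
    hits, totals = {}, {}
    for cls, gt_box in ground_truth:
        totals[cls] = totals.get(cls, 0) + 1
        best_j, best_iou = None, iou_threshold
        for j, (p_cls, p_box) in enumerate(predictions):
            if j in used or p_cls != cls:
                continue
            score = iou(gt_box, p_box)
            if score >= best_iou:  # keep the best overlap at or above the threshold
                best_j, best_iou = j, score
        if best_j is not None:
            used.add(best_j)
            hits[cls] = hits.get(cls, 0) + 1
    return {cls: hits.get(cls, 0) / n for cls, n in totals.items()}


# Made-up boxes: one well-localized player, one missed player, one offset ball.
gt = [("player", (100, 100, 200, 300)), ("player", (400, 120, 480, 310)),
      ("handball", (250, 200, 270, 220))]
preds = [("player", (105, 110, 205, 300)), ("player", (600, 100, 680, 300)),
         ("handball", (262, 212, 282, 232))]
print(true_positive_rate(gt, preds))
```

In the made-up data, a 12-pixel offset leaves the large player box comfortably matched in principle but drops the 20-pixel ball to an IoU below 0.1, which shows why small objects are penalized heavily by IoU-based matching.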

5. Results and Evaluation

5.1. Research Questions

- How can we create a comprehensive and annotated dataset of handball images to facilitate automated analysis?

- What are the challenges and opportunities associated with using different computer vision models for object detection in handball?

- What are the prospects and current limitations of integrating advanced camera technologies, such as event-based and stereo cameras, into a handball analytics pipeline?

5.2. Volunteering Campaign

5.3. Models Performance

5.3.1. YOLOv8 Control Model

5.3.2. YOLOv8 Evaluation

5.3.3. YOLO-NAS Evaluation

5.3.4. InternImage Evaluation

5.4. Models Comparison

5.5. Results Takeaways

6. Conclusions and Future Work

6.1. Conclusions

- Real-time performance tracking: The developed models can serve as a foundation for integration into camera systems to track player movements and ball trajectories during live matches, providing coaches and analysts with immediate feedback on player performance and team strategies. Achieving reliable real-time deployment will require temporal modeling, tracking pipelines, and substantially expanded and validated datasets.

- Post-game analysis: The annotated dataset and trained models lay a foundation for future systems that analyze recorded matches, enabling coaches to identify tactical patterns, evaluate player decisions, and develop targeted training programs.

- Referee assistance: Object detection capabilities, once sufficiently accurate and validated, can assist referees in making more accurate decisions, particularly in fast-paced or complex situations where it may be difficult to track all players and the ball simultaneously.

- Automated event tagging: The developed framework represents an early-stage foundation that can be extended to automatically identify events from a pre-recorded match or in real-time.
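As an illustration of what a tracking pipeline adds on top of frame-level detection, the sketch below assigns persistent IDs by greedy nearest-centroid matching between consecutive frames. The class name `CentroidTracker`, the `max_distance` gate, and the sample coordinates are assumptions; this is a deliberately simple baseline with no occlusion handling, not a method evaluated in this study.

```python
import math


class CentroidTracker:
    """Greedy nearest-centroid ID assignment between consecutive frames."""

    def __init__(self, max_distance=50.0):
        self.max_distance = max_distance  # matching gate in pixels (assumed value)
        self.tracks = {}                  # track ID -> last known centroid
        self._next_id = 0

    def update(self, centroids):
        """Return a track ID for each centroid, in input order."""
        # All candidate (distance, track_id, detection_index) pairs within the gate.
        pairs = sorted(
            (math.dist(pos, c), tid, i)
            for tid, pos in self.tracks.items()
            for i, c in enumerate(centroids)
            if math.dist(pos, c) <= self.max_distance
        )
        assigned, taken = {}, set()
        for _, tid, i in pairs:  # closest pairs are assigned first
            if i not in assigned and tid not in taken:
                assigned[i] = tid
                taken.add(tid)
        for i in range(len(centroids)):  # unmatched detections start new tracks
            if i not in assigned:
                assigned[i] = self._next_id
                self._next_id += 1
        # Only tracks seen in this frame are kept (no memory across missed frames).
        self.tracks = {assigned[i]: c for i, c in enumerate(centroids)}
        return [assigned[i] for i in range(len(centroids))]


tracker = CentroidTracker(max_distance=50.0)
print(tracker.update([(100, 100), (400, 300)]))  # two new tracks
print(tracker.update([(405, 310), (110, 105)]))  # same objects, listed in a different order
print(tracker.update([(112, 108), (800, 50)]))   # one object kept, one new object
```

A production tracker would additionally keep lost tracks alive for several frames and use motion or appearance cues, which is where the temporal modeling mentioned above becomes necessary.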

6.2. Future Work

- Statistical validation: The current results are based on a single experimental run evaluated on a relatively small held-out test set of 72 images, without k-fold cross-validation or multi-run variance reporting. Future work should prioritize k-fold cross-validation (e.g., 5-fold or 10-fold) to obtain more reliable performance estimates and confidence intervals; multi-run experiments with different random seeds to quantify result variability; and evaluation on an independent external handball dataset.

- Camera technology: Although stereo cameras were tested, other emerging technologies such as event-based cameras have not been fully explored. Future studies should investigate their integration to capture finer motion details and improve model performance.

- Coaches’ and players’ feedback: Interviews with coaches and analysts provided valuable perspectives; however, the sample size was limited. Expanding this engagement with a broader and more diverse group of stakeholders will ensure that the developed systems address practical needs more effectively.

- Dataset expansion: The annotated dataset created in this study is a first step. Expanding it with more match recordings, varied camera angles, female players, and action-specific annotations will improve generalizability and enable more complex analyses, such as tactical recognition.

- Computer vision models: Our focus was primarily on detection accuracy rather than inference speed. Future research should prioritize model optimization, including hyperparameter tuning, lightweight architectures, and the use of temporal or depth information for faster and more efficient detections.

- Object tracking: Object tracking is a natural progression from object detection. Assigning consistent IDs to entities over time will enable the analysis of player movement patterns, ball trajectories, and tactical formations. Our dataset provides a foundation for advancing research in this direction. Future iterations will incorporate temporal information, such as multi-frame smoothing, optical flow, or dedicated tracking algorithms, to leverage ball trajectory continuity and substantially improve detection reliability. In addition, we plan to report performance variability across multiple runs to strengthen the scientific validity.

- Future work will also include full-match latency benchmarking under realistic streaming conditions, accounting for preprocessing overhead, I/O bottlenecks, and hardware variability, to provide a more credible assessment of the real-time deployment potential.

- In future work, we plan to conduct a more detailed error analysis and to incorporate attention mechanisms or instance segmentation to help differentiate overlapping entities in future model iterations.
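The k-fold protocol proposed under statistical validation can be sketched in a few lines. The function names `k_fold_indices` and `mean_and_std`, the seed, and the dataset size of 103 items are assumptions chosen for illustration; model training and scoring on each fold are omitted.

```python
import random


def k_fold_indices(n_items: int, k: int = 5, seed: int = 0):
    """Yield (train_indices, test_indices) for each of k shuffled folds."""
    if not 2 <= k <= n_items:
        raise ValueError("k must be between 2 and n_items")
    order = list(range(n_items))
    random.Random(seed).shuffle(order)
    # Fold sizes differ by at most one item when n_items is not divisible by k.
    folds = [order[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


def mean_and_std(scores):
    """Mean and sample standard deviation of per-fold (or per-seed) scores."""
    m = sum(scores) / len(scores)
    var = sum((s - m) ** 2 for s in scores) / (len(scores) - 1)
    return m, var ** 0.5


# Hypothetical dataset of 103 images split into 5 folds.
for fold, (train, test) in enumerate(k_fold_indices(n_items=103, k=5, seed=1)):
    print(f"fold {fold}: {len(train)} train / {len(test)} test")
```

When several frames come from the same video, grouping them by video before splitting helps prevent near-duplicate frames from appearing in both the training and test folds.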

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. IEEE Standard P2413/D0.4.6; IEEE Standard for an Architectural Framework for the Internet of Things (IoT). IEEE: New York, NY, USA, 2019.

2. Marck, A.; Antero-Jacquemin, J.; Berthelot, G.; Saulière, G.; Jancovici, J.M.; Masson-Delmotte, V.; Gilles, B.; Spedding, M.; Le Bourg, É.; Toussaint, J.F. Are We Reaching the Limits of Homo sapiens? Front. Physiol. 2017, 8, 812.

3. Rajšp, A.; Fister, I., Jr. A systematic literature review of intelligent data analysis methods for smart sport training. Appl. Sci. 2020, 10, 3013.

4. BBC. Handball-Factfile. Available online: https://www.bbc.co.uk/bitesize/guides/zxspfrd/revision/1 (accessed on 30 June 2023).

5. Kinexon. Kinexon Handball Homepage. Available online: https://kinexon-sports.com/sports/handball (accessed on 30 June 2023).

6. Foina, A.G.; Badia, R.M.; El-Deeb, A.; Ramirez-Fernandez, F.J. Player Tracker-a tool to analyze sport players using RFID. In Proceedings of the 2010 8th IEEE International Conference on Pervasive Computing and Communications Workshops (PERCOM Workshops), Mannheim, Germany, 29 March–2 April 2010; IEEE: New York, NY, USA, 2010; pp. 772–775.

7. Prophesee. Prophesee Homepage. Available online: https://www.prophesee.ai (accessed on 30 June 2023).

8. Schrapf, N.; Alsaied, S.; Tilp, M. Tactical interaction of offensive and defensive teams in team handball analysed by artificial neural networks. Math. Comput. Model. Dyn. Syst. 2017, 23, 363–371.

9. Labs, S. Zed 2 Camera Homepage. Available online: https://www.stereolabs.com/zed-2 (accessed on 30 June 2023).

10. Vallance, E.; Sutton-Charani, N.; Imoussaten, A.; Montmain, J.; Perrey, S. Combining internal-and external-training-loads to predict non-contact injuries in soccer. Appl. Sci. 2020, 10, 5261.

11. Host, K.; Ivasic-Kos, M.; Pobar, M. Action recognition in handball scenes. In Intelligent Computing: Proceedings of the 2021 Computing Conference; Springer: Berlin/Heidelberg, Germany, 2022; Volume 1, pp. 645–656.

12. Biermann, H.; Theiner, J.; Bassek, M.; Raabe, D.; Memmert, D.; Ewerth, R. A unified taxonomy and multimodal dataset for events in invasion games. In Proceedings of the 4th International Workshop on Multimedia Content Analysis in Sports, Chengdu, China, 20 October 2021; Association for Computing Machinery: New York, NY, USA, 2021; pp. 1–10.

13. Jocher, G.; Chaurasia, A.; Qiu, J. Ultralytics YOLOv8, version 8.0.0; Ultralytics: Los Angeles, CA, USA, 2023; Available online: https://github.com/ultralytics/ultralytics (accessed on 7 April 2026).

14. Redmon, J.; Farhadi, A. Yolov3: An incremental improvement. arXiv 2018, arXiv:1804.02767.

15. TofyLion. GitHub Repository for Annotations Helper Tool. Available online: https://github.com/tofylion/annotations_helper (accessed on 30 June 2023).

16. Wang, W.; Dai, J.; Chen, Z.; Huang, Z.; Li, Z.; Zhu, X.; Hu, X.; Lu, T.; Lu, L.; Li, H.; et al. Internimage: Exploring large-scale vision foundation models with deformable convolutions. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 14408–14419.

17. TofyLion. GitHub Repository for Tool That Extracts Random Frames from Videos. Available online: https://github.com/tofylion/frozen-video (accessed on 30 June 2023).

18. Roboflow. Roboflow Home. Available online: https://roboflow.com (accessed on 30 June 2023).

19. Aharon, S.; Louis-Dupont; Masad, O.; Yurkova, K.; Fridman, L.; Lkdci; Khvedchenya, E.; Rubin, R.; Bagrov, N.; Tymchenko, B.; et al. Super-Gradients, version 3.0.8; Zenodo: Geneva, Switzerland, 2021.

20. Kyriakides, G.; Margaritis, K.G. An Introduction to Neural Architecture Search for Convolutional Networks. arXiv 2020, arXiv:2005.11074.

21. Khan, S.H.; Naseer, M.; Hayat, M.; Zamir, S.W.; Khan, F.S.; Shah, M. Transformers in Vision: A Survey. ACM Comput. Surv. 2022, 54, 200.

| Model | Resolution | Count | mAP@50 | Handball | Player | Referee | Goalkeeper | FPS |
|---|---|---|---|---|---|---|---|---|
| Control | 640 × 640 | - | - | 0.00 | - | - | - | - |
| YOLOv8v1 | 640 × 640 | 119 | 0.847 | 0.39 | 0.91 | 0.94 | 0.95 | 15.6 |
| YOLO-NAS | 640 × 640 | 119 | 0.6249 | 0.14 | 0.82 | 0.48 | 0.57 | 185.5 |
| InternImage | 640 × 640 | 119 | 0.718 | 0.45 | 0.89 | 0.83 | 0.84 | 2.3 |
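The FPS column translates directly into a per-frame latency that can be compared with the frame interval of a video source. The sketch below uses the FPS values from the table above; the 25 fps source rate and the helper names are assumptions for illustration, and the calculation ignores preprocessing and I/O overhead.

```python
import math

# FPS values copied from the model comparison table above.
MEASURED_FPS = {"YOLOv8v1": 15.6, "YOLO-NAS": 185.5, "InternImage": 2.3}
SOURCE_FPS = 25.0  # assumed frame rate of the incoming video stream


def latency_ms(fps: float) -> float:
    """Average time spent on one frame, in milliseconds."""
    return 1000.0 / fps


def frames_to_skip(model_fps: float, source_fps: float) -> int:
    """Incoming frames that must be dropped per processed frame to keep up."""
    return max(0, math.ceil(source_fps / model_fps) - 1)


for name, fps in MEASURED_FPS.items():
    print(f"{name:12s} {latency_ms(fps):7.1f} ms/frame, "
          f"skip {frames_to_skip(fps, SOURCE_FPS)} frame(s) at {SOURCE_FPS:.0f} fps")
```

Under this assumed source rate, only a model faster than the stream can process every frame; slower models have to subsample the video, which in turn reduces the temporal resolution available to any downstream tracking step.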

| Model | Resolution | Count | mAP@50 | Handball | Player | Referee | Goalkeeper | FPS |
|---|---|---|---|---|---|---|---|---|
| Control | 640 × 640 | - | - | 0.00 | - | - | - | - |
| YOLOv8v1 | 640 × 640 | 119 | 0.847 | 0.39 | 0.91 | 0.94 | 0.95 | 15.6 |
| YOLOv8v2 | 640 × 640 | 508 | 0.866 | 0.51 | 0.97 | 0.95 | 0.96 | 15.6 |
| YOLOv8v3 | 1024 × 576 | 508 | 0.868 | 0.51 | 0.92 | 0.84 | 0.95 | 7.4 |

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Farahat, M.; Soubra, H.; Moulla, D.K.; Abran, A. Enhancing Handball Analytics with Computer Vision and Machine Learning: An Exploratory Experiment. Future Internet 2026, 18, 199. https://doi.org/10.3390/fi18040199
